A Systematic Literature Review on the Automatic Creation of Tactile Graphics for the Blind and Visually Impaired

Abstract

1. Introduction

2. Background and Motivation

2.1. Background of Tactile Graphics and Automatic Tactile Graphics Generation

2.2. Artificial Intelligence Algorithms for Generating Tactile Graphics

- It is the first systematic review in the field of static and dynamic tactile graphics generation using conventional computer vision and AI technologies, providing an in-depth analysis of articles from the last six years and explaining the current state of this research field.

- It compared different tactile graphics generation approaches for BVI individuals and summarized the results of the studies.

- It determined the level of importance of tactile graphics in the education and social life of BVI individuals.

- It defined the role of AI in automatic tactile graphics generation.

2.3. Existing Literature Review and Motivation

- What are the most advanced accomplishments and innovations in tactile graphics generation?

- What is the impact of AI on automatic tactile graphics generation for BVI individuals?

- What is the difference between scientific results and commercially available technologies in the life of BVI individuals?

- What are the main gaps and difficulties that are not addressed in research today?

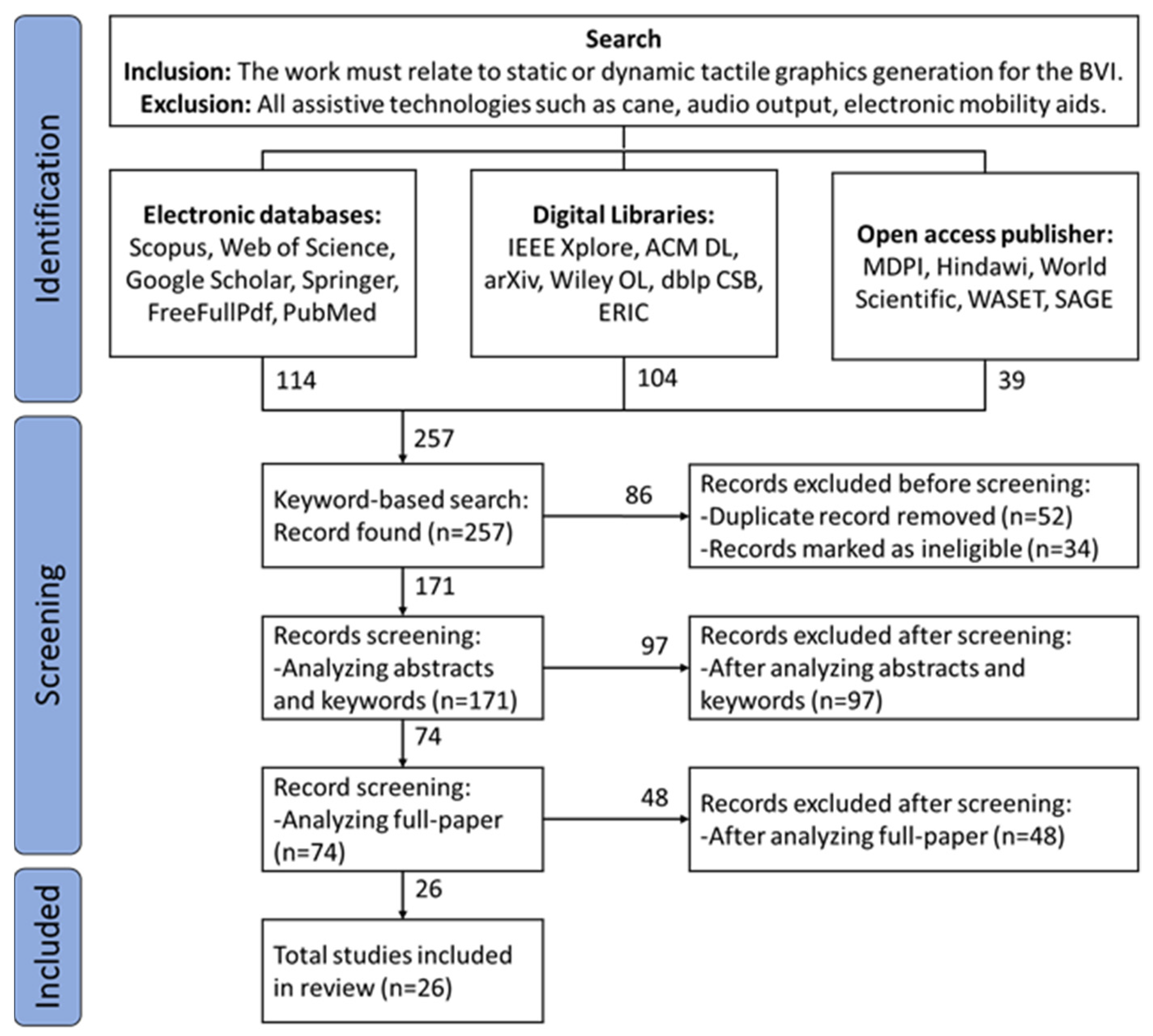

3. Review Methodology

3.1. Research Questions

- RQ1: What is the role of tactile graphics in the education of BVI individuals and their adaptation to society?

- RQ2: What are the current methods and commercially available technologies for dynamic tactile graphics generation?

- RQ3: What are the advantages of solutions using AI and 3D printers and what are the gaps that need to be addressed for future developments?

3.2. Search Strategy

3.3. Criteria for Inclusion and Exclusion

- The work must relate to tactile graphics generation for BVI individuals. Both static and dynamic tactile graphics generation were considered.

- Works related to tactile drawing by sighted persons were considered, but their results were not examined in depth.

- All methods based on AI algorithms for BVI individuals were analyzed in depth, and the results were sorted according to their novelty.

- Works related to tactile sensors for robotics were not considered.

- Other assistive technologies (AT), including canes, audio output, and electronic mobility aids, were not considered.

- Works that focus only on braille text were not considered.
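The criteria above can be read as a screening procedure applied to each candidate record. The following is a minimal sketch of that reading, not the procedure the review actually used: the `Record` class, the topic tags, and the `screen` function are all hypothetical names introduced for illustration, and the review does not describe any tagging scheme.

```python
from dataclasses import dataclass, field

# Hypothetical topic tags; the review does not define a tagging scheme.
OTHER_AT = {"cane", "audio_output", "electronic_mobility_aid"}


@dataclass
class Record:
    title: str
    topics: set = field(default_factory=set)


def screen(record):
    """Return (included, reason) for one candidate record."""
    topics = record.topics
    # Tactile sensing for robots is out of scope regardless of other tags.
    if "robotic_tactile_sensing" in topics:
        return False, "tactile sensors for robotics"
    # Static and dynamic tactile graphics generation both qualify.
    if "tactile_graphics_generation" in topics:
        return True, "tactile graphics generation for BVI individuals"
    if "tactile_drawing_by_sighted" in topics:
        return True, "tactile drawing by sighted persons"
    # Works tagged with braille text and nothing else are excluded.
    if topics == {"braille_text"}:
        return False, "braille text only"
    if topics & OTHER_AT:
        return False, "other assistive technology"
    return False, "unrelated to tactile graphics generation"


# Illustrative records with made-up titles.
candidates = [
    Record("Audio-tactile chart tool", {"tactile_graphics_generation", "audio_output"}),
    Record("Smart cane prototype", {"cane"}),
    Record("Braille transcription service", {"braille_text"}),
]
for candidate in candidates:
    print(candidate.title, screen(candidate))
```

In this sketch a work that combines tactile graphics generation with audio output is still included, because the exclusion of other assistive technologies is only checked when no tactile graphics tag is present.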

3.4. Electronic Databases and Digital Libraries

3.5. Study Selection

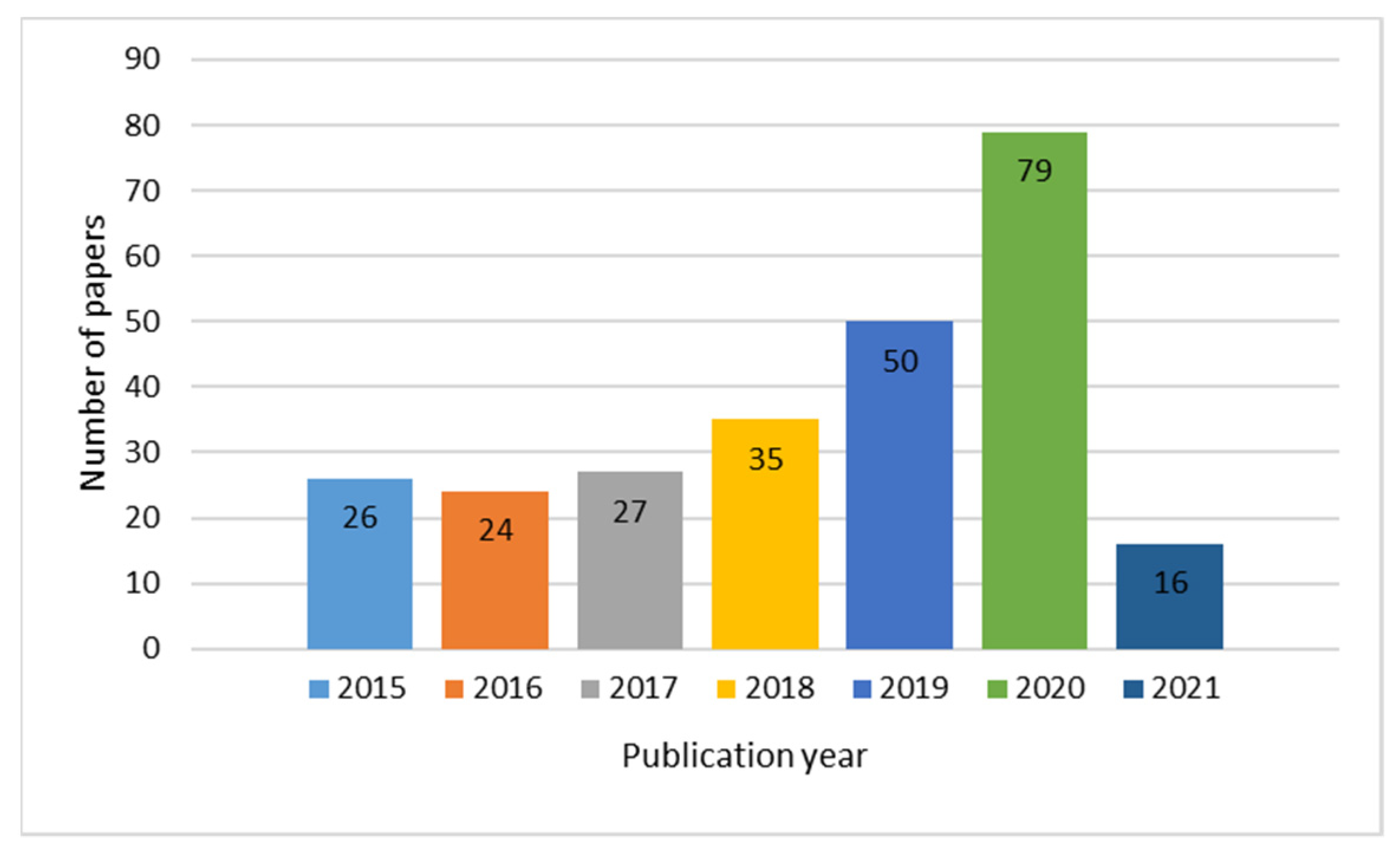

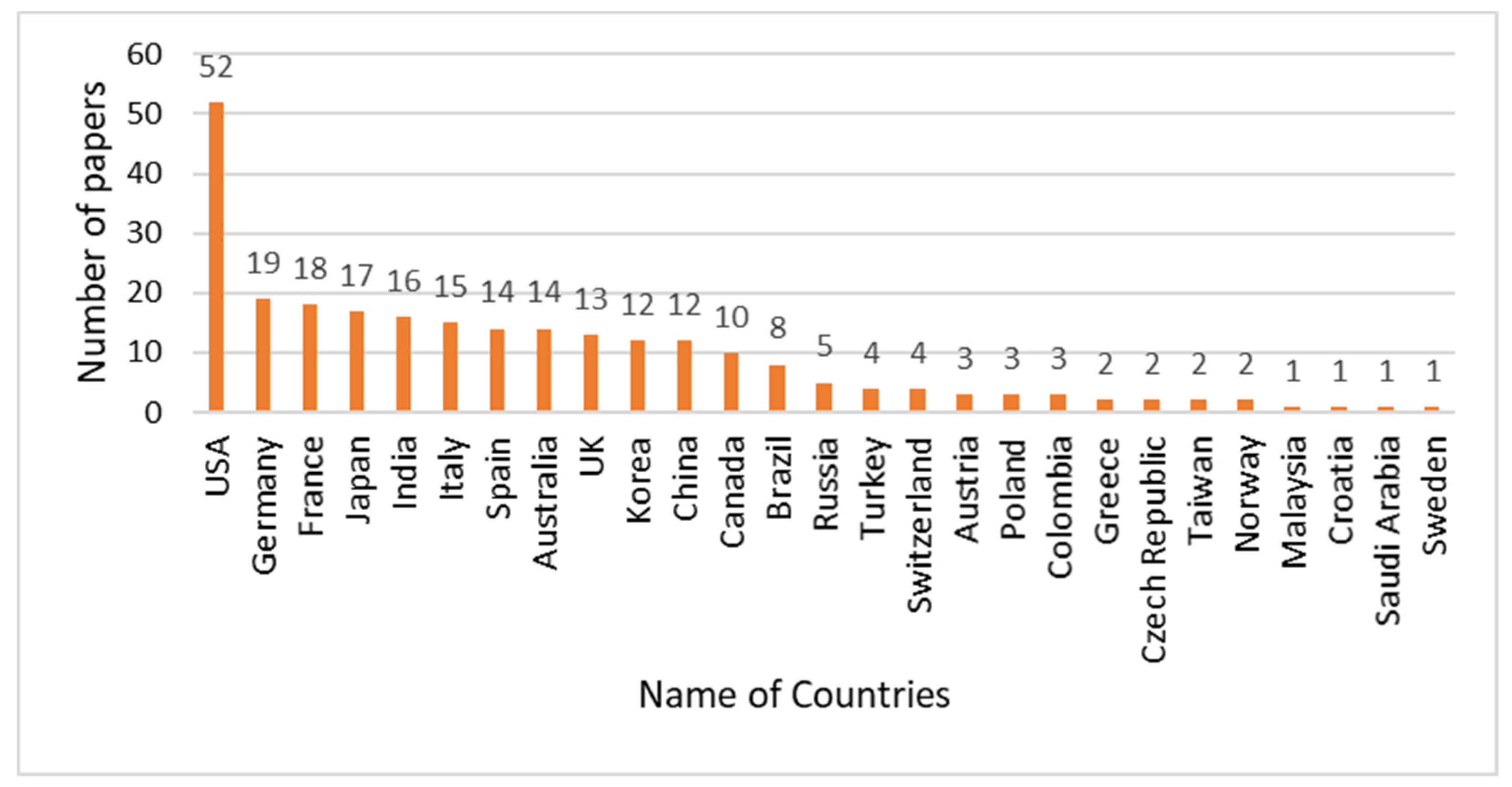

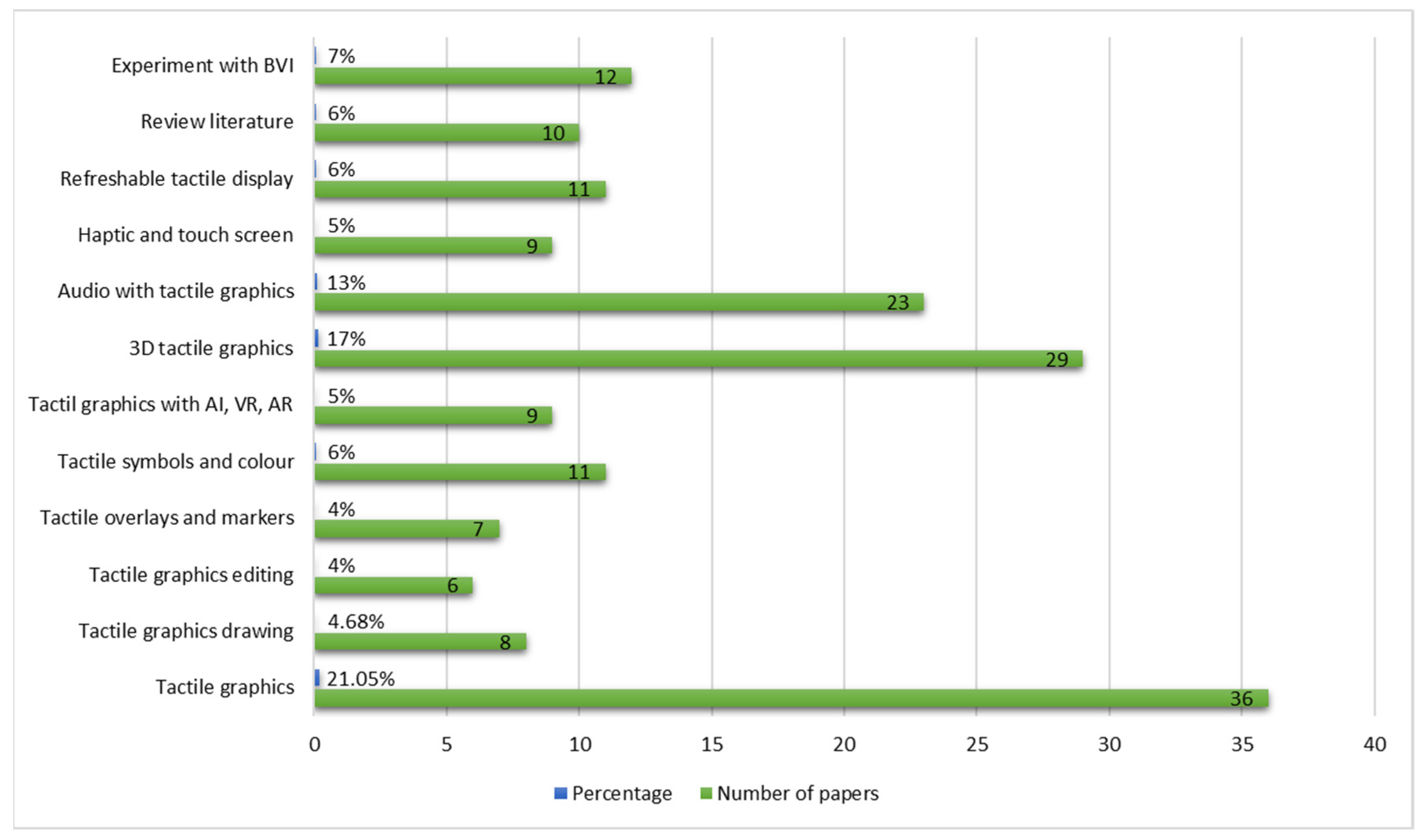

4. Review Results

4.1. Overview

4.2. Title and Abstract Analysis

4.3. Full-Paper Analysis

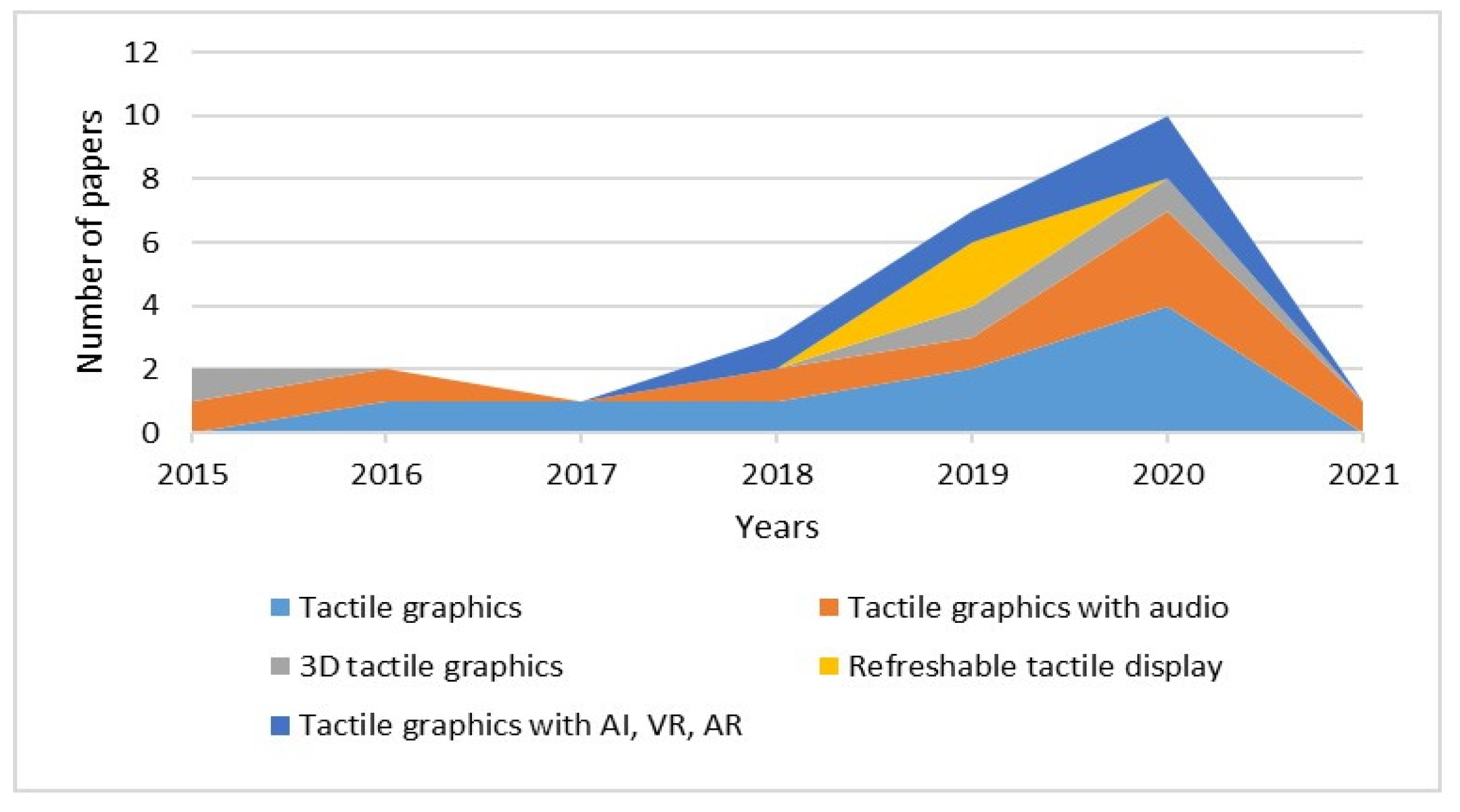

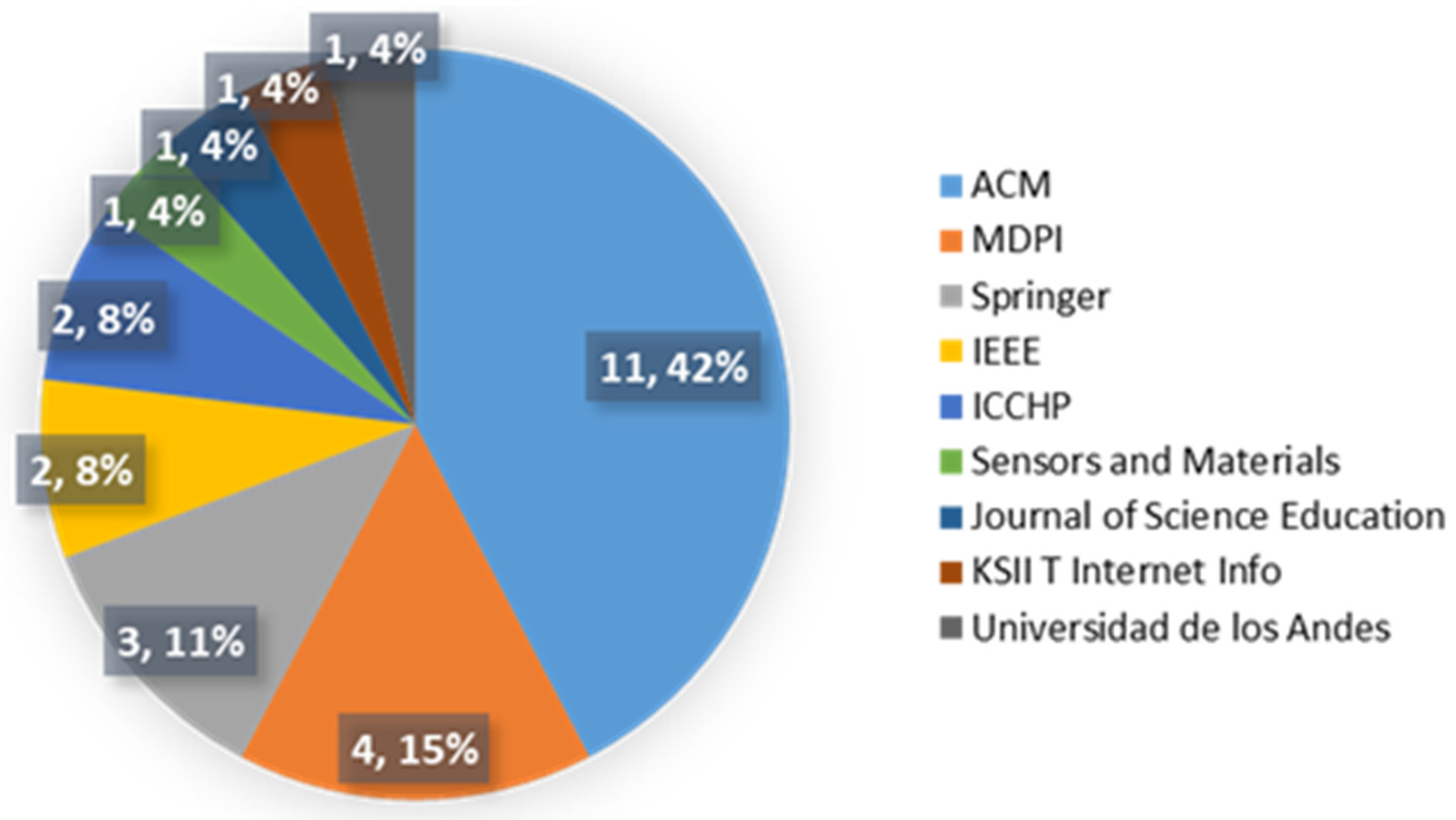

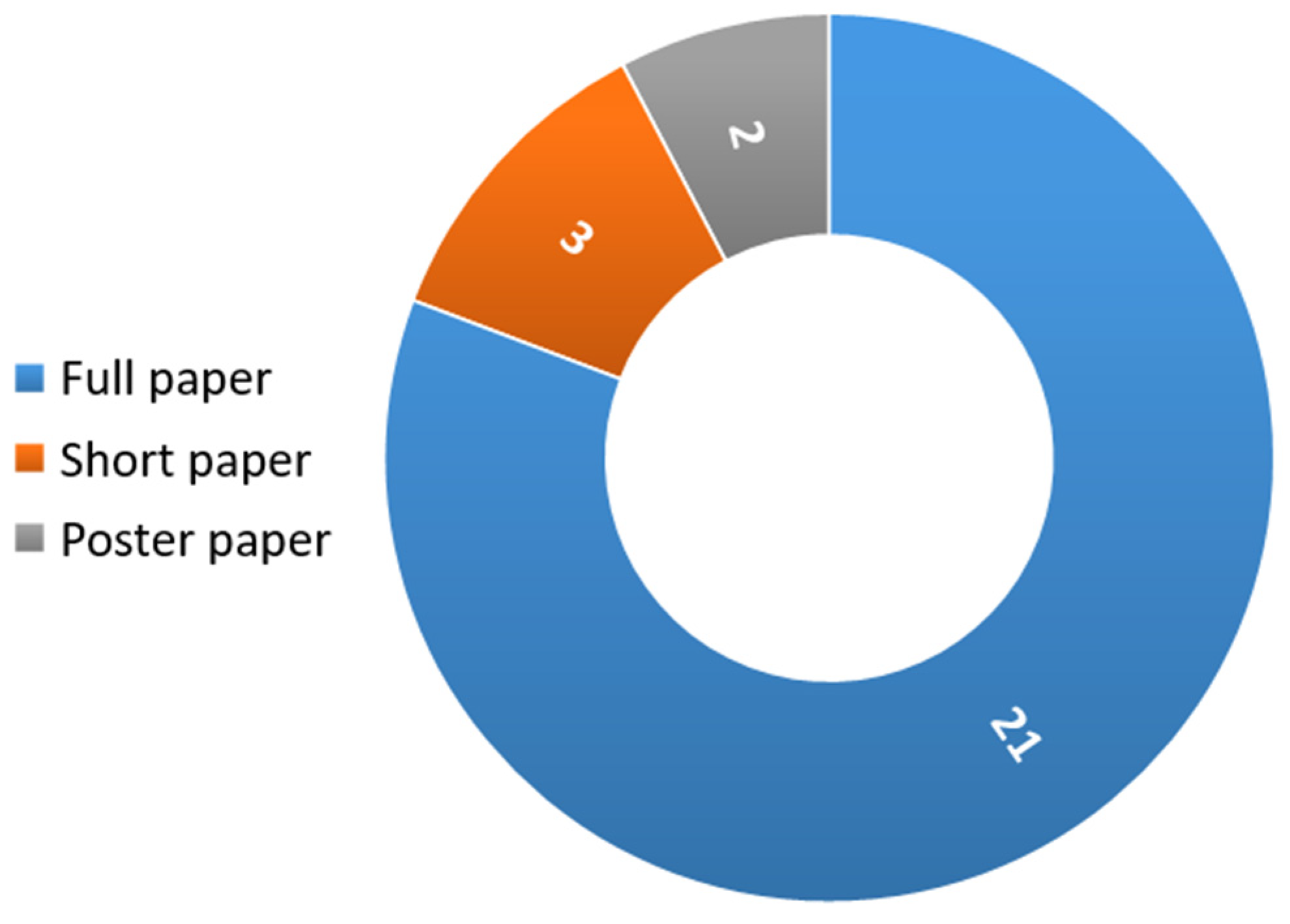

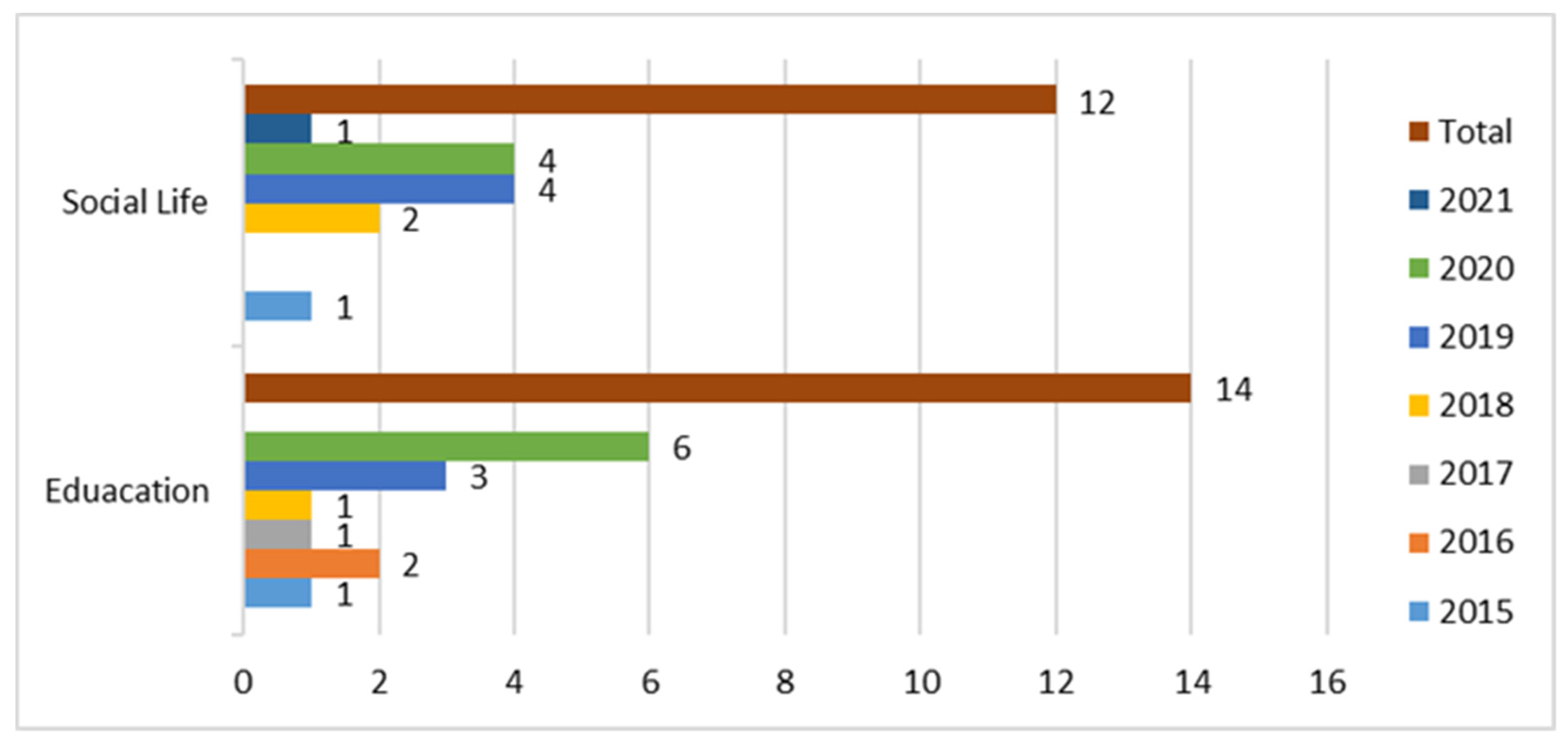

Technologies and Venues

4.4. Research Question 1: What Is the Role of Tactile Graphics in the Education of BVI Individuals and Their Adaptation to Society?

4.4.1. The Role of Tactile Graphics

4.4.2. Tactile Graphics for STEM Subjects and Braille Books

4.4.3. Involvement of Blind and Visually Impaired

4.4.4. Involvement of Sighted Teachers and Instructors

4.4.5. Advantages and Disadvantages of Existing Solutions in Education and Adaptation of BVI Individuals

4.5. Research Question 2: What Are the Current Methods and Commercially Available Technologies for Dynamic Tactile Graphics Generation?

4.5.1. Currently Available Platform and Framework

4.5.2. Currently Available Methods from Images

4.5.3. Currently Available Methods from Electronic Books

4.5.4. Currently Available Methods Using Audio

4.5.5. Commercially Available Software and Hardware

4.5.6. Currently Available Online Tutorials

4.5.7. Intended Application Domains

4.5.8. Advantages and Disadvantages of Current Methods

4.6. Research Question 3: What Are the Advantages of Solutions Using AI and 3D Printers and What Are the Gaps That Need to Be Addressed for Future Developments?

4.6.1. Use of VR and Deep Learning

4.6.2. Use of 3D Printing

4.6.3. Advantages and Disadvantages of AI- and VR-Based Methods

4.6.4. Summary for RQ3

5. Discussions

- Despite the variety of tactile graphics generation methods and algorithms available, it is difficult to create simple tactile graphics from materials and images in STEM subjects.

- Creating tactile graphics or 3D models requires a specialist and takes a long time to produce quality results because the full process is not automated.

- Researchers working on this topic have not collaborated with BVI individuals. Thus, many science-based solutions have remained theoretical without reaching the production stage.

- Commercially available technologies and applications remain prohibitively expensive for low-income users.

- Despite many years of AI algorithm development, tactile graphics generation still relies mostly on traditional methods. Hence, it is crucial to create custom datasets to develop machine-learning models.

- Considering the increasing number of BVI people in the world, it is essential to expand the range of AT that assists them in receiving quality education.

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Article | Technology | Tactile Graphics in Education (E) and Social Life (SL) | Tactile Graphics Generation Methodology | Experiments and Evaluation | Results and Conclusion |
| --- | --- | --- | --- | --- | --- |
| [38] | Tactile graphics | Generating accessible text and tactile graphics for a variety of graphics types in an HTML document. (E) | Machine learning models were developed to classify graphics into categories. | n/a | The software generated tactile graphics for diagrams, trees, graphs, flowcharts, digital circuits, bar charts, etc. |
| [48] | Tactile graphics | Tactile graphics of seven bar charts, six line charts, three pie charts, and five scatterplots. (E) | Tactile charts were developed with different design characteristics to evaluate their effectiveness. | 48 blind participants tested the chart types and design features in reading data values. | 13 aspects were identified as essential in remote studies with blind people. |
| [58] | Tactile graphics | Generating tactile graphics from images to perceive education materials and environment. (SL) | Salient objects were extracted and contours were detected from images using Canny edge detection. | Quantitative and qualitative comparisons of salient object detection algorithms were evaluated. | Visually salient objects were translated into assistive technology systems for visually impaired individuals. |
| [56] | Tactile graphics | Tactile schematic symbols and nine guidelines were proposed to create readable tactile graphics. (E) | 11 rounds of iterations of six schematics were completed to produce readable versions. | The schematics were evaluated by low-vision and blind participants over the age of 18. | The schematic symbols of popular textbooks and guidelines were produced. |
| [59] | Tactile graphics | Detecting the outer boundaries and inner edges of salient objects for simple tactile graphics generation. (SL) | The contours of the extracted salient objects were printed as tactile graphics using an Index Braille embosser. | 14 blind students evaluated the tactile graphics and recognized the tactile graphics content in one minute. | The visually impaired students clearly identified 74% of the tactile graphics. |
| [49] | Tactile graphics | Software plugin for readily producing variable-height tactile graphics of biological molecules and proteins. (E) | The plugin introduced representation schemes designed to produce variable-height tactile graphics. | Three-dimensional printed models and tactile graphics enabled blind students to analyze the protein structures. | The results enabled undergraduate blind students to conduct quality research on protein structure. |
| [50] | Tactile graphics | A controlled user study of four tactile representations of social networks and biological networks. (E) | Four tactile representations of network data were generated using different methods and swell paper. | Eight participants reported on the overview, connectivity cluster, and common connection of the network data. | All the participants noticed that the two node-link diagram representations were more natural and intuitive. |
| [54] | Tactile graphics | Automatically translating characters and images into tactile graphics for an electronic braille page. (E) | The labeling and filtering of a scanned image, character, and graphic labels were determined. | 10 visually impaired individuals evaluated the electronic braille pages printed by the braille printer. | Time and cost were significantly reduced and more reading materials were provided for the visually impaired individuals. |
| [55] | Tactile graphics | Automatically converting scanned textbook images to tactile diagrams and text into braille. (E) | This includes the extraction and recognition of text and geometric lines and circles. | n/a | The first version of the software was capable of enhancing the productivity of tactile designers. |
| [51] | Tactile graphics with audio | Improving the tactile chart creation process by an automation tool that includes an accessible GUI. (E) | The production process was divided into five basic components such as Input Data, User Input, etc. | Two blind participants evaluated the bar and line charts and scatterplots in two-hour sessions. | The structure and elements of the charts and all properties were recognized by both participants. |
| [11] | Tactile graphics with audio | Tracking a student’s fingers by a machine vision-based tactile graphics helper (TGH). (E) | The TGH includes a mounted camera placed across the tactile graphic and recognizes different tactile graphics. | Three participants who were university students with STEM majors tested the TGH with six tactile graphics. | The TGH can improve STEM students’ skills and efficiency in accessing educational content. |
| [52] | Tactile graphics with audio | A device that provides audio and haptic guidance via skin-stretch feedback to a user’s hand. (E) | The device can support two teaching scenarios and two guidance interactions. | One blind engineering student evaluated two developed applications that focused on the learning scenario. | All the participants and tactile graphics experts suggested improvements to several technical functions. |
| [21] | Tactile graphics with audio | Tactile graphics with a voice (TGV), a device used to access label information in tactile graphics using QR codes to replace the text. (E) | TGV comprises tactile graphics with QR code labels and a smartphone application. | 10 blind participants tested the tasks using three modes on the smartphone application: (1) no guidance, (2) verbal guidance, and (3) finger-pointing guidance. | The accuracy of the 12 tasks did not vary across the different modes (silent mode: 88%; verbal mode: 88%; finger-pointing mode: 89%). |
| [7] | Tactile graphics with audio | A mobile audio-tactile learning environment, TPad, which facilitates the addition of real educational materials. (E) | The system consists of three components: (1) a touchpad, (2) a mobile app, and (3) a teacher’s interface. | Two teachers and five blind participants used the system in mathematics, social science, handicraft, computer science, and physics. | All the participants perceived a tactile graphic faster and correctly answered more than 70% of the questions. |
| [53] | Tactile graphics with audio | A platform that shares graphic math content (charts, geometric figures, etc.) in audio-tactile form for the blind. (E) | The test bench consists of a touch tablet and developed software for interactive audio-tactile display. | 10 blind students solved 15 math exercises containing graphic content available in tactile form. | The developed method can be helpful for both a teacher and a blind student for self-study. |
| [61] | Tactile graphics with audio | An audio-tactile graphic system that outputs audio guidance from an iPhone. (SL) | The system uses edge detection from the RGB image and extracts coordinates of the pixels. | Eight blind participants tested the system in three sessions using the tactile maps with six, 12, and 30 rooms. | The audio guidance function is effective for understanding tactile graphics. |
| [64] | Tactile graphics with audio | An interactive multi-modal guide prototype that uses the audio and tactile experience of visual artworks. (SL) | Several techniques, e.g., 3D laser triangulation, were used to extract the topographical information. | 18 participants evaluated and compared the multi-modal and tactile graphic accessible exhibits. | The approach is simple, easy to use, and improves confidence when exploring visual artworks. |
| [65] | 3D tactile graphics | Illustration design of 3D objects assumes the identification of relevant data in topology and geometry. (SL) | Multi-projection rendering strategy was introduced to display the geometric information of 3D geometry. | 20 blind participants tested the 3D object replication and tactile illustrations of teapot, lamp, eyeglasses, chair, etc. | The results can be used as a tool to depict products ranging from furniture to art pieces in a museum. |
| [62] | 3D tactile graphics | 3D printed maps were tested on-site at a public event to examine their suitability for the design of future 3D maps. (SL) | All the 3D maps were designed by a researcher with 20 years of experience in tactile graphics. | 10 participants tested the 3D maps and answered different questions to evaluate the usefulness of these maps. | Different recommendations were proposed for the design and use of 3D printed maps for accessibility. |
| [60] | 3D tactile graphics | Six stakeholder groups attempt to create 3D printable accessible tactile pictures (3DP-ATPs). (SL) | Libraries, schools, volunteer centers, and art galleries offered workshops on 3DP-ATPs at their sites. | 69 participants focused on creating 3DP-ATPs and reported their different experiences. | The participants offered advice on the design task, which involves five different skillsets. |
| [31] | Refreshable tactile displays | Two methodologies are presented for delivering multimedia content to BVI individuals using a haptic and braille display. (SL) | A braille electronic book reader application that can share text, figures, and audio content. | The experiment was performed by using a combination of tablet and smartphone. | Braille display increases the accessibility of BVI individuals to multimedia as well as 3D and 2D haptic information delivery. |
| [66] | Refreshable tactile displays | The transformation of DAISY and EPUB formats into 2D braille display. (SL) | This application was based on DAISY and EPUB. It supports contents display, text highlight, MP3, TTS. | The tablets and smartphones used were Samsung Tab S, Galaxy Tab S2 8.0, Samsung S7 Edge, S6, and Note 5. | The 2D mobile braille display can be very useful for literature, scientific figures, and education. |
| [63] | Tactile graphics with AI | An AR system to easily augment real objects, e.g., a botanical atlas, with audio feedback. (SL) | Two main processes were used: augmenting the object with electrical components and digitally representing the object. | Three instructors augmented an existing tactile map of the school. Five BVI students tested the botanical atlas. | The participants found the interactive graphics novel to use for their mental imagery skills. |
| [36] | Tactile graphics with AI | A machine learning (ML) model that identifies suitable and unsuitable images for tactile graphics (TG). (SL) | The ML model was built on top of MobileNet, and it classifies images into two categories. | The identification, search, and retraining functionalities were implemented in a web platform. | A web platform consists of (1) images that are transformable to TGs and (2) an online learning model. |
| [57] | Tactile graphics with AI | Tactile educational tools for presenting the shapes of objects to visually impaired students using VR. (E) | 3D-CAD data were used to express the shapes of objects. A haptic device was used to develop a system. | Two blind and five low-vision persons guessed the shape of the objects. | The participants seemed to have difficulty in identifying the shape because of the reduced amount of information. |
| [35] | Tactile graphics with AI | Develop techniques for finding images on the web that are suitable for use as tactile graphics or test their own digital images. (SL) | The machine learning model used Google Cloud AutoML. The system was developed using Node.js, the Express web framework, and MongoDB. | The model used 1729 training and 194 test images, and it was reviewed by an accessibility technologies expert. | In the review, the expert discovered that some of the images were wrongly classified. |
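Several rows in the table ([58], [59], [61]) describe detecting edges or contours in an image before producing a tactile graphic. The sketch below illustrates that general idea only. It is not the method of any reviewed article: it uses plain Sobel gradient thresholding as a simplified stand-in for Canny edge detection (no smoothing, non-maximum suppression, or hysteresis), it assumes a grayscale image given as nested lists of 0 to 255 values, and the function names and threshold are hypothetical.

```python
def sobel_edges(image, threshold=255):
    """Return a binary edge map (1 = raised dot) for a grayscale image.

    Simplified stand-in for Canny: Sobel gradients plus one threshold.
    Border pixels are left at 0 because their 3x3 window is incomplete.
    """
    h, w = len(image), len(image[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Horizontal gradient: right column minus left column.
            gx = (image[y - 1][x + 1] + 2 * image[y][x + 1] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y][x - 1] - image[y + 1][x - 1])
            # Vertical gradient: bottom row minus top row.
            gy = (image[y + 1][x - 1] + 2 * image[y + 1][x] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y - 1][x] - image[y - 1][x + 1])
            if abs(gx) + abs(gy) >= threshold:
                edges[y][x] = 1
    return edges


def to_dot_pattern(edges):
    """Render the edge map as text: 'o' for a raised dot, '.' for flat."""
    return "\n".join("".join("o" if v else "." for v in row) for row in edges)


# A 7x7 test image: a bright 3x3 square on a dark background.
image = [[255 if 2 <= y <= 4 and 2 <= x <= 4 else 0 for x in range(7)]
         for y in range(7)]
edges = sobel_edges(image)
print(to_dot_pattern(edges))
```

The uniform interior of the square produces no gradient and stays flat, while pixels around its boundary are marked as raised dots, which is the kind of contour-only output an embosser or refreshable display could render.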

References

- Luo, S.; Bimbo, J.; Dahiya, R.; Liu, H. Robotic tactile perception of object properties: A review. Mechatronics 2017, 48, 54–67. [Google Scholar] [CrossRef] [Green Version]

- Brulé, E.; Tomlinson, B.J.; Metatla, O.; Jouffrais, C.; Serrano, M. Review of Quantitative Empirical Evaluations of Technology for People with Visual Impairments. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–14. [Google Scholar]

- Matthew, B.; Holloway, L.; Reinders, S.; Goncu, C.; Marriott, K. Technology Developments in Touch-Based Accessible Graphics: A Systematic Review of Research 2010–2020. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 8–13 May 2021; pp. 1–15. [Google Scholar]

- Wabiński, J.; Mościcka, A. Automatic (Tactile) Map Generation—A Systematic Literature Review. ISPRS Int. J. Geo-Inf. 2019, 8, 293. [Google Scholar]

- World Health Organization. World Report on Vision; World Health Organization: Geneva, Switzerland, 2019; Licence: CC BY-NC-SA 3.0 IGO; Available online: https://www.who.int/publications/i/item/9789241516570 (accessed on 4 March 2021).

- Zebehazy, K.T.; Wilton, A.P. Quality, importance, and instruction: The perspectives of teachers of students with visual impairments on graphics use by students. J. Vis. Impair. Blind. 2014, 108, 5–16. [Google Scholar] [CrossRef]

- Melfi, G.; Müller, K.; Schwarz, T.; Jaworek, G.; Stiefelhagen, R. Understanding what you feel: A mobile audio-tactile system for graphics used at schools with students with visual impairment. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–12. [Google Scholar]

- Ferro, T.J.; Pawluk, D.T. Automatic image conversion to tactile graphic. In Proceedings of the 15th International ACM SIGACCESS Conference on Computers and Accessibility (ASSETS ’13), Bellevue, WA, USA, 21–23 October 2013; pp. 1–2. [Google Scholar]

- Zebehazy, K.T.; Wilton, A.P. Straight from the source: Perceptions of students with visual impairments about graphic use. J. Vis. Impair. Blind. 2014, 108, 275–286. [Google Scholar] [CrossRef] [Green Version]

- Smith, D.W.; Smothers, S.M. The role and characteristics of tactile graphics in secondary mathematics and science textbooks in braille. J. Vis. Impair. Blind. 2012, 106, 543–554. [Google Scholar] [CrossRef]

- Fusco, G.; Morash, V.S. The tactile graphics helper: Providing audio clarification for tactile graphics using machine vision. In Proceedings of the 17th International ACM SIGACCESS Conference on Computers & Accessibility, Lisbon, Portugal, 26–28 October 2015; pp. 97–106. [Google Scholar]

- Ferro, T.J.; Pawluk, D.T. Providing Dynamic Access to Electronic Tactile Diagrams. In Proceedings of the International Conference on Universal Access in Human-Computer Interaction, Las Vegas, NV, USA, 15–20 July 2017; Springer: Cham, Switzerland, 2017; pp. 269–282. [Google Scholar]

- Way, T.P.; Barner, K.E. Automatic visual to tactile translation—Part I: Human factors, access methods, and image manipulation. IEEE Trans. Rehabil. Eng. 1997, 5, 81–94. [Google Scholar] [CrossRef]

- Way, T.P.; Barner, K.E. Automatic visual to tactile translation—Part II: Evaluation of the TACTile Image Creation System. IEEE Trans. Rehabil. Eng. 1997, 5, 95–105. [Google Scholar] [CrossRef]

- Krufka, S.E.; Barner, K.E.; Aysal, T.C. Visual to tactile conversion of vector graphics. IEEE Trans. Neural Syst. Rehabil. Eng. 2007, 15, 310–321. [Google Scholar] [CrossRef]

- Jayant, C.; Renzelmann, M.; Wen, D.; Krisnandi, S.; Ladner, R.; Comden, D. Automated Tactile Graphics Translation: In the Field. In Proceedings of the 9th International ACM SIGACCESS Conference on Computers and Accessibility, Tempe, AZ, USA, 15–17 October 2007; pp. 75–82. [Google Scholar]

- Hernandez, S.E.; Barner, K.E. Joint Region Merging Criteria for Watershed-Based Image Segmentaion. In Proceedings of the International Conference on Image Processing, Vancouver, BC, Canada, 10–13 September 2000; Volume 2, pp. 108–111. [Google Scholar]

- Ladner, R.E.; Ivory, M.Y.; Rao, R.; Burgstahler, S.; Comden, D.; Hahn, S.; Renzelmann, M.J.; Krisnandi, S.; Ramasamy, M.; Slabosky, B.; et al. Automating tactile graphics translation. In Proceedings of the 7th International ACM SIGACCESS Conference on Computers and Accessibility, Baltimore, MD, USA, 9–12 October 2005; pp. 150–157. [Google Scholar]

- Brock, A.M.; Truillet, P.; Oriola, B.; Picard, D.; Jouffrais, C. Interactivity improves usability of geographic maps for visually impaired people. Hum. Comput. Interact. 2015, 30, 156–194. [Google Scholar] [CrossRef]

- Suzuki, R.; Stangl, A.; Gross, M.D.; Yeh, T. FluxMarker: Enhancing Tactile Graphics with Dynamic Tactile Markers. In Proceedings of the 19th International ACM SIGACCESS Conference on Computers and Accessibility, Baltimore, MD, USA, 29 October–1 November 2017; pp. 190–199. [Google Scholar]

- Baker, C.M.; Milne, L.R.; Scofield, J.; Bennett, C.L.; Ladner, R.E. Tactile graphics with a voice: Using QR codes to access text in tactile graphics. In Proceedings of the 16th International ACM SIGACCESS Conference on Computers & Accessibility, New York, NY, USA, 20–22 October 2014; pp. 75–82. [Google Scholar]

- Miele, J.A.; Landau, S.; Gilden, D. Talking TMAP: Automated generation of audio-tactile maps using Smith-Kettlewell’s TMAP software. Br. J. Vis. Impair. 2006, 24, 93–100. [Google Scholar] [CrossRef]

- Yu, W.; Ramloll, R.; Brewster, S. Haptic graphs for blind computer users. In Proceedings of the International Workshop on Haptic Human-Computer Interaction, Glasgow, UK, 31 August–1 September 2000; Springer: Berlin/Heidelberg, Germany, 2000; pp. 41–51. [Google Scholar]

- Rice, M.; Jacobson, R.D.; Golledge, R.G.; Jones, D. Design considerations for haptic and auditory map interfaces. Cartogr. Geogr. Inf. Sci. 2005, 32, 381–391. [Google Scholar] [CrossRef]

- Zeng, L.; Weber, G. Audio-haptic browser for a geographical information system. In Proceedings of the International Conference on Computers for Handicapped Persons, Vienna, Austria, 14–16 July 2010; Springer: Berlin, Germany, 2010; pp. 466–473. [Google Scholar]

- McGookin, D.; Robertson, E.; Brewster, S. Clutching at straws: Using tangible interaction to provide non-visual access to graphs. In Proceedings of the SIGCHI conference on human factors in computing systems, Atlanta, GA, USA, 10–15 April 2010; pp. 1715–1724. [Google Scholar]

- Ramloll, R.; Yu, W.; Brewster, S.; Riedel, B.; Burton, M.; Dimigen, G. Constructing sonified haptic line graphs for the blind student: First steps. In Proceedings of the Fourth International ACM Conference on Assistive Technologies, Arlington, VA, USA, 13–15 November 2000; pp. 17–25. [Google Scholar]

- Brown, C.; Hurst, A. Viztouch: Automatically generated tactile visualizations of coordinate spaces. In Proceedings of the Sixth International Conference on Tangible, Embedded and Embodied Interaction, Kingston, ON, Canada, 19–22 February 2012; pp. 131–138. [Google Scholar]

- Štampach, R.; Mulícková, E. Automated generation of tactile maps. J. Maps 2016, 12, 532–540. [Google Scholar] [CrossRef] [Green Version]

- Jungil, J.; Hongchan, Y.; Hyelim, L.; Jinsoo, C. Graphic haptic electronic board-based education assistive technology system for blind people. In Proceedings of the 2015 IEEE International Conference on Consumer Electronics (ICCE), Las Vegas, NV, USA, 9–12 January 2015; pp. 364–365. [Google Scholar]

- Kim, S.; Ryu, Y.; Cho, J.; Ryu, E.-S. Towards Tangible Vision for the Visually Impaired through 2D Multiarray Braille Display. Sensors 2019, 19, 5319. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Prescher, D.; Weber, G.; Spindler, M. A tactile windowing system for blind users. In Proceedings of the 12th International ACM SIGACCESS conference on Computers and accessibility, Orlando, FL, USA, 25–27 October 2010; pp. 91–98. [Google Scholar]

- Schmitz, B.; Ertl, T. Interactively displaying maps on a tactile graphics display. In Proceedings of the 2012 Workshop on Spatial Knowledge Acquisition with Limited Information Displays, Bavaria, Germany, 31 August 2012; pp. 13–18. [Google Scholar]

- Zeng, L.; Weber, G. ATMap: Annotated tactile maps for the visually impaired. In Cognitive Behavioural Systems; Lecture Notes in Computer Science; Springer: Berlin, Germany, 2012; Volume 7403, pp. 290–298. [Google Scholar]

- Felipe, M.P.; Guerra-Gómez, J.A. ML to Categorize and Find Tactile Graphics. Bachelor’s Thesis, Universidad de los Andes, Bogota, Columbia, 2020. [Google Scholar]

- Gonzalez, R.; Gonzalez, C.; Guerra-Gomez, J.A. Tactiled: Towards more and better tactile graphics using machine learning. In Proceedings of the 21st International ACM SIGACCESS Conference on Computers and Accessibility, Pittsburgh, PA, USA, 28–30 October 2019; pp. 530–532. [Google Scholar]

- Guinness, D.; Muehlbradt, A.; Szafir, D.; Kane, S.K. RoboGraphics: Dynamic Tactile Graphics Powered by Mobile Robots. In Proceedings of the 21st International ACM SIGACCESS Conference on Computers and Accessibility, Pittsburgh, PA, USA, 28–30 October 2019; pp. 318–328. [Google Scholar]

- Bose, R.; Bauer, M.A.; Jürgensen, H. Utilizing Machine Learning Models for Developing a Comprehensive Accessibility System for Visually Impaired People. In Proceedings of the International Conference on Computers Helping People with Special Needs, Lecco, Italy, 9–11 September 2020; p. 83. [Google Scholar]

- Yuksel, B.F.; Fazli, P.; Mathur, U.; Bisht, V.; Kim, S.J.; Lee, J.J.; Jin, S.J.; Siu, Y.-T.; Miele, J.A.; Yoon, I. Human-in-the-Loop Machine Learning to Increase Video Accessibility for Visually Impaired and Blind Users. In Proceedings of the 2020 ACM Designing Interactive Systems Conference, New York, NY, USA, 6–10 July 2020; pp. 47–60. [Google Scholar]

- Yuksel, B.F.; Kim, S.J.; Jin, S.J.; Lee, J.J.; Fazli, P.; Mathur, U.; Bisht, V.; Yoon, I.; Siu, Y.-T.; Miele, J.A. Increasing Video Accessibility for Visually Impaired Users with Human-in-the-Loop Machine Learning. In Proceedings of the Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–9. [Google Scholar]

- Buonamici, F.; Carfagni, M.; Furferi, R.; Governi, L.; Volpe, Y. Are we ready to build a system for assisting blind people in tactile exploration of bas-reliefs? Sensors 2016, 16, 1361. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Lin, Y.; Wang, K.; Yi, W.; Lian, S. Deep learning based wearable assistive system for visually impaired people. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops, Seoul, Korea, 27–28 October 2019. [Google Scholar]

- Klingenberg, O.G.; Holkesvik, A.H.; Augestad, L.B.; Erdem, E. Research evidence for mathematics education for students with visual impairment: A systematic review. Cogent Educ. 2019, 6, 1626322. [Google Scholar] [CrossRef]

- Oh, U.; Joh, H.; Lee, Y.J. Image Accessibility for Screen Reader Users: A Systematic Review and a Road Map. Electronics 2021, 10, 953. [Google Scholar] [CrossRef]

- Cole, H. Tactile cartography in the digital age: A review and research agenda. Prog. Hum. Geogr. 2021, 45, 834–854. [Google Scholar]

- Kitchenham, B.A.; Charters, S. Guidelines for Performing Systematic Literature Reviews in Software Engineering Version 2.3; ACM Press: New York, NY, USA, 2007. [Google Scholar]

- Page, M.J.; Moher, D.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. PRISMA 2020 explanation and elaboration: Updated guidance and exemplars for reporting systematic reviews. Br. Med. J. 2021, 372. [Google Scholar] [CrossRef]

- Engel, C.; Weber, G. User study: A detailed view on the effectiveness and design of tactile charts. In Proceedings of the IFIP Conference on Human-Computer Interaction, Paphos, Cyprus, 2–6 September 2019; Springer: Cham, Switzerland, 2019; pp. 63–82. [Google Scholar]

- Show, O.R.; Hadden-Perilla, J.A. TactViz: A VMD Plugin for Tactile Visualization of Protein Structures. J. Sci. Educ. Stud. Disabil. 2020, 23, 14. [Google Scholar]

- Yang, Y.; Marriott, K.; Butler, M.; Goncu, C.; Holloway, L. Tactile presentation of network data: Text, matrix or diagram? In Proceedings of the Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–12. [Google Scholar]

- Engel, C.; Müller, E.F.; Weber, G. SVGPlott: An accessible tool to generate highly adaptable, accessible audio-tactile charts for and from blind and visually impaired people. In Proceedings of the 12th ACM International Conference on PErvasive Technologies Related to Assistive Environments, Rhodes, Greece, 5–7 June 2019; pp. 186–195. [Google Scholar]

- Chase, E.D.; Siu, A.F.; Boadi-Agyemang, A.; Kim, G.S.; Gonzalez, E.J.; Follmer, S. PantoGuide: A Haptic and Audio Guidance System to Support Tactile Graphics Exploration. In Proceedings of the 22nd International ACM SIGACCESS Conference on Computers and Accessibility, Virtual Event, Greece, 26–28 October 2020; pp. 1–4. [Google Scholar]

- Maćkowski, M.; Brzoza, P.; Meisel, R.; Bas, M.; Spinczyk, D. Platform for Math Learning with Audio-Tactile Graphics for Visually Impaired Students. In Proceedings of the International Conference on Computers Helping People with Special Needs, Lecco, Italy, 9–11 September 2020; p. 75.

- Park, T.; Jung, J.; Cho, J. A method for automatically translating print books into electronic Braille books. Sci. China Inf. Sci. 2016, 59, 1–14.

- Gupta, R.; Balakrishnan, M.; Rao, P.V.M. Tactile diagrams for the visually impaired. IEEE Potentials 2017, 36, 14–18.

- Race, L.; Fleet, C.; Miele, J.A.; Igoe, T.; Hurst, A. Designing Tactile Schematics: Improving Electronic Circuit Accessibility. In Proceedings of the 21st International ACM SIGACCESS Conference on Computers and Accessibility, Pittsburgh, PA, USA, 28–30 October 2019; pp. 581–583.

- Asakawa, N.; Wada, H.; Shimomura, Y.; Takasugi, K. Development of VR Tactile Educational Tool for Visually Impaired Children: Adaptation of Optical Motion Capture as a Tracker. Sens. Mater. 2020, 32, 3617–3626.

- Yoon, H.; Kim, B.-H.; Mukhiddinov, M.; Cho, J. Salient Region Extraction based on Global Contrast Enhancement and Saliency Cut for Image Information Recognition of the Visually Impaired. KSII Trans. Internet Inf. Syst. 2018, 12, 2287–2312.

- Abdusalomov, A.; Mukhiddinov, M.; Djuraev, O.; Khamdamov, U.; Whangbo, T.K. Automatic salient object extraction based on locally adaptive thresholding to generate tactile graphics. Appl. Sci. 2020, 10, 3350.

- Stangl, A.; Hsu, C.-L.; Yeh, T. Transcribing across the senses: Community efforts to create 3D printable accessible tactile pictures for young children with visual impairments. In Proceedings of the 17th International ACM SIGACCESS Conference on Computers & Accessibility, Lisbon, Portugal, 26–28 October 2015; pp. 127–137.

- Hashimoto, Y.; Takagi, N. Development of audio-tactile graphic system aimed at facilitating access to visual information for blind people. In Proceedings of the 2018 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Miyazaki, Japan, 7–10 October 2018; pp. 2283–2288.

- Holloway, L.; Marriott, K.; Butler, M.; Reinders, S. 3D Printed Maps and Icons for Inclusion: Testing in the Wild by People who are Blind or have Low Vision. In Proceedings of the 21st International ACM SIGACCESS Conference on Computers and Accessibility, Pittsburgh, PA, USA, 28–30 October 2019; pp. 183–195.

- Thevin, L.; Brock, A.M. Augmented reality for people with visual impairments: Designing and creating audio-tactile content from existing objects. In Proceedings of the International Conference on Computers Helping People with Special Needs, Linz, Austria, 11–13 July 2018; Springer: Cham, Switzerland, 2018; pp. 193–200.

- Cavazos, Q.L.; Bartolomé, J.I.; Cho, J. Accessible Visual Artworks for Blind and Visually Impaired People: Comparing a Multimodal Approach with Tactile Graphics. Electronics 2021, 10, 297.

- Panotopoulou, A.; Zhang, X.; Qiu, T.; Yang, X.-D.; Whiting, E. Tactile line drawings for improved shape understanding in blind and visually impaired users. ACM Trans. Graph. 2020, 39, 89.

- Kim, S.; Park, E.-S.; Ryu, E.-S. Multimedia vision for the visually impaired through 2D multiarray braille display. Appl. Sci. 2019, 9, 878.

- Thinkable, Tactile Drawing Software. Available online: https://thinkable.nl/ (accessed on 4 March 2021).

- American Printing House, Tactile Graphics Kit. Available online: https://www.aph.org/product/tactile-graphics-kit/ (accessed on 4 March 2021).

- American Thermoform, Tactile Graphics. Available online: http://www.americanthermoform.com/product-category/tactile-graphics/ (accessed on 5 March 2021).

- ViewPlus, IVEO 3 Hands-On Learning System. Available online: https://viewplus.com/product/iveo-3-hands-on-learning-system/ (accessed on 4 March 2021).

- SeeWriteHear, Braille & Tactile Graphics. Available online: https://www.seewritehear.com/services/document-accessibility/braille-tactile-graphics/ (accessed on 5 March 2021).

- Power Contents Technology, Tactile Pro & Edu. Available online: http://www.powerct.kr/ (accessed on 5 March 2021).

- Orbit Research, Graphiti. Available online: http://www.orbitresearch.com/product/graphiti/ (accessed on 4 March 2021).

- Bristol Braille Technology, Canute 360. Available online: http://www.bristolbraille.co.uk/index.htm (accessed on 5 March 2021).

- González Álvarez, C.E. ML to Categorize and Translate Images into Tactile Graphics. Bachelor’s Thesis, Universidad de los Andes, Bogotá, Colombia, 2020.

| Source | URL | Date of Search | Results |
|---|---|---|---|
| IEEE Xplore | https://ieeexplore.ieee.org/ | 1 March 2021 | 45 |
| ACM DL | https://dl.acm.org/ | 1 March 2021 | 36 |
| Web of Science | http://webofknowledge.com/ | 2 March 2021 | 18 |
| Scopus | https://www.scopus.com/ | 2 March 2021 | 23 |
| Google Scholar | https://scholar.google.com/ | 3 March 2021 | 56 |
| Springer | https://link.springer.com/ | 3 March 2021 | 8 |
| FreeFullPDF | http://www.freefullpdf.com/ | 3 March 2021 | 6 |
| arXiv | https://arxiv.org/ | 4 March 2021 | 5 |
| Wiley OL | https://onlinelibrary.wiley.com/ | 4 March 2021 | 4 |
| dblp CSB | https://dblp.uni-trier.de/ | 4 March 2021 | 7 |
| PubMed | https://pubmed.ncbi.nlm.nih.gov/ | 4 March 2021 | 3 |
| ERIC | https://eric.ed.gov/ | 4 March 2021 | 7 |

| Source | URL | Date of Search | Results |
|---|---|---|---|
| MDPI | https://www.mdpi.com/ | 2 March 2021 | 5 |
| Hindawi | https://www.hindawi.com/ | 3 March 2021 | 11 |
| World Scientific | https://www.worldscientific.com/ | 4 March 2021 | 4 |
| WASET | https://publications.waset.org/ | 4 March 2021 | 5 |
| SAGE | https://journals.sagepub.com/ | 4 March 2021 | 14 |

| Tactile Graphics’ Role | Field Name | Number of Papers | Articles |
|---|---|---|---|
| Education | STEM subjects | 8 | [11,21,48,49,50,51,52,53] |
| | Braille books with images | 2 | [54,55] |
| | Electronic circuits | 1 | [56] |
| | HTML web pages | 1 | [38] |
| | Computer science and physics | 1 | [7] |
| | Virtual reality objects | 1 | [57] |
| Social Life | Travelling and tactile graphics | 5 | [35,36,58,59,60] |
| | Map and audio guidance | 3 | [61,62,63] |
| | Artworks and object’s shape | 2 | [64,65] |
| | Haptic and braille display | 2 | [31,66] |
| | Orientation and Mobility | 1 | [62] |

| Input Image Type | Number of Papers | Articles |
|---|---|---|
| General images | 9 | [31,35,36,55,58,59,60,61,65] |
| Different charts and diagrams | 7 | [11,21,31,48,51,52,53] |
| Geometric figures | 5 | [35,53,57,60,66] |
| SVG images | 3 | [7,38,63] |
| Book pages | 3 | [21,54,66] |
| Visual artworks | 2 | [64,65] |
| Maps | 2 | [62,63] |
| Electronic circuits | 1 | [56] |
| Biological molecules | 1 | [49] |
| Node-link diagrams | 1 | [50] |

| Methodology | Type of Process | Number of Papers | Articles |
|---|---|---|---|
| Participatory Design | Design | 2 | [52,60] |
| Experiment & Subjective Assessment | Evaluation | 18 | [7,11,21,48,50,51,52,53,54,56,57,58,59,61,62,63,64,65] |
| Interview & Survey | Prototype | 4 | [11,21,63,64] |
| N/A | No user study | 8 | [31,35,36,38,49,55,60,66] |

| Tactile Graphics (TG) Creation | Currently Available Method | Commercial Software and Hardware |
|---|---|---|
| General image to TG | [35,36,55,58,59,60,61,65] | [67,68,69,70,71,72,73] |
| Book to TG | [21,54,66] | [72,73,74] |
| Web pages to TG | [7,38,63] | [72] |
| Chart and diagram to TG | [11,21,31,48,51,52,53] | [67,68,70,72,73,74] |
| Art and culture to TG | [64,65] | [72,73,74] |
| Map and plan to TG | [62,63] | [67,68,70,73] |
| Figure to TG | [35,53,57,60,66] | [67,68,69,70,71,72,74] |
| Audio feedback support | [21,51,52,61,64] | [67,68,70,72,73,74] |
| Tactile display support | [31,58,59,66] | [67,68,70,72,73,74] |

| Intended Application Domain | Number of Papers | Articles |
|---|---|---|
| Education | 26 | [7,11,21,31,38,48,49,50,51,52,53,54,55,56,57,58,59,60,66,67,68,69,70,71,72,73,74] |
| Daily routine | 17 | [31,35,36,38,54,55,58,59,60,63,65,66,67,68,69,70,72] |
| Orientation and mobility | 6 | [60,61,62,67,68,73] |
| Work environment | 5 | [54,60,61,71,72] |
| Museum and art | 4 | [60,64,65,74] |
| Other | 5 | [36,37,67,69,72] |

| Type of Solution | Method | Articles |
|---|---|---|
| Deep learning | Image categorization using Cloud Vision Auto ML model | [35] |
| | Image classification using MobileNet model | [36] |
| 3D printing | 2D graphics from 3D objects using multi-projection rendering | [65] |
| | 3D maps for orientation and mobility training | [62] |
| Virtual reality | Touching object shapes using optical motion and haptic device | [57] |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Mukhiddinov, M.; Kim, S.-Y. A Systematic Literature Review on the Automatic Creation of Tactile Graphics for the Blind and Visually Impaired. Processes 2021, 9, 1726. https://doi.org/10.3390/pr9101726
