Product Quality Detection through Manufacturing Process Based on Sequential Patterns Considering Deep Semantic Learning and Process Rules

Abstract

1. Introduction

2. Literature Review

2.1. Text Mining and Semantic Similarity Measurement

2.2. Application of Different Algorithms in the Manufacturing Process

2.3. Applications of Sequential Pattern Mining Algorithm

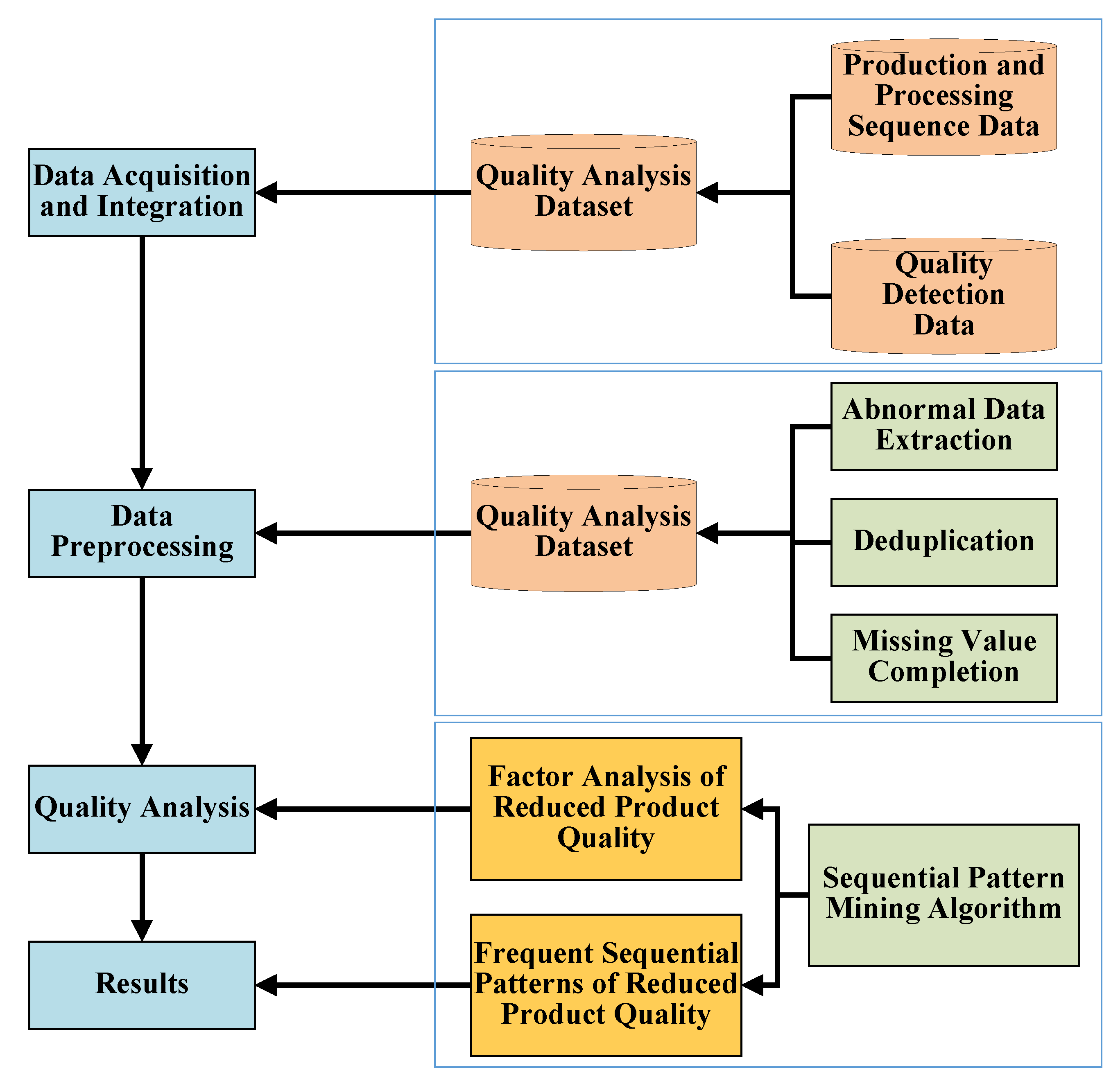

3. Wheel Hub Quality Data Analysis

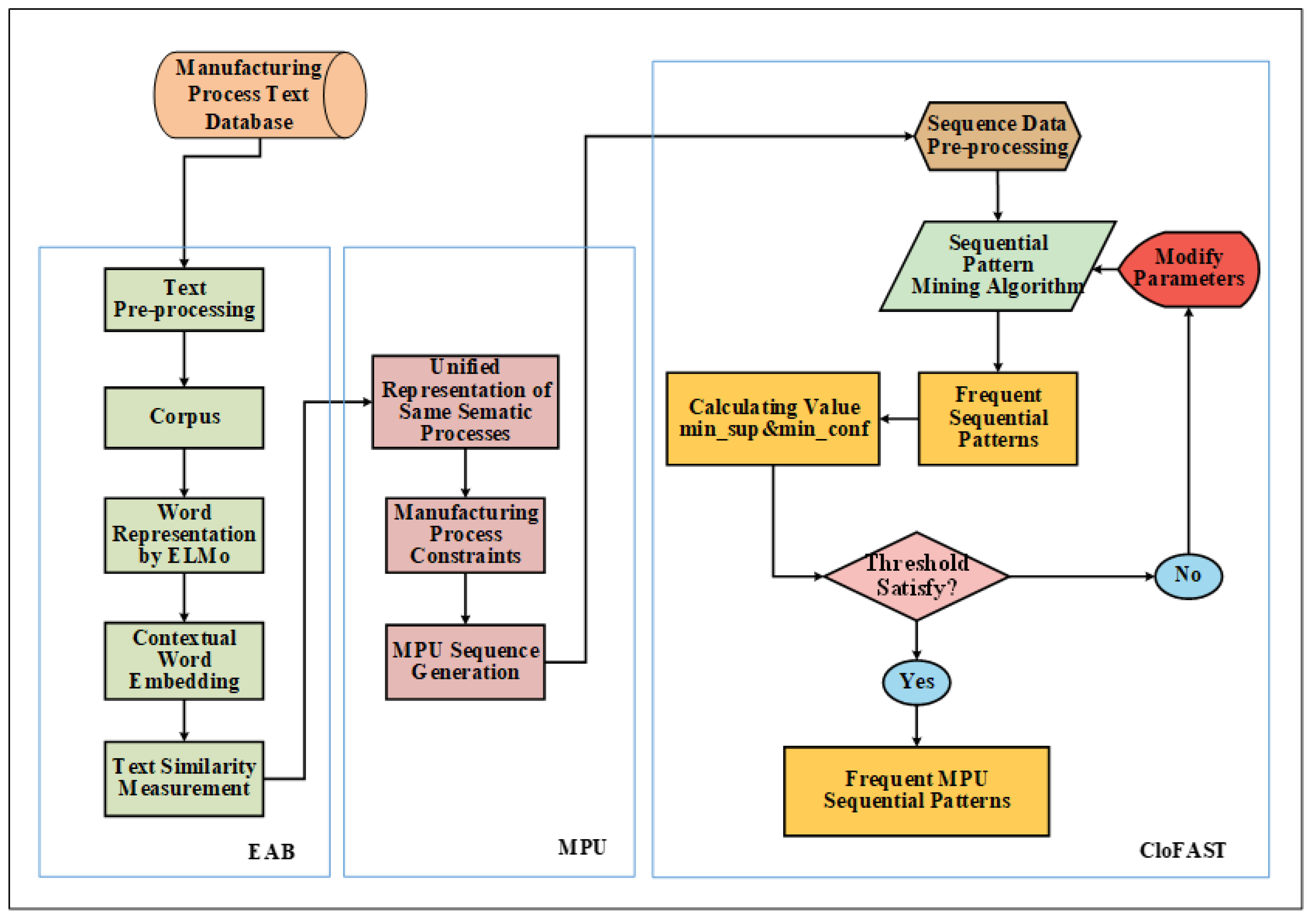

4. EABMC Sequential Patterns Mining Method

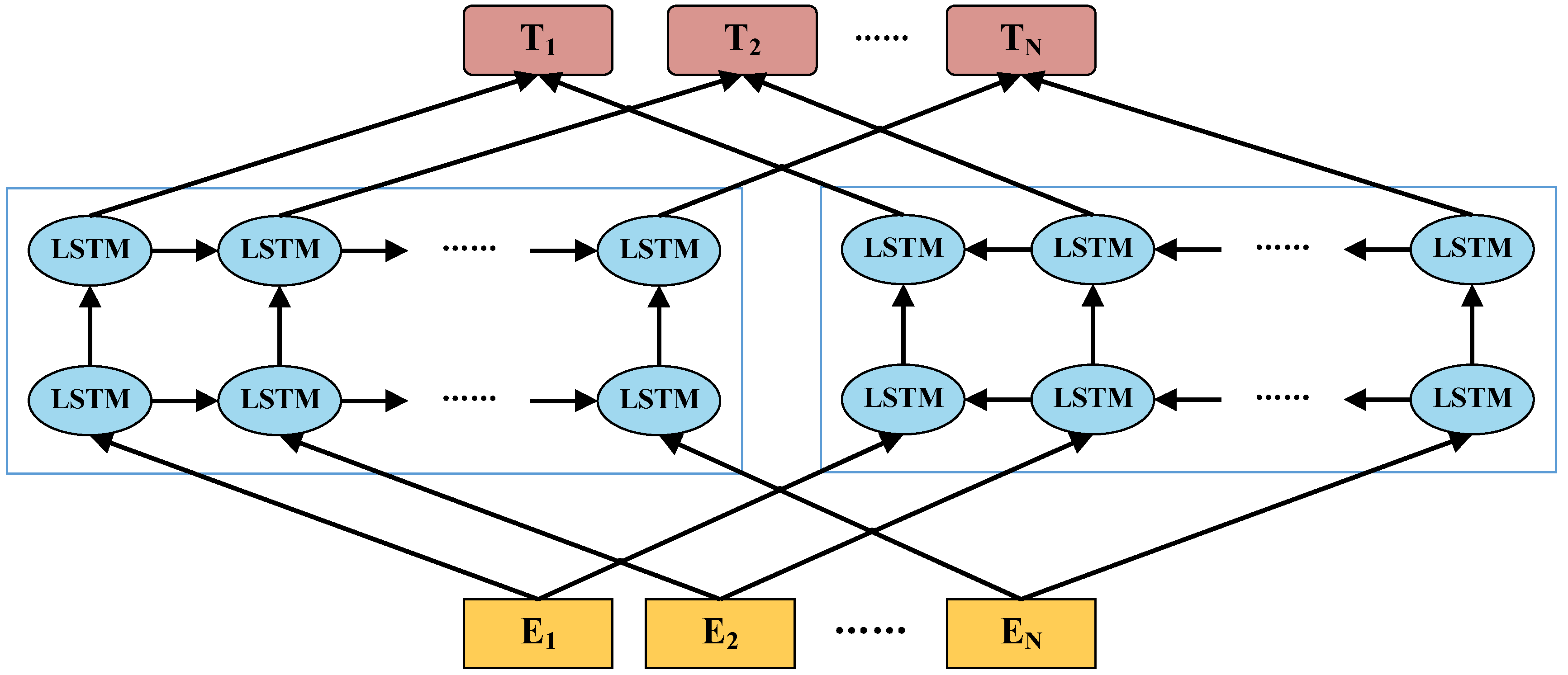

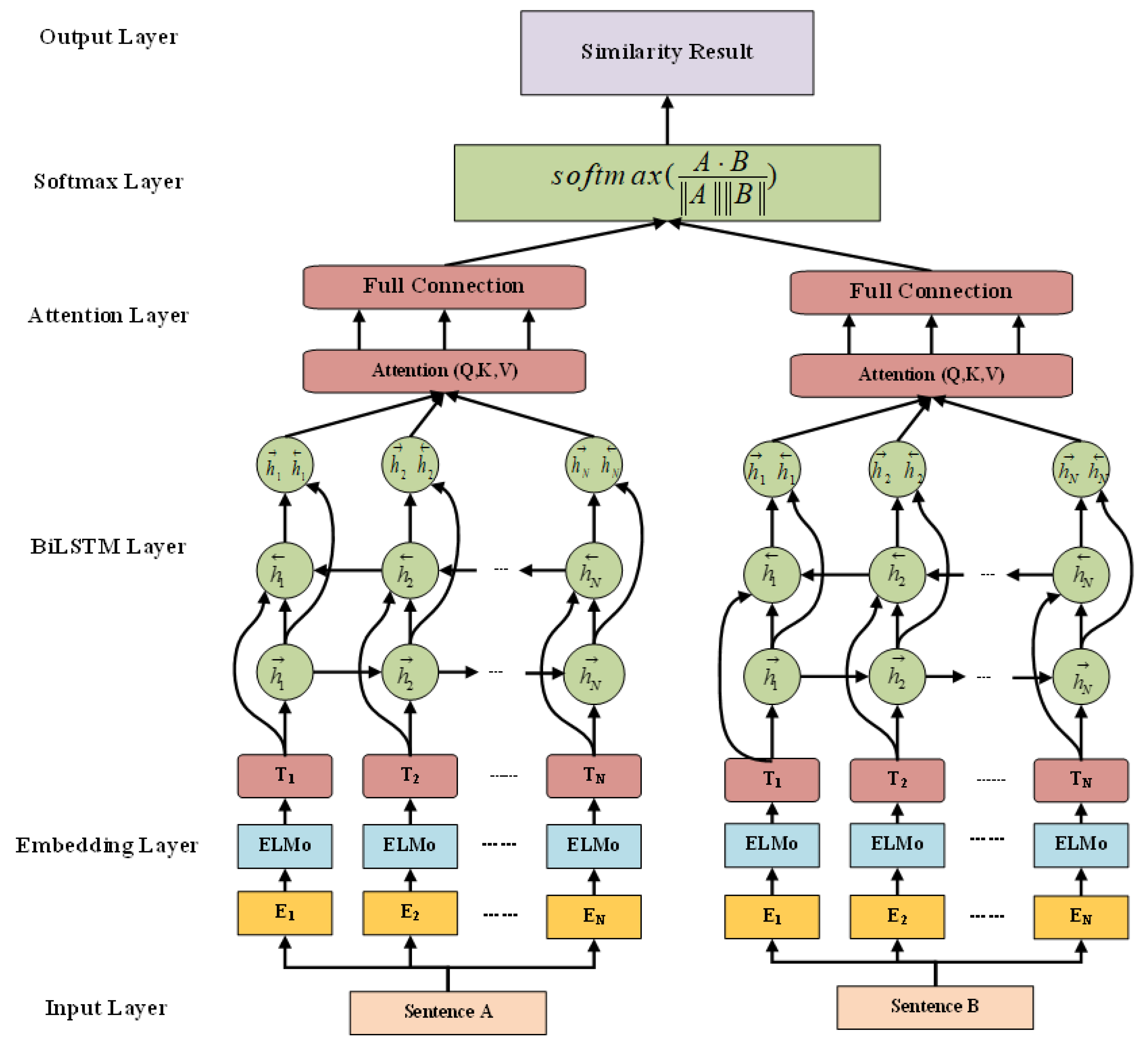

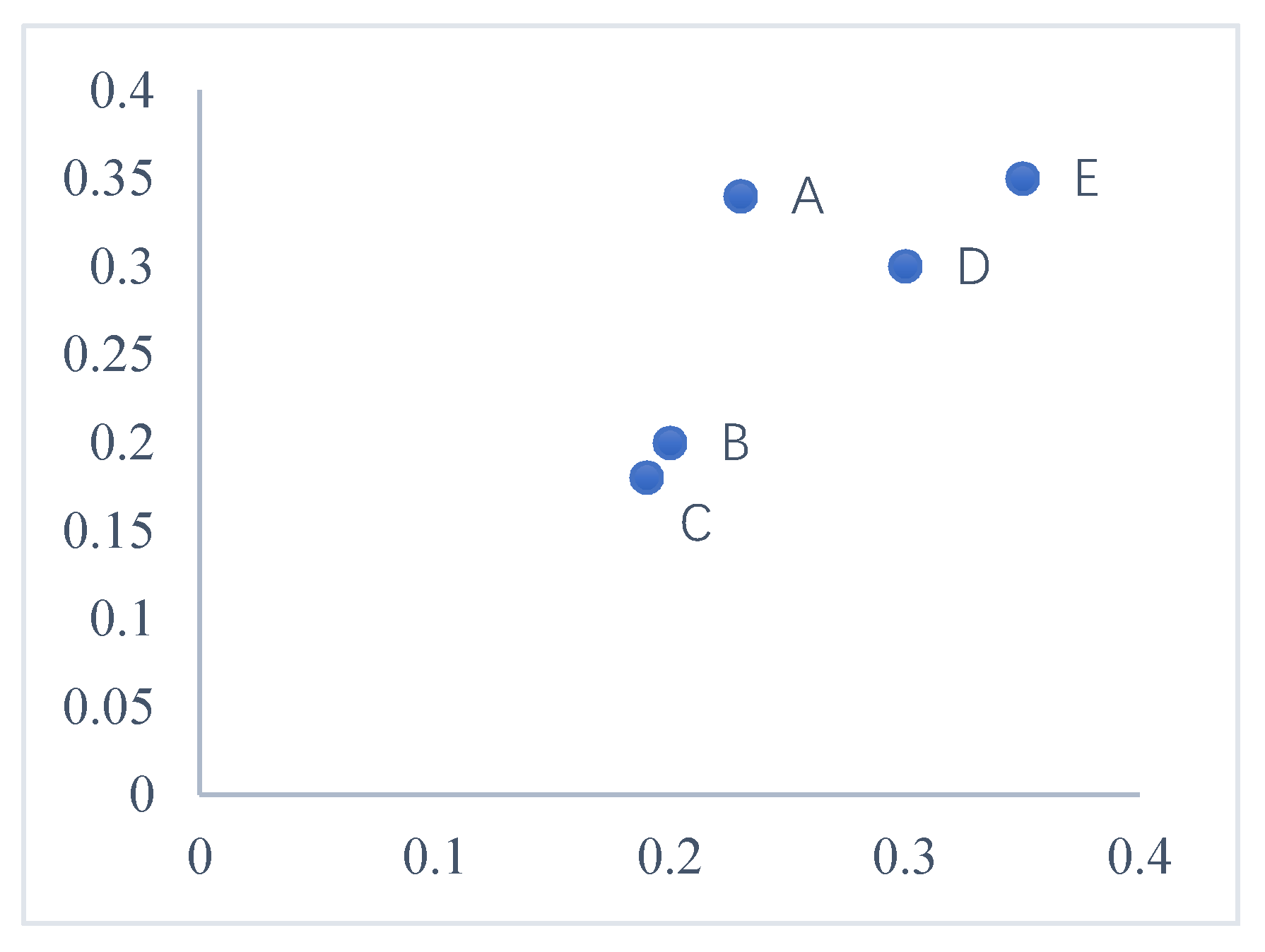

4.1. Measurement of Text Semantic Similarity Based on Contextual Word Embedding

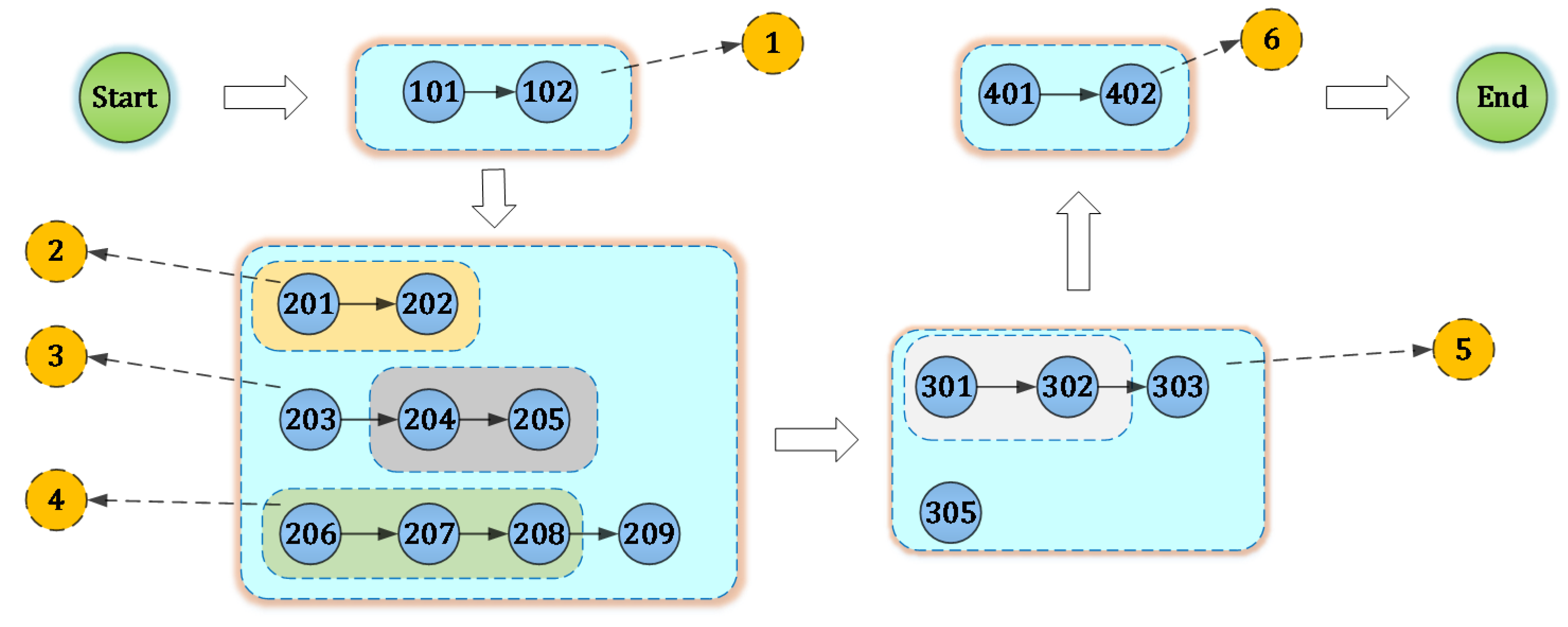

4.2. Manufacturing Process Unit

4.3. EABMC Sequential Pattern Mining Algorithm

| Algorithm 1. EABMC (E-MSDB, min_sup) |

| Input: EAB-MPU Sequence database E-MSDB, int min_sup |

| Output: Complete set of EABMC frequent sequences (CEFS); |

| Data: CSET T = new Tree (), Frequent Items (FI), Closed Frequent Itemset (CFI), Node n |

| 1: // Identify frequent 1-itemsets and establish their SILs; |

| 2: FI = loadFrequentSILs (E-MSDB, min_sup); |

| 3: // Identify closed frequent itemsets and their SILs |

| 4: CFI = mineClosedFItemset (FI, min_sup); |

| 5: for each cfi ∈ CFI do |

| 6: //Create VILcfi from SILcfi |

| 7: vil = createVil(cfi); |

| 8: // Create CSET node associated to cfi |

| 9: n = createNode(cfi, vil); |

| 10: labelNodeAs(n, “closed”); |

| 11: addChildNode(T, root(T),n); |

| 12: end for |

| 13: for each child ∈ children (T, root(T)) do |

| 14: //start the depth first search |

| 15: sequenceExtension (T, child, min_sup); |

| 16: end for |

| 17: return closedSequentialPatterns(T). |
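The depth-first sequence extension and the final closedness filter in Algorithm 1 can be illustrated with a much simpler brute-force sketch. It is not the EABMC implementation: it uses no CSET tree, SILs, or VILs, treats every event as a single item rather than an itemset, and recounts support by scanning the database. The function names and the toy database are hypothetical.

```python
from typing import Dict, List, Tuple

Pattern = Tuple[str, ...]


def is_subsequence(pattern, sequence) -> bool:
    """True if pattern occurs in sequence in order (gaps allowed)."""
    it = iter(sequence)
    return all(item in it for item in pattern)


def support(pattern: Pattern, db: List[List[str]]) -> int:
    """Number of database sequences that contain the pattern."""
    return sum(1 for seq in db if is_subsequence(pattern, seq))


def mine_frequent(db: List[List[str]], min_sup: int) -> Dict[Pattern, int]:
    """Depth-first extension: grow a prefix one item at a time, prune by min_sup."""
    if min_sup < 1:
        raise ValueError("min_sup must be at least 1")
    items = sorted({item for seq in db for item in seq})
    frequent: Dict[Pattern, int] = {}

    def extend(prefix: Pattern) -> None:
        for item in items:
            candidate = prefix + (item,)
            sup = support(candidate, db)
            if sup >= min_sup:  # infrequent candidates are never extended
                frequent[candidate] = sup
                extend(candidate)

    extend(())
    return frequent


def closed_only(frequent: Dict[Pattern, int]) -> Dict[Pattern, int]:
    """Keep patterns that have no longer super-sequence with the same support."""
    closed = {}
    for p, sup in frequent.items():
        absorbed = any(
            len(q) > len(p) and s == sup and is_subsequence(p, q)
            for q, s in frequent.items()
        )
        if not absorbed:
            closed[p] = sup
    return closed


# Hypothetical toy database of process sequences.
toy_db = [
    ["Forging", "Drilling", "Tapping"],
    ["Forging", "Tapping"],
    ["Forging", "Drilling", "Tapping"],
    ["Drilling", "Tapping"],
]
closed_patterns = closed_only(mine_frequent(toy_db, min_sup=2))
```

In this toy run, `("Forging",)` is frequent but not closed, because `("Forging", "Tapping")` appears in exactly the same sequences; only patterns that lose support when extended survive the filter.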

5. Implementation and Experiment Results

5.1. Data Preprocessing

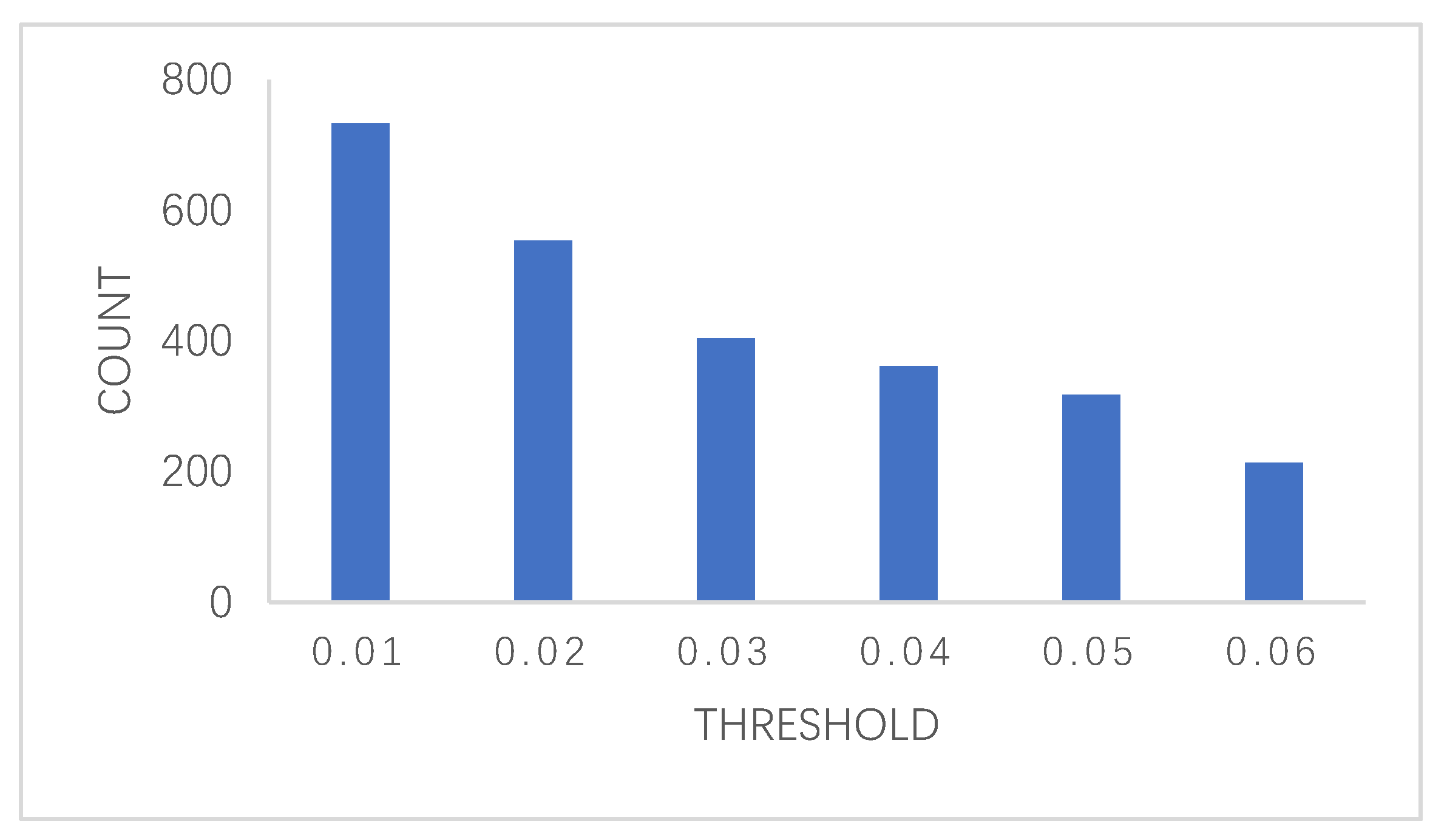

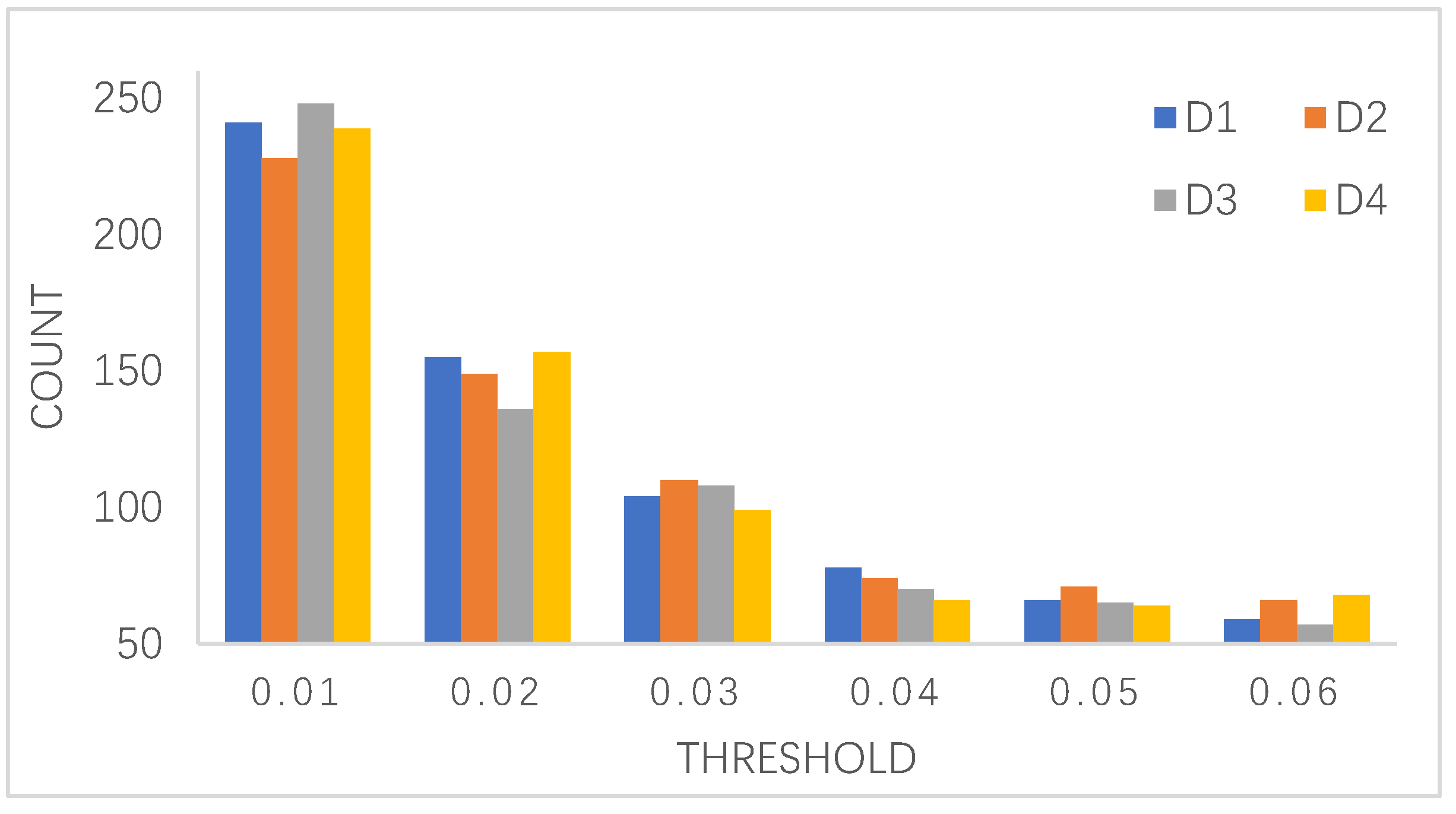

5.2. Discussion of Minimum Support Count

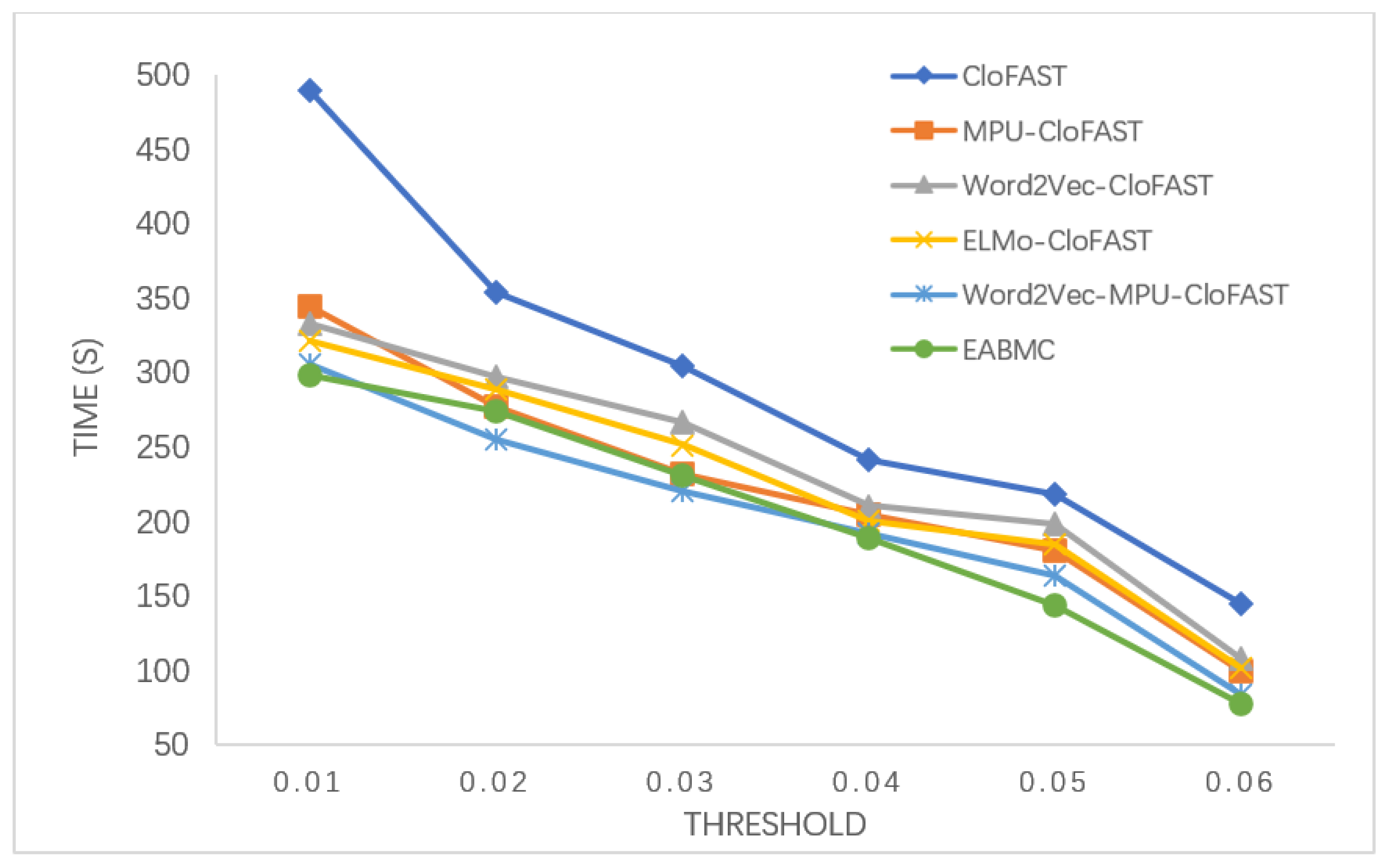

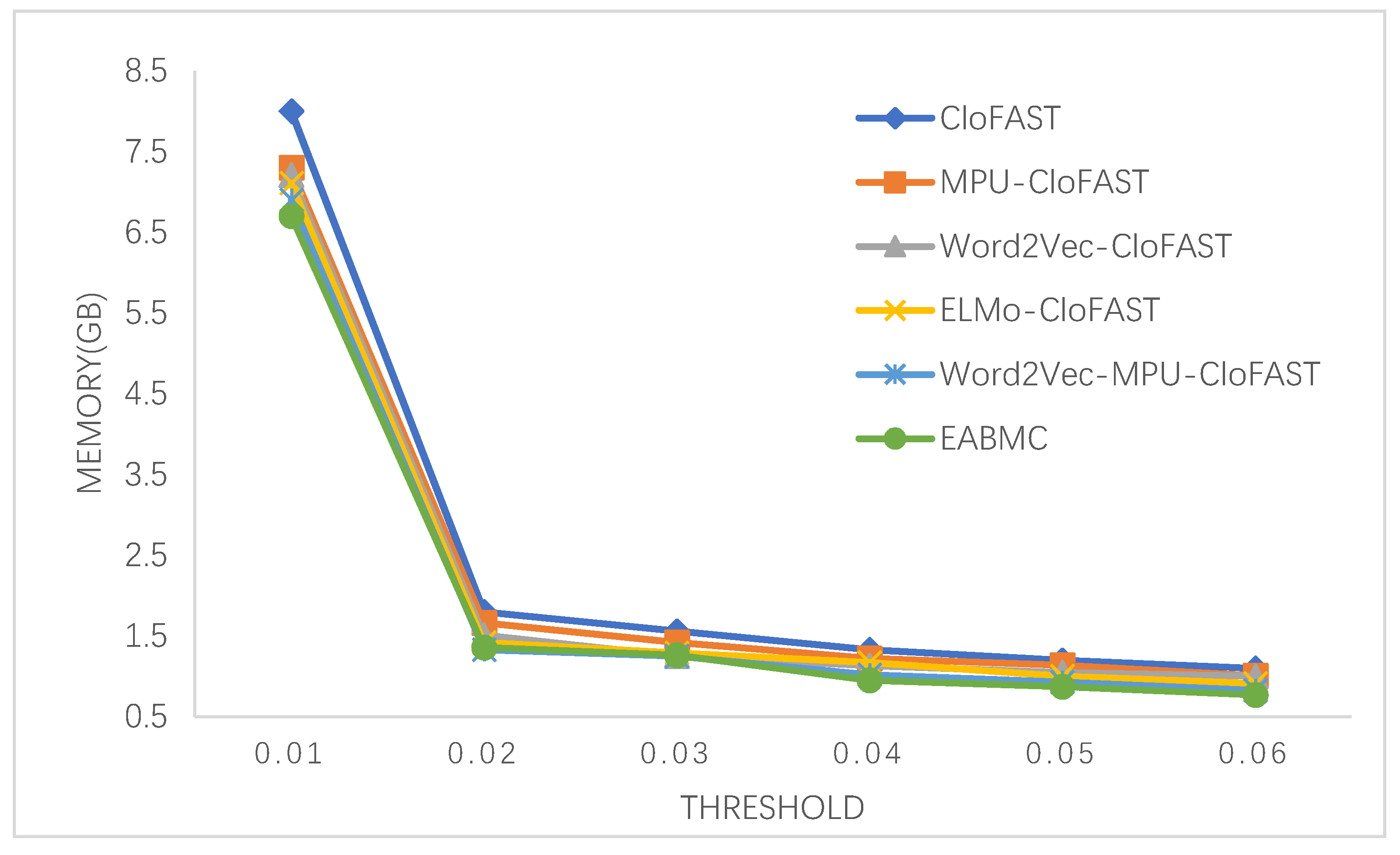

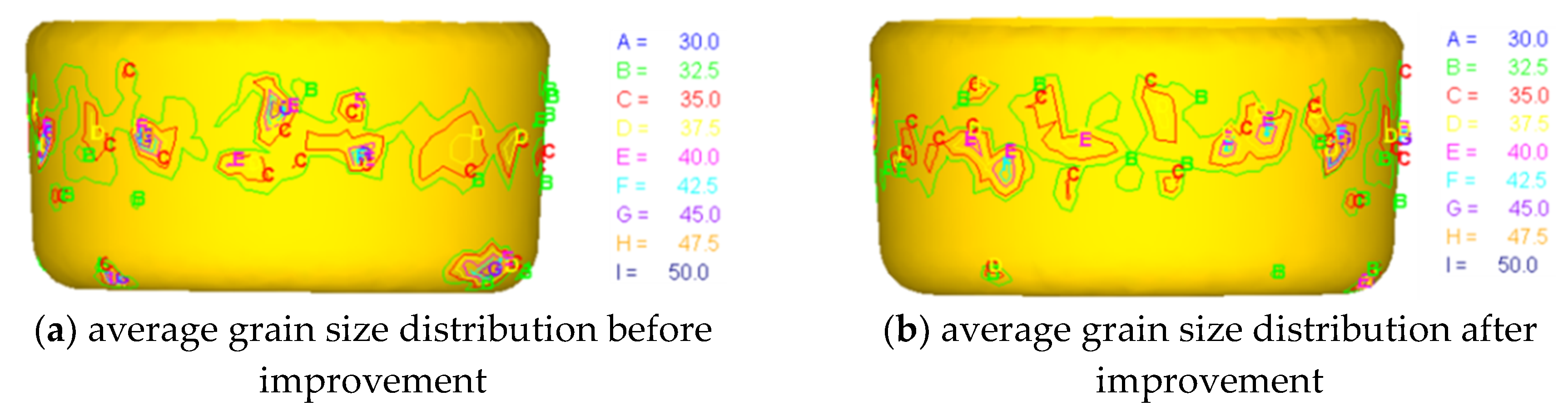

5.3. Experiment Results

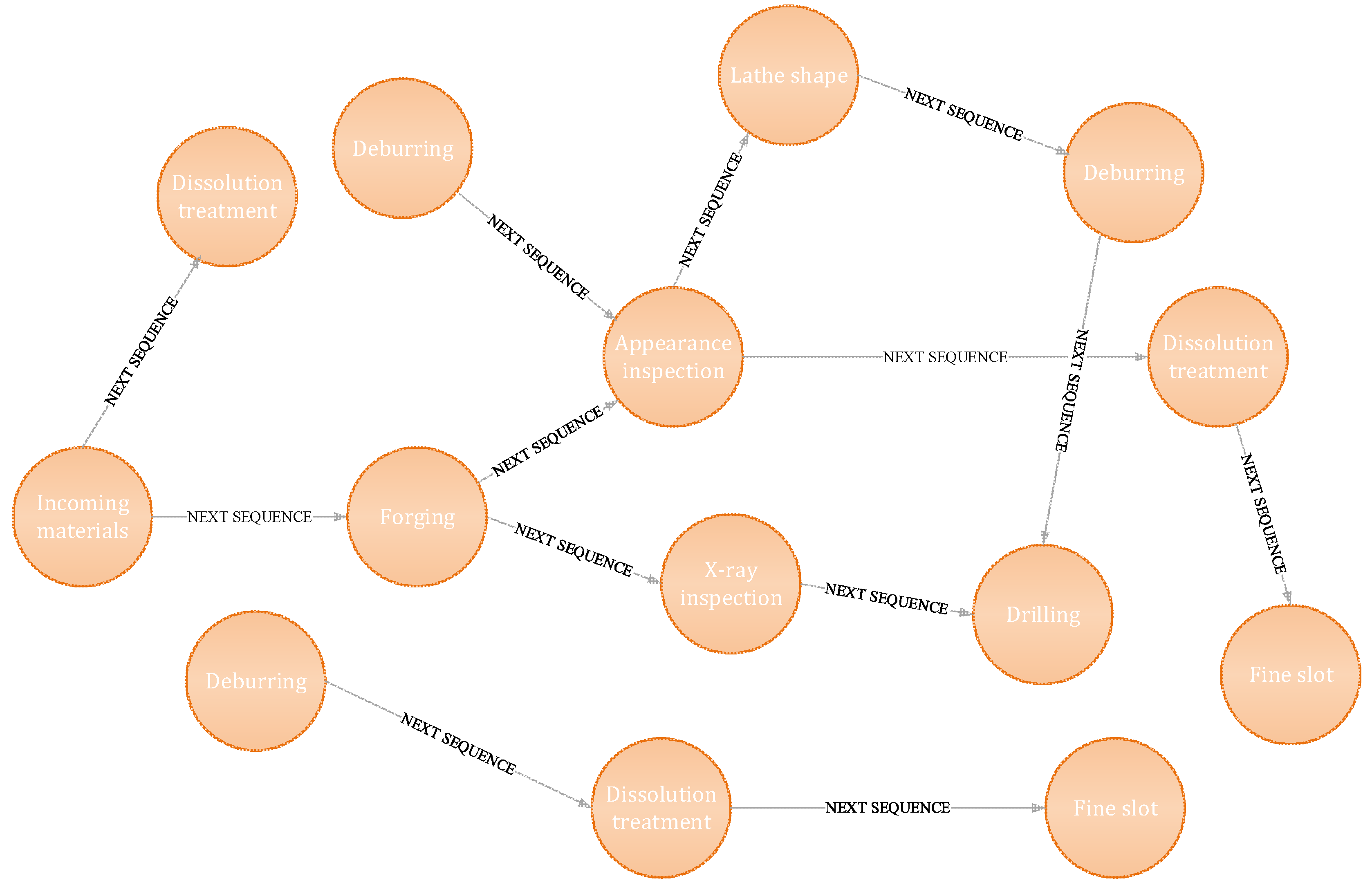

5.4. Sequence Relation Visualization

6. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

- Muthalagu, I. PLM Manufacturing Change Order and Data Enrichment Collaboration for Engineering Industries Manufacturing; Social Science Electronic Publishing: New York, NY, USA, 2017.

- Fei, T.; Cheng, J.; Qi, Q.; Meng, Z.; He, Z.; Sui, F. Digital twin-driven product design, manufacturing and service with big data. Int. J. Adv. Manuf. Tech. 2018, 94, 3563–3576.

- Wu, Y.; He, F.; Zhang, D.; Li, X. Service-oriented feature-based data exchange for cloud-based design and manufacturing. IEEE T. Serv. Comput. 2018, 11, 341–353.

- Sadati, N.; Chinnam, R.B.; Nezhad, M.Z. Observational data-driven modeling and optimization of manufacturing processes. Expert Syst. Appl. 2017, 93, 456–464.

- Youngs, H.; Somerville, C. Best practices for biofuels: Data-based standards should guide biofuel production. Science 2014, 344, 1095–1096.

- Agrawal, R.; Srikant, R. Fast Algorithms for Mining Association Rules. In Proceedings of the 20th Int. Conf. Very Large Data Bases, VLDB, Santiago de Chile, Chile, 12–15 September 1994; pp. 487–499.

- Agrawal, R.; Srikant, R. Mining sequential patterns. In Proceedings of the Eleventh International Conference on Data Engineering, IEEE, Washington, DC, USA, 6–10 March 1995; pp. 3–14.

- Quinlan, J.R. Induction of decision trees. Mach. Learn. 1986, 1, 81–106.

- Jain, A.K.; Dubes, R.C. Algorithms for clustering data. Technometrics 1988, 32, 227–229.

- Tan, A.-H. Text mining: The state of the art and the challenges. In Proceedings of the PAKDD 1999 Workshop on Knowledge Discovery from Advanced Databases, Beijing, China, 16–18 April 1999; pp. 65–70.

- Argote, L.; Ingram, P. Knowledge transfer: A basis for competitive advantage in firms. Organ. Beh. Hum. Dec. Pro. 2000, 82, 150–169.

- Köksal, G.; Batmaz, İ.; Testik, M.C. A review of data mining applications for quality improvement in manufacturing industry. Expert Syst. Appl. 2011, 38, 13448–13467.

- Hitomi, K. Manufacturing Systems Engineering: A Unified Approach to Manufacturing Technology, Production Management and Industrial Economics; Routledge: London, UK, 2017.

- Hallac, D.; Vare, S.; Boyd, S.; Leskovec, J. Toeplitz inverse covariance-based clustering of multivariate time series data. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, ACM, Halifax, NS, Canada, 13–17 August 2017; pp. 215–223.

- Salton, G.; Buckley, C. Term-weighting approaches in automatic text retrieval. Inform. Process. Manag. 1988, 24, 513–523.

- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G.S.; Dean, J. Distributed representations of words and phrases and their compositionality. Adv. Neural Inf. Process. Syst. 2013, 2, 3111–3119.

- Blei, D.M.; Ng, A.Y.; Jordan, M.I. Latent Dirichlet allocation. J. Mach. Learn. Res. 2003, 3, 993–1022.

- Pennington, J.; Socher, R.; Manning, C.D. GloVe: Global vectors for word representation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar, 25–29 October 2014; pp. 1532–1543.

- Deerwester, S.; Dumais, S.T.; Furnas, G.W.; Landauer, T.K.; Harshman, R. Indexing by latent semantic analysis. J. Am. Soc. Inf. Sci. 1990, 41, 391–407.

- Peters, M.E.; Neumann, M.; Iyyer, M.; Gardner, M.; Clark, C.; Lee, K.; Zettlemoyer, L. Deep contextualized word representations. arXiv 2018, arXiv:1802.05365.

- Yang, B.; Qiao, L.; Cai, N.; Zhu, Z.; Wulan, M. Manufacturing process information modeling using a metamodeling approach. Int. J. Adv. Manuf. Tech. 2018, 94, 1579–1596.

- Trojanowska, J.; Kolinski, A.; Galusik, D.; Varela, M.L.R.; Machado, J. A methodology of improvement of manufacturing productivity through increasing operational efficiency of the production process. In Advances in Manufacturing; Springer: New York, NY, USA, 2018.

- Su, Y.; Chu, X.; Chen, D.; Sun, X. A genetic algorithm for operation sequencing in CAPP using edge selection based encoding strategy. J. Intell. Manuf. 2018, 29, 313–332.

- Lan, X.P.; Tran, D.V.; Hoang, S.V.; Truong, S.H. Effective method of operation sequence optimization in CAPP based on modified clustering algorithm. J. Adv. Mech. Des. Syst. Manuf. 2017, 11, JAMDSM0001.

- Wang, W.; Li, Y.; Huang, L. Rule and branch-and-bound algorithm based sequencing of machining features for process planning of complex parts. J. Intell. Manuf. 2018, 29, 1329–1336.

- Milošević, M.; Lukić, D.; Antić, A.; Lalić, B.; Ficko, M.; Šimunović, G. E-CAPP: A distributed collaborative system for internet-based process planning. J. Manuf. Syst. 2017, 42, 210–223.

- Cheng, J.; Wang, J. Data-driven matching method for processing parameters in process manufacturing. CIMS 2017, 23, 2361–2370.

- Yuan, X.; Chang, W.; Zhou, S.; Cheng, Y.J.S. Sequential pattern mining algorithm based on text data: Taking the fault text records as an example. Sustainability 2018, 10, 4330.

- Huang, H.; Yao, L.; Tsai, C.-Y. Transportation service quality improvement through closed sequential pattern mining approach. Cyb. Infor. Tech. 2016, 16, 185–194.

- Amiri, M.; Mohammad-Khanli, L.; Mirandola, R. A sequential pattern mining model for application workload prediction in cloud environment. J. Netw. Comput. Appl. 2018, 105, 21–62.

- Tsai, C.-Y.; Chen, C.-J. A PSO-AB classifier for solving sequence classification problems. Appl. Soft Comput. 2015, 27, 11–27.

- Huynh, B.; Trinh, C.; Huynh, H.; Van, T.-T.; Vo, B.; Snasel, V. An efficient approach for mining sequential patterns using multiple threads on very large databases. Eng. Appl. Artif. Intell. 2018, 74, 242–251.

- Tarus, J.K.; Niu, Z.; Yousif, A. A hybrid knowledge-based recommender system for e-learning based on ontology and sequential pattern mining. Future Gener. Com. Sy. 2017, 72, 37–48.

- Zhi, D.; Liao, X.; Su, F.; Fu, D. Mining coastal land use sequential pattern and its land use associations based on association rule mining. Remote Sens. 2017, 9, 116.

- Papaioannou, G. The evolution of cell formation problem methodologies based on recent studies (1997–2008): Review and directions for future research. Eur. J. Oper. Res. 2010, 206, 509–521.

- Mabroukeh, N.R.; Ezeife, C.I. A taxonomy of sequential pattern mining algorithms. ACM Comput. Surv. (CSUR) 2010, 43, 3.

- Salvemini, E.; Fumarola, F.; Malerba, D.; Han, J. Fast Sequence Mining Based on Sparse Id-Lists; Springer: New York, NY, USA, 2011.

- Srikant, R.; Agrawal, R. Mining sequential patterns: Generalizations and performance improvements. In Proceedings of the International Conference on Extending Database Technology, Avignon, France, 25–29 March 1996.

- Zaki, M.J. SPADE: An efficient algorithm for mining frequent sequences. Mach. Learn. 2001, 42, 31–60.

- Pei, J.; Han, J.; Mortazavi-Asl, B.; Pinto, H.; Chen, Q.; Dayal, U.; Hsu, M.-C. PrefixSpan: Mining Sequential Patterns Efficiently by Prefix-Projected Pattern Growth, ICCCN; IEEE: Piscataway, NJ, USA, 2001; p. 0215.

- Yan, X.; Han, J.; Afshar, R. CloSpan: Mining closed sequential patterns in large datasets. In Proceedings of the 2003 SIAM International Conference on Data Mining, SIAM, San Francisco, CA, USA, 1–3 May 2003; pp. 166–177.

- Fumarola, F.; Lanotte, P.F.; Ceci, M.; Malerba, D. CloFAST: Closed sequential pattern mining using sparse and vertical id-lists. Knowl. Inf. Syst. 2016, 48, 429–463.

- Yun, C.; Wang, H.; Yu, P.S.; Muntz, R.R. Catch the moment: Maintaining closed frequent itemsets over a data stream sliding window. Knowl. Inf. Syst. 2006, 10, 265–294.

| Functions \ Models | Bag-of-Words Model [15] | Topic Model [17] | Word Embedding Distance Model [16] | Latent Semantic Analysis Model [19] | Contextual Word Embedding Model [20] |
|---|---|---|---|---|---|
| Word embedding | No | Yes | Yes | Yes | Yes |
| Suitable for short text | No | Yes | Yes | Yes | Yes |
| Implies contextual semantics | No | No | No | Yes | Yes |
| Solves the problem of polysemy | No | No | No | Yes | Yes |

| Model | Precision | Recall | F1 |
|---|---|---|---|
| Word2vec | 0.721 | 0.612 | 0.557 |
| ELMo | 0.820 | 0.804 | 0.781 |
| EAB | 0.861 | 0.854 | 0.843 |
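For readers unfamiliar with the three metrics in the table, the following minimal sketch shows how precision, recall, and F1 are computed for a single positive class. The labels are invented for illustration, and the averaging scheme behind the reported scores is not stated in this excerpt, so the sketch is not expected to reproduce them.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Binary precision, recall and F1 for the given positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical labels: 1 = sentence pair judged similar, 0 = not similar.
y_true = [1, 1, 1, 0, 0]
y_pred = [1, 1, 0, 1, 0]
p, r, f1 = precision_recall_f1(y_true, y_pred)
```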

| Sentence ID | Sentence Content |
|---|---|
| A | Check that the tooling model is in good condition and complete and that the preparation of chilled iron, core sand, and alloy meet the process requirements. |
| B | Check that the tooling model has no offset and that the surface of the mold is tight. |
| C | Check that the tooling model is in good condition. |
| D | Fluorescence inspection of the cabin according to HVJ40·23001. |
| E | Vibration aging of cabin according to Ez2082·34002. |
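Sentences such as these are compared by a similarity score between their vector representations. The sketch below shows only the comparison step, cosine similarity, and swaps in plain bag-of-words counts as a stand-in for the contextual word embeddings used in the paper. The tokenizer and function names are assumptions made for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(vec_a: Counter, vec_b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(vec_a[t] * vec_b[t] for t in set(vec_a) & set(vec_b))
    norm_a = math.sqrt(sum(v * v for v in vec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def sentence_similarity(a: str, b: str) -> float:
    # Stand-in representation: token counts, NOT contextual embeddings.
    return cosine_similarity(Counter(tokenize(a)), Counter(tokenize(b)))


SENT_B = ("Check that the tooling model has no offset and that "
          "the surface of the mold is tight.")
SENT_C = "Check that the tooling model is in good condition."
SENT_D = "Fluorescence inspection of the cabin according to HVJ40·23001."

sim_cb = sentence_similarity(SENT_C, SENT_B)
sim_cd = sentence_similarity(SENT_C, SENT_D)
```

Even with this crude representation, C scores closer to B than to D. A count-based vector cannot tell apart different senses of the same word, which is the limitation that contextual embeddings address.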

| ID | Process Name | Process Content | MPU ID |
|---|---|---|---|
| 3 | Modeling | First, make the bottom plate shape. Then, wet the core shape on the bottom plate. At the same time, the outer mold and the cover shape are formed. | B |
| 4 | Checking | Check that the chilled iron has no offset and that the surface of the mold is tight. | |
| 7 | Repair modeling | Dress the core and exterior mold, and print the furnace number on the wet core furnace number. | C |
| … | … | … | … |
| 37 | VSR | Vibration aging of cabin according to Ez2082·34002. | R |
| 38 | Checking | Check that the cabin vibration aging operation meets process requirements. | |
| 40 | Product delivery | Move the products into the warehouse. | T |

| Time | Manufacturing Process Sequences | Product Quality |
|---|---|---|
| 20171021 | Incoming materials→Forging→X-ray inspection→Appearance inspection→Deburring→Dissolution treatment→Aging treatment→Lathe surface→Fine slot→Dovetail slot→Deburring→Drilling→Milling inner cavity→Tapping | Qualified |
| 20171201 | Deburring→Drilling→Milling inner cavity→Tapping→Drilling→Cleaning→Detection→Anodizing→Painting→Drying→Sampling detection→Painting→Shipping | Unqualified |
| …… | …… | …… |
| 20170824 | Dissolution treatment→Aging treatment→Lathe surface→Lathe shape→Fine slot→Dovetail slot→Deburring→Drilling→Milling inner cavity→Tapping→Drilling→Cleaning | Unqualified |
| 20181228 | Dovetail slot→Deburring→Drilling→Milling inner cavity→Tapping→Drilling→Cleaning→Detection→Anodizing→Painting→Drying→Sampling detection→Painting→Shipping | Qualified |
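The support count of a sequential pattern is the number of recorded sequences that contain its steps in the same order, with other steps allowed in between. A minimal sketch of that count follows, using two rows copied from the table. The helper names are hypothetical, and a direct scan like this is only an illustration, not the id-list machinery of the mining algorithm.

```python
def parse_sequence(row: str):
    """Split an arrow-separated process sequence into a list of steps."""
    return [step.strip() for step in row.split("→")]


def contains_pattern(pattern, sequence) -> bool:
    """True if the pattern steps occur in the sequence in order (gaps allowed)."""
    remaining = iter(sequence)
    return all(step in remaining for step in pattern)


def support_count(pattern, database) -> int:
    """Number of sequences in the database that contain the pattern."""
    return sum(1 for seq in database if contains_pattern(pattern, seq))


# Two rows taken from the table (dates 20171021 and 20170824).
database = [
    parse_sequence(
        "Incoming materials→Forging→X-ray inspection→Appearance inspection"
        "→Deburring→Dissolution treatment→Aging treatment→Lathe surface"
        "→Fine slot→Dovetail slot→Deburring→Drilling→Milling inner cavity→Tapping"
    ),
    parse_sequence(
        "Dissolution treatment→Aging treatment→Lathe surface→Lathe shape"
        "→Fine slot→Dovetail slot→Deburring→Drilling→Milling inner cavity"
        "→Tapping→Drilling→Cleaning"
    ),
]

both = support_count(["Dissolution treatment", "Fine slot"], database)
first_only = support_count(["Forging", "Drilling"], database)
wrong_order = support_count(["Fine slot", "Dissolution treatment"], database)
```

A pattern is frequent when this count reaches the minimum support threshold. Order matters: reversing the two steps of a pattern generally changes its count.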

| Model | Original | EAB | EAB+MPU |
|---|---|---|---|
| No. | 29687 | 25373 | 20281 |

| EABMC Sequential Patterns | Support |
|---|---|
| 2-item closed frequent sequential patterns | |
| Incoming materials→Forging | 759 |
| Incoming materials→Dissolution treatment | 715 |
| 3-item sequential patterns | |
| Forging→X-ray inspection→Drilling | 536 |
| Deburring→Dissolution treatment→Fine slot | 502 |
| 4-item sequential patterns | |
| Forging→Appearance inspection→Dissolution treatment→Fine slot | 346 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yao, L.; Huang, H.; Chen, S.-H. Product Quality Detection through Manufacturing Process Based on Sequential Patterns Considering Deep Semantic Learning and Process Rules. Processes 2020, 8, 751. https://doi.org/10.3390/pr8070751
