A Review of Computational Methods for Clustering Genes with Similar Biological Functions

Abstract

1. Introduction

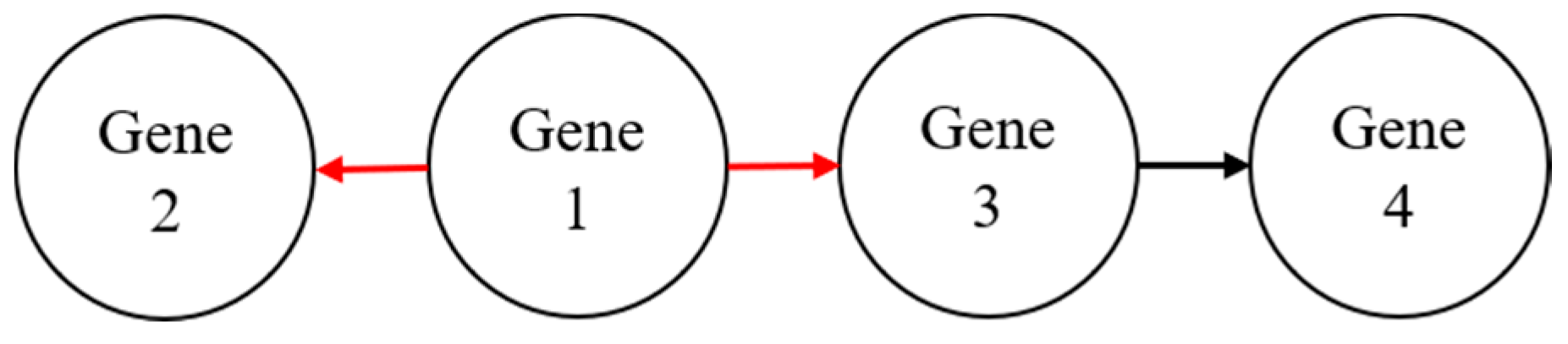

2. Gene Network Clustering Techniques

2.1. Category 1: Partitioning Clustering

2.2. Category 2: Hierarchical Clustering

2.3. Category 3: Grid-Based Clustering

2.4. Category 4: Density-Based Clustering

3. Optimization for Objective Function of Partitioning Clustering Techniques

3.1. Strategy 1: Population-Based Optimization

3.2. Strategy 2: Evolution-Based Optimization

4. Clustering Validation in Measurements

5. Discussion

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Chandra, G.; Tripathi, S. A Column-Wise Distance-Based Approach for Clustering of Gene Expression Data with Detection of Functionally Inactive Genes and Noise. In Advances in Intelligent Computing; Springer: Singapore, 2019; pp. 125–149.

2. Xu, R.; Wunsch, D.C. Clustering algorithms in biomedical research: A review. IEEE Rev. Biomed. Eng. 2010, 3, 120–154.

3. Cai, B.; Wang, H.; Zheng, H.; Wang, H. An improved random walk-based clustering algorithm for community detection in complex networks. In Proceedings of the International Conference on Systems, Man, and Cybernetics (SMC), Anchorage, AK, USA, 9–12 October 2011; pp. 2162–2167.

4. Zhang, H.; Raitoharju, J.; Kiranyaz, S.; Gabbouj, M. Limited random walk algorithm for big graph data clustering. J. Big Data 2016, 3, 26.

5. Liu, W.; Li, C.; Xu, Y.; Yang, H.; Yao, Q.; Han, J.; Shang, D.; Zhang, C.; Su, F.; Li, X.; et al. Topologically inferring risk-active pathways toward precise cancer classification by directed random walk. Bioinformatics 2013, 29, 2169–2177.

6. Liu, W.; Bai, X.; Liu, Y.; Wang, W.; Han, J.; Wang, Q.; Xu, Y.; Zhang, C.; Zhang, S.; Li, X.; et al. Topologically inferring pathway activity toward precise cancer classification via integrating genomic and metabolomic data: Prostate cancer as a case. Sci. Rep. 2015, 5, 13192.

7. Liu, W.; Wang, W.; Tian, G.; Xie, W.; Lei, L.; Liu, J.; Huang, W.; Xu, L.; Li, E. Topologically inferring pathway activity for precise survival outcome prediction: Breast cancer as a case. Mol. Biosyst. 2017, 13, 537–548.

8. Wang, W.; Liu, W. Integration of gene interaction information into a reweighted random survival forest approach for accurate survival prediction and survival biomarker discovery. Sci. Rep. 2018, 8, 13202.

9. Mehmood, R.; El-Ashram, S.; Bie, R.; Sun, Y. Effective cancer subtyping by employing density peaks clustering by using gene expression microarray. Pers. Ubiquitous Comput. 2018, 22, 615–619.

10. Bajo, J.; De Paz, J.F.; Rodríguez, S.; González, A. A new clustering algorithm applying a hierarchical method neural network. Log. J. IGPL 2010, 19, 304–314.

11. Majhi, S.K.; Biswal, S. A Hybrid Clustering Algorithm Based on K-means and Ant Lion Optimization. In Emerging Technologies in Data Mining and Information Security; Springer: Singapore, 2019; pp. 639–650.

12. Ye, S.; Huang, X.; Teng, Y.; Li, Y. K-means clustering algorithm based on improved Cuckoo search algorithm and its application. In Proceedings of the 3rd International Conference on Big Data Analysis (ICBDA), Shanghai, China, 9–12 March 2018; pp. 422–426.

13. Zelnik-Manor, L.; Perona, P. Self-tuning spectral clustering. In Advances in Neural Information Processing Systems; Neural Information Processing Systems Foundation, Inc. (NIPS): Vancouver, BC, Canada, 2005; pp. 1601–1608.

14. Sugiyama, M.; Yamada, M.; Kimura, M.; Hachiya, H. On Information-Maximization Clustering: Tuning Parameter Selection and Analytic Solution. In Proceedings of the 28th International Conference on Machine Learning, Bellevue, WA, USA, 28 June–2 July 2011; pp. 65–72.

15. Pollard, K.S.; Van Der Laan, M.J. A method to identify significant clusters in gene expression data. In U.C. Berkeley Division of Biostatistics Working Paper Series; Working Paper 107; Berkeley Electronic Press: Berkeley, CA, USA, 2002.

16. Bholowalia, P.; Kumar, A. EBK-means: A clustering technique based on elbow method and k-means in WSN. Int. J. Comput. Appl. 2014, 105.

17. Jain, A.K. Data clustering: 50 years beyond K-means. Pattern Recognit. Lett. 2010, 31, 651–666.

18. Liu, Y.; Li, Z.; Xiong, H.; Gao, X.; Wu, J. Understanding of internal clustering validation measures. In Proceedings of the 10th International Conference on Data Mining (ICDM), Sydney, Australia, 13–17 December 2010; pp. 911–916.

19. Garzón, J.A.C.; González, J.R. A gene selection approach based on clustering for classification tasks in colon cancer. Adv. Distrib. Comput. Artif. Intell. J. 2015, 4, 1–10.

20. Kriegel, H.P.; Kröger, P.; Sander, J.; Zimek, A. Density-based clustering. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2011, 1, 231–240.

21. Nagpal, A.; Jatain, A.; Gaur, D. Review based on data clustering algorithms. In Proceedings of the Conference on Information & Communication Technologies, Thuckalay, Tamil Nadu, India, 11–12 April 2013; pp. 298–303.

22. Chen, Y.; Tang, S.; Bouguila, N.; Wang, C.; Du, J.; Li, H. A Fast Clustering Algorithm based on pruning unnecessary distance computations in DBSCAN for High-Dimensional Data. Pattern Recognit. 2018, 83, 375–387.

23. Handl, J.; Knowles, J.; Kell, D.B. Computational cluster validation in post-genomic data analysis. Bioinformatics 2005, 21, 3201–3212.

24. Deng, C.; Song, J.; Sun, R.; Cai, S.; Shi, Y. GRIDEN: An effective grid-based and density-based spatial clustering algorithm to support parallel computing. Pattern Recognit. Lett. 2018, 109, 81–88.

25. Murtagh, F.; Contreras, P. Algorithms for hierarchical clustering: An overview. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2012, 2, 86–97.

26. Pilevar, A.H.; Sukumar, M. GCHL: A grid-clustering technique for high-dimensional very large spatial data bases. Pattern Recognit. Lett. 2005, 26, 999–1010.

27. Dembele, D.; Kastner, P. Fuzzy C-means method for clustering microarray data. Bioinformatics 2003, 19, 973–980.

28. Nayak, J.; Naik, B.; Behera, H.S. Fuzzy C-means (FCM) clustering algorithm: A decade review from 2000 to 2014. In Computational Intelligence in Data Mining-Volume 2; Springer: New Delhi, India, 2015; pp. 133–149.

29. Datta, S.; Datta, S. Methods for evaluating clustering algorithms for gene expression data using a reference set of functional classes. BMC Bioinform. 2006, 7, 397.

30. Mary, C.; Raja, S.K. Refinement of Clusters from K-Means with Ant Colony Optimization. J. Theor. Appl. Inf. Technol. 2009, 6, 28–32.

31. Remli, M.A.; Daud, K.M.; Nies, H.W.; Mohamad, M.S.; Deris, S.; Omatu, S.; Kasim, S.; Sulong, G. K-Means Clustering with Infinite Feature Selection for Classification Tasks in Gene Expression Data. In Proceedings of the International Conference on Practical Applications of Computational Biology & Bioinformatics, Porto, Portugal, 21–23 June 2017; pp. 50–57.

32. Garg, S.; Batra, S. Fuzzified cuckoo based clustering technique for network anomaly detection. Comput. Electr. Eng. 2018, 71, 798–817.

33. Majhi, S.K.; Biswal, S. Optimal cluster analysis using hybrid K-Means and Ant Lion Optimizer. Karbala Int. J. Mod. Sci. 2018, 4, 347–360.

34. Kaufman, L.; Rousseeuw, P.J. Finding Groups in Data: An Introduction to Cluster Analysis; John Wiley & Sons: Hoboken, NJ, USA, 2009; Volume 344.

35. Vesanto, J.; Alhoniemi, E. Clustering of the self-organizing map. IEEE Trans. Neural Netw. 2000, 11, 586–600.

36. Bassani, H.F.; Araujo, A.F. Dimension selective self-organizing maps with time-varying structure for subspace and projected clustering. IEEE Trans. Neural Netw. Learn. Syst. 2015, 26, 458–471.

37. Mikaeil, R.; Haghshenas, S.S.; Hoseinie, S.H. Rock penetrability classification using artificial bee colony (ABC) algorithm and self-organizing map. Geotech. Geol. Eng. 2018, 36, 1309–1318.

38. Tian, J.; Gu, M. Subspace Clustering Based on Self-organizing Map. In Proceedings of the 24th International Conference on Industrial Engineering and Engineering Management 2018, Changsha, China, 19–21 May 2018; pp. 151–159.

39. Agrawal, R.; Gehrke, J.; Gunopulos, D.; Raghavan, P. Automatic Subspace Clustering of High Dimensional Data for Data Mining Applications; ACM: New York, NY, USA, 1998; Volume 27, pp. 94–105.

40. Santhisree, K.; Damodaram, A. CLIQUE: Clustering based on density on web usage data: Experiments and test results. In Proceedings of the 3rd International Conference on Electronics Computer Technology (ICECT), Kanyakumari, India, 8–10 April 2011; Volume 4, pp. 233–236.

41. Cheng, W.; Wang, W.; Batista, S. Grid-based clustering. In Data Clustering; Chapman and Hall, CRC Press: London, UK, 2018; pp. 128–148.

42. Wang, W.; Yang, J.; Muntz, R. STING: A statistical information grid approach to spatial data mining. In Proceedings of the 23rd International Conference on Very Large Data Bases, Athens, Greece, 25–29 August 1997; Volume 97, pp. 186–195.

43. Hu, J.; Pei, J. Subspace multi-clustering: A review. Knowl. Inf. Syst. 2018, 56, 257–284.

44. Ester, M.; Kriegel, H.P.; Sander, J.; Xu, X. A density-based algorithm for discovering clusters in large spatial databases with noise. In Proceedings of the Second International Conference on Knowledge Discovery and Data Mining, Portland, OR, USA, 2–4 August 1996; Volume 96, pp. 226–231.

45. Geng, Y.A.; Li, Q.; Zheng, R.; Zhuang, F.; He, R.; Xiong, N. RECOME: A new density-based clustering algorithm using relative KNN kernel density. Inf. Sci. 2018, 436, 13–30.

46. Can, T.; Çamoǧlu, O.; Singh, A.K. Analysis of protein-protein interaction networks using random walks. In Proceedings of the 5th International Workshop on Bioinformatics, Chicago, IL, USA, 21 August 2005; pp. 61–68.

47. Firat, A.; Chatterjee, S.; Yilmaz, M. Genetic clustering of social networks using random walks. Comput. Stat. Data Anal. 2007, 51, 6285–6294.

48. Re, M.; Valentini, G. Random walking on functional interaction networks to rank genes involved in cancer. In Proceedings of the International Conference on Artificial Intelligence Applications and Innovations (IFIP), Halkidiki, Greece, 27–30 September 2012; pp. 66–75.

49. Golub, T.R.; Slonim, D.K.; Tamayo, P.; Huard, C.; Gaasenbeek, M.; Mesirov, J.P.; Coller, H.; Loh, M.L.; Downing, J.R.; Caligiuri, M.A.; et al. Molecular classification of cancer: Class discovery and class prediction by gene expression monitoring. Science 1999, 286, 531–537.

50. Dudoit, S.; Fridlyand, J.; Speed, T.P. Comparison of discrimination methods for the classification of tumors using gene expression data. J. Am. Stat. Assoc. 2002, 97, 77–87.

51. Ricci, C.; Marzocchi, C.; Battistini, S. MicroRNAs as biomarkers in amyotrophic lateral sclerosis. Cells 2018, 7, 219.

52. Eyileten, C.; Wicik, Z.; De Rosa, S.; Mirowska-Guzel, D.; Soplinska, A.; Indolfi, C.; Jastrzebska-Kurkowska, I.; Czlonkowska, A.; Postula, M. MicroRNAs as Diagnostic and Prognostic Biomarkers in Ischemic Stroke—A Comprehensive Review and Bioinformatic Analysis. Cells 2018, 7, 249.

53. Xu, D.; Tian, Y. A comprehensive survey of clustering algorithms. Ann. Data Sci. 2015, 2, 165–193.

54. Halkidi, M.; Vazirgiannis, M. Clustering validity assessment: Finding the optimal partitioning of a data set. In Proceedings of the IEEE International Conference on Data Mining (ICDM), San Jose, CA, USA, 29 November–2 December 2001; pp. 187–194.

55. Rechkalov, T.V. Partition Around Medoids Clustering on the Intel Xeon Phi Many-Core Coprocessor. In Proceedings of the 1st Ural Workshop on Parallel, Distributed, and Cloud Computing for Young Scientists (Ural-PDC 2015), Yekaterinburg, Russia, 17 November 2015; Volume 1513.

56. Kumar, P.; Wasan, S.K. Comparative study of k-means, pam and rough k-means algorithms using cancer datasets. In Proceedings of the CSIT: 2009 International Symposium on Computing, Communication, and Control (ISCCC 2009), Singapore, 9 October 2011; Volume 1, pp. 136–140.

57. Mushtaq, H.; Khawaja, S.G.; Akram, M.U.; Yasin, A.; Muzammal, M.; Khalid, S.; Khan, S.A. A Parallel Architecture for the Partitioning around Medoids (PAM) Algorithm for Scalable Multi-Core Processor Implementation with Applications in Healthcare. Sensors 2018, 18, 4129.

58. Roux, M. A Comparative Study of Divisive and Agglomerative Hierarchical Clustering Algorithms. J. Classif. 2018, 35, 345–366.

59. Wang, J.; Zhu, C.; Zhou, Y.; Zhu, X.; Wang, Y.; Zhang, W. From Partition-Based Clustering to Density-Based Clustering: Fast Find Clusters with Diverse Shapes and Densities in Spatial Databases. IEEE Access 2018, 6, 1718–1729.

60. Ding, F.; Wang, J.; Ge, J.; Li, W. Anomaly Detection in Large-Scale Trajectories Using Hybrid Grid-Based Hierarchical Clustering. Int. J. Robot. Autom. 2018, 33.

61. Vijendra, S. Efficient clustering for high dimensional data: Subspace based clustering and density-based clustering. Inf. Technol. J. 2011, 10, 1092–1105.

62. Yu, X.; Yu, G.; Wang, J. Clustering cancer gene expression data by projective clustering ensemble. PLoS ONE 2017, 12, e0171429.

63. Bryant, A.; Cios, K. RNN-DBSCAN: A density-based clustering algorithm using reverse nearest neighbor density estimates. IEEE Trans. Knowl. Data Eng. 2018, 30, 1109–1121.

64. Deng, C.; Song, J.; Sun, R.; Cai, S.; Shi, Y. Gridwave: A grid-based clustering algorithm for market transaction data based on spatial-temporal density-waves and synchronization. Multimed. Tools Appl. 2018, 77, 29623–29637.

65. Pons, P.; Latapy, M. Computing communities in large networks using random walks. J. Graph Algorithms Appl. 2006, 10, 191–218.

66. Petrochilos, D.; Shojaie, A.; Gennari, J.; Abernethy, N. Using random walks to identify cancer-associated modules in expression data. BioData Min. 2013, 6, 17.

67. Ma, C.; Chen, Y.; Wilkins, D.; Chen, X.; Zhang, J. An unsupervised learning approach to find ovarian cancer genes through integration of biological data. BMC Genom. 2015, 16, S3.

68. Zhu, L.; Su, F.; Xu, Y.; Zou, Q. Network-based method for mining novel HPV infection related genes using random walk with restart algorithm. Biochim. Biophys. Acta Mol. Basis Dis. 2018, 1864, 2376–2383.

69. Civicioglu, P.; Besdok, E. A conceptual comparison of the Cuckoo-search, particle swarm optimization, differential evolution and artificial bee colony algorithms. Artif. Intell. Rev. 2013, 39, 315–346.

70. Fister, I.; Fister, I., Jr.; Yang, X.S.; Brest, J. A comprehensive review of firefly algorithms. Swarm Evol. Comput. 2013, 13, 34–46.

71. De Barros Franco, D.G.; Steiner, M.T.A. Clustering of solar energy facilities using a hybrid fuzzy c-means algorithm initialized by metaheuristics. J. Clean. Prod. 2018, 191, 445–457.

72. Mortazavi, A.; Toğan, V.; Moloodpoor, M. Solution of structural and mathematical optimization problems using a new hybrid swarm intelligence optimization algorithm. Adv. Eng. Softw. 2019, 127, 106–123.

73. Karaboga, D.; Akay, B. A survey: Algorithms simulating bee swarm intelligence. Artif. Intell. Rev. 2009, 31, 61–85.

74. García, J.; Crawford, B.; Soto, R.; Astorga, G. A clustering algorithm applied to the binarization of Swarm intelligence continuous metaheuristics. Swarm Evol. Comput. 2019, 44, 646–664.

75. Beni, G.; Wang, J. Swarm intelligence in cellular robotic systems. In Robots and Biological Systems: Towards a New Bionics? Springer: Berlin/Heidelberg, Germany, 1993; pp. 703–712.

76. Abraham, A.; Das, S.; Roy, S. Swarm intelligence algorithms for data clustering. In Soft Computing for Knowledge Discovery and Data Mining; Springer: Boston, MA, USA, 2008; pp. 279–313.

77. Pacheco, T.M.; Gonçalves, L.B.; Ströele, V.; Soares, S.S.R. An Ant Colony Optimization for Automatic Data Clustering Problem. In Proceedings of the IEEE Congress on Evolutionary Computation (CEC), Rio de Janeiro, Brazil, 8–13 July 2018; pp. 1–8.

78. Gandomi, A.H.; Yang, X.S.; Alavi, A.H.; Talatahari, S. Bat algorithm for constrained optimization tasks. Neural Comput. Appl. 2013, 22, 1239–1255.

79. Gandomi, A.H.; Yang, X.S.; Alavi, A.H. Cuckoo search algorithm: A metaheuristic approach to solve structural optimization problems. Eng. Comput. 2013, 29, 17–35.

80. Das, D.; Pratihar, D.K.; Roy, G.G.; Pal, A.R. Phenomenological model-based study on electron beam welding process, and input-output modeling using neural networks trained by back-propagation algorithm, genetic algorithms, particle swarm optimization algorithm and bat algorithm. Appl. Intell. 2018, 48, 2698–2718.

81. Xu, X.; Li, J.; Zhou, M.; Xu, J.; Cao, J. Accelerated Two-Stage Particle Swarm Optimization for Clustering Not-Well-Separated Data. IEEE Trans. Syst. Man Cybern. Syst. 2018, 1–12.

82. Cao, Y.; Lu, Y.; Pan, X.; Sun, N. An improved global best guided artificial bee colony algorithm for continuous optimization problems. In Cluster Computing; Springer: Berlin, Germany, 2018; pp. 1–9.

83. Li, Y.; Wang, G.; Chen, H.; Shi, L.; Qin, L. An ant colony optimization-based dimension reduction method for high-dimensional datasets. J. Bionic Eng. 2013, 10, 231–241.

84. Cheng, C.; Bao, C. A Kernelized Fuzzy C-means Clustering Algorithm based on Bat Algorithm. In Proceedings of the 2018 10th International Conference on Computer and Automation Engineering, Brisbane, Australia, 24–26 February 2018; pp. 1–5.

85. Ghaedi, A.M.; Ghaedi, M.; Vafaei, A.; Iravani, N.; Keshavarz, M.; Rad, M.; Tyagi, I.; Agarwal, S.; Gupta, V.K. Adsorption of copper (II) using modified activated carbon prepared from Pomegranate wood: Optimization by bee algorithm and response surface methodology. J. Mol. Liq. 2015, 206, 195–206.

86. Yang, X.S. Firefly algorithm, stochastic test functions and design optimisation. arXiv 2010, arXiv:1003.1409.

87. Rashedi, E.; Nezamabadi-Pour, H.; Saryazdi, S. GSA: A gravitational search algorithm. Inf. Sci. 2009, 179, 2232–2248.

88. Yazdani, S.; Nezamabadi-pour, H.; Kamyab, S. A gravitational search algorithm for multimodal optimization. Swarm Evol. Comput. 2014, 14, 1–14.

89. Tharwat, A.; Hassanien, A.E. Quantum-Behaved Particle Swarm Optimization for Parameter Optimization of Support Vector Machine. J. Classif. 2019, 1–23.

90. Bandyopadhyay, S.; Saha, S.; Maulik, U.; Deb, K. A simulated annealing-based multi-objective optimization algorithm: AMOSA. IEEE Trans. Evol. Comput. 2008, 12, 269–283.

91. Acharya, S.; Saha, S.; Sahoo, P. Bi-clustering of microarray data using a symmetry-based multi-objective optimization framework. Soft Comput. 2018, 1–22.

92. Bäck, T.; Rudolph, G.; Schwefel, H.P. Evolutionary programming and evolution strategies: Similarities and differences. In Proceedings of the Second Annual Conference on Evolutionary Programming, Los Altos, CA, USA, 25–26 February 1993.

93. Ferreira, C. Gene expression programming: A new adaptive algorithm for solving problems. arXiv 2001, arXiv:cs/0102027.

94. Guven, A.; Aytek, A. New approach for stage–discharge relationship: Gene-expression programming. J. Hydrol. Eng. 2009, 14, 812–820.

95. Koza, J.R. Genetic Programming: On the Programming of Computers by Means of Natural Selection; MIT Press: Cambridge, MA, USA, 1992.

96. Mitra, A.P.; Almal, A.A.; George, B.; Fry, D.W.; Lenehan, P.F.; Pagliarulo, V.; Cote, R.J.; Datar, R.H.; Worzel, W.P. The use of genetic programming in the analysis of quantitative gene expression profiles for identification of nodal status in bladder cancer. BMC Cancer 2006, 6, 159.

97. Cheng, R.; Gen, M. Parallel machine scheduling problems using memetic algorithms. In Proceedings of the 1996 IEEE International Conference on Systems, Man and Cybernetics. Information Intelligence and Systems (Cat. No. 96CH35929), Beijing, China, 14–17 October 1996; Volume 4, pp. 2665–2670.

98. Knowles, J.D.; Corne, D.W. M-PAES: A memetic algorithm for multi-objective optimization. In Proceedings of the 2000 Congress on Evolutionary Computation. CEC00 (Cat. No. 00TH8512), Istanbul, Turkey, 5–9 June 2000; Volume 1, pp. 325–332.

99. Duval, B.; Hao, J.K.; Hernandez, J.C. A memetic algorithm for gene selection and molecular classification of cancer. In Proceedings of the 11th Annual Conference on Genetic and Evolutionary Computation, Montreal, QC, Canada, 8–12 July 2009; pp. 201–208.

100. Chehouri, A.; Younes, R.; Khoder, J.; Perron, J.; Ilinca, A. A selection process for genetic algorithm using clustering analysis. Algorithms 2017, 10, 123.

101. Srivastava, A.; Chakrabarti, S.; Das, S.; Ghosh, S.; Jayaraman, V.K. Hybrid firefly based simultaneous gene selection and cancer classification using support vector machines and random forests. In Proceedings of the Seventh International Conference on Bio-Inspired Computing: Theories and Applications (BIC-TA 2012), Gwalior, India, 14–16 December 2012; pp. 485–494.

102. Babu, G.P.; Murty, M.N. Clustering with evolution strategies. Pattern Recognit. 1994, 27, 321–329.

103. Bäck, T.; Schwefel, H.P. An overview of evolutionary algorithms for parameter optimization. Evol. Comput. 1993, 1, 1–23.

104. Bäck, T.; Fogel, D.B.; Michalewicz, Z. (Eds.) Evolutionary Computation 1: Basic Algorithms and Operators; CRC Press: Boca Raton, FL, USA, 2018.

105. Eiben, A.E.; Smith, J. From evolutionary computation to the evolution of things. Nature 2015, 521, 476.

106. Lynn, N.; Ali, M.Z.; Suganthan, P.N. Population topologies for particle swarm optimization and differential evolution. Swarm Evol. Comput. 2018, 39, 24–35.

107. Liu, Y.; Li, Z.; Xiong, H.; Gao, X.; Wu, J.; Wu, S. Understanding and enhancement of internal clustering validation measures. IEEE Trans. Cybern. 2013, 43, 982–994.

108. Karo, I.M.K.; Maulana Adhinugraha, K.; Huda, A.F. A cluster validity for spatial clustering based on Davies Bouldin index and Polygon Dissimilarity function. In Proceedings of the Second International Conference on Informatics and Computing (ICIC), Jayapura, Indonesia, 1–3 November 2017; pp. 1–6.

109. Nies, H.W.; Daud, K.M.; Remli, M.A.; Mohamad, M.S.; Deris, S.; Omatu, S.; Kasim, S.; Sulong, G. Classification of Colorectal Cancer Using Clustering and Feature Selection Approaches. In Proceedings of the International Conference on Practical Applications of Computational Biology & Bioinformatics, Porto, Portugal, 21–23 June 2017; pp. 58–65.

110. Billmann, M.; Chaudhary, V.; ElMaghraby, M.F.; Fischer, B.; Boutros, M. Widespread Rewiring of Genetic Networks upon Cancer Signaling Pathway Activation. Cell Syst. 2018, 6, 52–64.

111. Labed, K.; Fizazi, H.; Mahi, H.; Galvan, I.M. A Comparative Study of Classical Clustering Method and Cuckoo Search Approach for Satellite Image Clustering: Application to Water Body Extraction. Appl. Artif. Intell. 2018, 32, 96–118.

112. Aarthi, P. Improving Class Separability for Microarray datasets using Genetic Algorithm with KLD Measure. Int. J. Eng. Sci. Innov. Technol. 2014, 3, 514–521.

113. Gomez-Pilar, J.; Poza, J.; Bachiller, A.; Gómez, C.; Núñez, P.; Lubeiro, A.; Molina, V.; Hornero, R. Quantification of graph complexity based on the edge weight distribution balance: Application to brain networks. Int. J. Neural Syst. 2018, 28, 1750032.

114. Oyelade, J.; Isewon, I.; Oladipupo, F.; Aromolaran, O.; Uwoghiren, E.; Ameh, F.; Achas, M.; Adebiyi, E. Clustering algorithms: Their application to gene expression data. Bioinform. Biol. Insights 2016, 10.

115. Tang, H.; Zeng, T.; Chen, L. High-order correlation integration for single-cell or bulk RNA-seq data analysis. Front. Genet. 2019, 10, 371.

116. Kiselev, V.Y.; Andrews, T.S.; Hemberg, M. Challenges in unsupervised clustering of single-cell RNA-seq data. Nat. Rev. Genet. 2019, 20, 273–282.

117. Handhayani, T.; Hiryanto, L. Intelligent kernel k-means for clustering gene expression. Procedia Comput. Sci. 2015, 59, 171–177.

118. Shanmugam, C.; Sekaran, E.C. IRT image segmentation and enhancement using FCM-MALO approach. Infrared Phys. Technol. 2019, 97, 187–196.

119. Masciari, E.; Mazzeo, G.M.; Zaniolo, C. Analysing microarray expression data through effective clustering. Inf. Sci. 2014, 262, 32–45.

120. Bouguettaya, A.; Yu, Q.; Liu, X.; Zhou, X.; Song, A. Efficient agglomerative hierarchical clustering. Expert Syst. Appl. 2015, 42, 2785–2797.

121. Lin, C.R.; Chen, M.S. Combining partitional and hierarchical algorithms for robust and efficient data clustering with cohesion self-merging. IEEE Trans. Knowl. Data Eng. 2005, 17, 145–159.

122. Darong, H.; Peng, W. Grid-based DBSCAN algorithm with referential parameters. Phys. Procedia 2012, 24, 1166–1170.

123. Langohr, L.; Toivonen, H. Finding representative nodes in probabilistic graphs. In Bisociative Knowledge Discovery; Springer: Berlin/Heidelberg, Germany, 2012; pp. 218–229.

124. Carneiro, M.G.; Cheng, R.; Zhao, L.; Jin, Y. Particle swarm optimization for network-based data classification. Neural Netw. 2019, 110, 243–255.

125. Yi, G.; Sze, S.H.; Thon, M.R. Identifying clusters of functionally related genes in genomes. Bioinformatics 2007, 23, 1053–1060.

126. Somintara, S.; Leardkamolkarn, V.; Suttiarporn, P.; Mahatheeranont, S. Anti-tumor and immune enhancing activities of rice bran gramisterol on acute myelogenous leukemia. PLoS ONE 2016, 11, e0146869.

127. Chavali, A.K.; Rhee, S.Y. Bioinformatics tools for the identification of gene clusters that biosynthesize specialized metabolites. Brief. Bioinform. 2017, 19, 1022–1034.

| Categories | Time Complexity | Computing Efficiency | Convergence Rate | Scalability | Number of Clusters Required in Advance |
|---|---|---|---|---|---|
| Partitioning | Low | High | Low | Low | Yes |
| Hierarchical | High | High | Low | High | No |
| Grid-based | Low | High | Low | High | No |
| Density-based | Medium | High | High | High | No |

| Clustering Technique | Category | Advantages | Disadvantages | References |
|---|---|---|---|---|
| Fuzzy C-Means (FCM) | Partitioning | Minimizes the error defined by its objective function and computes the partition (membership) factor of each class. | Cannot achieve a high convergence rate. | [27,28] |
| K-means Clustering | Partitioning | Selects clusters with a minimum "within-cluster sum of squares from the centers" criterion. | The number of clusters must be specified beforehand. | [9,10,11,12,29,30,31,32,33] |
| Partitioning Around Medoids (PAM) | Partitioning | Handles both interval-scaled measurements and general dissimilarity coefficients. | Requires a large amount of main memory. | [34] |
| Self-Organizing Maps (SOMs) | Partitioning | Suitable for data surveys and for gaining insight into the cluster structure of data for data mining purposes. | Distance dissimilarity is ignored. | [35,36,37,38] |
| Agglomerative Nesting (AGNES) | Hierarchical (agglomerative) | Builds a cluster hierarchy bottom-up, starting from small clusters and merging them until all data are in one large group. | Works up from details to large clusters, so it can be affected by unfortunate decisions in the first steps. | [19,34] |
| EISEN Clustering | Hierarchical (agglomerative) | Represents each cluster by the mean vector of the data in that group. | Works up from details to large clusters, so it can be affected by unfortunate decisions in the first steps. | [19] |
| Divisive Analysis (DIANA) | Hierarchical (divisive) | Works top-down, splitting one large cluster containing all data until each cluster holds a single object. | Not widely available and rarely applied in most studies. | [19,34] |
| Clustering in Quest (CLIQUE) | Grid-based | Automatically finds lower-dimensional subspaces that contain high-density clusters. | Ignores all projections of dimensional subspaces. | [39,40] |
| Grid-Clustering Technique for High-Dimensional and Large Spatial Databases (GCHL) | Grid-based | Efficient and scalable while handling high dimensionality. | Insensitive to noise. | [26,41] |
| Statistical Information Grid (STING) | Grid-based | Supports several kinds of spatial queries at low computational cost. | Difficult to identify multiple clusters. | [42,43] |
| Density-Based Spatial Clustering of Applications with Noise (DBSCAN) | Density-based | Detects clusters of different shapes and can handle clusters of different densities. | Parameter optimization issue: appropriate values for its parameters (Eps and MinPts) are difficult to select. | [44,45] |
| Random Walk-Based Clustering | Density-based | Reflects the topological features of a functional network. | Considers only pairwise interactions between genes. | [46,47,48] |
| Relative Core Merge (RECOME) | Density-based | Its clustering results can be characterized as a step function of its parameter. | Scalability issue: hard to handle large volumes of data. | [45] |
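
The K-means row above selects clusters by minimizing the within-cluster sum of squares. As an illustration only, here is a minimal sketch of Lloyd's algorithm in plain Python. It assumes Euclidean distance, initial centroids drawn at random from the data, and toy two-dimensional profiles; the names `kmeans` and `sq_dist` are hypothetical and do not come from any of the cited studies.

```python
import random
from typing import List, Sequence, Tuple


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two expression profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Tuple[float, ...]], k: int, max_iter: int = 100,
           seed: int = 0) -> Tuple[List[int], List[List[float]], float]:
    """Lloyd's algorithm for k-means.

    Returns (labels, centroids, wcss), where wcss is the within-cluster
    sum of squares that k-means tries to minimize.
    """
    rng = random.Random(seed)
    # k is fixed up front; initial centroids are k distinct data points.
    centroids = [list(p) for p in rng.sample(points, k)]
    labels: List[int] = []
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c]))
                      for p in points]
        if new_labels == labels:
            break  # assignments are stable, so the algorithm has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster becomes empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    wcss = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, centroids, wcss


# Toy data: two well-separated groups of 2-D profiles (illustrative only).
profiles = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
            (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
labels, centroids, wcss = kmeans(profiles, k=2)
print(labels, round(wcss, 3))
```

Because `k` is a required argument, the sketch also makes the listed disadvantage concrete: the number of clusters has to be chosen before the algorithm runs.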

| Categories | Clustering Techniques | Parameter(s) | Number of Genes in the Selected Cluster | Number of Prognostic Markers | Accuracy (%) |
|---|---|---|---|---|---|
| Partitioning | K-means | k = 2 | 275 | 22 | 71.50 |
| Hierarchical | AGNES | k = 2 | 339 | 22 | 78.50 |
| Grid-based | CLIQUE | k = 2, dimension = 10, density = 0.2 | 919 | 67 | 89.00 |
| Density-based | DBSCAN | k = 2, minPts = 10 | 1548 | 103 | 73.00 |
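
DBSCAN appears above as a density-based technique whose main difficulty is parameter selection, and the comparison sets minPts = 10. The following is a minimal sketch of the DBSCAN procedure described by Ester et al. [44], not the implementation behind the reported results. It assumes Euclidean distance and a brute-force neighbor search; `eps` and `min_pts` stand for the algorithm's Eps and MinPts parameters, and the toy data are hypothetical.

```python
from typing import List, Sequence

UNVISITED, NOISE = -2, -1


def dbscan(points: Sequence[Sequence[float]], eps: float, min_pts: int) -> List[int]:
    """Return a cluster id (0, 1, ...) for each point, or NOISE (-1).

    A point is a core point if at least `min_pts` points (itself included)
    lie within distance `eps`; clusters grow outward from core points.
    """
    def region_query(i: int) -> List[int]:
        # Brute-force neighbor search: O(n) per query, O(n^2) overall.
        return [j for j in range(len(points))
                if sum((a - b) ** 2 for a, b in zip(points[i], points[j])) <= eps ** 2]

    labels = [UNVISITED] * len(points)
    cluster = 0
    for i in range(len(points)):
        if labels[i] != UNVISITED:
            continue
        seeds = region_query(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may later be claimed as a border point
            continue
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: reachable but not core
            if labels[j] != UNVISITED:
                continue
            labels[j] = cluster
            neighbors = region_query(j)
            if len(neighbors) >= min_pts:  # j is also core: keep expanding
                queue.extend(neighbors)
        cluster += 1
    return labels


# Toy data: two dense groups plus one isolated point (illustrative only).
data = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 5),
        (10, 10), (10, 11), (11, 10), (11, 11)]
print(dbscan(data, eps=1.5, min_pts=3))  # [0, 0, 0, 0, -1, 1, 1, 1, 1]
```

On the same data, raising `min_pts` to 5 labels every point as noise. This is a small illustration of why appropriate parameter values are hard to select.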

| Strategies | Population-Based | Evolution-Based |
|---|---|---|
| Functions (relation between technique and solution) | Exploration: the technique can reach the best solution within the search space. Exploitation: the ability of the technique to reach the global optimum around the local solutions already obtained. | Optimizes the mathematical functions of the technique with continuously changeable parameters, and can be extended to discrete optimization problems. |
| Application | Metaheuristic search for globally optimal solutions using informative parameters. | Processes of selection, recombination, and mutation. |
| Weakness | Difficult to avoid local minima and premature convergence. | Parameters need to be controlled and adjusted. |
| Aim (both strategies) | Imitate the best features in nature to produce better-quality solutions efficiently. | |

| Techniques | Strategies | Usage | Fitness | References |
|---|---|---|---|---|
| Artificial Bee Colony (ABC) | Population | Simulates the food-searching process of bees based on the quality of the food sources found. | Position and nectar amount of a food source. | [37,82] |
| Ant Colony Optimization (ACO) | Population | Mimics ant behavior to solve optimization problems. | Pheromone values. | [77,83] |
| Ant Lion Optimization (ALO) | Population | Offers high exploitation while exploring the search space, and converges quickly to a global optimum. | Ant location. | [11,33] |
| Bat Algorithm | Population | Uses frequency-based tuning and changes in pulse emission rate, which can lead to better convergence. | Bat behavior. | [78,80,84] |
| Bee Algorithm | Population | Imitates the food-foraging behavior of honeybee swarms to find the optimal solution. | Frequency of the dance. | [85] |
| Cuckoo Search (CS) | Population | Combines the obligate brood-parasitic behavior of some cuckoo species with the Lévy-flight behavior of some birds and fruit flies. | Quality of cuckoo bird eggs. | [79] |
| Firefly Algorithm (FA) | Population | Carries out nonlinear design optimization and solves unconstrained stochastic functions. | Brightness of the firefly. | [70,86] |
| Gravitational Search Algorithm (GSA) | Population | Emulates the law of Newtonian gravity to solve various nonlinear optimization problems. | Intelligence factors. | [87,88] |
| Particle Swarm Optimization (PSO) | Population | Balances the weights of a neural network and sweeps the search space using a swarm of particles. | A “space” where the particles “move”. | [71,77,81,89] |
| Simulated Annealing (SA) | Population | Uses principles of statistical mechanics describing the behavior of many atoms at low temperature. | Single bit-flips. | [90,91] |
| Differential Evolution (DE) | Evolution | Maintains a population of target vectors at each iteration for stochastic search and global optimization. | Global minimum. | [71] |
| Evolution Strategy (ES) | Evolution | Uses normally distributed random mutation as the main operator. | Several operators need to be considered in the analysis. | [92] |
| Evolutionary Programming (EP) | Evolution | Uses the self-adaptation principle to evolve parameters during the search. | No recombination operator; difficult to identify useful values for parameter tuning. | [92] |
| Gene Expression Programming (GEP) | Evolution | Extremely versatile and reported to greatly surpass existing evolutionary techniques. | Several genetic operators must act on selected chromosomes during reproduction. | [93,94] |
| Genetic Algorithm (GA) | Evolution | Uses genes and mechanisms that mimic survival of the fittest, inspired by genetics and the evolution of populations. | Priority of the genetic strings. | [71] |
| Genetic Programming (GP) | Evolution | Automatically selects variables and operators, then assembles them into suitable structures. | No clearly defined termination point in the operating biological processes. | [95,96] |
| Memetic Algorithm | Evolution | Useful when the search space has the property of global convexity. | Genetic operators (crossover and mutation) need to be considered in the analysis. | [97,98,99] |
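Section 3 treats the placement of centroids as an optimization problem. As an illustration of an evolution-based strategy, the sketch below applies the textbook DE/rand/1/bin variant of Differential Evolution to the square sum function of the error, using 1-D data and k = 2. The following are all assumptions for illustration, not settings reported in the cited studies:

- the parameter values (population 20, F = 0.5, CR = 0.9, 200 generations);
- clipping mutants to the search bounds;
- the toy data.

```python
import random


def sse(centroids, data):
    """Square sum function of the error: each 1-D point uses its nearest centroid."""
    return sum(min((x - c) ** 2 for c in centroids) for x in data)


def differential_evolution(objective, bounds, pop_size=20, f=0.5, cr=0.9, generations=200, seed=0):
    """Minimize `objective` over a box using the classic DE/rand/1/bin scheme."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fitness = [objective(ind) for ind in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Mutation: combine three distinct individuals other than the target i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            mutant = [pop[a][d] + f * (pop[b][d] - pop[c][d]) for d in range(dim)]
            # Binomial crossover; j_rand guarantees at least one gene from the mutant.
            j_rand = rng.randrange(dim)
            trial = [
                min(max(mutant[d], bounds[d][0]), bounds[d][1])  # clip to bounds (assumption)
                if (rng.random() < cr or d == j_rand) else pop[i][d]
                for d in range(dim)
            ]
            # Greedy selection: the trial replaces the target only if it is no worse.
            trial_fit = objective(trial)
            if trial_fit <= fitness[i]:
                pop[i], fitness[i] = trial, trial_fit
    best = min(range(pop_size), key=fitness.__getitem__)
    return pop[best], fitness[best]


# Toy 1-D expression values forming two groups (hypothetical data).
data = [0.0, 0.5, 1.0, -0.5, 10.0, 10.5, 9.5, 11.0]
best, best_sse = differential_evolution(lambda v: sse(v, data), bounds=[(min(data), max(data))] * 2)
print(sorted(best), best_sse)
```

Unlike Lloyd's algorithm, DE never uses gradient-like centroid updates. It only compares objective values, which is what allows the same loop to drive other clustering objectives.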

| Criteria of Validation Measurements | Internal | External |
|---|---|---|
| Aim | Assesses the fit between the clustering structure and the data. | Measures performance by matching the cluster structure to prior information. |
| Suffer from bias | | |
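External measures compare a clustering with prior information such as known functional classes. The sketch below implements one widely used formulation of the clustering F-measure: for each class, take the best-matching cluster, then weight by class size. Whether the cited studies use exactly this variant is an assumption, and the function name is illustrative.

```python
from collections import Counter


def clustering_f_measure(classes, clusters):
    """Overall F-measure of a clustering against prior class labels.

    For each class i, take the best F(i, j) = 2PR / (P + R) over clusters j,
    where P = n_ij / n_j (precision) and R = n_ij / n_i (recall); then
    average these best scores weighted by class size n_i / n.
    """
    n = len(classes)
    class_sizes = Counter(classes)
    cluster_sizes = Counter(clusters)
    joint = Counter(zip(classes, clusters))  # n_ij: items of class i placed in cluster j
    total = 0.0
    for i, n_i in class_sizes.items():
        best = 0.0
        for j, n_j in cluster_sizes.items():
            n_ij = joint[(i, j)]
            if n_ij == 0:
                continue  # no overlap: F(i, j) = 0
            p, r = n_ij / n_j, n_ij / n_i
            best = max(best, 2 * p * r / (p + r))
        total += n_i / n * best
    return total
```

A perfect match between classes and clusters scores 1. Splitting or merging classes lowers either recall or precision, and therefore lowers the score.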

| Measurements | Categories | Usage | References |
|---|---|---|---|
| Average of sum of intra-cluster distances | Internal | Assesses cluster compactness or homogeneity. | [11,33] |
| Connectivity | Internal | Degree of connectedness of clusters. | [1,23] |
| Davies and Bouldin (DB) index | Internal | Measures intra-cluster scatter and inter-cluster separation using a spatial dissimilarity function. | [108] |
| Dunn index | Internal | Ratio of the smallest distance between observations in different clusters to the largest intra-cluster distance. | [1,23] |
| Euclidean distance | Internal | Computes distances between objects to quantify their degree of dissimilarity. | [19,31,34,109] |
| Inter-cluster distance | Internal | Quantifies the degree of separation between individual clusters. | [11] |
| Manhattan distance | Internal | Sum of the absolute coordinate differences; in two dimensions, the sum of the lengths of the two legs of the right triangle whose hypotenuse is the Euclidean distance. | [34] |
| Pearson correlation coefficients (PCC) | Internal | Measures between-state functional similarity. | [23,110] |
| Silhouette width | Internal | Measures the degree of confidence in a clustering assignment; lies in the interval [−1, +1], with values near +1 for well-clustered observations and near −1 for poorly clustered ones. | [1,18,19,31,32,109] |
| Square sum function of the error | Internal | Measures cluster quality in terms of compactness or homogeneity. | [12,23,111] |
| Entropy | External | Measures mutual information based on the probability distributions of random variables. | [30,112,113] |
| F-measure | External | Assesses the quality of a clustering result at the level of the entire partition, not only for an individual cluster. | [11,23,30,33] |
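Several internal measures in this table reduce to a few lines of code. The sketch below implements the following, using their standard textbook definitions:

- the Euclidean and Manhattan distances;
- the Dunn index (smallest inter-cluster distance over largest cluster diameter);
- the mean silhouette width.

Clusters are passed as plain lists of points. Two further assumptions apply: scoring singleton clusters as 0 (a common convention), and requiring at least two clusters.

```python
import math


def euclidean(p, q):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


def manhattan(p, q):
    return sum(abs(a - b) for a, b in zip(p, q))


def dunn_index(clusters, dist=euclidean):
    """Smallest between-cluster distance divided by the largest cluster diameter."""
    min_inter = min(
        dist(p, q)
        for i, a in enumerate(clusters)
        for b in clusters[i + 1:]
        for p in a
        for q in b
    )
    max_diameter = max(dist(p, q) for c in clusters for p in c for q in c)
    return min_inter / max_diameter


def mean_silhouette(clusters, dist=euclidean):
    """Average silhouette width s = (b - a) / max(a, b) over all points."""
    scores = []
    for ci, own in enumerate(clusters):
        for pi, p in enumerate(own):
            if len(own) == 1:
                scores.append(0.0)  # singleton convention (assumption)
                continue
            # a: mean distance to the other members of p's own cluster.
            a = sum(dist(p, q) for qi, q in enumerate(own) if qi != pi) / (len(own) - 1)
            # b: smallest mean distance from p to the members of another cluster.
            b = min(
                sum(dist(p, q) for q in other) / len(other)
                for cj, other in enumerate(clusters)
                if cj != ci
            )
            scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)
```

Passing `dist=manhattan` swaps the dissimilarity used by both indices. Both indices reward compact, well-separated clusters, so they can rank candidate partitions without any prior class labels.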

| References | Ensemble Methods | Clustering Techniques | Use |
|---|---|---|---|
| Deng et al. [24] | Grid-based and Density-based Spatial Clustering (GRIDEN) | Grid-based; Density-based (DBSCAN) | Enhances clustering speed. |
| Oyelade et al. [114]; Masciari et al. [119] | Microarray Data Clustering using Binary Splitting (M-CLUBS) | Hierarchical (divisive and agglomerative) | Overcomes the effects of cluster size and shape, number of clusters, and noise in gene expression data. |
| Oyelade et al. [114]; Bouguettaya et al. [120] | Efficient Agglomerative Hierarchical Clustering (KnA) | Hierarchical (agglomerative); Partitioning (k-means) | Gives relatively consistent results on synthetic data. |
| Bouguettaya et al. [120]; Lin et al. [121] | Cohesion-based Self-Merging (CSM) | Partitioning (k-means); Hierarchical (divisive) | Clusters datasets of arbitrary shape very efficiently. |
| Darong and Peng [122] | Grid-based DBSCAN Technique with Referential Parameters (GRPDBSCAN) | Grid-based; Density-based (DBSCAN) | Finds clusters of arbitrary shape and removes noise. |
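Hybrids such as GRIDEN and GRPDBSCAN combine a grid with density criteria. The sketch below shows only the generic idea, not the published algorithm of either method:

1. Bin 2-D points into grid cells.
2. Keep the cells whose point counts reach a density threshold.
3. Merge neighboring dense cells into clusters.

`cell_size` and `min_count` are hypothetical parameters chosen for illustration.

```python
from collections import Counter, deque


def grid_density_clusters(points, cell_size, min_count):
    """Cluster 2-D points by merging adjacent dense grid cells.

    Returns one label per point: a cluster id for points in dense cells,
    or -1 for points in sparse cells (treated as noise).
    """
    cell_of = [(int(x // cell_size), int(y // cell_size)) for x, y in points]
    counts = Counter(cell_of)
    dense = {c for c, n in counts.items() if n >= min_count}

    cell_label = {}
    next_id = 0
    for start in sorted(dense):
        if start in cell_label:
            continue
        # Breadth-first search over dense cells sharing an edge or a corner.
        queue = deque([start])
        cell_label[start] = next_id
        while queue:
            cx, cy = queue.popleft()
            for dx in (-1, 0, 1):
                for dy in (-1, 0, 1):
                    nb = (cx + dx, cy + dy)
                    if nb in dense and nb not in cell_label:
                        cell_label[nb] = next_id
                        queue.append(nb)
        next_id += 1
    return [cell_label.get(c, -1) for c in cell_of]
```

Working on cell counts rather than pairwise distances is what gives grid-based steps their speed advantage. The price is that results depend on the cell size and on the grid's alignment.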

| References | Clustering Techniques | Optimization for Objective Function of Partitioning Clustering Techniques | Clustering Validation |
|---|---|---|---|
| Majhi and Biswal [11,33] | K-means clustering | Ant Lion Optimization (ALO) | |
| Ye et al. [12] | K-means clustering | Cuckoo Search | Square sum function of the error |
| Mary and Raja [30] | K-means clustering | Ant Colony Optimization (ACO) | |
| Garg and Batra [32] | | Cuckoo Search Optimization (CSO) | |
| Acharya et al. [91] | Multi-Objective Based Bi-Clustering | Simulated Annealing (SA) | Euclidean distance |
| Labed et al. [111] | K-Harmonic Means | Cuckoo Search Algorithm (CSA) | |
| Shanmugam and Sekaran [118] | Fuzzy C-Means (FCM) | Ant Lion Optimization (ALO) | Square sum function of the error |
| Carneiro et al. [122] | Network-based techniques (e.g., clustering and dimensionality reduction) | Particle Swarm Optimization (PSO) | Euclidean distance |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Nies, H.W.; Zakaria, Z.; Mohamad, M.S.; Chan, W.H.; Zaki, N.; Sinnott, R.O.; Napis, S.; Chamoso, P.; Omatu, S.; Corchado, J.M. A Review of Computational Methods for Clustering Genes with Similar Biological Functions. Processes 2019, 7, 550. https://doi.org/10.3390/pr7090550
