1. Introduction

A key step in the Oil and Gas chain is midstream natural gas processing, which is necessary to provide an outlet for associated natural gas and to ensure that it meets market specifications for end use [1]. This area is characterized by dynamic operating scenarios, as it must adapt to varying upstream and downstream conditions. These changes translate into several scheduling and production planning scenarios that are reviewed daily as new information becomes available, to support better decision making. This is especially relevant in the context of the Brazilian gas market, where recent regulatory changes yielded an open-market arrangement in which third parties can access natural gas processing infrastructure, such as that belonging to Petrobras [2].

In this new dynamic, the gas processor provides a gas processing service to third-party companies [3]. As a result, several additional production planning processes were created to account for this fast-paced dynamic, which now involves multiple contracting parties [4]. In the Brazilian context, these new scheduling processes now require over a thousand process simulation scenarios per month for each gas processing plant [4]. The situation becomes even more complex when the processing site receives raw gas from multiple feedstocks and comprises multiple Natural Gas Processing Units (NGPUs) and Liquid Fractionation Units (LFUs) with distinct layouts, technologies and performance. The resulting flexibility of the site translates into multiple operating and configuration possibilities, which directly affect the product distribution for the same raw gas inputs [5].

A new simulation strategy is necessary to fulfill the requirements of these new processes: agility, reliability, robustness, and auditability. The traditional first-principles approach, although theoretically sound, demands a long execution time, which hampers industrial applications that require multiple scenarios to be run within a short time window. First-principles simulation is the benchmark approach in this field, and Aspen HYSYS stands out as the market-leading commercial process simulator for natural gas processing [4,5]. This approach is capable of depicting the engineering phenomena underlying the process units. However, it has two drawbacks: (1) the need to understand and model all the phenomena, and to know all the parameters involved, both to develop a new model and to update an existing one [6]; and (2) the high simulation cost, in terms of execution time, for complex models. The latter is particularly relevant when many model evaluations are required, which is the case when (a) the simulation model is coupled with an optimization algorithm or (b) the simulation model is used for multiple-scenario or case-study evaluations, as in this work.

In this context, recent works on the modeling and optimization of a natural gas processing site proposed a business optimization framework coupled with phenomenological modeling for an industrial site with multiple NGPUs and feedstocks [3,4]. The proposed methodology led to promising results; however, the total MINLP optimization time reached almost 24 h, mostly due to the high cost of evaluating the first-principles model in the commercial process simulator Aspen HYSYS, with the Peng–Robinson equation of state as the thermodynamic model [4]. Industrial implementation requires a shorter execution time; therefore, other modeling strategies should be investigated.

In the natural gas market, it is important to optimize the supply chain, particularly the integration between upstream and midstream systems, to maximize gas throughput and meet market demands. This need is amplified by the dynamic nature of upstream operations, where operational adjustments are crucial for optimizing performance. Additionally, there is a lack of standardized methodologies for enterprise-wide optimization and for the evaluation of maximum plant capacity, as only a few works in the literature and few industrial practices explore this integration [3,4]. This highlights the demand for robust, scalable, and efficient optimization systems in the natural gas industry, including fast and reliable models for midstream systems.

A promising approach is to explore machine learning techniques to develop data-driven and/or physics-informed machine learning models. This strategy tends to result in models that demand less computational effort at run time. The shorter execution time might enable real-time simulation and, therefore, use in optimization, control, real-time optimization (RTO), digital twin and rigorous scheduling applications. Machine learning models are also particularly interesting for industrial applications because they can be kept consistent with the real plant: the model parameters can be periodically updated from newly acquired plant data.

Machine learning has been employed in the Oil and Gas industry to generate models for optimization and control, among other applications. For example, Soares et al. (2022) [7] developed a nonlinear model predictive controller (NMPC) for a gas-lift system, in which the internal states were inferred from sensor data using a machine learning algorithm. The literature also presents studies in which machine learning has been applied in gas–oil systems for data-driven process modeling and for forecasting hydrocarbon production. For example, Chahar et al. (2022) and Fernandes et al. (2025) predicted well performance from historical production and reservoir data [8,9], and Han and Kwon (2021) applied deep learning models to shale gas wells [10]. However, the specific use of machine-learning-based input–output flowrate modeling to forecast production in NGPUs has yet to be addressed; to the authors' knowledge, the present study is the first contribution in this regard.

Accordingly, the objective of this study is to develop and evaluate a data-driven modeling strategy for production planning in large-scale natural gas processing under open-market conditions. To fill the gap identified above, this work proposes a new simulation approach that is compatible with the dynamism of the open-market midstream sector: a data-driven model built with the aid of machine learning and tested in an industrial case study at the largest gas processing site in Brazil, a Petrobras asset.

This paper is organized as follows. Section 2 (Materials and Methods) describes the industrial case study, data selection and preprocessing procedures, and the machine learning methods adopted. Section 3 (Results and Discussion) presents the data analysis steps, model development, and prediction performance. Finally, Section 4 summarizes the main conclusions and outlines directions for future work.

2. Materials and Methods

To achieve the objective of this work, a practical case study is used to test the proposed strategy and the resulting data-driven model on an actual industrial plant. The selected site is owned by Petrobras and is the largest natural gas processing plant in Brazil: the Cabiúnas Gas Plant (UTGCAB), located in Macaé, Rio de Janeiro.

Information on UTGCAB operation history was collected, analyzed and used as a data source to develop a data-driven model to predict the output production flowrates based on plant data at the same instant of time.

2.1. System Description

UTGCAB is part of the integrated natural gas processing system of southeastern Brazil (Figure 1) [4]. It is a multi-unit, multi-feedstock gas processing asset that receives raw natural gas from both the Santos and Campos Basins and processes it into four products: sales gas, NGL (natural gas liquids), LPG (liquefied petroleum gas) and C5+ (natural gasoline). As shown in Figure 1, UTGCAB has 5 NGPUs and 3 LFUs, as well as dedicated units for contaminant removal (mercury and CO₂). It also contains auxiliary compression systems and multiple options for routing gas and liquid from the slug catcher to the process units, along with several operating configurations for each processing unit, all of which make it challenging to predict the product flowrates.

2.2. Modeling Approach

When it comes to designing and operating process facilities in the Oil and Gas industry, having a digital model of the process is essential for a wide range of technical evaluations and decision making, such as debottlenecking, technical support, logistics planning, optimization and process design [1]. However, to achieve that, it is necessary to have readily available, accurate, fast and updatable process models, and that is where the challenge remains.

To address this, a deep neural network (DNN) was adopted as the core modeling strategy. DNNs are well recognized for their strong performance in complex nonlinear regression tasks, due to their capacity as universal function approximators and their ability to model intricate patterns from large-scale, high-dimensional data [11,12,13]. Additionally, DNNs have become a widely adopted tool in scientific and engineering applications, supported by a robust body of theoretical research and practical successes. These characteristics make them particularly well-suited for process modeling tasks where both flexibility and accuracy are required. The network architecture, training strategy, and validation procedures are detailed in the following sections.

2.3. Problem Formulation

The formulated problem is a nonlinear steady-state model with the goal of predicting the flowrates of each plant product: sales gas, NGL, LPG and C5+. Both input and output variables are quantitative and continuous and are used in the same instant of time, without any lag.

There are 44 feature candidates, broken down into (1) raw gas and condensate flowrates from the outlet of the slug catchers to their respective destinations, (2) inlet gas flowrates of each NGPU, (3) flare and residual gas, and (4) NGL flowrates to the LFUs. In total, 18 targets were defined, namely the outlet flowrates of each product from each process unit (NGPUs and LFUs).

A feedforward neural network (FNN) framework was used. Even though the dataset is strictly a time series, the goal of this work is to obtain a neural network that models the natural gas processing operation itself. This means that the network must be able to provide an output at any given time from a set of inputs at that same time, regardless of what happened in the past. Therefore, the dataset is treated as a series of independent steady-state observations, which explains the random selection of train/test data and the use of FNNs.

For the input layer, a linear activation function was used, whereas all other layers used ReLU [11]. The input layer has the size of the PCA-reduced feature set, i.e., 22, whereas the output layer has the size of y, in this case, 18. Adam [14] was selected as the optimizer, and the mean squared error (MSE), Equation (1), was used as the loss function during training:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - y_{\mathrm{pred},i}\right)^2 \qquad (1)$$

MSE is one of the most common metrics for regression models; it averages, over the n observations, the squared error between the actual target values (y) and the values predicted by the NN model (y_pred).
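As a small worked illustration of the MSE loss described above, the sketch below evaluates it on a toy array. The numbers are made up for illustration and are not plant data; averaging over both observations and targets is an assumption that matches the usual behavior of a multi-output MSE loss.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error averaged over all observations and targets."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

# Two observations, two targets (illustrative numbers, not plant data).
y_true = [[1.0, 2.0], [3.0, 4.0]]
y_pred = [[1.0, 1.0], [2.0, 4.0]]

# Squared errors are 0, 1, 1 and 0, so the mean is 0.5.
print(mse(y_true, y_pred))
```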

A validation set of 20% was used during training to monitor the validation loss as a reference metric for Early Stopping, with a patience of 30; the maximum number of epochs was set to 1000. Lastly, two regularization techniques were used: (a) Dropout was applied to each hidden layer, with a drop rate of 20%. (b) Elastic Net regularization (a combination of L1 and L2 techniques) was added to each layer with a default λ = 0.01.
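The training setup described above could be sketched in Keras roughly as follows. This is a minimal sketch under stated assumptions, not the authors' code: the same λ is assumed for the L1 and L2 terms, the penalties are assumed to act on the layer weights only, dropout is placed after each hidden layer, and the default layer count, width and learning rate are the optimized values reported in the Conclusions. The helper name `build_fnn` is hypothetical.

```python
from tensorflow import keras

N_INPUTS, N_TARGETS = 22, 18  # principal components in, product flowrates out

def build_fnn(n_hidden=2, n_units=225, learning_rate=1.533e-3,
              drop_rate=0.2, lam=0.01):
    """Feedforward regressor with dropout and elastic-net (L1 + L2) penalties."""
    # Assumption: one lambda shared by the L1 and L2 terms, applied to weights.
    reg = keras.regularizers.L1L2(l1=lam, l2=lam)
    # keras.Input passes values through unchanged (linear input layer).
    model = keras.Sequential([keras.Input(shape=(N_INPUTS,))])
    for _ in range(n_hidden):
        model.add(keras.layers.Dense(n_units, activation="relu",
                                     kernel_regularizer=reg))
        model.add(keras.layers.Dropout(drop_rate))
    # ReLU on the output follows the text ("all other layers used ReLU").
    model.add(keras.layers.Dense(N_TARGETS, activation="relu",
                                 kernel_regularizer=reg))
    model.compile(optimizer=keras.optimizers.Adam(learning_rate=learning_rate),
                  loss="mse", metrics=["mae"])
    return model

early_stop = keras.callbacks.EarlyStopping(monitor="val_loss", patience=30)
model = build_fnn()

# Training call, given arrays Z_train of shape (n, 22) and y_train of shape (n, 18):
# model.fit(Z_train, y_train, validation_split=0.2, epochs=1000,
#           batch_size=8, callbacks=[early_stop])
```

Wrapping the architecture in a function keeps the number of layers, the width and the learning rate as plain arguments, which is convenient when they are later varied by a hyperparameter search.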

Due to the characteristics of deep learning, there is no general rule for the selection of architecture and hyperparameters—although some rules of thumb could be used. In terms of architecture, the goal was to find the most concise neural network that returns a satisfactory output, in order to avoid the curse of dimensionality and overfitting. There are two main architecture parameters: (i) the number of hidden layers and (ii) the number of neurons per layer. The activation function and regularization techniques are also relevant here. There are still other parameters that affect neural network performance, for instance: (iii) learning rate and (iv) batch size.

In order to find the best neural network architecture within a certain parametric space, the Optuna (v4.0.0) package was used for hyperparameter optimization. Parameters (i) to (iv) listed above were manipulated, according to Table 1, and the neural network was trained 500 times, with the objective of minimizing the loss function in the test set.

2.4. Process Dataset

Data selection was one of the most important steps in this work, since the quality of the resulting model is bounded by the quality of the dataset used and, in this particular case, the data come from an industrial source, featuring real-world complexity. The chosen dataset contains reconciled process history data from an industrial natural gas processing plant. The data are available on a daily basis and consist of flowrate values measured or inferred at several distinct locations in the plant. They represent the overall mass balance of the site and the local mass balance of each NGPU. A total of 1282 observations were selected, describing three and a half years of plant operation, from January 2020 to June 2023.

2.5. Modeling Framework

All data analysis and modeling were executed in Python (v3.12) using the Keras API within TensorFlow (v2.18). Auxiliary packages such as scikit-learn (v1.5), NumPy (v1.6), pandas (v2.2.x), Matplotlib (v3.9), and Seaborn (v0.13) were also used. Data analysis and storytelling were performed with the aid of (i) descriptive statistics (mean, variance, and standard deviation), (ii) graphical plots (time-series trends, scatter plots, and histograms), and (iii) unsupervised machine learning techniques. Principal component analysis (PCA) and clustering (hierarchical clustering and K-means) were the unsupervised machine learning methods used for data comprehension.

Then, the supervised-learning model was developed using deep neural networks, aiming to predict the outputs at a given instant t from the inputs at this same time.

3. Results and Discussion

3.1. ETL and Data Wrangling

The first steps carried out were data extraction/transformation/loading (ETL) and data wrangling, such as: header, index and variable type configuration, removal of time points with missing values, and dropping non-useful variables. This procedure resulted in a clean and prepared dataset for feature/target selection.
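The wrangling steps listed above might look as follows in pandas. This is a sketch only: the inline CSV and its column names (`date`, `x1`, `x2`, `comment`, `y1`) are hypothetical placeholders, since the actual tag names of the plant history are not given in the text.

```python
import io
import pandas as pd

# Tiny inline CSV standing in for the reconciled daily history.
# Column names are hypothetical placeholders.
raw = io.StringIO(
    "date,x1,x2,comment,y1\n"
    "2020-01-01,10.0,5.0,ok,14.2\n"
    "2020-01-02,,5.1,ok,14.0\n"
    "2020-01-03,10.4,5.3,ok,14.9\n"
)

# Header and index configuration: first row as header, dates as the index.
df = pd.read_csv(raw, parse_dates=["date"], index_col="date")
df = df.drop(columns=["comment"])   # drop non-useful variables
df = df.astype(float)               # enforce numeric variable types
df = df.dropna(axis=0, how="any")   # remove time points with missing values
print(df.shape)
```

In this toy input, the second day has a missing `x1` value, so it is removed and two complete daily records remain.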

From the original dataset, 44 variables were initially selected as features (x) and 18 as targets (y). After selecting the input variables from the data history, those features were standardized by using the StandardScaler function from the sklearn preprocessing package. This function returned the variables with a mean of zero and standard deviation equal to one, a prerequisite to carrying out PCA.
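The effect of the standardization step can be checked with a short sketch on synthetic features of very different scales (the data below are randomly generated, not plant data):

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Synthetic features with very different magnitudes (not plant data).
X = rng.normal(loc=[100.0, 5.0, 0.2], scale=[20.0, 1.0, 0.05], size=(500, 3))

scaler = StandardScaler()
X_std = scaler.fit_transform(X)

print(X_std.mean(axis=0).round(6))  # approximately 0 for every column
print(X_std.std(axis=0).round(6))   # approximately 1 for every column
```

The fitted `scaler` stores the column means and scales, so `scaler.transform` can later apply the same transformation to newly acquired data.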

The standardized features were individually plotted, as shown in Figure 2. From the plots, it can be observed that variables x₁₈ to x₂₄ exhibit a characteristic behavior associated with gas flow to the flare, namely a baseline of low values with occasional high peaks, reflecting operational events. Additionally, variables x₄₀ and x₄₁ represent the percentage of residual gas returning for processing. The corresponding time series indicate that these variables were effectively operated as binary (0–1) signals, meaning that either all residual gas was mixed with sales gas or it was entirely redirected for further processing. For the remaining variables, no clear temporal trend was identified; therefore, histogram plots are presented to provide further insight into their distributional characteristics.

Feature histograms are shown in Figure 3, indicating that there is no common distributional shape across all variables. While some features, such as x₁, x₁₁, x₁₆, x₁₇, and x₂₅, exhibit approximately normal distributions, most variables present skewed or heavy-tailed distributions with distinct value ranges. This heterogeneity in data distributions, together with the presence of nonlinear relationships among variables, motivates the use of flexible data-driven models, as linear approaches may struggle to adequately capture such complex behaviors.

Finally, a two-dimensional scatter plot analysis was performed and is shown in Figure 4. The plots indicate that some features are strongly correlated, suggesting that they convey redundant information: for example, x₃ with x₄, x₆ with x₇, x₉ with x₁₀, and x₁₆ with x₁₇. This provides an initial indication that dimensionality reduction may be possible without significant loss of variance information, as further assessed via PCA. Conversely, other feature pairs exhibit little apparent correlation, such as x₂₅ and x₂₆, x₁₁ and x₁₂, and x₁₀ and x₁₁.

Train and test sets were then randomly determined from the original dataset with a test size of 20%—a typical reference from the literature. This resulted in 1025 observations for training and 257 for testing.
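The split sizes quoted above can be reproduced with scikit-learn's `train_test_split`, which rounds the test fraction up (0.2 × 1282 = 256.4, giving 257 test rows). The arrays below are zero-filled placeholders and the random seed is an assumption, since the seed used in the study is not stated.

```python
import numpy as np
from sklearn.model_selection import train_test_split

n_obs = 1282
X = np.zeros((n_obs, 44))  # placeholder features
Y = np.zeros((n_obs, 18))  # placeholder targets

X_train, X_test, Y_train, Y_test = train_test_split(
    X, Y, test_size=0.2, shuffle=True, random_state=42  # seed is an assumption
)
print(X_train.shape[0], X_test.shape[0])  # 1025 257
```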

PCA was carried out on the standardized input data. The training set was used to define the transformation space, which was then applied to the test set. The cumulative variance plot in Figure 5 was used to help determine the number of components to keep. The chart shows the total variance captured (y-axis) as a function of the number of principal components included (x-axis). A general rule of thumb is to preserve around 80% of the variance; this is valid for the typical cases in which the first few components account for most of the variance. However, Figure 5 shows that, for this dataset, no single component explains most of the variance. Instead, the explained variance grows incrementally over the first 29 principal components, which together explain 100% of the data variance. The last 15 components are therefore redundant. It was then decided to keep the first 22 components, which explain 95.1% of the total variance.
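The procedure of fitting PCA on the training set, inspecting the cumulative explained variance and projecting both sets can be sketched as below. The data are synthetic and built so that 44 features are driven by only 29 independent sources, which mimics the redundancy described above. Choosing the number of components from a 95% threshold is an assumption made for illustration; in the study the value of 22 was chosen from the cumulative variance plot.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Synthetic stand-in: 44 features generated from 29 independent sources,
# so 15 directions carry no additional variance (not plant data).
latent = rng.normal(size=(1282, 29))
X = StandardScaler().fit_transform(latent @ rng.normal(size=(29, 44)))
X_train, X_test = X[:1025], X[1025:]

# Fit on the training rows only, then inspect the cumulative variance.
pca_full = PCA().fit(X_train)
cum_var = np.cumsum(pca_full.explained_variance_ratio_)
k = int(np.searchsorted(cum_var, 0.95) + 1)  # smallest k reaching 95 %

# Refit with k components and apply the same projection to both sets.
pca = PCA(n_components=k).fit(X_train)
Z_train, Z_test = pca.transform(X_train), pca.transform(X_test)
print(k, Z_train.shape, Z_test.shape)
```

In this synthetic case `cum_var` reaches 1.0 at the 29th component, because the remaining directions are linear combinations of the others.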

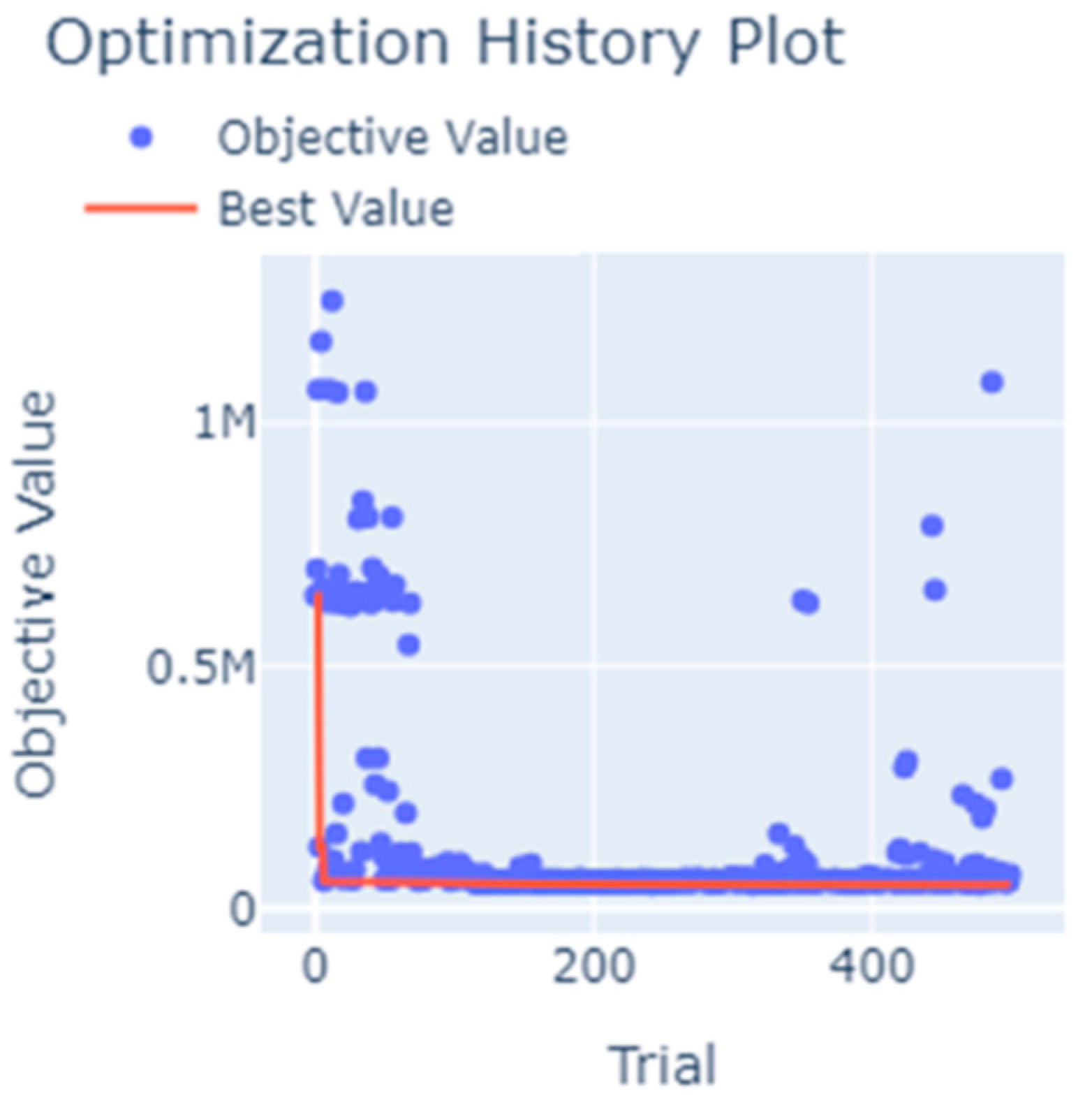

3.2. Framework and Hyperparameters

Figure 6 shows the optimization history plot of the Optuna study. The optimization found an optimum set of hyperparameters within the search space considered, as shown in Table 2.

Optuna results also provide information on relative hyperparameter importance. Table 2 shows that the number of hidden layers and the learning rate are by far the hyperparameters that affect the loss function the most. Batch size, on the other hand, showed very little effect on the results.

From this point on, the optimum parameters from Table 2 are used. This resulted in the optimum model architecture detailed in Table 3.

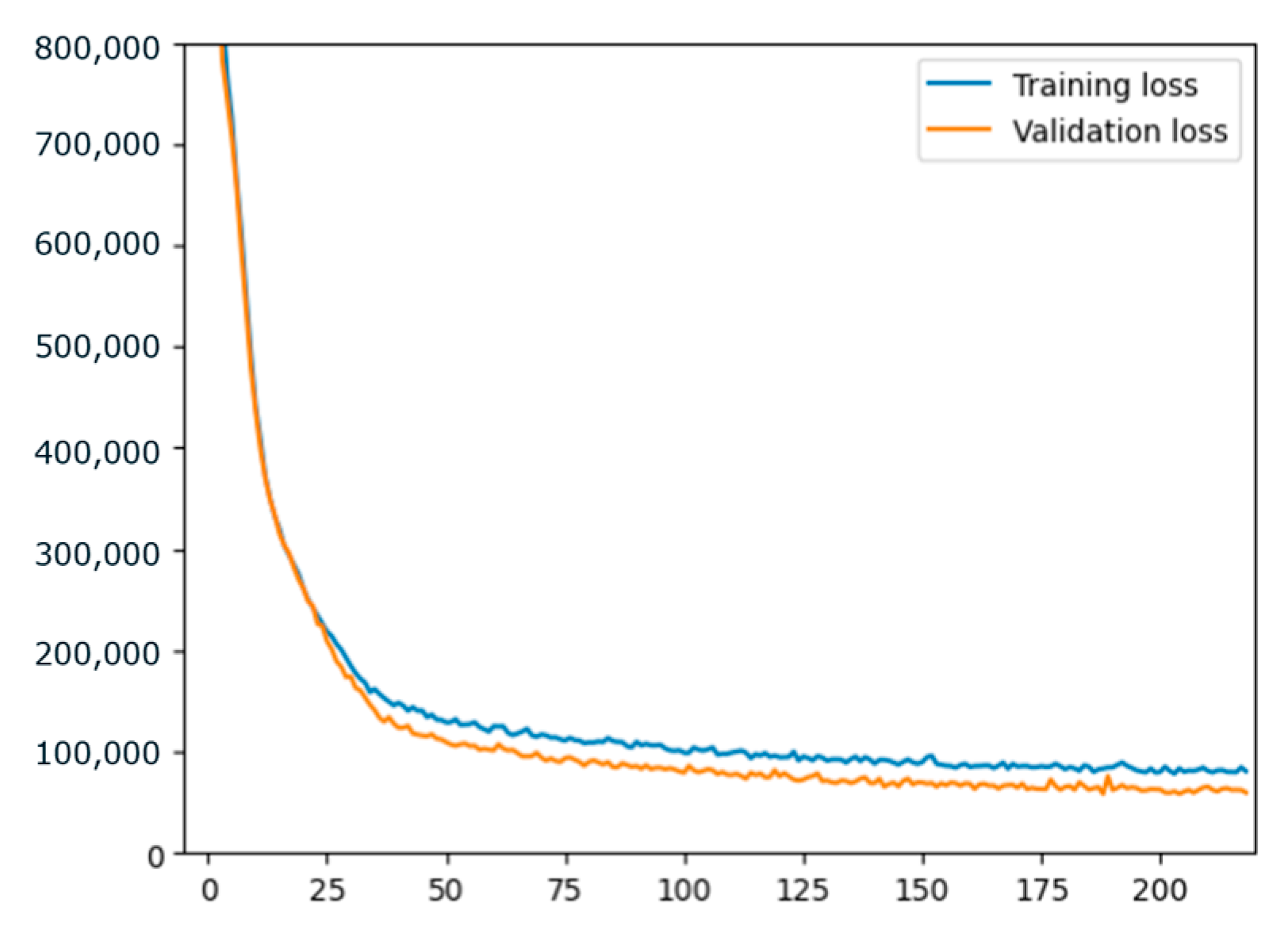

3.3. Performance Results

The FNN was successfully trained, and the convergence is shown in Figure 7. The plot shows that training ran for over 200 epochs; since the validation loss showed no further improvement, training was stopped by the early stopping criterion to avoid overfitting.

After training, the mean absolute error (MAE) was computed, resulting in 0.015 for the training set and 0.017 for the test set; the closeness of the two values suggests that overfitting was not an issue. Another important performance metric, the coefficient of determination (R²), was 0.72 for the test set, a reasonable fit considering that the features only describe the mass balance and that the data come from a complex industrial processing site.
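For reference, both metrics can be computed for a multi-output model with scikit-learn, as in the sketch below. The numbers are illustrative only, and averaging R² uniformly over the targets (the scikit-learn default) is an assumption, since the text does not state how the 18 targets were aggregated.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, r2_score

# Illustrative values only (3 observations x 2 targets), not plant data.
y_true = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]])
y_pred = np.array([[1.0, 12.0], [2.0, 20.0], [4.0, 28.0]])

mae = mean_absolute_error(y_true, y_pred)  # averaged over both targets
r2 = r2_score(y_true, y_pred)              # uniform average of per-target R²

# Per-target MAE is 1/3 and 4/3, so the average is 5/6.
# Per-target R² is 0.5 and 0.96, so the average is 0.73.
print(round(mae, 4), round(r2, 4))
```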

Because industrial datasets of good quality and trustworthy information are not trivial to obtain, only 1282 data points were available. To prevent overfitting, several strategies were employed. First of all, descriptive analysis and PCA results showed that the available data were highly informative about the system of interest, potentially capturing the essence of the underlying process behavior. Then, the following techniques were used: (i) hyperparameter optimization was performed; (ii) regularization techniques were employed, namely a dropout layer after each hidden layer, elastic net regularization (combining L1 and L2 techniques) and early stopping; and (iii) the error curves for both training and validation were evaluated, showing a consistently higher error for the training set than for the validation set during the learning iterations, which is consistent with dropout being active only during training. Additionally, as more data become available, the neural network may be systematically retrained using the same methodology.

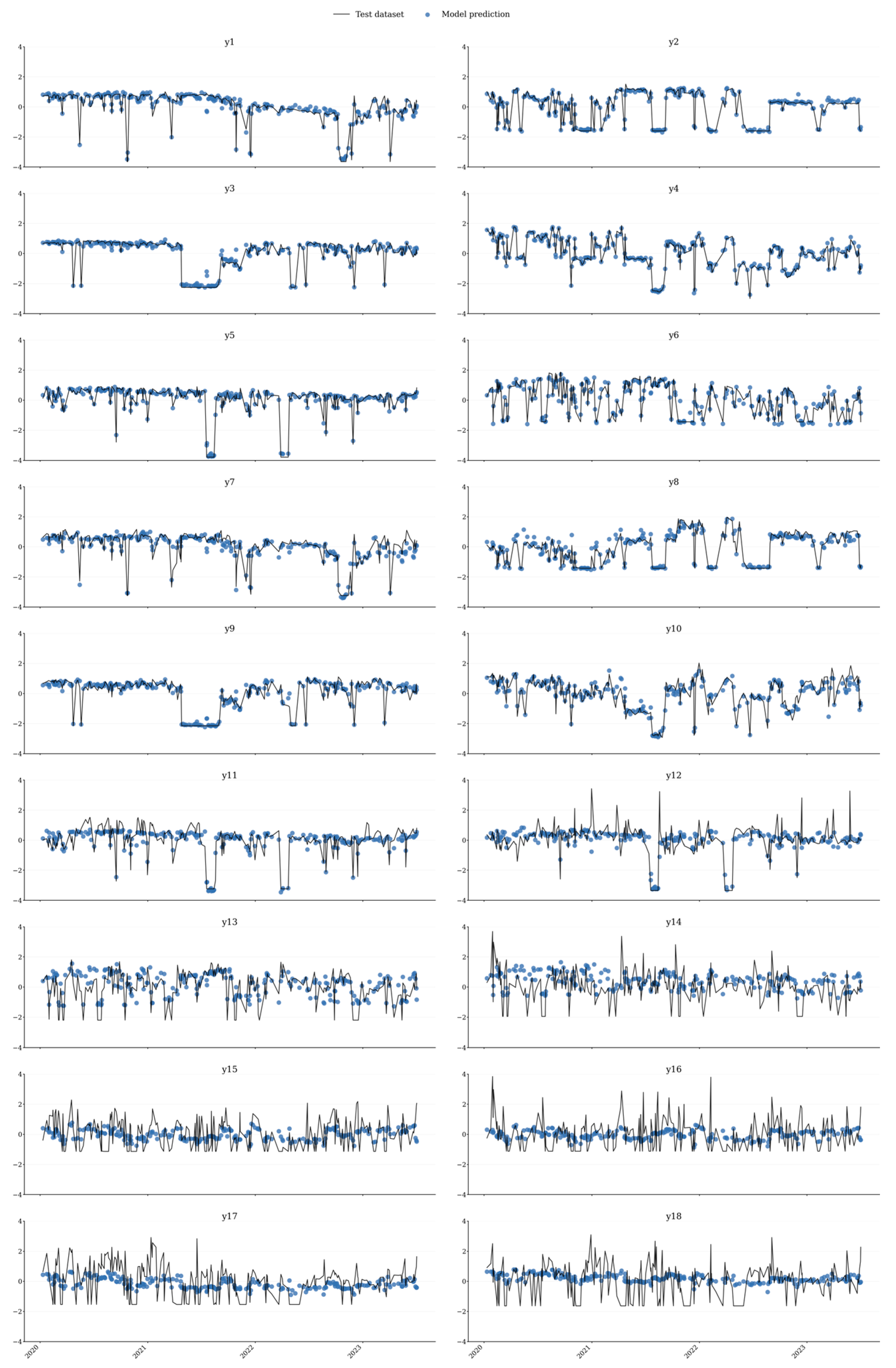

Figure 8 shows the time-series plots of all 18 target variables, comparing the actual values (black line) to the predicted ones (blue dashed line) for the whole y set (training plus test). The values predicted by the FNN follow the overall actual trend of all targets. The fit is particularly good for the first 10 variables (y₁ to y₁₀), which represent the flowrates of sales gas and NGL. The error between actual and predicted values is therefore not equally distributed among the targets: it is systematically higher for y₁₁ to y₁₈, which represent the flowrates of the liquid products LPG and C5+.

This is an interesting and consistent result, since it is known by the Operations team that those liquid product streams are indeed the most difficult to forecast and that they are directly correlated with each other. Also, LPG and C5+ are the products most subject to variations from different operation modes of the plants.
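A per-target breakdown of the kind discussed above can be obtained by asking scikit-learn for one R² value per output column. The sketch below uses synthetic predictions in which the last eight targets are deliberately noisier, purely to illustrate the grouping into y₁ to y₁₀ and y₁₁ to y₁₈; the noise levels are assumptions and do not reproduce the plant results.

```python
import numpy as np
from sklearn.metrics import r2_score

rng = np.random.default_rng(1)
n_obs, n_targets = 200, 18
y_true = rng.normal(size=(n_obs, n_targets))

# Synthetic predictions: small noise on y1..y10, larger noise on y11..y18.
noise_scale = np.r_[np.full(10, 0.2), np.full(8, 0.8)]
y_pred = y_true + rng.normal(size=(n_obs, n_targets)) * noise_scale

r2_each = r2_score(y_true, y_pred, multioutput="raw_values")  # one value per target
gas_ngl = float(r2_each[:10].mean())  # y1..y10: sales gas and NGL
lpg_c5 = float(r2_each[10:].mean())   # y11..y18: LPG and C5+
print(round(gas_ngl, 2), round(lpg_c5, 2))
```

Reporting the metric per product group makes it easier to see which streams limit the aggregate score.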

4. Conclusions

In this study, unsupervised and supervised machine learning techniques were used to model an industrial natural gas processing site owned by Petrobras (UTGCAB). The data consisted of three and a half years of daily industrial mass balance history. The model developed constitutes a solution independent of commercial software. All methods were implemented in a Python/Jupyter framework, using TensorFlow, SciPy, and scikit-learn. The presented solution may be considered portable and adaptive, since the same methodology can be employed to update the model under new operational conditions, making it particularly useful during deployment.

PCA was employed to reduce the data dimensionality from the 44 initial features to 22 principal components that account for 95.1% of the total variance, which were then used as inputs to train a deep learning model. A deep feedforward neural network was the framework chosen, since the objective was to build a data-driven model for production planning as an alternative to the currently used first-principles approach. The architecture and hyperparameters were optimized via the Optuna package, resulting in an FNN with two hidden layers, one dropout layer, 225 neurons per layer, a learning rate of 0.001533 and a batch size of 8.

The FNN was trained with the Adam optimizer and the MSE loss criterion for a maximum of 1000 epochs, with early stopping based on the validation loss. The trained network showed no indication of overfitting, since training and test losses were very close, and resulted in an R² of 0.72, a reasonable fit considering the simplicity of the dataset and that the system is the largest and most complex natural gas processing site in Brazil.

The results showed that the fit in the prediction of sales gas and NGL flowrates was systematically better than for LPG and C5+. This is consistent with operational reality and indicates that LPG and C5+ are the products most affected by internal plant configurations, such as variations in the operating modes and temperatures of the processing units. These results indicate that the model developed in this work is already suitable for predicting sales gas production at the UTGCAB plant. This is important because sales gas is the main product of any gas processing plant and the most complex to handle, as it operates within a network industry where there is no storage between the exit of the gas plant and the final user. This study also paves the way for further research on data-driven modeling of natural gas processing facilities, such as:

Addition of new features with the potential to further improve the prediction of the heavier liquid products. These could be key process variables of each processing unit, such as temperatures and pressures, which could affect the product yield distribution.

Expansion of the set of targets to include product compositions.

Coupling of the data-driven FNN strategy with first-principles knowledge, with the goal of developing a Physics-Informed Neural Network (PINN).

Validation of the developed strategy through application to other NGPUs and exploration of techniques such as transfer learning.

Finally, this research contributes to the global natural gas processing sector by addressing the challenge of developing fast, accurate, flexible, and adaptive models for predicting hydrocarbon production in midstream processing facilities. Deep learning models and process history data are combined for this purpose. In an open-market context, the flexibility of these models is particularly valuable, as dynamic supply and demand fluctuations require real-time adjustments to optimize operations. The proposed framework is scalable and can be adapted to different operational scenarios using the same methodology. Moreover, this research on natural gas processing systems is situated within the broader global effort to reduce greenhouse gas emissions and promote sustainable energy solutions, aligned with the Sustainable Development Goals of the United Nations (SDG 7 and SDG 13).