Bank Stress Testing: A Stochastic Simulation Framework to Assess Banks’ Financial Fragility †

Abstract

1. Introduction

2. The Limitations of Current Stress Testing Methodologies: Moving towards a New Approach

- The consideration of only one deterministic adverse scenario (or at best a very limited number, 2, 3, … scenarios), limiting the exercise’s results to one specific set of stressed assumptions.

- The use of macroeconomic variables as stress drivers (GDP, interest rate, exchange rate, inflation rate, unemployment, etc.), which must then be converted into bank-specific micro risk factor impacts (typically credit risk and market risk impairments, net interest income, regulatory requirement) by resorting to satellite models (generally based on econometric modeling).

- The total stress test capital impact is determined by adding up through a building block framework the impacts of the different risk factors, each of which is estimated through specific and independent silo-based satellite models.

- The satellite models are often applied with a bottom-up approach (especially for credit and market risk), i.e., using a highly granular data level (single client, single exposure, single asset, etc.) to estimate the stress impacts and then adding up all of the individual impacts.

- In supervisory stress tests, the exercise is performed by the banks and not directly by supervisors; the latter set the rules and assumptions and limit their role to oversight and to challenging how banks apply the exercise rules.

- The exclusive focus of the stress testing exercise on one single or very few worst-case scenarios is probably the main limitation of the current approach and precludes its use to adequately assess banks’ financial fragility in broader terms; the best that can be achieved is to verify whether a bank can absorb losses related to that specific set of assumptions and level of stress severity. But a bank can be hit by a potentially infinite number of different combinations of adverse dynamics in all of the main micro and macro variables that affect its capital. Moreover, a specific worst-case scenario can be extremely adverse for some banks that are particularly exposed to the risk factors stressed in the scenario, but not for other banks that are less exposed to those factors. This does not mean that the former banks are, in general, more fragile than the latter; the reverse may be true in other worst-case scenarios. This leads to the thorny issue of how to establish the adverse scenario. What should the relevant adverse set of assumptions be? Which variables should be stressed, and what severity of stress should be applied? This issue is particularly relevant for supervisory authorities when they need to run systemic stress testing exercises, with the risk of setting a scenario that may be either too mild or excessively adverse. Since we do not know what will happen in the future, why should we check for just one single combination of adverse impacts? The “right worst-case scenario” simply does not exist; the ex-ante quest to identify the financial system’s “black swan event” can be a difficult and ultimately useless undertaking. In fact, since banks are institutions in a speculative position by their very nature and structure,5 there are many potential shocks that may severely hit them in different ways. In this sense, the black swan is not that rare, so focusing on only one scenario is too simplistic and intrinsically biased.

- Another critical issue that is related to the “one scenario at a time” approach is that it does not provide the probability of the considered stress impact’s occurrence, lacking the most relevant and appropriate measure for assessing the bank’s capital adequacy and default risk: Current stress test scenarios do not provide any information about the assigned probabilities; this strongly reduces the practical use and interpretation of the stress test results. Why are probabilities so important? Imagine that some stress scenarios are put into the valuation model. It is impossible to act on the result without probabilities: in current practice, such probabilities may never be formally declared. This leaves stress testing in a statistical purgatory. We have some loss numbers, but who is to say whether we should be concerned about them?6 In order to make a proper and effective use of stress test results, we need an output that is expressed in terms of probability of infringing the preset capital adequacy threshold.

- The general assumption that the main threat to banking system stability is typically due to exogenous shock stemming from the real economy can be misleading. In fact, historical evidence and academic debate make this assumption quite controversial.7 Most of the recent financial crises (including the latest) were not preceded (and therefore not caused) by a relevant macroeconomic downturn; generally, quite the opposite was true, i.e., endogenous financial instability caused a downturn in the real economy.8 Hence, the practice of using macroeconomic drivers for stress testing can be misleading because of the relevant bias in the cause-effect linkage, but on closer examination, it also turns out to be an unnecessary additional step with regard to the test’s purpose. In fact, since the stress test ultimately aims to assess the capital impact of adverse scenarios, it would be much better to directly focus on the bank-specific micro variables that affect its capital (revenues, credit losses, non-interest expenses, regulatory requirements, etc.). Working directly on these variables would eliminate the risk of potential bias in the macro-micro translation step. The presumed robustness of the model and the safety net of having an underlying macroeconomic scenario within the stress test fall short when we consider that: (a) we do not know which specific adverse macroeconomic scenario may occur in the future; (b) we have no certainty about how a specific GDP drop (whatever the cause) affects net income; (c) we do not know/cannot consider all other potential and relevant impacts that may affect net income beyond those that are considered in the macroeconomic scenario. Therefore, it is better to avoid expending time and effort in setting a specific macroeconomic scenario from which all impacts should arise, and to instead try to directly assess the extreme potential values of the bank-specific micro variables.
Within a single-adverse-scenario approach, the macro scenario definition serves to ensure comparability in the application of the exercise to different banks and to facilitate the stress test storytelling rationale for supervisor communication purposes.9 However, within the multiple scenarios approach that is proposed, this need no longer exists, and there are other ways to ensure comparability in the stress test. Of course, the recourse to macroeconomic assumptions can also be considered in the stochastic simulation approach proposed, but as we have explained, it can also be avoided; in the illustrative exercise presented below, we avoided modeling stochastic variables in terms of underlying macro assumptions, to show how we can dispense with the false myth of the need for a macro scenario as the unavoidable starting point of the stress test exercise.

- Recourse to a silo-based modeling framework to assess the risk factor capital impacts with aggregation through a building block approach does not ensure a proper handling of risk integration10 and is unfit to adequately manage the non-linearity, path dependence, feedback and cross-correlation phenomena that strongly affect capital in “tail” extreme events. These relationships assume growing relevance as the stress test time horizon and severity increase. Therefore, a necessary step to properly capture the effects of these phenomena in a multi-period stress test is to abandon the silo-based approach and to adopt an enterprise risk management (ERM) model, which, within a comprehensive unitary model, allows us to manage the interactions among the fundamental variables, integrating all risk factors and their impacts in terms of P&L-liquidity-capital-requirements11.

- The bottom-up approach to stress test calculations generally entails the use of satellite econometric models in order to translate macroeconomic adverse scenarios into granular risk parameters, and internal analytical risk models to calculate impairments and regulatory requirements. The highly granular data level employed and the consequent use of the linked modeling systems make stress testing exercises extremely laborious and time-consuming. The high operational cost that is associated with this kind of exercise contributes to limiting analysis to one or few deterministic scenarios. In addition, the high level of fragmentation of input data and the long calculation chain increase the risk of operational errors and make the link between adverse assumptions and final results less clear. The bottom-up approach is well suited for current-point-in-time analysis characterized by a short-term forward-looking risk analysis (e.g., one year for credit risk); the extension of the bottom-up approach into forecasting analysis necessarily requires a static balance sheet assumption, otherwise the cumbersome modeling systems would lack the necessary data inputs. But the longer the forecasting time horizon considered (2, 3, 4, … years), the less sense it makes to adopt a static balance sheet assumption, which compromises the meaningfulness of the entire stress test analysis. The bottom-up approach loses its strength when these shortcomings are considered, generating a false sense of accuracy with considerable unnecessary costs.

- Given the use of macroeconomic adverse scenario assumptions and the bottom-up approach that is outlined above, supervisors are forced to rely on banks’ internal models to perform stress tests. Under these circumstances, the validity of the exercise depends greatly on how the stress test assumptions are implemented by the banks in their models, and on the level of adjustments and derogations they apply (often in an implied way). Clearly, this practice leaves open the risk of moral hazard in stress test development and conduct, and it also affects the comparability of the results, since the application of the same set of assumptions with different models does not ensure a coherent stress test exercise across all of the banks involved.12 Supervisory stress testing should be performed directly by the competent authority. In order to do so, the authority should adopt an approach that does not force it to depend on banks for calculations.13

3. Analytical Framework

3.1. Stochastic Simulation Approach Overview

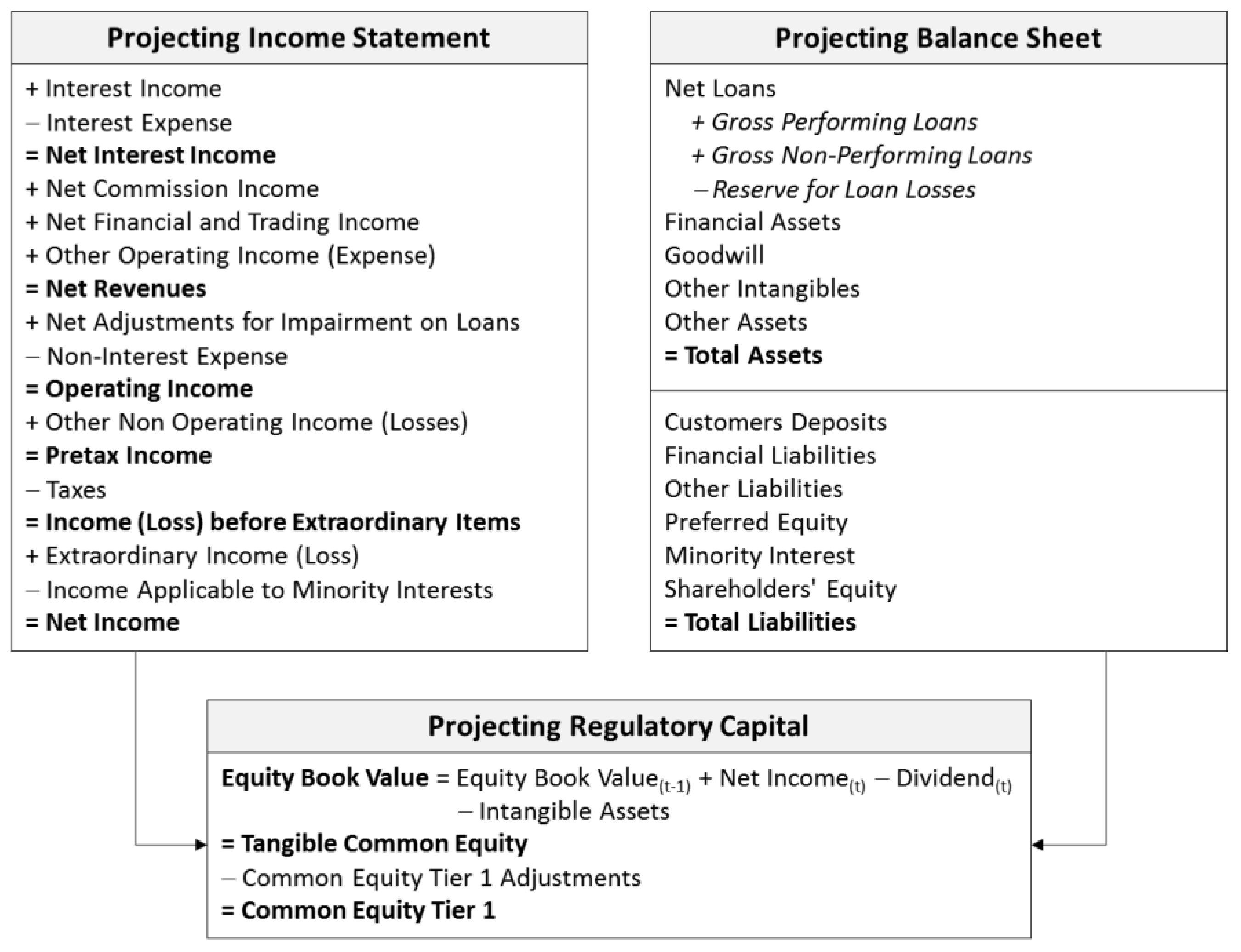

- Multi-period stochastic forecasting model: a forecasting model to develop multiple scenario projections for income statement, balance sheet and regulatory capital ratios, capable of managing all of the relevant bank’s value and risk drivers in order to consistently ensure:

- a dividend/capital retention policy that reflects regulatory capital constraints and stress test aims;

- the balancing of total assets and total liabilities in a multi-period context, so that the financial surplus/deficit generated in each period is always properly matched to a corresponding (liquidity/debt) balance sheet item; and,

- the setting of rules and constraints to ensure a good level of intrinsic consistency and correctly manage potential conditions of non-linearity. The most important requirement of a stochastic model lies in preventing the generation of inconsistent scenarios. In traditional deterministic forecasting models, consistency of results can be controlled by observing the entire simulation development and set of outputs. However, in stochastic simulation, which is characterized by the automatic generation of a very large number of random scenarios, this kind of consistency check cannot be performed, and we must necessarily prevent inconsistencies ex-ante within the model itself, rather than correcting them ex-post. In practical terms, this entails introducing into the model rules, mechanisms and constraints that ensure consistency, even in stressed scenarios.14

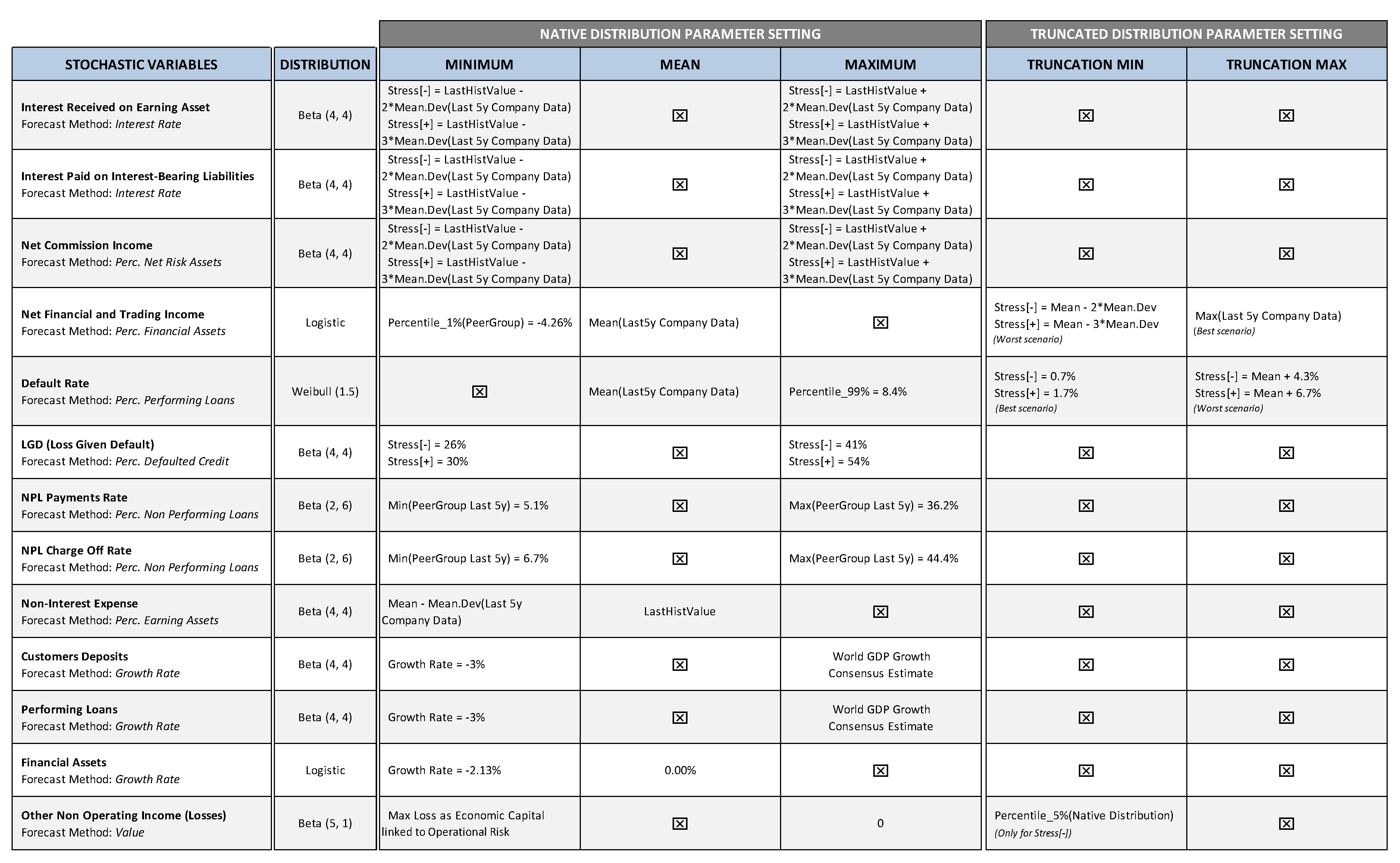

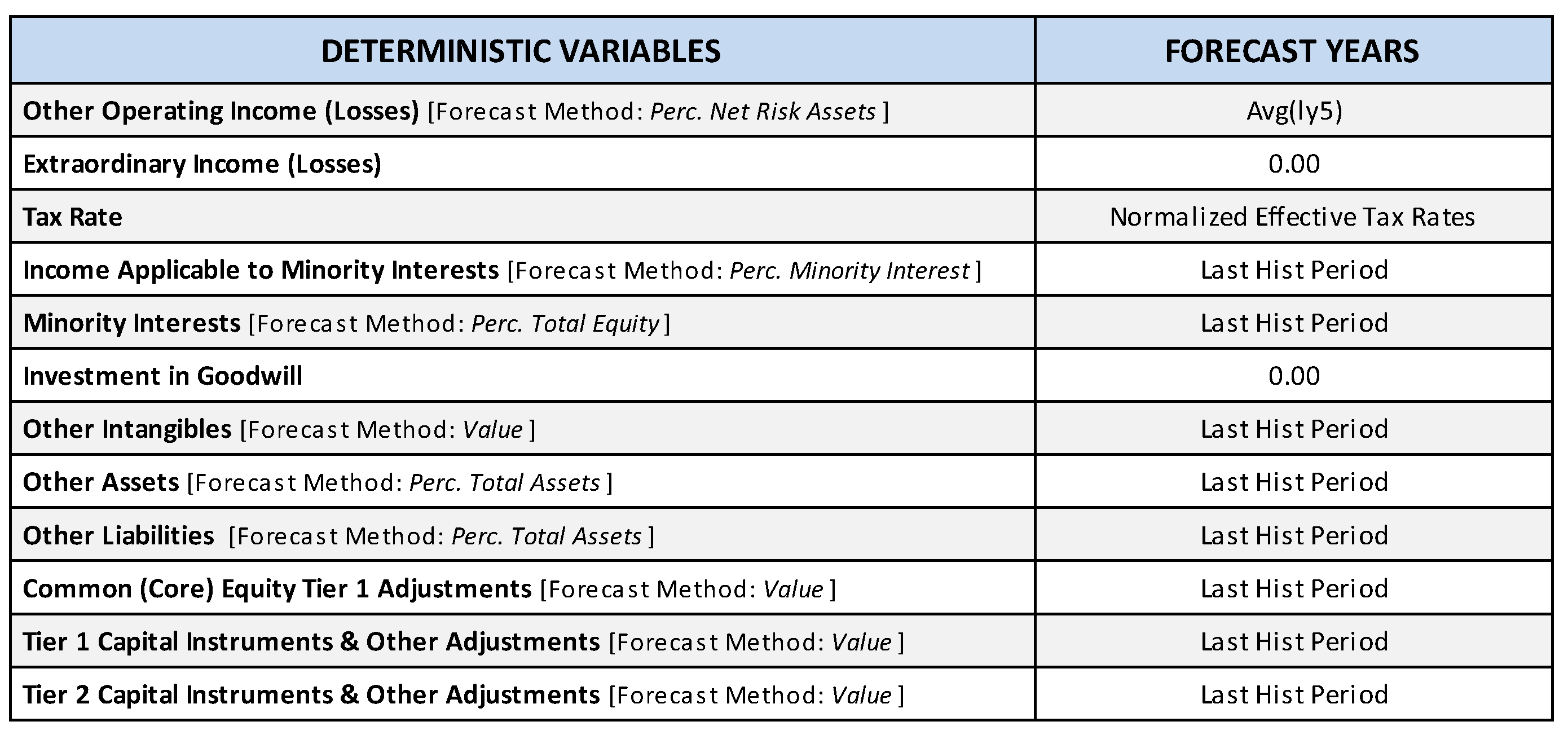

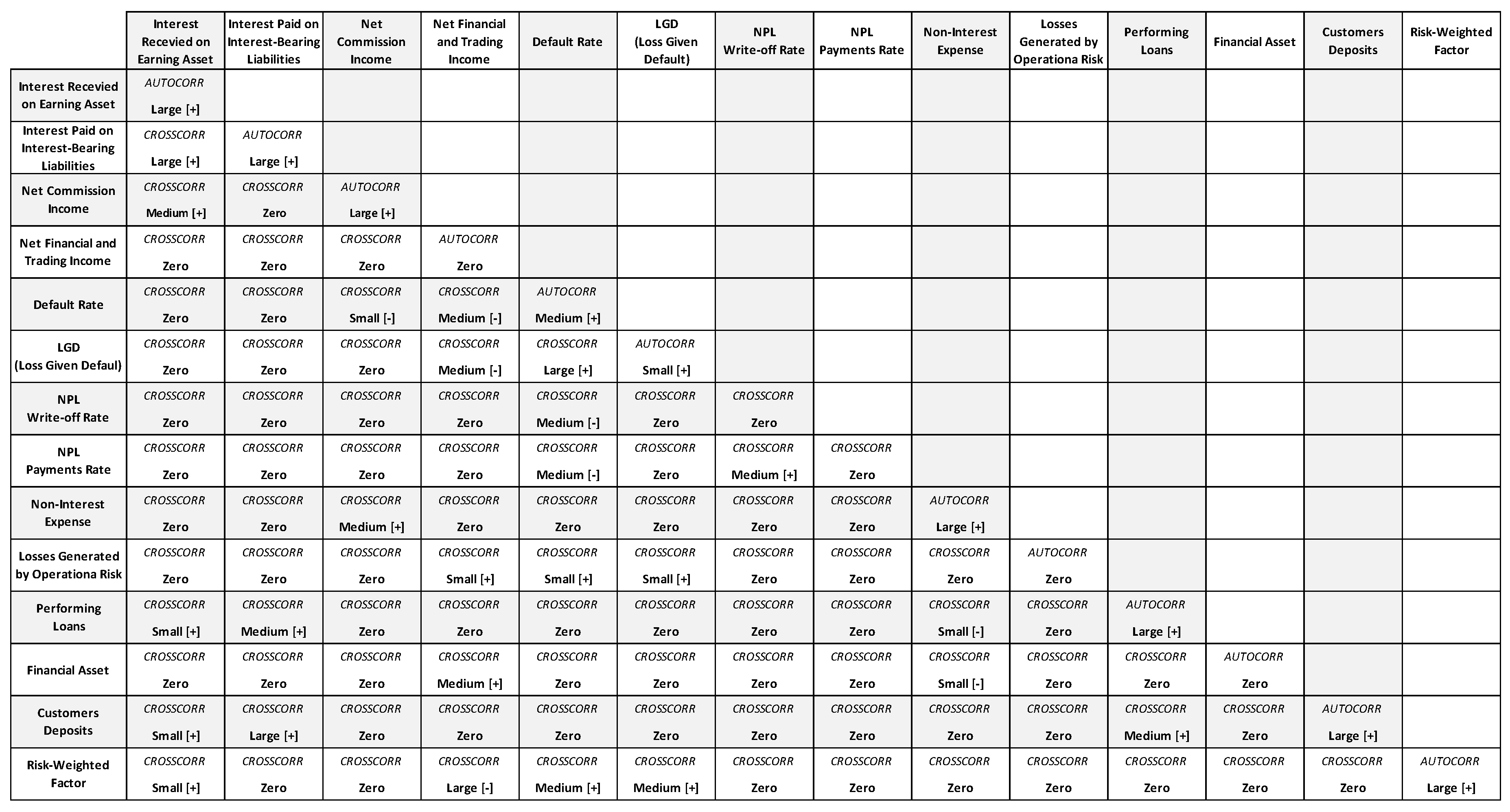

- Forecasting variables expressed in probabilistic terms: the variables that represent the main risk factors for capital adequacy are modeled as stochastic variables and defined through specific probability distribution functions in order to establish their future potential values, while interdependence relations among them (correlations) are also set. The severity of the stress test can be scaled by properly setting the distribution functions of stochastic variables.

- Monte Carlo simulation: this technique allows us to solve the stochastic forecast model in the simplest and most flexible way. The stochastic model can be constructed using a copula-based or other similar approach, with which it is possible to express the joint distribution of random variables as a function of the marginal distributions.15 Analytical solutions, assuming that it is possible to find them, would be too complicated and strictly bound to the functional relation of the model and of the probability distribution functions adopted, so that any changes in the model and/or probability distribution would require a new analytical solution. The flexibility provided by the Monte Carlo simulation, however, allows us to modify stress severity and the stochastic variable probability functions very easily.

- Top-down comprehensive view: the simulation process set-up utilizes a high level of data aggregation, in order to simplify calculation and guarantee an immediate view of the causal relations between input assumptions and results. The model setting adheres to an accounting-based structure, aimed at simulating the evolution of the bank’s financial statement items (income statement and balance sheet) and regulatory figures and related constraints (regulatory capital, RWA–Risk Weighted Assets, and minimum requirements). An accounting-based model has the advantage of providing an immediately-intelligible comprehensive overview of the bank that facilitates the standardization of the analysis and the comparison of the results.16

- Risk integration: the impact of all the risk factors is determined simultaneously, consistently with the evolution of all of the economics within a single simulation framework.
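The combination of probabilistic variables, correlations and Monte Carlo simulation described in the list above can be sketched as follows. This is only an illustration under stated assumptions, not the implementation used in the paper: it assumes a Gaussian copula, two hypothetical risk factors (a default rate and a trading loss rate), arbitrary marginal distributions (a Weibull with made-up shape and scale, and a uniform range), and a made-up correlation of 0.5.

```python
import numpy as np
from statistics import NormalDist

def simulate_risk_factors(n_scenarios=10_000, rho=0.5, seed=0):
    """Draw two correlated risk factors through a Gaussian copula.

    All parameters (rho, Weibull shape/scale, uniform range) are
    hypothetical values chosen only for illustration.
    """
    rng = np.random.default_rng(seed)
    corr = np.array([[1.0, rho], [rho, 1.0]])

    # Step 1: correlated standard normals via the Cholesky factor
    chol = np.linalg.cholesky(corr)
    z = rng.standard_normal((n_scenarios, 2)) @ chol.T

    # Step 2: map to correlated uniforms with the standard normal CDF
    u = np.vectorize(NormalDist().cdf)(z)
    u = np.clip(u, 1e-12, 1.0 - 1e-12)  # guard the inverse CDFs below

    # Step 3: apply the inverse CDF of each marginal distribution
    shape, scale = 1.5, 0.02            # assumed Weibull parameters
    default_rate = scale * (-np.log(1.0 - u[:, 0])) ** (1.0 / shape)
    trading_loss_rate = -0.01 + 0.04 * u[:, 1]  # assumed uniform on [-1%, 3%]
    return default_rate, trading_loss_rate

default_rate, trading_loss_rate = simulate_risk_factors()
print("sample correlation:", np.corrcoef(default_rate, trading_loss_rate)[0, 1])
```

Because the dependence structure (step 1 and 2) is separated from the marginals (step 3), changing stress severity only requires changing the marginal parameters, which is the flexibility the Monte Carlo approach is meant to provide.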

3.2. The Forecasting Model

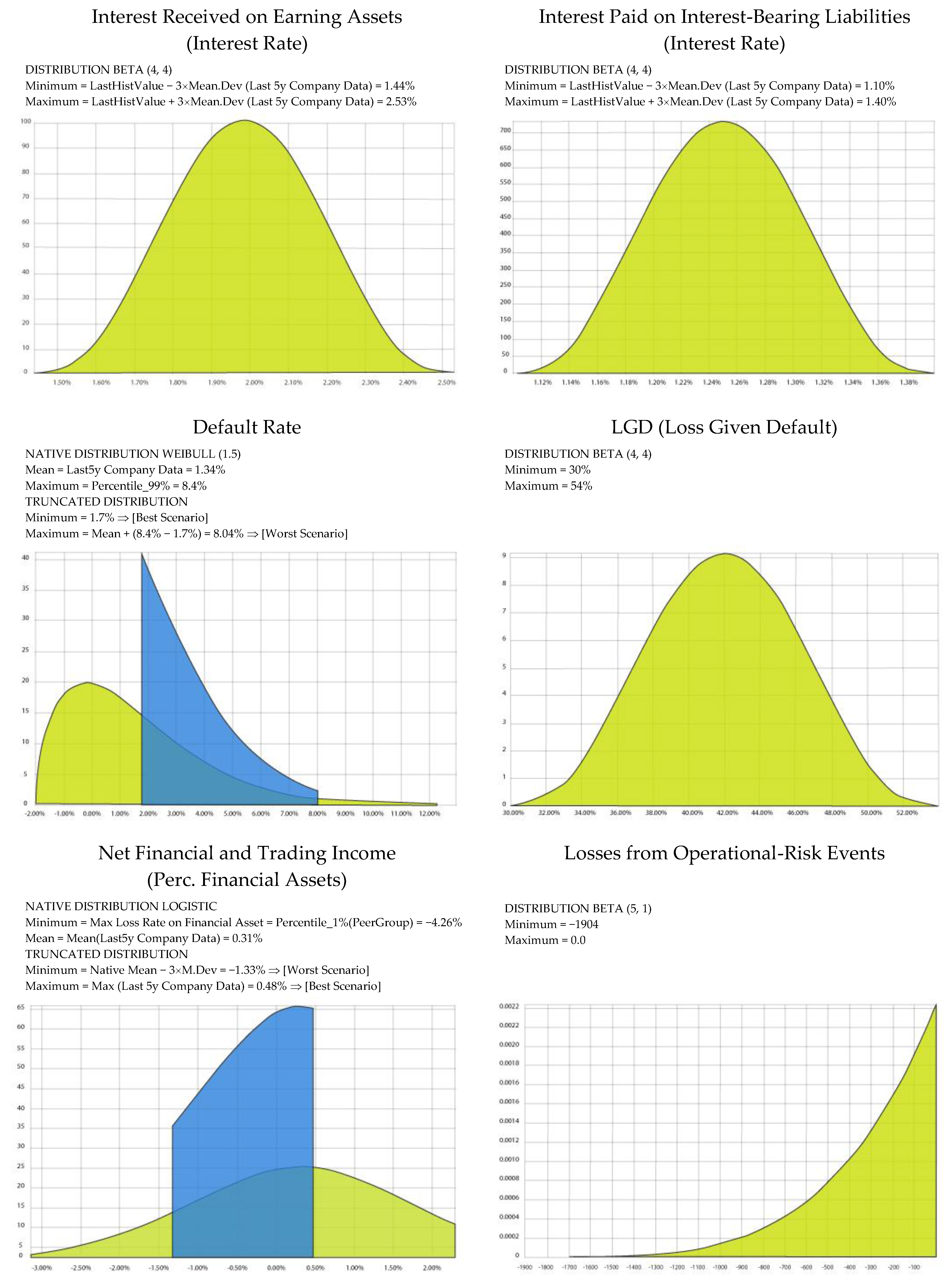

3.3. Stochastic Variables and Risk Factors Modeling

3.4. Results of Stochastic Simulations

3.4.1. Probability of Regulatory Capital Ratio Breach

- Yearly Probability: indicates the frequency of scenarios with which the breach event occurs in a given period. It thus provides a forecast of the bank’s degree of financial fragility in that specific period. [$P(\mathrm{CET1}_t < \mathrm{MinCET1}_t)$]

- Marginal Probability: represents a conditional probability, and it indicates the frequency with which the breach event will occur in a certain period, but only in cases in which said event has not already occurred in previous periods. It thus provides a forecast of the overall risk increase for that given year. [$P(\mathrm{CET1}_t < \mathrm{MinCET1}_t \mid \mathrm{CET1}_1 > \mathrm{MinCET1}_1, \ldots, \mathrm{CET1}_{t-1} > \mathrm{MinCET1}_{t-1})$]

- Cumulated Probability: provides a measure of overall breach risk within a given time horizon, and is given by the sum of marginal breach probabilities, as in (9). [$P(\mathrm{CET1}_1 < \mathrm{MinCET1}_1) + \ldots + P(\mathrm{CET1}_t < \mathrm{MinCET1}_t \mid \mathrm{CET1}_1 > \mathrm{MinCET1}_1, \ldots, \mathrm{CET1}_{t-1} > \mathrm{MinCET1}_{t-1})$]
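The three measures above can be estimated as scenario frequencies from the simulated CET1 paths. The sketch below is a minimal illustration with a hypothetical four-scenario, three-period matrix and a hypothetical 7% threshold. One assumption should be noted: the marginal probability is computed here as the share of all scenarios whose first breach falls in period t, which is the reading under which the marginal probabilities sum to the cumulated probability.

```python
import numpy as np

def breach_probabilities(cet1, min_cet1):
    """Estimate yearly, marginal and cumulated breach frequencies.

    cet1:     array of shape (n_scenarios, n_periods) with simulated ratios
    min_cet1: scalar threshold or array of shape (n_periods,)
    """
    breach = cet1 < min_cet1                      # breach flag per scenario/period
    yearly = breach.mean(axis=0)

    # Number of breaches strictly before each period, per scenario
    prior_breaches = np.cumsum(breach, axis=1) - breach
    first_breach = breach & (prior_breaches == 0)  # breach with no earlier breach
    marginal = first_breach.mean(axis=0)

    cumulated = np.cumsum(marginal)
    return yearly, marginal, cumulated

# Hypothetical simulated CET1 ratios: 4 scenarios x 3 periods
cet1_paths = np.array([
    [0.10, 0.06, 0.08],
    [0.09, 0.08, 0.06],
    [0.06, 0.05, 0.09],
    [0.11, 0.10, 0.09],
])
yearly, marginal, cumulated = breach_probabilities(cet1_paths, 0.07)
print(yearly)     # [0.25 0.5  0.25]
print(marginal)   # [0.25 0.25 0.25]
print(cumulated)  # [0.25 0.5  0.75]
```

In the third scenario the ratio is below the threshold in both the first and second periods, so it counts twice in the yearly frequencies but only once (in the first period) in the marginal and cumulated ones.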

3.4.2. Probability of Default Estimation

- Accounting-Based: In the traditional view, a bank’s risk of default is set in close relation to the total capital held to absorb potential losses and to guarantee debt issued to finance assets held. According to this logic, a bank can be considered in default when the value of capital (regulatory capital, or, alternatively, equity book value) falls beneath a pre-set threshold. This rationale also underlies the Basel regulatory framework, on the basis of which a bank’s financial stability must be guaranteed by minimum capital ratio levels. In consideration of the fact that this threshold constitutes a regulatory constraint on the bank’s viability and also constitutes a highly relevant market signal, we can define the event of default as a common equity tier 1 ratio level below the minimum regulatory threshold, which is currently set at 4.5% (7% with the capital conservation buffer) under Basel III regulation. An interesting alternative to utilizing the CET1 ratio is to use the leverage ratio as an indicator to define the event of default, since, not being related to RWA, it has the advantage of not being conditioned by risk weights, which could alter comparisons of risk estimates between banks in general and/or banks pertaining to different countries’ banking systems.22 The tendency to make the leverage ratio the pivotal indicator is confirmed by the role that is envisaged for this ratio in the new Basel III regulation, and by recent contributions to the literature proposing the leverage ratio as the leading bank capital adequacy indicator within a more simplified regulatory capital framework.23 Therefore, the probability of default (PD) estimation method entails determining the frequency with which, in the simulation-generated distribution function, CET1 Ratio (or leverage ratio) values below the set threshold appear. The means for determining cumulated PD at various points in time are those we have already described for probability of breach.

- Value-Based: This method essentially follows the theoretical premises of the Merton approach to PD estimation,24 according to which a company’s default occurs when its enterprise value is lower than the value of its outstanding debt; this equates to a condition in which equity value is less than zero:25

3.4.3. Economic Capital Distribution (Value at Risk, Expected Shortfall)

3.4.4. Potential Funding Shortfalls: A Forward-Looking Liquidity Risk Proxy

3.4.5. Heuristic Measure of Tail Risk

4. Stress Testing Exercise: Framework and Model Methodology

- Credit risk: modeled through the item loan losses; we adopted the expected loss approach, through which yearly loan loss provisions are estimated as a function of three components: probability of default (PD), loss given default (LGD), and exposure at default (EAD).

- Market risk: modeled through the item trading and counterparty gains/losses, which includes mark-to-market gains/losses, realized and unrealized gains/losses on securities (AFS/HTM) and the counterparty default component (the latter is included in market risk because it depends on the same driver as financial assets). The risk factor is expressed in terms of losses/gains rate on financial assets.

- Operational risk: modeled through the item other non-operating income/losses; this risk factor has been directly modeled, making use of the corresponding regulatory requirement reported by the banks (considered as maximum losses due to operational risk events); for those banks that did not report any operational risk requirement, we used as a proxy the G-SIB sample’s average weight of operational risk over total regulatory requirements.

- Bank’s track record (latest five years).

- Industry track record, based on a peer group sample made up of 73 banks from different geographic areas comparable with the G-SIB banks.34

- Benchmark risk parameters (PD and LGD) based on Hardy and Schmieder (2013).
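As a worked illustration of the expected loss approach used for credit risk, the sketch below multiplies the three components for a single period. The exposure of 100 is a hypothetical figure; the default rates and LGDs are the "normal" and "severe" benchmark values listed in Appendix A. The function name is ours and the calculation is a simplification that treats the default rate as the PD.

```python
def loan_loss_provision(pd_rate, lgd, ead):
    """Expected loss for one period: PD x LGD x EAD."""
    return pd_rate * lgd * ead

ead = 100.0  # hypothetical exposure at default (e.g., in billions)

# Benchmark parameters for advanced countries as listed in Appendix A
normal_loss = loan_loss_provision(0.007, 0.26, ead)
severe_loss = loan_loss_provision(0.05, 0.41, ead)

print(round(normal_loss, 3))  # 0.182
print(round(severe_loss, 3))  # 2.05
```

With these inputs, moving from the normal to the severe benchmark raises the yearly provision from about 0.18% to about 2.05% of the exposure.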

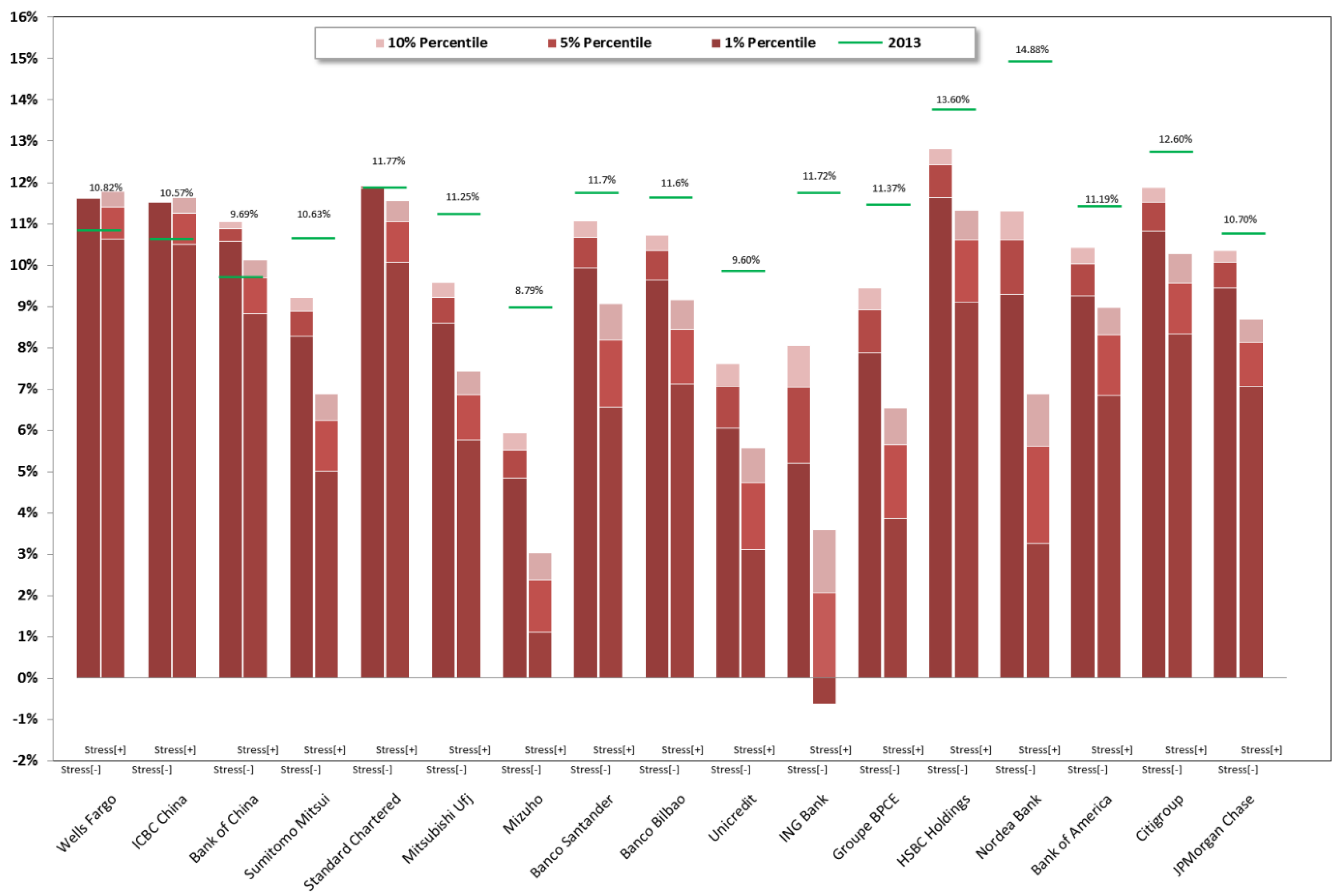

5. Stress Testing Exercise: Results and Analysis

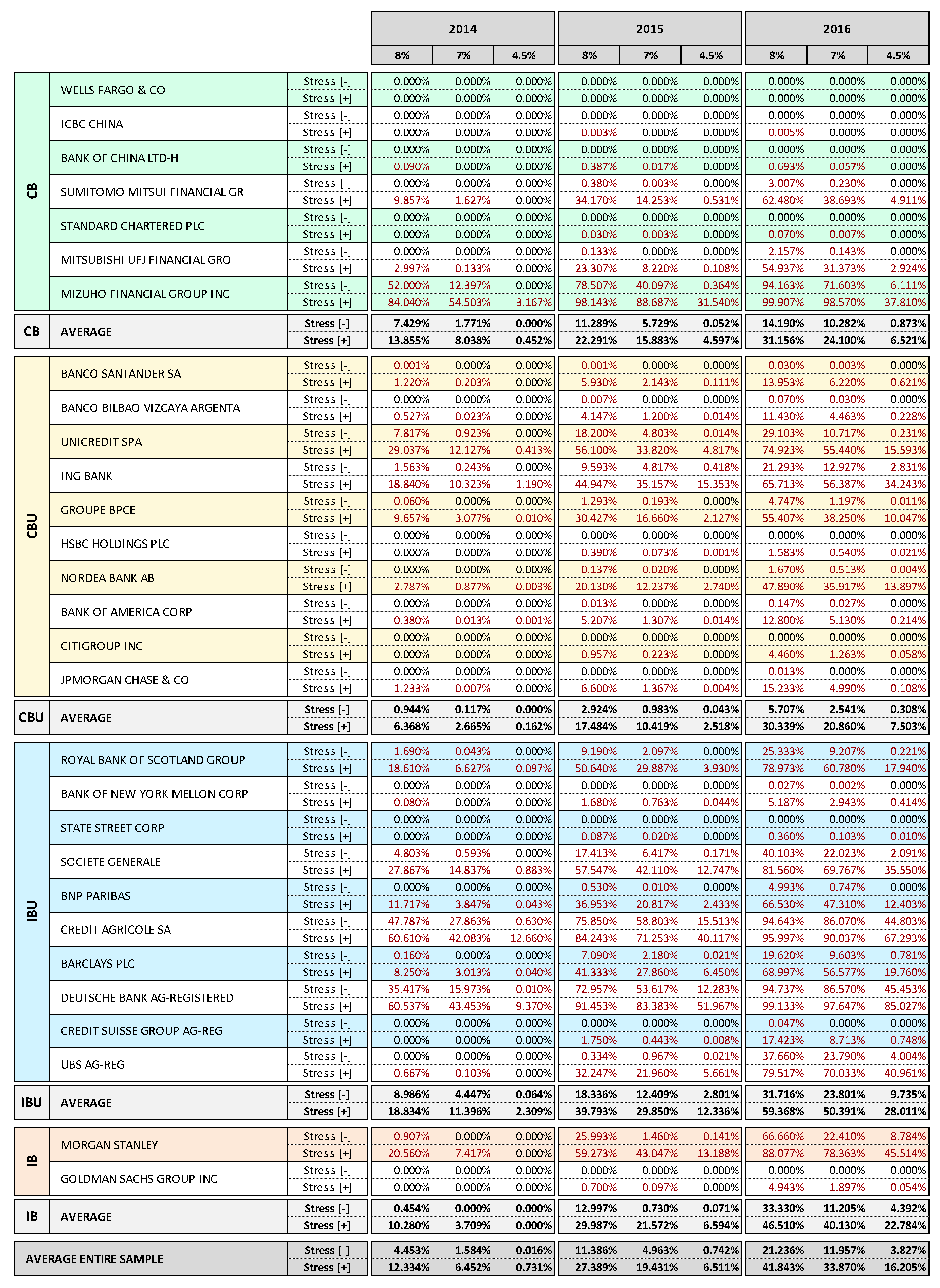

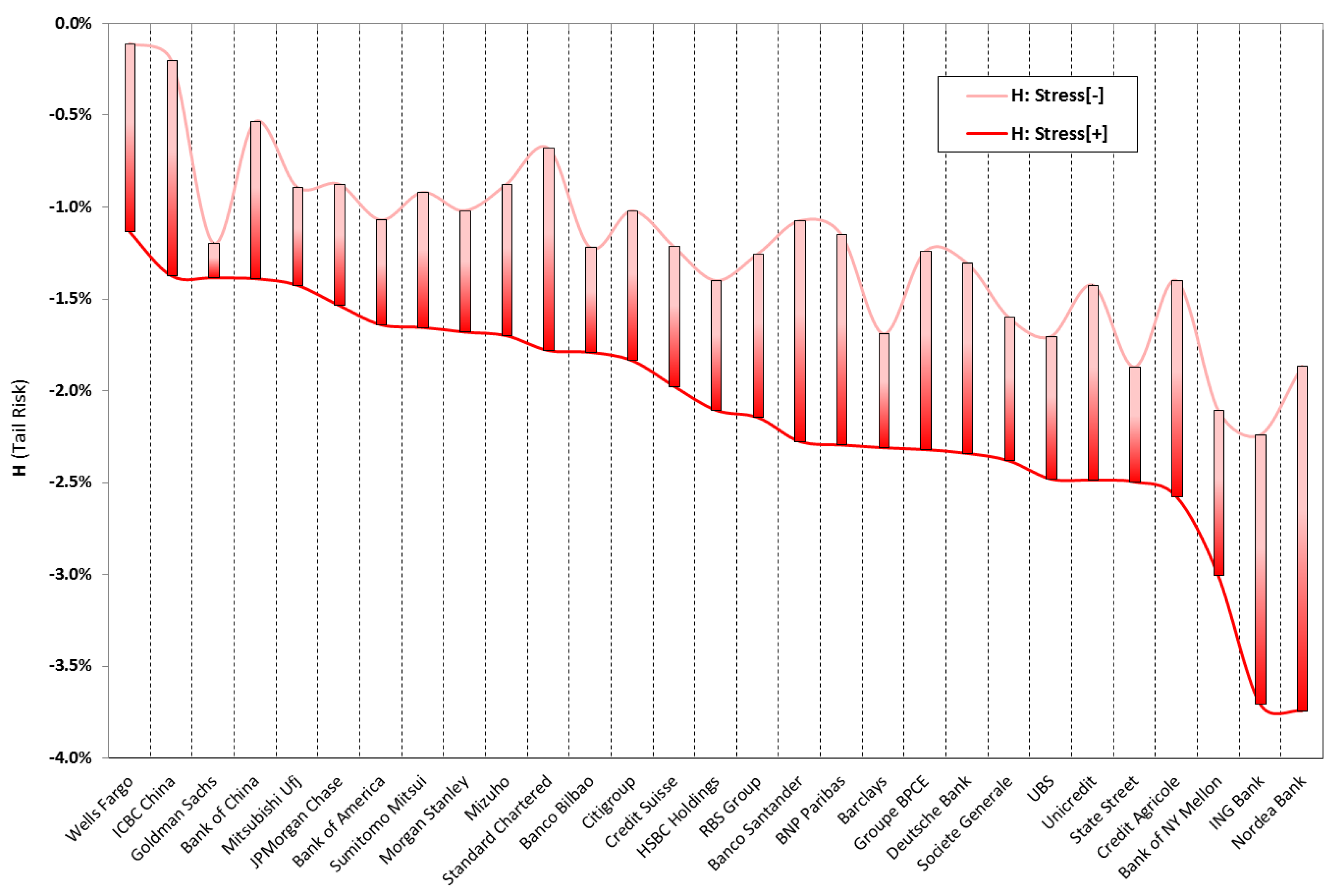

- Current capital base level: banks with higher capital buffers in 2013 came through the stress test better, although this element is not decisive in determining the bank’s fragility ranking.

- Interest income margin: banks with the highest net interest income are the most resilient to the stress test.

- Leverage: banks with the highest leverage are among the most vulnerable to stressed scenarios.36

- Market risk exposures: banks that are characterized by significant financial asset portfolios tend to be more vulnerable to stressed conditions.

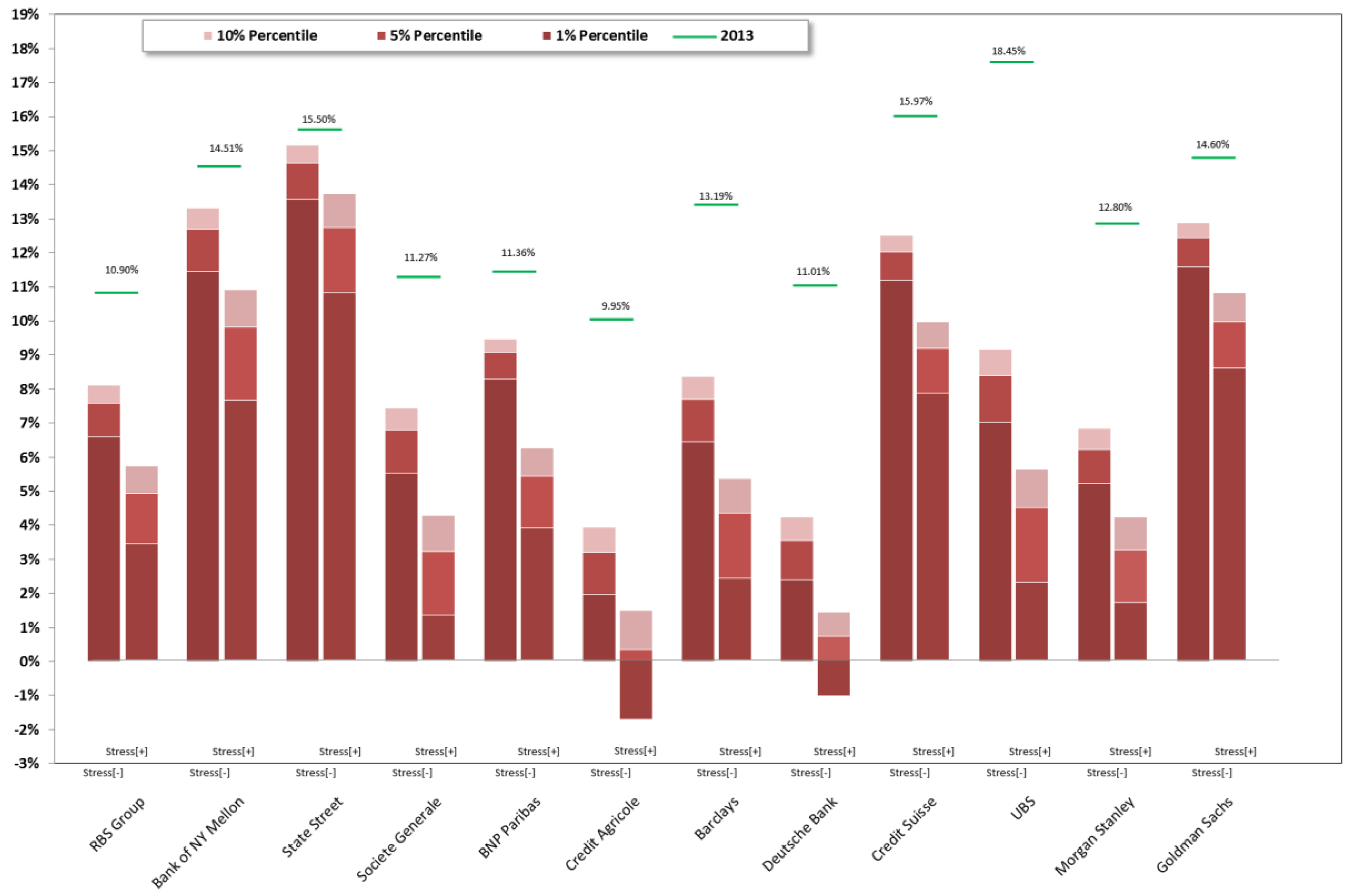

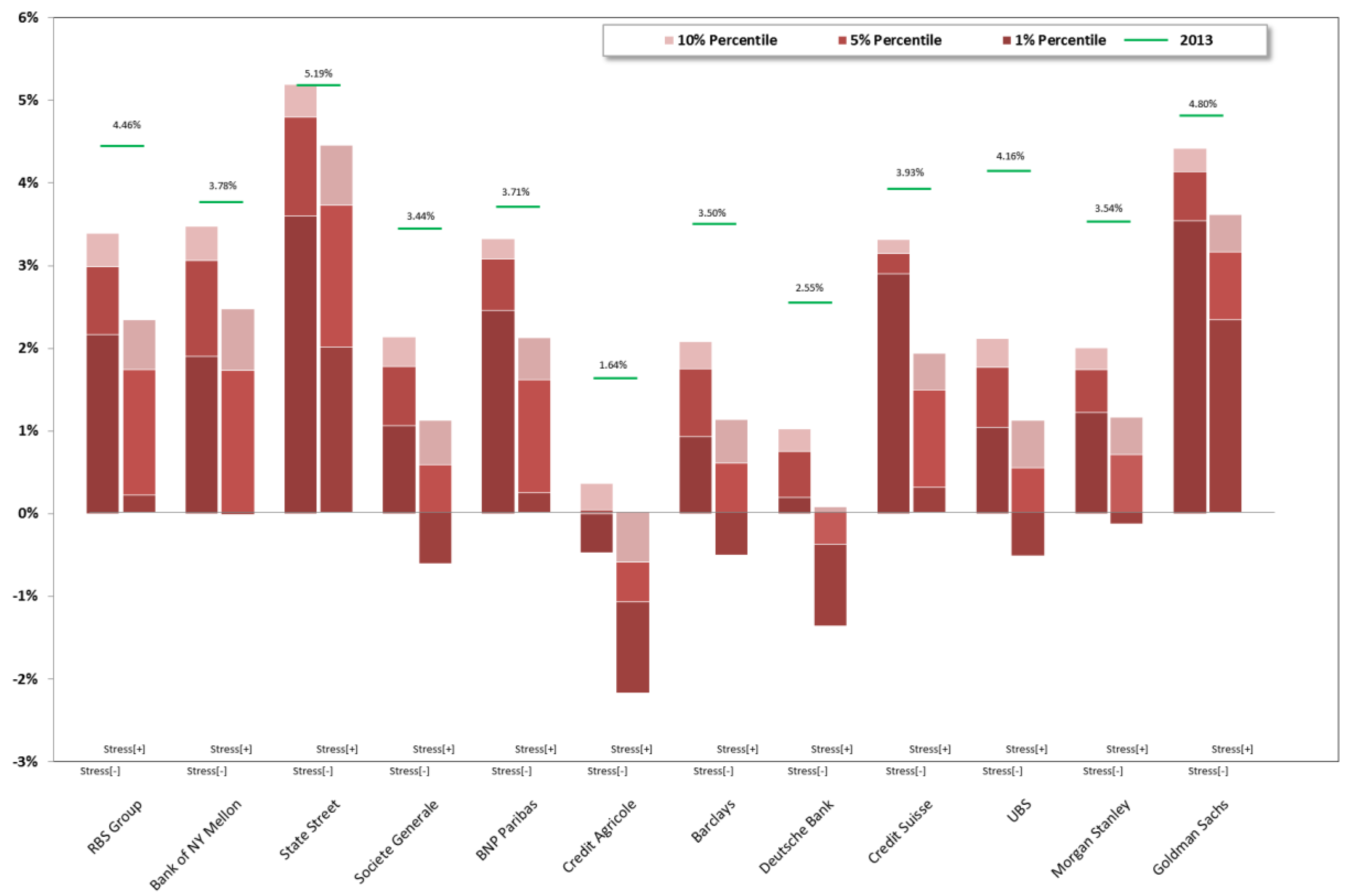

5.1. Supervisory Approach to SREP Capital Requirement

- Set a common predetermined minimum capital ratio “trigger” (α%); of course, this can be done by considering regulatory prescriptions, for example, 4.5% of CET1 ratio, or 7% of CET1 ratio while considering the capital conservation buffer as well.

- Set a common level of confidence “probability threshold” (x%); this probability should be fixed according to the supervisor’s “risk appetite” and also considering the trigger level: the higher the trigger, the lower the probability threshold can be set, since there are higher chances of hitting a high trigger.

- Run a stochastic simulation for each single financial institution with a common standard methodological paradigm.

- Look, for each bank, at the CET1 ratio probability distribution that is generated through the simulation in order to determine the CET1 ratio at the percentile of the distribution that corresponds to the probability threshold ($\text{CET1 Ratio}_{x\%}$).

- Compare the value of $\text{CET1 Ratio}_{x\%}$ to the trigger ($\alpha\%$) in order to see if there is a capital shortfall (−) or excess (+) at that confidence level ($\text{CET1 Ratio}_{x\%} - \alpha\% = \pm\Delta\%$); this difference can be transformed into a capital buffer equivalent by multiplying it by the RWA generated in the scenario that corresponds to the percentile threshold ($\pm\Delta\% \cdot \text{RWA}_{x\%}$).

- Calculate the SREP capital requirement by adding the buffer to the capital position held by the bank at time t0 in the case of capital shortfall at the percentile threshold, or by subtracting the buffer in the case of excess capital; the capital requirement can also be expressed in terms of a ratio by dividing the above capital requirement by the outstanding RWA at t0 or by the leverage exposure if the relevant capital ratio that is considered is the leverage ratio37.
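The last three steps of the procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function and argument names are ours, the percentile scenario is picked with a simple order-statistic rule (other quantile conventions are possible), and the five scenarios, the 7% trigger, the 25% probability threshold and the t0 figures are hypothetical numbers chosen so that the arithmetic can be followed by hand.

```python
import numpy as np

def srep_requirement(cet1_ratio, rwa, capital_t0, rwa_t0, trigger, prob_threshold):
    """Derive a capital requirement from simulated CET1 ratios and RWA.

    cet1_ratio, rwa: arrays with one entry per simulated scenario
    """
    # Scenario sitting at the chosen percentile of the CET1 ratio distribution
    order = np.argsort(cet1_ratio)
    idx = order[int(np.floor(prob_threshold * (len(cet1_ratio) - 1)))]
    ratio_x = cet1_ratio[idx]

    delta = ratio_x - trigger          # negative = shortfall, positive = excess
    buffer = delta * rwa[idx]          # buffer in currency units at that scenario

    # Add the buffer to t0 capital on a shortfall, subtract it on an excess
    requirement = capital_t0 - buffer
    return ratio_x, delta, buffer, requirement, requirement / rwa_t0

# Hypothetical simulation output: 5 scenarios
cet1_sim = np.array([0.04, 0.06, 0.08, 0.10, 0.12])
rwa_sim = np.array([520.0, 510.0, 500.0, 490.0, 480.0])

ratio_x, delta, buffer, requirement, requirement_ratio = srep_requirement(
    cet1_sim, rwa_sim, capital_t0=50.0, rwa_t0=500.0,
    trigger=0.07, prob_threshold=0.25,
)
print(ratio_x, round(delta, 4), round(buffer, 2),
      round(requirement, 2), round(requirement_ratio, 4))
```

In this example the ratio at the 25% threshold is 6%, one percentage point below the trigger, so the shortfall of 5.1 (1% of the 510 RWA in that scenario) is added to the 50 of capital held at t0, giving a requirement of 55.1, or about 11% of RWA at t0.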

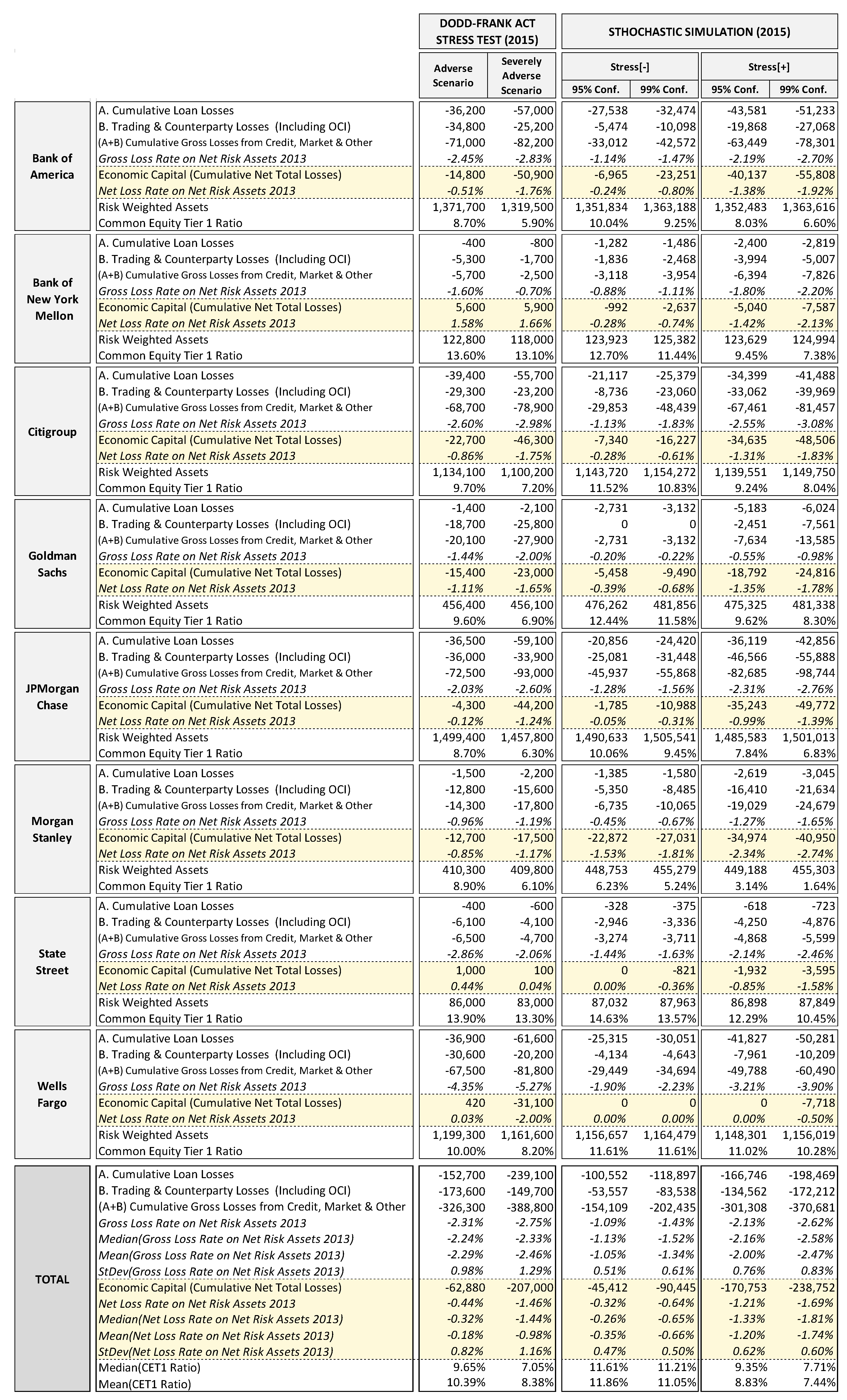

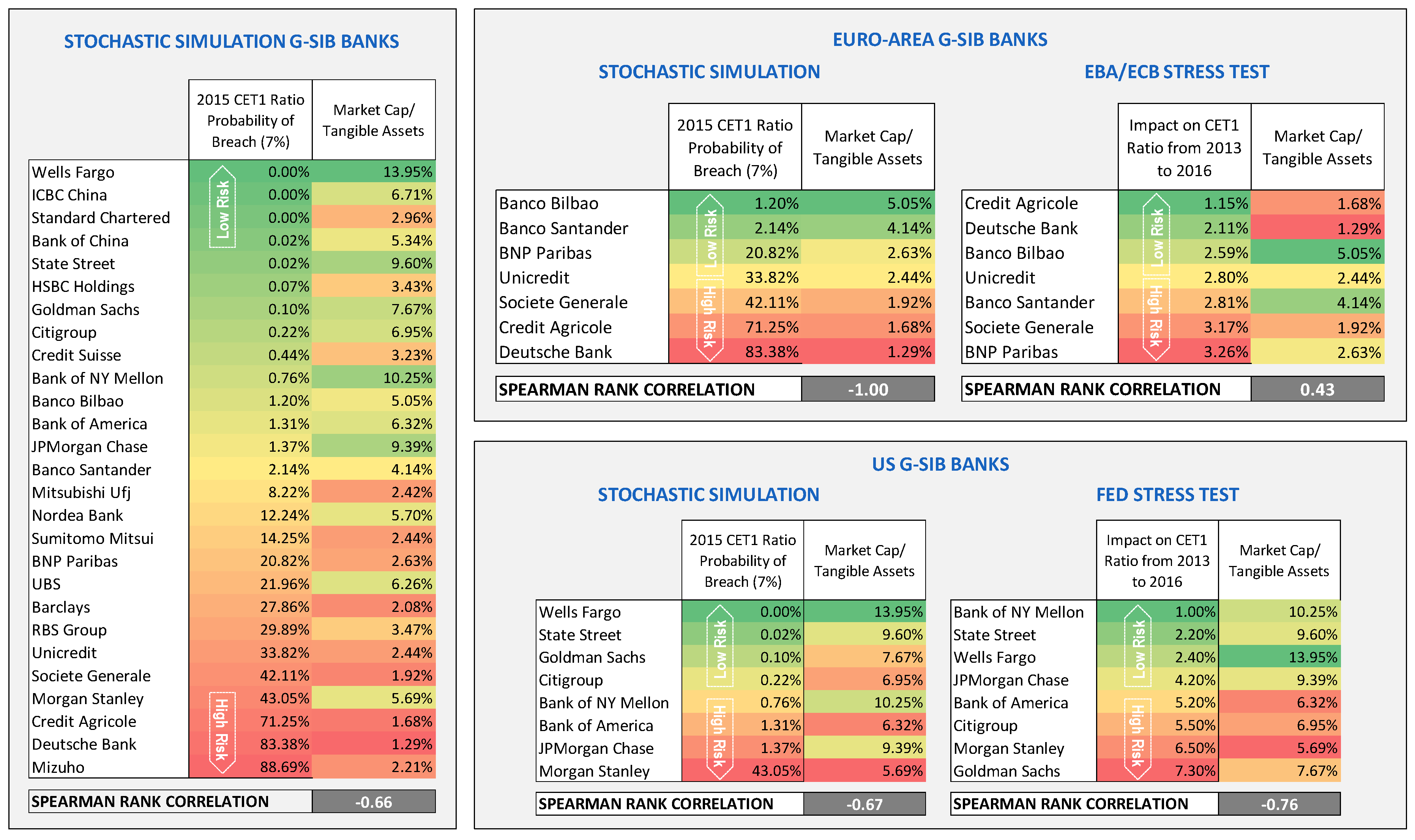

5.2. Stochastic Simulations Stress Test vs. Fed Supervisory Stress Test

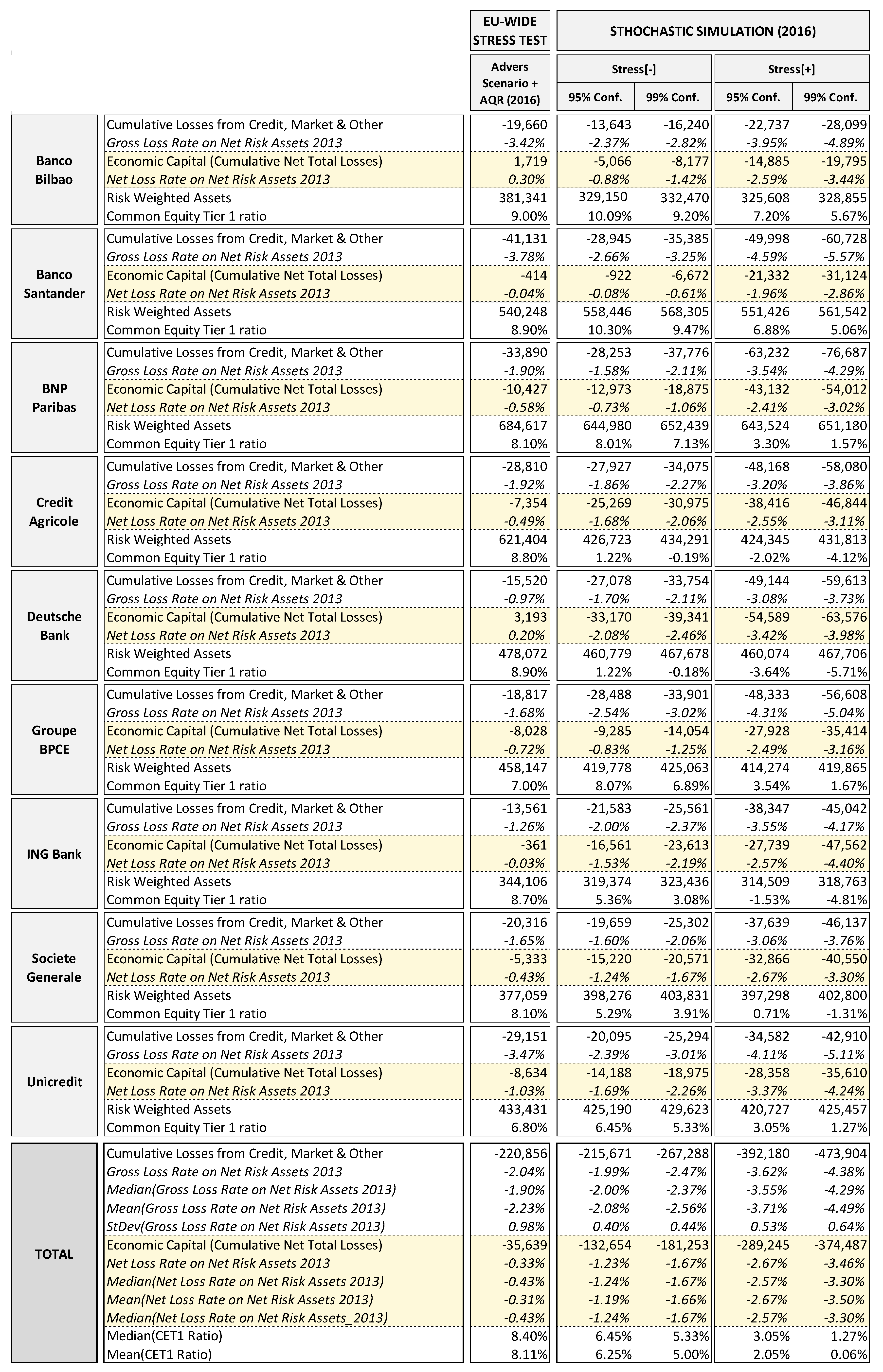

5.3. Stochastic Simulations Stress Test vs. EBA/ECB Supervisory Stress Test

5.4. Relationship between Stress Test Risk and Market Valuation

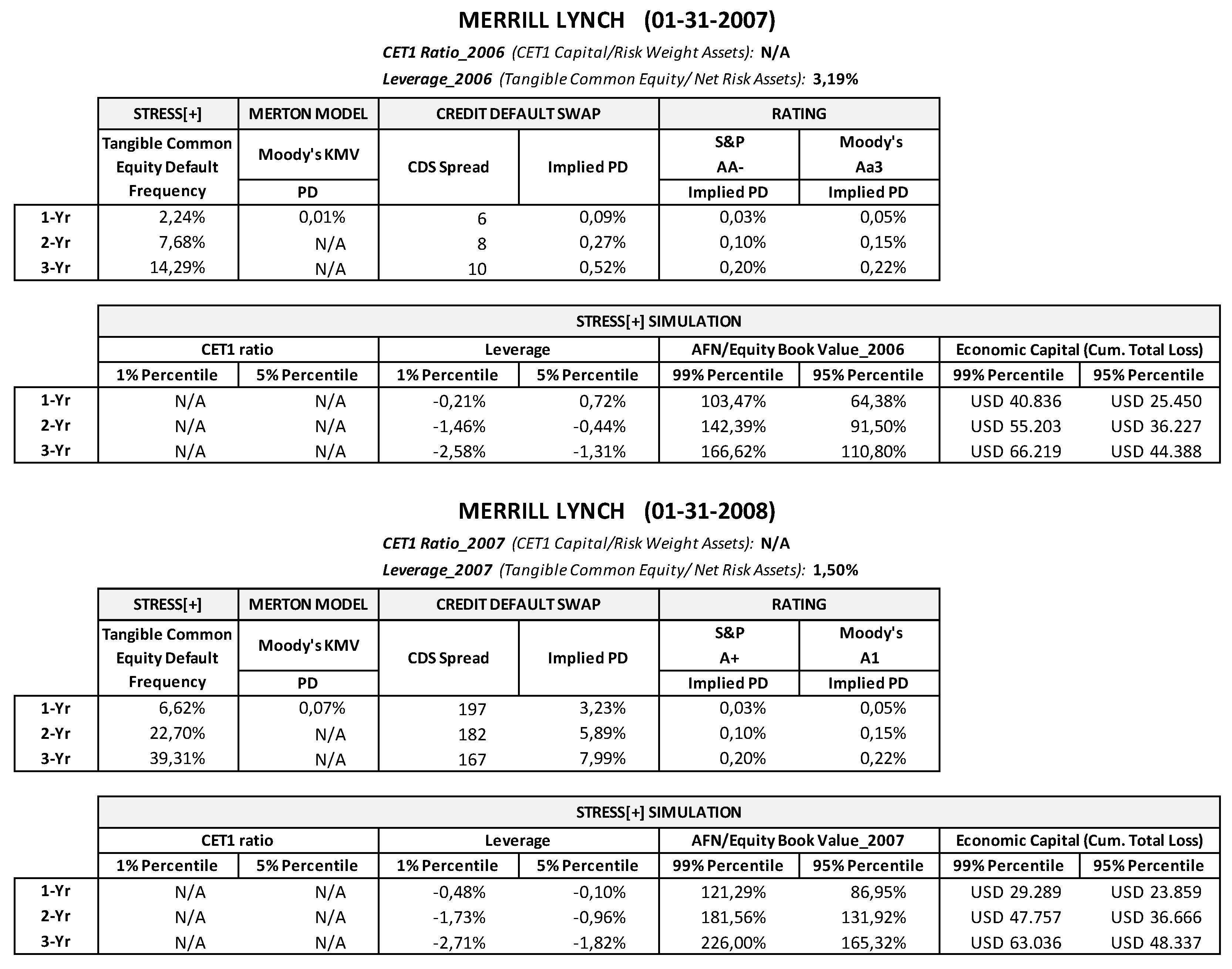

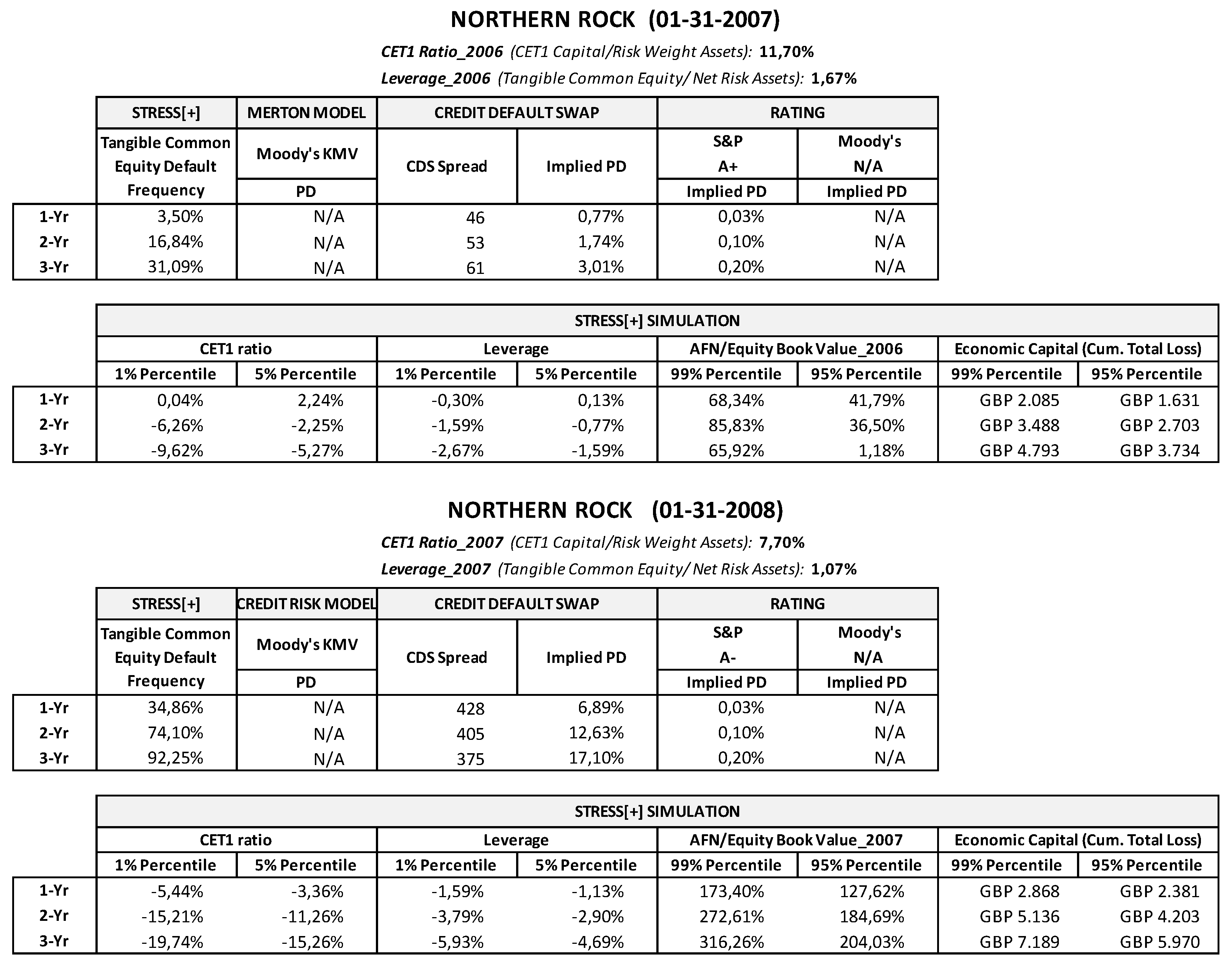

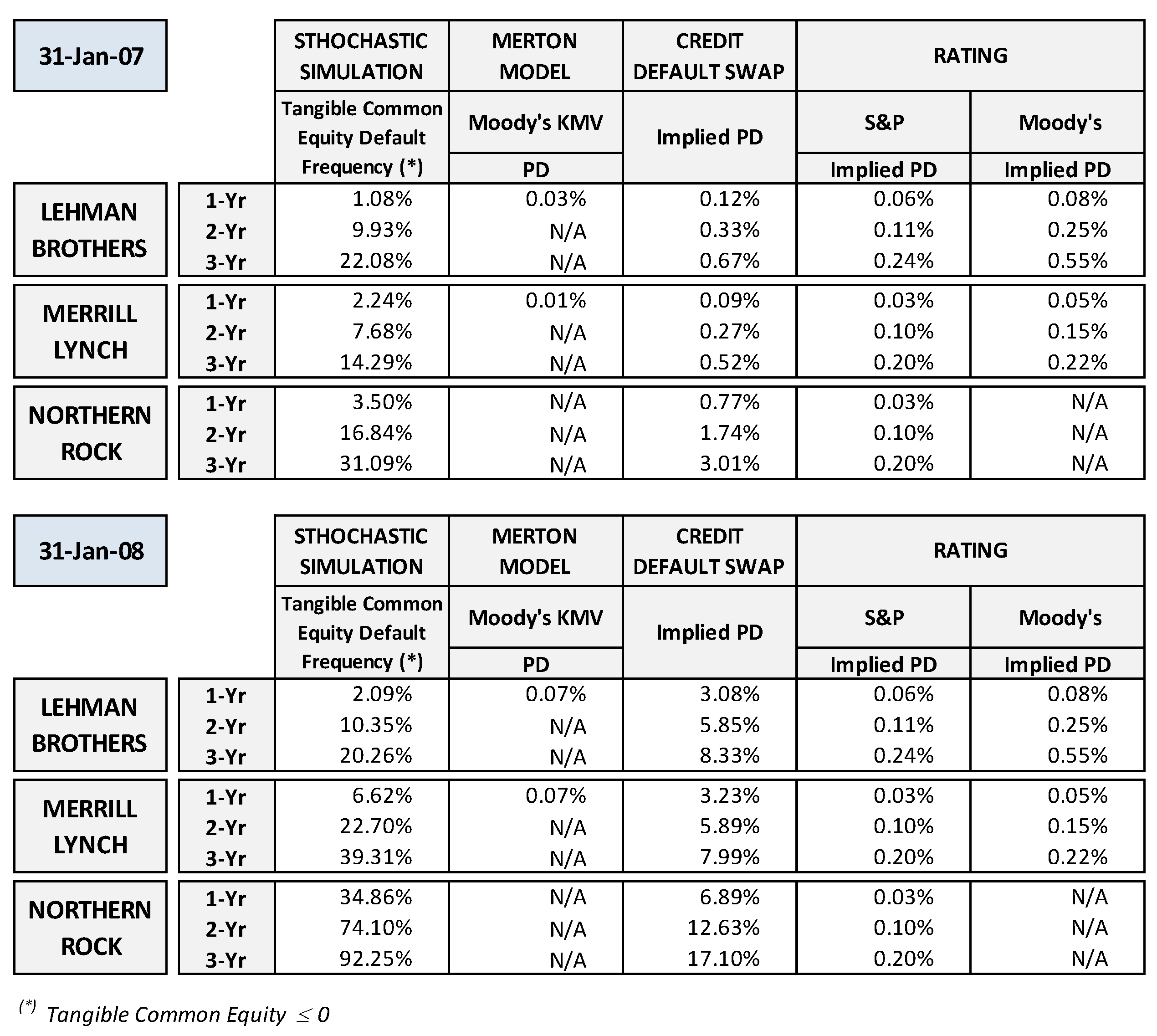

6. Stochastic Simulation Approach Comparative Back-Testing Analysis

- The market & counterparty risk factor (managed through the net financial and trading income variable) has been modeled according to Appendix A assumptions, but the minimum truncation (max loss rate) for all of the banks has been set at −2.13%, which is half that reported by the G-SIB peer group sample in the latest five years (−4.26%).47

- For Merrill Lynch and Lehman Brothers, the target capital ratio has not been set in terms of risk-weighted capital ratio, since those two financial institutions, being investment banks, did not report such regulatory metrics, but in terms of the leverage ratio (calculated as the ratio between tangible common equity and net fixed assets) reported in the last financial statement available at the moment to which the analysis refers.

- Because of the high interest rate volatility recorded in the years before 2007, in modeling the minimum and maximum parameters of the interest rate distribution functions, we applied only one mean deviation (rather than three, as for Stress[+]).

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A. Main Assumptions Adopted in the Stress Test Exercise

| Scenario | Normal | Moderate | Medium | Severe | Extreme |
|---|---|---|---|---|---|
| Default Rates/1 | 0.7% | 1.7% | 2.9% | 5% | 8.4% |
| Projected LGD | 26% | 30% | 34% | 41% | 54% |

- We adopted a Weibull function (1, 5) characterized by a right tail; this is a typical, widely used way of modeling the default rate distribution.

- We defined the native distribution function by setting the mean and maximum parameters; for the entire stress test time horizon the mean is given for each bank by the average of default rate estimates from the last 5 years, and the maximum is set for all banks at 8.4%, which corresponds to the default rate associated in advanced countries with an extremely severe adverse scenario in Hardy and Schmieder benchmarks.

- We truncated the native function. The minimum truncation has been set for all banks at 0.7% in the Stress[−] simulation, which corresponds to a “normal” scenario in Hardy and Schmieder benchmarks; and to 1.7% in the Stress[+] simulation, which corresponds to a “moderately” adverse scenario in Hardy and Schmieder benchmarks. The maximum truncation has been determined for each bank by adding to the bank’s mean value of the native function a stress impact determined on the basis of the benchmark default rate increase realized by switching to a more adverse scenario. This method of modeling default rate extreme values allows us to calibrate the distribution function according to the specific bank’s risk level (i.e., banks with a higher average default rate have a higher maximum truncated default rate). Therefore, to determine the maximum truncation in the Stress[−] simulation we added to the bank’s mean the difference between the “severe” scenario (5%) and the “normal” scenario (0.7%) from Hardy and Schmieder benchmarks, or:

- We adopted a symmetrical Beta function (4, 4).

- We defined the distribution function by setting the minimum and maximum parameters; for the entire stress test time horizon and for all banks, in the Stress[−] simulation we set the minimum at 26%, which corresponds to the LGD benchmark associated in advanced countries (see Table A1) with a normal scenario; the maximum is set to 41%, which corresponds to the LGD benchmark associated in advanced countries with a severe adverse scenario. In the Stress[+] simulation we set the minimum to 30%, which corresponds to the LGD benchmark associated in advanced countries with a moderate scenario; the maximum is set to 54%, which corresponds to the LGD benchmark associated in advanced countries with an extreme adverse scenario.
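The LGD assumptions above, and the Stress[−] rule for the maximum default rate truncation, can be illustrated with a short sketch. It assumes that "Beta function (4, 4)" means a standard Beta(4, 4) variable rescaled linearly to the stated minimum and maximum; the function names and the bank's 1.2% average default rate are hypothetical. The Weibull sampling itself is left out because its parameterization is not spelled out here.

```python
import numpy as np

def sample_lgd(lgd_min, lgd_max, size, rng):
    """Symmetric Beta(4, 4) draws rescaled to the interval [lgd_min, lgd_max]."""
    return lgd_min + (lgd_max - lgd_min) * rng.beta(4.0, 4.0, size=size)

def max_default_rate_stress_minus(bank_mean, severe=0.05, normal=0.007):
    """Stress[-] maximum truncation: bank mean plus the severe-minus-normal gap."""
    return bank_mean + (severe - normal)

rng = np.random.default_rng(0)
lgd_stress_minus = sample_lgd(0.26, 0.41, 10_000, rng)  # normal to severe LGD
lgd_stress_plus = sample_lgd(0.30, 0.54, 10_000, rng)   # moderate to extreme LGD

# Hypothetical bank with a 1.2% average default rate over the last five years
max_truncation = max_default_rate_stress_minus(0.012)

print(lgd_stress_minus.mean(), lgd_stress_plus.mean())
print(round(max_truncation, 4))  # 0.055
```

Since the Beta(4, 4) distribution is symmetric, the simulated LGD averages sit close to the midpoints of the two ranges (33.5% and 42%), and a bank with a higher historical default rate gets a proportionally higher maximum truncation.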

Appendix B. Stochastic Variables Modeling (Credit Agricole)

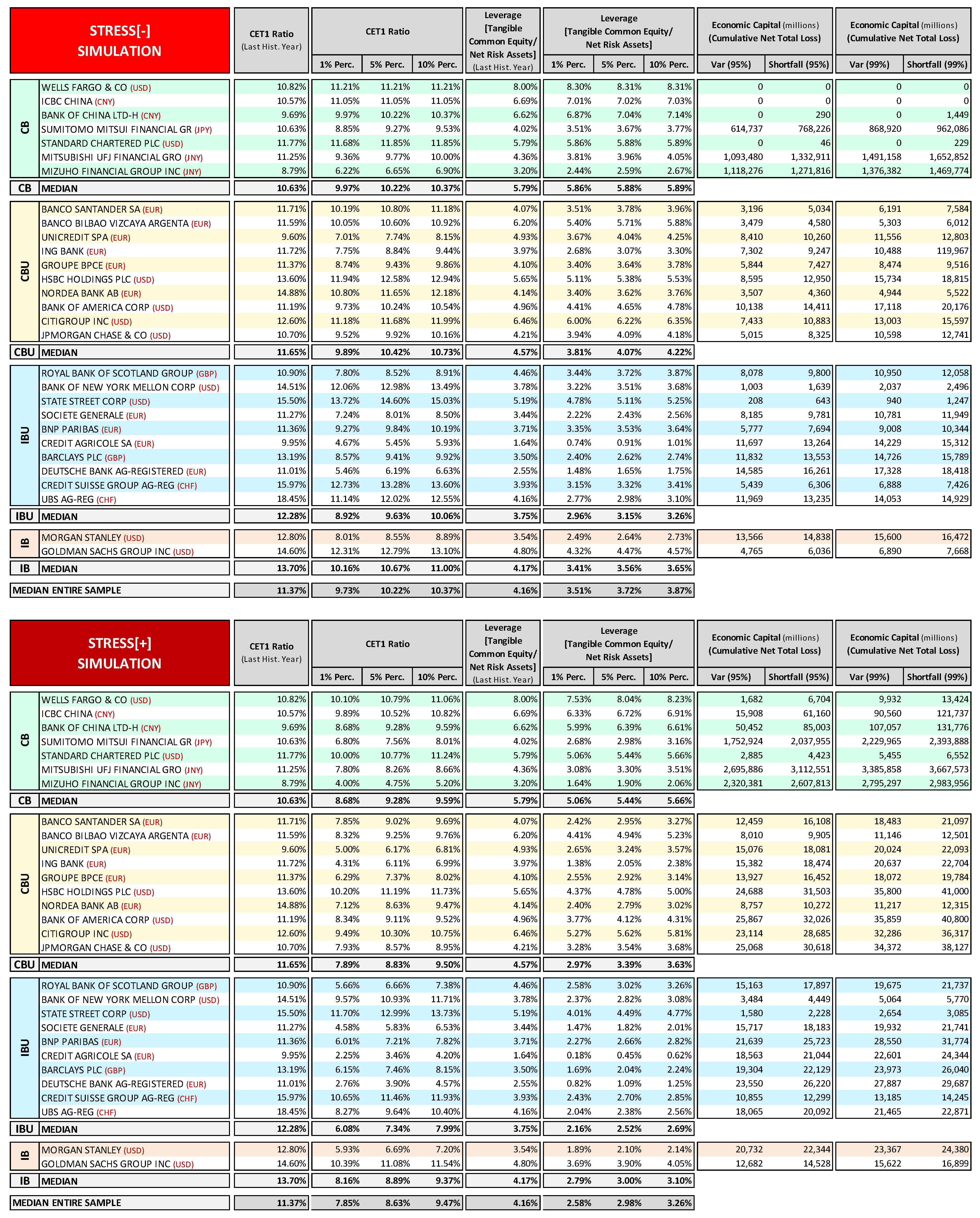

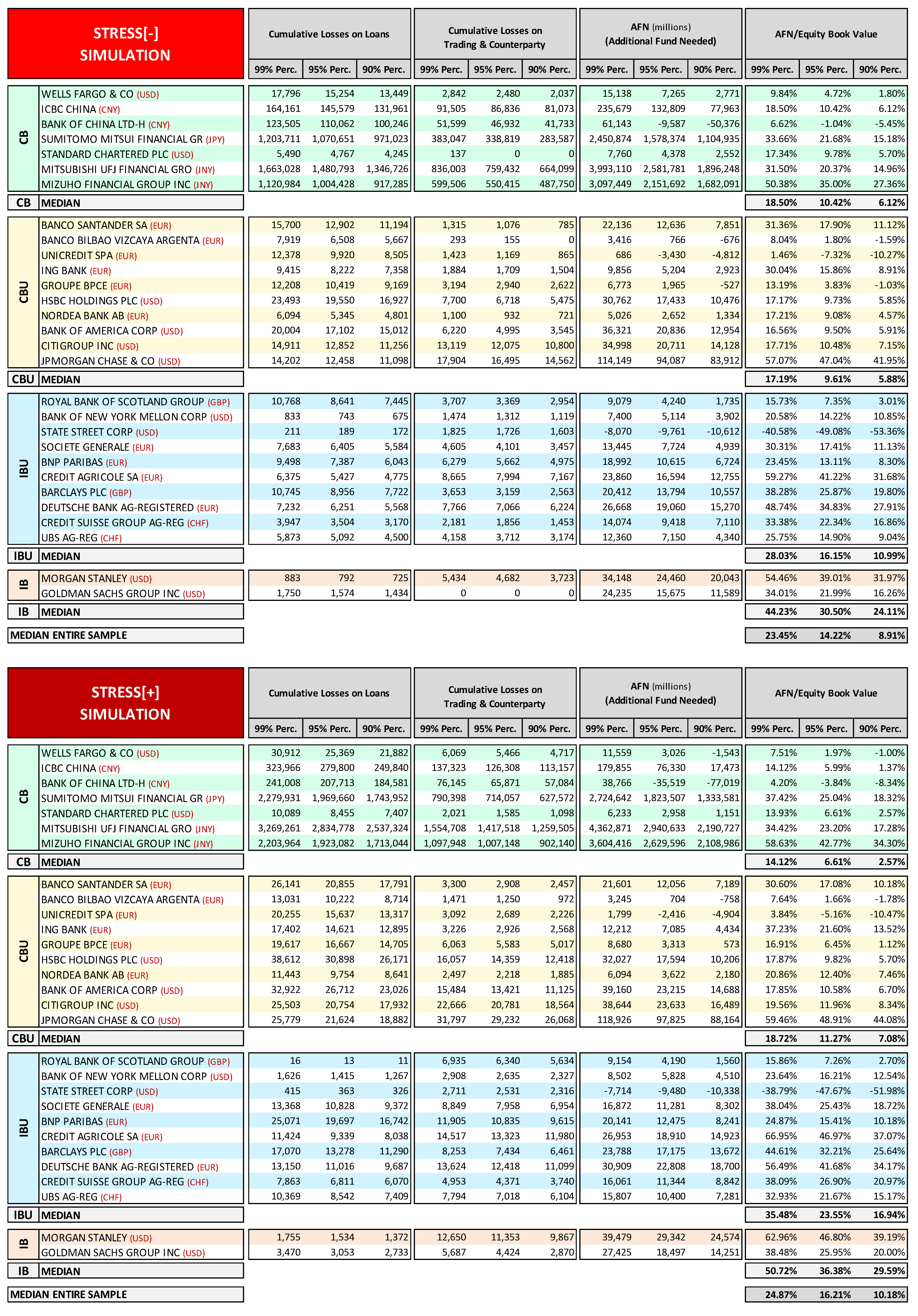

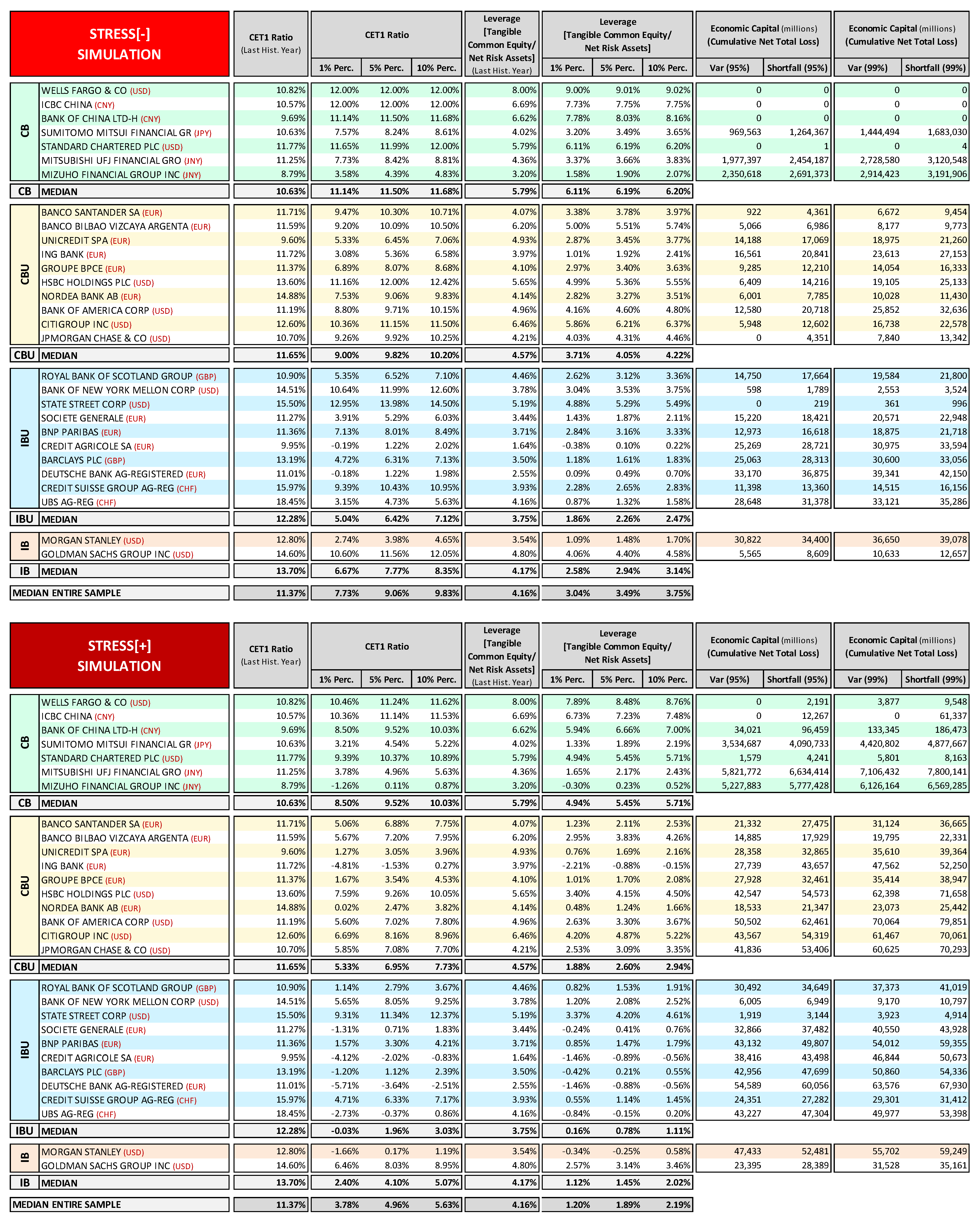

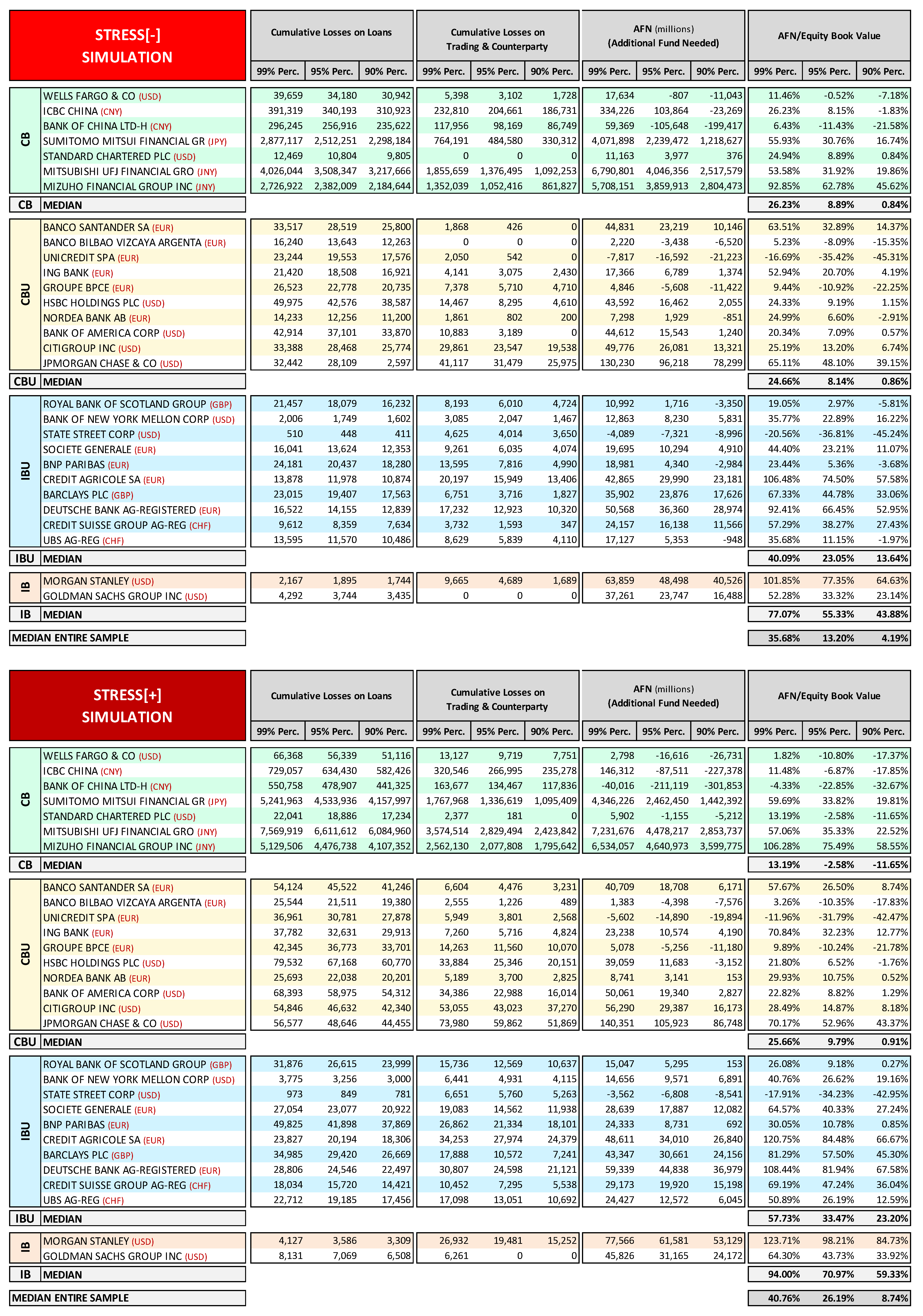

Appendix C. Stochastic Simulation Stress Test Analytical Results

Appendix D. Back-Testing Analysis Results

References

- Admati, Anat R., and Martin Hellwig. 2013. The Bankers’ New Clothes: What’s Wrong with Banking and What to Do about It. Princeton: Princeton University Press.

- Admati, Anat R., Franklin Allen, Richard Brealey, Michael Brennan, Markus K. Brunnermeier, Arnoud Boot, John H. Cochrane, Peter M. DeMarzo, Eugene F. Fama, Michael Fishman, and et al. 2010. Healthy Banking System is the Goal, not Profitable Banks. Financial Times, November 9.

- Admati, Anat R., Peter M. DeMarzo, Martin F. Hellwig, and Paul C. Pfleiderer. 2016. Fallacies, Irrelevant Facts, and Myths in the Discussion of Capital Regulation: Why Bank Equity Is Not Expensive. Available online: https://dx.doi.org/10.2139/ssrn.2349739 (accessed on 14 August 2018).

- Alfaro, Rodrigo, and Mathias Drehmann. 2009. Macro stress tests and crises: What can we learn? BIS Quarterly Review. December 7, pp. 29–41. Available online: https://www.bis.org/publ/qtrpdf/r_qt0912e.htm (accessed on 14 August 2018).

- Bank of England. 2013. A Framework for Stress Testing the UK Banking System: A Discussion Paper. London: Bank of England, October.

- Basel Committee on Banking Supervision. 2009. Principles for Sound Stress Testing Practices and Supervision. No. 155. Basel: Bank for International Settlements, March 13.

- Berkowitz, Jeremy. 1999. A coherent framework for stress-testing. Journal of Risk 2: 5–15.

- Bernanke, Ben S. 2013. Stress Testing Banks: What Have We Learned? Paper presented at the "Maintaining Financial Stability: Holding a Tiger by the Tail" Financial Markets Conference, Georgia, GA, USA, April 8.

- Borio, Claudio, Mathias Drehmann, and Kostas Tsatsaronis. 2012a. Stress-testing macro stress testing: Does it live up to expectations? Journal of Financial Stability 42: 3–15.

- Borio, Claudio, Mathias Drehmann, and Kostas Tsatsaronis. 2012b. Characterising the financial cycle: Don’t lose sight of the medium term! Social Science Electronic Publishing 68: 1–18.

- Carhill, Michael, and Jonathan Jones. 2013. Stress-Test Modelling for Loan Losses and Reserves. In Stress Testing: Approaches, Methods and Applications. Edited by Siddique Akhtar and Iftekhar Hasan. London: Risk Books.

- Cario, Marne C., and Barry L. Nelson. 1997. Modeling and Generating Random Vectors with Arbitrary Marginal Distributions and Correlation Matrix. Evanston: Northwestern University.

- Cecchetti, Stephen G. 2010. Financial Reform: A Progress Report. Paper presented at the Westminster Economic Forum, National Institute of Economic and Social Research, London, UK, October 4.

- Čihák, Martin. 2004. Stress Testing: A Review of Key Concepts. Prague: Czech National Bank.

- Čihák, Martin. 2007. Introduction to Applied Stress Testing. IMF Working Papers 07. Washington, DC: International Monetary Fund.

- Clemen, Robert T., and Terence Reilly. 1999. Correlations and copulas for decision and risk analysis. Management Science 45: 208–24.

- Cohen, Jacob. 1988. Statistical Power Analysis for the Behavioral Sciences, 2nd ed. Hillsdale: Lawrence Erlbaum Associates.

- Demirguc-Kunt, Asli, Enrica Detragiache, and Ouarda Merrouche. 2013. Bank capital: Lessons from the financial crisis. Journal of Money Credit & Banking 45: 1147–64.

- Drehmann, Mathias. 2008. Stress tests: Objectives challenges and modelling choices. Sveriges Riksbank Economic Review 2008: 60–92.

- European Banking Authority (EBA). 2011a. Overview of the EBA 2011 Banking EU-Wide Stress Test. London: European Banking Authority.

- European Banking Authority (EBA). 2011b. Overview of the 2011 EU-Wide Stress Test: Methodological Note. London: European Banking Authority.

- European Banking Authority (EBA). 2013a. Report on the Comparability of Supervisory Rules and Practices. London: European Banking Authority.

- European Banking Authority (EBA). 2013b. Third Interim Report on the Consistency of Risk-Weighted Assets: SME and Residential Mortgages. London: European Banking Authority.

- European Banking Authority (EBA). 2013c. Report on Variability of Risk Weighted Assets for Market Risk Portfolios. London: European Banking Authority.

- European Banking Authority (EBA). 2014a. Fourth Report on the Consistency of Risk Weighted Assets-Residential Mortgages Drill-Down Analysis. London: European Banking Authority.

- European Banking Authority (EBA). 2014b. Methodology EU-Wide Stress Test 2014. London: European Banking Authority.

- Embrechts, Paul, Alexander McNeil, and Daniel Straumann. 1999. Correlation: pitfalls and alternatives. Risk Magazine 1999: 69–71.

- Estrella, Arturo, Sangkyun Park, and Stavros Peristiani. 2000. Capital ratios as predictors of bank failure. Economic Policy Review 86: 33–52.

- Federal Reserve. 2012. Comprehensive Capital Analysis and Review 2012: Methodology for Stress Scenario Projection. Washington, DC: Federal Reserve.

- Federal Reserve. 2013a. Dodd-Frank Act Stress Test 2013: Supervisory Stress Test Methodology and Results. Washington, DC: Federal Reserve.

- Federal Reserve. 2013b. Capital Planning at Large Bank Holding Companies: Supervisory Expectations and Range of Current Practice. Washington, DC: Federal Reserve.

- Federal Reserve. 2014. Dodd-Frank Act Stress Test 2014: Supervisory Stress Test Methodology and Results. Washington, DC: Federal Reserve.

- Federal Reserve, Federal Deposit Insurance Corporation (FDIC), and Office of the Comptroller of the Currency (OCC). 2012. Guidance on Stress Testing for Banking Organizations with Total Consolidated Assets of More Than $10 Billion. Washington, DC: Federal Reserve, Washington, DC: Federal Deposit Insurance Corporation, Washington, DC: Office of the Comptroller of the Currency.

- Ferson, Scott, Janos Hajagos, Daniel Berleant, Jianzhong Zhang, W. Troy Tucker, Lev Ginzburg, and William Oberkampf. 2004. Dependence in Probabilistic Modeling, Dempster-Shafer Theory, and Probability Bounds Analysis. SAND2004-3072. Albuquerque: Sandia National Laboratories.

- Foglia, Antonella. 2009. Stress testing credit risk: A survey of authorities’ approaches. International Journal of Central Banking 5: 9–42.

- Financial Stability Board (FSB). 2013. 2013 Update of Group of Global Systemically Important Banks (G-SIBs). Basel: Financial Stability Board.

- Gambacorta, Leonardo, and Adrian van Rixtel. 2013. Structural Bank Regulation Initiatives: Approaches and Implications. No. 412. Basel: Bank for International Settlements.

- Geršl, Adam, Petr Jakubík, Tomas Konečný, and Jakub Seidler. 2012. Dynamic stress testing: The framework for testing banking sector resilience used by the Czech National Bank. Czech Journal of Economics and Finance 63: 505–36.

- Greenlaw, David, Anil K. Kashyap, Kermit Schoenholtz, and Hyun Song Shin. 2012. Stressed Out: Macroprudential Principles for Stress Testing. No. 71. Chicago: The University of Chicago Booth School of Business.

- Guegan, Dominique, and Bertrand K. Hassani. 2014. Stress Testing Engineering: The Real Risk Measurement? In Future Perspectives in Risk Models and Finance. Edited by Alain Bensoussan, Dominique Guegan and Charles S. Tapiero. Cham: Springer International Publishing, pp. 89–124. [Google Scholar]

- Haldane, Andrew G., and Vasileios Madouros. 2012. The dog and the frisbee. Economic Policy Symposium-Jackson Hole 14: 13–56. [Google Scholar]

- Haldane, Andrew G. 2009. Why Banks Failed the Stress Test. Paper presented at The Marcus-Evans Conference on Stress-Testing, London, UK, February 9–10. [Google Scholar]

- Hardy, Daniel C., and Christian Schmieder. 2013. Rules of Thumb for Bank Solvency Stress Testing. No. 13/232. Washington, DC: International Monetary Fund. [Google Scholar]

- Henry, Jerome, and Christoffer Kok. 2013. A Macro Stress Testing Framework for Assessing Systemic Risks in the Banking Sector. No. 152. Frankfurt: European Central Bank. [Google Scholar]

- Hirtle, Beverly, Anna Kovner, James Vickery, and Meru Bhanot. 2016. Assessing financial stability: The capital and loss assessment under stress scenarios (CLASS) model. Journal of Banking & Finance 69: S35–S55. [Google Scholar] [CrossRef]

- International Monetary Fund (IMF). 2012. Macrofinancial Stress Testing: Principles and Practices. Washington, DC: International Monetary Fund, August 22. [Google Scholar]

- Jagtiani, Julapa, James Kolari, Catharine Lemieux, and Hwan Shin. 2000. Early Warning Models for Bank Supervision: Simpler Could Be Better. Chicago: Federal Reserve Bank of Chicago. [Google Scholar]

- Jobst, Andreas A., Li L. Ong, and Christian Schmieder. 2013. A Framework for Macroprudential Bank Solvency Stress Testing: Application to S-25 and Other G-20 Country FSAPs. No. 13/68. Washington, DC: International Monetary Fund. [Google Scholar]

- Kindleberger, Charles P. 1989. Manias, Panics, and Crashes: A History of Financial Crises. New York: Basic Books. [Google Scholar]

- Le Leslé, Vanessa, and Sofiya Avramova. 2012. Revisiting Risk-Weighted Assets: Why Do RWAs Differ across Countries and What Can Be Done about It? No. 12/90. Washington, DC: International Monetary Fund. [Google Scholar]

- Martel, Manuel Merck, Adrian van Rixtel, and Emiliano Gonzalez Mota. 2012. Business Models of International Banks in the Wake of the 2007–2009 Global Financial Crisis. No. 22. Madrid: Bank of Spain, pp. 99–121. [Google Scholar]

- Merton, Robert C. 1974. On the pricing of corporate debt: The risk structure of interest rates. Journal of Finance 29: 449–70. [Google Scholar]

- Minsky, Hyman P. 1972. Financial Instability Revisited: The Economics of Disaster; Washington, DC: Federal Reserve, vol. 3, pp. 95–136.

- Minsky, Hyman P. 1977. Banking and a fragile financial environment. The Journal of Portfolio Management Summer 3: 16–22. [Google Scholar] [CrossRef]

- Minsky, Hyman P. 1982. Can It Happen Again? Essays on Instability and Finance. Armonk: M. E. Sharpe. [Google Scholar]

- Minsky, Hyman P. 2008. Stabilizing an Unstable Economy. New York: McGraw Hill. [Google Scholar]

- Montesi, Giuseppe, and Giovanni Papiro. 2014. Risk analysis probability of default: A stochastic simulation model. Journal of Credit Risk 10: 29–86. [Google Scholar] [CrossRef]

- Nelsen, Roger B. 2006. An Introduction to Copulas. New York: Springer. [Google Scholar]

- Puhr, Claus, and Stefan W. Schmitz. 2014. A view from the top: The interaction between solvency and liquidity stress. Journal of Risk Management in Financial Institutions 7: 38–51. [Google Scholar]

- Quagliariello, Mario. 2009a. Stress-Testing the Banking System: Methodologies and Applications. Cambridge: Cambridge University Press. [Google Scholar]

- Quagliariello, Mario. 2009b. Macroeconomic Stress-Testing: Definitions and Main Components. In Stress-Testing the Banking System: Methodologies and Applications. Edited by Mario Quagliariello. Cambridge: Cambridge University Press. [Google Scholar]

- Rebonato, Riccardo. 2010. Coherent Stress Testing: A Bayesian Approach to the Analysis of Financial Stress. Hoboken: Wiley. [Google Scholar]

- Robert, Christian P., and George Casella. 2004. Monte Carlo Statistical Methods, 2nd ed. New York: Springer. [Google Scholar]

- Rubinstein, Reuven Y. 1981. Simulation and the Monte Carlo Method. New York: John Wiley & Sons. [Google Scholar]

- Schmieder, Christian, Maher Hasan, and Claus Puhr. 2011. Next Generation Balance Sheet Stress Testing. No. 11/83. Washington, DC: International Monetary Fund. [Google Scholar]

- Schmieder, Christian, Heiko Hesse, Benjamin Neudorfer, Claus Puhr, and Stefan W. Schmitz. 2012. Next Generation System-Wide Liquidity Stress Testing. No. 12/03. Washington, DC: International Monetary Fund. [Google Scholar]

- Siddique, Akhtar, and Iftekhar Hasan. 2013. Stress Testing: Approaches, Methods and Applications. London: Risk Books. [Google Scholar]

- Taleb, Nassim N., and Raphael Douady. 2013. Mathematical definition, mapping, and detection of (anti)fragility. Quantitative Finance 13: 1677–89. [Google Scholar] [CrossRef]

- Taleb, Nassim N. 2011. A Map and Simple Heuristic to Detect Fragility, Antifragility, and Model Error. Available online: http://www.fooledbyrandomness.com/heuristic.pdf (accessed on 14 August 2018).

- Taleb, Nassim N. 2012. Antifragile: Things That Gain from Disorder. New York: Random House. [Google Scholar]

- Taleb, Nassim N., Elie Canetti, Tediane Kinda, Elena Loukoianova, and Christian Schmieder. 2012. A New Heuristic Measure of Fragility and Tail Risks: Application to Stress Testing. No. 12/216. Washington, DC: International Monetary Fund. [Google Scholar]

- Tarullo, Daniel K. 2014a. Rethinking the Aims of Prudential Regulation. Paper presented at The Federal Reserve Bank of Chicago Bank Structure Conference, Chicago, IL, USA, May 8. [Google Scholar]

- Tarullo, Daniel K. 2014b. Stress Testing after Five Years. Paper presented at The Federal Reserve Third Annual Stress Test Modeling Symposium, Boston, MA, USA, June 25. [Google Scholar]

- Zhang, Jing. 2013. CCAR and Beyond: Capital Assessment, Stress Testing and Applications. London: Risk Books. [Google Scholar]

| 1 | As highlighted by Taleb (2012, pp. 4–5): “It is far easier to figure out if something is fragile than to predict the occurrence of an event that may harm it. [...] Sensitivity to harm from volatility is tractable, more so than forecasting the event that would cause the harm.” |

| 2 | See Montesi and Papiro (2014). |

| 3 | Guegan and Hassani (2014) propose a stress testing approach, in a multivariate context, that presents some similarities with the methodology outlined in this work. Also, Rebonato (2010) highlights the importance of applying a probabilistic framework to stress testing and presents an approach with similarities to ours. |

| 4 | The topic is covered extensively in the literature. For a survey of stress testing technicalities and approaches see (Berkowitz 1999; Čihák 2004, 2007; Drehmann 2008; Basel Committee on Banking Supervision 2009; Quagliariello 2009a, 2009b; Schmieder et al. 2011; Geršl et al. 2012; Greenlaw et al. 2012; IMF 2012; Siddique and Hasan 2013; Jobst et al. 2013; Henry and Kok 2013; Zhang 2013; Hirtle et al. 2016). For technical documentation, methodology and comments on supervisory stress testing see (Haldane 2009; EBA 2011a, 2011b, 2014b; Federal Reserve/FDIC/OCC 2012; Federal Reserve 2012, 2013a, 2013b, 2014; Bernanke 2013; Bank of England 2013; Tarullo 2014b). |

| 5 | Here the term “speculative position” is to be interpreted according to Minsky’s technical meaning, i.e., a position in which an economic agent needs new borrowing in order to repay outstanding debt. |

| 6 | |

| 7 | See in particular the contributions of Minsky (1982, 2008) and Kindleberger (1989) on financial instability. |

| 8 | |

| 9 | “If communication is the main objective for a Financial Stability stress test, unobservable factors may not be the first modelling choice as they are unsuited for storytelling. In contrast, using general equilibrium structural macroeconomic models to forecast the impact of shocks on credit risk may be very good in highlighting the key macroeconomic transmission channels. However, macro models are often computationally very cumbersome. As they are designed as tools to support monetary policy decisions they are also often too complex for stress testing purposes”. Drehmann (2008, p. 72). |

| 10 | In this regard the estimate of intra-risk diversification effect is a relevant issue, especially in tail events, for which it is incorrect to simply add up the impacts of the different risk factors estimated separately. For example, consider that for some risk measures, such as VaR, the subadditivity principle is valid only for elliptical distributions (see for example Embrechts et al. 1999). As highlighted by Quagliariello (2009b, p. 34): “ … the methodologies for the integration of different risks are still at an embryonic stage and they represent one of the main challenges ahead.” |

| 11 | Such a model may also in principle be able to capture the capital impact of strategic and/or reputational risk, events that have an impact essentially through adverse dynamics of interest income/expenses, deposits, non-interest income/expenses. |

| 12 | See Haldane (2009, pp. 6–7). |

| 13 | In this regard, Bernanke (2013, pp. 8–9) also underscores the importance of an independent Federal Reserve management and the running of stress tests: “These ongoing efforts are bringing us close to the point at which we will be able to estimate, in a fully independent way, how each firm’s loss, revenue, and capital ratio would likely respond in any specified scenario.” |

| 14 | A typical example is the setting of the dividend/capital retention policy rules. |

| 15 | For a description of the modelling systems of random vectors with arbitrary marginal distribution allowing for any feasible correlation matrix, see: (Rubinstein 1981; Cario and Nelson 1997; Robert and Casella 2004; Nelsen 2006). |
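As a minimal sketch of the kind of technique note 15 points to, the snippet below draws two correlated risk drivers with non-normal marginals by passing correlated standard normal scores through each marginal's inverse CDF (a Gaussian copula, in the spirit of the approach in Cario and Nelson 1997). The choice of marginals (a triangular PD and an exponential market loss rate), all parameter values, and the function names are illustrative assumptions, not the authors' calibration. Note that `rho` is the correlation of the underlying normal scores; the correlation of the transformed drivers will generally differ somewhat.

```python
import math
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal, used to map scores to uniforms


def triangular_ppf(u, low, mode, high):
    """Inverse CDF of a triangular distribution on [low, high]."""
    cut = (mode - low) / (high - low)
    if u < cut:
        return low + math.sqrt(u * (high - low) * (mode - low))
    return high - math.sqrt((1.0 - u) * (high - low) * (high - mode))


def exponential_ppf(u, mean):
    """Inverse CDF of an exponential distribution with the given mean."""
    return -mean * math.log(1.0 - u)


def sample_correlated_drivers(n, rho, seed=0):
    """Draw n pairs (pd_rate, market_loss) linked by a Gaussian copula."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n):
        # Two standard normals with correlation rho (2x2 Cholesky by hand).
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        # Map to uniforms, clamped away from 0 and 1 for numerical safety.
        u1 = min(max(STD_NORMAL.cdf(z1), 1e-12), 1.0 - 1e-12)
        u2 = min(max(STD_NORMAL.cdf(z2), 1e-12), 1.0 - 1e-12)
        # Hypothetical marginals: PD in [0.5%, 10%], mean market loss of 1%.
        pd_rate = triangular_ppf(u1, 0.005, 0.02, 0.10)
        market_loss = exponential_ppf(u2, 0.01)
        draws.append((pd_rate, market_loss))
    return draws
```

Calling `sample_correlated_drivers(10_000, 0.6)` returns a list of scenario pairs that can feed a balance sheet projection; extending the sketch to more than two drivers would require a full Cholesky factor of the target correlation matrix.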

| 16 | As explained above, we avoided recourse to macroeconomic drivers because we considered it a redundant complication. Nevertheless, the proposed simulation framework does allow for the use of macroeconomic drivers. This could be done in two ways: by adding a set of macro stochastic variables (GDP, unemployment rate, inflation, stock market volatility, etc.) and creating a further modeling layer defining the economic relations between these variables and the drivers of bank risk (PDs, LGDs, haircuts, loan/deposit interest rates, etc.); or more simply (and preferably) by setting the extreme values in the distribution functions of the drivers of bank risk according to the values that we assume would correspond to the extreme macroeconomic conditions considered (e.g., the maximum value in the PD distribution function would be determined according to the value associated with the largest GDP drop considered). |

| 17 | It is a sensible assumption considering that under normal conditions, in order to meet its short-term funding needs, a bank tends to issue new debt rather than selling assets. Under stressed conditions the assumption of an asset disposal “mechanism” to cover funding needs is avoided, because it would automatically match any shortfall generated through the simulation, concealing needs that should instead be highlighted. Nevertheless, asset disposal mechanisms can be easily modeled within the simulation framework proposed. |

| 18 | Naturally, in cases where the asset structure is not exogenous, the model must be enhanced to consider the hypothesis that, in the case of a financial surplus, this can be partly used to increase assets. |

| 19 | For example, the cost of funding, which is a variable that can have significant effects under conditions of stress, may be directly expressed as a function of a spread linked to the bank’s degree of capitalization. |

| 20 | This risk factor may be introduced in the form of a reputational event risk stochastic variable (simulated, for example, by means of a binomial type of distribution) through which, for each period, the probability of occurrence of a reputational event is established. In scenarios in which reputational events occur, a series of stochastic variables linked to their possible economic impact is in turn activated (reduction of commission factor; reduction of deposits factor; increased spread on deposits factor; increase in administrative expenses factor, etc.). Thus, values are generated that determine the magnitude of the economic impacts of reputational events in every scenario in which they occur. |
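The mechanism described in note 20 can be sketched as follows, assuming one Bernoulli draw per period (a binomial with a single trial) that, when it fires, activates a set of impact factors. The uniform ranges, the factor names, and the function name are hypothetical placeholders chosen only to make the example runnable; they are not taken from the paper.

```python
import random


def simulate_reputational_impacts(n_scenarios, n_periods, event_prob, seed=0):
    """Return, per scenario and period, the impact factors of a reputational event."""
    rng = random.Random(seed)
    scenarios = []
    for _ in range(n_scenarios):
        path = []
        for _ in range(n_periods):
            # Bernoulli draw: does a reputational event occur in this period?
            event = rng.random() < event_prob
            if event:
                # Impact variables are activated only when the event occurs.
                impact = {
                    "event": True,
                    "commission_cut": rng.uniform(0.05, 0.20),
                    "deposit_outflow": rng.uniform(0.02, 0.10),
                    "deposit_spread_up": rng.uniform(0.0010, 0.0050),
                    "admin_cost_up": rng.uniform(0.01, 0.05),
                }
            else:
                impact = {
                    "event": False,
                    "commission_cut": 0.0,
                    "deposit_outflow": 0.0,
                    "deposit_spread_up": 0.0,
                    "admin_cost_up": 0.0,
                }
            path.append(impact)
        scenarios.append(path)
    return scenarios
```

Each period's factors would then be applied to the corresponding P&L and balance sheet items within the same scenario, so that the reputational shock interacts with the other simulated risk drivers.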

| 21 | |

| 22 | In this regard see Le Leslé and Avramova (2012). The EBA has been studying this issue for some time (https://www.eba.europa.eu/risk-analysis-and-data/review-of-consistency-of-risk-weighted-assets) and has published a series of reports, see in particular EBA (2013a, 2013b, 2013c, 2014a). |

| 23 | |

| 24 | |

| 25 | While operating business can be distinguished from the financial structure when dealing with corporations, this is not the case for banks, due to the particular nature of their business. Thus, in order to evaluate banks’ equity it is more suitable to adopt a levered approach, and consequently it is better to express the default condition directly in terms of equity value < 0 rather than as enterprise value < debt. |

| 26 | FCFE directly represents the cash flow generated by the company and available to shareholders, and is made up of cash flow net of all costs, taxes, investments and variations of debt. There are several ways to define FCFE. Given the banks’ regulatory capital constraints, the simplest and most direct way to define it is by starting from net income and then deducting the required change in equity book value, i.e., the capital relation that allows the bank to respect regulatory capital ratio constraints. |
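The definition in note 26 can be illustrated with a small worked example. The sketch assumes, as a simplification not stated in the paper, that the required equity at period end is a target capital ratio times end-of-period RWA and that book equity stands in for regulatory capital; the function and parameter names are hypothetical.

```python
def fcfe(net_income, equity_start, rwa_end, target_capital_ratio):
    """Free cash flow to equity: net income less the required change in book equity."""
    # Equity the bank must hold at period end to respect the capital constraint
    # (simplifying assumption: required equity = target ratio x RWA).
    required_equity_end = target_capital_ratio * rwa_end
    # Positive when capital must be retained, negative when capital is released.
    required_change = required_equity_end - equity_start
    return net_income - required_change
```

With net income of 100, opening equity of 1,000, closing RWA of 10,500 and a 10% target ratio, the bank must retain 50 to reach 1,050 of equity, so FCFE is 50. If RWA instead falls to 9,000, capital of 100 is released and FCFE rises to 200.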

| 27 | On the description and application of this PD estimation method in relation to the corporate world and the differences relative to the option/contingent approach see Montesi and Papiro (2014). |

| 28 | |

| 29 | In fact, since in each scenario of the simulation we simultaneously generate all of the different risk impacts, thinking in terms of VaR would be misleading, because breaking down the total losses related to a certain percentile (and thus to a specific scenario) into risk factor components, we would not necessarily find in that specific scenario a risk factor contribution to the total losses that corresponds to the same percentile level of losses due to that risk factor within the entire series of scenarios simulated. However, if we think in terms of expected shortfall, we can extend the number of tail scenarios considered in the measurement. |
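The point made in note 29 can be made concrete with a short sketch: because every scenario carries all risk-factor losses jointly, averaging over the worst scenarios gives a breakdown whose components add up to the total tail loss. The input layout (one dictionary of losses per scenario), the tail-size rule, and the function name are assumptions made for illustration.

```python
def expected_shortfall_breakdown(scenario_losses, tail_prob):
    """Expected shortfall of total losses and its split by risk factor.

    scenario_losses: list of dicts mapping risk factor name -> loss,
    with the same keys in every scenario.
    """
    totals = [sum(s.values()) for s in scenario_losses]
    # Rank scenarios from the largest total loss to the smallest.
    order = sorted(range(len(totals)), key=lambda i: totals[i], reverse=True)
    # Number of tail scenarios (at least one).
    n_tail = max(1, int(round(tail_prob * len(totals))))
    tail = order[:n_tail]
    es_total = sum(totals[i] for i in tail) / n_tail
    # Average each factor's loss over the SAME tail scenarios, so the
    # contributions sum to the total expected shortfall.
    contributions = {
        factor: sum(scenario_losses[i][factor] for i in tail) / n_tail
        for factor in scenario_losses[0]
    }
    return es_total, contributions
```

By construction the contributions are additive, which is not guaranteed when each risk factor's own percentile loss is read off separately, since those percentiles generally come from different scenarios.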

| 30 | The interaction between banks’ solvency and liquidity positions is a very important endogenous risk factor, at both the micro and macro levels, and is too often disregarded in stress testing analysis. Minsky first highlighted the importance of taking this issue into account (see Minsky 1972); other authors have also recently reaffirmed the relevance of modeling the link between liquidity and solvency risk in stress test analysis; in particular see Puhr and Schmitz (2014). |

| 31 | The liquidity risk measures and approach outlined may be considered an integration and extension of the liquidity risk framework proposed by Schmieder et al. (2012). |

| 32 | |

| 33 | See FSB (2013, p. 3). |

| 34 | The peer group banks were selected using Bloomberg function: “BI <GO>” (Bloomberg Industries). Specifically, the sample includes banks belonging to the following four peer groups: (1) BIALBNKP: Asian Banks Large Cap—Principle Business Index; (2) BISPRBAC: North American Large Regional Banking Competitive Peers; (3) BIBANKEC: European Banks Competitive Peers; (4) BIERBSEC: EU Regional Banking Europe Southern & Eastern Europe See. Data have been filtered for outliers above the 99th percentile and below the first percentile. |

| 35 | |

| 36 | Empirical research on financial crises confirms that high ratios of equity relative to total assets (risk unweighted) outperform more complex measures as predictors of bank failure. See Estrella et al. (2000), Jagtiani et al. (2000), Demirguc-Kunt et al. (2013), Haldane and Madouros (2012). |

| 37 | In our opinion, it would be better to consider an un-weighted risk base as for the leverage ratio, rather than a RWA-based ratio. In this regard it is worth mentioning Tarullo’s remarks: “The combined complexity and opacity of risk weights generated by each banking organization for purposes of its regulatory capital requirement create manifold risks of gaming, mistake, and monitoring difficulty. The IRB approach contributes little to market understanding of large banks’ balance sheets, and thus fails to strengthen market discipline. The relatively short, backward-looking basis for generating risk weights makes the resulting capital standards likely to be excessively pro-cyclical and insufficiently sensitive to tail risk. That is, the IRB approach—for all its complexity and expense—does not do a very good job of advancing the financial stability and macroprudential aims of prudential regulation. […] The supervisory stress tests developed by the Federal Reserve over the past five years provide a much better risk-sensitive basis for setting minimum capital requirements. They do not rely on firms’ own loss estimate. […] For all of these reasons, I believe we should consider discarding the IRB approach to risk-weighted capital requirements. With the Collins Amendment providing a standardized, statutory floor for risk-based capital; the enhanced supplementary leverage ratio providing a stronger back-up capital measure; and the stress tests providing a better risk-sensitive measure that incorporates a macroprudential dimension, the IRB approach has little useful role to play.” Tarullo (2014a, pp. 14–15). |

| 38 | In consideration of the complications related to regulatory capital calculations in Basel 3 (deduction thresholds, filters, phasing-in, etc.), the amount of losses does not perfectly match an equal amount of regulatory capital to be held in advance; therefore, the regulatory capital endowment calculated in this way would be an approximation of the corresponding regulatory capital. |

| 39 | Recalling the terminology of the Bank Recovery and Resolution Directive, the two addends may correspond respectively to the loss absorption amount and the recapitalization amount. |

| 40 | Since for both samples of banks the total assets of all banks considered amount to about 11,000 billion EUR, losses can be compared in absolute terms as well. |

| 41 | Considering the entire group of European banks involved in the EBA/ECB stress test, we would reach the same conclusions: of the 123 banks involved, only 44 (representing less than 10% of the aggregated total assets) reported net loss rates above 1.5% (the average rate in the Fed stress test). |

| 42 | Applying a 1.5% net loss rate (on 2013 net risk assets) to the 2013 CET1, the following banks would not have reached the 5.5% CET1 threshold: Crédit Agricole, BNP Paribas, Deutsche Bank, Groupe BPCE, ING Bank, Société Générale. |

| 43 | Lehman Brothers defaulted on 15 September 2008 (Chapter 11); Merrill Lynch was saved through a bailout by Bank of America on 14 September 2008 (completed in January 2009); Northern Rock was bailed out by the British government on 22 February 2008 (the bank was taken over by Virgin Money in 2012). |

| 44 | Moody’s KMV is a credit risk model based on the Merton (1974) distance-to-default approach; this kind of model depends on stock market prices and their volatility. These data were not available for all banks in all years considered. |

| 45 | CDS Spreads are transformed into PD through the following formula: Where LGD represents a loss given default with a Recovery Rate of 40%. |
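The formula referred to in note 45 is not reproduced in the text above. One commonly used approximation, sketched here purely as an assumption that may differ from the authors' formula, treats the default intensity as constant and equal to the spread divided by LGD, so that the PD over a horizon T is 1 − exp(−(spread/LGD)·T). The 40% recovery rate follows the note; the one-year horizon, the basis-point input convention, and the function name are hypothetical.

```python
import math


def cds_spread_to_pd(spread_bps, recovery_rate=0.40, horizon_years=1.0):
    """Approximate PD implied by a CDS spread under a constant hazard rate."""
    lgd = 1.0 - recovery_rate                   # loss given default
    hazard = (spread_bps / 10_000.0) / lgd      # annual default intensity
    return 1.0 - math.exp(-hazard * horizon_years)
```

For example, a spread of 120 basis points with a 40% recovery rate implies an annual default intensity of 2% and a one-year PD of roughly 1.98%.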

| 46 | Conversion from Rating to PD is obtained from “Cumulative Average Default Rates” tables, based on historical frequencies of default recorded in various rating classes between 1981–2010 for S&P (see Standard and Poors 2011) and 1983–2013 for Moody’s. To reconstruct the master scale, the historical default tables of rated companies, provided by rating agencies, were utilized. |

| 47 | Instead of selecting another specific sample of comparable data referring to the period 2003–2008, we adopted a simpler and less prudential rule based on the same parameters used for the stress test exercise, but reducing their severity. This simpler approach certainly does not overestimate the market risk modeling for those banks; in fact, consider that in 2008 Merrill Lynch reported a loss rate on financial assets of about 5.4%, while Lehman Brothers reported a loss rate of 1.4% related only to the first two quarters. |

| 48 | We recall that an AFN/equity book ratio greater than 1 means that the bank’s funding need is higher than its capital, highlighting a high liquidity risk. |

| 49 | Also, the EBA guidelines on stress testing strongly highlight the importance of taking into due consideration the solvency-liquidity interlinkage in financial institutions’ stress testing. |

| 50 | |

| 51 | |

| 52 | Of course, more sophisticated modeling may allow for differentiated LGDs. |

| 53 | Write-off on Loans is determined according to the stock of NPLs at the end of the previous period. We assume that only accumulated impairments on NPLs are written off when they become definitive. This implies that impairments on loans are correctly determined in each forecasting period according to a sound loss given default forecast, and that only loans previously classified as non-performing can be written off. In other words, we assume that only the share of NPLs already covered by provisions is written off from the gross value of loans and from the reserve for loan losses, while the remaining part is assumed to be fully recovered through collections, according to the payment rate forecast. The amount recovered will reduce only the gross value of loans and not the reserve for loan losses, since there are no provisions set aside for that share of loans assumed to be recovered. |

| 54 | Since the logistic distribution function does not have a defined domain, we considered as minimum the first percentile of the distribution function. |

| 55 |

| Risk Factor | Types and Models to Project Losses | P&L Risk Factor Variables: Basic Modeling | P&L Risk Factor Variables: Breakdown Modeling | Balance Sheet Risk Factor Variables: Basic Modeling | Balance Sheet Risk Factor Variables: Breakdown Modeling | RWAs Risk Factor Variables: Basic Modeling | RWAs Risk Factor Variables: Analytical Modeling |
|---|---|---|---|---|---|---|---|
| PILLAR 1 | | | | | | | |
| CREDIT RISK | | | | | | | |
| MARKET & COUNTERPARTY RISK | | | | | | | |
| OPERATIONAL RISK | | | | | | | |
| PILLAR 2 | | | | | | | |
| INTEREST RATE RISK ON BANKING BOOK | | | | | | | |
| REPUTATIONAL RISK | | | | | | | |
| STRATEGIC AND BUSINESS RISK | | | | | | | |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Montesi, G.; Papiro, G. Bank Stress Testing: A Stochastic Simulation Framework to Assess Banks’ Financial Fragility †. Risks 2018, 6, 82. https://doi.org/10.3390/risks6030082
