Actuarial Geometry

Abstract

1. Introduction

2. Why Idiosyncratic Insurance Risk Matters

- Technical and axiomatic characterization of risk measures: (Dhaene et al. 2003; Furman and Zitikis 2008; Laeven and Stadje 2013).

- Capital allocation and its relationship with risk measurement: (Dhaene et al. 2003; Venter et al. 2006; Bodoff 2009; Buch and Dorfleitner 2008; Dhaene et al. 2012; Erel et al. 2015; Furman and Zitikis 2008; Powers 2007; Tsanakas 2009).

- The connection between purpose and method in capital allocation: (Dhaene et al. 2008; Zanjani 2010; Bauer and Zanjani 2013b; Goovaerts et al. 2010).

- Questioning the need for capital allocation in pricing: (Gründl and Schmeiser 2007).

3. Risk Measures, Risk Allocation and the Ubiquitous Gradient

3.1. Definition and Examples of Risk Measures

3.2. Allocation and the Gradient

4. Two Motivating Examples

5. Lévy process Models of Insurance Losses

5.1. Definition and Basic Properties of Lévy processes

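- LP1. $X(0) = 0$ almost surely;

- LP2. $X$ has independent increments, so for $0 \le t_0 < t_1 < \dots < t_n$ the variables $X(t_{i+1}) - X(t_i)$, $i = 0, \dots, n-1$, are independent;

- LP3. $X$ has stationary increments, so $X(t+s) - X(s)$ has the same distribution as $X(t)$; and

- LP4. $X$ is stochastically continuous, so for all $a > 0$ and $s \ge 0$, $\lim_{t \to s} \Pr(|X(t) - X(s)| > a) = 0$.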
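As an illustration of LP1 to LP3, the following minimal sketch builds a compound Poisson process from independent, stationary increments and checks that the mean and variance scale linearly with time. The claim rate `lam`, the gamma severity and the helper name `cp_increment` are assumptions chosen for illustration; they are not taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(12345)

def cp_increment(dt, size, lam=5.0, sev_shape=2.0):
    """Increment of a compound Poisson process over an interval of length dt.

    Claim counts are Poisson(lam * dt) and severities are gamma(sev_shape, 1),
    so the sum of n severities is gamma(n * sev_shape, 1).
    """
    n = rng.poisson(lam * dt, size=size)
    # Guard against a zero shape parameter; zero-claim outcomes are set to 0.
    total = rng.gamma(np.maximum(n, 1) * sev_shape, 1.0)
    return np.where(n > 0, total, 0.0)

n_sims = 200_000
x1 = cp_increment(1.0, n_sims)    # X(1), starting from X(0) = 0 (LP1)
inc = cp_increment(2.0, n_sims)   # X(3) - X(1): drawn independently of X(1)
                                  # (LP2), law depends only on the length 2 (LP3)
x3 = x1 + inc                     # X(3)

mean_ratio = x3.mean() / x1.mean()   # should be close to 3
var_ratio = x3.var() / x1.var()      # should be close to 3
corr = np.corrcoef(x1, inc)[0, 1]    # should be close to 0

print(f"E[X(3)]/E[X(1)]     = {mean_ratio:.3f}")
print(f"Var[X(3)]/Var[X(1)] = {var_ratio:.3f}")
print(f"corr(X(1), X(3)-X(1)) = {corr:.4f}")
```

Because the increments are generated independently by construction, the correlation check only confirms the simulation; the informative output is the linear scaling of mean and variance in $t$, which follows from LP2 and LP3 whenever the moments exist.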

5.2. Four Temporal and Volumetric Insurance Loss Models

- IM1. $A(x,t) = X(xt)$, where $A(x,t)$ denotes aggregate losses from volume $x$ insured for time $t$ and $X$ is a Lévy process. This model assumes there is no difference between insuring given insureds for a longer period of time and insuring more insureds for a shorter period.

- IM2. $A(x,t) = X(xZ(t))$, for a subordinator $Z(t)$ with $\mathsf{E}[Z(t)] = t$. $Z$ is an increasing Lévy process which measures random operational time, rather than calendar time. It allows for systematic time-varying contagion effects, such as weather patterns, inflation and level of economic activity, affecting all insureds. $Z$ could be a deterministic drift or it could combine a deterministic drift with a stochastic component.

- IM3. $A(x,t) = X(xCt)$, where $C$ is a mean 1 random variable capturing heterogeneity and non-diversifiable parameter risk across an insured population of size $x$. $C$ could reflect different underwriting positions by firm, which drive systematic and permanent differences in results. The variable $C$ is sometimes called a mixing variable.

- IM4. $A(x,t) = X(xCZ(t))$.

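- 1. If the coefficient of variation of aggregate losses for volume $x$ insured for time $t$ tends to zero as $t \to \infty$, we will call the loss process temporally diversifying.

- 2. If the coefficient of variation of aggregate losses for volume $x$ insured for time $t$ tends to zero as $x \to \infty$, we will call the loss process volumetrically diversifying.

- 3. A process which is both temporally and volumetrically diversifying will be called diversifying.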

- AM1.
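The difference between a volumetrically diversifying model and one with a mixing variable can be seen in a small simulation. The sketch below assumes IM1 has the form $A(x,t) = X(xt)$ and IM3 the form $A(x,t) = X(xCt)$, with $X$ a compound Poisson process and $C$ gamma distributed with mean 1. All parameter values (`lam`, `c_cv`, the gamma severity) and helper names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2017)

def compound_poisson(expected_claims, sev_shape=2.0):
    """Aggregate loss with Poisson claim counts and gamma(sev_shape, 1) severities."""
    n = rng.poisson(expected_claims)
    total = rng.gamma(np.maximum(n, 1) * sev_shape, 1.0)
    return np.where(n > 0, total, 0.0)

def cv(sample):
    """Coefficient of variation: standard deviation divided by mean."""
    return sample.std() / sample.mean()

lam, t = 5.0, 1.0     # assumed claim rate per unit of volume-time; time horizon
c_cv = 0.2            # assumed coefficient of variation of the mixing variable C
n_sims = 100_000
volumes = [1, 10, 100, 1000]

cv_im1, cv_im3 = [], []
for x in volumes:
    # IM1 (assumed form): A(x, t) = X(x t), no mixing.
    cv_im1.append(cv(compound_poisson(np.full(n_sims, lam * x * t))))
    # IM3 (assumed form): A(x, t) = X(x C t), C gamma with mean 1 and CV c_cv.
    C = rng.gamma(1.0 / c_cv**2, c_cv**2, size=n_sims)
    cv_im3.append(cv(compound_poisson(lam * x * C * t)))

for x, a, b in zip(volumes, cv_im1, cv_im3):
    print(f"x = {x:5d}   CV(IM1) = {a:.4f}   CV(IM3) = {b:.4f}")
```

Under these assumptions the coefficient of variation of the unmixed model keeps falling as volume grows, while the mixed model levels off near the coefficient of variation of $C$, which is the behaviour the definitions above distinguish.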

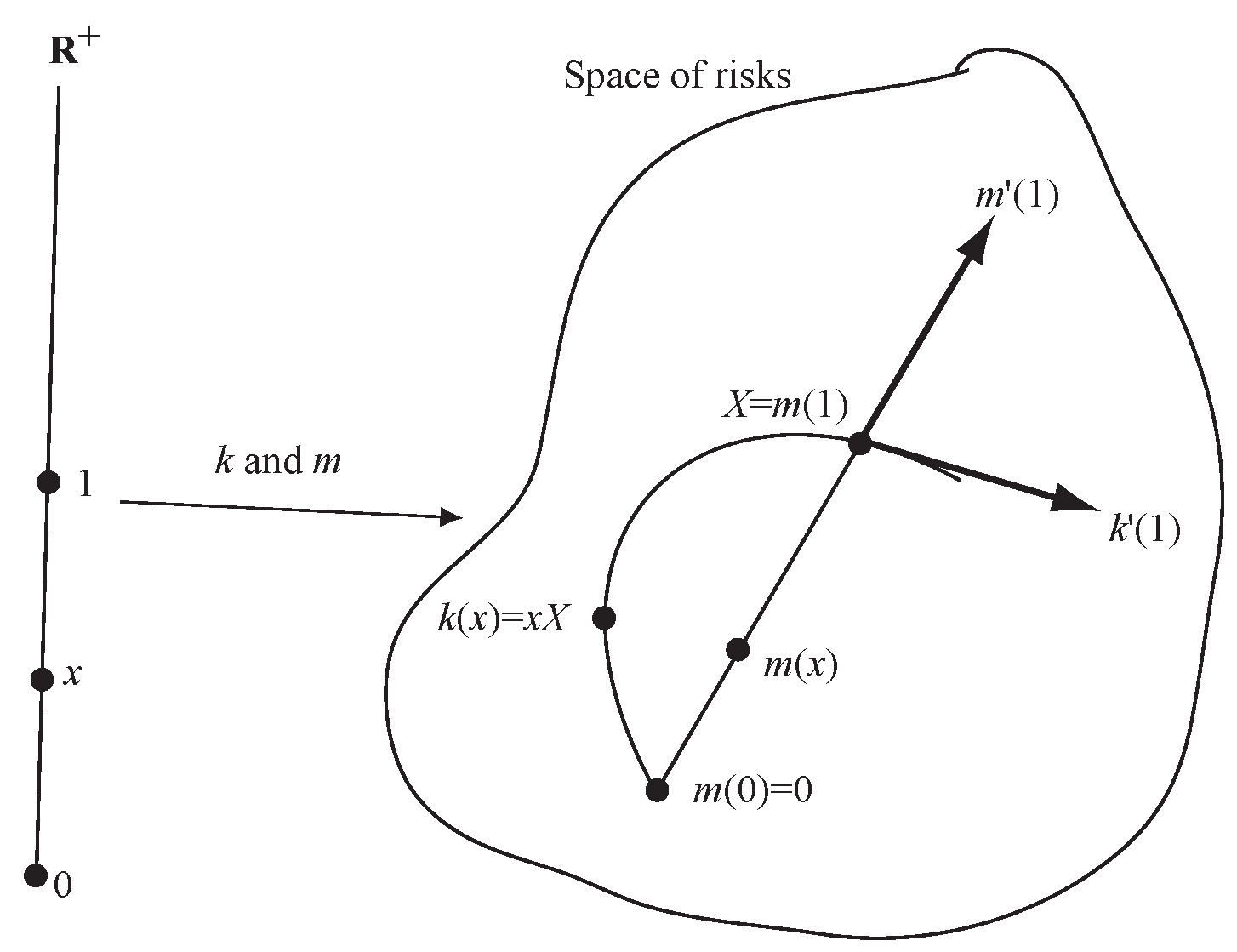

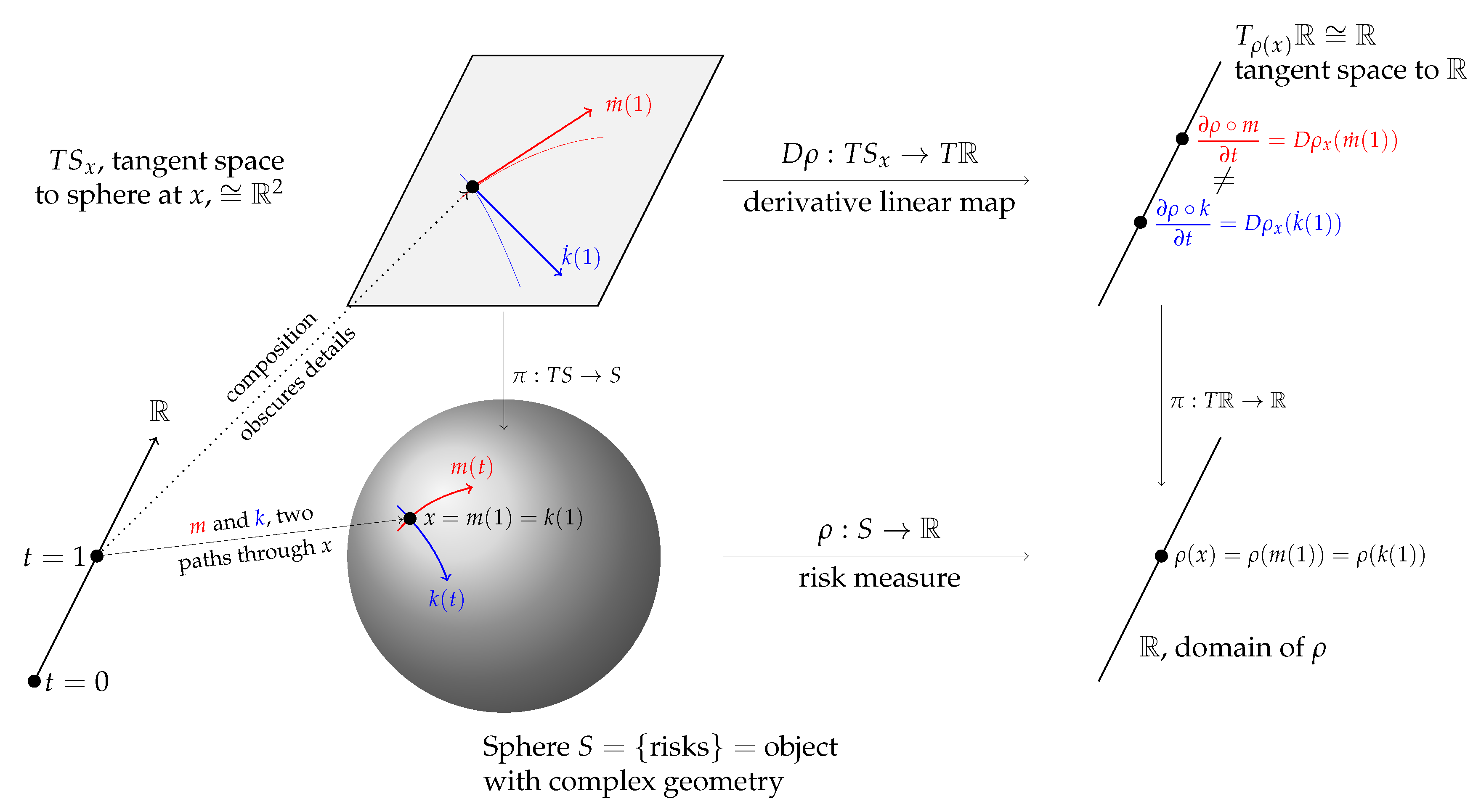

6. Defining the Derivative of a Risk Measure and Directions in the Space of Risks

6.1. Defining the Derivative

6.2. Directions in the Space of Actuarial Random Variables

- The notion that directions, or tangent vectors, live in a separate space called the tangent bundle.

- The identification of tangent vectors as derivatives of curves.

- The idea that Lévy processes, characterized by the additive relation $X(s+t) = X(s) + X(t)$, provide the appropriate analog of rays to use as a basis for insurance risks.

6.3. Examples

6.4. Application to Insurance Risk Models IM1-4 and Asset Risk Model AM1

6.5. Higher Order Identification of the Differences Between Insurance and Asset Models

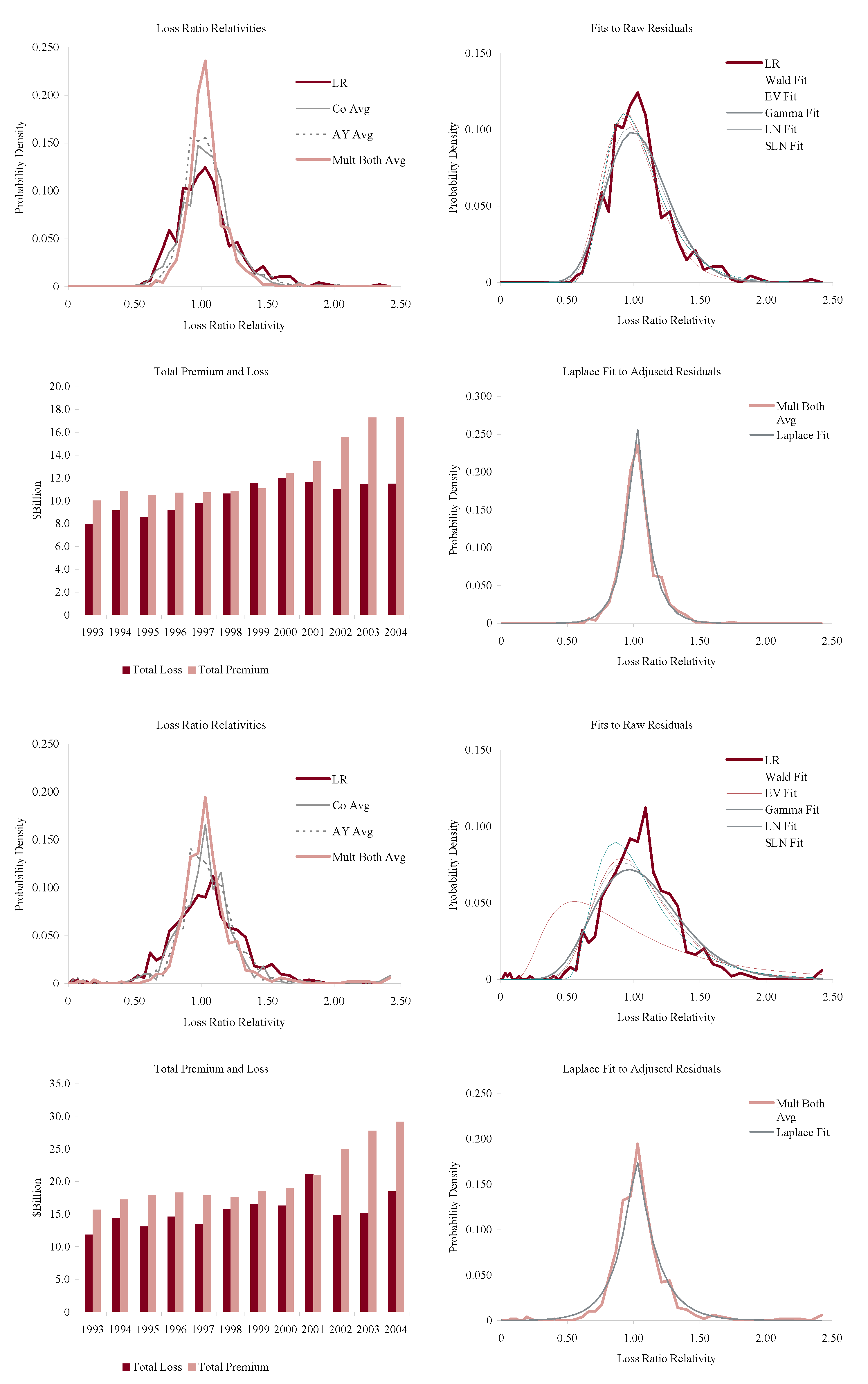

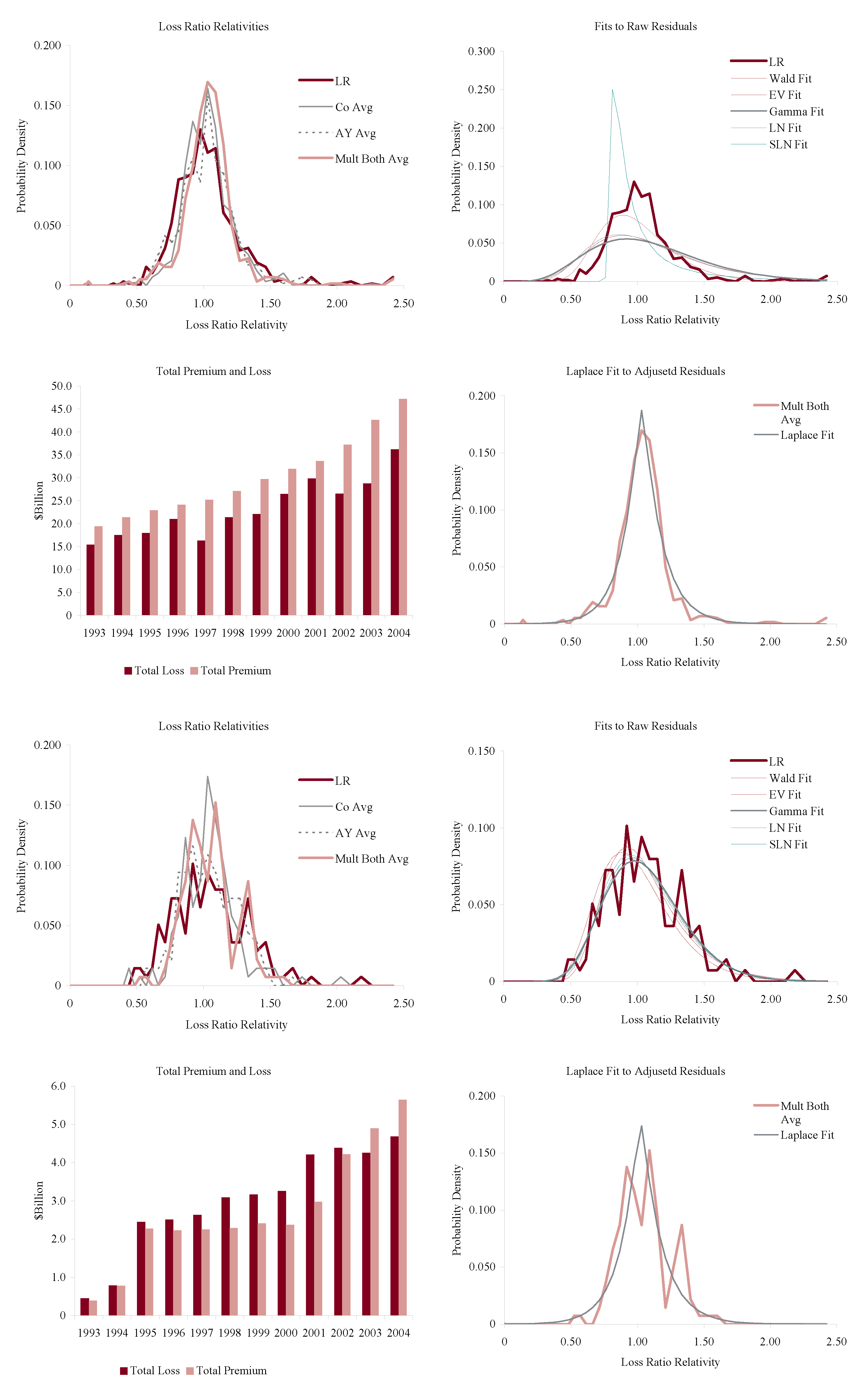

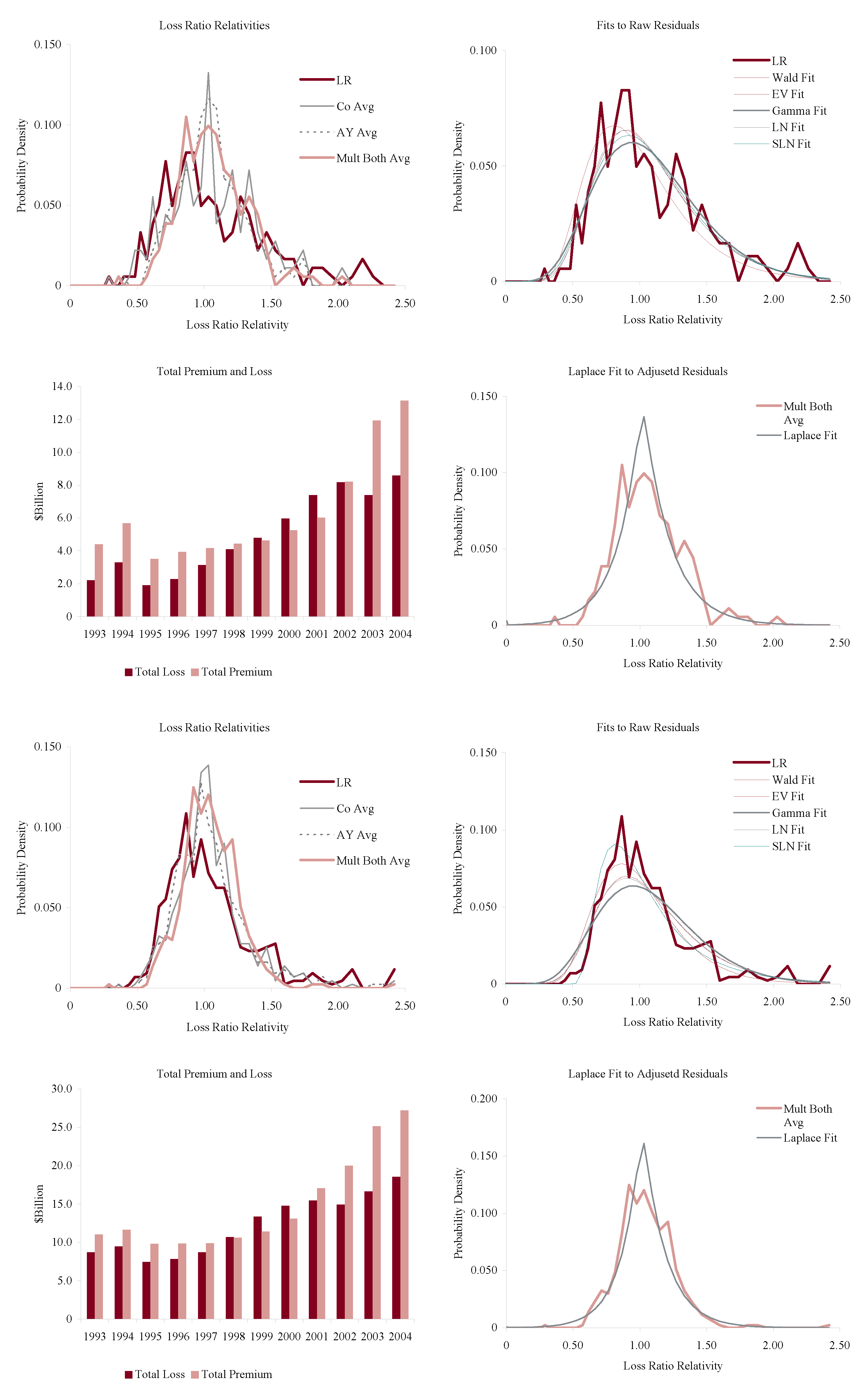

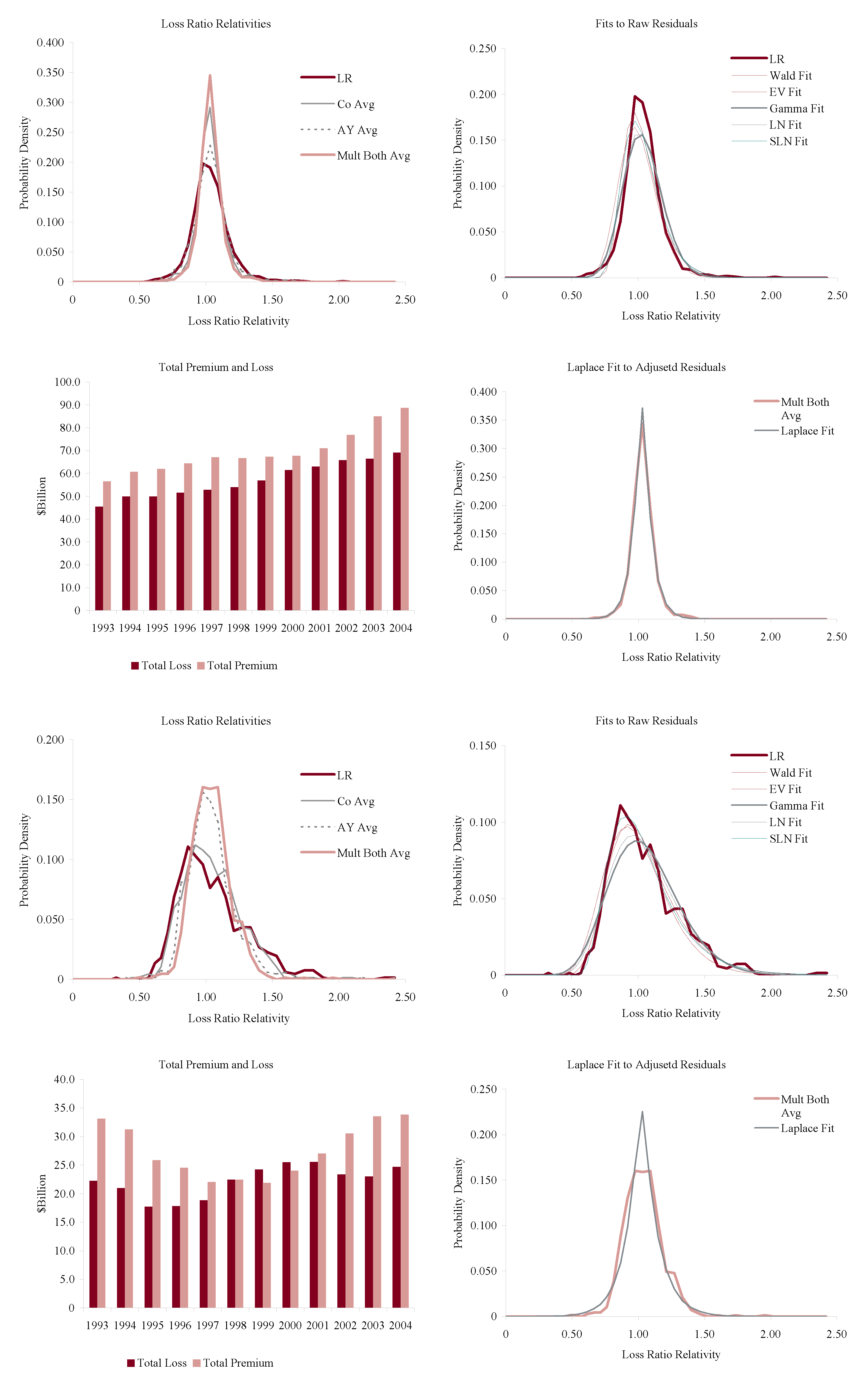

7. Empirical Analysis

7.1. Overview

- H1. The asymptotic coefficient of variation or volatility as volume grows is strictly positive.

- H2. Time and volume are symmetric in the sense that the coefficient of variation of aggregate losses for volume $x$ insured for time $t$ only depends on the product $xt$.
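To see how a model can satisfy both hypotheses, consider the following worked calculation. It is a sketch under assumptions that are not taken from the text: $X$ is a compound Poisson process with claim rate $\lambda$ per unit of volume-time and severity $Y$, and aggregate losses take the mixed form $A(x,t) = X(xCt)$ with $\mathsf{E}[C] = 1$. Conditioning on $C$ gives

$$
\begin{aligned}
\mathsf{E}[A(x,t)] &= \lambda x t\, \mathsf{E}[Y], \\
\mathsf{Var}[A(x,t)] &= \lambda x t\, \mathsf{E}[Y^2] + (\lambda x t\, \mathsf{E}[Y])^2\, \mathsf{Var}(C), \\
\mathsf{CV}[A(x,t)]^2 &= \frac{\mathsf{E}[Y^2]}{\lambda x t\, \mathsf{E}[Y]^2} + \mathsf{Var}(C).
\end{aligned}
$$

In this example the coefficient of variation depends on $x$ and $t$ only through $xt$, as in H2, and it tends to $\sqrt{\mathsf{Var}(C)}$ as $x \to \infty$, which is strictly positive whenever $C$ is not constant, as in H1.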

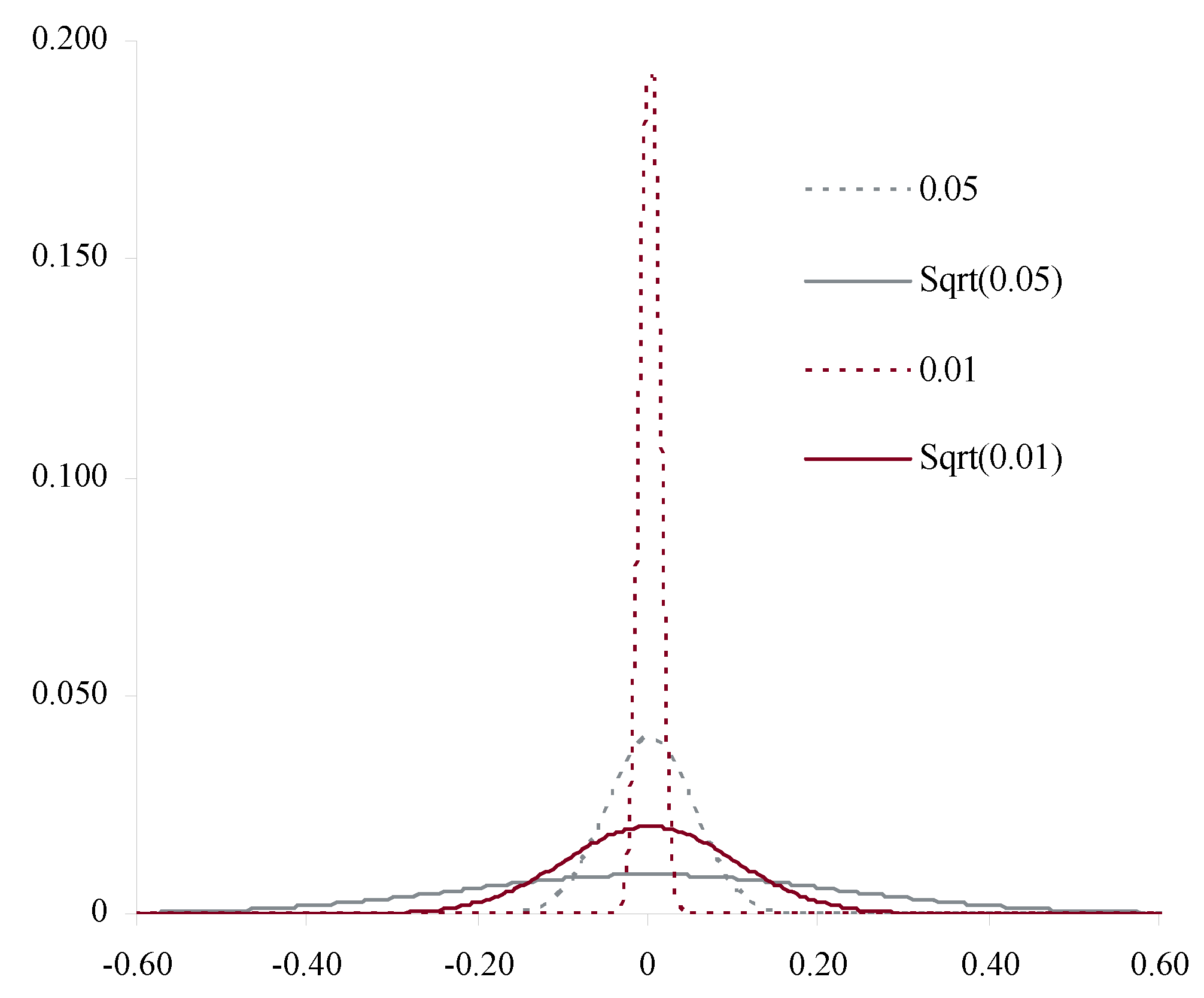

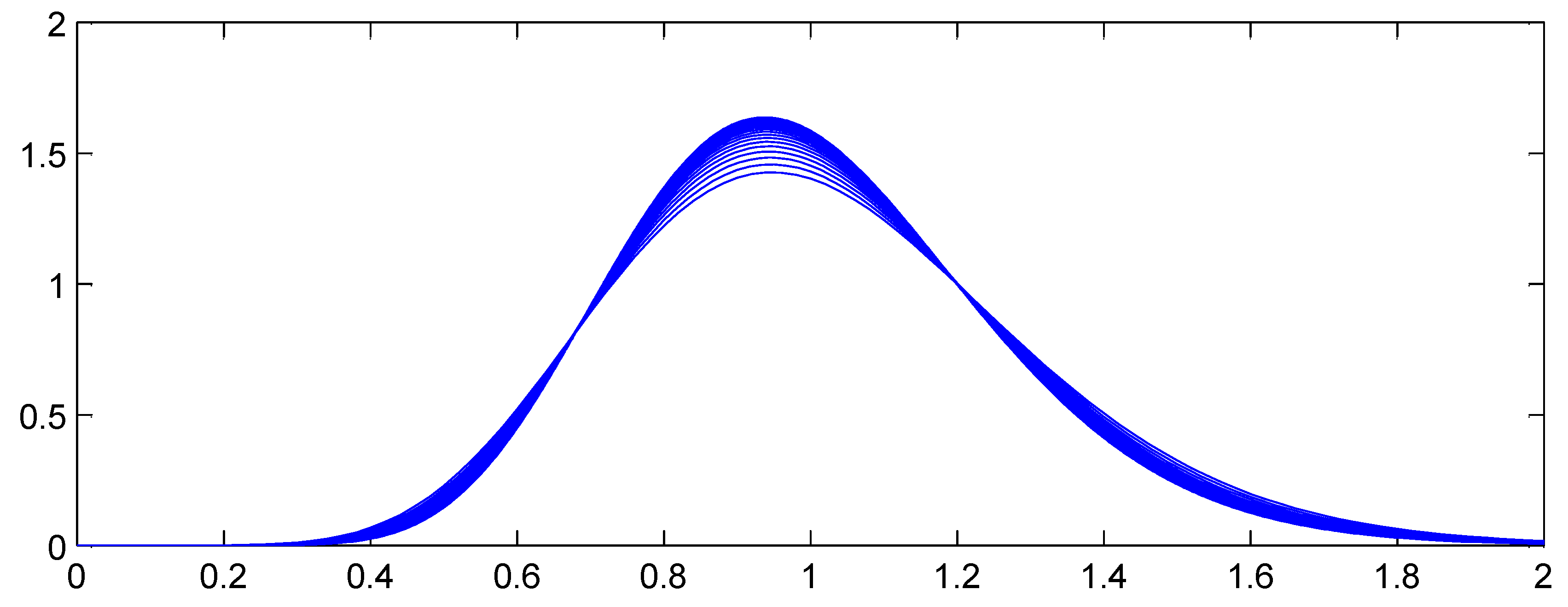

7.2. Isolating the Mixing Distribution

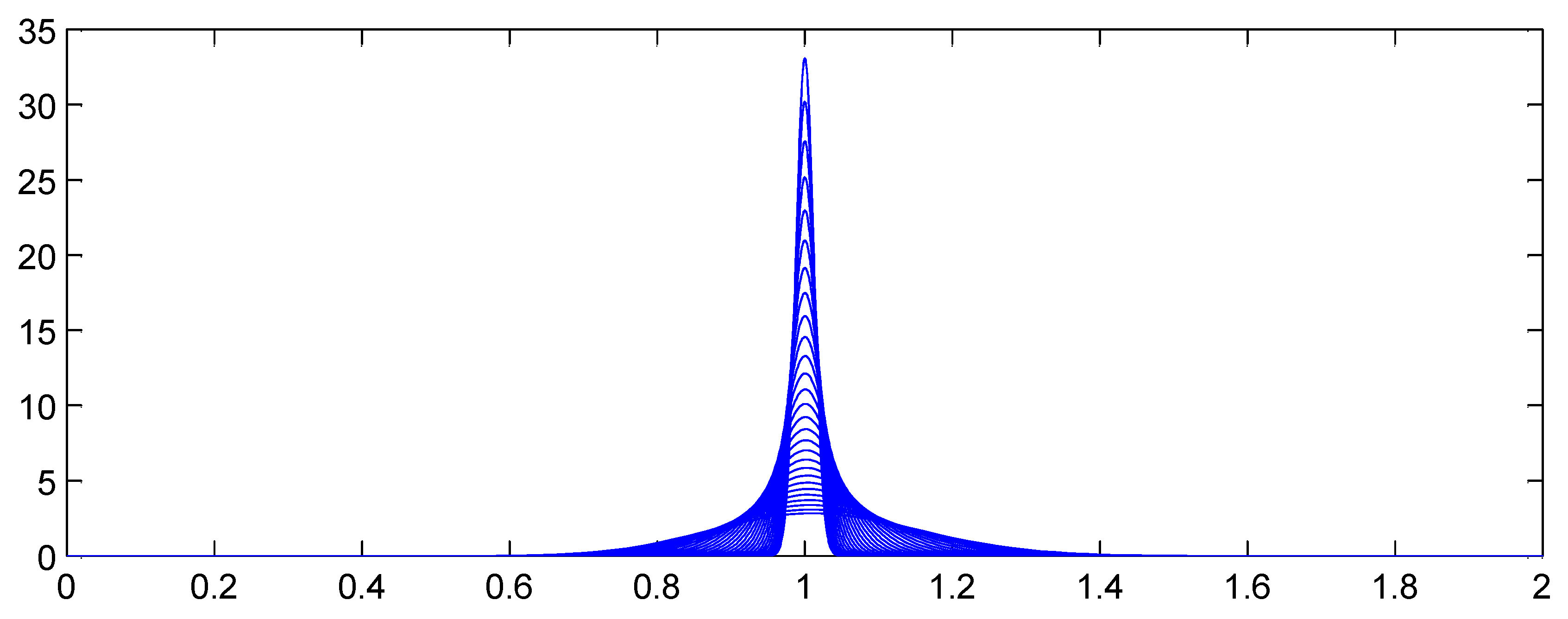

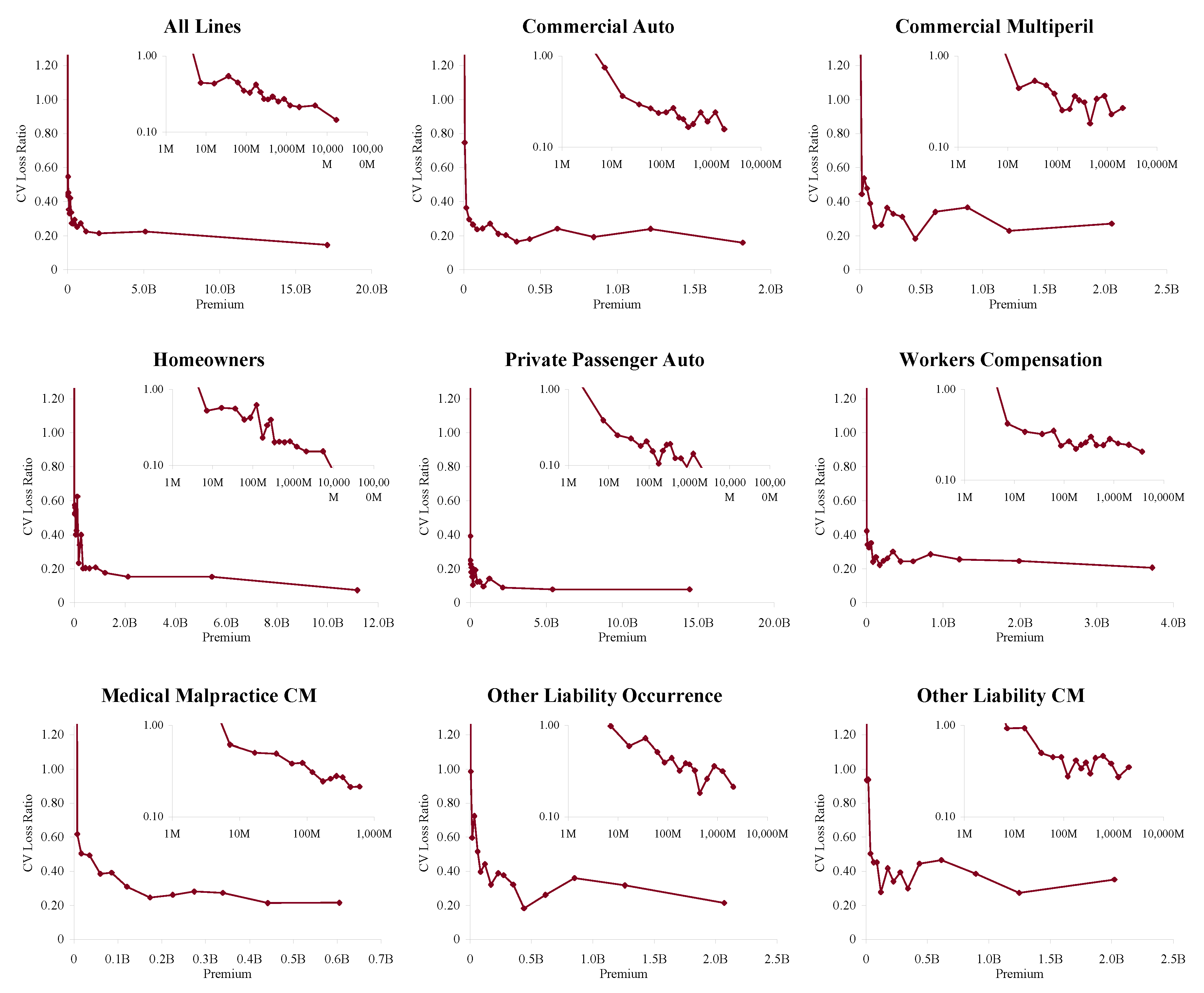

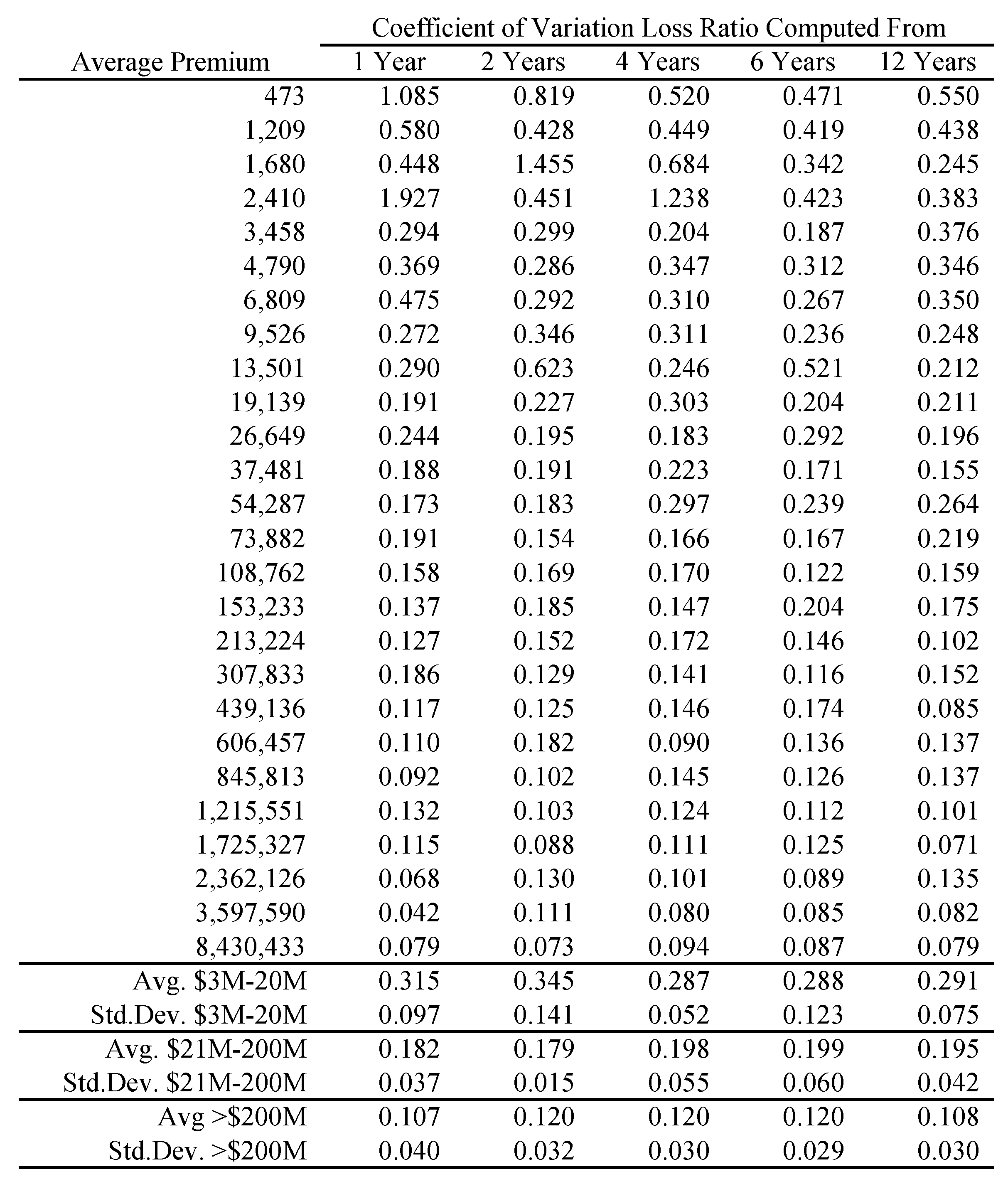

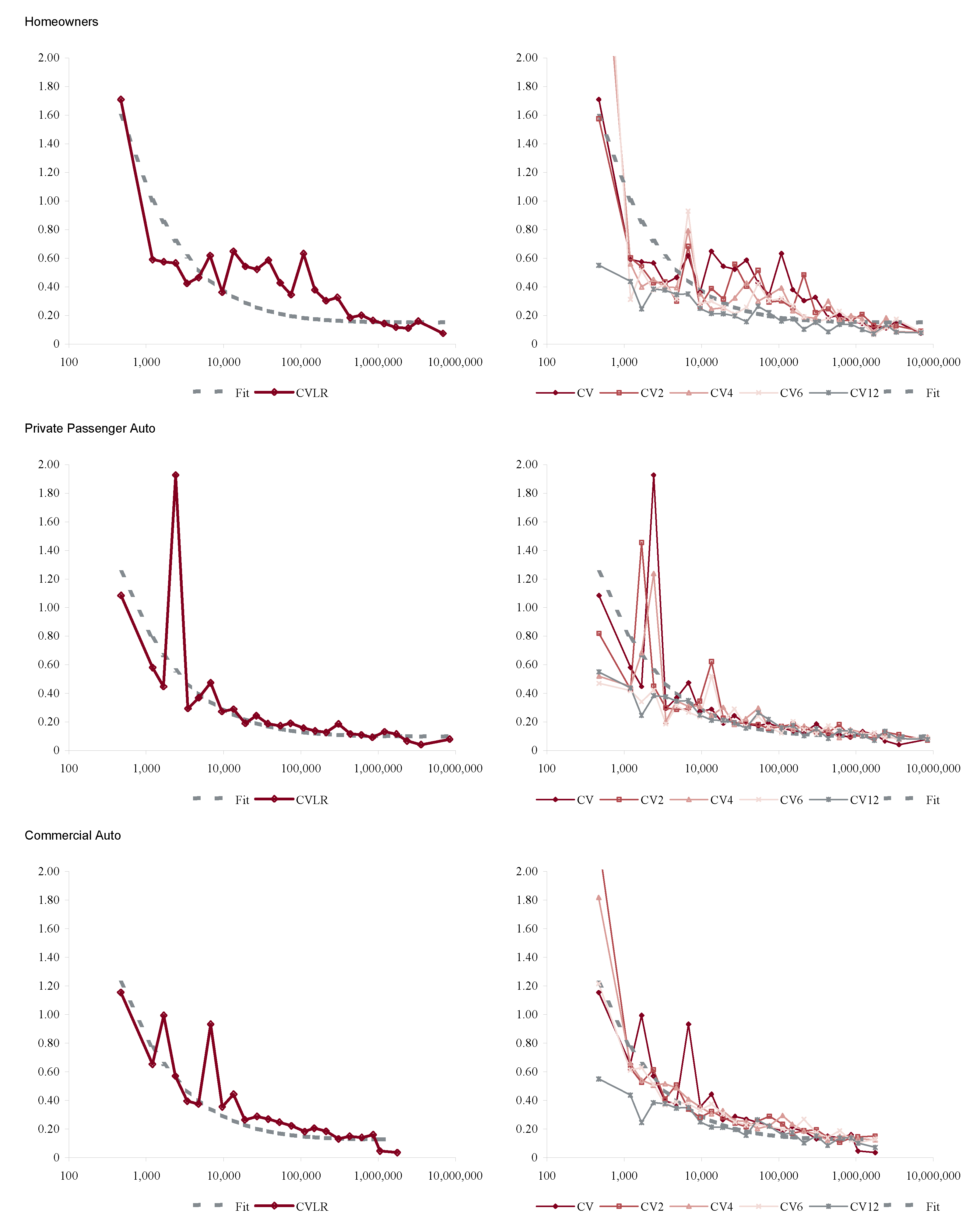

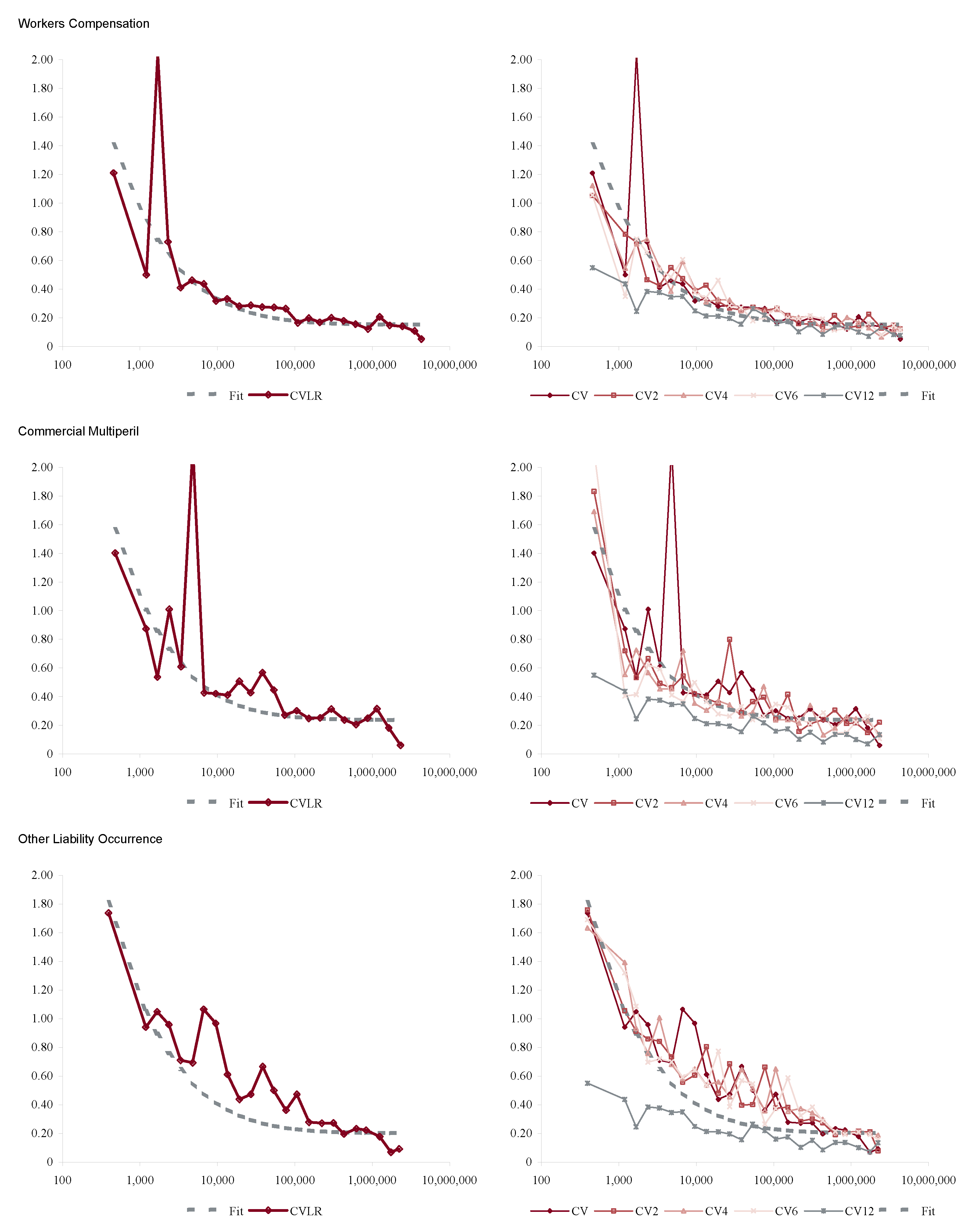

7.3. Volumetric Empirics

- Heterogeneity refers to the level of consistency in terms and conditions and types of insureds within the line, with high heterogeneity indicating a broad range. The two Other Liability lines are catch-all classifications including a wide range of insureds and policies.

- Regulation indicates the extent of rate regulation by state insurance departments.

- Limits refers to the typical policy limit. Personal auto liability limits rarely exceed $300,000 per accident in the US and are characterized as low. Most commercial lines policies have a primary limit of $1M, possibly with excess liability policies above that. Workers compensation policies do not have a limit but the benefit levels are statutorily prescribed by each state.

- Cycle is an indication of the extent of the pricing cycle in each line; it is simply split between personal lines (low) and commercial lines (high).

- Cats (i.e., catastrophes) covers the extent to which the line is subject to multi-claimant, single occurrence catastrophe losses such as hurricanes, earthquakes, mass tort, securities laddering, terrorism, and so on.

- The loss processes are not volumetrically diversifying; that is, the volatility does not decrease to zero with volume.

- Below a range of $100M to $1B (varying by line) there are material changes in volatility with premium size.

- $100M is a reasonable threshold for large, in the sense that there is less change in volatility beyond $100M.

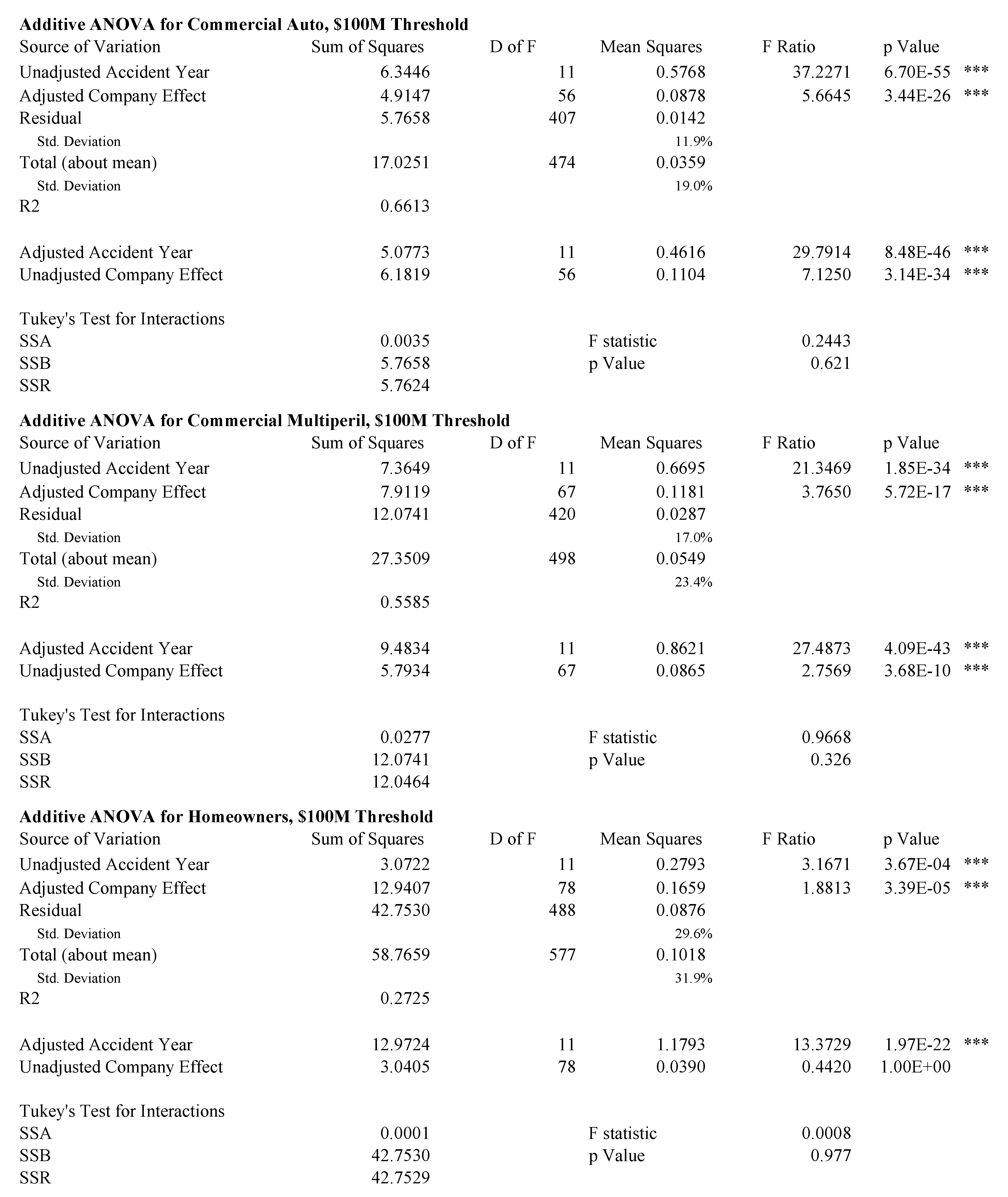

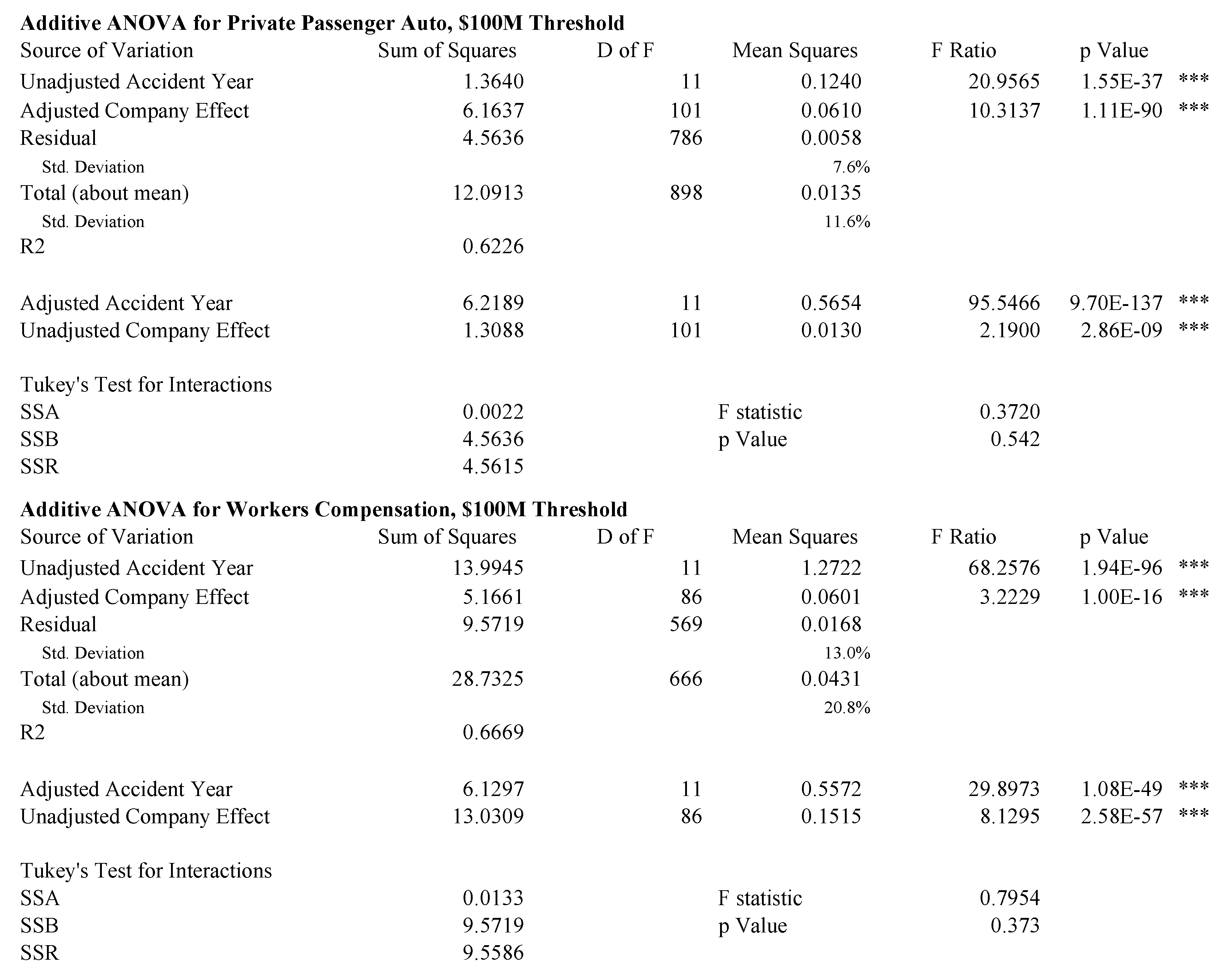

- Res1. Raw loss ratio volatility across all twelve years of data for all companies. This volatility includes a pricing cycle effect, captured by accident year, and a company effect.

- Res2. Control for the accident year effect. This removes the pricing cycle but it also removes some of the catastrophic loss effect for a year, which is an issue with the results for homeowners in 2004.

- Res3. Control for the company effect. This removes spurious loss ratio variation caused by differing expense ratios, distribution costs, profit targets, classes of business, limits, policy size and so forth.

- Res4. Control for both company effect and accident year, i.e., perform an unbalanced two-way ANOVA with zero or one observation per cell. This can be done additively, modeling the loss ratio for company c in year y as the sum of a company effect, an accident year effect and a residual, or multiplicatively, as the product of the same three terms. The multiplicative approach is generally preferred as it never produces negative fitted loss ratios. The statistical properties of the residual distributions are similar for both forms.
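The two forms of the company and accident year adjustment can be sketched as a dummy-variable least-squares fit, with the multiplicative form fitted on the log scale. This is one plausible implementation, not necessarily the procedure used in the paper; the function name `two_way_fit`, the synthetic loss ratios and every parameter value below are hypothetical.

```python
import numpy as np

def two_way_fit(company, year, loss_ratio, multiplicative=False):
    """Least-squares two-way fit with zero or one observation per cell.

    Returns (fitted, residual). Residuals are differences for the additive
    form and ratios (actual / fitted) for the multiplicative form.
    """
    lr = np.asarray(loss_ratio, dtype=float)
    target = np.log(lr) if multiplicative else lr
    _, c_idx = np.unique(company, return_inverse=True)
    _, yr_idx = np.unique(year, return_inverse=True)
    n_c, n_yr = c_idx.max() + 1, yr_idx.max() + 1

    # Design matrix: intercept, then dummies for every company and year
    # except the first level of each (absorbed by the intercept).
    X = np.zeros((lr.size, n_c + n_yr - 1))
    X[:, 0] = 1.0
    rows = np.arange(lr.size)
    X[rows[c_idx > 0], c_idx[c_idx > 0]] = 1.0
    X[rows[yr_idx > 0], n_c - 1 + yr_idx[yr_idx > 0]] = 1.0

    beta, *_ = np.linalg.lstsq(X, target, rcond=None)
    fitted = X @ beta
    if multiplicative:
        fitted = np.exp(fitted)          # always positive
        return fitted, lr / fitted
    return fitted, lr - fitted

# Synthetic, purely illustrative data: 4 companies, 5 accident years.
rng = np.random.default_rng(7)
companies = np.repeat(np.arange(4), 5)
years = np.tile(np.arange(2001, 2006), 4)
keep = np.ones(companies.size, dtype=bool)
keep[[3, 8, 17]] = False                 # drop cells to make the design unbalanced

company_effect = np.array([0.9, 1.0, 1.1, 1.2])
year_effect = np.array([1.15, 1.05, 0.95, 0.90, 1.00])
noise = rng.lognormal(mean=0.0, sigma=0.05, size=companies.size)
lr_all = 0.70 * company_effect[companies] * year_effect[years - 2001] * noise

company, year, lr = companies[keep], years[keep], lr_all[keep]
fit_add, res_add = two_way_fit(company, year, lr)
fit_mult, res_mult = two_way_fit(company, year, lr, multiplicative=True)

print("additive residual std:           ", res_add.std())
print("multiplicative log-residual std: ", np.log(res_mult).std())
```

Fitting the multiplicative form on logarithms is a design choice that keeps fitted loss ratios positive, in line with the preference stated above; it requires all observed loss ratios to be strictly positive.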

7.4. Temporal Empirics

8. Conclusions

Conflicts of Interest

References

- Insurance Risk Study. 2007. First Edition. Chicago: Aon Re Global.

- Insurance Risk Study. 2010. Fifth Edition. Chicago: Aon Benfield.

- Insurance Risk Study. 2012. Seventh Edition. Chicago: Aon Benfield.

- Insurance Risk Study. 2013. Eighth Edition. Chicago: Aon Benfield.

- Insurance Risk Study. 2014. Ninth Edition. Chicago: Aon Benfield.

- Insurance Risk Study. 2015. Tenth Edition. Chicago: Aon Benfield.

- Abraham, Ralph, Jerrold E. Marsden, and Tudor Ratiu. 1988. Manifolds, Tensor Analysis, and Applications, 2nd ed. New York: Springer Verlag.

- Applebaum, David. 2004. Lévy Processes and Stochastic Calculus. Cambridge: Cambridge University Press.

- Artzner, Philippe, F. Delbaen, J.M. Eber, and D. Heath. 1999. Coherent Measures of Risk. Mathematical Finance 9: 203–28.

- Bühlmann, Hans. 1970. Mathematical Models in Risk Theory. Berlin: Springer Verlag.

- Bailey, Robert A. 1967. Underwriting Profits From Investments. Proceedings of the Casualty Actuarial Society LIV: 1–8.

- Barndorff-Nielsen, Ole E., Thomas Mikosch, and Sidney I. Resnick, eds. 2001. Lévy Processes: Theory and Applications. Boston: Birkhäuser.

- Barndorff-Nielsen, Ole Eiler. 2000. Probability Densities and Lévy Densities. Aarhus: University of Aarhus. Centre for Mathematical Physics and Stochastics (MaPhySto), vol. MPS RR 2000-8.

- Bauer, Daniel, and George Zanjani. 2013a. The Marginal Cost of Risk in a Multi-Period Risk Model. Preprint, November.

- Bauer, Daniel, and George H. Zanjani. 2013b. Capital allocation and its discontents. In Handbook of Insurance. New York: Springer, pp. 863–80.

- Beard, R. E., T. Pentikäinen, and E. Pesonen. 1969. Risk Theory: The Stochastic Basis of Insurance, 3rd ed. London: Chapman and Hall.

- Bertoin, Jean. 1996. Lévy Processes. Cambridge: Cambridge University Press.

- Bodoff, Neil M. 2009. Capital Allocation by Percentile Layer. 2007 ERM Symposium. Available online: www.ermsymposium.org/2007/pdf/papers/Bodoff.pdf (accessed on 13 June 2017).

- Boonen, Tim J., Andreas Tsanakas, and Mario V. Wüthrich. 2017. Capital allocation for portfolios with non-linear risk aggregation. Insurance: Mathematics and Economics 72: 95–106.

- Borwein, Jonathan M., and Jon D. Vanderwerff. 2010. Convex Functions: Constructions, Characterizations and Counterexamples. Cambridge: Cambridge University Press, vol. 109.

- Bowers, Newton, Hans Gerber, James Hickman, Donald Jones, and Cecil Nesbitt. 1986. Actuarial Mathematics. Schaumburg: Society of Actuaries.

- Breiman, Leo. 1992. Probability, Volume 7 of Classics in Applied Mathematics. Philadelphia: Society for Industrial and Applied Mathematics (SIAM).

- Buch, Arne, and Gregor Dorfleitner. 2008. Coherent risk measures, coherent capital allocations and the gradient allocation principle. Insurance: Mathematics and Economics 42: 235–42.

- Cummins, J. David, and Scott E. Harrington, eds. 1987. Fair Rate of Return in Property-Liability Insurance. Boston: Kluwer-Nijhoff.

- Cummins, J. David, and Richard D. Phillips. 2000. Applications of Financial Pricing Models in Property-Liability Insurance. In Handbook of Insurance. Edited by G. Dionne. Boston: Kluwer Academic.

- Cummins, J. David. 1988. Risk-Based Premiums for Insurance Guarantee Funds. Journal of Finance 43: 823–39.

- Cummins, J. David. 2000. Allocation of Capital in the Insurance Industry. Risk Management and Insurance Review 3: 7–27.

- D’Arcy, Stephen P., and Neil A. Doherty. 1988. The Financial Theory of Pricing Property Liability Insurance Contracts. SS Huebner Foundation for Insurance Education, Wharton School, University of Pennsylvania. Homewood: Irwin.

- D’Arcy, Stephen P., and Michael A. Dyer. 1997. Ratemaking: A Financial Economics Approach. Proceedings of the Casualty Actuarial Society LXXXIV: 301–90.

- Daykin, Chris D., Teivo Pentikäinen, and Martti Pesonen. 1994. Practical Risk Theory for Actuaries. London: Chapman and Hall.

- Delbaen, F., and J. Haezendonck. 1989. A martingale approach to premium calculation principles in an arbitrage free market. Insurance: Mathematics and Economics 8: 269–77.

- Delbaen, Freddy. 2000a. Coherent Risk Measures. Monograph, Pisa: Scuola Normale Superiore.

- Delbaen, Freddy. 2000b. Coherent Risk Measures on General Probability Spaces. Advances in finance and stochastics. Berlin: Springer, pp. 1–37.

- Denault, Michel. 2001. Coherent allocation of risk capital. Journal of Risk 4: 1–34.

- Dhaene, Jan, Mark J. Goovaerts, and Rob Kaas. 2003. Economic capital allocation derived from risk measures. North American Actuarial Journal 7: 44–56.

- Dhaene, Jan, Steven Vanduffel, Marc J. Goovaerts, Rob Kaas, Qihe Tang, and David Vyncke. 2006. Risk Measures and Comonotonicity: A Review. Stochastic Models 22: 573–606.

- Dhaene, Jan, Roger JA Laeven, Steven Vanduffel, Gregory Darkiewicz, and Marc J. Goovaerts. 2008. Can a coherent risk measure be too subadditive? Journal of Risk and Insurance 75: 365–86.

- Dhaene, Jan, Andreas Tsanakas, Emiliano A. Valdez, and Steven Vanduffel. 2012. Optimal Capital Allocation Principles. Journal of Risk and Insurance 79: 1–28.

- Doherty, Neil A., and James R. Garven. 1986. Price Regulation in Property-Liability Insurance: A Contingent Claims Approach. Journal of Finance XLI: 1031–50.

- Erel, Isil, Stewart C. Myers, and James A. Read. 2015. A theory of risk capital. Journal of Financial Economics 118: 620–635.

- Föllmer, Hans, and Alexander Schied. 2011. Stochastic Finance: An Introduction in Discrete Time. Berlin: Walter de Gruyter.

- Feller, William. 1971. An Introduction to Probability Theory and Its Applications, Two Volumes, 2nd ed. New York: John Wiley and Sons.

- Ferrari, J. Robert. 1968. The relationship of underwriting, investment, leverage, and exposure to total return on owners’ equity. Proceedings of the Casualty Actuarial Society LV: 295–302.

- Fischer, Tom. 2003. Risk Capital Allocation by Coherent Risk Measures Based on One-Sided Moments. Insurance: Mathematics and Economics 32: 135–146.

- Froot, Kenneth A., and Paul GJ O’Connell. 2008. On the pricing of intermediated risks: Theory and application to catastrophe reinsurance. Journal of Banking and Finance 32: 69–85.

- Froot, Kenneth A., and Jeremy C. Stein. 1998. Risk management, capital budgeting, and capital structure policy for financial institutions: an integrated approach. Journal of Financial Economics 47: 52–82.

- Froot, Kenneth A., David S. Scharfstein, and Jeremy C. Stein. 1993. Risk Management: Coordinating Corporate Investment and Financing Policies. Journal of Finance XLVIII: 1629–58.

- Froot, Kenneth A. 2007. Risk management, capital budgeting, and capital structure policy for insurers and reinsurers. Journal of Risk and Insurance 74: 273–299.

- Furman, Edward, and Ričardas Zitikis. 2008. Weighted risk capital allocations. Insurance: Mathematics and Economics 43: 263–69.

- Goovaerts, Marc J., Rob Kaas, and Roger JA Laeven. 2010. Decision principles derived from risk measures. Insurance: Mathematics and Economics 47: 294–302.

- Gründl, Helmut, and Hato Schmeiser. 2007. Capital allocation for insurance companies: what good is it? Journal of Risk and Insurance 74: 301–17.

- Hull, John. 1983. Options Futures and Other Derivative Securities, 2nd ed. Englewood Cliffs: Prentice-Hall.

- Jacob, Niels. 2001. Pseudo Differential Operators & Markov Processes: Fourier Analysis and Semigroups. London: Imperial College Press, vol. I.

- Jacob, Niels. 2002. Pseudo Differential Operators & Markov Processes: Generators and Their Potential Theory. London: Imperial College Press, vol. II.

- Jacob, Niels. 2005. Pseudo Differential Operators & Markov Processes: Markov Processes and Applications. London: Imperial College Press, vol. III.

- Kalkbrener, Michael. 2005. An axiomatic approach to capital allocation. Mathematical Finance 15: 425–37.

- Kallop, R. H. 1975. A current look at workers’ compensation ratemaking. Proceedings of the Casualty Actuarial Society LXII: 62–81.

- Karatzas, Ioannis, and Steven Shreve. 1988. Brownian Motion and Stochastic Calculus. New York: Springer-Verlag.

- Klugman, Stuart A., Harry H. Panjer, and Gordon E. Willmot. 1998. Loss Models, from Data to Decisions. New York: John Wiley and Sons.

- Kotz, Samuel, Tomasz Kozubowski, and Krzysztof Podgorski. 2001. The Laplace Distribution and Generalizations. Boston: Birkhäuser.

- Laeven, Roger JA, and Mitja Stadje. 2013. Entropy coherent and entropy convex measures of risk. Mathematics of Operations Research 38: 265–93.

- Lange, Jeffrey T. 1966. General liability insurance ratemaking. Proceedings of the Casualty Actuarial Society LIII: 26–53.

- Magrath, Joseph J. 1958. Ratemaking for fire insurance. Proceedings of the Casualty Actuarial Society XLV: 176–95.

- Meister, Steffen. 1995. Contributions to the Mathematics of Catastrophe Insurance Futures, Unpublished Diplomarbeit, ETH Zurich.

- Merton, Robert C., and Andre Perold. 2001. Theory of Risk Capital in Financial Firms. In The New Corporate Finance, Where Theory Meets Practice. Edited by Donald H. Chew. Boston: McGraw-Hill, pp. 438–54.

- Meyers, G. 2005a. Distributing capital: another tactic. Actuarial Review 32: 25–26, with on-line technical appendix.

- Meyers, G. 2005b. The Common Shock Model for Correlated Insurance Losses. Proc. Risk Theory Society. Available online: http://www.aria.org/rts/proceedings/2005/Meyers%20-%20Common%20Shock.pdf (accessed on 13 June 2017).

- Mildenhall, Stephen J. 2004. A Note on the Myers and Read Capital Allocation Formula. North American Actuarial Journal 8: 32–44.

- Mildenhall, Stephen J. 2006. Actuarial Geometry. Proc. Risk Theory Society. Available online: http://www.aria.org/rts/proceedings/2006/Mildenhall.pdf (accessed on 13 June 2017).

- Miller, Robert Burnham, and Dean W. Wichern. 1977. Intermediate Business Statistics: Analysis of Variance, Regression and Time Series. New York: Holt, Rinehart and Winston.

- Myers, Stewart C., and James A. Read Jr. 2001. Capital Allocation for Insurance Companies. Journal of Risk and Insurance 68: 545–80.

- Panjer, Harry H., and Gordon E. Willmot. 1992. Insurance Risk Models. Schaumburg: Society of Actuaries.

- Panjer, Harry H. 2001. Measurement of Risk, Solvency Requirements and Allocation of Capital Within Financial Conglomerates. Waterloo: University of Waterloo, Institute of Insurance and Pension Research.

- Patrik, Gary, Stefan Bernegger, and Marcel Beat Rüegg. 1999. The Use of Risk Adjusted Capital to Support Business Decision-Making. In Casualty Actuarial Society Forum, Spring. Baltimore: Casualty Actuarial Society, pp. 243–334.

- Perold, Andre F. 2001. Capital Allocation in Financial Firms. HBS Competition and Strategy Working Paper Series, 98-072; Boston: Harvard Business School.

- Phillips, Richard D., J. David Cummins, and Franklin Allen. 1998. Financial Pricing of Insurance in the Multiple-Line Insurance Company. The Journal of Risk and Insurance 65: 597–636.

- Powers, Michael R. 2007. Using Aumann-Shapley values to allocate insurance risk: the case of inhomogeneous losses. North American Actuarial Journal 11: 113–27.

- Ravishanker, Nalini, and Dipak K. Dey. 2002. A First Course in Linear Model Theory. Boca Raton: Chapman & Hall/CRC.

- Sato, K. I. 1999. Lévy Processes and Infinitely Divisible Distributions. Cambridge: Cambridge University Press.

- Sherris, M. 2006. Solvency, Capital Allocation and Fair Rate of Return in Insurance. Journal of Risk and Insurance 73: 71–96.

- Stroock, Daniel W. 1993. Probability Theory, an Analytic View. Cambridge: Cambridge University Press.

- Stroock, Daniel W. 2003. Markov Processes from K. Itô’s Perspective. Annals of Mathematics Studies. Princeton: Princeton University Press.

- Tasche, Dirk. 1999. Risk Contributions and Performance Measurement. In Report of the Lehrstuhl für Mathematische Statistik. München: TU München.

- Tasche, Dirk. 2004. Allocating portfolio economic capital to sub-portfolios. In Economic Capital: A Practitioner’s Guide. London: Risk Books, pp. 275–302.

- Tsanakas, Andreas. 2009. To split or not to split: Capital allocation with convex risk measures. Insurance: Mathematics and Economics 1: 1–28.

- Venter, Gary G., John A. Major, and Rodney E. Kreps. 2006. Marginal Decomposition of Risk Measures. ASTIN Bulletin 36: 375–413.

- Venter, Gary G. 2009. Next steps for ERM: valuation and risk pricing. In Proceedings of the 2010 Enterprise Risk Management Symposium, Chicago, IL, USA, 12–15 April.

- Zanjani, George. 2002. Pricing and capital allocation in catastrophe insurance. Journal of Financial Economics 65: 283–305.

- Zanjani, George. 2010. An Economic Approach to Capital Allocation. Journal of Risk and Insurance 77: 523–49.

Note 1. Actuarial Geometry was originally presented to the 2006 Risk Theory Seminar in Richmond, Virginia (Mildenhall 2006). This version is largely based on the original, with some corrections and clarifications, as well as more examples to illustrate the theory. Since 2006 the methodology it describes has been successfully applied to a very wide variety of global insurance data in Aon Benfield’s annual Insurance Risk Study, ABI (2007, 2010, 2012, 2013, 2014, 2015), now in its eleventh edition. The findings have remained overwhelmingly consistent. Academically, the importance of the derivative and the gradient allocation method has been re-confirmed in numerous papers since 2006. Applications of Lévy processes to actuarial science and finance have also greatly proliferated. However, the new literature has not touched on the distinction, presented here, between “direction” in the space of asset return variables and “direction” in the space of actuarial variables.

| Model | Variance | Diversifying as $x \to \infty$ | Diversifying as $t \to \infty$ |
|---|---|---|---|
| IM1 | | Yes | Yes |
| IM2 | | No | Yes |
| IM3 | | No | No |
| IM4 | | No | No |
| AM1 | | Const. | Yes |

| Characterization of Ray | Required Structure on |
|---|---|
| is the shortest distance between and | Notion of distance in , differentiable manifold |
| , constant velocity, no acceleration | Very complicated on a general manifold. |
| , . | Vector space structure |
| | Can add in domain and range, semigroup structure only. |

| Insurance Line | Heterogeneity | Regulation | Limits | Cycle | Cats |
|---|---|---|---|---|---|
| Personal Auto | Low | High | Low | Low | No |
| Commercial Auto | Moderate | Moderate | Moderate | High | No |
| Workers Compensation | Moderate | High | Statutory | High | Possible |
| Medical Malpractice | Moderate | Moderate | Moderate | High | No |
| Commercial Multi-Peril | Moderate | Moderate | Moderate | High | Moderate |
| Other Liability Occurrence | High | Low | High | High | Yes |
| Homeowners Multi-Peril | Moderate | High | Low | Low | High |
| Other Liability Claims Made | High | Low | High | High | Possible |

| Abbreviation | Parameters | Distribution | Fitting Method |
|---|---|---|---|
| Wald | 2 | Wald (inverse Gaussian) | Maximum likelihood |
| EV | 2 | Frechet-Tippet extreme value | Method of moments |
| Gamma | 2 | Gamma | Method of moments |
| LN | 2 | Lognormal | Maximum likelihood |
| SLN | 3 | Shifted lognormal | Method of moments |

| Dimension | Actuarial Geometry | Solvency II |
|---|---|---|
| Time horizon | to ultimate | one year |
| Catastrophe risk | included | excluded |
| Size of company | large | average |

| Line | 1st Edition | 7th Edition | Change (percentage points) |
|---|---|---|---|
| Private Passenger Auto | 14% | 14% | 0 |
| Commercial Auto | 24% | 24% | 0 |
| Workers’ Compensation | 26% | 27% | 1 |
| Commercial Multi Peril | 32% | 34% | 2 |
| Medical Malpractice: Claims-Made | 33% | 42% | 9 |
| Medical Malpractice: Occurrence | 35% | 35% | 0 |
| Other Liability: Occurrence | 36% | 38% | 2 |
| Special Liability | 39% | 39% | 0 |
| Other Liability: Claims-Made | 39% | 41% | 2 |
| Reinsurance Liability | 42% | 67% | 25 |
| Products Liability: Occurrence | 43% | 47% | 4 |
| International | 45% | 72% | 27 |
| Homeowners | 47% | 48% | 1 |
| Reinsurance: Property | 65% | 85% | 20 |
| Reinsurance: Financial | 81% | 93% | 12 |
| Products Liability: Claims-Made | 102% | 100% | −2 |

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Mildenhall, S.J. Actuarial Geometry. Risks 2017, 5, 31. https://doi.org/10.3390/risks5020031
