Geometrically Constructed Family of the Simple Fixed Point Iteration Method

Abstract

1. Introduction

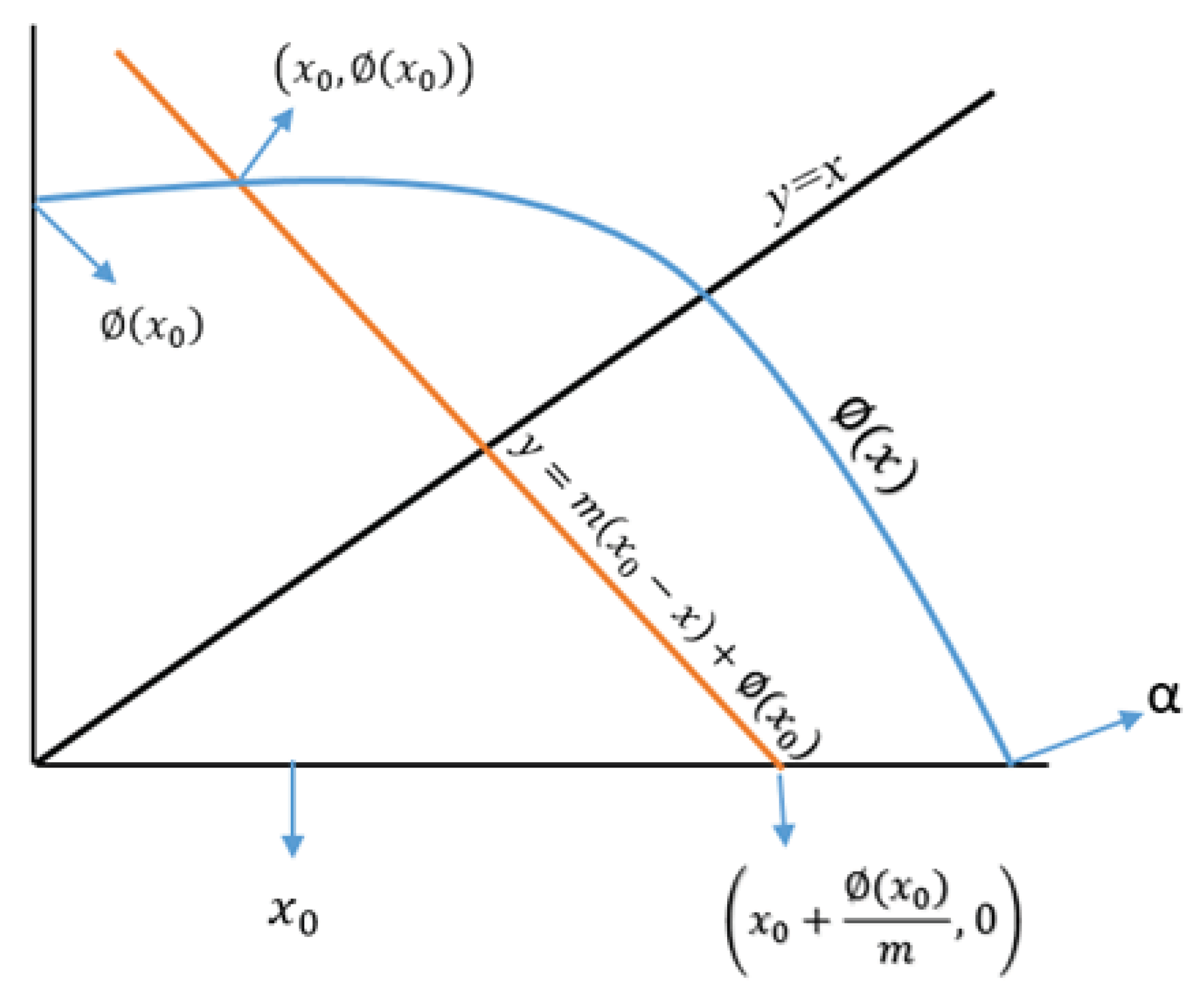

2. Geometric Derivation of the Family

Special Cases

- 1.

- For , Formula (7) corresponds to the classical fixed point method .

- 2.

- 3.

- 4.

- By inserting , in scheme (7), one obtains the well-known Krasnosel’skii iteration [20], denoted by for the computational results (see also more recent work on this iteration in the book [21]). Similarly, several other formulas can be derived by taking different specific values of m. Furthermore, we propose the following new schemes based on some standard means of two quantities and of the same sign:

- 5.

- Geometric mean-based fixed point formula is given by

- 6.

- Harmonic mean-based fixed point formula is defined by

- 7.

- The centroidal mean-based fixed point formula is given as follows:

- 8.

- The following fixed point formula based on the Heronian mean is defined as

- 9.

- The fixed point formula based on the contraharmonic mean is as follows:
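The mean-based schemes listed above can be sketched in code. This is a minimal sketch under one loud assumption: the two same-sign quantities being averaged are taken to be the current iterate x_n and its image phi(x_n), so each scheme reads x_{n+1} = M(x_n, phi(x_n)) for a standard mean M. The names `phi`, `mean_iteration`, and `MEANS`, the test function cos, the starting point, and the tolerance are illustrative choices, not taken from the paper.

```python
import math

def _signed_sqrt(a, b):
    # sqrt(a*b) carrying the common sign of a and b
    # (assumed convention so that negative same-sign pairs also work).
    return math.copysign(math.sqrt(a * b), a)

def arithmetic(a, b):
    return (a + b) / 2

def geometric(a, b):
    return _signed_sqrt(a, b)

def harmonic(a, b):
    return 2 * a * b / (a + b)

def centroidal(a, b):
    return 2 * (a * a + a * b + b * b) / (3 * (a + b))

def heronian(a, b):
    return (a + _signed_sqrt(a, b) + b) / 3

def contraharmonic(a, b):
    return (a * a + b * b) / (a + b)

MEANS = {
    "arithmetic": arithmetic,
    "geometric": geometric,
    "harmonic": harmonic,
    "centroidal": centroidal,
    "heronian": heronian,
    "contraharmonic": contraharmonic,
}

def mean_iteration(mean, phi, x0, tol=1e-12, max_iter=200):
    """Iterate x <- mean(x, phi(x)) until successive iterates differ by less than tol.

    Assumes x and phi(x) keep the same sign along the way.
    Returns the final iterate and the number of steps taken.
    """
    x = x0
    for k in range(1, max_iter + 1):
        x_next = mean(x, phi(x))
        if abs(x_next - x) < tol:
            return x_next, k
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")

if __name__ == "__main__":
    # Illustrative problem (an assumption): solve x = cos(x) starting from x0 = 1.
    for name, mean in MEANS.items():
        root, steps = mean_iteration(mean, math.cos, 1.0)
        print(f"{name:15s} x = {root:.12f} after {steps} iterations")
```

Every mean used here satisfies M(a, a) = a, so a fixed point of `phi` is also a fixed point of each mean-based map; the schemes differ only in how they approach it.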

3. Two-Step Iterative Schemes

- 1.

- 2.

- Agarwal et al. [1] proposed the following iteration scheme, defined as where and are sequences of positive numbers in . We denote this scheme by for the computational work and consider . For , it reduces to the well-known Mann iteration scheme.

- 3.

- Thianwan [23] defined the following two-step iteration scheme as where and are sequences of positive numbers in . We denote this method by for the computational work and choose . This scheme is also known as a modification of Mann’s method.
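The two-step (predictor-corrector) structure of these schemes can be sketched as follows. The update rules below are the forms in which the Ishikawa, Agarwal et al., and Thianwan iterations are commonly stated in the literature; they are an assumption here, since the equations themselves are not reproduced in the text above. The constant weights `alpha = beta = 0.5`, the mapping cos, and all function names are hypothetical illustration choices.

```python
import math

def predictor(T, x, beta):
    # Shared first step: y_n = (1 - beta) * x_n + beta * T(x_n)
    return (1 - beta) * x + beta * T(x)

def ishikawa_step(T, x, alpha, beta):
    # x_{n+1} = (1 - alpha) * x_n + alpha * T(y_n)
    y = predictor(T, x, beta)
    return (1 - alpha) * x + alpha * T(y)

def agarwal_step(T, x, alpha, beta):
    # x_{n+1} = (1 - alpha) * T(x_n) + alpha * T(y_n)
    y = predictor(T, x, beta)
    return (1 - alpha) * T(x) + alpha * T(y)

def thianwan_step(T, x, alpha, beta):
    # x_{n+1} = (1 - alpha) * y_n + alpha * T(y_n)
    y = predictor(T, x, beta)
    return (1 - alpha) * y + alpha * T(y)

def run_scheme(step, T, x0, alpha=0.5, beta=0.5, tol=1e-12, max_iter=500):
    """Apply a two-step scheme with constant weights until iterates stabilise."""
    x = x0
    for k in range(1, max_iter + 1):
        x_next = step(T, x, alpha, beta)
        if abs(x_next - x) < tol:
            return x_next, k
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")

if __name__ == "__main__":
    # Illustrative problem (an assumption): the fixed point of cos near 0.739.
    for step in (ishikawa_step, agarwal_step, thianwan_step):
        root, steps = run_scheme(step, math.cos, 1.0)
        print(f"{step.__name__:15s} x = {root:.12f} after {steps} iterations")
```

All three schemes share the same predictor and differ only in which of x_n, y_n, T(x_n), and T(y_n) the corrector combines.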

Modified Schemes

4. Numerical Examples

5. Role of the Parameter ‘m’

- 1.

- Since implies that . Therefore, the parameter ‘m’ ensures that the fixed point divides the interval between and internally in the ratio or ; otherwise, there will be an external division and hence, .

- 2.

- Since . As is a sufficient condition for the convergence of the modified fixed point method, we have This further implies that This is the interval of convergence of our proposed scheme (7). As , (26) represents a wider domain of convergence than that of the classical fixed point method . In particular, for (arithmetic mean), (26) gives the following interval of convergence: Therefore, the arithmetic mean formula has a larger interval of convergence than the simple fixed point method.

- 1.

- 2.
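The effect of the parameter on the convergence interval can be illustrated numerically. The sketch below assumes, since Formula (7) is not reproduced in the text above, that the family is the weighted average x_{n+1} = (x_n + m * phi(x_n)) / (1 + m) with m > 0; under that assumed form the iteration map has derivative (1 + m * phi'(x)) / (1 + m), and requiring its absolute value to be below 1 gives -(2 + m)/m < phi'(x) < 1. For m = 1 this is the arithmetic mean of x_n and phi(x_n), with interval -3 < phi'(x) < 1, compared with -1 < phi'(x) < 1 for the classical method. The names `weighted_step`, `phi_example`, and `in_convergence_interval` are hypothetical.

```python
def weighted_step(phi, x, m):
    # Assumed form of the family: weighted average of x and phi(x), m > 0.
    return (x + m * phi(x)) / (1 + m)

def run(step, x0, n):
    """Apply the one-argument map `step` n times starting from x0."""
    x = x0
    for _ in range(n):
        x = step(x)
    return x

def in_convergence_interval(dphi, m):
    # Sufficient condition derived for the assumed form above:
    # |(1 + m * dphi) / (1 + m)| < 1  <=>  -(2 + m) / m < dphi < 1
    return -(2 + m) / m < dphi < 1

def phi_example(x):
    # Illustrative function (an assumption): fixed point x = 1, derivative -2 everywhere.
    return 3 - 2 * x

if __name__ == "__main__":
    # Classical iteration x <- phi(x): |phi'| = 2 > 1, so the error doubles each step.
    print("classical, 20 steps:", run(phi_example, 0.0, 20))
    # Arithmetic mean (m = 1): the map becomes (3 - x) / 2, so the error halves each step.
    print("m = 1, 60 steps:   ", run(lambda x: weighted_step(phi_example, x, 1), 0.0, 60))
    print("phi' = -2 admissible for m = 1: ", in_convergence_interval(-2, 1))
    print("phi' = -2 admissible for m = 10:", in_convergence_interval(-2, 10))
```

In this example the derivative -2 lies outside the classical interval but inside the m = 1 interval, which is the situation the wider domain of convergence is meant to cover.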

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Agarwal, R.P.; O’Regan, D.; Sahu, D.R. Iterative constructions of the fixed point of nearly asymptotically nonexpansive mapping. J. Nonlinear Convex Anal. 2012, 27, 145–156.

- Traub, J.F. Iterative Methods for the Solution of Equations; Prentice-Hall: Englewood Cliffs, NJ, USA, 1964.

- Behl, R.; Salimi, M.; Ferrara, M.; Sharifi, S.; Samaher, K.A. Some real life applications of a newly constructed derivative free iterative scheme. Symmetry 2019, 11, 239.

- Salimi, M.; Nik Long, N.M.A.; Sharifi, S.; Pansera, B.A. A multi-point iterative method for solving nonlinear equations with optimal order of convergence. Jpn. J. Ind. Appl. Math. 2018, 35, 497–509.

- Sharifi, S.; Salimi, M.; Siegmund, S.; Lotfi, T. A new class of optimal four-point methods with convergence order 16 for solving nonlinear equations. Math. Comput. Simul. 2016, 119, 69–90.

- Salimi, M.; Lotfi, T.; Sharifi, S.; Siegmund, S. Optimal Newton-Secant like methods without memory for solving nonlinear equations with its dynamics. Int. J. Comput. Math. 2017, 94, 1759–1777.

- Matthies, G.; Salimi, M.; Sharifi, S.; Varona, J.L. An optimal eighth-order iterative method with its dynamics. Jpn. J. Ind. Appl. Math. 2016, 33, 751–766.

- Sharifi, S.; Ferrara, M.; Salimi, M.; Siegmund, S. New modification of Maheshwari method with optimal eighth order of convergence for solving nonlinear equations. Open Math. (Former. Cent. Eur. J. Math.) 2016, 14, 443–451.

- Lotfi, T.; Sharifi, S.; Salimi, M.; Siegmund, S. A new class of three point methods with optimal convergence order eight and its dynamics. Numer. Algor. 2016, 68, 261–288.

- Jamaludin, N.A.A.; Nik Long, N.M.A.; Salimi, M.; Sharifi, S. Review of some iterative methods for solving nonlinear equations with multiple zeros. Afr. Mat. 2019, 30, 355–369.

- Nik Long, N.M.A.; Salimi, M.; Sharifi, S.; Ferrara, M. Developing a new family of Newton–Secant method with memory based on a weight function. SeMA J. 2017, 74, 503–512.

- Ferrara, M.; Sharifi, S.; Salimi, M. Computing multiple zeros by using a parameter in Newton-Secant method. SeMA J. 2017, 74, 361–369.

- Magreñán, A.A.; Argyros, I.K. A Contemporary Study of Iterative Methods: Convergence, Dynamics and Applications; Academic Press: Cambridge, MA, USA; Elsevier: Amsterdam, The Netherlands, 2019.

- Argyros, I.K.; Magreñán, A.A. Iterative Methods and Their Dynamics with Applications; CRC Press: New York, NY, USA; Taylor & Francis: Abingdon, UK, 2021.

- Burden, R.L.; Faires, J.D. Numerical Analysis; PWS Publishing Company: Boston, MA, USA, 2001.

- Ostrowski, A.M. Solution of Equations and Systems of Equations; Pure and Applied Mathematics; Academic Press: New York, NY, USA; London, UK, 1960; Volume IX.

- Petkovic, M.S.; Neta, B.; Petkovic, L.; Džunić, J. Multipoint Methods for Solving Nonlinear Equations; Elsevier: Amsterdam, The Netherlands, 2013.

- Schaefer, H. Über die Methode sukzessiver Approximationen. Jahresber. Deutsch. Math.-Verein. 1957, 59, 131–140.

- Mann, W.R. Mean Value Methods in Iteration. Proc. Am. Math. Soc. 1953, 4, 506–510.

- Krasnosel’skii, M.A. Two remarks on the method of successive approximations (Russian). Uspekhi Mat. Nauk 1955, 10, 123–127.

- Berinde, V. Iterative Approximation of Fixed Points; Springer: Berlin/Heidelberg, Germany; New York, NY, USA, 2002.

- Ishikawa, S. Fixed Point by a New Iteration Method. Proc. Am. Math. Soc. 1974, 44, 147–150.

- Thianwan, S. Common fixed points of new iterations for two asymptotically nonexpansive nonself mappings in Banach spaces. J. Comput. Appl. Math. 2009, 224, 688–695.

- Noor, M.A. New approximation schemes for general variational inequalities. J. Math. Anal. Appl. 2000, 251, 217–229.

- Phuengrattana, W.; Suantai, S. On the rate of convergence of Mann, Ishikawa, Noor and SP iterations for continuous functions on an arbitrary interval. J. Comput. Appl. Math. 2011, 235, 3006–3014.

- Cordero, A.; Torregrosa, J.R. Variants of Newton’s method using fifth-order quadrature formulas. Appl. Math. Comput. 2007, 190, 686–698.

| Predictor | Ishikawa’s Corrector | Agarwal Corrector | Thianwan Corrector |
|---|---|---|---|
| called by | | | |
| known by | | | |
| denoted by | | | |
| called by | | | |
| known by | | | |

| Examples | E.C. | FIM | KM | GM | HM | OM1 | OM2 | OM3 | OM4 |
|---|---|---|---|---|---|---|---|---|---|
| R.E. | | | | | | | | | |
| (1) | | | | | | | | | |
| (2) | | | | | | | | | |
| (3) | | | | | | | | | |
| (4) | | | | | | | | | |
| (5) | | | | | | | | | |

| Examples | E.C. | IGM | IHM | IOM1 | IOM2 | IOM3 | |
|---|---|---|---|---|---|---|---|
| R.E. | | | | | | | |
| (1) | | | | | | | |
| (2) | | | | | | | |
| (3) | | | | | | | |
| (4) | | | | | | | |
| (5) | | | | | | | |

| Examples | E.C. | AS | AGM | AHM | AOM1 | AOM2 | AOM3 |
|---|---|---|---|---|---|---|---|
| R.E. | | | | | | | |
| (1) | | | | | | | |
| (2) | | | | | | | |
| (3) | | | | | | | |
| (4) | | | | | | | |
| (5) | | | | | | | |

| Examples | E.C. | MS | TS | TGM | THM | TOM1 | TOM2 | TOM3 |
|---|---|---|---|---|---|---|---|---|
| R.E. | | | | | | | | |
| (1) | | | | | | | | |
| (2) | | | | | | | | |
| (3) | | | | | | | | |
| (4) | | | | | | | | |
| (5) | | | | | | | | |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kanwar, V.; Sharma, P.; Argyros, I.K.; Behl, R.; Argyros, C.; Ahmadian, A.; Salimi, M. Geometrically Constructed Family of the Simple Fixed Point Iteration Method. Mathematics 2021, 9, 694. https://doi.org/10.3390/math9060694
