1. Introduction

Signals with time-varying spectral content, known as non-stationary signals, are analyzed using time-frequency signal (TF) signal analysis [

1,

2,

3,

4,

5,

6,

7,

8,

9,

10,

11,

12,

13,

14,

15,

16,

17]. Some commonly used TF representations include short-time Fourier transform (STFT) [

1,

3], pseudo-Wigner distribution (WD) [

1,

9,

12], and S-method (SM) [

3]. Time-scale, multi-resolution analysis using the wavelet transform is an additional approach to characterize non-stationary signal behavior [

4]. Various representations are primarily applied in the instantaneous frequency (IF) estimation and related applications [

8,

9,

10,

11,

12,

13,

14,

15], since they concentrate the energy of a signal component at and around the respective instantaneous frequency. Concentration measures provide a quantitative description of the signal concentration in the given representation domain [

18], and can be used to assess the area of the time-frequency plane covered by a signal component.

In order to characterize multicomponent signals, it is quite common to perform signal decomposition, which assumes that each individual component is extracted for separate analysis, such as for the IF estimation. Decomposition techniques for multicomponent signals are quite efficient if components do not overlap in the time-frequency plane [

19,

20,

21,

22,

23,

24,

25,

26]. The method originally presented in [

26] can be used to completely extract each component by using an intrinsic relation between the PWD and the SM. In the analysis of multicomponent signals, it is, however, common that various components partially overlap in the time-frequency plane, making the decomposition process particularly challenging [

19,

20,

21,

22,

23,

24,

25,

26]. In this rather unfavorable scenario, overlapped components partially share the same domains of supports, and existing decomposition techniques provide only partial results in the univariate case, limited to very narrow signal classes. For example, linear frequency modulated signals are decomposed using the chirplet transform, Radon transform, or similar techniques [

20,

25], whereas sinusoidally modulated signals are separated using the inverse Radon transform [

27]. However, these techniques cannot perform the decomposition when components have a general, non-stationary form.

In the multivariate (multichannel) framework, it is assumed that the signals are acquired using multiple sensors, [

28,

29,

30,

31,

32,

33,

34,

35,

36,

37,

38,

39,

40,

41,

42,

43,

44]. The sensors modify component amplitudes and phases. However, the interdependence of values from various channels can be utilized in the signal decomposition. This concept has also been exploited in the empirical mode decomposition (EMD) [

39,

40,

41,

42,

43]. It was previously shown that WD-based decomposition is possible if signals are available in the multivariate form [

28,

29,

30]. Moreover, the decomposition can be performed by directly engaging the eigenanalysis of the auto-correlation matrix, calculated for signals in the multivariate form [

31,

32,

33,

34]. It should also be noted that the problem of multicomponent signal decomposition has some similarities with the blind source separation [

45,

46,

47,

48]. However, the basic difference is in the aim to extract each signal component in the decomposition framework, whereas in the blind source separation, the aim is to separate signal sources (although one source may generate several components). The mixing scheme from the blind source separation framework is used in a recently proposed mode decomposition approach [

49]. Another line of the decomposition-related research includes mode decomposition techniques, which could be used for separation of modal responses and identification of progressive changes in modal parameters [

50].

Overlapped components pose a challenge in various applications, such as in biomedical signal processing [

44,

51,

52], radar signal processing [

53], and processing of lamb waves [

54]. Popular approaches, such as the EMD and multivariate EMD (MEMD), [

39,

40,

41,

42,

43] cannot respond to the challenges posed by components overlapped in the time-frequency plane and do not provide acceptable decomposition results in this particular case [

28]. Additionally, the applicability of these methods is highly influenced by amplitude variations of the signal components. In this paper, we present a framework for the decomposition of acoustic dispersive environment signals into individual modes based on the multivariate decomposition of multicomponent non-stationary signals. Even when simple signal forms are transmitted, acoustic signals in dispersive channels appear in the multicomponent form, with either very close or partially overlapped components. Being reflected from the underwater surfaces and objects, each individual component carries information about the underwater environment. That information is inaccessible while the signal is in its multicomponent form. This makes analyzing acoustic signals (mainly their localization and characterization) a challenging problem for research [

55,

56,

57,

58,

59,

60]. The presented decomposition approach enables complete separation of components and their individual characterization (e.g., IF estimation, based on which knowledge regarding the underwater environment can be acquired).

We aim at solving this notoriously difficult practical problem by exploiting the interdependencies of multiply acquired signals: such signals can be considered as multivariate and are subject to slight phase changes across various channels, occurring due to different sensing positions and due to various physical phenomena, such as water ripples, uneven seabed, and changes in the seabed substrate. As each eigenvector of the autocorrelation matrix of the input signal represents a linear combination of the signal components [

31,

33], slight phase changes across the various channels are actually favorable for forming an undetermined set of linearly independent equations relating the eigenvectors and the components. Moreover, we have previously shown that each component is a linear combination of several eigenvectors corresponding to the largest eigenvalues, with unknown weights [

31] (the number of these eigenvalues is equal to the number of signal components). Among infinitely many possible combinations of eigenvectors, the aim is to find the weights producing the most concentrated combination, as each individual signal component (mode) is more concentrated than any linear combination of components, as discussed in detail in [

31]. Therefore, we engage concentration measures [

18] to set the optimization criterion and perform the minimization in the space of the weights of linear combinations of eigenvectors.

We revisit our previous research from [

28,

31,

33], and the main contributions are two-fold. The decomposition principles of the auto-correlation matrix [

31,

33] are reconsidered. Instead of exploiting direct search [

31] or a genetic algorithm [

33], we show that the minimization of concentration measure in the space of complex-valued coefficients acting as weights of eigenvectors, which are linearly combined to form the components, can be performed using a steepest-descent-based methodology, originally used in the decomposition from [

28]. The second contribution is the consideration of a practical application of the decomposition methodology.

The paper is organized as follows. After the Introduction, we present the basic theory behind the considered acoustic dispersive environment in

Section 2.

Section 3 presents the principles of multivariate signal decomposition of dispersive acoustic signals. The decomposition algorithm is summarized in

Section 4. The theory is verified on numerical examples and additionally discussed in

Section 5. Whereas the paper ends with concluding remarks.

2. Dispersive Channels and Shallow Water Theory

Our primary goal is the decomposition of signals transmitted through dispersive channels. Decomposition assumes the separation of signal components while preserving the integrity of each component. Signals transmitted through dispersive channels are multicomponent and non-stationary, even in cases when emitted signals have a simple form. This makes the challenging problem of decomposition, localization, and characterization of such signals a fairly studied topic [

55,

56,

57,

58,

59,

60,

61,

62,

63,

64,

65,

66,

67]. The decomposition can be performed using the time-frequency phase-continuity of the signals [

55], or using the mode characteristics of the signal [

56]. After being transmitted through a dispersive environment, measured signals consist of several components called modes. The non-stationarity of these modes is a consequence of frequency dependent properties of the signal propagation media.

The dispersive acoustic environment is commonly studied within the context of shallow waters, defined by the depth of the sea/ocean, which are less than

m [

55,

57,

58,

59,

60,

61,

62,

63,

64,

65,

66,

67]. The speed of signals traveling through water is affected by many factors, such as the salinity, the temperature, or the pressure of the water, but it is usually approximated around 1480–1500 m/s. Note that this speed is larger than the speed of signals traveling through the air, which is estimated at approximately 340–360 m/s. Such setups typically have extremely complex analyses. Moreover, bottom properties and water volume add up to this complexity, as well as noise caused by activities on the water surface and on the coastlines (commonly related to cavitation). Dispersivity of shallow waters occurs due to many reasons, among which are the roughness of the bottom, strength of the waves, and cavity level. Dispersive channels have varying frequency characteristics (phase and spectral content) during the transmission of the signal.

2.1. Normal Mode Solution

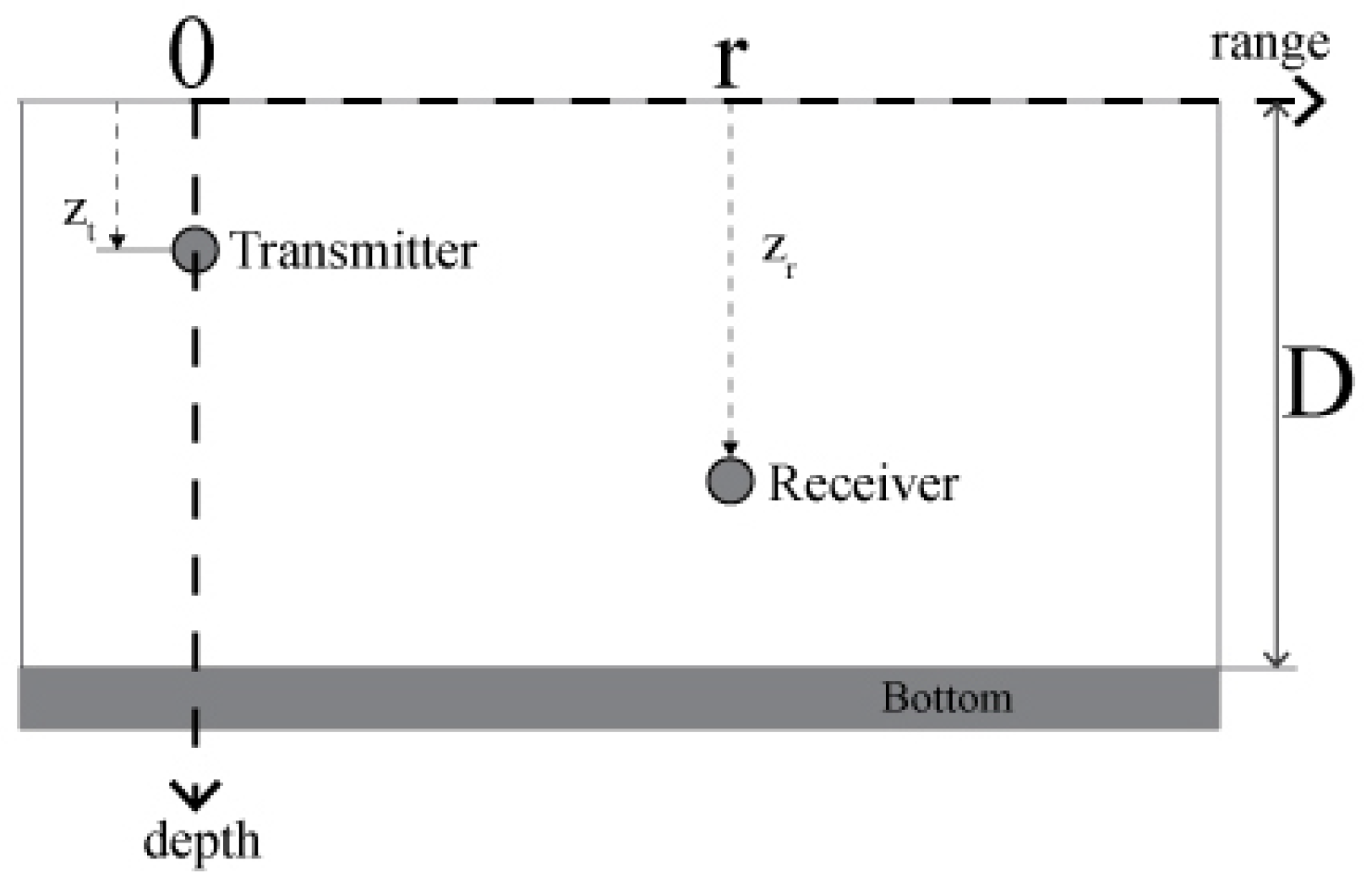

The propagation of sound in a shallow water environment is mathematically represented by the wave equations. Among several methods of deriving the solution of the wave equation, the most commonly used is the normal mode solution, based on solving depth-dependent equations using the method of variable separation. Further analysis will be developed based on the isovelocity waveguide model presented in

Figure 1, which characterizes a rigid boundary of the seabed. This further yields to an ideally spread velocity

c. Furthermore, channel models assume that the structure of a channel is a waveguide, where multiple normal-modes are received as delayed and scaled versions of the transmitted signal [

56,

58,

59,

65]. Our aim is to decompose the received signal by extracting each mode separately. Such extracted modes can be used in further processing, such as IF estimation, characterization, and classification.

More general models assume a more complicated environment, where the boundary of the bottom depends on the nature of the ocean, such as the roughness, depending on the weather conditions and different environments in the ocean itself. These models take into account the scattering of the transmitted signal as well. Our future work will be oriented towards these models as well.

2.2. Problem Formulation—Signal Processing Approach

The practical setup, shown in

Figure 1 is further considered. In this setup, it is assumed that the transmitter is located in the water at the depth of

, whereas the receiver is located at the depth of

meters. It is assumed that the wave is transmitted through an isovelocity channel as in [

55,

56,

57,

61,

62,

63,

67]. The distance between the transmitter and the receiver is

r.

Taking into account the spectrum of the received signal, in the normal-mode case, the transfer function reads

with

and

being the modal functions of the

p-th mode corresponding to the transmitter and the receiver [

55,

56,

65], with the attenuation rate is

. Angular frequency is denoted by

. The modes are dependent on wavenumbers

[

55]

The multicomponent structure of the transfer function is dependent on the number of modes. The speed of sound propagation underwater is m/s.

The response to a monochromatic signal

at the

p-th mode can be written as

The phase velocity of this signal is

This is the horizontal velocity of the corresponding phase for the

p-th mode. The energy propagation of the signal component is represented by the group velocity

The received signal can be represented in the Fourier transform domain as a product of the Fourier transform of the transmitted signal,

and the transfer function

of the channel in the normal-mode form; that is

In time domain, the received signal,

, is the convolution of the transmitted signal,

and the impulse response,

, from (

1), i.e.,

In the following sections, we present an efficient methodology for the decomposition of mode functions, which will make the problem of detecting and estimating the received signal parameters straightforward.

3. Multivariate Decomposition

3.1. Multivariate (Multichannel) Signals

Multivariate or multichannel signals are acquired using multiple sensors. It is further assumed that

C sensors at the receiving position are used for the acquisition of signal

. Here, subscript

R is used to denote the fact that the acquired signal is real-valued. All

C sensors placed at the depth

are part of the receiver. In the range direction, sensor distances from the transmitter are

,

. Deviations

,

, are small as compared to the distance,

r, between the transmitter and receiver locations in

Figure 1 in range direction.

Since the measured signal,

, is real-valued, its analytic extension

is assumed in the further multivariate decomposition setup, where

is the Hilbert transform of this signal. This analytic form assumes only non-negative frequencies. Each sensor modifies the amplitude and the phase of the acquired signal. Therefore, the channel signals take the form

, for each sensor

. When a monocomponent signal

, is measured at sensor

c, this yields

or

in the case of a real-valued signal. The corresponding analytic signal,

is a valid representation of the real amplitude-phase signal

if the spectrum of

is nonzero only within the frequency range

and the spectrum of

occupies a non-overlapping (much) higher frequency range [

5]. If variations of the amplitude,

, are much slower than the phase

variations, then this signal is monocomponent [

31]. A unified representation of a multichannel (multivariate) signal, acquired using

C sensors, assumes the following vector form

3.2. Multivariate Multicomponent Signals

When the measured signal consists of a linear combination of

P linearly independent components

,

, then it is commonly referred to as a multicomponent signal

Component amplitudes, , are characterized by slow-varying dynamics as compared to the variations of the component phases, . Linear independence of the components assumes that neither component can be represented as a linear combination of other components for any considered time instant n.

Incorporation of multicomponent signal definition (

11) into the multichannel form (

10), yields

or, more briefly,

that is

where the vector of signal components,

is, for instant

n, given by

Matrix

of size

, which relates the signal in the

c-th channel,

with signal components,

, in form of a linear combination

has the following form

with elements being complex constants

,

,

. These constants linearly relate the channel signals with signal components. Clearly, the maximum number of independent channels

,

,

in

is

since

.

The relation between the

C measured channel signals,

, and

P components,

, can be, taking into consideration all time instants, formed by introducing

matrix

with elements being the sensed signal values, and

comprising the samples of signal components

. In that case, the relation is

or

Now we can introduce the autocorrelation matrix

of the sensed signal, whose eigenvectors will be used in the multivariate decomposition framework:

where

denotes the Hermitian transpose. Individually, elements of this matrix are products of

and

at given instants

and

:

where

is the column vector of sensed values at a given instant

. As it will be shown next, the eigenvectors of the autocorrelation matrix,

, corresponding to the largest eigenvalues, consist of linear combinations of signal components. This fact will be used to develop the algorithm for the extraction of those components.

3.3. Eigendecomposition of the Autocorrelation Matrix

It is well-known that any square matrix

, of dimensions

, can be subject of eigenvalue decomposition

with

being the eigenvalues and

being the corresponding eigenvectors of matrix

. Matrix

contains eigenvalues

,

on the main diagonal and zeros at other positions. Matrix

contains the eigenvectors

as its columns. Since

is symmetric, eigenvectors are orthogonal.

From definition (

20) and based on relation

, autocorrelation matrix

can be rewritten as

where

is used to denote elements of matrix

and

. Elements of matrix

are

Based on the decomposition of matrix

on its eigenvalues and eigenvectors, we further have

with

. It will be further assumed that the number of sensors,

C is such that

. In that case, there are

eigenvectors in (

25). Two general cases can be further discussed:

Non-overlapped components. Note that the case when no components

and

overlap in the time-frequency plane implies that these components are orthogonal. In that case, the right side of (

25) becomes:

where

. The considered case of non-overlapped (orthogonal) components further implies that

Partially overlapped components. Based on (

25), since the partially overlapped components are non-orthogonal; that is, such components are linearly dependent, eigenvectors can be expressed as linear combinations of such components

with

, i.e., for assumed

,

.

3.4. Components as the Most Concentrated Linear Combinations of Eigenvectors

Based on (

28) and for assumed

, each signal component,

can be expressed as a linear combination of eigenvectors

of matrix

,

; that is

where

,

,

are unknown coefficients. Obviously, there are

linear equations for

P components, with

unknown weights. Among infinitely many solutions of this undetermined system of equations, we aim at finding those combinations that produce signal components. Moreover, since components are partially overlapped, in the case when one component is detected, its contribution should be removed from all equations (linear combinations of eigenvectors) in order to avoid that it is detected again.

Obviously, for the detection of linear combinations of eigenvectors, which represent signal components, a proper detection criterion shall be established. Since non-stationary signals can be suitably represented using time-frequency representations, and signal components tend to be concentrated along their instantaneous frequencies, our criterion will be based on time-frequency representations.

Time-frequency signal analysis provides a mathematical framework for a joint representation of signals in time and frequency domains. If

denotes a real-valued, symmetric window function of length

, then signal

can be represented using the

STFT

which renders the frequency content of the portion of signal around the each considered instant

n, localized by the window function

.

To determine the level of the signal concentration in the time-frequency domain, we can exploit concentration measures. Among various approaches, inspired by the recent compressed sensing paradigm, measures based on the

norm of the

STFT have been used lately [

18]

where

represents the commonly used spectrogram, whereas

. For

, the

-norm is obtained.

We consider P components, . Each of these components has finite support in the time-frequency domain, , with areas of support , . Supports of partially overlapped components are also partially overlapped. Furthermore, we will make a realistic assumption that there are no components that overlap completely. Assume that .

Consider further the concentration measure

of

for

. If all components are present in this linear combination, then the concentration measure

, obtained for

in (

31), will be equal to the area of

.

If the coefficients

,

are varied, then the minimum value of the

-norm based concentration measure is achieved for coefficients

corresponding to the most concentrated signal component

, with the smallest area of support,

, since we have assumed, without the loss of generality, that

holds. Note that, due to the calculation and sensitivity issues related with the

-norm, within the compressive sensing area,

-norm is widely used as its alternative, since under reasonable and realistic conditions, it produces the same results [

31]. Therefore, it can be considered that the areas of the domains of support in this context can be measured using the

-norm.

The problem of extracting the first component, based on eigenvectors of the autocorrelation matrix of the input signal, can be formulated as follows

The resulting coefficients produce the first component (candidate)

Note that if

holds, then the component is exact; that is,

holds. In the case when the number of signal components is larger than two, the concentration measure in (

33) can have several local minima in the space of unknown coefficients

, corresponding not only to individual components but also to linear combinations of two, three or more components. Depending on the minimization procedure, it can happen that the algorithm finds this local minimum; that is, a set of coefficients producing a combination of components instead of an individual component. In that case, we have not extracted successfully a component since

in (

34), but as it will be discussed next, this issue does not affect the final result, as the decomposition procedure will continue with this local minimum eliminated.

3.5. Extraction of Detected Component and Further Decomposition

Upon detection of the first local minimum, being a signal component or a linear combination of several components,

, first eigenvector,

should be replaced by

in the linear combination

i.e.,

is further used as the first eigenvector. However, since (

28) holds, the contribution of this detected component (or linear combination of components) is still present in remaining eigenvectors

and shall be removed from these eigenvectors as well. To this aim, we utilize the signal deflation theory [

31], and remove the projections of this component from remaining eigenvectors using

This ensures that is not repeatedly detected afterward. If , then the first component is found and extracted, whereas its projection on other eigenvectors is removed.

The described procedure is then repeated iteratively, with linear combination

with first eigenvector

and eigenvectors

, modified according to (

36). Upon detecting the second component (or linear combination of a small number of components),

, the second eigenvector is replaced,

, whereas its projections from remaining eigenvectors is removed using

The process repeats until all components are detected and extracted. These principles are incorporated into the decomposition algorithm presented in the next section.

4. The Decomposition Algorithm and Concentration Measure Minimization

4.1. Decomposition Algorithm

The decomposition procedure can be summarized with the following steps:

For given multivariate signal

calculate the input autocorrelation matrix

where

Find eigenvectors and eigenvalues , of matrix .

It should be noted that the number of components,

P, can be estimated based on the eigenvalues of matrix

. Namely, as discussed in [

31],

P largest eigenvalues of matrix

R correspond to signal components. These eigenvalues are larger than the remaining

eigenvalues. This property holds even in the presence of a high-level noise: a threshold for separation of eigenvalues corresponding to signal components can be easily determined based on the input noise variance [

28].

Initialize variable and variable . Variable will store the number of updates of eigenvectors , when projection of detected component (candidate) is removed from eigenvectors , . Variable k represents the index of the detected components.

For , repeat the following steps:

- (a)

Solve minimization problem

where

is the STFT operator. Signal

is scaled with

in order to normalize energy of the combined signal to 1. Coefficients

are obtained as a result of the minimization.

- (b)

Increment component index

- (c)

If for any , holds, then

If , return to Step 3.

Finally, as a result, we obtain the number of components, P, and the set of extracted components, .

It should be noted that checking whether holds in Step 5 is crucial for removing possibly detected local minima of concentration measure not corresponding to individual components, but to a linear combination of several components. Namely, if this situation happens, upon applying signal deflation by removing projection of the linear combination of components from other eigenvectors, a linear dependence among eigenvectors will still remain, and it will not allow to fall to zero. This returns the algorithm to Step 3, and the procedure for the component detection repeats, but with the local minimum removed from the concentration measure since all the eigenvectors are already updated in the previous cycle. Note that the component index k is reset to zero in this case.

Moreover, it should be emphasized that, while the presented procedure produces

P eigenvectors, which is exactly equal to the given number of components, this number is not always

a priori known. In practical applications, it can be determined based on eigenvalues of matrix

. As it will be illustrated in numerical examples, the largest eigenvalues correspond to signal components [

28,

31]. The remaining eigenvalues correspond to the noise. Therefore, a simple threshold can be used to calculate the exact number of signal components. Namely, we simply count the number of eigenvalues larger than a predefined threshold

T, being a small positive constant. In the presence of the noise, threshold

T should be at least equal to the noise variance. The larger the noise, the larger are the eigenvalues corresponding to the noise (thus, larger should the threshold

T be). Of course, the procedure works without the exact information about the number of components: for

, eigenvectors

contain only the noise after the decomposition is finished.

4.2. Concentration Measure Minimization

The concentration measure minimization is performed in the steepest descent manner, as presented in Algorithm 1. The coefficient is fixed for , whereas the values of other coefficients are varied for . Note that real and imaginary parts are varied separately.

For the real part, and for each , , the -norm based concentration measure is calculated in both cases, for auxiliary signal formed when given coefficient is increased by , and for the other auxiliary signal formed when is subtracted from the given coefficient.

For illustration, observe linear combination

. When

is added to given

,

,

, signal

is formed. For this signal, with energy normalized using the

-norm; that is

concentration measure

is calculated, as the

-norm of the corresponding STFT coefficients

Similarly, for the coefficient

changed in the opposite direction; that is, for

, measure

is calculated for signal

| Algorithm 1 Minimization procedure |

Input:Vectors Index i where corresponding vector should be kept with unity coefficient Required precision

|

- 1:

- 2:

- 3:

- 4:

repeat - 5:

- 6:

- 7:

if then - 8:

- 9:

▹ Cancel the last coefficients update - 10:

- 11:

else - 12:

- 13:

end if - 14:

for do - 15:

if then - 16:

- 17:

- 18:

- 19:

- 20:

- 21:

else - 22:

- 23:

end if - 24:

end for - 25:

▹ Coefficients update - 26:

until is below required precision

|

| Output: |

Since each considered coefficient

is complex-valued in general, the same procedure is repeated for the imaginary parts of coefficients. Therefore, signals

and

are formed, serving as a basis to calculate the corresponding concentration measures

and

Now, based on the calculated concentration measures for variations of real and imaginary parts, concentration measure gradient

is calculated and used to determine the direction for the update of

where

used for scaling the gradient is calculated as concentration measure of

scaled by its energy, before updates of coefficient

; that is

For coefficients , , the gradient is equal to zero; that is, , meaning that these coefficients should not be updated.

Coefficient

is updated using the calculated gradient, in the steepest descent manner

The process is repeated until becomes smaller than a predefined precision .

5. Results

For the visual presentation of the results, the discrete Winger distribution (pseudo-Wigner distribution) will be used in our numerical examples. For a discrete signal

, this second-order time-frequency representation is calculated according to

where

is a window function of length

.

For examples 1, 2, and 3, the quality of the decomposition will be determined based on two criteria:

WD calculated for the pth original component (signal is given analytically), denoted by , is compared with , being the WD calculated for pth extracted component, for . Here, denotes the vector of the pth extracted component, whereas is the actual (original) pth signal component.

Estimation results for the discrete IFs obtained from the two previous WDs are compared by the means of mean squared error (MSE) for each pair of components. The IF estimate based on the WD of the original

pth component,

,

is calculated as [

3]

whereas the IF estimate based on the WD of the

pth component extracted by the proposed approach is calculated as

Since the extracted components do not have any particular order after the decomposition is finished, the corresponding pairs of original and extracted components are automatically determined using the following procedure:

Upon determining pairs of original and estimated components,

, respective IF estimation MSE is calculated for each pair

where

.

It should also be noted that in Examples 1–3, in order to avoid IF estimation errors at the ending edges of components (since they are characterized by time-varying amplitudes), the IF estimation is based on the WD auto-term segments larger than

of the maximum absolute value of the WD corresponding to the given component (auto-term), i.e.,

where

is a threshold used to determine whether a component is present at the considered instant

n. If it is smaller than

of the maximal value of the WD, it indicates that the component is not present.

Examples

Example 1. To evaluate the presented theory, we consider a general form of a multicomponent signal consisted of P non-stationary components and . Phases , , are random numbers with uniform distribution drawn from interval . The signal is available in the multivariate form and is consisted of C channels, since it is embedded in a complex-valued, zero-mean noise with a normal distribution of its real and imaginary part, . Noise variance is , whereas . Parameters and are FM parameters, while is used to define the effective width of the Gaussian amplitude modulation for each component. We generate the signal of the form (

58) with

components, whereas the noise variance is

. The respective number of channels is

. The corresponding autocorrelation matrix,

, is calculated, according to (

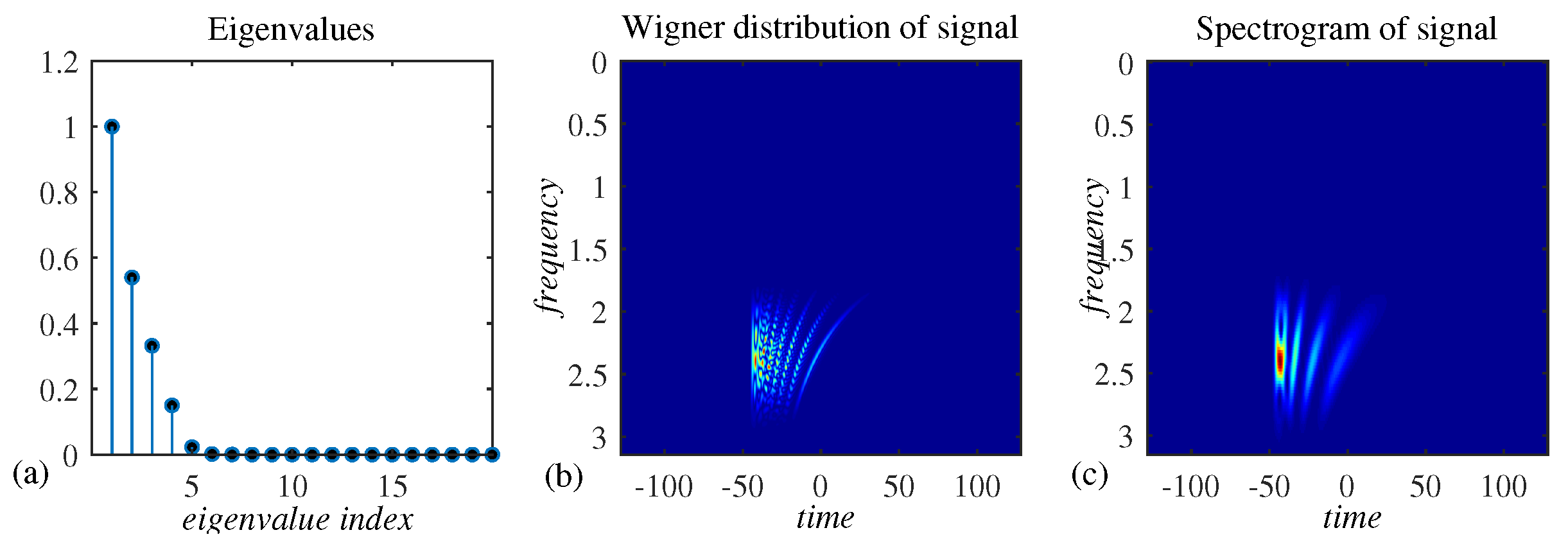

20), and the presented decomposition approach is used to extract the components. Eigenvalues of matrix

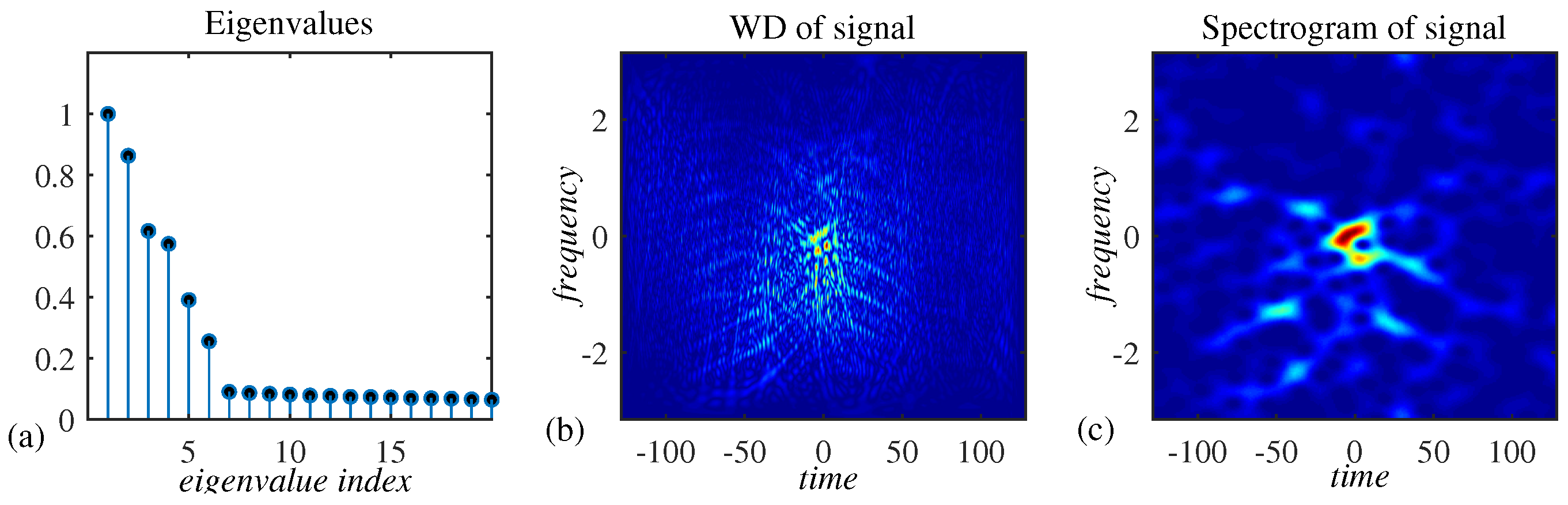

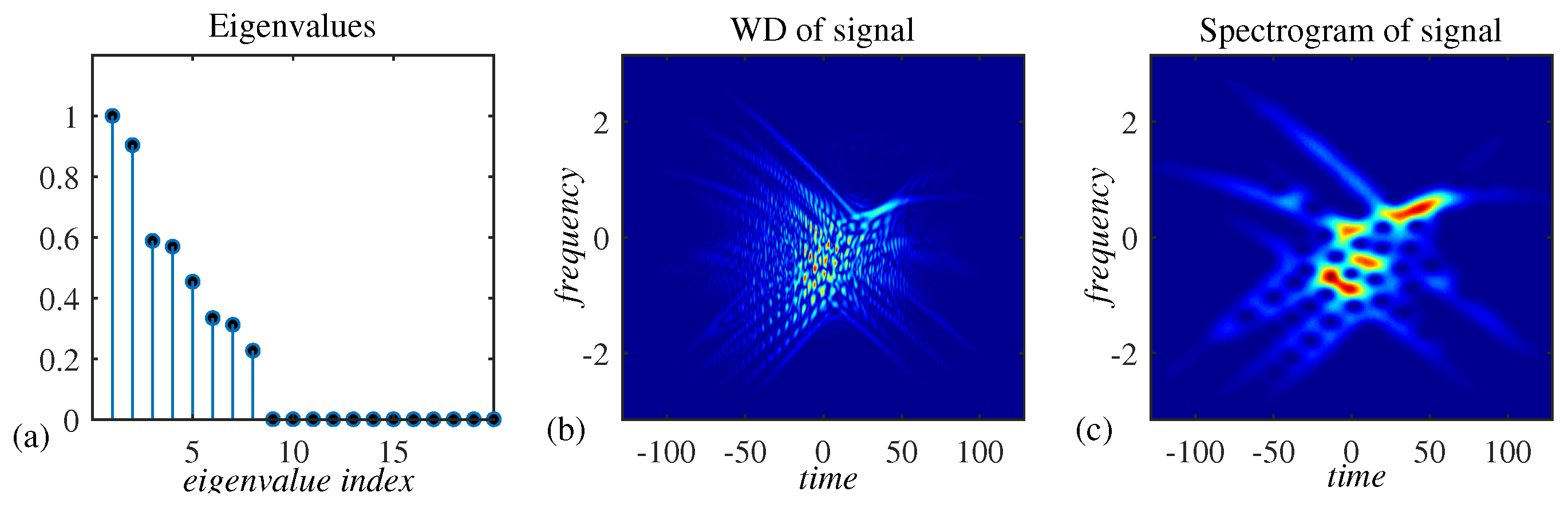

are given in

Figure 2a. Largest six eigenvalues correspond to signal components, and they are clearly separable from the remaining eigenvalues corresponding to the noise. WD and spectrogram of the given signal (from one of the channels) are given in

Figure 2b,c, indicating that the signal is not suitable for the classical TF analysis, since the components are highly overlapped.

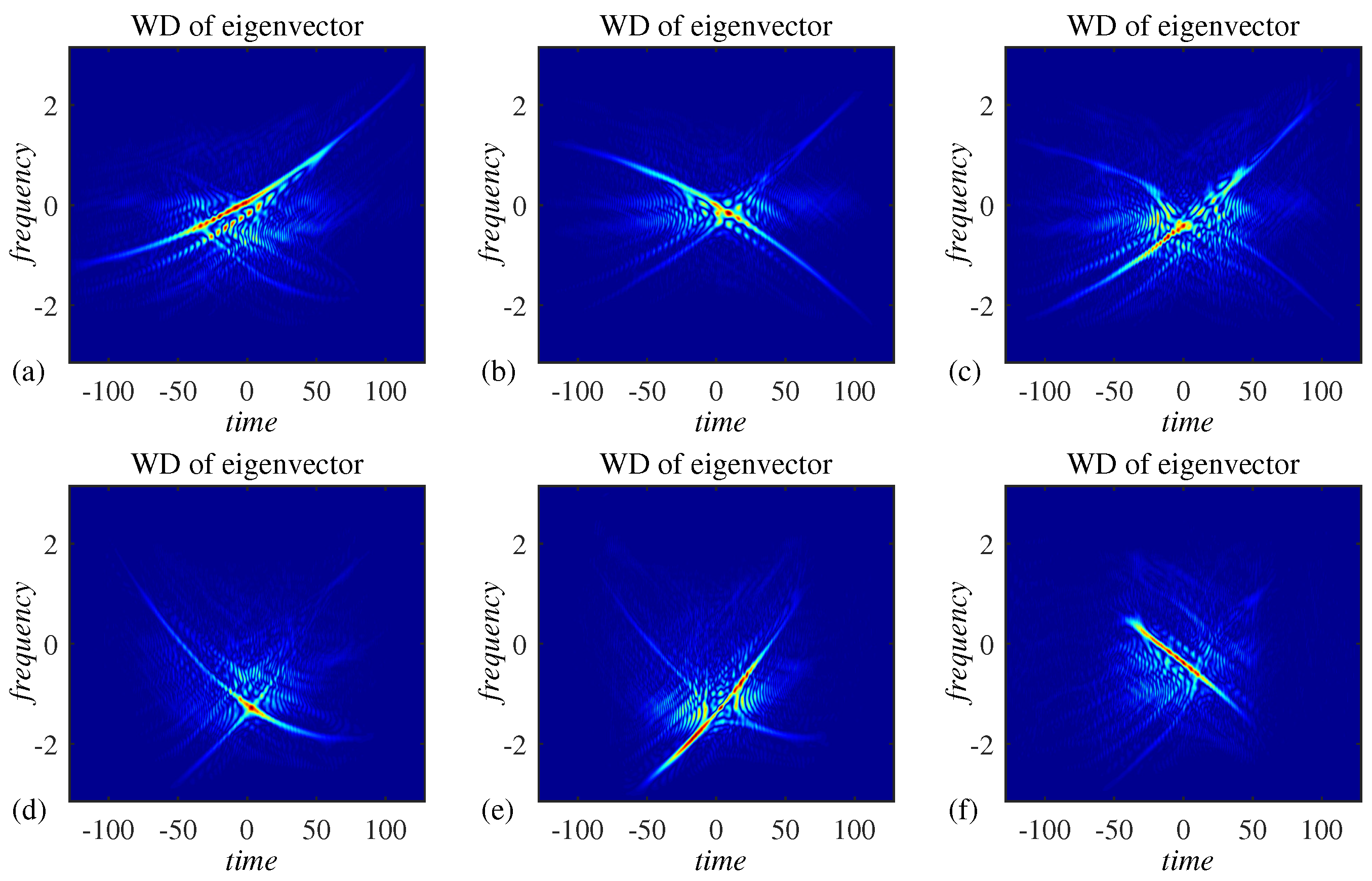

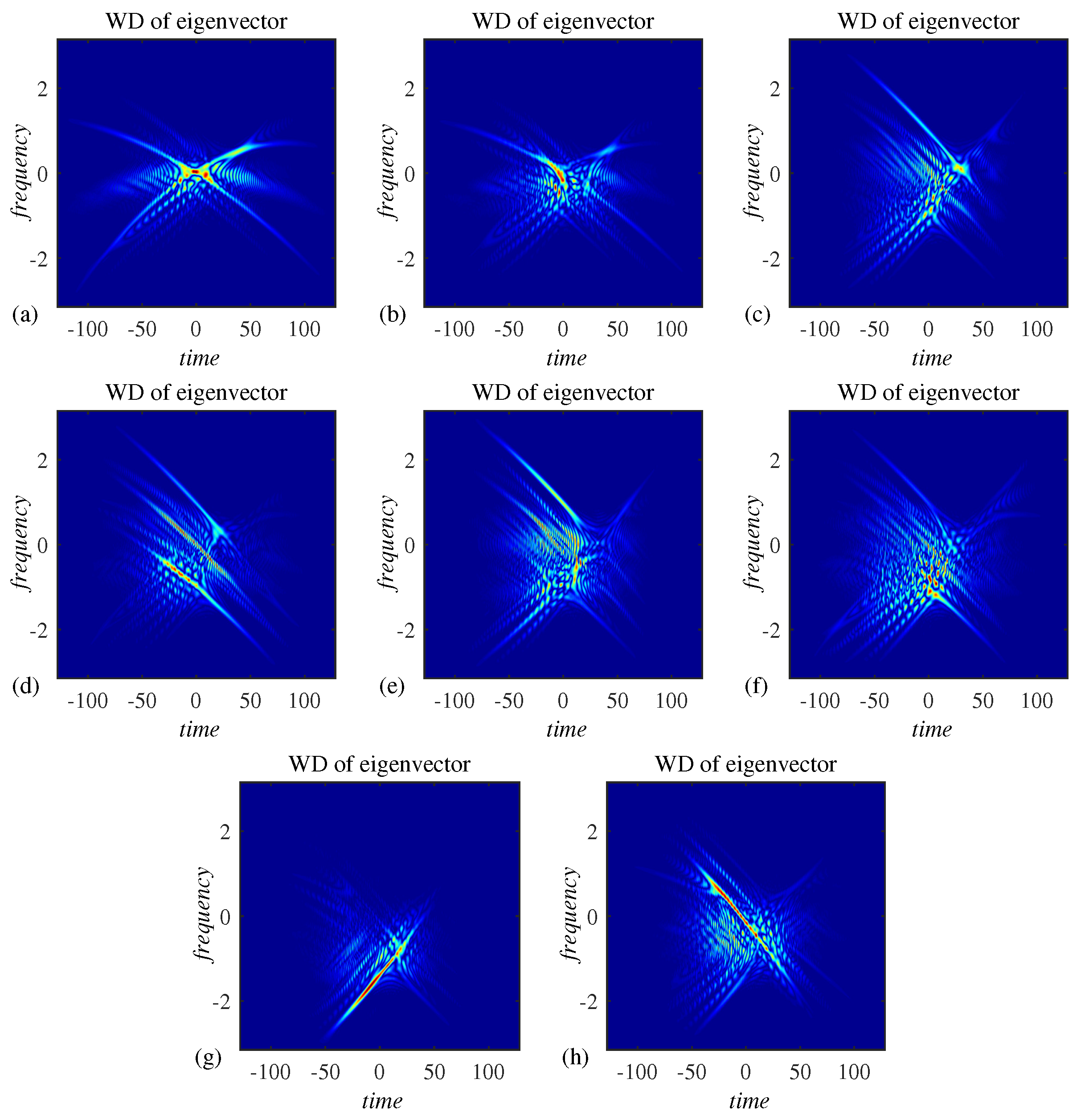

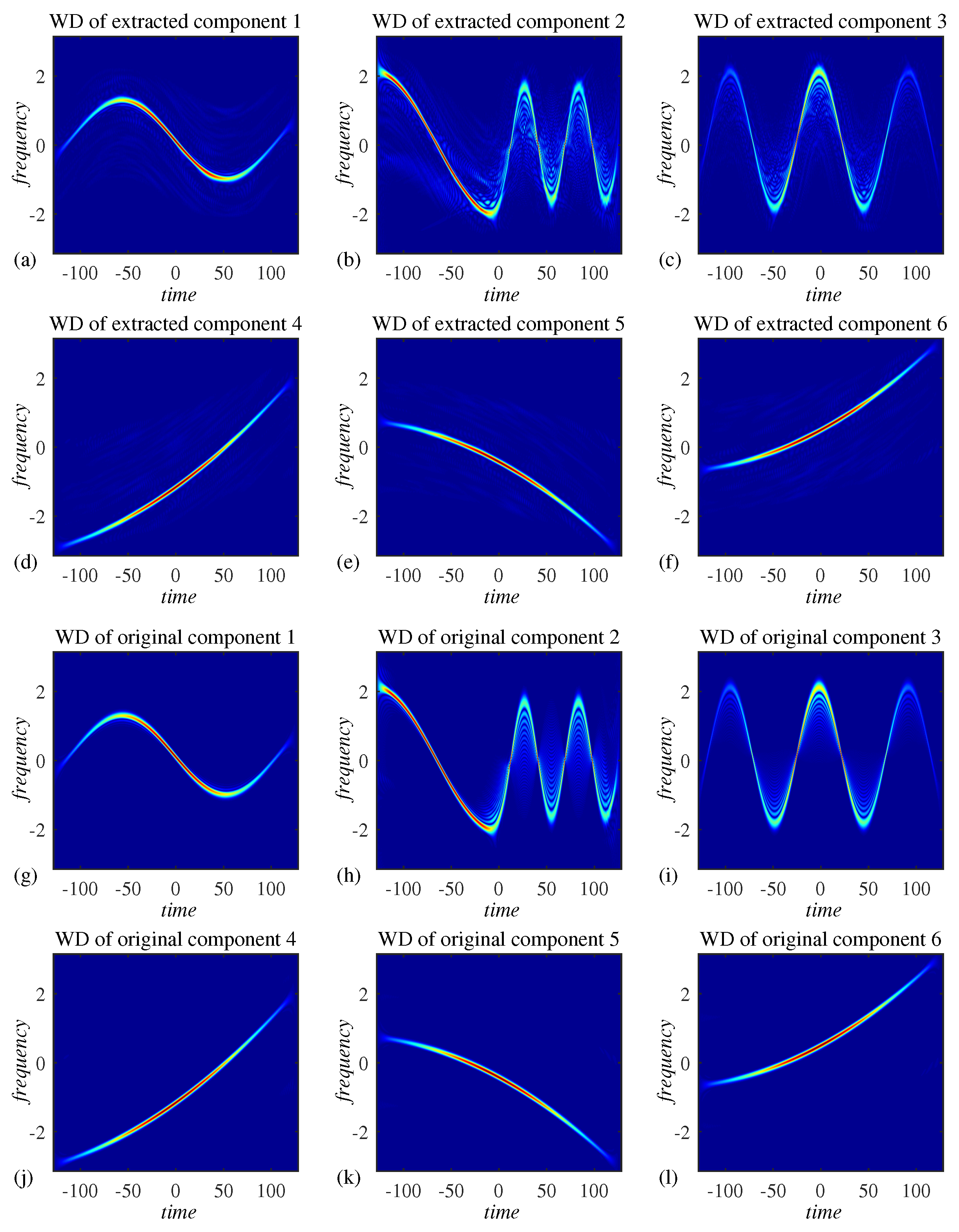

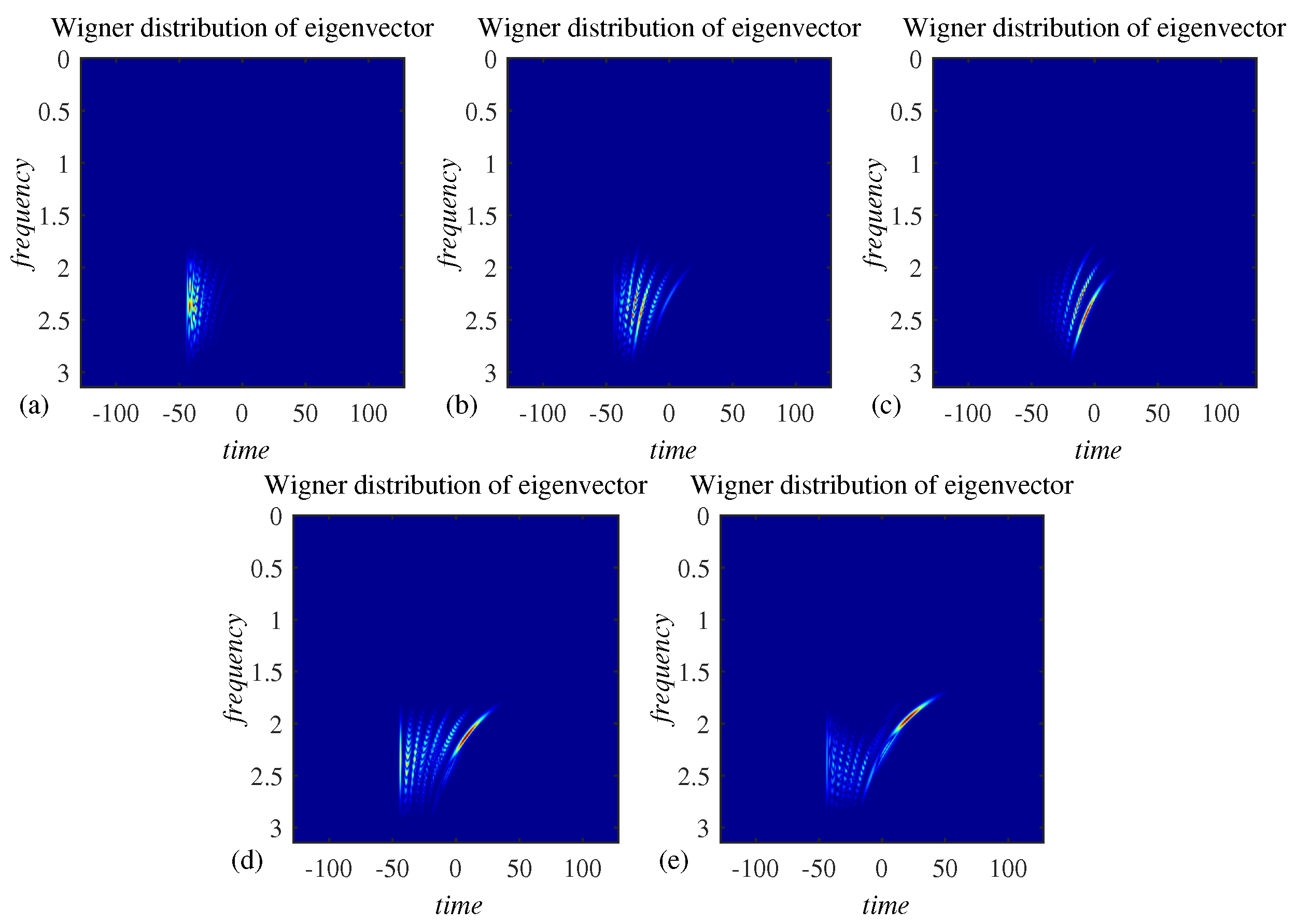

Each of eigenvectors of the matrix

is a linear combination of components, as shown in

Figure 3. The presented decomposition approach is applied to extract the components by linearly combining the eigenvectors from

Figure 3. The results are shown in

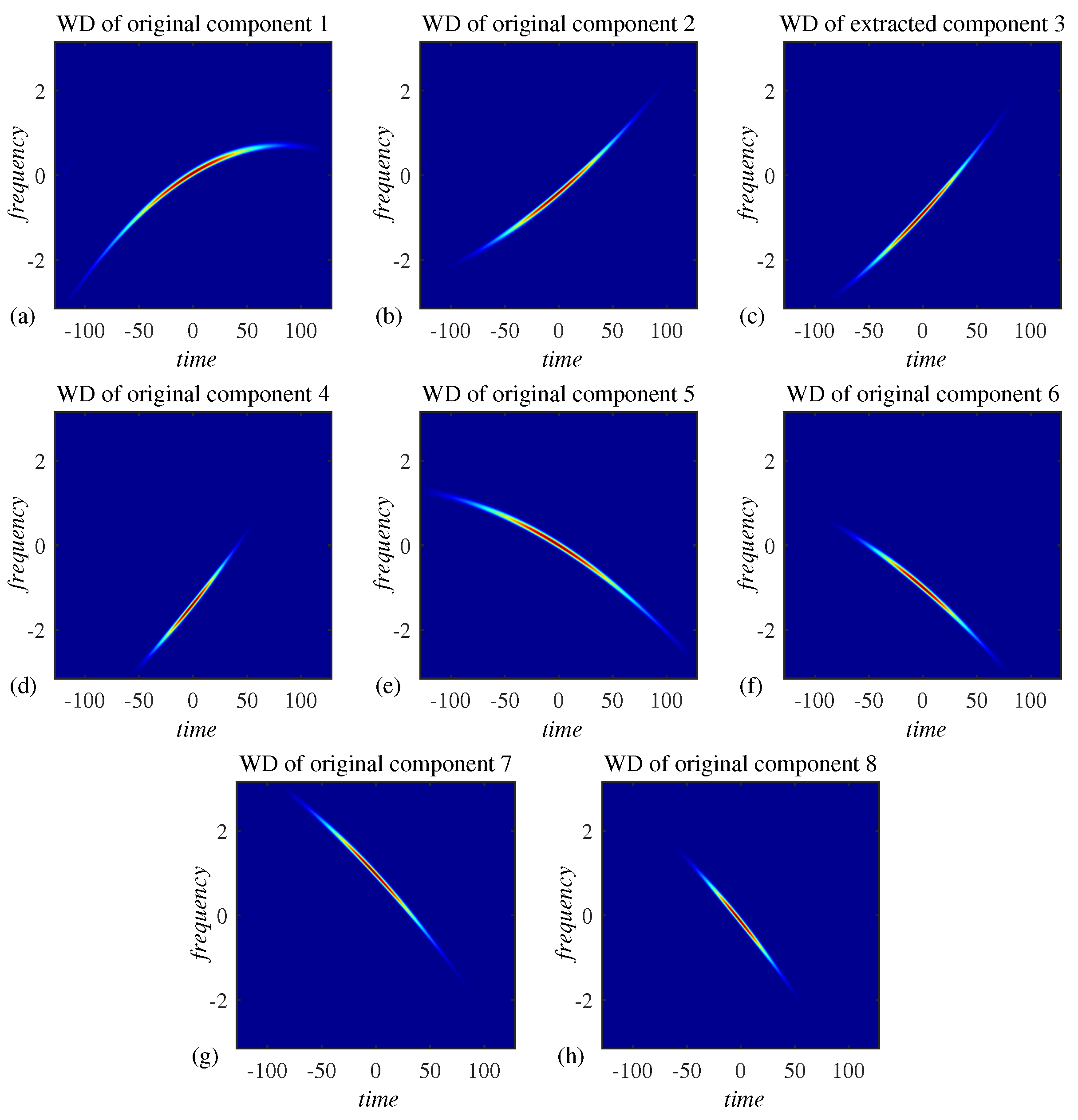

Figure 4a–f. Although a small residual noise is present in the extracted components, they highly match the original components, presented in

Figure 4g–l. The original components in

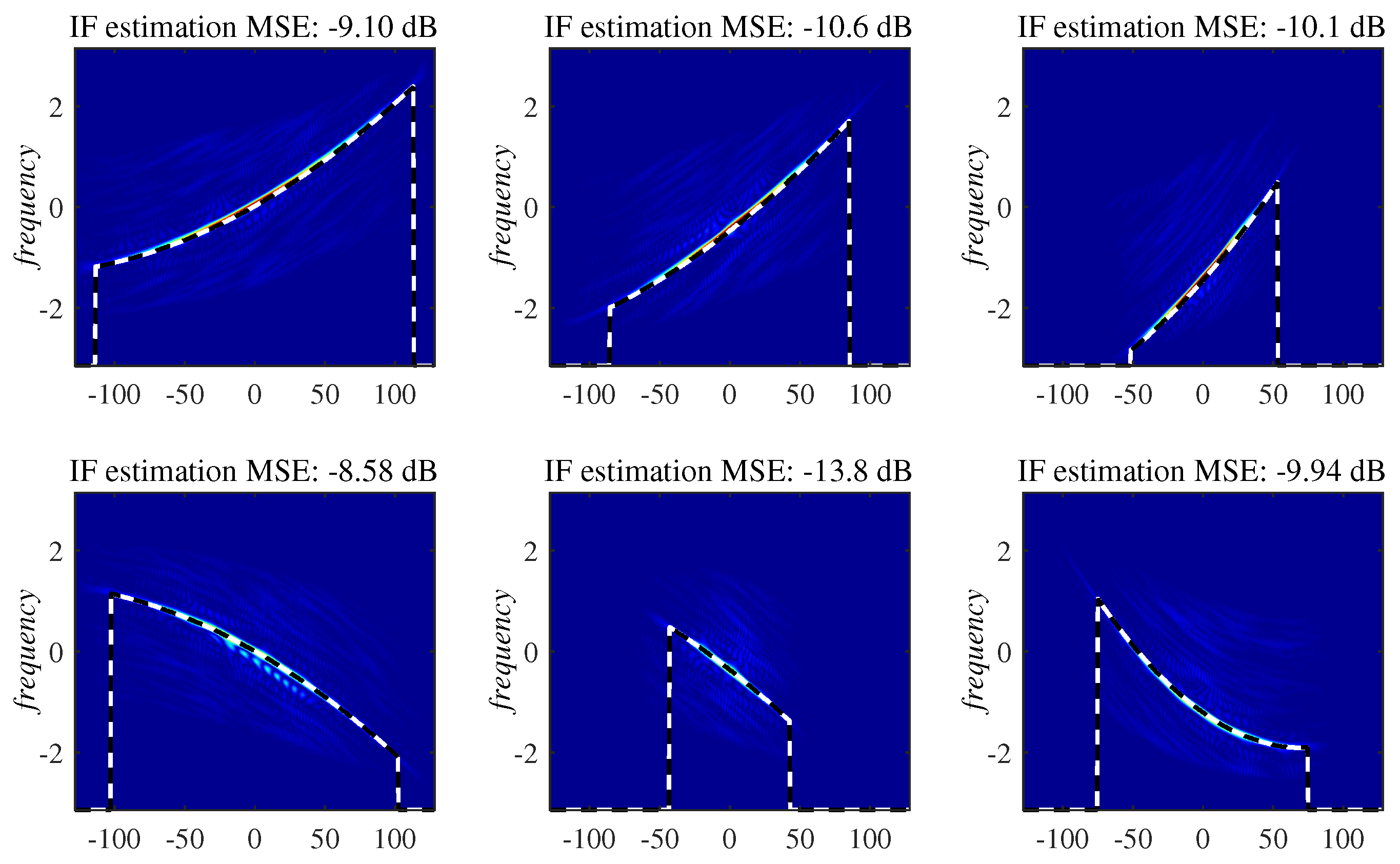

Figure 4g–l are not corrupted by the noise.

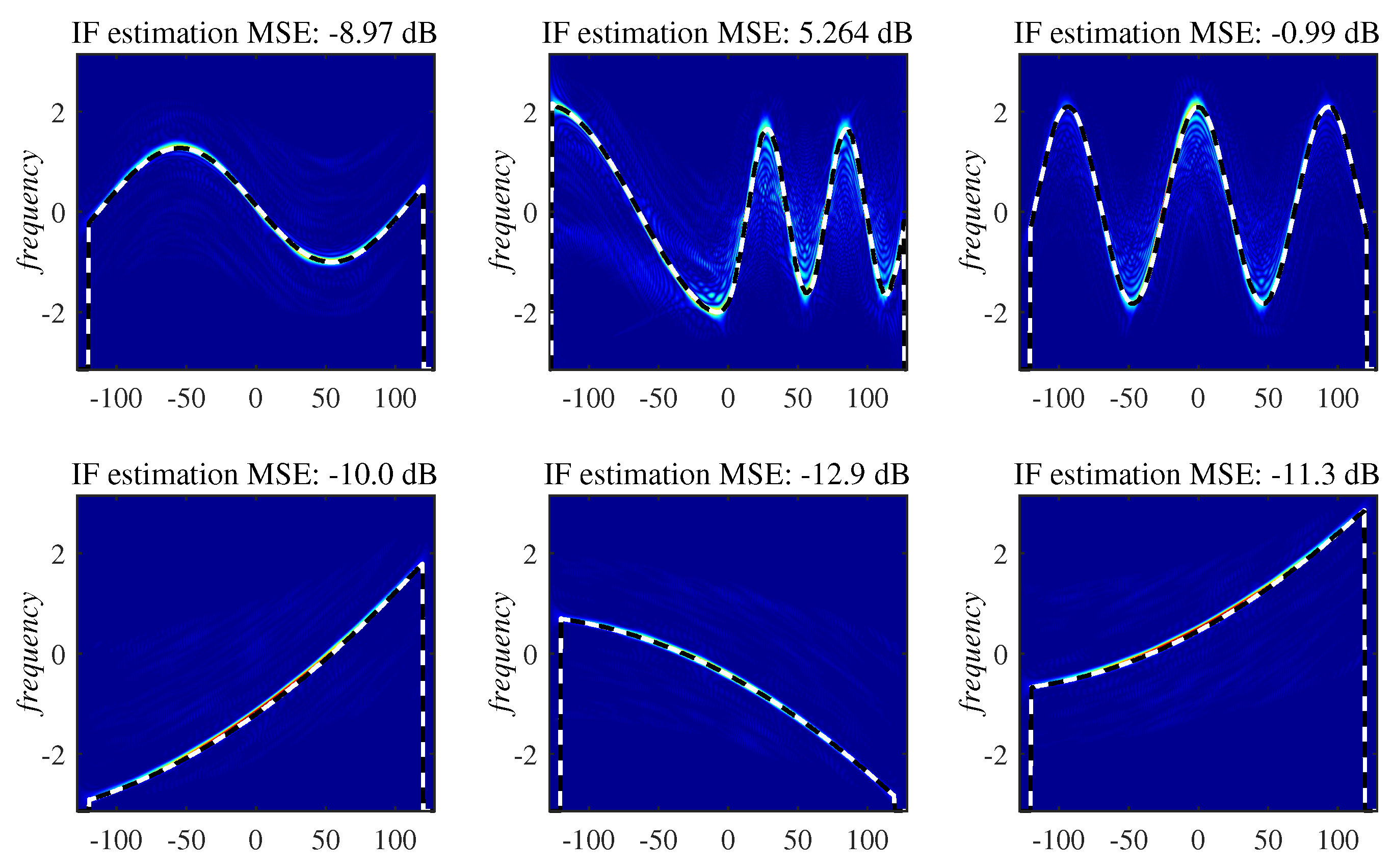

As a measure of quality, we engage

given by (

56), which is the error between the IF estimation result based on the

pth extracted signal component (shown in

Figure 4a–f) versus the IF estimation calculated based on the WD of original, noise-free component (from

Figure 4g–l). The IF estimates and the corresponding MSEs are, for each pair of components, presented in

Figure 5, for standard deviation of the noise

, where the number of channels is

.

Since

given by (

56) serves as a measure of the component extraction quality, we evaluate the decomposition performance for various standard deviations of the noise,

. Results are presented in

Table 1. The presented MSEs are calculated by averaging the results obtained based on 10 realizations of multichannel signal of the form (

58) with random phases

,

and corrupted by random realizations of the noise

, for each observed variance (standard deviation) of the noise. Based on the results from

Table 1, it can be concluded that each signal component is successfully extracted for noise characterized by standard deviation up to

. For stronger noise, only some components are successfully extracted. It shall be noted that the performance of the algorithm depends also on the number of channels,

C. For the results from

Table 1, the number of channels was set to

. A larger value of

C increases the probability of successful decomposition, as investigated in [

31].

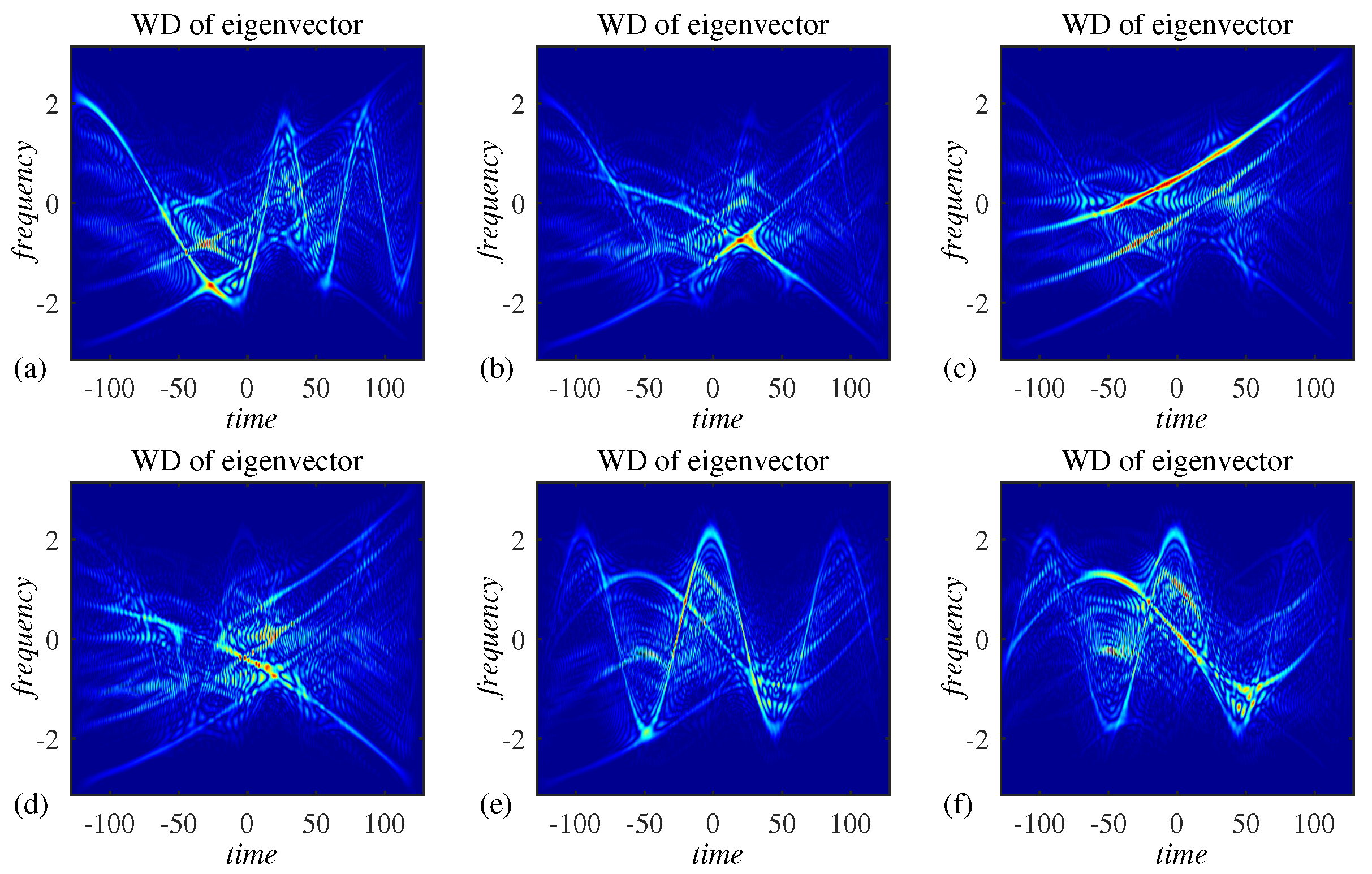

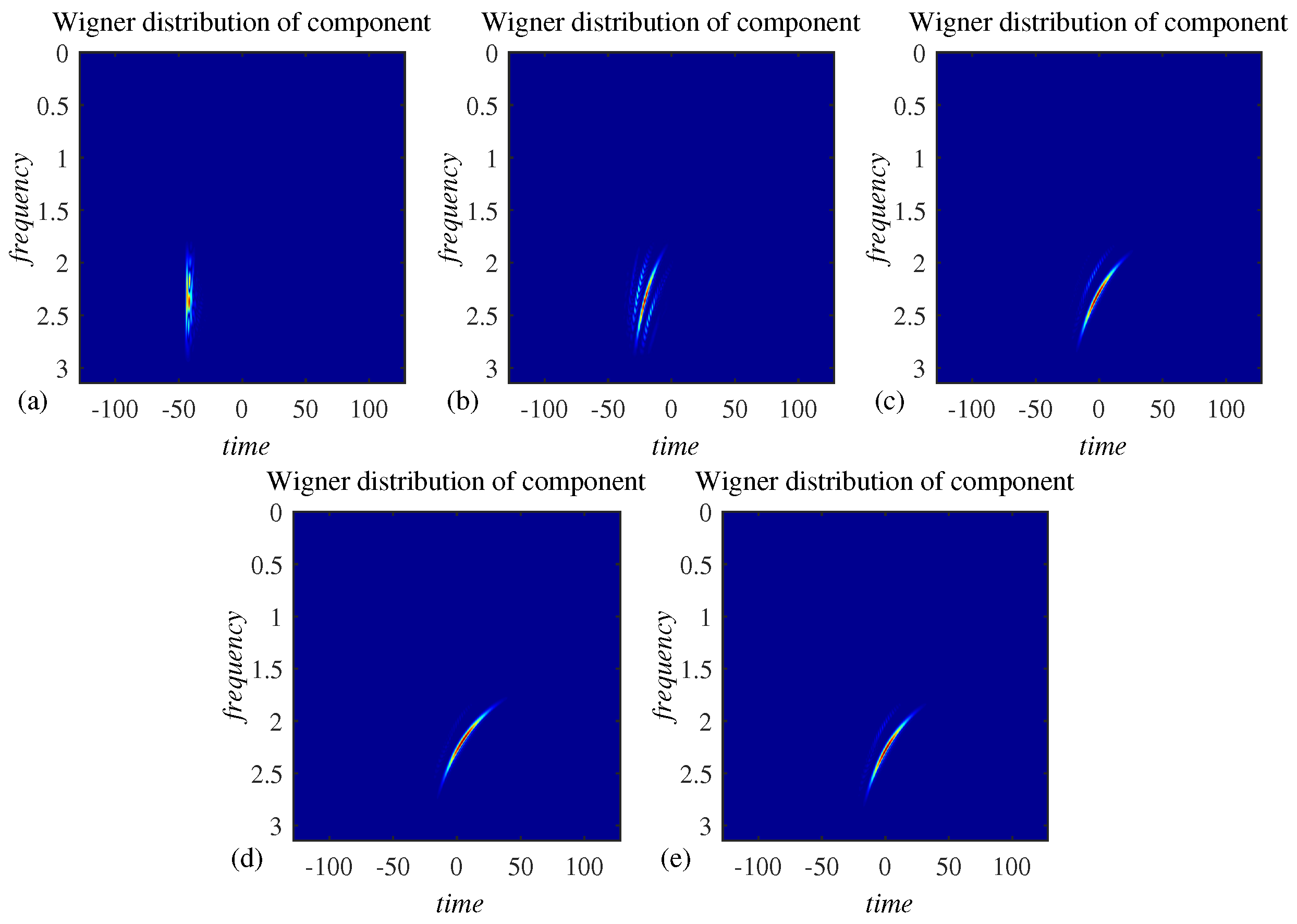

Example 2. The decomposition algorithm is tested on a more complex signal of the form (58), with components, whereas the standard deviation of the noise is now . The number of channels is . After the input autocorrelation matrix, , is calculated, according to (20), eigendecomposition produced the eigenvalues given in Figure 6a. Signal components overlap in the time-frequency domain and, therefore, the corresponding Wigner distribution and spectrogram shown in Figure 6b,c cannot be used as adequate tools for their analysis. Figure 7 indicates that the components are neither visible in the time-frequency representation of any eigenvector corresponding to the largest eigenvalues. This is in accordance with the fact that eigenvectors contain signal components in the form of their linear combinations. Upon applying the presented multivariate decomposition procedure on this set of eigenvectors, we obtain results presented in Figure 8. By comparing the results with Wigner distributions of individual, noise-free components, shown in Figure 9, comprising the considered multicomponent signal, it can be concluded that the components are successfully extracted with preserved integrity. This is additionally confirmed by the IF estimation results shown in Figure 10, where even lower MSE values for each component can be explained by the lower noise level, as compared with results from the previous example. Example 3. To illustrate the applicability of the presented approach in decomposition of components with faster or progressive frequency variations over time, we observe a signal consisted of components, three of which have polynomial modulations as components in model (58), whereas three other components have frequency modulations of sinusoidal nature. The first three components are defined as: The remaining components have polynomial frequency modulation, as in previous examples:for . Again, the signal is defined for discrete indices and phases , , are random numbers with uniform distribution drawn from interval . The resulting multicomponent signal is formed in cth channel as: and is, as in previous examples, embedded in additive, white, complex-valued Gaussian noise, now with variance . The number of channels is . Eigenvalues of the autocorrelation matrix , WD and spectrogram are given in Figure 11, again proving the that the considered signal with heavily overlapped components cannot be analyzed with these tools. Eigenvectors corresponding to the largest six eigenvalues are given in Figure 12. Extracted and original components can be visually compared in Figure 13, again proving the efficiency of the approach, even in the case for components with a faster varying frequency content. This is additionally confirmed by IF estimation results in Figure 14. Larger estimation errors when faster sinusoidal frequency modulations are present are related to poorer concentration of the WD in these cases [3]. Example 4. In this example, we consider the dispersive environment setup described in Section 2.2, with the transmitter located in the water at the depth of . However, to obtain a multivariate signal, instead of one sensor, sensors are placed at the depth , comprising the receiver at distances , , from the transmitter. Moreover, the mutual sensor distances are negligible compared with their distance from the transmitter, m; that is . This further implies that the range direction remains unchanged in our model. As a response to a monochromatic signal , at sensor c, the linear combination of modes is received:where , and wavenumbers are modeled as [55] m and is a random variable drawn from interval with uniform distribution; therefore, corresponding to depth variations of cm, modeling channel depth changes due to surface waves or uneven seabed. The speed of sound propagation underwater is m/s. The same results in this example are obtained for a more precise speed, i.e., at m/s. The received multichannel signal is of the form Upon performing the eigenvalue decomposition of autocorrelation matrix

, eigenvalues shown in

Figure 15a are obtained. The Wigner distribution of the received signal is shown in

Figure 15b, with very close and partially overlapped nodes. Wigner distributions of individual eigenvectors are shown in

Figure 16a–e. The presented procedure for the decomposition of multicomponent signals successfully extracted the individual acoustic modes, as presented in

Figure 17a–e. Such separated acoustic modes can be further analyzed; for example, their IF can be estimated and characterized.

6. Discussion

Decomposition of non-stationary multicomponent signals has been a long-term, challenging topic in time-frequency signal analysis [

19,

20,

21,

22,

23,

24,

25,

26,

27,

28,

29,

30,

31,

32,

33,

34]. Although decomposition of non-overlapping components can be done using the S-method relations with the WD [

26], this approach cannot be applied when the components partially overlap, i.e., share the same domain of support in the time-frequency plane.

Other alternative methodologies are specialized for some specific signal classes, and are efficient in the case of partially overlapped components [

20,

25,

27]. In this sense, chirplet and Radon transform-based decomposition is applicable for linear frequency modulated signals [

20,

25]. Inverse Radon transform has produced excellent results in separation of micro-Doppler appearing in radar signal processing, characterized by a sinusoidal frequency modulation and periodicity [

27]. However, outside the scope of their predefined signal models, these techniques are inefficient in separation of non-stationary signals characterized by some different laws of non-stationarity. Another very popular concept, namely the EMD, has been also applied multivariate data [

39,

40,

41,

42,

43]. However, successful EMD-based multicomponent signal decomposition is possible only for signals having non-overlapping components in the TF plane. Amplitude variations of components pose an additional challenge to the EMD-based decomposition. The efficiency of the proposed method does not depend on the considered frequency range, but only on the ability of a time-frequency representation to concentrate signal components in the time-frequency plane. We use the STFT in concentration measure (

31) due to its ability to concentrate signal energy at the instantaneous frequency of individual signal components. The decomposition approach studied in this paper successfully extracts components highly overlapped in the time-frequency plane. The method is not sensitive to the extent of overlap of the signal components.

Since the modes appearing in the considered acoustic dispersive environment framework are characterized by a non-linear (and non-sinusoidal) law of frequency variations and have a partially overlapped support, neither of the mentioned univariate techniques can produce acceptable decomposition results.