LDAShiny: An R Package for Exploratory Review of Scientific Literature Based on a Bayesian Probabilistic Model and Machine Learning Tools

Abstract

1. Introduction

2. Topic Modelling for Exploratory Literature

- For every topic k:

  - a. Draw a distribution over the words (i.e., vocabulary V) [6]

- For every document d:

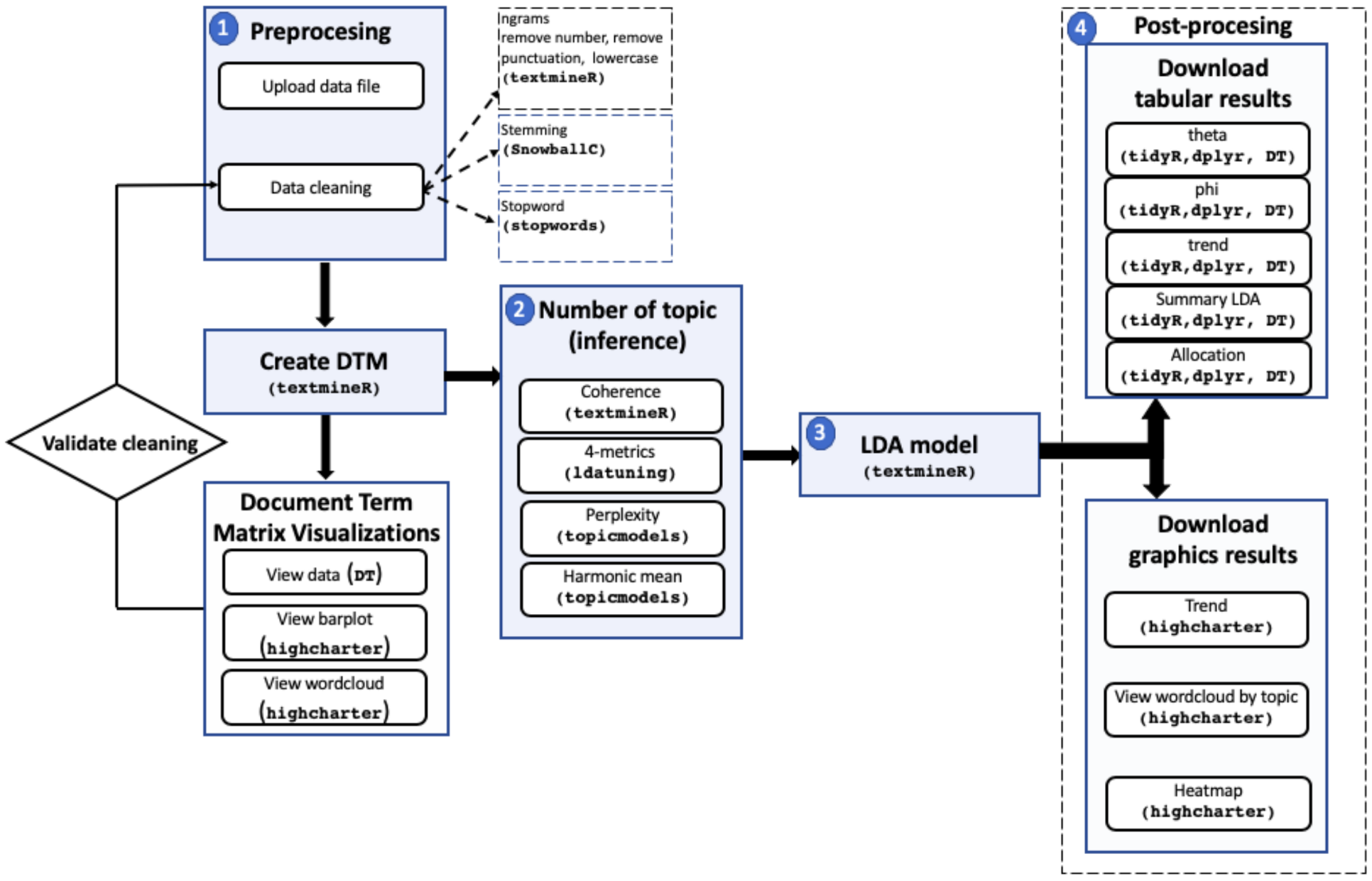
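
The generative view can be made concrete with a small simulation. The base R sketch below assumes the standard LDA formulation of [6]: each topic draws a word distribution (phi), each document draws topic proportions (theta), and every word position draws a topic and then a word. The sizes `K`, `V`, `D`, `n_words`, the hyperparameter values `alpha` and `beta`, and the helper `rdirichlet1` are hypothetical choices for illustration, not values or functions taken from LDAShiny.

```r
set.seed(1)

# Draw one sample from a Dirichlet distribution via normalised gamma variates
rdirichlet1 <- function(alpha) {
  g <- rgamma(length(alpha), shape = alpha, rate = 1)
  g / sum(g)
}

# Hypothetical sizes: topics, vocabulary terms, documents, words per document
K <- 3
V <- 8
D <- 5
n_words <- 20

alpha <- rep(0.5, K)   # assumed prior for document-topic proportions
beta <- rep(0.5, V)    # assumed prior for topic-word distributions
vocab <- paste0("w", seq_len(V))

# For every topic k: draw a distribution over the vocabulary
phi <- t(replicate(K, rdirichlet1(beta)))      # K x V matrix

# For every document d: draw topic proportions ...
theta <- t(replicate(D, rdirichlet1(alpha)))   # D x K matrix

# ... then, for every word position, draw a topic and a word from that topic
docs <- lapply(seq_len(D), function(d) {
  z <- sample.int(K, n_words, replace = TRUE, prob = theta[d, ])
  vapply(z, function(k) sample(vocab, 1, prob = phi[k, ]), character(1))
})

docs[[1]]
```

Inference runs in the opposite direction: given only the observed words, the model estimates theta and phi.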

3. Materials and Methods

3.1. Preprocessing

- Tokenization, which is the procedure of splitting text into individual tokens (words). According to Jurafsky and Martin [31], it is useful in both linguistics and computer science.

- n-gram inclusion: an n-gram is a contiguous sequence of n words [32]. Although it is more usual to analyze individual words, in some fields, such as the life sciences, incorporating bigrams is advantageous because scientific names of species are made up of two words. In LDAShiny we can work with unigrams, bigrams or trigrams (sequences of three words that frequently occur together).

- Remove numbers. Numbers are frequently thought to be uninformative, but in some areas of knowledge they provide valuable information; in legislative matters, for instance, the numbers of bills or decrees can be significant with respect to the content of the legislation. For this reason, the application lets the researcher decide whether or not to remove the numbers.

- Remove stop words, a term coined by Luhn [33]. The procedure consists of discarding words that have no lexical meaning and that appear in texts very frequently (such as articles and pronouns). There are many potential stop word lists; however, we restrict ourselves to the pre-compiled lists provided by the R package stopwords [34]. LDAShiny allows performing this procedure in 14 languages: Danish, Dutch, English, Finnish, French, German, Hungarian, Italian, Norwegian, Portuguese, Romanian, Russian, Spanish, and Swedish.

- Stemming, which is the simplest version of lemmatization. It consists of reducing words to basic forms [35]. Although it is often used as a reduction technique, it must be used carefully, since it can merge words with different meanings. For example, in the phrases “college students partying” and “political parties”, stemming would reduce partying and parties to the same basic form.

- Remove infrequently used terms (sparsity). This procedure removes the terms that appear in very few documents before continuing with the successive phases. One reason for it is computational feasibility: it drastically reduces the size of the matrix without losing significant information, and it can also eliminate errors in the data, such as misspelled words. Only terms that satisfy $df_t > N \times (1 - sparse)$ are retained, where $df_t$ is the document frequency of the term t and N is the number of vectors (documents). For example, if the sparse value is 0.99, the terms that appear in more than 1% of the documents are kept. As a general rule, terms that appear in fewer than 0.5–1 percent of the articles should be discarded [19,36,37]. However, there has been no systematic examination of the implications of this preprocessing decision for the final phase of the analysis.

- Eliminating blank spaces and punctuation characters, as well as converting the entire text to lowercase, are other standard procedures; lowercasing prevents the same word from being counted as two different terms because of capitalization.
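
As a rough illustration of the preprocessing steps listed above, the following base R sketch lowercases a toy corpus, strips punctuation and numbers, removes stop words, builds a document-term matrix, and applies a sparsity filter. The corpus, the short stop word list, the helper `tokenize`, and the `sparse` value of 0.70 are assumptions made for this example; it does not reproduce LDAShiny's internal code. The filter rule (keep terms whose document frequency exceeds N × (1 − sparse)) is inferred from the 0.99 example in the text.

```r
# Toy corpus (hypothetical data, for illustration only)
docs <- c(
  d1 = "Topic models summarise large text collections.",
  d2 = "The topic model assigns words to topics!",
  d3 = "Coral reefs host many species of fish.",
  d4 = "Topic coherence helps to choose the number of topics."
)

# Small hand-made stop word list (assumption; not the pre-compiled list)
stop_words <- c("the", "of", "to", "a", "and")

tokenize <- function(text) {
  text <- tolower(text)                      # lowercase
  text <- gsub("[[:punct:]]+", " ", text)    # strip punctuation
  text <- gsub("[[:digit:]]+", " ", text)    # remove numbers (optional step)
  tokens <- strsplit(trimws(text), "\\s+")[[1]]
  tokens[!tokens %in% stop_words]            # drop stop words
}

tokens <- lapply(docs, tokenize)

# Document-term matrix of raw counts (documents in rows, terms in columns)
vocab <- sort(unique(unlist(tokens)))
dtm <- t(vapply(
  tokens,
  function(tk) as.integer(table(factor(tk, levels = vocab))),
  integer(length(vocab))
))
colnames(dtm) <- vocab

# Sparsity filter: keep terms whose document frequency exceeds N * (1 - sparse)
sparse <- 0.70
N <- nrow(dtm)
doc_freq <- colSums(dtm > 0)
dtm_reduced <- dtm[, doc_freq > N * (1 - sparse), drop = FALSE]

colnames(dtm_reduced)
```

With this toy corpus only "topic" and "topics" survive the filter. They stay separate terms because no stemming is applied, which shows the kind of merging that stemming would perform.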

3.2. Inference

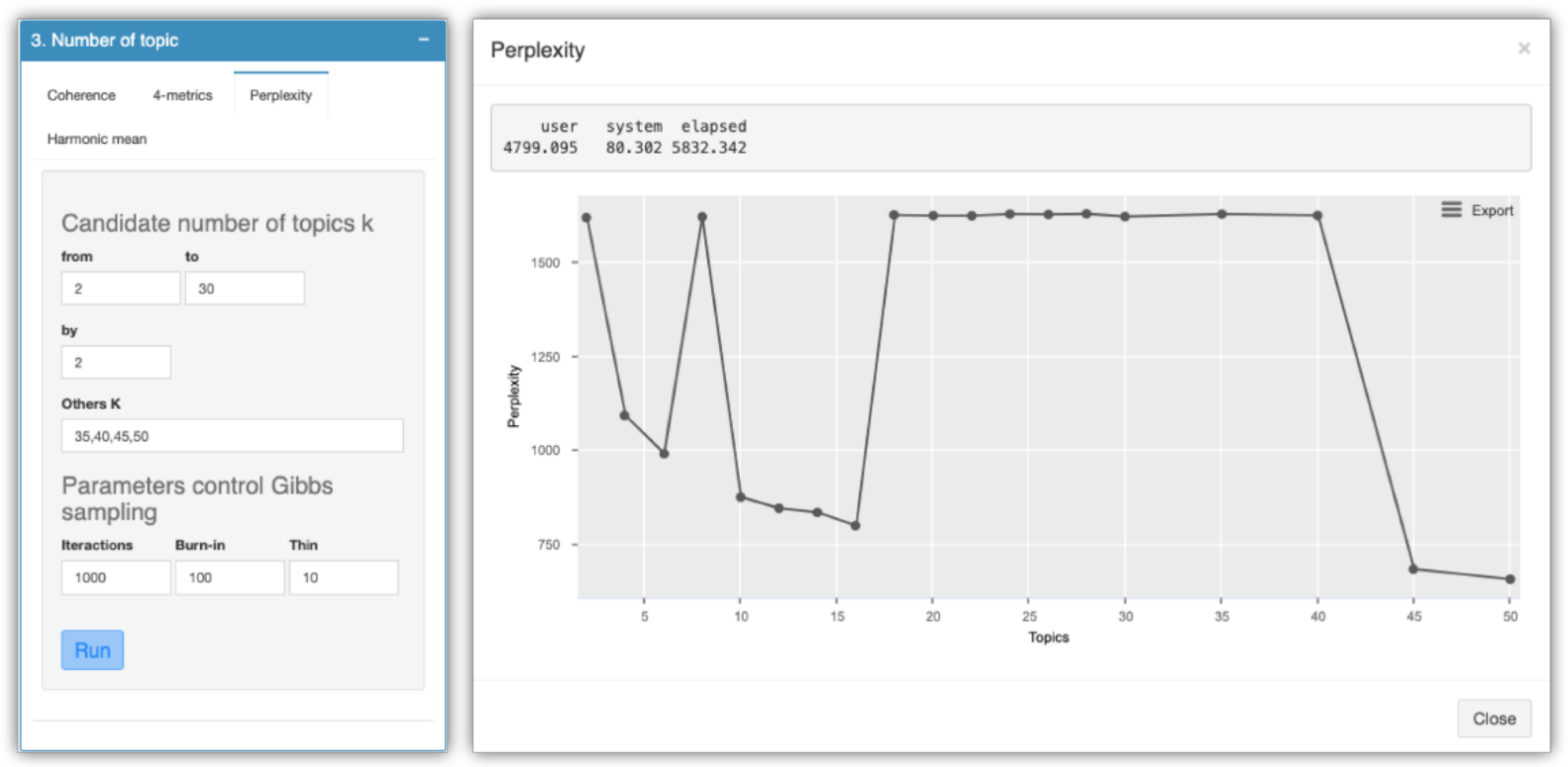

- perplexity, defined by [6] for a text set $D$ of M documents as: $$\text{perplexity}(D) = \exp\left\{-\frac{\sum_{d=1}^{M} \log p(\mathbf{w}_d)}{\sum_{d=1}^{M} N_d}\right\}$$ where $N_d$ is the number of words in the d-th document of the text corpus and $\mathbf{w}_d$ is the d-th document in the corpus. It is monotonically decreasing in the likelihood of the data and algebraically equivalent to the inverse of the geometric mean per-word likelihood. When comparing several models, the one with the lowest perplexity is considered the best [6].

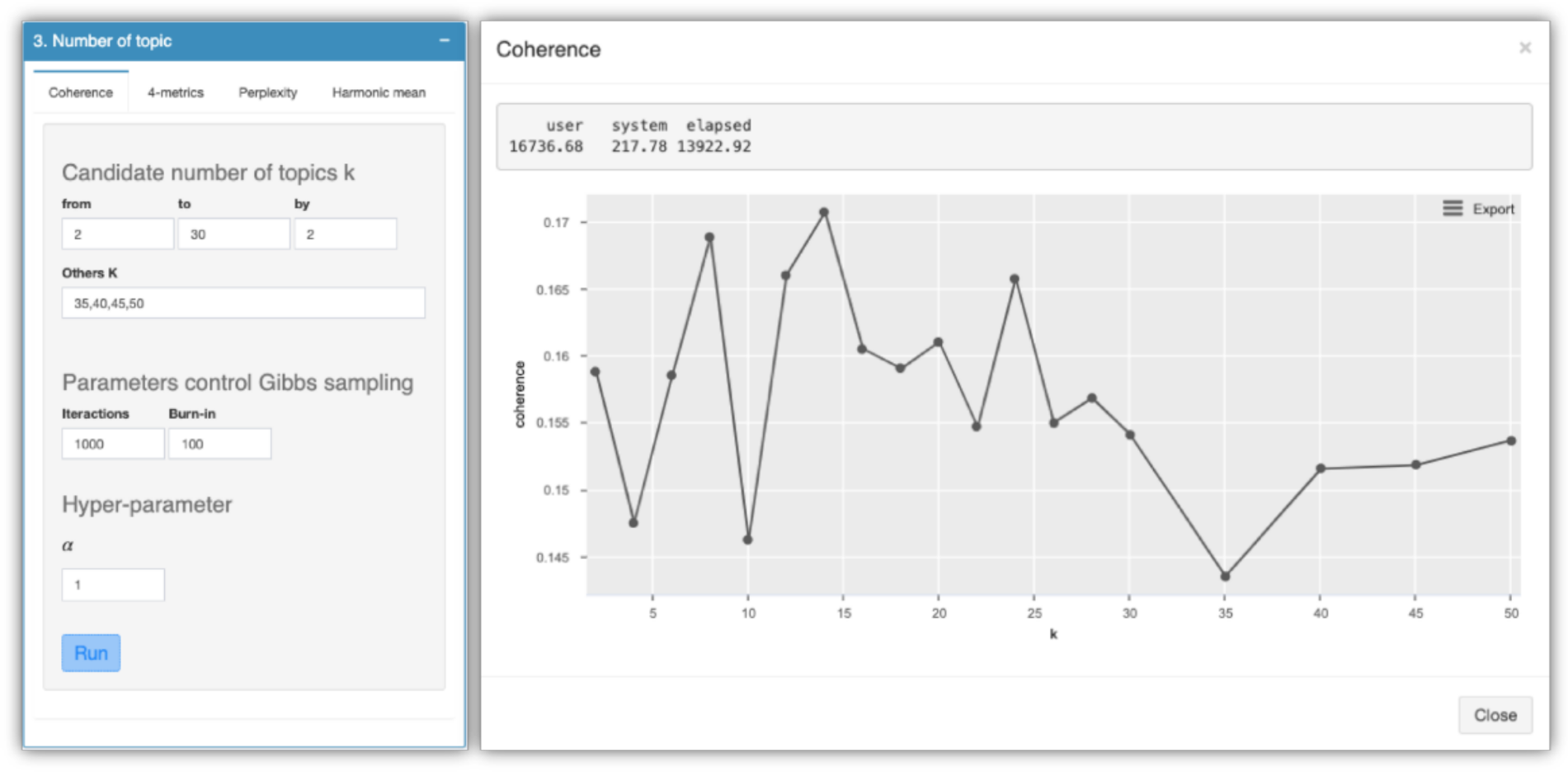

- coherence [41]. It is based on the distributional hypothesis [42], which states that words with similar meanings tend to co-occur in similar contexts. The procedure used for this metric relies on the textmineR package [14], which implements a topic coherence measure based on probability theory; it consists of fitting several models and calculating the coherence of each of them. The best model is the one with the highest coherence.

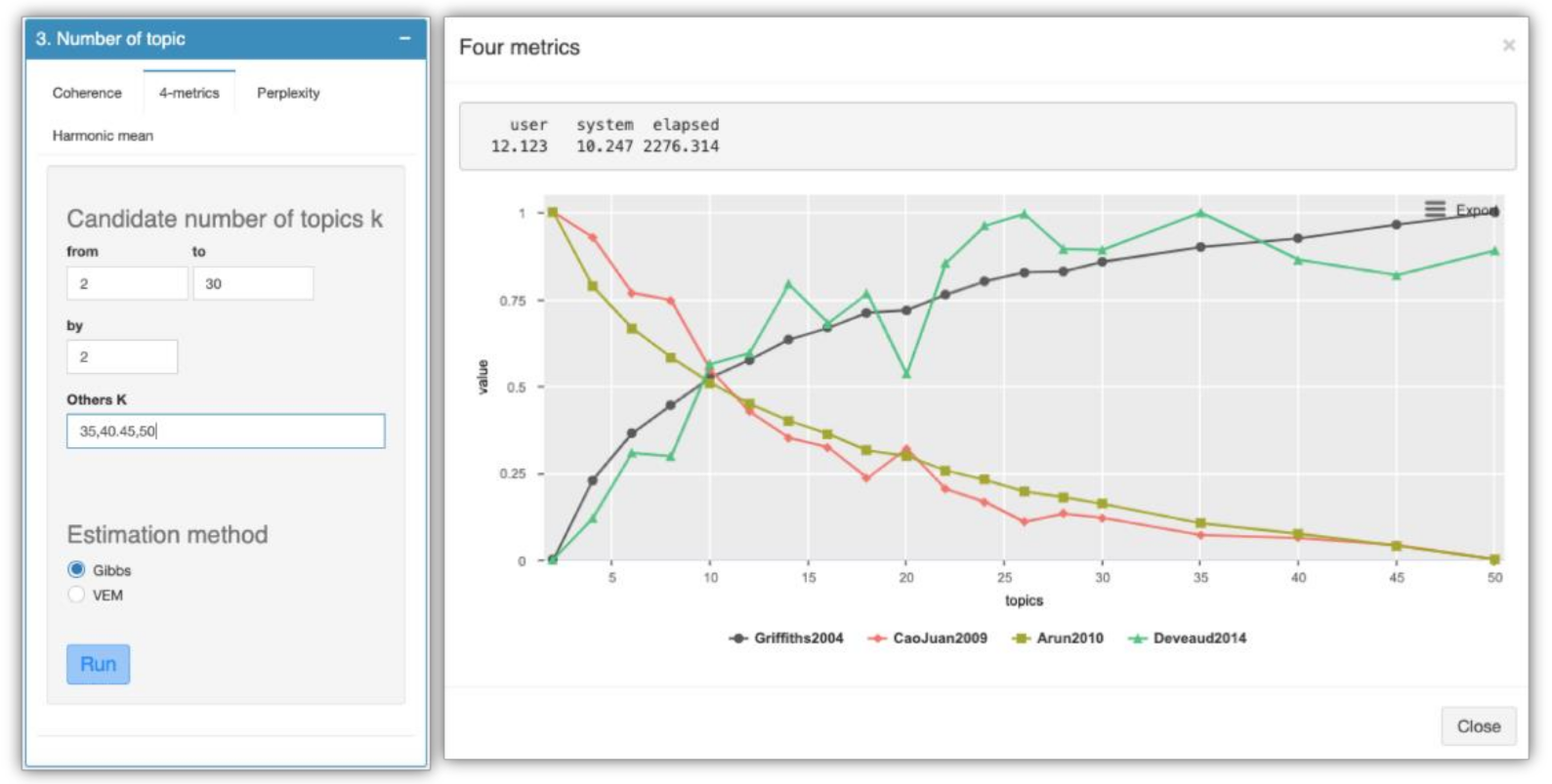

- other metrics, available in the ldatuning package [10]: Arun 2010 [43], CaoJuan 2009 [44], Deveaud 2014 [45], and Griffiths 2004 [23]. These metrics are simple to apply, since they are based on finding extreme values (minimization for Arun 2010 and CaoJuan 2009; maximization for Deveaud 2014 and Griffiths 2004).
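
To make the selection rules above concrete, the base R sketch below computes perplexity from per-document log-likelihoods using the definition in [6], exp(−Σ log p(w_d) / Σ N_d), and then picks the number of topics by minimizing perplexity or maximizing coherence. The function name `perplexity`, the word counts, and the metric values in `scores` are hypothetical and serve only to show the mechanics; they are not results from LDAShiny.

```r
# Perplexity following [6]: exp(-sum_d log p(w_d) / sum_d N_d)
perplexity <- function(log_lik, n_words) {
  exp(-sum(log_lik) / sum(n_words))
}

# Sanity check: if every word has probability 1/100, perplexity equals 100,
# i.e., the inverse of the (geometric mean) per-word probability
n_words <- c(50, 80, 120)
log_lik <- n_words * log(1 / 100)
perplexity(log_lik, n_words)

# Hypothetical metric values for four candidate numbers of topics
scores <- data.frame(
  k = c(5, 10, 15, 20),
  perplexity = c(812.4, 640.1, 655.9, 701.3),
  coherence = c(0.08, 0.15, 0.19, 0.12)
)

# Lower perplexity is better; higher coherence is better
best_by_perplexity <- scores$k[which.min(scores$perplexity)]
best_by_coherence <- scores$k[which.max(scores$coherence)]

c(perplexity = best_by_perplexity, coherence = best_by_coherence)
```

In this made-up example the two criteria disagree (10 versus 15 topics), which is one reason to inspect several metrics rather than rely on a single one.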

3.3. Latent Dirichlet Allocation (LDA) Model

3.4. Post-Processing

4. LDAShiny Graphical User Interface (GUI)

- R > install.packages("LDAShiny")

- R > library("LDAShiny")

- R > LDAShiny::runLDAShiny()

- About: this panel serves as the software’s introduction page. The application’s general information, as well as the software’s goal, are displayed in English and Spanish.

- Data input and preprocessing: this provides an interface for users to load the data to be analyzed. In addition, there are also different options to perform preprocessing.

- Document term matrix visualizations: the matrix of terms and documents can be viewed both in tabular and graphical form in this menu. The tabular data can be downloaded in csv, xlsx or pdf format, or copied to the clipboard. The graphics (barplot or wordcloud) can be downloaded in .png, .jpeg, .svg, and .pdf format.

- Number of topics (inference): the options to set the input parameters of each of the metrics used to find the number of topics are available in this menu.

- LDA model tutorial: this menu offers a vignette (in English and Spanish) with videos that serve as a quick guide where the basic steps to use the software are explained.

5. Demonstration of LDAShiny GUI

5.1. Preprocessing

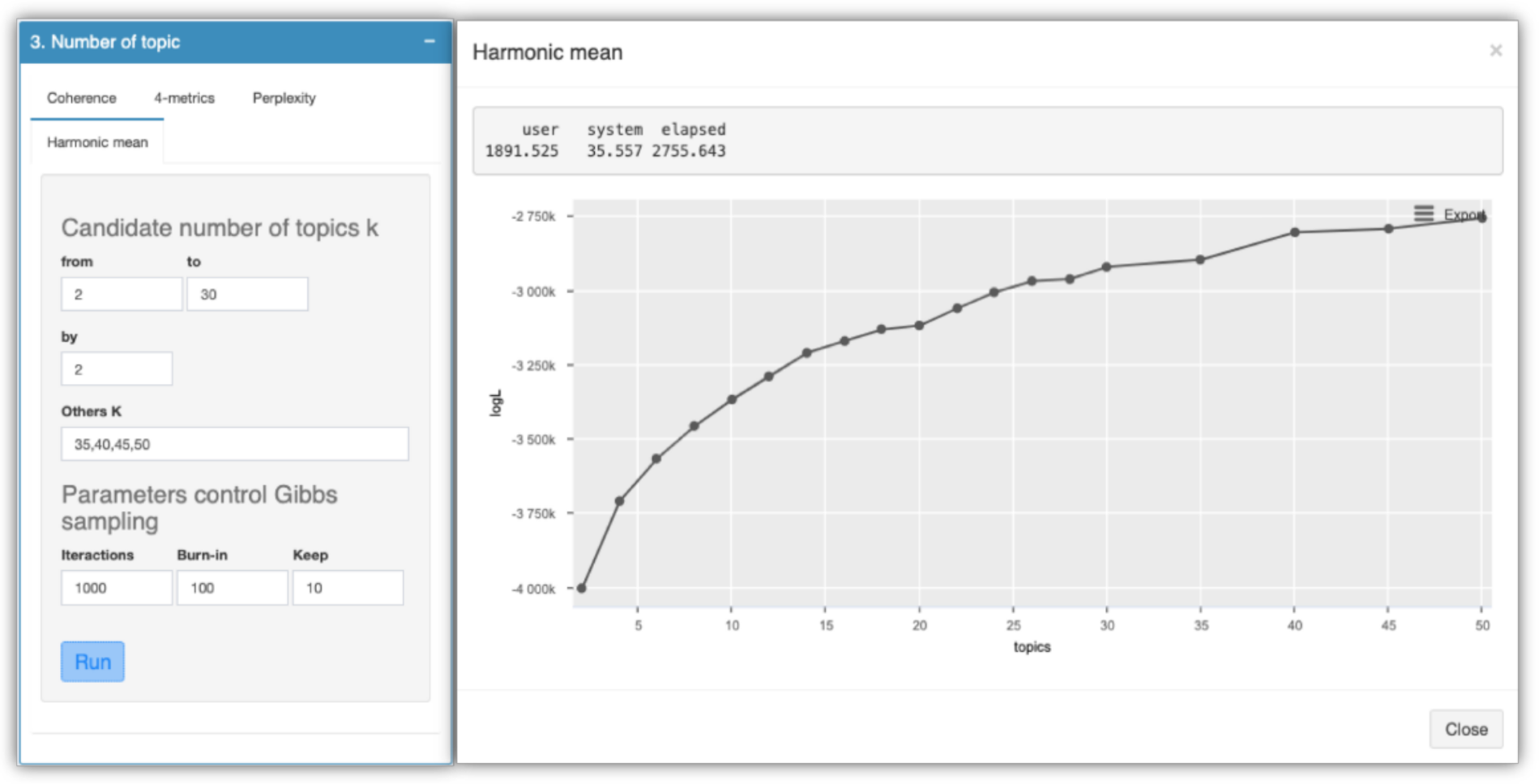

5.2. Number of Topics (Inference)

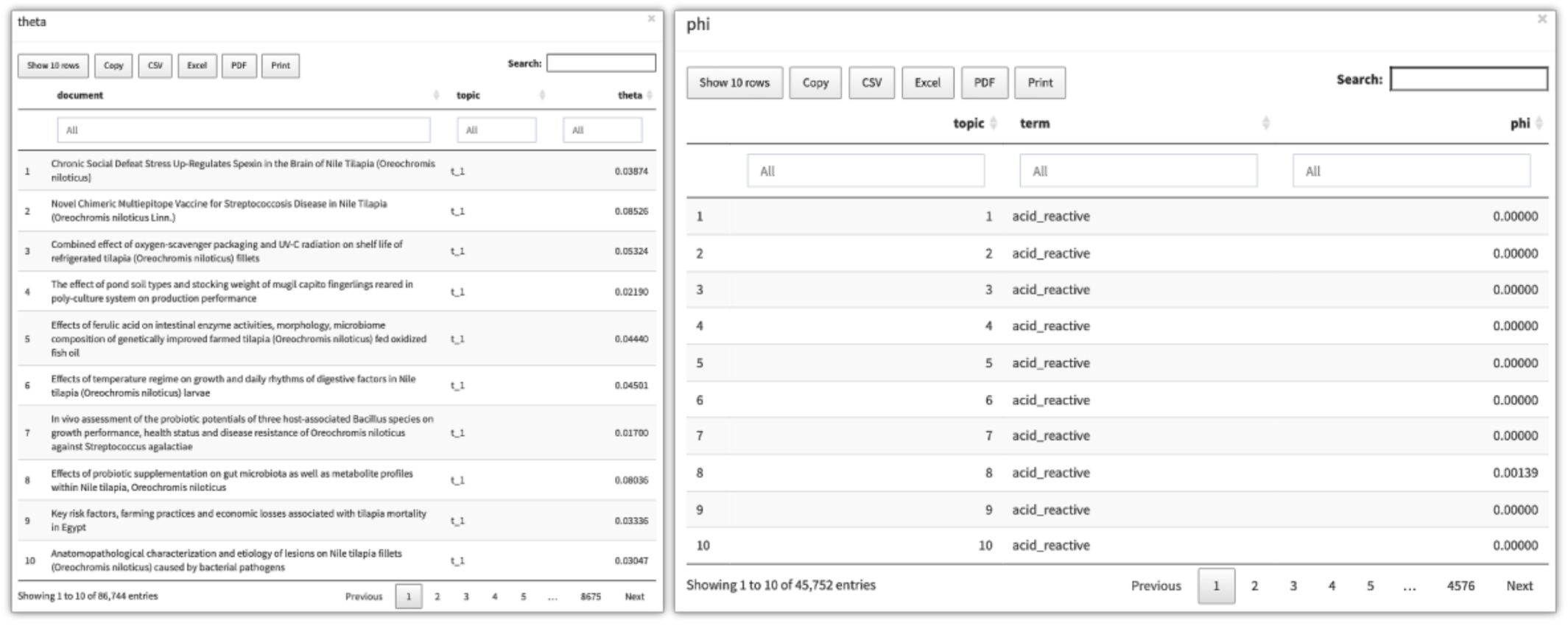

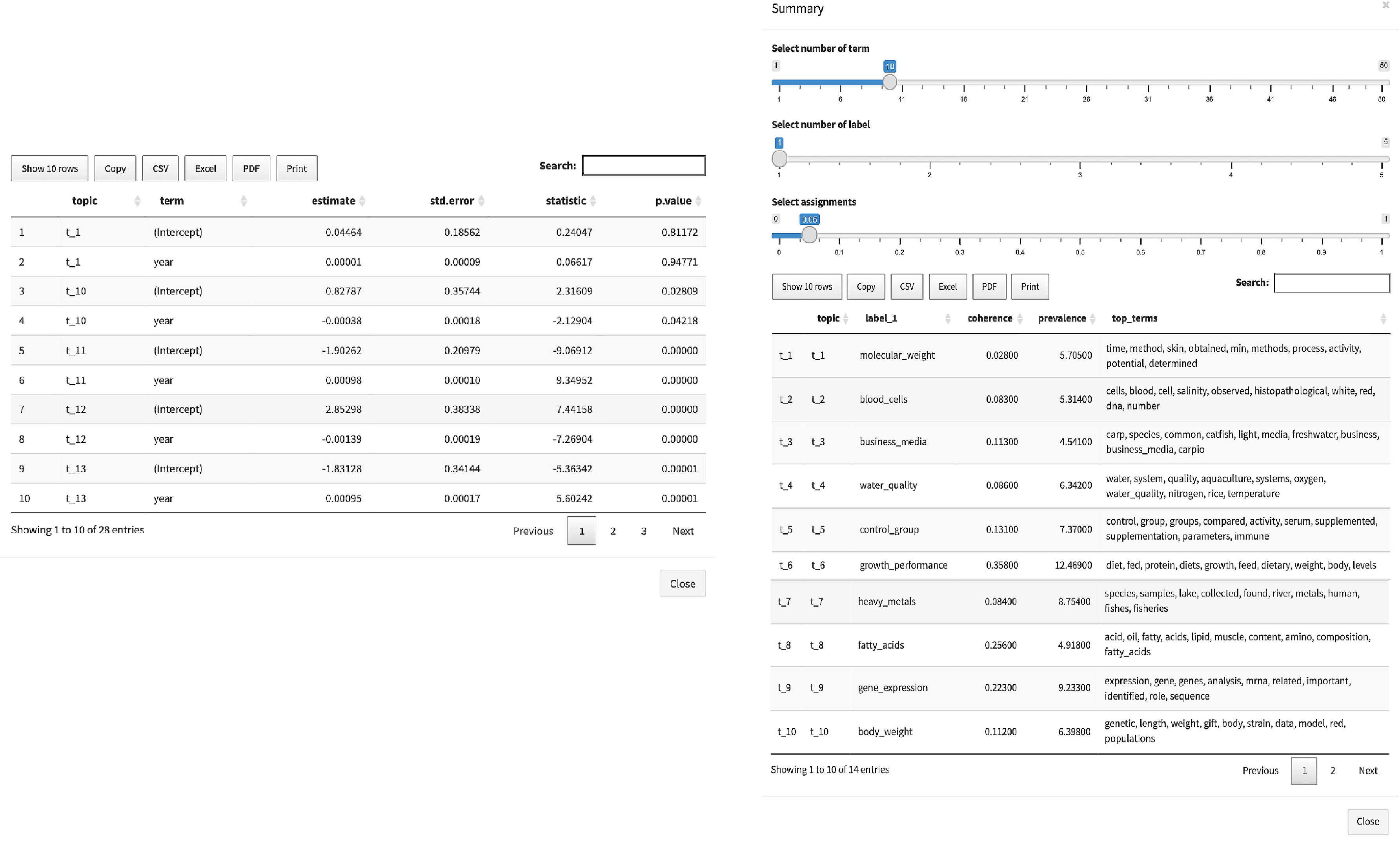

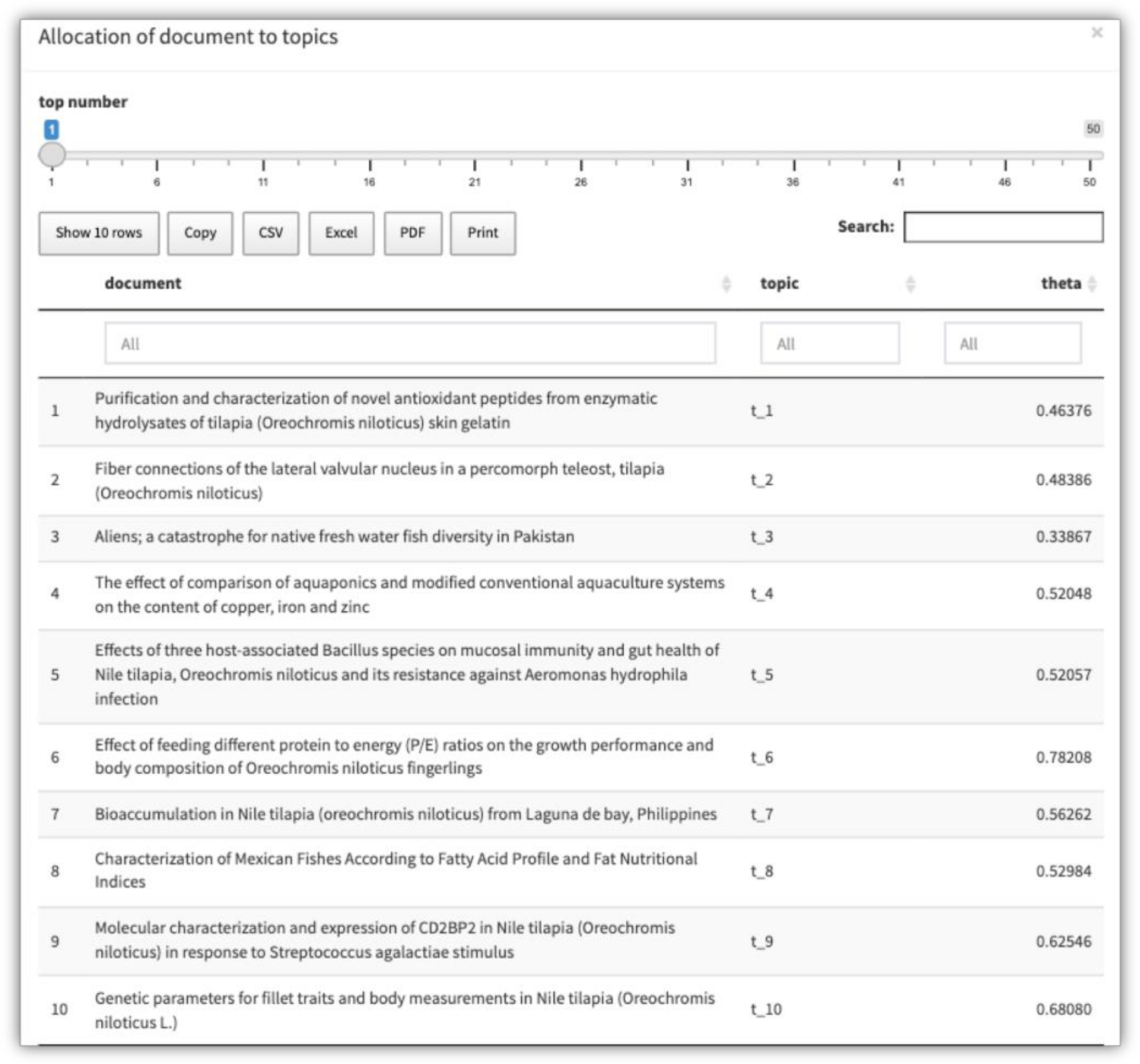

5.3. LDA Model

5.4. Postprocessing

6. Discussion

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Brocke, J.; Simons, A.; Niehaves, B.; Riemer, K.; Plattfaut, R.; Cleven, A. Reconstructing the giant: On the importance of rigour in documenting the literature search process. In Proceedings of the 17th European Conference on Information Systems, Verona, Italy, 7–9 June 2009; p. 161. Available online: http://aisel.aisnet.org/ecis2009/161 (accessed on 16 April 2021).

- Harzing, A.W.; Alakangas, S. Google Scholar, Scopus and the Web of Science: A longitudinal and cross-disciplinary comparison. Scientometrics 2016, 106, 787–804.

- DiMaggio, P.; Nag, M.; Blei, D. Exploiting affinities between topic modeling and the sociological perspective on culture: Application to newspaper coverage of US government arts funding. Poetics 2013, 41, 570–606.

- Asmussen, C.B.; Muller, C. Smart literature review: A practical topic modelling approach to exploratory literature review. J. Big Data 2019, 6, 93.

- R Core Team. R: A Language and Environment for Statistical Computing; R Foundation for Statistical Computing: Vienna, Austria, 2020; Available online: https://www.R-project.org/ (accessed on 16 April 2021).

- Blei, D.M.; Ng, A.Y.; Jordan, M.I. Latent Dirichlet allocation. J. Mach. Learn. Res. 2003, 3, 993–1022.

- Chang, J. lda: Collapsed Gibbs Sampling Methods for Topic Models, R package version 1.4.2; R Foundation for Statistical Computing: Vienna, Austria, 2015; Available online: https://CRAN.R-project.org/package=lda (accessed on 16 April 2021).

- Erskine, N. lda.svi: Fit Latent Dirichlet Allocation Models Using Stochastic Variational Inference, R package version 0.1.0; R Foundation for Statistical Computing: Vienna, Austria, 2015; Available online: https://CRAN.R-project.org/package=lda.svi (accessed on 16 April 2021).

- Rieger, J. ldaPrototype: Prototype of Multiple Latent Dirichlet Allocation Runs, R package version 0.1.1; R Foundation for Statistical Computing: Vienna, Austria, 2015; Available online: https://CRAN.R-project.org/package=ldaPrototype (accessed on 16 April 2021).

- Nikita, M. ldatuning: Tuning of the Latent Dirichlet Allocation Models Parameters, R package version 1.0.2.; R Foundation for Statistical Computing: Vienna, Austria; Available online: https://CRAN.R-project.org/package=ldatuning (accessed on 16 April 2021).

- Sievert, C.; Shirley, K. LDAvis: Interactive Visualization of Topic Models, R package version 0.3.2.; R Foundation for Statistical Computing: Vienna, Austria; Available online: https://CRAN.R-project.org/package=LDAvis (accessed on 16 April 2021).

- Friedman, D. topicdoc: Topic-Specific Diagnostics for LDA and CTM Topic Models, R package version 0.1.0.; R Foundation for Statistical Computing: Vienna, Austria; Available online: https://CRAN.R-project.org/package=topicdoc (accessed on 16 April 2021).

- Grun, B.; Hornik, K. topicmodels: An R Package for Fitting Topic Models. J. Stat. Softw. 2011, 40, 1–30.

- Jones, T. textmineR: Functions for Text Mining and Topic Modeling, R package version 3.0.4.; R Foundation for Statistical Computing: Vienna, Austria, 2019; Available online: https://CRAN.R-project.org/package=textmineR (accessed on 16 April 2021).

- Blei, D.M. Probabilistic topic models. Commun. ACM 2012, 55, 77–84.

- Kao, A.; Poteet, S.R. Natural Language Processing and Text Mining, 1st ed.; Springer Science & Business Media: London, UK, 2007; p. 265.

- Deerwester, S.; Dumais, S.T.; Furnas, G.W.; Landauer, T.K.; Harshman, R. Indexing by latent semantic analysis. J. Assoc. Inf. Sci. Technol. 1990, 41, 391–407.

- Hofmann, T. Probabilistic latent semantic indexing. In Proceedings of the 22nd Annual International ACM SIGIR Conference on Research and Development in Information Retrieval, Berkeley, CA, USA, 15–19 August 1999; pp. 50–57.

- Grimmer, J. A Bayesian hierarchical topic model for political texts: Measuring expressed agendas in Senate press releases. Political Anal. 2010, 18, 1–35.

- Jacobi, C.; Van Atteveldt, W.; Welbers, K. Quantitative analysis of large amounts of journalistic texts using topic modelling. Digit. J. 2016, 4, 89–106.

- Iwata, T.; Saito, K.; Ueda, N.; Stromsten, S.; Griffiths, T.L.; Tenenbaum, J.B. Parametric embedding for class visualization. Neural Comput. 2007, 19, 2536–2556.

- Wang, Y.; Sabzmeydan, P.; Mori, G. Semi-latent Dirichlet allocation: A hierarchical model for human action recognition. In Proceedings of the Second Workshop, Human Motion—Understanding, Modeling, Capture and Animation, Rio de Janeiro, Brazil, 20 October 2007; Elgammal, A., Rosenhahn, B., Klette, R., Eds.; Springer: Berlin/Heidelberg, Germany, 2007.

- Griffiths, T.L.; Steyvers, M. Finding scientific topics. Proc. Natl. Acad. Sci. USA 2004, 101 (Suppl. 1), 5228–5235.

- Syed, S.; Spruit, M. Full-text or abstract? Examining topic coherence scores using latent Dirichlet allocation. In Proceedings of the 2017 IEEE International Conference on Data Science and Advanced Analytics (DSAA), Tokyo, Japan, 19–21 October 2017; pp. 165–174.

- Newman, D.; Smyth, P.; Welling, M.; Asuncion, A.U. Distributed inference for latent Dirichlet allocation. In Proceedings of the Twenty-First Annual Conference on Neural Information Processing Systems, Vancouver, BC, Canada, 3–6 December 2007; pp. 1081–1088.

- Porteous, I.; Newman, D.; Ihler, A.; Asuncion, A.; Smyth, P.; Welling, M. Fast collapsed Gibbs sampling for latent Dirichlet allocation. In Proceedings of the 14th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Las Vegas, NV, USA, 24–27 August 2008; pp. 569–577.

- Blei, D.M.; Jordan, M.I. Variational inference for Dirichlet process mixtures. Bayesian Anal. 2006, 1, 121–143.

- Teh, Y.W.; Newman, D.; Welling, M. A collapsed variational Bayesian inference algorithm for latent Dirichlet allocation. In Proceedings of the 20th Annual Conference on Neural Information Processing Systems, Vancouver, BC, Canada, 4–7 December 2007; pp. 1353–1360.

- Wang, C.; Paisley, J.; Blei, D.M. Online variational inference for the hierarchical Dirichlet process. In Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics, Machine Learning Research, Fort Lauderdale, FL, USA, 11–13 April 2011; pp. 752–760. Available online: http://proceedings.mlr.press/v15/wang11a.html (accessed on 17 April 2021).

- Vijayarani, S.; Ilamathi, M.J.; Nithya, M. Preprocessing techniques for text mining-an overview. Int. J. Comput. Sci. Commun. Netw. 2015, 5, 7–16.

- Jurafsky, D.; Martin, J.H. Speech and Language Processing: An Introduction to Natural Language Processing, Computational Linguistics, and Speech Recognition; Pearson: Hoboken, NJ, USA, 2008.

- Manning, C.D.; Schutze, H. Foundations of Statistical Natural Language Processing, 2nd ed.; MIT Press: Cambridge, MA, USA, 1999.

- Luhn, H.P. The automatic creation of literature abstracts. IBM J. Res. Dev. 1958, 2, 159–165.

- Benoit, K.; Muhr, D.; Watanabe, K. Stopwords: Multilingual Stopword Lists, R package version 0.9.0; R Foundation for Statistical Computing: Vienna, Austria, 2017. Available online: https://CRAN.R-project.org/package=stopwords (accessed on 17 April 2021).

- Porter, M.F. An algorithm for suffix stripping. Programming 1980, 14, 130–137.

- Yano, T.; Smith, N.A.; Wilkerson, J.D. Textual predictors of bill survival in congressional committees. In Proceedings of the 2012 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Montreal, QC, Canada, 3–8 June 2012; pp. 793–802.

- Grimmer, J.; Stewart, B.M. Text as data: The promise and pitfalls of automatic content analysis methods for political texts. Political Anal. 2013, 21, 267–297.

- Blei, D.M.; Lafferty, J.D. A correlated topic model of science. Ann. Appl. Stat. 2007, 1, 17–35.

- Sbalchiero, S.; Eder, M. Topic modeling, long texts and the best number of topics. Some problems and solutions. Qual. Quant. 2020, 54, 1095–1108.

- Newton, M.A.; Raftery, A.E. Approximate Bayesian inference with the weighted likelihood bootstrap. J. R. Stat. Soc. Series B Stat. Methodol. 1994, 56, 3–26.

- Roder, M.; Both, A.; Hinneburg, A. Exploring the space of topic coherence measures. In Proceedings of the Eighth ACM International Conference on Web Search and Data Mining, 31 January–6 February 2015; pp. 399–408.

- Harris, Z.S. Distributional structure. Word 1954, 10, 146–162.

- Arun, R.; Suresh, V.; Veni Madhavan, C.E.; Narasimha Murthy, M.N. On finding the natural number of topics with latent Dirichlet allocation: Some observations. In Advances in Knowledge Discovery and Data Mining. PAKDD 2010. Lecture Notes in Computer Science; Zaki, M.J., Yu, J.X., Ravindran, B., Pudi, V., Eds.; Springer: Berlin/Heidelberg, Germany, 2010; Volume 6118.

- Cao, J.; Xia, T.; Li, J.; Zhang, Y.; Tang, S. A density-based method for adaptive LDA model selection. Neurocomputing 2009, 72, 1775–1781.

- Deveaud, R.; SanJuan, E.; Bellot, P. Accurate and effective latent concept modeling for ad hoc information retrieval. Doc. Numer. 2014, 17, 61–84.

- Lewis, S.C.; Zamith, R.; Hermida, A. Content analysis in an era of big data: A hybrid approach to computational and manual methods. J. Broadcast. Electron. Media 2013, 57, 34–52.

- Lau, J.H.; Grieser, K.; Newman, D.; Baldwin, T. Automatic labelling of topic models. In Proceedings of the 49th Annual Meeting of the Association for Computational Linguistics: Human Language Technologies, Portland, OR, USA, 2011; pp. 1536–1545.

- Xiong, H.; Cheng, Y.; Zhao, W.; Liu, J. Analyzing scientific research topics in manufacturing field using a topic model. Comput. Ind. Eng. 2019, 135, 333–347.

- Chang, W.; Cheng, J.; Allaire, J.; Xie, Y.; McPherson, J. Shiny: Web Application Framework for R, R package version 1.4.0.2; R Foundation for Statistical Computing: Vienna, Austria. Available online: https://CRAN.R-project.org/package=shiny (accessed on 17 April 2021).

- Chang, J.; Gerrish, S.; Wang, C.; Boyd-Graber, J.L.; Blei, D.M. Reading tea leaves: How humans interpret topic models. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2009; pp. 288–296.

- Denny, M.J.; Spirling, A. Text preprocessing for unsupervised learning: Why it matters, when it misleads, and what to do about it. Political Anal. 2018, 26, 168–189.

- Maier, D.; Waldherr, A.; Miltner, P.; Wiedemann, G.; Niekler, A.; Keinert, A.; Adam, S. Applying LDA topic modeling in communication research: Toward a valid and reliable methodology. Commun. Methods Meas. 2018, 12, 93–118.

| Panel | Item | Menu | Description |
|---|---|---|---|
| Preprocessing | Upload data file | Use example data set? | Checkbox indicating whether to use the example file that comes with the package. |
| | | Choose csv file | Clicking the Browse button loads a local data file in csv format. |
| | | Header | Checkbox indicating whether the first line of the file contains the names of the columns. |
| | | stringAsFactors | Checkbox indicating whether character strings are read as factors. |
| | | Separator | Field separator character. |
| | | Select | PickerInput that presents the loaded dataset and displays it in the Statistical summary table view. |
| | Data cleaning | Incorporate information | Clicking the Incorporate information button three times loads the data into preprocessing. |
| | | Select id document | PickerInput for specifying the vector of names for documents. |
| | | Select document vector | PickerInput for specifying the character vector of documents. |
| | | Select publish year | PickerInput for specifying the vector containing the year each document was published. |
| | | ngrams | Radio buttons to specify the type of n-gram to use (unigram, bigram or trigram). |
| | | Remove number | Checkbox to specify whether or not to delete the numbers in the corpus (if checked, the numbers are removed). |
| | | Select language for stopword | PickerInput to specify the language used in the stop word removal (the list contains 14 languages to choose from). |
| | | Stop Words | Text field to include additional stop words to remove (words must be separated by commas). |
| | | Stemming | Checkbox; if checked, stemming is performed. |
| | | Sparsity | Slider to select the sparse parameter. |
| | | Create Document-Term Matrix DTM | After clicking the Create DTM button, a spinner is displayed during the process. Once finished, a table with the dimensions of the created matrix is displayed. |
| Document Term Matrix Visualizations | View Data | | Clicking the View Data button displays a summary. Also shown are buttons to download it in csv, xlsx or pdf format, print the file (Print), copy it to the clipboard (Copy), and configure the number of rows used in the summary (Show). |
| | View barplot | | Clicking the View barplot button displays a barplot. The number of bars can be configured with the slider shown in the dropdown button Select number of term. Clicking the export button in the upper right part of the graph downloads it in different formats (.png, .jpeg, .svg and .pdf). |
| | View wordcloud | | Clicking the View wordcloud button displays a wordcloud. The number of words can be configured with the slider shown in the dropdown button Select number of term. Clicking the export button in the upper right part of the graph downloads it in different formats (.png, .jpeg, .svg and .pdf). |
| Number of topic (inference) | Tab Coherence | Iterations | Numeric input parameter that specifies how many iterations will be performed. |
| | | Burn-in | Numeric input parameter that specifies how many burn-in iterations will be performed for posterior sampling. |
| | | Hyper-parameter | Numeric input parameter that specifies the alpha value of the Dirichlet distribution. |
| | Tab 4-metrics | Estimation method | Two radio buttons to select the estimation algorithm: Gibbs for Gibbs sampling and VEM for variational expectation maximization. |
| | Tab Perplexity | Iteration, Burn-in, and Thin | These parameters control how many Gibbs sampling draws are made. The first burn-in iterations are discarded, and thereafter every thin-th iteration is returned. |
| | Tab Harmonic mean | Iteration, Burn-in, and Keep | If a keep parameter is given, the log-likelihood values of every keep-th iteration are stored. |
| LDA model | Run model | | The input parameters are the number of topics (K), the number of iterations and the alpha parameter of the Dirichlet distribution. After clicking the Run LDA Model button, a spinner is displayed. Once the process is complete, a table is displayed that includes the coherence score, prevalence, and 10 top terms for each topic. Also shown are buttons to download it in csv, xlsx or pdf format, print the file (Print), copy it to the clipboard (Copy), and configure the number of rows (Show rows). |
| | Download tabular results | theta | Clicking the theta button displays a table that includes topic, document and theta. |
| | | phi | Clicking the phi button displays a table that includes topic, term and phi. |
| | | trend | Clicking the trend button displays a table with the results of a simple linear regression (intercept, slope, test statistic, standard error and p-value) where the year is the independent variable and the proportion of the topic in the corresponding year is the response variable. |
| | | Summary LDA | Clicking the Summary LDA button shows three sliders at the top that configure the summary: Select number of labels, Select number top terms, and Select assignments. The latter is a documents-by-topics matrix similar to theta; this works best if the matrix is sparse, with only a few non-zero topics per document. |
| | | Allocation | Clicking the Allocation button shows a table where the user can find the documents organized by topic. The slider located at the top selects the number of documents per topic to be displayed. |
| | Download graphics | trend | Clicking the trend button shows a line graph (one line for each topic) where time trends can be visualized. The graphic is interactive: clicking on a line removes or displays it, as the user decides. |
| | | View wordcloud by topic | Clicking the View wordcloud by topic button displays a wordcloud. The drop-down button selects the topic for which the wordcloud is generated, and the slider selects the number of words to show. |
| | | heatmap | Clicking the heatmap button displays a heatmap. The years are shown on the x-axis, the y-axis shows the topics and the color variation represents the probabilities. |
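
The trend output described in the table can be approximated with a few lines of base R. The sketch below regresses each topic's yearly proportion on the year with `lm` and collects the intercept, slope, standard error, test statistic and p-value. The data frame `trend`, the topic labels `t_1` and `t_2`, the proportions, and the helper `fit_trend` are hypothetical; this is an assumption about how such a table can be built, not LDAShiny's own implementation.

```r
# Hypothetical mean topic proportions per publication year
trend <- data.frame(
  year = rep(2015:2020, times = 2),
  topic = rep(c("t_1", "t_2"), each = 6),
  proportion = c(0.10, 0.13, 0.13, 0.17, 0.18, 0.21,
                 0.30, 0.27, 0.29, 0.24, 0.25, 0.21)
)

# Simple linear regression of proportion on year for one topic
fit_trend <- function(df) {
  fit <- lm(proportion ~ year, data = df)
  est <- summary(fit)$coefficients
  data.frame(
    intercept = est["(Intercept)", "Estimate"],
    slope = est["year", "Estimate"],
    std_error = est["year", "Std. Error"],
    statistic = est["year", "t value"],
    p_value = est["year", "Pr(>|t|)"]
  )
}

# One regression per topic, stacked into a single results table
results <- do.call(rbind, lapply(split(trend, trend$topic), fit_trend))
results
```

A positive slope suggests a topic whose share of the literature grows over time, and a negative slope suggests a declining one; with so few years per topic, the p-values should be read with caution.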

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

De la Hoz-M, J.; Fernández-Gómez, M.J.; Mendes, S. LDAShiny: An R Package for Exploratory Review of Scientific Literature Based on a Bayesian Probabilistic Model and Machine Learning Tools. Mathematics 2021, 9, 1671. https://doi.org/10.3390/math9141671
