Bi-Smoothed Functional Independent Component Analysis for EEG Artifact Removal

Abstract

1. Introduction

2. Smoothed Functional Independent Component Analysis

2.1. Preliminaries

2.2. Functional ICA of a Smoothed Principal Component Expansion

- The FPCA of with respect to ,

- The FPCA of with respect to ,

- The FPCA of X with respect to ,with

3. Basis Expansion Estimation Using a P-Spline Penalty

4. Parameter Tuning

Penalty Parameter Selection

Algorithm 1. Baseline cross-validation

Input:
Output:
for each in :
end for
argmin bcv.
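The loop in Algorithm 1 (evaluate a criterion for each candidate value, then take the argmin) can be sketched as a plain grid search. This is a minimal sketch under stated assumptions, not the authors' implementation: the baseline cross-validation formula is not reproduced in this text, so `criterion` is a hypothetical user-supplied function standing in for it, and `select_penalty`, `toy_criterion`, and the grid values are illustrative names and numbers only.

```python
from typing import Callable, Iterable, Tuple


def select_penalty(grid: Iterable[float],
                   criterion: Callable[[float], float]) -> Tuple[float, float]:
    """Return (best_value, best_score) minimizing `criterion` over `grid`.

    `criterion` is a hypothetical stand-in for the bcv score of Algorithm 1;
    its actual definition is not given here.
    """
    best_lam, best_score = None, float("inf")
    for lam in grid:                     # "for each ... in ..." step
        score = criterion(lam)           # score for this candidate
        if score < best_score:           # keep the running minimizer
            best_lam, best_score = lam, score
    if best_lam is None:
        raise ValueError("grid is empty or no candidate had a finite score")
    return best_lam, best_score          # "argmin bcv" step


# Toy criterion used only to exercise the loop (assumption, not the bcv score).
def toy_criterion(lam: float) -> float:
    return (lam - 4.0) ** 2 + 1.0


grid = [0.5, 1.0, 2.0, 4.0, 8.0]
best_lam, best_score = select_penalty(grid, toy_criterion)
```

In practice the grid is often spaced on a logarithmic scale, since penalty parameters can range over several orders of magnitude.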

5. Simulation Study

Algorithm 2. Functional artifact subtraction

Input:
Output:

6. Estimating Brain Signals from Contaminated Event-Related Potentials

7. Discussion

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| EEG | Electroencephalography |
| FICA | Functional Independent Component Analysis |
| FPCA | Functional Principal Component Analysis |
| ICA | Independent Component Analysis |
| K-L | Karhunen–Loève |
| PCA | Principal Component Analysis |

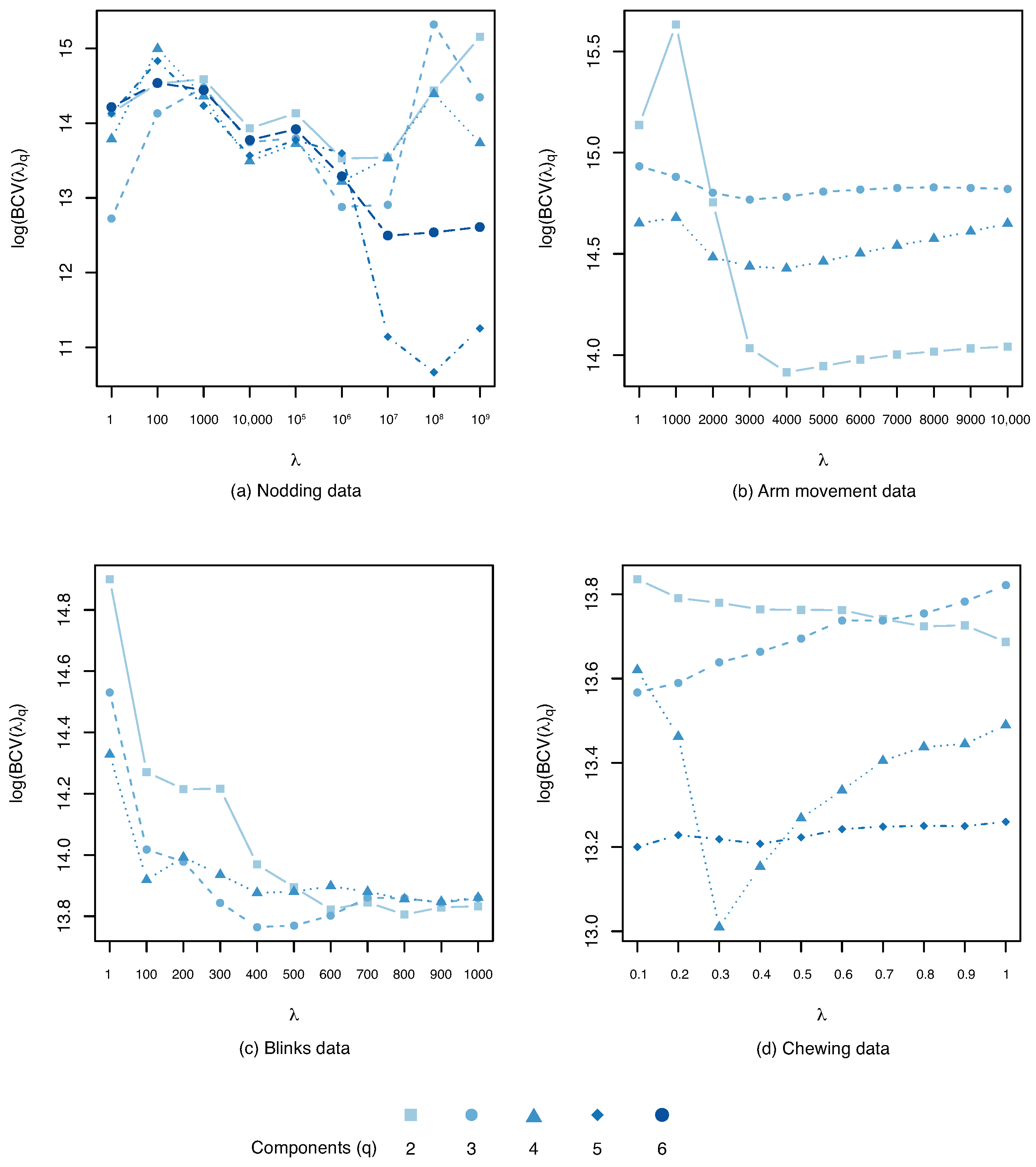

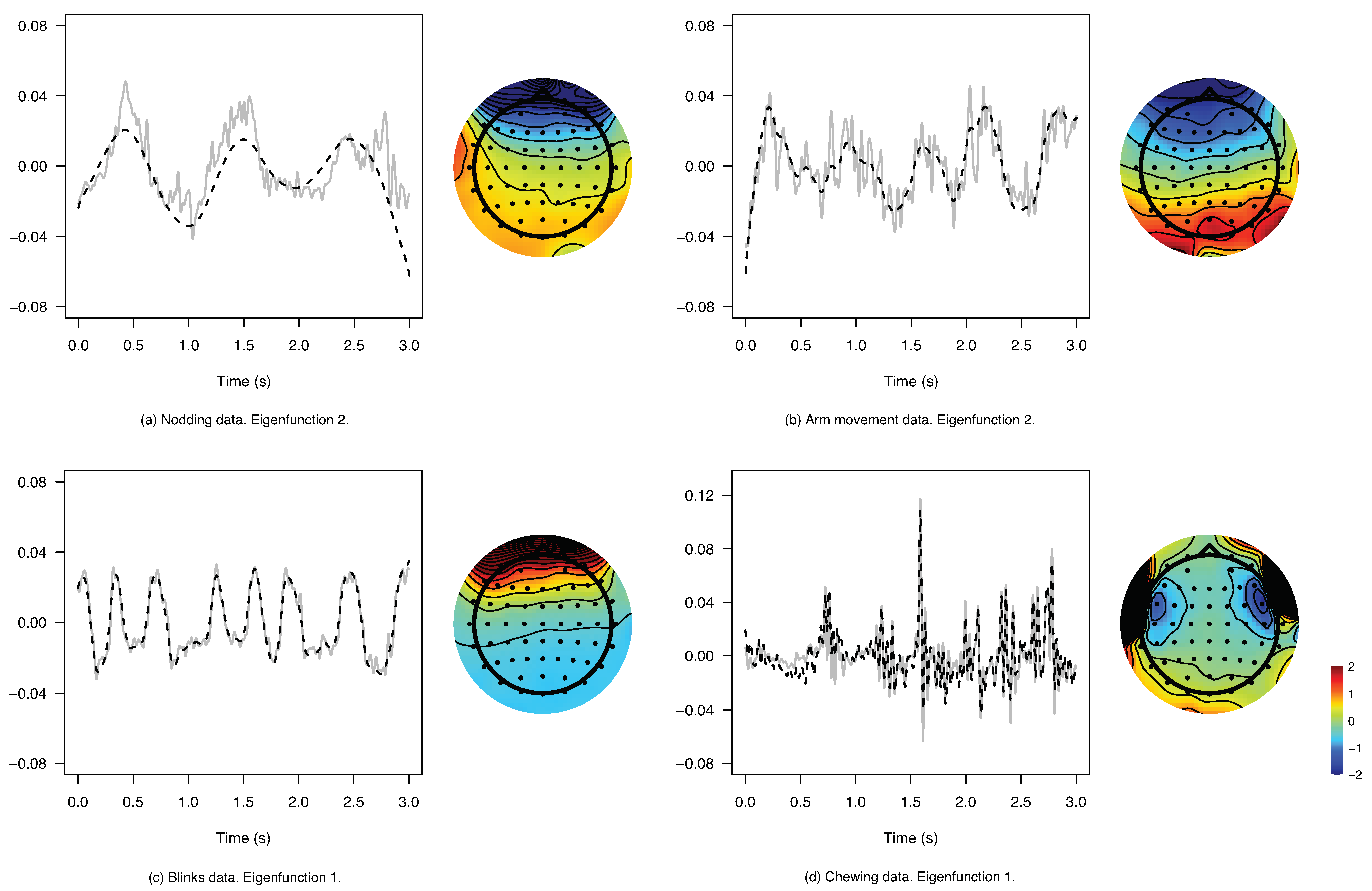

| Trial | q | | | log-bcv | var (%) | var (%) |
|---|---|---|---|---|---|---|
| Nodding | 6 | 5 | | 10.66 | 99.40 | 94.43 |
| Arm mov. | 4 | 2 | 4000 | 13.91 | 75.85 | 62.42 |
| Blinks | 4 | 3 | 400.0 | 13.76 | 97.50 | 93.56 |
| Chewing | 5 | 4 | 0.300 | 13.01 | 68.23 | 68.03 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Vidal, M.; Rosso, M.; Aguilera, A.M. Bi-Smoothed Functional Independent Component Analysis for EEG Artifact Removal. Mathematics 2021, 9, 1243. https://doi.org/10.3390/math9111243