1. Introduction

Global navigation satellite systems (GNSS) are widely used in positioning, navigation, and timing (PNT) services. Precise positioning can reach centimeter-level accuracy, which meets the needs of a wide range of civil and military applications. GNSS is developing rapidly. The systems currently in full operation include the United States of America (USA)’s Global Positioning System (GPS) and Russia’s Global Navigation Satellite System (GLONASS), the European Union plans to complete the Galileo system, and China plans to bring the BeiDou Navigation Satellite System (BDS) into full operation by 2020 [1,2]. The basic principle of GNSS data processing is to solve for the PNT parameters of interest in the observation models from measurements of the distances between GNSS satellites and receivers. However, biases in the signal measurements introduce errors into the models and degrade the accuracy of the solutions. Consequently, bias estimation plays an important role in the quality of the final PNT services [3,4,5]. Reducing the variance of the bias estimates allows the measurements to be recovered more precisely and improves the service quality.

Fast and precise GNSS data processing uses the carrier phase measurements of the receivers. A phase measurement records only the fractional part of the carrier phase plus the accumulated number of whole cycles since signal lock. Therefore, the phase measurements from GNSS receivers are not directly the satellite–receiver distance, and an additional unknown integer ambiguity must be solved before the distance can be recovered. Methods for resolving integer ambiguities have been investigated over the past few decades, and effective approaches such as the ambiguity function method (AFM) and the Least-squares ambiguity Decorrelation Adjustment (LAMBDA) method have been proposed and are widely used in practice [6,7]. LAMBDA-based GNSS data processing usually consists of four steps [6,8]. First, the parameters of interest for PNT services are estimated together with the unknown ambiguities by the least-squares method or Kalman filtering. Second, the integer ambiguities are resolved from the float estimates and their variance–covariance (VC) matrix by decorrelation and search. Third, the searched integer ambiguities are validated to ensure that they are the correct integers. Fourth, the parameters of interest in the measurement models are derived with the validated integer ambiguities. Reliable ambiguity resolution is therefore critical for fast and precise PNT services. These steps work well when the measurement errors are small, but their performance degrades quickly as the errors grow. Errors in the phase measurements corrupt the float ambiguity solutions in the first step and destroy the integer nature of the ambiguities searched in the second step. As a result, the fixed integer vector cannot pass the validation in the third step, and fast and precise GNSS PNT services become unreachable.

GNSS signals pass through the device hardware when they are emitted by the satellite and when they are received by the receiver, which introduces time delays, i.e., biases. Biases that cannot be estimated act as errors in the measurements and, thus, prevent successful ambiguity fixing. The difficulty in phase bias estimation lies in the correlation between the unknown bias and the ambiguity parameters. This correlation makes the equation set rank-deficient, so the parameters cannot be solved by the least-squares method or Kalman filtering in the first step of ambiguity fixing [9,10]. It should be noted that rank-deficient parameterizations in GNSS data processing can be avoided by techniques such as S-system theory [11]. However, those techniques focus on solving for the estimable parameters and cannot help when the inestimable parameters are themselves critical. In this case, accurately estimating either the bias parameter or the ambiguity parameter requires the other, conventionally inestimable, parameter to be accurately known, which is a dilemma for GNSS researchers. Fortunately, Monte Carlo-based approaches have the potential to resolve this dilemma [12,13]. The Monte Carlo method has also been used in the literature for ambiguity resolution without phase error estimation in attitude determination [14] and for code multipath mitigation with only code observations [15]. Those studies follow different ideas in data processing and are not related to phase bias estimation.

The sequential Monte Carlo (SMC) method, or particle filtering, has been used in the state-space approach to time series modeling since the basic procedure was proposed by Gordon [16] (see the descriptions in References [16,17,18]). SMC mainly addresses problems with non-Gaussian and nonlinear models, but it is rarely used in GNSS data processing. SMC can be regarded as Bayesian filtering implemented by the Monte Carlo method [19]. A state-space model, i.e., a hidden Markov model, can be described by two stochastic processes $\{X_t\}_{t \ge 0}$ and $\{Y_t\}_{t \ge 1}$. The latent Markov process has the initial density $X_0 \sim \mu(x_0)$ and the Markov transition density $f(x_t \mid x_{t-1})$, while $Y_t$ satisfies $Y_t \mid X_t = x_t \sim g(y_t \mid x_t)$, which is a conditional marginal density. Bayesian filtering estimates the posterior density

$$p(x_t \mid y_{1:t}) = \frac{g(y_t \mid x_t)\, p(x_t \mid y_{1:t-1})}{p(y_t \mid y_{1:t-1})},$$

where $p(y_t \mid y_{1:t-1})$ is a normalizing constant. The analytical solution for $p(x_t \mid y_{1:t})$ can be derived in some special cases, such as the Kalman filter for linear models with Gaussian noise. Otherwise, no analytical solution is available, and Monte Carlo-based methods such as SMC approximate the solution with random samples. The probability density of the variable is represented by weighted samples $\{x_t^i, w_t^i\}_{i=1}^{N}$, and the estimates can be expressed by $\hat{x}_t = \sum_{i=1}^{N} w_t^i x_t^i$. According to References [20,21], SMC is mainly composed of three steps: the update step, which updates the weights of the particles according to $w_t^i \propto w_{t-1}^i\, g(y_t \mid x_t^i)$; the resampling step, which avoids degeneracy, i.e., most particles having weights close to zero; and the prediction step, which transits the particles to the next epoch.
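The three SMC steps can be illustrated with a minimal bootstrap particle filter. This is a sketch under assumed conditions, not the implementation used in this study: the scalar state follows a Gaussian random walk, the likelihood $g(y_t \mid x_t)$ is Gaussian, the prior is $N(0, 5^2)$, and multinomial resampling is triggered when the effective sample size drops below $N/2$. The function `bootstrap_filter` and its parameters are hypothetical names.

```python
import math
import random


def gaussian_pdf(x, mean, sigma):
    """Density of N(mean, sigma^2) evaluated at x."""
    z = (x - mean) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def bootstrap_filter(observations, n_particles=500, sigma_obs=1.0,
                     sigma_move=0.05, seed=1):
    """Bootstrap SMC for an assumed random-walk state observed with Gaussian noise."""
    rng = random.Random(seed)
    # Initialization: draw particles from an assumed prior N(0, 5^2).
    particles = [rng.gauss(0.0, 5.0) for _ in range(n_particles)]
    weights = [1.0 / n_particles] * n_particles
    estimates = []
    for y in observations:
        # Update: multiply each weight by the likelihood g(y_t | x_t^i), then normalize.
        weights = [w * gaussian_pdf(y, x, sigma_obs)
                   for w, x in zip(weights, particles)]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Estimate: weighted mean of the particles.
        estimates.append(sum(w * x for w, x in zip(weights, particles)))
        # Resampling: multinomial, only when the effective sample size is low.
        ess = 1.0 / sum(w * w for w in weights)
        if ess < n_particles / 2:
            particles = rng.choices(particles, weights=weights, k=n_particles)
            weights = [1.0 / n_particles] * n_particles
        # Prediction: propagate through the transition density f(x_t | x_{t-1}).
        particles = [x + rng.gauss(0.0, sigma_move) for x in particles]
    return estimates


if __name__ == "__main__":
    data_rng = random.Random(7)
    ys = [2.0 + data_rng.gauss(0.0, 1.0) for _ in range(50)]
    print(round(bootstrap_filter(ys)[-1], 2))
```

Run on 50 noisy observations of a constant state of 2.0, the final estimate lands close to 2.0. The $N/2$ resampling threshold is a common heuristic chosen for the sketch, not a value stated in this paper.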

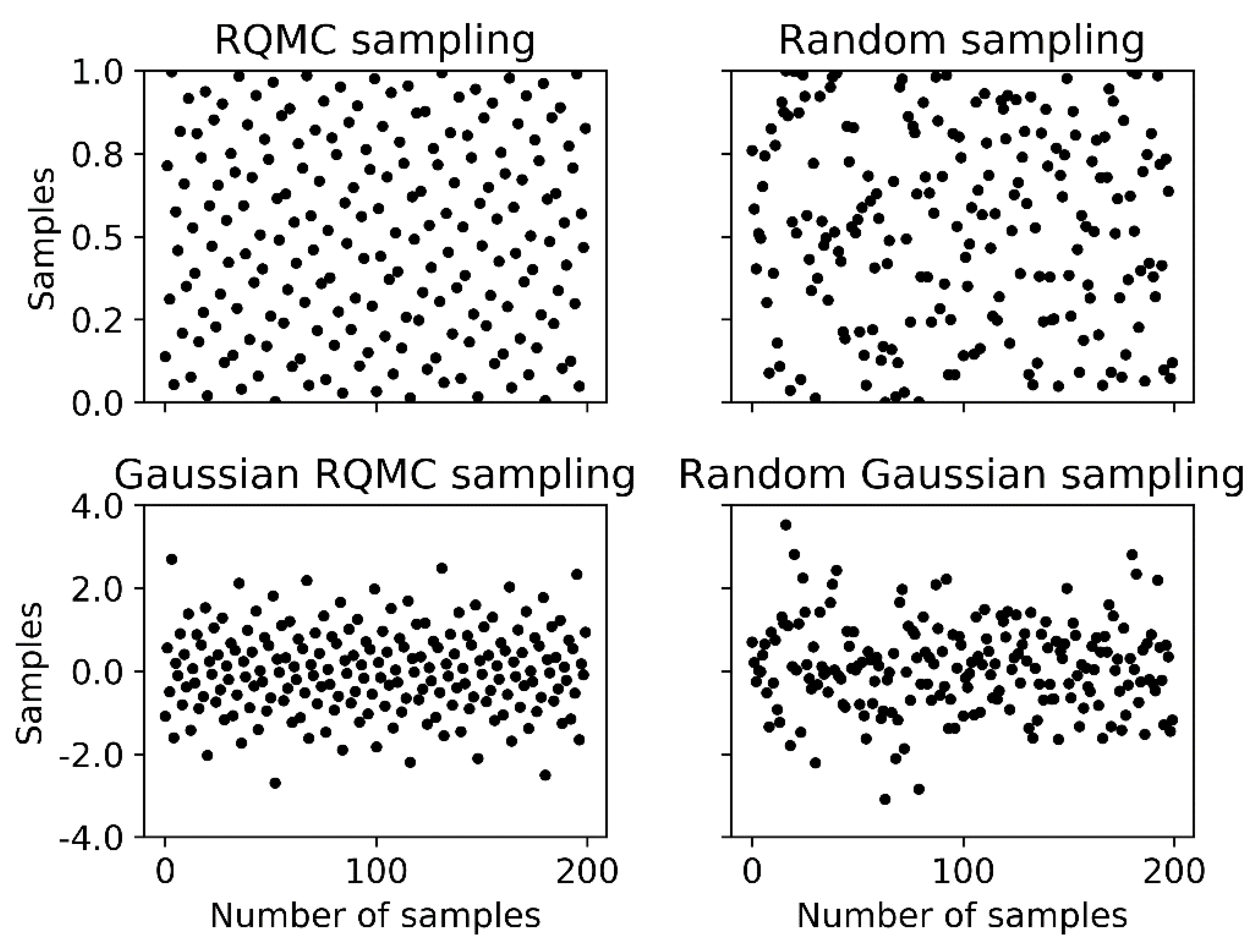

However, the pseudo-random sequences used in the Monte Carlo method can leave gaps and clusters in the sample space. Quasi-Monte Carlo (QMC) replaces the random sequence with a low-discrepancy sequence, which covers the space more evenly, reduces the variance, and performs better [22,23,24,25]. To date, QMC-based variance reduction has not been addressed in GNSS data processing. This study combines the GNSS data processing procedure with sequential QMC (SQMC) methods to obtain precise GNSS phase bias estimates. The paper first gives an overview of the mathematical problem in GNSS bias estimation and then presents a new algorithm that introduces QMC-based variance reduction to estimate GNSS phase biases precisely.
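To illustrate why a low-discrepancy sequence reduces variance, the sketch below repeatedly estimates the integral of $u^2$ over $[0, 1]$ (exactly 1/3) with plain Monte Carlo and with randomized QMC (RQMC). The RQMC estimator uses the base-2 van der Corput sequence with one random shift modulo 1 (a Cranley–Patterson rotation). The integrand, the sample size of 64, and all function names are assumptions chosen for illustration; this is not the Sobol-based setup used later in the paper.

```python
import random
import statistics


def van_der_corput(n, base=2):
    """First n points of the van der Corput low-discrepancy sequence in [0, 1)."""
    points = []
    for i in range(n):
        value, denom, k = 0.0, 1.0, i
        while k > 0:
            k, digit = divmod(k, base)
            denom *= base
            value += digit / denom
        points.append(value)
    return points


def mc_estimate(f, n, rng):
    """Plain Monte Carlo: average of f over n pseudo-random points."""
    return sum(f(rng.random()) for _ in range(n)) / n


def rqmc_estimate(f, qmc_points, rng):
    """Randomized QMC: shift every point by one uniform offset, modulo 1."""
    shift = rng.random()
    return sum(f((u + shift) % 1.0) for u in qmc_points) / len(qmc_points)


def compare(n=64, repeats=200, seed=3):
    """Standard deviations of repeated MC and RQMC estimates of the integral of u^2."""
    def square(u):
        return u * u  # exact integral over [0, 1] is 1/3

    rng = random.Random(seed)
    points = van_der_corput(n)
    mc = [mc_estimate(square, n, rng) for _ in range(repeats)]
    rqmc = [rqmc_estimate(square, points, rng) for _ in range(repeats)]
    return statistics.pstdev(mc), statistics.pstdev(rqmc)


if __name__ == "__main__":
    mc_sd, rqmc_sd = compare()
    print(f"MC std: {mc_sd:.5f}, RQMC std: {rqmc_sd:.5f}")
```

For this smooth integrand, the spread of the RQMC estimates is several times smaller than that of the Monte Carlo estimates at the same number of points, which is the variance reduction the SQMC approach relies on.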

The remainder of this article is structured as follows: Section 2 presents the procedure and mathematical models of GNSS data processing and introduces the difficulties in phase bias estimation; Section 3 gives an overview of QMC theory; Section 4 describes the proposed GNSS phase bias estimation algorithm based on the SQMC method; Section 5 gives the results of phase bias estimation with practical data; and Section 6 draws the key research conclusions.

2. Mathematical Models of GNSS Precise Data Processing and the Dilemma

The code and phase measurements are usually used to derive the parameters of interest for GNSS services. The code measurement is the range obtained by multiplying the travel time of the signal from the satellite to the receiver by the speed of light. The phase measurement is much more precise than the code measurement, but when used as a range it is ambiguous by an unknown integer number of wavelengths, and the ambiguities change every time the receiver relocks onto the satellite signals [7].

In the measurement models, the unknowns include not only the coordinate parameters but also the time delays caused by the atmosphere and the device hardware, as well as the ambiguities of the phase measurements. In relative positioning, the hardware delays can be nonzero and must be considered in multi-GNSS and GLONASS data processing as the inter-system bias (ISB) [9,10,26] and the inter-frequency bias (IFB) [27], respectively. The ISB and IFB of the measurements are correlated with the ambiguities and are the key problems to be solved.

Double difference (DD) measurement models are usually constructed to eliminate the errors common to two undifferenced measurements. For short baselines, the DD measurement models, including the parameters of interest for GNSS PNT services such as the coordinates for positioning, the unknown ambiguities, and the ISB or IFB parameters, can be written in matrix form as

$$\mathbf{v} = \mathbf{A}\mathbf{x} + \mathbf{B}\mathbf{D}\mathbf{b} + \mathbf{C}\mathbf{c} - \mathbf{y} \qquad (1)$$

where $\mathbf{v}$ denotes the vector of observation residuals; $\mathbf{b}$ is composed of the unknown single difference (SD) ambiguities $b_{kl}^{s}$, where $s$ runs over the reference satellite $r$ and the other satellites, $n$ is the number of DD equations, and $k$ and $l$ are the stations; $\mathbf{c}$ includes the ISB and the IFB rate; vector $\mathbf{x}$ contains the unknown station coordinates and the other parameters of interest; $\mathbf{y}$ is the vector of measurements from the receivers; $\mathbf{A}$ is the design matrix of the elements in $\mathbf{x}$; $\mathbf{B}$ is the design matrix with elements of zero and the corresponding carrier wavelength; matrix $\mathbf{D}$ transforms SD ambiguities into DD ambiguities; and $\mathbf{C}$ is the design matrix of $\mathbf{c}$, with elements of zero and the SD of the channel numbers for the phase IFB rate parameter, and elements of zero and one for the phase ISB parameter.

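GNSS data processing, for example for precise positioning, aims to determine the elements in $\mathbf{x}$ precisely. Denoting the weight matrix of the DD measurements [7] by $\mathbf{P}$, the normal equation of the least-squares method is

$$\begin{bmatrix} \mathbf{A}^{T}\mathbf{P}\mathbf{A} & \mathbf{A}^{T}\mathbf{P}\mathbf{B}\mathbf{D} & \mathbf{A}^{T}\mathbf{P}\mathbf{C} \\ \mathbf{D}^{T}\mathbf{B}^{T}\mathbf{P}\mathbf{A} & \mathbf{D}^{T}\mathbf{B}^{T}\mathbf{P}\mathbf{B}\mathbf{D} & \mathbf{D}^{T}\mathbf{B}^{T}\mathbf{P}\mathbf{C} \\ \mathbf{C}^{T}\mathbf{P}\mathbf{A} & \mathbf{C}^{T}\mathbf{P}\mathbf{B}\mathbf{D} & \mathbf{C}^{T}\mathbf{P}\mathbf{C} \end{bmatrix} \begin{bmatrix} \hat{\mathbf{x}} \\ \hat{\mathbf{b}} \\ \hat{\mathbf{c}} \end{bmatrix} = \begin{bmatrix} \mathbf{A}^{T}\mathbf{P}\mathbf{y} \\ \mathbf{D}^{T}\mathbf{B}^{T}\mathbf{P}\mathbf{y} \\ \mathbf{C}^{T}\mathbf{P}\mathbf{y} \end{bmatrix} \qquad (2)$$

For simplification, the notation in Equation (3) is used.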

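If the bias vector $\mathbf{c}$ is precisely known, its contribution can be moved to the observation side of Equation (1), i.e.,

$$\mathbf{v} = \mathbf{A}\mathbf{x} + \mathbf{B}\mathbf{D}\mathbf{b} - (\mathbf{y} - \mathbf{C}\mathbf{c}) \qquad (4)$$

and the estimation of $\mathbf{x}$ can be carried out in the following four steps.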

Step 1: Derive the solution of $\mathbf{x}$ and $\mathbf{b}$ with float SD ambiguities by the least-squares method.

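After the float SD ambiguities in $\hat{\mathbf{b}}$ are estimated, the SD ambiguities and their VC matrix $\mathbf{Q}_{\hat{b}}$ are transformed by differencing into the DD ambiguities $\hat{\mathbf{a}} = \mathbf{D}\hat{\mathbf{b}}$ and the corresponding VC matrix $\mathbf{Q}_{\hat{a}} = \mathbf{D}\mathbf{Q}_{\hat{b}}\mathbf{D}^{T}$.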
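A minimal sketch of this differencing in plain Python, assuming the reference satellite's SD ambiguity is stored first so that each DD ambiguity is $b^{s} - b^{r}$. Under that assumption the differencing matrix (called `d` below) has −1 in its first column and a single +1 per row, and the DD VC matrix follows from variance propagation as $\mathbf{D}\mathbf{Q}\mathbf{D}^{T}$. The helper names and the numbers in the usage block are hypothetical.

```python
def dd_operator(n_sd):
    """Differencing matrix: row s gives b_s - b_0 (reference satellite at index 0)."""
    return [[-1.0 if j == 0 else (1.0 if j == s else 0.0) for j in range(n_sd)]
            for s in range(1, n_sd)]


def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    """Transpose of a matrix stored as a list of rows."""
    return [list(row) for row in zip(*a)]


def sd_to_dd(b_sd, q_sd):
    """Return the DD float ambiguities D b and their VC matrix D Q D^T."""
    d = dd_operator(len(b_sd))
    a_dd = [row[0] for row in matmul(d, [[v] for v in b_sd])]
    q_dd = matmul(matmul(d, q_sd), transpose(d))
    return a_dd, q_dd


if __name__ == "__main__":
    b = [10.2, 13.7, 7.9]  # SD float ambiguities in cycles (illustrative)
    q = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.01]]
    print(sd_to_dd(b, q))
```

Even with uncorrelated SD ambiguities, the DD ambiguities become correlated (off-diagonal 0.01 here) because they share the reference satellite.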

Step 2: Fix the integer ambiguities. The elements of $\hat{\mathbf{a}}$ are intrinsically integers, but the computed values are floats. Resolving the float values to integers can improve the accuracy to the sub-centimeter level with fewer observations [28]. The ambiguity resolution can be expressed by

$$\check{\mathbf{a}} = S(\hat{\mathbf{a}}) \qquad (5)$$

where the function $S$ maps the ambiguities from float values to integers. This process can be implemented by the LAMBDA method [6,8], which efficiently implements the integer least-squares (ILS) procedure [29]. The method solves the ILS problem

$$\check{\mathbf{a}} = \arg\min_{\mathbf{z} \in \mathbb{Z}^{n}} (\hat{\mathbf{a}} - \mathbf{z})^{T} \mathbf{Q}_{\hat{a}}^{-1} (\hat{\mathbf{a}} - \mathbf{z}) \qquad (6)$$

where $\mathbf{z}$ denotes the vector of integer ambiguity candidates. The LAMBDA procedure mainly contains two steps: the reduction step and the search step. The reduction step decorrelates the elements in $\mathbf{Q}_{\hat{a}}$ and orders the diagonal entries by Z-transformations to shrink the search space. The search step then finds the optimal ambiguity candidates within a hyper-ellipsoidal space.
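To make the ILS criterion of Equation (6) concrete, the sketch below minimizes the quadratic form by brute force. It is deliberately not LAMBDA: there is no Z-transformation and no ellipsoidal search, only enumeration of every integer vector within an assumed radius of 2 cycles around the rounded float solution, which is feasible for a handful of ambiguities only. The helper names and the example VC matrix are hypothetical.

```python
import itertools


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [list(row) + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def ils_distance(a_float, z, q_a):
    """Squared distance (a - z)^T Q_a^{-1} (a - z)."""
    r = [a - zi for a, zi in zip(a_float, z)]
    return sum(ri * si for ri, si in zip(r, solve(q_a, r)))


def ils_search(a_float, q_a, radius=2):
    """Enumerate integer vectors near the rounded float solution, best first."""
    centre = [round(a) for a in a_float]
    offsets = range(-radius, radius + 1)
    candidates = [tuple(c + o for c, o in zip(centre, delta))
                  for delta in itertools.product(offsets, repeat=len(a_float))]
    return sorted(candidates, key=lambda z: ils_distance(a_float, z, q_a))


if __name__ == "__main__":
    a_hat = [3.1, -1.8]                   # float DD ambiguities in cycles
    q_hat = [[0.09, 0.08], [0.08, 0.09]]  # strongly correlated VC matrix
    best, second = ils_search(a_hat, q_hat)[:2]
    print(best, second)
```

With this correlated VC matrix, the second-best candidate is (4, −1) rather than a single-component change such as (2, −2), which illustrates why the search must respect the metric of $\mathbf{Q}_{\hat{a}}$ instead of rounding each component independently.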

Step 3: Validate the integer ambiguities. The obtained ambiguity combination $\check{\mathbf{a}}$ is not guaranteed to be the correct integer ambiguity vector and must be validated. The R-ratio test [29,30] can be employed. This test checks that the best ambiguity combination, i.e., the optimal solution of Equation (6), is statistically better than the second-best one. The ratio value is calculated by

$$R = \frac{(\hat{\mathbf{a}} - \check{\mathbf{a}}')^{T} \mathbf{Q}_{\hat{a}}^{-1} (\hat{\mathbf{a}} - \check{\mathbf{a}}')}{(\hat{\mathbf{a}} - \check{\mathbf{a}})^{T} \mathbf{Q}_{\hat{a}}^{-1} (\hat{\mathbf{a}} - \check{\mathbf{a}})} \qquad (7)$$

where $\check{\mathbf{a}}'$ is the second-best ambiguity vector according to Equation (6). The integer ambiguity vector $\check{\mathbf{a}}$ is accepted if the ratio value is equal to or larger than a threshold and rejected if it is smaller than the threshold.
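The ratio of Equation (7) can be sketched as follows. The quadratic forms are evaluated through a Cholesky factor of the VC matrix instead of an explicit inverse. The acceptance threshold of 3.0 is an assumed example value (the threshold used in this paper is not given here), and the function names are hypothetical.

```python
import math


def cholesky(q):
    """Lower-triangular L with q = L L^T (q symmetric positive definite)."""
    n = len(q)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = q[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low


def sq_norm(residual, q):
    """r^T Q^{-1} r computed as ||L^{-1} r||^2 by forward substitution."""
    low = cholesky(q)
    y = []
    for i, ri in enumerate(residual):
        y.append((ri - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return sum(v * v for v in y)


def ratio_test(a_float, best, second, q_a, threshold=3.0):
    """R-ratio of the second-best over the best candidate, plus the accept decision."""
    d_best = sq_norm([a - z for a, z in zip(a_float, best)], q_a)
    d_second = sq_norm([a - z for a, z in zip(a_float, second)], q_a)
    ratio = d_second / d_best if d_best > 0 else math.inf
    return ratio, ratio >= threshold


if __name__ == "__main__":
    q_hat = [[0.09, 0.08], [0.08, 0.09]]
    print(ratio_test([3.1, -1.8], (3, -2), (4, -1), q_hat))
```

The best and second-best candidates are passed in, so any search (LAMBDA or otherwise) can supply them.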

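Step 4: Derive the fixed baseline solution. After the integer ambiguity vector passes the validation test, $\check{\mathbf{a}}$ is used to adjust the float solution of the other parameters, leading to the corresponding fixed solution. This process can be expressed by

$$\check{\mathbf{x}} = \hat{\mathbf{x}} - \mathbf{Q}_{\hat{x}\hat{a}} \mathbf{Q}_{\hat{a}}^{-1} (\hat{\mathbf{a}} - \check{\mathbf{a}}), \qquad \mathbf{Q}_{\check{x}} = \mathbf{Q}_{\hat{x}} - \mathbf{Q}_{\hat{x}\hat{a}} \mathbf{Q}_{\hat{a}}^{-1} \mathbf{Q}_{\hat{a}\hat{x}} \qquad (8)$$

where $\check{\mathbf{x}}$ denotes the fixed solution of $\mathbf{x}$; $\mathbf{Q}_{\check{x}}$ is the VC matrix of the fixed solution $\check{\mathbf{x}}$; $\mathbf{Q}_{\hat{x}}$ is the VC matrix of $\hat{\mathbf{x}}$; $\mathbf{Q}_{\hat{x}\hat{a}}$ is the VC matrix of $\hat{\mathbf{x}}$ and $\hat{\mathbf{a}}$; $\hat{\mathbf{a}}$ refers to the float ambiguity solution; and $\hat{\mathbf{x}}$ is the float solution of $\mathbf{x}$.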
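Step 4 can be sketched in plain Python for small dense matrices. The numbers in the usage block are illustrative assumptions (one float parameter and two DD ambiguities with a made-up but positive-definite joint VC matrix), and `fixed_solution` is a hypothetical helper rather than part of any GNSS library.

```python
def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [list(row) + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fixed_solution(x_float, a_float, a_fixed, q_x, q_xa, q_a):
    """Condition the float solution on the fixed integer ambiguities.

    x_fixed = x_float - Q_xa Q_a^{-1} (a_float - a_fixed)
    Q_fixed = Q_x - Q_xa Q_a^{-1} Q_ax, with Q_ax the transpose of Q_xa.
    """
    g = solve(q_a, [af - ai for af, ai in zip(a_float, a_fixed)])
    x_fixed = [x - sum(qk * gk for qk, gk in zip(row, g))
               for x, row in zip(x_float, q_xa)]
    # Column j of Q_a^{-1} Q_ax equals Q_a^{-1} applied to row j of Q_xa.
    w = [solve(q_a, list(row)) for row in q_xa]
    n, m = len(x_float), len(a_float)
    q_fixed = [[q_x[i][j] - sum(q_xa[i][k] * w[j][k] for k in range(m))
                for j in range(n)] for i in range(n)]
    return x_fixed, q_fixed


if __name__ == "__main__":
    xf, qf = fixed_solution([1.0], [3.1, -1.8], [3, -2], [[0.04]],
                            [[0.05, 0.04]], [[0.09, 0.08], [0.08, 0.09]])
    print(xf, qf)
```

In this example the variance of the parameter drops from 0.04 to about 0.011 after fixing, which is the precision gain that motivates ambiguity resolution.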

The fixed solution can reach sub-centimeter accuracy. If the errors in the observation models are removed, successful ambiguity fixing requires observations from only a few epochs, or even a single epoch.

From Equations (5) and (6), successful ambiguity resolution requires accurate float ambiguity estimates and the corresponding VC matrix. If the bias in $\mathbf{c}$ is unknown, it cannot be separated by Equation (4) but remains in the ambiguity parameters. As a result, the estimated ambiguity parameters absorb the bias, and the ambiguity resolution fails. When the bias and the ambiguities are parameterized simultaneously, the bias parameter is correlated with the ambiguity parameters, so it is impossible to separate the bias values and obtain precise float ambiguity estimates. Mathematically, the normal equation set (Equation (2)) is rank-deficient and cannot be solved.
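The rank deficiency can be checked numerically on a toy design matrix. The sketch makes simplifying assumptions that are not the paper's model: a single wavelength (set to 1), no geometry columns, DD phase rows for one satellite of the reference satellite's system and two satellites of a second system over three epochs, and one ISB parameter that enters only the inter-system rows. Because the ISB column equals the sum of the two inter-system ambiguity columns, adding it leaves the rank unchanged, so the corresponding normal matrix is singular. `matrix_rank` and `toy_design` are hypothetical helpers.

```python
from fractions import Fraction


def matrix_rank(rows):
    """Exact rank by Gauss-Jordan elimination over the rationals."""
    m = [[Fraction(v) for v in row] for row in rows]
    rank = 0
    for col in range(len(m[0])):
        pivot = next((r for r in range(rank, len(m)) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(len(m)):
            if r != rank and m[r][col] != 0:
                factor = m[r][col] / m[rank][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[rank])]
        rank += 1
    return rank


def toy_design(n_epochs=3):
    """Rows [amb_s1, amb_g1, amb_g2, isb] of toy DD phase equations.

    s1 shares the system of the reference satellite; g1 and g2 belong to a
    second system, so the ISB enters their rows with coefficient 1.
    """
    rows = []
    for _ in range(n_epochs):
        rows += [[1, 0, 0, 0], [0, 1, 0, 1], [0, 0, 1, 1]]
    return rows


if __name__ == "__main__":
    design = toy_design()
    print(matrix_rank([row[:3] for row in design]), "of 3 columns (ambiguities only)")
    print(matrix_rank(design), "of 4 columns (ambiguities + ISB)")
```

Adding more epochs does not help, because every epoch repeats the same linear dependency between the bias column and the ambiguity columns.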

4. SQMC-Based Algorithm for Phase Bias Estimation

The ratio for integer ambiguity validation in Step 3 of Section 2 reflects the closeness of the float ambiguity vector to the integer ambiguity vector and, thus, indicates the quality of ambiguity fixing. If the ambiguities are fixed to the correct integers with high probability, the phase bias can be precisely derived. Although the search and validation steps cannot be linearized to satisfy the conditions for linear least-squares methods, Monte Carlo-based methods can be used to develop algorithms for precise phase bias estimation.

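The ratio value used in the ambiguity validation reflects the quality of integer ambiguity fixing. If $\check{\mathbf{a}}$ is the correct ambiguity vector and $\mathbf{c}_t$ represents the phase bias parameters at epoch $t$, we can assume that the conditional probability density $p(\mathbf{y}_t \mid \mathbf{c}_t)$ is proportional to the ratio value, $p(\mathbf{y}_t \mid \mathbf{c}_t) \propto R_t(\mathbf{c}_t)$, and simply let

$$p(\mathbf{y}_t \mid \mathbf{c}_t) = R_t(\mathbf{c}_t).$$

The PDF $p(\mathbf{y}_t \mid \mathbf{c}_t)$ is then used as the likelihood function in the Monte Carlo-based estimation method to update the weights, which is expressed as

$$w_t^i \propto w_{t-1}^i\, p(\mathbf{y}_t \mid \mathbf{c}_t^i) = w_{t-1}^i\, R_t(\mathbf{c}_t^i).$$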

This likelihood function is designed for estimating phase biases that affect the ratio values in ambiguity fixing.

The following procedure is used to calculate $R_t(\mathbf{c}_t^i)$ at epoch $t$ for each element $\mathbf{c}_t^i$ in the sample set $\{\mathbf{c}_t^i\}_{i=1}^{N}$: (a) $\mathbf{c}_t^i$ is used as the known bias values to calibrate the measurement model by Equation (4), and the equation set is solved to obtain the float SD ambiguities and the corresponding VC matrix; (b) the DD ambiguities and their VC matrix are calculated, and the integer ambiguities are fixed with the LAMBDA method; (c) $R_t(\mathbf{c}_t^i)$ is calculated with Equation (7).
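The ratio-based likelihood and the weight update can be illustrated with a toy model that stands in for procedure (a) to (c). In this sketch, which is an assumption for illustration only, the float DD ambiguities in cycles are $y_j - k_j c$ for a scalar bias candidate $c$ and integer channel-number differences $k_j$, and the VC matrix is a scaled identity. ILS then reduces to rounding, and the second-best candidate differs from the best in exactly one component. Real data would require the least-squares solution and the LAMBDA search instead; `toy_ratio` and `update_weights` are hypothetical names.

```python
import random


def toy_ratio(c, y, k):
    """R-ratio for bias candidate c in a toy model with Q_a = sigma^2 * I (assumed).

    The float DD ambiguities are a_j = y_j - k_j * c (cycles). With a scaled
    identity VC matrix, ILS reduces to rounding, and the second-best candidate
    moves the single least certain component to its other neighbouring
    integer, so sigma cancels in the ratio.
    """
    frac = [(yj - kj * c) - round(yj - kj * c) for yj, kj in zip(y, k)]
    d_best = sum(f * f for f in frac)
    d_second = d_best + min(1.0 - 2.0 * abs(f) for f in frac)
    return d_second / max(d_best, 1e-12)


def update_weights(weights, particles, y, k):
    """w_t^i proportional to w_{t-1}^i * R_t(c^i), normalized to sum to 1."""
    raw = [w * toy_ratio(c, y, k) for w, c in zip(weights, particles)]
    total = sum(raw)
    return [r / total for r in raw]


if __name__ == "__main__":
    rng = random.Random(11)
    k = [-5, -2, 3, 6, 1]                  # SD channel-number differences (assumed)
    c_true = 0.12                          # toy bias in cycles per frequency number
    z = [rng.randint(-20, 20) for _ in k]  # true DD integer ambiguities
    particles = [-0.5 + (i + 0.5) / 200 for i in range(200)]
    weights = [1.0 / 200] * 200
    for _ in range(3):                     # three epochs with a constant bias
        y = [zj + kj * c_true + rng.gauss(0.0, 0.01) for zj, kj in zip(z, k)]
        weights = update_weights(weights, particles, y, k)
    print(sum(w * c for w, c in zip(weights, particles)))
```

Multiplying the ratios over several epochs concentrates the weights on candidates near the true bias, because away from it the fractional parts of the float ambiguities are spread out and the ratio stays small.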

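Moreover, the phase bias can be regarded as constant between epochs, and the transition function that transports the samples from epoch $t-1$ to epoch $t$ is

$$\mathbf{c}_t^i = \mathbf{c}_{t-1}^i + \boldsymbol{\varepsilon}_t^i \qquad (22)$$

where $\boldsymbol{\varepsilon}_t^i$ is normally distributed noise with each element $\varepsilon \sim N(0, \sigma^2)$.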
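The transition with QMC-generated noise can be sketched by mapping randomly shifted van der Corput points through the inverse normal CDF (`statistics.NormalDist.inv_cdf` from the Python standard library). Using one one-dimensional sequence for a scalar bias, and assigning its points to particles in index order, are simplifying assumptions of this sketch; the function names are hypothetical.

```python
import random
from statistics import NormalDist


def van_der_corput(n, base=2):
    """First n points of the van der Corput low-discrepancy sequence in [0, 1)."""
    points = []
    for i in range(n):
        value, denom, k = 0.0, 1.0, i
        while k > 0:
            k, digit = divmod(k, base)
            denom *= base
            value += digit / denom
        points.append(value)
    return points


def predict(particles, sigma, rng):
    """c_t^i = c_{t-1}^i + eps^i with eps^i ~ N(0, sigma^2) from shifted QMC points."""
    shift = rng.random()  # one random shift turns QMC into randomized QMC
    normal = NormalDist(0.0, sigma)
    moved = []
    for c, u in zip(particles, van_der_corput(len(particles))):
        p = min(max((u + shift) % 1.0, 1e-12), 1.0 - 1e-12)  # inv_cdf needs 0 < p < 1
        moved.append(c + normal.inv_cdf(p))
    return moved


if __name__ == "__main__":
    noise_sigma = 0.001  # e.g. 1 mm/FN expressed in m/FN
    out = predict([0.0] * 256, noise_sigma, random.Random(5))
    print(min(out), max(out))
```

Because the shifted points are spread evenly over (0, 1), the sample mean and spread of the injected noise match $N(0, \sigma^2)$ much more closely than the same number of pseudo-random draws would.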

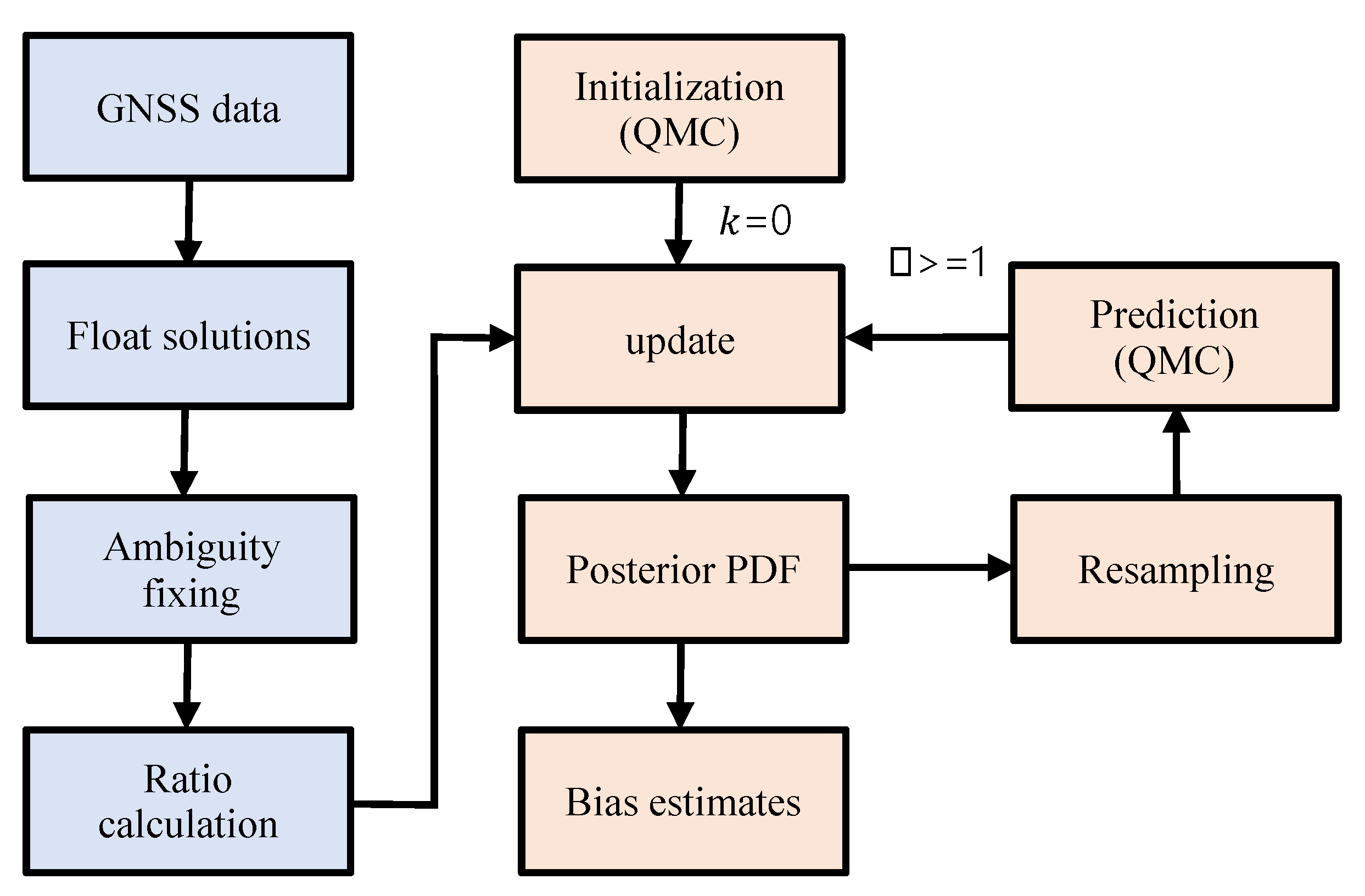

The flowchart of the SQMC procedure for phase bias estimation is plotted in Figure 2, and the corresponding algorithm is presented as Algorithm 3.

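| Step | Algorithm 3: GNSS phase bias estimation (SQMC) |
|---|---|
| 1. Initialization | Generate QMC or RQMC points $\mathbf{u}^i \in [0,1)^d$, $i = 1, \ldots, N$; generate $\mathbf{c}_0^i$ from $\mathbf{u}^i$ according to $p(\mathbf{c}_0)$, where $p(\mathbf{c}_0)$ is the prior density of $\mathbf{c}$. |
| 2. Update | Update the weights according to the likelihood function of the measurements, $w_t^i \propto w_{t-1}^i\, p(\mathbf{y}_t \mid \mathbf{c}_t^i)$. Normalize the weights by $\tilde{w}_t^i = w_t^i / \sum_{j=1}^{N} w_t^j$. Calculate the estimated value and variance by $\hat{\mathbf{c}}_t = \sum_{i} \tilde{w}_t^i \mathbf{c}_t^i$ and $\hat{\boldsymbol{\Sigma}}_t = \sum_{i} \tilde{w}_t^i (\mathbf{c}_t^i - \hat{\mathbf{c}}_t)(\mathbf{c}_t^i - \hat{\mathbf{c}}_t)^{T}$, respectively. |
| 3. Resample | Resample if $N_{\mathrm{eff}} < N_{\mathrm{th}}$, where $N_{\mathrm{eff}} = 1 / \sum_{i} (\tilde{w}_t^i)^2$ is the effective number of samples and $N_{\mathrm{th}}$ is a threshold, which can be set to the value of …. |
| 4. Prediction | Generate QMC or RQMC points $\mathbf{u}^i \in [0,1)^d$, $i = 1, \ldots, N$; draw new samples $\mathbf{c}_{t+1}^i = \mathbf{c}_t^i + \boldsymbol{\varepsilon}^i$ with noise $\boldsymbol{\varepsilon}^i \sim N(\mathbf{0}, \sigma^2 \mathbf{I})$ generated from $\mathbf{u}^i$. |
| 5. | Repeat steps 2 to 4 for the following epochs. |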
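The update, estimation, and resampling rows of Algorithm 3 can be sketched for a scalar bias as follows. The table does not name a resampling scheme, so systematic resampling driven by a single uniform number is assumed here (that number could come from an RQMC point). The threshold is passed in by the caller, and all function names are hypothetical.

```python
import random


def weighted_moments(particles, weights):
    """Normalized weights plus weighted mean and variance of a scalar bias."""
    total = sum(weights)
    w = [wi / total for wi in weights]
    mean = sum(wi * c for wi, c in zip(w, particles))
    var = sum(wi * (c - mean) ** 2 for wi, c in zip(w, particles))
    return w, mean, var


def effective_sample_size(w):
    """N_eff = 1 / sum(w_i^2) for normalized weights."""
    return 1.0 / sum(wi * wi for wi in w)


def systematic_resample(particles, w, u):
    """Systematic resampling with N evenly spaced pointers (m + u) / N, u in [0, 1)."""
    n = len(particles)
    out, cumulative, i = [], w[0], 0
    for m in range(n):
        target = (m + u) / n
        while target > cumulative and i < n - 1:
            i += 1
            cumulative += w[i]
        out.append(particles[i])
    return out


def update_estimate_resample(particles, weights, n_threshold, rng):
    """Normalize, estimate, and resample if N_eff < N_th (steps 2 and 3)."""
    w, mean, var = weighted_moments(particles, weights)
    if effective_sample_size(w) < n_threshold:
        particles = systematic_resample(particles, w, rng.random())
        w = [1.0 / len(particles)] * len(particles)
    return particles, w, mean, var


if __name__ == "__main__":
    parts, w, mean, var = update_estimate_resample(
        [0.0, 1.0, 2.0, 3.0], [0.1, 0.1, 0.7, 0.1], 2.0, random.Random(0))
    print(parts, mean, var)
```

The estimate and variance are computed before resampling, so they use the full weighted sample rather than the duplicated particles.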

Algorithm 3 combines the QMC method with the GNSS ambiguity fixing procedure to estimate the GNSS phase bias, using the low-discrepancy sequences of QMC for variance reduction. Section 5 shows applications of the approach to practical GNSS data.

5. Experiments with Practical GNSS Data

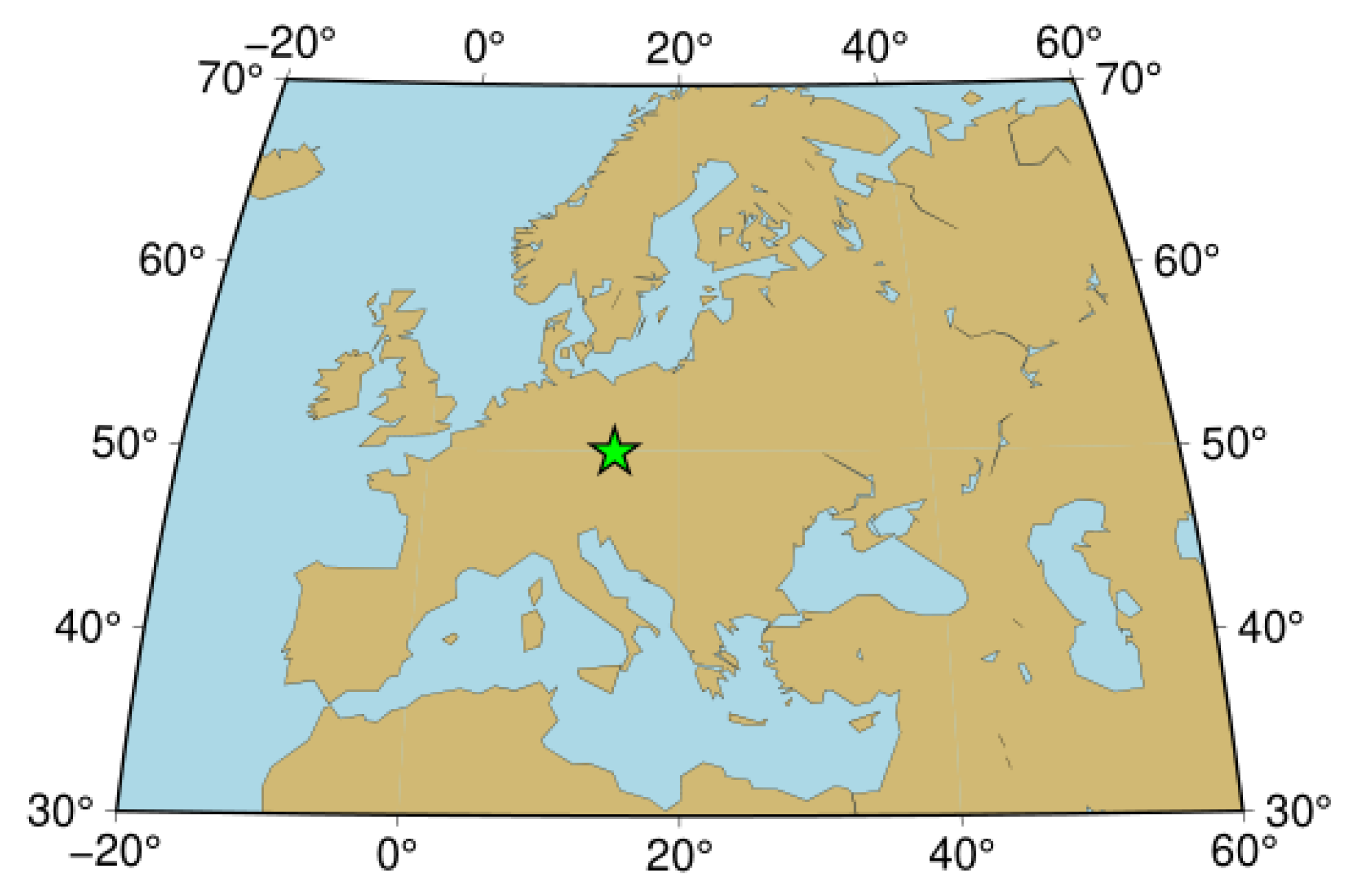

The GLONASS phase IFB estimation of baseline GOP6_GOP7 in networks of international GNSS service (IGS) (

ftp://ftp.cddis.eosdis.nasa.gov/pub/gnss) was taken as an example to demonstrate the variance reduction by QMC. The baseline was in Europe with the location in

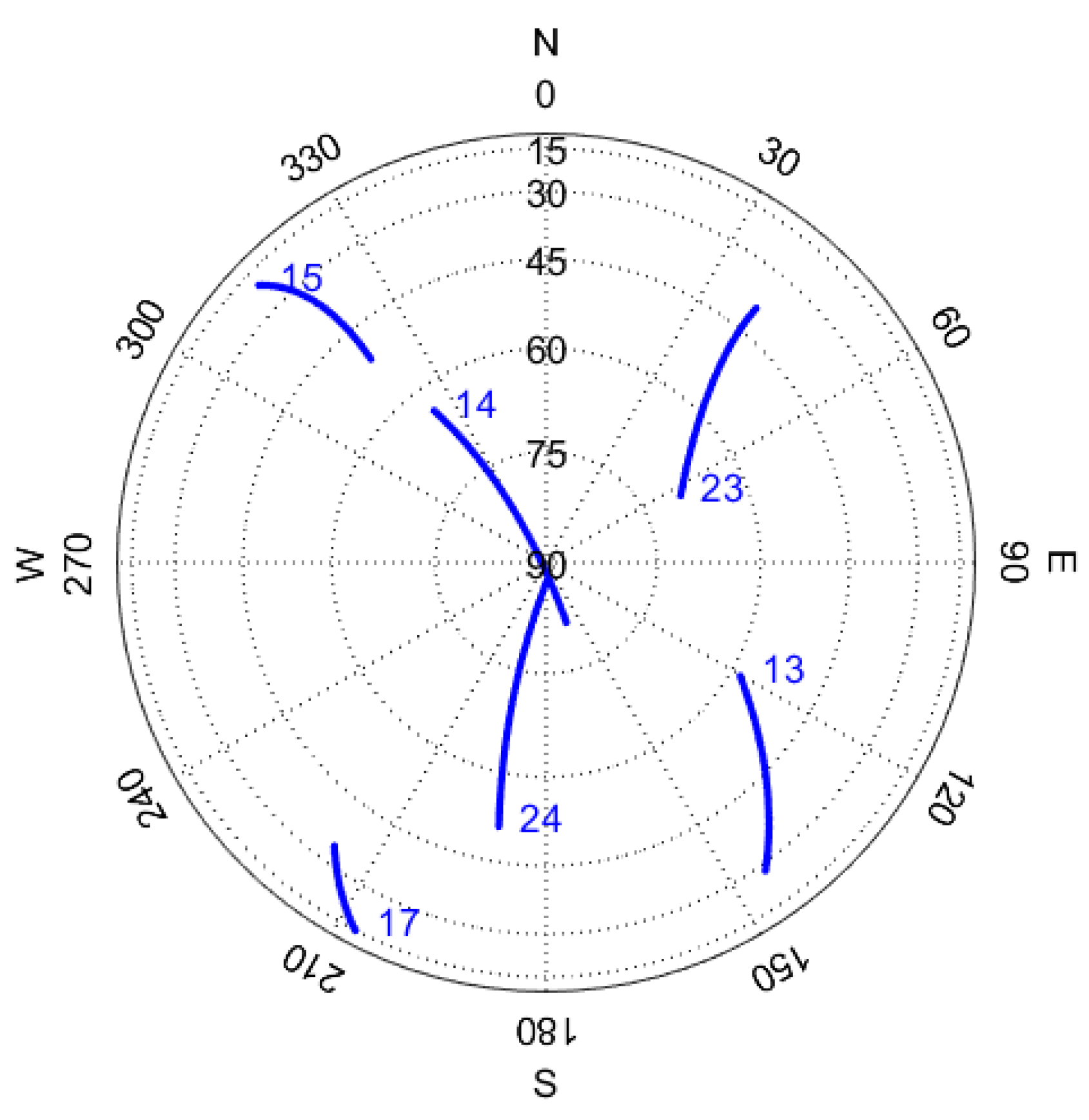

Figure 3, and the two GNSS stations were equipped with LEICA GRX1200+GNSS 9.20 and TRIMBLE NETR9 5.01 receivers, respectively. The measurement data were collected at GPS time (GPST) 9:00–10:00 a.m. on day of year (DOY) 180 of 2018 with an epoch interval of 30 seconds. Six GLONASS satellites were observed during the time span, and the satellite slot numbers are shown at the beginning of the satellite trajectories in

Figure 4. The baseline had a post-processed GLONASS phase IFB around −29.5 mm/frequency number (FN) which can be regarded as the true values of both L1 and L2 frequencies.

Both the code and carrier phase measurements on frequencies L1 and L2 were used to form Equation (1) in the experiment. Only one IFB parameter was included because the IFB values of L1 and L2 were regarded as identical. Algorithm 3 for SQMC-based phase bias estimation was implemented, and the IFB estimate was derived at each epoch. For comparison, the IFB estimates were also calculated with the SMC-based approach. Because the SQMC-based procedure uses RQMC sequences and the SMC-based procedure uses pseudo-random sequences, each repeated estimation run produces different estimates. The standard deviation (STD) of the estimates was calculated to evaluate the performance.

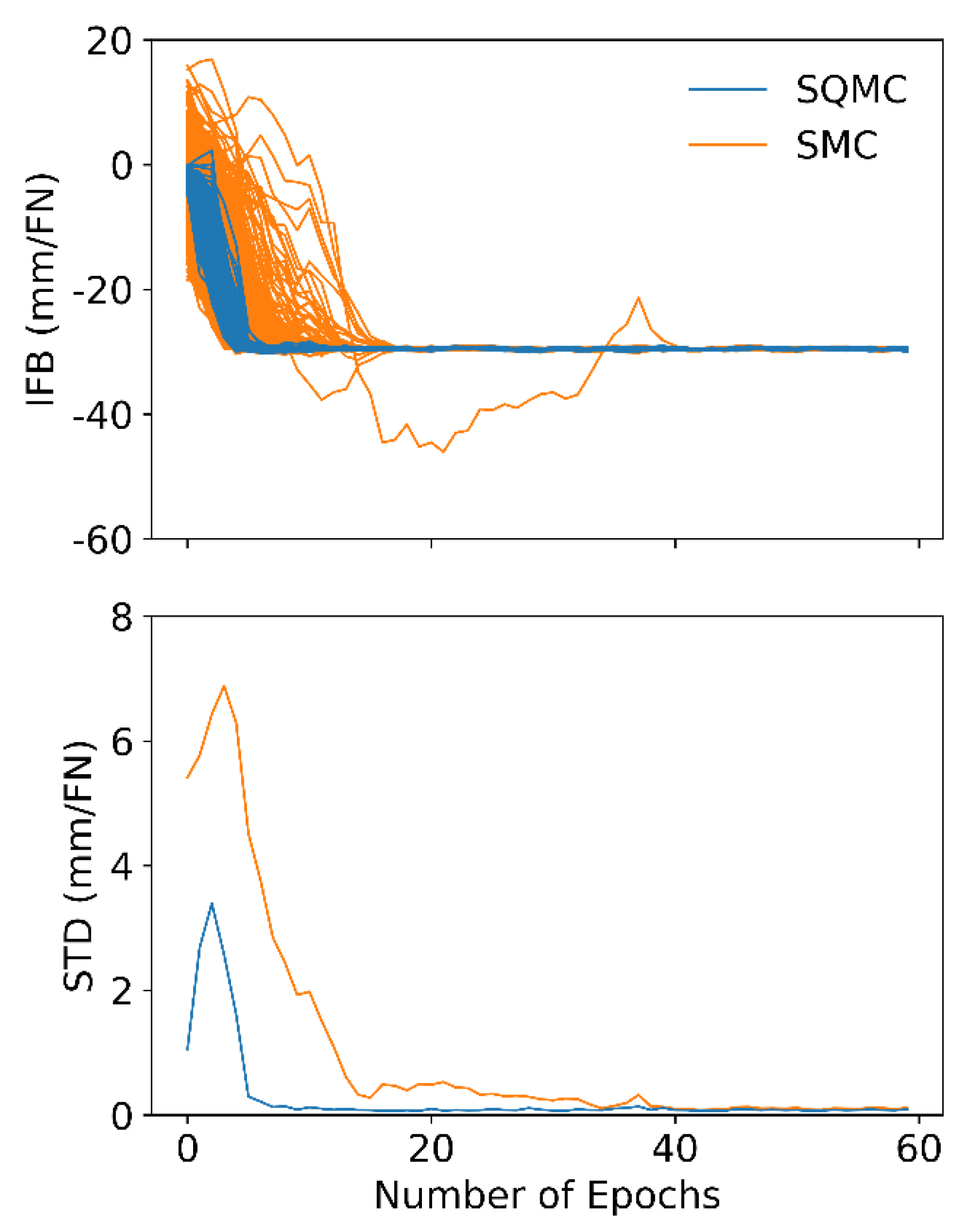

First, the IFB was estimated 1000 times with the SMC-based approach. The sigma of the transition noise in Equation (22) was set to 1 mm/FN, and the number of samples was fixed at 100. The 1000 IFB estimates for the first 60 epochs are drawn in Figure 5 as yellow lines. Afterward, the SQMC-based approach with a Sobol sequence was applied to the IFB estimation, also with a transition-noise sigma of 1 mm/FN and 100 samples. The IFB was again estimated 1000 times, and the results for the first 60 epochs are plotted in Figure 5 as blue lines. The STDs of the 1000 estimates of the SMC and SQMC approaches were calculated and are presented in Figure 5 as a yellow line and a blue line, respectively.

Both approaches successfully estimated the IFB values. After convergence, the estimates of the two algorithms were very similar, e.g., after the 40th epoch. However, the estimates of the SQMC-based algorithm converged faster than those of the SMC-based approach, and the corresponding STD was much lower at the beginning. In the worst case among the 1000 runs, the results converged, i.e., came within 1.5 mm/FN of the true value, at the seventh epoch for SQMC and at the 40th epoch for SMC.

The variance of the SQMC-based estimates was also analyzed for different numbers of samples and different transition noise levels.

First, the phase IFB was estimated 100 times with the SMC-based and the SQMC-based algorithms, respectively, with the number of particles varying from 30 to 200. The sigma of the transition noise was fixed at 1 mm/FN for each estimation. The STDs of the 100 estimates at epochs 1, 2, 5, and 10 are presented in Figure 6, which shows that the STD decreased for both SMC and SQMC as the number of particles increased. The STD of SQMC was much smaller than that of SMC. This indicates that the SQMC-based algorithm can achieve estimates with a smaller STD than the SMC-based algorithm even with fewer samples. This is highly relevant in GNSS data processing, because the most time-consuming part is the ambiguity fixing procedure in Step 2 of Section 2, which is run for each sample; fewer samples therefore mean a lighter computational load.

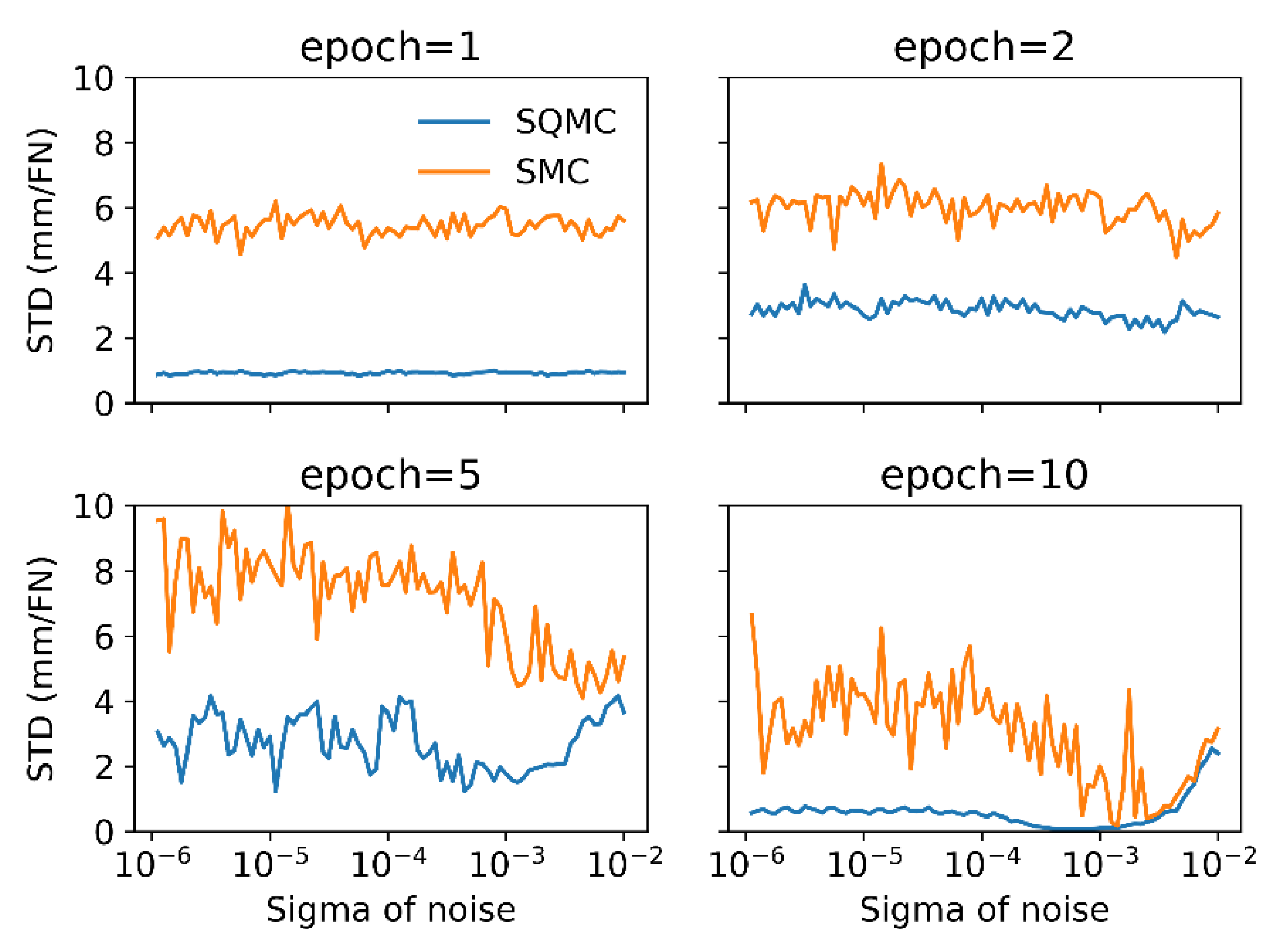

Then, the effects of the transition noise were evaluated. The phase IFB was estimated another 100 times with the sigma of the transition noise ranging from … to … m/FN and the number of samples fixed at 100. The STDs of the 100 estimates were calculated at each epoch, and the values at epochs 1, 2, 5, and 10 are plotted in Figure 7. The STDs of the SQMC-based algorithm were much smaller than those of the SMC-based algorithm at all four epochs. At epoch 10, the STD curve showed a peak near … m/FN, where the STDs were larger than those for nearby sigma values. This indicates that the noise level in the transition model needs to be set carefully. Too high a transition noise increases the STDs; however, if the sigma is too small, the samples cannot evolve into the proper region and the prior density cannot be well represented by the samples, which also leads to large STD values.