For location-scale families, the probability distributions of all considered test statistics do not depend on the location and scale parameters, so we generated samples of various sizes n containing n − r observations with the regular c.d.f. and r observations from various alternative distributions concentrated in the outlier region. We call such observations “contaminant outliers”, or c-outliers for short. As mentioned above, outliers that are not c-outliers, i.e., outliers among the regular observations with the regular c.d.f., are very rare.
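The invariance argument can be checked numerically. The sketch below uses, as an assumed example, the right-sided maximum studentized deviation max (x_i − x̄)/S, a statistic of the kind used in Rosner-type procedures; the helper name is hypothetical. Transforming a sample by x ↦ μ + σx with σ > 0 leaves the statistic unchanged, which is why null distributions can be simulated from standard normal samples.

```python
import math
import random
import statistics


def max_studentized_deviation(xs):
    """Right-sided statistic max_i (x_i - mean) / sd.

    Hypothetical helper: an assumed illustrative statistic, not necessarily
    one of the statistics compared in the text.
    """
    m = statistics.fmean(xs)
    sd = statistics.stdev(xs)
    return max((x - m) / sd for x in xs)


rng = random.Random(1)
z = [rng.gauss(0.0, 1.0) for _ in range(30)]   # standard normal sample
mu, sigma = 5.0, 3.0                           # arbitrary location and scale (sigma > 0)
x = [mu + sigma * v for v in z]                # same sample after a location-scale change

t_z = max_studentized_deviation(z)
t_x = max_studentized_deviation(x)
print(math.isclose(t_z, t_x, rel_tol=1e-9))    # True: the statistic is invariant
```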

An outlier identification method is ideal if each outlier is detected and each non-outlier is declared a non-outlier. In practice this cannot be achieved with probability one. Two errors are possible: (a) an outlier is not declared as such (masking effect); (b) a non-outlier is declared an outlier (swamping effect). For brevity, we write “masking value” for the mean number of undetected c-outliers and “swamping value” for the mean number of “normal” observations declared as outliers in the simulated samples.
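Given, for each simulated sample, the true c-outlier labels and the decisions of a method, the masking and swamping values defined above are simple averages of counts. A minimal sketch follows; the function name and the input layout (pairs of boolean sequences) are assumptions made for illustration.

```python
from typing import Sequence, Tuple


def masking_swamping(
    replications: Sequence[Tuple[Sequence[bool], Sequence[bool]]]
) -> Tuple[float, float]:
    """Return (masking value, swamping value) averaged over simulated samples.

    Hypothetical helper: each replication is a pair (is_c_outlier, declared_outlier)
    of equal-length boolean sequences, one entry per observation.
    """
    masked = swamped = 0
    for is_c, declared in replications:
        # c-outliers the method failed to declare (masking)
        masked += sum(c and not d for c, d in zip(is_c, declared))
        # regular observations wrongly declared as outliers (swamping)
        swamped += sum(d and not c for c, d in zip(is_c, declared))
    k = len(replications)
    return masked / k, swamped / k


F, T = False, True
reps = [
    ([F, F, F, T, T], [F, F, F, T, F]),  # one c-outlier missed, no swamping
    ([F, F, F, T, T], [T, T, F, T, T]),  # both c-outliers found, two regular points rejected
]
print(masking_swamping(reps))  # (0.5, 1.0)
```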

If swamping is small for two tests, then the test with the smaller masking effect should be preferred, because in this case the distribution of the data remaining after the suspected outliers are excluded should be closer to the distribution of the non-outlier data.

On the other hand, if swamping for Method 1 is considerably larger than swamping for Method 2 while masking is smaller for Method 1, this does not mean that Method 1 is better: this method rejects many extreme non-outliers from the tails of the regular distribution, so the sample remaining after classification may not be regarded as a sample from this regular distribution, even if all c-outliers are eliminated.

We used two different classes of alternatives: in the first, c-outliers are spread widely around their mean in the outlier region; in the second, c-outliers are concentrated in a very short interval lying in the outlier region. More precisely, if right outliers were searched for, we simulated r observations concentrated in the right outlier region using the following alternative families of distributions:

For lack of space, we present only a small part of our investigations. The results are very similar for all considered sample sizes. The multiple outlier problem is not very relevant for smaller sample sizes.
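The two classes of alternatives described above (widely spread versus tightly concentrated c-outliers) can be simulated along the following lines. This is only a sketch under stated assumptions: the right outlier region is taken, for illustration, as x > μ + σ·z_{1−α_n} with α_n = 1 − (1 − α)^{1/n}, so that a regular normal sample falls entirely outside it with probability 1 − α; the exponential and uniform choices stand in for the paper's alternative families, which are not reproduced here; all function names are hypothetical.

```python
import random
from statistics import NormalDist


def right_border(n, alpha=0.05, mu=0.0, sigma=1.0):
    """Assumed right outlier-region border mu + sigma * z_{1 - alpha_n}.

    Assumption: a Davies-Gather-type alpha_n region, used here only for illustration.
    """
    alpha_n = 1.0 - (1.0 - alpha) ** (1.0 / n)
    return mu + sigma * NormalDist().inv_cdf(1.0 - alpha_n)


def spread_outliers(r, border, scale, rng):
    """Widely spread c-outliers: border plus an exponential excess with mean `scale`."""
    return [border + rng.expovariate(1.0 / scale) for _ in range(r)]


def concentrated_outliers(r, border, shift, width, rng):
    """c-outliers packed into the short interval [border + shift, border + shift + width]."""
    lo = border + shift
    return [rng.uniform(lo, lo + width) for _ in range(r)]


def contaminated_sample(n, r, make_outliers, rng, alpha=0.05):
    """n - r regular N(0, 1) observations followed by r c-outliers, plus labels."""
    border = right_border(n, alpha)
    regular = [rng.gauss(0.0, 1.0) for _ in range(n - r)]
    outliers = make_outliers(r, border, rng)
    labels = [False] * (n - r) + [True] * r   # True marks a c-outlier
    return regular + outliers, labels, border


rng = random.Random(7)
wide, wide_labels, b = contaminated_sample(
    50, 3, lambda r, bd, g: spread_outliers(r, bd, 2.0, g), rng)
tight, tight_labels, _ = contaminated_sample(
    50, 3, lambda r, bd, g: concentrated_outliers(r, bd, 0.5, 0.1, g), rng)
```

Occasionally a regular observation itself lands beyond the border; such points are exactly the rare "outliers that are not c-outliers" mentioned earlier, and the labels keep them classified as regular.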

6.1. Investigation of Outlier Identification Methods for Normal Data

We use the notation B, H, R, and BP for the Bolshev, Hawkins, Rosner, and new methods, respectively; the Davies-Gather method is referred to by name. A method can be based either on maximum likelihood estimators or on robust estimators, and the two versions are distinguished accordingly.

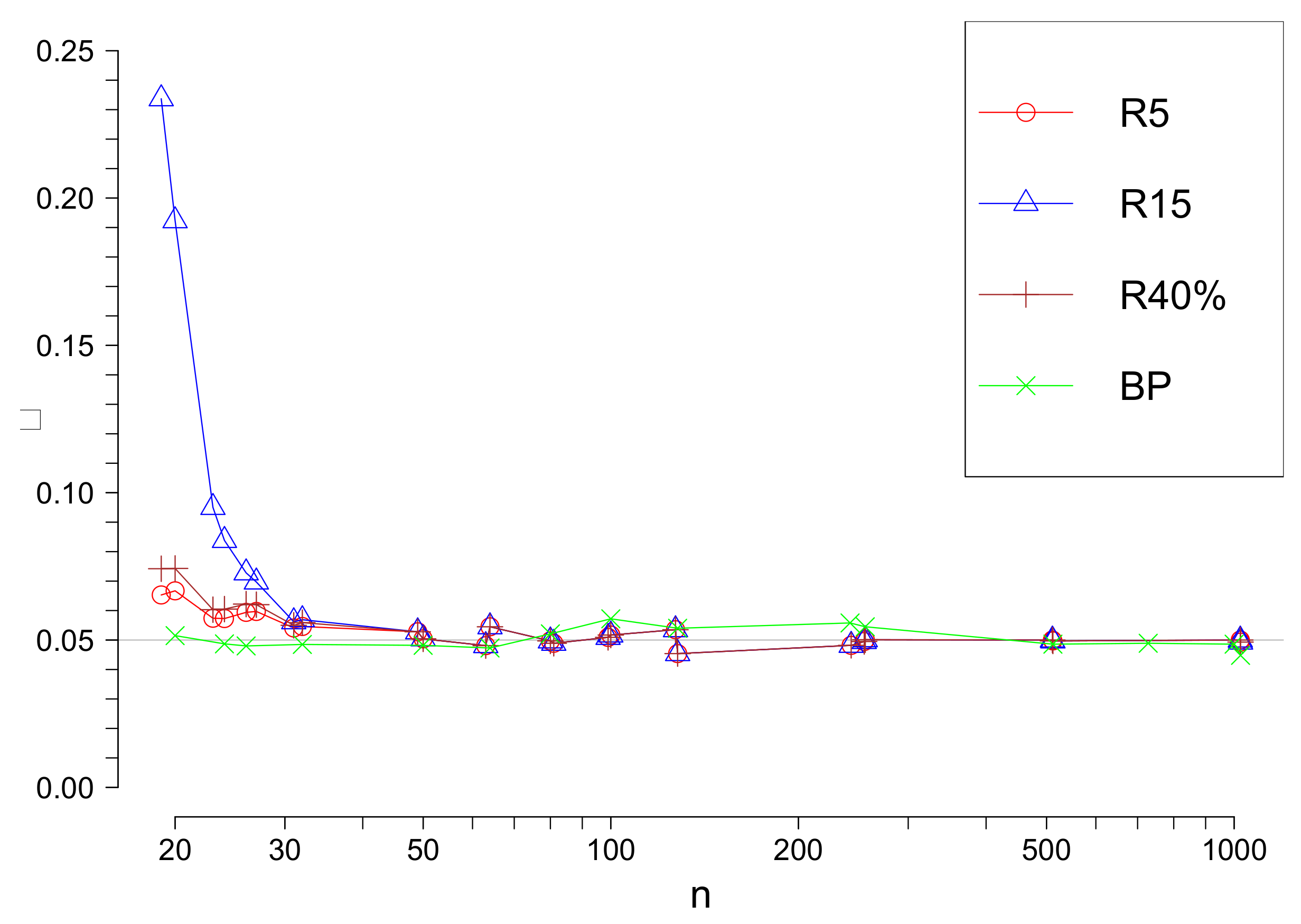

For comparison of the above methods we fixed a common significance level α. Recall that the significance level is the probability of rejecting at least one observation as an outlier under the null hypothesis that all observations are realizations of i.i.d. random variables with the same normal distribution. Only one test, the R method, uses approximate critical values of its test statistic, so the significance level of this test is only approximately α and depends on s and n. Figure 1 shows the true significance level of the R method as a function of n for fixed values of s and α.
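The definition of the significance level translates directly into a Monte Carlo estimate: simulate samples under the null hypothesis and count how often at least one observation is declared an outlier. The sketch below applies it to an assumed "oracle" rule that knows μ = 0 and σ = 1 and flags every value above z_{1−α_n} with α_n = 1 − (1 − α)^{1/n}; for this rule the level is exactly 1 − (1 − α_n)^n = α, so the estimate should be close to α. Real tests estimate the parameters, which is why levels such as that of the R method are only approximate. Function names are hypothetical.

```python
import random
from statistics import NormalDist


def empirical_level(declare, n, n_sim=20000, seed=0):
    """Fraction of H0 samples (n i.i.d. N(0, 1)) in which `declare` flags >= 1 observation."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sim):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if any(declare(sample)):
            hits += 1
    return hits / n_sim


alpha, n = 0.05, 20
alpha_n = 1 - (1 - alpha) ** (1 / n)
border = NormalDist().inv_cdf(1 - alpha_n)


def oracle(xs):
    """Assumption: known mu = 0 and sigma = 1, unlike the real tests."""
    return [x > border for x in xs]


level = empirical_level(oracle, n)
print(level)  # close to alpha = 0.05
```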

The B, H, and R methods have the drawback that an upper bound s for the possible number of outliers must be fixed in advance. The Davies-Gather and BP methods have the advantage that they do not require it.

Our investigations showed that the H and B methods and the Davies-Gather method with maximum likelihood estimators have other serious drawbacks, so let us first take a closer look at these methods.

If the true number of c-outliers

r exceeds

s, then the

B and

H methods cannot find them even if they are very far from the limits of the outlier region. Nevertheless, suppose that

r does not exceed

s and look at the performance of the

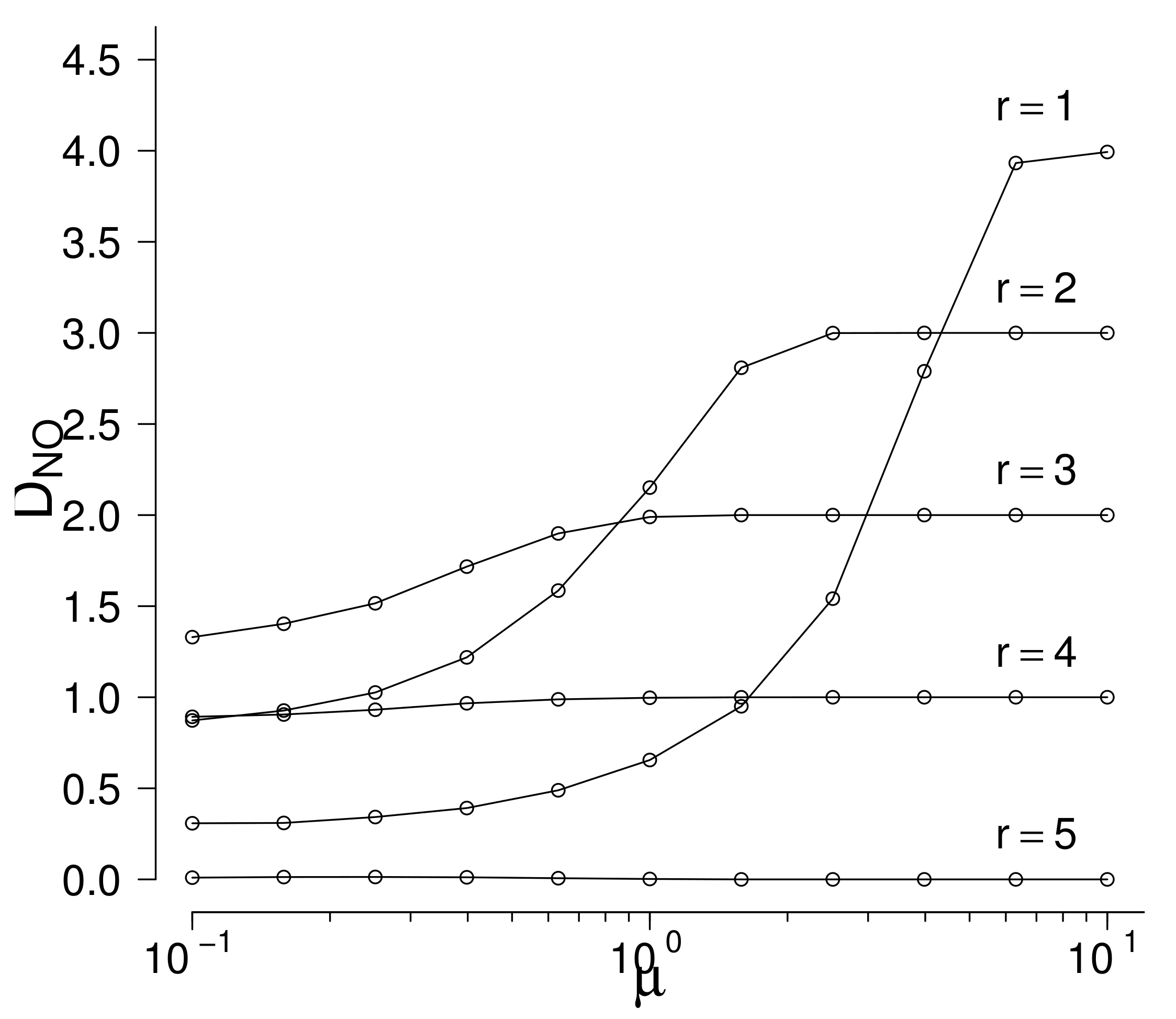

H method. Set

,

, and suppose that c-outliers are generated by right-truncated normal distribution

with fixed

and increasing

. Note that the true number of c-outliers is supposed to be unknown but do not exceed

. In

Figure 2 the mean numbers of rejected non-c-outliers

are given in function of the parameter

(the value of the parameter

is fixed) for fixed values of

r see

Figure 2. In

Table 7 the values of

plus the values of the mean numbers of truly rejected c-outliers are given.

Table 7 shows that for a single c-outlier (r = 1), the c-outlier is found once the parameter is sufficiently large, but the number of rejected non-c-outliers increases to 4, so swamping is very large. Similarly, for the next value of r this number increases to 3, so swamping is still large. From some value of r onward, not all c-outliers are found, even for large values of the parameter. Swamping is smallest if the true value r coincides with s, but even in this case one c-outlier is not found, even for large values of the parameter. Since the true number r of c-outliers is not known in real data, the performance of the H method is very poor. Results are similar for other values of n and s and for other distributions of c-outliers. As a rule, the H method finds the c-outliers rather well, but swamping is very large because the method tends to reject about s observations for remote alternatives, which is good if r = s but bad if r differs from s.

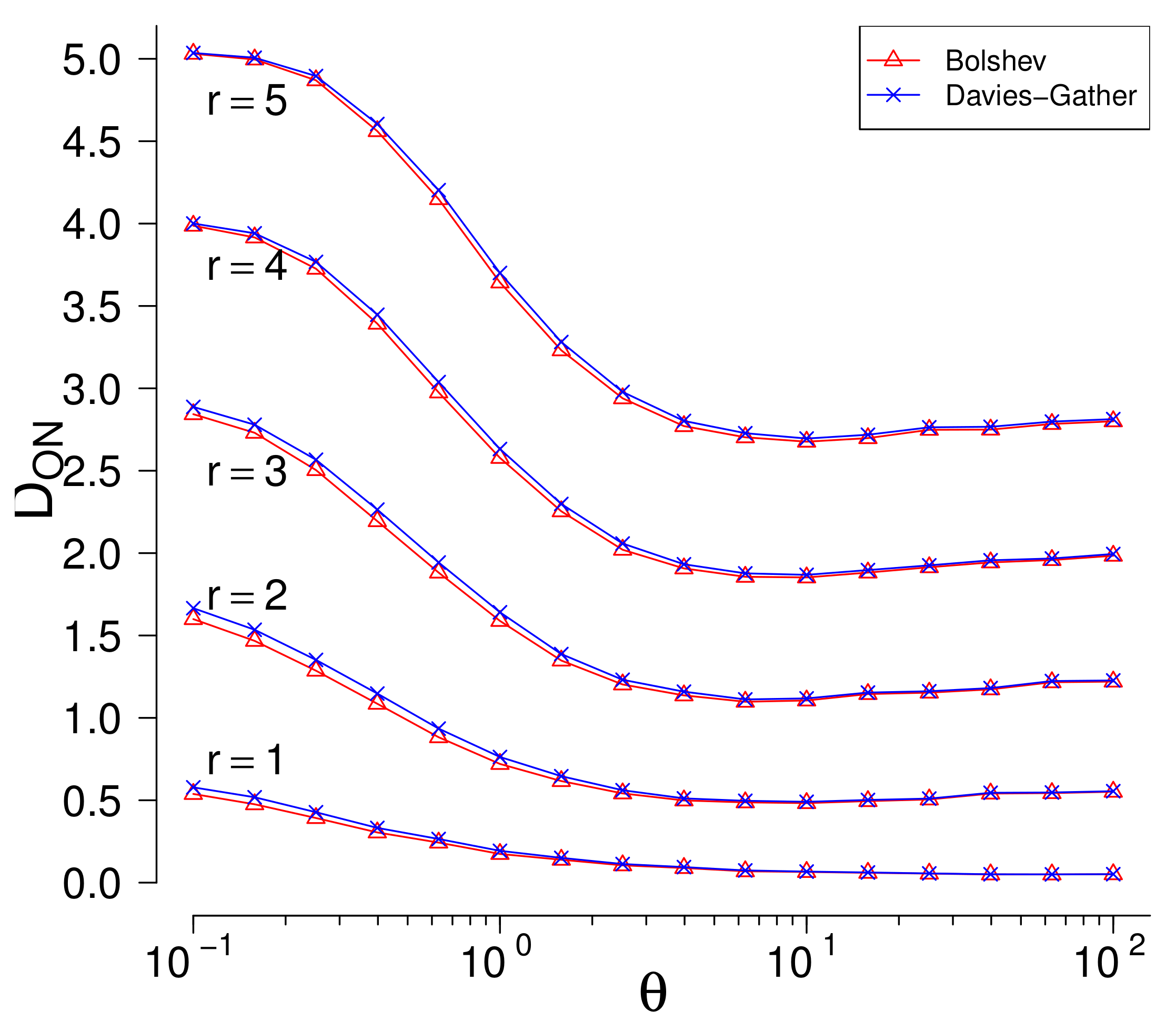

The

B and

tests have a drawback that they use maximum likelihood estimators which are not robust and estimate parameters badly in presence of outliers. Once more, set

,

, and suppose that c-outliers are generated by two-parameters exponential distribution

with increasing

. Swamping values are negligible in, so only masking values( mean numbers of non-rejected c-outliers

) are important. In

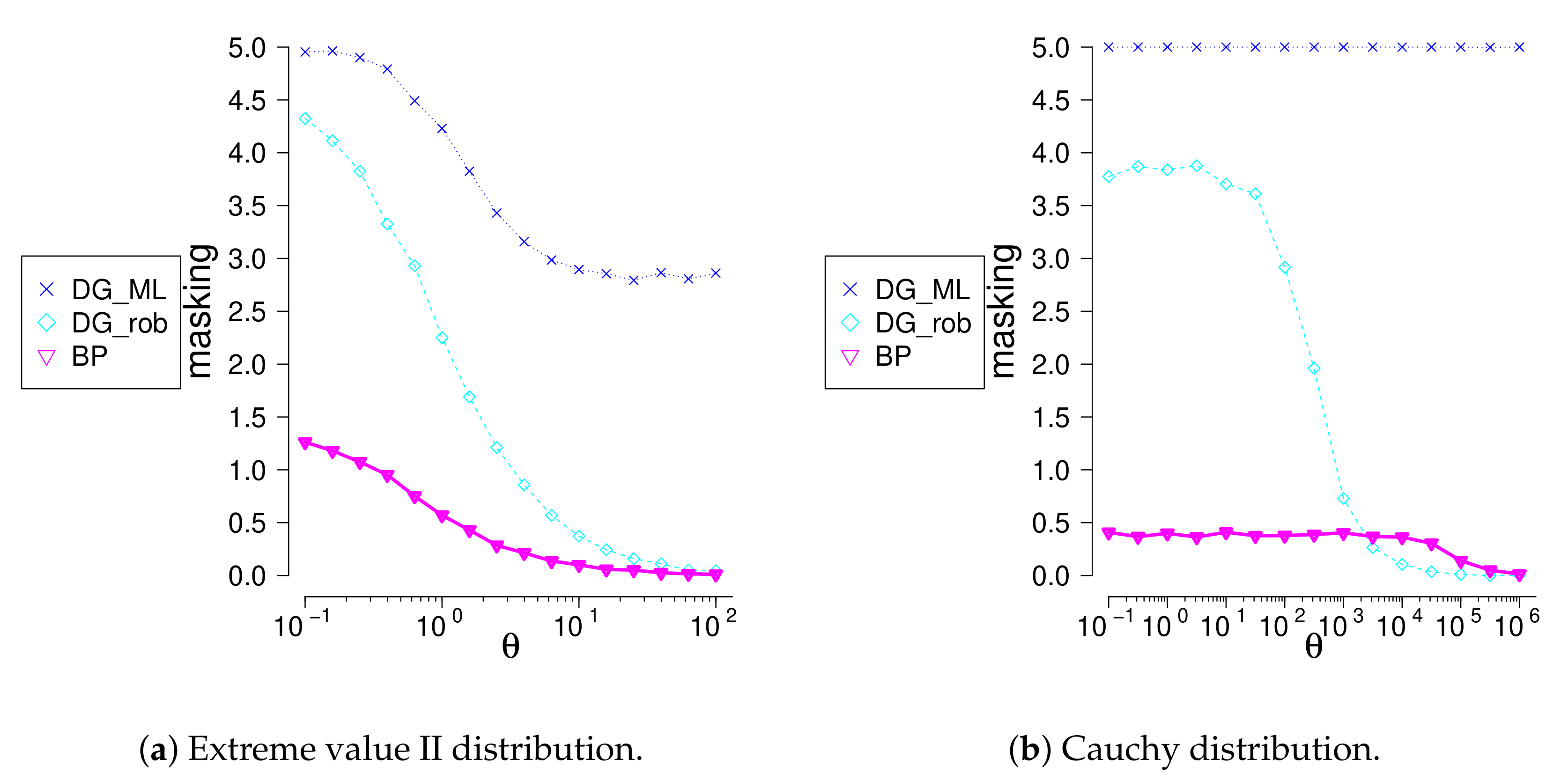

Figure 3 the masking values in function of the parameter

are given for fixed values of

r.

Both methods perform very similarly. The masking values are large for every value of the parameter, and they increase with r. For example, if r = 5, then on average almost 3 of the 5 c-outliers are not rejected, even for large values of the parameter.

Similar results hold for other values of n and s and for various distributions of c-outliers.
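The non-robustness of maximum likelihood estimators noted above is easy to see on a single contaminated sample. The sketch compares the normal ML estimates (mean and standard deviation with divisor n) with the median and the MAD scaled by 1.4826; the median/MAD pair is an assumed example of robust estimators, not necessarily the ones used in the robust Davies-Gather method, and the helper names are hypothetical.

```python
import random
import statistics


def ml_estimates(xs):
    """Normal ML estimates of location and scale: mean and sqrt((1/n) * sum of squares)."""
    return statistics.fmean(xs), statistics.pstdev(xs)


def robust_estimates(xs):
    """Assumption: median and MAD scaled by 1.4826 (consistent for sigma under normality)."""
    med = statistics.median(xs)
    mad = statistics.median([abs(x - med) for x in xs])
    return med, 1.4826 * mad


rng = random.Random(11)
clean = [rng.gauss(0.0, 1.0) for _ in range(45)]   # regular N(0, 1) observations
data = clean + [8.0, 9.0, 10.0, 11.0, 12.0]        # five remote right c-outliers

print("ML:    ", ml_estimates(data))       # location pulled right, scale inflated
print("robust:", robust_estimates(data))   # close to (0, 1)
```

Because the inflated ML scale estimate widens the estimated outlier region, remote c-outliers can fall inside it, which is the masking mechanism behind the large values reported for the B method.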

The above analysis shows that the B and H methods and the Davies-Gather method with maximum likelihood estimators have serious drawbacks, so we exclude these methods from further consideration.

Let us consider the remaining three methods: R, the Davies-Gather method with robust estimators, and BP. For small n the true significance level of Rosner’s test differs considerably from the nominal one, so we compare the performance of the tests only for sufficiently large n (see Table 8 and Table 9). A truncated exponential distribution was used to simulate the outliers. The remoteness of the mean of the outliers from the border of the outlier region is characterized by a parameter of this distribution.

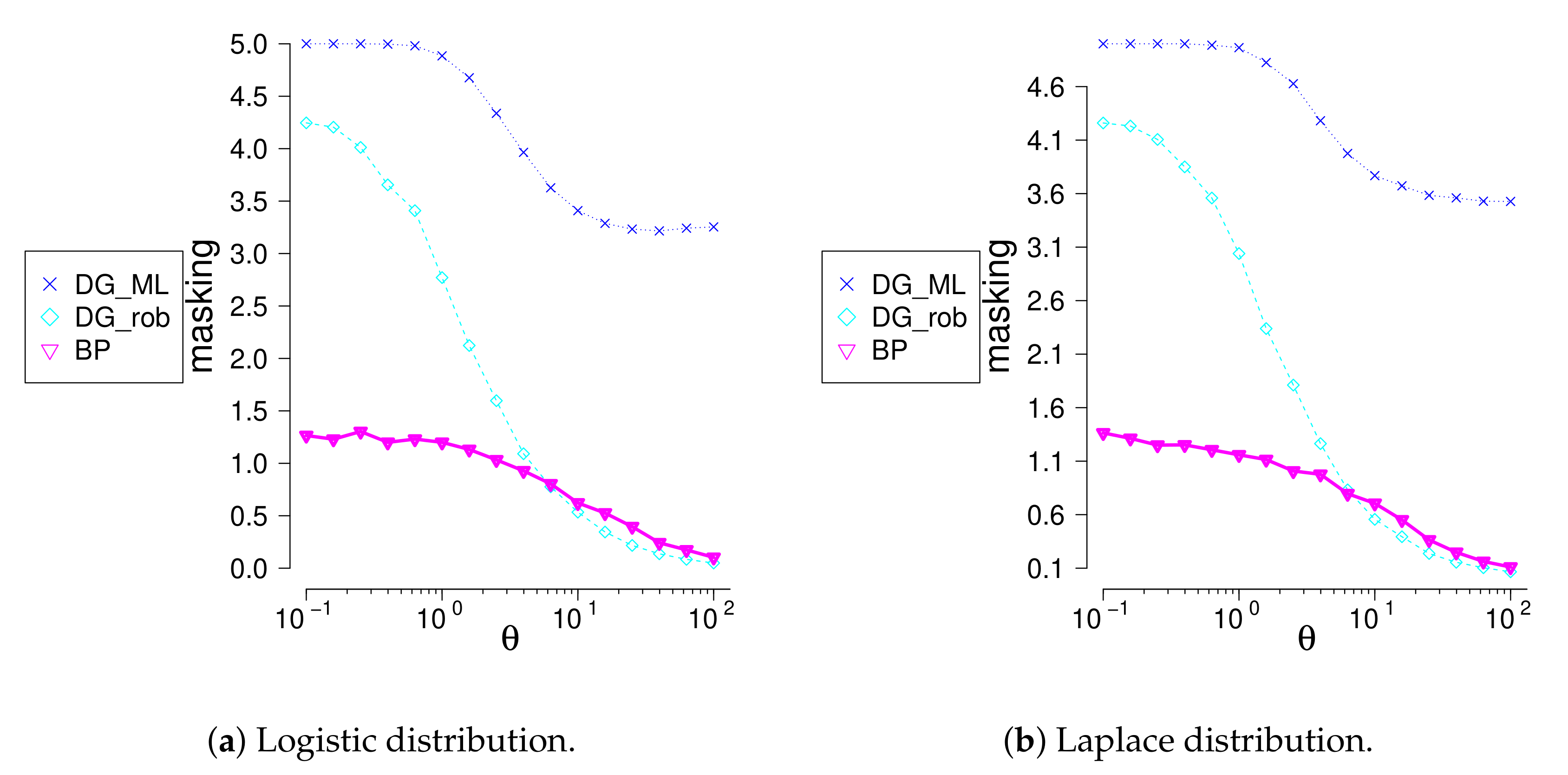

Swamping values (the mean numbers of non-c-outliers declared as outliers) are very small for all tests. For example, in one of the reported settings the R method and one of the other two methods reject on average only 0.05 non-c-outliers as outliers; the corresponding numbers for the remaining method, reported for samples with up to 900 non-c-outliers, are also small. Hence only masking values (the mean numbers of c-outliers declared as non-outliers) matter when comparing the outlier identification methods.

The need to guess the upper limit s for the possible number of outliers is considered a drawback of Rosner’s method. Indeed, if the true number of outliers r is greater than the chosen upper limit s, then, with probability one, not all outliers are identified. In addition, even if r ≤ s, it is not clear how important the closeness of r to s is. So we first investigated the choice of the upper limit.
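To make the role of s concrete, here is a minimal sketch of a right-sided version of Rosner's sequential (generalized ESD) procedure. Two assumptions should be kept in mind: the critical values are the empirical (1 − α) quantiles of each statistic R_i simulated under the null hypothesis with N(0, 1) samples (legitimate because of the location-scale invariance noted earlier), not Rosner's t-based approximation, so the overall level is only approximately α; and all function names are hypothetical. With r = 3 remote c-outliers, the procedure with s = 5 declares them, whereas with s = 2 it can declare at most two.

```python
import math
import random


def _mean_sd(xs):
    """Sample mean and standard deviation (divisor n - 1)."""
    n = len(xs)
    m = sum(xs) / n
    return m, math.sqrt(sum((x - m) ** 2 for x in xs) / (n - 1))


def esd_statistics(xs, s):
    """Right-sided Rosner-type statistics R_1..R_s and the removal order.

    At step i, R_i = (largest remaining value - mean) / sd of the remaining
    sample; that value is then removed before the next step.
    """
    work = sorted(xs)
    stats, removed = [], []
    for _ in range(s):
        m, sd = _mean_sd(work)
        stats.append((work[-1] - m) / sd)
        removed.append(work.pop())
    return stats, removed


def mc_critical_values(n, s, alpha=0.05, n_sim=2000, seed=0):
    """Assumption: empirical (1 - alpha) quantiles of R_1..R_s under H0 replace
    Rosner's t-based approximate critical values."""
    rng = random.Random(seed)
    sims = [esd_statistics([rng.gauss(0.0, 1.0) for _ in range(n)], s)[0]
            for _ in range(n_sim)]
    idx = min(int((1 - alpha) * n_sim), n_sim - 1)
    return [sorted(row[i] for row in sims)[idx] for i in range(s)]


def rosner_right(xs, s, crit):
    """Declare the k largest values outliers, k = largest i with R_i > crit_i (0 if none)."""
    stats, removed = esd_statistics(xs, s)
    k = 0
    for i in range(s):
        if stats[i] > crit[i]:
            k = i + 1
    return removed[:k]


rng = random.Random(3)
n, s = 50, 5
crit = mc_critical_values(n, s)
sample = [rng.gauss(0.0, 1.0) for _ in range(n - 3)] + [10.0, 11.0, 12.0]
print(rosner_right(sample, s, crit))       # r = 3 <= s: the three c-outliers are declared
print(rosner_right(sample, 2, crit[:2]))   # s = 2 < r: at most two can ever be declared
```

Reusing `crit[:2]` for s = 2 is valid in this sketch because R_1 and R_2 are computed the same way whatever the upper limit is.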

Here we present masking values of Rosner’s test for two values of the upper limit s. Similar results are obtained for other values of s.

Our investigations show that it is sufficient to fix an upper limit s that is clearly larger than the number of outliers that can be expected in real data. Indeed, Table 8 and Table 9 show that, when r exceeds the smaller upper limits, Rosner’s tests with these limits do not find all outliers even if they are very remote, as expected. Moreover, even if the true number of outliers r is much smaller than the larger upper limit, for every considered n the masking values of the test with the larger s are approximately the same as (or even a little smaller than) those of the tests with smaller s when r is small, and clearly smaller when r is larger.

Hence, a sufficiently large upper limit s should be recommended when applying Rosner’s test, and the performance of Rosner’s test with such s, the robust Davies-Gather method, and the proposed BP method should be compared.

All three methods find all c-outliers if these are sufficiently remote. In the first reported setting, the BP method gives uniformly the smallest masking values and one of the other two methods gives uniformly the largest, for every considered r over the whole range of alternatives. The result is the same in the second setting. In the third setting, where the data are seriously corrupted even for very small values of the remoteness parameter, the BP method is again the best, except that for the most remote alternatives one of the other methods slightly outperforms it. In the last setting, the BP method strongly outperforms the other methods for most alternatives, except the most remote ones.

The Davies-Gather and Rosner methods have very large masking if many outliers are concentrated near the border of the outlier region. In this case the data are seriously corrupted, yet these methods fail to detect the outliers.

Conclusion: in most of the considered situations, the BP method is the best outlier identification method. Rosner’s method with a sufficiently large upper limit s is second, and the Davies-Gather method based on robust estimation is third. The other methods perform poorly.