Abstract

This paper applies the Method of Successive Approximations (MSA), based on Pontryagin’s principle, to optimal control problems with state constraints governed by semilinear parabolic equations. An error estimate for the basic MSA is derived under the assumption that the first- and second-order derivatives of the data functions are bounded. An augmented MSA is then developed using the augmented Lagrangian method, and its convergence is proven. Numerical experiments demonstrate the effectiveness of the proposed method.

MSC:

49M37; 35K58; 65M15

1. Introduction

In this paper, we investigate the optimal control problem for parabolic equations with state constraints and Neumann boundary conditions, formulated as follows:

subject to the dynamics

where A is a second-order elliptic operator. The specific problem setup is described in Section 2.1.

The solution methods most commonly employed to address such problems include solving the Hamilton–Jacobi–Bellman (HJB) equation [1,2] and utilizing the Pontryagin maximum principle [3,4]. Although both approaches have been extensively studied, analytical solutions are generally unavailable for most practical cases, leading to the development of various numerical methods.

Current numerical techniques for solving the HJB equation include semi-Lagrangian schemes [2], sparse grid approaches [5], tensor-calculus-based methods [6], and successive Galerkin approximations [7,8,9,10]. Notably, policy iteration and Howard’s algorithm [11,12,13] are discrete implementations of the continuous Galerkin method. In the numerical solution of Pontryagin’s optimality principle, approaches such as the two-point boundary value problem method [14,15], collocation methods combined with nonlinear programming techniques [16,17,18,19], and the Method of Successive Approximations (MSA) [20] are often applied.

MSA plays a crucial role in the numerical resolution of both types of problems, as it is an iterative procedure based on alternating propagation and optimization steps. In [21], MSA is employed to solve optimal control problems governed by ordinary differential equations, with an extended version derived via the augmented Lagrangian method [22]. Additionally, in [23], a modified version of MSA for stochastic control problems is proposed, and convergence of the algorithm is established. In this paper, we provide an error estimate for MSA applied to optimal control problems of parabolic equations under specific conditions (see Lemma 3) and propose the corresponding augmented MSA.

The structure of this paper is as follows: In Section 2, we present preliminary results related to the original problem (1) and the corresponding Pontryagin maximum principle. In Section 3, we apply the Method of Successive Approximations (MSA) and its improved variant to solve the problem. Specifically, in Section 3.1, we introduce the basic MSA based on the Pontryagin principle; in Section 3.2, we state certain assumptions under which we derive the error estimate for the basic MSA; in Section 3.3, we introduce the augmented Pontryagin principle and the augmented MSA based on it; and in Section 3.4, we prove the convergence of the augmented MSA. Finally, in Section 4, we demonstrate the effectiveness of the augmented MSA through numerical experiments.

Notation. Throughout this paper, denotes the inner product in , and denotes the inner product in . The generic constant C may take different values at different occurrences.

2. Preliminary Results

2.1. Problem Setting

Let be an open, bounded subset of with a -boundary, where . That is, the boundary is a -dimensional manifold of class , and lies locally on one side of its boundary. A function is said to be of class if it is of class and its second-order partial derivatives are Hölder continuous with exponent . For a fixed time interval , we define the space–time domain and its lateral boundary .

The function space is given by

where denotes the Hilbert space of functions y such that and . The associated norm is defined by

The control spaces are defined as and , where the exponents and ensure the required regularity and integrability conditions.

Consider the second-order differential operator A given by

where the coefficients satisfy for all . The operator A is assumed to be uniformly elliptic, meaning there exists a constant such that

The goal is to minimize the cost functional

subject to the parabolic system

where the conormal derivative is given by

and denotes the unit outward normal vector to .

The following assumption applies throughout this paper, and the functions f, F, G, and L are assumed to satisfy the conditions below.

Assumption 1.

Let , , , , , , and be a nondecreasing function mapping to . Furthermore, let and . The following conditions hold:

- 1. The function :
  - For every , is measurable on .
  - For almost every , is continuous on .
  - For almost every and every , is of class on .
  - For almost every ,

- 2. The function :
  - For every , is measurable on Ω.
  - For almost every , is continuous on and is of class on .
  - For almost every ,

- 3. The function :
  - For every , is measurable on .
  - For almost every , is continuous on .
  - For almost every and every , is of class on .
  - For almost every ,

- 4. The function :
  - For every , is measurable on .
  - For almost every , is continuous on .
  - For almost every and every , is of class on .
  - For almost every ,

2.2. Pontryagin’s Principle

In this section, we introduce the first-order necessary condition for (1), namely Pontryagin’s principle. A complete proof of Pontryagin’s principle for control problems governed by the semilinear parabolic equation considered in this paper is given in [4].

First, we define the Hamiltonians associated with (1), namely the distributed Hamiltonian and the boundary Hamiltonian:

for every

for every .

Theorem 1.

Let be the solution of . Then there exists a unique adjoint state , satisfying the following equation:

such that

Proof.

See Theorem 2.1 of [4]. □

3. Method of Successive Approximations

In this section, we propose a numerical algorithm to solve Problem (1) based on the maximum principle. The algorithm’s error analysis and convergence properties will be examined in the framework of continuous time and space. To achieve this, we employ the Method of Successive Approximations (MSA), initially introduced by Chernousko and Lyubushin in [20]. Below, we present the fundamental formulation of the method.

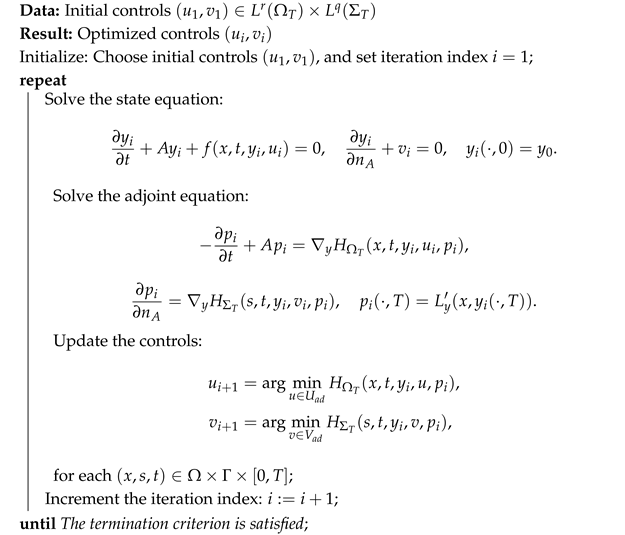

3.1. Basic MSA

The basic MSA alternates between solving the state equation and the adjoint equation, followed by an optimization step that updates the control variables. The state equation captures the system’s dynamics, while the adjoint equation provides the gradient information needed to optimize the Hamiltonian. At each iteration, the control variables are updated by minimizing the Hamiltonian over the admissible control sets. The process is repeated until a predefined termination criterion is met. The detailed steps are presented in Algorithm 1 below.

Algorithm 1: Basic MSA
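The alternation described above (state solve, adjoint solve, pointwise Hamiltonian minimization) can be sketched on a toy problem. The following is a minimal illustration, not the paper's Algorithm 1: it assumes a one-dimensional heat equation y_t = y_xx + u with homogeneous Neumann boundary conditions, a quadratic tracking cost with weight `alpha` on the control, box constraints on u, and no state constraints. All function names, the explicit finite difference scheme, and the sign conventions are assumptions made for this sketch.

```python
import numpy as np

def laplacian_neumann(w, dx):
    """Second difference with homogeneous Neumann ends (mirrored ghost nodes)."""
    wp = np.pad(w, 1, mode="reflect")
    return (wp[2:] - 2.0 * wp[1:-1] + wp[:-2]) / dx**2

def solve_state(u, y0, dx, dt):
    """Forward Euler for the assumed toy state equation y_t = y_xx + u."""
    y = np.empty((u.shape[0] + 1, y0.size))
    y[0] = y0
    for n in range(u.shape[0]):
        y[n + 1] = y[n] + dt * (laplacian_neumann(y[n], dx) + u[n])
    return y

def solve_adjoint(y, y_d, dx, dt):
    """Backward sweep for -p_t = p_xx + (y - y_d) with p(T) = 0."""
    p = np.zeros_like(y)
    for n in range(y.shape[0] - 1, 0, -1):
        p[n - 1] = p[n] + dt * (laplacian_neumann(p[n], dx) + y[n] - y_d)
    return p

def cost(y, u, y_d, alpha, dx, dt):
    """Tracking cost plus control effort, trapezoidal weights in space."""
    w = np.full(y_d.size, dx)
    w[[0, -1]] *= 0.5
    integrand = 0.5 * (y[1:] - y_d) ** 2 + 0.5 * alpha * u**2
    return dt * np.sum(integrand @ w)

def basic_msa(y0, y_d, alpha, bounds, dx, dt, nt, n_iter):
    """Basic MSA loop: state solve, adjoint solve, Hamiltonian minimization."""
    u = np.zeros((nt, y0.size))
    history = []
    for _ in range(n_iter):
        y = solve_state(u, y0, dx, dt)
        history.append(cost(y, u, y_d, alpha, dx, dt))
        p = solve_adjoint(y, y_d, dx, dt)
        # Pointwise argmin over [lo, hi] of
        # H(y, u, p) = 0.5 (y - y_d)^2 + 0.5 alpha u^2 + p u.
        u = np.clip(-p[:-1] / alpha, *bounds)
    return u, history
```

In this sketch the explicit scheme requires dt/dx^2 <= 1/2 for stability. For this quadratic toy problem the iteration behaves like a fixed-point map whose contraction depends on `alpha` and the time horizon, so a small `alpha` can make it oscillate, which is consistent with the divergence discussed in the text.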

The convergence of the basic MSA has been established only for a limited class of linear quadratic regulators [24]. It is well documented that the method often diverges in more general settings, particularly when unfavorable initial controls are chosen [20,24]. To understand this instability, we need to clarify the relationship between the Hamiltonian minimization step in Algorithm 1 and the underlying optimization problem (1). Our goal is to modify the basic MSA so that it converges robustly, even under less favorable conditions.

3.2. Error Estimate

In this section, we relate the change in the cost functional J to the minimization of the Hamiltonian; the precise statement is given in Lemma 3. We first introduce the necessary assumptions.

Assumption 2.

For every , , and , the first-order and second-order derivatives of f, L, F, and G satisfy the following:

where is a constant.

Before deriving the error estimate, we first establish upper bounds for and under their respective norms. These bounds are obtained through the following two lemmas. Here, we denote , , , and .

Lemma 1.

Let Assumption 1 hold. Then, there exists a constant such that

Proof.

Refer to Proposition 4.1 of [4], along with the embedding and , to obtain the result. □

Lemma 2.

Let Assumptions 1 and 2 be fulfilled. Then, there exist constants such that

Proof.

By multiplying both sides of the parabolic Equation (3) corresponding to by and integrating over , we obtain

Since A is a uniformly elliptic operator, we have

Therefore,

From the trace embedding theorem, we know :

where we used Young’s inequality for (6). So, we obtain

which implies

Thus, the differential form of Gronwall’s inequality yields the following estimate:

Since the embedding

we can prove

□

Lemma 3.

Suppose Assumptions 1 and 2 hold. Then, there exists a constant such that for any control variables , ,

Proof.

By considering the difference , the right-hand side of the equation below can be divided into three parts:

The third part can be estimated using Hölder’s inequality and Assumption 2, yielding

Adding A and D, and utilizing (3) and (4), along with the Taylor expansion, we obtain

Using the definition of the Hamiltonian, Lemma 1, and Hölder’s inequality, we obtain the following estimates:

Using a similar approach as above, we can derive an estimate for :

Substituting the estimates of into (9), and applying Young’s inequality and Lemma 2, we derive

Using Lemmas 1 and 2, and Hölder’s inequality, we derive the final estimate

where

□

Lemma 3 shows that Algorithm 1 replaces the minimization of the cost functional J with the minimization of the Hamiltonian. However, if the gap between the updated control and the control from the previous iteration is too large, J may fail to decrease. To address this issue, the Hamiltonian optimization step in Algorithm 1 must be modified so that J decreases at each iteration.

3.3. Augmented MSA

To guarantee the decrease in the cost functional J, it is crucial to regulate the gap between the updated control variable and the control variable from the previous iteration. Inspired by the augmented Lagrangian method, we add a penalty term to the original Hamiltonian. For a fixed penalty factor , we define an augmented distributed Hamiltonian function and an augmented boundary Hamiltonian function as follows:

Next, we present the corresponding first-order necessary condition, referred to as the augmented Pontryagin principle.

Proposition 1.

Suppose is the solution of . Then, there exists a unique adjoint state , satisfying Equation (4), such that

Proof.

The following results can be readily derived using Pontryagin’s principle:

□

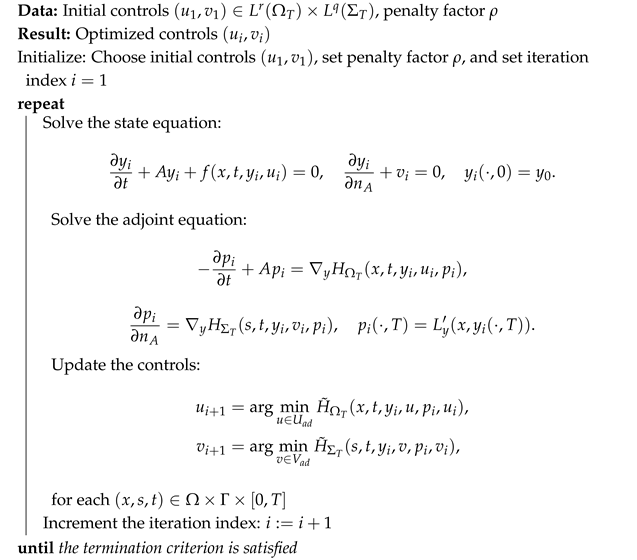

Based on the above proposition, we derive the augmented MSA as presented below.

In Algorithm 2, the Hamiltonian minimization step of the basic MSA is replaced with the minimization of the augmented Hamiltonian. By selecting an appropriate value for the penalty factor , the cost functional J is guaranteed to decrease with each iteration.

Algorithm 2: Augmented MSA
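To illustrate how a penalty on the control gap changes the update, the sketch below applies an augmented update to a toy problem. It is not the paper's Algorithm 2. It assumes a one-dimensional heat equation y_t = y_xx + u with homogeneous Neumann boundary conditions, a quadratic tracking cost, box constraints on u, no state constraints, and an augmented Hamiltonian of the assumed form H + (rho/2)(w - u_old)^2. The names `rho`, `alpha`, and all helper functions are hypothetical.

```python
import numpy as np

def laplacian_neumann(w, dx):
    """Second difference with homogeneous Neumann ends (mirrored ghost nodes)."""
    wp = np.pad(w, 1, mode="reflect")
    return (wp[2:] - 2.0 * wp[1:-1] + wp[:-2]) / dx**2

def solve_state(u, y0, dx, dt):
    """Forward Euler for the assumed toy state equation y_t = y_xx + u."""
    y = np.empty((u.shape[0] + 1, y0.size))
    y[0] = y0
    for n in range(u.shape[0]):
        y[n + 1] = y[n] + dt * (laplacian_neumann(y[n], dx) + u[n])
    return y

def solve_adjoint(y, y_d, dx, dt):
    """Backward sweep for -p_t = p_xx + (y - y_d) with p(T) = 0."""
    p = np.zeros_like(y)
    for n in range(y.shape[0] - 1, 0, -1):
        p[n - 1] = p[n] + dt * (laplacian_neumann(p[n], dx) + y[n] - y_d)
    return p

def cost(y, u, y_d, alpha, dx, dt):
    """Tracking cost plus control effort, trapezoidal weights in space."""
    w = np.full(y_d.size, dx)
    w[[0, -1]] *= 0.5
    integrand = 0.5 * (y[1:] - y_d) ** 2 + 0.5 * alpha * u**2
    return dt * np.sum(integrand @ w)

def augmented_msa(y0, y_d, alpha, rho, bounds, dx, dt, nt, n_iter):
    """MSA loop whose control update minimizes a penalized Hamiltonian."""
    u = np.zeros((nt, y0.size))
    history = []
    for _ in range(n_iter):
        y = solve_state(u, y0, dx, dt)
        history.append(cost(y, u, y_d, alpha, dx, dt))
        p = solve_adjoint(y, y_d, dx, dt)
        # Pointwise argmin over [lo, hi] of the assumed augmented Hamiltonian
        # 0.5 alpha w^2 + p w + 0.5 rho (w - u)^2, with u the previous control.
        u = np.clip((rho * u - p[:-1]) / (alpha + rho), *bounds)
    return u, history
```

With `rho = 0` the update reduces to the basic MSA step. For this quadratic toy problem the penalized update coincides with a projected gradient step of size 1/(alpha + rho), which is why a sufficiently large `rho` yields a monotonically decreasing cost history here.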

3.4. Convergence of Algorithm

We now establish the convergence of Algorithm 2 through the following theorem.

Theorem 2.

Suppose Assumptions 1 and 2 hold, and let and be any initial measurable controls satisfying . Further, assume that . Then, for , if and are generated by Algorithm 2, there exist and such that

Proof.

According to Lemma 3, we have

From the augmented Hamiltonian minimization step of Algorithm 2, we obtain

which implies

Summing N terms under the condition , we derive the following estimate

Thus, we have

By the Cauchy convergence criterion, there exist and such that in and in . □
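The summation step in the proof follows a standard telescoping pattern, which can be sketched as below. This is only an outline under stated assumptions: c > 0 is a placeholder for whatever positive constant the condition on the penalty factor yields, the norms are those of the control spaces used in the theorem, and J is assumed to be bounded below on the admissible set. None of these symbols are taken verbatim from the paper's displayed estimates.

```latex
% Assumed per-iteration decrease, with a generic constant c > 0:
J(u^{k+1}, v^{k+1}) - J(u^{k}, v^{k})
  \le -c \left( \| u^{k+1} - u^{k} \|^{2} + \| v^{k+1} - v^{k} \|^{2} \right).

% Summing over k = 0, \dots, N-1, the left-hand side telescopes:
c \sum_{k=0}^{N-1} \left( \| u^{k+1} - u^{k} \|^{2} + \| v^{k+1} - v^{k} \|^{2} \right)
  \le J(u^{0}, v^{0}) - J(u^{N}, v^{N})
  \le J(u^{0}, v^{0}) - \inf J .

% The bound is independent of N, so the series converges and
\| u^{k+1} - u^{k} \| \to 0, \qquad \| v^{k+1} - v^{k} \| \to 0
  \quad \text{as } k \to \infty .
```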

4. Numerical Tests

In this section, we present numerical results for an optimal control problem governed by a parabolic partial differential equation defined on a two-dimensional domain . Problem (1) is solved using the Augmented Method of Successive Approximations (AMSA), as described in Algorithm 2. For comparison purposes, the Interior Point Method (IPM) and the Configuration Method (CM) are also implemented to solve the same problem. The numerical performance of these methods is compared to demonstrate the effectiveness and efficiency of the proposed AMSA.

All algorithms were implemented in Python 3.9.13. The numerical experiments were carried out on a desktop computer equipped with an Intel(R) Xeon(R) W-2245 CPU @ 3.90 GHz. Unless otherwise stated, all experiments were conducted under the same computational conditions.

The termination condition for the algorithm is given by

We consider the following optimal control problem with :

subject to

Here, we choose the following parameters: , , , , and .

To solve the state and adjoint equations, we employ the finite difference method. The spatial step size is set to in each direction, and the time step size is set to . The learning rate of gradient descent in the subproblem is dynamically adjusted, starting from and multiplying by if the change is not reduced and if the change is reduced. The termination criterion is set to . The numerical results obtained are presented below.
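The learning-rate rule described above can be sketched as follows. Because the starting value and the two multipliers are not recoverable here, the defaults `lr0=0.1`, `shrink=0.5`, and `grow=1.1` are hypothetical placeholders, and "change" is interpreted as the norm of the update step, which is also an assumption. The function name `adaptive_gd` is illustrative only.

```python
import numpy as np

def adaptive_gd(grad, x0, lr0=0.1, shrink=0.5, grow=1.1, tol=1e-10, max_iter=1000):
    """Gradient descent whose step size reacts to the size of the last update.

    grad: callable returning the gradient at x.
    lr0, shrink, grow: hypothetical values, not taken from the paper.
    """
    x = np.asarray(x0, dtype=float).copy()
    lr, prev_change = lr0, np.inf
    for _ in range(max_iter):
        step = lr * grad(x)
        x = x - step
        change = np.linalg.norm(step)
        if change < tol:
            break
        # Enlarge the step while the change keeps shrinking, otherwise cut it.
        lr *= grow if change < prev_change else shrink
        prev_change = change
    return x

# Usage on a pointwise quadratic of the assumed augmented form
# 0.5 alpha u^2 + p u + 0.5 rho (u - u_old)^2, whose minimizer is known.
alpha, rho = 0.5, 1.0
p = np.array([0.3, -1.2, 2.0])
u_old = np.array([0.0, 0.5, -0.5])
u_new = adaptive_gd(lambda u: alpha * u + p + rho * (u - u_old), u_old)
```

For this quadratic the result can be compared against the closed-form minimizer (rho * u_old - p) / (alpha + rho).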

In order to evaluate the scalability of the proposed algorithm, Table 1 reports the computational time and maximum memory usage for solving the aforementioned example under varying temporal and spatial discretizations. Specifically, different time step sizes and spatial mesh resolutions are considered to assess the algorithm’s performance with respect to computational efficiency and memory consumption.
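One way to collect wall-clock time and peak memory figures of this kind in Python is with the standard-library modules `time` and `tracemalloc`. The helper below is a sketch of such a measurement, not a description of how the reported numbers were obtained; the name `measure` is hypothetical, and `tracemalloc` only accounts for allocations made through Python's memory allocators.

```python
import time
import tracemalloc

def measure(fn, *args, **kwargs):
    """Run fn and return (result, elapsed seconds, peak traced bytes)."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, elapsed, peak

def dummy_solver(n):
    """Stand-in workload; a real run would call the solver for one mesh size."""
    return sum([i * i for i in range(n)])

# Usage: repeat the measurement for each discretization of interest.
for n in (1_000, 10_000):
    value, seconds, peak_bytes = measure(dummy_solver, n)
    print(f"n={n}: {seconds:.4f} s, peak {peak_bytes / 1024:.1f} KiB")
```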

Table 1.

Computation time and maximum memory usage of the proposed algorithm under different temporal and spatial discretizations.

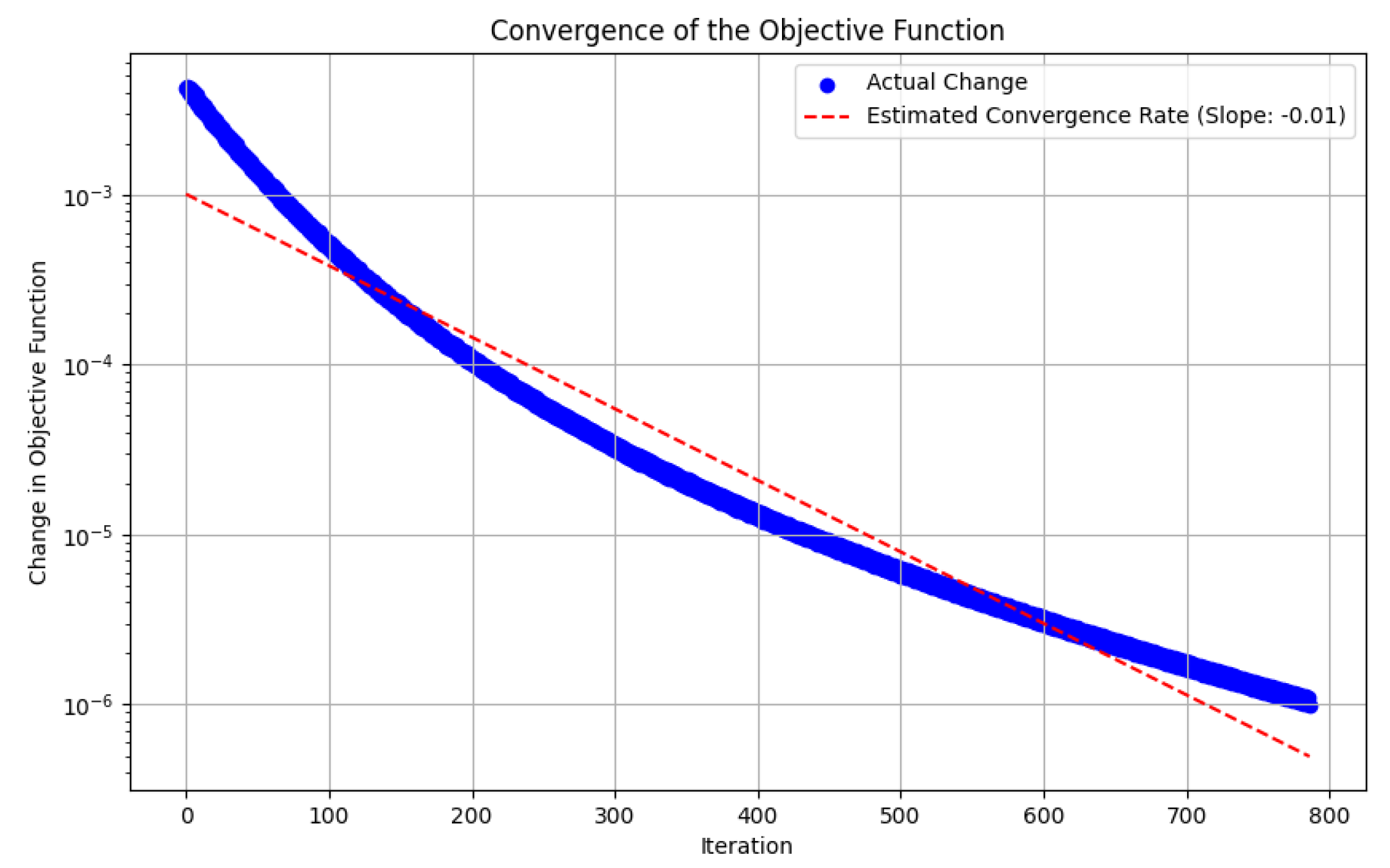

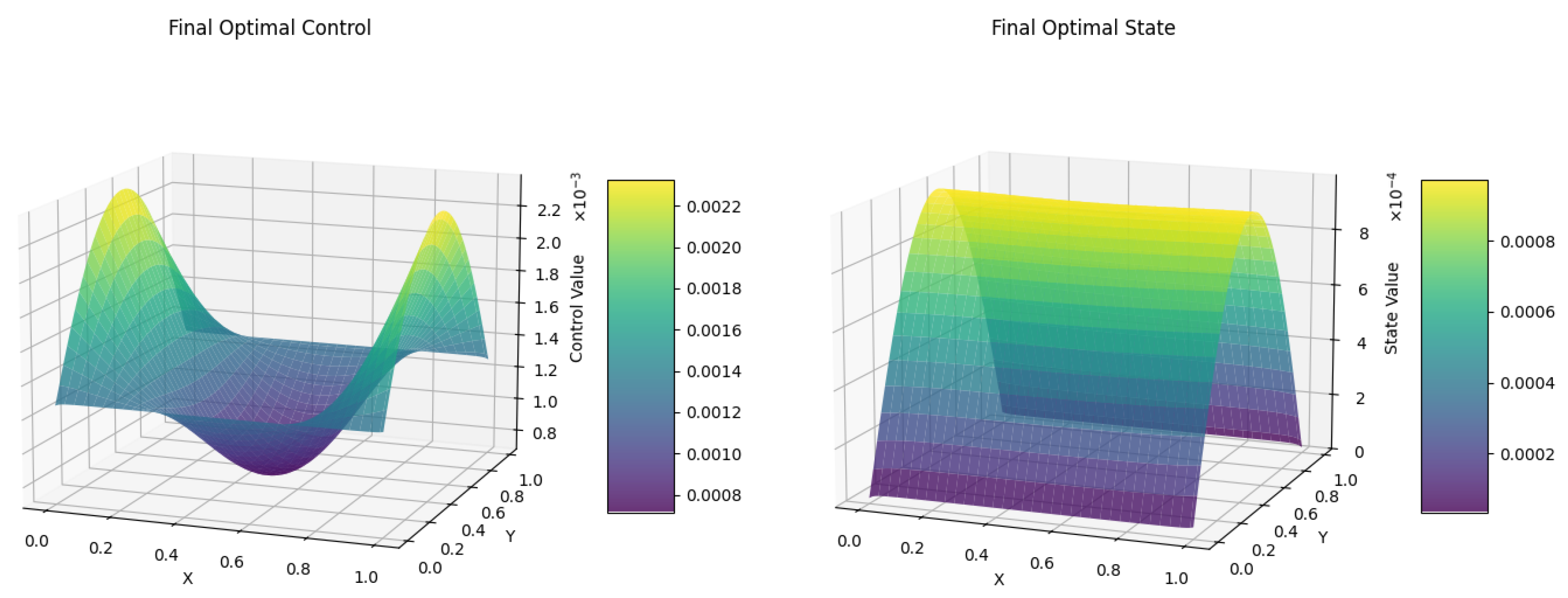

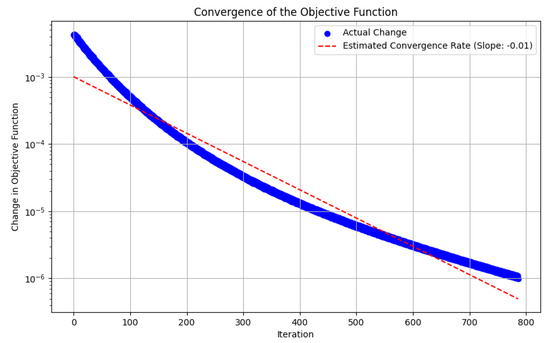

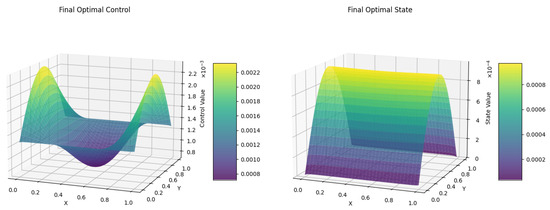

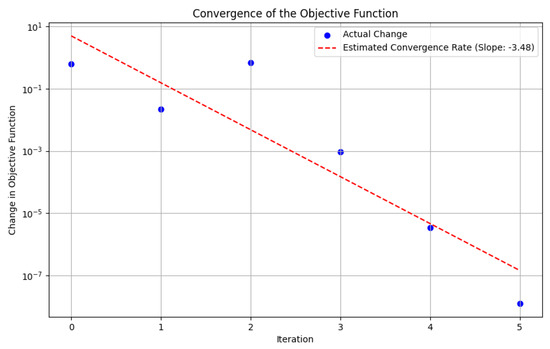

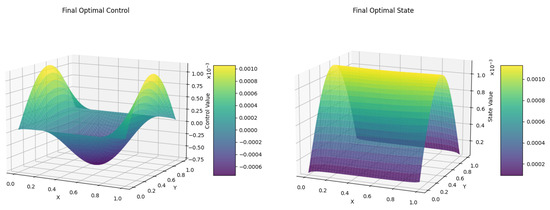

As shown in Figure 1, the difference in objective function values exhibits a generally decreasing trend, which supports the effectiveness of the proposed Augmented Method of Successive Approximations (AMSA). Figure 2 presents the numerically obtained optimal control u and the corresponding optimal state y. Because gradient descent is used to solve the Hamiltonian optimization subproblem, the algorithm may converge to a locally optimal solution. Robustness and solution quality could be improved by employing stochastic gradient descent, multi-start strategies, adaptive learning rate schemes, or global optimization techniques.

Figure 1.

Difference in Objective Function − via AMSA(GD).

Figure 2.

Computed optimal state y (right) and optimal control u (left) via AMSA(GD).

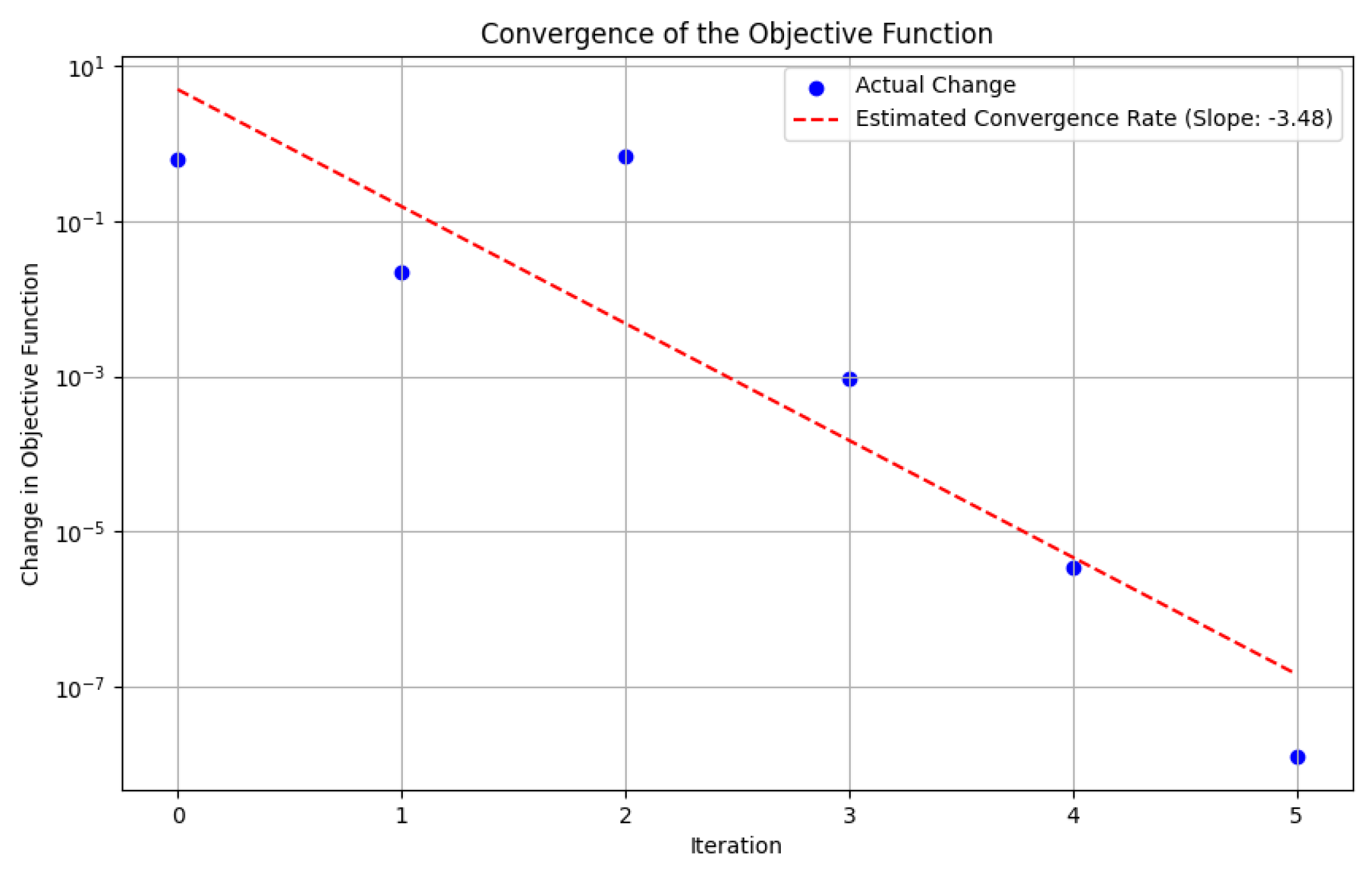

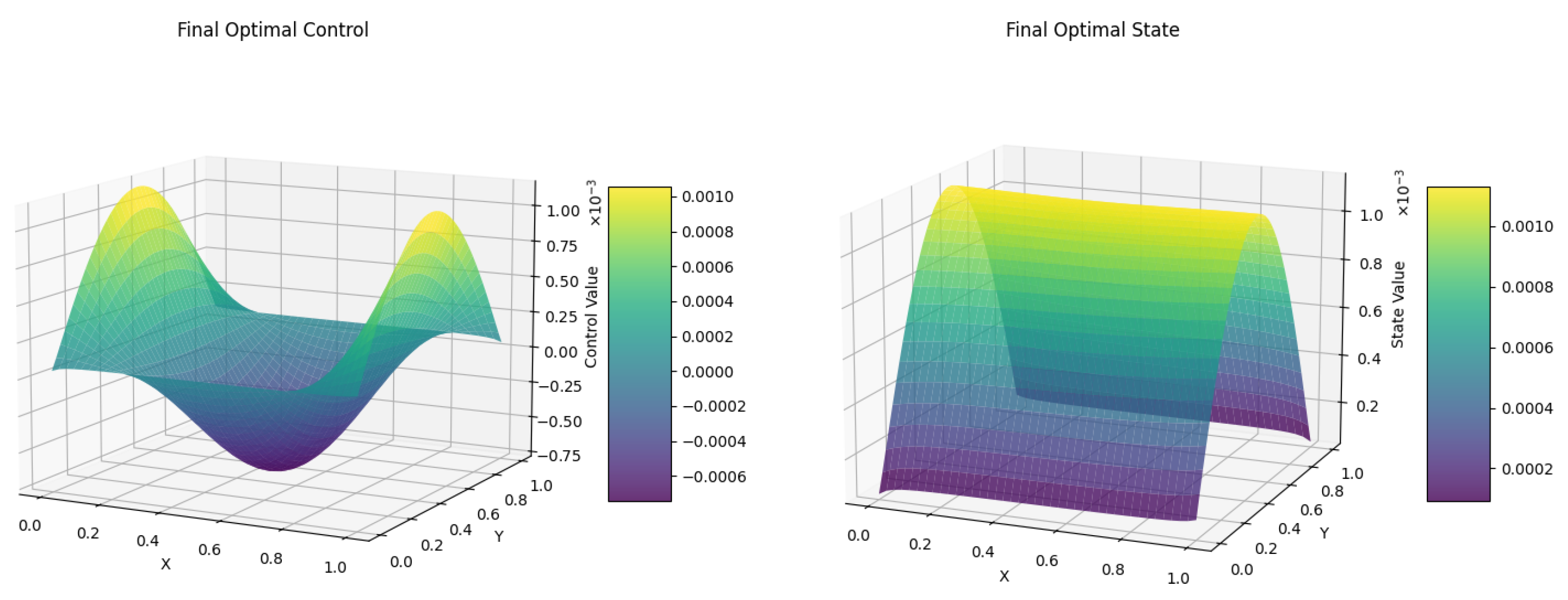

To further investigate this possibility, we replace the gradient descent method in the Hamiltonian optimization step with the Simulated Annealing (SA) algorithm. In this case, the initial temperature is set to 100, and the cooling rate is set to . The temporal and spatial discretization steps are both fixed at . The corresponding numerical results are presented as follows. Figure 3 illustrates the difference in the objective function obtained using the AMSA (SA) method. Figure 4 presents the optimal control u derived from this method, along with the corresponding optimal state y.
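A simulated annealing step for a scalar subproblem can be sketched as below. The initial temperature of 100 follows the text; the cooling rate `cooling=0.95`, the Gaussian proposal width `step`, the number of steps, and the function name are hypothetical choices for illustration. The callable `h` stands in for a pointwise augmented Hamiltonian and is not the paper's expression.

```python
import math
import random

def simulated_annealing(h, u0, lo, hi, t0=100.0, cooling=0.95,
                        n_steps=500, step=0.5, seed=0):
    """Minimize a scalar function h on [lo, hi] with geometric cooling."""
    rng = random.Random(seed)
    u, hu = u0, h(u0)
    best_u, best_h = u, hu
    temp = t0
    for _ in range(n_steps):
        # Gaussian proposal, clipped to the admissible interval.
        cand = min(hi, max(lo, u + rng.gauss(0.0, step)))
        hc = h(cand)
        # Metropolis rule: always accept improvements, sometimes accept worse.
        if hc <= hu or rng.random() < math.exp(-(hc - hu) / temp):
            u, hu = cand, hc
            if hu < best_h:
                best_u, best_h = u, hu
        temp = max(temp * cooling, 1e-12)  # geometric cooling with a floor
    return best_u, best_h

# Usage on a nonconvex test function with two local minima.
def h_test(u):
    return u**4 - 3.0 * u**2 + u

best_u, best_h = simulated_annealing(h_test, 1.0, -2.5, 2.5)
```

Because the best visited point is tracked separately from the current point, the returned value is never worse than the value at the starting point, although finding the global minimizer is not guaranteed.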

Figure 3.

Difference in Objective Function − via AMSA(SA).

Figure 4.

Computed optimal state y (right) and optimal control u (left) via AMSA(SA).

Table 2 reports the computation time, maximum memory usage, and the optimal objective function value obtained when employing the gradient descent (GD) algorithm and the Simulated Annealing (SA) algorithm to solve the augmented Hamiltonian subproblem. In this experiment, both the time step size and the space step size are fixed at .

Table 2.

Comparison of computation time, maximum memory usage, and optimal objective function value using GD and SA algorithms for the augmented Hamiltonian subproblem. The time step size and space step size are both set to .

As shown in Table 2, the SA method achieves a better objective function value than the GD method, which suggests that it avoids the local optimum reached by GD. In addition, the computation time of SA is significantly lower, while the maximum memory usage remains similar.

Furthermore, we compare the optimal objective function values, computation time, and maximum memory usage of the Interior Point Method (IPM), Configuration Method (CM), and the proposed AMSA (implemented with SA) under the same discretization parameters. Specifically, the time step size and space step size are both set to 0.05. The corresponding numerical results are summarized in Table 3.

Table 3.

Comparison of IPM, CM, and AMSA (with SA) in terms of computation time, maximum memory usage, and optimal objective function value. The time step size and space step size are both set to 0.05.

Table 3 shows that, for the same time and space step sizes, AMSA requires significantly less computation time and memory than IPM and CM. However, the objective function value obtained by AMSA is inferior to that of IPM. Combining the observations from Tables 2 and 3, AMSA can obtain a solution superior to that of IPM when a finer discretization (smaller time and space step sizes) is employed, while still requiring much less computation time and memory than IPM.

5. Discussion

Building on the work presented in [21], the AMSA algorithm is applied to the optimal control problem of a class of parabolic equations, and a rigorous convergence proof is provided. This framework facilitates the integration of more sophisticated deep learning algorithms that are capable of decoupling and iteratively updating the parameters of each layer.

Moreover, incorporating global optimization algorithms into the solution of the augmented Hamiltonian subproblem can mitigate convergence to a locally optimal solution. Future work will focus on developing second-order algorithms with faster convergence rates, with the goal of solving the augmented Hamiltonian minimization subproblem more efficiently and robustly.

Author Contributions

Conceptualization, W.Y.; methodology, W.Y.; software, W.Y.; validation, W.Y. and F.Z.; formal analysis, W.Y.; investigation, W.Y.; resources, F.Z.; writing—original draft preparation, W.Y.; writing—review and editing, W.Y. and F.Z.; visualization, W.Y.; supervision, F.Z.; project administration, F.Z.; funding acquisition, F.Z. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded in part by the National Natural Science Foundation of China (Nos. 12071292, 42450269).

Data Availability Statement

The original contributions presented in the study are included in the article material; further inquiries can be directed to the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| MSA | Method of Successive Approximations |

| HJB | Hamilton–Jacobi–Bellman |

| AMSA | Augmented Method of Successive Approximations |

| IPM | Interior Point Method |

| CM | Configuration Method |

| SA | Simulated Annealing |

| GD | Gradient Descent |

References

1. Bardi, M.; Capuzzo-Dolcetta, I. Optimal Control and Viscosity Solutions of Hamilton-Jacobi-Bellman Equations; With Appendices by Maurizio Falcone and Pierpaolo Soravia; Systems & Control: Foundations & Applications; Birkhäuser Boston, Inc.: Boston, MA, USA, 1997; p. xviii+570.

2. Falcone, M.; Ferretti, R. Semi-Lagrangian Approximation Schemes for Linear and Hamilton-Jacobi Equations; Society for Industrial and Applied Mathematics (SIAM): Philadelphia, PA, USA, 2014; p. xii+319.

3. Casas, E. Pontryagin’s principle for state-constrained boundary control problems of semilinear parabolic equations. SIAM J. Control Optim. 1997, 35, 1297–1327.

4. Raymond, J.P.; Zidani, H. Hamiltonian Pontryagin’s principles for control problems governed by semilinear parabolic equations. Appl. Math. Optim. 1999, 39, 143–177.

5. Kang, W.; Wilcox, L.C. Mitigating the curse of dimensionality: Sparse grid characteristics method for optimal feedback control and HJB equations. Comput. Optim. Appl. 2017, 68, 289–315.

6. Stefansson, E.; Leong, Y.P. Sequential alternating least squares for solving high dimensional linear Hamilton-Jacobi-Bellman equation. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Republic of Korea, 9–14 October 2016; pp. 3757–3764.

7. Beard, R.W.; Saridis, G.N.; Wen, J.T. Galerkin approximations of the generalized Hamilton-Jacobi-Bellman equation. Automatica J. IFAC 1997, 33, 2159–2177.

8. Beard, R.W.; Saridis, G.N.; Wen, J.T. Approximate solutions to the time-invariant Hamilton-Jacobi-Bellman equation. J. Optim. Theory Appl. 1998, 96, 589–626.

9. Beeler, S.C.; Tran, H.T.; Banks, H.T. Feedback control methodologies for nonlinear systems. J. Optim. Theory Appl. 2000, 107, 1–33.

10. Kalise, D.; Kunisch, K. Polynomial approximation of high-dimensional Hamilton-Jacobi-Bellman equations and applications to feedback control of semilinear parabolic PDEs. SIAM J. Sci. Comput. 2018, 40, A629–A652.

11. Alla, A.; Falcone, M.; Kalise, D. An efficient policy iteration algorithm for dynamic programming equations. SIAM J. Sci. Comput. 2015, 37, A181–A200.

12. Bokanowski, O.; Maroso, S.; Zidani, H. Some convergence results for Howard’s algorithm. SIAM J. Numer. Anal. 2009, 47, 3001–3026.

13. Howard, R.A. Dynamic Programming and Markov Processes; Technology Press of M.I.T.: Cambridge, MA, USA; John Wiley & Sons, Inc.: New York, NY, USA; London, UK, 1960; p. viii+136.

14. Bryson, A.E., Jr.; Ho, Y.C. Applied Optimal Control: Optimization, Estimation, and Control; Revised Printing; Hemisphere Publishing Corp.: Washington, DC, USA; Distributed by Halsted Press [John Wiley & Sons, Inc.]: New York, NY, USA; London, UK; Sydney, Australia, 1975; p. xiv+481.

15. Roberts, S.M.; Shipman, J.S. Two-point boundary value problems: Shooting methods. In Modern Analytic and Computational Methods in Science and Mathematics; American Elsevier Publishing Co., Inc.: New York, NY, USA, 1972; Volume 31, p. xiv+277.

16. Betts, J.T.; Frank, P.D. A sparse nonlinear optimization algorithm. J. Optim. Theory Appl. 1994, 82, 519–541.

17. Betts, J.T. Experience with a sparse nonlinear programming algorithm. In Large-Scale Optimization with Applications, Part II (Minneapolis, MN, 1995); Springer: New York, NY, USA, 1997; Volume 93, pp. 53–72.

18. Bertsekas, D.P. Nonlinear Programming, 2nd ed.; Athena Scientific Optimization and Computation Series; Athena Scientific: Belmont, MA, USA, 1999; p. xiv+777.

19. Bazaraa, M.S.; Sherali, H.D.; Shetty, C.M. Nonlinear Programming, 3rd ed.; Theory and Algorithms; Wiley-Interscience [John Wiley & Sons]: Hoboken, NJ, USA, 2006; p. xvi+853.

20. Chernous’ko, F.L.; Lyubushin, A.A. Method of successive approximations for solution of optimal control problems. Optimal Control Appl. Methods 1982, 3, 101–114.

21. Li, Q.; Chen, L.; Tai, C. Maximum principle based algorithms for deep learning. J. Mach. Learn. Res. 2017, 18, 1–29.

22. Hestenes, M.R. Multiplier and gradient methods. J. Optim. Theory Appl. 1969, 4, 303–320.

23. Sethi, D.; Šiška, D. The modified MSA, a gradient flow and convergence. Ann. Appl. Probab. 2024, 34, 4455–4492.

24. Aleksandrov, V.V. On the accumulation of perturbations in the linear systems with two coordinates. Vestn. MGU 1968, 3, 67–76.


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).