1. Introduction

Dynamical systems are used to describe phenomena across many disciplines, from the climate system [1,2,3] to epidemiology [4,5,6], rumour propagation mechanisms [7], and coupled behaviour–disease dynamics [8]. Among their many intriguing aspects, one that has attracted particular attention in recent years is the possibility of bifurcations and tipping points [8,9,10,11]. In natural systems, a local bifurcation can occur when gradually changing external conditions push a system resting near an equilibrium toward a critical threshold; when this tipping point is reached, the system may undergo a qualitative shift in behaviour [12,13].

Bifurcation theory is a rich branch of mathematics that studies the theoretical properties of dynamical systems undergoing different types of bifurcations [14]. Two of its central results, the Centre Manifold Theorem and the Normal Form Theorem, together reduce a nonlinear dynamical system near a bifurcation to its simplest equivalent form, termed the “normal form”. The centre manifold reduction restricts the dynamics to the directions associated with the critical eigenvalues, and coordinate transformations then eliminate nonessential nonlinear terms while preserving the system’s essential behaviour, which makes features such as bifurcations easier to examine [15,16,17].
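For orientation, the textbook normal forms of the three local bifurcations discussed below are summarised here in one common sign convention, together with the eigenvalue of the stable state. This convention is an assumption for illustration; the forms actually used to build the training set are those given in Section 5.1.1. In each case the bifurcation parameter $\mu$ passes through zero at the bifurcation point.

```latex
\begin{aligned}
\text{Fold:} \quad & \dot{x} = \mu + x^{2}, &&
  x^{*} = -\sqrt{-\mu}\ \ (\mu < 0), \quad \lambda = -2\sqrt{-\mu} \\
\text{Transcritical:} \quad & \dot{x} = \mu x - x^{2}, &&
  x^{*} \in \{0, \mu\}, \quad \lambda(0) = \mu, \ \ \lambda(\mu) = -\mu \\
\text{Hopf:} \quad & \dot{r} = \mu r - r^{3}, \ \ \dot{\theta} = \omega, &&
  \lambda_{1,2} = \mu \pm i\omega \ \text{ at } r = 0
\end{aligned}
```

In all three cases the real part of the eigenvalue of the stable state tends to zero as $\mu \to 0$ from the stable side, so the recovery time from small perturbations, of order $1/|\mathrm{Re}\,\lambda|$, grows without bound. This is the critical slowing down that generic early warning indicators exploit.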

Although these theorems hold generally, this paper focuses on three types of local bifurcation that represent distinct behaviours in dynamical systems: an abrupt jump from one equilibrium state to another (fold bifurcation) [18], an exchange of stability between two equilibria (transcritical bifurcation) [19], and the transition to oscillatory behaviour (Hopf bifurcation) [20]. For these bifurcation types, the dominant eigenvalue (or complex-conjugate pair of eigenvalues) of the Jacobian matrix crosses the imaginary axis at the bifurcation point. Leading up to the bifurcation, the system can exhibit critical slowing down: it recovers more slowly from perturbations and becomes less resilient to disturbances, which shows up as increased auto-correlation and variance [21]. Numerous generic indicators of local bifurcations have been proposed on this basis [22,23,24,25]. For instance, the variance and lag-1 auto-correlation of a time series tend to increase prior to a bifurcation, and these trends can serve as early warning signals (EWSs) [26]. While such indicators have been instrumental in identifying and predicting tipping points and abrupt shifts, they also have limitations [27], such as an inability to specify the type of impending bifurcation. With recent advances in artificial intelligence (AI) and the development of deep learning (DL) models, it was anticipated that these models could eventually provide EWSs with greater accuracy and fewer weaknesses than generic indicators.
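The two generic indicators mentioned above can be computed with a few lines of NumPy. The sketch below is a minimal illustration rather than the procedure of any cited study: it assumes the series has already been detrended, uses a window length chosen only for illustration, and checks both indicators on a synthetic AR(1) series whose memory increases over time, a hypothetical stand-in for the slower recovery that precedes a bifurcation.

```python
import numpy as np


def rolling_ews(x, window):
    """Rolling variance and lag-1 autocorrelation over consecutive windows.

    x: 1-D array of (already detrended) residuals.
    window: number of points per window (an illustrative choice).
    Returns two arrays of length len(x) - window + 1.
    """
    x = np.asarray(x, dtype=float)
    n_windows = len(x) - window + 1
    variance = np.empty(n_windows)
    lag1_ac = np.empty(n_windows)
    for i in range(n_windows):
        seg = x[i:i + window]
        variance[i] = seg.var()
        # Lag-1 autocorrelation: correlation between the segment and itself shifted by one step
        lag1_ac[i] = np.corrcoef(seg[:-1], seg[1:])[0, 1]
    return variance, lag1_ac


# Synthetic series whose AR(1) coefficient rises from 0.1 to 0.95 (hypothetical example
# of a system losing resilience as it approaches a bifurcation).
rng = np.random.default_rng(0)
n = 2000
phi = np.linspace(0.1, 0.95, n)
series = np.zeros(n)
for t in range(1, n):
    series[t] = phi[t] * series[t - 1] + rng.standard_normal()

variance, lag1_ac = rolling_ews(series, window=400)
print(f"variance: {variance[0]:.2f} -> {variance[-1]:.2f}")
print(f"lag-1 AC: {lag1_ac[0]:.2f} -> {lag1_ac[-1]:.2f}")
```

Both indicators rise along the series; in practice their trend is often summarised by a rank correlation with time.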

The first study applying AI to the detection of tipping points in dynamical systems was by Bury et al. [28], who showed that convolutional and recurrent neural networks could identify EWSs in time series drawn from a random library of differential equations and could outperform traditional statistical indicators. Deb et al. [29] introduced EWSNet, a hybrid Convolutional Neural Network–Long Short-Term Memory (CNN-LSTM) network trained on simulated time series from ecological, biological, and climate models. EWSNet achieved high accuracy in classifying catastrophic and non-catastrophic transitions and proved robust to noise, short time series, and irregular sampling. In a follow-up study, Bury et al. [30] extended these works to discrete-time systems, further supporting the generalisability of DL models to diverse bifurcation scenarios. Dylewsky et al. [31] demonstrated that universal EWSs could be extracted from DL models trained on climate system simulations, supporting the broader applicability of these methods. Dylewsky et al. [25] advanced this work by embedding bifurcations in high-dimensional systems, showing that DL models remain effective even when the underlying signals are distributed across many variables. Most recently, Huang et al. [32] addressed rate-induced tipping (R-tipping), for which classical indicators such as critical slowing down fail. Their interpretable DL framework predicted transition probabilities in noisy, time-varying systems and extracted higher-order fingerprints of instability. In parallel, Dylewsky et al. [33] tackled spatially patterned phase transitions using neural architectures tailored to spatiotemporal systems. Together, these studies form a growing body of work supporting the use of deep learning, with CNN–LSTM architectures among the most widely used and effective models, for robust and generalisable detection of critical transitions in noisy, nonlinear dynamical systems.

In contrast to previous approaches that generate extensive libraries of stochastic differential equations and discard those that do not exhibit bifurcations [30], we propose a theoretically grounded method that constructs the training dataset directly from the normal forms of canonical bifurcations. This substantially reduces computational overhead while ensuring that the training data capture the essential dynamical features associated with each bifurcation type. The first objective of this study is to demonstrate that such a targeted construction is not only feasible but also more efficient than relying on random dynamical systems. Furthermore, we extend the DL-based early warning framework of Bury et al. [28] to accommodate coloured noise, a feature often overlooked despite its prevalence in empirical systems. Many ecological and climate systems that undergo critical transitions are influenced by auto-correlated, coloured noise [34,35], which has prompted recent interest in its ecological impacts [36,37,38,39,40,41,42,43,44,45]. By generating training data with varying levels of redness, we evaluate the robustness of our CNN-LSTM classifier under both white and coloured noise, extending the applicability of EWS frameworks to real-world, noisy, nonlinear systems.

3. Results

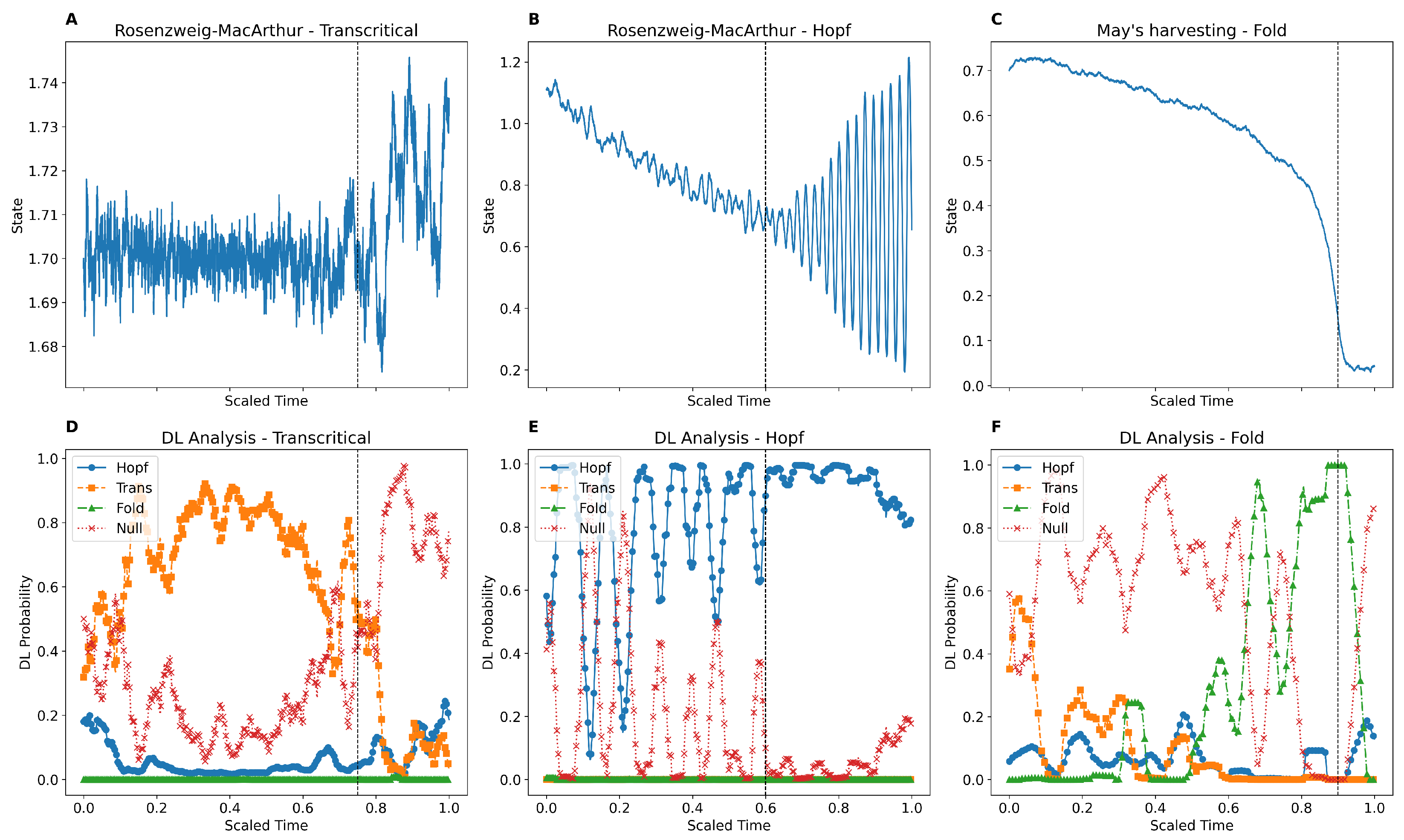

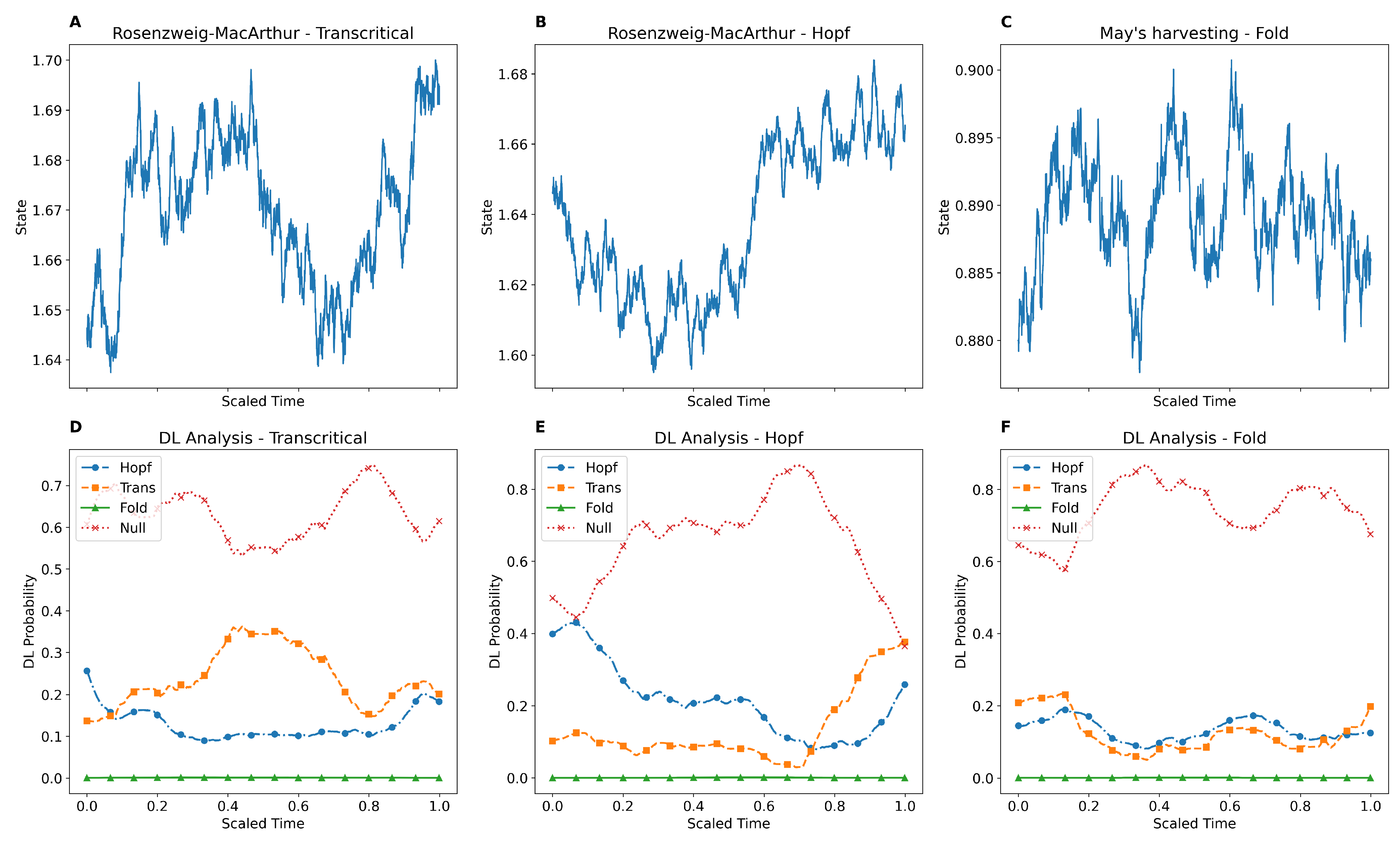

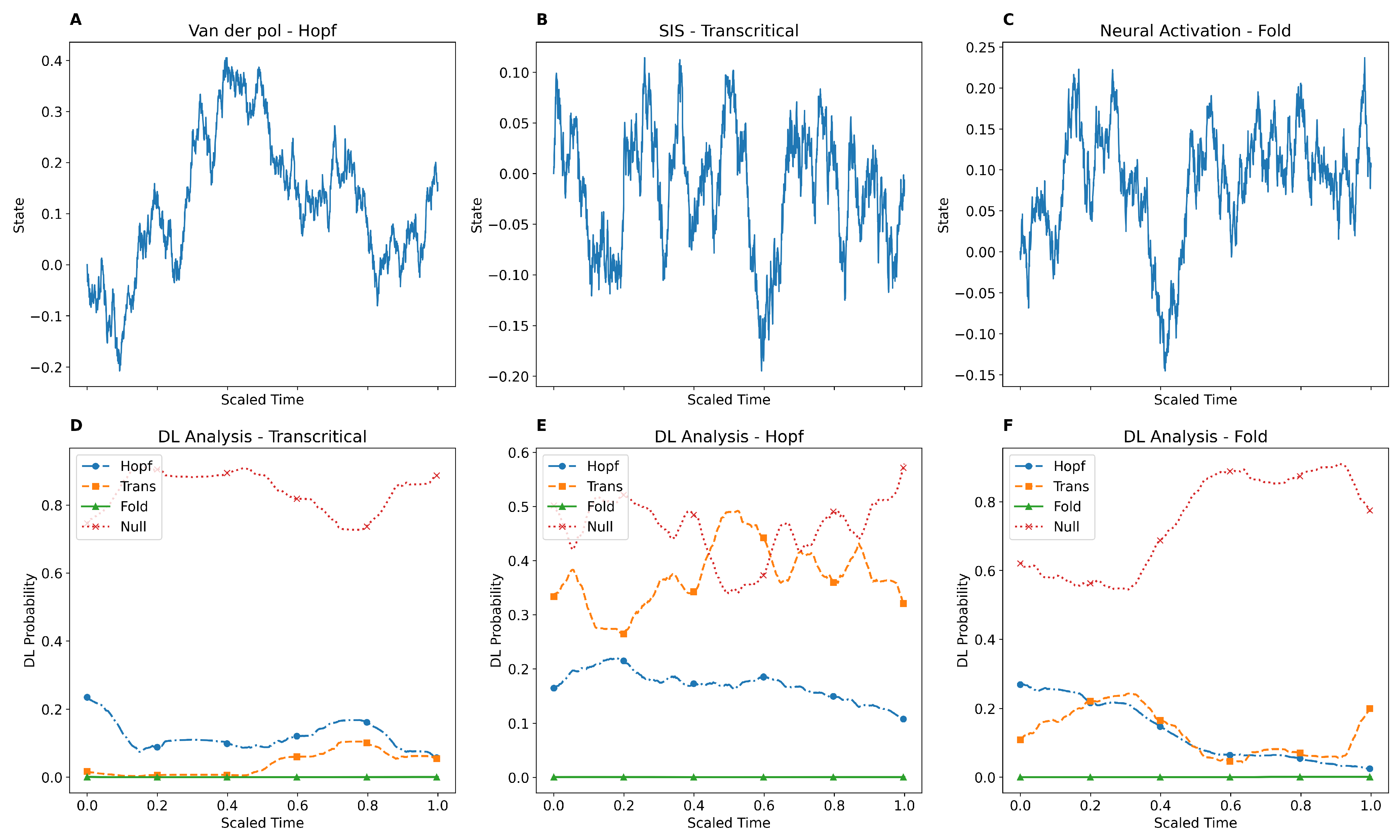

We have demonstrated that the DL model can provide EWS for bifurcations in mathematical models. For this purpose, we utilised six well-known mathematical models: neural activation and May’s harvesting model for fold bifurcation, the Rosenzweig–MacArthur and SIS models for transcritical bifurcation, and the Rosenzweig–MacArthur and Van der Pol models for Hopf bifurcation. We simulated these mathematical models with an additive stochastic term, starting from the equilibrium point and linearly varying the bifurcation parameter toward the bifurcation point throughout the simulation. Since the tipping point can occur earlier than the theoretical prediction, we employed the binary segmentation algorithm to locate the tipping point. The DL model assigns four probabilities (fold, transcritical, Hopf, and null) to each point in the simulated time series. The null category in our study represents stochastic time series that either do not include a tipping point or are relatively distant from it. Assigning a relatively high probability to the correct type of bifurcation before the actual location of the tipping point is considered a signal in this study.
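The cost function used with binary segmentation is not specified here. A minimal sketch, assuming an L2 (mean-shift) cost, is shown below: it greedily splits the series at the point that most reduces the within-segment sum of squared deviations. Libraries such as `ruptures` provide a comparable `Binseg` estimator; this pure-NumPy version is only illustrative, and `min_size` is a hypothetical parameter.

```python
import numpy as np


def _sse(seg):
    # L2 cost: sum of squared deviations from the segment mean
    return float(((seg - seg.mean()) ** 2).sum())


def best_split(x, min_size=10):
    """Return (index, gain) of the single split that most reduces the L2 cost of x."""
    total = _sse(x)
    best_idx, best_gain = None, 0.0
    for k in range(min_size, len(x) - min_size + 1):
        gain = total - _sse(x[:k]) - _sse(x[k:])
        if gain > best_gain:
            best_idx, best_gain = k, gain
    return best_idx, best_gain


def binary_segmentation(x, n_bkps=1, min_size=10):
    """Greedy binary segmentation: repeatedly split the segment whose best split gains most."""
    x = np.asarray(x, dtype=float)
    segments = [(0, len(x))]
    breakpoints = []
    for _ in range(n_bkps):
        best = None  # (gain, segment index, absolute split position)
        for j, (a, b) in enumerate(segments):
            k, gain = best_split(x[a:b], min_size)
            if k is not None and (best is None or gain > best[0]):
                best = (gain, j, a + k)
        if best is None:
            break  # no admissible split left
        _, j, pos = best
        a, b = segments.pop(j)
        segments.extend([(a, pos), (pos, b)])
        breakpoints.append(pos)
    return sorted(breakpoints)


rng = np.random.default_rng(1)
# Synthetic series: a noisy equilibrium that shifts to a new level at index 600
series = np.concatenate([rng.normal(0.0, 0.1, 600), rng.normal(1.0, 0.1, 400)])
print(binary_segmentation(series, n_bkps=1))
```

With a single breakpoint requested, the returned index marks the estimated tipping point; asking for more breakpoints keeps splitting the segment with the largest cost reduction.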

Figure 1 and Figure 2 depict the results of the DL analysis of the introduced mathematical models. The estimated location of the tipping point is indicated on the plots with a dashed line. As shown, the DL model not only provides EWSs but also identifies the correct type of bifurcation. Furthermore, at the beginning of the simulated time series, where the tipping point is relatively distant, the DL model assigns the highest probability to null. As the series approaches the tipping point, the model switches to the correct type of bifurcation, and it returns to null after the tipping point has passed. This behaviour is consistent across all six mathematical models. The mathematical descriptions, full details of the simulations, and null analyses are provided in Section 5.

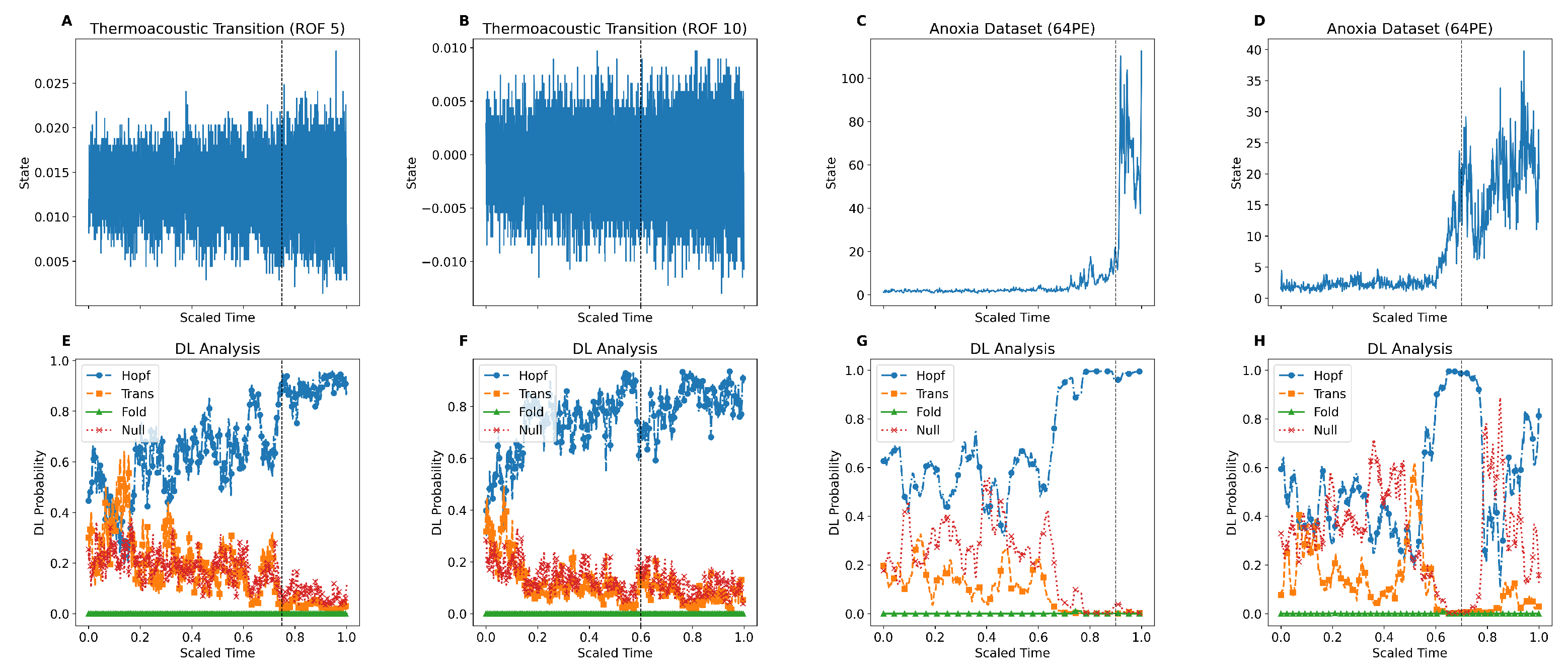

In addition, the DL model performs robustly in providing EWSs on empirical data containing tipping points. We analysed two empirical datasets: one representing the transition to thermoacoustic instability in a horizontal Rijke tube, a prototypical thermoacoustic system, and the other capturing episodes of anoxia in the Eastern Mediterranean recorded in sedimentary archives. To obtain sufficient resolution for the analysis, we increased the number of points in these time series by linear interpolation.
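Linear interpolation onto a denser, evenly spaced grid can be done with `numpy.interp`. In the sketch below, the target length `n_out` is a hypothetical parameter (it could, for example, be chosen to match the 1000-step series used in training); the exact target resolution is not stated in the text. `numpy.interp` expects increasing time stamps, so sediment ages listed from top to bottom may need to be reversed first.

```python
import numpy as np


def upsample_linear(t, x, n_out):
    """Resample an irregular series (t, x) onto n_out evenly spaced times.

    Values between observations are obtained by straight-line interpolation,
    so no new extrema are introduced.
    """
    t = np.asarray(t, dtype=float)
    x = np.asarray(x, dtype=float)
    if np.any(np.diff(t) <= 0):
        raise ValueError("time stamps must be strictly increasing")
    t_new = np.linspace(t[0], t[-1], n_out)
    return t_new, np.interp(t_new, t, x)


# Four irregularly spaced observations (hypothetical values)
t_obs = [0.0, 10.0, 35.0, 50.0]
x_obs = [1.0, 2.0, 3.0, 2.0]
t_new, x_new = upsample_linear(t_obs, x_obs, n_out=11)
print(np.round(x_new, 2))
```

Linear interpolation adds points without adding information, so the interpolated stretches are locally smoother than the raw record; this is worth keeping in mind when interpreting auto-correlation-based signals.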

Figure 3 illustrates the results of the DL analysis on four examples of these time series (two from each dataset). The estimated location of the tipping point is indicated with a dashed line on each subplot. As shown, the DL model provides EWSs on these time series before the actual tipping point, switching from null to one of the bifurcation types. Notably, for the anoxia data, the DL classifier predicts a Hopf transition, whereas the empirical data suggest a transition more akin to a fold-type bifurcation. Additional analysis of the empirical data is provided in Section 5.

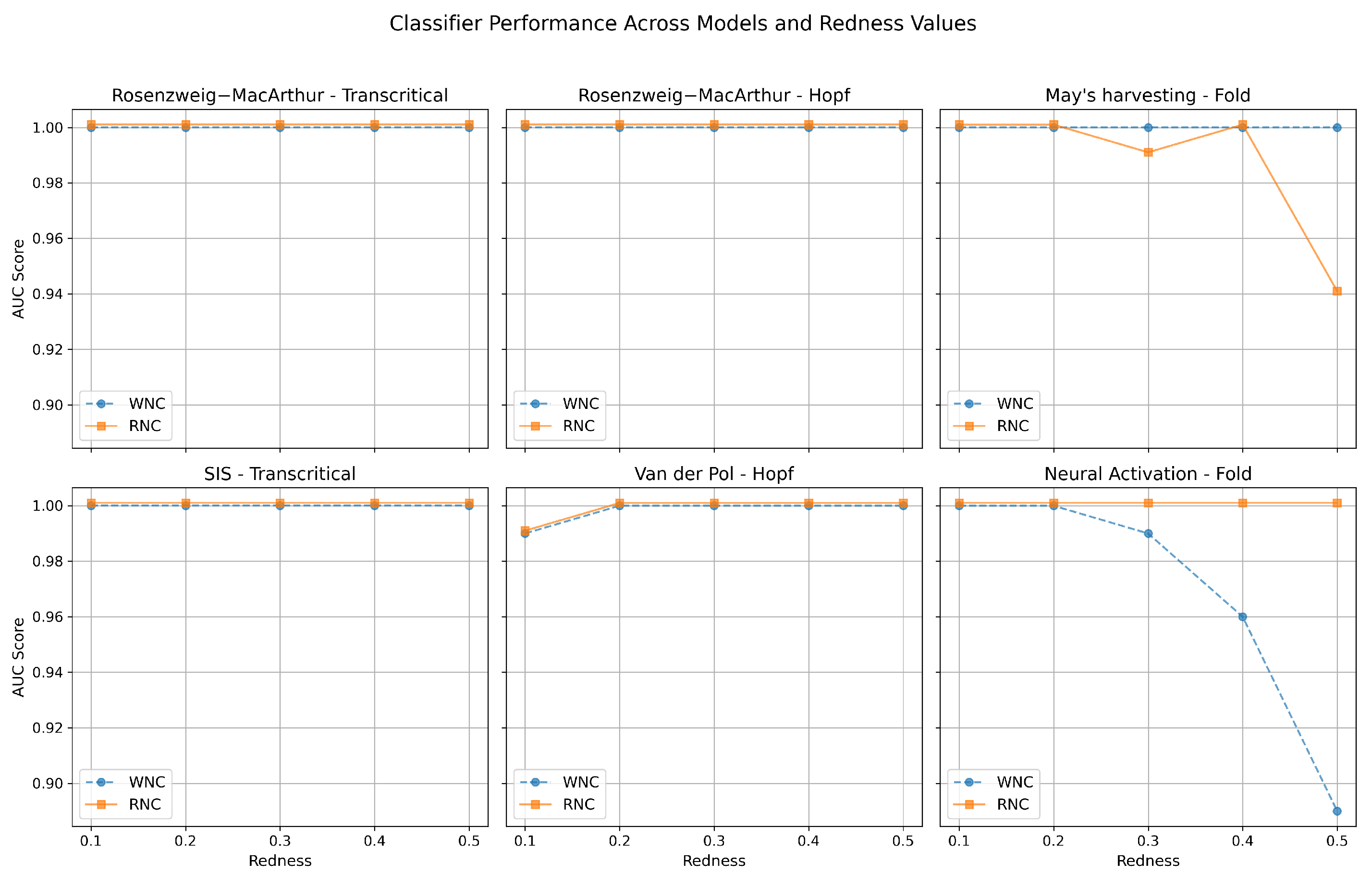

Moreover, the DL model performs consistently well on stochastic time series containing coloured noise. To show this, we repeated the same training process with the same DL architecture (CNN-LSTM), but this time used red noise with specified redness values as the additive stochastic term when simulating the deterministic normal forms of the different bifurcation types. This produced five additional DL classifiers, each trained on normal forms combined with red noise of redness 0.1, 0.2, 0.3, 0.4, or 0.5. A larger redness value signifies greater dominance of low-frequency components, stronger persistence, and smoother, longer-term variability in the time series. The baseline DL model used in the previous sections is trained on white noise and can be regarded as the special case of redness 0. To quantify and compare the performance of the red noise classifiers against the white noise classifier, we simulated stochastic time series for the six mathematical models with redness values from 0 to 0.5. Each mathematical model was simulated 50 times, and ROC curves were computed for all classifiers. Calculating the AUC score of each classifier for every combination of mathematical model and redness value allowed us to compare their performance. The aim was to test whether the DL model trained on white noise maintains strong performance in detecting bifurcations in time series containing red noise. The results in Figure 4 confirm this expectation. Each of the six subplots corresponds to one of the mathematical models; the y-axis shows the AUC score and the x-axis the redness value. The performance of the white noise classifier is shown in blue, and that of the red noise classifier in orange. For each redness value, the classifier trained on white noise performs comparably to the classifier trained on red noise with the corresponding redness value. Further explanations are provided in Section 5.

The results presented in this section show that our approach to developing a DL model for EWSs of tipping points generalises to coloured noise and can be applied both to simulated time series with red noise (redness up to 0.5) and to empirical time series. Moreover, a classifier trained on white noise maintains a high level of performance on coloured time series.

4. Discussion

4.1. Conclusions

In this study, we developed a DL framework for detecting the EWSs of tipping points in complex dynamical systems. Our primary objective was to construct a training methodology grounded in theory by generating time series exclusively from the normal forms of canonical bifurcations. This approach eliminates the need for large, randomly sampled libraries of stochastic differential equations and ensures that the training data directly reflects the core dynamical patterns associated with critical transitions.

We first demonstrated that a CNN-LSTM model trained on these theoretically constructed time series is capable of accurately identifying EWS in out-of-sample data. This included both synthetic time series from unseen mathematical models and real-world empirical datasets, highlighting the model’s ability to generalise beyond the training distribution.

A second objective was to assess the robustness of this framework under realistic noise conditions. To this end, we replaced white noise in the training simulations with coloured (red) noise, introducing temporal auto-correlation through AR(1) processes. We found that the performance of the DL model remains stable across the redness values tested (0 to 0.5).

Finally, we performed a systematic evaluation using ROC curves and their corresponding AUC scores. These experiments confirmed that models trained on coloured noise perform well when tested on coloured stochastic time series with bifurcations. Importantly, models trained on white noise also generalise well to coloured noise settings, underscoring the flexibility of the training approach. Taken together, our results indicate that deep learning models trained on theoretically grounded simulations can serve as reliable tools for bifurcation detection, even in the presence of realistic stochasticity and noise correlations.

4.2. Limitations and Future Work

Nevertheless, the models and assumptions used in this study limit its scope. For instance, we focused exclusively on three types of local bifurcation. Global bifurcations could also be studied by expanding the training set to include them, since DL models can only recognise patterns they have been trained on. Furthermore, our treatment of coloured noise was limited. Coloured noise refers to noise with a power spectral density (PSD) proportional to $1/f^{\beta}$, where $f$ is frequency and $\beta$ is the spectral exponent; each value of $\beta$ characterises a different type of noise. While our method spans a continuum between white noise ($\beta = 0$) and red noise ($\beta = 2$), it does not address other types of noise, such as blue noise ($\beta = -1$) and violet noise ($\beta = -2$). Moreover, the training set relied entirely on normal forms, whereas incorporating higher-order terms could increase the accuracy of the DL model.
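To make the link between AR(1) redness and the spectral exponent concrete, the derivation below assumes a unit-variance AR(1) process with lag-1 coefficient $\rho$ (our notation for the redness; the paper's Equation (1) is not reproduced in this section) and states its standard power spectral density, with $f$ in cycles per time step.

```latex
\begin{aligned}
\xi_{t+1} &= \rho\,\xi_t + \sqrt{1-\rho^{2}}\,\varepsilon_t,
  \qquad \varepsilon_t \sim \mathcal{N}(0,1), \\
S(f) &= \frac{1-\rho^{2}}{1 + \rho^{2} - 2\rho\cos(2\pi f)},
  \qquad |f| \le \tfrac{1}{2}, \\
1 + \rho^{2} - 2\rho\cos(2\pi f) &\approx (1-\rho)^{2} + 4\pi^{2}\rho f^{2}
  \qquad (f \ll 1), \\
\Rightarrow\quad S(f) &\propto f^{-2}
  \qquad \text{for } \frac{1-\rho}{2\pi\sqrt{\rho}} \ll f \ll 1 .
\end{aligned}
```

For $\rho = 0$ the spectrum is flat (white, $\beta = 0$), and as $\rho \to 1$ a $1/f^{2}$ range opens up over an increasing band of frequencies. For $\rho = 0.5$, for example, the lower bound is about 0.11 cycles per time step, so a clear $1/f^{2}$ range only emerges for redness values closer to 1.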

In future work, we aim to address these limitations. Specifically, we intend to extend this study to other types of local and global bifurcations. Although noise in empirical climate data is predominantly red, we plan to incorporate other types of coloured noise to build a more comprehensive classifier. Additionally, we expect that adding higher-order terms to the training set will improve the accuracy and efficiency of the DL classifier, and this will be a focus of our future work.

5. Supporting Information

5.1. Model Architecture and Data Preparation

5.1.1. Generation of Training Data for the DL Classifier

We used the normal forms of three types of bifurcation with an additive stochastic term to construct the training dataset. For fold bifurcation, we used

The bifurcation occurs at

. For transcritical bifurcation, we used

The bifurcation occurs at

. For Hopf bifurcation, we used

The bifurcation occurs at

. For the null case, we used

Here,

is a constant, and

is a stochastic term, which can be either white noise drawn from a Gaussian distribution with a mean of zero and a standard deviation of one, or red noise generated using Equation (1).
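Equation (1) is not reproduced in this section. A common way to generate red noise with a prescribed redness, which we assume here for illustration, is a unit-variance AR(1) process whose lag-1 autocorrelation equals the redness; setting the redness to 0 recovers the Gaussian white noise described above. The function name `red_noise` is hypothetical.

```python
import numpy as np


def red_noise(n, redness, rng=None):
    """Unit-variance AR(1) noise whose lag-1 autocorrelation equals `redness`.

    Assumed recursion: xi[t] = redness * xi[t-1] + sqrt(1 - redness**2) * eps[t],
    with eps ~ N(0, 1). redness = 0 gives Gaussian white noise.
    """
    if not 0.0 <= redness < 1.0:
        raise ValueError("redness must lie in [0, 1)")
    rng = np.random.default_rng() if rng is None else rng
    eps = rng.standard_normal(n)
    xi = np.empty(n)
    xi[0] = eps[0]  # draw the first value from the stationary N(0, 1) distribution
    scale = np.sqrt(1.0 - redness ** 2)  # keeps the variance at 1 for every redness
    for t in range(1, n):
        xi[t] = redness * xi[t - 1] + scale * eps[t]
    return xi


for r in (0.0, 0.1, 0.3, 0.5):
    xi = red_noise(100_000, r, rng=np.random.default_rng(0))
    lag1 = np.corrcoef(xi[:-1], xi[1:])[0, 1]
    print(f"redness={r:.1f}  sample lag-1 autocorrelation={lag1:.3f}  variance={xi.var():.3f}")
```

Scaling the innovations by $\sqrt{1-\rho^2}$ keeps the noise variance fixed, so changing the redness alters only the temporal correlation and not the overall noise intensity.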

For each type of bifurcation, we simulated 5000 time series, starting from an equilibrium point far from bifurcation (e.g., a random number between and , and for fold bifurcation). The system was gradually and linearly brought closer to the bifurcation point over 1000 steps. The simulation was halted before the bifurcation point to ensure that the deep learning (DL) model was exposed only to the pre-bifurcation phase.

Once the training set had been constructed with white noise (equivalent to redness 0), we repeated the process for redness values of 0.1, 0.2, 0.3, 0.4, and 0.5. The Euler–Maruyama method was used to simulate these time series with .
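A minimal Euler–Maruyama sketch for one training trajectory is shown below. Because the normal-form equations, initial conditions, and time step are not reproduced in this section, it assumes the textbook fold normal form $\dot{x} = \mu + x^{2}$, a linear ramp of $\mu$ over 1000 steps that stops short of the bifurcation at $\mu = 0$, and placeholder values for `sigma` and `dt`; none of these are the authors' settings. The optional `noise` argument accepts any unit-variance sequence, such as AR(1) red noise, in place of white noise, which is a simple discrete-time approximation of coloured forcing.

```python
import numpy as np


def simulate_fold(n_steps=1000, mu_start=-1.0, mu_end=-0.01, sigma=0.01, dt=0.01,
                  noise=None, rng=None):
    """Euler–Maruyama run of dx = (mu + x**2) dt + sigma dW with mu ramped linearly.

    Assumptions (illustrative, not the paper's settings): textbook fold normal form,
    mu ramped from mu_start to mu_end < 0 so the run stops before the bifurcation
    at mu = 0, and placeholder values of sigma and dt.
    noise: optional length-n_steps array of unit-variance samples (e.g. AR(1) red
    noise); Gaussian white noise is drawn if it is omitted.
    """
    rng = np.random.default_rng() if rng is None else rng
    if noise is None:
        noise = rng.standard_normal(n_steps)
    mu = np.linspace(mu_start, mu_end, n_steps)
    x = np.empty(n_steps)
    x[0] = -np.sqrt(-mu_start)  # start on the stable branch x* = -sqrt(-mu)
    for t in range(1, n_steps):
        drift = mu[t - 1] + x[t - 1] ** 2
        x[t] = x[t - 1] + drift * dt + sigma * np.sqrt(dt) * noise[t - 1]
    return mu, x


mu, x = simulate_fold(rng=np.random.default_rng(0))
print(f"mu: {mu[0]:.2f} -> {mu[-1]:.2f}, x: {x[0]:.3f} -> {x[-1]:.3f}")
```

Analogous functions for the transcritical and Hopf cases would differ only in the drift term and, for Hopf, in using two state variables.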

Before feeding these time series into the DL model, we pre-processed them using a Gaussian kernel. We also tested other pre-processing methods, including moving averages, differencing, and Z-score normalisation, but obtained the best performance with the Gaussian kernel, whose bandwidth was set to 0.1 times the length of the time series (i.e., 100 for the 1000-step series).
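The sketch below illustrates Gaussian-kernel pre-processing with the bandwidth set to 0.1 times the series length. It assumes, for illustration only, that the kernel estimates a smooth trend that is then subtracted and that the residuals are passed to the classifier; if the smoothed series itself was used, `trend` should be returned instead. The smoother is a Nadaraya–Watson estimator with normalised weights, which is somewhat biased near the ends of the series.

```python
import numpy as np


def gaussian_smooth(x, bandwidth):
    """Nadaraya–Watson smoother with a Gaussian kernel, evaluated at every index."""
    x = np.asarray(x, dtype=float)
    idx = np.arange(len(x))
    # weights[i, j] = exp(-(i - j)**2 / (2 * bandwidth**2)); each row is normalised to sum to 1
    weights = np.exp(-0.5 * ((idx[:, None] - idx[None, :]) / bandwidth) ** 2)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ x


def gaussian_detrend(x, frac=0.1):
    """Return residuals after removing a Gaussian-kernel trend with bandwidth frac * len(x)."""
    x = np.asarray(x, dtype=float)
    trend = gaussian_smooth(x, bandwidth=frac * len(x))
    return x - trend


rng = np.random.default_rng(2)
n = 1000
raw = 0.01 * np.arange(n) + rng.normal(0.0, 0.1, n)  # linear trend plus noise
resid = gaussian_detrend(raw, frac=0.1)  # bandwidth = 100 for a 1000-step series
print(f"variance before: {raw.var():.3f}, after: {resid.var():.3f}")
```

The dense weight matrix keeps the code short and is fine for series of a few thousand points; longer records would call for a convolution-based smoother.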

5.1.2. Data Requirements and Sequence Length Sensitivity

In the left panel of Figure 5, we evaluate how the amount of training data affects model performance. Starting with a dataset of 20,000 time series, we incrementally increase the fraction used for training while keeping the test set fixed, and assess the model’s performance using the F1-score. Performance improves substantially as the training fraction increases from 0.1 to 0.7, with the F1-score rising sharply at first. Beyond a training fraction of 0.7, performance plateaus, indicating that the model reaches a saturation point in learning. This suggests that the training process is robust and that about 70% of the full dataset suffices to achieve near-optimal performance, highlighting the data efficiency of our DL framework.

In the right panel of Figure 5, we examine the impact of time series length on model precision. Using the full set of 20,000 time series, we repeat the same experimental setting across various sequence lengths to test the model’s sensitivity to temporal resolution. For relatively short time series, model precision is notably lower, likely because they contain too little temporal information to capture the dynamics preceding a bifurcation. As the sequence length increases, performance improves steadily and plateaus beyond 800 time steps. This indicates a minimum length required to capture the relevant precursors to tipping points. Although sequences longer than this threshold do not substantially improve performance, they may be advantageous when training classifiers for use with higher-resolution simulated or empirical datasets.

5.1.3. Robustness of Training Process

To evaluate the stability and reproducibility of our model, we trained the same CNN-LSTM architecture 10 times using the same training dataset containing white noise and identical hyperparameter settings.

Table 3 reports the mean and standard deviation of the key performance metrics (accuracy, F1-score, precision, and recall) across these repeated runs. The results show strong and consistent performance, with mean values of about 0.82 and standard deviations between 0.009 and 0.010. This low variability across identical training conditions shows that the model’s performance is robust to random initialisation and other sources of stochasticity in the training process, reinforcing the reliability of our deep learning framework.
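The reported metrics can be computed from a confusion matrix. The sketch below assumes macro averaging over the four classes (fold, transcritical, Hopf, null), which the text does not specify; the labels in the example are hypothetical. Summarising repeated runs as in Table 3 then amounts to taking the mean and standard deviation of each metric across runs.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, n_classes=4):
    """Rows index the true class, columns the predicted class."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm


def macro_metrics(cm):
    """Accuracy plus macro-averaged precision, recall and F1 from a confusion matrix."""
    tp = np.diag(cm).astype(float)
    pred_totals = cm.sum(axis=0)  # how often each class was predicted
    true_totals = cm.sum(axis=1)  # how often each class actually occurs
    zeros = np.zeros_like(tp)
    precision = np.divide(tp, pred_totals, out=zeros.copy(), where=pred_totals > 0)
    recall = np.divide(tp, true_totals, out=zeros.copy(), where=true_totals > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=zeros.copy(), where=denom > 0)
    return {
        "accuracy": tp.sum() / cm.sum(),
        "precision": precision.mean(),
        "recall": recall.mean(),
        "f1": f1.mean(),
    }


# Hypothetical labels: 0 = fold, 1 = transcritical, 2 = Hopf, 3 = null
y_true = [0, 0, 1, 1, 2, 2, 3, 3]
y_pred = [0, 0, 1, 2, 2, 2, 3, 0]
metrics = macro_metrics(confusion_matrix(y_true, y_pred))
print({k: round(float(v), 3) for k, v in metrics.items()})
```

Macro averaging weights the four classes equally, which suits the balanced training set (5000 series per class); a weighted average would give the same numbers when the classes are balanced.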

5.2. Deep Learning Architecture and Training Process

5.2.1. Deep Learning Model Architecture

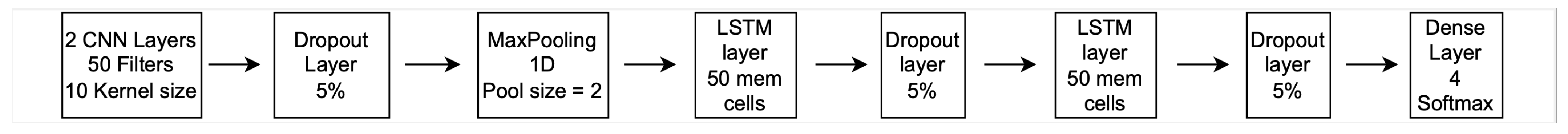

The deep learning model employed in this study is implemented as a sequential model using the Keras API (TensorFlow 2.12.1, Keras 2.12.0) for multi-class sequence classification. The architecture begins with two one-dimensional convolutional (Conv1D) layers: the first has 50 filters and the second 100 filters, both with a kernel size of 10, ReLU activation, and ‘same’ padding to preserve the temporal dimension of the input. These convolutional layers are followed by a dropout layer with a rate of 0.05 to mitigate overfitting. A MaxPooling1D layer with a pool size of 2 and a stride of 2 then downsamples the feature maps.

The convolutional block is followed by two stacked, unidirectional Long Short-Term Memory (LSTM) layers. The first LSTM layer has 50 units and returns the full sequence so that the second LSTM layer, with 10 units, receives the temporal structure. Each LSTM layer is followed by an additional dropout layer with a rate of 0.05. The network ends with a fully connected dense output layer with four units, one per class, and a softmax activation that outputs class probabilities. All layers use the LeCun kernel initializer for weight initialisation.

For training, the model uses the Adam optimizer with a learning rate of 0.01. Training runs for up to 200 epochs with a batch size of 128. An early stopping callback halts training if the validation accuracy does not improve for 10 consecutive epochs, and a ModelCheckpoint callback saves the best-performing model based on validation accuracy. The loss function is ‘sparse categorical crossentropy’, which suits multi-class classification with integer labels. Model performance is monitored during training using both the ‘accuracy’ and ‘sparse categorical accuracy’ metrics.
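As a check on the layer description above, the pure-Python sketch below walks through the output shape and trainable-parameter count of each layer, using the standard formulas Keras applies to `Conv1D`, `LSTM`, and `Dense` layers with biases. It assumes a univariate input of 1000 time steps (a hypothetical choice; the parameter counts do not depend on the sequence length). Building the same stack with `tf.keras.Sequential` and calling `model.summary()` should report the same per-layer counts.

```python
def conv1d_params(in_channels, filters, kernel_size):
    # One kernel of shape (kernel_size, in_channels) per filter, plus one bias per filter
    return (kernel_size * in_channels + 1) * filters


def lstm_params(input_dim, units):
    # Four gates, each with input weights, recurrent weights and a bias vector
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim, units):
    return input_dim * units + units


def cnn_lstm_summary(seq_len=1000, n_classes=4):
    """Return (layer, output shape, parameter count) rows for the described stack."""
    rows = []
    length, channels = seq_len, 1
    rows.append(("Conv1D(50, k=10, same)", (length, 50), conv1d_params(channels, 50, 10)))
    channels = 50
    rows.append(("Conv1D(100, k=10, same)", (length, 100), conv1d_params(channels, 100, 10)))
    channels = 100
    rows.append(("Dropout(0.05)", (length, channels), 0))
    length = (length - 2) // 2 + 1  # MaxPooling1D(pool_size=2, strides=2), 'valid' padding
    rows.append(("MaxPooling1D(2)", (length, channels), 0))
    rows.append(("LSTM(50, return_sequences)", (length, 50), lstm_params(channels, 50)))
    rows.append(("Dropout(0.05)", (length, 50), 0))
    rows.append(("LSTM(10)", (10,), lstm_params(50, 10)))
    rows.append(("Dropout(0.05)", (10,), 0))
    rows.append(("Dense(4, softmax)", (n_classes,), dense_params(10, n_classes)))
    return rows


rows = cnn_lstm_summary()
for name, shape, params in rows:
    print(f"{name:<28}{str(shape):<14}{params:>8}")
print("Total trainable parameters:", sum(p for _, _, p in rows))
```

Most parameters sit in the second convolutional layer and the first LSTM layer, while pooling halves the sequence the LSTMs must process, which keeps training on 1000-step series tractable.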

After experimenting with CNN, LSTM, and Inception layers, we found that the combination of CNN and LSTM layers produced the best results. For an ablation study, we also implemented a simplified LSTM-only model consisting of two stacked LSTM layers (50 and 10 units) with the same dropout, dense output, and training procedure as the CNN–LSTM model. All models were trained on the same dataset and evaluated under consistent conditions. Our choice of hyperparameters was informed by prior work by Bury et al. [28], and we further refined these settings through internal experiments. For each redness value, we allocated 90% of the pre-processed time series to training and 10% to validation.

Figure 6 provides a detailed overview of the DL architecture used in this study. The learning rate was set to 0.0005, training was performed for up to 200 epochs, and early stopping was employed to prevent overfitting. The code is written in Python 3.12.11 using TensorFlow 2.12.1. The validation accuracy at the end of the training process is provided in Table 2 for different redness values.

5.2.2. Training Process Information

Here we provide information on the training process, specifically the confusion matrices and classification reports for different redness values.

5.3. Theoretical Models Used for Testing

We have used some well-known mathematical models to test the performance of our trained DL model for providing early warning signals (EWSs) of bifurcations:

May’s Harvesting Model:

where x represents the population size, r is the intrinsic growth rate, and h is the harvesting rate. The harvesting term has a saturating form, with s controlling the steepness of the saturation. A fold bifurcation occurs as h is varied.

Neural Activation Model:

where x is the activation level, S represents the stimulus strength, and the two remaining parameters set the steepness and the threshold of the sigmoid function. A fold bifurcation occurs as S is varied.

Rosenzweig–MacArthur Consumer–Resource Model: Transcritical Bifurcation

Here, x is the resource population, y is the consumer population, r is the intrinsic growth rate of the resource, K is the carrying capacity, a is the maximum predation rate, b is the half-saturation constant, c is the conversion efficiency, and d is the death rate of the consumer. A transcritical bifurcation occurs as d is varied.

Rosenzweig–MacArthur Consumer–Resource Model: Hopf Bifurcation

The same equations govern this case, but a Hopf bifurcation occurs when d crosses a critical threshold, leading to oscillatory dynamics.

Van der Pol Oscillator: Hopf Bifurcation

Here, x and y are the state variables, and a single scalar parameter acts as the bifurcation parameter. A Hopf bifurcation occurs when this parameter reaches its critical value, at which the system transitions from a stable equilibrium to sustained oscillations.

SIS Model: Transcritical Bifurcation

In this model, S is the susceptible population and I is the infected population; the two remaining parameters are the infection rate and the recovery rate. A transcritical bifurcation occurs at the critical threshold $R_0 = 1$, where $R_0$ is the basic reproduction number.

All these models were simulated with an additive stochastic term. After simulation, the DL model analysed each time series using a sliding window of 100 steps, assigning probabilities to each segment. To achieve a smoother result, we applied a moving average with a window size of 100 as post-processing on the DL analysis output.
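The sliding-window analysis and moving-average post-processing can be sketched as follows. Here `classify` is a hypothetical stand-in for the trained model (for example, a wrapper around the Keras model’s `predict` that returns four class probabilities for one window), and a step of one time point between windows is an assumption. The toy classifier exists only to make the example runnable.

```python
import numpy as np


def sliding_window_probs(x, classify, window=100):
    """Apply `classify` to every window of `window` consecutive points (step 1).

    Row j of the result holds the class probabilities for x[j : j + window].
    """
    x = np.asarray(x, dtype=float)
    return np.array([classify(x[i - window:i]) for i in range(window, len(x) + 1)])


def moving_average(probs, width=100):
    """Smooth each probability column with a `width`-point moving average ('valid' mode)."""
    kernel = np.ones(width) / width
    return np.column_stack(
        [np.convolve(probs[:, k], kernel, mode="valid") for k in range(probs.shape[1])]
    )


def toy_classifier(segment):
    """Hypothetical classifier: more weight on class 0 when the window variance is large."""
    v = segment.var()
    p0 = v / (1.0 + v)
    return np.array([p0, 0.0, 0.0, 1.0 - p0])


rng = np.random.default_rng(3)
x = rng.normal(0.0, np.linspace(0.5, 2.0, 600))  # noise level grows along the series
probs = sliding_window_probs(x, toy_classifier, window=100)
smoothed = moving_average(probs, width=100)
print(probs.shape, smoothed.shape)
```

Because the moving average is a linear filter, the smoothed rows still sum to one, so they can be read directly as class probabilities.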

Validation on Bifurcating and Null Dynamical Systems

To evaluate the model’s ability to avoid false positives and correctly assign low confidence to bifurcation classes when no critical transition is present, we simulate time series from six nonlinear dynamical systems. In each case, the control parameters are held fixed so that the system remains outside any bifurcation regime throughout the simulation. These represent purely null dynamics, in which no tipping point occurs. This setup allows us to assess whether the classifier reliably withholds predictions of bifurcations when presented with stable, non-transitioning systems, an essential capability for reducing false alarms in real-world applications.

Rosenzweig–MacArthur Predator–Prey Model (Hopf or Transcritical):

with the remaining model parameters held fixed. For the transcritical case, the predation rate a is held constant at 6, and for the Hopf case it is held constant at 12.

Fold Bifurcation Model (Harvesting):

where the harvesting rate h is held constant at 0.1 over the course of the simulation.

Van der Pol Oscillator (Hopf):

with the bifurcation parameter held fixed.

SIS Epidemic Model (Transcritical):

where the infection and recovery rates are held fixed.

Neural Activation Model (Fold):

with the stimulus strength S held fixed.

Null Dynamics: The null simulations are obtained by fixing the control parameters in each system, which prevents any bifurcation. For example, in the Rosenzweig–MacArthur model, the predation rate a is held constant so that no bifurcation threshold is crossed. Similarly, in the fold and transcritical systems, the control parameters are set below the bifurcation point and remain unchanged throughout the simulation. The results are presented in Figure 7 and Figure 8.

5.4. Empirical Systems Used for Testing

In this paper, we have used two sources of empirical data to test the DL classifier.

The first dataset provides high-resolution reconstructions of oxygen dynamics from sediment cores in the eastern Mediterranean Sea [52]. It captures transitions between oxic and anoxic states, with evidence of EWSs preceding these transitions [53]. The data consist of molybdenum (Mo) and uranium (U) concentrations, proxies for anoxic and suboxic conditions, respectively, spanning eight anoxic events across three cores. A total of 26 time series are analysed, with a resolution of 10–50 years depending on the core.

The second dataset investigates transitions to self-sustained oscillations caused by thermoacoustic instability. Experiments were conducted in a horizontal Rijke tube, where the voltage across a heated wire mesh was controlled to trigger transitions via subcritical Hopf bifurcations [54]. The data include 19 forced trajectories with varying voltage ramp rates and 10 steady-state trajectories at fixed voltages. Recordings sampled at 4 kHz or 10 kHz were downsampled to 2 kHz, and each retains the 1500 points prior to the transition.

Both datasets offer insights into detecting early signs of critical transitions in distinct physical systems.

5.5. Evaluation via ROC Curve Analysis

To assess the discriminative performance of our deep learning classifier in detecting early warning signals of bifurcations, we compute Receiver Operating Characteristic (ROC) curves across a range of simulated dynamical systems. Each system is configured to either gradually approach a bifurcation point (signal present) or remain in a stable regime with fixed parameters (signal absent). This binary labeling enables quantitative evaluation of the classifier’s ability to distinguish between pre-bifurcation and null dynamics.

Each time series is then classified using two CNN-LSTM models: one trained on white noise (redness 0) and one trained on coloured (red) noise generated by an AR(1) process with the corresponding redness (0.1 to 0.5). The model outputs softmax probabilities for four classes: transcritical, Hopf, fold, and null.

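For ROC construction, we use the mean probability of the correct bifurcation class as the classifier score. Sweeping over 200 threshold values, we compute binary predictions at each level by classifying a window as “signal present” if the score exceeds the threshold. The true positive rate (TPR) and false positive rate (FPR) are then defined as

$$\mathrm{TPR} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FN}}, \qquad \mathrm{FPR} = \frac{\mathrm{FP}}{\mathrm{FP} + \mathrm{TN}},$$

where TP, FN, FP, and TN denote the numbers of true positives, false negatives, false positives, and true negatives, respectively.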

Varying the threshold traces out the ROC curve, revealing the sensitivity–specificity trade-off. To ensure robustness against stochasticity in simulation and training, this procedure is repeated over 50 Monte Carlo runs, and the resulting TPR and FPR values are averaged. The area under the ROC curve (AUC) is used to summarize performance: values close to 1 indicate strong discriminative ability, while values near 0.5 suggest random guessing. This evaluation is conducted separately for classifiers trained on white and red noise to compare their generalization under different noise structures.
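A compact NumPy sketch of this ROC procedure is given below. It takes one score per series or window (the mean probability of the correct bifurcation class) and a boolean label marking whether a transition is present. The 200 evenly spaced thresholds on [0, 1] and the trapezoidal AUC are assumptions consistent with, but not confirmed by, the description above. Averaging over Monte Carlo runs would amount to repeating the call and averaging the returned TPR and FPR arrays threshold by threshold.

```python
import numpy as np


def roc_curve(scores, labels, n_thresholds=200):
    """TPR and FPR over evenly spaced thresholds in [0, 1] for probability scores."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    thresholds = np.linspace(0.0, 1.0, n_thresholds)
    tpr = np.empty(n_thresholds)
    fpr = np.empty(n_thresholds)
    for i, tau in enumerate(thresholds):
        pred = scores > tau  # "signal present" if the score exceeds the threshold
        tp = np.sum(pred & labels)
        fn = np.sum(~pred & labels)
        fp = np.sum(pred & ~labels)
        tn = np.sum(~pred & ~labels)
        tpr[i] = tp / (tp + fn)
        fpr[i] = fp / (fp + tn)
    return fpr, tpr, thresholds


def auc(fpr, tpr):
    """Trapezoidal area under the ROC curve."""
    order = np.lexsort((tpr, fpr))  # sort by FPR, then by TPR within ties
    f, t = np.asarray(fpr)[order], np.asarray(tpr)[order]
    return float(np.sum(np.diff(f) * (t[1:] + t[:-1]) / 2.0))


rng = np.random.default_rng(4)
# Hypothetical scores: series with a transition tend to score higher than null series
labels = np.r_[np.ones(50, dtype=bool), np.zeros(50, dtype=bool)]
scores = np.r_[rng.beta(5, 2, 50), rng.beta(2, 5, 50)]
fpr, tpr, _ = roc_curve(scores, labels)
print(f"AUC = {auc(fpr, tpr):.3f}")
```

A fixed threshold grid keeps runs comparable so they can be averaged point by point; computing the AUC directly from sorted scores would avoid discretisation but complicates averaging across Monte Carlo runs.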

5.6. Extended Evaluation of the DL Model Using Empirical Datasets

We evaluated the deep learning model on both experimental and geological datasets to demonstrate its ability to generalize from synthetic to empirical time series. Specifically, the analysis includes thermoacoustic transition signals from combustor experiments at ROF 3 and ROF 4, as well as geological anoxia indicators from sediment cores at site 64PE.

Figure 9 shows the corresponding time series and the model’s bifurcation predictions.