Author Contributions

Conceptualization, Y.Y.; methodology, S.C. and X.Z. (Xiaomin Zhu); software, S.C.; validation, S.C., L.N., J.L. and X.Z. (Xuebin Zhuang); formal analysis, S.C. and X.Z. (Xuebin Zhuang); investigation, S.C.; data curation, S.C.; writing—original draft preparation, S.C. and X.Z. (Xiaomin Zhu); writing—review and editing, L.N., J.L., X.Z. (Xiaomin Zhu) and X.Z. (Xuebin Zhuang); visualization, S.C.; supervision, Y.Y., X.Z. (Xiaomin Zhu) and X.Z. (Xuebin Zhuang); project administration, Y.Y. All authors have read and agreed to the published version of the manuscript.

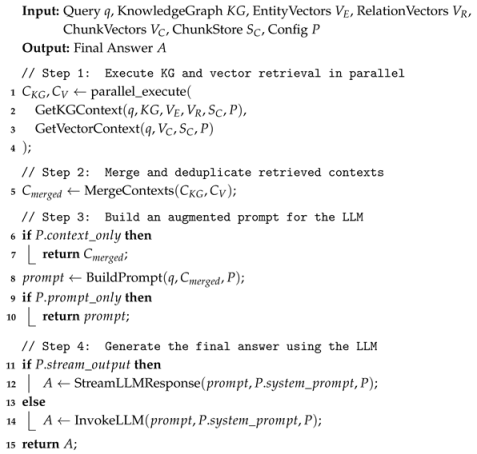

Figure 1.

System architecture diagram.

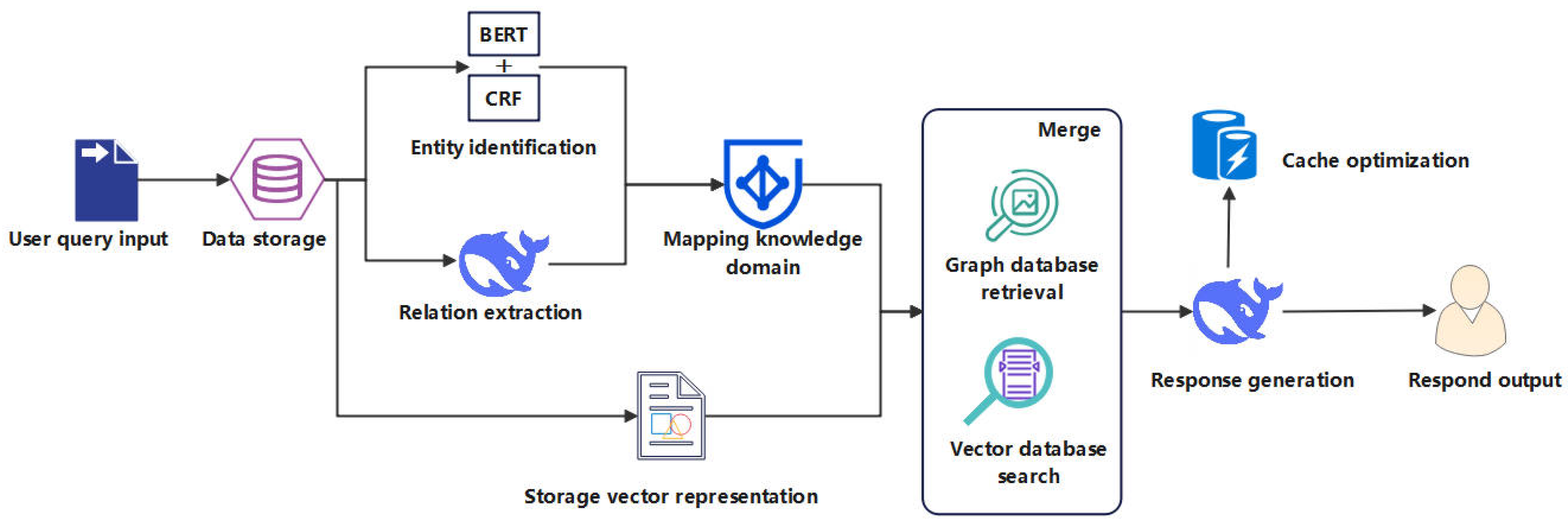

Figure 2.

Overall diagram of BERT, CRF, and BERT-CRF model architectures. (a) Schematic diagram of the BERT model. (b) Example of an entity annotation sequence for the Chinese text “交通运输部函〔2018〕785号” (Ministry of Transport Letter, No. 785 (2018)). (c) BERT-CRF model architecture diagram.

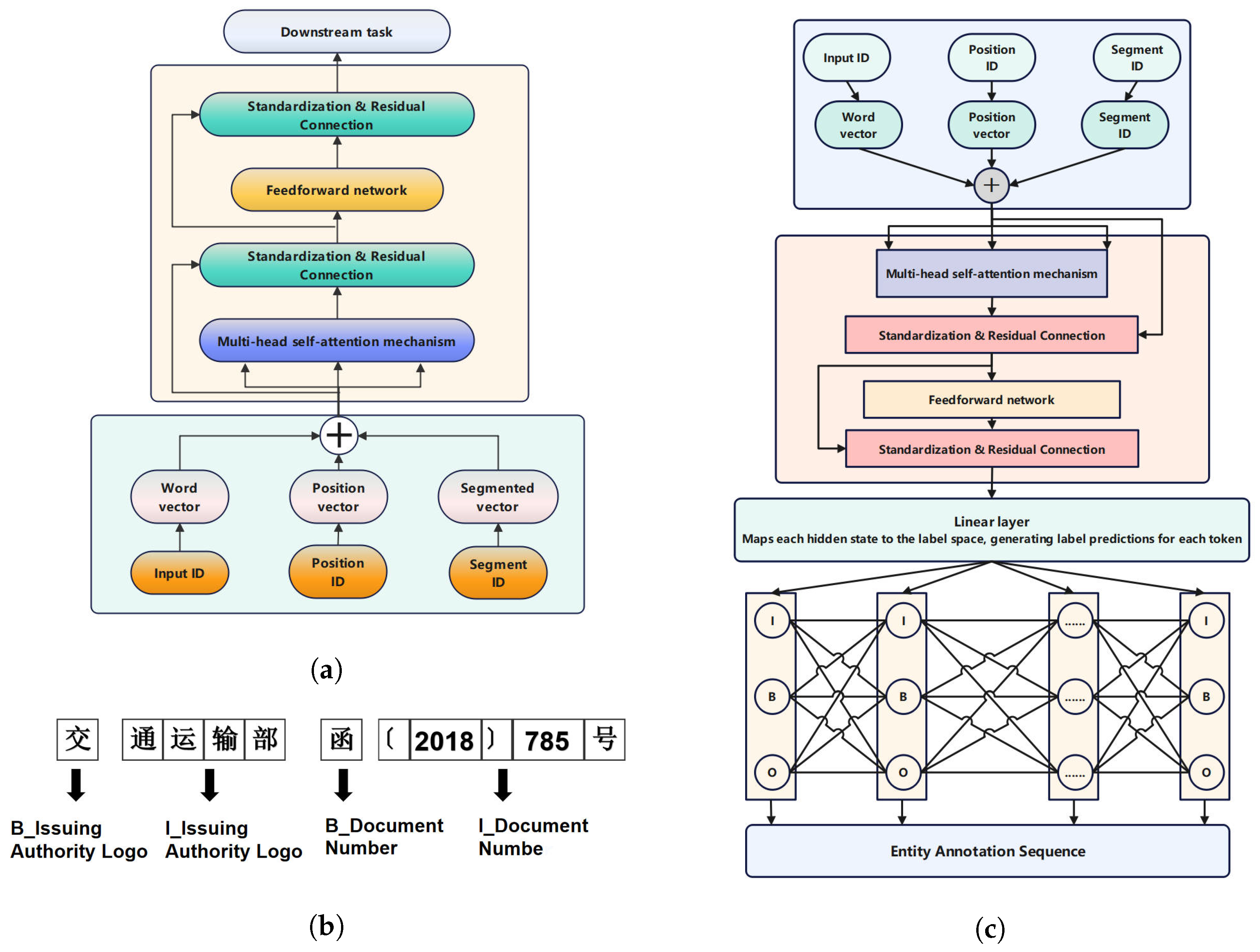

Figure 3.

Dynamic knowledge graph constructed based on entity relationships. In this graph, nodes are colored according to their entity type (e.g., organization, person, location, event). The thickness of an edge represents the strength or frequency of the relationship, with thicker lines indicating stronger or more frequent connections, and thinner lines indicating weaker or less frequent ones.

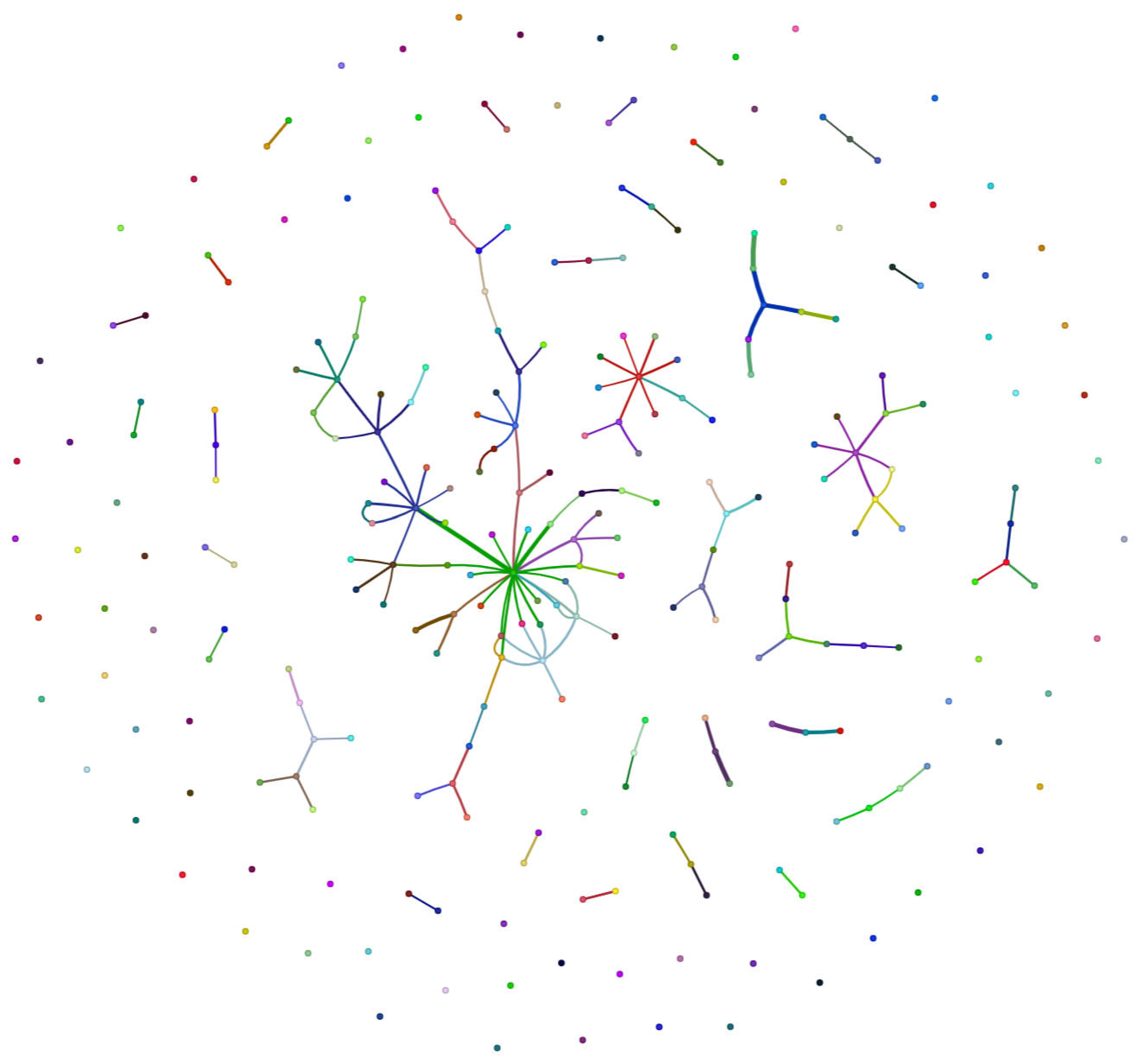

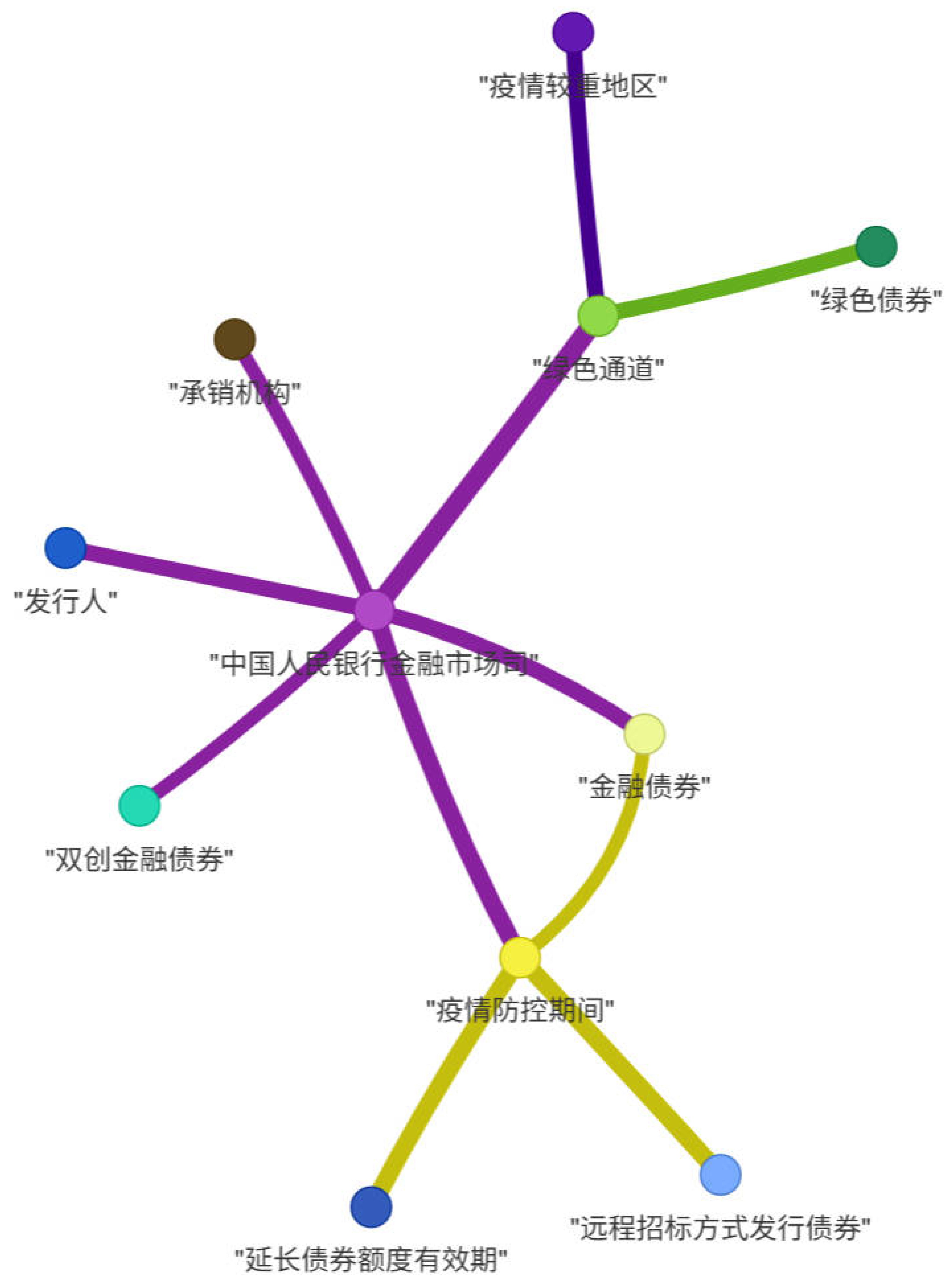

Figure 4.

Entity relationship network example, centered on the “Financial Market Department of the People’s Bank of China” (中国人民银行金融市场司). This graph illustrates how the central financial authority connects with various policy measures and economic entities during specific periods, such as the pandemic. The connecting lines represent the relationships (e.g., issuance, support, regulation) between these entities. Legend for Chinese terms: ‘发行人’ (Issuers), ‘承销机构’ (Underwriting Institutions), ‘双创金融债券’ (“Dual Innovation” Financial Bonds), ‘金融债券’ (Financial Bonds), ‘疫情防控期间’ (During the Pandemic Prevention and Control Period), ‘绿色通道’ (Green Channel, an expedited process), ‘绿色债券’ (Green Bonds), ‘疫情较重地区’ (Severely Pandemic-Affected Areas), ‘延长债券额度有效期’ (Extending the Validity of Bond Quotas), and ‘远程招标方式发行债券’ (Issuing Bonds via Remote Bidding).

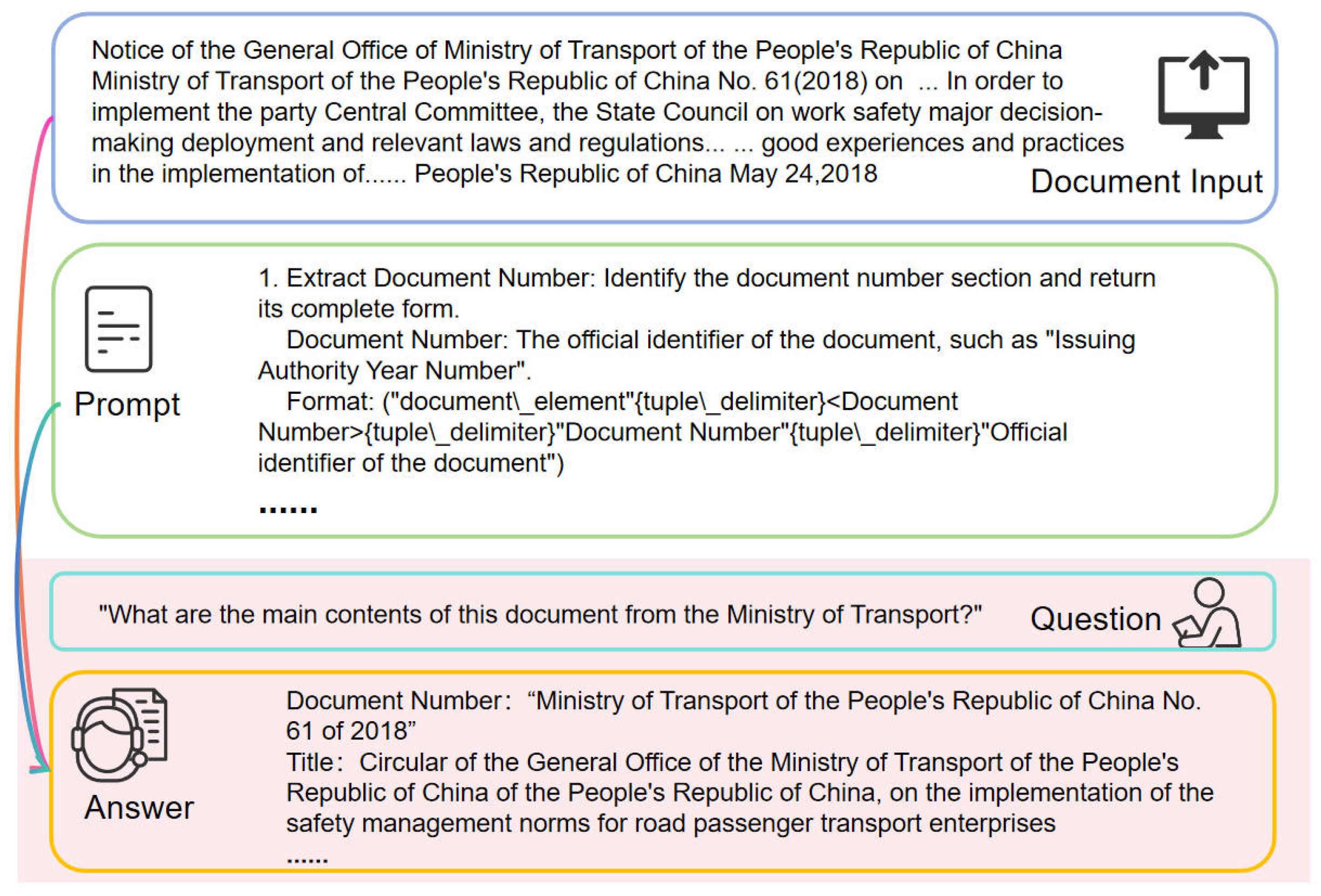

Figure 5.

Prompt engineering diagram illustrating the overall workflow from document input to final answer generation. The ellipses (…) in the example text indicate that the content has been abbreviated for illustrative purposes.

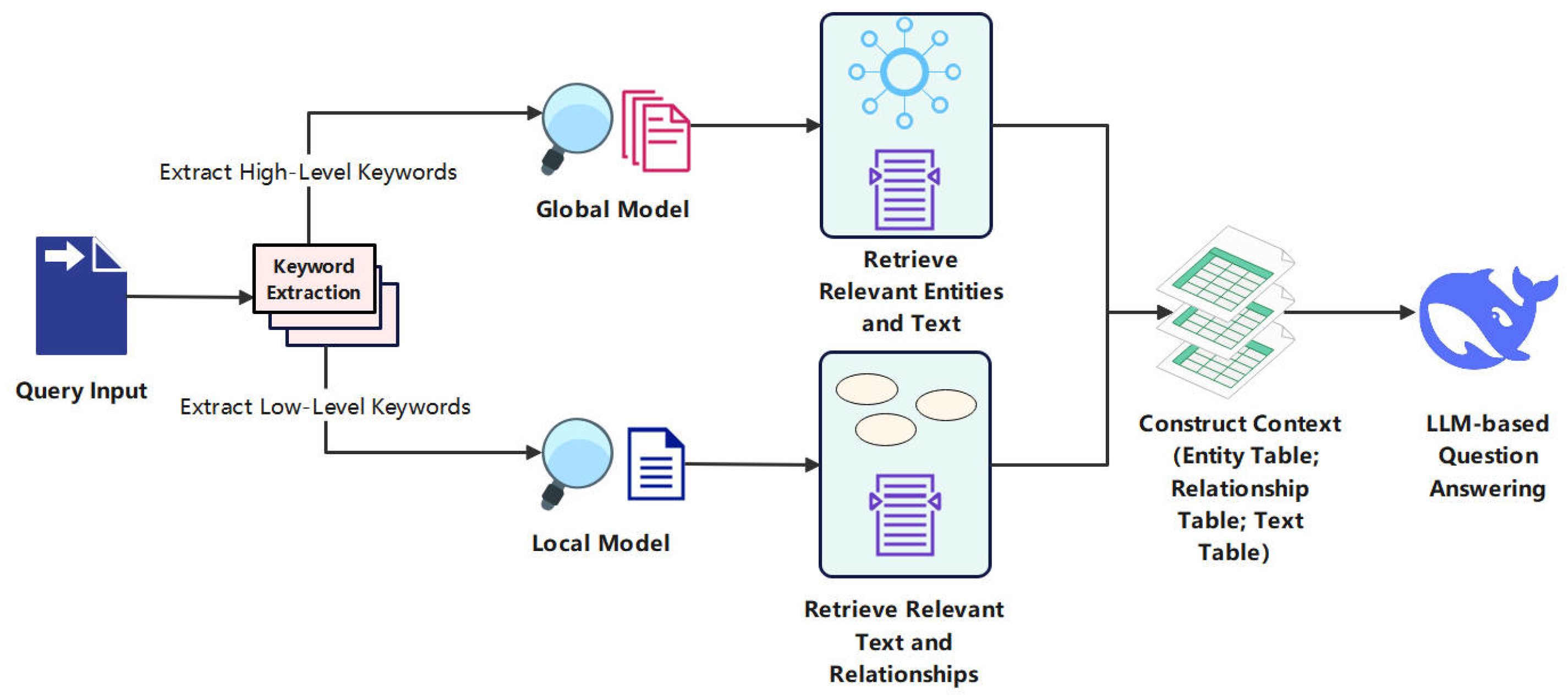

Figure 6.

Enhanced retrieval process diagram, showing keyword extraction followed by parallel retrieval from both global (KG) and local (document) models.

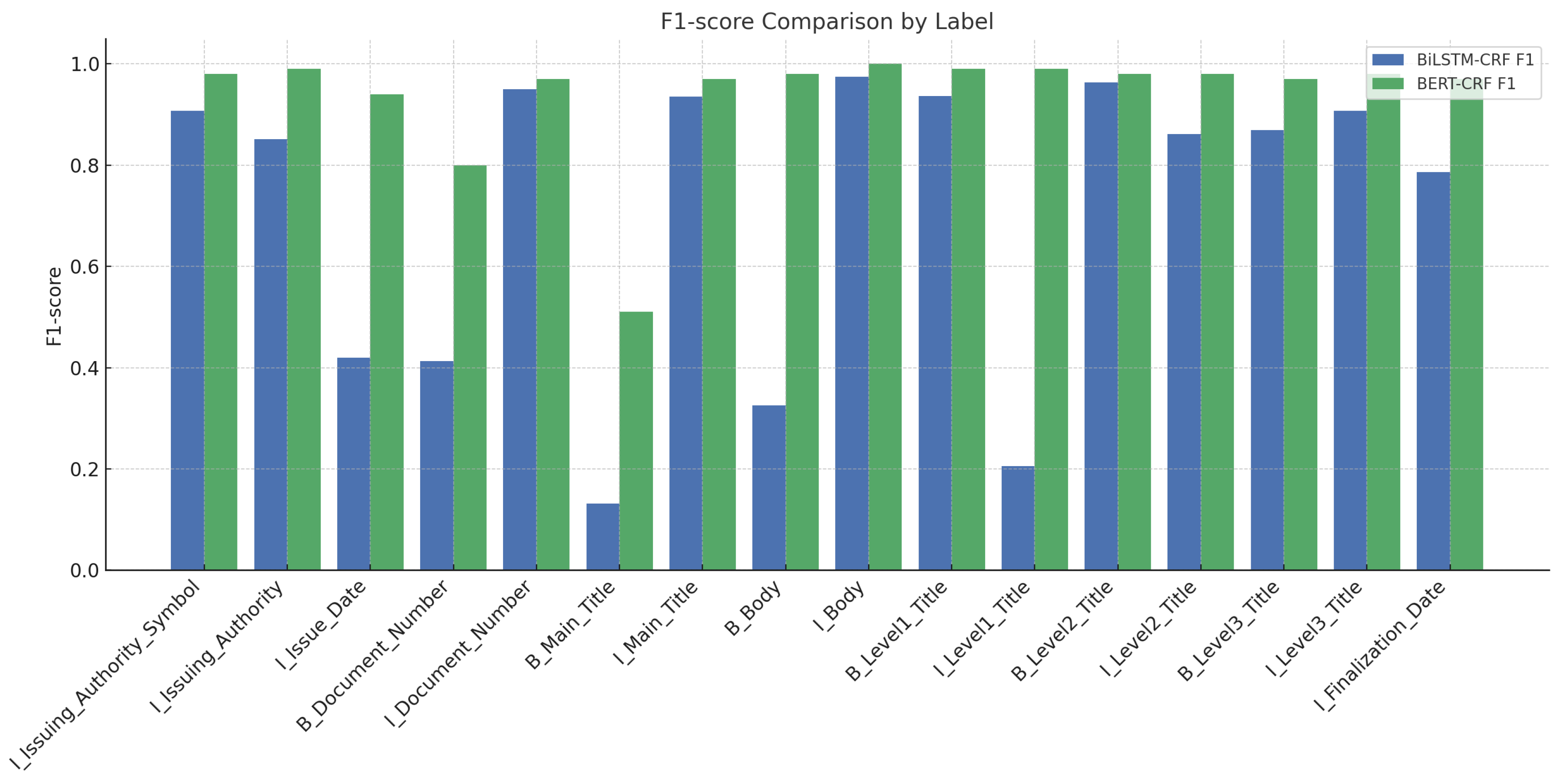

Figure 7.

F1 score comparison by label.

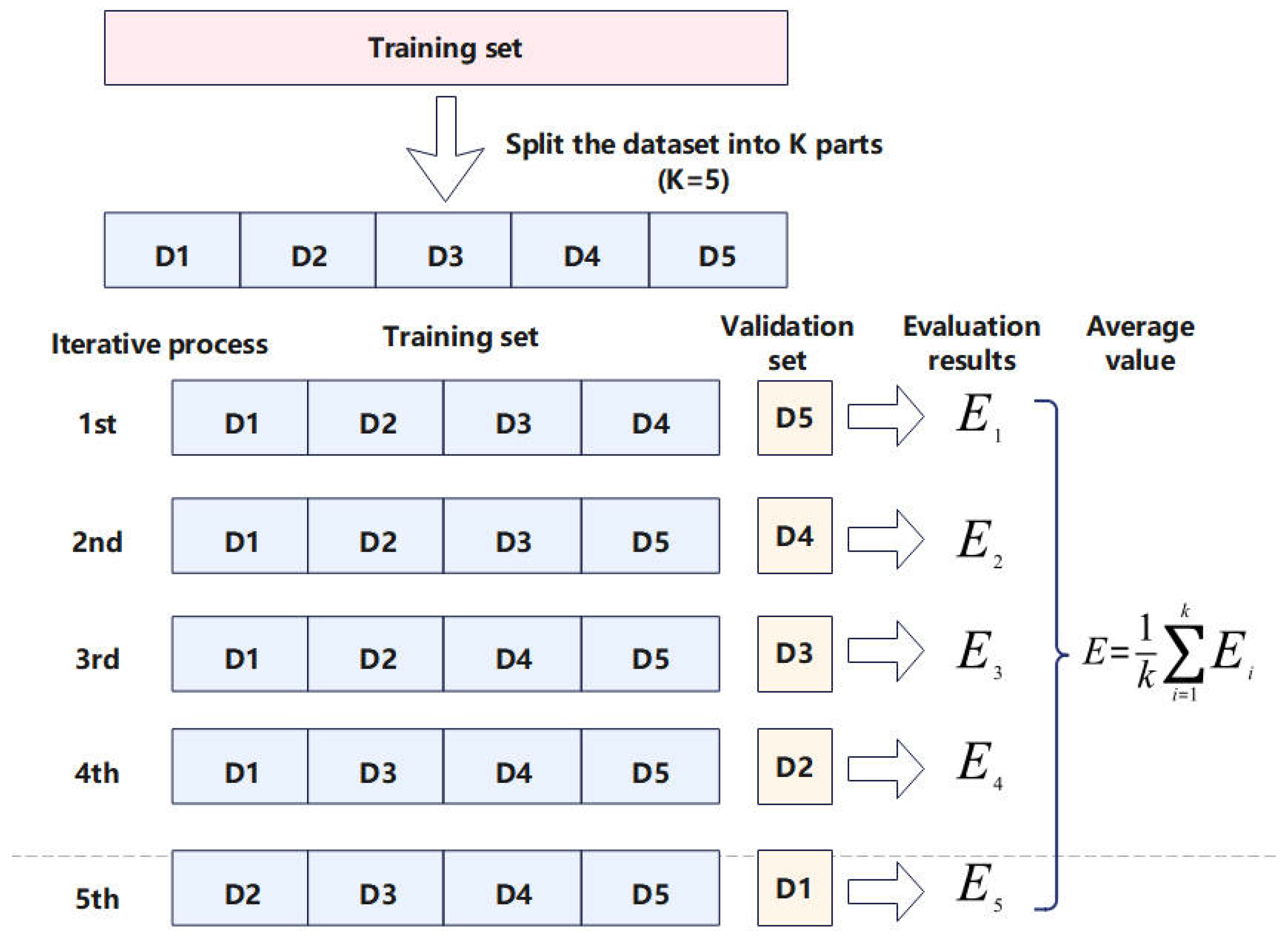

Figure 8.

Schematic illustration of the K-fold cross-validation process.

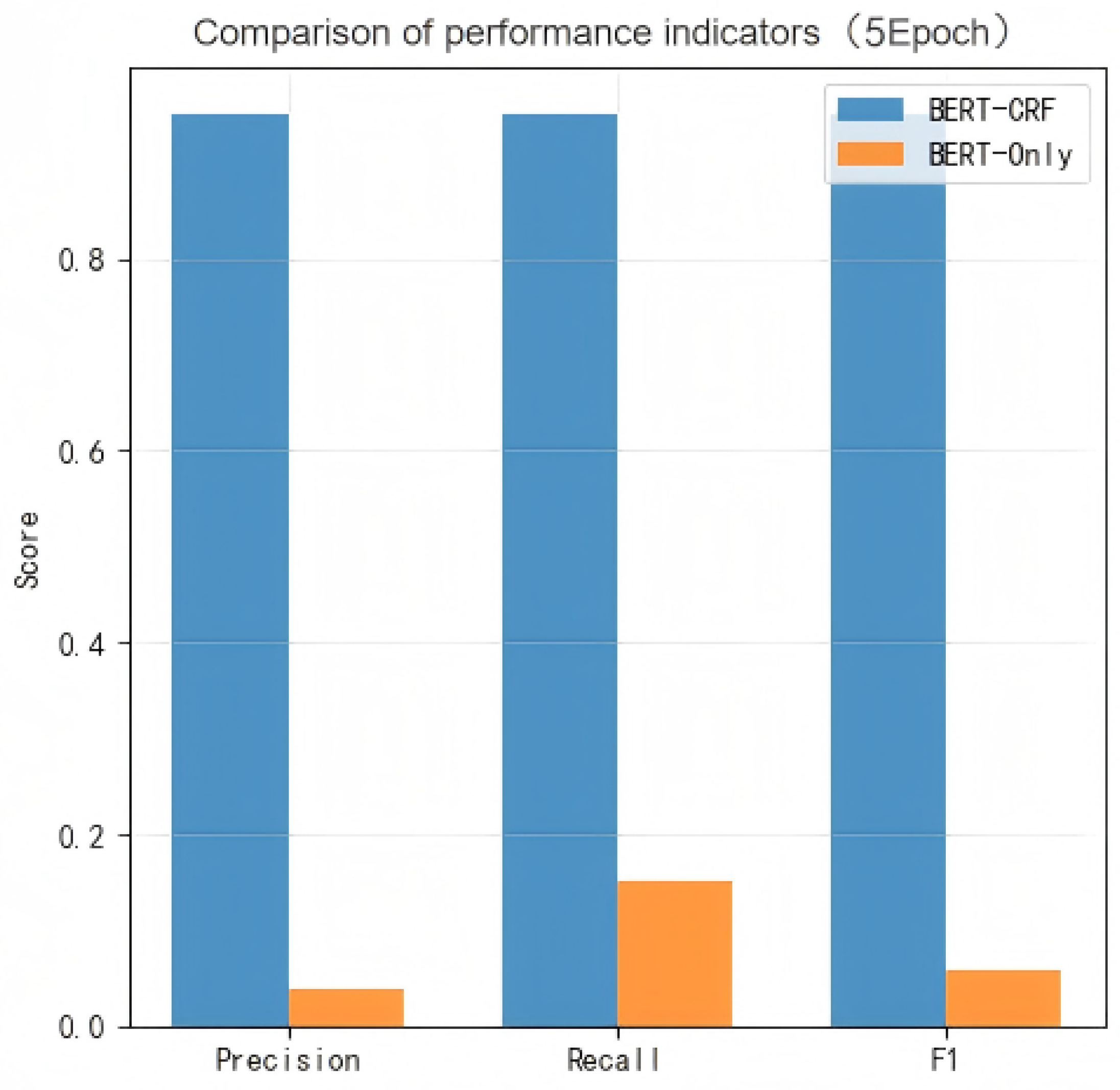

Figure 9.

Comparison of performance indicators.

Figure 10.

Radar plot comparing evaluation metrics across models.

Table 1.

Document entity relationship table.

| Source Entity | Relationship Type | Target Entity | Relationship Description |
|---|---|---|---|
| Issuing Authority | Issuance Relationship | Document Number | The authority issues the document number through an official process. |
| Title of Main Text | Subordination Relationship | Main Text | The title summarizes the core content of the main text. |
| Primary Addressee | Pointing Relationship | Main Text | The main addressee of the document is related to the execution requirements in the main text. |
| Primary Heading | Hierarchical Relationship | Secondary Heading | The hierarchical progression of headings, breaking down the content. |
| Secondary Heading | Hierarchical Relationship | Tertiary Heading | Further refinement and classification of content. |
| Date of Creation | Temporal Relationship | Main Text | Date is related to the timeliness of policies in the main text. |
| Issuing Authority Logo | Representation Relationship | Issuing Authority | Logo as the official symbol of organizational identity. |
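One way to read the table above is as a list of (source, relationship, target) triples that can be loaded into a small adjacency structure. The sketch below illustrates that reading only. The `Relation` class and the `build_adjacency` and `neighbors` helpers are hypothetical names introduced here, not part of the described system.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Relation:
    """One row of Table 1 (the free-text description is omitted)."""
    source: str
    relation: str
    target: str


# Rows copied from Table 1.
RELATIONS = [
    Relation("Issuing Authority", "Issuance Relationship", "Document Number"),
    Relation("Title of Main Text", "Subordination Relationship", "Main Text"),
    Relation("Primary Addressee", "Pointing Relationship", "Main Text"),
    Relation("Primary Heading", "Hierarchical Relationship", "Secondary Heading"),
    Relation("Secondary Heading", "Hierarchical Relationship", "Tertiary Heading"),
    Relation("Date of Creation", "Temporal Relationship", "Main Text"),
    Relation("Issuing Authority Logo", "Representation Relationship", "Issuing Authority"),
]


def build_adjacency(relations):
    """Map each source entity to its outgoing (relation, target) pairs."""
    graph = defaultdict(list)
    for r in relations:
        graph[r.source].append((r.relation, r.target))
    return dict(graph)


def neighbors(graph, entity):
    """Return the targets directly reachable from `entity`."""
    return [target for _, target in graph.get(entity, [])]


GRAPH = build_adjacency(RELATIONS)
print(neighbors(GRAPH, "Primary Heading"))
```

A directed adjacency list keeps the heading hierarchy (primary to secondary to tertiary) traversable in order; a graph database would serve the same purpose at scale.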

Table 2.

Comparison of hybrid prompt vs. generic prompt.

| Feature | Our Hybrid Prompt | Generic Prompt |
|---|---|---|
| Information Sources | Combines structured results from KG and unstructured results from vector search. | Contains only a single source of information (e.g., vector search results). |
| Data Organization | Explicitly separates context into “knowledge graph (KG)” and “document chunks (DC)”. | Treats all context as a single, undifferentiated block. |
| Context Management | Designed to handle different formats for different information types. | A single format for all context. |
| Citation Requirement | Requires the LLM to specify the source (KG or DC) for each piece of information. | Simple citation or no requirement to differentiate sources. |
| Structural Guidance | Explicitly instructs the LLM to organize the answer into sections. | Fewer requirements for structuring the answer. |
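The features listed for the hybrid prompt can be illustrated with a small prompt-assembly function. This is a minimal sketch under stated assumptions: the function name `build_hybrid_prompt`, the section headings, and the instruction wording are all hypothetical and are not the authors' actual prompt text. Only the separation into KG and DC context, the per-source citation requirement, and the sectioned answer come from the table.

```python
def build_hybrid_prompt(question, kg_triples, doc_chunks):
    """Assemble a prompt that keeps KG facts and document chunks apart.

    kg_triples: iterable of (source, relation, target) tuples.
    doc_chunks: iterable of text passages from vector search.
    """
    # Structured context: one triple per line.
    kg_lines = [f"- ({s}) -[{r}]-> ({t})" for s, r, t in kg_triples]
    # Unstructured context: numbered passages.
    dc_lines = [f"- [{i}] {chunk}" for i, chunk in enumerate(doc_chunks, start=1)]
    sections = [
        "You are answering a question about official documents.",
        "## Knowledge graph (KG)",
        "\n".join(kg_lines) or "- (none)",
        "## Document chunks (DC)",
        "\n".join(dc_lines) or "- (none)",
        "## Instructions",
        "Organize the answer into sections.",
        "Mark the source of each piece of information as [KG] or [DC].",
        "## Question",
        question,
    ]
    return "\n".join(sections)


prompt = build_hybrid_prompt(
    "What support measures exist for SME financing?",
    [("SME Financing", "supported by", "Credit Guarantee System")],
    ["Broaden direct financing channels for SMEs…"],
)
print(prompt)
```

A generic prompt, by contrast, would concatenate all retrieved text into one block, so the model could not tell which statements came from the graph and which from the documents.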

Table 3.

Evaluation metric system.

| Dimension | Specific Metric | Measurement Method |
|---|---|---|
| Entity Recognition | Precision/Recall/F1 | Strict Boundary Matching |
| QA Quality | Answer Matching Degree | Keyword Overlap Rate (Jaccard) |
| Context Relevance | Answer Containment Rate | Boolean Determination |
| Comprehensive Performance | Weighted Score (0–100) | Linear Weighting (0.4:0.3:0.3) |
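The QA-related metrics in the table above can be sketched in a few lines. Several details are assumptions made for illustration: the table does not say which weight belongs to which dimension, so the sketch assigns 0.4 to entity F1 and 0.3 each to answer matching and containment; it also assumes every input lies in [0, 1] and that keywords are already extracted as lists of strings. The function names are hypothetical.

```python
def jaccard(a_keywords, b_keywords):
    """Keyword overlap rate: |A ∩ B| / |A ∪ B| over keyword sets."""
    a, b = set(a_keywords), set(b_keywords)
    if not a and not b:
        return 0.0  # convention chosen here for two empty sets
    return len(a & b) / len(a | b)


def contains_answer(context, answer):
    """Boolean determination: is the answer string present in the context?"""
    return answer in context


def weighted_score(entity_f1, answer_match, containment, weights=(0.4, 0.3, 0.3)):
    """Linear weighting scaled to 0-100.

    ASSUMPTION: the order of weights (entity F1, answer matching,
    containment) is a guess; the source only gives the ratio 0.4:0.3:0.3.
    """
    w_entity, w_match, w_contain = weights
    return 100.0 * (
        w_entity * entity_f1 + w_match * answer_match + w_contain * float(containment)
    )


overlap = jaccard(["bond", "guarantee", "tax"], ["guarantee", "tax", "loan"])
found = contains_answer("supports guarantee institutions", "guarantee")
print(overlap, found, weighted_score(0.9, overlap, found))
```

For Chinese text, the keyword lists would first have to be produced by a word segmenter, which is outside this sketch.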

Table 4.

Experimental environment.

| Category | Details |
|---|---|
| Operating System | Windows |
| Virtual Environment | Anaconda |
| Hardware Configuration | NVIDIA GTX 1080 Ti |
| Programming Language | Python 3.7 |
| Deep Learning Framework | TensorFlow 2.11.0; PyTorch 1.10.0 |
| CUDA | 11.2 |

Table 5.

Training configuration.

| Item | Configuration Description |
|---|---|
| Batch Size | 16, balancing training efficiency and hardware resource limitations |
| Optimizer | Adam [20] |
| Initial Learning Rate | 3 × 10⁻⁵, experimentally tuned to effectively prevent gradient explosion or vanishing |
| Training Epochs | 5, to ensure that the model fully learns the data features |
| Learning Rate Strategy | The adaptive adjustment mechanism built into the Adam optimizer, accelerating convergence |
| Training Method | The training samples are input in batches using a data loader and optimized through the loss function in PyTorch 1.10.0 with CUDA 11.2 support |
| Training Devices | GPU supported by CUDA, used to accelerate training and handle large-scale data |

Table 6.

Final training results of the BERT-CRF model.

| Entity Label | Precision | Recall | F1 Score | Support |
|---|---|---|---|---|
| O | 1.00 | 1.00 | 1.00 | 25,994 |
| B_Issuing Authority Logo | 0.92 | 0.85 | 0.98 | 466 |
| I_Issuing Authority Logo | 0.97 | 0.99 | 0.98 | 3798 |
| B_Issuing Authority | 0.99 | 0.98 | 0.99 | 573 |
| I_Issuing Authority | 1.00 | 0.99 | 0.99 | 3529 |
| B_Issuing Date | 0.89 | 1.00 | 0.94 | 123 |
| I_Issuing Date | 0.88 | 1.00 | 0.94 | 1099 |
| B_Document Number | 0.86 | 0.75 | 0.80 | 449 |
| I_Document Number | 0.96 | 0.99 | 0.97 | 5380 |
| B_Main Text Title | 0.50 | 0.52 | 0.51 | 427 |
| I_Main Text Title | 0.98 | 0.97 | 0.97 | 7409 |
| B_Primary Addressee | 0.00 | 0.00 | 0.00 | 18 |
| I_Primary Addressee | 0.97 | 0.28 | 0.43 | 107 |
| B_Main Text | 0.98 | 0.97 | 0.98 | 3652 |
| I_Main Text | 1.00 | 1.00 | 1.00 | 448,626 |
| B_Heading Level 1 | 0.99 | 0.99 | 0.99 | 1998 |
| I_Heading Level 1 | 0.99 | 0.99 | 0.99 | 34,568 |
| B_Heading Level 2 | 0.98 | 0.98 | 0.98 | 1821 |
| I_Heading Level 2 | 0.99 | 0.98 | 0.98 | 40,292 |
| B_Heading Level 3 | 0.96 | 0.98 | 0.97 | 862 |
| I_Heading Level 3 | 0.96 | 1.00 | 0.98 | 23,876 |
| B_Completion Date | 1.00 | 0.95 | 0.97 | 343 |
| I_Completion Date | 0.98 | 0.96 | 0.97 | 3050 |
| Accuracy | – | – | 0.99 | 608,460 |
| Macro-Average | 0.90 | 0.87 | 0.88 | 608,460 |
| Weighted Average | 0.99 | 0.99 | 0.99 | 608,460 |
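The gap between the macro-average F1 (0.88) and the weighted-average F1 (0.99) follows from how the two averages treat label frequency. The sketch below assumes the standard definitions (F1 as the harmonic mean of precision and recall, macro as an unweighted mean over labels, weighted as a support-weighted mean); the source does not state which tool produced the report. The three rows are taken from Table 6 purely to show the effect, so the printed averages are not the table's totals.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_average(scores):
    """Every label counts equally, regardless of how often it occurs."""
    return sum(scores) / len(scores)


def weighted_average(scores, supports):
    """Each label counts in proportion to its support (token count)."""
    return sum(s * n for s, n in zip(scores, supports)) / sum(supports)


# (label, F1, support) for three rows of Table 6.
ROWS = [
    ("I_Main Text", 1.00, 448626),
    ("B_Main Text Title", 0.51, 427),
    ("B_Primary Addressee", 0.00, 18),
]
scores = [row[1] for row in ROWS]
supports = [row[2] for row in ROWS]

print(round(f1(0.86, 0.75), 2))          # B_Document Number row
print(macro_average(scores))             # pulled down by the rare labels
print(weighted_average(scores, supports))  # dominated by I_Main Text
```

Rare labels such as B_Primary Addressee (support 18) barely move the weighted average but count as a full label in the macro average, which is why the macro figure is the more informative one for minority entity types.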

Table 7.

BERT-CRF cross-validation results.

| Fold | Accuracy | Macro-Avg. | Weighted Avg. |
|---|---|---|---|
| 1 | 0.980 | 0.830 | 0.980 |
| 2 | 0.990 | 0.870 | 0.990 |
| 3 | 0.980 | 0.800 | 0.980 |
| 4 | 0.990 | 0.880 | 0.990 |
| 5 | 0.980 | 0.870 | 0.980 |
| Avg. | 0.984 | 0.850 | 0.984 |

Table 8.

BiLSTM-CRF cross-validation results.

| Fold | Accuracy | Macro-Avg. | Weighted Avg. |
|---|---|---|---|
| 1 | 0.410 | 0.660 | 0.700 |
| 2 | 0.690 | 0.690 | 0.690 |
| 3 | 0.440 | 0.680 | 0.690 |
| 4 | 0.710 | 0.450 | 0.710 |
| 5 | 0.700 | 0.510 | 0.670 |
| Avg. | 0.590 | 0.598 | 0.692 |
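The "Avg." rows of the two cross-validation tables are the arithmetic means of the five folds, which can be checked directly. The sketch below also reports the spread across folds as a population standard deviation; that statistic is an addition made here for illustration and is not reported in the source. The `summarize` helper is a hypothetical name.

```python
from statistics import mean, pstdev

# Per-fold values copied from Table 7 (BERT-CRF) and Table 8 (BiLSTM-CRF).
BERT_CRF = {
    "accuracy": [0.980, 0.990, 0.980, 0.990, 0.980],
    "macro_avg": [0.830, 0.870, 0.800, 0.880, 0.870],
    "weighted_avg": [0.980, 0.990, 0.980, 0.990, 0.980],
}
BILSTM_CRF = {
    "accuracy": [0.410, 0.690, 0.440, 0.710, 0.700],
    "macro_avg": [0.660, 0.690, 0.680, 0.450, 0.510],
    "weighted_avg": [0.700, 0.690, 0.690, 0.710, 0.670],
}


def summarize(folds):
    """Return {metric: (mean, population std)} rounded to 3 decimals."""
    return {
        metric: (round(mean(values), 3), round(pstdev(values), 3))
        for metric, values in folds.items()
    }


for name, folds in (("BERT-CRF", BERT_CRF), ("BiLSTM-CRF", BILSTM_CRF)):
    print(name, summarize(folds))
```

The means reproduce the "Avg." rows; the standard deviation makes visible that the BiLSTM-CRF accuracy varies far more from fold to fold than the BERT-CRF accuracy does.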

Table 9.

Generalization results of the BERT-CRF model on the Guangxi Government Information Disclosure Dataset.

| Entity Label | Precision | Recall | F1 Score | Support |
|---|---|---|---|---|
| B-BODY | 0.890 | 0.905 | 0.898 | 3079 |
| B-DISEASES | 0.858 | 0.863 | 0.861 | 1311 |
| B-DRUG | 0.942 | 0.881 | 0.911 | 480 |
| B-EXAMINATIONS | 0.864 | 0.846 | 0.855 | 345 |
| B-TEST | 0.826 | 0.731 | 0.777 | 572 |
| B-TREATMENT | 0.869 | 0.821 | 0.844 | 162 |
| I-BODY | 0.757 | 0.861 | 0.806 | 2435 |
| I-DISEASES | 0.962 | 0.863 | 0.910 | 6858 |
| I-DRUG | 0.962 | 0.881 | 0.919 | 1433 |
| I-EXAMINATIONS | 0.853 | 0.875 | 0.864 | 833 |
| I-TEST | 0.800 | 0.742 | 0.770 | 344 |
| I-TREATMENT | 0.962 | 0.913 | 0.937 | 1870 |
| Micro-Avg. | 0.875 | 0.857 | 0.866 | 20,992 |
| Macro-Avg. | 0.881 | 0.847 | 0.869 | 20,992 |
| Weighted Avg. | 0.878 | 0.857 | 0.866 | 20,992 |
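The rows above score B- and I- tags separately, whereas Table 3 names strict boundary matching as the measurement method for entity recognition. The sketch below shows one common reading of that term: a predicted entity counts as correct only if its type, start, and end all match a gold entity exactly. It is an illustration under that assumption, not the authors' evaluation script, and the helper names are hypothetical.

```python
def extract_spans(tags):
    """Convert a BIO tag sequence into a set of (type, start, end) spans.

    `end` is exclusive. An I- tag that does not continue an open span of
    the same type is ignored (a design choice made for this sketch).
    """
    spans = set()
    start, current = None, None
    for i, tag in enumerate(list(tags) + ["O"]):  # sentinel closes the last span
        prefix, _, label = tag.partition("-")
        continues = prefix == "I" and label == current
        if current is not None and not continues:
            spans.add((current, start, i))
            start, current = None, None
        if prefix == "B":
            start, current = i, label
    return spans


def strict_prf(gold_tags, pred_tags):
    """Span-level precision, recall, and F1 under exact-match scoring."""
    gold, pred = extract_spans(gold_tags), extract_spans(pred_tags)
    tp = len(gold & pred)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(gold) if gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


gold = ["B-DRUG", "I-DRUG", "O", "B-BODY"]
pred = ["B-DRUG", "I-DRUG", "O", "O"]
print(strict_prf(gold, pred))
```

Under this scoring a prediction that finds only part of an entity earns no credit, so span-level scores are typically lower than the per-tag scores in the table.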

Table 10.

Overall performance score comparison.

| System | Average Score |
|---|---|
| Our Hybrid RAG System | 87.1 |
| Pure LLM (No RAG) | 60.0 |
| Vector-Only RAG System | 53.5 |
| Improvement of Hybrid RAG vs. Pure LLM | +45.2% |
| Improvement of Hybrid RAG vs. Vector-Only RAG | +62.8% |
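The improvement figures in the table are relative gains over each baseline, that is, (new − baseline) / baseline. A short check using the three average scores, with a hypothetical helper name:

```python
def relative_improvement(new, baseline):
    """Relative gain of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


# Average scores copied from Table 10.
SCORES = {
    "hybrid_rag": 87.1,
    "pure_llm": 60.0,
    "vector_only_rag": 53.5,
}

vs_llm = relative_improvement(SCORES["hybrid_rag"], SCORES["pure_llm"])
vs_vector = relative_improvement(SCORES["hybrid_rag"], SCORES["vector_only_rag"])
print(f"vs. Pure LLM: +{vs_llm:.1f}%")
print(f"vs. Vector-Only RAG: +{vs_vector:.1f}%")
```

These are relative percentages, not percentage-point differences: the absolute gaps are 27.1 and 33.6 points on the 0–100 scale.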

Table 11.

Case study: comparison of answers from three different systems.

| Query | “What are the specific support measures for SME financing in the ‘Several Opinions of the State Council on Promoting the Development of SMEs’?” |
|---|---|
| Answer with Our Hybrid RAG System | Based on the provided document, the ‘Several Opinions of the State Council on Promoting the Development of SMEs’ outlines several specific support measures for financing: Broaden Financing Channels: Encourages the development of direct financing for SMEs, supports them to list on the main board and GEM, and allows the issuance of SME collective bonds. Establish a Credit Guarantee System: Pushes for the establishment of a credit guarantee system for SMEs, with central and local governments providing financial support to guarantee institutions to increase their capacity. Incentivize Financial Institutions: Proposes implementing differentiated regulatory policies, such as lowering the risk weight for SME loans and increasing the tolerance for non-performing loans, to encourage commercial banks to lend to SMEs. Tax Incentives: Provides tax exemptions and reductions for qualified guarantee institutions to lower the financing costs for SMEs. References: [DC] Section 3, Item 12: “Broaden direct financing channels for SMEs…”; [KG] (SME Financing) -->[supported by]--> (Credit Guarantee System) |
| Answer with Vector-Only RAG | The document mentions support for SME financing. Measures include using capital markets for financing and providing credit support. The government will support guarantee institutions. Commercial banks are encouraged to lend to SMEs. It also mentions tax policies for these institutions. |
| Answer without RAG (Pure LLM) | The ‘Several Opinions of the State Council on Promoting the Development of SMEs’ generally encourages financial support for small and medium-sized enterprises. This includes asking banks to increase lending, innovating financial products, and developing multi-level capital markets. It emphasizes solving financing difficulties but does not provide highly specific measures, as these are often detailed in subsequent documents by individual ministries. |