Constrained Optimal Control of Information Diffusion in Online Social Hypernetworks

Abstract

1. Introduction

2. Related Work

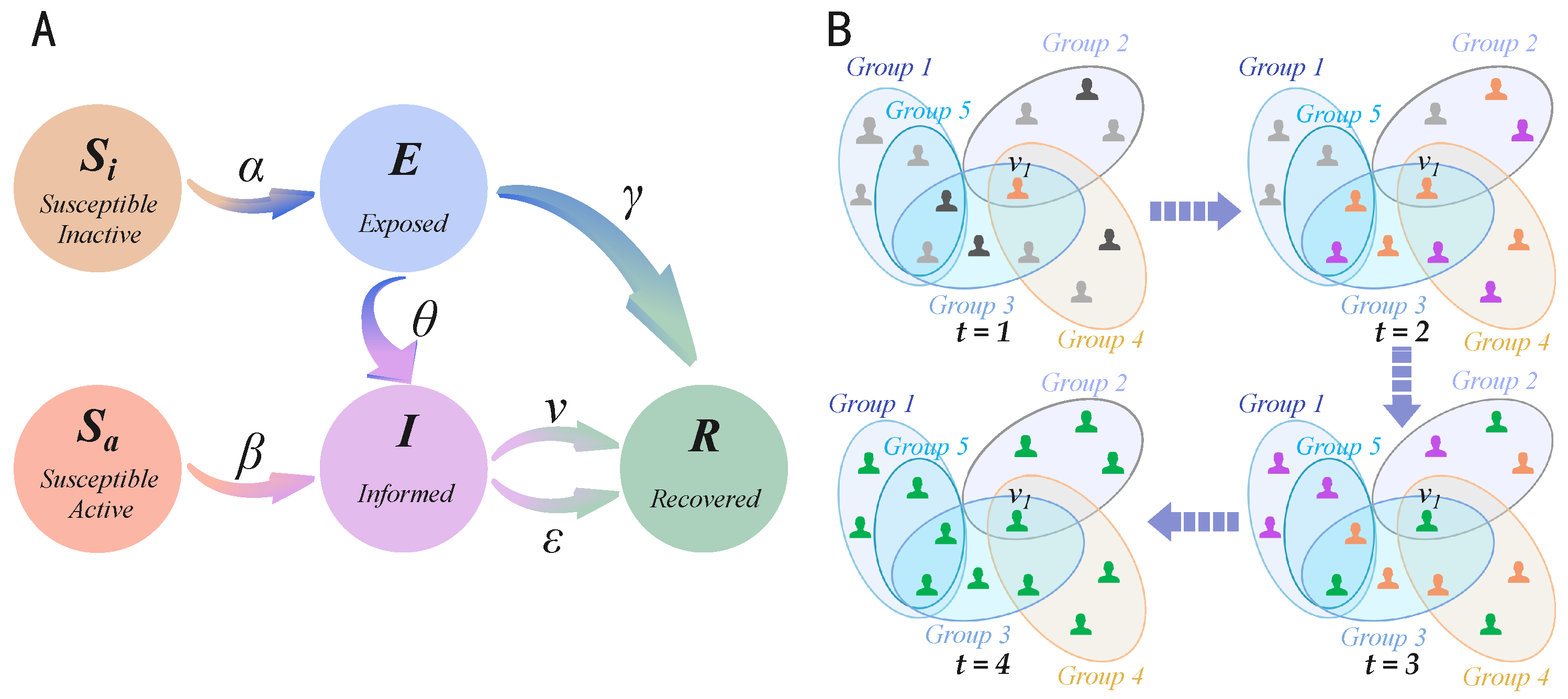

2.1. The Model on Online Social Hypernetworks

- Transition from -state to E-state: When a node in the -state is adjacent to a node in the I-state, it transitions to the E-state with probability .

- Transition from -state to I-state: When a node in the -state is adjacent to a node in the I-state, it transitions to the I-state with probability , thereby initiating the dissemination of information.

- Transition from E-state to I-state or R-state: A node in the E-state transitions to the I-state with probability and begins to disseminate information. Additionally, the node may also transition directly to the R-state with probability , becoming immune to the information.

- Transition from I-state to R-state: A node in the I-state transitions to the R-state with probability , thereby ceasing the dissemination of information. Furthermore, the node may forget the information at a rate v, resulting in a transition to the R-state and termination of the spreading process.
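The transition rules above can be sketched as one synchronous update step. This is a minimal illustration, not the authors' implementation. The symbols for the first state and for the transition probabilities are missing from the text, so the label `S` and the keys `se`, `si`, `ei`, `er`, `ir` are hypothetical names. The sketch also assumes that two nodes are adjacent when they share a hyperedge, and that the forgetting rate `v` can be treated as a per-step probability.

```python
import random

def step(states, hyperedges, p, rng):
    """Apply one synchronous update and return the new list of node states.

    states     : list of "S", "E", "I", "R" ("S" is a hypothetical label)
    hyperedges : list of node-index lists; sharing a hyperedge is assumed
                 to mean adjacency
    p          : dict of per-step probabilities (hypothetical key names)
    """
    # Collect every node that shares a hyperedge with at least one I-state node.
    exposed = set()
    for edge in hyperedges:
        if any(states[n] == "I" for n in edge):
            exposed.update(edge)

    new_states = list(states)
    for n, s in enumerate(states):
        r = rng.random()  # one draw per node, so outcomes are mutually exclusive
        if s == "S" and n in exposed:
            if r < p["se"]:
                new_states[n] = "E"
            elif r < p["se"] + p["si"]:
                new_states[n] = "I"
        elif s == "E":
            if r < p["ei"]:
                new_states[n] = "I"
            elif r < p["ei"] + p["er"]:
                new_states[n] = "R"
        elif s == "I":
            # Stopping (ir) and forgetting (v) both lead to the R-state.
            if r < p["ir"] + p["v"]:
                new_states[n] = "R"
    return new_states

if __name__ == "__main__":
    rng = random.Random(42)
    params = {"se": 0.3, "si": 0.2, "ei": 0.4, "er": 0.1, "ir": 0.2, "v": 0.05}
    states = ["I", "S", "S", "S", "S"]
    edges = [[0, 1, 2], [2, 3], [3, 4]]
    for _ in range(5):
        states = step(states, edges, params, rng)
    print(states)
```

Drawing a single random number per node keeps the competing transitions out of each state mutually exclusive, which requires each pair of outgoing probabilities to sum to at most 1.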

2.2. Pontryagin’s Maximum (Or Minimum) Principle

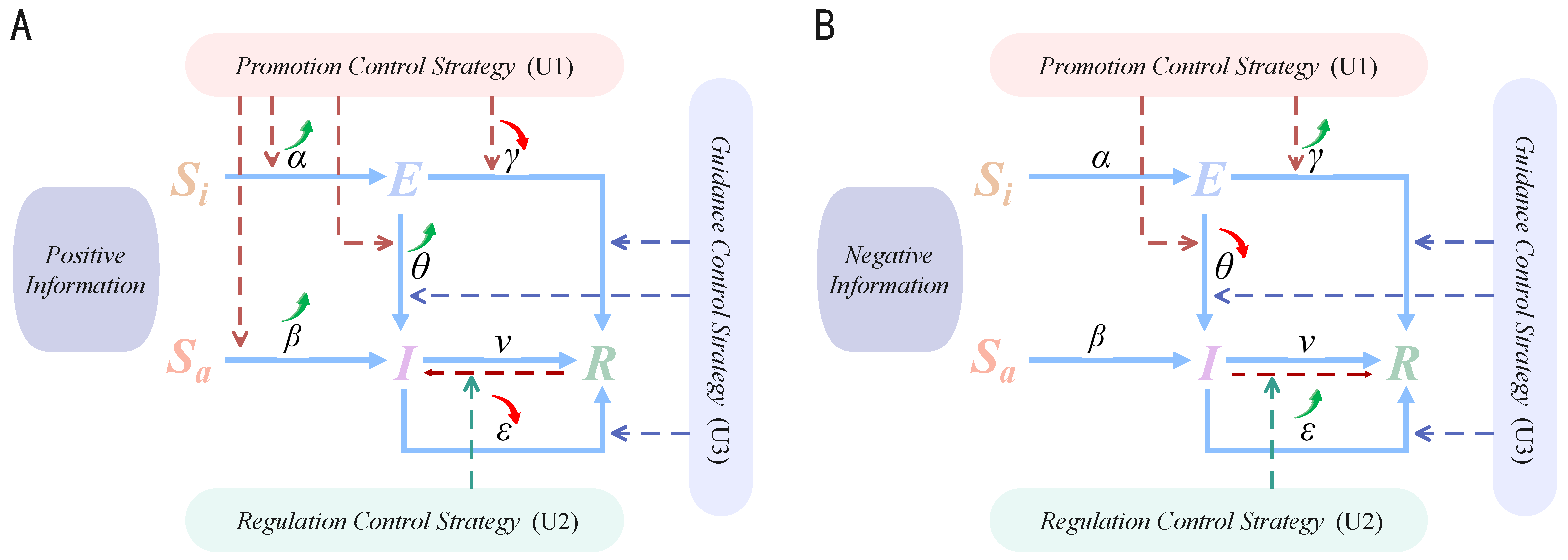

3. Our Control Model

3.1. Information Diffusion Model with Control Strategies

- Users in the -state and -state primarily undergo state transitions upon initial exposure to information under the influence of user attributes, such as individual judgment ability and interest in the information.

- Users in the E-state, regarded as swing users who may transition to either the I-state or R-state, are influenced by a broader range of factors, including user attributes, environmental attributes, and information attributes.

- Users in the I-state, as active disseminators of information, exhibit varying levels of enthusiasm for diffusing information, which are affected by both information attributes and environmental attributes.

- Cost Constraint: Reflects the limits imposed by available resources and intervention costs, expressed as upper and lower bounds on the control variables.

- Triggering Constraint: A mechanism whereby control strategies are activated only when specific diffusion state conditions are satisfied, formally represented as complementarity conditions.

- Cost Constraint

- Positive information

- Negative information

- Triggering Constraint

- When , the triggering condition is not satisfied, and the control intensity .

- When , the control variable is free to take values within its defined upper bound.

- When , the triggering condition is not met; this case falls outside the feasible domain of the constraint and need not be considered in the model.
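The three cases above match the usual reading of a complementarity condition between a control `u` and a nonnegative trigger quantity `g`, often written as 0 ≤ u ⊥ g ≥ 0. The sketch below illustrates that reading only. The trigger function itself is not recoverable from the text, so `g`, the lower bound of 0, the tolerance `tol`, and the function name are all assumptions made for illustration.

```python
def admissible_control(u_desired, g, u_max, tol=1e-9):
    """Project a desired control onto the set allowed by both constraints.

    g > 0  : trigger inactive, so the control is forced to 0
    g == 0 : trigger active, so the control may take any value in [0, u_max]
    g < 0  : outside the feasible domain of the constraint
    (g is a hypothetical trigger quantity; the lower bound 0 is assumed.)
    """
    if g < -tol:
        raise ValueError("g < 0 lies outside the feasible domain")
    if g > tol:
        return 0.0
    # Trigger active: apply the cost constraint as simple bounds.
    return min(max(u_desired, 0.0), u_max)

if __name__ == "__main__":
    print(admissible_control(0.7, g=0.5, u_max=1.0))  # trigger inactive
    print(admissible_control(0.7, g=0.0, u_max=1.0))  # trigger active
```

In either admissible case the product `u * g` is zero, which is the defining property of a complementarity pair.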

3.2. System Benefit Analysis

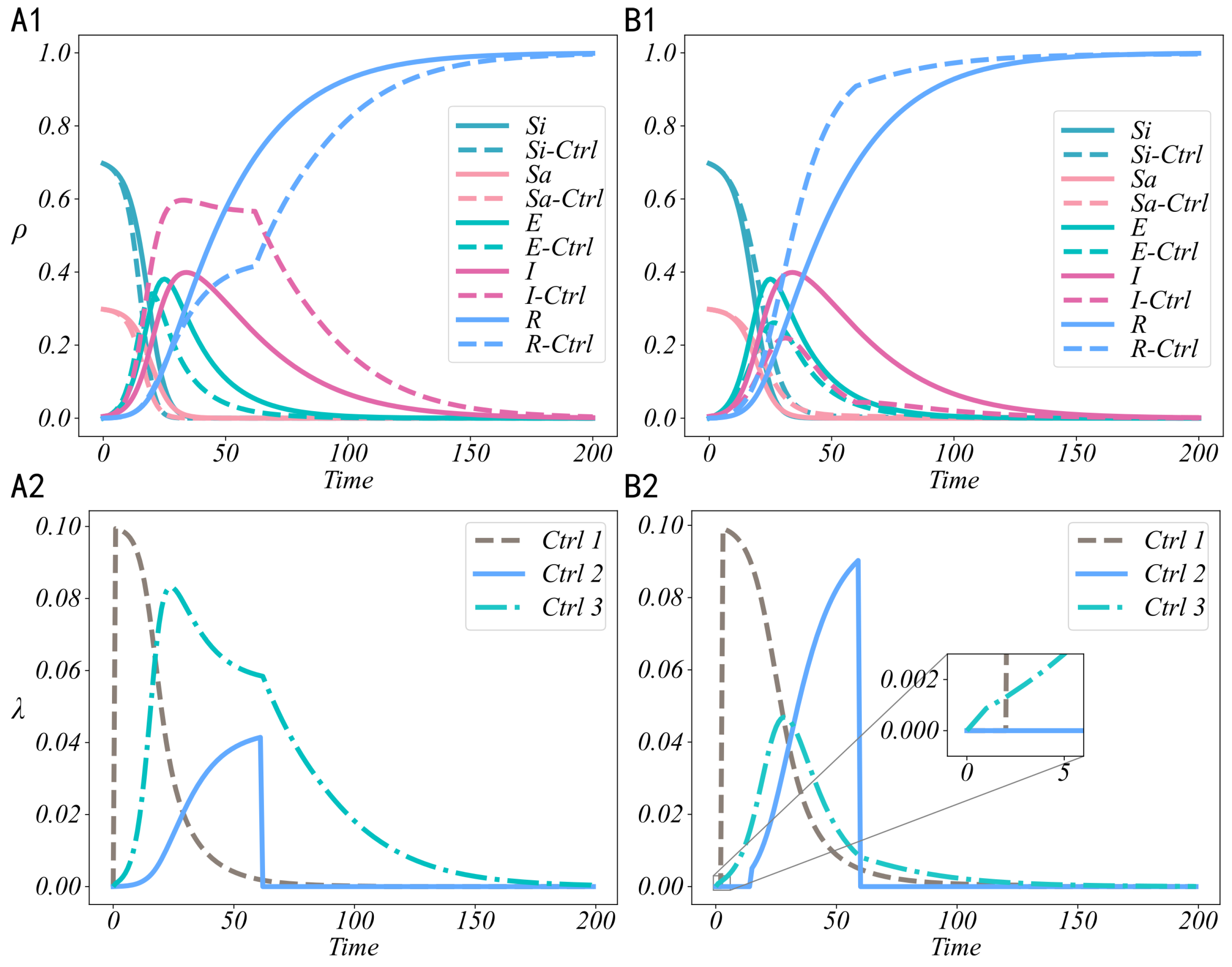

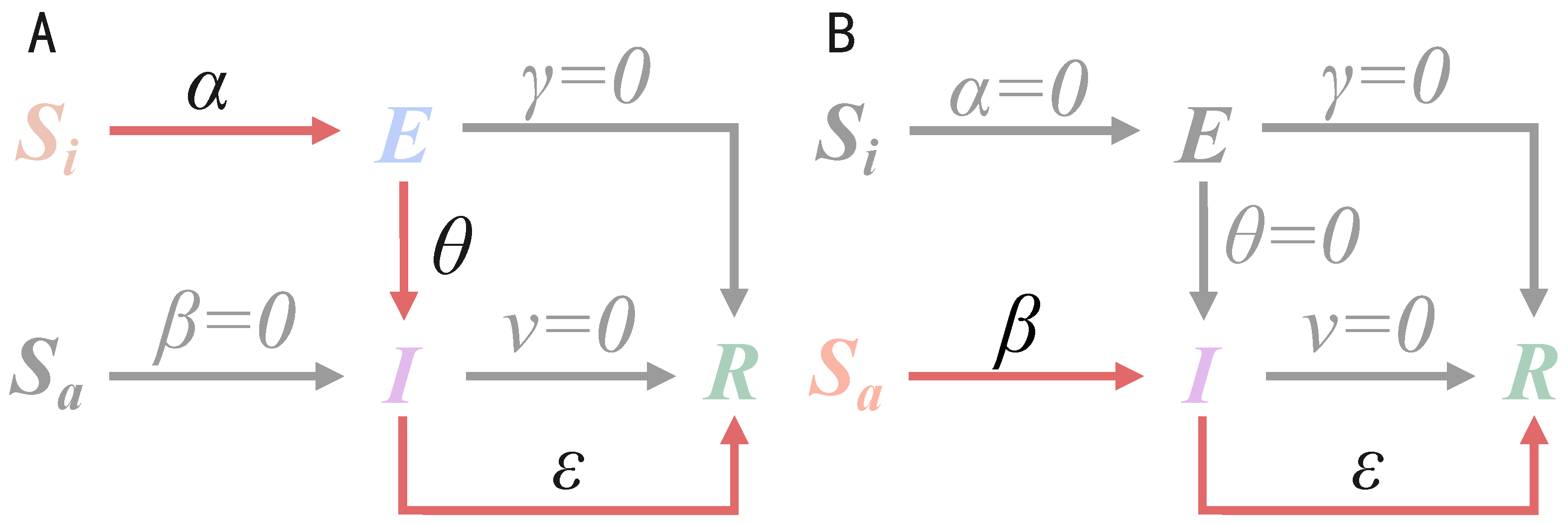

4. Optimal Control of Positive and Negative Information

4.1. Optimal Control Intensity Analysis

4.2. Definition of System Performance Metrics

5. Performance Analysis

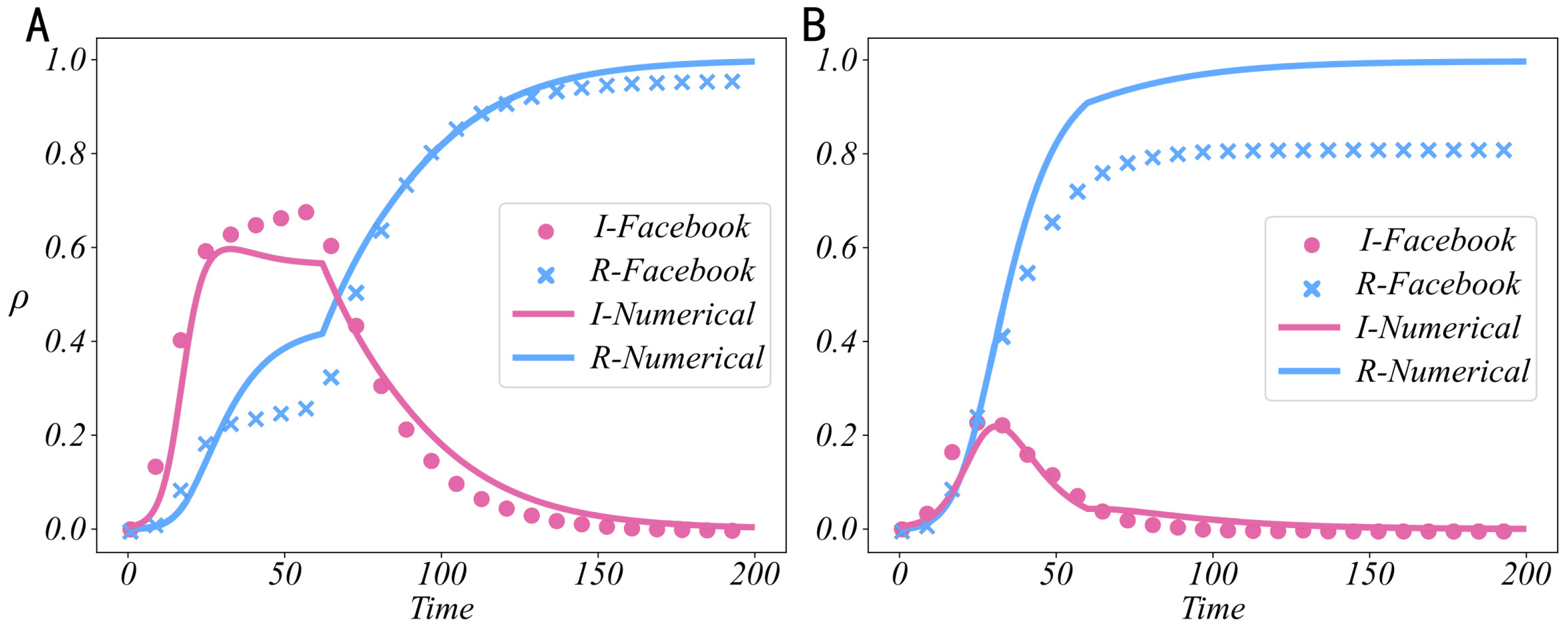

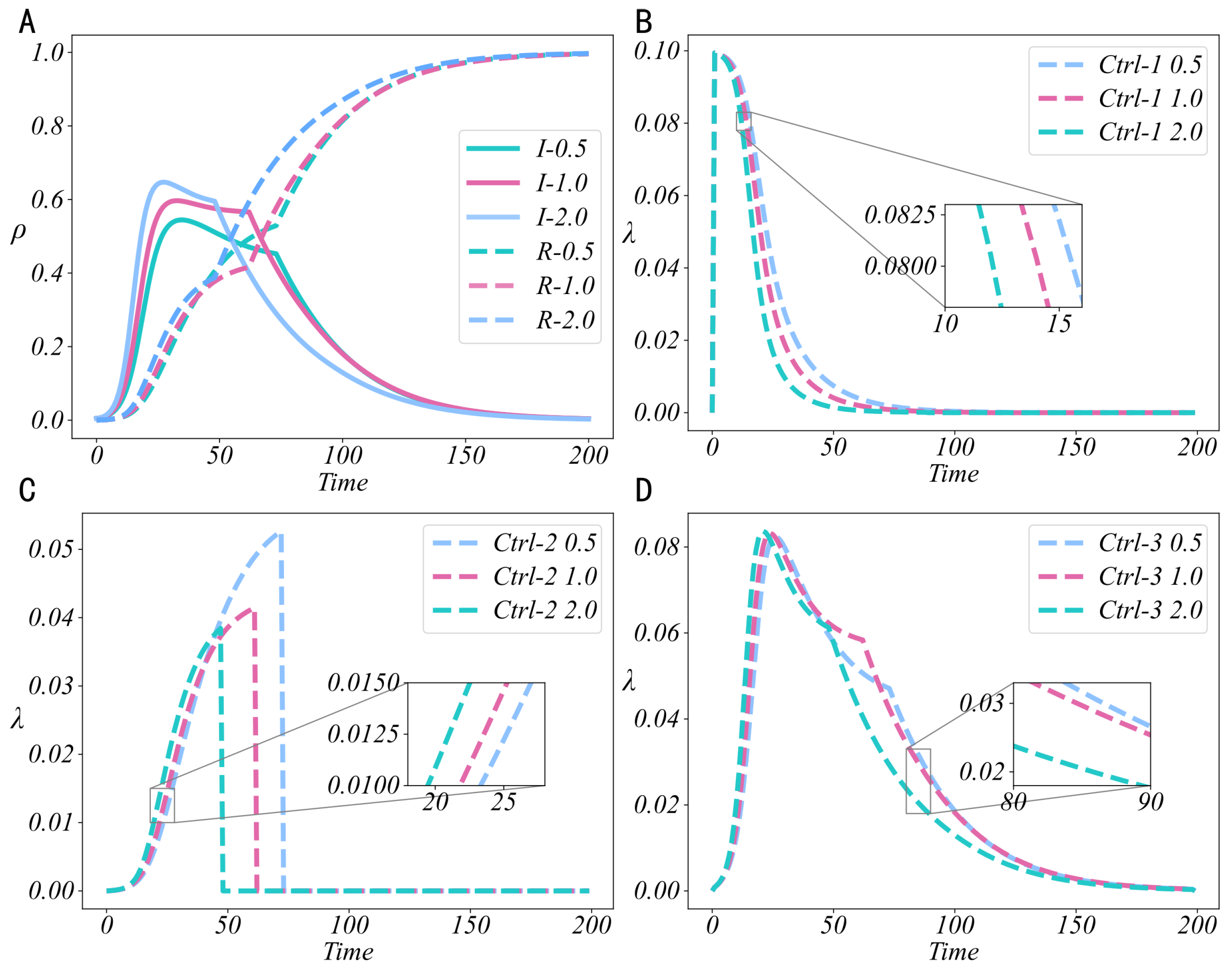

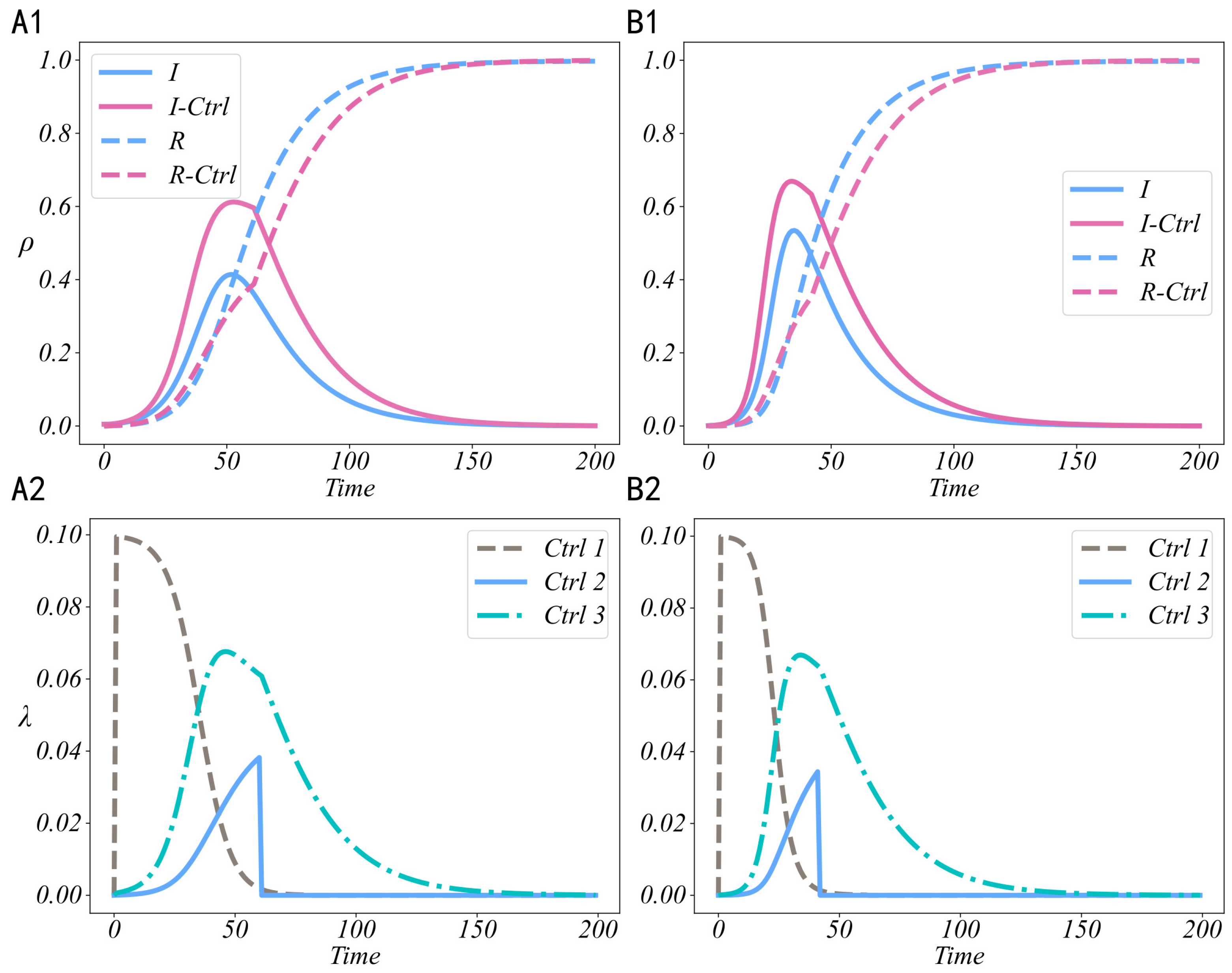

5.1. Effectiveness Analysis of Control Strategies

5.2. Benefit Analysis of Control Strategies

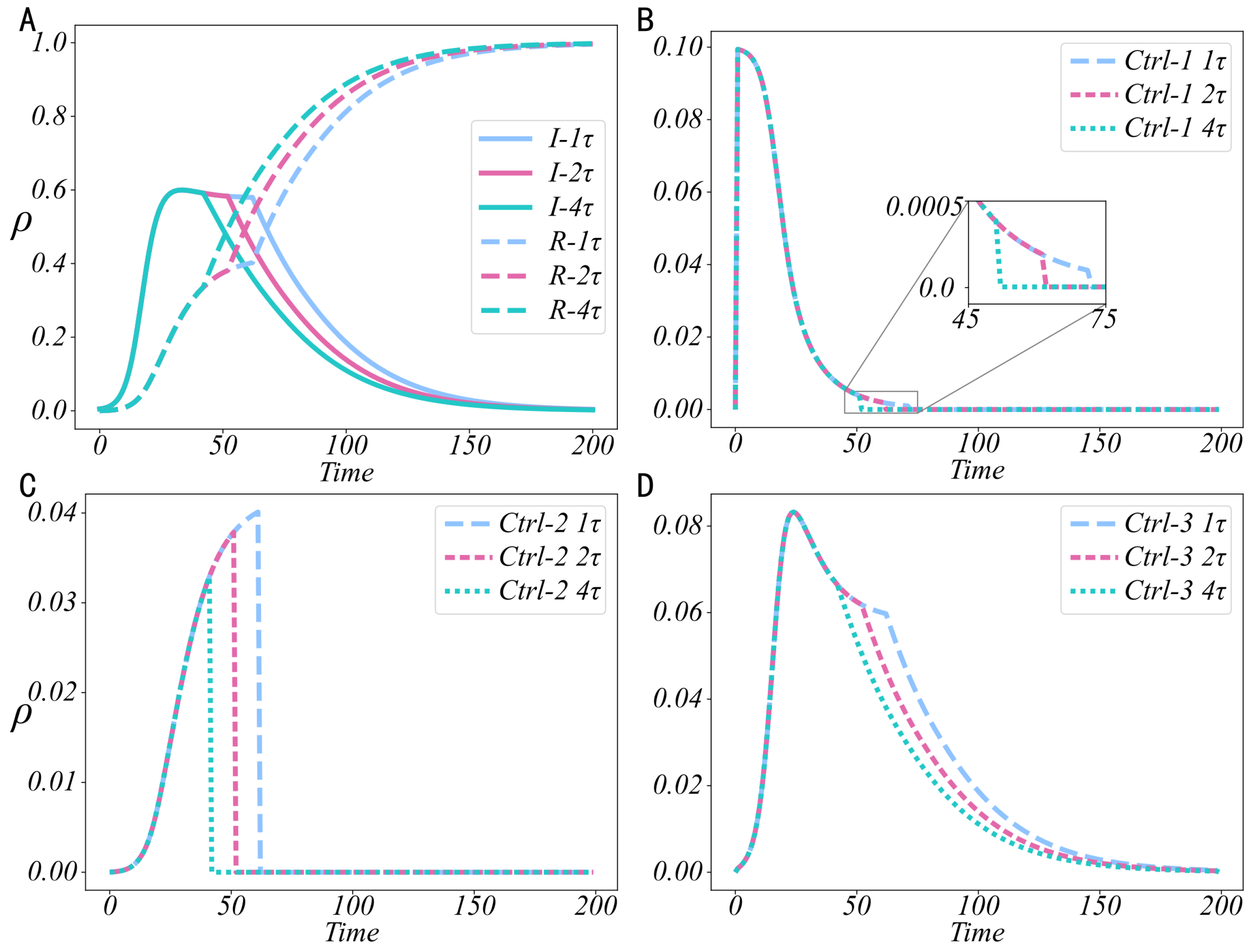

5.3. Sensitivity Analysis of Control Strategies

5.4. Universality Analysis of Optimal Control Strategies

6. Conclusions and Discussion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Existence of Optimal Solution

- The first-order partial derivatives of the function F are continuous, and there exists a constant C such that: .

- The system (26) as well as the control set and the set of feasible solutions are non-empty.

- The function satisfies the form: .

- The control set U is closed and compact.

- The integrand of the objective function J is concave over the control set.

Appendix B. Uniqueness of Optimal Solution

- If , then

- If , then

- If , then

- If , then

Appendix C. Stability Analysis of the Controlled System


| Parameter | Value | Parameter | Value |
|---|---|---|---|
| | 0.8 | | 0.5 |
| | 0.6 | | 0.3 |
| | 0.05 | | 0.9 |
| | 0.01 | | 0.1 |
| | 0.03 | | 0.1 |
| | 0.01 | | 0.1 |

| Strategy (A) | | Improvement Rate (A) | Strategy (B) | | Improvement Rate (B) |
|---|---|---|---|---|---|
| | 16.685 | 9.76% | | 28.467 | 22.76% |
| | 38.969 | 22.79% | | 37.366 | 29.87% |
| | 21.049 | 12.31% | | 31.181 | 24.93% |
| | 50.221 | 29.37% | | 60.169 | 48.10% |
| | 32.489 | 19.00% | | 46.498 | 37.17% |
| | 50.779 | 29.70% | | 55.232 | 44.15% |
| | 57.855 | 33.84% | | 69.557 | 55.60% |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Xiao, H.-B.; Hu, F.; Zhao, Y.-F.; Song, Y.-R. Constrained Optimal Control of Information Diffusion in Online Social Hypernetworks. Mathematics 2025, 13, 2751. https://doi.org/10.3390/math13172751
