Desynchronization Resilient Audio Watermarking Based on Adaptive Energy Modulation

Abstract

1. Introduction

- We propose a linearly decreasing buffer compensation mechanism that enhances the robustness of audio watermarking against desynchronization attacks, such as time-scale modification (TSM) and pitch-scale modification (PSM), while reducing the distortion caused by energy adjustment.

- We theoretically analyze the resilience of the proposed method against both common signal processing and desynchronization attacks, and experimentally validate its effectiveness through comprehensive evaluations.

- Experimental results show that the proposed method maintains stable performance under common signal processing operations, with the highest BER being only 4.01%, and exhibits enhanced robustness against desynchronization attacks such as jitter, TSM, and PSM, with the BER never exceeding 12.31% under all tested conditions.

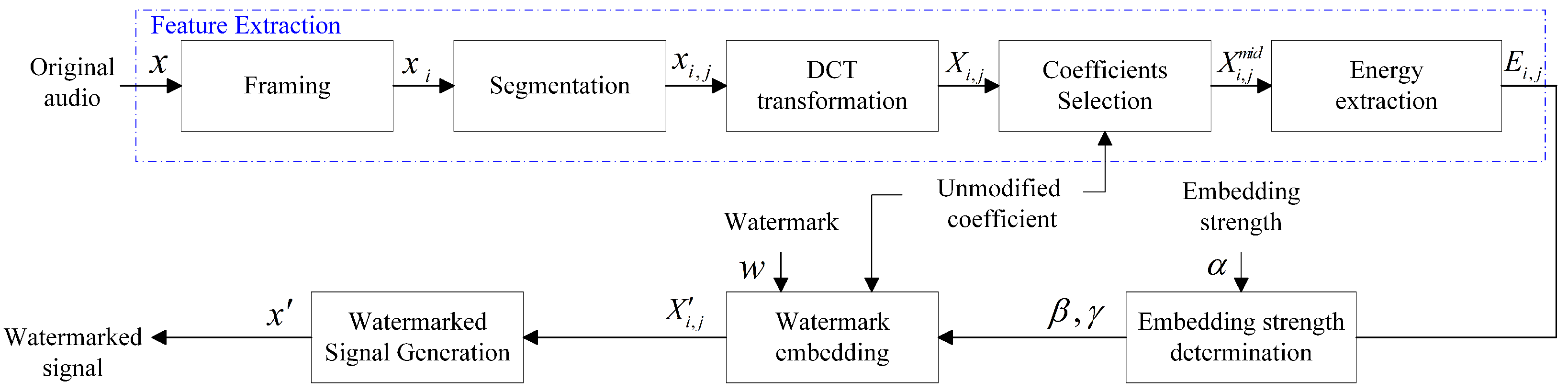

2. Proposed Methods

2.1. Energy Feature Extraction

2.2. Watermark Embedding

2.3. Watermark Extraction

2.4. Parameter Optimization

2.5. Theoretical Analysis of Robustness

3. Experimental Results and Analysis

3.1. Setup

Metrics
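The result tables report two extraction metrics, the bit error rate (BER, in percent) and the normalized cross-correlation (NCC). The sketch below shows one common way to compute them for binary watermarks. The function names are hypothetical, and the NCC formula on {0, 1} bits is an assumption, since the exact definition used in the paper is not reproduced in this excerpt.

```python
import numpy as np


def bit_error_rate(embedded, extracted):
    """Return the percentage of watermark bits that differ after extraction."""
    embedded = np.asarray(embedded, dtype=int)
    extracted = np.asarray(extracted, dtype=int)
    if embedded.shape != extracted.shape:
        raise ValueError("bit sequences must have the same length")
    return float(100.0 * np.mean(embedded != extracted))


def normalized_cross_correlation(embedded, extracted):
    """NCC of two {0, 1} bit sequences (assumed definition, see the lead-in)."""
    w = np.asarray(embedded, dtype=float)
    v = np.asarray(extracted, dtype=float)
    denom = np.sqrt(np.sum(w ** 2)) * np.sqrt(np.sum(v ** 2))
    if denom == 0.0:
        # At least one sequence is all zeros, so the correlation is undefined.
        return 0.0
    return float(np.sum(w * v) / denom)


original = [1, 0, 1, 1, 0, 0, 1, 0]
recovered = [1, 0, 1, 0, 0, 0, 1, 0]  # one bit flipped out of eight
print(bit_error_rate(original, recovered))  # 12.5
print(normalized_cross_correlation(original, recovered))
```

A BER of 0 together with an NCC of 1 corresponds to perfect recovery, which is the "Non-attack" row of the tables.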

3.2. Imperceptibility Experiment

3.3. Robustness Against Common Attacks

- Non-attack: The watermark is extracted directly from the watermarked audio signal without any modification.

- Noise attack: Additive white Gaussian noise (AWGN) is added to the watermarked audio.

- Quantization attack: The watermarked audio is re-quantized before watermark extraction.

- Amplitude attack: The amplitude of the watermarked audio is scaled.

- Echo attack: An echo signal with a time delay is added to the watermarked audio.

- MP3 attack: The watermarked audio is compressed using MPEG-1 Layer III encoding.

- AAC attack: The watermarked audio is compressed using MPEG-4 Advanced Audio Coding (AAC).

- Resampling attack: The watermarked audio is first downsampled and then upsampled.

- Low-pass filter attack: A low-pass filter is applied to the watermarked signal.

- High-pass filter attack: A high-pass filter is applied to the watermarked signal.

- Jitter: A certain proportion of sample points from each segment of the watermarked signal is randomly removed.

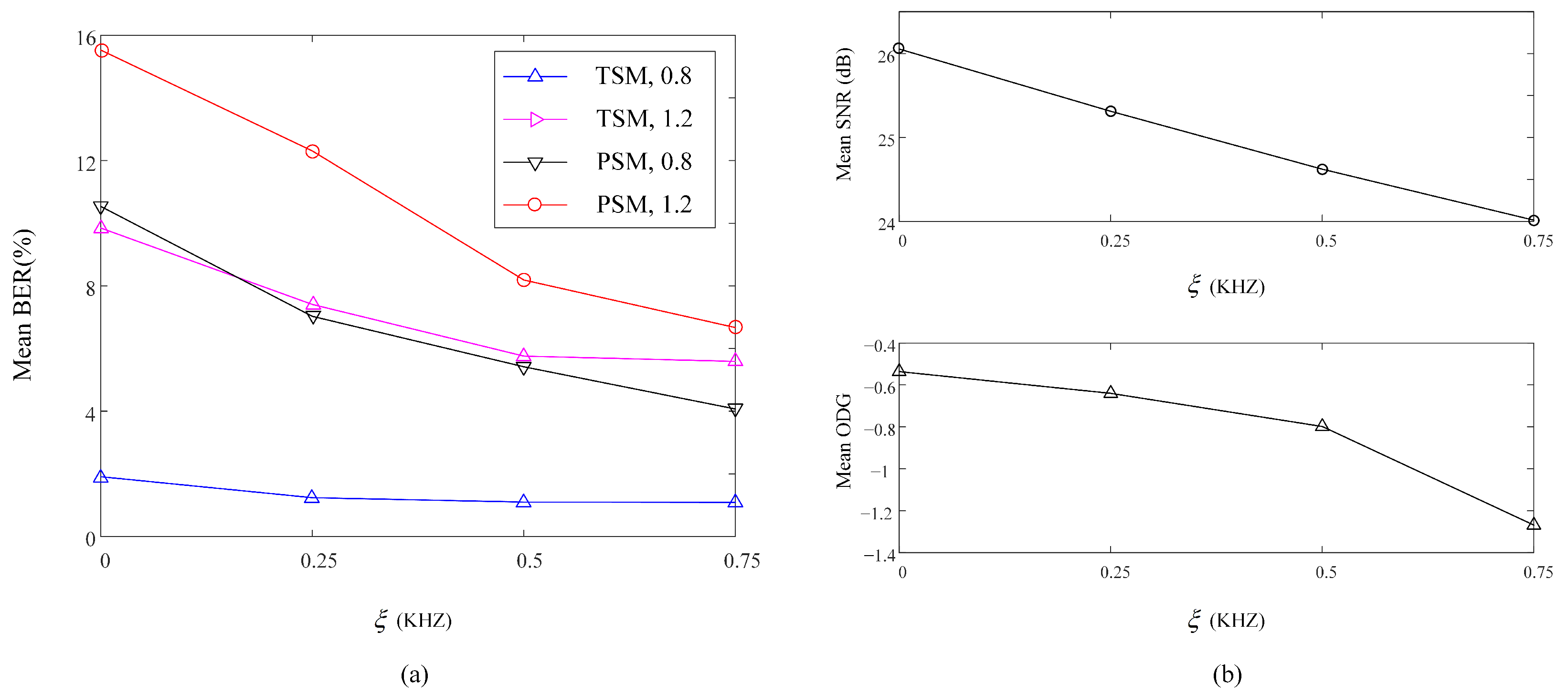

- TSM: The duration of the signal is modified while the pitch remains unchanged.

- PSM: The pitch of the signal is altered while maintaining its duration.
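Several of the attacks listed above are simple enough to simulate directly. The following is a minimal sketch of the noise, amplitude, quantization, and jitter attacks using NumPy. All function names, the 8-bit quantization depth, and the 1000-sample segment length are illustrative assumptions, not the authors' evaluation code; the 20 dB SNR, the 0.8 scaling factor, and the 1% jitter ratio are taken from the result tables.

```python
import numpy as np


def add_awgn(signal, snr_db, rng):
    """Add white Gaussian noise so that the result has the requested SNR in dB."""
    signal = np.asarray(signal, dtype=float)
    signal_power = np.mean(signal ** 2)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=signal.shape)
    return signal + noise


def scale_amplitude(signal, factor):
    """Multiply every sample by a constant factor (e.g. 0.8 or 1.2)."""
    return factor * np.asarray(signal, dtype=float)


def requantize(signal, bits):
    """Round samples in [-1, 1] onto a coarser uniform grid with `bits` bits."""
    levels = 2 ** (bits - 1)
    return np.round(np.asarray(signal, dtype=float) * levels) / levels


def jitter(signal, segment_len, ratio, rng):
    """Randomly drop round(ratio * segment_len) samples from every full segment."""
    signal = np.asarray(signal, dtype=float)
    n_drop = int(round(ratio * segment_len))
    kept = []
    for start in range(0, len(signal), segment_len):
        segment = signal[start:start + segment_len]
        if len(segment) < segment_len or n_drop == 0:
            # Leave a short trailing segment (or everything, if n_drop is 0) intact.
            kept.append(segment)
            continue
        mask = np.ones(segment_len, dtype=bool)
        mask[rng.choice(segment_len, size=n_drop, replace=False)] = False
        kept.append(segment[mask])
    return np.concatenate(kept) if kept else signal


rng = np.random.default_rng(0)
t = np.arange(44100) / 44100.0
host = 0.5 * np.sin(2.0 * np.pi * 440.0 * t)  # stand-in for a watermarked signal

noisy = add_awgn(host, snr_db=20.0, rng=rng)
quieter = scale_amplitude(host, 0.8)
coarse = requantize(host, bits=8)
jittered = jitter(host, segment_len=1000, ratio=0.01, rng=rng)
print(len(host), len(jittered))  # 44100 43660
```

Jitter is the only attack in this sketch that changes the signal length, which is why it is grouped with the desynchronization attacks: every sample after a removed one shifts relative to the embedding positions. TSM, PSM, and the codec-based attacks require dedicated tools and are not sketched here.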

3.4. Runtime Analysis

3.5. Ablation Study

4. Conclusions and Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Yang, Z.; Zhao, G.; Wu, H. Watermarking for large language models: A survey. Mathematics 2025, 13, 1420.

- Wang, B.; Zhao, P. An adaptive image watermarking method combining SVD and Wang-Landau sampling in DWT domain. Mathematics 2020, 8, 691.

- Zheng, Q.; Liu, N.; Wang, F. An adaptive embedding strength watermarking algorithm based on Shearlets’ capture directional features. Mathematics 2020, 8, 1377.

- Ye, C.; Tan, S.; Wang, J.; Shi, L.; Zuo, Q.; Xiong, B. Double security level protection based on chaotic maps and SVD for medical images. Mathematics 2025, 13, 182.

- Wang, Y.; Xue, Y.; Liu, X.; Wen, J. An adaptive audio watermarking with frame-wise control parameter searching. Digit. Signal Process. 2025, 160, 105025.

- Zhao, J.; Zong, T.; Xiang, Y.; Hua, G.; Lei, X.; Gao, L.; Beliakov, G. Frequency spectrum modification process-based anti-collusion mechanism for audio signals. IEEE Trans. Cybern. 2023, 53, 5510–5522.

- Kim, H.-J.; Choi, Y.-H. A novel echo-hiding scheme with backward and forward kernels. IEEE Trans. Circuits Syst. Video Technol. 2003, 13, 885–889.

- Xiang, S.; Huang, J. Histogram-based audio watermarking against time-scale modification and cropping attacks. IEEE Trans. Multimed. 2007, 9, 1357–1372.

- Huang, X.; Ito, A. Imperceptible and reversible acoustic watermarking based on modified integer discrete cosine transform coefficient expansion. Appl. Sci. 2024, 14, 2757.

- Hua, G.; Goh, J.; Thing, V.L. Cepstral analysis for the application of echo-based audio watermark detection. IEEE Trans. Inf. Forensics Secur. 2015, 10, 1850–1861.

- Wang, S.; Yuan, W.; Zhang, Z.; Wang, J.; Unoki, M. Synchronous multi-bit audio watermarking based on phase shifting. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; pp. 2700–2704.

- Dronyuk, I.; Fedevych, O.; Kryvinska, N. Constructing of digital watermark based on generalized Fourier transform. Electronics 2020, 9, 1108.

- Hu, H.-T.; Hsu, L.-Y. Robust, transparent and high-capacity audio watermarking in DCT domain. Signal Process. 2015, 109, 226–235.

- Saadi, S.; Merrad, A.; Benziane, A. Novel secured scheme for blind audio/speech norm-space watermarking by Arnold algorithm. Signal Process. 2019, 154, 74–86.

- Jiang, W.; Huang, X.; Quan, Y. Audio watermarking algorithm against synchronization attacks using global characteristics and adaptive frame division. Signal Process. 2019, 162, 153–160.

- Karajeh, H.; Khatib, T.; Rajab, L.; Maqableh, M. A robust digital audio watermarking scheme based on DWT and Schur decomposition. Multimed. Tools Appl. 2019, 78, 18395–18418.

- Wu, Q.; Wu, M. Adaptive and blind audio watermarking algorithm based on chaotic encryption in hybrid domain. Symmetry 2018, 10, 284.

- Xiang, Y.; Natgunanathan, I.; Peng, D.; Hua, G.; Liu, B. Spread spectrum audio watermarking using multiple orthogonal PN sequences and variable embedding strengths and polarities. IEEE/ACM Trans. Audio Speech Lang. Process. 2017, 26, 529–539.

- Zhong, J.; Huang, S. An enhanced multiplicative spread spectrum watermarking scheme. IEEE Trans. Circuits Syst. Video Technol. 2006, 16, 1491–1506.

- Xiang, Y.; Natgunanathan, I.; Rong, Y.; Guo, S. Spread spectrum-based high embedding capacity watermarking method for audio signals. IEEE/ACM Trans. Audio Speech Lang. Process. 2015, 23, 2228–2237.

- Natgunanathan, I.; Xiang, Y.; Hua, G.; Beliakov, G.; Yearwood, J. Patchwork-based multilayer audio watermarking. IEEE/ACM Trans. Audio Speech Lang. Process. 2017, 25, 2176–2187.

- Natgunanathan, I.; Xiang, Y.; Rong, Y.; Peng, D. Robust patchwork-based watermarking method for stereo audio signals. Multimed. Tools Appl. 2014, 72, 1387–1410.

- Natgunanathan, I.; Xiang, Y.; Rong, Y.; Zhou, W.; Guo, S. Robust patchwork-based embedding and decoding scheme for digital audio watermarking. IEEE Trans. Audio Speech Lang. Process. 2012, 20, 2232–2239.

- Malvar, H.; Florencio, D. Improved spread spectrum: A new modulation technique for robust watermarking. IEEE Trans. Signal Process. 2003, 51, 898–905.

- Hwang, M.-J.; Lee, J.; Lee, M.; Kang, H.-G. SVD-based adaptive QIM watermarking on stereo audio signals. IEEE Trans. Multimed. 2017, 20, 45–54.

- Khaldi, K.; Boudraa, A.-O. Audio watermarking via EMD. IEEE Trans. Audio Speech Lang. Process. 2013, 21, 675–680.

- Erfani, Y.; Pichevar, R.; Rouat, J. Audio watermarking using spikegram and a two-dictionary approach. IEEE Trans. Inf. Forensics Secur. 2017, 12, 840–852.

- Zhao, J.; Zong, T.; Xiang, Y.; Gao, L.; Zhou, W.; Beliakov, G. Desynchronization attacks resilient watermarking method based on frequency singular value coefficient modification. IEEE/ACM Trans. Audio Speech Lang. Process. 2021, 29, 2282–2295.

- Zhang, G.; Zheng, L.; Su, Z.; Zeng, Y.; Wang, G. M-sequences and sliding window based audio watermarking robust against large-scale cropping attacks. IEEE Trans. Inf. Forensics Secur. 2023, 18, 1182–1195.

- Lei, B.; Soon, I.Y.; Tan, E.-L. Robust SVD-based audio watermarking scheme with differential evolution optimization. IEEE Trans. Audio Speech Lang. Process. 2013, 21, 2368–2378.

- Gui-jun, N.; Shuxun, W. Robust adaptive audio watermarking algorithm in cepstrum. J. Jilin Univ. 2008, 26, 55–61.

- Dessein, A.; Cont, A. An information-geometric approach to real-time audio segmentation. IEEE Signal Process. Lett. 2013, 20, 331–334.

- Xiang, Y.; Natgunanathan, I.; Guo, S.; Zhou, W.; Nahavandi, S. Patchwork-based audio watermarking method robust to de-synchronization attacks. IEEE/ACM Trans. Audio Speech Lang. Process. 2014, 22, 1413–1423.

- Li, J.; Xiang, S. Audio-lossless robust watermarking against desynchronization attacks. Signal Process. 2022, 198, 108561.

- Liu, Z.; Huang, Y.; Huang, J. Patchwork-based audio watermarking robust against de-synchronization and recapturing attacks. IEEE Trans. Inf. Forensics Secur. 2019, 14, 1171–1180.

- Zhao, J.; Zong, T.; Natgunanathan, I.; Xiang, Y.; Song, X.; Hua, G.; Gao, L.; Zhou, W. Fragment-energy audio watermarking resilient to de-synchronization attacks. Expert Syst. Appl. 2025, 296, 128980.

- Zhao, J.; Zong, T.; Xiang, Y.; Gao, L.; Hua, G.; Sood, K.; Zhang, Y. SSVS-SSVD based desynchronization attacks resilient watermarking method for stereo signals. IEEE/ACM Trans. Audio Speech Lang. Process. 2023, 31, 448–461.

| Common Attack | Setting | Ref. [21] BER (%) | Ref. [21] NCC | Ref. [35] BER (%) | Ref. [35] NCC | Ref. [28] BER (%) | Ref. [28] NCC | Proposed BER (%) | Proposed NCC |
|---|---|---|---|---|---|---|---|---|---|
| Non-attack | – | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| Noise | 30 dB | 0.05 | 0.999 | 22.59 | 0.688 | 0.17 | 0.998 | 0.13 | 0.999 |
| Noise | 20 dB | 1.27 | 0.987 | 42.44 | 0.300 | 2.08 | 0.978 | 1.45 | 0.985 |
| Quantization | – | 0.11 | 0.999 | 14.37 | 0.856 | 0.19 | 0.998 | 0.13 | 0.999 |
| Amplitude | 0.8 | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| Amplitude | 1.2 | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| Echo | – | 1.04 | 0.989 | 7.29 | 0.919 | 2.12 | 0.978 | 4.01 | 0.960 |
| MP3 | 128 kbps | 0.00 | 1.000 | 2.05 | 0.976 | 0.00 | 1.000 | 0.00 | 1.000 |
| MP3 | 96 kbps | 0.00 | 1.000 | 8.41 | 0.902 | 0.00 | 1.000 | 0.00 | 1.000 |
| AAC | 128 kbps | 0.00 | 1.000 | 0.02 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| AAC | 96 kbps | 0.00 | 1.000 | 0.02 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| Resampling | – | 0.00 | 1.000 | 30.78 | 0.593 | 0.00 | 1.000 | 0.00 | 1.000 |
| Low-pass filtering | – | 0.00 | 1.000 | 0.04 | 1.000 | 0.00 | 1.000 | 0.00 | 1.000 |
| High-pass filtering | – | 0.00 | 1.000 | 44.15 | 0.355 | 0.03 | 1.000 | 0.00 | 1.000 |

| Desynchronization Attack | Setting | Ref. [21] BER (%) | Ref. [21] NCC | Ref. [35] BER (%) | Ref. [35] NCC | Ref. [28] BER (%) | Ref. [28] NCC | Proposed BER (%) | Proposed NCC |
|---|---|---|---|---|---|---|---|---|---|
| Jitter | 1% | 3.39 | 0.963 | 37.93 | 0.446 | 9.58 | 0.906 | 10.55 | 0.902 |
| TSM | 80% | 0.25 | 0.997 | 25.47 | 0.676 | 1.83 | 0.981 | 1.24 | 0.987 |
| TSM | 90% | 0.13 | 0.999 | 22.26 | 0.724 | 1.91 | 0.980 | 0.68 | 0.993 |
| TSM | 110% | 0.15 | 0.998 | 19.19 | 0.767 | 2.27 | 0.976 | 1.93 | 0.980 |
| TSM | 120% | 0.17 | 0.998 | 21.33 | 0.737 | 6.51 | 0.933 | 7.40 | 0.923 |
| PSM | 80% | 31.94 | 0.639 | 33.18 | 0.544 | 11.36 | 0.882 | 7.03 | 0.928 |
| PSM | 90% | 49.13 | 0.441 | 27.83 | 0.639 | 3.49 | 0.963 | 2.17 | 0.978 |
| PSM | 110% | 52.10 | 0.382 | 20.47 | 0.750 | 8.02 | 0.916 | 3.71 | 0.962 |
| PSM | 120% | 30.83 | 0.663 | 24.11 | 0.697 | 16.48 | 0.829 | 12.31 | 0.872 |

| Step | Avg Runtime (ms) | Std (ms) | Percentage |
|---|---|---|---|
| DCT + IDCT | 0.185 | – | 88.5% |
| Buffer Scaling | 0.007 | – | 3.3% |
| Other Operations | 0.017 | – | 8.2% |
| Total | 0.209 | 0.016 | 100% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhu, W.; Zhou, Y.; Wu, D.; Zhao, G.; Dong, Z.; Ye, J.; Wu, H. Desynchronization Resilient Audio Watermarking Based on Adaptive Energy Modulation. Mathematics 2025, 13, 2736. https://doi.org/10.3390/math13172736
