Fourier Graph Convolution Network for Time Series Prediction

Abstract

1. Introduction

- Existing methods learn periodicity with frequency-domain techniques such as spectral analysis and the traditional Fourier transform [14,15,16,17]. These models generally require manually set parameters and rest on strict assumptions, which leaves them unable to capture diverse periodicities.

- An efficient way to learn dynamic volatility and thereby improve robustness is still lacking, even though it is crucial to dynamic spatial-temporal pattern recognition in traffic networks.

- Some models capture both periodicity and volatility, but they model the two independently and ignore their inherent relationship.

- A novel Fourier Embedding (FE) module is proposed to capture periodicity; it is proven to learn diversified periodicity patterns.

- A stackable Spatial-Temporal ChebyNet (STCN) layer, comprising a Fine-grained Volatility Module and a Temporal Volatility Module, is proposed to handle complex volatility and learn dynamic temporal volatility, improving the system's robustness.

- A dynamic Fourier Graph Convolution Network (F-GCN) framework is proposed to integrate the periodicity and volatility analysis; it can be trained end to end. Extensive experiments on several real-world traffic flow datasets show that F-GCN outperforms the compared state-of-the-art methods on most metrics and prediction horizons.
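To make the periodicity idea behind the Fourier Embedding bullet concrete, the following is a minimal sketch of a truncated real Fourier series fitted to a synthetic daily signal. It is not the paper's FE module: the coefficients here come from ordinary least squares rather than end-to-end training, and the names `fourier_features` and `fit_fourier`, the 288-slots-per-day sampling, and the synthetic signal are all assumptions made for illustration.

```python
import numpy as np

def fourier_features(t, period, order):
    """Return sin/cos features of harmonics k = 1..order for time indices t."""
    t = np.asarray(t, dtype=float)
    k = np.arange(1, order + 1)                      # harmonic indices 1..order
    angles = 2.0 * np.pi * np.outer(t, k) / period   # shape (len(t), order)
    # Columns: sin(k=1..order) followed by cos(k=1..order).
    return np.concatenate([np.sin(angles), np.cos(angles)], axis=1)

def fit_fourier(t, y, period, order):
    """Least-squares fit of a truncated real Fourier series (assumption:
    a fixed fit stands in for the learned embedding)."""
    X = np.column_stack([np.ones(len(t)), fourier_features(t, period, order)])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef, X @ coef

# Synthetic three-day signal; 288 five-minute slots per day is an assumed rate.
t = np.arange(288 * 3)
y = 100 + 30 * np.sin(2 * np.pi * t / 288) + 10 * np.cos(4 * np.pi * t / 288)

coef, y_hat = fit_fourier(t, y, period=288, order=3)
residual = y - y_hat   # what remains after the periodic part is removed
```

In this toy setting the residual is numerically zero because the signal is purely periodic; with real traffic data the residual would carry the non-periodic fluctuation that the volatility modules are meant to handle.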

2. Literature Review

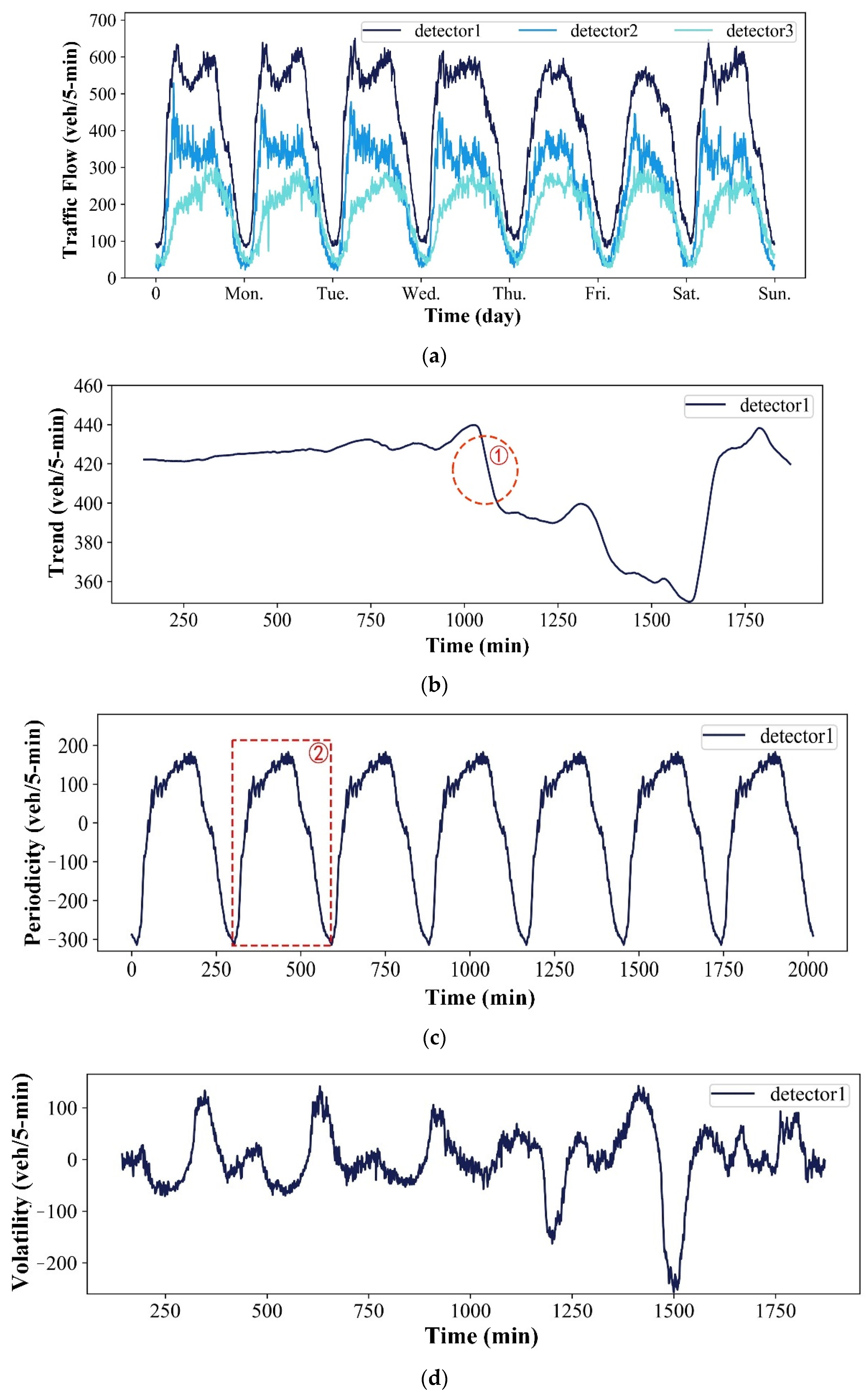

2.1. Traffic Flow Data Decomposition

2.2. Graph Convolution Network

3. Methods

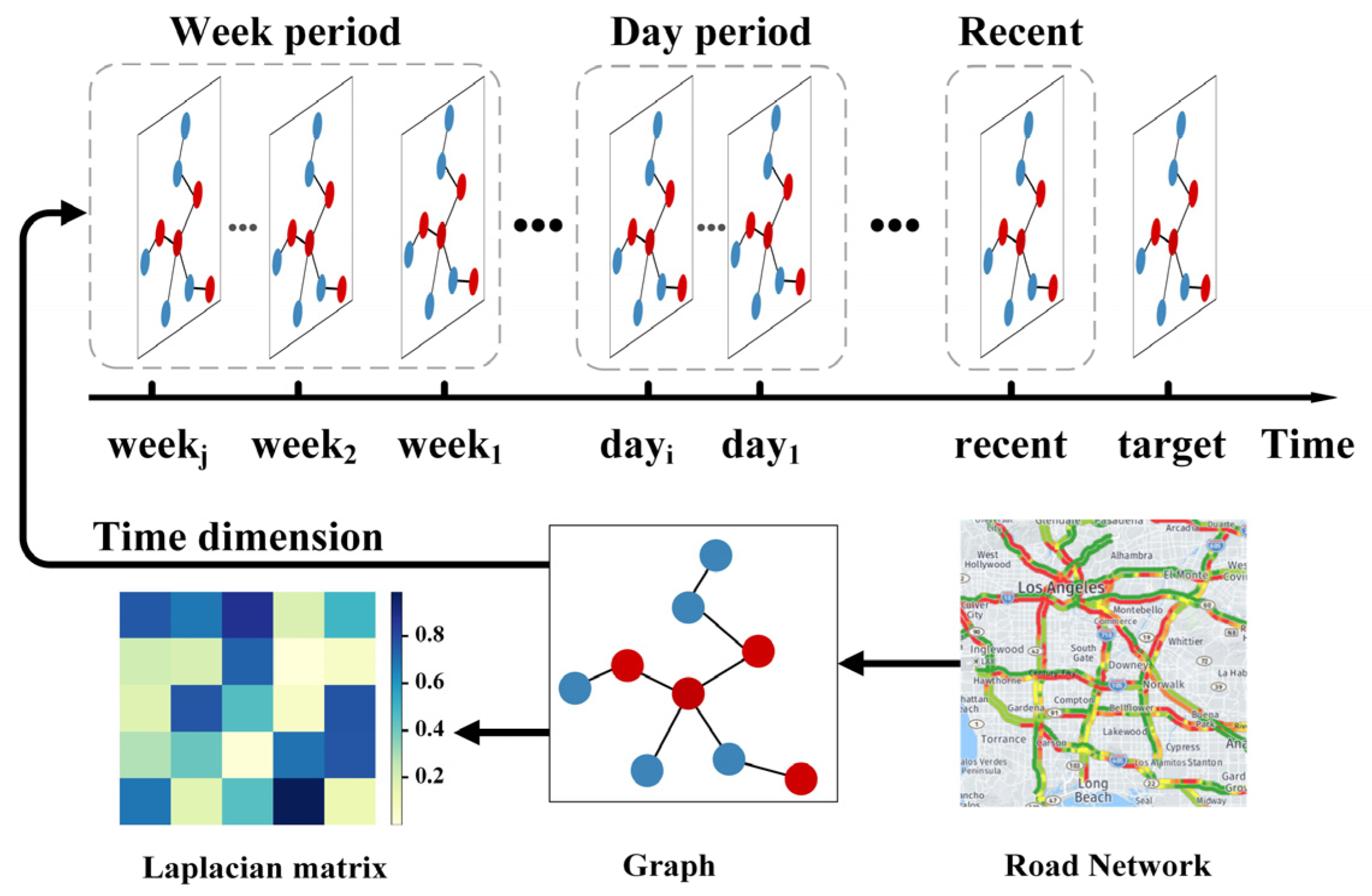

3.1. Preliminaries

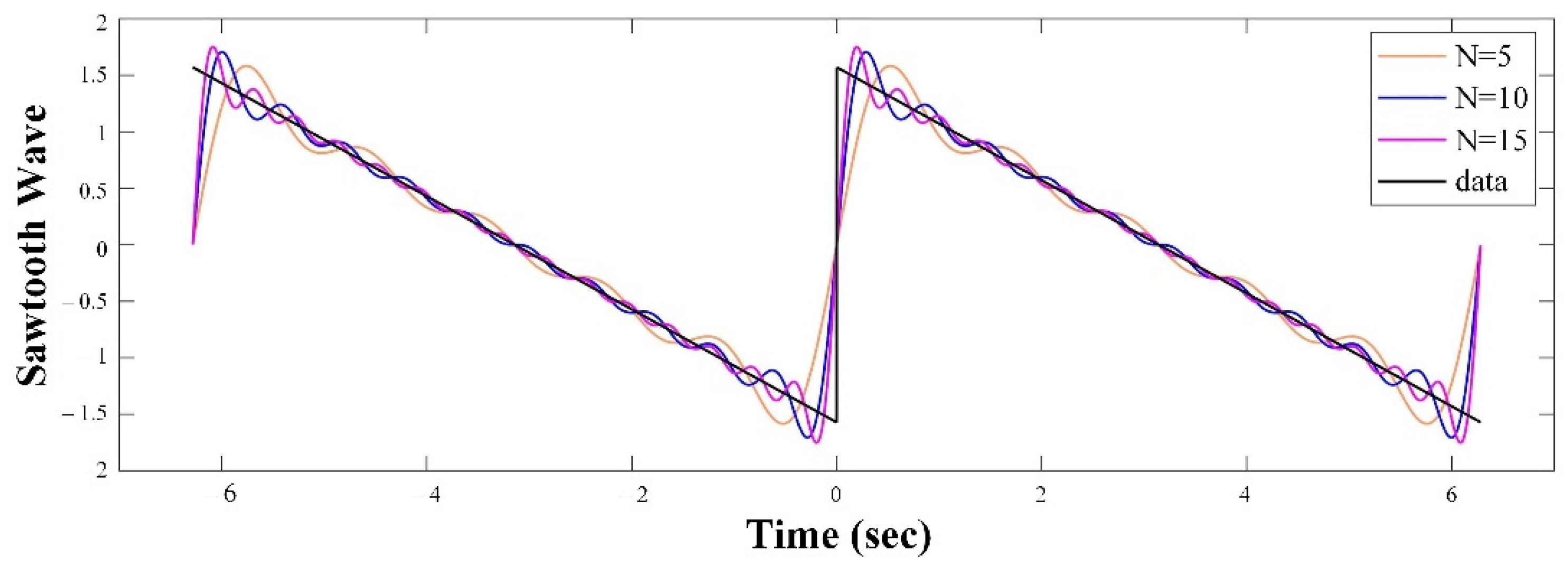

3.1.1. The Complex Fourier Series

3.1.2. Real Fourier Series

3.1.3. Problem Statement

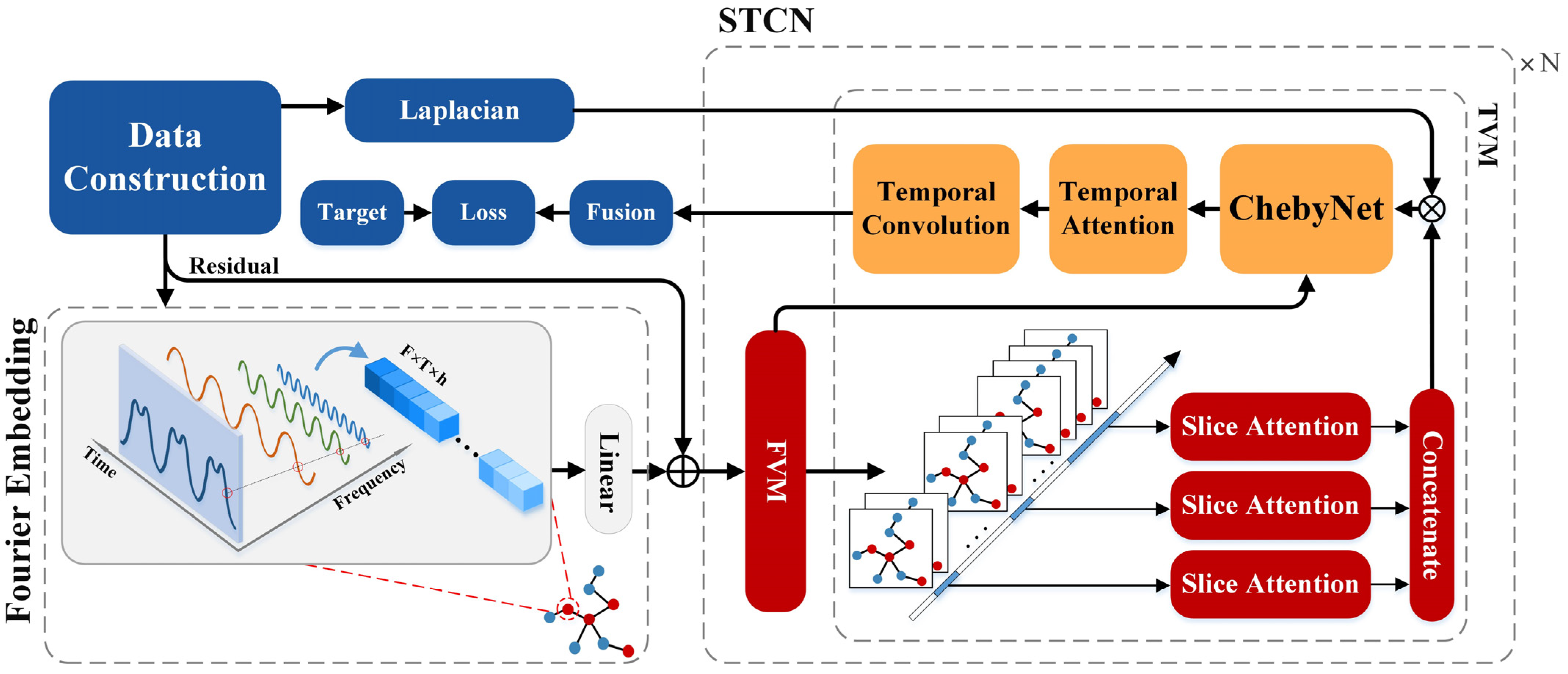

3.2. Fourier Graph Convolution Network

3.2.1. Data Construction

3.2.2. Fourier Embedding

3.2.3. Spatial-Temporal ChebyNet Layer

- A. Fine-grained Volatility Module

- B. Temporal Volatility Module

3.3. Fusion & Loss Function

| Algorithm 1 Pseudocode for the F-GCN model |

| Input: the F-GCN input feature, including the week-period, day-period, and recent-period components; the Laplacian matrix. Output: |

4. Results and the Discussion

4.1. Data Description

4.2. Evaluation Metrics
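The three error metrics listed in the nomenclature (MAE, RMSE, and MAPE) can be sketched as follows. These are the standard textbook definitions, assumed here rather than taken from the paper; in particular, masking out zero-valued ground truth in MAPE is a common convention and an assumption of this sketch, as are the function names and sample values.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean Absolute Error."""
    return float(np.mean(np.abs(y_true - y_pred)))

def rmse(y_true, y_pred):
    """Root Mean Square Error."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def mape(y_true, y_pred, eps=1e-8):
    """Mean Absolute Percentage Error in percent.
    Assumption: entries with (near-)zero ground truth are skipped."""
    mask = np.abs(y_true) > eps
    ratio = np.abs((y_true[mask] - y_pred[mask]) / y_true[mask])
    return float(np.mean(ratio) * 100.0)

# Hypothetical flow values, for illustration only.
y_true = np.array([100.0, 200.0, 0.0, 50.0])
y_pred = np.array([110.0, 180.0, 5.0, 50.0])

print(mae(y_true, y_pred), rmse(y_true, y_pred), mape(y_true, y_pred))
```

RMSE penalizes large errors more heavily than MAE, while MAPE is scale-free but unstable when the true flow is close to zero, which is why the masking choice matters.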

4.3. Experimental Settings

4.4. Baselines and State-of-the-Art Methods

4.5. Experiment Results

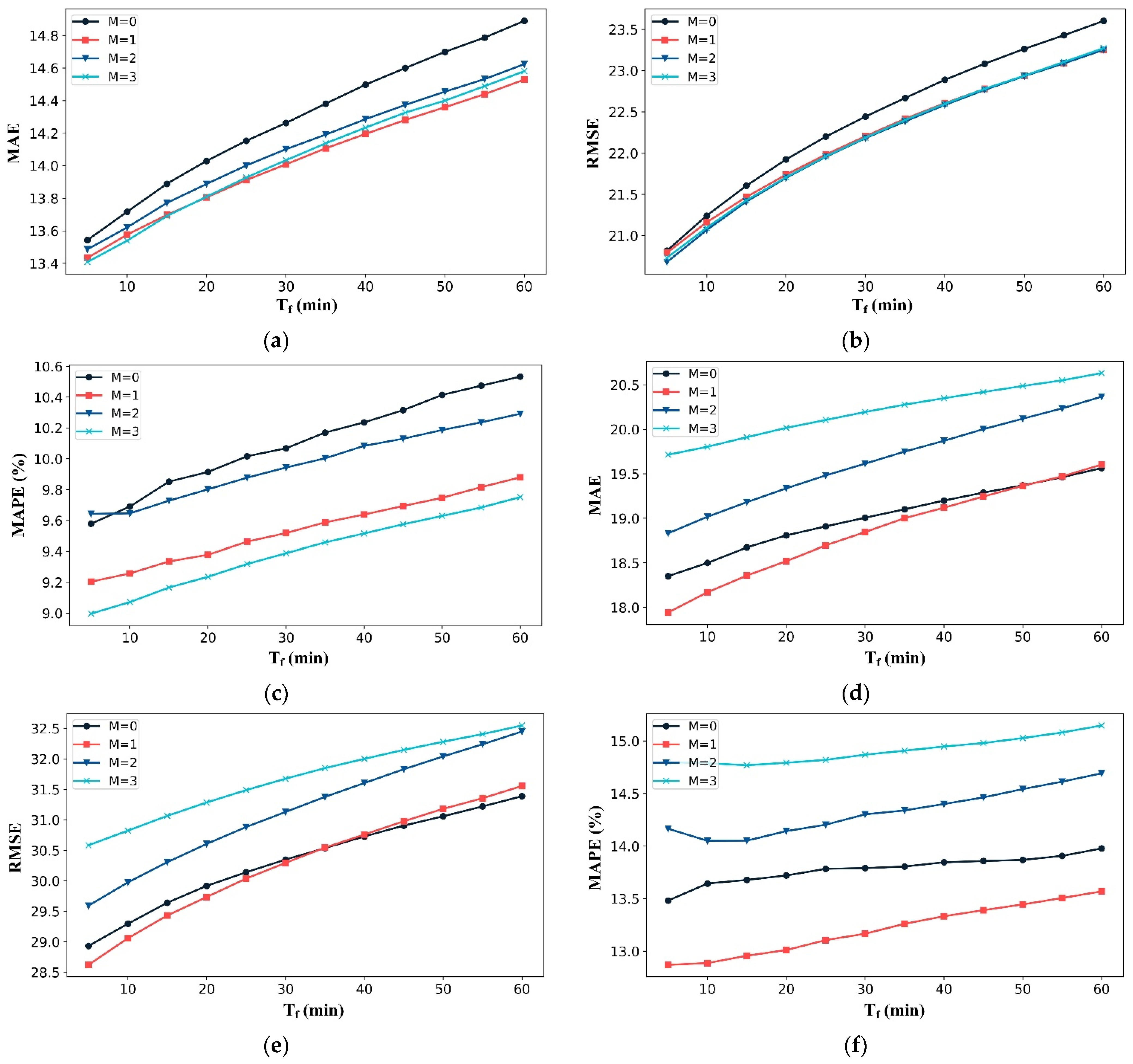

4.6. Performance of FE and STCN Modules

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Nomenclatures

| AGCRN | Adaptive Graph Convolutional Recurrent Network |

| ASTGCN | Attention-based Spatial-Temporal Graph Convolution Network |

| ARCH | Autoregressive Conditional Heteroskedasticity |

| ARIMA | Autoregressive Integrated Moving Average |

| BV | Boundedly Varied |

| CFS | Complex Fourier Series |

| CNN | Convolutional Neural Networks |

| DC | Dirichlet Condition |

| DGCN | Dynamic Graph Convolution Network |

| EMD | Empirical Mode Decomposition |

| EEMD | Ensemble Empirical Mode Decomposition |

| ELM | Extreme Learning Machine |

| FE | Fourier Embedding |

| F-GCN | Fourier Graph Convolution Network |

| GRU | Gated Recurrent Unit networks |

| GCN | Graph Convolution Network |

| HA | Historical Average |

| iGCGCN | improved Dynamic Chebyshev Graph Convolution Network |

| ITS | Intelligent Transportation Systems |

| MAE | Mean Absolute Error |

| MAPE | Mean Absolute Percentage Error |

| MSE | Mean Square Error |

| PeMS | Performance Measurement System |

| RFS | Real Fourier Series |

| RMSE | Root Mean Square Error |

| SP | Signal Processing |

| STCN | Spatial-Temporal ChebyNet |

| STSGCN | Spatial-Temporal Synchronous Graph Convolutional Networks |

| STGCN | Spatio-Temporal Graph Convolutional Network |

| SVR | Support Vector Regression |

| T-GCN | Temporal Graph Convolutional Network |

| TSA-SL | Time-Series Analysis and Supervised-Learning |

| The length of a set. | |

| Hadamard product. | |

| [△, ○] | The concatenation of △ and ○. |

| σ(∙) | The sigmoid function. |

| , | The sine and cosine functions. |

| ⋆ | The causal convolution operator. |

| ∗ | The graph convolution operator. |

| A graph. | |

| V | The set of nodes in a graph. |

| E | The set of edges in a graph. |

| A | The adjacency matrix of the graph. |

| D | The degree matrix of , . |

| L | The Laplacian matrix . |

| The edge between node and node . | |

| The spatial-temporal graph by Data Construction. | |

| The spatial-temporal graph in the case of . | |

| The output of the FE module. | |

| The residual . | |

| The output of the Fine-grained Volatility Module. | |

| The output of the Temporal Volatility Module. | |

| The number of original characteristics. | |

| The nodes of the graph. | |

| The number of time slices of the graph. | |

| The length of the vector embedding. | |

| The length of historical data and prediction data. | |

| The order of the Fourier polynomial in the FE module. |
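The graph quantities in the table above (adjacency matrix A, degree matrix D, Laplacian L) feed the Chebyshev graph convolution that gives the STCN layer its name. The sketch below shows one common formulation, in the style of Defferrard et al. It assumes the symmetric normalized Laplacian rescaled to [-1, 1]; the paper's exact Laplacian definition is not recoverable from this text, so treat that choice, the function names, and the toy graph as assumptions.

```python
import numpy as np

def scaled_laplacian(A):
    """Symmetric normalized Laplacian rescaled to [-1, 1] (assumed convention)."""
    d = A.sum(axis=1)                                   # node degrees (diagonal of D)
    d_inv_sqrt = np.where(d > 0, 1.0 / np.sqrt(np.maximum(d, 1e-12)), 0.0)
    L = np.eye(len(A)) - d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]
    lam_max = np.linalg.eigvalsh(L).max()
    return 2.0 * L / lam_max - np.eye(len(A))

def cheb_graph_conv(X, L_tilde, theta):
    """Compute sum_k T_k(L_tilde) @ X @ theta[k] with the Chebyshev recurrence
    T_0 = I, T_1 = L_tilde, T_k = 2 * L_tilde @ T_{k-1} - T_{k-2}."""
    K = len(theta)
    Tx_prev, Tx = X, L_tilde @ X
    out = Tx_prev @ theta[0]
    if K > 1:
        out = out + Tx @ theta[1]
    for k in range(2, K):
        Tx_prev, Tx = Tx, 2.0 * L_tilde @ Tx - Tx_prev
        out = out + Tx @ theta[k]
    return out

# Toy 3-node path graph with 2 input features and 4 output features.
A = np.array([[0.0, 1.0, 0.0],
              [1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])
rng = np.random.default_rng(0)
X = rng.normal(size=(3, 2))
theta = rng.normal(size=(3, 2, 4))      # K = 3 Chebyshev terms

L_tilde = scaled_laplacian(A)
H = cheb_graph_conv(X, L_tilde, theta)  # shape (3, 4)
```

The recurrence avoids an explicit eigendecomposition at convolution time, and a filter with K terms mixes information from nodes at most K - 1 hops apart.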

References

- Duan, H.; Wang, G. Partial differential grey model based on control matrix and its application in short-term traffic flow prediction. Appl. Math. Model. 2023, 116, 763–785.

- Han, Y.; Zhao, S.; Deng, H.; Jia, W. Principal graph embedding convolutional recurrent network for traffic flow prediction. Appl. Intell. 2023, 1–15.

- Guo, K.; Hu, Y.; Qian, Z.; Sun, Y.; Gao, J.; Yin, B. Dynamic graph convolution network for traffic forecasting based on latent network of laplace matrix estimation. IEEE Trans. Intell. Transp. Syst. 2020, 23, 1009–1018.

- Li, W.; Wang, X.; Zhang, Y.; Wu, Q. Traffic flow prediction over muti-sensor data correlation with graph convolution network. Neurocomputing 2021, 427, 50–63.

- Xue, Y.; Tang, Y.; Xu, X.; Liang, J.; Neri, F. Multi-objective feature selection with missing data in classification. IEEE Trans. Emerg. Top. Comput. Intell. 2021, 6, 355–364.

- Williams, B.M.; Hoel, L.A. Modeling and forecasting vehicular traffic flow as a seasonal ARIMA process: Theoretical basis and empirical results. J. Transp. Eng. 2003, 129, 664–672.

- Engle, R. Risk and volatility: Econometric models and financial practice. Am. Econ. Rev. 2004, 94, 405–420.

- Xue, Y.; Xue, B.; Zhang, M. Self-adaptive particle swarm optimization for large-scale feature selection in classification. ACM Trans. Knowl. Discov. Data 2019, 13, 1–27.

- Xue, Y.; Wang, Y.; Liang, J.; Slowik, A. A self-adaptive mutation neural architecture search algorithm based on blocks. IEEE Comput. Intell. Mag. 2021, 16, 67–78.

- Zhao, L.; Song, Y.; Zhang, C.; Liu, Y.; Wang, P.; Lin, T.; Deng, M.; Li, H. T-GCN: A Temporal Graph Convolutional Network for Traffic Prediction. IEEE Trans. Intell. Transp. Syst. 2019, 21, 3848–3858.

- Guo, S.; Lin, Y.; Feng, N.; Song, C.; Wan, H. Attention based spatial-temporal graph convolutional networks for traffic flow forecasting. In Proceedings of the AAAI’19: AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019.

- Song, C.; Lin, Y.; Guo, S.; Wan, H. Spatial-Temporal Synchronous Graph Convolutional Networks: A New Framework for Spatial-Temporal Network Data Forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020.

- Liao, L.; Hu, Z.; Zheng, Y.; Bi, S.; Zou, F.; Qiu, H.; Zhang, M. An improved dynamic Chebyshev graph convolution network for traffic flow prediction with spatial-temporal attention. Appl. Intell. 2022, 52, 16104–16116.

- Chen, L.; Zheng, L.; Yang, J.; Xia, D.; Liu, W. Short-term traffic flow prediction: From the perspective of traffic flow decomposition. Neurocomputing 2020, 413, 444–456.

- Zhang, Y.; Zhang, Y.; Haghani, A. A hybrid short-term traffic flow forecasting method based on spectral analysis and statistical volatility model. Transp. Res. Part C Emerg. Technol. 2014, 43, 65–78.

- Chen, X.; Lu, J.; Zhao, J.; Qu, Z.; Yang, Y.; Xian, J. Traffic flow prediction at varied time scales via ensemble empirical mode decomposition and artificial neural network. Sustainability 2020, 12, 3678.

- Tian, Z. Approach for short-term traffic flow prediction based on empirical mode decomposition and combination model fusion. IEEE Trans. Intell. Transp. Syst. 2020, 22, 5566–5576.

- Zivot, E.; Wang, J. Vector autoregressive models for multivariate time series. Model. Financ. Time Ser. S-Plus® 2006, 385–429.

- Sun, S.; Zhang, C.; Yu, G. A bayesian network approach to traffic flow forecasting. IEEE Trans. Intell. Transp. Syst. 2006, 7, 124–132.

- Liu, L.; Zhen, J.; Li, G.; Zhan, G.; He, Z.; Du, B.; Lin, L. Dynamic spatial-temporal representation learning for traffic flow prediction. IEEE Trans. Intell. Transp. Syst. 2020, 22, 7169–7183.

- Shu, W.; Cai, K.; Xiong, N.N. A Short-Term Traffic Flow Prediction Model Based on an Improved Gate Recurrent Unit Neural Network. IEEE Trans. Intell. Transp. Syst. 2021, 23, 16654–16665.

- Guo, S.; Lin, Y.; Li, S.; Chen, Z.; Wan, H. Deep spatial–temporal 3D convolutional neural networks for traffic data forecasting. IEEE Trans. Intell. Transp. Syst. 2019, 20, 3913–3926.

- Bruna, J.; Zaremba, W.; Szlam, A.; LeCun, Y. Spectral networks and locally connected networks on graphs. arXiv 2013, arXiv:1312.6203.

- Defferrard, M.; Bresson, X.; Vandergheynst, P. Convolutional neural networks on graphs with fast localized spectral filtering. Adv. Neural Inf. Process. Syst. 2016, 29.

- Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

- Kearnes, S.; McCloskey, K.; Berndl, M.; Pande, V.; Riley, P. Molecular graph convolutions: Moving beyond fingerprints. J. Comput.-Aided Mol. Des. 2016, 30, 595–608.

- Niepert, M.; Ahmed, M.; Kutzkov, K. Learning convolutional neural networks for graphs. arXiv 2016, arXiv:1605.05273.

- Hamilton, W.L.; Ying, R.; Leskovec, J. Inductive representation learning on large graphs. Adv. Neural Inf. Process. Syst. 2017, 30.

- Chen, C.; Li, K.; Teo, S.G.; Zou, X.; Wang, K.; Wang, J.; Zeng, Z. Gated residual recurrent graph neural networks for traffic prediction. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019.

- Bai, L.; Yao, L.; Li, C.; Wang, X.; Wang, C. Adaptive graph convolutional recurrent network for traffic forecasting. arXiv 2020, arXiv:2007.02842.

- Kazemi, S.M.; Goel, R.; Eghbali, S.; Ramanan, J.; Sahota, J.; Thakur, S.; Wu, S.; Smyth, C.; Poupart, P.; Brubaker, M. Time2vec: Learning a vector representation of time. arXiv 2019, arXiv:1907.05321.

- Meyer, F.; van der Merwe, B.; Coetsee, D. Learning Concept Embeddings from Temporal Data. J. Univers. Comput. Sci. 2018, 24, 1378–1402.

- Hsu, C.-Y.; Huang, H.-Y.; Lee, L.-T. An interactive procedure to preserve the desired edges during the image processing of noise reduction. EURASIP J. Adv. Signal Process. 2010, 2010, 923748.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Xue, Y.; Qin, J. Partial connection based on channel attention for differentiable neural architecture search. IEEE Trans. Ind. Inform. 2022, 1–10.

- Chen, C.; Petty, K.; Skabardonis, A.; Varaiya, P.; Jia, Z. Freeway performance measurement system: Mining loop detector data. Transp. Res. Rec. 2001, 1748, 96–102.

| Datasets | Time | Metrics | HA | ARIMA | GRU | STGCN | ASTGCN | STSGCN | AGCRN | F-GCN |
|---|---|---|---|---|---|---|---|---|---|---|
| PeMSD4 | 15 min | MAE | 30.505 | 28.366 | 24.240 | 21.191 | 20.448 | 20.019 | 18.850 | 18.358 |
| PeMSD4 | 15 min | RMSE | 41.873 | 36.443 | 36.458 | 33.235 | 32.072 | 31.927 | 30.970 | 29.429 |
| PeMSD4 | 15 min | MAPE (%) | 27.043 | 47.375 | 19.561 | 18.921 | 15.210 | 13.530 | 12.536 | 12.956 |
| PeMSD4 | 30 min | MAE | 37.245 | 34.455 | 25.732 | 23.909 | 20.735 | 21.543 | 19.520 | 18.844 |
| PeMSD4 | 30 min | RMSE | 50.054 | 45.595 | 38.671 | 35.743 | 32.780 | 34.180 | 32.130 | 30.292 |
| PeMSD4 | 30 min | MAPE (%) | 36.648 | 50.316 | 20.879 | 20.465 | 15.146 | 14.320 | 12.962 | 13.166 |
| PeMSD4 | 45 min | MAE | 43.930 | 42.042 | 27.433 | 25.727 | 21.048 | 23.053 | 20.040 | 19.244 |
| PeMSD4 | 45 min | RMSE | 58.101 | 59.311 | 41.149 | 38.362 | 33.453 | 36.390 | 33.100 | 30.975 |
| PeMSD4 | 45 min | MAPE (%) | 53.502 | 51.071 | 22.553 | 22.193 | 15.216 | 15.260 | 13.310 | 13.390 |
| PeMSD4 | 60 min | MAE | 50.539 | 52.997 | 29.408 | 27.617 | 21.494 | 24.627 | 20.960 | 19.603 |
| PeMSD4 | 60 min | RMSE | 65.982 | 77.380 | 44.017 | 41.077 | 34.247 | 38.563 | 34.420 | 31.555 |
| PeMSD4 | 60 min | MAPE (%) | 72.040 | 54.230 | 24.701 | 24.054 | 15.500 | 16.410 | 13.889 | 13.569 |
| PeMSD8 | 15 min | MAE | 25.157 | 32.571 | 19.206 | 17.542 | 16.779 | 16.599 | 15.080 | 13.646 |
| PeMSD8 | 15 min | RMSE | 34.234 | 34.120 | 29.764 | 25.871 | 24.941 | 25.371 | 23.730 | 21.384 |
| PeMSD8 | 15 min | MAPE (%) | 16.053 | 22.634 | 13.629 | 13.080 | 11.888 | 10.989 | 9.650 | 9.424 |
| PeMSD8 | 30 min | MAE | 30.945 | 38.310 | 20.452 | 18.774 | 17.069 | 17.849 | 16.090 | 14.013 |
| PeMSD8 | 30 min | RMSE | 41.130 | 43.402 | 31.687 | 28.038 | 25.600 | 27.280 | 25.570 | 22.171 |
| PeMSD8 | 30 min | MAPE (%) | 20.438 | 30.260 | 15.048 | 13.917 | 11.842 | 11.566 | 10.183 | 9.634 |
| PeMSD8 | 45 min | MAE | 36.689 | 42.830 | 21.928 | 20.040 | 17.387 | 18.903 | 16.960 | 14.269 |
| PeMSD8 | 45 min | RMSE | 47.836 | 47.158 | 33.818 | 30.150 | 26.257 | 28.933 | 26.950 | 22.742 |
| PeMSD8 | 45 min | MAPE (%) | 25.163 | 35.444 | 16.799 | 14.867 | 11.933 | 12.200 | 10.736 | 9.792 |
| PeMSD8 | 60 min | MAE | 42.364 | 42.860 | 23.675 | 21.362 | 17.874 | 20.116 | 18.170 | 14.516 |
| PeMSD8 | 60 min | RMSE | 54.379 | 45.810 | 36.333 | 32.223 | 27.088 | 30.642 | 28.710 | 23.230 |
| PeMSD8 | 60 min | MAPE (%) | 30.236 | 35.495 | 18.986 | 15.923 | 12.210 | 13.040 | 11.514 | 9.924 |
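As a small worked example of reading the comparison table above, the snippet below computes the relative error reduction of F-GCN against AGCRN for three rows. The numbers are copied from the table; the helper name `relative_improvement` and the choice of rows are illustrative assumptions.

```python
def relative_improvement(baseline, model):
    """Percentage reduction of an error metric relative to a baseline.
    Positive means the model has lower error than the baseline."""
    return (baseline - model) / baseline * 100.0

# (AGCRN, F-GCN) pairs taken from the comparison table.
rows = {
    ("PeMSD8", "60 min", "MAE"): (18.170, 14.516),
    ("PeMSD4", "60 min", "RMSE"): (34.420, 31.555),
    ("PeMSD4", "15 min", "MAPE (%)"): (12.536, 12.956),
}

gains = {key: relative_improvement(b, m) for key, (b, m) in rows.items()}
for key, gain in gains.items():
    print(key, f"{gain:+.2f}%")
```

The third row yields a negative value: on PeMSD4 at the 15-minute horizon, AGCRN reports a lower MAPE than F-GCN, so the advantage of F-GCN holds for MAE and RMSE throughout but not for every MAPE entry.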

| Dataset | Order | MAE | RMSE | MAPE (%) | Time (s/epoch) |
|---|---|---|---|---|---|
| PeMSD8 | 2 | 14.14 | 22.29 | 9.82 | 52.59 |
| PeMSD8 | 3 | 14.02 | 22.20 | 9.54 | 56.71 |
| PeMSD8 | 4 | 14.29 | 22.42 | 9.76 | 59.92 |
| PeMSD8 | 5 | 14.03 | 22.26 | 9.69 | 66.62 |
| PeMSD4 | 2 | 18.98 | 30.36 | 14.27 | 93.06 |
| PeMSD4 | 3 | 18.86 | 30.29 | 13.21 | 104.17 |
| PeMSD4 | 4 | 20.42 | 32.49 | 14.79 | 107.97 |
| PeMSD4 | 5 | 19.60 | 31.11 | 13.63 | 116.93 |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Liao, L.; Hu, Z.; Hsu, C.-Y.; Su, J. Fourier Graph Convolution Network for Time Series Prediction. Mathematics 2023, 11, 1649. https://doi.org/10.3390/math11071649