1. Introduction

Neurodevelopmental disorders (NDs) are complex conditions affecting brain functions, altering neurological development, and causing difficulties in social, cognitive, learning, communication, behavioral, and emotional functioning [1,2,3]. DSM-5 provides a framework for diagnosis and describes that NDs, among others, mainly include [1,2,3,4]:

Autism Spectrum Disorders (ASD): are characterized by deficits in (i) social communication and social interaction and (ii) restricted repetitive patterns of behavior, interests, and activities.

Attention Deficit Hyperactivity Disorder (ADHD): is characterized by inattention, impulsiveness, and hyperactivity, interfering with daily activities and functioning.

Intellectual Disability (ID): comprises impairments of general mental abilities that impact adaptive functioning (which determines how well an individual copes with everyday tasks) in the conceptual, social, and practical domains [4].

Specific Learning Disorder (SLD): is characterized by difficulties in learning and processing specific academic skills, such as reading, writing, or mathematics, despite normal intelligence and adequate educational opportunities. These symptoms can affect academic and daily functioning.

Communication Disorders (CD): involve language disorder, speech sound disorder, childhood-onset fluency disorder, and social (pragmatic) communication disorder (difficulties in the social uses of verbal and nonverbal communication).

NDs commonly have their onset during the developmental stages from early infancy to adolescence and persist into adulthood, or they may go undiagnosed until adulthood [1]. The severity of the deficits in NDs varies, and NDs may co-occur with other disorders. These deficits can affect the quality of life of individuals and their families, creating significant care needs and requiring extensive community assets [5,6].

Speech and language deficiencies can be early indicators of many neurodevelopmental disorders. In addition, effective communication is critical to human development and social interaction, suggesting developmental continuity from early years to later life [1,7]. To screen and diagnose the various features of NDs, clinicians commonly rely on diagnostic instruments, child observations, perceived behaviors, parent interviews, and testing, which occasionally results in subjective evaluation [5]. Moreover, since clinical evaluations involve complex, challenging, non-standardized, multiparametric procedures and uncertainties in the diagnostic fit, they require high-level clinical expertise and objective measurements [8]. Early identification and treatment of speech and language deficiencies can also help diminish the impact of NDs on an individual’s overall development and functioning [9]. Thus, there is a pressing need for additional support to reduce the over- and under-diagnosis of children [10].

Recent advancements and innovations in artificial intelligence (AI) have sparked great interest in their potential benefits in speech and language pathology and special education for individuals with developmental disabilities, learning disabilities, articulation disorders, voice disorders, and more [9,11,12,13,14,15,16]. Computer science, mathematical algorithms, AI, and other emerging technologies introduce new prospects to support clinical decision-making [10], primarily for accurate diagnosis, even in rare medical conditions [17,18,19]. The current literature documents growing attention to AI algorithms and automated measurement tools for decision-making, classification, and clinical assessment of communication deficiencies and NDs [8,9,10,20]. A pilot study of an integrated technology solution, comprising a serious game that uses machine learning (ML) models and a mobile app for monitoring ADHD behaviors, indicates the potential of ML for ADHD prediction based on gameplay data [8]. A study on the applicability of eye-tracking data to the early screening of autism in children strongly suggests that, when analyzed with ML methods, such data can help clinicians perform quick and reliable autism screening [10]. In addition, the use of AI, serious games, and fine motor movements captured from touching a mobile display has been suggested for the classification of developmental delay [9]. Moreover, a study combining online gamified testing with a predictive ML model correctly detected over 80% of the participants with dyslexia, showing the potential of an ML approach for dyslexia screening [20].

Hence, this study aims to assist clinicians’ decision-making and support evaluation procedures. To screen typically and non-typically developed children for speech and language communication deficiencies, various neural networks adopting different optimizers have been implemented and tested in a new biometric dataset to automatically classify the individuals.

This study is organized into sections as follows:

Section 1 explains the significance of clinical evaluation procedures for NDs and speech and language deficiencies in children, the importance of early and objective evaluation procedures, and includes a short description of the research’s motivation;

Section 2 summarizes the required background knowledge on neural networks and the implemented optimizers;

Section 3 presents the methods used in this paper, including the dataset and how the implemented neural networks have been formulated in this research work;

Section 4 presents and discusses the experimental results;

Section 5 concludes the paper, presenting the conclusions, limitations, and suggestions for future research.

2. Background Information

This section briefly provides the background information required for this study and the corresponding algorithms. Specifically, it covers Artificial Neural Networks (ANNs), K-Nearest Neighbor (KNN), Support Vector Machines (SVM), and the optimizers used in this work, namely the Adam optimizer, the Broyden–Fletcher–Goldfarb–Shanno (BFGS) method, Genetic Algorithms (GAs), and the Particle Swarm Optimization (PSO) algorithm.

ANNs are parametric machine learning tools [21,22] that utilize a series of parameters commonly called weights or processing units. These tools have found application in a variety of scientific areas, such as physics [23,24,25], the solution of differential equations [26,27], agriculture [28,29], chemistry [30,31,32], economics [33,34,35], and health [36,37]. In addition, neural networks have recently been used in solar radiation prediction [38], 3D printing [39], and lung cancer research [40].

A neural network typically uses a special function, called the activation function, that decides whether a neuron should be activated or not. A commonly used activation function is the sigmoid function, defined as [21,22]:

$$\sigma(x) = \frac{1}{1 + \exp(-x)} \tag{1}$$

The neural network has hidden nodes, each of which is expressed as

$$o_i(x) = \sigma\left(w_i^{T} x + \theta_i\right) \tag{2}$$

where $w_i$ is the weight vector and $\theta_i$ is the bias of the $i$th node. A neural network can be defined as in the following equation:

$$N(x) = \sum_{i=1}^{H} v_i \, o_i(x) \tag{3}$$

where $H$ is the total number of processing units and $v_i$ stands for the output weight of the $i$th node.
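The network definition above can be illustrated with a minimal Python sketch. It assumes the usual one-hidden-layer form in which each hidden node applies the sigmoid to the weighted input plus its bias and the outputs are combined linearly; the function names and the numeric values are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid, written in a numerically stable form."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def network_output(x, weights, biases, out_weights):
    """Sum over hidden nodes of v_i * sigmoid(w_i . x + theta_i)."""
    total = 0.0
    for w_i, theta_i, v_i in zip(weights, biases, out_weights):
        pre_activation = sum(w * xj for w, xj in zip(w_i, x)) + theta_i
        total += v_i * sigmoid(pre_activation)
    return total

# Hypothetical network with H = 2 hidden nodes and a 2-dimensional input.
x = [0.5, -1.0]
weights = [[1.0, 2.0], [0.0, 0.0]]   # w_1, w_2
biases = [1.5, 0.0]                  # theta_1, theta_2
out_weights = [2.0, 4.0]             # v_1, v_2
y = network_output(x, weights, biases, out_weights)
```

In this toy case both hidden nodes receive a pre-activation of zero, so each sigmoid returns 0.5 and the output is simply half the sum of the output weights.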

The training error of the neural network is defined as:

$$E\left(N\right) = \sum_{j=1}^{M} \left(N(x_j) - y_j\right)^2 \tag{4}$$

where the set $T = \{(x_j, y_j),\ j = 1, \ldots, M\}$ is the training dataset for the neural network, $x_j$ stands for the input vector, and $y_j$ stands for the assigned class. Essentially, the training of the artificial neural network consists of determining the optimal vector of parameters $(w_i, \theta_i, v_i)$ through the minimization of Equation (4). In recent years, a variety of optimization methods have been proposed to minimize this equation, such as the Back Propagation method [41,42], the RPROP method [43,44,45], Quasi-Newton methods [46,47], Simulated Annealing [48,49], GAs [50,51], and PSO [52,53].
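As a small illustration of the quantity being minimized, the sketch below computes a training error in Python. It assumes the error is the sum of squared differences between the network output and the assigned class, which is a common choice but is an assumption here; the toy model and dataset are hypothetical.

```python
def training_error(model, dataset):
    """Sum of squared differences between model outputs and target classes."""
    return sum((model(x) - y) ** 2 for x, y in dataset)

# Hypothetical stand-in for a trained network: a simple linear map.
def toy_model(x):
    return 0.5 * x[0] + 0.25

# Hypothetical training set of (input vector, assigned class) pairs.
train = [([1.0], 1.0), ([0.0], 0.0), ([-0.5], 0.0)]
error = training_error(toy_model, train)
```

Any optimizer discussed in this section can then be viewed as a procedure that searches the parameter space for the values that make this number as small as possible.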

BFGS is an iterative optimization method widely used in various fields [54], including machine learning [55]. Specifically, it approximates the inverse of the Hessian matrix (the matrix of second-order partial derivatives) to determine the search direction along which the objective function is minimized, and it updates this approximation in each iteration based on gradient information. BFGS has good convergence properties, is well-suited for problems with high dimensionality, and is often used in machine learning to optimize the weights of neural networks. Despite its popularity, BFGS can be sensitive to the choice of the initial guess and may converge to a suboptimal solution when the objective function is non-convex. For convex optimization problems, however, it produces fast and reliable results.
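For reference, the standard textbook form of the BFGS update of the inverse-Hessian approximation is sketched below. The symbols $x_k$ (current iterate), $H_k$ (current inverse-Hessian approximation), $s_k$, $y_k$, and $\rho_k$ are notation introduced here for illustration and are not taken from the original text; $f$ denotes the objective function.

```latex
\begin{aligned}
p_k &= -H_k \nabla f(x_k)
  && \text{(search direction)} \\
s_k &= x_{k+1} - x_k, \qquad
y_k = \nabla f(x_{k+1}) - \nabla f(x_k), \qquad
\rho_k = \frac{1}{y_k^{T} s_k} \\
H_{k+1} &= \left(I - \rho_k s_k y_k^{T}\right) H_k
           \left(I - \rho_k y_k s_k^{T}\right) + \rho_k s_k s_k^{T}
  && \text{(inverse-Hessian update)}
\end{aligned}
```

The update uses only gradient differences, which is why no second derivatives need to be computed explicitly.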

GAs are a class of heuristic search algorithms inspired by the mechanics of natural selection and genetics [56,57,58]. Specifically, GAs initialize a population of candidate solutions by encoding the parameters of the problem as chromosomes. The population then evolves through the application of genetic operators, such as selection, crossover, and mutation: in each generation, a fitness function evaluates every chromosome, and the best-performing individuals are selected to create the population of the next generation. This process is repeated until a satisfactory solution is found or a termination criterion is met. GAs have been applied to a wide range of optimization problems, including scheduling, resource allocation, and neural network training, and have been shown to be effective and efficient in many cases.
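The evolutionary loop described above can be sketched in a few lines of Python. This is a generic illustration, not the implementation used in this study: the tournament selection, uniform crossover, Gaussian mutation, elitism, the toy `sphere` objective, and all parameter values are assumptions made for the example.

```python
import random

def sphere(x):
    """Toy objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)

def genetic_minimize(fitness, dim, pop_size=40, generations=60,
                     mutation_rate=0.1, bounds=(-5.0, 5.0), seed=1):
    rng = random.Random(seed)
    lo, hi = bounds
    # Each chromosome is a list of real-valued genes (the problem parameters).
    population = [[rng.uniform(lo, hi) for _ in range(dim)]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness)            # lower fitness is better
        history.append(fitness(population[0]))
        # Elitism: the two best chromosomes survive unchanged.
        next_population = [population[0][:], population[1][:]]
        while len(next_population) < pop_size:
            # Selection: tournament among three random chromosomes.
            parent_a = min(rng.sample(population, 3), key=fitness)
            parent_b = min(rng.sample(population, 3), key=fitness)
            # Crossover: each gene is taken from one of the two parents.
            child = [a if rng.random() < 0.5 else b
                     for a, b in zip(parent_a, parent_b)]
            # Mutation: occasionally perturb a gene, keeping it in bounds.
            child = [min(hi, max(lo, g + rng.gauss(0.0, 0.3)))
                     if rng.random() < mutation_rate else g
                     for g in child]
            next_population.append(child)
        population = next_population
    best = min(population, key=fitness)
    return best, history

best, history = genetic_minimize(sphere, dim=3)
```

Because the best chromosomes are copied unchanged into each new generation, the best fitness recorded in `history` never gets worse from one generation to the next.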

PSO is a computational optimization method introduced by Eberhart and Kennedy in 1995 [59], inspired mainly by the social behavior of birds in a flock. PSO initializes a population of particles, each representing a candidate solution, to probe the search space. The particles’ positions are adjusted based on each particle’s own best solution and the best solution found by the whole swarm or by a predefined neighborhood. The algorithm iterates until a termination criterion is met, and the best solution found is reported. PSO is governed by parameters such as the population size, the inertia weight, the cognitive and social acceleration coefficients, and the maximum velocity. The inertia weight determines how much the particles are influenced by their previous velocity; decreasing it linearly over time, or adapting it during the run, allows the swarm to transition from exploring the solution space to exploiting the best-known solution, guiding the search toward the global optimum [60,61,62].
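A minimal Python sketch of a global-best PSO with a linearly decreasing inertia weight is given below. It is an illustration under stated assumptions rather than the variant used in this study: the coefficient values, the velocity clamp, the bounds, and the toy `sphere` objective are all hypothetical choices.

```python
import random

def sphere(x):
    """Toy objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)

def pso_minimize(objective, dim, n_particles=30, iterations=100,
                 w_start=0.9, w_end=0.4, c1=2.0, c2=2.0,
                 bounds=(-5.0, 5.0), v_max=1.0, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]                 # each particle's own best
    best_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=best_val.__getitem__)
    g_pos, g_val = best_pos[g][:], best_val[g]     # swarm-wide best
    history = [g_val]
    for t in range(iterations):
        # Inertia weight decreases linearly from w_start to w_end.
        w = w_start - (w_start - w_end) * t / max(1, iterations - 1)
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v = (w * vel[i][d]
                     + c1 * r1 * (best_pos[i][d] - pos[i][d])   # cognitive term
                     + c2 * r2 * (g_pos[d] - pos[i][d]))        # social term
                vel[i][d] = max(-v_max, min(v_max, v))          # clamp velocity
                pos[i][d] = max(lo, min(hi, pos[i][d] + vel[i][d]))
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < g_val:
                    g_val, g_pos = val, pos[i][:]
        history.append(g_val)
    return g_pos, g_val, history

best_position, best_value, history = pso_minimize(sphere, dim=3)
```

A large inertia weight early on keeps particles moving across the search space, while the smaller weight in later iterations lets them settle around the best position found so far.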

The INN is an advanced method for training artificial neural networks that identifies the optimal interval for initializing and training the network [63]. The optimal interval is located using rules evolved by a genetic algorithm. The method has two phases: (i) the optimal interval is located, and (ii) the artificial neural network is initialized and trained within this interval using a global optimization method, such as a genetic algorithm. The method has been tested on various classification and function learning datasets, and the experimental results were very encouraging [63].

The Adam optimizer is an adaptive gradient-based optimization technique frequently used in machine learning [64]. The technique maintains a separate learning rate for each neural network weight and adapts these learning rates throughout training. Adam is a standard optimization method, well known for its efficiency and its ability to handle sparse gradients.
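The per-parameter adaptation can be made concrete with a short Python sketch of the standard Adam update rule. The default hyperparameters follow the commonly cited values; the learning rate, step count, and the quadratic test function are hypothetical and chosen only to keep the example small.

```python
import math

def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=500):
    """Minimize a function given its gradient, using the Adam update rule."""
    x = list(x0)
    m = [0.0] * len(x)   # running mean of gradients (first moment)
    v = [0.0] * len(x)   # running mean of squared gradients (second moment)
    for t in range(1, steps + 1):
        g = grad(x)
        for i in range(len(x)):
            m[i] = beta1 * m[i] + (1.0 - beta1) * g[i]
            v[i] = beta2 * v[i] + (1.0 - beta2) * g[i] ** 2
            m_hat = m[i] / (1.0 - beta1 ** t)    # bias correction
            v_hat = v[i] / (1.0 - beta2 ** t)
            # Each parameter gets its own effective step size.
            x[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x

# Hypothetical objective f(x) = (x0 - 3)^2 + (x1 + 1)^2 with its gradient.
def grad(x):
    return [2.0 * (x[0] - 3.0), 2.0 * (x[1] + 1.0)]

solution = adam_minimize(grad, [0.0, 0.0])
```

Dividing by the square root of the second-moment estimate is what gives each weight its own effective learning rate.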

The K-Nearest Neighbor (KNN) algorithm is a straightforward but effective classification algorithm [65,66]. It differs from the methods above in that it does not build an explicit model from the training dataset. Instead, it locates the k training samples closest to the new data point in the feature space and assigns the point to the majority class (or, for regression, the average value) of these k neighbors. KNN’s simplicity makes it a popular choice, but its performance is sensitive to the number of nearest neighbors and the distance metric used.
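The majority-vote rule can be sketched as follows. This is a minimal illustration that assumes Euclidean distance; the toy points and labels are hypothetical and unrelated to the study's data.

```python
import math
from collections import Counter

def knn_predict(train_x, train_y, query, k=5):
    """Classify `query` by majority vote among its k nearest training points."""
    # Euclidean distance from the query to every training sample.
    distances = sorted((math.dist(x, query), y)
                       for x, y in zip(train_x, train_y))
    votes = Counter(label for _, label in distances[:k])
    return votes.most_common(1)[0][0]

# Hypothetical two-cluster toy data: class 0 near the origin, class 1 near (5.5, 5.5).
train_x = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 5), (5, 6), (6, 5), (6, 6)]
train_y = [0, 0, 0, 0, 1, 1, 1, 1]

label_far = knn_predict(train_x, train_y, (5.5, 5.5), k=5)
label_near = knn_predict(train_x, train_y, (0.2, 0.3), k=5)
```

Note that all the work happens at prediction time: there is no training step beyond storing the samples, which is the sense in which KNN builds no explicit model.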

Support Vector Machines (SVM) [67,68] are another popular and effective family of supervised classification algorithms. The method finds the decision boundary that best separates the classes by maximizing the margin, i.e., the distance between the decision boundary and the closest data points from each class. SVMs are known to be effective in handling complex data distributions, and they are less prone to overfitting than many other machine learning algorithms. However, SVMs can be computationally expensive and require careful selection of the kernel function and hyperparameters to achieve optimal performance.

3. Materials and Methods

We designed a serious game to collect and process players’ responses. This serious game contains numerous activities based on screening/assessment procedures for NDs [69]. The game data are processed by a dedicated server back-end service to examine early clinical screening/diagnostic patterns in specified domains or skills, with the aim of providing automated indications.

This study is part of the “Smart Computing Models, Sensors, and Early diagnostic speech and language deficiencies indicators in Child Communication” research project with the acronym “SmartSpeech”. SmartSpeech is an ongoing research project funded by the Region of Epirus in Greece and the European Regional Development Fund (ERDF).

3.1. Data Description

The sample in our analysis consisted of 435 children with an average age of 9 years, of whom 224 were males and 211 were females. Of these, 339 participants had typical development (TD), i.e., no NDs, whereas 96 had NDs, which we categorized according to DSM-5. More specifically, 17 had ASD, 18 had ADHD, 8 had ID, 19 had SLD, and 42 had CD; some of the participants exhibited more than one disorder. Participants were recruited through calls issued via health and educational services supporting TD and non-TD children. Parents were informed of the nature and scope of the project, the procedures, and the project approval by the Research Ethics Committee of the University of Ioannina, Greece (Reg. Num.: 18435/15.5.2020), which complies with the General Data Protection Regulation (GDPR). They then signed the parental consent form.

The participation process included registration in the database and the completion of questionnaires about the child’s developmental profile. Then, guided by the clinician, the child played the interactive game explicitly designed for this purpose. Overall, at the end of the process, the variables we used in the analysis came from the game’s scores and the bio-signal measurements, i.e., heart rate and eye-tracking measurements.

The SmartSpeech game is designed in the Unity environment [70] and generates several variables regarding scores on the game’s activity performances and biometric data. The developed game activities represent the overall performance according to the known developmental skills that children typically acquire. Several activities correspond to these specific speech and language skills [69]. In addition to the scores based on the direct responses of the child/player via the touchscreen with clicks and hand movements, other biometric data were also measured, namely voice, heart rate (HR), and gaze.

Voice was recorded in mp3 files whenever the child needed to answer a posed question verbally. For this purpose, a speech-to-text program was used [71], for which a Greek model was trained [72]. The child was required to give about 40 verbal replies, including but not restricted to naming objects, fruits, vegetables, and characters. Using this speech-to-text program, the SmartSpeech game transcribes the audio files into text and then matches the child’s response against the correct answer, yielding a true/false outcome.

During the gameplay, the child wore a smartwatch running dedicated software, which continuously captured the heart rate in beats per minute (bpm). For every game activity, we took the signal for the corresponding period and calculated three metrics: the mean, the standard deviation, and the range of HR. Ideally, we would have used heart rate variability (HRV), but this was not possible due to hardware limitations. Hence, alongside the mean as a baseline, we used the dispersion statistics above as an alternative.
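The three per-activity heart rate metrics can be computed as in the following Python sketch. The function name and the sample values are hypothetical, and the use of the sample (rather than population) standard deviation is an assumption, since the text does not specify which was used.

```python
import statistics

def hr_features(bpm_samples):
    """Mean, standard deviation, and range of heart rate for one game activity."""
    return {
        "mean": statistics.fmean(bpm_samples),
        # Sample standard deviation (n - 1 denominator); needs >= 2 samples.
        "sd": statistics.stdev(bpm_samples),
        "range": max(bpm_samples) - min(bpm_samples),
    }

# Hypothetical heart rate readings (bpm) captured during a single activity.
activity_signal = [80, 84, 88, 92, 96]
features = hr_features(activity_signal)
```

Applying such a function to each activity's segment of the signal yields three variables per activity.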

Furthermore, the game presented the child with several visual stimuli to detect the areas on the screen that attracted the player’s focus. This procedure was carried out with eye-tracking software [73] that ran during the game and captured the child’s gaze via the tablet’s camera. When the viewer focused on a specific area, the software registered an event called a fixation. From these fixations, we computed three standard eye-tracking variables [74]. These were:

The number of fixations (fixation count—FC);

The time that passed until the first fixation (time to first fixation—TTFF);

The total duration of fixations (time spent—TS).
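The three variables listed above can be derived from a list of fixations as in the sketch below. The `Fixation` record, its field names, and the millisecond units are assumptions made for illustration; the output format of the actual eye-tracking software is not described in the text.

```python
from typing import NamedTuple, Optional, Sequence, Tuple

class Fixation(NamedTuple):
    onset_ms: float      # time from stimulus onset to the start of the fixation
    duration_ms: float   # how long the fixation lasted

def fixation_metrics(
    fixations: Sequence[Fixation],
) -> Tuple[int, Optional[float], float]:
    """Return (FC, TTFF, TS) for the fixations on one area of interest."""
    fc = len(fixations)                                    # fixation count
    # TTFF is undefined (None) when the area was never fixated.
    ttff = min(f.onset_ms for f in fixations) if fixations else None
    ts = sum(f.duration_ms for f in fixations)             # time spent
    return fc, ttff, ts

# Hypothetical fixations recorded on a single area of interest.
recorded = [Fixation(350, 200), Fixation(700, 150), Fixation(1200, 400)]
fc, ttff, ts = fixation_metrics(recorded)
```

When an area receives no fixation at all, the count and the time spent are still well defined (both zero), whereas the time to first fixation has no value.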

3.2. Data Formulation & Methods Description


The dataset is divided into three subsets that correspond to the categories of (i) game scores, (ii) heart rate statistics, and (iii) eye-tracker metrics. Each of these subsets constitutes the set of input variables to the classification process. Records with missing or invalid data were filtered out, which reduced the number of cases relative to the initial dataset and, ultimately, the number of instances from the pathological population. The following tables summarize the input variables.

Table 1 shows the variables of the game scores; in total, 30 variables corresponded to the types described there. Table 2 and Table 3 summarize the variables from the heart rate and eye tracking, respectively. A total of 15 HR variables were used, covering the means, standard deviations, and ranges. As for the eye-tracking variables, the filtering process left only the fixation count and the time spent on areas of interest, 16 variables in total. The time to first fixation had many missing values and was removed from the dataset.

Table 4 shows the target variables defining the classes that were used. These variables are binary, meaning either they had the condition or not. The Disorder variable denotes TD and non-TD children. ASD, ADHD, ID, SLD, and CD variables suggest more specifically the disorder as described above, according to DSM-5.

Descriptive statistics for the variables (means and standard deviations) are summarized in Appendix A.

4. Application Details and Experimental Results

In this section, the application details of the applied classifiers and their corresponding parameterization are described in detail, followed by the experimental results.

Seven different classifiers were considered to assess their performance on the provided dataset. Specifically, each neural network employed one hidden layer with ten neurons, and four different optimizers were adopted, namely BFGS [75], the genetic algorithm [58,74,76], PSO [77], and Adam [64]. The same population size was used for PSO (particles) and the genetic algorithm (chromosomes), namely N = 200, while the parameters of the remaining optimizers were kept the same as in the original papers. Furthermore, the INN rule construction method, a KNN method [65] with five neighbors, and an SVM method [68] (using the freely available library libsvm [78]) were also considered for the comparisons. Finally, the maximum number of iterations was set to 200 for fair comparisons.

The datasets were evaluated using 10-fold cross-validation to obtain a reliable estimate of performance: each dataset was split into ten subsets, nine of which were used for training and the remaining one for testing. Thirty independent experiments were conducted for each algorithm on each instance, and the average classification error was calculated. A different seed was used for each experiment, with random numbers generated by the drand48() function of the C programming language. The experiments were performed using the freely available in-house software from https://github.com/itsoulos/IntervalGenetic (accessed on 15 February 2023). The cells in the experimental tables report average results on the corresponding test sets.
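The evaluation protocol described above (10-fold cross-validation repeated over thirty differently seeded runs) can be sketched in Python as follows. This is not the study's software: Python's `random` module stands in for drand48(), a trivial majority-class predictor stands in for the real classifiers, and the toy data and all function names are hypothetical.

```python
import random

def kfold_indices(n_samples, n_folds, rng):
    """Shuffle sample indices and deal them into n_folds disjoint subsets."""
    indices = list(range(n_samples))
    rng.shuffle(indices)
    return [indices[i::n_folds] for i in range(n_folds)]

def cv_error(fit, X, y, n_folds=10, seed=0):
    """Average test error rate (%) over the folds of one seeded experiment."""
    rng = random.Random(seed)
    fold_errors = []
    for test_idx in kfold_indices(len(X), n_folds, rng):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        predict = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        wrong = sum(predict(X[i]) != y[i] for i in test_idx)
        fold_errors.append(100.0 * wrong / len(test_idx))
    return sum(fold_errors) / len(fold_errors)

def fit_majority(train_X, train_y):
    """Placeholder classifier: always predicts the most frequent training class."""
    majority = max(set(train_y), key=train_y.count)
    return lambda x: majority

# Hypothetical toy data: 100 samples, 78 of class 0 and 22 of class 1.
X = [[float(i)] for i in range(100)]
y = [0] * 78 + [1] * 22

# Thirty independent experiments, each with its own seed.
runs = [cv_error(fit_majority, X, y, seed=s) for s in range(30)]
average_error = sum(runs) / len(runs)
```

On imbalanced data such as this toy set, a majority-class predictor already reaches an error rate equal to the minority share, which is a useful baseline to keep in mind when reading error-rate tables.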

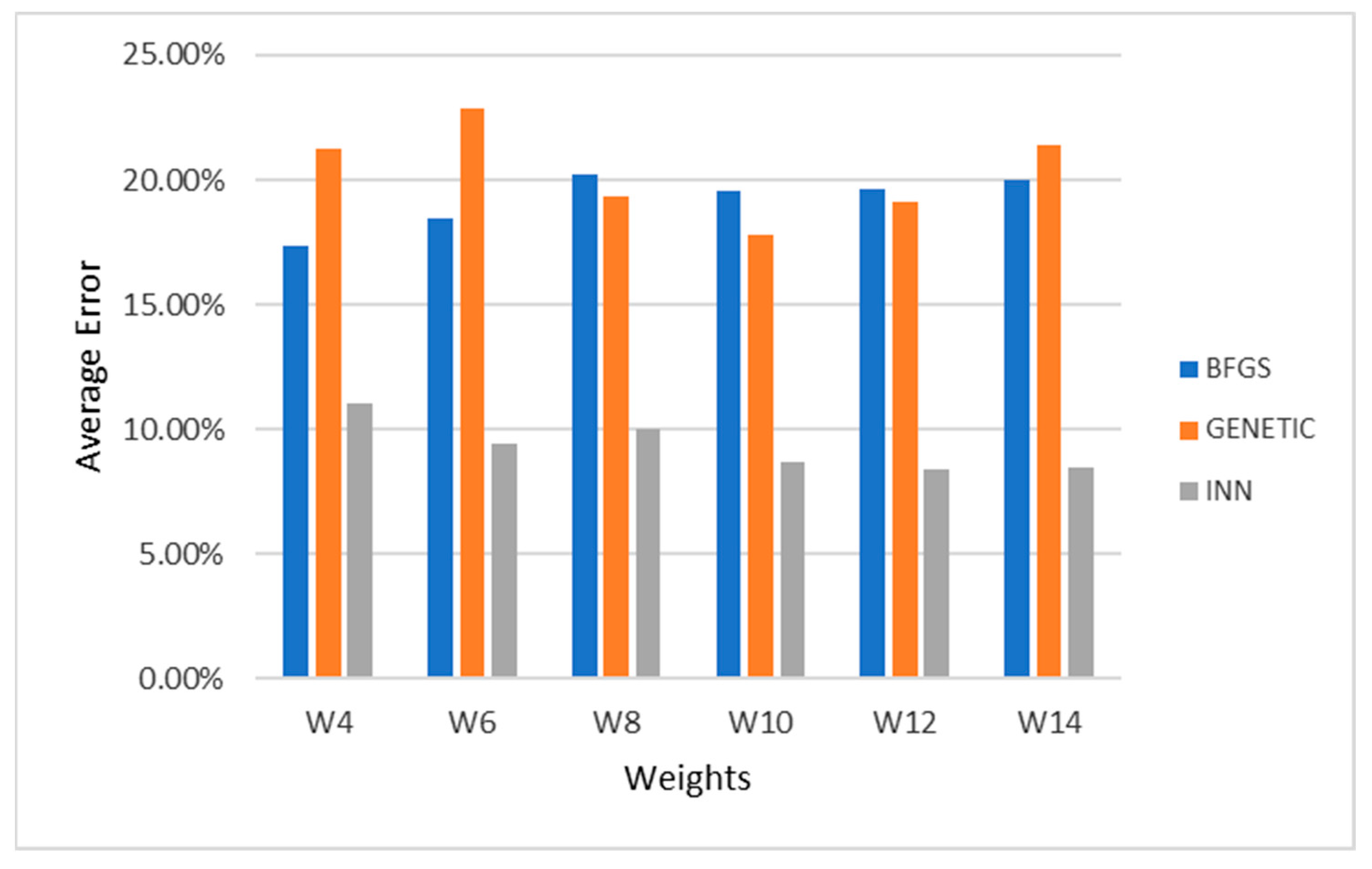

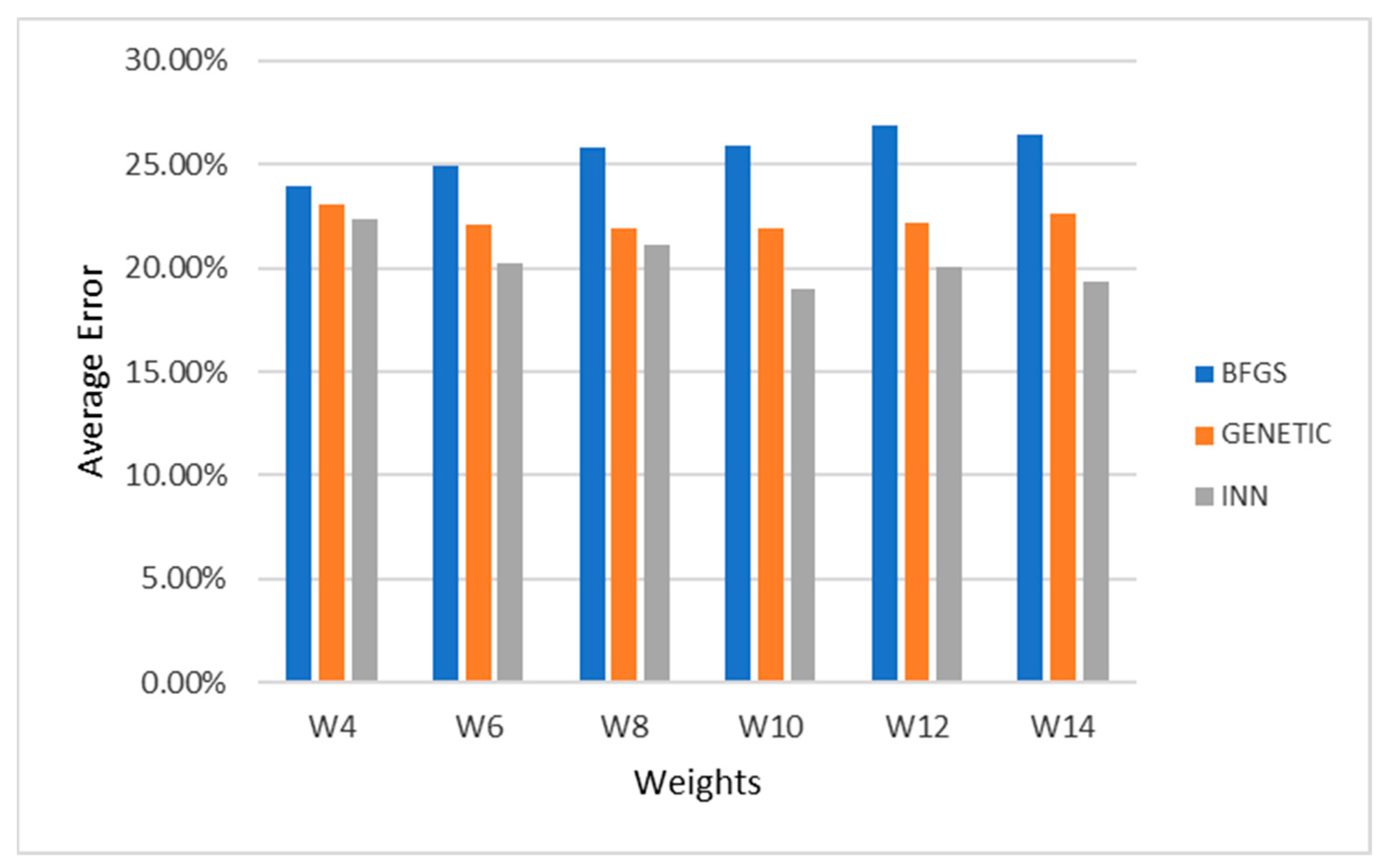

Additionally, the number of neurons in the neural networks was varied from 4 to 14 to examine its effect on performance. This experiment was conducted for the three disorder datasets, and the results are shown graphically in Figure 1, Figure 2 and Figure 3. The graphs show that 8–10 processing nodes usually achieve the lowest values in the control dataset for almost all techniques.

Next, Table 5, Table 6 and Table 7 compare the utilized classification methods using the error rate (%) for the eye-tracking, heart rate, and game responses datasets, respectively.

Table 8 shows the precision and recall metrics indicatively for Genetic and INN when applied to this study’s datasets.
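For readers less familiar with these two metrics, the sketch below shows how precision and recall are computed for a binary target. The labels are hypothetical and do not reproduce any value from the tables; the convention of returning 0.0 when a denominator is zero is also an assumption.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Precision and recall for the class labelled `positive`."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false positives
    fn = sum(t == positive and p != positive for t, p in pairs)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical labels: 1 = condition present, 0 = condition absent.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
precision, recall = precision_recall(y_true, y_pred)
```

Precision reflects how many flagged children actually have the condition, while recall reflects how many children with the condition were flagged, which makes the pair more informative than the error rate alone when classes are imbalanced.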

As can be observed from the tables presenting the experimental results, BFGS achieved the weakest results for all instances and all datasets, which is consistent with it being a local optimization algorithm. Specifically, BFGS achieved an average error rate of 28.85% for the eye-tracking dataset, while the genetic algorithm and PSO obtained marginally better results. INN achieved the best average error rate, namely 20.02%, indicating that it can locate suitable intervals within which the weights can range. INN is conducted in two phases. During the first phase, a branch and bound algorithm locates the most promising intervals for the neural network parameters. In the second phase, a genetic algorithm optimizes the neural network inside the interval located in the first phase. Additionally, the experimental results indicated that Adam slightly outperforms SVM and KNN in most cases.

Moreover, the average error rate for the Disorder target, i.e., whether an individual has any disorder, is lower, since the corresponding data are more extensive than those of other instances, such as ID and ADHD. Furthermore, as mentioned in Section 3.2, gathering data on child populations is challenging, with missing data reported. For instance, regarding eye-tracking activities, there is consistent evidence of difficulty in obtaining continuous and valid measurements due to the child’s spontaneous movements [79]. The same patterns were also observed in the heart rate and the game score datasets. INN proved better than the rest, achieving a classification error of around 20%, in line with the precision and recall results. The classification of “Disorder” on the eye-tracking dataset, i.e., screening between TD and non-TD children, reports the best results for all the optimizers, with the highest performance achieved by the INN optimizer (8.67% error rate).

In the same vein as this study, others have looked into the potential of drag-and-drop data as a digital biomarker and proposed a classification model to categorize children with developmental disorders [9]. They created a deep-learning convolutional neural network model, with promising findings for suggesting diagnoses of developmental disorders. A different study explored the potential for the early detection of developmental impairments in children using diagnostic information from the International Classification of Diseases (ICD) and supplementary information, including prescription history, treatment duration, and frequency records [80]. By combining four algorithms, namely k-nearest neighbor, random forest, logistic regression, and gradient boosting, the authors created their best model for the early diagnosis of impairments. Their classification model for detecting disorders yielded high accuracy, as in our study, and was also reported to deliver diagnoses around a year earlier than the usual diagnostic age.

5. Conclusions

Screening and evaluating speech and language deficiencies and NDs is a challenging, rigorous, and complex procedure that may occasionally produce misleading outcomes due to uncertainties in the diagnostic fit, subjective evaluation, and varying clinical expertise. Delayed or inaccurate evaluation eliminates chances for early identification and treatment, whereas timely detection can help diminish the impact of NDs on an individual’s overall development and functioning. This highlights the significance of this study, which uses artificial intelligence for automatic classification.

For this reason, in this study, a first attempt to enhance the clinician’s decision-making assessment was conducted using machine learning methodologies. Specifically, the collected data provided by a novel, recently developed serious game were used as a test bed to estimate the classification performance of the proposed neural network algorithms. The provided dataset includes a variety of variables stemming from the game, along with biometrical data from a total of 435 participants. The experiments were conducted in a series of different neural networks adopting a variety of optimizers, and the average classification error was collected.

The results were promising, opening new inquiries for future research. INN proved to be the most competitive algorithm, achieving an average classification error of about 20%. This performance may be further improved by using different optimization and machine learning methodologies, and/or by increasing the number of participants, which we will thoroughly examine in future work. The results of this study are expected to contribute towards developing an innovative digital approach to support health care. They may be valuable tools for the early identification of NDs, delivering objective metrics complementary to the clinician’s diagnosis, reducing screening and diagnostic costs, and improving clinician efficiency.