Pattern-Multiplicative Average of Nonnegative Matrices Revisited: Eigenvalue Approximation Is the Best of Versatile Optimization Tools

Abstract

1. Introduction

2. Materials and Methods

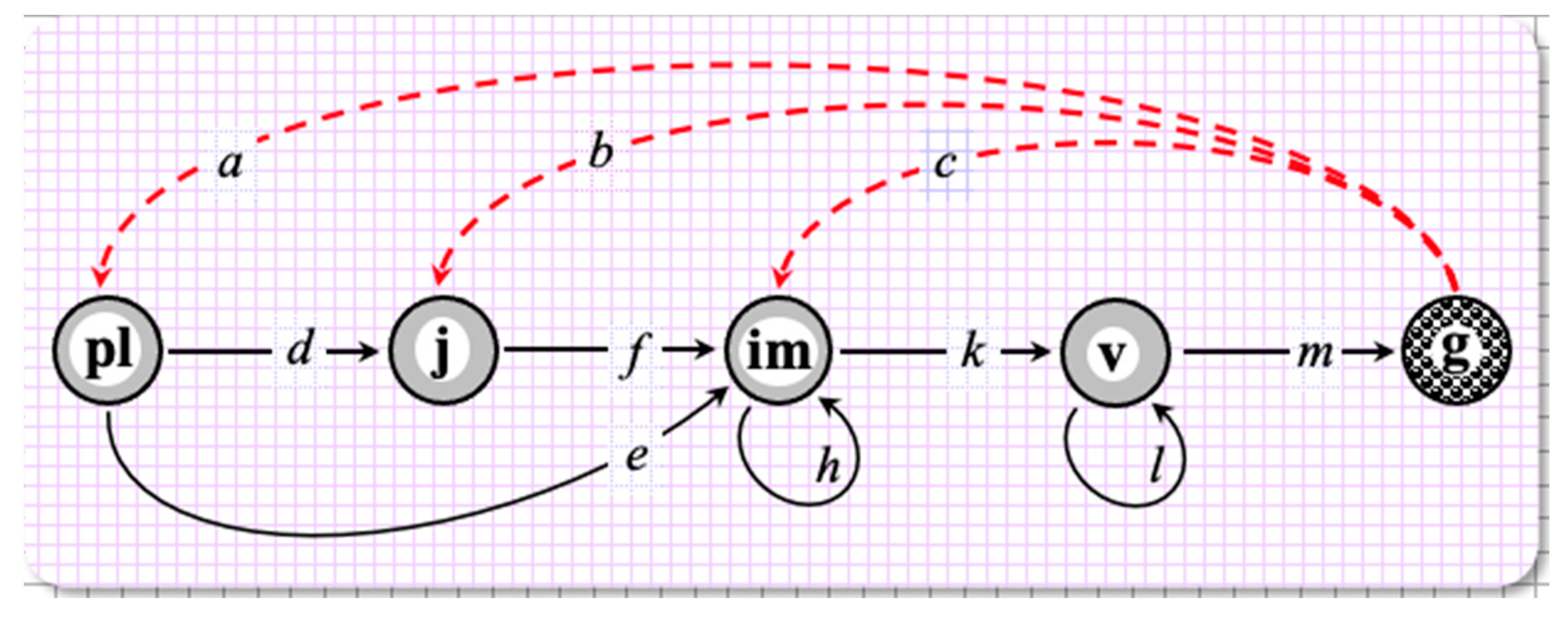

2.1. Case Study

2.2. Pattern-Multiplicative Average of Annual PPMs

2.3. Approximate PMA as a Nonlinear Constrained Minimization Problem

| Minimal | Vital Rate | Maximal |
|---|---|---|
| 4/3 | a | 49 |
| 3 | b | 85 |
| 0 | c | 25 |
| 0 | d | 7/15 |
| 0 | e | 1 |
| 1/49 | f | 7/9 |
| 0 | h | 2/3 |
| 6/95 | k | 5/6 |
| 8/25 | l | 22/23 |
| 1/35 | m | 5/15 |
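The bounds above turn the approximate PMA into a minimization over a ten-dimensional box of vital rates. The snippet below is a minimal sketch of that setting using SciPy's `basinhopping` with a bounded L-BFGS-B local search. It assumes the loss is the Frobenius norm ‖G¹³ − Prod‖, with Prod the 13-year product matrix tabulated in the Results. The placement of the ten rates in G is read off the eigenvector equations and the matrices reported in the Results tables. The loss, the clipped step rule `take_step`, the starting point and the iteration counts are illustrative assumptions, not the settings behind the reported solutions.

```python
import numpy as np
from scipy.optimize import basinhopping

# 13-year product matrix Prod, copied from the "Matrix Prod" table in the Results.
PROD = np.array([
    [0.021185585295608, 0.039538528446472, 0.086369212318887, 0.321576284325812, 0.312397941844407],
    [0.032875920640909, 0.061354070510449, 0.134019914355007, 0.498983075380778, 0.484789540051083],
    [0.003661007803335, 0.006824023057585, 0.014887199373520, 0.055368303504470, 0.054013601100565],
    [0.013845526439184, 0.025919668563603, 0.056669501627721, 0.210813605518226, 0.203676548113200],
    [0.000729124010650, 0.001363464687049, 0.002980796589266, 0.011096313418442, 0.010736266465211],
])

# Vital rates a, b, c, d, e, f, h, k, l, m: (row, col) in G (0-based) and box bounds.
PLACES = [(0, 4), (1, 4), (2, 4), (1, 0), (2, 0), (2, 1), (2, 2), (3, 2), (3, 3), (4, 3)]
LO = np.array([4/3, 3, 0, 0, 0, 1/49, 0, 6/95, 8/25, 1/35])
HI = np.array([49, 85, 25, 7/15, 1, 7/9, 2/3, 5/6, 22/23, 5/15])


def build_g(x):
    """Place the ten vital rates into the 5 x 5 pattern of G."""
    g = np.zeros((5, 5))
    for (i, j), value in zip(PLACES, x):
        g[i, j] = value
    return g


def loss(x):
    """Assumed loss: Frobenius norm of G^13 - Prod (illustrative choice)."""
    return np.linalg.norm(np.linalg.matrix_power(build_g(x), 13) - PROD)


rng = np.random.default_rng(0)


def take_step(x):
    """Random step scaled to each bound interval, clipped back into the box."""
    step = rng.uniform(-0.1, 0.1, x.size) * (HI - LO)
    return np.clip(x + step, LO, HI)


x0 = (LO + HI) / 2  # start at the centre of the box (assumption)
result = basinhopping(
    loss,
    x0,
    niter=10,
    take_step=take_step,
    minimizer_kwargs={"method": "L-BFGS-B", "bounds": list(zip(LO, HI)),
                      "options": {"maxiter": 500}},
)
G = build_g(result.x)
print("loss:", result.fun, "lambda1(G):", max(abs(np.linalg.eigvals(G))))
```

Clipping inside `take_step` keeps every starting point of the local search inside the box, so the bounded L-BFGS-B never has to repair an infeasible start.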

2.4. Approximate PMA as an Eigenvalue-Constrained Optimization Problem

$$
G^{3}v = \lambda_1(G)^{3}\,v, \quad \ldots, \quad G^{13}v = \lambda_1(G)^{13}\,v.
$$

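$$
\begin{aligned}
a v_5 - \lambda v_1 &= 0,\\
b v_5 + d v_1 - \lambda v_2 &= 0,\\
c v_5 + e v_1 + f v_2 + h v_3 - \lambda v_3 &= 0,\\
k v_3 + l v_4 - \lambda v_4 &= 0,\\
m v_4 - \lambda v_5 &= 0,
\end{aligned}
$$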

i.e., a system for the ten unknown entries of G (a, b, c, d, e, f, h, k, l, m) and the 11th formal variable λ.
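With v fixed, every equation above is linear in the ten rates and λ. Assuming v is taken to be the positive eigenvector v* of the product matrix (tabulated in the Results), admissible G can therefore be sought by linear programming. For a nonnegative G, a positive eigenvector forces λ = λ1(G), so pinning λ to ρ0 pins the dominant eigenvalue. The sketch below uses SciPy's `linprog` with the HiGHS solver. Minimizing |λ − ρ0| through an auxiliary variable t is an illustrative objective assumed here, not necessarily the one used in the paper.

```python
import numpy as np
from scipy.optimize import linprog

# Assumed eigenvector v = v* (in %) and target rho0, both from the Results tables.
V = np.array([29.2923, 45.4532, 5.0480, 19.1962, 1.0103])
RHO0 = 0.915847990853247

# Vital rates a, b, c, d, e, f, h, k, l, m: (row, col) in G (0-based) and bounds.
PLACES = [(0, 4), (1, 4), (2, 4), (1, 0), (2, 0), (2, 1), (2, 2), (3, 2), (3, 3), (4, 3)]
BOUNDS = [(4/3, 49), (3, 85), (0, 25), (0, 7/15), (0, 1),
          (1/49, 7/9), (0, 2/3), (6/95, 5/6), (8/25, 22/23), (1/35, 5/15)]

n = len(PLACES)   # decision vector: 10 rates, then lambda, then t
LAM, T = n, n + 1

# Equalities: (G v)_i - lambda * v_i = 0 for each of the five rows of G.
A_eq = np.zeros((5, n + 2))
for col, (i, j) in enumerate(PLACES):
    A_eq[i, col] = V[j]
A_eq[:, LAM] = -V
b_eq = np.zeros(5)

# |lambda - rho0| <= t as two linear inequalities.
A_ub = np.zeros((2, n + 2))
A_ub[0, LAM], A_ub[0, T] = 1.0, -1.0    # lambda - t <= rho0
A_ub[1, LAM], A_ub[1, T] = -1.0, -1.0   # -lambda - t <= -rho0
b_ub = np.array([RHO0, -RHO0])

cost = np.zeros(n + 2)
cost[T] = 1.0     # minimize t, i.e. |lambda - rho0|

res = linprog(cost, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=b_eq,
              bounds=BOUNDS + [(0, None), (0, None)], method="highs")

G = np.zeros((5, 5))
for (i, j), value in zip(PLACES, res.x[:n]):
    G[i, j] = value
lam = res.x[LAM]
print(res.status, lam, max(abs(np.linalg.eigvals(G))))
```

With five equations for eleven unknowns the LP usually has many optimal vertices. The solver returns one of them, so the G it prints need not coincide with any matrix reported in the Results.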

3. Results

3.1. Case Study Outcome

3.2. Pattern-Multiplicative Averaging as an Approximation Problem

3.3. Minimizing the Approximation Error as a Matrix Norm

3.4. Eigenvalue-Constrained Optimization Problem

4. Discussion and Conclusions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

Appendix B

Appendix C

- Similar lines for the places of (2, 1) and (3, 1) return, respectively, 7/13 and 1/13.


| Census Year, t | Matrix L(t): t → t + 1 | λ1(L(t)) | Vector x*, % |
|---|---|---|---|
| 2009 (j = 0) | L0 = | 0.5661 | |
| 2010 (j = 1) | L1 = | 1.2283 | |
| 2011 (j = 2) | L2 = | 1.5779 | |
| 2012 (j = 3) | L3 = | 1.2641 | |
| 2014 (j = 5) | L5 = | 0.3988 | |
| 2015 (j = 6) | L6 = | 1.0679 | |
| 2016 (j = 7) | L7 = | 0.9611 | |
| 2017 (j = 8) | L8 = | 1.1206 | |
| 2018 (j = 9) | L9 = | 0.9617 | |
| 2019 (j = 10) | L10 = | 0.8496 | |
| 2020 (j = 11) | L11 = | 1.3008 | |
| 2021 (j = 12) | L12 = | 1.1143 | |
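Since L(t) maps the population vector from census t to t + 1, the 13 annual transitions compose in reverse chronological order, Prod = L12 L11 ⋯ L0, which is presumably the matrix that G¹³ is meant to reproduce. The annual matrices themselves are not recoverable from the table, so the sketch below uses random 3 × 3 nonnegative stand-ins (hypothetical data) only to check that the reverse-order product equals year-by-year projection. `product_of_annual` is a helper name introduced here for illustration.

```python
import numpy as np
from functools import reduce


def product_of_annual(matrices):
    """Return L[T-1] @ ... @ L[1] @ L[0] for matrices listed in census order."""
    size = matrices[0].shape[0]
    return reduce(lambda acc, L: L @ acc, matrices, np.eye(size))


# Hypothetical stand-ins for L0, ..., L12 (the real matrices are not reproduced here).
rng = np.random.default_rng(1)
annual = [rng.uniform(0.0, 1.0, (3, 3)) for _ in range(13)]
x0 = np.array([10.0, 5.0, 1.0])

# Year-by-year projection: x(t + 1) = L(t) x(t).
x = x0.copy()
for L in annual:
    x = L @ x

prod = product_of_annual(annual)
lambdas = [max(abs(np.linalg.eigvals(L))) for L in annual]   # per-year lambda1(L(t))
rho0 = max(abs(np.linalg.eigvals(prod))) ** (1 / 13)          # average rate over 13 steps
print(np.allclose(prod @ x0, x), rho0, np.prod(lambdas) ** (1 / 13))
```

The last two printed numbers illustrate that the 13th root of λ1(Prod) need not equal the geometric mean of the annual λ1(L(t)) values, because the dominant eigenvalue of a product is not, in general, the product of dominant eigenvalues.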

| Matrix Prod | | | | | λ1(G¹³), ρ0 | Vector v*, % |
|---|---|---|---|---|---|---|
| 0.021185585295608 | 0.039538528446472 | 0.086369212318887 | 0.321576284325812 | 0.312397941844407 | 0.31893645391, 0.91584799085 | 29.2923 |
| 0.032875920640909 | 0.061354070510449 | 0.134019914355007 | 0.498983075380778 | 0.484789540051083 | | 45.4532 |
| 0.003661007803335 | 0.006824023057585 | 0.014887199373520 | 0.055368303504470 | 0.054013601100565 | | 5.0480 |
| 0.013845526439184 | 0.025919668563603 | 0.056669501627721 | 0.210813605518226 | 0.203676548113200 | | 19.1962 |
| 0.000729124010650 | 0.001363464687049 | 0.002980796589266 | 0.011096313418442 | 0.010736266465211 | | 1.0103 |
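The two numbers in the eigenvalue column are consistent with ρ0 = λ1(Prod)^(1/13), since 0.91584799085¹³ ≈ 0.3189, and v* behaves as the dominant eigenvector of Prod normalized to 100 %. This reading is an interpretation of the garbled header rather than something the table states explicitly. The NumPy sketch below recomputes both quantities from the tabulated entries.

```python
import numpy as np

# 13-year product matrix, copied from the table above.
PROD = np.array([
    [0.021185585295608, 0.039538528446472, 0.086369212318887, 0.321576284325812, 0.312397941844407],
    [0.032875920640909, 0.061354070510449, 0.134019914355007, 0.498983075380778, 0.484789540051083],
    [0.003661007803335, 0.006824023057585, 0.014887199373520, 0.055368303504470, 0.054013601100565],
    [0.013845526439184, 0.025919668563603, 0.056669501627721, 0.210813605518226, 0.203676548113200],
    [0.000729124010650, 0.001363464687049, 0.002980796589266, 0.011096313418442, 0.010736266465211],
])

w, vecs = np.linalg.eig(PROD)
i = int(np.argmax(w.real))        # the Perron root is real and has the largest real part
lam1 = w[i].real                  # lambda1(Prod)
rho0 = lam1 ** (1 / 13)           # average annual rate over 13 transitions
v_star = vecs[:, i].real
v_star = 100 * v_star / v_star.sum()   # rescale to percentages (also fixes the sign)
print(lam1, rho0, np.round(v_star, 4))
```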

| Optimization Method, Loss Function | Matrix G | | | | | λ1(G) | Approximation Error |
|---|---|---|---|---|---|---|---|
| Basin hopping, | 0 | 0 | 0 | 0 | 3.3309 | 0.8585 | 0.002374 |
| | 0.4530 | 0 | 0 | 0 | 7.8767 | | |
| | 0.0288 | 0.2936 | 0.1474 | 0 | 0 | | |
| | 0 | 0 | 0.1726 | 0.7589 | 0 | | |
| | 0 | 0 | 0 | 0.1034 | 0 | | |
| Basin hopping, and penalty for constraint violations | 0 | 0 | 0 | 0 | 3.3309 | 0.8585 | 0.002374 |
| | 0.4533 | 0 | 0 | 0 | 7.8757 | | |
| | 0.0287 | 0.22936 | 0.1474 | 0 | 0 | | |
| | 0 | 0 | 0.1726 | 0.7589 | 0 | | |
| | 0 | 0 | 0 | 0.1034 | 0 | | |
| Basin hopping, S(G) = Φ(G)/Ψ(G)′, where | 0 | 0 | 0 | 0 | 3.3348 | 0.8584 | 0.002379 |
| | 0.4322 | 0 | 0 | 0 | 7.9666 | | |
| | 0.0363 | 0.28976 | 0.1485 | 0 | 0.0022 | | |
| | 0 | 0 | 0.1728 | 0.7587 | 0 | | |
| | 0 | 0 | 0 | 0.1034 | 0 | | |
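As a consistency check on the first basin-hopping solution, the sketch below rebuilds G from the tabulated entries and confirms that every rate lies within the bounds of the vital-rate table. It then computes λ1(G) in two ways: with `numpy.linalg.eigvals`, and as the largest real root of the characteristic polynomial obtained by eliminating v from the eigenvector equations, det(λI − G) = λ³(λ − h)(λ − l) − mk(cλ² + (ea + fb)λ + fda). That polynomial is derived here for this matrix pattern; it is not quoted from the paper.

```python
import numpy as np

# First basin-hopping solution from the table above, entry by entry.
r = dict(a=3.3309, b=7.8767, c=0.0, d=0.4530, e=0.0288,
         f=0.2936, h=0.1474, k=0.1726, l=0.7589, m=0.1034)
BOUNDS = dict(a=(4/3, 49), b=(3, 85), c=(0, 25), d=(0, 7/15), e=(0, 1),
              f=(1/49, 7/9), h=(0, 2/3), k=(6/95, 5/6), l=(8/25, 22/23), m=(1/35, 5/15))

G = np.array([
    [0.0,    0.0,    0.0,    0.0,    r["a"]],
    [r["d"], 0.0,    0.0,    0.0,    r["b"]],
    [r["e"], r["f"], r["h"], 0.0,    r["c"]],
    [0.0,    0.0,    r["k"], r["l"], 0.0],
    [0.0,    0.0,    0.0,    r["m"], 0.0],
])

# Every rate must respect the minimal/maximal values of the vital-rate table.
within = all(lo <= r[name] <= hi for name, (lo, hi) in BOUNDS.items())

# det(lambda I - G) = lambda^3 (lambda - h)(lambda - l) - m k (c lambda^2 + (e a + f b) lambda + f d a)
coeffs = [
    1.0,
    -(r["h"] + r["l"]),
    r["h"] * r["l"],
    -r["m"] * r["k"] * r["c"],
    -r["m"] * r["k"] * (r["e"] * r["a"] + r["f"] * r["b"]),
    -r["m"] * r["k"] * r["f"] * r["d"] * r["a"],
]

lam_eig = max(abs(np.linalg.eigvals(G)))     # spectral radius via LAPACK
lam_poly = np.roots(coeffs).real.max()       # largest real root of the polynomial
print(within, lam_eig, lam_poly)
```

For a nonnegative matrix the spectral radius is itself an eigenvalue and no other eigenvalue has a larger real part, which is why the largest real root and the spectral radius coincide.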

| Inner Problem (16) | Matrix G(x) | | | | | λ1(G) | Approximation Error |
|---|---|---|---|---|---|---|---|
| , | 0 | 0 | 0 | 0 | 26.5530 | 0.915847990853247 | 0 |
| | 0 | 0 | 0 | 0 | 41.2023 | | |
| | 0 | 0.2777 | 0.6667 | 0 | 0 | | |
| | 0 | 0 | 0.0632 | 0.8992 | 0 | | |
| | 0 | 0 | 0 | 0.0482 | 0 | | |
| , | 0 | 0 | 0 | 0 | 26.5530 | 0.915847990853247 | 1.1102 × 10⁻¹⁶ |
| | 0 | 0 | 0 | 0 | 41.2023 | | |
| | 0 | 0.2777 | 0.6667 | 0 | 0 | | |
| | 0 | 0 | 0.0632 | 0.8992 | 0 | | |
| | 0 | 0 | 0 | 0.0482 | 0 | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Logofet, D.O. Pattern-Multiplicative Average of Nonnegative Matrices Revisited: Eigenvalue Approximation Is the Best of Versatile Optimization Tools. Mathematics 2023, 11, 3237. https://doi.org/10.3390/math11143237
