Review of Artificial Intelligence and Machine Learning Technologies: Classification, Restrictions, Opportunities and Challenges

Abstract

1. Introduction

2. Artificial Intelligence and Machine Learning Technologies Classification

- Standard feed-forward neural network (FFNN).

- Recurrent neural network (RNN).

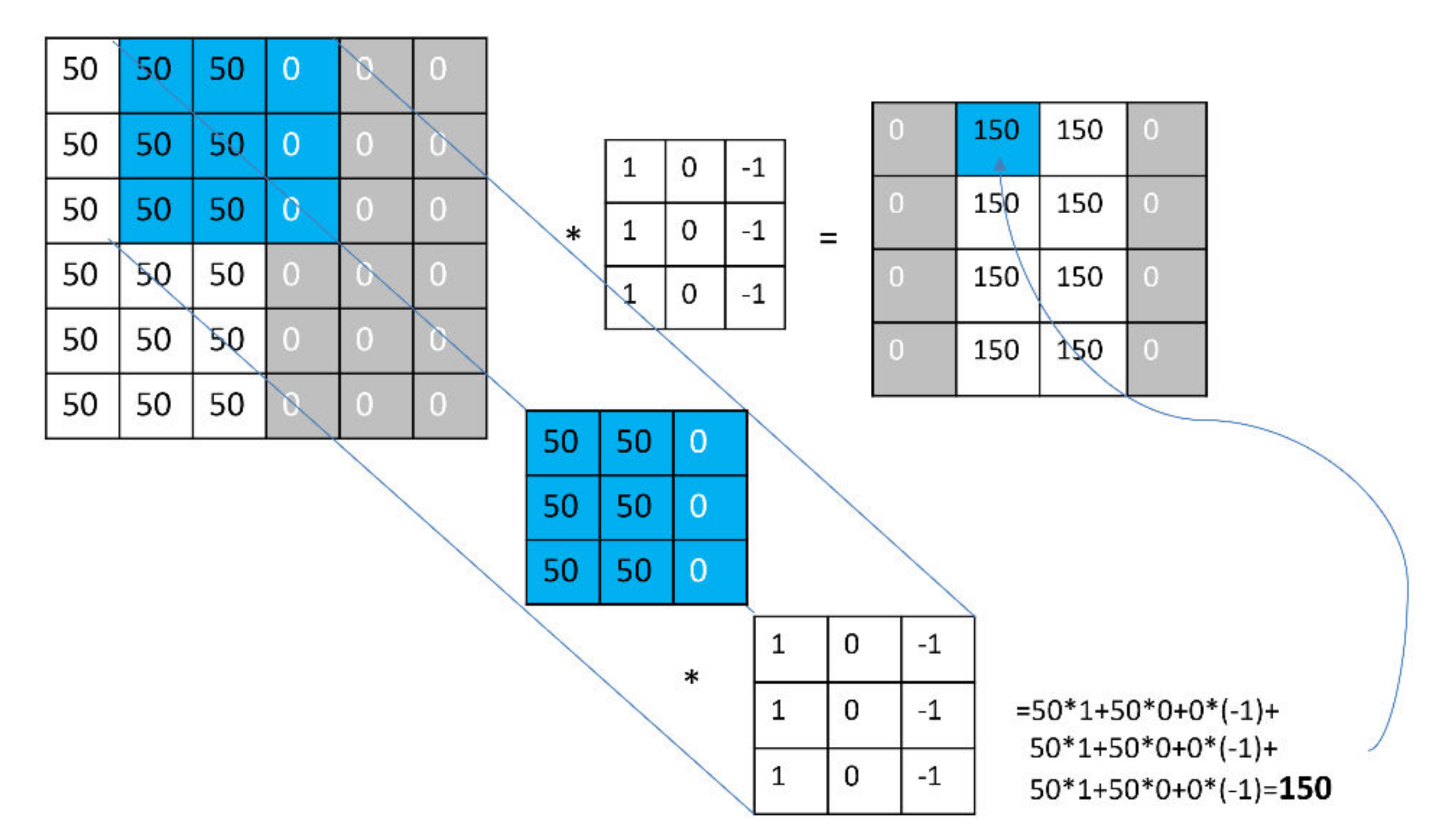

- Convolutional neural network (CNN).

- Hybrid architectures that combine elements of the three basic architectures listed above, for example, Siamese networks and transformers.
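As a rough illustration of how the three basic architectures differ, the sketch below shows the core computation of each in plain Python. It is not taken from the reviewed works: the function names `dense_layer`, `rnn_step`, and `conv1d`, the tanh activation, and all weights and inputs are illustrative assumptions.

```python
import math

def dense_layer(x, weights, bias):
    """Feed-forward (FFNN) layer: every output unit sees every input once."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def rnn_step(x, h, w_in, w_rec, bias):
    """Recurrent (RNN) step: the new state depends on the input and the previous state."""
    return [math.tanh(sum(w * xi for w, xi in zip(row_in, x))
                      + sum(w * hi for w, hi in zip(row_rec, h)) + b)
            for row_in, row_rec, b in zip(w_in, w_rec, bias)]

def conv1d(x, kernel):
    """Convolutional (CNN) layer in 1D: one small kernel is reused at every position.

    As in most deep learning libraries, this is a cross-correlation
    (the kernel is not flipped) without padding.
    """
    k = len(kernel)
    return [sum(kernel[j] * x[i + j] for j in range(k))
            for i in range(len(x) - k + 1)]

# Hypothetical toy input and weights, chosen only to make the sketch runnable.
signal = [1.0, 2.0, 3.0, 4.0]

print(dense_layer(signal, [[0.1, 0.1, 0.1, 0.1]], [0.0]))  # one output unit

state = [0.0]
for value in signal:  # the state carries information from step to step
    state = rnn_step([value], state, [[0.5]], [[0.5]], [0.0])
print(state)

print(conv1d(signal, [1.0, 0.0, -1.0]))  # difference-like filter
```

Hybrid architectures reuse these building blocks. A Siamese network, for instance, applies the same weights to two inputs and compares the resulting outputs.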

3. Limitations and Difficulties in the Application of AI and ML

- Insufficient amount of posted data.

- Sample variability, such as variability in tissue and organ samples.

- Prevalence of non-binary classification problems.

- Large image sizes (50,000 × 50,000 pixels), whereas existing deep learning models operate on substantially smaller inputs (608 × 608 for YOLO, 224 × 224 for VGG16) [143].

- Turing test dilemma. The final assessment is carried out by a human, which is not always possible.

- Focus of weak AI on solving a single task, which increases the complexity of training and leads to the associativity problem indicated below.

- High computational costs, which make AI-based solutions expensive.

- Instability of computer vision systems and their sensitivity to noise in medical diagnostic problems.

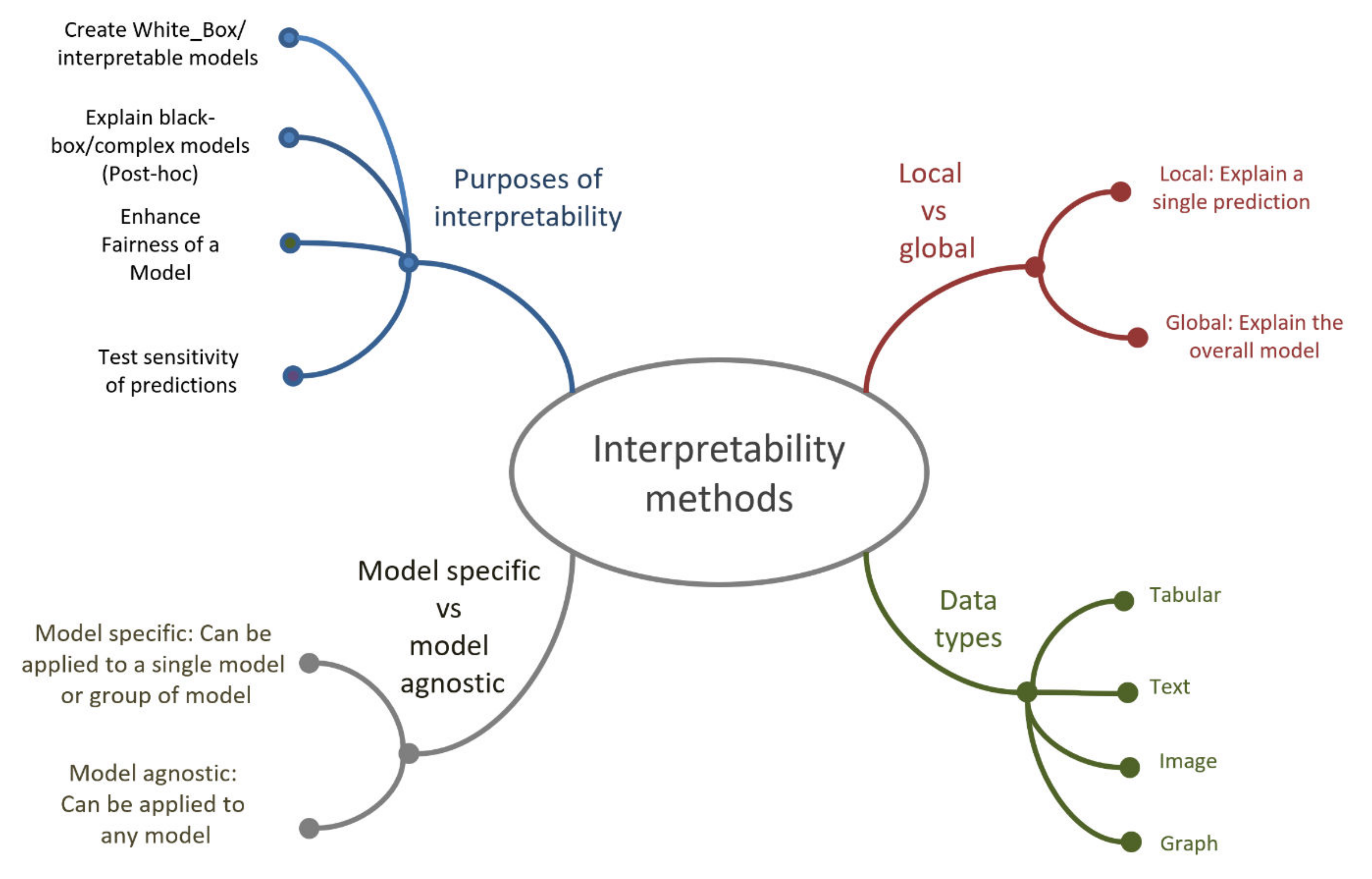

- Lack of transparency and interpretability.

- Difficulties in applying AI in practice. For example, the difficulties of the Watson Health project [144] stem from the complexity of practical application, low confidence in the results, and high costs.
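To make the image-size limitation concrete, the sketch below estimates how many model-sized tiles are needed to cover a 50,000 × 50,000 image when the model accepts 608 × 608 or 224 × 224 inputs. Tiling is only one possible workaround and is assumed here for illustration; the helper names `tile_starts` and `tile_count` and the `overlap` parameter are hypothetical.

```python
def tile_starts(image_size, tile_size, overlap=0):
    """Return the start offsets of the tiles that cover one image axis."""
    if not 0 <= overlap < tile_size:
        raise ValueError("overlap must satisfy 0 <= overlap < tile_size")
    if image_size <= tile_size:
        return [0]
    stride = tile_size - overlap
    # Integer ceiling division avoids floating-point rounding.
    steps = -(-(image_size - tile_size) // stride)
    # Clamp the last tile so that it ends exactly at the image border.
    return [min(i * stride, image_size - tile_size) for i in range(steps + 1)]

def tile_count(image_size, tile_size, overlap=0):
    """Number of square tiles needed to cover a square image."""
    return len(tile_starts(image_size, tile_size, overlap)) ** 2

for name, size in [("YOLO", 608), ("VGG16", 224)]:
    print(name, tile_count(50_000, size))
# YOLO 6889
# VGG16 50176
```

Even without overlap, a single image of this size turns into thousands of model inputs, which also ties into the computational cost item in the list above.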

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Ghasemi, Y.; Jeong, H.; Choi, S.H.; Park, K.-B.; Lee, J.Y. Deep learning-based object detection in augmented reality: A systematic review. Comput. Ind. 2022, 139, 103661.

- Widdows, D.; Kitto, K.; Cohen, T. Quantum mathematics in artificial intelligence. J. Artif. Intell. Res. 2021, 72, 1307–1341.

- Panetto, H.; Iung, B.; Ivanov, D.; Weichhart, G.; Wang, X. Challenges for the cyber-physical manufacturing enterprises of the future. Annu. Rev. Control. 2019, 47, 200–213.

- Izonin, I.; Tkachenko, R.; Peleshko, D.; Rak, T.; Batyuk, D. Learning-based image super-resolution using weight coefficients of synaptic connections. In Proceedings of the 2015 Xth International Scientific and Technical Conference “Computer Sciences and Information Technologies” (CSIT), Lviv, Ukraine, 14–17 September 2015; pp. 25–29.

- Shen, D.; Wu, G.; Suk, H.-I. Deep learning in medical image analysis. Annu. Rev. Biomed. Eng. 2017, 19, 221–248.

- Tuncer, T.; Ertam, F.; Dogan, S.; Aydemir, E.; Pławiak, P. Ensemble residual network-based gender and activity recognition method with signals. J. Supercomput. 2020, 76, 2119–2138.

- Barakhnin, V.; Duisenbayeva, A.; Kozhemyakina, O.Y.; Yergaliyev, Y.; Muhamedyev, R. The automatic processing of the texts in natural language. Some bibliometric indicators of the current state of this research area. In Journal of Physics: Conference Series; IOP Publishing: Bristol, UK, 2018; p. 012001.

- Hirschberg, J.; Manning, C.D. Advances in natural language processing. Science 2015, 349, 261–266.

- Abdullahi, M.; Baashar, Y.; Alhussian, H.; Alwadain, A.; Aziz, N.; Capretz, L.F.; Abdulkadir, S.J. Detecting Cybersecurity Attacks in Internet of Things Using Artificial Intelligence Methods: A Systematic Literature Review. Electronics 2022, 11, 198.

- Kim, D.; Kim, S.-H.; Kim, T.; Kang, B.B.; Lee, M.; Park, W.; Ku, S.; Kim, D.; Kwon, J.; Lee, H. Review of machine learning methods in soft robotics. PLoS ONE 2021, 16, e0246102.

- Torres, E.P.; Torres, E.A.; Hernández-Álvarez, M.; Yoo, S.G. EEG-based BCI emotion recognition: A survey. Sensors 2020, 20, 5083.

- Kuchin, Y.; Mukhamediev, R.; Yakunin, K.; Grundspenkis, J.; Symagulov, A. Assessing the impact of expert labelling of training data on the quality of automatic classification of lithological groups using artificial neural networks. Appl. Comput. Syst. 2020, 25, 145–152.

- Kotsiantis, S.B.; Zaharakis, I.; Pintelas, P. Supervised machine learning: A review of classification techniques. Emerg. Artif. Intell. Appl. Comput. Eng. 2007, 160, 3–24.

- Hastie, T.; Tibshirani, R.; Friedman, J. Unsupervised learning. In The Elements of Statistical Learning; Springer: Berlin/Heidelberg, Germany, 2009; pp. 485–585.

- Van der Maaten, L.; Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.

- Adopting, Deploying, and Applying AI. Available online: https://www.mckinsey.com/featured-insights/artificial-intelligence/ai-adoption-advances-but-foundational-barriers-remain (accessed on 21 April 2022).

- Zhao, H. Assessing the economic impact of artificial intelligence. ITU Trends. In Emerging Trends in ICTs; Morgan Kaufmann Publishers: Burlington, MA, USA, 2018.

- Financial Climate in the Republic of Kazakhstan. Available online: https://www2.deloitte.com/kz/ru/pages/about-deloitte/articles/financial_climate_in_kazakhstan.html (accessed on 21 April 2022).

- Bureau of National Statistics of the Agency for Strategic Planning and Reforms of the Republic of Kazakhstan. Available online: https://stat.gov.kz/search (accessed on 21 April 2022).

- Strategic Development Plan of the Republic of Kazakhstan until 2025. Available online: https://adilet.zan.kz/rus/docs/U1800000636 (accessed on 21 April 2022).

- Hidalgo, C.A.; Hausmann, R. The building blocks of economic complexity. Proc. Natl. Acad. Sci. USA 2009, 106, 10570–10575.

- List of Countries by Economic Complexity. Available online: https://en.wikipedia.org/wiki/List_of_countries_by_economic_complexity (accessed on 21 April 2022).

- The Global Competitiveness Report 2019. Available online: http://www3.weforum.org/docs/WEF_TheGlobalCompetitivenessReport2019.pdf (accessed on 21 April 2022).

- The Socio-Economic Impact of AI in Healthcare, October 2020. Available online: https://www.medtecheurope.org/wp-content/uploads/2020/10/mte-ai_impact-in-healthcare_oct2020_report.pdf (accessed on 21 April 2022).

- Haseeb, M.; Mihardjo, L.W.; Gill, A.R.; Jermsittiparsert, K. Economic impact of artificial intelligence: New look for the macroeconomic assessment in Asia-Pacific region. Int. J. Comput. Intell. Syst. 2019, 12, 1295.

- Van Roy, V. AI Watch-National Strategies on Artificial Intelligence: A European Perspective in 2019; Joint Research Centre: Seville, Spain, 2020.

- A National Artificial Intelligence Cluster Will Appear in Kazakhstan. Available online: https://kapital.kz/tehnology/93211/natsional-nyy-klaster-iskusstvennogo-intellekta-poyavit-sya-v-kazakhstane.html (accessed on 21 April 2022).

- Mukhamediev, R.I.; Yakunin, K.; Mussabayev, R.; Buldybayev, T.; Kuchin, Y.; Murzakhmetov, S.; Yelis, M. Classification of negative information on socially significant topics in mass media. Symmetry 2020, 12, 1945.

- Yakunin, K.; Kalimoldayev, M.; Mukhamediev, R.I.; Mussabayev, R.; Barakhnin, V.; Kuchin, Y.; Murzakhmetov, S.; Buldybayev, T.; Ospanova, U.; Yelis, M. Kaznewsdataset: Single country overall digital mass media publication corpus. Data 2021, 6, 31.

- Artificial Intelligence. Available online: https://www.britannica.com/technology/artificial-intelligence (accessed on 21 April 2022).

- Everitt, T.; Goertzel, B.; Potapov, A. Artificial general intelligence. In Lecture Notes in Artificial Intelligence; Springer: Berlin/Heidelberg, Germany, 2017.

- Tizhoosh, H.R.; Pantanowitz, L. Artificial intelligence and digital pathology: Challenges and opportunities. J. Pathol. Inform. 2018, 9, 38.

- Strong, A. Applications of artificial intelligence & associated technologies. In Proceedings of the International Conference on Emerging Technologies in Engineering, Biomedical, Management and Science [ETEBMS-2016], Jodhpur, India, 5–6 March 2016.

- The Artificial Intelligence (AI) White Paper. Available online: https://www.iata.org/contentassets/b90753e0f52e48a58b28c51df023c6fb/ai-white-paper.pdf (accessed on 23 February 2021).

- Mukhamediev, R.I.; Symagulov, A.; Kuchin, Y.; Yakunin, K.; Yelis, M. From Classical Machine Learning to Deep Neural Networks: A Simplified Scientometric Review. Appl. Sci. 2021, 11, 5541.

- Szczepanski, M. Economic Impacts of Artificial Intelligence (AI). 2019. EPRS: European Parliamentary Research Service. Available online: https://policycommons.net/artifacts/1334867/economic-impacts-of-artificial-intelligence-ai/1940719/ (accessed on 27 May 2022).

- Watanabe, S.; Hori, T.; Karita, S.; Hayashi, T.; Nishitoba, J.; Unno, Y.; Soplin, N.E.Y.; Heymann, J.; Wiesner, M.; Chen, N. Espnet: End-to-end speech processing toolkit. arXiv 2018, arXiv:1804.00015.

- Kerkeni, L.; Serrestou, Y.; Mbarki, M.; Raoof, K.; Mahjoub, M.A.; Cleder, C. Automatic speech emotion recognition using machine learning. In Social Media and Machine Learning; IntechOpen: London, UK, 2019.

- An, J.; Mikhaylov, A.; Sokolinskaya, N. Machine learning in economic planning: Ensembles of algorithms. In Journal of Physics: Conference Series; IOP Publishing: Bristol, UK, 2019; p. 012126.

- Usuga Cadavid, J.P.; Lamouri, S.; Grabot, B.; Pellerin, R.; Fortin, A. Machine learning applied in production planning and control: A state-of-the-art in the era of industry 4.0. J. Intell. Manuf. 2020, 31, 1531–1558.

- Ogidan, E.T.; Dimililer, K.; Ever, Y.K. Machine learning for expert systems in data analysis. In Proceedings of the 2018 2nd International Symposium on Multidisciplinary Studies and Innovative Technologies (ISMSIT), Ankara, Turkey, 19–21 October 2018; pp. 1–5.

- Prasadl, B.; Prasad, P.; Sagar, Y. An approach to develop expert systems in medical diagnosis using machine learning algorithms (asthma) and a performance study. Int. J. Soft Comput. 2011, 2, 26–33.

- Mosavi, A.; Varkonyi, A. Learning in robotics. Int. J. Comput. Appl. 2017, 157, 8–11.

- Artrith, N.; Butler, K.T.; Coudert, F.-X.; Han, S.; Isayev, O.; Jain, A.; Walsh, A. Best practices in machine learning for chemistry. Nat. Chem. 2021, 13, 505–508.

- Mater, A.C.; Coote, M.L. Deep learning in chemistry. J. Chem. Inf. Modeling 2019, 59, 2545–2559.

- Cruz, J.A.; Wishart, D.S. Applications of machine learning in cancer prediction and prognosis. Cancer Inform. 2006, 2, 59–77.

- Miotto, R.; Wang, F.; Wang, S.; Jiang, X.; Dudley, J.T. Deep learning for healthcare: Review, opportunities and challenges. Brief. Bioinform. 2018, 19, 1236–1246.

- Ball, N.M.; Brunner, R.J. Data mining and machine learning in astronomy. Int. J. Mod. Phys. D 2010, 19, 1049–1106.

- Chicco, D. Ten quick tips for machine learning in computational biology. BioData Min. 2017, 10, 1–17.

- Zitnik, M.; Nguyen, F.; Wang, B.; Leskovec, J.; Goldenberg, A.; Hoffman, M.M. Machine learning for integrating data in biology and medicine: Principles, practice, and opportunities. Inf. Fusion 2019, 50, 71–91.

- Liakos, K.G.; Busato, P.; Moshou, D.; Pearson, S.; Bochtis, D. Machine learning in agriculture: A review. Sensors 2018, 18, 2674.

- Mahdavinejad, M.S.; Rezvan, M.; Barekatain, M.; Adibi, P.; Barnaghi, P.; Sheth, A.P. Machine learning for Internet of Things data analysis: A survey. Digit. Commun. Netw. 2018, 4, 161–175.

- Farrar, C.R.; Worden, K. Structural Health Monitoring: A Machine Learning Perspective; John Wiley & Sons: New York, NY, USA, 2012.

- Lai, J.; Qiu, J.; Feng, Z.; Chen, J.; Fan, H. Prediction of soil deformation in tunnelling using artificial neural networks. Comput. Intell. Neurosci. 2016, 2016, 33.

- Recknagel, F. Applications of machine learning to ecological modelling. Ecol. Model. 2001, 146, 303–310.

- Tatarinov, V.; Manevich, A.; Losev, I. A system approach to geodynamic zoning based on artificial neural networks. Gorn. Nauk. I Tekhnologii Min. Sci. Technol. 2018, 3, 14–25.

- Kuchin, Y.I.; Mukhamediev, R.I.; Yakunin, K.O. One method of generating synthetic data to assess the upper limit of machine learning algorithms performance. Cogent Eng. 2020, 7, 1718821.

- Chen, Y.; Wu, W. Application of one-class support vector machine to quickly identify multivariate anomalies from geochemical exploration data. Geochem. Explor. Environ. Anal. 2017, 17, 231–238.

- Mukhamediev, R.I.; Kuchin, Y.; Amirgaliyev, Y.; Yunicheva, N.; Muhamedijeva, E. Estimation of Filtration Properties of Host Rocks in Sandstone-Type Uranium Deposits Using Machine Learning Methods. IEEE Access 2022, 10, 18855–18872.

- Goldberg, Y. A primer on neural network models for natural language processing. J. Artif. Intell. Res. 2016, 57, 345–420.

- Sadovskaya, L.L.; Guskov, A.E.; Kosyakov, D.V.; Mukhamediev, R.I. Natural language text processing: A review of publications. Artif. Intell. Decis. Mak. 2021, 95–115.

- Nassif, A.B.; Shahin, I.; Attili, I.; Azzeh, M.; Shaalan, K. Speech recognition using deep neural networks: A systematic review. IEEE Access 2019, 7, 19143–19165.

- Li, Y. Deep reinforcement learning: An overview. arXiv 2017, arXiv:1701.07274.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Jain, A.K.; Murty, M.N.; Flynn, P.J. Data clustering: A review. ACM Comput. Surv. 1999, 31, 264–323.

- Barbakh, W.A.; Wu, Y.; Fyfe, C. Review of clustering algorithms. In Non-Standard Parameter Adaptation for Exploratory Data Analysis; Springer: Berlin/Heidelberg, Germany, 2009; pp. 7–28.

- Weiss, K.; Khoshgoftaar, T.M.; Wang, D. A survey of transfer learning. J. Big Data 2016, 3, 1–40.

- Altman, N.S. An introduction to kernel and nearest-neighbor nonparametric regression. Am. Stat. 1992, 46, 175–185.

- Dudani, S.A. The distance-weighted k-nearest-neighbor rule. IEEE Trans. Syst. Man Cybern. 1976, 4, 325–327.

- Yu, H.-F.; Huang, F.-L.; Lin, C.-J. Dual coordinate descent methods for logistic regression and maximum entropy models. Mach. Learn. 2011, 85, 41–75.

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Zhang, G.P. Neural networks for classification: A survey. IEEE Trans. Syst. Man Cybern. Part C (Appl. Rev.) 2000, 30, 451–462.

- MacQueen, J. Some methods for classification and analysis of multivariate observations. In Proceedings of the Fifth Berkeley Symposium on Mathematical Statistics and Probability, Oakland, CA, USA, 7 January 1967; pp. 281–297.

- Tenenbaum, J.B.; Silva, V.d.; Langford, J.C. A global geometric framework for nonlinear dimensionality reduction. Science 2000, 290, 2319–2323.

- Roweis, S.T.; Saul, L.K. Nonlinear dimensionality reduction by locally linear embedding. Science 2000, 290, 2323–2326.

- Borg, I.; Groenen, P.J. Modern multidimensional scaling: Theory and applications. J. Educ. Meas. 2005, 40, 277–280.

- Friedman, J.H. Greedy function approximation: A gradient boosting machine. Ann. Stat. 2001, 29, 1189–1232.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

- Schmidhuber, J. Deep learning in neural networks: An overview. Neural Netw. 2015, 61, 85–117.

- LeCun, Y.; Kavukcuoglu, K.; Farabet, C. Convolutional networks and applications in vision. In Proceedings of the 2010 IEEE International Symposium on Circuits and Systems, Paris, France, 30 May–2 June 2010; pp. 253–256.

- Mou, L.; Ghamisi, P.; Zhu, X.X. Deep recurrent neural networks for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 3639–3655.

- The Neural Network Zoo. Available online: https://www.asimovinstitute.org/neural-network-zoo/ (accessed on 21 April 2022).

- Nguyen, G.; Dlugolinsky, S.; Bobák, M.; Tran, V.; Lopez Garcia, A.; Heredia, I.; Malík, P.; Hluchý, L. Machine learning and deep learning frameworks and libraries for large-scale data mining: A survey. Artif. Intell. Rev. 2019, 52, 77–124.

- Nayebi, A.; Vitelli, M. Gruv: Algorithmic music generation using recurrent neural networks Course CS224D. Deep. Learn. Nat. Lang. Processing (Stanf.) 2015, 1–6. Available online: https://anayebi.github.io/files/projects/CS_224D_Final_Project_Writeup.pdf (accessed on 27 May 2022).

- Lu, S.; Zhu, Y.; Zhang, W.; Wang, J.; Yu, Y. Neural text generation: Past, present and beyond. arXiv 2018, arXiv:1803.07133.

- Zhang, L.; Wang, S.; Liu, B. Deep learning for sentiment analysis: A survey. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2018, 8, e1253.

- Lample, G.; Ballesteros, M.; Subramanian, S.; Kawakami, K.; Dyer, C. Neural architectures for named entity recognition. arXiv 2016, arXiv:1603.01360.

- Liu, X. Deep recurrent neural network for protein function prediction from sequence. arXiv 2017, arXiv:1701.08318.

- Wu, Y.; Schuster, M.; Chen, Z.; Le, Q.V.; Norouzi, M.; Macherey, W.; Krikun, M.; Cao, Y.; Gao, Q.; Macherey, K. Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv 2016, arXiv:1609.08144.

- Hannun, A.; Case, C.; Casper, J.; Catanzaro, B.; Diamos, G.; Elsen, E.; Prenger, R.; Satheesh, S.; Sengupta, S.; Coates, A. Deep speech: Scaling up end-to-end speech recognition. arXiv 2014, arXiv:1412.5567.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

- Peters, M.; Neumann, M.; Iyyer, M.; Gardner, M.; Clark, C.; Lee, K.; Zettlemoyer, L. Deep contextualized word representations. arXiv 2018, arXiv:1802.05365.

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. Adv. Neural Inf. Processing Syst. 2014, 27, 1–9. Available online: https://proceedings.neurips.cc/paper/2014/file/5ca3e9b122f61f8f06494c97b1afccf3-Paper.pdf (accessed on 27 May 2022).

- Creswell, A.; White, T.; Dumoulin, V.; Arulkumaran, K.; Sengupta, B.; Bharath, A.A. Generative adversarial networks: An overview. IEEE Signal Processing Mag. 2018, 35, 53–65.

- Agnese, J.; Herrera, J.; Tao, H.; Zhu, X. A survey and taxonomy of adversarial neural networks for text-to-image synthesis. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2020, 10, e1345.

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Adv. Neural Inf. Processing Syst. 2012, 25, 1–9. Available online: https://proceedings.neurips.cc/paper/2012/file/c399862d3b9d6b76c8436e924a68c45b-Paper.pdf (accessed on 27 May 2022).

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1–9.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 779–788.

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Munich, Germany, 5–9 October 2015; pp. 234–241.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Processing Syst. 2017, 30, 1–11. Available online: https://proceedings.neurips.cc/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf (accessed on 27 May 2022).

- Yuan, L.; Chen, D.; Chen, Y.-L.; Codella, N.; Dai, X.; Gao, J.; Hu, H.; Huang, X.; Li, B.; Li, C. Florence: A new foundation model for computer vision. arXiv 2021, arXiv:2111.11432.

- Liu, Z.; Hu, H.; Lin, Y.; Yao, Z.; Xie, Z.; Wei, Y.; Ning, J.; Cao, Y.; Zhang, Z.; Dong, L. Swin transformer v2: Scaling up capacity and resolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–20 June 2022; pp. 12009–12019.

- Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.M.; Shum, H.-Y. Dino: Detr with improved denoising anchor boxes for end-to-end object detection. arXiv 2022, arXiv:2203.03605.

- Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

- Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. arXiv 2017, arXiv:1710.10903.

- Gallicchio, C.; Micheli, A. Graph echo state networks. In Proceedings of the 2010 International Joint Conference on Neural Networks (IJCNN), Barcelona, Spain, 18–23 July 2010; pp. 1–8.

- Li, Y.; Tarlow, D.; Brockschmidt, M.; Zemel, R. Gated graph sequence neural networks. arXiv 2015, arXiv:1511.05493.

- Riba, P.; Fischer, A.; Lladós, J.; Fornés, A. Learning graph distances with message passing neural networks. In Proceedings of the 2018 24th International Conference on Pattern Recognition (ICPR), Beijing, China, 20–24 August 2018; pp. 2239–2244.

- Battaglia, P.W.; Hamrick, J.B.; Bapst, V.; Sanchez-Gonzalez, A.; Zambaldi, V.; Malinowski, M.; Tacchetti, A.; Raposo, D.; Santoro, A.; Faulkner, R. Relational inductive biases, deep learning, and graph networks. arXiv 2018, arXiv:1806.01261.

- Do, K.; Tran, T.; Venkatesh, S. Graph transformation policy network for chemical reaction prediction. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; pp. 750–760.

- Peng, H.; Li, J.; He, Y.; Liu, Y.; Bao, M.; Wang, L.; Song, Y.; Yang, Q. Large-scale hierarchical text classification with recursively regularized deep graph-cnn. In Proceedings of the 2018 World Wide Web Conference, Lyon, France, 23–27 April 2018; pp. 1063–1072.

- Garcia, V.; Bruna, J. Few-shot learning with graph neural networks. arXiv 2017, arXiv:1711.04043.

- Zhou, J.; Cui, G.; Hu, S.; Zhang, Z.; Yang, C.; Liu, Z.; Wang, L.; Li, C.; Sun, M. Graph neural networks: A review of methods and applications. AI Open 2020, 1, 57–81.

- Islas-Cota, E.; Gutierrez-Garcia, J.O.; Acosta, C.O.; Rodríguez, L.-F. A systematic review of intelligent assistants. Future Gener. Comput. Syst. 2022, 128, 45–62.

- Motai, Y.; Siddique, N.A.; Yoshida, H. Heterogeneous data analysis: Online learning for medical-image-based diagnosis. Pattern Recognit. 2017, 63, 612–624.

- Kilian, M.A.; Kattenbeck, M.; Ferstl, M.; Ludwig, B.; Alt, F. Towards task-sensitive assistance in public spaces. Aslib J. Inf. Manag. 2019, 71, 344–367.

- Chuan, C.-H.; Morgan, S. Creating and evaluating chatbots as eligibility assistants for clinical trials: An active deep learning approach towards user-centered classification. ACM Trans. Comput. Healthc. 2020, 2, 1–19.

- Migkotzidis, P.; Liapis, A. SuSketch: Surrogate models of gameplay as a design assistant. IEEE Trans. Games 2021, 14, 273–283.

- Sahadat, N.; Sebkhi, N.; Ghovanloo, M. Simultaneous multimodal access to wheelchair and computer for people with tetraplegia. In Proceedings of the 20th ACM International Conference on Multimodal Interaction, Boulder, CO, USA, 16–20 October 2018; pp. 393–399.

- Haescher, M.; Matthies, D.J.; Srinivasan, K.; Bieber, G. Mobile assisted living: Smartwatch-based fall risk assessment for elderly people. In Proceedings of the 5th International Workshop on Sensor-Based Activity Recognition and Interaction, Berlin, Germany, 20–21 September 2018; pp. 1–10.

- Kumar Shastha, T.; Kyrarini, M.; Gräser, A. Application of reinforcement learning to a robotic drinking assistant. Robotics 2019, 9, 1.

- Viceconti, M.; Hunter, P.; Hose, R. Big data, big knowledge: Big data for personalized healthcare. IEEE J. Biomed. Health Inform. 2015, 19, 1209–1215.

- Hemminahaus, J.; Kopp, S. Towards adaptive social behavior generation for assistive robots using reinforcement learning. In Proceedings of the 2017 12th ACM/IEEE International Conference on Human-Robot Interaction, Vienna, Austria, 6–9 March 2017; pp. 332–340.

- Duguleană, M.; Briciu, V.-A.; Duduman, I.-A.; Machidon, O.M. A virtual assistant for natural interactions in museums. Sustainability 2020, 12, 6958.

- Mardani, A.; Nilashi, M.; Antucheviciene, J.; Tavana, M.; Bausys, R.; Ibrahim, O. Recent fuzzy generalisations of rough sets theory: A systematic review and methodological critique of the literature. Complexity 2017, 2017, 33.

- Paliwal, C.; Biyani, P. To each route its own ETA: A generative modeling framework for ETA prediction. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; pp. 3076–3081.

- Sanenga, A.; Mapunda, G.A.; Jacob, T.M.L.; Marata, L.; Basutli, B.; Chuma, J.M. An overview of key technologies in physical layer security. Entropy 2020, 22, 1261.

- Blackburn, H. Biobanking genetic material for agricultural animal species. Annu. Rev. Anim. Biosci. 2018, 6, 69–82.

- UNESCO, Artificial Intelligence in Education: Challenges and Opportunities for Sustainable Development. 2019. Available online: https://en.unesco.org/news/challenges-and-opportunities-artificial-intelligence-education (accessed on 27 May 2022).

- Tahiru, F. AI in education: A systematic literature review. J. Cases Inf. Technol. 2021, 23, 1–20.

- Opportunities and Challenges of Artificial Intelligence Technologies for the Cultural and Creative Sectors. Available online: https://data.europa.eu/doi/10.2759/144212 (accessed on 27 May 2022).

- Phua, A.; Davies, C.; Delaney, G. A digital twin hierarchy for metal additive manufacturing. Comput. Ind. 2022, 140, 103667.

- Rocha-Jácome, C.; Carvajal, R.G.; Chavero, F.M.; Guevara-Cabezas, E.; Hidalgo Fort, E. Industry 4.0: A Proposal of Paradigm Organization Schemes from a Systematic Literature Review. Sensors 2021, 22, 66.

- Hussein, W.N.; Kamarudin, L.; Hussain, H.N.; Zakaria, A.; Ahmed, R.B.; Zahri, N. The prospect of internet of things and big data analytics in transportation system. In Journal of Physics: Conference Series; IOP Publishing: Bristol, UK, 2018; p. 012013.

- AI Adoption Advances, But Foundational Barriers Remain. Available online: https://www.mckinsey.com/featured-insights/artificial-intelligence/ai-adoption-advances-but-foundational-barriers-remain (accessed on 21 April 2022).

- 4 Major Barriers to AI Adoption. Available online: https://www.agiloft.com/blog/barriers-to-ai-adoption/ (accessed on 21 April 2022).

- 3 Barriers to AI Adoption. Available online: https://www.gartner.com/smarterwithgartner/3-barriers-to-ai-adoption/ (accessed on 21 April 2022).

- Wang, W.; Siau, K. Artificial intelligence, machine learning, automation, robotics, future of work and future of humanity: A review and research agenda. J. Database Manag. 2019, 30, 61–79.

- Reddy, A.S.B.; Juliet, D.S. Transfer learning with ResNet-50 for malaria cell-image classification. In Proceedings of the 2019 International Conference on Communication and Signal Processing (ICCSP), Melmaruvathur, India, 4–6 April 2019; pp. 0945–0949.

- A Reality Check for IBM’S AI Ambitions. Available online: https://www.technologyreview.com/2017/06/27/4462/a-reality-check-for-ibms-ai-ambitions/#:~:text=IBM%2C%20number%2039%20on%20our,to%20make%20medicine%20much%20smarter (accessed on 21 April 2022).

- Lu, H.; Li, Y.; Chen, M.; Kim, H.; Serikawa, S. Brain intelligence: Go beyond artificial intelligence. Mob. Netw. Appl. 2018, 23, 368–375.

- Mukhamediev, R.I.; Symagulov, A.; Kuchin, Y.; Zaitseva, E.; Bekbotayeva, A.; Yakunin, K.; Assanov, I.; Levashenko, V.; Popova, Y.; Akzhalova, A. Review of Some Applications of Unmanned Aerial Vehicles Technology in the Resource-Rich Country. Appl. Sci. 2021, 11, 10171.

- Zhong, Y.; Hu, X.; Luo, C.; Wang, X.; Zhao, J.; Zhang, L. WHU-Hi: UAV-borne hyperspectral with high spatial resolution (H2) benchmark datasets and classifier for precise crop identification based on deep convolutional neural network with CRF. Remote Sens. Environ. 2020, 250, 112012.

- Peres, R.S.; Jia, X.; Lee, J.; Sun, K.; Colombo, A.W.; Barata, J. Industrial artificial intelligence in industry 4.0-systematic review, challenges and outlook. IEEE Access 2020, 8, 220121–220139.

- Mhlanga, D. Industry 4.0 in finance: The impact of artificial intelligence (ai) on digital financial inclusion. Int. J. Financ. Stud. 2020, 8, 45.

- European Commission. Ethics Guidelines for Trustworthy AI. 2019. Available online: https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai (accessed on 27 May 2022).

- Macas, M.; Wu, C.; Fuertes, W. A survey on deep learning for cybersecurity: Progress, challenges, and opportunities. Comput. Netw. 2022, 109032.

- Rico-Bautista, D.; Guerrero, C.D.; Collazos, C.A.; Maestre-Gongora, G.; Sánchez-Velásquez, M.C.; Medina-Cárdenas, Y.; Parra-Sánchez, D.T.; Barreto, A.G.; Swaminathan, J. Key Technology Adoption Indicators for Smart Universities: A Preliminary Proposal. In Intelligent Sustainable Systems; Springer: Berlin/Heidelberg, Germany, 2022; pp. 651–663.

- Government AI Readiness Index 2020—Oxford Insights. Available online: https://www.oxfordinsights.com/government-ai-readiness-index-2020 (accessed on 27 May 2022).

- Machine Learning and the Five Vectors of Progress. Available online: https://www2.deloitte.com/us/en/insights/focus/signals-for-strategists/machine-learning-technology-five-vectors-of-progress.html (accessed on 21 April 2022).

- Rychnovská, D. Anticipatory governance in biobanking: Security and risk management in digital health. Sci. Eng. Ethics 2021, 27, 1–18.

- Khan, S.; Khan, A.; Maqsood, M.; Aadil, F.; Ghazanfar, M.A. Optimized gabor feature extraction for mass classification using cuckoo search for big data e-healthcare. J. Grid Comput. 2019, 17, 239–254.

- Tkachenko, R.; Izonin, I. Model and principles for the implementation of neural-like structures based on geometric data transformations. In Proceedings of the International Conference on Computer Science, Engineering and Education Applications, Kiev, Ukraine, 18–20 January 2018; pp. 578–587.

- Kulkarni, V.; Kulkarni, M.; Pant, A. Quantum computing methods for supervised learning. Quantum Mach. Intell. 2021, 3, 1–14.

- Negro, P.; Pons, C.F. Artificial Intelligence techniques based on the integration of symbolic logic and deep neural networks: A systematic review of the literature. Intel. Artif. 2022, 25.

- Verhulst, S.G.; Young, A. Open Data in Developing Economies: Toward building an Evidence Base on What Works and How; African Minds: Cape Town, South Africa, 2017.

- Imagenet. Available online: http://image-net.org/index (accessed on 21 April 2022).

- Open Images Dataset M5+ Extensions. Available online: https://storage.googleapis.com/openimages/web/index.html (accessed on 21 April 2022).

- COCO Dataset. Available online: http://cocodataset.org/#home (accessed on 21 April 2022).

- Wong, M.Z.; Kunii, K.; Baylis, M.; Ong, W.H.; Kroupa, P.; Koller, S. Synthetic dataset generation for object-to-model deep learning in industrial applications. PeerJ Comput. Sci. 2019, 5, e222.

- Ros, G.; Sellart, L.; Materzynska, J.; Vazquez, D.; Lopez, A.M. The synthia dataset: A large collection of synthetic images for semantic segmentation of urban scenes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 3234–3243.

- Cho, S. How to Generate Image Dataset based on 3D Model and Deep Learning Method. Int. J. Eng. Technol. 2015, 7, 221–225.

- Müller, M.; Casser, V.; Lahoud, J.; Smith, N.; Ghanem, B. Sim4cv: A photo-realistic simulator for computer vision applications. Int. J. Comput. Vis. 2018, 126, 902–919.

- Doan, A.-D.; Jawaid, A.M.; Do, T.-T.; Chin, T.-J. G2D: From GTA to Data. arXiv 2018, arXiv:1806.07381.

- Dosovitskiy, A.; Ros, G.; Codevilla, F.; Lopez, A.; Koltun, V. CARLA: An open urban driving simulator. In Proceedings of the Conference on Robot Learning, Mountain View, CA, USA, 13–15 November 2017; pp. 1–16.

- Arvanitis, T.N.; White, S.; Harrison, S.; Chaplin, R.; Despotou, G. A method for machine learning generation of realistic synthetic datasets for Validating Healthcare Applications. Health Inform. J. 2022, 28, 14604582221077000.

- Nikolenko, S.I. Synthetic Data for Deep Learning; Springer: Berlin/Heidelberg, Germany, 2021.

- Pan, S.J.; Yang, Q. A survey on transfer learning. IEEE Trans. Knowl. Data Eng. 2009, 22, 1345–1359.

- Davenport, T.; Kalakota, R. The potential for artificial intelligence in healthcare. Future Healthc. J. 2019, 6, 94.

- Arrieta, A.B.; Díaz-Rodríguez, N.; Del Ser, J.; Bennetot, A.; Tabik, S.; Barbado, A.; García, S.; Gil-López, S.; Molina, D.; Benjamins, R. Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. Inf. Fusion 2020, 58, 82–115.

- Linardatos, P.; Papastefanopoulos, V.; Kotsiantis, S. Explainable ai: A review of machine learning interpretability methods. Entropy 2020, 23, 18.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why should I trust you?” Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 1135–1144.

- Lundberg, S.M.; Lee, S.-I. A unified approach to interpreting model predictions. Adv. Neural Inf. Process. Syst. 2017, 30, 1–10. Available online: https://proceedings.neurips.cc/paper/2017/file/8a20a8621978632d76c43dfd28b67767-Paper.pdf (accessed on 27 May 2022).

- Van den Broeck, G.; Lykov, A.; Schleich, M.; Suciu, D. On the tractability of SHAP explanations. In Proceedings of the 35th Conference on Artificial Intelligence (AAAI), Washington, DC, USA, 7–14 February 2021.

- Miller, T. Explanation in artificial intelligence: Insights from the social sciences. Artif. Intell. 2019, 267, 1–38.

- Erol, B.A.; Majumdar, A.; Benavidez, P.; Rad, P.; Choo, K.-K.R.; Jamshidi, M. Toward artificial emotional intelligence for cooperative social human–machine interaction. IEEE Trans. Comput. Soc. Syst. 2019, 7, 234–246.

- Schuller, D.; Schuller, B.W. The age of artificial emotional intelligence. Computer 2018, 51, 38–46.

- Shaban-Nejad, A.; Michalowski, M.; Buckeridge, D.L. Health intelligence: How artificial intelligence transforms population and personalized health. NPJ Digit. Med. 2018, 1, 1–2.

- Shaham, U.; Yamada, Y.; Negahban, S. Understanding adversarial training: Increasing local stability of supervised models through robust optimization. Neurocomputing 2018, 307, 195–204.

- Jarrahi, M.H. Artificial intelligence and the future of work: Human-AI symbiosis in organizational decision making. Bus. Horiz. 2018, 61, 577–586.

- Muhamedyev, R.I.; Aliguliyev, R.M.; Shokishalov, Z.M.; Mustakayev, R.R. New bibliometric indicators for prospectivity estimation of research fields. Ann. Libr. Inf. Stud. 2018, 65, 62–69.

- Research and Development (% of GDP). Available online: https://data.worldbank.org/indicator/GB.XPD.RSDV.GD.ZS?locations=KZ-GB-AT-DE-RU (accessed on 21 April 2022).

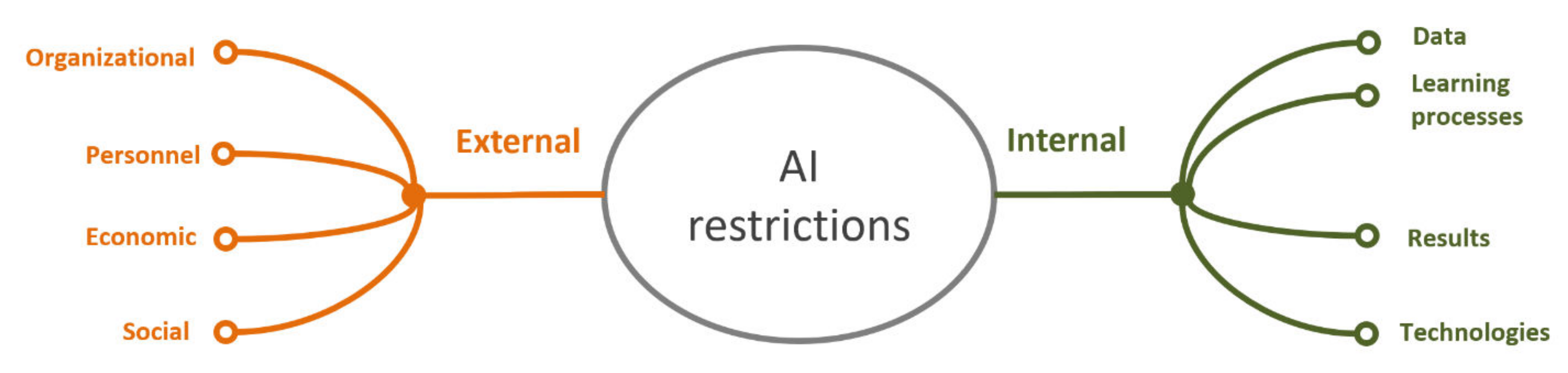

| Problems External to AI Technology | | | |
|---|---|---|---|
| Organizational [139] | Personnel [140] | Economic [142] | Social [142] |

| Internal Limitations of AI Technology [32,145,148,154] | | | |
|---|---|---|---|
| Data | Learning Processes | Results | Technology |

| Overcoming the External Limitations of AI&ML | |
|---|---|
| Organizational | Unification of data set formation processes; development of policies and technologies for data accumulation and use. |
| Personnel | Training of specialists and awareness-raising among practitioners in applied fields. |
| Economic | Creation of unified solutions applicable in many areas. Development of economic models of AI application. |
| Social | Social research and the advancement of explainable machine intelligence [179]. Research in artificial emotional intelligence [180,181] should improve the quality of human–machine interaction and personalized AI; for example, AI employed in medicine should identify patients’ preferences, personalize the assistance that helps patients (and their families) participate in the care process, and personalize “general” therapy plans and the information provided to patients [182]. |
| Overcoming the Internal Limitations of AI&ML | |
| Data | Unification of data collection and data labeling processes. Formation of data sets in different areas of AI&ML application. |
| Learning processes | Research on transfer learning. Research on increasing computing power and on new technical solutions. |
| Results | Development of systems for interpreting the results of machine learning models and for simplifying interaction with practitioners in applied fields. Although similar models exist, they are intended for specialists only. |
| Technologies | Research on improving the stability of results generated by machine learning models [183]. Development of models for specific applications of machine learning (e.g., drones). Research on general, or strong, AI. Research on human–machine symbiosis aimed at expanding human intelligence (“intelligence augmentation”) rather than replacing it [184]. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Mukhamediev, R.I.; Popova, Y.; Kuchin, Y.; Zaitseva, E.; Kalimoldayev, A.; Symagulov, A.; Levashenko, V.; Abdoldina, F.; Gopejenko, V.; Yakunin, K.; et al. Review of Artificial Intelligence and Machine Learning Technologies: Classification, Restrictions, Opportunities and Challenges. Mathematics 2022, 10, 2552. https://doi.org/10.3390/math10152552

Mukhamediev RI, Popova Y, Kuchin Y, Zaitseva E, Kalimoldayev A, Symagulov A, Levashenko V, Abdoldina F, Gopejenko V, Yakunin K, et al. Review of Artificial Intelligence and Machine Learning Technologies: Classification, Restrictions, Opportunities and Challenges. Mathematics. 2022; 10(15):2552. https://doi.org/10.3390/math10152552

Chicago/Turabian Style: Mukhamediev, Ravil I., Yelena Popova, Yan Kuchin, Elena Zaitseva, Almas Kalimoldayev, Adilkhan Symagulov, Vitaly Levashenko, Farida Abdoldina, Viktors Gopejenko, Kirill Yakunin, et al. 2022. "Review of Artificial Intelligence and Machine Learning Technologies: Classification, Restrictions, Opportunities and Challenges" Mathematics 10, no. 15: 2552. https://doi.org/10.3390/math10152552

APA Style: Mukhamediev, R. I., Popova, Y., Kuchin, Y., Zaitseva, E., Kalimoldayev, A., Symagulov, A., Levashenko, V., Abdoldina, F., Gopejenko, V., Yakunin, K., Muhamedijeva, E., & Yelis, M. (2022). Review of Artificial Intelligence and Machine Learning Technologies: Classification, Restrictions, Opportunities and Challenges. Mathematics, 10(15), 2552. https://doi.org/10.3390/math10152552