An Investigation of Students’ Use of a Computational Science Simulation in an Online High School Physics Class

Abstract

1. Introduction

2. Literature Review

2.1. Models and Simulations in Science Education

2.2. Work with Data in the Context of Simulations

2.3. Using Simulations in Online Science Classes

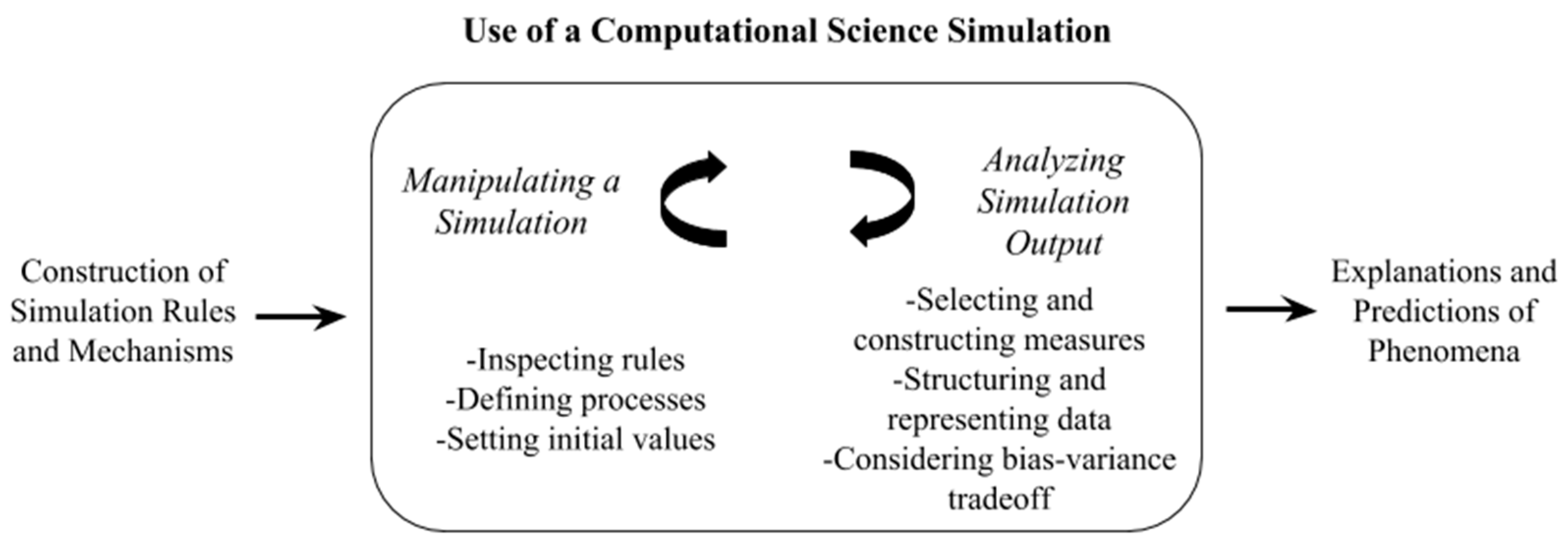

2.4. Framework for Using a Computational Science Simulation

3. Research Questions

- RQ1: How do students’ explanations change over the course of three lessons that involve using a simulation and modeling its output?

- RQ2: What do students perceive the strengths and weaknesses of using the simulation to be?

- RQ3: How do students approach modeling the classroom data set to account for its variability?

4. Methods

4.1. Participants and Context

4.2. Simulation Design

4.3. Lesson Sequence

4.3.1. Lesson 1: Tinker with the Simulation

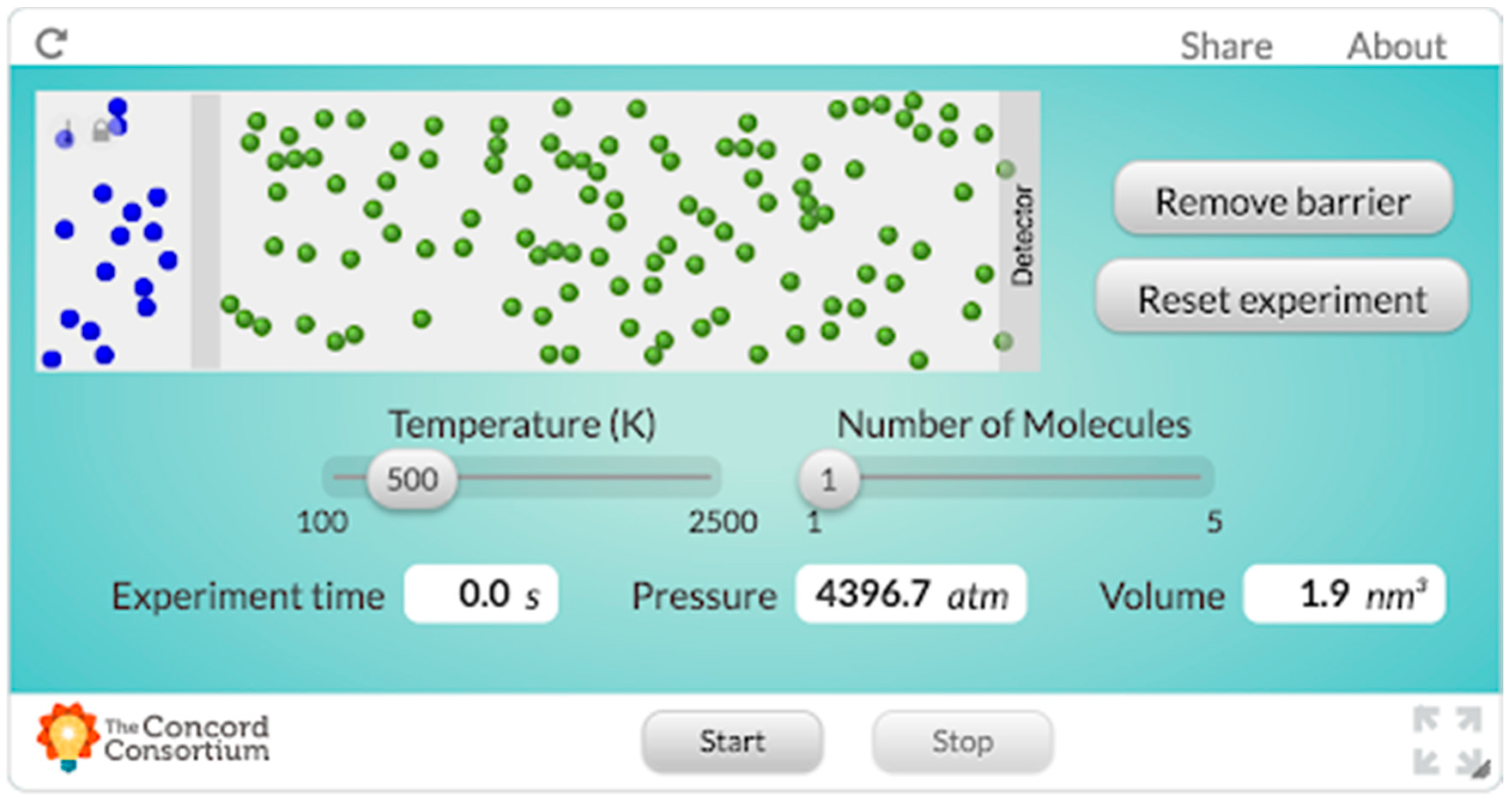

In this activity, students accessed the simulation (presented in Figure 2; see Rosenberg, 2016, [40] for a link to the simulation).

Think about when a classmate of yours peels an orange, or, in science class, when a yucky smell is introduced or encountered in an investigation. Classmates who are close to the source of this smell initially report its presence. Gradually, the smell makes its way throughout the room, and everyone has sensed it. What’s going on? How exactly does smell move through the room? Can we describe these phenomena based on our prior experiences, or do we need to conduct an experiment? Can the way that smell moves through the room be represented in a graphical depiction or even a formula? Is there some “speed of smell”?

Students responded to questions that prompted them to generate ideas about what they understood about how temperature, pressure, and volume are related and what they thought would happen in the simulation, both before and after tinkering with it.

In our model, you are free to think of all molecules as solid billiard balls that can collide with each other as they move randomly in straight lines through the room. The collisions between the billiard balls are “elastic,” so the total velocity (and mass) of all of the molecules remains the same throughout the simulation, as does the total kinetic energy of all the molecules. The green billiard balls on the right represent the air molecules throughout the room, the gray wall represents the closed perfume jar, and the blue billiard balls on the left represent tiny molecules of perfume floating in the air which (when the jar is opened, and the wall is removed) will eventually make their way toward the “gas sensor,” also known as your nose. All of the molecules have the same mass.
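The conservation claim in the billiard-ball description can be checked with a short sketch. The code below is not taken from the simulation; it is a minimal illustration, assuming two equal-mass discs in two dimensions, and the function names and the example velocities are hypothetical.

```python
import math

def collide_equal_mass(pos1, vel1, pos2, vel2):
    """Elastic collision of two equal-mass discs; returns the new velocities."""
    # Unit vector along the line joining the two centers at contact.
    nx, ny = pos2[0] - pos1[0], pos2[1] - pos1[1]
    dist = math.hypot(nx, ny)
    nx, ny = nx / dist, ny / dist
    # Component of the relative velocity along that line.
    rel = (vel1[0] - vel2[0]) * nx + (vel1[1] - vel2[1]) * ny
    # Equal masses exchange their velocity components along the contact line.
    new_vel1 = (vel1[0] - rel * nx, vel1[1] - rel * ny)
    new_vel2 = (vel2[0] + rel * nx, vel2[1] + rel * ny)
    return new_vel1, new_vel2

def total_velocity(velocities):
    """Vector sum of all velocities (proportional to momentum for equal masses)."""
    return (sum(v[0] for v in velocities), sum(v[1] for v in velocities))

def total_kinetic_energy(velocities, mass=1.0):
    """Sum of (1/2) m v^2 over all molecules, in arbitrary units."""
    return sum(0.5 * mass * (v[0] ** 2 + v[1] ** 2) for v in velocities)

# Hypothetical glancing collision between a perfume and an air molecule.
before = [(2.0, 0.0), (-1.0, 0.5)]
after = list(collide_equal_mass((0.0, 0.0), before[0], (1.0, 1.0), before[1]))

print(total_velocity(before), total_velocity(after))
print(total_kinetic_energy(before), total_kinetic_energy(after))
```

Both printed pairs should agree up to floating-point rounding, which is what "elastic" means in this model: individual molecules change speed and direction, but the totals do not.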

4.3.2. Lesson 2: Collect Data from the Simulation

This simulation is not programmed with any gas laws or thermodynamic equations. It is simply modeling the motion of gas particles as though they were rigid billiard balls colliding with one another. Different pieces of information are available to us, from the total kinetic energy, in electron volts, to the pressure, volume, and temperature. We could use this simulation to examine the relationship between temperature and pressure. We could also examine the relationship between kinetic energy and temperature. The volume here represents the volume of the container (including the volume behind the barrier); to calculate the volume, a depth of one molecule is used. This simulation could be much more complex with greater depth!
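For reference, the textbook relationship between kinetic energy and temperature that students could compare against the simulation's readouts can be written as follows. This is the standard ideal-gas (equipartition) result, not something taken from the simulation's code. The symbols $k_B$ (Boltzmann constant), $m$ (molecular mass), $d$ (number of spatial dimensions) and $v_{\mathrm{rms}}$ (root-mean-square speed) are introduced here for illustration only, and treating the one-molecule-deep container as $d = 2$ is an assumption.

```latex
\langle E_k \rangle = \tfrac{1}{2}\, m \,\langle v^2 \rangle = \tfrac{d}{2}\, k_B T
\quad\Longrightarrow\quad
v_{\mathrm{rms}} = \sqrt{\langle v^2 \rangle} = \sqrt{\frac{d\, k_B T}{m}} \;\propto\; \sqrt{T}
```

Under these assumptions, average kinetic energy grows linearly with temperature while typical molecular speed grows only with its square root, so doubling the temperature would not be expected to halve the time for perfume molecules to cross the room.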

4.3.3. Lesson 3: Generate a Model-based Explanation Using a Class Dataset

Imagine you want to “model” a relationship, such as how the number of likes of your photos on your social network is related to the number of times you post each day. One way you could do this is through a “line of best fit,” or a linear model. A line of best fit is a simple but powerful model: it is simply a straight line through a scatterplot of data. If you look at the individual data points, you may notice that some are above the line, and some are below it. Overall, the distances from the line to the points above it and the distances from the line to the points below it cancel out, so that together they sum to zero. As a result, if you have data points that are way higher than the other points, or way lower, they can affect where the line is. Something we can use to determine how well a line fits is called the coefficient of determination, or R². The closer R² is to 1 (which means the line fits perfectly through every point!), the better. The R² for the line above is 0.60.
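The ideas in this passage (a least-squares line, distances above and below the line that cancel out, and R²) can be sketched in a few lines of code. This is only an illustration: the data are made up for the example, and the function names are hypothetical rather than part of the lesson materials.

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept for a line of best fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def r_squared(xs, ys, slope, intercept):
    """Coefficient of determination: 1 - (residual variation / total variation)."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

# Made-up example data: posts per day and likes received.
posts = [1, 2, 3, 4, 5]
likes = [12, 15, 23, 22, 30]

slope, intercept = fit_line(posts, likes)
# Signed vertical distances from each point to the line (positive = above).
residuals = [y - (slope * x + intercept) for x, y in zip(posts, likes)]

print("slope:", slope, "intercept:", intercept)
print("sum of residuals:", sum(residuals))
print("R squared:", r_squared(posts, likes, slope, intercept))
```

The sum of the residuals comes out at zero (up to rounding) for any least-squares line with an intercept, which is the "cancelling out" described above; R² then summarizes how much of the spread in the data the line accounts for.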

4.4. Data Sources and Collection

4.5. Data Analysis

5. Findings

5.1. Findings for RQ1: Development of Student Responses Over the Course of the Lesson Sequence

5.1.1. Students Who Began with Sophisticated Conceptual Understanding

Because the temperature of a substance is proportional to its kinetic energy. Also, the kinetic energy of a substance is proportional to its mass and velocity. And velocity is what we are interested in if we want to know how quick a gas will reach another place. If the perfume has a lower temperature, the air will transfer its temperature with collisions, causing the perfume to go at a higher speed.

5.1.2. Students Who Showed Improvement in Sophistication

5.1.3. Students Who Showed Little Improvement in the Sophistication of Their Responses

5.2. Findings for RQ2: Strengths and Weaknesses of the Computational Science Simulation

5.3. Findings for RQ3: How Students Approached Modeling the Data to Account for its Variability

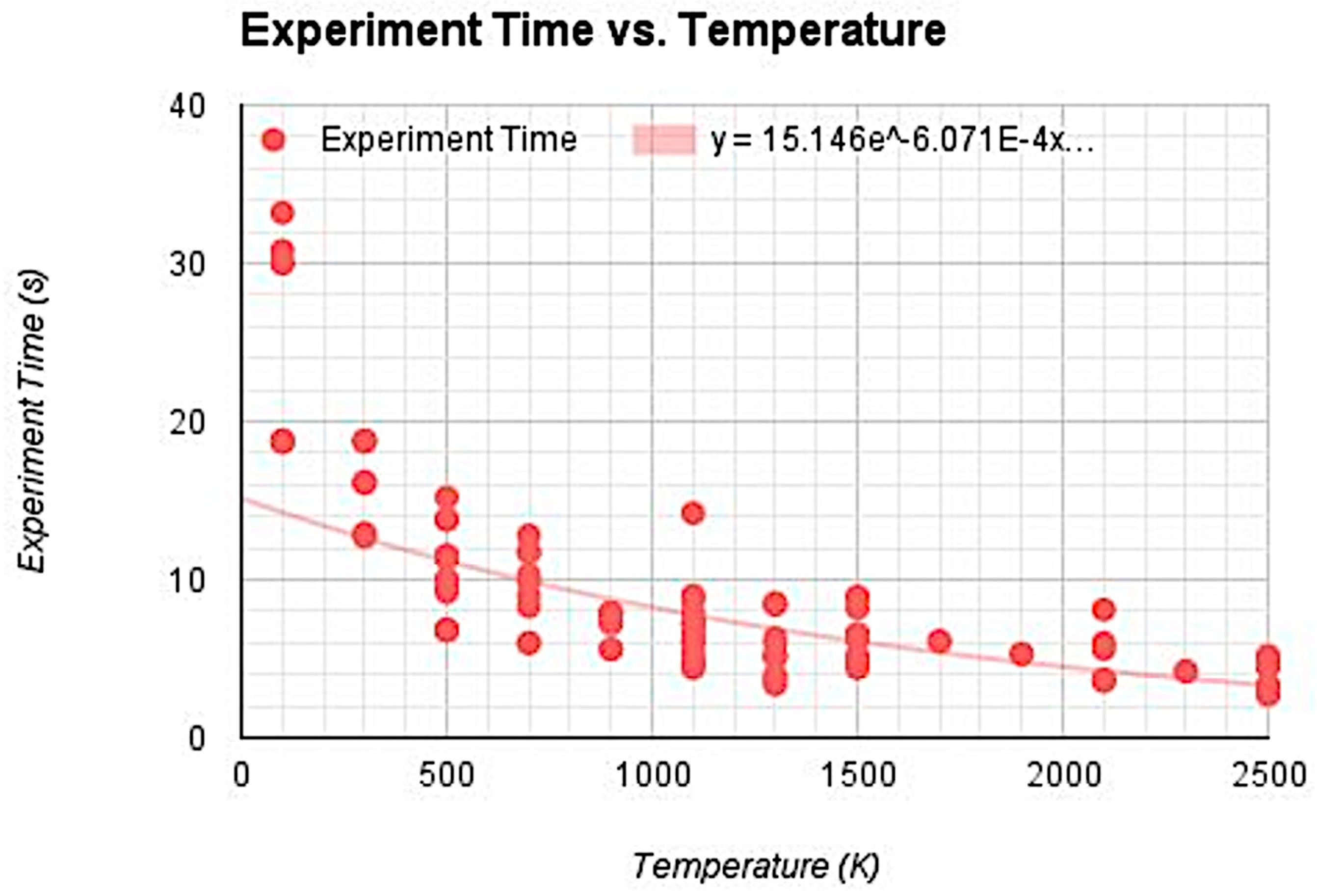

This response indicates that the student weighed modeling as much of the variability as possible (using a 4th-degree polynomial function) against the practicality of their model for making meaningful predictions about phenomena (either phenomena represented within the simulation or the wider world outside of the simulation). Student 8 demonstrated through the creation of their model (see Figure 3) a similar balance between modeling the variability in the data and choosing a model that was interpretable in light of the data.

I used this 4th power polynomial function because it had a much higher R² value than the linear, exponential, and lower degree functions. Though some of the higher degree functions had better R² values, it only increased from 0.809 to 0.815 which is pretty insignificant. Any degree function lower than 4 and the R² values began to drop significantly, close to around 0.7 and below. Having a polynomial function with a higher degree of 4 makes it really laborious to manipulate and use to predict values.
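The trade-off the student describes, where R² keeps creeping upward as the polynomial degree increases but with diminishing returns, can be reproduced with a small sketch. The data below are invented for illustration and are not the class data set; the functions `polyfit` and `r_squared` are hypothetical helpers written for this example, not the tool the students used.

```python
def polyfit(xs, ys, degree):
    """Least-squares polynomial coefficients, lowest power first."""
    size = degree + 1
    # Normal equations A c = b, with A[i][j] = sum(x^(i+j)), b[i] = sum(y * x^i).
    a = [[sum(x ** (i + j) for x in xs) for j in range(size)] for i in range(size)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(size)]
    # Gaussian elimination with partial pivoting.
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, size):
            factor = a[row][col] / a[col][col]
            for k in range(col, size):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * size
    for i in reversed(range(size)):
        known = sum(a[i][j] * coeffs[j] for j in range(i + 1, size))
        coeffs[i] = (b[i] - known) / a[i][i]
    return coeffs

def r_squared(xs, ys, coeffs):
    """Coefficient of determination for a fitted polynomial."""
    mean_y = sum(ys) / len(ys)
    predicted = [sum(c * x ** p for p, c in enumerate(coeffs)) for x in xs]
    ss_res = sum((y - yhat) ** 2 for y, yhat in zip(ys, predicted))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

# Invented data: temperature rescaled to [-1, 1], time to reach the sensor
# in arbitrary units. Rescaling keeps the normal equations well conditioned.
temps = [-1 + 0.2 * i for i in range(11)]
times = [9.1, 7.2, 7.9, 5.6, 6.1, 4.4, 4.9, 3.8, 3.1, 3.6, 2.9]

r2_by_degree = {d: r_squared(temps, times, polyfit(temps, times, d))
                for d in range(1, 6)}
for degree, r2 in r2_by_degree.items():
    print(degree, round(r2, 3))
```

Because each higher-degree polynomial contains the lower-degree ones as special cases, R² can never decrease as the degree goes up. A small gain therefore says little on its own, which is why weighing that gain against how laborious the model is to use, as the student did, is a reasonable way to choose.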

6. Discussion

6.1. Limitations

6.2. Recommendations for Future Research

6.3. Implications for Practice

7. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- NGSS Lead States. Next Generation Science Standards: For States, by States; National Academies Press: Washington, DC, USA, 2013.

- Common Core State Standards Initiative. Common Core State Standards for Mathematics; National Governors Association Center for Best Practices and the Council of Chief State School Officers: Washington, DC, USA, 2010.

- Berland, L.K.; Schwarz, C.V.; Krist, C.; Kenyon, L.; Lo, A.S.; Reiser, B. Epistemologies in practice: Making scientific practices meaningful for students. J. Res. Sci. Teach. 2016, 53, 1082–1112.

- Lehrer, R.; Schauble, L. The development of scientific thinking. Handb. Child Psychol. Dev. Sci. 2015, 2, 671–714.

- Weisberg, M. Simulation and Similarity: Using Models to Understand the World; Oxford University Press: Oxford, UK, 2012.

- Honey, M.A.; Hilton, M. (Eds.) Learning Science Through Computer Games and Simulations; The National Academies Press: Washington, DC, USA, 2011.

- National Research Council. A Framework for K-12 Science Education: Practices, Crosscutting Concepts, and Core Ideas; The National Academies Press: Washington, DC, USA, 2012.

- Schwarz, C.V.; Reiser, B.J.; Davis, E.A.; Kenyon, L.; Achér, A.; Fortus, D.; Shwartz, Y.; Hug, B.; Krajcik, J. Developing a learning progression for scientific modeling: Making scientific modeling accessible and meaningful for learners. J. Res. Sci. Teach. 2009, 46, 632–654.

- Stewart, J.; Cartier, J.L.; Passmore, C.M. Developing understanding through model-based inquiry. In How Students Learn: Science in the Classroom; Donovan, M., Bransford, J., Eds.; The National Academies Press: Washington, DC, USA, 2005; pp. 515–565.

- Clark, D.B. Longitudinal conceptual change in students’ understanding of thermal equilibrium: An examination of the process of conceptual restructuring. Cogn. Instr. 2006, 24, 467–563.

- Jacobson, M.J.; Taylor, C.; Richards, D.; Lai, P. Computational scientific inquiry with virtual worlds and agent-based models: New ways of doing science to learn science. Interact. Learn. Environ. 2015, 24, 2080–2108.

- Roschelle, J.; Kaput, J.; Stroup, W. SimCalc: Accelerating students’ engagement with the mathematics of change. In Educational Technology and Mathematics and Science for the 21st Century; Jacobson, M., Kozma, R., Eds.; Erlbaum: Mahwah, NJ, USA, 2000; pp. 470–475.

- Smetana, L.K.; Bell, R.L. Computer simulations to support science instruction and learning: A critical review of the literature. Int. J. Sci. Educ. 2012, 34, 1337–1370.

- White, B.Y. ThinkerTools: Causal models, conceptual change, and science education. Cogn. Instr. 1993, 10, 1–100.

- White, B.Y.; Frederiksen, J.R. Inquiry, modeling, and metacognition: Making science accessible to all students. Cogn. Instr. 1998, 16, 3–118.

- White, B.Y.; Schwarz, C.V. Alternative approaches to using modeling and simulation tools for teaching science. In Modeling and Simulation in Science and Mathematics Education; Feurzeig, W., Roberts, N., Eds.; Springer: New York, NY, USA, 1999; pp. 226–256.

- Schwarz, C.V.; White, B.Y. Metamodeling knowledge: Developing students’ understanding of scientific modeling. Cogn. Instr. 2005, 23, 165–205.

- Wieman, C.E.; Adams, W.K.; Perkins, K.K. PhET: Simulations that enhance learning. Science 2008, 322, 682–683.

- Brinson, J.R. Learning outcome achievement in non-traditional (virtual and remote) versus traditional (hands-on) laboratories: A review of the empirical research. Comput. Educ. 2015, 87, 218–237.

- Fan, X.; Geelan, D.R. Enhancing students’ scientific literacy in science education using interactive simulations: A critical literature review. J. Comput. Math. Sci. Teach. 2013, 32, 125–171.

- Fan, X.; Geelan, D.; Gillies, R. Evaluating a novel instructional sequence for conceptual change in physics using interactive simulations. Educ. Sci. 2018, 8, 29.

- Geelan, D.R.; Fan, X. Teachers using interactive simulations to scaffold inquiry instruction in physical science education. In Science Teachers Use of Visual Representations; Eilam, B., Gilbert, J., Eds.; Springer International Publishing: Basel, Switzerland, 2014; pp. 249–270.

- Schwarz, C.V.; Gwekwerere, Y.N. Using a guided inquiry and modeling instructional framework (EIMA) to support preservice K-8 science teaching. Sci. Educ. 2005, 91, 158–186.

- Wilensky, U.; Resnick, M. Thinking in levels: A dynamic systems approach to making sense of the world. J. Sci. Educ. Technol. 1999, 8, 3–19.

- Cholmsky, P. Why GIZMOS Work: Empirical Evidence for the Instructional Effectiveness of Explore Learning’s Interactive Content. 2003. Available online: https://www.explorelearning.com/View/downloads/WhyGizmosWork.pdf (accessed on 31 January 2019).

- Damelin, D.; Krajcik, J.; McIntyre, C.; Bielik, T. Students making systems models: An accessible approach. Sci. Scope 2017, 40, 78–82.

- Lee, V.R.; Wilkerson, M. Data Use by Middle and Secondary Students in the Digital Age: A Status Report and Future Prospects; National Academies of Sciences, Engineering, and Medicine, Board on Science Education, Committee on Science Investigations and Engineering Design for Grades 6–12: Washington, DC, USA, 2018.

- Winsberg, E. Science in the Age of Computer Simulation; University of Chicago Press: Chicago, IL, USA, 2010.

- Lehrer, R.; Schauble, L. Modeling natural variation through distribution. Am. Educ. Res. J. 2004, 41, 635–679.

- Petrosino, A.J.; Lehrer, R.; Schauble, L. Structuring error and experimental variation as distribution in the fourth grade. Math. Think. Learn. 2003, 5, 131–156.

- Xiang, L.; Passmore, C. A framework for model-based inquiry through agent-based programming. J. Sci. Educ. Technol. 2015, 24, 311–329.

- Dickes, A.C.; Sengupta, P.; Farris, A.V.; Basu, S. Development of mechanistic reasoning and multilevel explanations of ecology in third grade using agent-based models. Sci. Educ. 2016, 100, 734–776.

- Wilkerson-Jerde, M.H.; Wilensky, U.J. Patterns, probabilities, and people: Making sense of quantitative change in complex systems. J. Learn. Sci. 2015, 24, 204–251.

- Wilensky, U.; Reisman, K. Thinking like a wolf, a sheep, or a firefly: Learning biology through constructing and testing computational theories: An embodied modeling approach. Cogn. Instr. 2006, 24, 171–209.

- Potkonjak, V.; Gardner, M.; Callaghan, V.; Mattila, P.; Guetl, C.; Petrović, V.M.; Jovanovic, K. Virtual laboratories for education in science, technology, engineering: A review. Comput. Educ. 2016, 95, 309–327.

- Rowe, R.J.; Koban, L.; Davidoff, A.J.; Thompson, K.H. Efficacy of online laboratory science courses. J. Form. Des. Learn. 2017, 2, 56–67.

- Winsberg, E. Values and uncertainties in the predictions of global climate models. Kennedy Inst. Ethics J. 2012, 22, 111–137.

- Morrison, J.; Roth McDuffie, A.; French, B. Identifying key components of teaching and learning in a STEM school. Sch. Sci. Math. 2015, 115, 244–255.

- Wilensky, U.; Jacobson, M.J. Complex systems and the learning sciences. In The Learning Sciences; Sawyer, R.K., Ed.; Cambridge University Press: Cambridge, UK, 2014; pp. 319–338.

- Rosenberg, J.M. Diffusion & Temperature—AP Physics 2 (MVS) [Lab Interactive Simulation]. Available online: http://lab.concord.org/interactives.html#interactives/external-projects/msu/temperature-diffusion.json (accessed on 31 January 2019).

- Shankar, R. Fundamentals of Physics: Mechanics, Relativity, and Thermodynamics; Yale University Press: New Haven, CT, USA, 2014.

- Shwartz, Y.; Weizman, A.; Fortus, D.; Krajcik, J.; Reiser, B. The IQWST experience: Coherence as a design principle. Elem. Sch. J. 2008, 109, 199–219.

- Hatch, J.A. Doing Qualitative Research in Educational Settings; State University of New York Press: Albany, NY, USA, 2002.

- Saldaña, J. The Coding Manual for Qualitative Researchers, 3rd ed.; Sage: London, UK, 2015.

- Godfrey-Smith, P. Darwinian Populations and Natural Selection; Oxford University Press: New York, NY, USA, 2011.

- Russ, R.S.; Scherr, R.E.; Hammer, D.; Mikeska, J. Recognizing mechanistic reasoning in student scientific inquiry: A framework for discourse analysis developed from philosophy of science. Sci. Stud. Sci. Educ. 2008, 92, 499–525.

- Schwarz, C.V.; Ke, L.; Lee, M.; Rosenberg, J.M. Developing mechanistic explanations of phenomena: Case studies of two fifth grade students’ epistemologies in practice over time. In Learning and Becoming in Practice: The International Conference of the Learning Sciences (ICLS) 2014; Polman, J.L., Kyza, E.A., O’Neill, K., Tabak, I., Penuel, W.R., Jurow, A.S., D’Amico, L., Eds.; ISLS: Boulder, CO, USA, 2014; Volume 1, pp. 182–189.

- Parong, J.; Mayer, R.E. Learning science in immersive virtual reality. J. Educ. Psychol. 2018, 110, 785–797.

- Lamb, R.; Antonenko, P.; Etopio, E.; Seccia, A. Comparison of virtual reality and hands on activities in science education via functional near infrared spectroscopy. Comput. Educ. 2018, 124, 14–26.

| Research Questions and Sub Questions | Embedded Assessment Questions (Lesson Collected) |
|---|---|
| RQ1: How do students’ explanations change over the course of three lessons that involve using a simulation and modeling its output? | When the temperature of the room is increased, the perfume particles reach the gas sensor more quickly. Explain why you think this happens. (Lesson 1) |
| | Based on your observations of the simulation, explain why the perfume particles reach the gas sensor more quickly when the temperature of the room is increased. (Lesson 1) |
| | Earlier, we asked you why when the temperature of the room is increased the perfume particles reach the gas sensor more quickly. Please explain why this happens now, knowing what you know from working through data from the simulation. (Lesson 3) |
| RQ2: What do students perceive the strengths and weaknesses of using the simulation to be? | What are some of the benefits of this model of a gas? And what are some of its weaknesses? (Lesson 1) |
| | How does your model of data help you explain what is going on? Why? (Lesson 3) |
| RQ3: How do students approach modeling the classroom data set to account for its variability? | Explain why you selected this mathematical model as the best fit to the data. (Lesson 3) |

| Time Collected | Group 1 (Student 2) | Group 2 (Student 7) | Group 3 (Student 8) |
|---|---|---|---|
| Lesson 1: Before exploring the simulation | This happens because a higher temperature means that the gas particles vibrate more quickly (have more kinetic energy) so they move faster and can get across the room in less time. | Temperature is directly related to energy: as temperature increases, the energy of the system increases. This results in an increase in kinetic energy, and since mass is constant, the velocity will increase throughout the whole system, reducing the time it takes to travel across a fixed distance. | I think that when the temperature rises, the molecules have more energy and move faster, hitting each other more often. |
| Lesson 1: After exploring the simulation | This seems to be the case because the molecules do move less when they are cold, so it takes longer for the smell to work its way across the chamber. When they are hot the move much more quickly and are able to bounce around from one side of the room to the other quite easily. | Higher temperature → more energy → more collisions in a given amount of time → gets across the fixed distance faster | When the temperature is increased there is more energy in the particles, so they move faster and hit each other and other surfaces more often, so the gas reaches the sensor sooner. |
| Lesson 3: After completing the lesson sequence | This happens because the particles are travelling at a greater velocity. Since velocity is distance over time, and they have to cover the same distance, the time must decrease to factor in this increased velocity. Though there are particles in the way of the perfume and the sensor, they are physically able to travel faster, so assuming the bounces that they go through are random in each scenario, this should slow each temperature particles equally, and so it comes down to the velocity of the particles. | The model shows us that as temperature increases the time for the particles to reach the sensor decreases. Not only does this model show us this visually, but it also gives us an equation to predict the time it would take a particle to reach the sensor given a temperature. We know that this equation fits our data and model due to the r squared value. The R squared value is a sort of measurement of the accuracy of our equation on a scale from 0-1. The closer the r squared is to one, the better our equation. | I now know through the simulation that the particles move faster and bump around more often the higher the temperature. The more they bump around, the faster particles travel and take up more space. |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Rosenberg, J.M.; Lawson, M.A. An Investigation of Students’ Use of a Computational Science Simulation in an Online High School Physics Class. Educ. Sci. 2019, 9, 49. https://doi.org/10.3390/educsci9010049
