Using the Learner-Generated Digital Media (LGDM) Framework in Tertiary Science Education: A Pilot Study

Abstract

1. Introduction

2. Literature Review

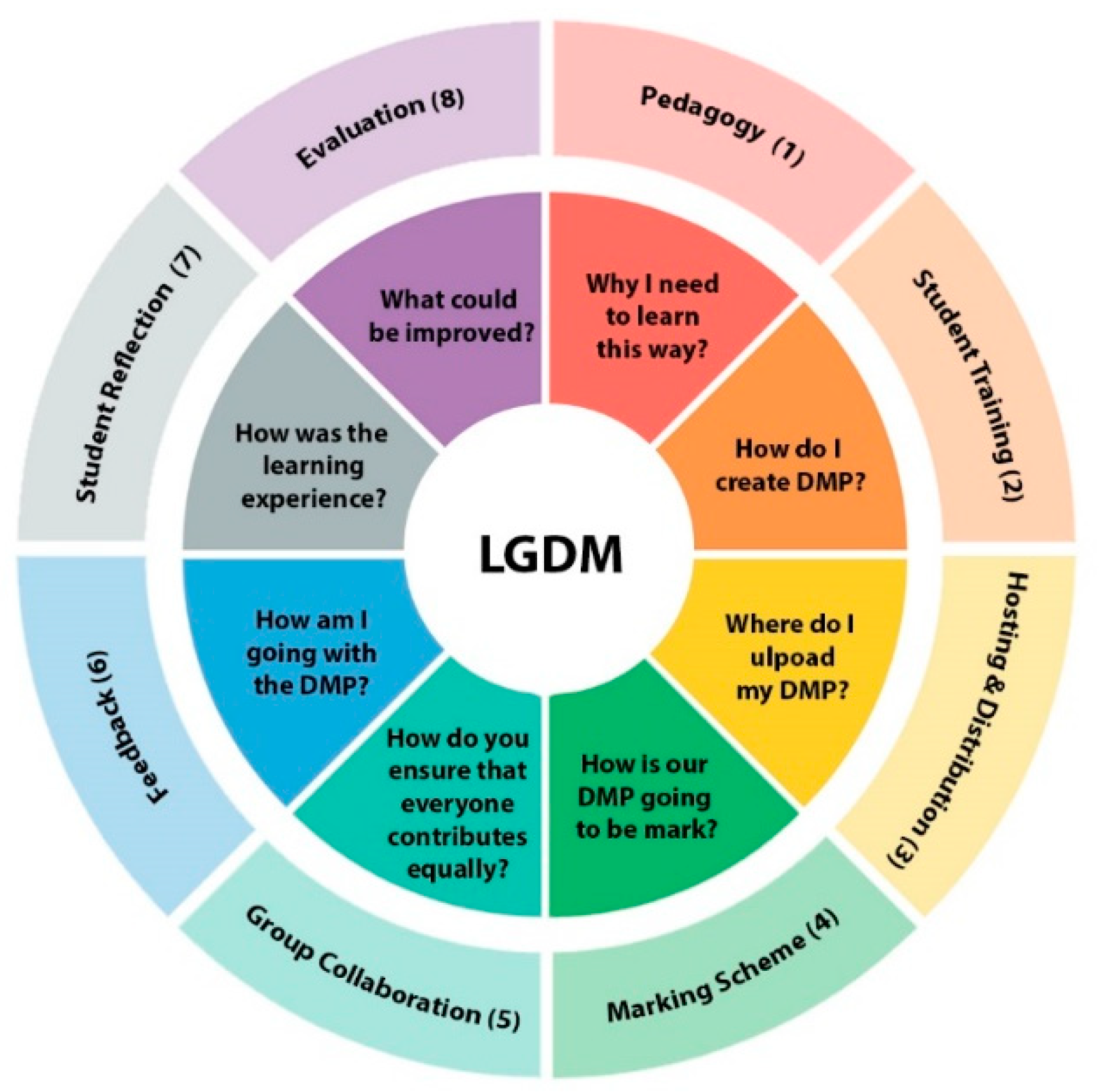

3. The LGDM Implementation Framework

3.1. Pedagogy

3.2. Student Training

3.3. Hosting and Distribution

3.4. Marking Schemes

3.5. Group Contribution

3.6. Feedback

3.7. Student Reflection

3.8. Evaluation

4. Materials and Methods

4.1. Selection of Subjects

4.2. LGDM Assessment Design

4.3. Data Gathering and Questionnaire Design

5. Results

5.1. Demographics

5.2. Questionnaire Validation Using Factor Analysis

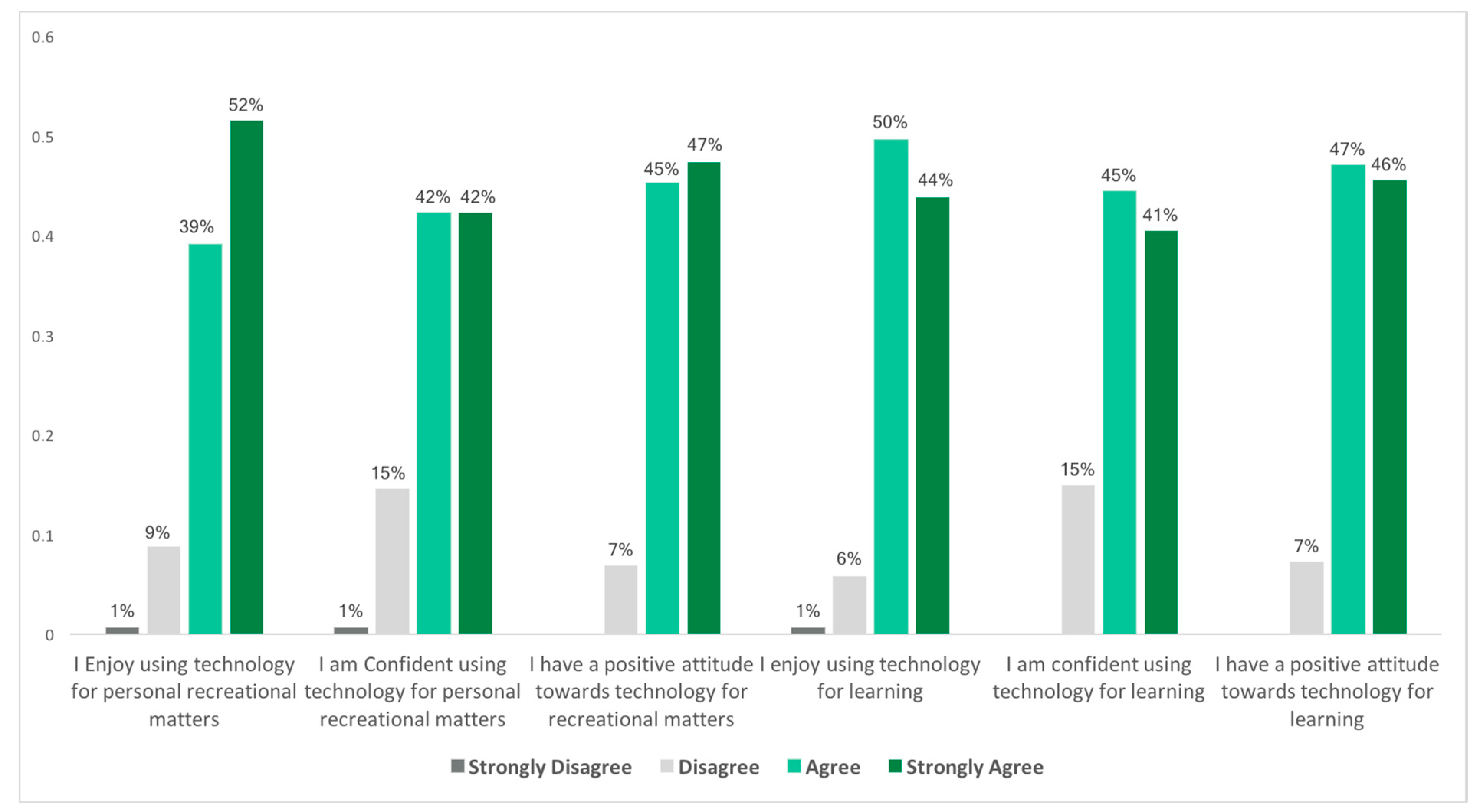

5.3. Attitude toward Technology

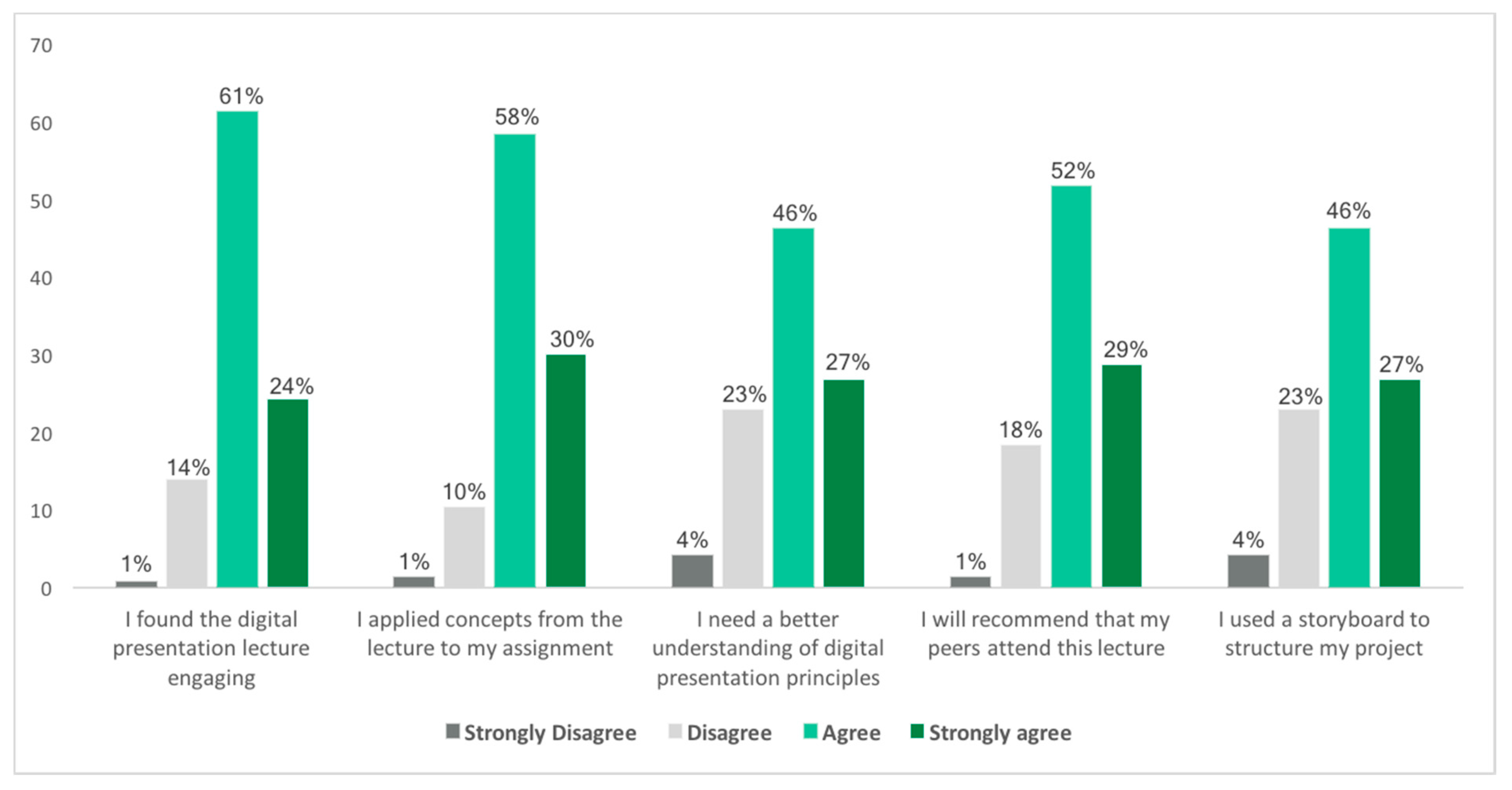

5.4. Digital Media Support

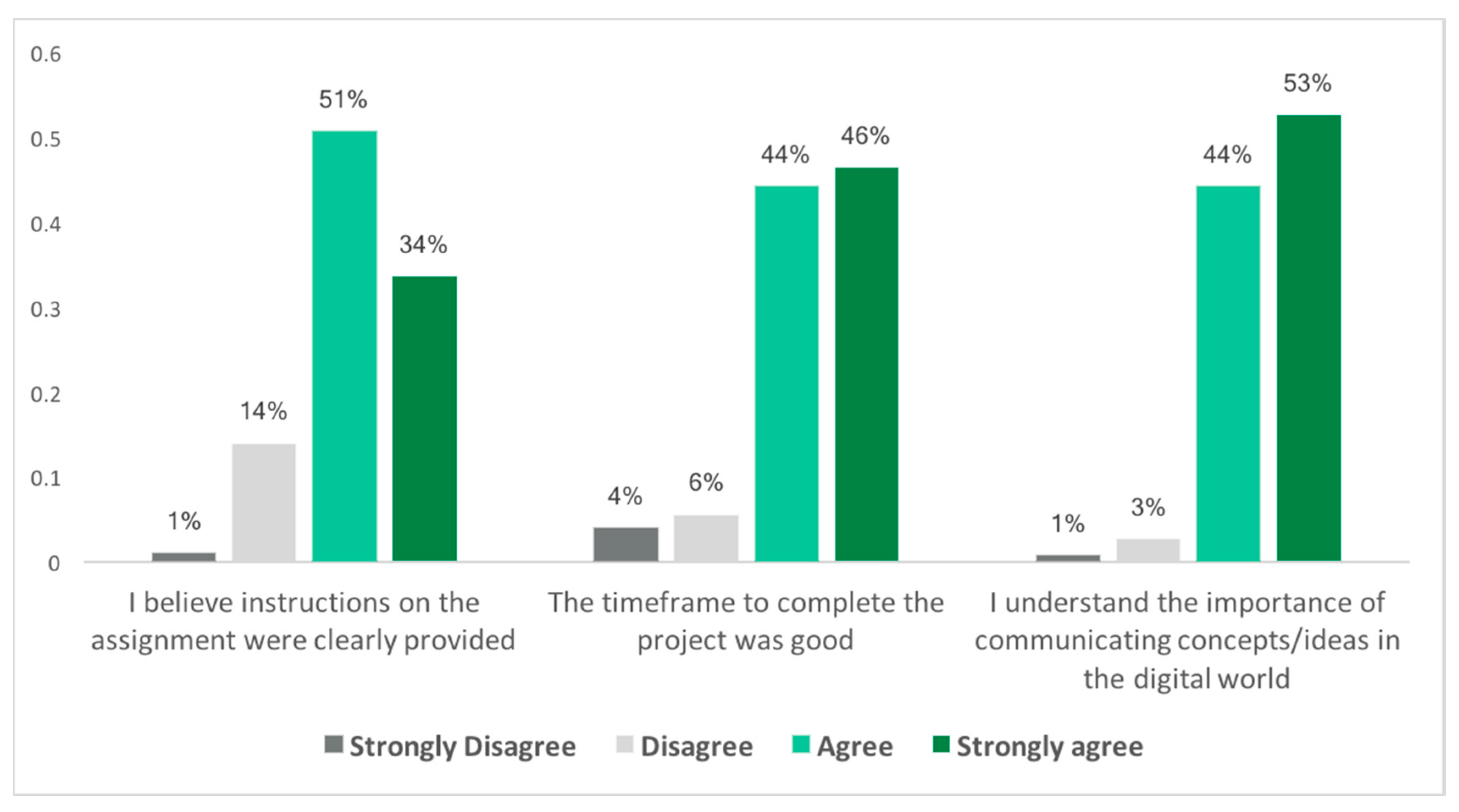

5.5. Understanding the Assignment

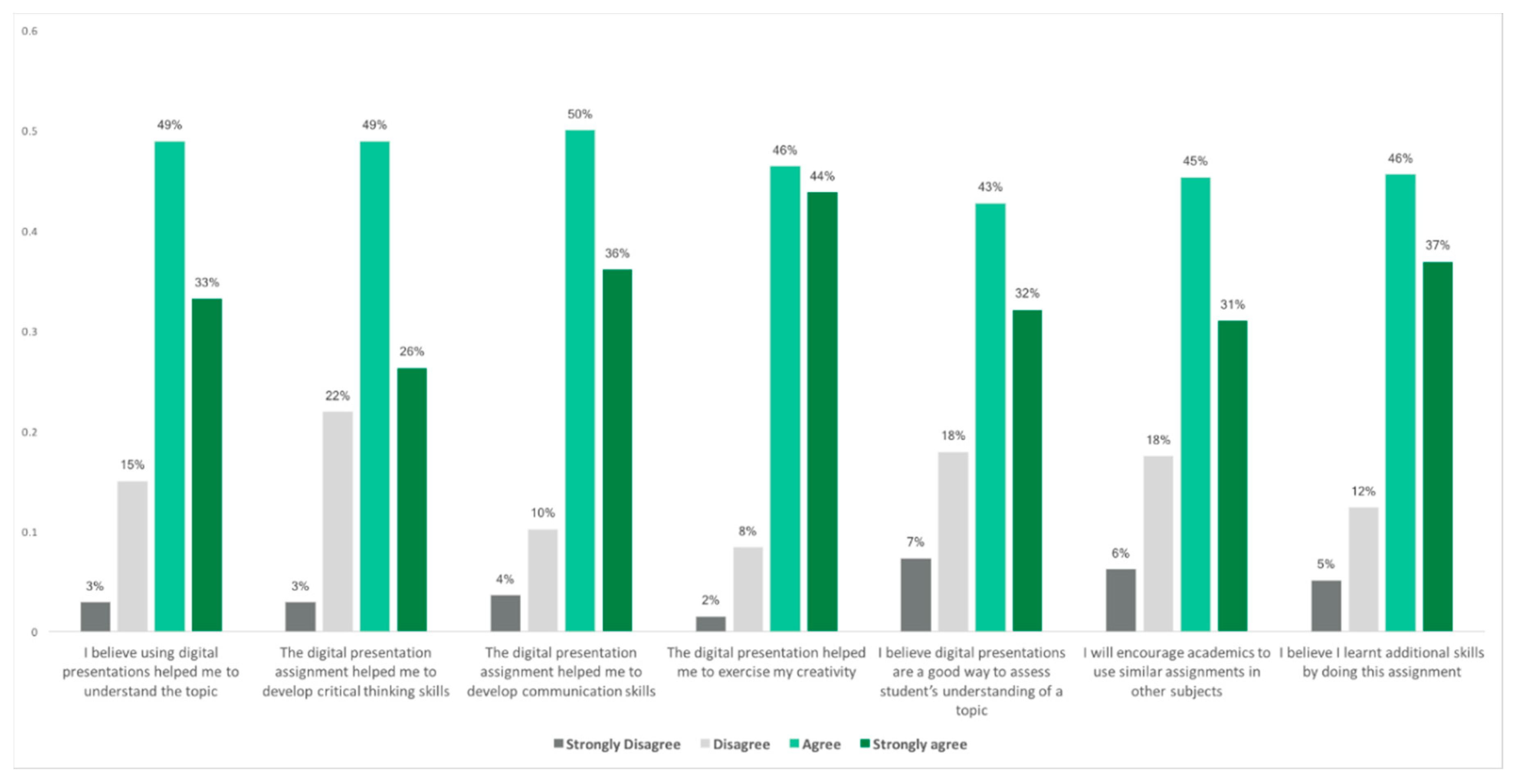

5.6. Knowledge Construction

5.7. Open-Ended Questions

5.8. Group Contribution Data

5.9. Grades Attained

6. Discussion

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Subject | Yr | N | Sample Size (%) | Digital Media Type | Assessment Weight | Mode of Instruction |
|---|---|---|---|---|---|---|
| Pharmacology 2 | 2 | 169 | 98 (58%) | Digital story, video, blended media | 30% | B |
| Geological Processes | 2 | 101 | 73 (72%) | Digital story, video, blended media | 10% | B |
| Animal Behaviour and Physiology | 2 | 106 | 34 (31%) | Digital story, video, blended media | 10% | B |
| Evaluating TCM: Theory, Practice and Research | 3 | 43 | 35 (81%) | Digital story, video, blended media | 20% | B |
| Introductory Pharmacology and Microbiology | 3 | 39 | 34 (87%) | Brochure, poster design | 25% | O |
| TOTAL | | 458 | 274 (60%) | | | |
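
The overall response rate in the TOTAL row can be checked from the per-subject figures. The following is a minimal sketch, not the authors' analysis code; the dictionary name `cohorts` and the function `overall_response_rate` are hypothetical names introduced for illustration, and the counts are copied from the N and Sample Size columns above.

```python
from typing import Dict, Tuple

# (enrolled N, survey respondents) per subject, copied from the table above.
# The variable and function names are illustrative assumptions.
cohorts: Dict[str, Tuple[int, int]] = {
    "Pharmacology 2": (169, 98),
    "Geological Processes": (101, 73),
    "Animal Behaviour and Physiology": (106, 34),
    "Evaluating TCM: Theory, Practice and Research": (43, 35),
    "Introductory Pharmacology and Microbiology": (39, 34),
}


def overall_response_rate(data: Dict[str, Tuple[int, int]]) -> float:
    """Return the pooled response rate (in percent) across all subjects."""
    enrolled = sum(n for n, _ in data.values())
    respondents = sum(r for _, r in data.values())
    if enrolled == 0:
        raise ValueError("no enrolled students")
    return 100.0 * respondents / enrolled


if __name__ == "__main__":
    print(f"Overall response rate: {overall_response_rate(cohorts):.0f}%")
```

Pooling the counts before dividing gives the rate for the whole cohort, which is not the same as averaging the five per-subject percentages.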

| Category | Item |
|---|---|
| Demographics | 1. Gender 2. Age 3. Education 4. English as an additional language (EAL) |
| Digital media support | 5. I found the digital presentation lecture engaging. 6. I applied concepts from the lecture to my assignment. 7. I need a better understanding of digital presentation principles. 8. I will recommend that my peers attend this lecture. 9. I used a storyboard to structure my project. 10. Overall, the technical support to complete my project was good. |
| Attitude towards technology | 11. I enjoy using technology for personal/recreational matters. 12. I am confident using technology for personal/recreational matters. 13. I have a positive attitude towards technology for recreational matters. 14. I enjoy using technology for learning. 15. I am confident using technology for learning. 16. I have a positive attitude towards technology for learning. |
| Understanding of the assignment | 17. I believe instructions on the assignment were clearly provided. 18. The timeframe to complete the project was good. 19. I understand the importance of communicating concepts/ideas in the digital world. 20. Overall, I was happy about the digital media presentation assignment. |
| Knowledge construction | 21. I believe using digital presentations helped me to understand the topic. 22. The digital presentation assignment helped me to develop critical thinking skills. 23. The digital presentation assignment helped me to develop communication skills. 24. The digital presentation helped me to work as a part of a team. 25. The digital presentation helped me to exercise my creativity. 26. I believe digital presentations are a good way to assess students’ understanding of a topic. 27. I will encourage academics to use similar assignments in other subjects. 28. I believe I learnt additional skills by doing this assignment. |
| Open-ended questions | 29. Did you experience any issue with the assignment? 30. What did you like most about the assignment? 31. What did you like least about the assignment? 32. Do you have any feedback on how to improve this assignment? 33. Is there anything that you would like to say that has not been covered in the previous questions? If so, please feel free to provide additional feedback in the space below: |

| Characteristic | N | % |
|---|---|---|
| Gender | | |
| Male | 102 | 37.2 |
| Female | 172 | 62.8 |
| Age bracket | | |
| 18–29 | 239 | 87.2 |
| 30–49 | 31 | 11.3 |
| 50–64 | 4 | 1.5 |
| Level of education | | |
| High school graduate | 179 | 65.3 |
| Some college | 15 | 5.5 |
| College graduate | 5 | 1.8 |
| University degree | 66 | 24.1 |
| Trade/technical/vocational training | 9 | 3.3 |
| English as an Additional Language (EAL) | | |
| Yes | 55 | 20.1 |
| No | 219 | 79.9 |

| Item / Factor | Digital Media Support | Attitude Toward Technology | Understanding the Assignment | Knowledge Construction |
|---|---|---|---|---|
| 5 | 0.724 | | | |
| 6 | 0.714 | | | |
| 9 | 0.687 | | | |
| 8 | 0.607 | | | |
| 7 | 0.561 | | | |
| 13 | | 0.874 | | |
| 12 | | 0.855 | | |
| 15 | | 0.851 | | |
| 14 | | 0.823 | | |
| 16 | | 0.823 | | |
| 11 | | 0.809 | | |
| 18 | | | 0.742 | |
| 17 | | | 0.682 | |
| 19 | | | 0.561 | |
| 26 | | | | 0.833 |
| 27 | | | | 0.817 |
| 28 | | | | 0.784 |
| 22 | | | | 0.762 |
| 21 | | | | 0.749 |
| 23 | | | | 0.744 |
| 25 | | | | 0.681 |

| Question | SD | D | A | SA |
|---|---|---|---|---|
| I enjoy using technology for personal/recreational matters. | 2 (0.7%) | 24 (8.8%) | 107 (39.1%) | 141 (51.5%) |
| I am confident using technology for personal/recreational matters. | 2 (0.7%) | 40 (14.6%) | 116 (42.3%) | 116 (42.3%) |
| I have a positive attitude towards technology for recreational matters. | 1 (0.4%) | 19 (6.9%) | 124 (45.3%) | 130 (47.4%) |
| I enjoy using technology for learning. | 2 (0.7%) | 16 (5.8%) | 136 (49.6%) | 120 (43.8%) |
| I am confident using technology for learning. | - | 41 (15%) | 122 (44.5%) | 111 (40.5%) |
| I have a positive attitude towards technology for learning. | - | 20 (7.3%) | 129 (47.1%) | 125 (45.6%) |
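
Frequency tables of this kind are often summarised as a single agreement figure per item (A plus SA as a share of all responses). The sketch below illustrates that arithmetic using the counts reported for the first item; it is an assumption about how a reader might condense the table, not a procedure described in the source, and the function name `agreement_rate` is hypothetical.

```python
from typing import Dict


def agreement_rate(counts: Dict[str, int]) -> float:
    """Percentage of responses that are Agree (A) or Strongly Agree (SA).

    `counts` maps the scale labels SD, D, A, SA to response counts.
    Missing labels (shown as "-" in the table) are treated as zero.
    """
    total = sum(counts.values())
    if total == 0:
        raise ValueError("item has no responses")
    agree = counts.get("A", 0) + counts.get("SA", 0)
    return 100.0 * agree / total


# Counts for "I enjoy using technology for personal/recreational matters."
item_11 = {"SD": 2, "D": 24, "A": 107, "SA": 141}

if __name__ == "__main__":
    print(f"Agreement: {agreement_rate(item_11):.1f}%")
```

Computing the figure from raw counts avoids the small drift that comes from adding two already-rounded percentages.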

| Question | SD | D | A | SA |
|---|---|---|---|---|
| I found the digital presentation lecture engaging. | 2 (0.7%) | 33 (13.8%) | 147 (61.3%) | 58 (24.2%) |
| I applied concepts from the lecture to my assignment. | 3 (1.3%) | 25 (10.4%) | 140 (58.3%) | 72 (30.0%) |
| I used a storyboard to structure my project. | 10 (4.1%) | 55 (22.9%) | 111 (46.3%) | 64 (26.7%) |
| I will recommend that my peers attend this lecture. | 3 (1.3%) | 44 (18.3%) | 124 (51.7%) | 69 (28.7%) |
| I need a better understanding of digital presentation principles. | 10 (4.1%) | 55 (22.9%) | 111 (46.3%) | 64 (26.7%) |

| Question | SD | D | A | SA |
|---|---|---|---|---|
| I believe instructions on the assignment were clearly provided. | 5 (1.8%) | 38 (13.9%) | 139 (50.7%) | 92 (33.6%) |
| The timeframe to complete the project was good. | 11 (4%) | 15 (5.5%) | 121 (44.2%) | 127 (46.4%) |
| I understand the importance of communicating concepts/ideas in the digital world. | 2 (0.7%) | 7 (2.6%) | 121 (44.2%) | 144 (52.6%) |

| Question | SD | D | A | SA |
|---|---|---|---|---|
| I believe using digital presentations helped me to understand the topic. | 8 (2.9%) | 41 (15%) | 134 (48.9%) | 91 (33.2%) |
| The digital presentation assignment helped me to develop critical thinking skills. | 8 (2.9%) | 60 (21.9%) | 134 (48.9%) | 72 (26.3%) |
| The digital presentation assignment helped me to develop communication skills. | 10 (3.6%) | 28 (10.2%) | 137 (50%) | 99 (36.1%) |
| The digital presentation helped me to exercise my creativity. | 4 (1.5%) | 23 (8.4%) | 127 (46.4%) | 120 (43.8%) |
| I believe digital presentations are a good way to assess students’ understanding of a topic. | 20 (7.3%) | 49 (17.9%) | 117 (42.7%) | 88 (32.1%) |
| I will encourage academics to use similar assignments in other subjects. | 17 (6.2%) | 48 (17.5%) | 124 (45.3%) | 85 (31%) |
| I believe I learnt additional skills by doing this assignment. | 14 (5.1%) | 34 (12.4%) | 125 (45.6%) | 101 (36.9%) |

| Theme | Frequency | % |
|---|---|---|
| No issues | 110 | 68 |
| Inadequate skills in digital media creation | 20 | 12 |
| Not understanding the assignment | 11 | 7 |
| Short time to complete the assignment | 10 | 6 |
| Other | 12 | 7 |

| Theme | Frequency | % |
|---|---|---|
| Creativity | 47 | 28 |
| Teamwork | 38 | 23 |
| Learning digital media | 35 | 21 |
| Learning subject content | 16 | 10 |
| Different to other assignments | 16 | 10 |
| Self-expression | 10 | 6 |
| Other | 7 | 2 |

| Theme | Frequency | % |
|---|---|---|
| Nothing | 46 | 32 |
| Group issues | 32 | 22 |
| Inadequate digital media skills | 20 | 14 |
| Understanding the assignment | 14 | 10 |
| Time-consuming | 13 | 9 |
| Not enough time to produce the assignment | 5 | 4 |
| Other | 13 | 9 |

| Theme | Frequency | % |
|---|---|---|
| No feedback | 48 | 37 |
| Additional software training | 31 | 24 |
| More assignment instructions | 12 | 9 |
| Small group size | 6 | 5 |
| Start task earlier in the semester | 7 | 5 |
| Equipment available to students | 5 | 4 |
| Ability to choose group members | 5 | 4 |
| More topics to choose from | 5 | 4 |
| Other | 10 | 8 |

| Theme | Frequency | % |
|---|---|---|
| Positive comments about assignment | 30 | 55 |
| No comment | 18 | 32 |
| Other | 7 | 13 |

| Subject | N | Min | Max | Mean | SD | Variance |
|---|---|---|---|---|---|---|
| Geological Processes | 96 | 0.19 | 1.18 | 0.99 | 0.14 | 0.019 |
| Introductory Pharmacology & Microbiology | 40 | 0.60 | 1.12 | 0.99 | 0.11 | 0.012 |
| Pharmacology 2 | 167 | 0.29 | 1.15 | 0.99 | 0.09 | 0.007 |
| Total | 303 | | | | | |

| Contribution Level (%) | Geological Processes | Introductory Pharmacology & Microbiology | Pharmacology 2 |
|---|---|---|---|
| Optimum (RPF > 1.0) | 60.0 | 62.5 | 49.1 |
| Acceptable (RPF = 0.8–1.0) | 33.7 | 30.0 | 47.9 |
| Poor (RPF < 0.8) | 6.3 | 7.5 | 3.0 |
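
The three contribution bands above amount to a simple threshold rule on each student's RPF value. A minimal sketch of that rule follows; the function name `classify_rpf` is hypothetical, and assigning the boundary values 0.8 and 1.0 to the Acceptable band is an assumption read off the table labels rather than something the source states explicitly.

```python
def classify_rpf(rpf: float) -> str:
    """Map an RPF value to the contribution band used in the table above.

    Assumption: the boundaries 0.8 and 1.0 both fall in "Acceptable",
    following the labels "RPF > 1.0", "RPF = 0.8-1.0" and "RPF < 0.8".
    """
    if rpf > 1.0:
        return "Optimum"
    if rpf >= 0.8:
        return "Acceptable"
    return "Poor"


if __name__ == "__main__":
    # Illustrative values: the reported maximum, mean and minimum
    # for Geological Processes.
    for value in (1.18, 0.99, 0.19):
        print(value, classify_rpf(value))
```

Applying the function to every student's RPF and tallying the labels per subject would reproduce the percentage breakdown, provided the same boundary convention was used.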

| Subject | N | Min | Max | Mean | SD |
|---|---|---|---|---|---|
| Pharmacology 2 | 169 | 33 | 96 | 79 | 9.25 |
| Geological Processes | 101 | 67 | 100 | 95 | 7.51 |
| Animal Behaviour and Physiology | 106 | 53 | 100 | 77 | 14.45 |
| Evaluating TCM: Theory, Practice & Research | 43 | 70 | 95 | 84 | 7.83 |
| Introductory Pharmacology and Microbiology | 39 | 61 | 97 | 82 | 12.48 |
| Total | 458 | | | | |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Reyna, J.; Meier, P. Using the Learner-Generated Digital Media (LGDM) Framework in Tertiary Science Education: A Pilot Study. Educ. Sci. 2018, 8, 106. https://doi.org/10.3390/educsci8030106
