The Moderating Effect of Gender Equality and Other Factors on PISA and Education Policy

Abstract

1. Introduction

1.1. Education Policy in the Global Context

1.2. The OECD and PISA

1.3. Critiques of the Power of PISA

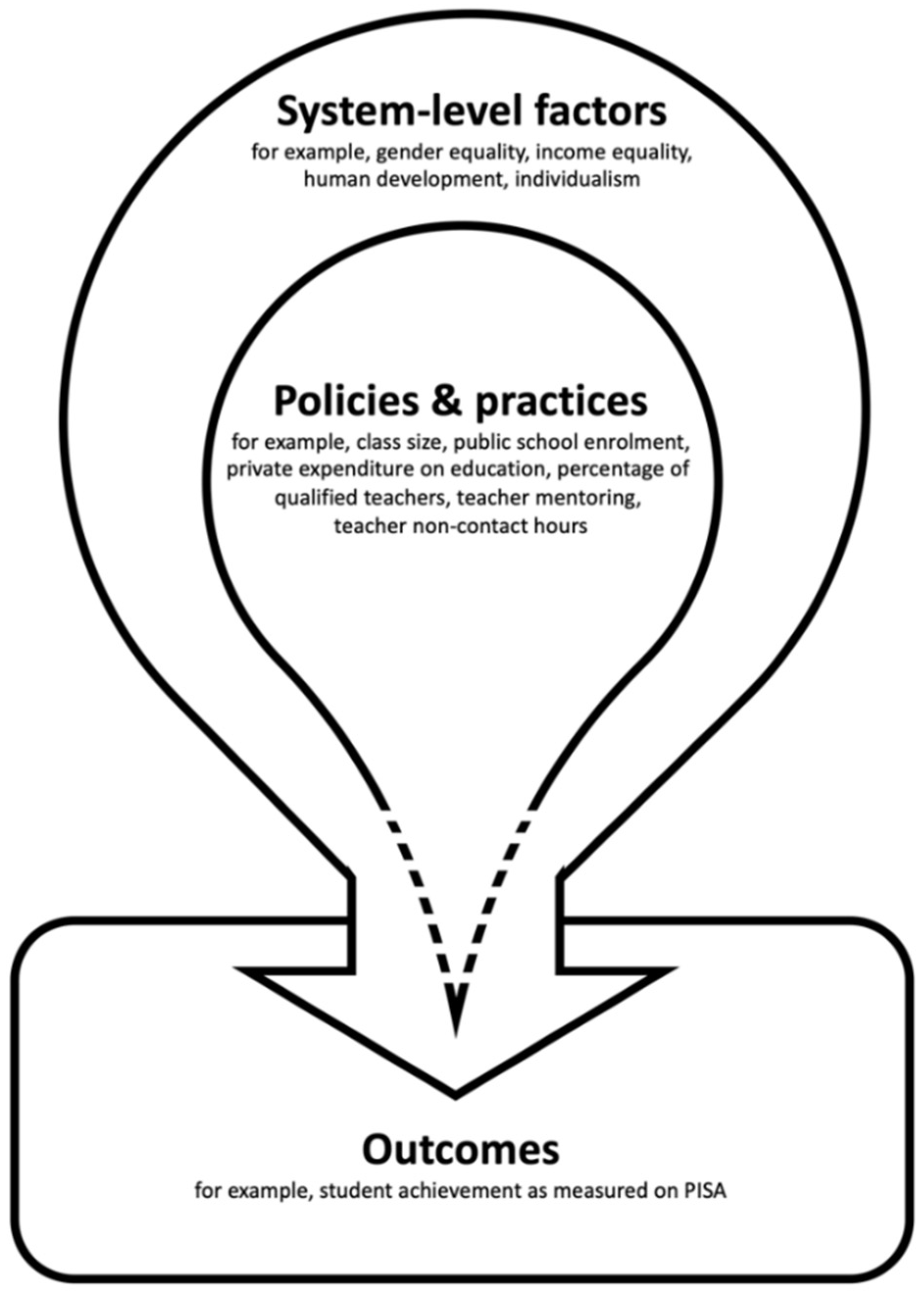

1.4. The Moderating Effect of System-Specific Factors

- Which system-specific factors are associated with PISA 2015 results?

- Do these system-specific factors moderate the relationship between education conditions and student outcomes, and if so, how?

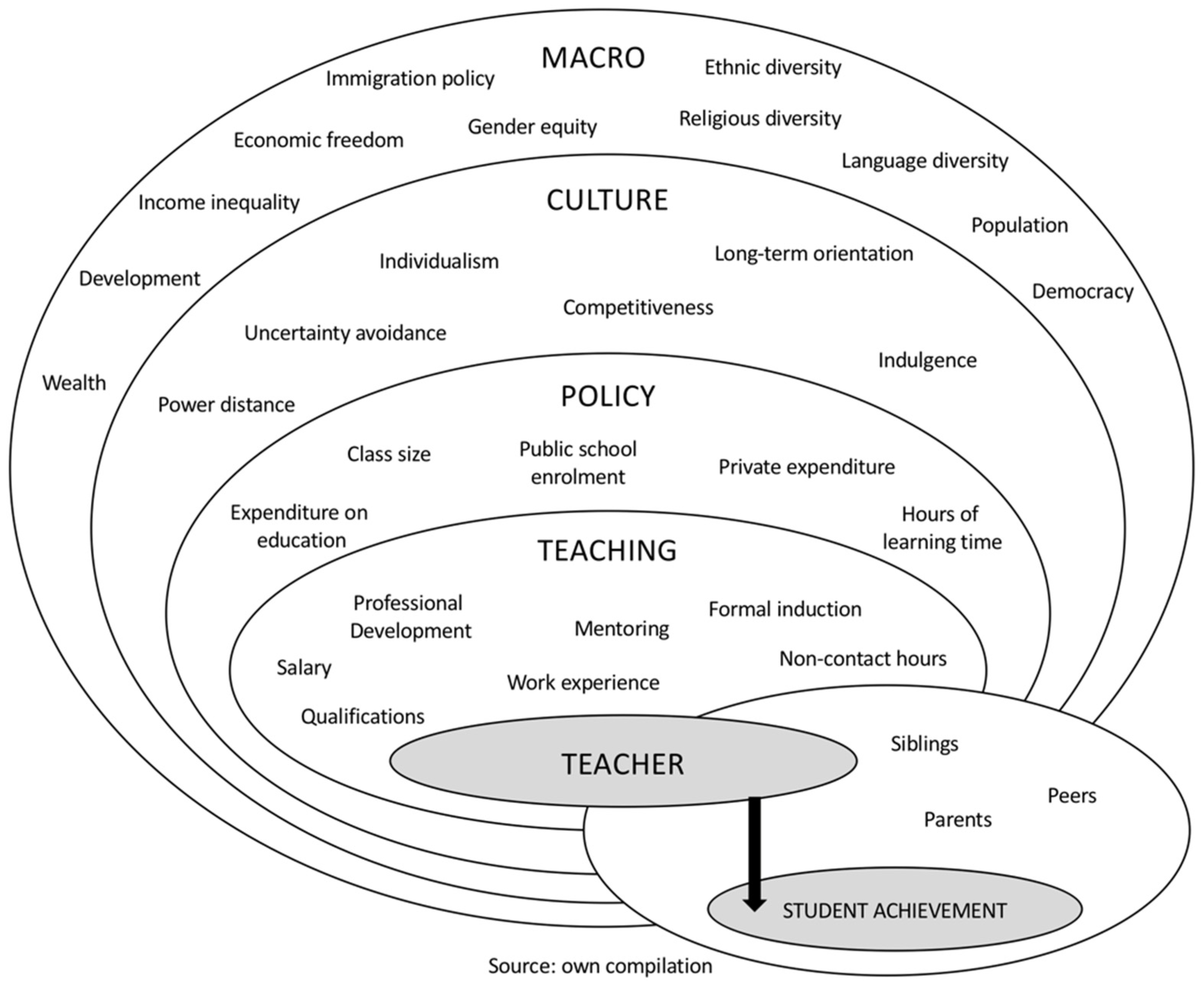

2. Theoretical Framework and Selection of System-Specific Factors

2.1. Socio-Economic and Cultural Factors

2.1.1. Human Development

2.1.2. Income Inequality

2.1.3. Gender Equality

2.1.4. Individualism

2.2. Education Policy Variables

3. Methods and Materials

3.1. Methods

3.1.1. Qualitative Comparative Analysis

3.1.2. Correlational Analyses

3.2. Data

3.2.1. Cases

3.2.2. Student Achievement

3.2.3. System-Specific Factors and Education Policy Variables

4. Results

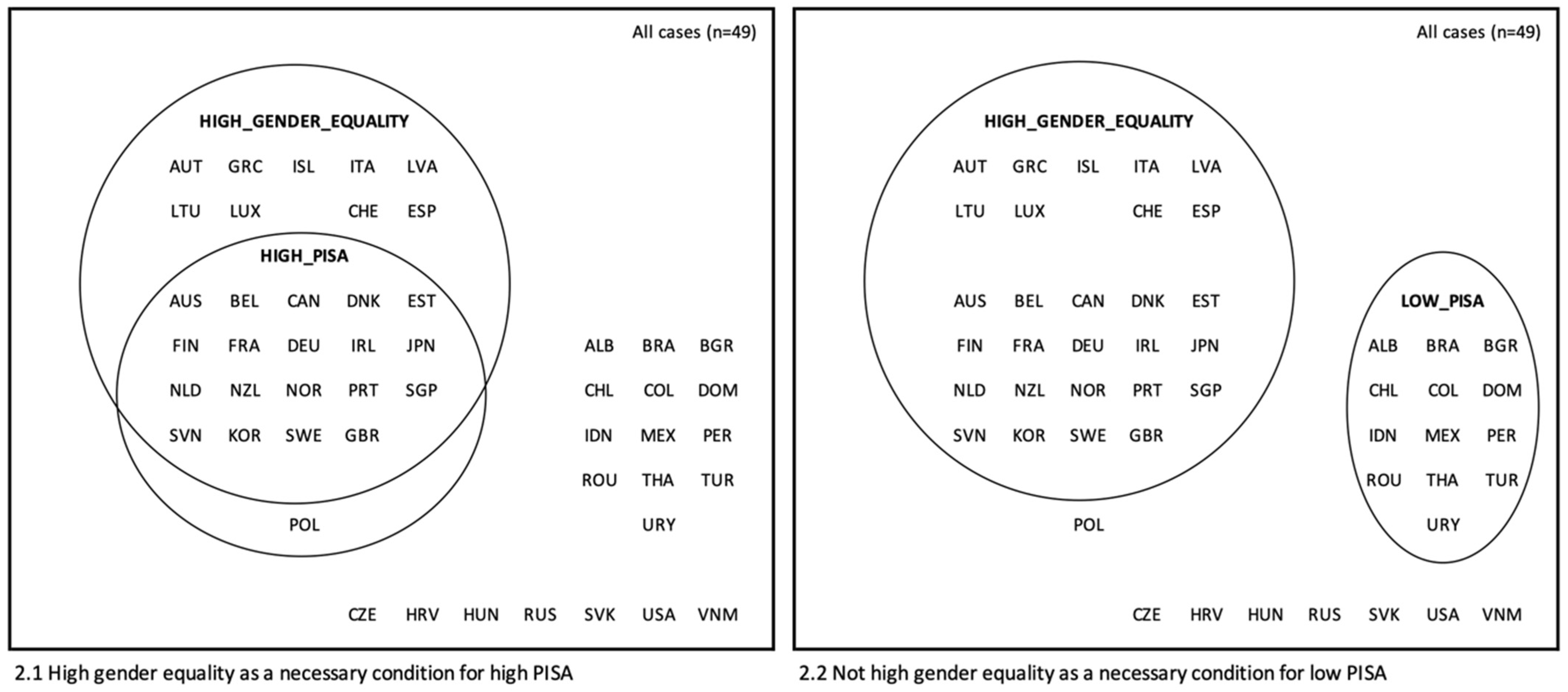

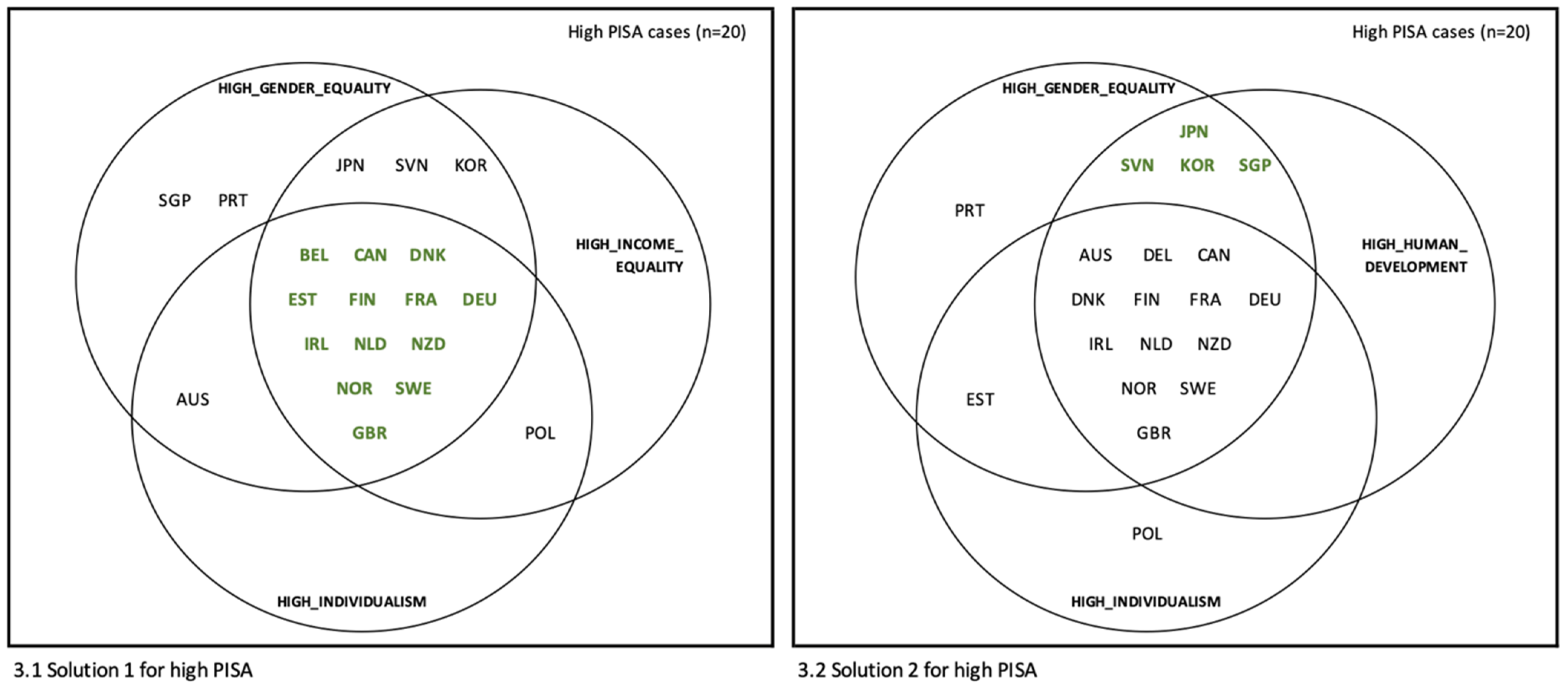

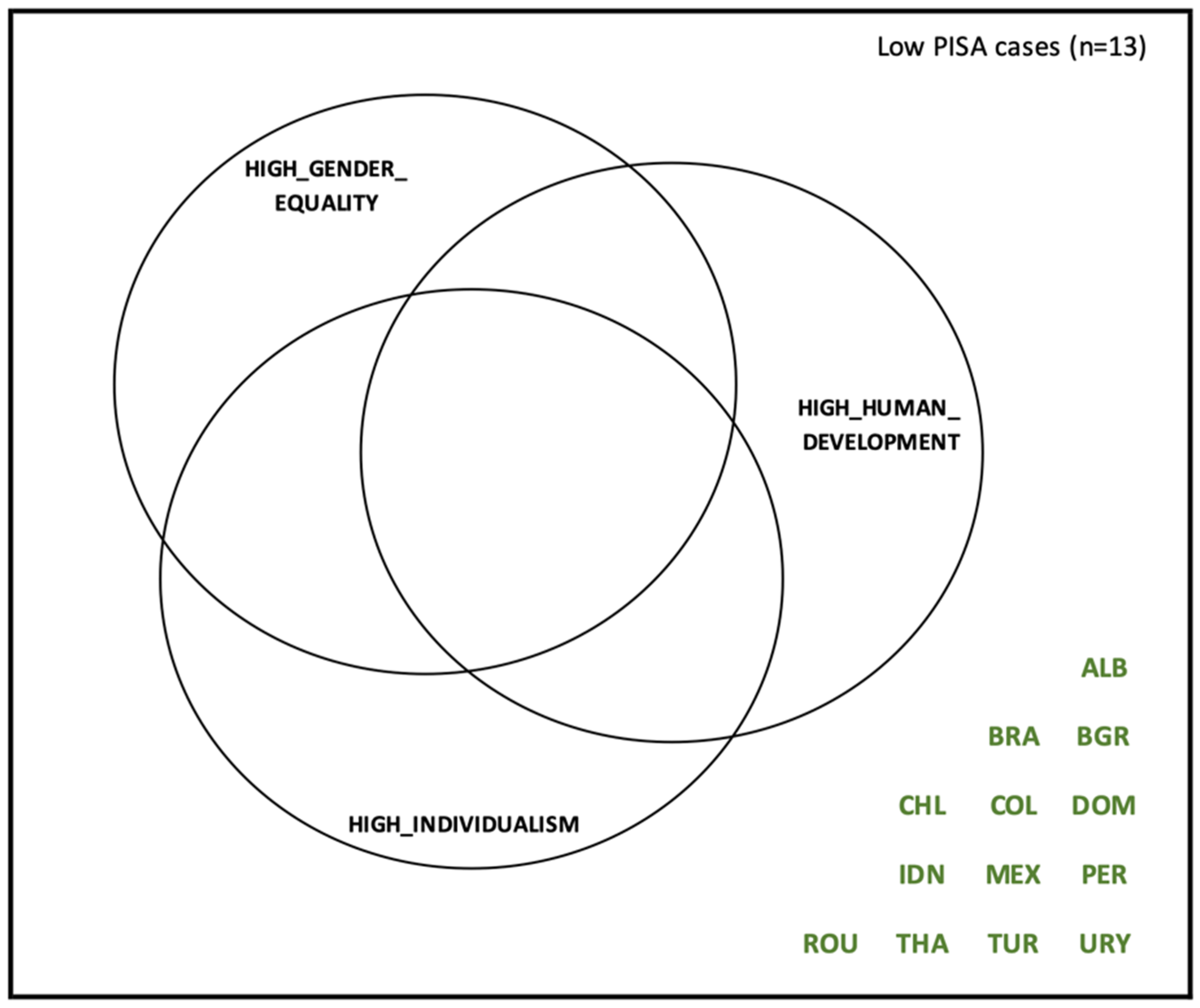

4.1. System-Specific Factors as Outcome-Enabling Conditions

(consistency 0.812, coverage 0.650)

(consistency 1.000, coverage 0.200)

(consistency 0.812, coverage 1.000)
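
The consistency and coverage pairs above can be checked against the truth tables with the standard crisp-set definitions: consistency is the share of cases covered by a term that show the outcome, and coverage is the share of all outcome cases that the term covers. The sketch below is illustrative only. The case counts are transcribed from the HIGH_PISA truth table, the outcome counts are derived as consistency times n, and the two terms are an assumption: they are configurations inferred because they reproduce the first two reported pairs, not formulas quoted from the article. The function and variable names are hypothetical.

```python
# Truth-table rows for the HIGH_PISA outcome, transcribed from the article.
# Key:   (gender_equality, income_equality, human_development, individualism)
# Value: (number of cases, number of those cases in HIGH_PISA = Cons. * n)
HIGH_PISA_ROWS = {
    (1, 1, 1, 1): (15, 12),
    (1, 1, 1, 0): (3, 3),
    (1, 0, 1, 0): (1, 1),
    (1, 1, 0, 1): (1, 1),
    (0, 0, 0, 0): (13, 0),
    (0, 1, 0, 1): (4, 1),
    (1, 0, 0, 1): (4, 0),
    (0, 1, 0, 0): (3, 0),
    (1, 0, 0, 0): (2, 1),
    (1, 0, 1, 1): (2, 1),
    (0, 0, 1, 1): (1, 0),
}

GE, IE, HD, IND = 0, 1, 2, 3  # positions of the conditions in each key


def consistency_and_coverage(rows, term):
    """Return (consistency, coverage) of a conjunctive term.

    `term` maps a condition's position in the key to the value (1 or 0)
    that the term requires; conditions not listed are left free.
    """
    in_term = [counts for config, counts in rows.items()
               if all(config[pos] == val for pos, val in term.items())]
    cases_in_term = sum(n for n, _ in in_term)
    outcome_in_term = sum(k for _, k in in_term)
    outcome_total = sum(k for _, k in rows.values())
    return outcome_in_term / cases_in_term, outcome_in_term / outcome_total


# Assumed terms (inferred, not quoted): GE*IE*IND and GE*HD*~IND.
term_a = consistency_and_coverage(HIGH_PISA_ROWS, {GE: 1, IE: 1, IND: 1})
term_b = consistency_and_coverage(HIGH_PISA_ROWS, {GE: 1, HD: 1, IND: 0})
print(term_a)  # (0.8125, 0.65)
print(term_b)  # (1.0, 0.2)
```

Under the same definitions, the single LOW_PISA row with all three conditions absent (16 cases, 13 of them in LOW_PISA, out of 13 LOW_PISA cases overall) gives 13/16 and 13/13, which matches the third reported pair.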

4.2. The Moderating Effect

5. Discussion

5.1. Gender Equality

5.2. Meaningful Peer Countries

5.3. The Future of PISA and Policy Transfer

6. Limitations and Future Research

7. Conclusions

Supplementary Materials

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Silova, I.; Rappleye, J.; Auld, E. Beyond the western horizon: Rethinking education, values, and policy transfer. In Handbook of Education Policy Studies; Fan, G., Popkewitz, T., Eds.; Springer: Singapore, 2020; pp. 3–29.

2. Meyer, H.; Benavot, A. PISA and the globalization of education governance: Some puzzles and problems. In PISA, Power, and Policy: The Emergence of Global Educational Governance; Meyer, H., Benavot, A., Eds.; Symposium Books Ltd.: Oxford, UK, 2013; pp. 9–26.

3. Gorur, R. Producing calculable worlds: Education at a glance. Discourse Stud. Cult. Polit. Educ. 2015, 36, 578–595.

4. Gorur, R. Seeing like PISA: A cautionary tale about the performativity of international assessments. Eur. Educ. Res. J. 2016, 15, 598–616.

5. Auld, E.; Morris, P. PISA, policy and persuasion: Translating complex conditions into education ‘best practice’. Comp. Educ. 2016, 52, 202–229.

6. Steiner-Khamsi, G. Understanding policy borrowing and lending: Building comparative policy studies. In Policy Borrowing and Lending in Education; Steiner-Khamsi, G., Waldow, F., Eds.; Routledge: New York, NY, USA, 2012; pp. 3–18.

7. Steiner-Khamsi, G. Cross-national policy borrowing: Understanding reception and translation. Asia Pac. J. Educ. 2014, 34, 153–167.

8. Grek, S. Governing by numbers: The PISA ‘effect’ in Europe. J. Educ. Pol. 2009, 24, 23–37.

9. World Bank. What Matters Most for Teacher Policies: A Framework Paper. 2013. Available online: https://openknowledge.worldbank.org/handle/10986/20143 (accessed on 5 November 2020).

10. Sadler, M. How far can we learn anything of practical value from the study of foreign systems of education? In Proceedings of the Guildford Educational Conference, Guildford, UK, 20 October 1900.

11. Kandel, I. Studies in Comparative Education; George G. Harrap & Co Ltd.: London, UK, 1933.

12. Steiner-Khamsi, G. The politics and economics of comparison. Comp. Educ. Rev. 2010, 54, 323–342.

13. Grey, S.; Morris, P. PISA: Multiple “truths” and mediatised global governance. Comp. Educ. 2018, 54, 109–131.

14. Xiaomin, L.; Auld, E. A historical perspective on the OECD’s ‘humanitarian turn’: PISA for development and the learning framework 2030. Comp. Educ. 2020, 56, 503–521.

15. Barber, M.; Mourshed, M. How the World’s Best-Performing School Systems Come Out on Top; McKinsey & Co: London, UK, 2007; Available online: https://www.mckinsey.com/industries/public-and-social-sector/our-insights/how-the-worlds-best-performing-school-systems-come-out-on-top# (accessed on 5 November 2020).

16. OECD. Measuring Student Knowledge and Skills: A New Framework for Assessment; OECD Publishing: Paris, France, 1999.

17. Kamens, D. Globalization and the emergence of an audit culture: PISA and the search for ‘best practices’ and magic bullets. In PISA, Power, and Policy: The Emergence of Global Educational Governance; Meyer, H., Benavot, A., Eds.; Symposium Books Ltd.: Oxford, UK, 2013; pp. 117–139.

18. Gustafsson, J. Effects of international comparative studies on educational quality on the quality of educational research. Eur. Educ. Res. J. 2008, 7, 1–17.

19. Breakspear, S. How Does PISA Shape Education Policymaking? Why How We Measure Learning Determines What Counts in Education; Center for Strategic Education: Melbourne, Australia, 2014.

20. Sahlberg, P.; Hargreaves, A. The Tower of PISA Is Badly Leaning: An Argument for Why It Should Be Saved. The Washington Post. 24 March 2015. Available online: https://www.washingtonpost.com/news/answer-sheet/wp/2015/03/24/the-tower-of-pisa-is-badly-leaning-an-argument-for-why-it-should-be-saved/ (accessed on 5 November 2020).

21. Mourshed, M.; Chijioke, C.; Barber, M. How the World’s Most Improved School Systems Keep Getting Better; McKinsey & Co: London, UK, 2010; Available online: https://www.mckinsey.com/industries/public-and-social-sector/our-insights/how-the-worlds-most-improved-school-systems-keep-getting-better (accessed on 5 November 2020).

22. Rowley, K.; Edmunds, C.; Dufur, M.; Jarvis, J.; Silviera, F. Contextualising the achievement gap: Assessing educational achievement, inequality, and disadvantage in high-income countries. Comp. Educ. 2020, 56, 459–483.

23. Dobbins, M.; Martens, K. Towards an education approach à la finlandaise? French education policy after PISA. J. Educ. Pol. 2012, 27, 23–43.

24. Meyer, H.; Zahedi, K. Open letter to Andreas Schleicher. Policy Futur. Educ. 2014, 12, 872–877.

25. OECD. Response of OECD to points raised in Heinz-Dieter Meyer and Katie Zahedi, ‘Open Letter’. Policy Futur. Educ. 2014, 12, 878–879.

26. Reimers, F.; O’Donnell, E. (Eds.) Fifteen Letters on Education in Singapore; Lulu Publishing Services: Morrisville, NC, USA, 2016; ISBN 978-1483450629. Available online: https://books.google.com/books?hl=zh-CN&lr=&id=QmEUDAAAQBAJ&oi=fnd&pg=PA1&dq=Fifteen+Letters+on+Education+in+Singapore&ots=Spk5eXNrvT&sig=IZnGyIfTQXgci7uh605YJibZi4c#v=onepage&q=Fifteen%20Letters%20on%20Education%20in%20Singapore&f=false (accessed on 30 December 2020).

27. Burdett, N.; O’Donnell, S. Lost in translation? The challenges of educational policy borrowing. Educ. Res. 2016, 58, 113–120.

28. Carnoy, M.; Rhoten, D. What does globalization mean for educational change? A comparative approach. Comp. Educ. Rev. 2002, 46, 1–9.

29. Bronfenbrenner, U. The Ecology of Human Development: Experiments by Design and Nature; Harvard University Press: Cambridge, MA, USA, 1979.

30. Darling, N. Ecological systems theory: The person in the center of the circles. Res. Hum. Dev. 2007, 4, 203–217.

31. Abbott, A. Transcending general linear reality. Sociol. Theory 1988, 6, 169–186.

32. Rohlfing, I. Case Studies and Causal Inference: An Integrative Framework; Palgrave MacMillan: Hampshire, UK, 2012.

33. Hall, P. Aligning ontology and methodology in comparative politics. In Comparative Historical Analysis in the Social Sciences; Mahoney, J., Rueschemeyer, D., Eds.; Cambridge University Press: Cambridge, UK, 2003; pp. 373–404.

34. Ragin, C. The Comparative Method: Moving beyond Qualitative and Quantitative Strategies; University of California Press: Los Angeles, CA, USA, 1987.

35. Mill, J. A System of Logic, Ratiocinative and Inductive: Being a Connected View of the Principles of Evidence, and the Methods of Scientific Investigation; John W. Parker: London, UK, 1843.

36. Berg-Schlosser, D.; De Meur, G.; Ragin, C.; Rihoux, B. Qualitative Comparative Analysis (QCA) as an approach. In Configurational Comparative Methods: Qualitative Comparative Analysis (QCA) and Related Techniques; Rihoux, B., Ragin, C., Eds.; SAGE Publications Ltd.: Thousand Oaks, CA, USA, 2009; pp. 1–18.

37. Rihoux, B. Qualitative comparative analysis (QCA) and related systematic comparative methods: Recent advances and remaining challenges for social science research. Int. Sociol. 2006, 21, 679–706.

38. Toots, A.; Lauri, T. Institutional and contextual factors of quality in civic and citizenship education: Exploring possibilities of qualitative comparative analysis. Comp. Educ. 2015, 51, 247–275.

39. Bara, C. Incentives and opportunities: A complexity-oriented explanation of violent ethnic conflict. J. Peace Res. 2014, 51, 696–710.

40. OECD. Supporting Teacher Professionalism: Insights from TALIS 2013; OECD Publishing: Paris, France, 2016.

41. Meyer, H.; Schiller, K. Gauging the role of non-educational effects in large-scale assessments: Socio-economics, culture and PISA outcomes. In PISA, Power, and Policy: The Emergence of Global Educational Governance; Meyer, H., Benavot, A., Eds.; Symposium Books Ltd.: Oxford, UK, 2013; pp. 207–224.

42. Schneider, C. Two-step QCA revisited: The necessity of context conditions. Qual. Quant. 2019, 53, 1109–1126.

43. O’Connor, E.; McCartney, K. Examining teacher-child relationships and achievement as part of an ecological model for development. Am. Educ. Res. J. 2007, 44, 340–369.

44. Price, D.; McCallum, F. Ecological influences on teachers’ well-being and “fitness”. Asia Pac. J. Teach. Educ. 2015, 43, 195–209.

45. Abbott, A. Linked ecologies: States and universities as environments for professions. Sociol. Theory 2005, 23, 245–274.

46. IEA. Assessment Framework: IEA International Civic and Citizenship Education Study 2016; IEA Secretariat: Amsterdam, The Netherlands, 2016.

47. Schulz, W.; Sibberns, H. IEA Civic Study Technical Report; IEA Secretariat: Amsterdam, The Netherlands, 2004.

48. Snyder, S. The simple, the complicated, and the complex: Educational reform through the lens of complexity theory. In OECD Education Working Papers, No. 96; OECD Publishing: Paris, France, 2013.

49. OECD. PISA 2012 Assessment and Analytical Framework: Mathematics, Reading, Science, Problem Solving and Financial Literacy; OECD Publishing: Paris, France, 2013.

50. Miller, P. ‘Culture’, ‘context’, school leadership and entrepreneurialism: Evidence from sixteen countries. Educ. Sci. 2018, 8, 76.

51. Gorard, S. Overcoming equity-related challenges for the education and training systems of Europe. Educ. Sci. 2020, 10, 305.

52. Wilkinson, R.; Pickett, K. The Spirit Level: Why Equality Is Better for Everyone; Penguin Books: London, UK, 2010.

53. Ragin, C.; Fiss, P. Intersectional Inequality: Race, Class, Test Scores, and Poverty; University of Chicago Press: Chicago, IL, USA, 2017.

54. Alexander, R. Culture and Pedagogy: International Comparisons in Primary Education; Wiley-Blackwell: Malden, MA, USA, 2001.

55. Alesina, A.; Devleeschauwer, A.; Easterly, W.; Kurlat, S.; Wacziarg, R. Fractionalization. J. Econ. Growth 2003, 8, 155–194.

56. Hofstede, G. Culture’s Consequences: Comparing Values, Behaviors, Institutions and Organizations across Cultures; SAGE Publications: Thousand Oaks, CA, USA, 2001.

57. Hofstede, G. Dimensions do not exist: A reply to Brendan McSweeney. Hum. Relat. 2002, 55, 1355–1361.

58. Hofstede, G. Hofstede Insights. Available online: https://www.hofstede-insights.com (accessed on 10 November 2020).

59. Purves, A. IEA—An agenda for the future. Int. Rev. Educ. 1987, 33, 103–107. Available online: http://www.jstor.org/stable/3444047 (accessed on 11 November 2020).

60. Von Kopp, B. On the question of cultural context as a factor in international academic achievement. Eur. Educ. 2003, 35, 70–98.

61. Bissessar, C. An application of Hofstede’s cultural dimensions among female educational leaders. Educ. Sci. 2018, 8, 77.

62. McSweeney, B. Hofstede’s model of national cultural differences and their consequences: A triumph of faith—A failure of analysis. Hum. Relat. 2002, 55, 89–118.

63. Jones, M. Hofstede—culturally questionable? In Oxford Business & Economics Conference; Oxford University: Oxford, UK, 2007.

64. OECD. Education at a Glance 2016: OECD Indicators; OECD Publishing: Paris, France, 2016.

65. De Condorcet, M. Outlines of an Historical View of the Progress of the Human Mind; J. Johnson: London, UK, 1795.

66. Mill, J. The Subjection of Women; Longmans, Green, Reader, and Dyer: London, UK, 1869.

67. Unterhalter, E. Thinking about gender in comparative education. Comp. Educ. 2014, 50, 112–126.

68. OECD. TALIS 2013 Results: An International Perspective on Teaching and Learning; OECD Publishing: Paris, France, 2014.

69. OECD. PISA 2015 Results (Volume II): Policies and Practices for Successful Schools; OECD Publishing: Paris, France, 2016.

70. Schneider, C.; Wagemann, C. Set-Theoretic Methods for the Social Sciences: A Guide to Qualitative Comparative Analysis; Cambridge University Press: Cambridge, UK, 2012.

71. Schneider, C.; Wagemann, C. Standards of good practice in qualitative comparative analysis and fuzzy-sets. Comp. Sociol. 2010, 9, 397–418.

72. Cohen, J. Statistical Power Analysis for the Behavioral Sciences, 2nd ed.; Erlbaum: Hillsdale, NJ, USA, 1988; ISBN 9780203771587.

73. Welch, B. On the comparison of several mean values: An alternative approach. Biometrika 1951, 38, 330–336.

74. Fisher, R. Frequency distribution of the values of the correlation coefficient in samples of an indefinitely large population. Biometrika 1915, 10, 507–521.

75. R Core Team. R: A Language and Environment for Statistical Computing; R Foundation for Statistical Computing: Vienna, Austria, 2018; Available online: https://www.R-project.org (accessed on 11 November 2020).

| Factor | Index | Source |
|---|---|---|
| Wealth | GDP per Capita, 2015 | The World Bank |
| Human development | Human Development Index, 2015 | The United Nations Development Programme |
| Income inequality | 80/20 Index, 2015 | The World Bank, Income share highest 20% and lowest 20% |
| Economic freedom | Economic Freedom Index, 2015 | The Heritage Foundation |
| Immigration | Migrant Stock, 10–14 years old, 2015 | The United Nations Department of Economic and Social Affairs |
| Gender inequality | Gender Inequality Index, 2015 | The United Nations Development Programme |
| Gender gap | Gender Gap Index, 2015 | The World Economic Forum |
| Ethnic diversity/tension | Ethnic Fractionalization, 2003 * | Alesina, Devleeschauwer, Easterly, Kurlat, and Wacziarg [55] |
| Religious diversity/tension | Religious Fractionalization, 2003 * | Alesina, Devleeschauwer, Easterly, Kurlat, and Wacziarg [55] |
| Language diversity/tension | Language Fractionalization, 2003 * | Alesina, Devleeschauwer, Easterly, Kurlat, and Wacziarg [55] |
| Country population | Country Population Data, 2015 | The United Nations Department of Economic and Social Affairs |
| Democracy | Democracy Index, 2015 | The Economist Intelligence Unit |

| Code | Country | 2015 PISA Score: Mathematics | 2015 PISA Score: Reading | 2015 PISA Score: Science | Set Membership: HIGH_PISA (1) | Set Membership: LOW_PISA (2) |
|---|---|---|---|---|---|---|
| ALB | Albania | 413 | 405 | 427 | 0 | 1 |
| AUS | Australia | 494 | 503 | 510 | 1 | 0 |
| AUT | Austria | 497 | 485 | 495 | 0 | 0 |
| BEL | Belgium | 507 | 499 | 502 | 1 | 0 |
| BRA | Brazil | 377 | 407 | 401 | 0 | 1 |
| BGR | Bulgaria | 441 | 432 | 446 | 0 | 1 |
| CAN | Canada | 516 | 527 | 528 | 1 | 0 |
| CHL | Chile | 423 | 459 | 447 | 0 | 1 |
| COL | Colombia | 390 | 425 | 416 | 0 | 1 |
| HRV | Croatia | 464 | 487 | 475 | 0 | 0 |
| CZE | Czech Republic | 492 | 487 | 493 | 0 | 0 |
| DNK | Denmark | 511 | 500 | 502 | 1 | 0 |
| DOM | Dominican Republic | 328 | 358 | 332 | 0 | 1 |
| EST | Estonia | 519 | 519 | 534 | 1 | 0 |
| FIN | Finland | 511 | 526 | 531 | 1 | 0 |
| FRA | France | 493 | 499 | 495 | 1 | 0 |
| DEU | Germany | 506 | 509 | 509 | 1 | 0 |
| GRC | Greece | 454 | 467 | 455 | 0 | 0 |
| HUN | Hungary | 477 | 470 | 477 | 0 | 0 |
| ISL | Iceland | 488 | 482 | 473 | 0 | 0 |
| IDN | Indonesia | 386 | 397 | 403 | 0 | 1 |
| IRL | Ireland | 504 | 521 | 503 | 1 | 0 |
| ITA | Italy | 490 | 485 | 481 | 0 | 0 |
| JPN | Japan | 532 | 516 | 538 | 1 | 0 |
| LVA | Latvia | 482 | 488 | 490 | 0 | 0 |
| LTU | Lithuania | 478 | 472 | 475 | 0 | 0 |
| LUX | Luxembourg | 486 | 481 | 483 | 0 | 0 |
| MEX | Mexico | 408 | 423 | 416 | 0 | 1 |
| NLD | Netherlands | 512 | 503 | 509 | 1 | 0 |
| NZL | New Zealand | 495 | 509 | 513 | 1 | 0 |
| NOR | Norway | 502 | 513 | 498 | 1 | 0 |
| PER | Peru | 387 | 398 | 397 | 0 | 1 |
| POL | Poland | 504 | 506 | 501 | 1 | 0 |
| PRT | Portugal | 492 | 498 | 501 | 1 | 0 |
| ROU | Romania | 444 | 434 | 435 | 0 | 1 |
| RUS | Russia | 494 | 495 | 487 | 0 | 0 |
| SGP | Singapore | 564 | 535 | 556 | 1 | 0 |
| SVK | Slovak Republic | 475 | 453 | 461 | 0 | 0 |
| SVN | Slovenia | 510 | 505 | 513 | 1 | 0 |
| KOR | South Korea | 524 | 517 | 516 | 1 | 0 |
| ESP | Spain | 486 | 496 | 493 | 0 | 0 |
| SWE | Sweden | 494 | 500 | 493 | 1 | 0 |
| CHE | Switzerland | 521 | 492 | 506 | 0 | 0 |
| THA | Thailand | 415 | 409 | 421 | 0 | 1 |
| TUR | Turkey | 420 | 428 | 425 | 0 | 1 |
| GBR | United Kingdom | 492 | 498 | 509 | 1 | 0 |
| USA | United States | 470 | 497 | 496 | 0 | 0 |
| URY | Uruguay | 418 | 437 | 435 | 0 | 1 |
| VNM | Viet Nam | 495 | 487 | 525 | 0 | 0 |
| | Average (all PISA countries) | 461 | 460 | 465 | n = 20 | n = 13 |
| | Average (included countries) | 473 | 476 | 478 | | |
| | Average (OECD) | 490 | 493 | 493 | | |
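
The table lists set memberships without restating the calibration rule. As a hedged illustration, one rule that reproduces the memberships shown is: HIGH_PISA = 1 when all three domain scores are at or above the OECD average, and LOW_PISA = 1 when all three are below the average of all PISA countries. This rule is an assumption inferred from the table rows and the three average rows, not the author's stated procedure, and `set_membership` is a hypothetical helper name.

```python
# Thresholds taken from the average rows of the table
# (mathematics, reading, science).
OECD_AVERAGE = (490, 493, 493)
ALL_PISA_AVERAGE = (461, 460, 465)


def set_membership(mathematics, reading, science):
    """Return (HIGH_PISA, LOW_PISA) under the assumed calibration rule."""
    scores = (mathematics, reading, science)
    # Assumed rule: every domain at or above the OECD average.
    high = all(s >= t for s, t in zip(scores, OECD_AVERAGE))
    # Assumed rule: every domain below the all-country average.
    low = all(s < t for s, t in zip(scores, ALL_PISA_AVERAGE))
    return int(high), int(low)


# A few rows from the table, including borderline cases.
examples = {
    "FRA": (493, 499, 495),  # listed as HIGH_PISA
    "SWE": (494, 500, 493),  # science equals the OECD average
    "CHE": (521, 492, 506),  # reading is one point below the OECD average
    "CHL": (423, 459, 447),  # listed as LOW_PISA
    "GRC": (454, 467, 455),  # reading is above the all-country average
}
for code, scores in examples.items():
    print(code, set_membership(*scores))
```

Because the published averages are rounded, a rule of this kind can be sensitive to one-point differences at the thresholds, as the Sweden and Switzerland rows illustrate.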

| HIGH_GENDER_EQUALITY | HIGH_INCOME_EQUALITY | HIGH_HUMAN_DEVELOP | HIGH_INDIVID | Out | n | Cons. | Cases |
|---|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 1 | 1 | 15 | 0.800 | AUT, BEL, CAN, DNK, FIN, FRA, DEU, IRL, ISL, NLD, NZL, NOR, SWE, CHE, GBR |
| 1 | 1 | 1 | 0 | 1 | 3 | 1.000 | JPN, SVN, KOR |
| 1 | 0 | 1 | 0 | 1 | 1 | 1.000 | SGP |
| 1 | 1 | 0 | 1 | 1 | 1 | 1.000 | EST |
| 0 | 0 | 0 | 0 | 0 | 13 | 0.000 | BRA, BGR, CHL, COL, DOM, IDN, MEX, PER, RUS, THA, TUR, URY, VNM |
| 0 | 1 | 0 | 1 | 0 | 4 | 0.250 | CZE, HUN, POL, SVK |
| 1 | 0 | 0 | 1 | 0 | 4 | 0.000 | ITA, LVA, LTU, ESP |
| 0 | 1 | 0 | 0 | 0 | 3 | 0.000 | ALB, HRV, ROU |
| 1 | 0 | 0 | 0 | 0 | 2 | 0.500 | GRC, PRT |
| 1 | 0 | 1 | 1 | 0 | 2 | 0.500 | AUS, LUX |
| 0 | 0 | 1 | 1 | 0 | 1 | 0.000 | USA |
| 0 | 0 | 0 | 1 | ? | 0 | - | |
| 0 | 0 | 1 | 0 | ? | 0 | - | |
| 0 | 1 | 1 | 0 | ? | 0 | - | |
| 0 | 1 | 1 | 1 | ? | 0 | - | |
| 1 | 1 | 0 | 0 | ? | 0 | - | |

| HIGH_GENDER_EQUALITY | HIGH_HUMAN_DEVELOP | HIGH_INDIVID | Out | n | Cons. | Cases |
|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 1 | 16 | 0.812 | ALB, BRA, BGR, CHL, COL, HRV, DOM, IDN, MEX, PER, ROU, RUS, THA, TUR, URY, VNM |
| 1 | 1 | 1 | 0 | 17 | 0.000 | AUS, AUT, BEL, CAN, DNK, FIN, FRA, DEU, ISL, IRL, LUX, NLD, NZL, NOR, SWE, CHE, GBR |
| 1 | 0 | 1 | 0 | 5 | 0.000 | EST, ITA, LVA, LTU, ESP |
| 0 | 0 | 1 | 0 | 4 | 0.000 | CZE, HUN, POL, SVK |
| 1 | 1 | 0 | 0 | 4 | 0.000 | JPN, SGP, SVN, KOR |
| 1 | 0 | 0 | 0 | 2 | 0.000 | GRC, PRT |
| 0 | 1 | 1 | 0 | 1 | 0.000 | USA |
| 0 | 1 | 0 | ? | 0 | - | |

| Variable | Number of Tests | All Countries: Mean (sd) | All Countries: Correlation with PISA Scores | High Gender Equality Countries: Mean (sd) | High Gender Equality Countries: Correlation with PISA Scores | NOT High Gender Equality Countries: Mean (sd) | NOT High Gender Equality Countries: Correlation with PISA Scores | Comparison between Groups: Difference in Means, Cohen's d (a) | Comparison between Groups: Difference in Correlations, z-Score (b) |
|---|---|---|---|---|---|---|---|---|---|
| PISA scores | 147 | 475.9 (44.6) | - | 502.8 (19.1) | - | 440.2 (43.7) | - | −1.951 *** | - |
| Cumulative expenditure on education ($) | 108 | 90,484 (34,108) | 0.418 *** | 101,136 (30,401) | 0.027 | 58,529 (23,022) | 0.549 ** | 1.481 *** | −2.527 * |
| Student hours in classroom | 135 | 26.8 (1.9) | 0.025 | 26.8 (1.5) | 0.117 | 26.7 (2.5) | −0.028 | 0.041 | 0.799 |
| Class size in language of instruction class | 147 | 27.3 (6.0) | −0.451 *** | 24.8 (3.9) | 0.498 *** | 30.6 (6.7) | −0.426 *** | 1.087 *** | 5.881 *** |
| Public school enrolment rate (%) | 141 | 83.4 (17.0) | 0.075 | 82.4 (18.2) | −0.142 | 84.9 (15.3) | 0.430 *** | 0.147 | −3.459 *** |
| Private expenditure on primary and secondary education (% of total) | 105 | 8.8 (6.1) | −0.444 *** | 7.4 (5.6) | 0.163 | 12.2 (6.0) | −0.820 *** | 0.836 *** | 5.855 *** |
| Teacher salaries ($) | 102 | 36,621 (25,776) | 0.567 *** | 49,094 (23,211) | 0.334 ** | 16,472 (14,570) | 0.243 | −1.602 *** | 0.471 |
| Qualified teachers (%) | 138 | 89.5 (9.2) | 0.483 *** | 91.6 (6.0) | 0.396 *** | 86.3 (12.0) | 0.430 ** | −0.592 ** | −0.229 |
| Teacher professional development in previous 3 months (%) | 147 | 50.6 (16.5) | 0.237 ** | 53.1 (16.5) | 0.300 ** | 47.4 (16.0) | 0.108 | −0.305 * | 1.181 |
| Average years of teacher experience | 93 | 16.6 (2.7) | −0.106 | 16.4 (2.9) | −0.415 *** | 16.8 (2.4) | 0.279 | 0.136 | −3.229 ** |
| Teachers that participated in an induction program (%) | 93 | 47.2 (19.5) | 0.037 | 44.6 (22.2) | 0.324 * | 52.1 (12.2) | 0.164 | 0.389 * | 0.756 |
| Teachers that have a mentor (%) | 93 | 11.4 (9.7) | 0.095 | 11.8 (10.4) | 0.628 *** | 10.7 (8.4) | −0.556 *** | −0.118 | 6.052 *** |
| Teacher non-contact hours (% of total) | 87 | 48 (11) | 0.508 *** | 51 (8) | 0.255 | 42 (14) | 0.422 * | −0.871 ** | −0.803 |
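
The two comparison columns can be illustrated with a short sketch: Cohen's d with a pooled standard deviation for the difference in means, and Fisher's r-to-z transformation for the difference between two independent correlations. The group sizes are an assumption, not values stated in the table: the truth tables list 28 countries with high gender equality and 21 without, and three PISA domains per country would give 84 and 63 tests, which sum to the 147 tests reported for the full sample. The function names are hypothetical, and the pooled-variance form of d is one common variant rather than a confirmed detail of the article's calculation.

```python
import math

# Assumed group sizes: 28 and 21 countries, three PISA domains each.
N_HIGH = 28 * 3  # high gender equality group, 84 tests
N_NOT = 21 * 3   # NOT high gender equality group, 63 tests


def cohens_d(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Cohen's d using the pooled standard deviation of two groups."""
    pooled_var = ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    return (mean_1 - mean_2) / math.sqrt(pooled_var)


def fisher_z_difference(r_1, n_1, r_2, n_2):
    """z statistic for the difference between two independent correlations."""
    z_1, z_2 = math.atanh(r_1), math.atanh(r_2)  # Fisher r-to-z transform
    standard_error = math.sqrt(1 / (n_1 - 3) + 1 / (n_2 - 3))
    return (z_1 - z_2) / standard_error


# PISA scores row: NOT-high group minus high group.
d_pisa = cohens_d(440.2, 43.7, N_NOT, 502.8, 19.1, N_HIGH)

# Class size row: correlation in the high group versus the NOT-high group.
z_class_size = fisher_z_difference(0.498, N_HIGH, -0.426, N_NOT)

print(round(d_pisa, 3), round(z_class_size, 3))
```

With these assumed group sizes the class-size z statistic comes out at about 5.88, in line with the 5.881 in the table, and d for PISA scores comes out near −1.95, close to the reported −1.951. The sign of d depends only on which group mean is subtracted from which.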

| # | Education policy variable | GENDER EQUALITY | HUMAN DEVELOPMENT | INCOME EQUALITY | INDIVIDUALISM |
|---|---|---|---|---|---|
| 1 | Cumulative expenditure on education | | | | |
| 2 | Student hours in classroom | | | | |
| 3 | Class size | | | | |
| 4 | Public-school enrolment | | | | |
| 5 | Private expenditure on education | | | | |
| 6 | Teacher salaries | | | | |
| 7 | Percentage of qualified teachers | | | | |
| 8 | Rate of teacher professional dev. | | | | |
| 9 | Average years of teacher experience | | | | |
| 10 | Percentage teachers received induction | | | | |
| 11 | Percentage teachers with mentor | | | | |
| 12 | Proportion of non-contact hours | | | | |

Cell symbols denote one of the following:

- Statistically significant difference between group means

- Statistically significant difference between group correlations

- Positive correlation in one group; Negative correlation in the other group

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Campbell, J.A. The Moderating Effect of Gender Equality and Other Factors on PISA and Education Policy. Educ. Sci. 2021, 11, 10. https://doi.org/10.3390/educsci11010010
