The Rise of Artificial Intelligence under the Lens of Sustainability

Abstract

1. Introduction

2. Background

2.1. Artificial Intelligence

- Artificial narrow intelligence (ANI): Machines are trained for a particular task and can make decisions in only one sphere (e.g., Google search, passenger planes [21]).

- Artificial general intelligence (AGI): AGI systems, also known as “strong AI,” “human-level AI,” and “true synthetic intelligence” [22], are machines that have the ability to reach and then surpass the intelligence level of a human, meaning that they can reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience (e.g., autonomous cars).

- Mechanical: Minimal degree of learning or adaptation (e.g., McDonald’s “Create Your Taste” touchscreen kiosks)

- Analytical: Learns and adapts systematically based on data (e.g., Toyota’s in-car intelligent systems replacing problem diagnostic tasks for technicians)

- Intuitive: Learns and adapts intuitively based on understanding (e.g., Associated Press’ robot reporters taking on the reporting task for minor league baseball games)

- Empathetic: Learns and adapts empathetically based on experience (e.g., chatbots communicating with customers and learning from these experiences)

2.2. Sustainability Analysis

- The individual dimension covers individual freedom and agency (the ability to act in an environment), human dignity, and fulfillment. It includes individuals’ ability to thrive, exercise their rights, and develop freely.

- The social dimension covers relationships between individuals and groups. For example, it covers the structures of mutual trust and communication in a social system and the balance between conflicting interests.

- The economic dimension covers financial aspects and business value. It includes capital growth and liquidity, investment questions, and financial operations.

- The technical dimension covers the ability to maintain and evolve artificial systems (such as software) over time. It refers to maintenance and evolution, resilience, and the ease of system transitions.

- The environmental dimension covers the use and stewardship of natural resources. It includes questions ranging from immediate waste production and energy consumption to the balance of local ecosystems and climate change concerns.

2.3. Sustainable Development

- To eradicate extreme poverty and hunger

- To achieve universal primary education

- To promote gender equality and empower women

- To reduce child mortality

- To improve maternal health

- To combat HIV/AIDS, malaria, and other diseases

- To ensure environmental sustainability

- To develop a global partnership for development

- End poverty in all its forms, everywhere

- End hunger, achieve food security and improved nutrition, and promote sustainable agriculture

- Ensure healthy lives and promote well-being for all people at all ages

- Ensure inclusive and equitable quality education and promote lifelong learning opportunities for all

- Achieve gender equality and empower all women and girls

- Ensure the availability and sustainable management of water and sanitation for all

- Ensure access to affordable, reliable, sustainable, and modern energy for all

- Promote sustained, inclusive, and sustainable economic growth, full and productive employment, and decent work for all

- Build a resilient infrastructure, promote inclusive and sustainable industrialization, and foster innovation

- Reduce inequality within and among countries

- Make cities and human settlements inclusive, safe, resilient, and sustainable

- Ensure sustainable consumption and production patterns

- Take urgent action to combat climate change and its impacts

- Conserve and sustainably use the oceans, seas, and marine resources for sustainable development

- Protect, restore, and promote the sustainable use of terrestrial ecosystems, sustainably manage forests, combat desertification, and halt and reverse land degradation and biodiversity loss

- Promote peaceful and inclusive societies for sustainable development, provide access to justice for all, and build effective, accountable, and inclusive institutions at all levels

- Strengthen the means of implementation and revitalize the global partnership for sustainable development

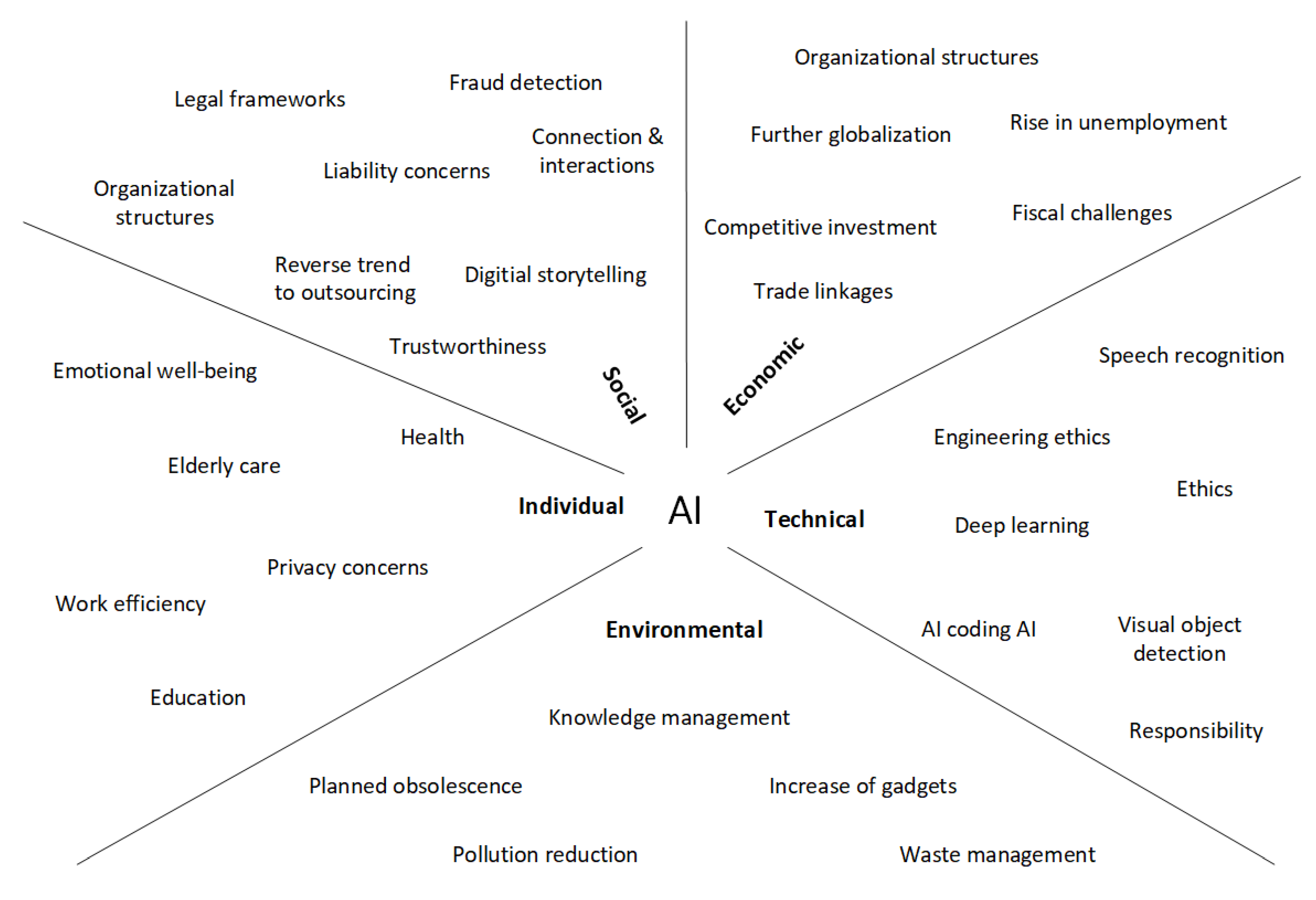

3. AI under a Sustainability Analysis Perspective

3.1. Economic Dimension

3.2. Technical Dimension

3.3. Environmental Dimension

- Programmed autonomous vehicles could fully take advantage of the principles of eco-driving throughout a journey, reducing fuel consumption by as much as 20 percent and reducing greenhouse gas emissions to a similar extent.

- Autonomous vehicles could reduce traffic congestion by recommending alternative routes and the shortest possible routes in urbanized areas and by sharing traffic information with other vehicles on the motorways, resulting in less fuel consumption.

- Autonomous vehicles could drive in accordance with imposed speed limits, resulting in smooth driving that would minimize the need for the energy-intensive process of accelerating. This would keep fuel use as low as possible.

- Finally, autonomous vehicles could travel closer together, which would lower aerodynamic resistance and thereby reduce fuel consumption and greenhouse gas emissions.
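The arithmetic behind the first point above can be sketched as follows. This is only an illustration: the function name `eco_driving_savings`, the 1,000-litre baseline, and the emission factor of roughly 2.3 kg of CO2 per litre of petrol are assumptions that do not come from the cited study. Only the 20 percent figure is taken from the text, where it is an upper bound ("as much as"). Because tailpipe CO2 scales with the fuel burned, a given fractional fuel saving yields about the same fractional emission saving.

```python
def eco_driving_savings(baseline_fuel_l, reduction=0.20, co2_kg_per_l=2.3):
    """Estimate fuel and CO2 saved by eco-driving.

    baseline_fuel_l: fuel used without eco-driving, in litres (hypothetical input).
    reduction:       fractional fuel saving; 0.20 is the upper bound quoted in the text.
    co2_kg_per_l:    assumed emission factor for petrol (approximate, illustrative).
    Returns a tuple (fuel_saved_l, co2_saved_kg).
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    if baseline_fuel_l < 0 or co2_kg_per_l < 0:
        raise ValueError("fuel and emission factor must be non-negative")

    fuel_saved_l = baseline_fuel_l * reduction
    # CO2 is proportional to fuel burned, so emissions fall by the same fraction.
    co2_saved_kg = fuel_saved_l * co2_kg_per_l
    return fuel_saved_l, co2_saved_kg


if __name__ == "__main__":
    # Hypothetical vehicle that burns 1,000 litres per year without eco-driving.
    fuel_saved, co2_saved = eco_driving_savings(1000.0)
    print(f"Fuel saved: {fuel_saved:.1f} L, CO2 avoided: {co2_saved:.1f} kg")
```

Passing a smaller `reduction` gives a more conservative estimate, since the 20 percent value is the best case reported rather than a typical one.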

3.4. Individual Dimension

3.5. Social Dimension

4. Discussion

5. Conclusions

- (i) AI Application Domains: A more in-depth sustainability analysis should be performed for several application domains of AI, including an analysis of the three orders of effect (life cycle, enabling, and structural).

- (ii) Ethics and transparency of AI: An interdisciplinary analysis that considers the transparency and ethical aspects of AI should be performed in a joint effort by behavioral psychologists, philosophers of science, psychologists, and computer scientists.

- (iii) Responsibility & accountability for AI: A qualitative analysis should be conducted on how much citizens are willing to give up freedom of choice and let AI make somewhat optimized decisions for them, how much operators are willing to pass on their responsibility to AI, and how much developers are willing to be accountable if something fails, along with how to allow for and ensure transparency.

- (iv) Perceptions of AI: A larger-scale empirical analysis should be carried out on the perceptions of individuals in diverse stakeholder roles toward having AI integrated in society at several levels of technological intervention, e.g., as small-scale personal assistants, as substitute teachers, nurses, and doctors, or as decision support systems for governments and legislation.

Author Contributions

Funding

Conflicts of Interest

References

1. McClure, P.K. “You’re Fired,” Says the Robot. Soc. Sci. Comput. Rev. 2017.

2. McCarthy, J.; Minsky, M.L.; Rochester, N.; Shannon, C.E. A proposal for the Dartmouth summer research project on artificial intelligence. AI Mag. 2006, 27, 12–14.

3. Copeland, B.J. The Essential Turing: Seminal Writings in Computing, Logic, Philosophy, Artificial Intelligence, and Artificial Life: Plus The Secrets of Enigma; Copeland, B.J., Ed.; Oxford University Press: Oxford, UK, 2004; ISBN 0-19-825080-0.

4. Tegmark, M. Life 3.0: Being Human in the Age of Artificial Intelligence; Knopf: New York, NY, USA, 2017.

5. Harari, Y. The Guardian. 2017. Available online: https://www.theguardian.com/culture/yuval-noah-harari (accessed on 3 November 2018).

6. Morgan, C. Researchers Say Fake News Had “Substantial Impact” on 2016 Election. Available online: http://thehill.com/policy/cybersecurity/381449-researchers-say-fake-news-had-substantial-impact-on-2016-election (accessed on 6 July 2018).

7. Rutledge, P.B. How Cambridge Analytica Mined Data for Voter Influence. Available online: https://www.psychologytoday.com/us/blog/positively-media/201803/how-cambridge-analytica-mined-data-voter-influence (accessed on 20 June 2018).

8. Booch, G. Don’t Fear Superintelligent AI. TED@IBM. Available online: https://www.ted.com/talks/grady_booch_don_t_fear_superintelligence?language=en (accessed on 1 July 2018).

9. Kelly, K. How AI Can Bring on a Second Industrial Revolution. TED Talk. Available online: https://www.ted.com/talks/kevin_kelly_how_ai_can_bring_on_a_second_industrial_revolution (accessed on 10 May 2018).

10. Harris, S. Can We Build AI Without Losing Control Over It? Available online: https://www.ted.com/talks/sam_harris_can_we_build_ai_without_losing_control_over_it (accessed on 15 June 2018).

11. Tufekci, Z. Machine Intelligence Makes Human Morals More Important. TED Talk, 2016. Available online: https://www.ted.com/talks/zeynep_tufekci_machine_intelligence_makes_human_morals_more_important (accessed on 19 June 2018).

12. Tegmark, M. How to Get Empowered, Not Overpowered, by AI. TED Talk, 2018. Available online: https://www.ted.com/talks/max_tegmark_how_to_get_empowered_not_overpowered_by_ai?language=en (accessed on 11 July 2018).

13. Fisher, D.H. A Selected Summary of AI for Computational Sustainability. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017; pp. 4852–4857.

14. Becker, C.; Betz, S.; Chitchyan, R.; Duboc, L.; Easterbrook, S.M.; Penzenstadler, B.; Seyff, N.; Venters, C.C. Requirements: The Key to Sustainability. IEEE Softw. 2016, 33, 56–65.

15. Tredinnick, L. Artificial intelligence and professional roles. Bus. Inf. Rev. 2017, 34, 37–41.

16. Hoehndorf, R.; Queralt-Rosinach, N. Data Science and symbolic AI: Synergies, challenges and opportunities. Data Sci. 2017, 1, 27–38.

17. Jiang, F.; Jiang, Y.; Zhi, H.; Dong, Y.; Li, H.; Ma, S.; Wang, Y.; Dong, Q.; Shen, H.; Wang, Y. Artificial intelligence in healthcare: Past, present and future. Stroke Vasc Neurol. 2017, 2, 230–243.

18. Huang, M.-H.; Rust, R.T. Artificial Intelligence in Service. J. Serv. Res. 2018, 21, 155–172.

19. Weizenbaum, J. ELIZA—A Computer Program For the Study of Natural Language Communication Between Man And Machine. Commun. ACM 1966, 9, 36–45.

20. Müller, V.C. Risks of general artificial intelligence. J. Exp. Theor. Artif. Intell. 2014, 26, 297–301.

21. Strelkova, O.; Pasichnyk, O. Three Types of Artificial Intelligence; Khmelnitsky National University: Khmelnytskyi, Ukraine, 2017; pp. 1–4.

22. Wang, P.; Goertzel, B. Introduction: Aspects of artificial general intelligence. In Proceedings of the 2007 Conference on Advances in Artificial General Intelligence: Concepts, Architectures and Algorithms: Proceedings of the AGI Workshop 2006; IOS Press: Amsterdam, The Netherlands, 2007; pp. 1–16.

23. Cully, A.; Clune, J.; Tarapore, D.; Mouret, J.B. Robots that can adapt like animals. Nature 2015, 521, 503–507.

24. Silver, D.; Huang, A.; Maddison, C.J.; Guez, A.; Sifre, L.; Van Den Driessche, G.; Schrittwieser, J.; Antonoglou, I.; Panneershelvam, V.; Lanctot, M.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 529, 484–489.

25. Shead, S. Stephen Hawking And Elon Musk Backed 23 Principles To Ensure Humanity Benefits From AI. Available online: https://nordic.businessinsider.com/stephen-hawking-elon-musk-backed-asimolar-ai-principles-for-artificial-intelligence-2017-2 (accessed on 29 July 2018).

26. Carriço, G. The EU and artificial intelligence: A human-centred perspective. Eur. View 2018, 17, 29–36.

27. Popenici, S.A.D.; Kerr, S. Exploring the impact of artificial intelligence on teaching and learning in higher education. Res. Pract. Technol. Enhanc. Learn. 2017, 12, 22.

28. Pavaloiu, A.; Kose, U. Ethical Artificial Intelligence—An Open Question. J. Multidiscip. Dev. 2017, 2, 15–27.

29. Penzenstadler, B. Sustainability analysis and ease of learning in artifact-based requirements engineering: The newest member of the family of studies (It’s a girl!). Inf. Softw. Technol. 2018, 95, 130–146.

30. Hilty, L.M.; Aebischer, B. ICT for sustainability: An emerging research field. Adv. Intell. Syst. Comput. 2015.

31. Penzenstadler, B.; Femmer, H.; Richardson, D. Who is the advocate? Stakeholders for sustainability. In Proceedings of the 2013 2nd International Workshop on Green and Sustainable Software (GREENS), San Francisco, CA, USA, 20 May 2013.

32. Seyff, N.; Betz, S.; Duboc, L.; Venters, C.; Becker, C.; Chitchyan, R.; Penzenstadler, B.; Nobauer, M. Tailoring Requirements Negotiation to Sustainability. In Proceedings of the 2018 IEEE 26th International Requirements Engineering Conference (RE), Banff, AB, Canada, 20–24 August 2018; Volume 9, pp. 304–314.

33. Brundtland, G.H. Our Common Future: Report of the World Commission on Environment and Development. United Nations Commun. 1987, 4, 300.

34. United Nations. The Millennium Development Goals Report 2009; United Nations: New York, NY, USA, 2009.

35. United Nations. The Millennium Development Goals Report 2015; United Nations: New York, NY, USA, 2015.

36. Department of Public Information, United Nations. Sustainable Development Goals 2015–2030; United Nations Publications: New York, NY, USA, 2015.

37. United Nations. The Sustainable Development Goals Report 2018; United Nations: New York, NY, USA, 2018.

38. Andrews, W. Craft an Artificial Intelligence Strategy: A Gartner Trend Insight Report What You Need to Know; Gartner, Inc.: Stamford, CT, USA, 2018.

39. Clark, G.; Hancock, M. Tech Sector Backs British AI Industry With Multi Million Pound Investment. Available online: https://www.gov.uk/government/news/tech-sector-backs-british-ai-industry-with-multi-million-pound-investment--2 (accessed on 25 July 2018).

40. Cerulus, L. Macron: France to Invest Nearly €1.5B for AI Until 2022. Available online: https://www.politico.eu/article/macron-france-to-invest-nearly-e1-5-billion-for-ai-until-2022/ (accessed on 7 May 2018).

41. Papadopoulos, I.; Trigkas, M.; Karagouni, G.; Papadopoulou, A. The contagious effects of the economic crisis regarding wood and furniture sectors in Greece and Cyprus. World Rev. Entrep. Manag. Sustain. Dev. 2014, 10, 334.

42. Hawking, S. Stephen Hawking: AI Will Be “Either Best or Worst Thing” for Humanity. Available online: https://www.theguardian.com/commentisfree/2016/dec/01/stephen-hawking-dangerous-time-planet-inequality (accessed on 15 June 2018).

43. Lee, C.C.; Czaja, S.J.; Sharit, J. Training older workers for technology-based employment. Educ. Gerontol. 2009, 35, 15–31.

44. Organisation for Economic Co-operation and Development. Maintaining Prosperity in An Ageing Society: The OECD Study on the Policy Implications of Ageing Workforce Aging: Consequences and Policy Responses; Organisation for Economic Co-operation and Development: Paris, France, 1998.

45. LeCun, Y.; Bengio, Y.; Hinton, G.E. Deep learning. Nature 2015, 521, 436–444.

46. Simard, P.Y.; Steinkraus, D.; Platt, J.C. Best practices for convolutional neural networks applied to visual document analysis. In Proceedings of the Seventh International Conference on Document Analysis and Recognition 2003, Edinburgh, UK, 6 August 2003; Volume 1, pp. 958–963.

47. Duan, Y.; Schulman, J.; Chen, X.; Bartlett, P.L.; Sutskever, I.; Abbeel, P. RL2: Fast Reinforcement Learning via Slow Reinforcement Learning. arXiv, 2016; arXiv:1611.02779.

48. Simonite, T. AI Software Learns to Make AI Software. Available online: https://www.technologyreview.com/s/603381/ai-software-learns-to-make-ai-software/ (accessed on 10 July 2018).

49. Anderson, R.E.; Johnson, D.G.; Gotterbarn, D.; Perrolle, J. Using the new ACM code of ethics in decision making. Commun. ACM 1993, 36, 98–107.

50. Al-Jarrah, O.; Abu-Qdais, H. Municipal solid waste landfill siting using intelligent system. Waste Manag. 2006.

51. Ramchandran, G.; Nagawkar, J.; Ramaswamy, K.; Ghosh, S.; Goenka, A.; Verma, A. Assessing environmental impacts of aviation on connected cities using environmental vulnerability studies and fluid dynamics: An Indian case study. AI Soc. 2017.

52. Norouzzadeh, M.S.; Nguyen, A.; Kosmala, M.; Swanson, A.; Palmer, M.S.; Packer, C.; Clune, J. Automatically identifying, counting, and describing wild animals in camera-trap images with deep learning. Proc. Natl. Acad. Sci. USA 2018, 115, E5716–E5725.

53. Igliński, H.; Babiak, M. Analysis of the Potential of Autonomous Vehicles in Reducing the Emissions of Greenhouse Gases in Road Transport. Procedia Eng. 2017, 192, 353–358.

54. Packard, V. The Waste Makers; David McKay Co., Inc.: New York, NY, USA, 1960.

55. Victor, S.P.; Kumar, S.S. Planned Obsolescence—Roadway To Increasing E-Waste in Indian Government Sector. Int. J. Soft Comput. Eng. 2012, 2, 554–559.

56. Guiltinan, J. Creative destruction and destructive creations: Environmental ethics and planned obsolescence. J. Bus. Ethics 2009.

57. World Business Council for Sustainable Development (WBCSD). Eco-Efficiency: Creating more Value with less Impact; WBCSD: Geneva, Switzerland, 2000.

58. Yamin, A.E. The right to health under international law and its relevance to the United States. Am. J. Public Health 2005, 95, 1156–1161.

59. Hanley, N.; Shogren, J.F.; White, B. The Economy and the Environment: Two Parts of a Whole. In Environmental Economics in Theory and Practice; Macmillan Education: London, UK, 1997; pp. 1–21.

60. Lightman, A. In Praise of Wasting Time (TED Books); Simon and Schuster: New York, NY, USA, 2018.

61. Schor, J.B. The Overworked American: The Unexpected Decline of Leisure. Contemp. Sociol. 1992, 21, 843.

62. Song, J.-T.; Lee, G.; Kwon, J.; Park, J.-W.; Choi, H.; Lim, S. The Association between Long Working Hours and Self-Rated Health. Ann. Occup. Environ. Med. 2014, 26, 2.

63. Bannai, A.; Tamakoshi, A. The association between long working hours and health: A systematic review of epidemiological evidence. Scand. J. Work. Environ. Health 2014, 40, 5–18.

64. Alexandris, K.; Carroll, B. Constraints on recreational sport participation in adults in Greece: Implications for providing and managing sport services. J. Sport Manag. 1999, 13, 317–332.

65. Khakurel, J.; Melkas, H.; Porras, J. Tapping into the wearable device revolution in the work environment: A systematic review. Inf. Technol. People 2018, 31, 791–818.

66. Chowdhury, A.P. 10 Areas Where Artificial Intelligence Is Going To Impact Our Lives In Future. Available online: https://analyticsindiamag.com/10-areas-artificial-intelligence-going-impact-lives-future/ (accessed on 25 June 2018).

67. Sorell, T.; Draper, H. Robot carers, ethics, and older people. Ethics Inf. Technol. 2014, 16, 183–195.

68. Chen, T.L.; Bhattacharjee, T.; Beer, J.M.; Ting, L.H.; Hackney, M.E.; Rogers, W.A.; Kemp, C.C. Older adults’ acceptance of a robot for partner dance-based exercise. PLoS ONE 2017, 12.

69. Ivanov, S. Robonomics—Principles, Benefits, Challenges, Solutions; Yearbook of Varna University of Management: Varna, Bulgaria, 2017; Volume 10.

70. Gale, C.R.; Westbury, L.; Cooper, C. Social isolation and loneliness as risk factors for the progression of frailty: The English Longitudinal Study of Ageing. Age Ageing 2017.

71. Villani, C. For a Meaningful Artificial Intelligence: Towards a French and European Strategy; Government of France: Paris, France. Available online: https://www.aiforhumanity.fr/pdfs/MissionVillani_Report_ENG-VF.pdf (accessed on 3 November 2018).

72. Bostrom, N.; Yudkowsky, E. The ethics of artificial intelligence. In The Cambridge Handbook of Artificial Intelligence; Cambridge University Press: Cambridge, UK, 2011; pp. 316–334.

73. Dignum, V. Ethics in artificial intelligence: Introduction to the special issue. Ethics Inf. Technol. 2018, 20, 1–3.

74. Simpson, J.A.; Farrell, A.K.; Oriña, M.M.; Rothman, A.J. Power and social influence in relationships. In APA Handbook of Personality and Social Psychology, Volume 3: Interpersonal Relations; American Psychological Association: Washington, DC, USA, 2015; Volume 3, pp. 393–420.

75. Wisskirchen, G.; Thibault, B.; Bormann, B.U.; Muntz, A.; Niehaus, G.; Soler, G.J.; Von Brauchitsch, B. Artificial Intelligence and Robotics and Their Impact on the Workplace; IBA Global Employment Institute: London, UK, 2017.

76. Hasse, C. Posthuman learning: AI from novice to expert? AI Soc. 2018.

77. Serholt, S.; Barendregt, W.; Vasalou, A.; Alves-Oliveira, P.; Jones, A.; Petisca, S.; Paiva, A. The case of classroom robots: Teachers’ deliberations on the ethical tensions. AI Soc. 2017.

78. Borenstein, J.; Arkin, R.C. Nudging for good: Robots and the ethical appropriateness of nurturing empathy and charitable behavior. AI Soc. 2017.

79. Fan, W.; Gordon, M.D. The power of social media analytics. Commun. ACM 2014.

80. Varol, O.; Ferrara, E.; Menczer, F.; Flammini, A. Early detection of promoted campaigns on social media. EPJ Data Sci. 2017.

81. Copeland, S.; de Moor, A. Community Digital Storytelling for Collective Intelligence: Towards a Storytelling Cycle of Trust. AI Soc. 2018, 33, 101–111.

82. Kingston, J. Artificial Intelligence and Legal Liability. arXiv, 2018; arXiv:1802.07782.

83. Hallevy, P.G. The Criminal Liability of Artificial Intelligence Entities. SSRN Electron. J. 2010.

84. Yano, K. How Artificial Intelligence Will Change HR. People Strategy, p. 42+. Academic OneFile. 2017. Available online: http://go.galegroup.com/ps/anonymous?id=GALE|A499598708&sid=googleScholar&v=2.1&it=r&linkaccess=abs&issn=19464606&p=AONE&sw=w (accessed on 3 November 2018).

85. Kirkpatrick, K. AI in contact centers. Commun. ACM 2017.

86. Lebeuf, C.; Storey, M.-A.; Zagalsky, A. Software Bots. IEEE Softw. 2018, 35, 18–23.

87. Ehrenfeld, J. Beyond sustainability: Why an all-consuming campaign to reduce unsustainability fails. ChangeThis 2006, 25, 1–17.

88. Rescher, N. Introduction to Value Theory (Upa Nicholas Rescher Series), 1st ed.; Prentice Hall: Englewood Cliffs, NJ, USA, 1969.

89. Rokeach, M. The Nature of Human Values; Free Press: New York, NY, USA, 1973; ISBN 0029267501.

90. Cheng, A.-S.; Fleischmann, K.R. Developing a meta-inventory of human values. Proc. Am. Soc. Inf. Sci. Technol. 2010, 47, 1–10.

91. Leiserowitz, A.A.; Kates, R.W.; Parris, T.M. Sustainability Values, Attitudes, and Behaviors: A Review of Multinational and Global Trends. Annu. Rev. Environ. Resour. 2006, 31, 413–444.

92. Lammers, J.; Stoker, J.I.; Rink, F.; Galinsky, A.D. To Have Control Over or to Be Free From Others? The Desire for Power Reflects a Need for Autonomy. Personal. Soc. Psychol. Bull. 2016, 42, 498–512.

93. Frieden, J.; Rogowski, R. The Impact of the International Economy on National Policies: An Analytical Overview; Cambridge University Press: Cambridge, UK, 1996.

94. Die Bundesregierung. Eckpunkte der Bundesregierung für Eine Strategie Künstliche Intelligenz. Bonn. Available online: https://www.bmbf.de/files/180718%20Eckpunkte_KI-Strategie%20final%20Layout.pdf (accessed on 27 July 2018).

95. Digital Single Market. EU Member States Sign up to Cooperate on Artificial Intelligence. Available online: https://ec.europa.eu/digital-single-market/en/news/eu-member-states-sign-cooperate-artificial-intelligence (accessed on 28 July 2018).

96. Gesellschaft für Informatik. Ethische Leitlinien. Available online: https://gi.de/ueber-uns/organisation/unsere-ethischen-leitlinien/ (accessed on 1 August 2018).

97. Gibney, E. The ethics of computer science: This researcher has a controversial proposal. Nature 2018.

98. Roberts, K. The impact of leisure on society. World Leis. J. 2000, 42, 3–10.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Khakurel, J.; Penzenstadler, B.; Porras, J.; Knutas, A.; Zhang, W. The Rise of Artificial Intelligence under the Lens of Sustainability. Technologies 2018, 6, 100. https://doi.org/10.3390/technologies6040100