Beyond Short-Frame Acoustic Features: Capturing Long-Term Speech Patterns for Depression Detection

Abstract

1. Introduction

- Novel global acoustic features that explicitly capture depression-related speech behaviors under a subject-independent experimental setting are designed and introduced.

- By combining the proposed features with conventional general-purpose acoustic features in classification experiments, enhanced classification performance is achieved; this demonstrates the effectiveness and robustness of the proposed feature design.

- Feature importance analysis reveals that acoustic features related to pause intervals play a particularly important role in depression classification.

2. Related Works

2.1. Studies on Depression Classification Using Machine Learning

2.2. Studies on Depression Classification Based on Acoustic Features

3. Dataset

4. Methods

4.1. Definition of Temporal Intervals in Speech Signals

4.2. Conventional Acoustic Features

4.2.1. openSMILE Features

4.2.2. Volume Level Features

4.3. Proposed Acoustic Features

4.3.1. Utterance Interval Feature Set: UL, UN, and UR

4.3.2. Pause Interval Feature Set: PL, PN, and PR

4.3.3. Response Interval Feature Set: RL, RN, and RR

4.3.4. Speech Density: SD

4.4. Construction of the Classification Model

4.4.1. Classification Algorithm

4.4.2. Feature Selection

4.4.3. Training and Classification

5. Experiments

5.1. Experimental Settings

5.2. Evaluation Metrics for Classification Performance

6. Results

6.1. Performance Comparison Between Linear and Nonlinear SVMs

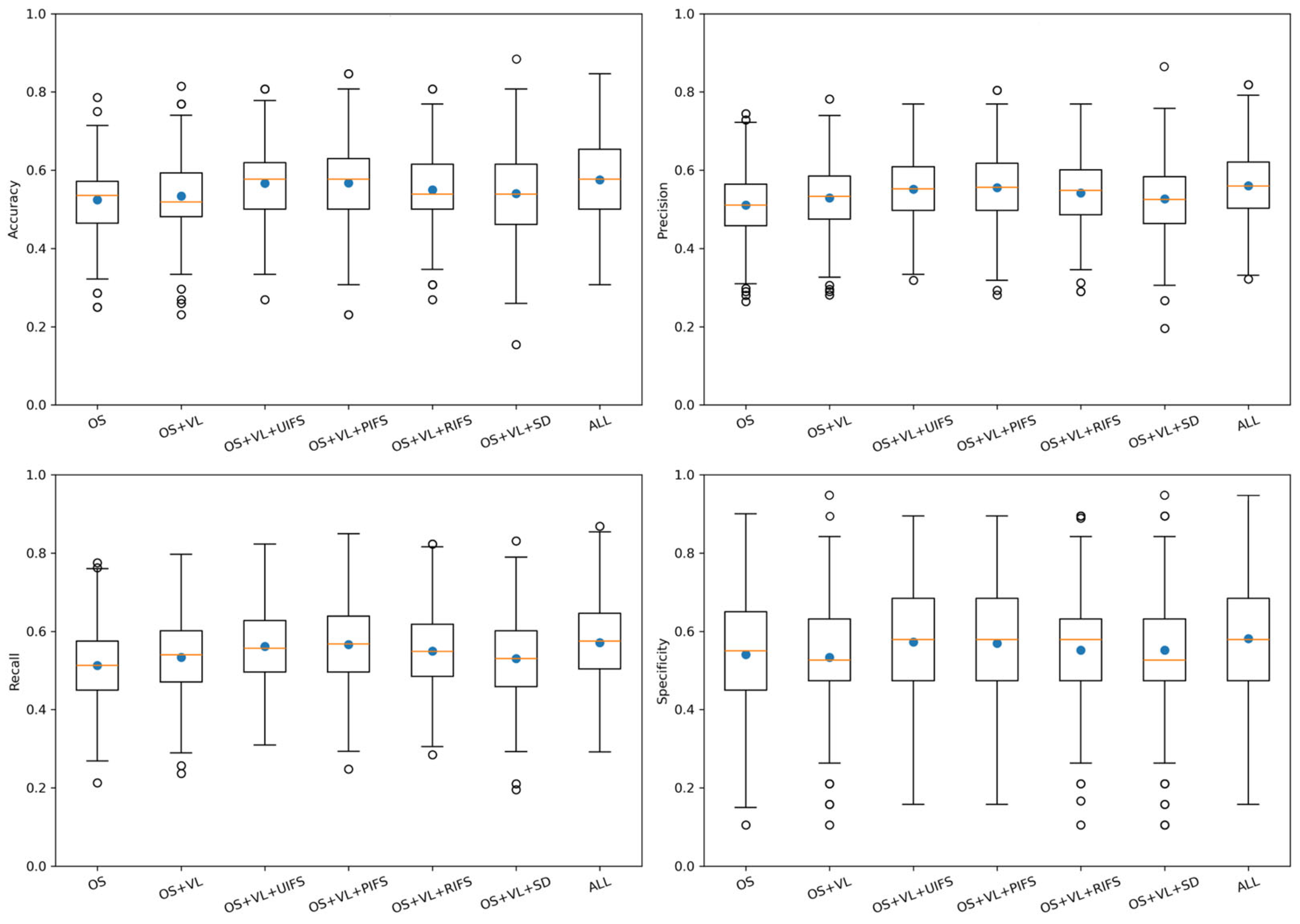

6.2. Classification Results Using Conventional and Proposed Acoustic Features

6.3. Feature Importance of the Best-Performing Feature Set

7. Discussion

7.1. Discussion on Performance Comparison Between Linear and Nonlinear SVMs

7.2. Classification Results of Conventional and Proposed Acoustic Features

7.2.1. Effectiveness of the Proposed Acoustic Features

7.2.2. Comparison of Classification Performance with Previous Studies

7.3. Limitations and Future Directions of the Study

- (1) The integration of multimodal information is a critical future direction for depression classification. Previous studies have reported that multimodal approaches combining speech with facial expressions, textual content, or physiological signals achieve improved classification performance [52,53]. Using multiple modalities can capture individual differences and symptom variability more effectively while mitigating the impact of noise, missing data, and modality-specific limitations. Future research should explore multimodal fusion strategies that combine acoustic features with complementary information sources to achieve more robust and reliable depression detection.

- (2) Data imbalance and limited sample size remain significant challenges. Herein, under-sampling was applied to address class imbalance, which inevitably reduced the number of training samples. In general, increasing the number of training samples improves model generalization and robustness [54]. Therefore, expanding the dataset via large-scale data collection, multi-institutional collaboration, or public dataset integration is an important direction for future research. Such data expansion would also facilitate the application of deep learning models, which typically require large-scale datasets to fully exploit their representational capacity.

- (3) The exploration of feature selection strategies was limited to a single method, RFECV. Although RFECV provides an effective framework for selecting discriminative features, alternative methods such as sequential forward selection (SFS) and sequential backward selection (SBS) may yield different feature subsets and different classification performance. A systematic comparison of multiple feature selection techniques would provide deeper insight into optimal feature subset construction and contribute to more robust model design for depression classification.

- (4) The SD feature showed lower discriminative performance than the other proposed feature sets. One possible reason is that the current implementation does not employ a dedicated voice activity detection (VAD) algorithm, so unvoiced consonants and background noise are not always separated correctly. Such misclassification could lead to an overestimation or underestimation of SD. Therefore, introducing a more robust VAD or a noise-resilient voicing detection method will be important for improving the SD feature in future work.

- (5) This study did not control for demographic factors such as gender, age, and speaker-specific characteristics, which may have influenced both the extracted acoustic features and the generalization ability of the trained model. In particular, clear differences in fundamental frequency and formant structure between male and female speakers are well known, and gender-dependent patterns in depressive speech have also been reported [55]. In addition, these demographic attributes have been identified as potential sources of bias in depression-detection models [18]. Therefore, future work should use datasets covering a wider range of demographic profiles and develop methods that appropriately separate and evaluate the influence of speaker-related factors on depression classification.
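As a rough illustration of the trade-off described in limitation (2), the following sketch balances two classes by random under-sampling. The text states only that under-sampling was applied; the random selection strategy, the function name `random_undersample`, and the toy subject identifiers are assumptions made for this example.

```python
import random
from collections import defaultdict


def random_undersample(samples, labels, seed=0):
    """Randomly drop samples so that every class matches the smallest class size.

    Assumption: the study's exact under-sampling procedure is not given here;
    this is plain random under-sampling for illustration only.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)

    # Size of the minority class determines how many samples each class keeps.
    n_min = min(len(group) for group in by_class.values())

    balanced = []
    for label, group in by_class.items():
        for sample in rng.sample(group, n_min):
            balanced.append((sample, label))
    rng.shuffle(balanced)

    return [s for s, _ in balanced], [l for _, l in balanced]


# Toy data (hypothetical): 7 nondepressed (0) and 3 depressed (1) recordings.
samples = [f"subject_{i:02d}" for i in range(10)]
labels = [0] * 7 + [1] * 3

balanced_samples, balanced_labels = random_undersample(samples, labels)
print(len(balanced_samples), balanced_labels.count(0), balanced_labels.count(1))
# prints "6 3 3"
```

In this toy case four of the seven majority-class recordings are discarded, which is the loss of training data that the limitation refers to.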

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| UIFS | Utterance interval feature set |

| PIFS | Pause interval feature set |

| RIFS | Response interval feature set |

| SD | Speech density |

| UL | Utterance length |

| UN | Utterance number |

| UR | Utterance ratio |

| PL | Pause length |

| PN | Pause number |

| PR | Pause ratio |

| RL | Response length |

| RN | Response number |

| RR | Response ratio |

| OS | openSMILE features |

| VL | Volume level features |

| LLD | Low-level descriptor |

| MFCC | Mel-frequency cepstral coefficient |

| PLP-CC | Perceptual linear prediction cepstral coefficients |

| LPC | Linear predictive coding |

| LSP | Line spectral pair |

| HNR | Harmonics-to-noise ratio |

| DCT | Discrete cosine transform |

| ACF | Autocorrelation function |

| SHS | Sub-harmonic summation |

| FFT | Fast Fourier transform |

| CENS | Chroma energy normalized statistics |

| SVM | Support vector machine |

| RBF | Radial basis function |

| RFECV | Recursive feature elimination with cross-validation |

| RFE | Recursive feature elimination |

| CV | Cross-validation |

| SFS | Sequential forward selection |

| SBS | Sequential backward selection |

| DNN | Deep neural network |

| CNN | Convolutional neural network |

| RNN | Recurrent neural network |

| LSTM | Long short-term memory |

| TP | True positive |

| TN | True negative |

| FP | False positive |

| FN | False negative |

| DAIC-WOZ | Distress Analysis Interview Corpus-Wizard of Oz |

References

1. Marx, W.; Penninx, B.W.J.H.; Solmi, M.; Furukawa, T.A.; Firth, J.; Carvalho, A.F.; Berk, M. Major depressive disorder. Nat. Rev. Dis. Primers 2023, 9, 44.
2. World Health Organization. Depression. Available online: https://www.who.int/news-room/fact-sheets/detail/depression (accessed on 15 November 2022).
3. Amanat, A.; Rizwan, M.; Javed, A.R.; Abdelhaq, M.; Alsaqour, R.; Pandya, S.; Uddin, M. Deep learning for depression detection from textual data. Electronics 2022, 11, 676.
4. Liu, D.; Feng, X.L.; Ahmed, F.; Shahid, M.; Guo, J. Detecting and measuring depression on social media using a machine learning approach: Systematic review. JMIR Ment. Health 2022, 9, e27244.
5. Tahir, W.B.; Khalid, S.; Almutairi, S.; Abohashrh, M.; Memon, S.A.; Khan, J. Depression detection in social media: A comprehensive review of machine learning and deep learning techniques. IEEE Access 2025, 13, 12789–12818.
6. He, L.; Jiang, D.; Sahli, H. Automatic depression analysis using dynamic facial appearance descriptor and Dirichlet process Fisher encoding. IEEE Trans. Multimed. 2019, 21, 1476–1486.
7. Cao, X.; Zhai, L.; Zhai, P.; Li, F.; He, T.; He, L. Deep learning based depression recognition through facial expression: A systematic review. Neurocomputing 2025, 627, 129605.
8. Wang, R.; Huang, J.; Zhang, J.; Liu, X.; Zhang, X.; Liu, Z.; Zhao, P.; Chen, S.; Sun, X. FacialPulse: An efficient RNN based depression detection via temporal facial landmarks. arXiv 2024, arXiv:2408.03499.
9. Asgari, M. Algorithms for Extracting Robust and Accurate Speech Features and Their Application in Clinical Domain. Ph.D. Dissertation, Oregon Health & Science University, Portland, OR, USA, 2014.
10. Maran, P.L.; Braquehais, M.D.; Vlaic, A.; Alonzo Castillo, M.T.; Vendrell Serres, J.; Ramos Quiroga, J.A.; Rodríguez Urrutia, A. Performance of automatic speech analysis in detecting depression: Systematic review and meta-analysis. JMIR Ment. Health 2025, 12, e67802.
11. Almaghrabi, S.A.; Clark, S.R.; Baumert, M. Bioacoustic features of depression: A review. Biomed. Signal Process. Control 2023, 85, 105020.
12. Esposito, A.; Raimo, G.; Maldonato, M.; Vogel, C.; Conson, M.; Cordasco, G. Behavioral sentiment analysis of depressive states. In Proceedings of the 11th IEEE International Conference on Cognitive Infocommunications (CogInfoCom 2020), Naples, Italy, 16–18 September 2020.
13. Degottex, G.; Kane, J.; Drugman, T.; Raitio, T.; Scherer, S. COVAREP: A collaborative voice analysis repository for speech technologies. In Proceedings of the ICASSP 2014, Florence, Italy, 4–9 May 2014.
14. Eyben, F.; Wöllmer, M.; Schuller, B. openSMILE: The Munich versatile and fast open-source audio feature extractor. In Proceedings of the ACM MM 2010, Florence, Italy, 25–29 October 2010.
15. Solieman, H.; Pustozerov, E.A. The detection of depression using multimodal models based on text and voice quality features. In Proceedings of the ElConRus 2021, St. Petersburg, Russia, 26–29 January 2021.
16. Higuchi, M.; Nakamura, M.; Shinohara, S.; Omiya, Y.; Takano, T.; Mizuguchi, D.; Sonota, N.; Toda, H.; Saito, T.; So, M.; et al. Detection of major depressive disorder based on a combination of voice features: An exploratory approach. Int. J. Environ. Res. Public Health 2022, 19, 11397.
17. Tasnim, M.; Stroulia, E. Detecting depression from voice. In Proceedings of the Advances in Artificial Intelligence: Proceedings of Canadian AI 2019, Kingston, ON, Canada, 28–31 May 2019.
18. Dumpala, S.H.; Rodriguez, S.; Rempel, S.; Uher, R.; Oore, S. Significance of speaker embeddings and temporal context for depression detection. arXiv 2021, arXiv:2107.13969.
19. Scherer, S.; Stratou, G.; Gratch, J.; Morency, L.P. Investigating voice quality as a speaker-independent indicator of depression and PTSD. In Proceedings of the Interspeech 2013, Lyon, France, 25–29 August 2013.
20. Wang, J.; Ravi, V.; Flint, J.; Alwan, A. Unsupervised instance discriminative learning for depression detection from speech signals. In Proceedings of the Interspeech 2022, Incheon, Republic of Korea, 18–22 September 2022.
21. Ishimaru, M.; Okada, Y.; Uchiyama, R.; Horiguchi, R.; Toyoshima, I. Classification of depression and its severity based on multiple audio features using a graphical convolutional neural network. Int. J. Environ. Res. Public Health 2023, 20, 1588.
22. Kim, A.Y.; Jang, E.H.; Lee, S.H.; Choi, K.Y.; Park, J.G.; Shin, H.C. Automatic depression detection using smartphone-based text-dependent speech signals: Deep convolutional neural network approach. J. Med. Internet Res. 2023, 25, e34474.
23. Wang, J.; Ravi, V.; Flint, J.; Alwan, A. Speechformer-CTC: Sequential modeling of depression detection with speech temporal classification. Speech Commun. 2024, 163, 103049.
24. Menne, F.; Dörr, F.; Schräder, J.; Tröger, J.; Habel, U.; König, A.; Wagels, L. The voice of depression: Speech features as biomarkers for major depressive disorder. BMC Psychiatry 2024, 24, 794.
25. Mundt, J.C.; Vogel, A.P.; Feltner, D.E.; Lenderking, W.R. Vocal acoustic biomarkers of depression severity and treatment response. Biol. Psychiatry 2012, 72, 580–587.
26. Trifu, R.N.; Nemeș, B.; Herta, D.C.; Bodea-Hategan, C.; Talaș, D.A.; Coman, H. Linguistic markers for major depressive disorder: A cross-sectional study using an automated procedure. Front. Psychol. 2024, 15, 1355734.
27. Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.
28. Sumali, B.; Mitsukura, Y.; Liang, K.C.; Yoshimura, M.; Kitazawa, M.; Takamiya, A.; Fujita, T.; Mimura, M.; Kishimoto, T. Speech quality feature analysis for classification of depression and dementia patients. Sensors 2020, 20, 3599.
29. Nasir, M.; Jati, A.; Shivakumar, P.G.; Chakravarthula, S.N.; Georgiou, P. Multimodal and multiresolution depression detection from speech and facial landmark features. In Proceedings of the AVEC 2016, Amsterdam, The Netherlands, 16 October 2016.
30. Rejaibi, E.; Komaty, A.; Meriaudeau, F.; Agrebi, S.; Othmani, A. MFCC-based recurrent neural network for automatic clinical depression recognition and assessment from speech. Biomed. Signal Process. Control 2022, 71, 103149.
31. Zhang, X.; Zhang, X.; Chen, W.; Li, C.; Yu, C. Improving speech depression detection using transfer learning with wav2vec 2.0 in low-resource environments. Sci. Rep. 2024, 14, 9543.
32. Wei, Y.; Qin, S.; Liu, F.; Liu, R.; Zhou, Y.; Chen, Y.; Xiong, X.; Zheng, W.; Ji, G.; Meng, Y.; et al. Acoustic-based machine learning approaches for depression detection in Chinese university students. Front. Public Health 2025, 13, 1561332.
33. Wang, Y.; Liang, L.; Zhang, Z.; Xu, X.; Liu, R.; Fang, H.; Zhang, R.; Wei, Y.; Liu, Z.; Zhu, R.; et al. Fast and accurate assessment of depression based on voice acoustic features: A cross-sectional and longitudinal study. Front. Psychiatry 2023, 14, 1195276.
34. Dumpala, S.H.; Dikaios, K.; Rodriguez, S.; Langley, R.; Rempel, S.; Uher, R.; Oore, S. Manifestation of depression in speech overlaps with characteristics used to represent and recognize speaker identity. Sci. Rep. 2023, 13, 11155.
35. Miao, X.; Li, Y.; Liu, Y.; Julian, I.N.; Guo, H. Fusing features of speech for depression classification based on higher-order spectral analysis. Speech Commun. 2022, 143, 46–56.
36. Lim, E.; Jhon, M.; Kim, J.W.; Kim, S.H.; Kim, S.; Yang, H.J. A lightweight approach based on cross-modality for depression detection. Comput. Biol. Med. 2025, 186, 109618.
37. Gratch, J.; Artstein, R.; Lucas, G.; Stratou, G.; Scherer, S.; Nazarian, A.; Wood, R.; Boberg, J.; DeVault, D.; Marsella, S.; et al. The distress analysis interview corpus of human and computer interviews. In Proceedings of the LREC 2014, Reykjavik, Iceland, 26–31 May 2014.
38. Kroenke, K.; Strine, T.W.; Spitzer, R.L.; Williams, J.B.W.; Berry, J.T.; Mokdad, A.H. The PHQ-8 as a measure of current depression in the general population. J. Affect. Disord. 2009, 114, 163–173.
39. Eyben, F.; Scherer, K.R.; Schuller, B.W.; Sundberg, J.; André, E.; Busso, C. The Geneva minimalistic acoustic parameter set (GeMAPS) for voice research and affective computing. IEEE Trans. Affect. Comput. 2016, 7, 190–202.
40. Toyoshima, I.; Okada, Y.; Ishimaru, M.; Uchiyama, R.; Tada, M. Multi-input speech emotion recognition model using mel spectrogram and GeMAPS. Sensors 2023, 23, 1743.
41. Atmaja, B.T.; Akagi, M. Dimensional speech emotion recognition from speech features and word embeddings by using multitask learning. APSIPA Trans. Signal Inf. Process. 2020, 9, e17.
42. Jordan, E.; Terrisse, R.; Lucarini, V.; Alrahabi, M.; Krebs, M.-O.; Desclés, J.; Lemey, C. Speech emotion recognition in mental health: Systematic review of voice-based applications. JMIR Ment. Health 2025, 12, e74260.
43. Wang, J.; Zhang, L.; Liu, T.; Pan, W.; Hu, B.; Zhu, T. Acoustic differences between healthy and depressed people: A cross-situation study. BMC Psychiatry 2019, 19, 300.
44. Schneider, K.; Leinweber, K.; Jamalabadi, H.; Teutenberg, L.; Brosch, K.; Pfarr, J.K.; Thomas-Odenthal, F.; Usemann, P.; Wroblewski, A.; Straube, B.; et al. Syntactic complexity and diversity of spontaneous speech production in schizophrenia spectrum and major depressive disorders. Schizophrenia 2023, 9, 63.
45. Mundt, J.C.; Snyder, P.J.; Cannizzaro, M.S.; Chappie, K.; Geralts, D.S. Voice acoustic measures of depression severity and treatment response collected via interactive voice response (IVR) technology. J. Neurolinguist. 2007, 20, 50–64.
46. Deurzen, P.A.M.; Buitelaar, J.K.; Brunnekreef, J.A.; Ormel, J.; Minderaa, R.B.; Hartman, C.A.; Huizink, A.C.; Speckens, A.E.M.; Oldehinkel, A.J.; Slaats-Willemse, D.I.E. Response time variability and response inhibition predict affective problems in adolescent girls, not in boys: The TRAILS study. Eur. Child Adolesc. Psychiatry 2012, 21, 277–287.
47. Mauch, M.; Dixon, S. PYIN: A fundamental frequency estimator using probabilistic threshold distributions. In Proceedings of the ICASSP 2014, Florence, Italy, 4–9 May 2014.
48. Guyon, I.; Weston, J.; Barnhill, S.; Vapnik, V. Gene selection for cancer classification using support vector machines. Mach. Learn. 2002, 46, 389–422.
49. Bennabi, D.; Vandel, P.; Papaxanthis, C.; Pozzo, T.; Haffen, E. Psychomotor retardation in depression: A systematic review of diagnostic, pathophysiologic, and therapeutic implications. Biomed. Res. Int. 2013, 2013, 158746.
50. Yamamoto, M.; Takamiya, A.; Sawada, K.; Yoshimura, M.; Kitazawa, M.; Liang, K.-C.; Fujita, T.; Mimura, M.; Kishimoto, T. Using speech recognition technology to investigate the association between timing-related speech features and depression severity. PLoS ONE 2020, 15, e0238726.
51. Cummins, N.; Vlasenko, B.; Sagha, H.; Schuller, B. Enhancing speech-based depression detection through gender dependent vowel-level formant features. In Proceedings of the Artificial Intelligence in Medicine (AIME 2017), Vienna, Austria, 30 May 2017.
52. Xu, C.; Chen, Y.; Tao, Y.; Xie, W.; Liu, X.; Lin, Y.; Liang, C.; Du, F.; Lin, Z.; Shi, C. Deep learning-based detection of depression by fusing auditory, visual and textual clues. J. Affect. Disord. 2025, 391, 119860.
53. Nurfidausi, A.F.; Mancini, E.; Torroni, P. TRI-DEP: A trimodal comparative study for depression detection using speech, text, and EEG. arXiv 2025, arXiv:2510.14922.
54. Qiu, J.; Wu, Q.; Ding, G.; Xu, Y.; Feng, S. A survey of machine learning for big data processing. EURASIP J. Adv. Signal Process. 2016, 2016, 67.
55. Esposito, A.; Esposito, A.M.; Likforman-Sulem, L.; Maldonato, N.M.; Vinciarelli, A. On the significance of speech pauses in depressive disorders: Results on read and spontaneous narratives. In Recent Advances in Nonlinear Speech Processing; Springer: Cham, Switzerland, 2016; pp. 73–82.

| Over the Last 2 Weeks, How Often Have You Been Bothered by Any of the Following Problems? | Not at All | Several Days | More Than Half the Days | Nearly Every Day |

|---|---|---|---|---|

| 1. Little interest or pleasure in doing things | 0 | 1 | 2 | 3 |

| 2. Feeling down, depressed, or hopeless | 0 | 1 | 2 | 3 |

| 3. Trouble falling or staying asleep or sleeping too much | 0 | 1 | 2 | 3 |

| 4. Feeling tired or having little energy | 0 | 1 | 2 | 3 |

| 5. Poor appetite or overeating | 0 | 1 | 2 | 3 |

| 6. Feeling bad about yourself or that you are a failure or have let yourself or your family down | 0 | 1 | 2 | 3 |

| 7. Trouble concentrating on things such as reading the newspaper or watching television | 0 | 1 | 2 | 3 |

| 8. Moving or speaking so slowly that other people could have noticed? Or the opposite, being so fidgety or restless that you have been moving around a lot more than usual | 0 | 1 | 2 | 3 |

| PHQ-8 Score | Severity Level | Assessment |
|---|---|---|
| 0–4 | Asymptomatic | Nondepressed |
| 5–9 | Mild | Nondepressed |
| 10–14 | Moderate | Depressed |
| 15–19 | Moderate to severe | Depressed |
| 20–24 | Severe | Depressed |
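The PHQ-8 tables above can be read as a simple scoring rule: the eight item scores (0 to 3 each) are summed to a total between 0 and 24, which is then mapped to a severity level and a binary label. The sketch below assumes the standard PHQ-8 bands (0–4, 5–9, 10–14, 15–19, 20–24) and the usual cutoff of 10 for the depressed class; the function names are hypothetical.

```python
def phq8_total(item_scores):
    """Sum the eight PHQ-8 item scores, each of which must be 0, 1, 2, or 3."""
    if len(item_scores) != 8:
        raise ValueError("PHQ-8 requires exactly eight item scores")
    if any(score not in (0, 1, 2, 3) for score in item_scores):
        raise ValueError("each item score must be 0, 1, 2, or 3")
    return sum(item_scores)


def phq8_severity(total):
    """Map a total score (0-24) to the severity level used in the table."""
    if not 0 <= total <= 24:
        raise ValueError("PHQ-8 total must lie between 0 and 24")
    # Upper bound of each band, in ascending order (standard PHQ-8 bands).
    bands = [
        (4, "Asymptomatic"),
        (9, "Mild"),
        (14, "Moderate"),
        (19, "Moderate to severe"),
        (24, "Severe"),
    ]
    for upper, level in bands:
        if total <= upper:
            return level


def phq8_assessment(total):
    """Binary label: totals of 10 or more are treated as depressed."""
    return "Depressed" if total >= 10 else "Nondepressed"


# Hypothetical questionnaire answers for one participant.
answers = [1, 2, 1, 2, 0, 1, 2, 1]
total = phq8_total(answers)
print(total, phq8_severity(total), phq8_assessment(total))
# prints "10 Moderate Depressed"
```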

| Feature Group | Description |

|---|---|

| Waveform | Zero crossings, extremes, and DC offset |

| Signal energy | Root mean square energy and logarithmic energy |

| Loudness | Intensity and approximate loudness |

| FFT spectrum | Phase and magnitude (lin, dB, and dBA scales) |

| ACF, Cepstrum | Autocorrelation and Cepstrum |

| Mel/Bark spectr. | Bands 0- |

| Semitone spectrum | FFT-based and filter-based |

| Cepstral | Cepstral feature, e.g., MFCC and PLP-CC |

| Pitch | via ACF and SHS methods; probability of voicing |

| Voice Quality | HNR, jitter, and shimmer |

| LPC | LPC coefficients, reflection coefficients, residual signal, line spectral pairs (LSPs) |

| Auditory | Auditory spectra and PLP coefficients |

| Formants | Center frequencies and bandwidths |

| Spectral | Energy in N user-defined bands, multiple roll-off points, centroid, entropy, flux, and relative position of maxima/minima |

| Tonal | CHROMA, CENS, CHROMA-based features |

| Category | Description |

|---|---|

| Extremes | Extreme values, positions of extrema, and ranges |

| Means | Arithmetic mean, quadratic mean, and geometric mean |

| Moments | Standard deviation, variance, kurtosis, and skewness |

| Percentiles | Percentiles and percentile ranges |

| Regression | Linear and quadratic approximation coefficients, regression error, and centroid |

| Peaks | Number of peaks, mean peak distance, and mean peak amplitude |

| Segments | Number of segments based on delta thresholding and mean segment length |

| Sample values | Contour values at configurable relative positions |

| Times/durations | Up- and down-level times, rise and fall times, and duration |

| Onsets | Number of onsets and relative position of first and last on-/offset |

| DCT | Coefficients of the discrete cosine transformation (DCT) |

| Zero crossings | Zero-crossing rate and mean-crossing rate |

| Acoustic Feature Set (Dimensionality) | Acoustic Feature | Description |
|---|---|---|
| UIFS (19) | UL | Total duration, mean, median, mode, count, skewness, kurtosis, and standard deviation |
| | UN | Total count of utterances |
| | UR | Ratio of the total utterance interval to the total duration of utterance and nonutterance intervals |
| PIFS (19) | PL | Total duration, mean, median, mode, count, skewness, kurtosis, and standard deviation |
| | PN | Total count of pauses |
| | PR | Ratio of the total pause interval to the total duration of utterance and nonutterance intervals |
| RIFS (19) | RL | Total duration, mean, median, mode, count, skewness, kurtosis, and standard deviation |
| | RN | Total count of responses |
| | RR | Ratio of the total response interval to the total duration of utterance and nonutterance intervals |
| SD (1) | SD | Occurrence frequency of speech components per unit time |
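To make the length, number, and ratio descriptors in the table above concrete, the following sketch computes them for one list of intervals. It is an illustration under stated assumptions, not the authors' extraction code: intervals are taken as `(start, end)` pairs in seconds, population moments are used for standard deviation, skewness, and excess kurtosis, and the mode is omitted because the binning of continuous durations is not specified here. The function and key names are hypothetical.

```python
import statistics


def interval_features(intervals, total_duration):
    """Summarize a list of (start, end) intervals, in seconds.

    total_duration is the combined duration of utterance and nonutterance
    intervals, used as the denominator of the ratio feature.
    """
    if not intervals:
        raise ValueError("at least one interval is required")
    durations = [end - start for start, end in intervals]
    n = len(durations)
    total = sum(durations)
    mean = statistics.fmean(durations)
    std = statistics.pstdev(durations)  # population standard deviation

    if std > 0:
        # Standardized third and fourth central moments (assumed definitions).
        skewness = sum((d - mean) ** 3 for d in durations) / (n * std ** 3)
        kurtosis = sum((d - mean) ** 4 for d in durations) / (n * std ** 4) - 3.0
    else:
        skewness = kurtosis = 0.0

    return {
        "total": total,                      # length feature: total duration
        "mean": mean,
        "median": statistics.median(durations),
        "std": std,
        "skewness": skewness,
        "kurtosis": kurtosis,
        "count": n,                          # number feature (e.g., UN)
        "ratio": total / total_duration,     # ratio feature (e.g., UR)
    }


# Hypothetical utterance intervals within a 10-second recording.
utterances = [(0.0, 2.0), (3.0, 4.0), (6.0, 9.0)]
features = interval_features(utterances, total_duration=10.0)
print(features["total"], features["count"], features["ratio"])
# prints "6.0 3 0.6"
```

The same function could be applied to pause or response intervals once those intervals have been segmented according to the definitions in Section 4.1.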

| Experimental Condition | OS | VL | UIFS | PIFS | RIFS | SD |
|---|---|---|---|---|---|---|
| OS (Baseline 1) | ○ | - | - | - | - | - |
| OS + VL (Baseline 2) | ○ | ○ | - | - | - | - |
| OS + VL + UIFS | ○ | ○ | ○ | - | - | - |
| OS + VL + PIFS | ○ | ○ | - | ○ | - | - |
| OS + VL + RIFS | ○ | ○ | - | - | ○ | - |
| OS + VL + SD | ○ | ○ | - | - | - | ○ |
| ALL | ○ | ○ | ○ | ○ | ○ | ○ |
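The experimental conditions in the table above amount to choosing which feature sets are concatenated into the classifier input. A minimal sketch of that bookkeeping follows. The dimensionalities of UIFS, PIFS, RIFS (19 each), and SD (1) come from the feature table; the OS and VL dimensionalities (3 and 2) are placeholders chosen for this example, and `CONDITIONS` and `build_feature_vector` are hypothetical names.

```python
# Feature sets included in each experimental condition (from the table).
CONDITIONS = {
    "OS (Baseline 1)": ["OS"],
    "OS + VL (Baseline 2)": ["OS", "VL"],
    "OS + VL + UIFS": ["OS", "VL", "UIFS"],
    "OS + VL + PIFS": ["OS", "VL", "PIFS"],
    "OS + VL + RIFS": ["OS", "VL", "RIFS"],
    "OS + VL + SD": ["OS", "VL", "SD"],
    "ALL": ["OS", "VL", "UIFS", "PIFS", "RIFS", "SD"],
}


def build_feature_vector(feature_sets, condition):
    """Concatenate one subject's feature sets in the order the condition lists them."""
    vector = []
    for name in CONDITIONS[condition]:
        vector.extend(feature_sets[name])
    return vector


# Placeholder features for one subject; OS and VL sizes are NOT the real ones.
subject_features = {
    "OS": [0.0] * 3,
    "VL": [0.0] * 2,
    "UIFS": [0.0] * 19,
    "PIFS": [0.0] * 19,
    "RIFS": [0.0] * 19,
    "SD": [0.0] * 1,
}

for condition in CONDITIONS:
    print(condition, len(build_feature_vector(subject_features, condition)))
```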

| Experimental Condition | Model | Accuracy | Precision | Recall | Specificity |
|---|---|---|---|---|---|
| OS | Linear | 0.54 [0.46–0.57] | 0.51 [0.45–0.56] | 0.51 [0.45–0.57] | 0.55 [0.45–0.65] |
| | Nonlinear | 0.54 [0.46–0.57] | 0.51 [0.46–0.56] | 0.51 [0.45–0.58] | 0.55 [0.45–0.65] |
| OS + VL | Linear | 0.54 [0.46–0.59] | 0.52 [0.46–0.58] | 0.52 [0.46–0.60] | 0.53 [0.47–0.63] |
| | Nonlinear | 0.52 [0.48–0.59] | 0.53 [0.47–0.59] | 0.54 [0.47–0.60] | 0.53 [0.47–0.63] |
| OS + VL + UIFS | Linear | 0.58 [0.50–0.65] | 0.56 [0.50–0.62] | 0.57 [0.50–0.64] | 0.58 [0.53–0.68] |
| | Nonlinear | 0.58 [0.50–0.62] | 0.55 [0.50–0.61] | 0.56 [0.50–0.63] | 0.58 [0.47–0.68] |
| OS + VL + PIFS | Linear | 0.58 [0.51–0.64] | 0.56 [0.50–0.61] | 0.57 [0.50–0.63] | 0.58 [0.53–0.68] |
| | Nonlinear | 0.58 [0.50–0.63] | 0.56 [0.50–0.62] | 0.57 [0.50–0.64] | 0.58 [0.47–0.68] |
| OS + VL + RIFS | Linear | 0.54 [0.50–0.62] | 0.54 [0.48–0.60] | 0.54 [0.48–0.61] | 0.58 [0.47–0.67] |
| | Nonlinear | 0.54 [0.50–0.62] | 0.55 [0.49–0.60] | 0.55 [0.49–0.62] | 0.58 [0.47–0.63] |
| OS + VL + SD | Linear | 0.54 [0.46–0.59] | 0.52 [0.46–0.58] | 0.52 [0.45–0.58] | 0.53 [0.47–0.63] |
| | Nonlinear | 0.54 [0.46–0.62] | 0.53 [0.46–0.58] | 0.53 [0.46–0.60] | 0.53 [0.47–0.63] |
| ALL | Linear | 0.58 [0.50–0.65] | 0.56 [0.49–0.62] | 0.57 [0.49–0.65] | 0.58 [0.53–0.68] |
| | Nonlinear | 0.58 [0.50–0.65] | 0.56 [0.50–0.62] | 0.58 [0.50–0.65] | 0.58 [0.47–0.68] |
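The four metric columns reported above can be related to the confusion-matrix counts listed in the abbreviations (TP, TN, FP, FN). The sketch below uses the standard binary definitions with the depressed class as positive, which is an assumption about the exact convention used; the counts are hypothetical, and the sketch does not cover how the bracketed ranges in the table were aggregated.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, precision, recall, and specificity from confusion counts."""

    def ratio(numerator, denominator):
        # Return NaN instead of raising when a denominator is zero.
        return numerator / denominator if denominator else float("nan")

    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),        # also called sensitivity
        "specificity": ratio(tn, tn + fp),
    }


# Hypothetical counts for a balanced test fold of 20 subjects.
metrics = classification_metrics(tp=6, tn=5, fp=5, fn=4)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Reporting specificity alongside recall matters here because a classifier that labels most subjects as depressed can reach high recall while its specificity collapses, as the SD-only row of the next table suggests.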

| Feature Set | Accuracy | Precision | Recall | Specificity |

|---|---|---|---|---|

| UIFS | 0.52 [0.48–0.60] | 0.52 [0.46–0.60] | 0.52 [0.47–0.60] | 0.55 [0.46–0.70] |

| PIFS | 0.50 [0.43–0.57] | 0.50 [0.43–0.57] | 0.50 [0.44–0.57] | 0.55 [0.40–0.64] |

| RIFS | 0.55 [0.45–0.60] | 0.55 [0.45–0.61] | 0.55 [0.45–0.60] | 0.55 [0.40–0.70] |

| SD | 0.50 [0.43–0.55] | 0.49 [0.38–0.60] | 0.50 [0.45–0.55] | 0.18 [0.09–0.30] |

| Author | Method | Feature | Accuracy | Precision | Recall | Specificity |

|---|---|---|---|---|---|---|

| S. Dumpala et al. [34] | DNN | COVAREP | 0.56 | |||

| CNN | COVAREP | 0.61 | ||||

| LSTM | COVAREP | 0.60 | ||||

| DNN | openSMILE | 0.59 | ||||

| CNN | openSMILE | 0.63 | ||||

| LSTM | openSMILE | 0.64 | ||||

| E. Lim et al. [36] | CNN | Mel spectrogram | 0.44 | 0.78 | ||

| Multimodal fusion (cross-modality) | Mel spectrogram | 0.59 | 0.60 | |||

| Multimodal fusion cross-modality model | HuBERT | 0.49 | 0.70 | |||

| Ours | Nonlinear SVM | ALL | 0.58 | 0.56 | 0.58 | 0.58 |

| Author | Method | Feature | Accuracy | Precision | Recall | Specificity |

| S. Dumpala et al. [34] | DNN | openSMILE + Speaker Embeddings | 0.65 | |||

| CNN | openSMILE + Speaker Embeddings | 0.72 | ||||

| LSTM | openSMILE + Speaker Embeddings | 0.74 | ||||

| E. Lim et al. [36] | Multimodal fusion (cross-modality model) | Mel spectrogram + Text | 0.67 | 0.70 | ||

| Multimodal fusion (cross-modality model) | HuBERT + Text | 0.66 | 0.70 | |||

| Ours | Nonlinear SVM | ALL | 0.58 | 0.56 | 0.58 | 0.58 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Fushimi, S.; Azani, M.A.; Chiba, M.; Okada, Y. Beyond Short-Frame Acoustic Features: Capturing Long-Term Speech Patterns for Depression Detection. Technologies 2026, 14, 198. https://doi.org/10.3390/technologies14040198