A comparative study of the SOTA CV models mentioned above, including their variants, was conducted to select the optimal one for the presented work. Initially, a transfer learning strategy was adopted by initializing the models with weights pretrained on the COCO dataset, which accelerated training and allowed the models to converge faster and obtain better results. During the training phase, the created dataset was used to train the models while varying the hyperparameters, backbones, and loss functions. Performance was evaluated in terms of accuracy and speed, using precision (P), recall (R), average precision (AP), and mean average precision (mAP) as metrics.
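As an illustration of how these metrics relate to one another, the following minimal sketch computes P and R from detection counts and the AP as the area under the precision-recall curve, using all-point interpolation. The function names and the interpolation choice are assumptions made for this example; the evaluation code used in this study may differ.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from true-positive, false-positive
    and false-negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def average_precision(is_true_positive, num_ground_truth):
    """Area under the precision-recall curve for one class.

    is_true_positive: detections sorted by descending confidence, True
    where a detection matches a ground-truth box at the chosen IoU threshold.
    num_ground_truth: number of labeled boxes for this class (assumed > 0).
    """
    recalls, precisions = [], []
    tp = fp = 0
    for hit in is_true_positive:
        tp += hit
        fp += not hit
        recalls.append(tp / num_ground_truth)
        precisions.append(tp / (tp + fp))
    # Interpolate: precision at recall r is the best precision at any recall >= r.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(ap_per_class):
    """mAP is the mean of the per-class AP values."""
    return sum(ap_per_class) / len(ap_per_class)
```

For example, `average_precision([True, False, True], num_ground_truth=2)` evaluates three ranked detections against two labeled objects.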

Additionally, modifications to model depth and layer sizes were explored by experimenting with architectures from compact to deep (e.g., from the baseline model to multiple variations, each defined in the subscript), resulting in noticeable improvements in detection performance.

In contrast, hyperparameters such as the batch size and optimizer type showed lower sensitivity and thus had minimal impact on the final results. Throughout the training experiments, the models were iteratively refined by changing the epoch count, backbone architecture, and auxiliary parameters. Each version, identified by the subscript on the model name, reflects a progressively improved configuration, validated through consistent improvements in evaluation metrics such as the mAP, precision, and recall. The versions reported in the coming sections represent different training configurations and fine-tuning adjustments relative to a baseline model. This tuning process follows the empirical sensitivity trends observed in DL research [17].

2.3.2. You Only Look Once (YOLO)

The YOLO algorithm was introduced in [21]. Since then, several variants of the YOLO algorithm have been proposed. The variants introduced improvements to the original YOLO algorithm, such as anchor boxes, skip connections, and feature pyramid networks, leading to further improvements in detection accuracy and speed. YOLO works by dividing an input image into a grid and predicting the bounding boxes, class probabilities, and objectness scores for each cell in the grid [22,23,24]. YOLO differs from other object detection algorithms that use region proposal networks (RPNs), which can be computationally expensive [25].
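The grid-based formulation can be illustrated with a short sketch that maps the center of a bounding box to the grid cell containing it, together with the offset of the center inside that cell. The function names and the 416 × 416 image with a 13 × 13 grid in the usage note are assumptions chosen for illustration only.

```python
def responsible_cell(cx, cy, img_w, img_h, grid_size):
    """Return (row, col) of the grid cell that contains the box center.

    cx, cy are the center coordinates in pixels.
    """
    col = min(int(cx / img_w * grid_size), grid_size - 1)
    row = min(int(cy / img_h * grid_size), grid_size - 1)
    return row, col


def cell_offsets(cx, cy, img_w, img_h, grid_size):
    """Return the center position relative to its cell, each in [0, 1]."""
    row, col = responsible_cell(cx, cy, img_w, img_h, grid_size)
    x_off = cx / img_w * grid_size - col
    y_off = cy / img_h * grid_size - row
    return x_off, y_off
```

With a 416 × 416 image and a 13 × 13 grid, `responsible_cell(208, 104, 416, 416, 13)` places a box centered at (208, 104) in row 3, column 6.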

YOLOv3 uses a 53-layer Darknet backbone as a feature extractor, with residual connections that improve the quality of the extracted features and mitigate the vanishing gradient problem [22]. The network then applies upsampling and feature-map concatenation (skip fusion) to increase the spatial resolution of the feature grids passed to the scale-prediction layers, enabling better detection of smaller objects. In these prediction layers, na denotes the number of anchors per scale and nc denotes the number of classes, so each output tensor has na × (nc + 5) channels per grid cell, as shown in Figure 6 and Figure 7. In total, YOLOv3 processes input images through a 106-layer convolutional network that combines the 53-layer Darknet backbone with additional detection layers. It generates multi-scale outputs for bounding boxes, described by objectness scores, coordinates, and class labels, and uses the IoU together with non-maximum suppression (NMS) to refine overlapping predictions.
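The refinement of overlapping predictions can be sketched as follows: the IoU measures the overlap between two boxes, and a greedy NMS keeps the highest-scoring box and discards lower-scoring boxes that overlap it too strongly. This is a generic sketch rather than the implementation used in this study; the corner-based box format and the 0.45 threshold are assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.45):
    """Greedy NMS: return the indices of the boxes that are kept."""
    # Visit boxes from the highest to the lowest confidence score.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap an already kept box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep
```

In practice, NMS is usually applied per class, so that overlapping boxes of different classes do not suppress each other.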

The YOLOv3 architecture has no pooling layers. Instead, it downsamples the feature maps using convolutional layers with a stride of two. This helps the model retain low-level features and improves its ability to detect smaller objects. Although the full YOLOv3 model offers strong performance, its computational demands make it a poor fit for real-time deployment on edge boards. A smaller model with the same architecture (Tiny) can achieve real-time object detection with minimal degradation in accuracy, which makes smaller models a more suitable option for edge devices where computational resources are limited.
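The effect of stride-two downsampling on the grid size, and the na × (nc + 5) channel count of each prediction layer, can be checked with a few lines of arithmetic. The kernel size of 3, padding of 1, 416-pixel input, three anchors, and 80 classes used here are assumptions for illustration and are not taken from the trained models.

```python
def conv_output_size(size, kernel=3, stride=2, padding=1):
    """Spatial output size of a convolution: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def head_channels(na, nc):
    """Channels per grid cell: na anchors, each with 4 box coordinates,
    1 objectness score, and nc class scores."""
    return na * (nc + 5)


def downsampling_chain(size, steps=5):
    """Feature-map sizes after successive stride-two convolutions."""
    sizes = [size]
    for _ in range(steps):
        size = conv_output_size(size)
        sizes.append(size)
    return sizes
```

Under these assumptions, `downsampling_chain(416)` gives 416, 208, 104, 52, 26, and 13, so the three coarsest maps correspond to strides of 8, 16, and 32, and `head_channels(3, 80)` gives 255 channels per cell.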

SGD and Adam were used as the training optimizers, the batch size was selected to be between 12 and 32, and the number of training epochs varied between 100 and 300.

Table 4 presents the training results for the YOLOv3 (

) model, which was trained using SGD for up to 170 epochs. Early stopping was applied after 30 epochs without improvement, which slightly enhanced the training performance, yielding a P of 97.5%, R of 97.6%, mAP at an IoU threshold of 0.5 (mAP@50) of 96.97%, and mAP averaged over IoU thresholds from 0.5 to 0.95 in steps of 0.05 (mAP@50:95) of 80.6%.
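The early stopping rule and the IoU thresholds behind mAP@50:95 can be sketched as follows. The class and function names are hypothetical, and the monitored quantity is assumed to be a validation metric for which higher is better; the training framework used in this study may track a different fitness value.

```python
class EarlyStopping:
    """Stop training when the metric has not improved for `patience` epochs."""

    def __init__(self, patience=30):
        self.patience = patience
        self.best = float("-inf")
        self.best_epoch = -1
        self.stale = 0  # epochs since the last improvement

    def step(self, epoch, metric):
        """Record one validation result; return True when training should stop."""
        if metric > self.best:
            self.best, self.best_epoch, self.stale = metric, epoch, 0
        else:
            self.stale += 1
        return self.stale >= self.patience


def iou_thresholds():
    """IoU thresholds averaged by mAP@50:95: 0.50, 0.55, ..., 0.95."""
    return [round(0.5 + 0.05 * i, 2) for i in range(10)]
```

In a training loop, `step` would be called once per epoch after validation, and the weights saved at `best_epoch` would be kept as the final model.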

The YOLOv4 model architecture uses a backbone of CSPDarknet-53 or Darknet-53, which is responsible for extracting the features of the input image through five Resblocks, each consisting of a series of convolution layers with sizes of 1 × 1 and 3 × 3 [23]. Additionally, each convolution layer is accompanied by a batch normalization (BN) layer and a leaky-ReLU activation layer.

The architecture of YOLOv4 is shown in Figure 8, which highlights the model’s use of CSP or Darknet-53 as the backbone, enhanced by Mish activation functions, multi-scale detection, and advanced augmentation techniques. This configuration enables the model to achieve high accuracy, especially in detecting small objects.
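For reference, the activation functions named in this section can be written in a few lines. The sketch below gives scalar versions of leaky-ReLU, Mish, and SiLU (the latter is mentioned later in this section for YOLOv7). The negative slope of 0.1 for leaky-ReLU is an assumption, since the value used in the trained models is not stated here.

```python
import math


def leaky_relu(x, negative_slope=0.1):
    """Pass positive inputs unchanged and scale negative inputs by a small slope."""
    return x if x > 0 else negative_slope * x


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def mish(x):
    """Mish: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


def silu(x):
    """SiLU (also called swish): x * sigmoid(x)."""
    return x * sigmoid(x)
```

Unlike leaky-ReLU, Mish and SiLU are smooth and non-monotonic: for moderately negative inputs they return small negative values rather than a linearly scaled input.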

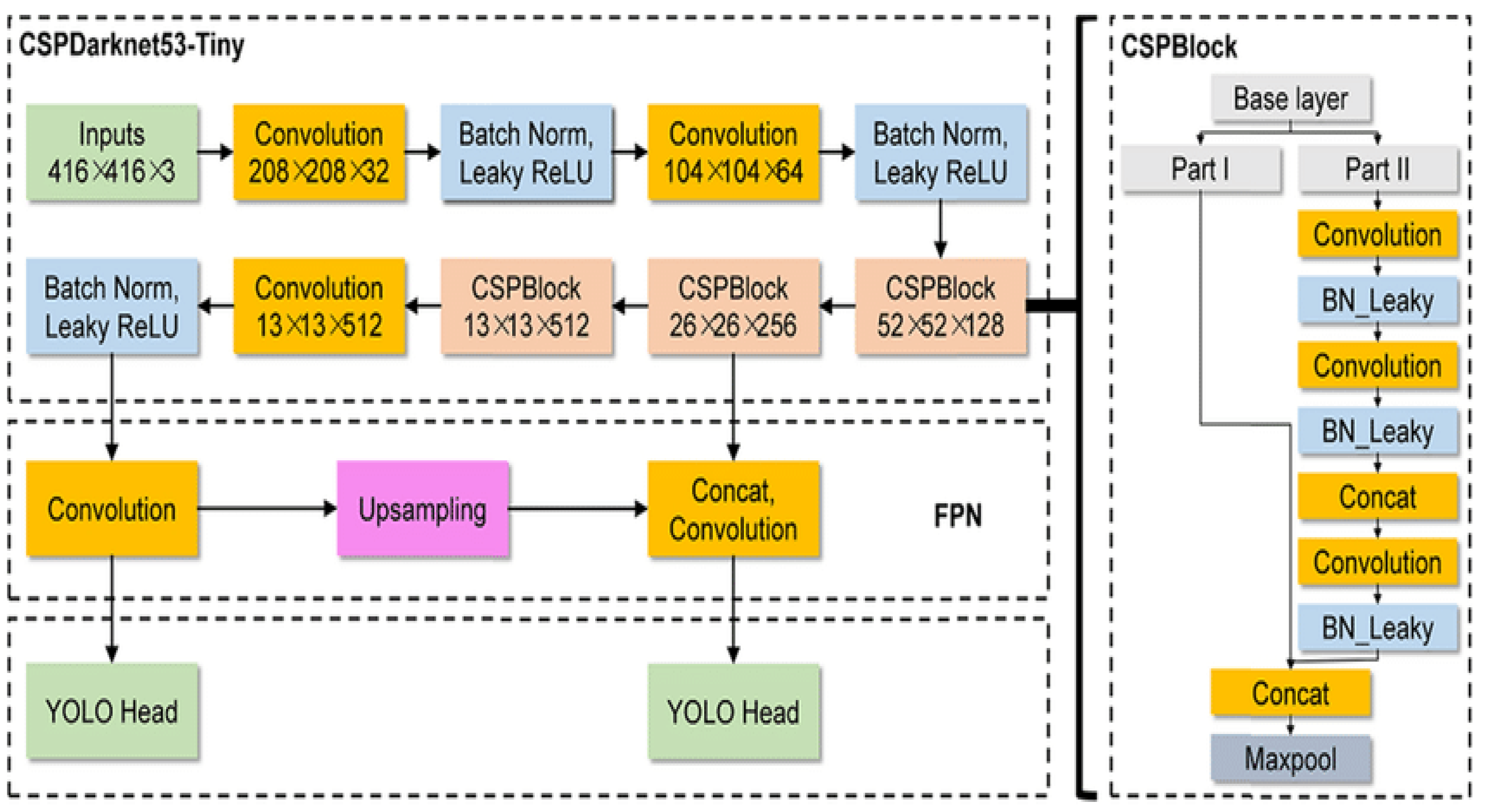

Figure 9 illustrates the YOLOv4-Tiny model, a streamlined version that retains the core principles of YOLOv4 but reduces the number of layers and computations for faster inference, which makes it more suitable for deployment on edge devices with limited resources. Both models employ three detection heads at different scales.

In terms of object detection, YOLOv4 significantly outperformed YOLOv3, especially in small object detection, thanks to advanced data augmentation techniques such as mosaic augmentation and self-adversarial training. These techniques allow YOLOv4 to generalize better across different scales and environments, leading to improved accuracy. The YOLOv4 models were trained with SGD for 100 epochs with CSP as the backbone and leaky-ReLU as the activation function. The YOLOv4 model (

) showed the best performance, with a P of 65.1%, R of 97.5%, mAP@50 of 96.97%, and mAP@50:95 of 78.4%, as shown in Table 5.

The YOLOv5 series offers different architecture sizes (YOLOv5x, YOLOv5l, YOLOv5m, YOLOv5s, and YOLOv5n) with varying network architectures and floating-point operation (FLOP) counts [24]. For our case study, the smaller models (YOLOv5m, YOLOv5s, and YOLOv5n) were chosen due to their suitability for training and deployment on edge devices. A key feature that sets YOLOv5 apart from previous versions, including YOLOv4, is its automatic learning of bounding box anchors. In previous models, anchors were manually predefined or tuned for a specific dataset, whereas YOLOv5 automatically learns the optimal anchor box sizes from the training data.
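One common way to derive anchors from a dataset is to cluster the widths and heights of the labeled boxes with k-means, using the IoU as the similarity measure. The sketch below illustrates this idea only; it is not the YOLOv5 routine, which may differ in its details, and the function names and the deterministic initialization are assumptions made for this example.

```python
def wh_iou(a, b):
    """IoU of two boxes given as (width, height) and aligned at one corner."""
    inter = min(a[0], b[0]) * min(a[1], b[1])
    return inter / (a[0] * a[1] + b[0] * b[1] - inter)


def kmeans_anchors(box_sizes, k, iterations=50):
    """Cluster (width, height) pairs into k anchors, sorted by area."""
    # Deterministic initialization: pick k boxes evenly spaced by area.
    ordered = sorted(box_sizes, key=lambda wh: wh[0] * wh[1])
    step = len(ordered) / k
    anchors = [ordered[int((i + 0.5) * step)] for i in range(k)]
    for _ in range(iterations):
        # Assign every box to the anchor with which it has the highest IoU.
        clusters = [[] for _ in range(k)]
        for wh in box_sizes:
            best = max(range(k), key=lambda j: wh_iou(wh, anchors[j]))
            clusters[best].append(wh)
        # Move each anchor to the mean size of its assigned boxes.
        new_anchors = []
        for j, members in enumerate(clusters):
            if members:
                mean_w = sum(w for w, _ in members) / len(members)
                mean_h = sum(h for _, h in members) / len(members)
                new_anchors.append((mean_w, mean_h))
            else:
                new_anchors.append(anchors[j])  # keep an empty cluster's anchor
        if new_anchors == anchors:
            break  # assignments have stabilized
        anchors = new_anchors
    return sorted(anchors, key=lambda wh: wh[0] * wh[1])
```

Calling `kmeans_anchors` on the (width, height) pairs of all labeled boxes, with k equal to the total number of anchors across the detection heads, yields anchors matched to the object sizes in the dataset.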

The network architecture for YOLOv5 (s,m) is structurally similar to that of the YOLOv5n model, as shown in Figure 10 and Figure 11. However, YOLOv5 (s,m) models incorporate an increased number of modules, leading to a more complex architecture compared with YOLOv5n. This complexity results in higher accuracy at the cost of a reduced inference speed.

As shown in Table 6, the model trained with SGD for 300 epochs with a batch size of 64 (YOLOv5n (

)) showed the best performance among the smaller models, with a P of 98.07%, R of 95.46%, mAP@50 of 96.46%, and mAP@50:95 of 79.25%. Meanwhile, as shown in Table 7, the model trained with SGD for 100 epochs with a batch size of 16 (YOLOv5m (

)) had the best performance, with a P of 96.96%, R of 96.61%, mAP@50 of 97.33%, and mAP@50:95 of 81.69%. This was the best accuracy achieved among the larger YOLOv5 models.

The YOLOv7 model uses Bag of Freebies and Bag of Specials, as in YOLOv4 [28]. Similar to YOLOv4, the architecture optimizes performance without increasing the inference time. At its core, YOLOv7 employs CSPDarknet-53 as its backbone network, serving as the primary feature extraction engine that processes input images through multiple convolutional layers enhanced with residual connections. These residual connections play a crucial role in maintaining gradient flow throughout the deep network while facilitating effective feature propagation.

In addition to the backbone, YOLOv7 introduces two blocks, namely the Attention-Enhanced Local Aggregation Network (a-ELAN) and Bottleneck-Enhanced a-ELAN (b-E-ELAN), as shown in Figure 12. These blocks enhance feature extraction and improve object detection accuracy. The a-ELAN block applies an attention mechanism to focus on important features while reducing the effect of irrelevant regions in the image. These enhancements enable YOLOv7 to detect fine-grained details in small objects more effectively, outperforming previously used YOLO architectures in terms of both speed and accuracy.

The architecture of YOLOv7, shown in Figure 13, incorporates CSPDarknet-53 as the backbone, along with SiLU activation and multi-scale detection techniques to improve region detection accuracy. Additionally, YOLOv7 uses data augmentation techniques such as mosaic augmentation and self-adversarial training (SAT), as introduced with YOLOv4, which help the model generalize better across various object scales [30,31]. The inclusion of a-ELAN and b-E-ELAN, as shown in Figure 12, further improves YOLOv7’s ability to focus on the critical regions of the image, ensuring higher accuracy. This combination of architectural features and techniques enables YOLOv7 to achieve higher performance compared with its predecessors.

YOLOv7 was trained using the Adam optimizer for 300 epochs with CSPDarknet-53 as the backbone and SiLU as the activation function. The YOLOv7 model (

) achieved impressive performance, with a P of 97.8%, R of 93.7%, mAP@50 of 95.75%, and mAP@50:95 of 75.21%, as shown in Table 8.

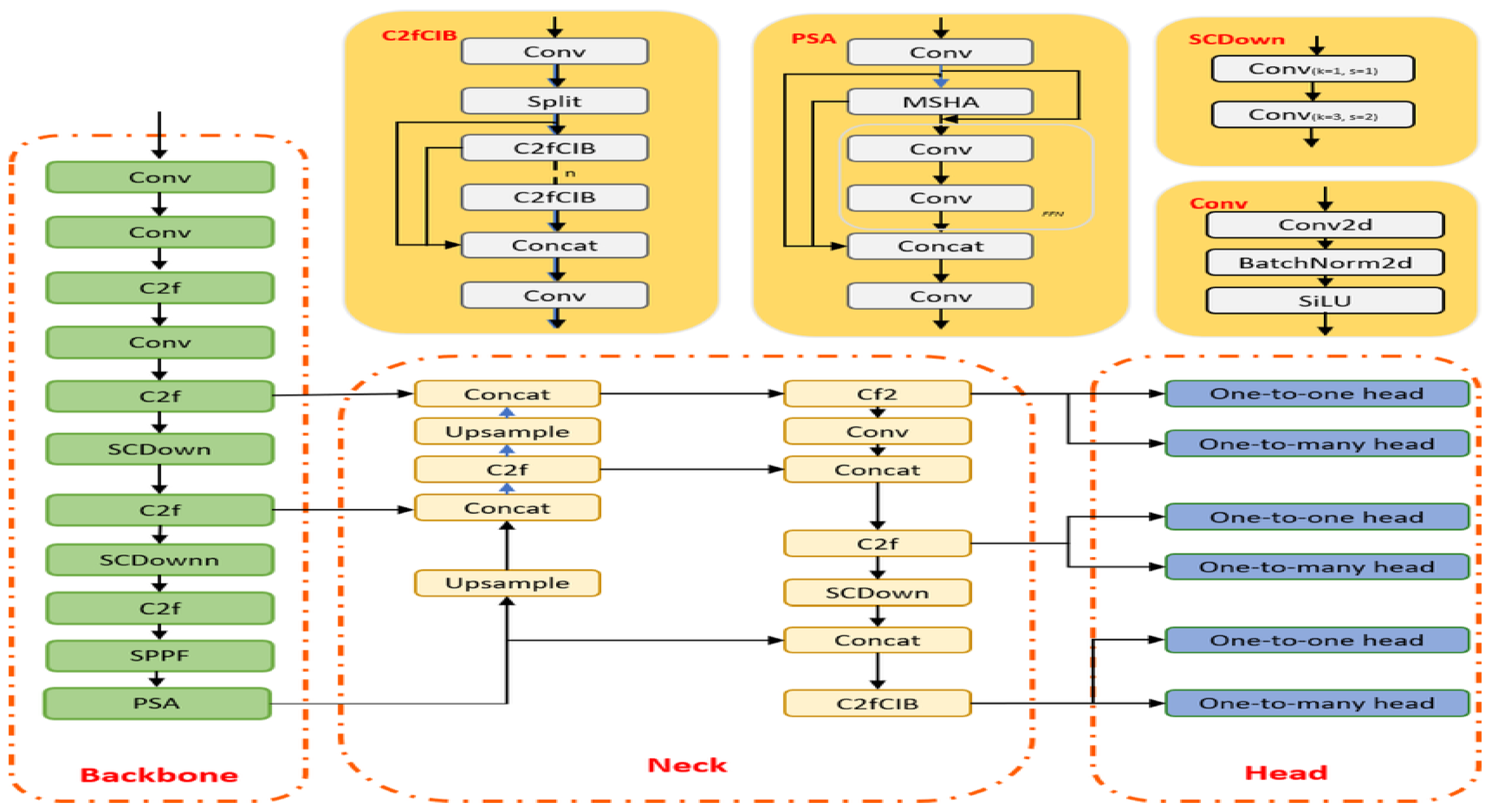

YOLOv10 has a different architecture compared with other YOLO models [32]. YOLOv10’s backbone is based on a more advanced and efficient CSPDarknet-63 backbone, an enhancement over the CSPDarknet-53 backbone used in earlier versions, which extracts features from the input image through a series of convolution layers, using efficient residual connections to enhance gradient flow and feature propagation. Like its predecessors, YOLOv10 employs BN layers and activation functions such as leaky-ReLU to ensure stable training.

The architecture of YOLOv10, as shown in Figure 14, highlights the use of CSPDarknet-63 as the backbone, alongside Mish activation functions and advanced augmentation techniques. YOLOv10 introduces innovative techniques such as a new attention module to enhance feature extraction and improve the model’s accuracy. Moreover, the model uses optimized multi-scale detection for better performance on both small and large objects. The YOLOv10-Nano model, depicted in Figure 15, is a lighter and more efficient variation of YOLOv10 designed for deployment on edge devices with limited computational resources. It reduces the number of layers and operations to achieve faster inference times while still retaining high accuracy for small object detection tasks. Similarly, the YOLOv10-Small version maintains the core principles of YOLOv10 but offers a balance between computational efficiency and performance. Both the Nano and Small models use multiple detection heads at different scales to maximize detection accuracy across various object sizes.

YOLOv10 uses distributed focal loss in the training phase to address class imbalance by dynamically weighting the contribution of easy and hard examples. This approach enables the model to focus more effectively on difficult-to-detect objects while reducing the emphasis on well-classified ones. This loss function was not implemented in the previously discussed YOLO models (YOLOv3, YOLOv4, YOLOv5, and YOLOv7), so it represents a significant advancement in YOLOv10’s optimization strategy.
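The weighting of easy and hard examples described above can be illustrated with the widely used binary focal loss, FL(p_t) = −α_t (1 − p_t)^γ log(p_t). This sketch shows the general down-weighting mechanism only and is not necessarily the exact loss used to train the YOLOv10 models here; the default values γ = 2 and α = 0.25 are common choices and are assumptions.

```python
import math


def focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Binary focal loss for a predicted probability p and a label y in {0, 1}.

    The factor (1 - p_t) ** gamma shrinks the loss of well-classified
    examples, so hard examples dominate the total loss.
    """
    p_t = p if y == 1 else 1.0 - p              # probability of the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class-balancing weight
    p_t = min(max(p_t, 1e-12), 1.0)             # avoid log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)
```

With γ = 0 the modulating factor disappears and the expression reduces to an α-weighted cross-entropy, which makes the effect of γ easy to compare on the same predictions.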

The YOLOv10 models were trained with the Adam optimizer for 100 epochs, using CSPDarknet-63 backbone variants with Mish activation functions. The YOLOv10n model (

) achieved impressive performance, with a P of 98.1%, R of 94.5%, mAP@50 of 98.65%, and mAP@50:95 of 81.2% using the CSPDarknet-63 tiny backbone at a 320 × 320 input resolution with a batch size of 32, as shown in Table 9.