Evaluating the Potential of Decision Tree Modeling to Augment Return-to-Duty Decisions Following Major Limb Injury

Abstract

1. Introduction

2. Methods

2.1. Participants

2.2. Data Collection

2.3. Variables

2.4. Data Analysis

2.5. Statistical Analysis

3. Results

3.1. Unpruned Model

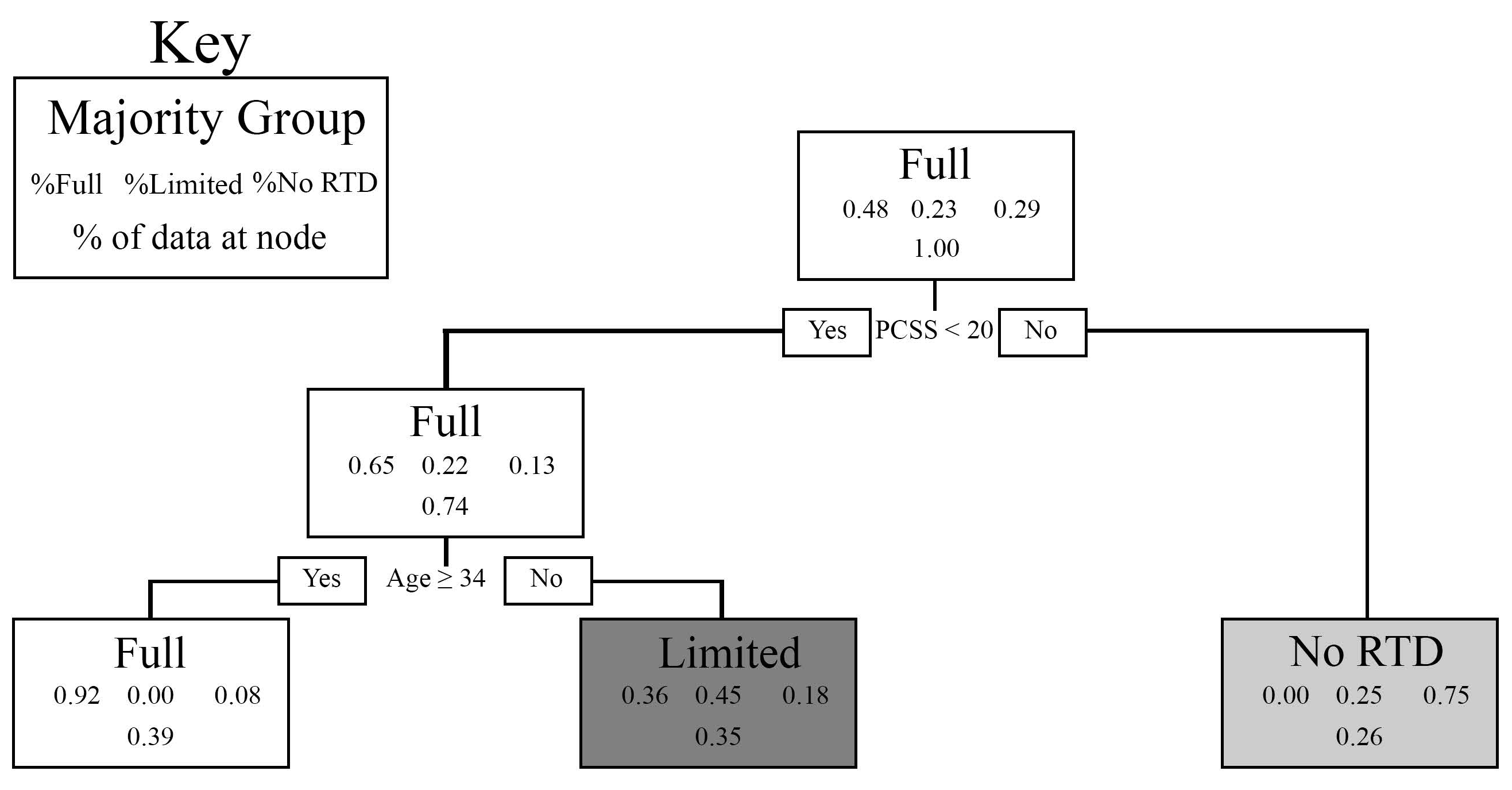

3.2. Finalized Pruned Model

3.3. Variable Importance Scores

4. Discussion

Study Limitations

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| GSW SF T-Score | PROMIS General Self-Efficacy Score |
| ITB | In-the-Bag |
| IRB | Institutional Review Board |
| NASA | National Aeronautics and Space Administration |
| O&P | Orthotic and Prosthetic |
| OOB | Out-of-the-Bag |
| PEB | Physical Evaluation Board |
| PCL-M | PTSD Checklist—Military |
| PCSS | Post-Concussion Symptom Survey |
| PROMIS | Patient-Reported Outcomes Measurement Information System |
| PTSD | Post-Traumatic Stress Disorder |
| REDOp | Readiness Evaluation during Simulated Dismounted Operations |
| RTD | Return to duty |
| SM | Service member |
| SPS | Stand–Prone–Stand |
| SKS-(R/L) | Stand–Kneel–Stand (Right or Left Leg) |
| VIMP | Variable Importance |


| Category | Variable | Study 1 (n = 14) | Study 2 (n = 17) |
|---|---|---|---|
| Military | Years of service prior to injury | 14 | 17 |
| | Desire to return to duty | 14 | 17 |
| | Officer or Enlisted | 14 | 17 |
| | Combat or Support role | 14 | 17 |
| | Branch | 14 | 17 |
| Demographic | Height | 14 | 17 |
| | Weight | 14 | 17 |
| | Age | 14 | 17 |
| | Sex | 14 | 17 |
| | Education | 14 | 17 |
| | Race | 14 | 17 |
| Injury | Change in injury status since assessment | 14 | 17 |
| | Injury Side | 14 | 17 |
| | Type (amputation or limb salvage) | 14 | 17 |
| | Joint injured | 14 | 17 |
| | Nerve involvement | 14 | 17 |
| | Time Since Injury | 14 | 17 |
| | Time to first ambulation after injury | 14 | 17 |
| Clinical Outcomes | Stand–Prone–Stand (SPS) | 14 | 17 |
| | Stand–Kneel–Stand Left (SKS-L) | 14 | 16 |
| | Stand–Kneel–Stand Right (SKS-R) | 14 | 16 |
| | Four Square Step Test | 8 | 0 |
| | PROMIS—Self-Efficacy | 0 | 17 |
| | PROMIS—Pain Interference | 0 | 17 |
| | PROMIS—Pain Behavior | 0 | 17 |
| | PROMIS—Cognitive Function | 0 | 17 |
| | PTSD Checklist—Military | 14 | 17 |
| | Post-Concussion Symptom Scale (PCSS) | 14 | 17 |
| | Lower Extremity Functional Scale | 14 | 17 |
| | Modified Oswestry Low Back Pain Questionnaire | 0 | 17 |
| | Roland–Morris Disability Questionnaire | 0 | 17 |
| | NASA Task Load Index | 13 | 0 |
| | Veterans RAND 36 Physical | 14 | 0 |
| | Veterans RAND 36 Mental | 14 | 0 |
| | Baseline Pain Level | 14 | 17 |
| REDOp | Distance Completed | 14 | 17 |
| | Shooting Accuracy | 14 | 17 |
| | Shooting Precision | 14 | 17 |
| | Reaction Time | 14 | 17 |
| | Angular Momentum Frontal Plane | 14 | 11 |
| | Angular Momentum Transverse Plane | 14 | 11 |
| | Angular Momentum Sagittal Plane | 14 | 11 |

| Dependent Variable | VIMP |
|---|---|
| Post-Concussion Symptom Survey (PCSS) | 4.809 |
| PTSD Checklist—Military (PCL-M) | 3.438 |
| Age | 2.997 |
| Stand–Prone–Stand | 2.621 |
| Pain at Baseline | 1.803 |
| Lower Extremity Functional Scale | 1.803 |
| Time Since Injury | 1.635 |
| Weight | 1.202 |
| Distance Completed | 1.09 |
| Height | 1.09 |
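
To make the VIMP scores easier to compare, they can be expressed as shares of their total. The sketch below is illustrative only: rescaling to percentages is an assumed convention that the source does not describe, the shares are relative to the ten variables listed here rather than to every candidate variable, and the names `vimp` and `relative_importance` are hypothetical.

```python
# VIMP scores copied from the table above (variable name -> score).
vimp = {
    "Post-Concussion Symptom Survey (PCSS)": 4.809,
    "PTSD Checklist—Military (PCL-M)": 3.438,
    "Age": 2.997,
    "Stand–Prone–Stand": 2.621,
    "Pain at Baseline": 1.803,
    "Lower Extremity Functional Scale": 1.803,
    "Time Since Injury": 1.635,
    "Weight": 1.202,
    "Distance Completed": 1.09,
    "Height": 1.09,
}


def relative_importance(scores):
    """Return each score as a percentage of the sum of the listed scores.

    Assumption: this rescaling is for illustration only; it is not a
    procedure reported in the source.
    """
    total = sum(scores.values())
    return {name: 100.0 * value / total for name, value in scores.items()}


shares = relative_importance(vimp)

# Print the variables from largest to smallest share.
for name, pct in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:45s} {pct:5.1f}%")
```

Under this rescaling, the two symptom questionnaires (PCSS and PCL-M) together account for a little over a third of the summed importance of the listed variables.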

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Sheehan, R.C.; Levine, N.A.; King, D.; Childers, W.L.; Fergason, J.; Loftsgaarden, M.; Alderete, J. Evaluating the Potential of Decision Tree Modeling to Augment Return-to-Duty Decisions Following Major Limb Injury. Technologies 2026, 14, 107. https://doi.org/10.3390/technologies14020107
