Developing Oral Comprehension Skills with Students with Limited or Interrupted Formal Education

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Intervention

2.2.1. Video Material

2.2.2. Procedure

2.3. Instruments

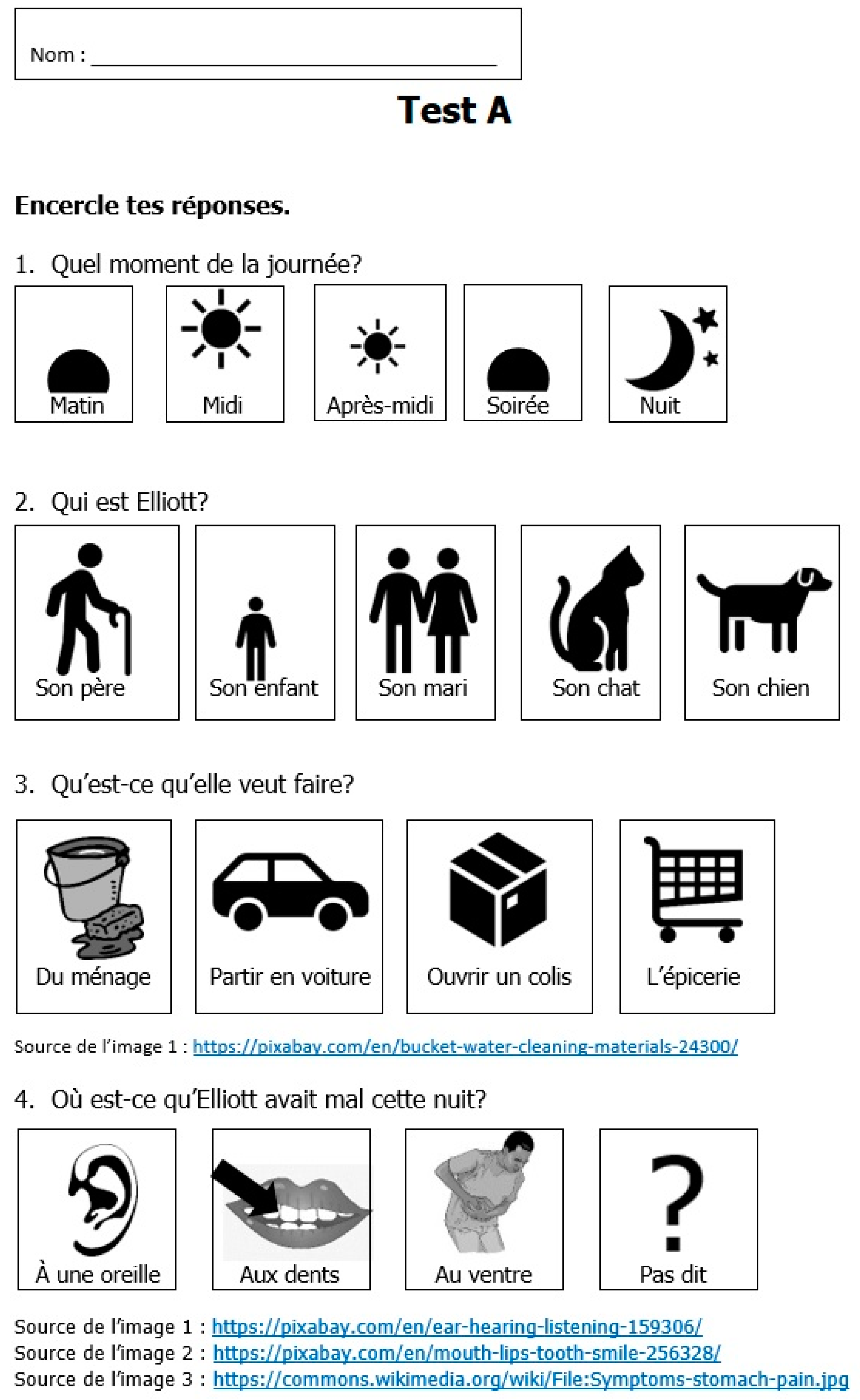

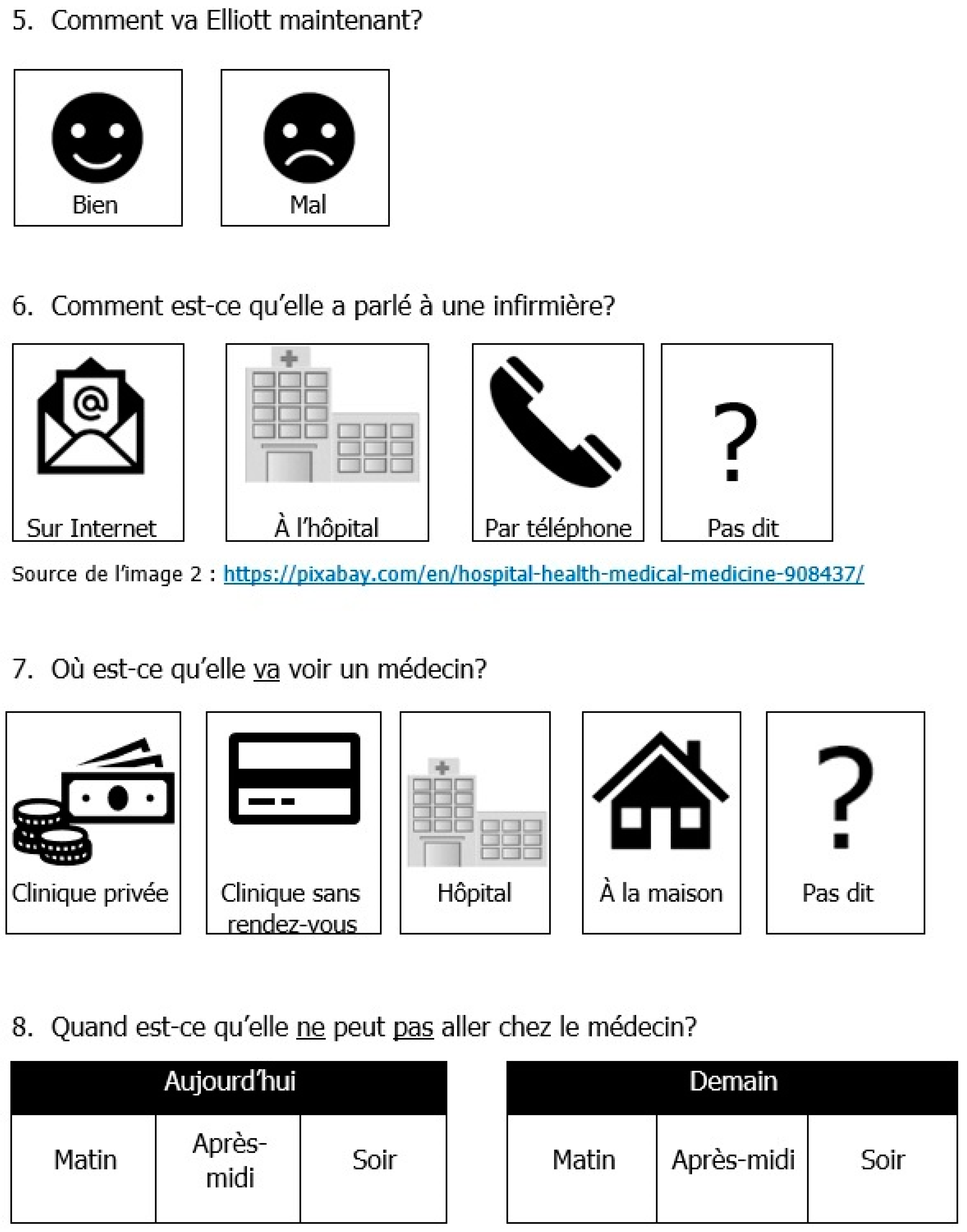

2.3.1. Quantitative Test Design, Data Collection Procedures and Coding

2.3.2. Qualitative Data Collection Procedures and Coding

3. Results

3.1. Listening Performance on the Quantitative Measure: Pre-, Post- and Delayed Test Scores

3.2. Listening Performance on the Qualitative Measure: Scores on the First and Last Implicit Listening Strategy Training Sessions

| No. | Speaker | Utterance | Translation |
|---|---|---|---|
| 1. | B09 | Elle dit: le: partement (.) trop (.)↘grand↘ | “She says: the: apartment (.) too (.) big↘” |
| | R. | Oui | “Yes” |
| | B09 | Beaucoup cher (.) | “Very expensive (.)” |
| 2. | | Hum (.) De: Prome(.) promener dans quartier: je pense que (.) elle a cherché↗ l’appartement: Chien (.) Chien (.) [euh] Son chien↗ accepté: ou non↘ | “Hum (.) to: wa(.) walk in the neighbourhoo:d I think that (.) she looked for↗ the apartment: dog(.) dog (.) [uh] her dog↗ accepte:d or not↘” |
| 3. | | [Euh] Je pense que elle déjà: ↗ (.) habite Montréal↘ | “[uh] I think that she already: ↗ (.) lives in Montreal↘” |

4. Discussion and Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

Appendix B. Vlog Excerpts Used during the Intervention Sessions

Appendix C

| Score | Descriptors and Excerpts |
|---|---|
| 1− | A few isolated words are uttered as if reciting a list. They are generally not related to the content of the excerpt. |
| | “travail (.) que que travail [euh] je ne sais pas(.) K(.) K (.) Koodo Koodo telephone” [A06-01-03] ‘work (.) that that work [uh] I do not know K (.) K (.) Koodo Koodo telephone’ |
| 1+ | A few isolated words are uttered as if reciting a list. They are generally closely related to the key content of the excerpt. |
| | “Appartement (.) Montréal C’est argent Petit Argent [euh] Petit Argent Montréal” [A14-01-03] ‘Apartment (.) Montreal It’s money Small Money [uh] Small Money Montreal’ |
| 2− | Isolated phrases and words are uttered as if reciting a list. They are generally not related to the content of the excerpt. |
| | “Elle regarde Prix Elle regarde Prix [indistinct mumble] Couleur blanc les (pointing at the walls)” [A01-01-03] ‘She looks at Prices She looks at Prices [indistinct mumble] Colour white the (pointing at the walls)’ |
| 2+ | Isolated phrases and words are uttered as if reciting a list. They are generally closely related to the key content of the excerpt. |
| | “Prend l’appartement . Nouvel appartement . (3.0) Plus cher . (5.5) Plus petit” [B11-01-03] ‘Takes the apartment . New apartment . (3.0) More expensive . (5.5) Smaller .’ |
| 3− | Connected phrases are uttered. The connexions established by the participants are generally not related to the content of the excerpt. |
| | “Appartement problème (.) c’est fenêtre appartement payer par mois problème” [A04-01-03] ‘Apartment problem (.) it’s window apartment paying each month problem’ |
| 3+ | Connected phrases are uttered. The connexions established by the participants are generally closely related to the key content of the excerpt. |
| | “Elle a rendez-vous (.) la banque la semaine prochaine . Ah: Elle a déménagé Montréal” [B06-01-03] ‘She has appointment (.) the bank next week . Ah: She moved Montreal’ |
| 4− | Connected phrases are uttered in the form of a (partial) narration. The content of the (partial) narration is not entirely related to the excerpt. |
| | “La madame la maison difficile parce que la madame la maison grande pas fenêtre. Rendez-vous banque pour le condo condo condo (.) parce que arrête sortie: difficile pas d’argent.” [B13-01-03] ‘The woman the house difficult because the woman the house big no window. Appointment bank for the condo condo condo (.) because stop going out: difficult no money.’ |
| 4+ | Connected phrases are uttered in the form of a (partial) narration. The content of the (partial) narration is generally closely related to the key content of the excerpt. |
| | “Elle a appartement très gros (euh) Elle a (.) payé plus plus cher (.) Elle a fatigué (.) Elle a sortir autre appartement Montréal (.) Elle amie” [B03-01-03] ‘She has apartment very big (uh) She has (.) paid more more expensive (.) She has tired (.) She went out other apartment Montreal (.) She friend’ |

References

- Bigelow, Martha, and Elaine Tarone. 2004. The role of literacy level in second language acquisition: Doesn’t who we study determine what we know? TESOL Quarterly 38: 689–700.

- Bigelow, Martha, and Patsy Vinogradov. 2011. Teaching adult second language learners who are emergent readers. Annual Review of Applied Linguistics 31: 120–36.

- Bigelow, Martha, Robert DelMas, Kit Hansen, and Elaine Tarone. 2006. Literacy and the processing of oral recasts in SLA. TESOL Quarterly 40: 665–89.

- Brown, Gillian. 1995. Dimensions of difficulty in listening comprehension. In A Guide for the Teaching of Second Language Listening. Edited by David J. Mendelsohn and Joan Rubin. San Diego: Dominie Press, pp. 59–73.

- Condelli, Larry, Heide Spruck Wrigley, and Kwang Suk Yoon. 2009. “What works” for adult literacy students of English as a second language. In Tracking Adult Literacy and Numeracy Skills. Edited by Stephen Reder and John Bynner. New York and London: Routledge, pp. 132–59.

- Cross, Jeremy. 2010. Metacognitive instruction for helping less-skilled listeners. ELT Journal 65: 408–16.

- DeCapua, Andrea. 2016. Reaching students with limited or interrupted formal education through culturally responsive teaching. Language and Linguistics Compass 10: 225–37.

- DeCapua, Andrea, and Helaine W. Marshall. 2015. Reframing the conversation about students with limited or interrupted schooling: From achievement gap to cultural dissonance. NASSP Bulletin 99: 356–70.

- Diaz, Itala. 2015. Training in metacognitive strategies for students’ vocabulary improvement by using learning journals. Profile: Issues in Teachers’ Professional Development 17: 87–102.

- Graham, Suzanne. 2017. Research into practice: Listening strategies in an instructed classroom setting. Language Teaching 50: 107–19.

- Graham, Suzanne, and Ernesto Macaro. 2008. Strategy instruction in listening for lower-intermediate learners of French. Language Learning 58: 747–83.

- Graham, Suzanne, Denise Santos, and Robert Vanderplank. 2008. Listening comprehension and strategy use: A longitudinal exploration. System 36: 52–68.

- Henrich, Joseph, Steven J. Heine, and Ara Norenzayan. 2010. The weirdest people in the world? Behavioral and Brain Sciences 33: 61–135.

- Huettig, Falk, and Ramesh K. Mishra. 2014. How literacy acquisition affects the illiterate mind—A critical examination of theories and evidence. Language and Linguistics Compass 8: 401–27.

- Hulstijn, Jan H. 2019. An individual-differences framework for comparing nonnative with native speakers: Perspectives from BLC theory. Language Learning 69: 157–83.

- Keller, Philip. 2017. The pedagogical implications of orality on refugee ESL students. Dialogues: An Interdisciplinary Journal of English Language Teaching and Research 1: 1–12.

- Kim, Hae-Young. 1995. Intake from the speech stream: Speech elements that L2 learners attend to. In Attention and Awareness in Foreign Language Learning. Edited by Richard Schmidt. Honolulu: University of Hawaii Press, pp. 65–83.

- Kosmidis, Mary H., Maria Zafiri, and Nina Politimou. 2011. Literacy versus formal schooling: Influence on working memory. Archives of Clinical Neuropsychology 26: 575–82.

- Loring, Ariel. 2017. Literacy in citizenship preparatory classes. Journal of Language, Identity & Education 16: 172–88.

- Mackey, Alison, and Susan M. Gass. 2016. Stimulated Recall Methodology in Applied Linguistics and L2 Research. New York and London: Routledge.

- Moore, Leslie. 1999. Language socialization research and French language education in Africa: A Cameroonian case study. Canadian Modern Language Review 56: 329–50.

- Ortega, Lourdes. 2019. SLA and the study of equitable multilingualism. The Modern Language Journal 103: 23–38.

- Rost, Michael. 2011. Teaching and Researching: Listening, 2nd ed. Harlow: Pearson Education.

- Seo, Kyoko. 2005. Development of a listening strategy intervention program for adult learners of Japanese. International Journal of Listening 19: 63–78.

- Strube, Susanna, Ineke van de Craats, and Roeland van Hout. 2013. Grappling with the oral skills: The learning processes of the low-educated adult second language and literacy learner. Apples Journal of Applied Language Studies 7: 45–65.

- Tafaghodtari, Marzieh H., and Larry Vandergrift. 2008. Second and foreign language listening: Unraveling the construct. Perceptual and Motor Skills 107: 99–113.

- Tarone, Elaine. 2010. Second language acquisition by low-literate learners: An under-studied population. Language Teaching 43: 75–83.

- Vandergrift, Larry. 2006. Second language listening: Listening ability or language proficiency? The Modern Language Journal 90: 6–18.

- Vandergrift, Larry. 2008. Learning strategies for listening comprehension. In Language Learning Strategies in Independent Settings. Edited by Stella Hurd and Tim Lewis. Bristol: Multilingual Matters, pp. 84–102.

- Vandergrift, Larry, and Jeremy Cross. 2017. Replication research in L2 listening comprehension: A conceptual replication of Graham & Macaro (2008) and an approximate replication of Vandergrift & Tafaghodtari (2010) and Brett (1997). Language Teaching 50: 80–89.

- Vandergrift, Larry, and Christine C. M. Goh. 2012. Teaching and Learning Second Language Listening: Metacognition in Action. New York and London: Routledge.

- Vandergrift, Larry, and Marzieh H. Tafaghodtari. 2010. Teaching L2 learners how to listen does make a difference: An empirical study. Language Learning 60: 470–97.

- Wolvin, Andrew D. 2018. Listening processes. In The TESOL Encyclopedia of English Language Teaching, Online ed. Edited by John I. Liontas. Hoboken: Wiley & Sons.

- Young-Scholten, Martha. 2013. Low-educated immigrants and the social relevance of second language acquisition research. Second Language Research 29: 441–54.

- Young-Scholten, Martha, and Nancy Strom. 2006. First-time L2 readers: Is there a critical period? In Low Educated Adult Second Language and Literacy. Edited by Jeanne Kurvers, Ineke van de Craats and Martha Young-Scholten. Utrecht: LOT, pp. 45–68.

Notes

1. Informed consent was obtained from all participants before their inclusion in the study, in accordance with the Declaration of Helsinki. The protocol was approved by the Ethics Committee of Université Laval (2017-368/01-02-2018).

2. The education centre does not collect information on its students’ length of residence or prior years of formal schooling. The general information about participants reported here comes from our informal discussions with them between intervention sessions.

3. According to the centre’s in-house program, students at levels 2 and 3 are very similar in oral comprehension and oral production skills. Their written production and comprehension abilities, however, differ slightly: level 2 students can read and write simple, predictable sentences, whereas level 3 students can read and write slightly longer paragraphs or texts, provided these remain simple and predictable.

4. References for all the vlog excerpts are provided in Appendix B.

5. To make the modelling phase appear realistic to the learners, both teachers read the first author’s field notes on what pairs of participants said they understood after the first, second and third viewings, and tried to closely emulate a free recall performance similar to that of their group.

6. Isolated phrases and words are uttered as if reciting a list. They are generally closely related to the key content of the excerpt (2+ score).

7. Several connected phrases are uttered. The participant offers a (partial) narration of key content of the excerpt (4+ score).

| Participants: Experimental Group | Participants: Control Group | Test at T1 | Test at T2 | Test at T3 |
|---|---|---|---|---|
| A03; A07; A08 *; A12; A14; B01 *; B02 **; B03; B05 * | C01 **; C02; C03; C04 | A | B | C |
| A02; A06; A09; A11 **; B06; B08 *; B12; B15 | C05; C06; C07; C08 | B | C | A |
| A01; A04; A05; A13 *; B09; B10 **; B11; B13 | C09; C10; C11; C12 * | C | A | B |
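The test-version assignment in the table above follows a cyclic, Latin-square-style rotation: each participant set takes versions A, B and C in a different order, so every version is administered exactly once at each testing time. The Python sketch below reproduces that rotation, assuming the cyclic order shown in the table; the function name `rotation_schedule` is hypothetical and used for illustration only, not a tool described by the authors.

```python
from typing import Dict, List


def rotation_schedule(versions: List[str]) -> List[Dict[str, str]]:
    """Build a cyclic Latin-square schedule.

    Row i starts at version i and wraps around, so each version appears
    once per participant set (row) and once per testing time (column).
    """
    n = len(versions)
    schedule = []
    for row in range(n):
        # Shift the starting version by one for each successive participant set.
        order = [versions[(row + t) % n] for t in range(n)]
        schedule.append({f"T{t + 1}": v for t, v in enumerate(order)})
    return schedule


if __name__ == "__main__":
    for i, row in enumerate(rotation_schedule(["A", "B", "C"]), start=1):
        print(f"Set {i}:", row)
```

The cyclic shift is the simplest way to balance test version against testing time with three versions; it does not, on its own, balance carry-over order effects.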

| Condition | Group | Pretest | Posttest 1 | Posttest 2 |
|---|---|---|---|---|
| Exp. | A | 42.3 (12.0) | 46.3 (18.6) | 40.6 (20.0) |
| Exp. | B | 69.4 (10.3) | 63.9 (14.6) | 55.5 (9.1) |
| Control | C | 50.0 (16.9) | 60.3 (10.9) | 52.5 (21.1) |

| Condition | Group | Pretest Expl. | Pretest Impl. | Posttest 1 Expl. | Posttest 1 Impl. | Posttest 2 Expl. | Posttest 2 Impl. |
|---|---|---|---|---|---|---|---|
| Exp. | A | 44.9 (19.9) | 26.6 (21.9) | 51.2 (21.2) | 34.3 (39.4) | 48.6 (28.2) | 21.5 (22.3) |
| Exp. | B | 77.0 (17.6) | 46.8 (44.6) | 72.6 (20.6) | 42.9 (37.3) | 70.7 (8.8) | 18.9 (22.7) |
| Control | C | 60.4 (15.9) | 28.8 (27.9) | 70.7 (13.6) | 39.1 (35.6) | 61.8 (10.2) | 30.0 (28.7) |

| Participants | T1 | T5 |
|---|---|---|
| A01 | 2− | 3+ |
| A04 | 3− | 2+ |
| A05 | 1+ | 1+ |
| A06 | 1− | 1+ |
| A07 | 3− | 3− |
| A09 | 2+ | 2+ |
| A12 | 3− | 3+ |
| A13 | 2+ | 2+ |
| A14 | 1+ | 2+ |
| B01 | 4− | 3+ |
| B03 | 4+ | 4+ |
| B05 | 3+ | 4− |
| B06 | 3+ | 3+ |
| B09 | 2+ | 4+ |
| B11 | 2+ | 3+ |
| B12 | 4+ | 4+ |
| B13 | 4− | 4− |
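Because the Appendix C rubric is ordinal (1− < 1+ < 2− < 2+ < 3− < 3+ < 4− < 4+), the T1 and T5 ratings in the table above can be compared by mapping each label to its rank. The Python sketch below does this for the listed participants, assuming the T1 and T5 columns hold Appendix C rubric ratings. The rank mapping and the up/same/down tally (`direction`, `tally`) are hypothetical illustrations, not an analysis reported by the authors.

```python
from collections import Counter
from typing import Dict, Tuple

# Rubric levels from Appendix C, lowest to highest ("−" is U+2212, as in the table).
LEVELS = ["1−", "1+", "2−", "2+", "3−", "3+", "4−", "4+"]
RANK = {label: i for i, label in enumerate(LEVELS)}

# (T1, T5) ratings transcribed from the table.
RATINGS: Dict[str, Tuple[str, str]] = {
    "A01": ("2−", "3+"), "A04": ("3−", "2+"), "A05": ("1+", "1+"),
    "A06": ("1−", "1+"), "A07": ("3−", "3−"), "A09": ("2+", "2+"),
    "A12": ("3−", "3+"), "A13": ("2+", "2+"), "A14": ("1+", "2+"),
    "B01": ("4−", "3+"), "B03": ("4+", "4+"), "B05": ("3+", "4−"),
    "B06": ("3+", "3+"), "B09": ("2+", "4+"), "B11": ("2+", "3+"),
    "B12": ("4+", "4+"), "B13": ("4−", "4−"),
}


def direction(t1: str, t5: str) -> str:
    """Classify the change between two rubric labels by comparing their ranks."""
    diff = RANK[t5] - RANK[t1]
    if diff > 0:
        return "up"
    if diff < 0:
        return "down"
    return "same"


def tally(ratings: Dict[str, Tuple[str, str]]) -> Counter:
    """Count how many participants moved up, stayed at the same level or moved down."""
    return Counter(direction(t1, t5) for t1, t5 in ratings.values())


if __name__ == "__main__":
    print(tally(RATINGS))
```

Treating the labels as ranks rather than numbers avoids assuming equal distances between adjacent rubric levels.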

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Cite as: Laberge, Carl, Suzie Beaulieu, and Véronique Fortier. 2019. Developing Oral Comprehension Skills with Students with Limited or Interrupted Formal Education. Languages 4(3): 75. https://doi.org/10.3390/languages4030075