3.1. Stability Results

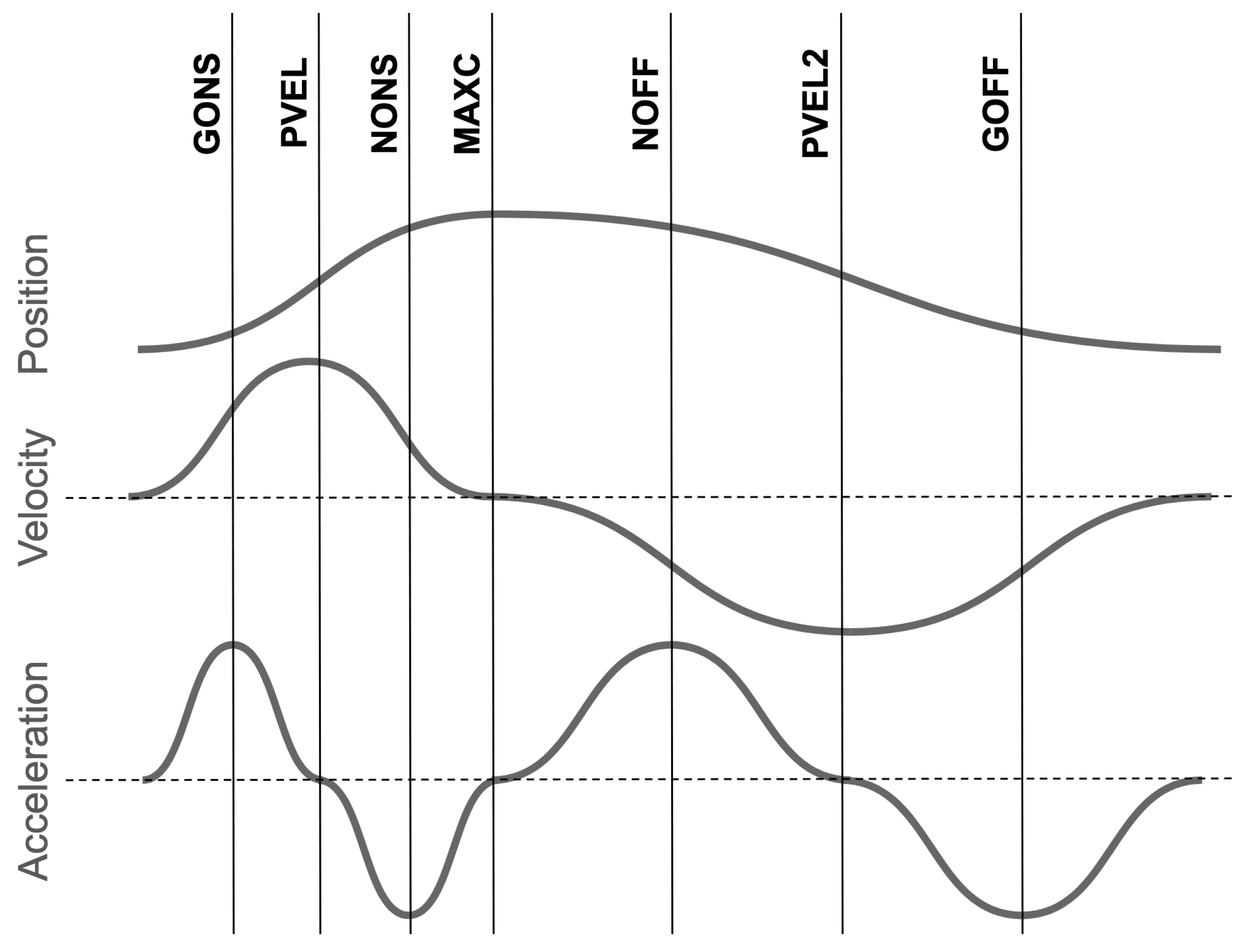

The lags between gestural measures and the consonant-velocity-defined syllable pulses are presented in Figure 2. The syllable pulse generally takes place shortly after the end of the consonant gesture and just past the middle of the vowel gesture.

Note that the lags from the vowel landmarks appear substantially more variable than the lags from the consonant landmarks. This difference may result from the trajectories or the nature of the landmarks, but it also reflects the fact that the syllable pulse is defined by the onset and coda consonant gestures, including C_PVEL2 shown here.

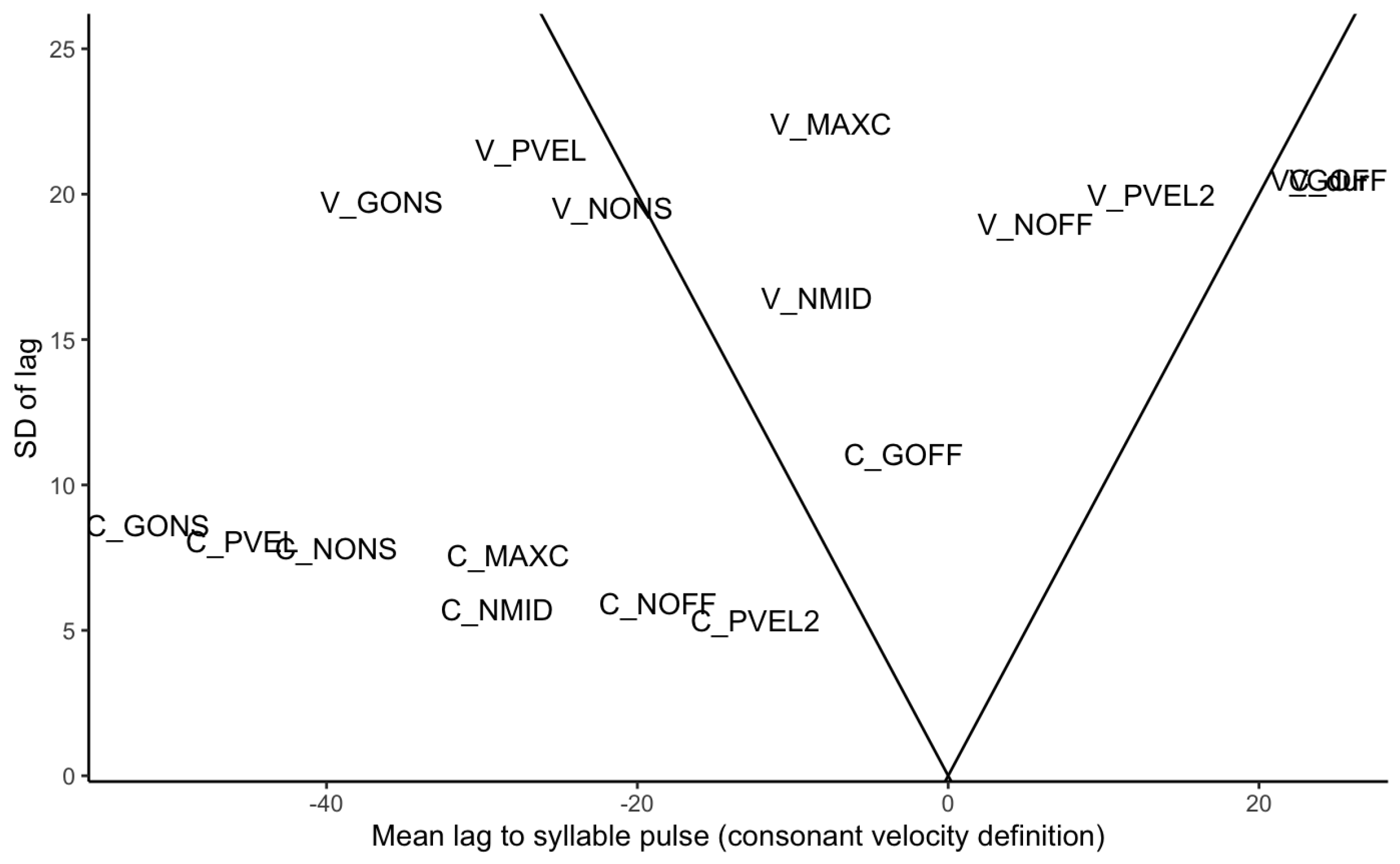

The relationship between mean and standard deviation of the same lags is presented in Figure 3. If each landmark were coordinated to the syllable pulse with equivalent stability, then the SD would increase as the mean values increased. Among the consonant landmarks, this pattern appears to hold: landmarks farther from the syllable pulse have lags with higher SDs, with the exception of C_GOFF, the C gesture offset. For the vowel, however, the lag to all the landmarks shows a relatively high SD.

If a landmark had a particularly stable relationship to the syllable pulse, it would appear as an outlier in the bottom left or bottom right of Figure 3; that is, its lag would have a low SD relative to its mean. For most of the consonant landmarks, the SD of the lag increased slowly relative to the mean lag, with no visibly apparent outlier. Thus, even though C_PVEL2 was used to define the syllable pulse, its position on the chart did not stand out from the other C gestures. Interestingly, the consonant landmark with the highest lag SD, C_GOFF, is also temporally closest to the syllable pulse. This could result from the release being less controlled than the closure, but in some cases, it may also reflect coarticulation with the coda consonant. As for the vowel landmarks, this figure broadly suggests that no particular relationship exists between the syllable pulse and the vowel. If anything, the landmarks closest to the beginning and end of the vowel gesture may be somewhat more stable than expected.

The Relative Standard Deviation (RSD) values of these lags are presented in Table 2.

In interpreting the RSD values, it is important to consider them in the context of the mean values. This is particularly the case when values cluster near zero, where the RSD is unhelpfully large. The lowest RSD value, both in the overall data and in the two sample words, is that of C_GONS, which is notable because it is lower than that of the other consonant landmarks despite having the largest lag.
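
The sensitivity of the RSD to small means can be illustrated with a minimal sketch. It assumes that the RSD is the sample SD divided by the absolute mean (the exact formula is not stated here), and the lag values are hypothetical, chosen only so that the two landmarks have identical spread.

```python
from statistics import mean, stdev

def rsd(lags_ms):
    """Relative standard deviation: sample SD divided by the absolute mean.

    Assumption: this is the RSD definition; the paper may report it as a percentage.
    """
    m = mean(lags_ms)
    if m == 0:
        raise ValueError("RSD is undefined when the mean lag is zero")
    return stdev(lags_ms) / abs(m)

# Hypothetical lags (ms), not data from the study.
# Both landmarks have the same spread; only the distance from the pulse differs.
far_landmark = [-210.0, -200.0, -190.0, -205.0, -195.0]   # mean of -200 ms
near_landmark = [-8.0, 2.0, 12.0, -3.0, 7.0]              # mean of 2 ms

for name, lags in (("far", far_landmark), ("near", near_landmark)):
    print(name, "SD:", round(stdev(lags), 2), "RSD:", round(rsd(lags), 3))
```

Although the two hypothetical landmarks are equally variable in absolute terms, the one whose mean lies near zero receives a far larger RSD, which is why the RSD has to be read alongside the mean.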

In light of the shortcomings of the RSD, an alternative metric for stability was used: the residuals of a regression model fit to the data. A linear mixed-effects model was fit to the landmark-to-pulse intervals, with a fixed effect of landmark and random intercepts for speaker and word (models with random slopes failed to converge). See Appendix C for details of the model. The standard deviations of the residuals are presented in Table 2: the larger the SD of the residuals, the more variability is present beyond what can be explained by the model.
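
The idea of a per-landmark residual SD can be sketched as follows. This is a deliberately simplified stand-in, not the model in Appendix C: it replaces the random intercepts with fixed speaker offsets, omits word entirely, and relies on a balanced design so that the additive fit reduces to group means. All values are hypothetical.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical observations: (landmark, speaker, lag in ms); balanced design.
data = [
    ("C_GONS", "s1", -200.0), ("C_GONS", "s1", -190.0),
    ("C_GONS", "s2", -230.0), ("C_GONS", "s2", -220.0),
    ("V_GONS", "s1", -150.0), ("V_GONS", "s1", -110.0),
    ("V_GONS", "s2", -190.0), ("V_GONS", "s2", -130.0),
]

def group_means(rows, key_index):
    """Mean lag for each level of the factor stored at key_index."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key_index]].append(row[2])
    return {level: mean(values) for level, values in groups.items()}

grand_mean = mean(row[2] for row in data)
landmark_mean = group_means(data, 0)
speaker_mean = group_means(data, 1)

# In a balanced additive design, the least-squares fitted value is
# landmark mean + speaker mean - grand mean.
residuals = defaultdict(list)
for landmark, speaker, lag in data:
    fitted = landmark_mean[landmark] + speaker_mean[speaker] - grand_mean
    residuals[landmark].append(lag - fitted)

# Population SD of the residuals, computed separately for each landmark.
residual_sd = {lm: pstdev(res) for lm, res in residuals.items()}
print(residual_sd)
```

In this toy data set the speaker offset absorbs part of the variation in both landmarks, and what remains is smaller for the hypothetical C_GONS lags than for the V_GONS lags.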

Since C_PVEL2 was used to identify the syllable pulse, it is unsurprising that this yielded the lowest SD of the residuals (as well as the lowest SD of observations). If no consonant landmarks exhibited particularly stable timing to the syllable pulse, the landmarks farther from C_PVEL2 should have produced progressively larger Residual SD values. Conversely, if a landmark had a lower Residual SD value than its neighbors, this would suggest a stable relationship to the pulse. Since no landmark exhibited a dramatically lower Residual SD than the others, there is no evidence that C_GONS or any other consonantal landmark is particularly stably timed to the syllable pulse.

As for the vowel landmarks, the values are substantially larger, and no clear pattern emerges. It is worth noting that the SD of the residuals generally tracks the SD of the observations, though not exactly: the most-stable interval by observed SD and RSD is V_GONS, while the Residual SD indicates V_NONS and V_NMID (the latter being the midpoint of V_NONS and V_NOFF). Given the small difference in these values and the lack of obvious pattern, there is no clear evidence that V_GONS or any other vowel landmark is particularly stably timed to the syllable pulse.

Overall, the results follow from the fact that the C_PVEL2 landmark was used in calculating the syllable pulse. Consonant landmarks exhibited more stable timing to the syllable pulse than the vowel landmarks did, with the most stable being C_PVEL2.

3.2. C-V Lag

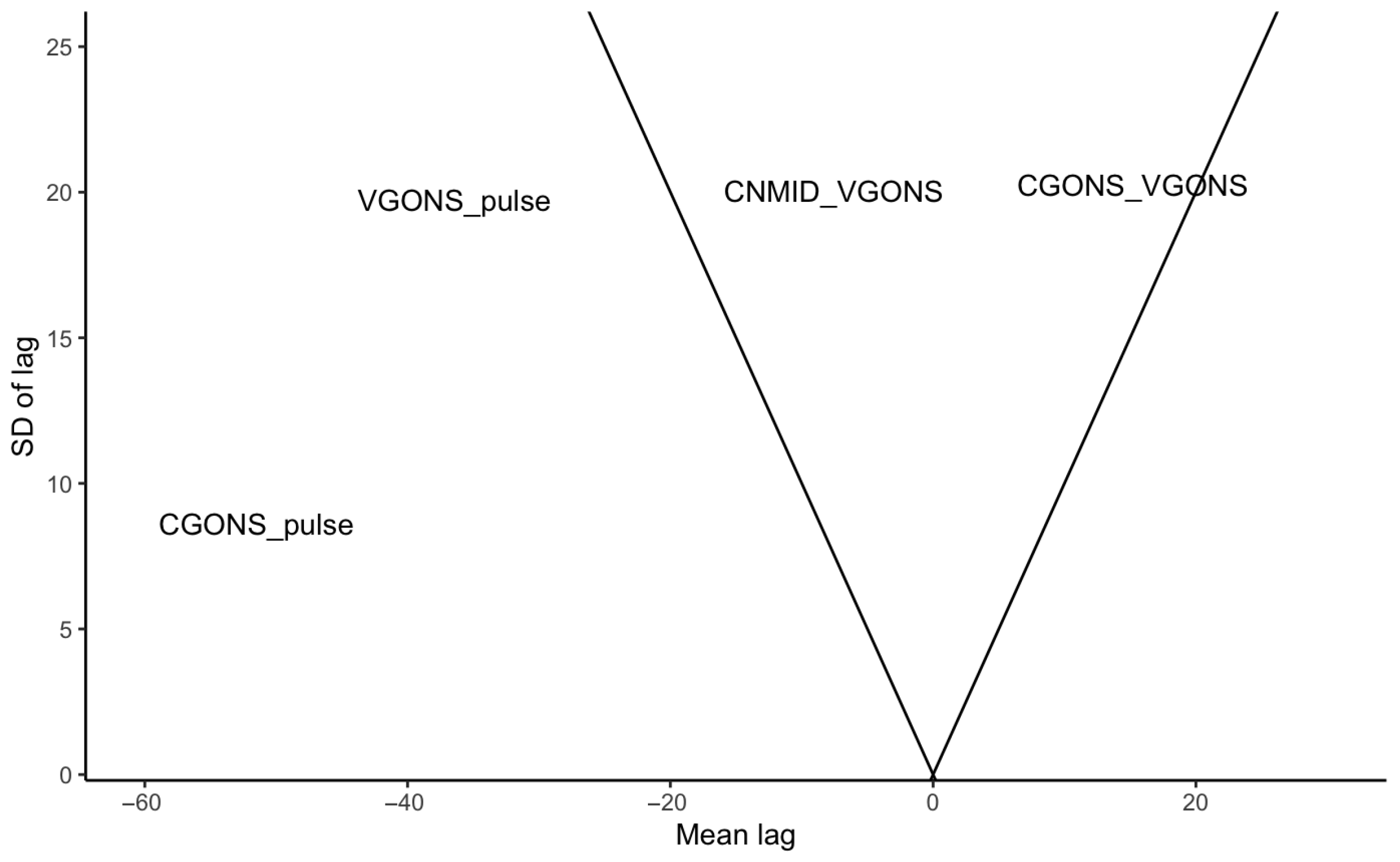

This section compares the lag between consonant and vowel to the lags discussed in Section 3.1. The GONS-to-syllable-pulse lags, which had the most stable timing to the syllable pulse, are also plotted in Figure 4. Here, they are joined by two forms of C-V lag: from consonant GONS to vowel GONS and from consonant NMID to vowel GONS.

It appears that C-V lag, whether measured from the consonant GONS or NMID, is about as stable as the vowel-to-pulse measures and therefore less stable than the consonant-to-pulse measures. The mean, SD, and RSD values are shown in Table 3.

As above, a linear mixed-effects model was fit to the intervals, with a fixed effect of lag type and random intercepts and slopes for speaker and word. See Appendix C for details of the model. The standard deviations of the residuals are presented in Table 3. The SD of the residuals mirrors the RSD and original SD of the observations.

These results indicate that C-V lag, whether measured from the start of the consonant or the midpoint of its plateau, is comparably stable to the V-to-pulse interval. The C-to-pulse interval is more stable than these, which is consistent with the pulse being defined by the consonants.

3.3. Timing Covariation

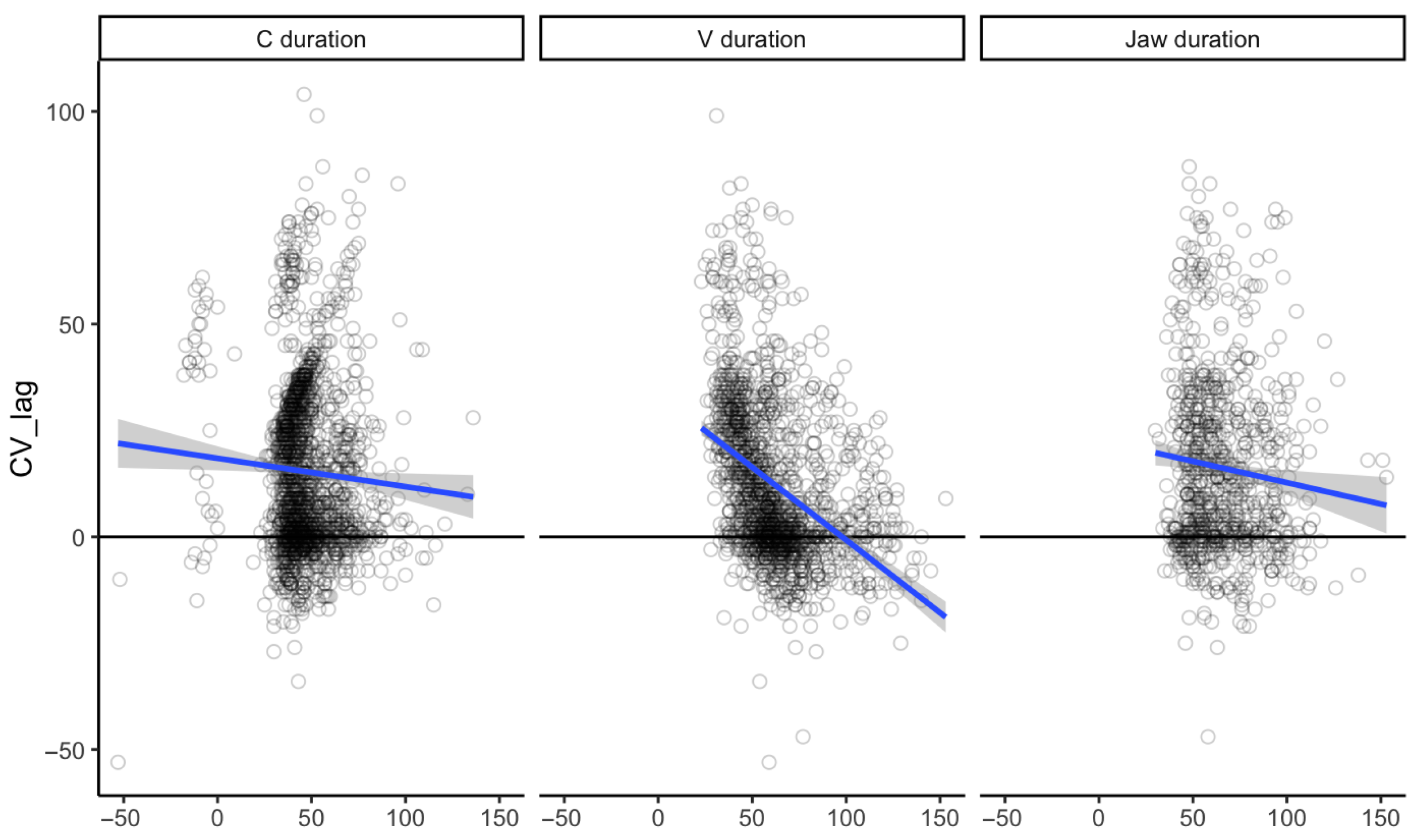

The relationship between the duration of gestures and C-V lag is presented in Figure 5. If C-V timing is purely a matter of onset coordination, then the C-V lag should be independent of gesture duration. Conversely, if gestural coordination references points within the gesture after the onset, then gesture length should affect the C-V lag. Specifically, longer consonant gestures would result in longer C-V lag, while longer vowel gestures would result in shorter C-V lag.
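
The two predictions can be made concrete with a toy model. The sketch below assumes that C-V lag is the vowel onset time minus the consonant onset time, and that the two gestures are aligned at anchor points located at fixed fractions of their durations. The function name, the anchor fractions, and the 50 ms offset are all hypothetical and serve only to illustrate the direction of the predicted effects.

```python
def cv_lag(c_dur, v_dur, c_anchor=0.0, v_anchor=0.0, anchor_offset=50.0):
    """C-V lag (V onset minus C onset, in ms) under anchor-point coordination.

    The V anchor is assumed to follow the C anchor by a fixed anchor_offset:
        c_onset + c_anchor * c_dur + anchor_offset = v_onset + v_anchor * v_dur
    Solving for v_onset - c_onset gives the expression returned below.
    Anchor fractions of 0.0 correspond to pure onset-to-onset coordination.
    """
    return anchor_offset + c_anchor * c_dur - v_anchor * v_dur

# Onset-based coordination: the lag does not depend on either duration.
print(cv_lag(100.0, 300.0), cv_lag(200.0, 400.0))

# Coordination at gesture midpoints (hypothetical anchors of 0.5):
print(cv_lag(100.0, 300.0, 0.5, 0.5))  # reference case
print(cv_lag(200.0, 300.0, 0.5, 0.5))  # longer consonant gesture
print(cv_lag(100.0, 400.0, 0.5, 0.5))  # longer vowel gesture
```

Under onset anchoring the lag is constant, whereas any later anchor makes the lag grow with consonant duration and shrink with vowel duration, which is the pattern the duration models are designed to detect.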

A series of linear mixed-effects models were fit to investigate the relationship between each of these variables and C-V lag, as shown in Table 4. A baseline model included random intercepts for speaker and word (models with random slopes failed to converge). This was compared to a series of models that each included a fixed effect of one of the durations in Figure 5.

Comparison of the AIC and BIC shows that the models including C duration and V duration performed better than the baseline, while the model that included jaw duration did not. The model that included factors for both C duration and V duration improved over both the models that included just one of these factors.
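
The logic of this comparison can be sketched with ordinary least squares in place of the mixed-effects models; the sketch ignores the random intercepts for speaker and word, so it illustrates the information criteria only, not the analysis reported in Table 4. The duration and lag values are hypothetical.

```python
import math

def gaussian_aic_bic(residuals, n_params):
    """AIC and BIC of a Gaussian model, computed from its residuals.

    n_params counts the regression coefficients plus the error variance.
    """
    n = len(residuals)
    sigma2 = sum(r * r for r in residuals) / n  # maximum-likelihood variance
    log_lik = -0.5 * n * (math.log(2 * math.pi * sigma2) + 1)
    aic = 2 * n_params - 2 * log_lik
    bic = n_params * math.log(n) - 2 * log_lik
    return aic, bic

def fit_line(x, y):
    """Least-squares slope and intercept for a single predictor."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical vowel durations and C-V lags (ms), not data from the study.
v_dur = [200.0, 220.0, 240.0, 260.0, 280.0, 300.0, 320.0, 340.0]
lag = [63.0, 51.0, 51.0, 39.0, 39.0, 27.0, 27.0, 15.0]

# Baseline: intercept only (2 parameters: mean and variance).
mean_lag = sum(lag) / len(lag)
aic_base, bic_base = gaussian_aic_bic([y - mean_lag for y in lag], 2)

# Duration model: intercept and slope (3 parameters with the variance).
slope, intercept = fit_line(v_dur, lag)
aic_dur, bic_dur = gaussian_aic_bic(
    [y - (intercept + slope * x) for x, y in zip(v_dur, lag)], 3
)

print("baseline:", round(aic_base, 1), round(bic_base, 1))
print("duration:", round(aic_dur, 1), round(bic_dur, 1))
```

Lower values indicate the preferred model. Both criteria penalize the extra slope parameter, so a predictor counts as an improvement only if it reduces the residual variance by more than that penalty.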

In the combined model, consonant duration had a small positive effect on C-V lag (slope: 0.15, se: 0.01, ), while vowel duration had a slightly larger negative effect (slope: −0.32, se: 0.03, ). These results are not consistent with the hypothesis of purely onset-based coordination.

The relationship between the duration of gestures and their timing difference to the syllable pulse is presented in Figure 6. If the gesture onset is coordinated with the syllable pulse, there should be no relationship between the gesture duration and the onset-to-pulse lag. Conversely, if the syllable pulse is coordinated to a later point in the gesture, then longer gestures should be associated with earlier gesture onsets relative to the syllable pulse. Since the C_GONS-to-syllable-pulse values are negative, longer consonant gestures are predicted to be negatively correlated with the GONS-to-syllable-pulse timing.

Linear mixed-effects models were fit to investigate the relationship between each of these variables and the C_GONS-to-syllable-pulse lag. A baseline model included random intercepts for speaker and word (models with random slopes failed to converge). This was compared to two models, one with a fixed effect of C duration and one with a fixed effect of jaw duration.

Comparison of the AIC and BIC in Table 5 shows that the model including C duration improved over the baseline, while the model that included jaw duration did not. In the C duration model, consonant duration had a negative effect on the C_GONS-to-syllable-pulse lag (slope: −0.28, se: 0.02, ). These results, and those presented earlier in this section, are not consistent with the hypothesis of purely onset-based coordination.

3.5. Alternative Syllable Pulses

The results presented so far have used a single metric for defining the syllable pulse, namely, the midpoint of vowel-adjacent consonant velocity peaks. This section also considers three other landmarks that may index syllable timing: the midpoint of jaw velocity peaks, midpoint of jaw plateau (defined by acceleration peaks), and onset of jaw movement. Previous research in the C/D Model has not used jaw kinematics to identify the temporal location of the syllable pulse; this section considers jaw kinematics given the important role of the jaw magnitude in the C/D Model (see Erickson, this issue). Two landmarks, the midpoints of jaw plateau and jaw velocity peaks, might be considered alternative candidates for a syllable pulse landmark. The third, the jaw onset, has been included given the relatively stable timing already observed for the onsets of movement in C and V gestures.
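
The midpoint-based definitions above can be sketched in a few lines. The sketch assumes that the two vowel-adjacent peaks are the release velocity peak of the onset consonant and the closing velocity peak of the coda consonant, and that each peak has already been isolated in its own time window; the function names and the sample values are hypothetical.

```python
def peak_time(times, velocity):
    """Time at which the absolute velocity (speed) is greatest in a window."""
    idx = max(range(len(velocity)), key=lambda i: abs(velocity[i]))
    return times[idx]

def pulse_from_peaks(onset_times, onset_vel, coda_times, coda_vel):
    """Midpoint between two velocity peaks (assumed landmark definition)."""
    first = peak_time(onset_times, onset_vel)
    second = peak_time(coda_times, coda_vel)
    return 0.5 * (first + second)

# Hypothetical velocity samples (times in ms, arbitrary velocity units).
onset_times = [100.0, 110.0, 120.0, 130.0, 140.0]
onset_vel = [1.0, 4.0, 9.0, 5.0, 2.0]          # onset consonant release
coda_times = [300.0, 310.0, 320.0, 330.0, 340.0]
coda_vel = [-2.0, -6.0, -11.0, -7.0, -3.0]     # coda consonant closing

pulse = pulse_from_peaks(onset_times, onset_vel, coda_times, coda_vel)
print(pulse)
```

The same function could be applied to jaw velocity peaks in place of consonant velocity peaks to obtain the jaw-based alternative, under the same assumptions about how the peaks are windowed.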

For each of these points, the temporal distances between this point and the C_GONS and V_GONS landmarks were calculated. These durations were compared with each other and with the duration between C_GONS and the consonant-velocity-defined syllable pulse (as in Section 3.1). The results are visualized in Figure 8, and the mean, SD, and RSD values are presented in Table 7.

The three syllable-central landmarks are quite close together. The distance from syllable pulse to jaw plateau midpoint is , from syllable pulse to jaw velocity peak midpoint is , and from jaw plateau midpoint to jaw velocity peak midpoint is .

While visual inspection of Figure 8 is not particularly informative, the SD and RSD values of Table 7 show that the original syllable pulse landmark produced a more stable timing to both C_GONS and V_GONS. This suggests that these jaw landmarks alone are not a useful way to identify syllable pulses.