1. Introduction

The conceptual design of novel aerospace vehicles is usually based on the assessment of initially suggested configurations. Such an approach often implies the consideration of a limited number of initial ideas resulting from earlier designer experience or collective multidisciplinary brainstorming [1]. When designing new aircraft generations, however, one should acknowledge that the optimal solution integrating certain disruptive technologies may be left out of scope [2]. Furthermore, one should consider that an early concept fixation reduces the room for later design adjustments, also associated with additional costs [1,3]. A reliable choice of an optimal concept can in principle be assured by parametric optimization and a detailed analysis of each alternative. However, this approach is not feasible for novel technologies lacking test data.

The mentioned challenges indicate the necessity for (a) the systematic generation of a larger number of concepts and (b) an efficient and robust approach to assess and compare alternative configurations of complex systems such as aerospace vehicles without relying on quantitative data, especially when such is not available (yet).

These are key focal aspects of the Advanced Morphological Approach (AMA) by Rakov and Bardenhagen [3]. It aims to structure a complex design problem in an intuitive way and generate an exhaustive and consistent solution space [3]. In order to compensate for the lacking test data of innovative components, the method intends to lean on the professional opinion of dedicated experts, who would be required to assess the technological alternatives. These evaluations will be used as a scientific basis for the qualitative evaluations and the generation of a wider solution space. The resulting exhaustive solution space allows the consideration of solutions possibly left out of scope during conventional idea generation. By clustering the solutions, the designer is able to identify sub-groups of similar, especially advantageous configurations. These optimal sub-spaces could then be defined as the search boundaries within parametric optimization with Multidisciplinary Aircraft Optimization (MDAO). In other words, the AMA helps to find the optimal design sub-spaces for further investigation with MDAO.

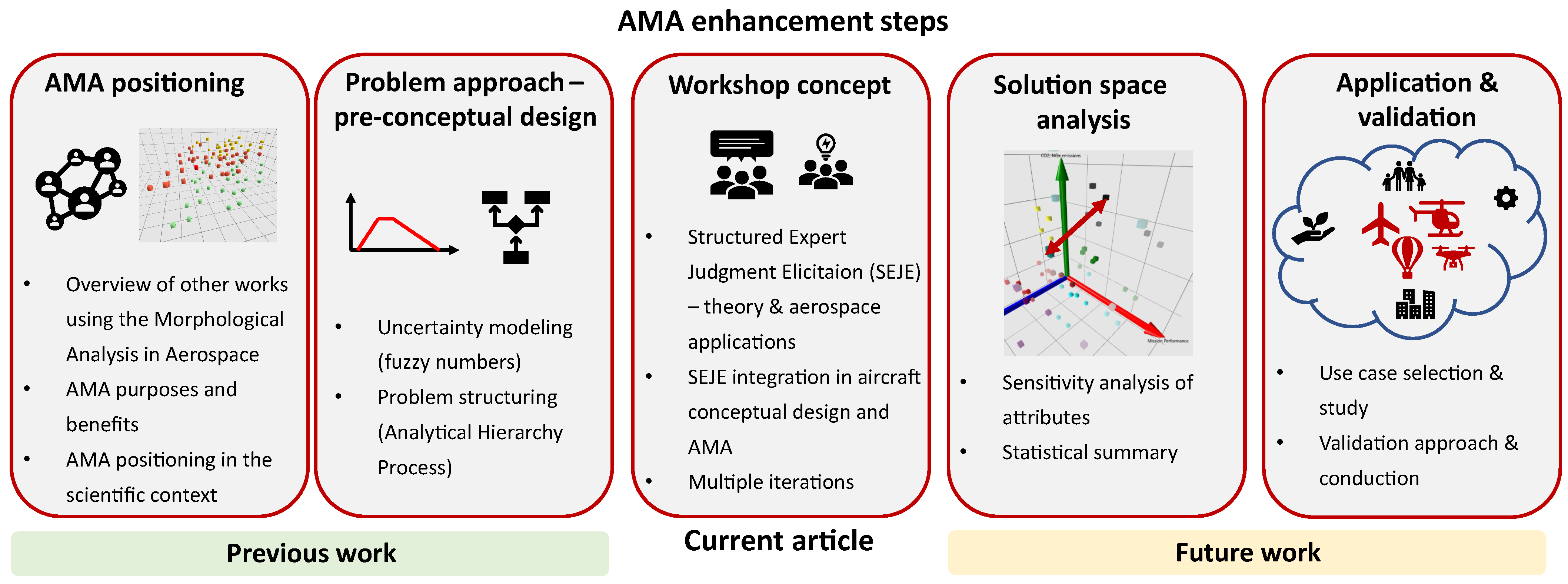

In order to define the AMA as a structured and robust method for conceptual design in aerospace (and complex engineering products in general), the implemented techniques should be carefully studied and justified. For this reason, the enhancement of the initial AMA represents a multi-stage project, visualized in Figure 1. The first stage was dedicated to the definition of the objectives and benefits of the AMA, the overview of other work using the MA in aerospace, as well as the positioning of the AMA in the scientific context, presented in Reference [2]. Then, the problem structuring, uncertainty modeling and the main data flow were established in the next stage, shown in Reference [4]. The current project stage (the third stage in the figure) focuses on the use of expert opinions as a scientific basis for the qualitative assessment of innovative technologies lacking test data. This context is covered by the current paper, which aims to integrate structured expert judgment elicitation (SEJE) methods in pre-conceptual aircraft design and to apply them in the form of an expert workshop on a wing morphing use case. A deeper solution space analysis, integration of technology interaction aspects, verification, and validation of the “full-scale” methodology (fourth and fifth stage) remain subjects of future work.

In this context, it is first necessary to give a brief overview of the AMA method and define the concrete objectives of the current paper.

1.1. Advanced Morphological Approach

The AMA is based on the classical Morphological Analysis (MA) by Fritz Zwicky [5], dating to the middle of the twentieth century. The initial MA introduced product decomposition into functional and/or characteristic attributes, each of which can be fulfilled by a corresponding set of given implementation alternatives (denoted as “options”), systematized in a Morphological Matrix (MM). Such problem structuring makes it possible to obtain an exhaustive solution space containing all possible option combinations (denoted as “solutions”).
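As a minimal sketch of this combinatorial structure, the exhaustive solution space can be enumerated as the Cartesian product of the option sets. The attributes and options below are hypothetical placeholders chosen for illustration and are not taken from the AMA studies.

```python
from itertools import product

# Hypothetical morphological matrix: attribute -> implementation options.
# All names are illustrative assumptions, not data from the source.
morphological_matrix = {
    "lift generation": ["aerodynamic", "aerostatic", "hybrid"],
    "propulsion": ["kerosene", "hydrogen"],
    "wing": ["fixed", "morphing"],
}


def enumerate_solutions(matrix):
    """Return every combination that picks exactly one option per attribute."""
    attributes = list(matrix)
    return [dict(zip(attributes, combo)) for combo in product(*matrix.values())]


solutions = enumerate_solutions(morphological_matrix)
print(len(solutions))  # 3 * 2 * 2 = 12 solutions
```

The size of the solution space is the product of the option counts per attribute, which is why it grows quickly as attributes or options are added.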

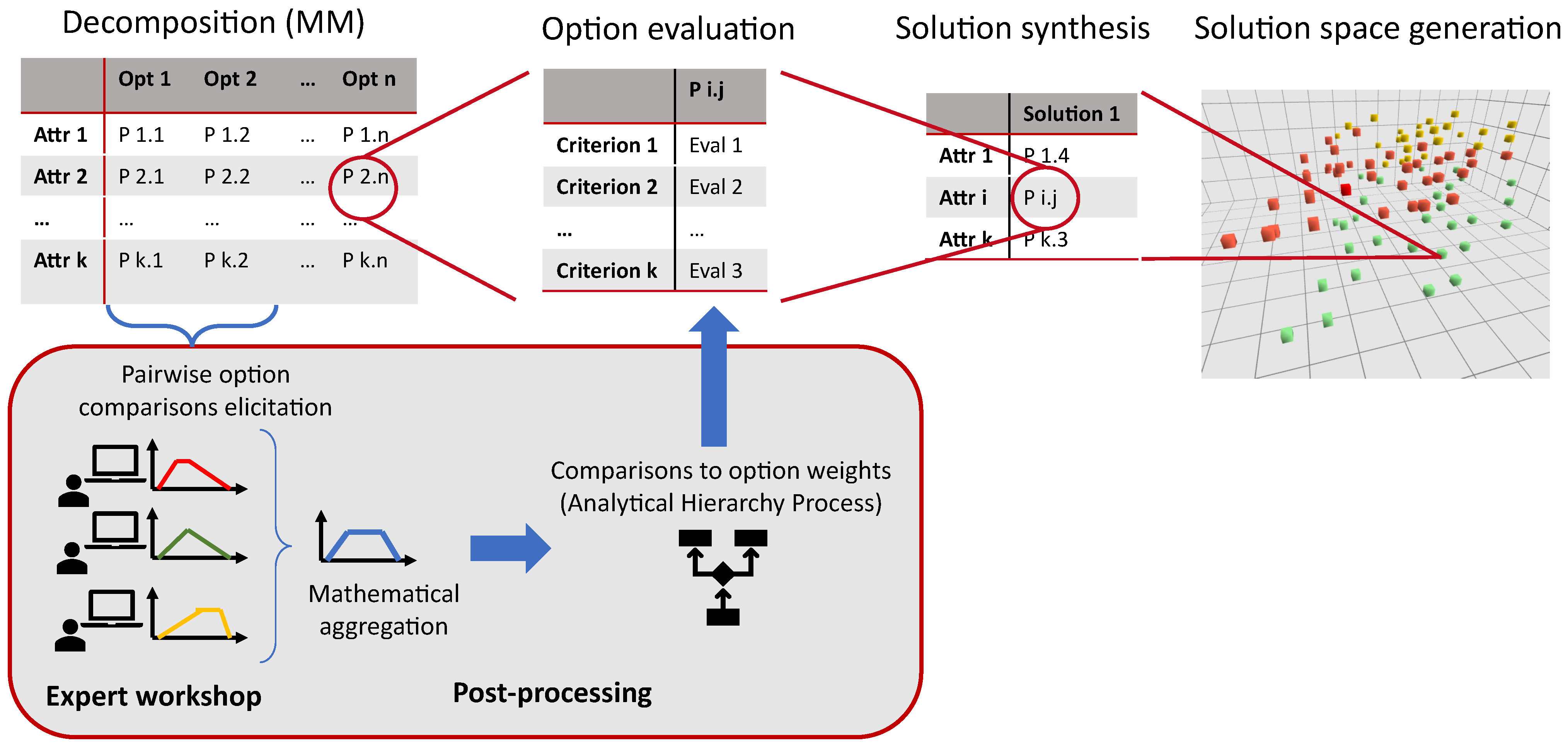

The AMA by Rakov and Bardenhagen [3] is based on the MA and its application in the search for promising technical systems by Rakov [6] from the late twentieth century. The main steps of the AMA combined with a brief presentation of the workshop data flow are schematically shown in Figure 2. The method extends the MA by assigning evaluations to each option according to pre-defined criteria on a qualitative scale from 0 to 9 [3]. The generated solutions combine the scores of the selected options by adding their separate criteria evaluations, which can also be weighted. Subsequently, one can visualize the resulting solutions based on their summed criteria scores, which allows their criterion-specific and multi-criteria performance to be compared [3]. The lower left part of Figure 2 exhibits the main data processing steps after obtaining individual pairwise technology comparisons during an expert workshop and their integration into the main AMA methodology.
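The additive scoring described above can be sketched as follows. This is only an illustration under stated assumptions: the option scores, the two criteria, the criteria weights and the convention that higher scores are better are all hypothetical, and the weighted sum over criteria is one plausible reading of the statement that the evaluations "can also be weighted".

```python
from itertools import product

# Hypothetical scores (0-9, higher is better) of each option per criterion.
OPTION_SCORES = {
    "wing": {
        "fixed": {"performance": 4, "complexity": 8},
        "morphing": {"performance": 8, "complexity": 3},
    },
    "propulsion": {
        "kerosene": {"performance": 6, "complexity": 7},
        "hydrogen": {"performance": 7, "complexity": 4},
    },
}
# Assumed criteria weights; the source only states that weighting is possible.
CRITERIA_WEIGHTS = {"performance": 0.6, "complexity": 0.4}


def score_solution(selection, option_scores, weights):
    """Sum the option scores per criterion, then form a weighted total."""
    per_criterion = {
        criterion: sum(option_scores[attr][opt][criterion]
                       for attr, opt in selection.items())
        for criterion in weights
    }
    total = sum(weights[c] * per_criterion[c] for c in weights)
    return per_criterion, total


def rank_solutions(option_scores, weights):
    """Score every option combination and sort by weighted total, best first."""
    attributes = list(option_scores)
    scored = []
    for combo in product(*(option_scores[a] for a in attributes)):
        selection = dict(zip(attributes, combo))
        per_criterion, total = score_solution(selection, option_scores, weights)
        scored.append((total, selection, per_criterion))
    return sorted(scored, key=lambda item: item[0], reverse=True)


ranking = rank_solutions(OPTION_SCORES, CRITERIA_WEIGHTS)
best_total, best_selection, best_per_criterion = ranking[0]
print(best_selection, best_per_criterion, round(best_total, 2))
```

Keeping the per-criterion sums alongside the weighted total mirrors the distinction between criterion-specific and multi-criteria performance.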

One of the main problems of morphological analysis is to reduce the dimensionality of the problem and the number of solutions to be examined [7]. Often, the morphological set of solutions is reduced by analyzing incompatible options. In some cases, graph theory and genetic algorithms are used [8,9]. In the case of the AMA, clustering is used to aid the identification of similar solutions in vast solution spaces.

The MA has been applied in conceptual aircraft design in different forms and contexts, which are discussed and compared with the AMA in detail in Reference [2]. For example, the Technology Identification, Evaluation and Selection method by Mavris and Kirby [10] uses the MM for impact estimation of technologies and the search for their optimal combination for improved resource allocation. Although direct system simulation is also avoided, the method uses “physics-based analytical models” [10] which are applicable for a more extensive configuration modeling/representation up to a preliminary configuration design. In another work, Ölvander et al. [11] implement conceptual design of sub-systems by describing the MM options with quantitative data and mathematical models. In contrast with these MA applications, the AMA uses a qualitative approach to compare non-existent technologies that lack experimental data and/or are hard to model.

As shown in Figure 1, the AMA has undergone further development by implementing uncertainty modeling with fuzzy numbers, problem-specific hierarchical problem definition by means of the Fuzzy Analytical Hierarchy Process (FAHP) as well as multi-criteria decision-making (MCDM) methods (see Reference [4]). Based on this approach, a methodology for organized expert panels shall be studied, which would evaluate the MM options and serve as a scientific ground for the generated solution space. A first iteration of the methodology testing has already been conducted within a first workshop on the design use case of a search and rescue (SAR) aircraft, described in detail in Reference [12]. As a result, a solution space containing 54 configurations was generated, which yielded a multi-criteria optimum implementing hybrid aerodynamic/aerostatic lift generation, fully hydrogen-based non-distributed propulsion and wing morphing. The first workshop implemented a set of initial features such as solely individual evaluations, mathematical aggregation and the use of fuzzy numbers for uncertainty modeling. It served as a starting point for the development of a full-scale concept, which was the aim of the second workshop iteration.

In this context, the second workshop took the functionality of the workshop and its post-processing to the next level and was applied to the use case of wing morphing architectures. The major improvements added include the behavioral aggregation of evaluations in the form of group discussions focused on aircraft design aspects, the possibility for the experts to edit their evaluations, and the weighting of the participants’ assessments based on their expertise. The current article presents the methodology and results of the second workshop, as well as the aspects needed for the integration of SEJE methods into aircraft conceptual design with the AMA.

1.2. Current Objectives

Among the current challenges of the AMA remains the scientific acquisition of qualitative technology evaluations from domain experts, in order to appropriately assess innovative technologies still lacking deterministic test data. Hence, the specific adaptation and integration of SEJE methods in conceptual aircraft design and their application represent the paramount objectives of the current paper. This introduces the necessity to justify the developed methodology and selected/adapted SEJE techniques. For this purpose, a brief overview of workshop types and established SEJE methods will be given and analyzed by positioning the present work within the global SEJE context found in the literature. Next, the current state of the developed workshop methodology will be described and justified.

The second part of the paper is dedicated to the structure, execution and outcome of the second AMA workshop. Its first aim is to obtain and analyze a generated solution space containing different implementations of wing morphing technologies. Another equally important target is the thorough analysis of the workshop execution, the collection of valuable participant feedback, and the derivation of improvement proposals for potential next iterations.

Hence, the following global research questions have been defined for the current project stage:

2. Structured Expert Judgment Elicitation

One of the AMA’s focal points is the conceptual design integrating non-existent or prominent innovative technologies, often lacking deterministic test or performance data. This represents a challenge for the objective, transparent and scientific comparison of the generated aircraft solutions. In such cases, one usually seeks the professional opinion of domain experts [13,14]. Regardless of their expertise level, however, such assessments would always exhibit a certain level of subjectivity and uncertainty simply due to their human nature [15]. This is the motivation behind the research questions defined in Section 1.2.

For such purposes, researchers usually apply structured expert judgment elicitation (SEJE) methods. These define aspects such as elicitation type (remote or in person), questionnaire design, knowledge aggregation possibilities, uncertainty handling, etc. The current section will start by giving a definition of a SEJE method and presenting the most prominent approaches in this domain. Subsequently, the current research will be placed into the literature context and positioned in reference to similar works. Finally, the concepts applied within this paper will be selected and justified.

2.1. Literature Overview

The concepts of expert judgment elicitation originate from the fields of psychology, decision analysis and knowledge acquisition [14]. The literature suggests the following definitions for expert judgments and their elicitation:

“data given by an expert in response to a technical problem.” [14]

“we consider each elicited datum to be a snapshot that can be compared to a snapshot of the expert’s state of knowledge at the time of the elicitation.” [14]

“Judgements are inferences or evaluations that go beyond objective statements of fact, data, or the convention of a discipline...a judgement that requires a special expertise is defined as “expert judgement”.” [16]

“...elicitation, which may be defined as the facilitation of the quantitative expression of subjective judgement, whether about matters of fact or matters of value.” [17]

“Elicitation is a broad field and in particular includes asking experts for specific information (facts, data, sources, requirements, etc.) or for expert judgements about things (preferences, utilities, probabilities, estimates, etc.). In the case of asking for specific pieces of information, it is clear that we are asking for expert knowledge in the sense of eliciting information that the expert knows.” [18]

In this context, one seeks the judgments from qualified professionals (experts) in their respective domains. According to the definitions, the elicitation process is not simply asking for the required information. It also implies the extraction of the required subjective knowledge, the filtration of biases and its representation in a quantitative form that is as precise as possible, be it the evaluation of concrete physical (one might dare say “tangible”) parameters or simply placing it on a qualitative scale.

One should note the various terminologies used throughout the literature to denote structured elicitation of expert opinions such as structured expert judgments, structured expert knowledge, expert knowledge elicitation or elicitation protocol. These in general stand for the principle of aiming to scientifically elicit expert knowledge. In the current paper, the abbreviation SEJE (structured expert judgment elicitation) will be used for this purpose. Similarly, the widely used notation of the participating experts as decision-makers (or DMs) is also adopted in this work.

The main advantage of formalized SEJE methods is the transparency and the possibility to review the process [14], therefore contributing to the scientific significance of the results. In this context, Cooke [19,20] advocates the achievement of rational consensus by adhering to the principles of Scrutability/Accountability (reproducibility of methods and results), Empirical Control (empirical quality control for quantitative evaluations), Neutrality (application of methods aiming to reflect true expert opinions) and Fairness (no prejudgment of the experts). However, it is necessary to underline that these requirements have been outlined during the definition of the Classical Model, which serves as a method for quantitative elicitation.

SEJE approaches have been developed since at least the 1950–60s [16,21]. In some cases, these are formalized into structured elicitation protocols and guidelines by/for authorities and companies [21], e.g., by the European Food Safety Authority [18] or the procedures guide in the field of nuclear science and technology [22]. Further applications can be found in the domains of human health, natural hazards, environmental protection [20], and provision of public services [17]. Some aeronautical disciplines have also profited from SEJE methods, which will be discussed in the next subsection.

Available SEJE methods establish the main components of a structured elicitation and present various possibilities to implement these. In order to identify and derive the appropriate SEJE process for the integration into the AMA methodology, the most prominent SEJE methods are briefly presented, summarized and compared, namely the Classical Model by Cooke [19], the Sheffield Elicitation Framework [23], the Delphi method [24] and the IDEA protocol [25].

2.1.1. The Classical Model

The Classical Model (CM) was developed by Cooke [19] and represents an established method for the elicitation of quantitative values. Instead of qualitative approximations, the experts are asked to roughly estimate a value of a given parameter on a continuous scale, e.g., “From a fleet of 100 new aircraft engines how many will fail before 1000 h of operations?” [20]. This approach advocates uncertainty quantification in the form of subjective probability distributions by eliciting distribution percentiles from the experts, typically at 5%, 50% and 95% [20,26]. These allow the fitting of a “minimally informative non-parametric distribution” [26] for the estimation.

In order to cope with the empirical control requirement defined by Cooke [19], the method involves the attribution of an individual weight to each expert, called calibration. This is executed by preparing two sets of questions, the so-called seed and target questions, incorporating the same types of elicitation heuristics. The seed evaluations involve quantitative inquiries with known correct answers and are used to “assess formally and auditably” [20] the expert’s deviation from the precise solution in a domain, denoted as a calibration score. However, even a perfect calibration does not guarantee an informative judgment in the form of a narrow probability distribution around the precise value. For this purpose, the information score is introduced, which reflects the precision of the answer. Then, the correction factors or weights for each expert represent a combination of the calibration and the information score. The target questions refer to the information of interest. Hence, in order to obtain elicited data with the highest possible precision, the experts’ assessments are combined using their corresponding weights.
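A simplified sketch of how a calibration score and an information score could be computed is given below. It follows the commonly published formulation of the Classical Model, but several points are assumptions made for illustration: the three elicited percentiles are taken as 5%, 50% and 95%, a single uniform background range is used for all seed questions, the two scores are combined by multiplication, and all seed data are invented.

```python
import math

# Assumed setup: percentiles at 5%, 50% and 95% give four inter-percentile
# bins with these theoretical probabilities.
BIN_PROBS = (0.05, 0.45, 0.45, 0.05)


def chi2_sf_3dof(x):
    """Survival function of the chi-square distribution with 3 degrees of freedom."""
    if x <= 0:
        return 1.0
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


def calibration_score(quantiles, realizations):
    """quantiles: list of (q05, q50, q95); realizations: known seed answers."""
    counts = [0, 0, 0, 0]
    for (q05, q50, q95), x in zip(quantiles, realizations):
        # Index of the inter-percentile bin that contains the realization.
        counts[sum(x > q for q in (q05, q50, q95))] += 1
    n = len(realizations)
    # Relative information of the empirical bin frequencies w.r.t. BIN_PROBS.
    rel_info = sum((c / n) * math.log((c / n) / p)
                   for c, p in zip(counts, BIN_PROBS) if c)
    return chi2_sf_3dof(2 * n * rel_info)


def information_score(quantiles, lower, upper):
    """Mean relative information w.r.t. a uniform background on [lower, upper]."""
    total = 0.0
    for q05, q50, q95 in quantiles:
        edges = (lower, q05, q50, q95, upper)
        total += math.log(upper - lower) + sum(
            p * math.log(p / (hi - lo))
            for p, lo, hi in zip(BIN_PROBS, edges, edges[1:]))
    return total / len(quantiles)


# Hypothetical seed data: 20 questions with identical percentile triples.
quantiles = [(10.0, 20.0, 30.0)] * 20
realizations = [5.0] + [15.0] * 9 + [25.0] * 9 + [35.0]
calibration = calibration_score(quantiles, realizations)
information = information_score(quantiles, lower=0.0, upper=40.0)
weight = calibration * information  # assumed combination rule (product)
print(calibration, information, weight)
```

In this toy data set the realizations fall into the four bins with exactly the expected frequencies, so the calibration score reaches its maximum; narrower percentile triples would raise the information score but risk lowering the calibration.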

The CM advocates mathematical aggregation of the varying expert evaluations, e.g., in the form of a Cumulative Distribution Function (see Reference [20] for more detail).

2.1.2. The Sheffield Elicitation Framework

The Sheffield elicitation framework (SHELF) has established itself as another notable SEJE methodology [23]. In contrast to the mathematical aggregation used in the CM, SHELF strongly relies on behavioral approaches to combine knowledge from different experts. The process is executed via gathering an expert panel moderated by a dedicated facilitator. After discussing the evaluation subject(s), the DMs give their individual assessments, which are then fitted into an aggregated distribution. In a next step, the individual and group evaluations are shown to the panel and discussed, while the experts have the opportunity to edit their previous judgments. The discussions and reevaluations are repeated until a consensus on the aggregated distribution(s) is reached.

The method was initially developed to elicit knowledge on a single variable; however, extensions for multivariate assessments have been introduced as well [23].

Although SHELF incorporates mathematical aggregation, the result still depends on the common acceptance of the combined distribution, which leans on behavioral aggregation. Accordingly, one expects an increased influence of group dynamics and the corresponding group biases.

2.1.3. Delphi Method

Some authors mark the Delphi method as one of the first formal elicitation approaches of structured expert judgments [

16] dating back to the 1950–60s. By applying a structured questionnaire, the experts are asked remotely, individually and anonymously/confidentially for their responses. After that, their answers are collated [

16,

24] (while removing the

upper and

lower responses in some cases [

16]). In a second round, selected questions are sent back to the DMs for them to review and, if necessary, correct their initial evaluations. This is repeated until the expert group reaches consensus [16,24].

The outlined advantages of the Delphi technique include the avoidance of group biases common for personal meetings as well as the participation of a larger expert panel through the remote inquiries [24]. The main drawbacks pointed out are the time-consuming character [24], possible questionnaire ambiguity and the possible alteration of the responses before the next evaluation round [16].

2.1.4. IDEA Protocol

Similarly to the SHELF method, the IDEA protocol belongs to the mixed approaches using both mathematical and behavioral aggregation of expert knowledge [27]. This is done by structuring the process in four main steps, forming the IDEA acronym [25,27]:

Investigate: clarification of the questions and entering of individual estimations;

Discuss: the answers of the experts in relation to the rest are shown and serve as a basis for a group discussion, aiming to discover further meanings, reasoning and dimensions to the question;

Estimate: the DMs may give a second/final private answer as a correction to their initial one;

Aggregate: final results are obtained through mathematical aggregation of the experts’ estimations.

The original formulation of the IDEA protocol as defined in References [25,27] aims at eliciting numerical quantities or probabilities by implementing sets of questions.
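The data flow of the four steps can be illustrated with a short sketch. The expert labels and estimates are invented, and the equal-weight arithmetic mean is only an assumed stand-in for the mathematical aggregation step; the group discussion itself is a behavioral step that is not modeled here.

```python
from statistics import mean


def idea_round(first_round, revisions):
    """Apply private second-round revisions and aggregate the final answers.

    first_round: expert -> initial estimate (Investigate step)
    revisions:   expert -> revised estimate given after the group discussion
                 (Estimate step); experts who keep their answer are absent.
    """
    final = {expert: revisions.get(expert, value)
             for expert, value in first_round.items()}
    # Aggregate step: equal-weight mean, an assumption made for illustration.
    return final, mean(list(final.values()))


# Hypothetical probability estimates from three experts.
first_round = {"expert_A": 0.2, "expert_B": 0.5, "expert_C": 0.8}
revisions = {"expert_A": 0.4, "expert_C": 0.6}  # expert_B keeps the first answer
final_estimates, aggregated = idea_round(first_round, revisions)
print(final_estimates, aggregated)
```

Because the second answers are given privately and only then combined mathematically, the discussion can inform the experts without requiring them to agree on a single value.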

2.1.5. Summary of SEJE Components

In their guide on SEJE, Meyer and Booker [14] give an overview of methodology components and how to select among their available implementation options. The source emphasizes different elicitation situations, response modes, dispersion measures, types of aggregation and documentation methods. Their summary is given in the form of an MM which could be used for the design of new SEJE processes, shown in Table 1. It combines the elements from [14] as well as other aspects from the literature mentioned earlier.

Based on the descriptions in the previous subsections, Table 2 summarizes the implementations of the SEJE components by the prominent methods found in the literature. It is necessary to underline that these methods have been shown according to their original definition. Many of these have been further extended throughout the years and numerous modifications can be found in the literature, e.g., by adding expert calibration or extending the elicitation to multiple variables [16,23,28].

2.1.6. Bias as a Source of Uncertainty

One of the main reasons for the thorough structuring of SEJE approaches is the difficulty of obtaining data that reflect expert knowledge and experience as exactly as possible. This is mostly due to the presence of multiple types of bias [14,15], often resulting in systematic errors when a person is asked to give objective scientific judgment based on knowledge and experience. The literature offers multiple descriptions and overviews of bias for the purposes of knowledge elicitation [14,15]. Instead of giving another review, the current work will use the bias definitions and guidance in order to construct a tailored SEJE method for the AMA aiming to minimize uncertainties.

Meyer and Booker [14] define two views on bias: motivational and cognitive. Bias can be considered motivational when the elicitation task aims at reflecting the expert’s opinion as precisely as possible. This is for example the case when one’s purpose is to understand the DM’s way of thinking or problem solving. In such cases, ambiguous definitions or faulty elicitation processes could alter the expert’s point of view and therefore be the source of motivational bias. The main types of motivational biases are social pressure (in group dynamics), misinterpretation (influence of sub-optimal elicitation methodology or questionnaires), misrepresentation (flawed modeling of expert knowledge) and wishful thinking (influence of one’s involvement in the subject of inquiry) [14].

Meanwhile, the cognitive bias view should be preferred for the purpose of likelihood estimation or a mathematically/statistically correct quantification of given parameters [14]. In such cases, it is linked to the cognitive shortcuts people use to process information. Examples of cognitive bias are inconsistency (the inability to yield identical results to the same problem throughout time), anchoring (resolving a problem under the influence of a first impression), availability (relying heavily on an event that is more easily retrieved from memory), and underestimation of uncertainty [14].

To the knowledge of the current article’s authors, the majority of developed SEJE methods (originally) aim at eliciting probability distributions for physical values. In this context, an observation has been made that the literature pays more attention to the cognitive view of bias [15,20].

Although the mentioned motivational and cognitive biases might appear complementary to each other, Meyer and Booker [14] advise taking a single view of uncertainty depending on the purpose of the project. They bring forward the following justification. On the one hand, the reduction of motivational bias aims to help the data reflect the knowledge of the expert as well as possible by adjusting the methodology accordingly. On the other hand, the cognitive view states the inability of the expert to represent their opinion in an exact mathematical manner and tries to guide the DMs to express themselves in a correct statistical way [14].

2.1.7. Elicitation Format

The main elicitation formats are the remote inquiry or individual interviews as opposed to group interactions, each of which brings its own advantages and drawbacks in view of the different bias types it invokes.

The remote or separate elicitation from the experts implies no interaction among them, which excludes biases originating from group dynamics such as social pressure, hierarchy influence or personality traits [14]. Such an approach could be strengthened by expert calibration, weighting and mathematical aggregation (e.g., in the CM) to contribute to the empirical control and method transparency.

Although the researcher should acknowledge the presence of groupthink biases within interactive groups, personal meetings of expert panels have a spectrum of advantages as well [14]. In particular, they can contribute to creative thinking by combining different expertise and generate a wider variety of ideas. Furthermore, more precise data could be acquired through interactions.

2.1.8. Qualitative versus Quantitative Variables

As previously mentioned, the majority of studied sources on SEJE aim at the elicitation of physical parameters describing a system or a phenomenon. Such elicitation variables can be labeled as quantitative, since there theoretically exists a precise correct value, which should be approximated by the experts.

However, as the purposes of the AMA have shown, a SEJE might be required under the following circumstances:

A global assessment of a system or product in the early stages of conceptual design;

Evaluation according to qualitative criteria in a vague linguistic form such as “mission performance” or “system complexity” which summarize multiple characteristics;

The necessity to consider innovative concepts or technologies in a current study;

The lack of test data or statistical estimations on such technologies.

In such cases, the expert would have insufficient experience or knowledge on nonexistent concepts. Therefore the attempts to quantify system parameters would not only be challenging but would also lead to a significant increase of epistemic uncertainty.

The alternative is to use a qualitative scale for the assessment regarding non-deterministic criteria. Usually, it reflects linguistic evaluation grades such as “very good”, “good” or “bad” in a categorical form. For the purposes of quantification and further data processing, these statements have been transferred to a continuous numerical scale from 1 to 9 within the initial AMA as defined in [29]. One of its main drawbacks is the ill-defined character of the numerical definitions for the assessment of technological alternatives: should a certain technology be evaluated with 7 (“very good”) or 6 (between “good” and “very good”)? This is found ambiguous especially by representatives of technical fields used to precise quantities (which has been observed during both workshops conducted within the current project so far). A further disadvantage is the lack of a reference for the qualitative values, at least for the extrema of the scale. This issue is addressed within the Analytical Hierarchy Process (AHP) by Saaty [30], where pairwise comparisons are evaluated on a scale from 1 (equal) to 9 (absolutely superior) to denote the superiority of a certain alternative over another one.
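To illustrate how pairwise comparisons on the 1 to 9 scale can be turned into relative priorities, the following sketch builds a reciprocal comparison matrix and derives weights with the row geometric mean method. This method is a common approximation of the principal eigenvector used in the AHP and is named here as a substitute, not as the procedure applied in the AMA; the three options and their judgments are hypothetical.

```python
import math


def reciprocal_matrix(upper):
    """Build a full reciprocal matrix from upper-triangle judgments.

    upper[(i, j)] with i < j states how strongly option i is preferred
    over option j on the scale from 1 (equal) to 9 (absolutely superior).
    """
    n = max(j for _, j in upper) + 1
    matrix = [[1.0] * n for _ in range(n)]
    for (i, j), value in upper.items():
        matrix[i][j] = float(value)
        matrix[j][i] = 1.0 / value  # reciprocal judgment for the reverse pair
    return matrix


def priorities(matrix):
    """Normalized row geometric means as approximate priority weights."""
    geo_means = [math.prod(row) ** (1.0 / len(row)) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


# Hypothetical judgments for three options (indices 0, 1, 2).
judgments = {(0, 1): 3, (0, 2): 9, (1, 2): 3}
matrix = reciprocal_matrix(judgments)
weights = priorities(matrix)
print([round(w, 3) for w in weights])
```

For a perfectly consistent set of judgments such as the one above, the geometric mean weights coincide with the eigenvector solution; for inconsistent judgments the two can differ slightly.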

At this point, one should recall the SEJE definition in the beginning of the current subsection, denoting such a process as a quantification of subjective expert knowledge. Although such scales are considered “qualitative” in the current work, these still represent a way to quantify the DM’s experience and knowledge in a certain data format. Hence, this can also be defined as a form of elicitation.

2.1.9. SEJE Integration with Multi-Criteria Decision-Making

The aim of the SEJE methods is to ensure a transparent and scientific elicitation of expert knowledge by offering sometimes multiple steps of aggregation, reevaluation or discussion. However, the thorough execution of such routines might be applicable only for the elicitation of a relatively small number of variables simply due to time constraints and limitations related to participant engagement and their attention span. This was experienced during the first [12] and second workshop of the current project.

However, one might need to select among a significant number of alternative scenarios or technologies. Furthermore, the selection might be defined as the outcome of a multi-criteria assessment, which is the case for the AMA. Hence, a certain structure is required both for the elicitation process and the subsequent data processing in order to obtain the option comparison results in the form of comparisons, ranks, etc. The algorithms which represent a framework to transform expert inputs into final option ranking or assessments by accounting for multiple criteria simultaneously are denoted as Multi-Criteria Decision-Making (MCDM) methods [31].

A selection and the possible integration of an MCDM approach into the AMA process is given in Reference [4]. The source also justifies the choice of FAHP for the problem structuring. Once an MCDM algorithm/framework has been selected, it is necessary to choose or adapt an appropriate SEJE approach.

This raises the question of integration of MCDM and SEJE methodologies which is subject to the following challenges:

Time- and energy-efficient elicitation of a larger number of variables;

Ensure compatible format of the elicited variables from the SEJE and the input variables into the MCDM;

Ensure transparent value transformations within the methods;

Minimize the additional bias or noise of the uncertain data caused by the methods.

2.1.10. Purposes for SEJE Applications

Regardless of the application domain, extended research on SEJE has yielded a spectrum of terms for participative approaches such as “technology assessment”, “scenario workshops”, “future workshops” or “stakeholder workshop”. Therefore, it is first necessary to distinguish among these notations in order to position the AMA workshops in this scientific context.

Technology Assessment (TA) has the general purpose of identifying promising innovations and evaluating the socio-technical impact of new technologies or improvements [32]. Ultimately, this could aid the political decision-making on administrative or company level. Such assessments might involve cost-benefit and risk analysis, as well as the potential relationships to markets and society [32]. In particular, some sources lay focus on the technology integration in policy making and public acceptance [32,33], as well as the “co-evolution of technology and society” [32]. For this purpose, stakeholder workshops are conducted which allow the gathering of representatives from concerned circles such as companies, society, political and research institutions [34]. In a structured process, these attempt to form visions of the innovative technology interaction with the public and its administrative regulation by aiming to resolve existing concerns. This vision is denoted as a “scenario”, which encompasses a possible implementation of the novelty and its impact on a spectrum of dimensions such as various society levels, the environment and public/consumer acceptance [34]. There exist numerous types of workshops and conferences aiming to define multiple scenarios, select the most promising ones and/or detail their implementation. Prominent examples are the scenario workshop, future workshop, consensus conference, future search or search conference [35]. The current article will not describe these in detail. Instead, it is useful to introduce how creativity is used for the creation and definition of scenarios (or visions, futures, etc.).

Vidal [

35] summarizes that creativity encompasses two types of thinking: divergent thinking, which allows one to see multiple perspectives of a certain situation, and convergent thinking, which helps to “continue to question until satisfaction is reached” [

35]. Methods for creative problem solving incorporate switching between these two thinking approaches. In this context, both the scenario workshops [

34] and the future workshops [

36] include steps which encourage divergent thinking to generate new ideas and convergent thinking to select the most appropriate one(s) among these and to improve their level of detail. To date, the workshops applying the enhanced AMA have used only convergent thinking by asking the experts to compare technological alternatives.

2.2. SEJE Applications in the Aerospace Domain

The uncertain character of the conceptual design phase of aerospace vehicles has encouraged researchers to seek expert opinions on upcoming innovations. Extensive research and development of SEJE techniques was conducted at NASA (National Aeronautics and Space Administration) to estimate parameters of weight, sizing and operations support for a launch vehicle [

37]. In particular, probabilistic values were elicited by creating appropriate expert calibration [

38] and aggregation [

39] techniques in combination with a specially designed questionnaire [

13].

Additionally, the use of scenarios in the aircraft design process has been discussed by Strohmayer [

40]. The author argues that based on market analysis, one could derive requirements and identify technologies for new aircraft by organizing and evaluating these in the form of consistent scenarios.

Authorities in the aeronautical domain have also shown interest in expert judgments. The Federal Aviation Administration (FAA, United States Department of Transportation) assumed the use of SEJE for the development of a risk assessment tool for the electrical wire interconnect system [

41]. This idea has been further studied and refined by Peng et al. [

42] by checking the validation of expert opinions and estimating the agreement within the panel.

Despite the available statistics and databases on accidents in aviation, Badanik et al. [

43] used expert judgments as a possible aid for airlines to estimate accident probabilities. For this purpose, the CM was applied to analyze the answers of airline pilots to assess the probabilities of some IATA (International Air Transport Association) accident types for occurring Flight Data Monitoring events on multiple aircraft.

2.3. Justification of the Developed Workshop Concept

In order to position the current research and justify the developed SEJE methodology, it is first necessary to summarize the objectives of the AMA and the elicitation as follows:

Idea generation for conceptual aerospace design;

Consideration of innovative technologies lacking historical data;

Evaluation of the technological options by experts;

Benefiting from the expertise and creativity of the domain professionals.

Therefore, one can categorize the AMA workshops as a variation of TA. However, depending on the selected use case and the attending participants, the research conducted so far does not necessarily focus on the social impact of new concepts, but rather on an optimal selection of technologies to use in vehicle designs (e.g., during the first and second workshops).

The consideration of novel or non-existent technologies with scarce or no historical performance data implies the challenging elicitation of deterministic physical parameters of the configurations. In addition, the limited experience of the professionals with such components will further increase the epistemic uncertainty during the quantitative elicitation of system parameters. Hence, a qualitative expression of the performance evaluations of the options has been defined. In combination with the FAHP by Buckley and Saaty, the experts are required to enter pairwise comparisons of the technological options in the form of trapezoidal fuzzy numbers on the scale from 1 (both technologies are equal) to 9 (technology A is absolutely superior to technology B)—see Reference [

4] for more details.
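To illustrate this input format, a pairwise judgment can be stored as a trapezoidal fuzzy number (a, b, c, d) with a ≤ b ≤ c ≤ d, where [a, d] is the support and [b, c] the core. The following is a minimal sketch only: the class name `TrapezoidalFuzzyNumber`, its validation rule and the example judgment are assumptions made for illustration and are not taken from the workshop software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrapezoidalFuzzyNumber:
    """Trapezoidal fuzzy number (a, b, c, d): support [a, d], core [b, c]."""
    a: float
    b: float
    c: float
    d: float

    def __post_init__(self):
        # Assumed validity rule: positive and ordered parameters.
        if not (0 < self.a <= self.b <= self.c <= self.d):
            raise ValueError("expected 0 < a <= b <= c <= d")

    def membership(self, x: float) -> float:
        """Degree of membership of the crisp value x."""
        if x < self.a or x > self.d:
            return 0.0
        if self.b <= x <= self.c:
            return 1.0
        if x < self.b:
            return (x - self.a) / (self.b - self.a)
        return (self.d - x) / (self.d - self.c)

    def reciprocal(self) -> "TrapezoidalFuzzyNumber":
        """Comparison 'B versus A' derived from the comparison 'A versus B'."""
        return TrapezoidalFuzzyNumber(1 / self.d, 1 / self.c, 1 / self.b, 1 / self.a)


# Hypothetical judgment: option A is moderately to strongly better than option B.
a_vs_b = TrapezoidalFuzzyNumber(3, 4, 5, 7)
b_vs_a = a_vs_b.reciprocal()
```

The width of the support expresses how uncertain the expert is, while the reciprocal provides the reverse comparison without asking the expert a second question.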

By using the qualitative evaluation character, the current work leans on the assumption that intuitive elicitation of expert knowledge and experience are a reliable scientific basis for concept derivation. In this context, the motivational view on bias is selected, as defined by Meyer and Booker [

14]. The reason for that is the dominant importance of clear problem and methodology definition leading to a cleaner elicitation of expert knowledge rather than the accurate statistical estimation of precise physical parameters (as in the case of the cognitive view on bias).

In order to engage the full potential of the experts’ knowledge and creativity, the method would benefit from both mathematical and behavioral aggregation. For this purpose, a modification of the IDEA protocol is derived to fit the contextual evaluation of MM options. A detailed description of the entire methodology and its implementation can be found in the following section on the second AMA workshop.

3. Second Workshop Methodology

For the first development iteration of the enhanced AMA, a first workshop was conducted and described in Reference [

12], where the conceptual design of a search and rescue aircraft was studied. The current article discusses the execution and results of a second workshop, which aimed to incorporate the lessons learned and test new ideas. The following subsections cover the major aspects of the workshop, namely use case definition, developed methodology, questionnaire design, data post-processing, and results analysis.

3.1. Airfoil Morphing and Integration—Use Case Definition and Morphological Matrix

In this context, the conceptual design of a subsystem was conducted for the purpose of selecting and integrating wing morphing technologies. Firstly, this is a representative use case to demonstrate the current state of the methodology on innovative technologies. Secondly, the subject reflects the demand for future work on morphing wing technologies as stated at an earlier project stage in Reference [

3].

The use case focuses on two main aspects: the geometric shape modification and the morphing technology to implement it. These were defined in a MM as three attributes with their corresponding implementation possibilities (

Figure 3). The morphing mode selected in the current work represents the modification of the cross-section wing geometry, denoted here as “airfoil morphing”. The selected responses (first attribute in the MM) are the deformation solely of the trailing edge, of both the trailing and leading edge or the deformation of the entire airfoil geometry. The next question that arises is the positioning of the morphable airfoil sections on the wing. Therefore, the second attribute allows the selection of possible wing sections for morphing, namely an area near the wingtips, near the wing root or the whole wing. For the third attribute, a literature search yielded the following technologies studied for the purpose of airfoil morphing:

Mechanical extension—these are mechanical devices which extend the trailing and/or leading edge of the airfoil [

44]. In this context, solely the concepts of conventional flaps and slats are considered, serving as an existing reference option.

Contour morphing—the deformation of the airfoil contour via hydraulic or electric actuators as presented in Reference [

45].

Piezoelectric macro-fiber composite plates—such plates exhibit the ability to deform when subjected to an electric current and vice versa [

46].

Shape memory alloys—these smart materials deform under certain loading and temperature conditions [

47].

Such a definition of the MM by no means aims to represent an exhaustive outline of all possible morphing technologies or their integration, nor to support a complete design process. As previously mentioned, the main target of the second workshop is to test the enhanced AMA methodology and the evaluation of prospective technologies with scarce test data.

3.2. Evaluation Criteria

In order to compare the alternative morphing technologies and their integration options, a set of relevant evaluation criteria should be chosen. By considering the main advantages and challenges for such architectures, the following criteria have been selected:

Flight performance

Required energy

System complexity

Improvements in flight performance represent the primary reason for the consideration of morphing wing structures in the first place. Evaluations according to this criterion should reflect the difference in aerodynamic qualities, flight stability, integration of innovative flight controls, etc. However, a trade-off should be made with the energy required for different morphing modes and morphable wing areas, making it a second criterion. Finally, the MM options should be compared according to their system complexity and weight in order to round out the main aspects relevant to the subject.

To ease the interpretation of the results, the criteria are not weighted in the final solution evaluation.

3.3. Uncertainty Modeling

In order to model the uncertainties incorporated into the experts’ subjective answers, the concept of ordinary fuzzy numbers is used for each technology comparison. The benefits of this approach and the justification for its use within the AMA are described in more detail in References [

4,

12].

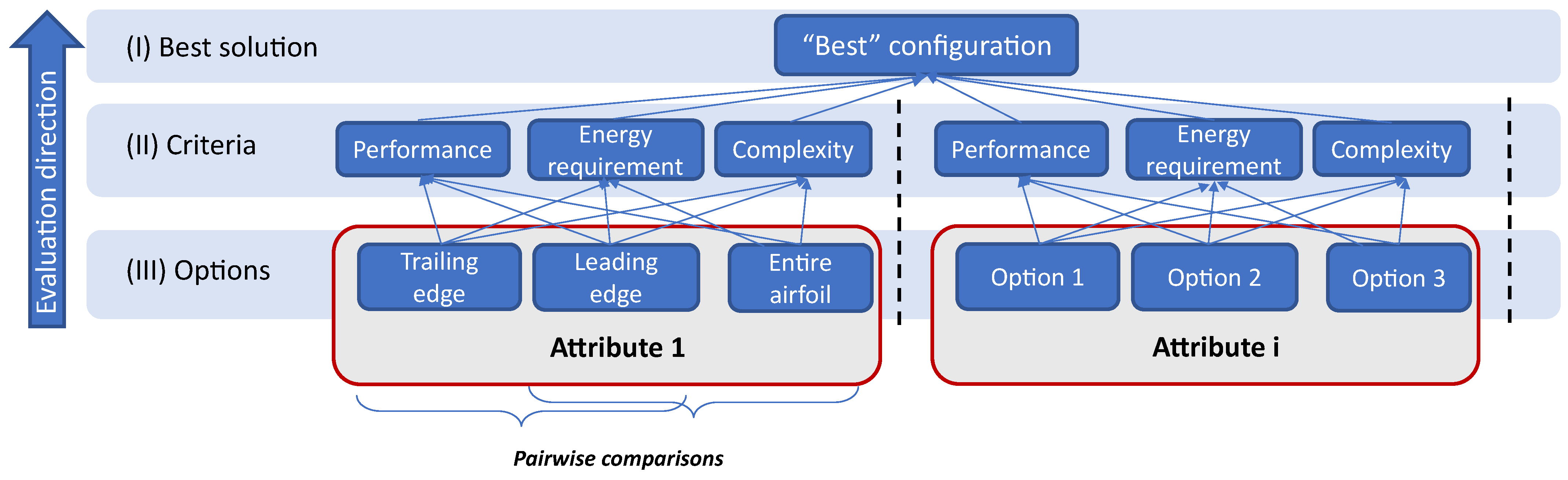

3.4. Problem Hierarchy Structure

In order to capture the multi-dimensional character of the design problem, the workshop evaluations are structured by applying the FAHP suggested by Buckley [

48], which builds on the classical AHP by Saaty [

30]. Reference [

4] explains the use of the approach based on fuzzy comparisons within the AMA and the definition of necessary system hierarchies based on the problem statement.

The hierarchy used for the current workshop is exhibited in

Figure 4. The organization of options and criteria in levels has been carried out analogously to the problem structuring in the first workshop (see Reference [

12]). The options from the same attributes are compared regarding each criterion of the level above. The generic right part of the diagram showcases the positioning of options from different attributes into separate hierarchy branches due to their incomparability.

After obtaining all option comparisons from the DMs, the algorithm yields the global weights of each option according to the criteria. Naturally, the comparisons are elicited only for options from the same attribute.
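The weight derivation for one attribute and one criterion can be sketched with the geometric mean method commonly associated with Buckley's FAHP: the component-wise geometric mean of each matrix row is divided by the fuzzy sum of all row means. The code below is an illustrative sketch, not the implementation used in the workshop; the tuple representation (a, b, c, d), the function names and the example judgments are assumptions.

```python
import math

# A fuzzy number is represented as a tuple (a, b, c, d) with a <= b <= c <= d.


def reciprocal(t):
    """Reverse comparison of a trapezoidal judgment."""
    a, b, c, d = t
    return (1 / d, 1 / c, 1 / b, 1 / a)


def buckley_weights(matrix):
    """Fuzzy weights from a reciprocal matrix of trapezoidal comparisons."""
    n = len(matrix)
    # Component-wise geometric mean of each row.
    row_means = [
        tuple(math.prod(row[j][k] for j in range(n)) ** (1 / n) for k in range(4))
        for row in matrix
    ]
    # Component-wise sums of the row means.
    totals = [sum(r[k] for r in row_means) for k in range(4)]
    # Fuzzy division: the lower bound is divided by the largest total and so on.
    return [
        (r[0] / totals[3], r[1] / totals[2], r[2] / totals[1], r[3] / totals[0])
        for r in row_means
    ]


# Hypothetical comparisons of three options of one attribute for one criterion.
one = (1, 1, 1, 1)
ab, ac, bc = (2, 3, 4, 5), (4, 5, 6, 7), (1, 2, 3, 4)
matrix = [
    [one, ab, ac],
    [reciprocal(ab), one, bc],
    [reciprocal(ac), reciprocal(bc), one],
]
weights = buckley_weights(matrix)
```

For crisp and consistent judgments the fuzzy weights collapse to the classical AHP weights, which offers a simple plausibility check for such an implementation.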

3.5. Workshop Structure

The previous workshop aimed to test only a single step of a SEJE methodology, namely the individual expert evaluations, leaving DM interactions and behavioral knowledge aggregation out of scope. The current workshop implements both features by adapting the IDEA protocol for the pairwise comparisons of MM options. Further focus has been placed on the contextual consistency of the tasks (the discussion of attribute options follows immediately after the DMs have evaluated them) and on the optimization of workshop duration and expert concentration.

The workshop was introduced with a presentation outlining the objectives of the study, the problem statement and instructions on the interactive elicitation. The evaluations were entered on a software platform specially developed for SEJE workshops within the AMA. As an upgrade of the version used for the first workshop [

12], it also integrated a carefully designed User Interface (UI) in the front-end, and a back-end, which ensured the smooth execution of the background operations and the evaluation storage in a database. The architecture allowed the interactive input of the DMs’ evaluations according to a specially developed questionnaire design, presented in the next subsection.

The main steps of the IDEA protocol are repeated for each attribute row of the MM. In the Investigate part, the experts individually evaluate the pairwise option comparisons according to all criteria. Subsequently, a moderated discussion round is conducted, which aims to share ideas among the participants. The purpose is to broaden their horizon and draw their attention to forgotten or perhaps unknown aspects which might influence the evaluation. If this is the case, the DMs have the possibility to edit their previous evaluations. After finishing the evaluations for a single attribute row, the same procedure is conducted for the next one.

The discussion rounds consisted of the following steps:

Mind mapping—for each criterion, the sub-criteria relevant to the current attribute are derived;

“Best” options mapping—based on a group consensus, an option from this attribute with the best performance is assigned for each sub-criterion;

Visual overview—individual identification of option dominance for the sub-criteria;

Re-evaluation—based on this mapping, the DMs might consider editing their initial evaluations.

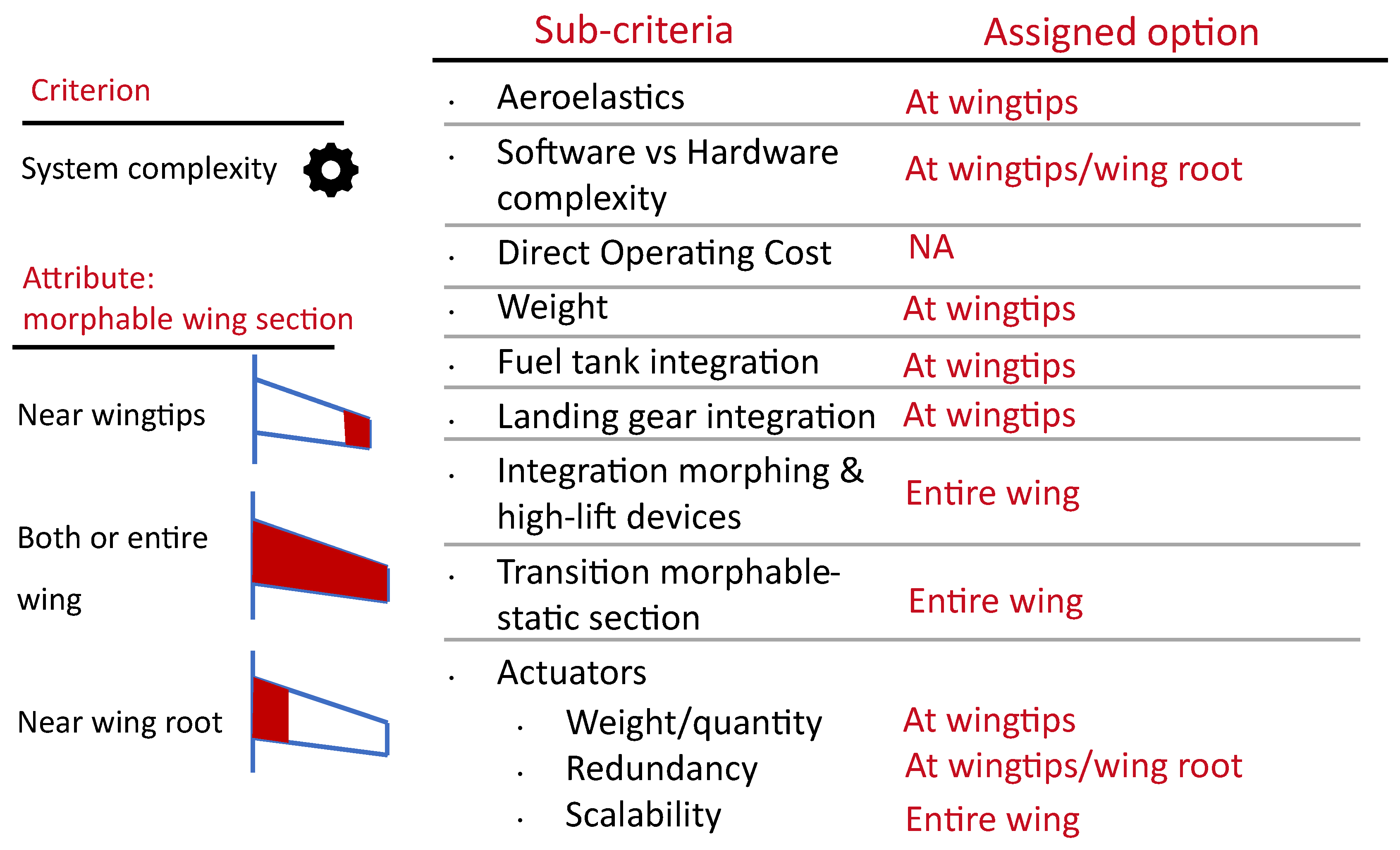

The result of such mapping from the second workshop is described in

Section 4.3.2. Such an approach benefits from divergent thinking and allows the group to gather more aspects in order to increase the objectivity of an evaluation. One should stress that a consensus is required only for the definition of a most suitable option for each sub-criterion, roughly based on majority agreement. Beyond this point, each expert is left to decide for themselves whether the mapping of the options is convincing enough for them to edit their evaluations.

3.6. Questionnaire Design

The questionnaire consisted of two main parts: the professional background questionnaire and a technology assessment section.

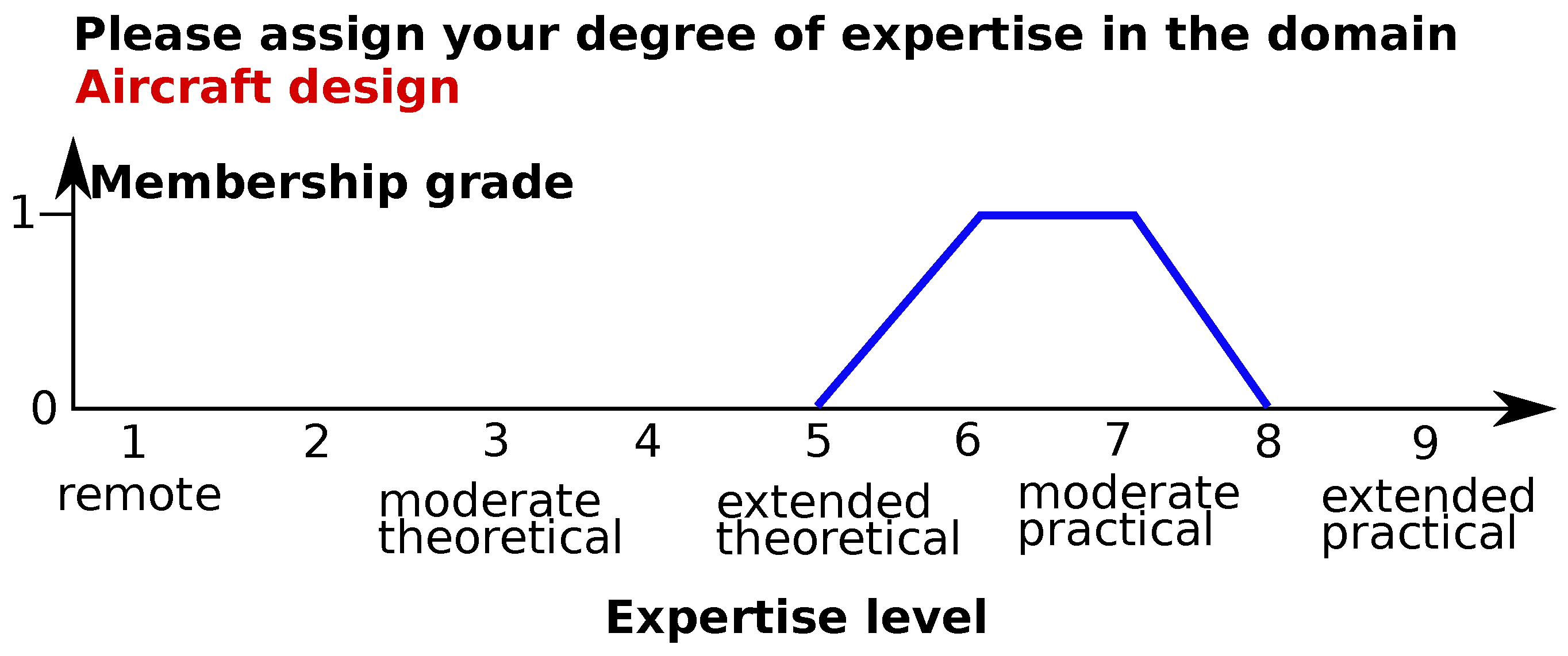

3.6.1. Professional Background Questionnaire

The background questionnaire aims to elicit the participants’ level of expertise in the relevant domains based on their own perception. This approach was inspired by the questionnaire design from NASA’s SEJE method mentioned in

Section 2.2 and described in Reference [

38]. However, the source implies asking for the DM’s age and expertise in the form of integers from 1 to 5 [

38] and combining these into a coefficient. Instead, the current questionnaire requires self-assessment of knowledge or experience in the domains as fuzzy numbers on the scale from 0 to 9, reflecting the progressive increase in theoretical knowledge and practical hands-on experience. An example is exhibited in

Figure 5. Taking into account the MM structure and the represented domain expertise in the panel, the expertise of the DMs has been elicited in the disciplines aircraft design, aerostructures, aeroengines, flight mechanics and aerodynamics.

3.6.2. Technology Assessment Questionnaire

The technology assessment questionnaire design builds upon the one used in the first workshop by considering the lessons learned in Reference [

12]. The same diagram for the input of fuzzy estimates is used. Since the experts are asked to evaluate pairwise comparisons, the positive values of the qualitative x-axis express the superiority of one technological option over another and the negative—its inferiority. The fuzzy evaluations are then placed by the DMs according to their perception.

The feedback after the first workshop included the abundance of labels and text around the diagram as well as the tiresome and confusing switching between evaluations of the same comparisons but regarding different criteria [

12]. For this reason, the labeling has been simplified and a single answer page now includes the diagrams for the same option comparison according to all available criteria. This allows an easier visual perception of multiple questions simultaneously and a strict division of the page into two vertical halves, each corresponding to a technological option, while the diagrams (or the “rows” of the page) are assigned to the criteria.

In order to further increase simplicity, the terms “superiority” (expressed with positive values) and “inferiority” (expressed with negative values) of one option over another have been omitted. Instead, the positive side of the x-axis was denoted to be in favor of option A and the negative—in favor of option B.

Furthermore, the first workshop required the participants to enter evaluations for reciprocal comparisons as well (e.g., separate questions “compare technology A referred to technology B” and “compare technology B referred to technology A”) in order to encourage the DMs to correct their answers [

12]. This was regarded by the participants as a rather useless and time-consuming feature, leading to its removal in the current questionnaire. The new version requires an input on the comparisons of each option pair only once.

3.7. Expert Weighting

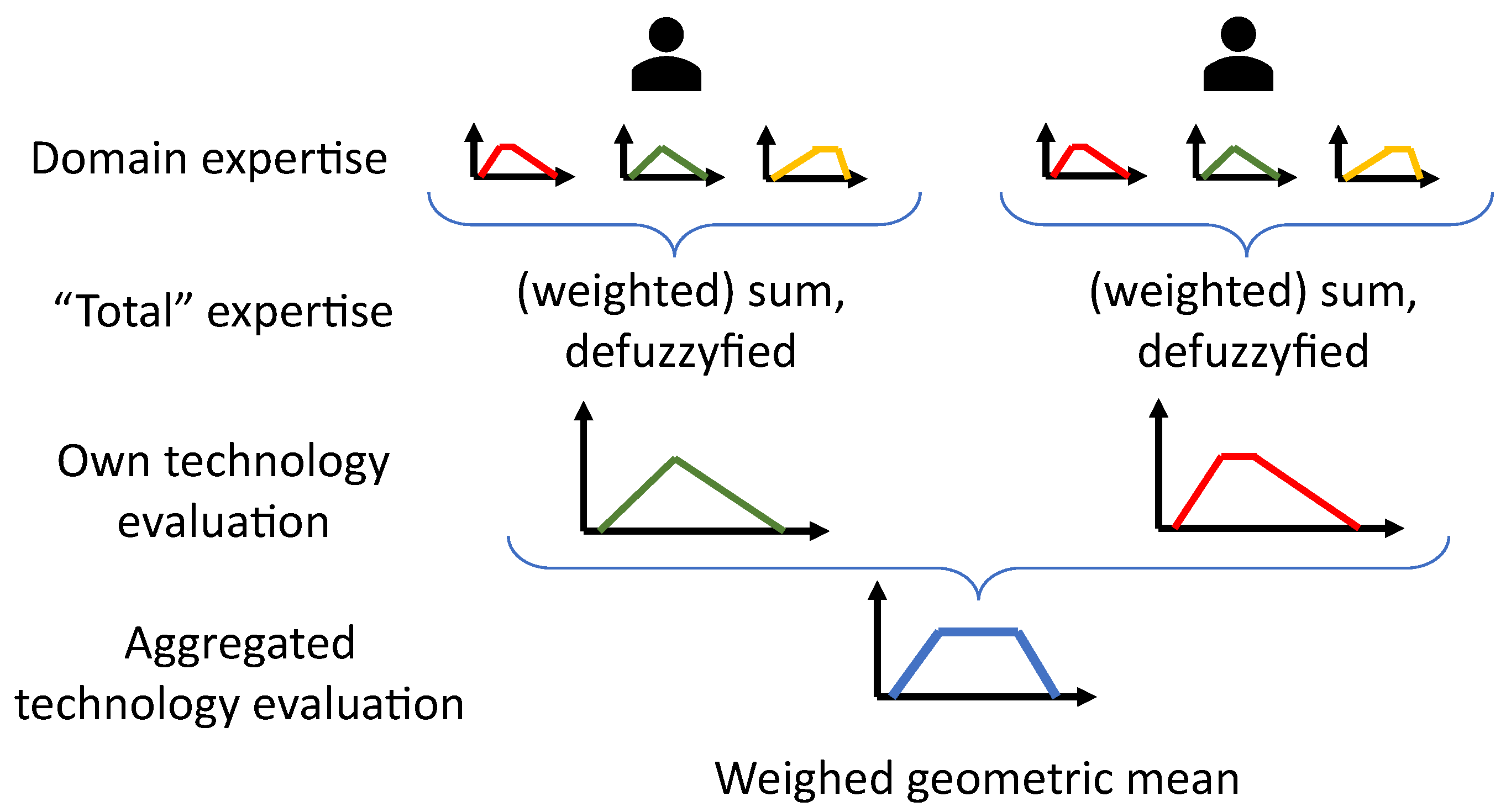

Based on the background questionnaire explained in the previous subsection, an approach to assign weights to the expert evaluations has been derived, which is summarized in

Figure 6. Each expert relies on their own perception to enter their expertise in the domains aircraft design, aerostructures, aeroengines, flight mechanics and aerodynamics. The DM’s abstract “total” relevant expertise is then calculated by simply adding or weighting the domain self-evaluations. Subsequently, the technology comparisons made by different experts are aggregated by applying the weighted geometric mean. The weights are calculated by normalizing the expertise of each participant with the sum of the expertise of all experts.
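The weighting and aggregation steps can be sketched as follows. This is an illustrative sketch under several assumptions: the fuzzy self-assessments are taken to be already reduced to crisp domain scores, the total expertise is their plain sum, and all names and numbers are hypothetical rather than workshop data.

```python
import math


def expert_weights(total_expertise):
    """Normalize each expert's total expertise by the sum over all experts."""
    total = sum(total_expertise)
    return [e / total for e in total_expertise]


def weighted_geometric_mean(judgments, weights):
    """Component-wise weighted geometric mean of trapezoidal judgments."""
    return tuple(
        math.prod(j[k] ** w for j, w in zip(judgments, weights))
        for k in range(4)
    )


# Hypothetical crisp self-assessments (0 to 9) in five domains for three experts.
self_assessments = [
    [7, 5, 2, 4, 6],
    [3, 8, 1, 2, 4],
    [5, 5, 5, 5, 5],
]
totals = [sum(domains) for domains in self_assessments]  # assumed: plain sum
weights = expert_weights(totals)

# Hypothetical judgments of the three experts for the same option pair and criterion.
judgments = [(2, 3, 4, 5), (1, 2, 3, 4), (3, 4, 5, 6)]
aggregated = weighted_geometric_mean(judgments, weights)
```

Because the weights sum to one, the aggregated judgment always lies between the smallest and the largest individual judgment in every component, and equal weights reduce the expression to the ordinary geometric mean.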

3.8. Expert Anonymity

The level of participant anonymity during and after the elicitation is an aspect concerning not only the experts themselves. It also serves as a potential source of social pressure biases able to influence the evaluations and therefore the final results [

14]. Such biases can occur during the group discussions as well as when the experts enter their evaluations. In the first case, the group think bias might be present, representing the alteration of one’s own opinion or action in order to be aligned with common positions [

14]. The same effect could be observed when hierarchical structures are present in the group. In order to reduce these effects, the current workshop implements individual evaluations which are not shown to the panel. However, even such a setting carries the risk of revealing the participants’ identity to the researchers during the data evaluation and/or to the public, if the evaluations are associated with their person later on. This is connected to the impression management bias, which stands for the concern with the reaction of people not present [

14].

For this reason, the workshop concept ensures the anonymity of the participants to the researchers and to the public. This is done by requiring them to log in to the questionnaire with a random identification number chosen by them. In the further data processing, the evaluations are associated solely with the identification number which cannot be related to any person.

3.9. Post-Processing of the Raw Results

The raw output from the workshop yields fuzzy comparisons of the MM options from each expert. These should be adapted to comply with the input format of the AMA process in order to analyze the final solution space. The conducted steps for that purpose are depicted in

Figure 7 and explained in the following:

Workshop conduction

The workshop stages represented the first three aspects of the IDEA protocol—Investigate (individual assessment), Discuss and Estimate (possible reevaluation).

Data cleaning

As with the first workshop, there was a set of typical errors and inconsistencies observed in the raw user inputs, a more detailed overview of which can be found in Reference [

12]. One should stress that these did not represent meaningless data noise but rather comprehensible deviations which could be corrected in a logical manner. These were dealt with by applying the previously developed automatic workflow which cleaned the inconsistencies.

Mathematical aggregation

In the current use case, one aims to generate a single solution space based on the evaluations of all experts. Hence, it is necessary to combine their input. During the workshop, behavioral knowledge aggregation took place in the form of group discussions. The post-processing involves mathematical aggregation of the DMs’ opinions. For this purpose, the fuzzy comparisons of the same options according to the same criteria but from different experts are combined with a geometric mean.

Option weights

The aggregated fuzzy comparisons are then used as input to the FAHP algorithm by Buckley [

48] in order to obtain the fuzzy option weights with respect to the criteria of the level above.

Defuzzification

At this stage, the AMA accepts only crisp (real) numbers for option evaluations. For this reason, the fuzzy option weights are defuzzified by using the center of gravity method analogously to the first workshop [

12].

Solution space generation

The AMA then combines the option weights into solutions and generates the solution space, which is analyzed in more detail.
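The defuzzification step listed above can be illustrated for a trapezoidal fuzzy weight (a, b, c, d), for which the center of gravity of the membership function has a closed form. The sketch below assumes trapezoidal weights stored as tuples; the function name is hypothetical and the code is not taken from the AMA software.

```python
def center_of_gravity(t):
    """Centroid (x-coordinate) of a trapezoidal membership function (a, b, c, d)."""
    a, b, c, d = t
    denom = d + c - a - b
    if denom == 0:
        # Degenerate case: all four parameters coincide (crisp number).
        return float(a)
    return (d * d + c * c + c * d - a * a - b * b - a * b) / (3 * denom)


# Hypothetical fuzzy option weight with a longer right tail.
crisp_weight = center_of_gravity((0, 1, 2, 5))
```

For an asymmetric fuzzy weight the centroid shifts towards the longer tail, so the crisp value retains some information about the direction of the expert's uncertainty.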

3.10. Software Implementation Aspects

Dedicated software has been developed in-house for the following purposes:

Workshop conduction platform—back-end and front-end with a user interface for evaluation input;

Mathematical aggregation;

Implementation and application of the FAHP;

AMA software—definition of MM and criteria, solution space generation and clustering.

Major sub-tasks have been developed and validated with available examples, e.g., the implementation of the FAHP. Individual smaller sub-tasks have been accomplished by using the following external packages or libraries:

Scikit-learn [

49]—an implementation of the K-Means clustering algorithm for the solution space analysis in the Python programming language;

Helix Toolkit [

50]—certain objects for the three-dimensional visualization of the solution space;

Plotly.NET [

51]—plotting of the interactive fuzzy evaluations within the workshop software platform;

Scikit-Fuzzy [

52]—implementation of single operations with fuzzy numbers, based on Reference [

53]. However, the majority of fuzzy functions and definitions have been developed in-house.

4. Results of the Second Workshop

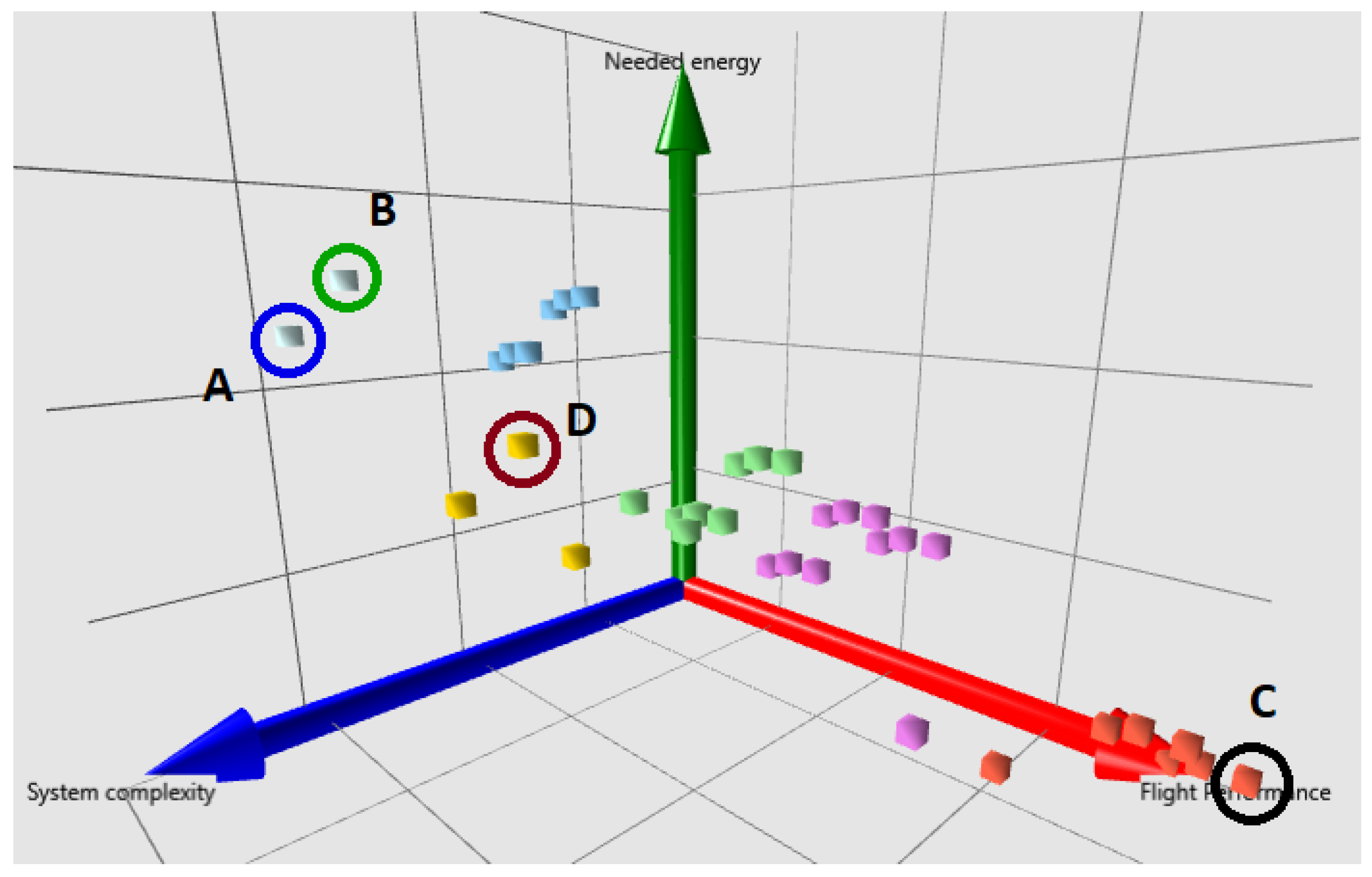

Based on the option evaluations obtained from the workshop, the AMA software generated a solution space (

Figure 8) with the following parameters:

Three diagram dimensions, corresponding to the criteria flight performance (red), system complexity (blue) and needed energy (green);

Size—36 generated solutions resulting from the exhaustive combinations of the MM options. No inconsistent options were observed;

Clustering—application of the K-Means clustering method.
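The size of the solution space follows directly from the MM described in Section 3.1: three morphing modes, three wing sections and four technologies give 3 × 3 × 4 = 36 combinations. The sketch below enumerates them. The scoring rule (sum of the weights of the selected options per criterion) and the equal placeholder weights are assumptions for illustration only and do not reproduce the workshop results.

```python
from itertools import product

# Attributes and options as described for the MM in Section 3.1.
morphological_matrix = {
    "morphing mode": ["trailing edge", "leading and trailing edge", "entire airfoil"],
    "wing section": ["near wingtips", "near wing root", "whole wing"],
    "technology": ["mechanical extension", "contour morphing",
                   "piezoelectric plates", "shape memory alloys"],
}
criteria = ["flight performance", "required energy", "system complexity"]

# One solution = one option per attribute (exhaustive combination).
solutions = [dict(zip(morphological_matrix, combo))
             for combo in product(*morphological_matrix.values())]

# Placeholder weights (NOT workshop results): equal weights within each attribute.
option_weights = {
    criterion: {option: 1 / len(options)
                for options in morphological_matrix.values()
                for option in options}
    for criterion in criteria
}


def solution_scores(solution, weights):
    """Assumed scoring rule: sum the weights of the selected options per criterion."""
    return {criterion: sum(w[option] for option in solution.values())
            for criterion, w in weights.items()}


print(len(solutions))  # 36
```

Each solution thus receives one coordinate per criterion, which corresponds to the three axes of the solution space diagram.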

Similarly to the result presentation of the first workshop [

12], a position further along a certain axis implies an improvement of the configuration according to the corresponding criterion. For example, a position further along the system complexity axis stands for better, i.e., lower, system complexity.

The following subsections will be dedicated to the different visualizations of the solution space within the AMA software and its analysis.

4.1. Influence of the Expert Weights

Regarding the influence of expert weighting, two solution spaces have been generated—one applying geometric mean aggregation with equal expert weights and another one using the DMs’ expertise as weights (see

Section 3.7). Multiple weighting possibilities have been experimented with when obtaining the total expertise of each DM, e.g., no weighting of the expertise domains, their equal weights, as well as the selection of specific (most relevant) domains for the corresponding attribute. However, the analysis of the aggregation results and the obtained solutions yields a negligible difference in the final option evaluations and thus almost identical solution spaces. Two possible causes for such an outcome might be:

The first cause might be the homogeneity of the professional background of the participants, most of whom come from the domains of aircraft design and aerostructures.

4.2. Cluster Overview

4.2.1. Optimal Number of Clusters

The K-Means clustering algorithm uses the number of clusters as an input parameter. The optimal number of six clusters was obtained beforehand by using the “elbow method” [

54] as in the first workshop [

12]. The generated clusters are visualized in

Figure 8 and described in

Table 3.

4.2.2. Cluster Metrics

The metrics used to describe the clusters in

Table 3 have been introduced in Reference [

12]. These are defined as follows:

Max. norm. sol. score—“the maximum value of the cluster solution scores referred to the average solution score in the entire solution space. Indicates the score of the “best” solution within the cluster compared to the whole solution space.” [

12]

Score std. dev.—“the standard deviation of the total solution scores within the cluster. Indicates the numerical compactness of the cluster based on the total scores.” [

12]

Rel. Hamming distance—“the average Hamming distance within the cluster relative to the average Hamming distance of the whole solution space. Represents the qualitative cluster compactness, based on the variation of the selected technological options.” [

12]
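The three metrics defined above can be computed as in the following sketch. Several details are assumptions, since the definitions leave them open: “referred to” is interpreted as a ratio, the standard deviation is the population form, and the Hamming distance is averaged over all solution pairs. The data layout, the function names and the toy data are hypothetical.

```python
from itertools import combinations
from statistics import mean, pstdev


def hamming(s1, s2):
    """Number of attributes in which two solutions select different options."""
    return sum(o1 != o2 for o1, o2 in zip(s1, s2))


def mean_pairwise_hamming(solutions):
    """Average Hamming distance over all pairs of solutions."""
    pairs = list(combinations(solutions, 2))
    return mean(hamming(p, q) for p, q in pairs) if pairs else 0.0


def cluster_metrics(cluster, space):
    """cluster and space are lists of (options_tuple, total_score) pairs."""
    space_avg = mean(score for _, score in space)
    cluster_scores = [score for _, score in cluster]
    return {
        "max_norm_score": max(cluster_scores) / space_avg,
        "score_std_dev": pstdev(cluster_scores),
        "rel_hamming": mean_pairwise_hamming([o for o, _ in cluster])
        / mean_pairwise_hamming([o for o, _ in space]),
    }


# Toy solution space with two attributes and hypothetical total scores.
space = [
    (("a1", "b1"), 1.0),
    (("a1", "b2"), 2.0),
    (("a2", "b1"), 3.0),
    (("a2", "b2"), 2.0),
]
metrics = cluster_metrics(space[2:], space)
```

A relative Hamming distance below one indicates that the solutions of a cluster share more options than two arbitrary solutions of the space do.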

According to

Table 3, no cluster yields a solution which is a definitive global optimum considering all three criteria simultaneously—the maximal solution scores in each cluster vary within a

interval referred to the average solution space score. Meanwhile, the average cluster scores are distributed around the global solution space average, exhibiting normalized scores between

and

.

Nevertheless, if a compromise among all three criteria is sought, one recognizes the solution marked with the letter D. It has a normalized score of merely above the solution space average, which is still the maximal solution score in the whole space. This morphing configuration implements morphing of the entire airfoil with mechanical components solely near the wingtips.

4.2.3. Local Maxima

The local maxima are defined as solutions indicating maximal scores regarding at least one criterion (along the respective diagram axis). These are marked with the letters A, B and C in

Figure 8 and described in

Table 4. The local maxima according to the needed energy (B) and system complexity (A) criteria are located close to each other in the solution space and integrate classical mechanical trailing edge morphing near the wing root or the wing tips. In contrast, solution C shows the maximum value for flight performance and comes with contour morphing of the leading and trailing edge on the entire wing.

4.2.4. Trend Analysis

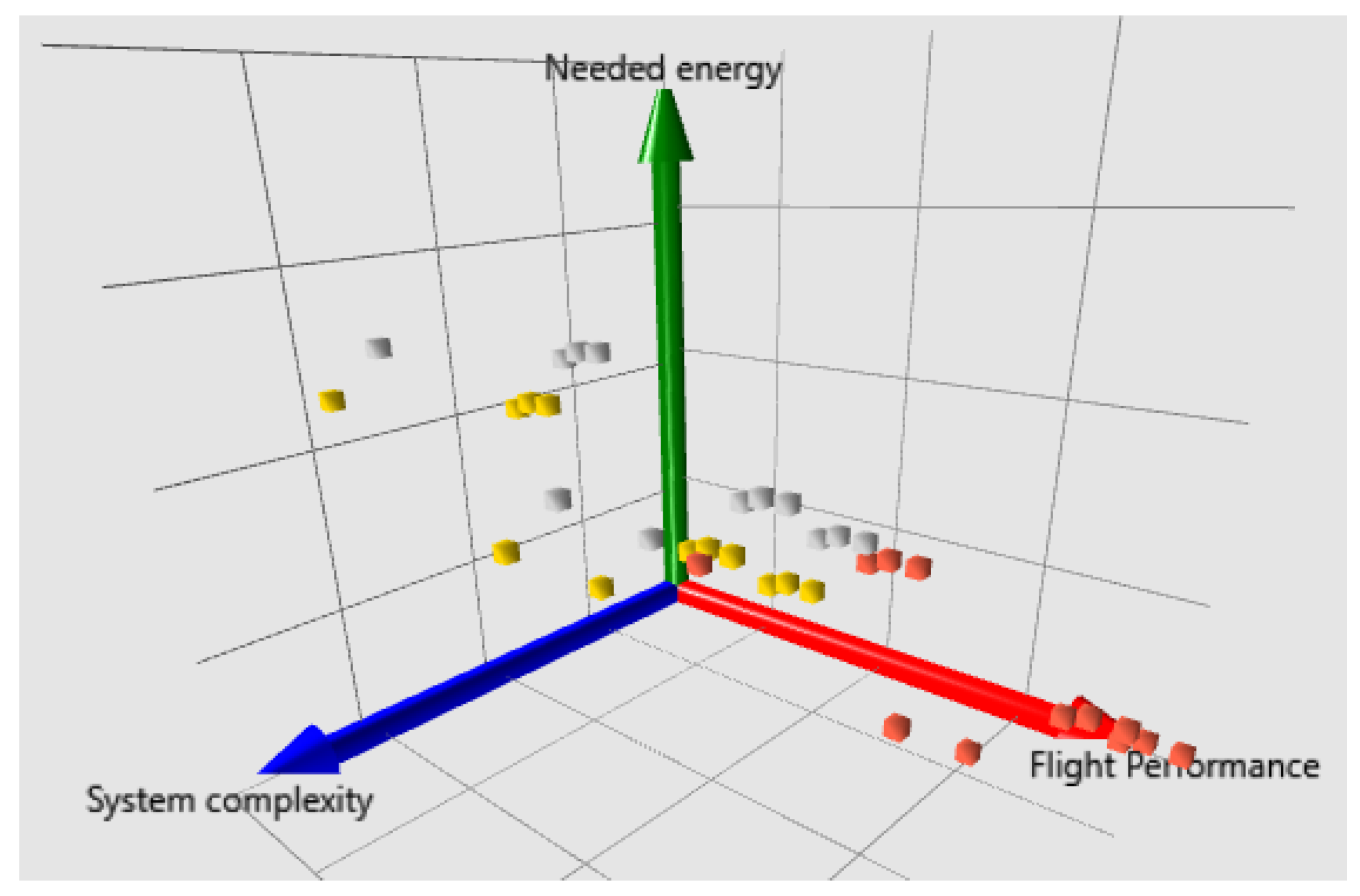

With the AMA software, it is possible to filter the solution coloring according to the applied technological options for a given attribute.

Figure 9 showcases the distribution of the options for the morphable wing section attribute. It points out the definitive advantage of entire wing morphing regarding flight performance. Simultaneously, morphing only at the wing root appears to contribute to slightly less system complexity than morphing at the wing tip. Switching between these two options while fixing the other attributes results in a pure translation of the solution along the axes, which, however, is not very significant compared to the size of the solution space. This indicates a low sensitivity of the solution scores to these options.

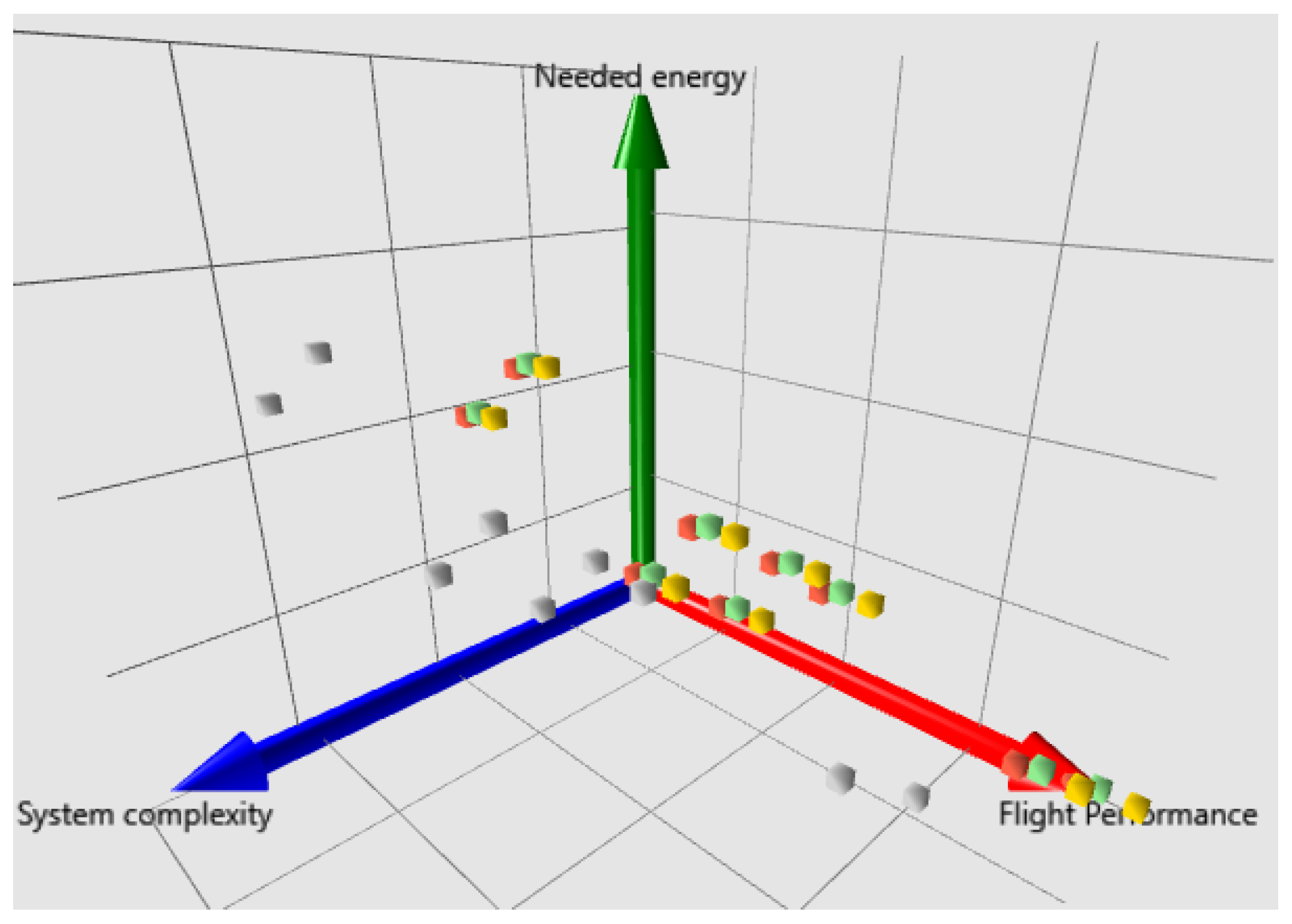

Furthermore, the distribution of different morphing technologies is observed in

Figure 10. Mechanical extension of the airfoil with flaps and/or slats showcases less system complexity compared to the other mechanisms. Similarly to the previous visualization, compact groups of solutions can be observed, which incorporate different morphing technologies with the other attributes fixed. Again, this testifies to the limited influence of the contour morphing, the memory alloys and the piezoelectric plates relative to the solution space size.

4.3. Participant Feedback

Since the current article describes another iteration of the method development, the feedback of the participating experts represents an important result combined with the solution space.

4.3.1. Overall Impression

Concerning the overall workshop structure and execution, the DMs reported an improvement compared to the first workshop. This is reflected in a less time-consuming questionnaire and the presence of group discussions. The enhanced and more compact questionnaire layout was also noted, which contributed to an easier visual perception and reduced effort during the evaluations.

4.3.2. Group Discussions

The outcomes of group discussions can be summarized in the mapping of best options described in

Section 3.5. The example shown in

Figure 11 illustrates the option mapping for the morphing wing section attribute and the system complexity criterion. The first question that the participants answer together is: which sub-criteria or aspects associated with system complexity are related to or influenced by different morphable wing sections? After outlining the sub-criteria, the experts discuss which options of this attribute exhibit the best performance according to the sub-criteria. These promising options are then noted in the “Best option” column. Based on the dominance level of the different options obtained during the discussion, the experts might consider editing their initial evaluations.

Regarding one of the new methodology elements, namely the group discussions and the behavioral aggregation, one could observe both positive feedback and a wish for further improvement. Fruitful conversations and idea sharing were an important part of the behavioral aggregation. The derivation of sub-criteria and the associated best options contributed to largely structured conversations. The initial idea behind the visual mapping of best options was to introduce new aspects (reduce the “availability” bias) and possibly motivate the experts to correct some initial evaluations. However, this purpose was not entirely supported by the majority of the participants, so the question arose whether such a discussion was necessary in the first place. Nevertheless, two participants () stated they had indeed wished to correct their initial evaluations after the discussion.

4.3.3. Technology and Terminology Definition

The ambiguity of some criteria and option definitions was mentioned as a considerable drawback. Furthermore, the lack of knowledge on specific technologies such as the morphing mechanisms served as a source of epistemic uncertainty, which was clearly reflected in the evaluations. For example, the energy input criterion was a subject of clarification discussions. The ambiguity lay between the absolute energy needed for morphing in a special case and the ratio of the required energy to the energy savings due to the new drag-efficient wing.

5. Discussion

As mentioned in the discussion of the first workshop [

12], a full-scale data-based validation of this AMA stage is hardly possible for such studies, considering the lacking experimental data on the morphing attributes. Instead, the relative positioning of the option implementations is verified qualitatively. For instance, the definitive flight performance advantage of an entire morphable wing over separate morphable sections confirms the logical expectation. Similarly, mechanical extensions such as flaps and slats exhibit slightly less system complexity than the other morphing mechanisms included in the MM. However, as stated in the previous section, the difference among solutions involving certain options is seen to be relatively weak, which is reflected in the small solution translations along the axes. This might indicate two things: (a) prominent epistemic uncertainty (lack of knowledge on these options) and/or (b) an overly general definition of the options preventing the experts from focusing on the most relevant aspects. Based on these observations, one could declare a partial reliability of the results.

The expert feedback also plays a key role as a partial validation of the method. The aspects put forward by the participants not only indicate room for improvement. Together with an overall analysis of how the workshop was conducted, they also point to further dimensions of integrating SEJE into the AMA design process. One example is the purpose of the group discussions. On the one hand, a significant number of well-founded professional arguments were raised when deciding on the best options for the sub-criteria. On the other hand, the purpose of “re-convincing” the experts to possibly correct their evaluations was rejected by the majority of the DMs. The researchers likewise assessed its influence on the results as low. This raises the following questions:

- Which tasks in conceptual aircraft design are more suitable for individual assessments and which would benefit more from a group effort?

- Which tasks in conceptual design would benefit more from convergent or divergent thinking?

- How should the discussions be structured?

- How should the influence of technologies on criteria be structured and integrated into a collective expert assessment?

Furthermore, based on the results and the participant feedback, the following improvement proposals have been derived:

- Use case handouts. The lack of knowledge on specific topics such as morphing mechanisms has shown the need to prepare the experts in advance. It would therefore be useful to prepare a brief summary of the study case and send it to the experts prior to the workshop.

- Avoid ambiguous or overly general formulations of technologies and criteria.

- Expert selection from heterogeneous professional backgrounds. The first two workshops involved participants mainly from the aircraft design and aerostructures domains. This was reflected in the almost identical solution spaces resulting from the weighted and non-weighted aggregation of the evaluations.

- Improvement of group discussions. To benefit fully from such expert panels, behavioral aggregation and idea sharing in group discussions should not be abandoned. Instead, a clearer purpose and a better structure of the exchange should be developed.

6. Conclusions

The present paper describes the definition and application of the technology evaluation stage of the AMA design process based on expert workshops, in particular the derivation and integration of SEJE techniques into conceptual aircraft design with the AMA. The following research objectives have been fulfilled: (a) justification of the development of a SEJE approach adapted to the needs of the AMA and (b) extraction of use case results and expert feedback from a second workshop for further method development.

In order to justify the derived methodology, an extensive literature review on SEJE was conducted first. As a result, the most prominent SEJE methods (the Classical Model, the SHELF framework and the IDEA protocol) were summarized, compared and used to identify common SEJE components. Further important aspects of preparing and conducting such an elicitation were researched and commented on, namely the role of bias, the remote and personal formats, and qualitative and quantitative elicitation variables. Additionally, the integration of these aspects and the combination of SEJE methods with MCDM frameworks were considered. The importance of such approaches is underlined by SEJE application examples in aerospace. This analysis was used to develop a SEJE methodology adapted specifically to conceptual aircraft design within the AMA design framework and to position it in the scientific context.

The second part of the paper is dedicated to the description and application of this methodology in the form of a second AMA workshop. Its design took into account the lessons learned from the first workshop (see Reference [12]). The consideration of novel technologies was implemented by selecting the design of wing morphing concepts as a use case for the workshop. In particular, it studied the influence of morphing technologies and their integration in different airfoil and wing sections. During the workshop, the expert panel compared these with respect to the criteria flight performance, system complexity, and energy input by entering fuzzy numbers in the specially developed software platform. The expert weighting was based on the experts' self-designated expertise and did not show any significant influence on the results. This might be due either to the homogeneity of the panel’s professional background or to an undiscovered methodological flaw. The workshop consisted of two main steps: individual evaluation of the options and group discussions. The latter aimed to combine ideas and help the experts correct their evaluations, thus reducing bias and increasing the objectivity of the results.
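The observation that expert weighting barely changed the results can be illustrated with a minimal sketch. It is not the AMA software or the FAHP implementation used in the workshop: it assumes triangular fuzzy numbers, a component-wise weighted arithmetic mean as the aggregation rule, and a simple centroid for defuzzification. All names (`aggregate`, `centroid`, `weighting_shift`) and all numbers are hypothetical and chosen only for illustration.

```python
from typing import Sequence, Tuple

# Triangular fuzzy number as (lower, modal, upper); an assumed representation.
TFN = Tuple[float, float, float]


def aggregate(evaluations: Sequence[TFN], weights: Sequence[float]) -> TFN:
    """Component-wise weighted arithmetic mean of triangular fuzzy numbers."""
    if not evaluations or len(evaluations) != len(weights):
        raise ValueError("need exactly one weight per evaluation")
    total = float(sum(weights))
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    lower, modal, upper = (
        sum(w * e[i] for e, w in zip(evaluations, weights)) / total
        for i in range(3)
    )
    return (lower, modal, upper)


def centroid(tfn: TFN) -> float:
    """Crisp score of a triangular fuzzy number (mean of its three vertices)."""
    return sum(tfn) / 3.0


def weighting_shift(evaluations: Sequence[TFN], weights: Sequence[float]) -> float:
    """Difference between the weighted and the equally weighted crisp score."""
    equal = [1.0] * len(evaluations)
    return centroid(aggregate(evaluations, weights)) - centroid(
        aggregate(evaluations, equal)
    )


# Hypothetical self-rated expertise of three experts.
weights = [1.0, 2.0, 3.0]

# Hypothetical evaluations of one option on one criterion.
homogeneous = [(5, 6, 7), (5, 6, 7), (6, 7, 8)]    # similar opinions
heterogeneous = [(1, 2, 3), (5, 6, 7), (7, 8, 9)]  # diverging opinions

print("shift, homogeneous panel:  ", weighting_shift(homogeneous, weights))
print("shift, heterogeneous panel:", weighting_shift(heterogeneous, weights))
```

With these made-up inputs, weighting moves the crisp score by roughly 0.17 for the homogeneous panel and by 1.0 for the heterogeneous one. Under the stated assumptions, weights can only shift an aggregate when the underlying evaluations differ, which is consistent with the homogeneity explanation offered above but does not rule out a methodological cause.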

After applying the FAHP, the AMA software was used to generate the solution space. On the one hand, it confirmed some expected trends, e.g., the flight performance advantage of an entirely morphable wing over only partial morphing. On the other hand, it revealed an unexpectedly small difference between some option scores, mostly among the morphing mechanisms. The reason for this might be the very similar evaluations given by the experts as a result of a lack of knowledge on this very specific topic (epistemic uncertainty).

Along with the various visual presentations of the results and their analysis, a vital outcome of the work was the participants’ feedback. It serves not only as a partial validation source of the methodology, but also as a valuable guideline for its further development.

Based on these results, improvement proposals for future work on the project have been derived. These refer mostly to ambiguity in the definition of technology options and criteria. The need for additional use case structuring and definition was pointed out, along with the necessity for preliminary preparation on specialized topics. Furthermore, the full capacity of expert panel discussions should be used by restructuring them and defining a clearer purpose and deliverables.

Although the present work is based on a thorough literature study on SEJE methods and the corresponding biases, a deeper look into technology assessments and scenario workshops is required for better process structuring. Future studies would benefit not only from the lessons learned through the current work. Potential workshops would also profit from widening their purpose, namely by extending the purely technical assessment of technologies to their integration with the environment and society.

The findings are not only an integral part of the AMA enhancement process, but can also serve as a solid basis for technology evaluation via SEJE methods focused mostly on technical qualities of the solutions, which is rarely found in the existing literature. This work represents a “stand-alone” methodological component for similar studies in the aerospace domain and beyond.

The major elicitation components that were added to the methodology take the extended workshop concept to a new level. The result is not only the development of a full-scale SEJE method but, most importantly, its integration into the early stages of conceptual aircraft design. This has been achieved by the appropriate definition of attributes, options, and criteria, as well as by orienting the elicitation towards the problem of aircraft architecture design. In particular, the evaluations required references to specific aspects from the design and operational points of view. Additionally, the group discussions aimed to broaden the experts’ horizon on a certain technology group by gathering different views from various engineering disciplines in order to obtain results with increased objectivity.

From a global perspective, the AMA, the qualitative evaluation approach, and the corresponding expert workshops are seen as a step prior to the application of “classical” MDAO algorithms. The flexible definition of the MM and the workshops allows the qualitative multi-criteria comparison of options from possibly different aircraft classes (e.g., aerostatic lift generation against fixed wings), which is otherwise hardly feasible within a single MDAO framework. The resulting exhaustive solution space allows the consideration of solutions possibly left out of scope during conventional idea generation.

Author Contributions

Conceptualization, V.T.T., D.R. and A.B.; methodology, V.T.T. and D.R.; software, V.T.T.; validation, V.T.T. and A.B.; formal analysis, V.T.T.; investigation, V.T.T.; resources, D.R. and A.B.; data curation, V.T.T.; writing—original draft preparation, V.T.T. and D.R.; writing—review and editing, D.R. and A.B.; visualization, V.T.T.; supervision, D.R. and A.B.; project administration, A.B.; funding acquisition, A.B. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) grant number 443831887.

Institutional Review Board Statement

Not applicable.

Data Availability Statement

Data can be made available on demand.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| AMA | Advanced Morphological Approach |
| SEJE | Structured expert judgment elicitation |
| MA | Morphological Analysis (classical) |
| MM | Morphological matrix |
| FAHP | Fuzzy Analytical Hierarchy Process |
| AHP | Analytical Hierarchy Process |
| SAR | search and rescue |
| CM | Classical Model |
| SHELF | Sheffield Elicitation Framework |
| IDEA | Investigate, Discuss, Estimate, Aggregate |
| DM | decision-maker |
| MCDM | Multi-Criteria Decision-Making |
| TA | Technology Assessment |
| SW | scenario workshop |
| NASA | National Aeronautics and Space Administration |
| FAA | Federal Aviation Administration |
| IATA | International Air Transport Association |
| UI | user interface |
| MDAO | Multidisciplinary Aircraft Optimization |

References

- Bernstein, J.I. Design Methods in the Aerospace Industry: Looking for Evidence of Set-Based Practices. Master’s Thesis, Massachusetts Institute of Technology, Cambridge, MA, USA, 1998.

- Todorov, V.T.; Rakov, D.; Bardenhagen, A. Creation of innovative concepts in Aerospace based on the Morphological Approach. IOP Conf. Ser. Mater. Sci. Eng. 2022, 1226, 012029.

- Bardenhagen, A.; Rakov, D. Analysis and Synthesis of Aircraft Configurations during Conceptual Design Using an Advanced Morphological Approach; Deutsche Gesellschaft für Luft- und Raumfahrt—Lilienthal-Oberth e.V.: Bonn, Germany, 2019.

- Todorov, V.T.; Rakov, D.; Bardenhagen, A. Enhancement Opportunities for Conceptual Design in Aerospace Based on the Advanced Morphological Approach. Aerospace 2022, 9, 78.

- Zwicky, F. Discovery, Invention, Research—Through the Morphological Approach; The Macmillan Company: Toronto, ON, Canada, 1969.

- Rakov, D. Morphological synthesis method of search for promising technical system. IEEE Aerosp. Electron. Syst. Mag. 1996, 11, 3–8.

- Ritchey, T. General morphological analysis as a basic scientific modelling method. Technol. Forecast. Soc. Chang. 2018, 126, 81–91.

- Frank, C.P.; Marlier, R.A.; Pinon-Fischer, O.J.; Mavris, D.N. Evolutionary multi-objective multi-architecture design space exploration methodology. Optim. Eng. 2018, 19, 359–381.

- Villeneuve, F. A Method for Concept and Technology Exploration of Aerospace Architectures. Ph.D. Thesis, Georgia Institute of Technology, Atlanta, GA, USA, 2007.

- Mavris, D.N.; Kirby, M.R. Technology Identification, Evaluation, and Selection for Commercial Transport Aircraft; Georgia Tech Library: Atlanta, GA, USA, 1999.

- Ölvander, J.; Lundén, B.; Gavel, H. A computerized optimization framework for the morphological matrix applied to aircraft conceptual design. Comput.-Aided Des. 2009, 41, 187–196.

- Todorov, V.T.; Rakov, D.; Bardenhagen, A. Improvement and Testing of the Advanced Morphological Approach in the Domain of Conceptual Aircraft Design; Deutsche Gesellschaft für Luft- und Raumfahrt—Lilienthal-Oberth e.V.: Bonn, Germany, 2022.

- Monroe, R.W. A Synthesized Methodology for Eliciting Expert Judgment for Addressing Uncertainty in Decision Analysis. Ph.D. Thesis, Old Dominion University Libraries, Norfolk, VA, USA, 1997.

- Meyer, M.; Booker, J. Eliciting and Analyzing Expert Judgment: A Practical Guide; Technical Report NUREG/CR-5424; LA-11667-MS; OSTI.GOV: Oak Ridge, TN, USA, 1990.