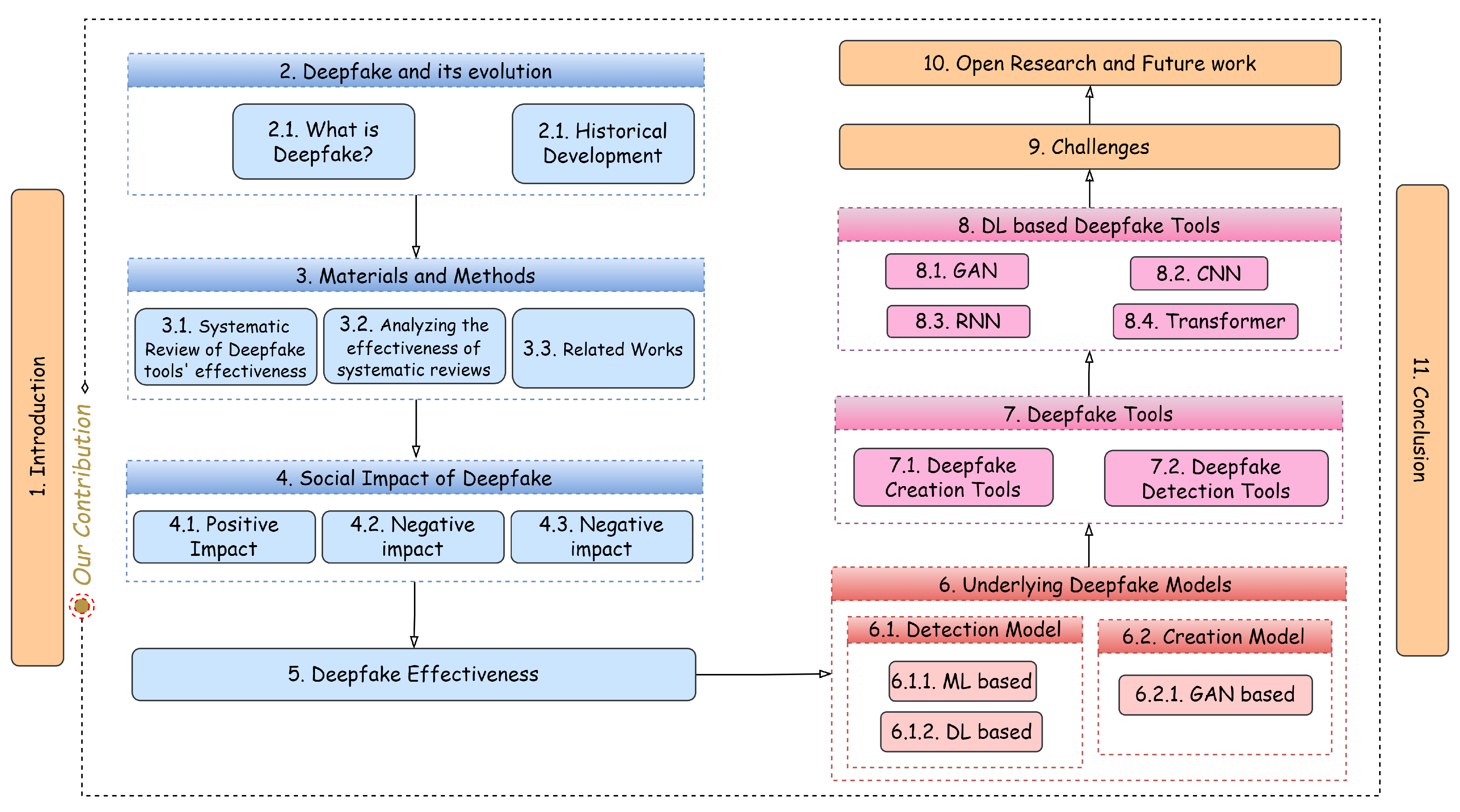

An Investigation of the Effectiveness of Deepfake Models and Tools

Abstract

1. Introduction

- An in-depth, up-to-date review is carried out in the field of deepfake models and deepfake tools.

- Two separate taxonomies are proposed that categorize the existing deepfake models and tools.

- The effectiveness of existing deepfake models and detection tools is compared in terms of underlying algorithms, datasets used, and accuracy.

2. Deepfake and Its Evolution

2.1. What Is Deepfake?

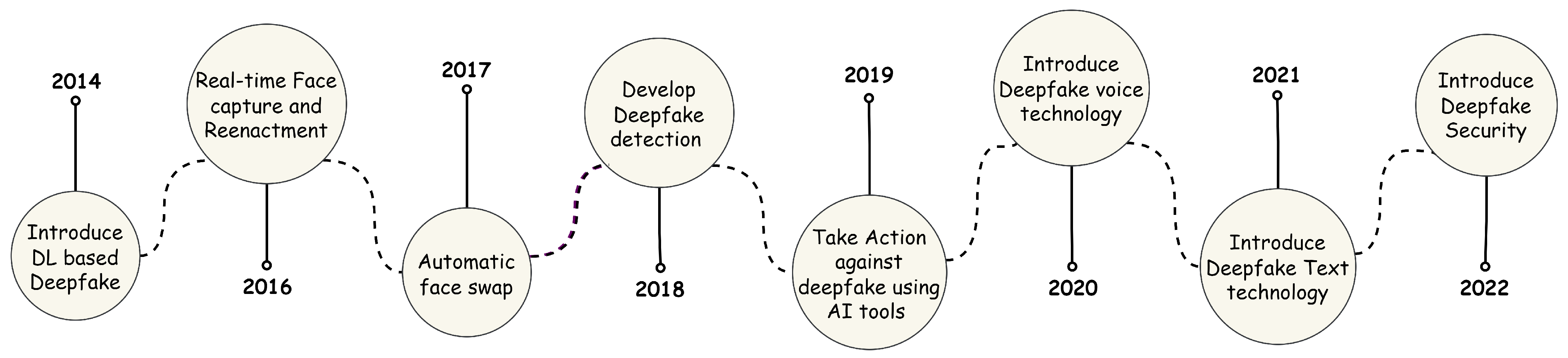

2.2. Historical Development of Deepfake

3. Materials and Methods

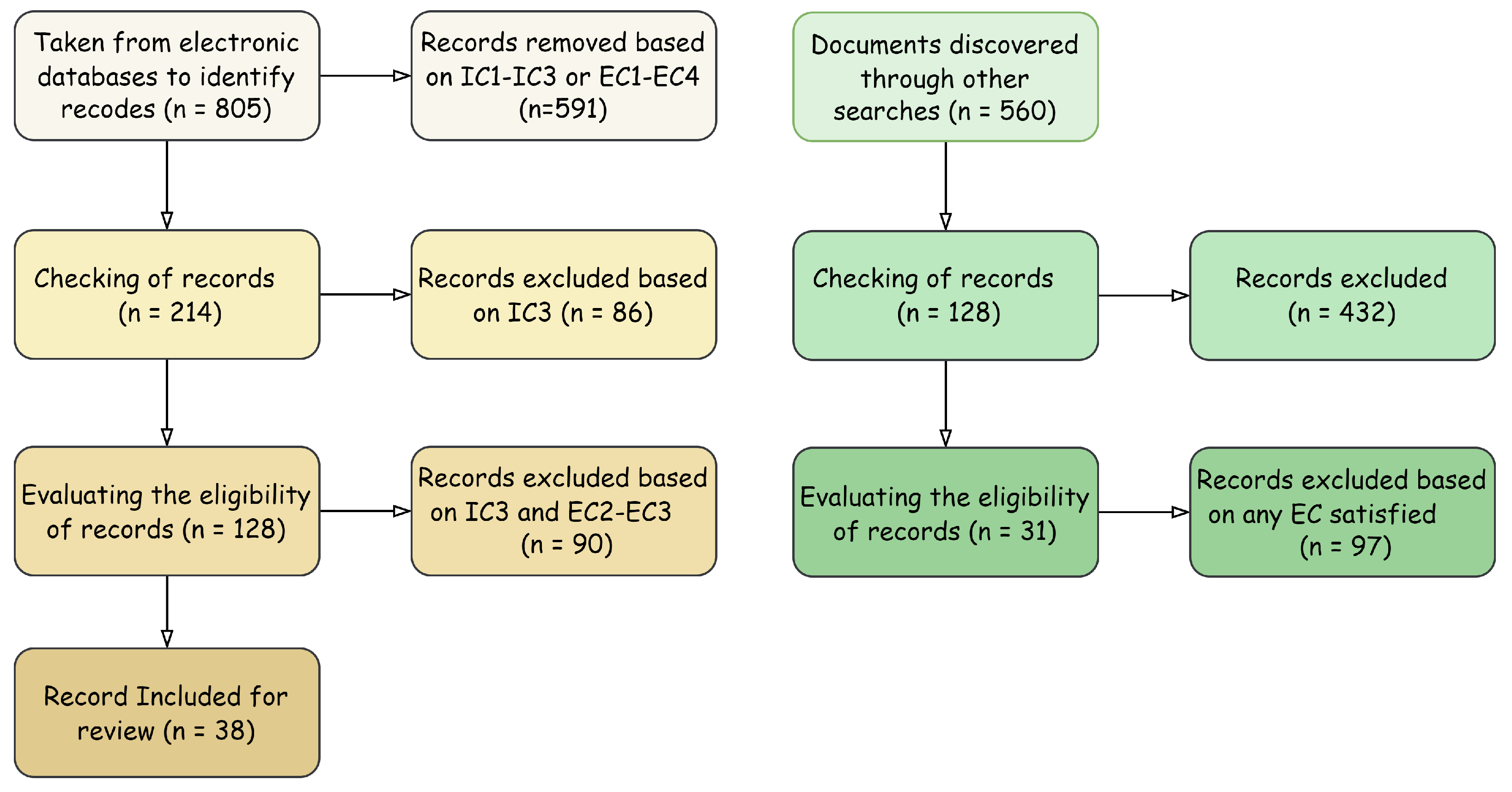

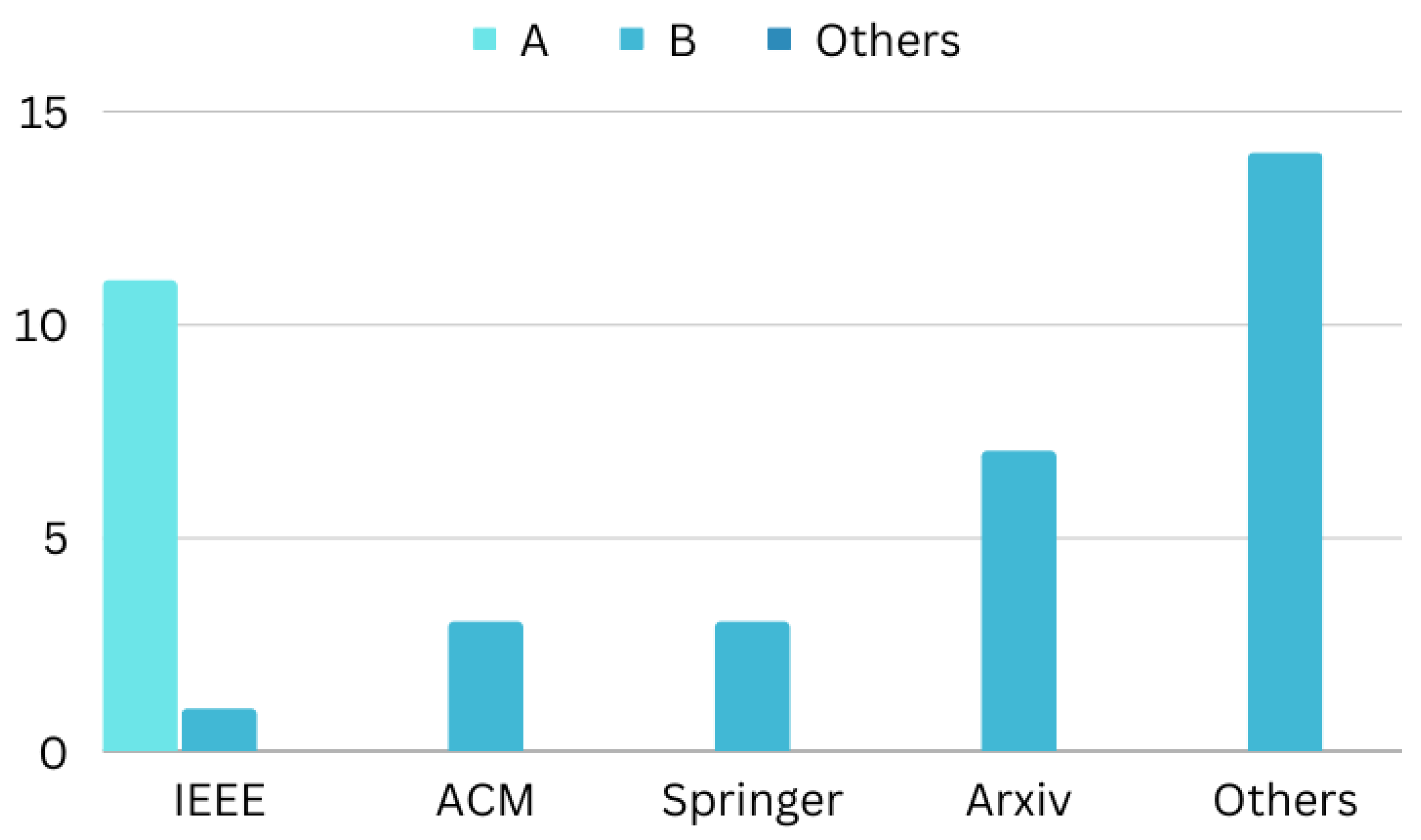

3.1. Systematic Review of Deepfake Tools’ Effectiveness

3.2. Analyzing the Effectiveness of Systematic Reviews

3.3. Related Works

4. Social Impact of Deepfake

4.1. Positive Impact

- Deepfake technology has many advantages for the movie business. For instance, it can be used to update film footage rather than reshoot it, or to create artificial voices for performers who have lost theirs due to illness. Filmmakers can reproduce iconic movie moments and produce new films starring long-dead performers, who are brought back to life in post-production with cutting-edge facial editing and visual effects. Deepfake technology also enables automatic and lifelike voice dubbing for films in any language, enhancing the viewing experience of movies and instructional media for different audiences.

- Deepfake technology enables digital doubles of individuals, realistic-sounding and smart-looking assistants [72], and enhanced telepresence in online games and virtual chat environments [73]. This promotes improved online communication and interpersonal relationships [74,75]. The technology can also be beneficial in the social and medical spheres. By digitally bringing a deceased friend "back to life," deepfakes can help a grieving loved one say goodbye, which can help people deal with the death of someone close to them [76,77]. Additionally, it can be used to digitally replicate an amputee’s leg or to help transgender people better visualize their preferred gender. Deepfake technology even makes it possible to engage with a younger face that the user may remember [76]. Researchers are also investigating the use of GANs to detect anomalies in X-rays [79] and to accelerate the development of new materials and medical treatments [78].

- Businesses are interested in brand-applicable deepfake technology because it could significantly change e-commerce and marketing [75]. For instance, businesses can use virtual models and actors to display fashionable attire on a wide range of models with various heights, weights, and skin colors [80]. Additionally, deepfakes enable highly personalized content that turns customers into models: the technology allows virtual try-on, so that customers can see how an outfit would look on them before buying it, and it can produce targeted fashion advertisements that change with the time, weather, and audience [75,80].

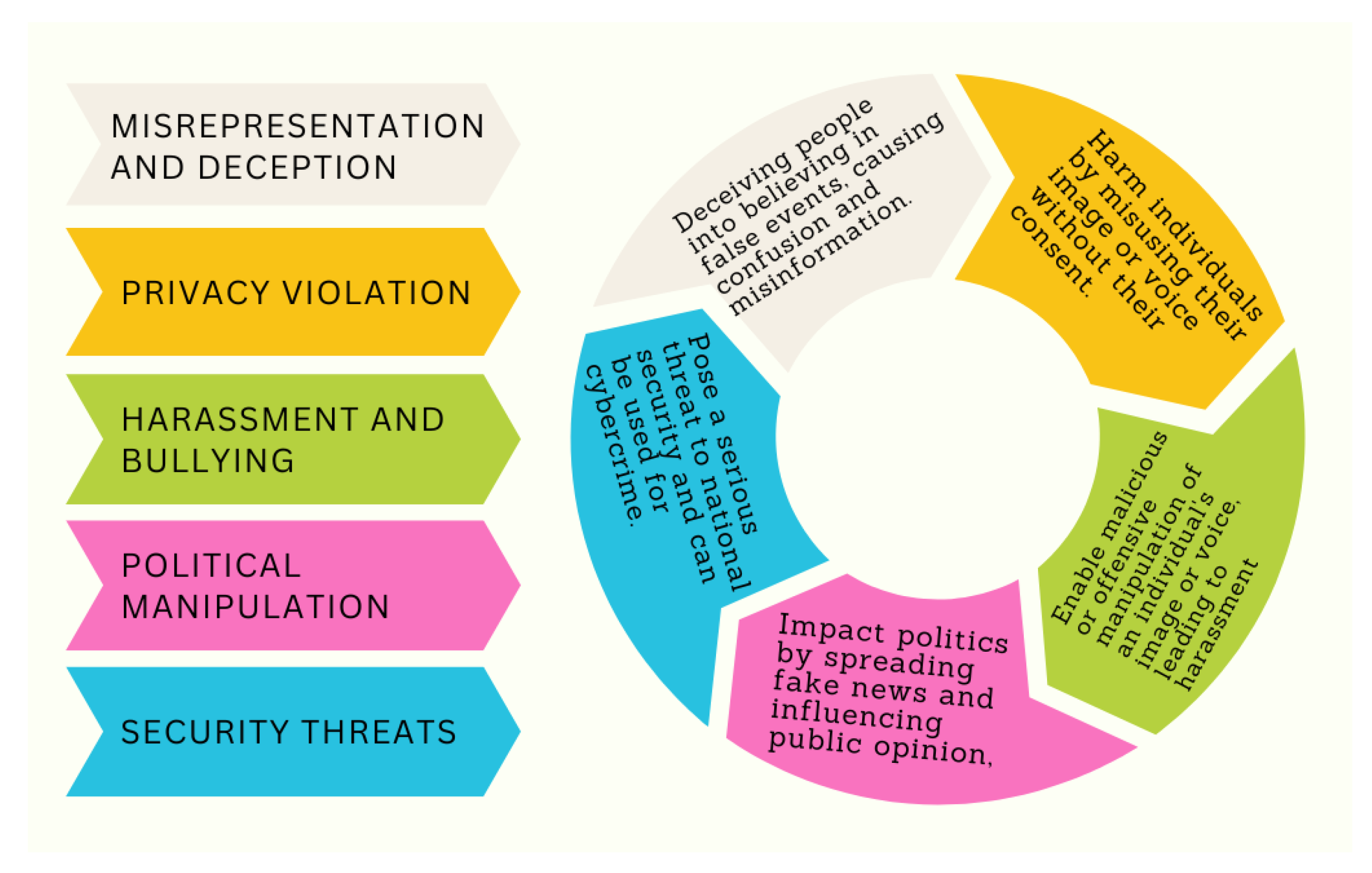

4.2. Negative Impact

4.3. Ethics of Deepfake

- Pornographic deepfakes can be used to threaten or intimidate a person and can cause psychological harm. They subject women to abuse and discrimination, which results in psychological pain, reputational damage, bullying, and in certain situations even loss of money or career. When the person depicted consents to synthetic pornography, the ethical problem is considerably more complicated. Consensual deepfakes could normalize the concept of synthetic pornography, which might heighten concerns about the harmful effects of pornography on emotional and sexual development. Some may argue that this is similar to the ethically acceptable activity of sexual fantasizing.

- Synthetic resurrection is another area of concern. People have the right to determine how their likenesses are used for commercial purposes. The main issue with public figures is who controls their voice and appearance once they pass away. Most of the time, these are used primarily for marketing, propaganda, and financial gain. Deepfakes can be employed to misrepresent political leaders after their deaths in order to further political and legal objectives, which raises moral and ethical issues. Although there are restrictions on using a dead person’s voice or image for profit, relatives who are granted the right to use these attributes may do so for their own commercial advantage.

- Stretching the truth, emphasizing a political platform, and presenting alternative facts are common strategies in politics. They help in organizing, influencing, and persuading individuals in order to gather funds and votes. Although unethical, political opportunism has become the norm. If politicians decide to employ deepfakes and synthetic media, election results could be significantly affected. Deception prevents people from making judgments that are in their own best interest. Voters are manipulated into supporting the deceiver’s agenda when misleading information about the opposing party is purposefully spread or a candidate is presented with a different version of events [85]. There is little legal remedy for these immoral activities. A deepfake that is employed to frighten people into not casting their ballots is also unethical.

5. Deepfake Effectiveness

6. Underlying Deepfake Models

6.1. Detection Models

6.1.1. Conventional ML-Based Deepfake

6.1.2. DL-Based Deepfake

- CNN-based Models

- RNN-based Models

- Transformer-based Models

- Accuracy of deepfake-detection models

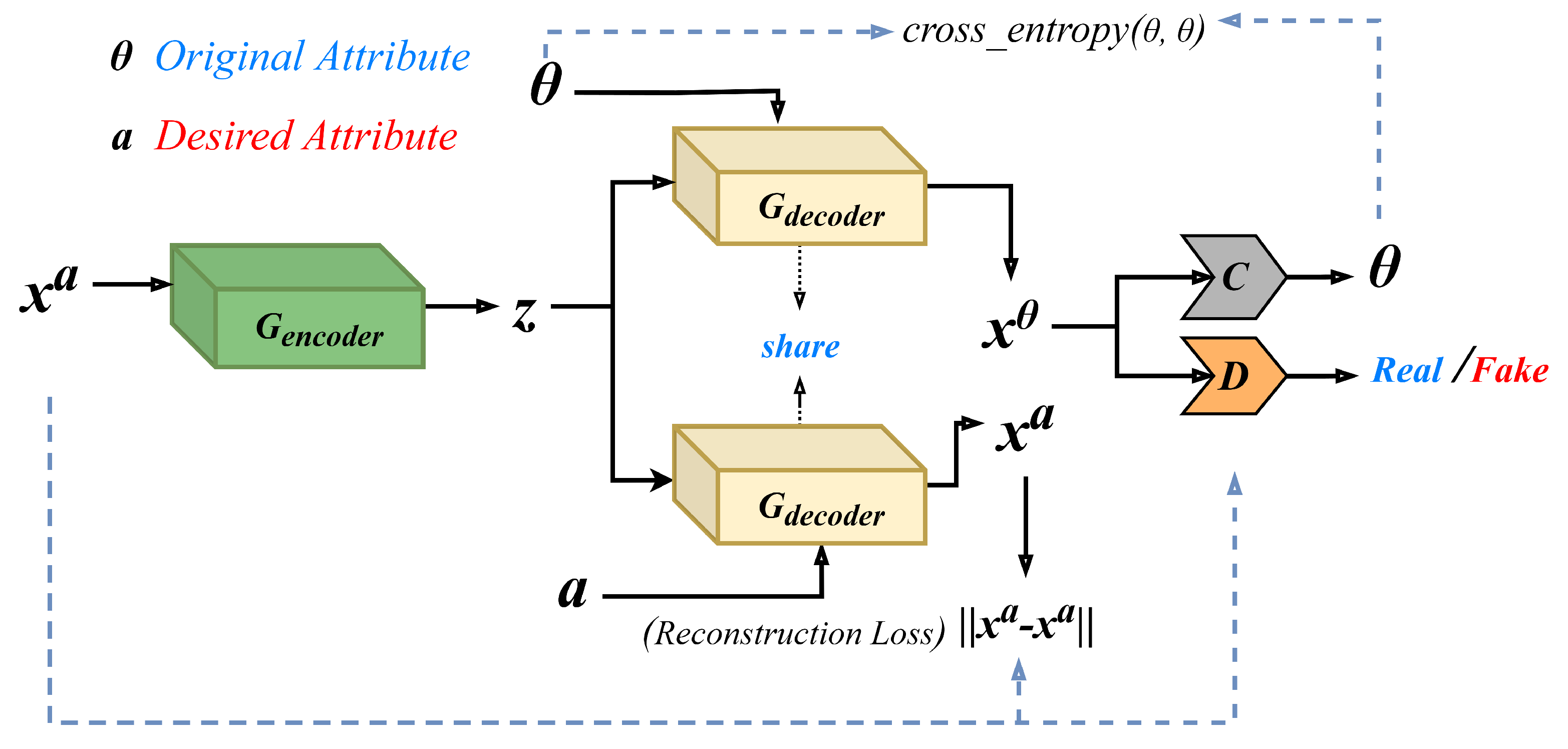

6.2. Creation Models

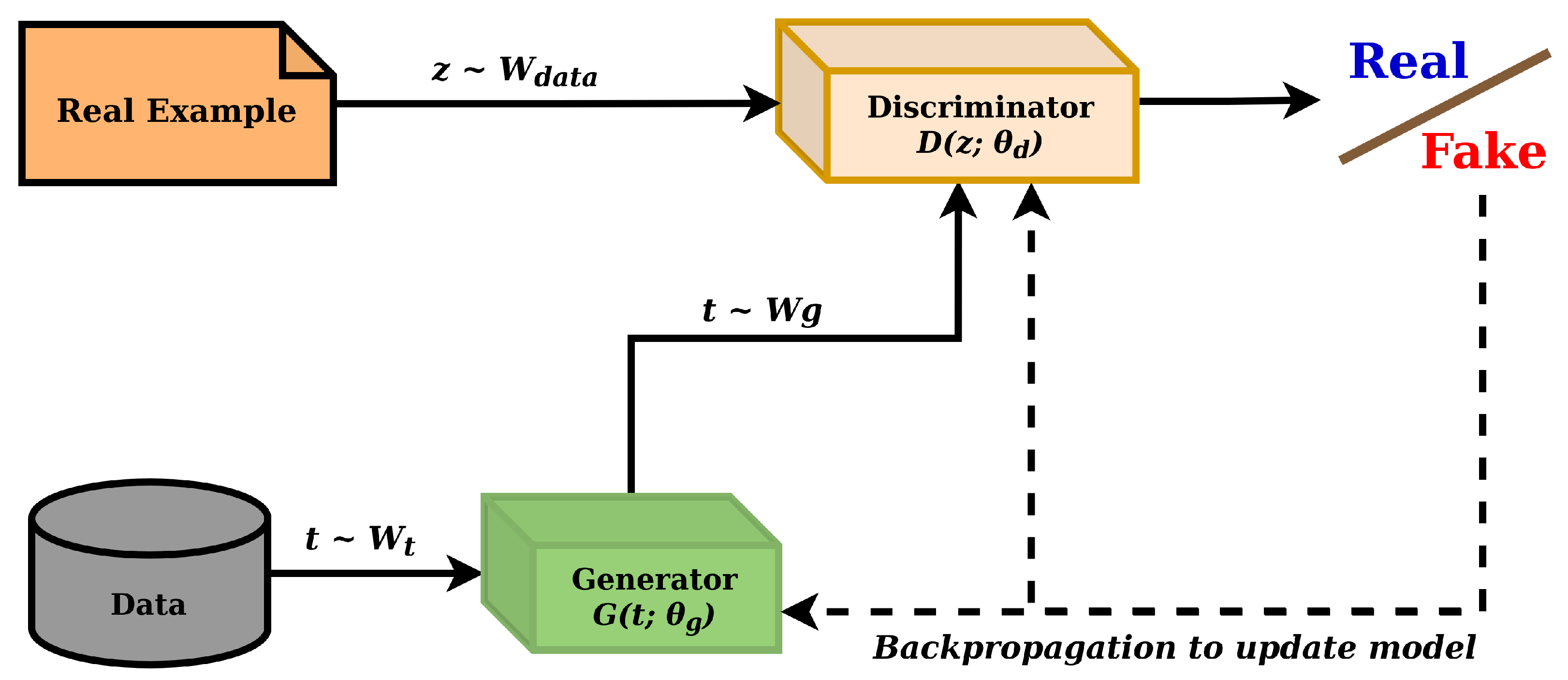

6.2.1. GANs-Based Deepfake

| Paper | Type | Published | DF Tools | DF Detection Model | DF Creation Model | Tool’s Effectiveness: Accuracy | Tool’s Effectiveness: Speed | Tool’s Effectiveness: Usability | DF Detection Tools | DF Creation Tools | Comparison of DF Tools | Scope |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| [67] | Survey | IEEE | × | ✓ | × | × | × | × | × | × | × | Groups 112 articles into 4 categories: deep learning, classical machine learning, statistical, and blockchain techniques. Evaluates detection performance on various datasets. |
| [68] | Survey | IEEE | ✓ | ✓ | ✓ | × | × | × | × | × | × | Explores trends and challenges in deepfake datasets and detection models, as well as challenges in creating and detecting deepfakes. |
| [11] | Survey | Elsevier | ✓ | ✓ | ✓ | × | × | × | × | × | × | Provides an overview of deepfake creation algorithms and detection methods. It also covers the challenges and future directions of deepfake technology. |
| [9] | Survey | ACM | × | ✓ | ✓ | × | × | × | × | × | × | Improves understanding of deepfakes by discussing their creation, detection, trends, limitations of current defenses, and areas requiring further research. |
| [69] | Survey | Springer | ✓ | ✓ | ✓ | × | × | × | × | × | × | Offers a survey of deepfake algorithms and tools, along with discussions on challenges and research trends. |
| [70] | SLR | MDPI | × | ✓ | ✓ | × | × | × | × | × | × | Focuses on recent research on deepfake creation and detection methods, covering tweets, pictures, and videos. It also discusses popular deepfake apps and research in the field. |
| Ours | Systematic review | MDPI | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | Provides a thorough and current evaluation of deepfake models and tools. It proposes two classification systems to categorize these models and tools and compares their effectiveness based on factors such as algorithms, datasets, and accuracy. |

| Category | Model | Dataset | Accuracy |
|---|---|---|---|
| CNN | Xception [54] | FF++ | 99.26% |
| CNN | GoogleNet [48] | Own Dataset | 92.70% |
| CNN | VGG16 [50] | VidTIMIT | 84.60% |
| CNN | VGG19 [49] | FF++ | 92.02% |
| CNN | ResNet50 [50] | VidTIMIT | 99.90% |
| CNN | ResNet101 [50] | VidTIMIT | 87.60% |
| CNN | ResNet152 [50] | VidTIMIT | 99.40% |
| CNN | Y-shaped Autoencoder [51] | FF, FF++ | 93.01% |
| CNN | MesoNet [52] | FF++ | 95.23% |
| CNN | Meso-4 [52] | VidTIMIT | 87.80% |
| CNN | MesoInception-4 [52] | VidTIMIT | 80.40% |
| CNN | DeepRhythm [53] | FF++ | 98.00% |
| CNN | DeepRhythm [53] | DFDC | 64.10% |
| CNN | Convolutional Attention Network (CAN) [55] | Celeb-DF | 99.90% |
| CNN | Convolutional Attention Network (CAN) [55] | DFDC | 98.20% |
| CNN | PixelCNN++ [56] | FF | 96.20% |
| CNN | EfficientNet [57] | FF++ | 95.10% |
| CNN | 3D CNN [58] | FF++ | 88.57% |
| CNN | FD2Net [59] | FF++ | 99.45% |
| CNN | FD2Net [59] | DFD | 78.65% |
| CNN | FD2Net [59] | DFDC | 66.09% |
| CNN | DFT-MF [60] | Celeb-DF | 71.25% |
| CNN | DFT-MF [60] | VidTIMIT | 98.70% |
| RNN | BiLSTM [94] | FF++ | 99.34% |
| RNN | FacenetLSTM [62] | FF++ | 97.00% |
| RNN | Neural-ODE [95] | COHFACE | 99.01% |
| RNN | Neural-ODE [95] | VidTIMIT | 99.02% |
| MTCNN+RNN | CLRNet [61] | FF++ | 96.00% |
| Transformer | Transformer-based model (EfficientNet+ViT) [57] | FF++ | 95.10% |
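Several detectors in the table above are reported on more than one dataset, and their accuracy can differ sharply between datasets. The following minimal Python sketch, which uses only the accuracies listed in the table, computes the spread per model. The function and variable names are illustrative and do not come from any of the cited works.

```python
from collections import defaultdict

# (model, dataset, accuracy in %) for models the table reports on several datasets.
REPORTED = [
    ("DeepRhythm", "FF++", 98.00),
    ("DeepRhythm", "DFDC", 64.10),
    ("CAN", "Celeb-DF", 99.90),
    ("CAN", "DFDC", 98.20),
    ("FD2Net", "FF++", 99.45),
    ("FD2Net", "DFD", 78.65),
    ("FD2Net", "DFDC", 66.09),
    ("DFT-MF", "Celeb-DF", 71.25),
    ("DFT-MF", "VidTIMIT", 98.70),
    ("Neural-ODE", "COHFACE", 99.01),
    ("Neural-ODE", "VidTIMIT", 99.02),
]


def accuracy_spread(rows):
    """Return {model: (best, worst, gap)}, with the gap in percentage points."""
    by_model = defaultdict(list)
    for model, _dataset, acc in rows:
        by_model[model].append(acc)
    return {
        model: (max(accs), min(accs), round(max(accs) - min(accs), 2))
        for model, accs in by_model.items()
    }


if __name__ == "__main__":
    # Print models ordered by the largest gap between datasets first.
    ranked = sorted(accuracy_spread(REPORTED).items(), key=lambda kv: -kv[1][2])
    for model, (best, worst, gap) in ranked:
        print(f"{model:12s} best={best:6.2f} worst={worst:6.2f} gap={gap:5.2f}")
```

Because the figures come from different papers with different evaluation protocols, the gaps indicate how much reported accuracy depends on the dataset rather than providing a controlled comparison.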

| GAN Model | Process of the Model | Application Area | Limitation | Used in Deepfake |
|---|---|---|---|---|
| StarGAN [33] | Uses a single model for image-to-image translation across several domains. | Image-to-image translation (e.g., facial attributes, facial expressions). | StarGAN tries to manipulate the age of the source images and is unable to generate facial expressions when an incorrect mask vector is used. | [103,104,105,106] |
| StyleGAN [34] | Sends semantic information to a target domain with a distinctive style from the source domain. | Creates highly realistic, high-resolution images of people’s faces. | Low-density regions of the training data distribution are underrepresented, making it more challenging for the generator to learn in those areas. | [106,107,108,109,110,111,112,113] |
| AttGAN [35] | Transfers facial attributes under an attribute classification constraint. | Attribute intensity control, attribute style manipulation. | Cannot manipulate style attribute. | [105,111,114] |
| CycleGAN [36] | Converts an image from one domain to another when no paired examples are available. | Style transfer, object transfiguration, season transfer, photo enhancement. | Many results are blurry and do not retain the clarity of the input, and they fail to preserve the identity of the input. | [115,116,117] |
| GDWCT [37] | Increases the capacity for styling. | Specially designed for image translations. | It makes mistakes while classifying gender. | [37,105,111] |
| Ic-GAN [38] | Maps a real image into a latent space and a conditional representation. | Reconstructs and alters real-world face images based on arbitrary attributes. | By producing photographs of men, the self-identity in the picture is not preserved. | [38] |
| VAE/GAN [39] | While translating an image, replaces element-wise errors with feature-wise errors to capture the data distribution more effectively. | Applied to face images to identify element-wise matching. | The relationship between the latent representation and the characteristics cannot be modeled. | [39,118] |
| FS-GAN [31] | Face swapping and reenactment. | Adjusts for changes in pose and expression in an image or a video clip. | The texture becomes blurred when the face reenactment generator is applied too many times, and the sparse landmark tracking technique fails to capture the complexity of facial expressions. | [31,110,119] |
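For reference, the models in the table above build on the standard GAN formulation, in which a generator $G$ and a discriminator $D$ play a minimax game. The objective below is the commonly cited form and is not taken from this review; the symbols $p_{\text{data}}$ (data distribution) and $p_z$ (noise prior) are notation introduced here.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

CycleGAN, which the table lists for translation without paired examples, adds a cycle-consistency term for its two mappings $G: X \to Y$ and $F: Y \to X$, so that translating an image to the other domain and back approximately recovers the input:

$$
\mathcal{L}_{\text{cyc}}(G, F) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\lVert F(G(x)) - x \rVert_1\big] + \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\lVert G(F(y)) - y \rVert_1\big]
$$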

6.2.2. Summary on GAN

7. Deepfake Tools

7.1. Deepfake Creation Tools

Comparative Analysis of Deepfake Creation Tools

- Computational efficiency

- Robustness against adversarial attack

- Scalability and usability

- Advantages and Limitations

7.2. Deepfake Detection Tools

- Sensity AI. This is deepfake-detection software that uses machine learning and artificial intelligence methods to spot altered material. It is known for finding deepfakes quickly and precisely, even in huge datasets. To stop the spread of deepfakes, it has been used by a number of groups, including social networking sites and law enforcement organizations. Sensity AI is designed to scan vast volumes of data rapidly and has demonstrated high accuracy, with reported scores of up to 95%. The tool’s easy-to-use layout makes it accessible to both technical and lay users, and it is scalable and adaptable to various sectors and user needs. In conclusion, Sensity AI is a useful tool for recognizing and mitigating the risks of deepfakes.

- Truepic. This is a platform that provides services to verify the authenticity of photos and videos and to detect any manipulation or tampering in the media. It offers various features, including cryptographic techniques to verify authenticity, advanced forensic analysis capabilities, real-time verification, integration with different platforms, a user-friendly interface, and high accuracy rates in detecting manipulated media. Truepic can verify media from various sources and formats, ensuring wide coverage. Additionally, it provides a transparent and auditable trail of the verification process for reliable validation of the media’s authenticity.

- D-ID. This is a tool that utilizes advanced algorithms to protect users’ privacy by transforming photos and videos in a way that prevents facial recognition systems from identifying the individual in the media. The tool is effective in achieving this goal, with high accuracy rates in facial anonymization. It is easy to integrate into various applications, works quickly, and is compatible with various platforms such as iOS, Android, and web applications. Additionally, D-ID’s algorithm is robust against attacks by adversarial machine learning techniques, ensuring that anonymization remains effective even in the face of such attacks.

- Amber Video. This is a deepfake-detection tool that is known for its high accuracy in detecting deepfakes, particularly those created using generative models. The tool’s effectiveness parameters include the use of multiple levels of detection, continuous learning to adapt to emerging threats, real-time detection with scalability, a cloud-based solution for easy deployment and integration, and customization options for detection rules and thresholds to meet specific needs.

- Deeptrace. This is a tool for detecting deepfake videos that uses machine learning algorithms. The tool is effective in detecting deepfakes due to its high accuracy rate, which is achieved through the use of state-of-the-art machine learning algorithms. It can detect deepfakes in real-time, making it useful for identifying fake videos as they are being shared. Deeptrace uses a combination of audio-, visual-, and text-based analysis to detect deepfakes, which makes it more comprehensive than other tools. The tool is scalable and can analyze large datasets, meeting the needs of different organizations. Additionally, Deeptrace continuously learns and adapts to new deepfake techniques, ensuring that it can effectively detect the latest types of deepfakes.

- FooSpidy’s Fake Finder. This is a deepfake-detection tool that uses image forensics and deep neural networks to identify manipulations in images and videos. Its effectiveness parameters include high accuracy, real-time detection, a user-friendly interface, and customizable settings. The tool has an accuracy rate of over 90% and allows users to quickly upload and scan media files for deepfakes. Additionally, users can customize detection settings to enhance accuracy and precision in identifying deepfakes.

- DeepSecure.ai. This is a deepfake-detection tool that uses a unique approach of analyzing the semantic content of videos to detect deepfakes. Some of its effectiveness parameters include high accuracy, real-time speed, scalability, ease of use, and versatility. It has a reported accuracy rate of 96% in detecting deepfakes and can handle large volumes of videos. Additionally, it can detect a wide range of deepfake techniques, including face-swapping and voice cloning, among others. The tool is user-friendly and can be easily integrated into existing workflows, making it accessible to a wide range of users.

- HooYu. This is a platform for digital identity verification that offers fraud protection, customer onboarding, and identity authentication solutions. To ensure high accuracy, the platform’s verification technology makes use of a variety of verification methods and data sources. HooYu’s automatic verification procedure is rapid and effective, allowing companies to onboard clients quickly. The platform is built to provide clients with a seamless and user-friendly experience while complying with regulatory requirements. It is adaptable and can be tailored to the requirements of different businesses, including e-commerce and financial services.

- iProov. This is a tool designed to detect deepfakes by using a proprietary technology called Flashmark to identify any signs of manipulation in facial biometric data. This tool’s effectiveness lies in its ability to accurately detect even the most sophisticated deepfake attempts, providing real-time verification of users’ faces, and it has a user-friendly interface. It is platform-agnostic, working seamlessly across iOS, Android, and web browsers, with a high level of trust from various government agencies that use it to verify identities and prevent fraud.

- Blackbird.AI. This is a tool designed for deepfake detection that combines machine learning algorithms with human intelligence to identify and classify manipulated media. Its effectiveness parameters include a high detection accuracy of 98%, advanced machine learning algorithms to detect signs of manipulation, and real-time monitoring of various online sources. Blackbird.AI also uses a team of trained analysts to review flagged videos and confirm their authenticity. Users can customize their settings to meet their specific needs, such as setting the threshold for deepfake detection or excluding certain sources from monitoring. Overall, Blackbird.AI is a reliable and effective solution to combat the proliferation of manipulated media online.

- Cogito. This is an AI-based behavioral analytics platform that uses machine learning algorithms to identify and prevent deepfakes in real time. Its effectiveness parameters include high accuracy and precision in detecting even the most advanced deepfakes, real-time detection capabilities for immediate flagging and minimization of potential harm, user-friendly and seamless integration into business workflows, customization of deepfake detection protocols, and scalability to address evolving threats and challenges. Overall, Cogito’s platform is an effective and adaptable solution for businesses seeking to combat the spread of manipulated media online.

- Veracity.ai. This is a deepfake-detection tool that uses ML- and AI-based algorithms to accurately pinpoint media that has been altered. The program is especially helpful for live video streams because it can identify deepfakes in real time. To provide thorough coverage, it can also identify deepfakes across a variety of modalities, including video, audio, and pictures. Veracity.ai is simple to use and does not require any technical knowledge. To maintain its effectiveness, it is also regularly updated with the most recent deepfake-detection methods.

- XRVision Sentinel. This is a deep learning tool that analyzes the structure and composition of facial photos and videos to detect those that have been altered. It is capable of spotting deepfakes in a variety of contexts, including political campaigns, news media, and social media. The tool uses advanced machine learning techniques to identify minute variations in facial expressions, lip movements, and eye movements. Additionally, it can recognize deepfakes created using a variety of methods, such as GAN-based models and facial reenactment techniques. Testing of XRVision Sentinel on datasets such as the Deepfake Detection Challenge dataset showed that it had a high degree of accuracy in identifying deepfakes and a low proportion of false positives.

- Amber Authenticate. This is a tool that utilizes cryptographic techniques to validate the authenticity of image and video content and to prevent the spread of deepfakes. The tool has been shown to be highly accurate and efficient in detecting deepfakes in real time. It is compatible with various media file formats and has a user-friendly interface, making it easy for users of all technical levels to operate. Overall, Amber Authenticate is a versatile and reliable tool for detecting deepfakes across various platforms and applications.

- FaceForensics++. This is a deepfake-detection program that focuses on identifying face swaps in videos. It is highly effective at identifying deepfakes by studying minute facial movements and can recognize deepfakes produced using a variety of techniques. FaceForensics++ features an easy-to-use interface that requires little technical knowledge, is open-source, and may be freely used and modified by researchers. Finally, it is a scalable tool that can be incorporated into real-time deepfake-detection systems because it can effectively analyze large datasets of videos.

- FakeSpot. This is an AI-powered web-based tool that can identify fake reviews on e-commerce websites with an accuracy rate of 90%. It can analyze thousands of reviews in seconds and is easy to use, even for non-technical users. FakeSpot also offers a browser extension that can be installed on Chrome, Firefox, and Safari, making it more accessible to users. Additionally, it is compatible with multiple e-commerce platforms including Amazon, Yelp, TripAdvisor, and Walmart, making it a versatile tool for detecting fake reviews across various websites.
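Several of the tools above are described in terms of accuracy, false positives, and user-adjustable detection thresholds. The following minimal Python sketch shows how these quantities relate for any detector that outputs a fake-probability per clip. The scores, labels, and function names are made up for illustration and do not describe the behavior or API of any listed product.

```python
def confusion_counts(scores, labels, threshold):
    """Count (TP, FP, TN, FN) when a score >= threshold is flagged as fake.

    scores: fake-probabilities in [0, 1]; labels: 1 = fake, 0 = real.
    """
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        flagged = score >= threshold
        if flagged and label == 1:
            tp += 1
        elif flagged and label == 0:
            fp += 1
        elif not flagged and label == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def detection_metrics(scores, labels, threshold=0.5):
    """Return accuracy, false-positive rate, and recall at the given threshold."""
    tp, fp, tn, fn = confusion_counts(scores, labels, threshold)
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


if __name__ == "__main__":
    # Hypothetical scores for eight clips (four fake, four real).
    scores = [0.95, 0.80, 0.65, 0.40, 0.55, 0.30, 0.20, 0.05]
    labels = [1, 1, 1, 1, 0, 0, 0, 0]
    for threshold in (0.5, 0.6):
        print(threshold, detection_metrics(scores, labels, threshold))
```

On this toy data, raising the threshold from 0.5 to 0.6 removes the single false positive without losing any detected fakes. On real data, a higher threshold usually trades missed fakes for fewer false alarms, which is why a single headline accuracy figure is hard to compare across tools.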

Comparative Analysis of Deepfake Detection Tools

- Computational Efficiency

- Scalability

- Robustness against Adversarial Attacks

- Usability

- Advantages and Limitations

8. DL-Based Deepfake Tools

8.1. CNN-Based Tools

- Face2Face [2,141] performs accurate real-time facial expression transfer from a source video to a target video. It demonstrates live manipulation of a target YouTube video using a source video stream captured with a webcam. Additionally, it is compared with state-of-the-art reenactment techniques and outperforms them in terms of final video quality and run time.

- FaceSwap [3] carries out face swapping using image blending, Gauss–Newton optimization, and deep neural network-based face alignment. The algorithm first detects the face and its landmarks in a given input image. A 3D model is then fitted to the landmarks, and its vertices are projected into image space and used as texture coordinates.

- Deepfake Faceswap [142] is a face-swapping platform that consists of a set of encoder–decoder-based deep learning models. The goal of its developers is to reduce its potential for abuse while enhancing its usefulness as a tool for research, experimentation, and legitimate face swapping.

- FaceSwap_Nirkin [143,144] is an automatic face-swapping tool. It demonstrates that, rather than designing algorithms specifically for face segmentation, a standard fully convolutional network (FCN) can perform remarkably fast and accurate segmentation if it is trained on a sufficiently large and rich set of examples. It uses the resulting segmentations to handle faces under unusual conditions, to fit 3D face shapes, and to assess the impact of intra-subject and inter-subject face swapping on recognition. It gives a face-swapping accuracy of around 98.12% on the COFW dataset [145].

- Deepware Scanner [6] is a deepfake-detection tool that reports results on a variety of deepfake datasets, including natural deepfakes and real videos. It uses an EfficientNet-B7 [57,146] model pre-trained on the ImageNet dataset, and the classifier is trained using only Facebook’s DFDC [110] dataset, which contains 120k videos. The model is tuned for production use, with an emphasis on fewer false positives. It is a frame-based classifier, which means it does not take temporal coherence into account. Because video is a temporal medium, this is a significant shortcoming that must be addressed.

- DFace [7,147] is a face-detection and recognition toolkit that focuses on efficiency and usability. It is a trimmed-down version of Timesler’s FaceNet [148] repository (built on Inception ResNet (V1) models pretrained on VGGFace2 and CASIA-Webface) with certain enhancements, most importantly regarding memory overflows. FaceNet is used to create facial embeddings, and MTCNN [149] is used to detect faces.

- MesoNet [8] is a compact network for detecting facial forgery in videos. The authors of [52] present a method for automatically detecting tampered faces in video recordings, focusing on Deepfake and Face2Face, two recent techniques used to produce highly realistic forged clips. Classical image forensics approaches are usually not well suited to videos because compression strongly degrades the data. The method therefore follows a deep learning approach and builds two networks, each with a small number of layers, that concentrate on the mesoscopic properties of images. These fast networks were evaluated on both an existing dataset and a new dataset compiled from online videos. The tests show a detection rate of over 98% for Deepfake and 95% for Face2Face.

- OpenPose [150] is the first real-time multi-person system to jointly detect human body, hand, facial, and foot keypoints (135 keypoints in total) on single images. Zhe Cao et al. [151] present a real-time method for detecting the 2D poses of multiple people in an image. The method uses a nonparametric representation, known as part affinity fields (PAFs), to learn to associate body parts with the individuals in the image. Regardless of how many people are in the image, this bottom-up approach delivers high accuracy and real-time performance. In earlier work, PAFs and body part location estimates were refined simultaneously across training stages. Here, refining only the PAFs, rather than both the PAFs and the body part locations, leads to a significant improvement in both accuracy and runtime. The work also presents the first combined body and foot keypoint detector, based on an internal annotated foot dataset. Finally, it trains a multi-stage CNN model, with a reported deepfake-detection accuracy of about 84.9%, using data from the COCO 2016 keypoints challenge [152] and the MPII human multi-person [153] datasets.

- DeepFakesON-Phys [154] is a framework that uses physiological measurement to detect deepfakes. Specifically, it considers heart-rate information obtained through remote photoplethysmography (rPPG). To identify fake videos more effectively, DeepFakesON-Phys employs a convolutional attention network (CAN) [55], which extracts both spatial and temporal information from video sequences. It has been systematically evaluated on two recent public datasets, Celeb-DF and DFDC. The results, approximately 98% AUC on both datasets, surpass the state of the art and demonstrate the effectiveness of physiologically based detectors for spotting the latest deepfake videos.

- EfficientNet_ViT [57,155] combines EfficientNet and a Vision Transformer to detect deepfake videos. In contrast to state-of-the-art techniques, the method uses neither distillation nor ensemble methods. In addition, it provides a simple voting-based inference scheme for handling multiple faces in a single video frame. On the DFDC dataset, the best model achieved an accuracy of 95.10%.

- DeepFaceLab [25,156] is the most popular program for making deepfakes. More than 95% of deepfake videos are made with DeepFaceLab (DFL), and it is used by well-known YouTube and TikTok channels (e.g., deeptomcruise [157], arnoldschwarzneggar [158], diepnep [159], deepcaprio [160], VFXChris Ume [161], Sham00k [162], NextFace [163], Deepfaker [164], Deepfakes in movie [165], DeepfakeCreator [166], Jarkan [167]). The tool can swap faces, de-age faces, replace heads, and even manipulate politicians’ lips. In DFL, S3FD [168] is used to detect faces, 2DFAN [169] and PRNet [170] are used to align faces, and a fine-grained face-segmentation network (TernausNet [171]) is used to segment faces. The DFL model is trained on the FF++ dataset and attains a deepfake-detection accuracy of around 99%, which is better than Face2Face, FaceSwap, and deepfake.

- Deepfakesweb [173] is a cloud-based deepfake app. The user only needs to upload clips and photos and then press a button; the app handles everything else. The app allows a trained model to be reused, so users can create new videos or improve the face-swapping quality of a result without training a model again. The quality and duration of the source videos determine how good a deepfake is.
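Two of the descriptions above touch on how per-frame predictions become a verdict for a whole video: a frame-based classifier scores each frame independently, and voting can be used when a frame contains several faces. The following minimal Python sketch shows one simple way to combine the two. The averaging and majority-vote rules, the threshold, and all names are assumptions made for illustration and are not the schemes used by Deepware Scanner or EfficientNet_ViT.

```python
from statistics import mean


def frame_score(face_scores):
    """Score one frame as the mean fake-probability over its detected faces.

    Returns None when no face was detected in the frame.
    """
    return mean(face_scores) if face_scores else None


def video_verdict(frames, threshold=0.5):
    """Majority vote over all frames that contain at least one face.

    frames: list of lists; each inner list holds the fake-probabilities of
    the faces detected in that frame. Returns (label, fraction_fake_frames).
    """
    scored = [s for s in (frame_score(f) for f in frames) if s is not None]
    if not scored:
        return "no-face", 0.0
    fake_votes = sum(1 for s in scored if s >= threshold)
    fraction = fake_votes / len(scored)
    return ("fake" if fraction > 0.5 else "real"), fraction


if __name__ == "__main__":
    # Hypothetical per-face scores for five frames; the fourth has no face.
    frames = [[0.9], [0.8, 0.4], [0.1], [], [0.7]]
    print(video_verdict(frames))
```

This sketch still treats frames as independent, so it inherits the limitation noted for frame-based classifiers: it cannot exploit temporal inconsistencies between consecutive frames.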

8.2. GAN-Based Tools

- FaceShifter [29,124] is a two-stage face-swapping technique for high-fidelity, occlusion-aware face swapping. It is capable of producing high-quality, identity-preserving face-swapping results. In the first stage, an adaptive embedding integration network (AEI-Net) produces a high-quality swapped face by integrating information adaptively. In the second stage, in contrast to earlier techniques, it handles difficult facial occlusions with a heuristic error acknowledging refinement network (HEAR-Net). AEI-Net is trained on the CelebA-HQ [174] and FFHQ [34] datasets. HEAR-Net, on the other hand, makes use of the faces’ upper half. It gives a fake classification accuracy of around 97.38%.

- SimSwap [30,123] is a highly effective face-swapping tool. To help the system implicitly preserve facial attributes, SimSwap introduces the Weak Feature Matching Loss. According to experimental findings, it preserves attributes better than earlier state-of-the-art techniques. It employs a GAN-based model that is trained on the VGGFace2 [175] and FF++ [54] datasets. The generator consists of three components: an encoder, an ID Injection Module (IIM), and a decoder. It produces deepfakes with a 96.57% accuracy rate.

- FaceSwap-GAN [31,122] is one of the GAN-based deepfake-detection techniques. It can produce accurate and reliable eye motions, and it produces videos with better face orientation and improved quality. With a deepfake-detection accuracy of over 99%, it uses the Segmentation CNN + Recurrent Reenactment Generator model and is trained on the FF++ [54] dataset.

- DiscoFaceGAN [32,125] is a technique for producing synthetic faces of people using disentangled, precisely controllable latent representations of their identity, expression, pose, and lighting. Adversarial learning is used to incorporate three-dimensional priors, and the network is trained to mimic the image formation of an analytic three-dimensional face deformation and rendering process.

- FaceApp [126] is a mobile application that enables users to make customized deepfakes. It is a deep-learning-based tool that uses CycleGAN as its model and produces highly accurate results, creating remarkably realistic facial changes in pictures. Using a mobile phone, it is possible to alter the face, hairstyle, age, gender, and other characteristics.

9. Challenges

- The first challenge concerns the completeness of the body of research reviewed. We compiled the related papers from various conferences, journals, websites, and the archives of numerous e-libraries. There is still a chance that our database of studies lacks some relevant papers. Additionally, we might have made a few errors while categorizing these studies using our selection and rejection criteria. To reduce such inaccuracies, we double-checked our evaluation of the papers in our collection.

- Detection algorithms frequently show a performance decline on low-quality videos compared with high-resolution videos. In addition to compression, videos may undergo operations such as resizing and rotation. Flexibility, that is, robustness to such transformations, is therefore a crucial quality that must be taken into account when constructing detection algorithms.

- Time consumption becomes highly significant in real-world settings. Deepfake-detection techniques are expected to be broadly applied to media services in the near future to minimize the harm that deepfake videos cause to social security. However, because of their extensive time requirements, existing detection techniques are still far from being widely used in real-world situations.

- Deepfake models are frequently trained on a specific collection of datasets. When we wish to create deepfake videos of a particular character, the model cannot produce an accurate output if there is not enough data for that character, and finding sufficient data for a single character can be challenging. It also takes time to retrain the model for each distinct target.

- The majority of datasets are developed under highly favorable conditions (such as ideal lighting, flawless facial expressions, and high-quality photographs or videos), but the data supplied in the testing phase often do not match this quality. This makes dataset quality one of the challenging areas.
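The robustness challenge listed above can be checked with a small evaluation harness that compares a detector's accuracy before and after a degradation. The sketch below is illustrative only: `downsample` is a crude stand-in for quality loss, grayscale frames are assumed to be 2D arrays, and `toy_detector` is a hypothetical placeholder rather than any method surveyed here.

```python
import numpy as np

def downsample(frame, factor):
    """Lower the resolution by averaging factor x factor pixel blocks."""
    h = frame.shape[0] // factor * factor
    w = frame.shape[1] // factor * factor
    blocks = frame[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))

def accuracy_under(transform, detector, frames, labels):
    """Fraction of frames classified correctly after applying `transform`."""
    preds = np.array([detector(transform(f)) for f in frames])
    return float(np.mean(preds == np.array(labels)))

def toy_detector(frame):
    """Hypothetical detector: flags frames with strong pixel-to-pixel changes."""
    return int(np.abs(np.diff(frame, axis=1)).mean() > 0.1)

# Toy data: a smooth "real" frame and a checkerboard "fake" frame.
real = np.zeros((8, 8))
fake = (np.indices((8, 8)).sum(axis=0) % 2).astype(float)
frames, labels = [real, fake], [0, 1]

clean_acc = accuracy_under(lambda f: f, toy_detector, frames, labels)
low_res_acc = accuracy_under(lambda f: downsample(f, 2), toy_detector, frames, labels)
rotated_acc = accuracy_under(np.rot90, toy_detector, frames, labels)
```

In this toy setup the high-frequency cue that the placeholder detector relies on is averaged away by downsampling, so accuracy drops, while rotation leaves it intact. The same harness could wrap a real detector and real compression or resizing operations.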

10. Open Research and Future Work

- Flaws in current face-forensics technology have given rise to anti-forensic techniques. Neural networks are frequently employed in deepfake detection to identify fake videos, but because of inherent weaknesses they are unable to fend off attacks from adversarial samples [176]. Researchers must therefore develop more robust strategies that can withstand both these attacks and threats identified in the future.

- Multitask learning, which involves carrying out several tasks at once, has been shown to improve prediction performance compared with single-task learning. In particular, combining forgery localization with deepfake detection can increase detection accuracy. The model completes the two tasks at once while taking into account the loss incurred by each, which significantly enhances its performance. The authors in [177,178] demonstrate that locating the forgery is crucial to the deepfake-detection task. Thus, there is considerable room for improving deepfake detection using multitask learning.

- One key area on which researchers can focus is improving deepfake photo- and video-generation models so that they can produce realistic deepfake videos from only one or a few photographs or videos. Because the majority of deepfake-creation models are trained on high-quality datasets, users face many challenges in obtaining enough high-quality images or videos to use as the testing or source data.
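The multitask idea discussed above, in which detection and forgery localization are trained jointly, can be sketched as a weighted sum of two losses. This is a minimal illustration under stated assumptions: binary cross-entropy is used for both tasks, and the names `multitask_loss` and `loc_weight` are hypothetical and not taken from [177,178].

```python
import numpy as np

def binary_cross_entropy(pred, target, eps=1e-7):
    """Mean binary cross-entropy between probabilities and 0/1 targets."""
    pred = np.clip(np.asarray(pred, dtype=float), eps, 1.0 - eps)
    target = np.asarray(target, dtype=float)
    return float(np.mean(-(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred))))

def multitask_loss(cls_prob, cls_label, mask_prob, mask_label, loc_weight=1.0):
    """Detection loss plus weighted localization loss.

    cls_prob / cls_label: predicted probability and label that the image is fake.
    mask_prob / mask_label: per-pixel probability and label of being forged.
    loc_weight: assumed trade-off hyperparameter between the two tasks.
    """
    cls_loss = binary_cross_entropy(cls_prob, cls_label)
    loc_loss = binary_cross_entropy(mask_prob, mask_label)
    return cls_loss + loc_weight * loc_loss
```

Setting `loc_weight=0` recovers single-task detection, which makes it easy to compare the two training regimes under otherwise identical conditions.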

11. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Westerlund, M. The emergence of deepfake technology: A review. Technol. Innov. Manag. Rev. 2019, 9, 39–52. [Google Scholar] [CrossRef]

- Thies, J.; Zollhofer, M.; Stamminger, M.; Theobalt, C.; Nießner, M. Face2face: Real-time face capture and reenactment of rgb videos. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 2387–2395. [Google Scholar]

- Kowalski, M. FaceSwap. 2021. Available online: https://github.com/MarekKowalski/FaceSwap (accessed on 6 November 2022).

- Singh, R.; Shrivastava, S.; Jatain, A.; Bajaj, S.B. Deepfake Images, Videos Generation, and Detection Techniques Using Deep Learning. In Machine Intelligence and Smart Systems: Proceedings of MISS 2021; Springer: Berlin/Heidelberg, Germany, 2022; pp. 501–514. [Google Scholar]

- Vaccari, C.; Chadwick, A. Deepfakes and disinformation: Exploring the impact of synthetic political video on deception, uncertainty, and trust in news. Soc. Media Soc. 2020, 6, 2056305120903408. [Google Scholar] [CrossRef]

- Mertyyanik. Deepware Scanner (CLI). 2020. Available online: https://github.com/deepware/deepfake-scanner (accessed on 6 November 2022).

- Dodobyte. dFace. 2019. Available online: https://github.com/deepware/dface (accessed on 6 November 2022).

- DariusAf. MesoNet. 2018. Available online: https://github.com/DariusAf/MesoNet (accessed on 6 November 2022).

- Mirsky, Y.; Lee, W. The creation and detection of deepfakes: A survey. ACM Comput. Surv. CSUR 2021, 54, 1–41. [Google Scholar] [CrossRef]

- Ahmed, S.R.; Sonuç, E.; Ahmed, M.R.; Duru, A.D. Analysis survey on deepfake detection and recognition with convolutional neural networks. In Proceedings of the 2022 International Congress on Human-Computer Interaction, Optimization and Robotic Applications (HORA), Virtual, 9–11 June 2022; pp. 1–7. [Google Scholar]

- Nguyen, T.T.; Nguyen, Q.V.H.; Nguyen, D.T.; Nguyen, D.T.; Huynh-The, T.; Nahavandi, S.; Nguyen, T.T.; Pham, Q.V.; Nguyen, C.M. Deep learning for deepfakes creation and detection: A survey. Comput. Vis. Image Underst. 2022, 223, 103525. [Google Scholar] [CrossRef]

- Kugler, M.B.; Pace, C. Deepfake privacy: Attitudes and regulation. Nw. UL Rev. 2021, 116, 611. [Google Scholar] [CrossRef]

- Gerstner, E. Face/off: “Deepfake” face swaps and privacy laws. Def. Couns. J. 2020, 87, 1. [Google Scholar]

- Harris, K.R. Video on demand: What deepfakes do and how they harm. Synthese 2021, 199, 13373–13391. [Google Scholar] [CrossRef]

- Sharma, M.; Kaur, M. A Review of Deepfake Technology: An Emerging AI Threat. In Soft Computing for Security Applications; Springer: Berlin/Heidelberg, Germany, 2022; pp. 605–619. [Google Scholar]

- Woo, S. ADD: Frequency Attention and Multi-View Based Knowledge Distillation to Detect Low-Quality Compressed Deepfake Images. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 20–27 February 2022; Volume 36, pp. 122–130. [Google Scholar]

- Lyu, S. Deepfake detection: Current challenges and next Steps. In Proceedings of the 2020 IEEE International Conference on Multimedia & Expo Workshops (ICMEW), London, UK, 6–10 July 2020; pp. 1–6. [Google Scholar]

- Zi, B.; Chang, M.; Chen, J.; Ma, X.; Jiang, Y.G. Wilddeepfake: A challenging real-world dataset for deepfake detection. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 2382–2390. [Google Scholar]

- Felixrosberg. FaceDancer. 2021. Available online: https://github.com/felixrosberg/FaceDancer (accessed on 27 November 2022).

- Rosberg, F.; Aksoy, E.E.; Alonso-Fernandez, F.; Englund, C. FaceDancer: Pose-and Occlusion-Aware High Fidelity Face Swapping. arXiv 2022, arXiv:2210.10473. [Google Scholar]

- Kingma, D.P.; Welling, M. An introduction to variational autoencoders. Found. Trends Mach. Learn. 2019, 12, 307–392. [Google Scholar] [CrossRef]

- Khalid, H.; Woo, S.S. OC-FakeDect: Classifying deepfakes using one-class variational autoencoder. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 656–657. [Google Scholar]

- Du, M.; Pentyala, S.; Li, Y.; Hu, X. Towards generalizable deepfake detection with locality-aware autoencoder. In Proceedings of the 29th ACM International Conference on Information & Knowledge Management, Virtual Event, 19–23 October 2020; pp. 325–334. [Google Scholar]

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial networks. Commun. ACM 2020, 63, 139–144. [Google Scholar] [CrossRef]

- Perov, I.; Gao, D.; Chervoniy, N.; Liu, K.; Marangonda, S.; Umé, C.; Dpfks, M.; Facenheim, C.S.; RP, L.; Jiang, J.; et al. DeepFaceLab: Integrated, flexible and extensible face-swapping framework. arXiv 2020, arXiv:2005.05535. [Google Scholar]

- Harris, D. Deepfakes: False pornography is here and the law cannot protect you. Duke L. Tech. Rev. 2018, 17, 99. [Google Scholar]

- Chen, T.; Kumar, A.; Nagarsheth, P.; Sivaraman, G.; Khoury, E. Generalization of Audio Deepfake Detection. In Proceedings of the Odyssey 2020, The Speaker and Language Recognition Workshop, Tokyo, Japan, 1–5 November 2020; pp. 132–137. [Google Scholar]

- Pilares, I.C.A.; Azam, S.; Akbulut, S.; Jonkman, M.; Shanmugam, B. Addressing the challenges of electronic health records using blockchain and ipfs. Sensors 2022, 22, 4032. [Google Scholar] [CrossRef]

- Li, L.; Bao, J.; Yang, H.; Chen, D.; Wen, F. Faceshifter: Towards high fidelity and occlusion aware face swapping. arXiv 2019, arXiv:1912.13457. [Google Scholar]

- Chen, R.; Chen, X.; Ni, B.; Ge, Y. Simswap: An efficient framework for high fidelity face swapping. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 2003–2011. [Google Scholar]

- Nirkin, Y.; Keller, Y.; Hassner, T. Fsgan: Subject agnostic face swapping and reenactment. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 7184–7193. [Google Scholar]

- Deng, Y.; Yang, J.; Chen, D.; Wen, F.; Tong, X. Disentangled and Controllable Face Image Generation via 3D Imitative-Contrastive Learning. In Proceedings of the IEEE Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020. [Google Scholar]

- Choi, Y.; Choi, M.; Kim, M.; Ha, J.W.; Kim, S.; Choo, J. Stargan: Unified generative adversarial networks for multi-domain image-to-image translation. In Proceedings of the Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 8789–8797. [Google Scholar]

- Karras, T.; Laine, S.; Aila, T. A style-based generator architecture for generative adversarial networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 4401–4410. [Google Scholar]

- He, Z.; Zuo, W.; Kan, M.; Shan, S.; Chen, X. Attgan: Facial attribute editing by only changing what you want. IEEE Trans. Image Process. 2019, 28, 5464–5478. [Google Scholar] [CrossRef] [PubMed]

- Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired image-to-image translation using cycle-consistent adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2223–2232. [Google Scholar]

- Cho, W.; Choi, S.; Park, D.K.; Shin, I.; Choo, J. Image-to-image translation via group-wise deep whitening-and-coloring transformation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 10639–10647. [Google Scholar]

- Perarnau, G.; van de Weijer, J.; Raducanu, B.; Álvarez, J.M. Invertible conditional gans for image editing. arXiv 2016, arXiv:1611.06355. [Google Scholar]

- Larsen, A.B.L.; Sønderby, S.K.; Larochelle, H.; Winther, O. Autoencoding beyond pixels using a learned similarity metric. In Proceedings of the International Conference on Machine Learning, New York, NY, USA, 24–26 June 2016; pp. 1558–1566. [Google Scholar]

- Kaliyar, R.K.; Goswami, A.; Narang, P. DeepFakE: Improving fake news detection using tensor decomposition-based deep neural network. J. Supercomput. 2021, 77, 1015–1037. [Google Scholar] [CrossRef]

- Narayan, K.; Agarwal, H.; Mittal, S.; Thakral, K.; Kundu, S.; Vatsa, M.; Singh, R. DeSI: Deepfake Source Identifier for Social Media. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 2858–2867. [Google Scholar]

- Agarwal, H.; Singh, A.; Rajeswari, D. Deepfake Detection using SVM. In Proceedings of the 2021 Second International Conference on Electronics and Sustainable Communication Systems (ICESC), Coimbatore, India, 4–6 August 2021; pp. 1245–1249. [Google Scholar]

- Fagni, T.; Falchi, F.; Gambini, M.; Martella, A.; Tesconi, M. TweepFake: About detecting deepfake tweets. PLoS ONE 2021, 16, e0251415. [Google Scholar] [CrossRef]

- Durall, R.; Keuper, M.; Pfreundt, F.J.; Keuper, J. Unmasking deepfakes with simple features. arXiv 2019, arXiv:1911.00686. [Google Scholar]

- Ismail, A.; Elpeltagy, M.S.; Zaki, M.; Eldahshan, K. A New Deep Learning-Based Methodology for Video Deepfake Detection Using XGBoost. Sensors 2021, 21, 5413. [Google Scholar] [CrossRef]

- Rupapara, V.; Rustam, F.; Amaar, A.; Washington, P.B.; Lee, E.; Ashraf, I. Deepfake tweets classification using stacked Bi-LSTM and words embedding. PeerJ Comput. Sci. 2021, 7, e745. [Google Scholar] [CrossRef] [PubMed]

- Chollet, F. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1251–1258. [Google Scholar]

- Zhou, P.; Han, X.; Morariu, V.I.; Davis, L.S. Two-stream neural networks for tampered face detection. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 21–26 July 2017; pp. 1831–1839. [Google Scholar]

- Nguyen, H.H.; Yamagishi, J.; Echizen, I. Capsule-forensics: Using capsule networks to detect forged images and videos. In Proceedings of the ICASSP 2019—2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 12–17 May 2019; pp. 2307–2311. [Google Scholar]

- Li, Y.; Lyu, S. Exposing deepfake videos by detecting face warping artifacts. arXiv 2018, arXiv:1811.00656. [Google Scholar]

- Nguyen, H.H.; Fang, F.; Yamagishi, J.; Echizen, I. Multi-task learning for detecting and segmenting manipulated facial images and videos. In Proceedings of the 2019 IEEE 10th International Conference on Biometrics Theory, Applications and Systems (BTAS), Tampa, FL, USA, 23–26 September 2019; pp. 1–8. [Google Scholar]

- Afchar, D.; Nozick, V.; Yamagishi, J.; Echizen, I. Mesonet: A compact facial video forgery detection network. In Proceedings of the 2018 IEEE International Workshop on Information Forensics and Security (WIFS), Hong Kong, China, 11–13 December 2018; pp. 1–7. [Google Scholar]

- Qi, H.; Guo, Q.; Juefei-Xu, F.; Xie, X.; Ma, L.; Feng, W.; Liu, Y.; Zhao, J. Deeprhythm: Exposing deepfakes with attentional visual heartbeat rhythms. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 4318–4327. [Google Scholar]

- Rossler, A.; Cozzolino, D.; Verdoliva, L.; Riess, C.; Thies, J.; Nießner, M. Faceforensics++: Learning to detect manipulated facial images. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1–11. [Google Scholar]

- Hernandez-Ortega, J.; Tolosana, R.; Fierrez, J.; Morales, A. DeepFakesON-Phys: Deepfakes detection based on heart rate estimation. arXiv 2020, arXiv:2010.00400. [Google Scholar]

- Khodabakhsh, A.; Busch, C. A generalizable deepfake detector based on neural conditional distribution modelling. In Proceedings of the 2020 International Conference of the Biometrics Special Interest Group (BIOSIG), Online, 16–18 September 2020; pp. 1–5. [Google Scholar]

- Coccomini, D.A.; Messina, N.; Gennaro, C.; Falchi, F. Combining efficientnet and vision transformers for video deepfake detection. In Proceedings of the International Conference on Image Analysis and Processing; Springer: Berlin/Heidelberg, Germany, 2022; pp. 219–229. [Google Scholar]

- Ganiyusufoglu, I.; Ngô, L.M.; Savov, N.; Karaoglu, S.; Gevers, T. Spatio-temporal features for generalized detection of deepfake videos. arXiv 2020, arXiv:2010.11844. [Google Scholar]

- Zhu, X.; Wang, H.; Fei, H.; Lei, Z.; Li, S.Z. Face forgery detection by 3d decomposition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Virtual, 19–25 June 2021; pp. 2929–2939. [Google Scholar]

- Jafar, M.T.; Ababneh, M.; Al-Zoube, M.; Elhassan, A. Forensics and analysis of deepfake videos. In Proceedings of the 2020 11th International Conference on Information and Communication Systems (ICICS), Irbid, Jordan, 7–9 April 2020; pp. 053–058. [Google Scholar]

- Zhang, K.; Zhang, Z.; Li, Z.; Qiao, Y. Joint face detection and alignment using multitask cascaded convolutional networks. IEEE Signal Process. Lett. 2016, 23, 1499–1503. [Google Scholar] [CrossRef]

- Sohrawardi, S.J.; Chintha, A.; Thai, B.; Seng, S.; Hickerson, A.; Ptucha, R.; Wright, M. Poster: Towards robust open-world detection of deepfakes. In Proceedings of the 2019 ACM SIGSAC Conference on Computer and Communications Security, London, UK, 11–15 November 2019; pp. 2613–2615. [Google Scholar]

- Wang, J.; Wu, Z.; Ouyang, W.; Han, X.; Chen, J.; Jiang, Y.G.; Li, S.N. M2tr: Multi-modal multi-scale transformers for deepfake detection. In Proceedings of the 2022 International Conference on Multimedia Retrieval, Newark, NJ, USA, 27–30 June 2022; pp. 615–623. [Google Scholar]

- Heo, Y.J.; Choi, Y.J.; Lee, Y.W.; Kim, B.G. Deepfake detection scheme based on vision transformer and distillation. arXiv 2021, arXiv:2104.01353. [Google Scholar]

- Wodajo, D.; Atnafu, S. Deepfake video detection using convolutional vision transformer. arXiv 2021, arXiv:2102.11126. [Google Scholar]

- Stroebel, L.; Llewellyn, M.; Hartley, T.; Ip, T.S.; Ahmed, M. A systematic literature review on the effectiveness of deepfake detection techniques. J. Cyber Secur. Technol. 2023, 7, 83–113. [Google Scholar] [CrossRef]

- Rana, M.S.; Nobi, M.N.; Murali, B.; Sung, A.H. Deepfake detection: A systematic literature review. IEEE Access 2022, 10, 25494–25513. [Google Scholar] [CrossRef]

- Malik, A.; Kuribayashi, M.; Abdullahi, S.M.; Khan, A.N. Deepfake detection for human face images and videos: A survey. IEEE Access 2022, 10, 18757–18775. [Google Scholar] [CrossRef]

- Deshmukh, A.; Wankhade, S.B. Deepfake Detection Approaches Using Deep Learning: A Systematic Review. In Intelligent Computing and Networking: Proceedings of IC-ICN 2020; Springer: Berlin/Heidelberg, Germany, 2020; pp. 293–302. [Google Scholar]

- Shahzad, H.F.; Rustam, F.; Flores, E.S.; Luís Vidal Mazón, J.; de la Torre Diez, I.; Ashraf, I. A Review of Image Processing Techniques for Deepfakes. Sensors 2022, 22, 4556. [Google Scholar] [CrossRef]

- Mahmud, B.U.; Sharmin, A. Deep insights of deepfake technology: A review. arXiv 2021, arXiv:2105.00192. [Google Scholar]

- Kan, M. This AI Can Recreate Podcast Host Joe Rogan’s Voice to Say Anything, 2019. Available online: https://www.pcmag.com/news/this-ai-can-recreate-podcast-host-joe-rogans-voice-to-say-anything#:~:text=A%20group%20of%20engineers%20has,to%20almost%20every%20word%20said (accessed on 2 May 2023).

- Solsman, J.E. Samsung Deepfake AI Could Fabricate a Video of You from a Single Profile Pic, 2019. Available online: https://www.cnet.com/tech/computing/samsung-ai-deepfake-can-fabricate-a-video-of-you-from-a-single-photo-mona-lisa-cheapfake-dumbfake/ (accessed on 2 May 2023).

- Evans, C. Spotting Fake News in a World with Manipulated Video, 2018. Available online: https://www.cbsnews.com/news/spotting-fake-news-in-a-world-with-manipulated-video (accessed on 2 May 2023).

- Baron, K. Digital Doubles: The Deepfake Tech Nourishing New Wave Retail, 2019. Available online: https://www.forbes.com/sites/katiebaron/2019/07/29/digital-doubles-the-deepfake-tech-nourishing-new-wave-retail/?sh=5428ce694cc7 (accessed on 2 May 2023).

- Brandon, J. Terrifying High-Tech Porn: Creepy ‘Deepfake’ Videos Are on the Rise, 2018. Available online: https://www.foxnews.com/tech/terrifying-high-tech-porn-creepy-deepfake-videos-are-on-the-rise (accessed on 2 May 2023).

- Dickson, B. When AI Blurs the Line between Reality and Fiction. 2018. Available online: https://www.pcmag.com/news/when-ai-blurs-the-line-between-reality-and-fiction (accessed on 6 November 2022).

- Chivers, T. What Do We Do about Deepfake Video? 2019. Available online: https://www.theguardian.com/technology/2019/jun/23/what-do-we-do-about-deepfake-video-ai-facebook (accessed on 6 November 2022).

- Singh, D. Google, Facebook, Twitter Put on Notice about Deepfakes in 2020 Election. 2019. Available online: https://www.cnet.com/tech/mobile/google-facebook-and-twitter-sent-letters-about-deepfakes-by-rep-schiff/ (accessed on 6 November 2022).

- Dietmar, J. GANs and Deepfakes Could Revolutionize the Fashion Industry. 2019. Available online: https://www.forbes.com/sites/forbestechcouncil/2019/05/21/gans-and-deepfakes-could-revolutionize-the-fashion-industry/?sh=6f22c1723d17 (accessed on 6 November 2022).

- Bell, K. The Most Urgent Threat of Deepfakes Isn’t Politics. 2020. Available online: https://www.youtube.com/watch?v=hHHCrf2-x6w&t=2s (accessed on 6 November 2022).

- Karasavva, V.; Noorbhai, A. The real threat of deepfake pornography: A review of canadian policy. Cyberpsychology Behav. Soc. Netw. 2021, 24, 203–209. [Google Scholar] [CrossRef] [PubMed]

- Kerner, C.; Risse, M. Beyond porn and discreditation: Epistemic promises and perils of deepfake technology in digital lifeworlds. Moral Philos. Politics 2021, 8, 81–108. [Google Scholar] [CrossRef]

- Fido, D.; Rao, J.; Harper, C.A. Celebrity status, sex, and variation in psychopathy predicts judgements of and proclivity to generate and distribute deepfake pornography. Comput. Hum. Behav. 2022, 129, 107141. [Google Scholar] [CrossRef]

- Diakopoulos, N.; Johnson, D. Anticipating and addressing the ethical implications of deepfakes in the context of elections. New Media Soc. 2021, 23, 2072–2098. [Google Scholar] [CrossRef]

- Hoven, J.v.d. Responsible innovation: A new look at technology and ethics. In Responsible Innovation 1; Springer: Berlin/Heidelberg, Germany, 2014; pp. 3–13. [Google Scholar]

- Siegel, D.; Kraetzer, C.; Seidlitz, S.; Dittmann, J. Media forensics considerations on deepfake detection with hand-crafted features. J. Imaging 2021, 7, 108. [Google Scholar] [CrossRef]

- Wang, G.; Jiang, Q.; Jin, X.; Cui, X. FFR_FD: Effective and Fast Detection of Deepfakes Based on Feature Point Defects. arXiv 2021, arXiv:2107.02016. [Google Scholar] [CrossRef]

- Yang, X.; Li, Y.; Lyu, S. Exposing deep fakes using inconsistent head poses. In Proceedings of the ICASSP 2019—2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 12–17 May 2019; pp. 8261–8265. [Google Scholar]

- Korshunov, P.; Marcel, S. Deepfakes: A new threat to face recognition? assessment and detection. arXiv 2018, arXiv:1812.08685. [Google Scholar]

- Chen, H.S.; Rouhsedaghat, M.; Ghani, H.; Hu, S.; You, S.; Kuo, C.C.J. Defakehop: A light-weight high-performance deepfake detector. In Proceedings of the 2021 IEEE International Conference on Multimedia and Expo (ICME), Shenzhen, China, 5–9 July 2021; pp. 1–6. [Google Scholar]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A.A. Inception-v4, inception-resnet and the impact of residual connections on learning. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017. [Google Scholar]

- Masi, I.; Killekar, A.; Mascarenhas, R.M.; Gurudatt, S.P.; AbdAlmageed, W. Two-branch recurrent network for isolating deepfakes in videos. In Proceedings of the European Conference on Computer Vision; Springer: Berlin/Heidelberg, Germany, 2020; pp. 667–684. [Google Scholar]

- Fernandes, S.; Raj, S.; Ortiz, E.; Vintila, I.; Salter, M.; Urosevic, G.; Jha, S. Predicting heart rate variations of deepfake videos using neural ode. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops, Seoul, Republic of Korea, 27–28 October 2019. [Google Scholar]

- Tariq, S.; Lee, S.; Woo, S.S. A convolutional LSTM based residual network for deepfake video detection. arXiv 2020, arXiv:2009.07480. [Google Scholar]

- Güera, D.; Delp, E.J. Deepfake video detection using recurrent neural networks. In Proceedings of the 2018 15th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Auckland, New Zealand, 27–30 November 2018; pp. 1–6. [Google Scholar]

- Chintha, A.; Thai, B.; Sohrawardi, S.J.; Bhatt, K.; Hickerson, A.; Wright, M.; Ptucha, R. Recurrent convolutional structures for audio spoof and video deepfake detection. IEEE J. Sel. Top. Signal Process. 2020, 14, 1024–1037. [Google Scholar] [CrossRef]

- Montserrat, D.M.; Hao, H.; Yarlagadda, S.K.; Baireddy, S.; Shao, R.; Horváth, J.; Bartusiak, E.; Yang, J.; Guera, D.; Zhu, F.; et al. Deepfakes detection with automatic face weighting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 668–669. [Google Scholar]

- Khormali, A.; Yuan, J.S. DFDT: An End-to-End Deepfake Detection Framework Using Vision Transformer. Appl. Sci. 2022, 12, 2953. [Google Scholar] [CrossRef]

- Khan, S.A.; Dai, H. Video transformer for deepfake detection with incremental learning. In Proceedings of the 29th ACM International Conference on Multimedia, Virtual Event, 20–24 October 2021; pp. 1821–1828. [Google Scholar]

- Li, M.; Zuo, W.; Zhang, D. Deep identity-aware transfer of facial attributes. arXiv 2016, arXiv:1610.05586. [Google Scholar]

- Wang, X.; Huang, J.; Ma, S.; Nepal, S.; Xu, C. Deepfake Disrupter: The Detector of Deepfake Is My Friend. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 14920–14929. [Google Scholar]

- Guarnera, L.; Giudice, O.; Guarnera, F.; Ortis, A.; Puglisi, G.; Paratore, A.; Bui, L.M.; Fontani, M.; Coccomini, D.A.; Caldelli, R.; et al. The Face Deepfake Detection Challenge. J. Imaging 2022, 8, 263. [Google Scholar] [CrossRef] [PubMed]

- Guarnera, L.; Giudice, O.; Battiato, S. Deepfake detection by analyzing convolutional traces. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 666–667. [Google Scholar]

- Wang, S.Y.; Wang, O.; Zhang, R.; Owens, A.; Efros, A.A. CNN-generated images are surprisingly easy to spot…for now. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020; pp. 8695–8704. [Google Scholar]

- Yang, C.; Lim, S.N. One-shot domain adaptation for face generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020; pp. 5921–5930. [Google Scholar]

- Yang, C.; Lim, S.N. Unconstrained facial expression transfer using style-based generator. arXiv 2019, arXiv:1912.06253. [Google Scholar]

- Songsri-in, K.; Zafeiriou, S. Complement face forensic detection and localization with facial landmarks. arXiv 2019, arXiv:1910.05455. [Google Scholar]

- Dolhansky, B.; Bitton, J.; Pflaum, B.; Lu, J.; Howes, R.; Wang, M.; Ferrer, C.C. The deepfake detection challenge (dfdc) dataset. arXiv 2020, arXiv:2006.07397. [Google Scholar]

- Guarnera, L.; Giudice, O.; Battiato, S. Fighting deepfake by exposing the convolutional traces on images. IEEE Access 2020, 8, 165085–165098. [Google Scholar] [CrossRef]

- Frank, J.; Eisenhofer, T.; Schönherr, L.; Fischer, A.; Kolossa, D.; Holz, T. Leveraging frequency analysis for deep fake image recognition. In Proceedings of the International Conference on Machine Learning, Virtual, 13–18 July 2020; pp. 3247–3258. [Google Scholar]

- Wolter, M.; Blanke, F.; Hoyt, C.T.; Garcke, J. Wavelet-packet powered deepfake image detection. arXiv 2021, arXiv:2106.09369. [Google Scholar]

- Fernandes, S.; Raj, S.; Ewetz, R.; Pannu, J.S.; Jha, S.K.; Ortiz, E.; Vintila, I.; Salter, M. Detecting deepfake videos using attribution-based confidence metric. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 308–309. [Google Scholar]

- Huang, Y.; Juefei-Xu, F.; Wang, R.; Guo, Q.; Ma, L.; Xie, X.; Li, J.; Miao, W.; Liu, Y.; Pu, G. Fakepolisher: Making deepfakes more detection-evasive by shallow reconstruction. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 1217–1226. [Google Scholar]

- Pu, J.; Mangaokar, N.; Wang, B.; Reddy, C.K.; Viswanath, B. Noisescope: Detecting deepfake images in a blind setting. In Proceedings of the Annual Computer Security Applications Conference, Austin, TX, USA, 6–10 December 2020; pp. 913–927. [Google Scholar]

- Mitra, A.; Mohanty, S.P.; Corcoran, P.; Kougianos, E. EasyDeep: An IoT Friendly Robust Detection Method for GAN Generated Deepfake Images in Social Media. In Proceedings of the IFIP International Internet of Things Conference; Springer: Berlin/Heidelberg, Germany, 2021; pp. 217–236. [Google Scholar]

- Zendran, M.; Rusiecki, A. Swapping Face Images with Generative Neural Networks for Deepfake Technology—Experimental Study. Procedia Comput. Sci. 2021, 192, 834–843. [Google Scholar] [CrossRef]

- Narayan, K.; Agarwal, H.; Thakral, K.; Mittal, S.; Vatsa, M.; Singh, R. DeePhy: On Deepfake Phylogeny. arXiv 2022, arXiv:2209.09111. [Google Scholar]

- Karras, T.; Laine, S.; Aittala, M.; Hellsten, J.; Lehtinen, J.; Aila, T. Analyzing and improving the image quality of stylegan. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 8110–8119. [Google Scholar]

- Lample, G.; Zeghidour, N.; Usunier, N.; Bordes, A.; Denoyer, L.; Ranzato, M. Fader networks: Manipulating images by sliding attributes. Adv. Neural Inf. Process. Syst. 2017, 30. [Google Scholar]

- Shaoanlu. Faceswap-GAN. 2018. Available online: https://github.com/shaoanlu/faceswap-GAN (accessed on 6 November 2022).

- Neuralchen. SimSwap. 2021. Available online: https://github.com/neuralchen/SimSwap (accessed on 6 November 2022).

- Usingcolor. Faceshifter. 2020. Available online: https://github.com/mindslab-ai/faceshifter (accessed on 6 November 2022).

- YuDeng. DiscoFaceGan. 2020. Available online: https://github.com/microsoft/DiscoFaceGan (accessed on 6 November 2022).

- Faceapp. 2019. Available online: https://www.faceapp.com/ (accessed on 6 November 2022).

- Heidari, A.; Navimipour, N.J.; Jamali, M.A.J.; Akbarpour, S. A green, secure, and deep intelligent method for dynamic IoT-edge-cloud offloading scenarios. Sustain. Comput. Inform. Syst. 2023, 38, 100859. [Google Scholar] [CrossRef]

- Heidari, A.; Javaheri, D.; Toumaj, S.; Navimipour, N.J.; Rezaei, M.; Unal, M. A new lung cancer detection method based on the chest CT images using Federated Learning and blockchain systems. Artif. Intell. Med. 2023, 141, 102572. [Google Scholar] [CrossRef]

- Dependabot. Sensity AI, 2022. Available online: https://github.com/sensity-ai/dot (accessed on 3 April 2023).

- Truepic. Truepic. 2022. Available online: https://truepic.com/ (accessed on 3 April 2023).

- Ddi. DDI. 2022. Available online: https://www.d-id.com/ (accessed on 3 April 2023).

- Mgongwer. DeepTraCE. 2022. Available online: https://github.com/DeNardoLab/DeepTraCE (accessed on 3 April 2023).

- DSA. Deep Secure AI. 2023. Available online: https://tracxn.com/d/companies/deep-secure-ai/__Vg5KA9H7Is7wzbVWluIoNcwc_XaTgx1t3WSjzigbEE4 (accessed on 3 April 2023).

- Iproov. Iproov. 2022. Available online: https://www.iproov.com/blog/deepfakes-statistics-solutions-biometric-protection (accessed on 3 April 2023).

- Blackbird. Blackbird. 2023. Available online: https://www.blackbird.ai/blog/2023/04/navigating-the-warped-realities-of-generative-ai (accessed on 3 April 2023).

- Sentinel. Sentinel. 2021. Available online: https://thesentinel.ai/ (accessed on 3 April 2023).

- Amber. Amber. 2020. Available online: https://www.wired.com/story/amber-authenticate-video-validation-blockchain-tampering-deepfakes/ (accessed on 3 April 2023).

- Amberapp. Amberapp. 2020. Available online: https://app.ambervideo.co/public (accessed on 3 April 2023).

- FaceForensics. FaceForensics. 2020. Available online: https://github.com/ondyari/FaceForensics (accessed on 3 April 2023).

- Fakespot. Fakespot. 2023. Available online: https://www.fakespot.com/ (accessed on 3 April 2023).

- Datitran. Face2face. 2018. Available online: https://github.com/datitran/face2face-demo (accessed on 6 November 2022).

- Torzdf. Faceswap. 2018. Available online: https://github.com/deepfakes/faceswap (accessed on 6 November 2022).

- YuvalNirkin. FaceSwap. 2017. Available online: https://github.com/YuvalNirkin/face_swap (accessed on 6 November 2022).

- Nirkin, Y.; Masi, I.; Tuan, A.T.; Hassner, T.; Medioni, G. On face segmentation, face swapping, and face perception. In Proceedings of the 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), Xi’an, China, 15–19 May 2018; pp. 98–105. [Google Scholar]

- Burgos-Artizzu, X.P.; Perona, P.; Dollár, P. Robust face landmark estimation under occlusion. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013; pp. 1513–1520. [Google Scholar]

- Tan, M.; Le, Q. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 6105–6114. [Google Scholar]

- Mitchell, T.; Buchanan, B.; DeJong, G.; Dietterich, T.; Rosenbloom, P.; Waibel, A. Machine learning. Annu. Rev. Comput. Sci. 1990, 4, 417–433. [Google Scholar] [CrossRef]

- Timesler. Facenet-Pytorch. 2018. Available online: https://github.com/timesler/facenet-pytorch (accessed on 6 November 2022).

- Xiang, J.; Zhu, G. Joint face detection and facial expression recognition with MTCNN. In Proceedings of the 2017 4th International Conference on Information Science and Control Engineering (ICISCE), Changsha, China, 21–23 July 2017; pp. 424–427. [Google Scholar]

- matkob. OpenPose. 2018. Available online: https://github.com/CMU-Perceptual-Computing-Lab/openpose (accessed on 6 November 2022).

- Cao, Z.; Simon, T.; Wei, S.E.; Sheikh, Y. Realtime multi-person 2D pose estimation using part affinity fields. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 7291–7299. [Google Scholar]

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision; Springer: Berlin/Heidelberg, Germany, 2014; pp. 740–755. [Google Scholar]

- Andriluka, M.; Pishchulin, L.; Gehler, P.; Schiele, B. 2D human pose estimation: New benchmark and state of the art analysis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 3686–3693. [Google Scholar]

- BiDAlab. DeepfakesON-Phys. 2020. Available online: https://github.com/BiDAlab/DeepFakesON-Phys (accessed on 6 November 2022).

- Coccomini, D. Combining EfficientNet and Vision Transformers for Video Deepfake Detection. 2021. Available online: https://github.com/davide-coccomini/Combining-EfficientNet-and-Vision-Transformers-for-Video-Deepfake-Detection (accessed on 6 November 2022).

- Iperov. DeepFaceLab. 2018. Available online: https://github.com/iperov/DeepFaceLab (accessed on 6 November 2022).

- Younger, P. Deeptomcruise. Available online: https://www.tiktok.com/@deeptomcruise (accessed on 6 November 2022).

- Schwarz, L. Arnoldschwarzneggar. Available online: https://www.tiktok.com/@arnoldschwarzneggar (accessed on 6 November 2022).

- Diepnep. Available online: https://www.tiktok.com/@diepnep (accessed on 6 November 2022).

- Deepcaprio. 2018. Available online: https://www.tiktok.com/@deepcaprio (accessed on 6 November 2022).

- vfx. VFXChrisUme. 2019. Available online: https://www.youtube.com/c/VFXChrisUme (accessed on 6 November 2022).

- Shamook. Shamook. 2018. Available online: https://www.youtube.com/channel/UCZXbWcv7fSZFTAZV4beckyw/videos (accessed on 6 November 2022).

- NextFace. 2018. Available online: https://www.youtube.com/c/GuusDeKroon (accessed on 6 November 2022).

- Deepfaker. 2018. Available online: https://www.youtube.com/channel/UCkHecfDTcSazNZSKPEhtPVQ (accessed on 6 November 2022).

- Deepfakes in Movie. 2019. Available online: https://www.youtube.com/c/DeepFakesinmovie (accessed on 6 November 2022).

- DeepfakeCreator. 2020. Available online: https://www.youtube.com/c/DeepfakeCreator (accessed on 6 November 2022).

- Jarkancio. Jarkan. 2007. Available online: https://www.youtube.com/c/Jarkan (accessed on 6 November 2022).

- Zhang, S.; Zhu, X.; Lei, Z.; Shi, H.; Wang, X.; Li, S.Z. S3FD: Single shot scale-invariant face detector. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 192–201. [Google Scholar]

- Bulat, A.; Tzimiropoulos, G. How far are we from solving the 2D & 3D face alignment problem? (and a dataset of 230,000 3D facial landmarks). In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 1021–1030. [Google Scholar]

- Feng, Y.; Wu, F.; Shao, X.; Wang, Y.; Zhou, X. Joint 3D face reconstruction and dense alignment with position map regression network. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 534–551. [Google Scholar]

- Iglovikov, V.; Shvets, A. TernausNet: U-Net with VGG11 encoder pre-trained on ImageNet for image segmentation. arXiv 2018, arXiv:1801.05746. [Google Scholar]

- Fakeapp. Available online: https://www.fakeapp.com/ (accessed on 6 November 2022).

- Deepfakesweb. Available online: https://deepfakesweb.com/ (accessed on 6 November 2022).

- Karras, T.; Aila, T.; Laine, S.; Lehtinen, J. Progressive growing of GANs for improved quality, stability, and variation. arXiv 2017, arXiv:1710.10196. [Google Scholar]

- Cao, Q.; Shen, L.; Xie, W.; Parkhi, O.M.; Zisserman, A. VGGFace2: A dataset for recognising faces across pose and age. In Proceedings of the 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), Xi’an, China, 15–19 May 2018; pp. 67–74. [Google Scholar]

- Carlini, N.; Farid, H. Evading deepfake-image detectors with white- and black-box attacks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020; pp. 658–659. [Google Scholar]

- Li, L.; Bao, J.; Zhang, T.; Yang, H.; Chen, D.; Wen, F.; Guo, B. Face X-ray for more general face forgery detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 5001–5010. [Google Scholar]

- Dang, H.; Liu, F.; Stehouwer, J.; Liu, X.; Jain, A.K. On the detection of digital face manipulation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 5781–5790. [Google Scholar]

| Electronic Database | Type | URL |

|---|---|---|

| IEEE Xplore | Digital Library | https://ieeexplore.ieee.org/Xplore/home.jsp (accessed on 12 January 2023) |

| Springer | Digital Library | https://www.springer.com/gp (accessed on 12 January 2023) |

| Google Scholar | Search Engine | https://scholar.google.com.au (accessed on 12 January 2023) |

| ScienceDirect (Elsevier) | Digital Library | https://www.sciencedirect.com (accessed on 12 January 2023) |

| MDPI | Digital Library | https://www.mdpi.com (accessed on 12 January 2023) |

| ResearchGate | Social Networking Site | https://www.researchgate.net (accessed on 12 January 2023) |

| Search Queries (SQ) | |

|---|---|

| SQ1 | “deepfake generation” AND machine learning OR deep learning OR models |

| SQ2 | “deepfake generation” AND machine learning OR deep learning OR tools |

| SQ3 | “deepfake detection” AND machine learning OR deep learning OR models |

| SQ4 | “deepfake detection” AND machine learning OR deep learning OR tools |
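
As written, the four search strings mix AND and OR without parentheses, so different databases may group the terms differently. The sketch below builds explicitly parenthesized versions, assuming the intended reading is that the quoted phrase must co-occur with at least one of the remaining terms. That grouping, the quoting of each alternative term, and the helper name `build_query` are assumptions made for illustration; they are not stated in the table.

```python
def build_query(phrase, alternatives):
    """Return '"phrase" AND ("a" OR "b" OR ...)' with explicit grouping."""
    ors = " OR ".join(f'"{term}"' for term in alternatives)
    return f'"{phrase}" AND ({ors})'


# SQ1-SQ4 from the table, under the assumed grouping.
QUERIES = {
    "SQ1": build_query("deepfake generation", ["machine learning", "deep learning", "models"]),
    "SQ2": build_query("deepfake generation", ["machine learning", "deep learning", "tools"]),
    "SQ3": build_query("deepfake detection", ["machine learning", "deep learning", "models"]),
    "SQ4": build_query("deepfake detection", ["machine learning", "deep learning", "tools"]),
}

if __name__ == "__main__":
    for name, query in QUERIES.items():
        print(name, query)
```

Writing the grouping out makes the same query reproducible across search engines that apply different default operator precedence.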

| List of Inclusion and Exclusion Criteria | |

|---|---|

| Inclusion Criteria (IC) | |

| IC1 | Should contain at least one of the keywords |

| IC2 | Must be included in one of the selected databases |

| IC3 | Published within the last ten years (2014–2023) |

| IC4 | Must be published in a journal or conference proceedings |

| IC5 | The research being examined should have a matching title, abstract, and full text |

| Exclusion Criteria (EC) | |

| EC1 | Redundant items |

| EC2 | Full text of the paper cannot be accessed |

| EC3 | Purpose of the paper is not related to deepfake |

| EC4 | Non-English documents |
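
The criteria above can be read as a screening filter applied to each candidate record. The following is a minimal sketch of that idea, not the authors' procedure: the `Record` layout, the keyword list, and the `failed_criteria` helper are hypothetical, and IC5 is left out because it requires a manual reading of the paper.

```python
from dataclasses import dataclass

# Assumed keywords (taken from the search queries) and database names.
KEYWORDS = ("deepfake generation", "deepfake detection")
DATABASES = {"IEEE Xplore", "Springer", "Google Scholar",
             "Science Direct", "MDPI", "Researchgate"}


@dataclass
class Record:
    """Hypothetical bibliographic record used only for this illustration."""
    title: str
    abstract: str
    year: int
    venue_type: str            # e.g. "journal", "conference", "preprint"
    database: str
    language: str = "en"
    full_text_available: bool = True
    duplicate: bool = False
    on_topic: bool = True      # manual judgement for EC3


def failed_criteria(r):
    """Return the labels of all criteria that the record does not satisfy."""
    text = f"{r.title} {r.abstract}".lower()
    checks = {
        "IC1": any(k in text for k in KEYWORDS),
        "IC2": r.database in DATABASES,
        "IC3": 2014 <= r.year <= 2023,
        "IC4": r.venue_type in {"journal", "conference"},
        "EC1": not r.duplicate,
        "EC2": r.full_text_available,
        "EC3": r.on_topic,
        "EC4": r.language == "en",
    }
    return [label for label, ok in checks.items() if not ok]


if __name__ == "__main__":
    sample = Record("A survey of deepfake detection", "", 2012, "preprint", "MDPI")
    print(failed_criteria(sample))
```

A record is retained only when `failed_criteria` returns an empty list; returning the failing labels rather than a single boolean keeps the reason for each exclusion auditable.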

| Paper | QE1: Is the Publication Associated with Deepfake? | QE2: Is the Suggested Solution Completely Obvious? | QE3: Is There a Deepfake Model Proposed in the Publication? | QE4: Is a Deepfake Tool Implemented in the Suggested Solution? | QE5: Are Challenges Addressed in the Proposed Solution? | QE6: Is the Proposed Solution Ready for Implementation? | QE7: Did the Publication Define the Limitations of the Proposed Solutions? | Score |

|---|---|---|---|---|---|---|---|---|

| [29] | 4 | 3 | 4 | 1 | 4 | 2 | 4 | 22 |

| [30] | 4 | 2 | 4 | 1 | 4 | 3 | 4 | 22 |

| [31] | 4 | 3 | 2 | 1 | 4 | 3 | 3 | 20 |

| [32] | 4 | 2 | 3 | 1 | 4 | 3 | 4 | 21 |

| [33] | 4 | 2 | 4 | 3 | 3 | 1 | 3 | 20 |

| [34] | 4 | 2 | 3 | 2 | 2 | 3 | 3 | 19 |

| [35] | 4 | 3 | 4 | 4 | 2 | 4 | 2 | 23 |

| [36] | 4 | 3 | 4 | 4 | 3 | 4 | 4 | 26 |

| [37] | 4 | 3 | 4 | 3 | 4 | 4 | 3 | 25 |

| [38] | 4 | 3 | 4 | 3 | 2 | 4 | 4 | 24 |

| [39] | 4 | 4 | 2 | 3 | 3 | 4 | 2 | 22 |

| [31] | 4 | 3 | 4 | 2 | 3 | 4 | 4 | 24 |

| [40] | 4 | 4 | 3 | 4 | 3 | 2 | 4 | 24 |

| [41] | 4 | 3 | 3 | 4 | 3 | 3 | 3 | 23 |

| [42] | 4 | 2 | 3 | 4 | 4 | 3 | 4 | 24 |

| [43] | 4 | 4 | 3 | 3 | 3 | 2 | 4 | 23 |

| [44] | 4 | 3 | 2 | 3 | 2 | 2 | 4 | 20 |

| [45] | 4 | 4 | 1 | 4 | 4 | 3 | 2 | 22 |

| [46] | 4 | 4 | 2 | 4 | 4 | 3 | 3 | 24 |

| [47] | 4 | 3 | 4 | 4 | 4 | 4 | 2 | 25 |

| [48] | 4 | 4 | 4 | 4 | 4 | 2 | 4 | 26 |

| [49] | 4 | 3 | 4 | 3 | 4 | 1 | 4 | 23 |

| [50] | 4 | 2 | 4 | 4 | 3 | 1 | 2 | 20 |

| [51] | 4 | 4 | 3 | 4 | 3 | 4 | 3 | 25 |

| [52] | 4 | 4 | 3 | 3 | 2 | 3 | 2 | 21 |

| [53] | 4 | 4 | 2 | 4 | 3 | 2 | 2 | 21 |

| [54] | 4 | 3 | 2 | 4 | 3 | 4 | 4 | 26 |

| [55] | 4 | 2 | 3 | 3 | 2 | 2 | 4 | 20 |

| [56] | 4 | 4 | 3 | 4 | 1 | 3 | 2 | 21 |

| [57] | 4 | 4 | 1 | 4 | 4 | 4 | 2 | 23 |

| [58] | 4 | 3 | 1 | 4 | 4 | 3 | 2 | 21 |

| [59] | 4 | 4 | 1 | 4 | 3 | 4 | 4 | 24 |

| [60] | 4 | 4 | 3 | 4 | 4 | 3 | 4 | 26 |

| [61] | 4 | 4 | 3 | 3 | 4 | 4 | 2 | 24 |

| [62] | 4 | 4 | 2 | 3 | 3 | 2 | 3 | 21 |

| [63] | 4 | 4 | 4 | 3 | 4 | 2 | 2 | 23 |

| [64] | 4 | 4 | 4 | 4 | 4 | 4 | 1 | 25 |

| [65] | 4 | 4 | 2 | 4 | 4 | 4 | 3 | 25 |
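
The Score column appears to be the plain sum of the seven QE marks; almost every row in the table is consistent with that reading. The sketch below recomputes the total for a few rows copied from the table and reports any row whose reported score differs. The 1 to 4 scale is an assumption inferred from the values shown, and the function names are hypothetical.

```python
QE_COUNT = 7           # columns QE1..QE7
SCALE = range(1, 5)    # assumed 1-4 marking scale, inferred from the table


def total_score(marks):
    """Validate the seven marks and return their sum."""
    if len(marks) != QE_COUNT:
        raise ValueError(f"expected {QE_COUNT} marks, got {len(marks)}")
    if any(m not in SCALE for m in marks):
        raise ValueError("marks must lie on the assumed 1-4 scale")
    return sum(marks)


def mismatches(rows):
    """Return (paper, recomputed, reported) for rows whose totals disagree."""
    found = []
    for paper, marks, reported in rows:
        recomputed = total_score(marks)
        if recomputed != reported:
            found.append((paper, recomputed, reported))
    return found


# Three rows copied from the table: (paper, QE1..QE7, reported score).
ROWS = [
    ("[29]", [4, 3, 4, 1, 4, 2, 4], 22),
    ("[36]", [4, 3, 4, 4, 3, 4, 4], 26),
    ("[54]", [4, 3, 2, 4, 3, 4, 4], 26),
]

if __name__ == "__main__":
    print(mismatches(ROWS))
```

Under the sum assumption, the row for [54] is the only one in the table that does not add up (the marks total 24 against a reported 26); the check cannot tell whether a mark or the total is the value in error.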

| Tool | Underlying Model | Main Focus | Accuracy | Speed | Usability | Security | Availability | Environment |

|---|---|---|---|---|---|---|---|---|

| FaceSwap-GAN [122] | HEAR-Net+AEINet | Face-swapping and reenactment approach that can be applied to pairs of faces. | High | Slow | Moderate | Low | Open source | TensorFlow |

| SimSwap [123] | Encoder-Decoder + GAN | Arbitrary face swapping on both still images and videos. | High | Fast | Moderate | Low | Paid | PyTorch 1.5+ |

| Fewshot FT GAN | Few-Shot GAN | Generates faces with glasses, hair, geometric distortion, and fixed gaze from consistent faces; performs poorly when converting to Asian faces. | High | Moderate | Moderate | Moderate | Paid | TensorFlow |

| FaceShifter [124] | HEAR-Net | Two-stage face-swapping method designed for high fidelity and occlusion awareness. | High | Fast | Low | Low | Paid | PyTorch |

| DiscoFaceGAN [125] | Disentangled StyleGAN | Controllable, fully disentangled face generation using 3D representation learning. | High | Slow | Low | Low | Open source | TensorFlow |

| FaceApp [126] | | Enables changing the face, hairstyle, gender, age, as well as other physical traits. | Low | Fast | High | Low | Paid | Web |

| StarGAN [33] | StarGAN | Disentangled and controllable face image generation via 3D imitative-contrastive learning. | Moderate | Slow | Low | Low | Open source | PyTorch |

| StarGAN-V2 | StarGAN-V2 | Addresses both the diversity of generated images and scalability across multiple domains. | High | Slow | Low | Low | Open source | PyTorch |

| AttGAN [35] | Attribute-GAN | Transfer of facial attributes under attribute-classification constraints. | High | Moderate | Moderate | Low | Open source | TensorFlow |

| StyleGAN [34] | StyleGAN | Style-based GAN that produces deepfakes. | High | Slow | Low | Low | Open source | TensorFlow |

| StyleGAN2 | StyleGAN2 | Introduces weight demodulation, path length regularization, and a redesigned generator, and drops progressive growing to improve image quality. | High | Slow | Low | Low | Open source | TensorFlow |