Intact Planar Abstraction of Buildings via Global Normal Refinement from Noisy Oblique Photogrammetric Point Clouds

Abstract

1. Introduction

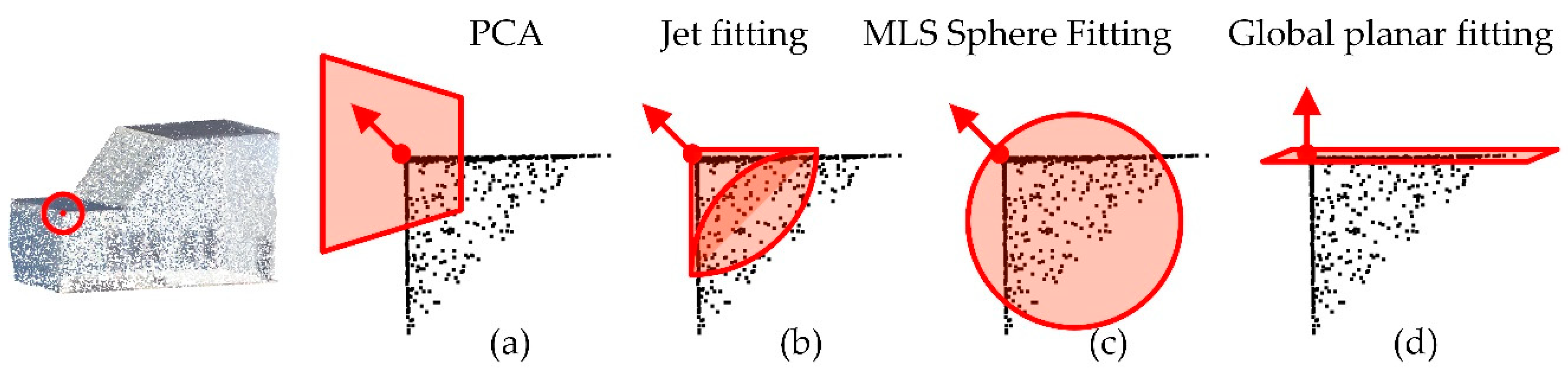

1.1. Related Works

1.2. Contributions

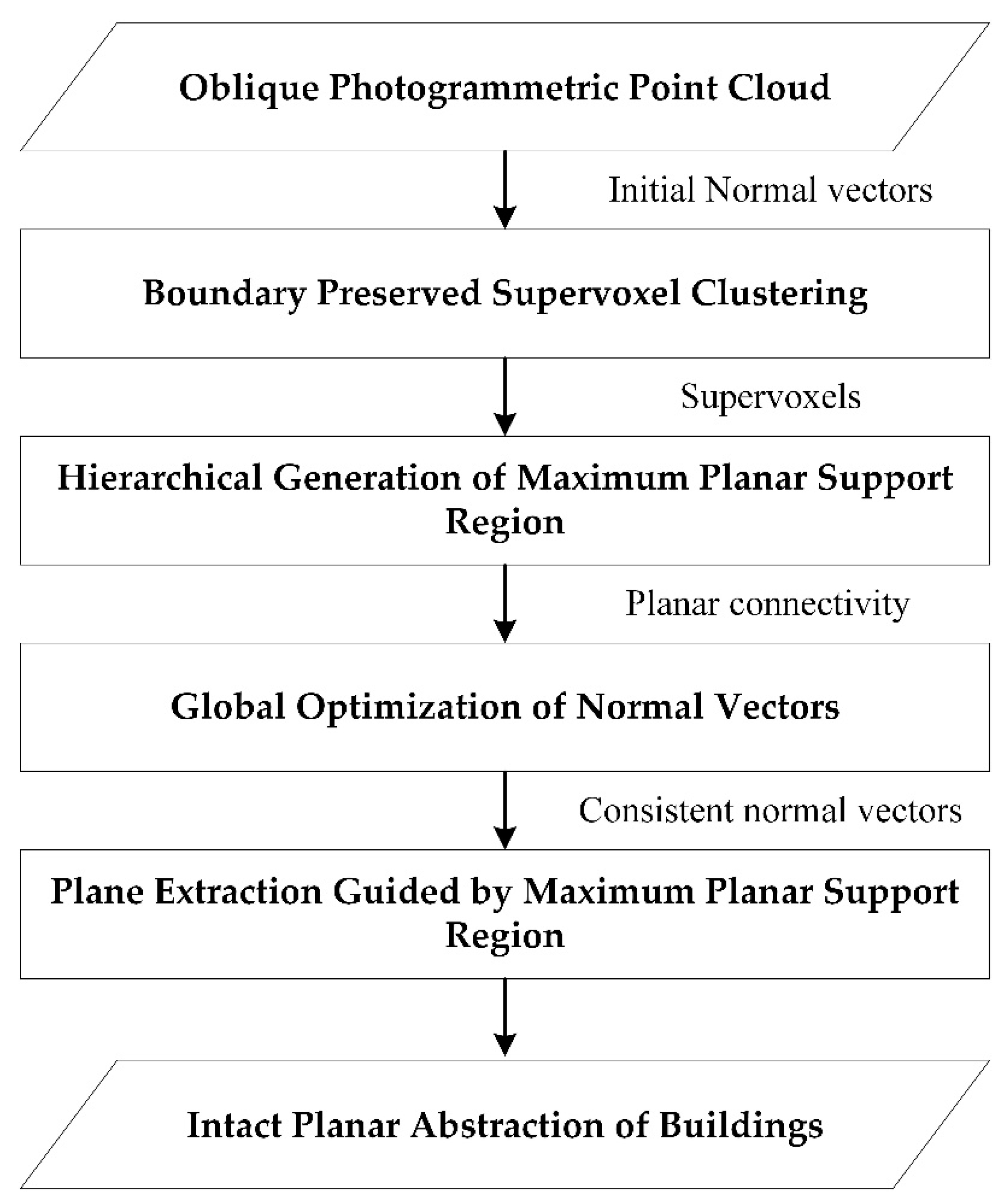

2. Methods

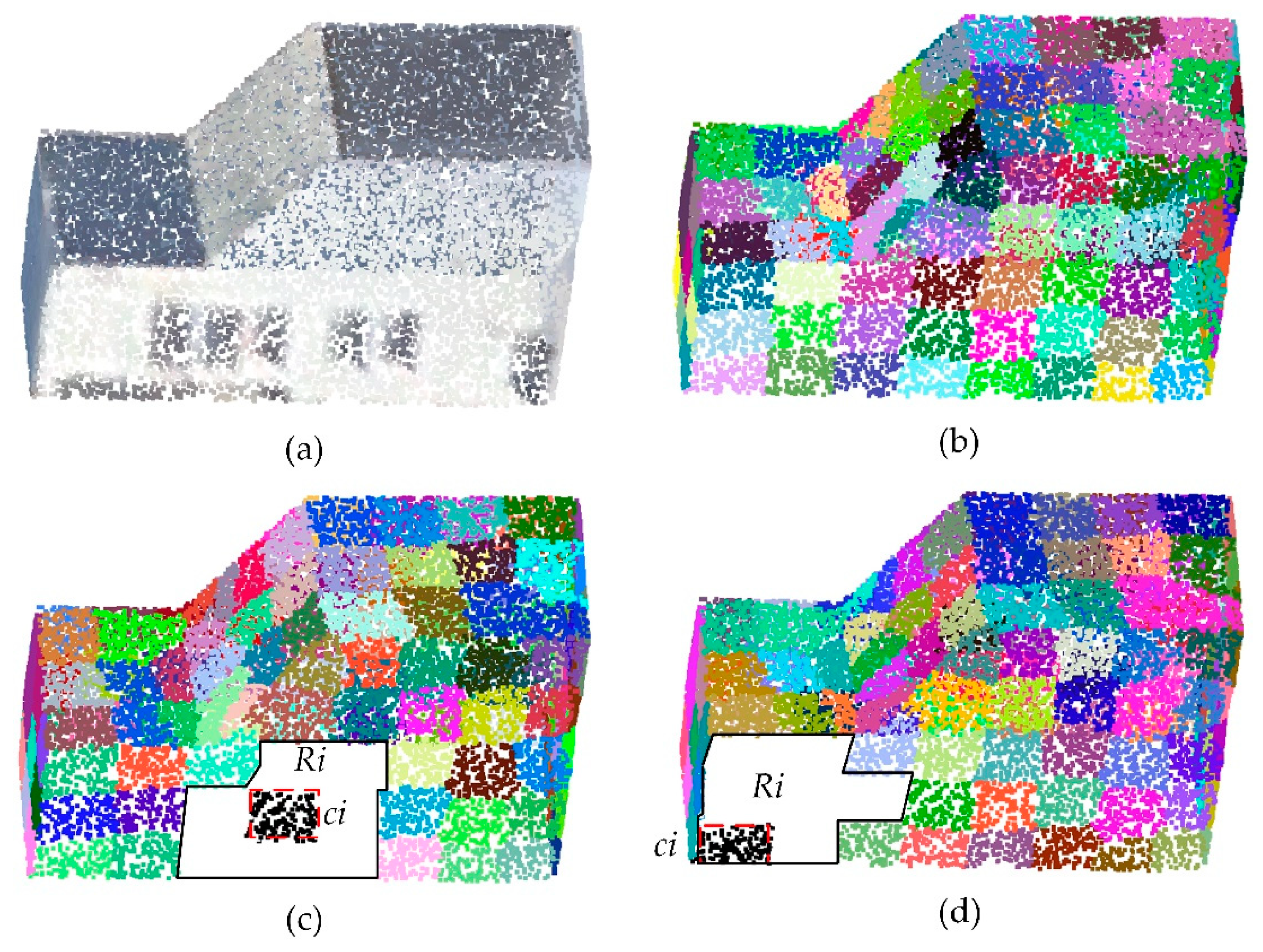

2.1. Boundary-Preserved Supervoxel Clustering

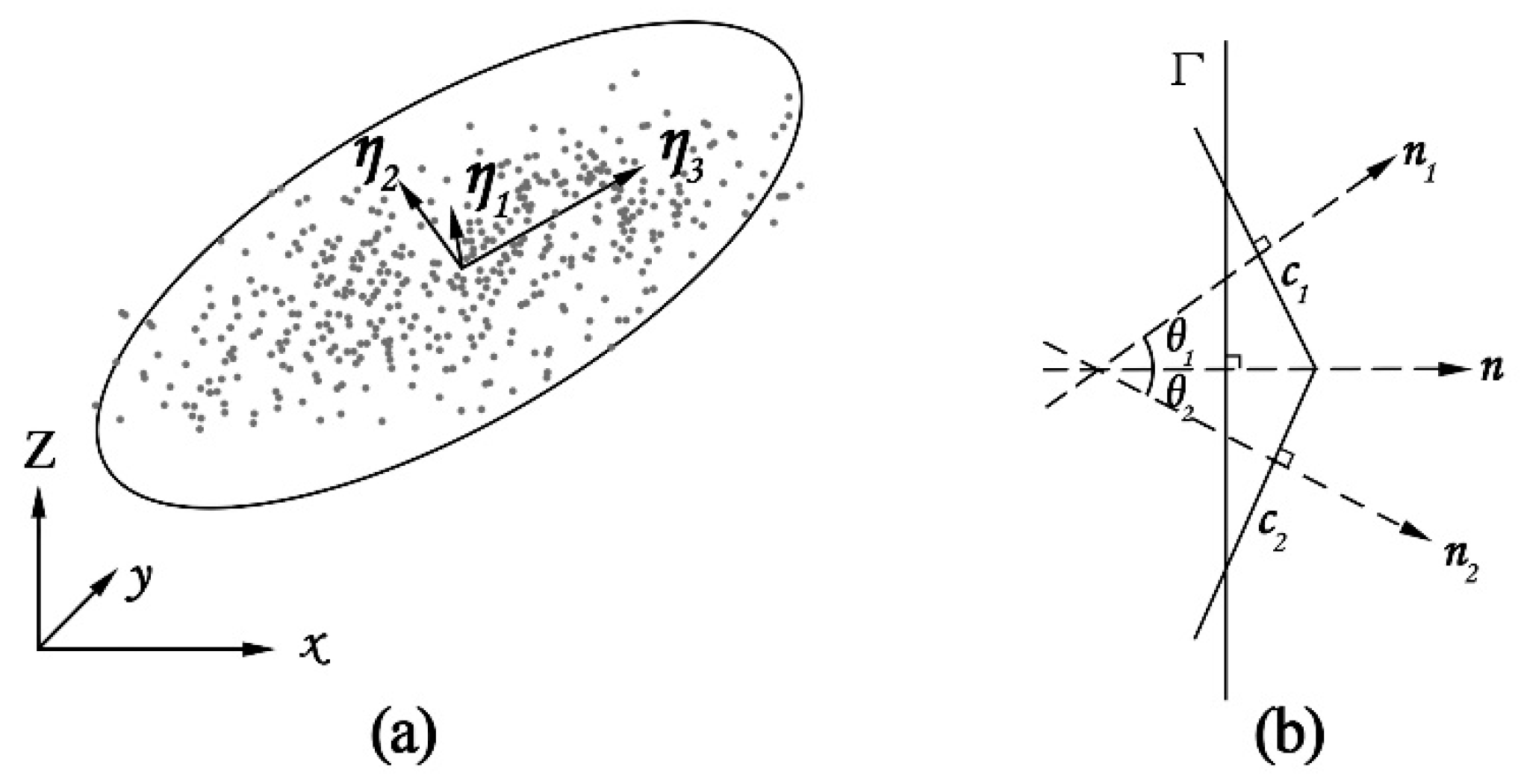

2.2. Hierarchical Generation of the Maximum Planar Support Region

2.3. Global Optimization of Normal Vectors

2.4. Plane Extraction Guided by the Maximum Planar Support Region

3. Experimental Evaluations and Analysis

3.1. Qualitative Evaluations

3.1.1. Evaluations of the Global Normal Optimization

3.1.2. Evaluations of Large-Scale Tilewise Planar Extraction

3.1.3. Evaluations of the Abstraction Quality of a Single Building

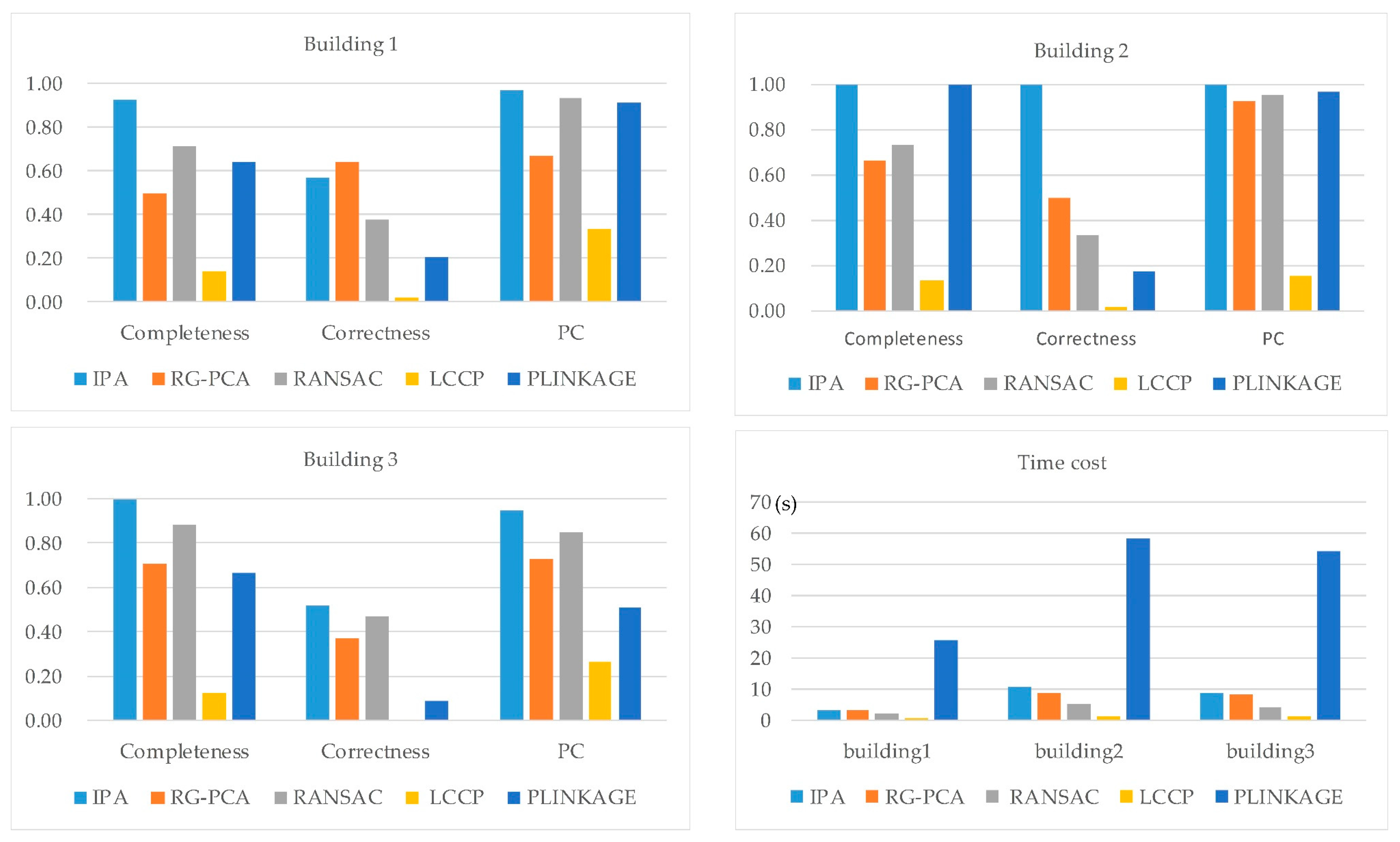

3.2. Quantitative Analysis

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| | Tile 1: IPA | Tile 1: RG-PCA | Tile 1: RANSAC | Tile 2: IPA | Tile 2: RG-PCA | Tile 2: RANSAC | Tile 3: IPA | Tile 3: RG-PCA | Tile 3: RANSAC |
|---|---|---|---|---|---|---|---|---|---|
| number of small holes | 3 | 69 | 29 | 15 | 172 | 43 | 2 | 46 | 24 |
| number of fragments | 9 | 10 | 106 | 44 | 39 | 287 | 13 | 11 | 137 |

| Building | Number of Points | Methods | Nup | TP | FN | FP |
|---|---|---|---|---|---|---|
| building 1 | 127,665 | IPA | 362 | 13 | 1 | 10 |
| | | RG-PCA | 24,281 | 7 | 7 | 4 |
| | | RANSAC | 4159 | 10 | 4 | 16 |
| | | LCCP | 0 | 2 | 12 | 87 |
| | | PLINKAGE | / | 9 | 5 | 35 |
| building 2 | 278,893 | IPA | 227 | 15 | 0 | 0 |
| | | RG-PCA | 19,323 | 10 | 5 | 10 |
| | | RANSAC | 5705 | 11 | 4 | 22 |
| | | LCCP | 0 | 2 | 13 | 106 |
| | | PLINKAGE | / | 15 | 0 | 72 |
| building 3 | 258,245 | IPA | 2356 | 24 | 0 | 22 |
| | | RG-PCA | 30,553 | 17 | 7 | 29 |
| | | RANSAC | 7805 | 23 | 3 | 26 |
| | | LCCP | 0 | 3 | 21 | 397 |
| | | PLINKAGE | / | 16 | 8 | 161 |
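
The TP, FN, and FP counts in the per-building table can be condensed into per-method precision and recall. The sketch below is illustrative only: it assumes TP, FN, and FP count correctly extracted, missed, and spurious planes respectively (the table itself does not define them), and the names `counts` and `precision_recall` are hypothetical helpers, not part of the authors' method.

```python
# (TP, FN, FP) per method, copied from the per-building table.
# Assumption: TP = correct planes, FN = missed planes, FP = spurious planes.
counts = {
    "building 1": {"IPA": (13, 1, 10), "RG-PCA": (7, 7, 4), "RANSAC": (10, 4, 16),
                   "LCCP": (2, 12, 87), "PLINKAGE": (9, 5, 35)},
    "building 2": {"IPA": (15, 0, 0), "RG-PCA": (10, 5, 10), "RANSAC": (11, 4, 22),
                   "LCCP": (2, 13, 106), "PLINKAGE": (15, 0, 72)},
    "building 3": {"IPA": (24, 0, 22), "RG-PCA": (17, 7, 29), "RANSAC": (23, 3, 26),
                   "LCCP": (3, 21, 397), "PLINKAGE": (16, 8, 161)},
}


def precision_recall(tp, fn, fp):
    """Return (precision, recall); a value is NaN when its denominator is zero."""
    precision = tp / (tp + fp) if (tp + fp) else float("nan")
    recall = tp / (tp + fn) if (tp + fn) else float("nan")
    return precision, recall


if __name__ == "__main__":
    for building, methods in counts.items():
        for method, (tp, fn, fp) in methods.items():
            p, r = precision_recall(tp, fn, fp)
            print(f"{building:10s} {method:8s} precision={p:.2f} recall={r:.2f}")
```

Under the same assumption, TP + FN should equal the number of reference planes for a building, so it should be identical across methods; this offers a quick consistency check on the tabulated counts.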

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhu, Q.; Wang, F.; Hu, H.; Ding, Y.; Xie, J.; Wang, W.; Zhong, R. Intact Planar Abstraction of Buildings via Global Normal Refinement from Noisy Oblique Photogrammetric Point Clouds. ISPRS Int. J. Geo-Inf. 2018, 7, 431. https://doi.org/10.3390/ijgi7110431
