1. Introduction

In recent years, location-based services (LBSs), which use data acquired from mobile device sensors to provide position, navigation, tracking, and awareness of moving objects and people [1,2,3], have become an increasingly important subject of research in both the scientific community and industry. The growing spread and computational power of mobile phones, together with the increase in device connectivity, has allowed the development of new Internet of things (IoT) applications in many fields, such as medical care [4], ambient assisted living [5], environmental monitoring [6], transportation [7], and marketing [8]. All these services require accurate positioning to locate people, goods, vehicles, animals, and assets. Global navigation satellite system (GNSS) positioning provides good accuracy only in open areas; in an indoor space or an urban canyon, the GNSS signal is lost, and other techniques and sensors must be integrated to overcome this issue. In recent years, some LBSs have been proposed that integrate different technologies and measurement methods [1]. Cameras [9,10,11], infrared (Kinect), ultrasound [12], WLAN/WiFi [13], RFID [14], and mobile communication [15] are examples of the technologies that the scientific community has applied to indoor localisation. All these positioning systems have pros and cons that make each of them more suitable for specific scenarios. All the technologies that use radio frequencies to define the location share some common issues: the need for line of sight (LOS), signal corruption by noise, and propagation and multipath problems. Moreover, these positioning systems can be very expensive because of the infrastructure they require. LBSs based on the camera sensor have strong advantages: no network of chipsets needs to be installed in the environment, because all the primary sensors are already installed in the user's device, so the system can be considered low cost. Moreover, the positioning accuracy of these systems is usually higher than that of other systems. Furthermore, most of the other systems cannot determine the orientation of the user, which is an important limitation for many useful applications such as augmented reality.

This scenario motivates our interest in indoor positioning systems that exploit only the sensors found in everyday smartphones. Our attention is focused on densely populated environments, which can present critical issues.

This paper is part of a project conducted by the Politecnico di Torino (Italy) and the Electronics and Telecommunications Research Institute (ETRI, Republic of Korea) with the aim of realising an image-recognition based location (IRBL) procedure for estimating the position and orientation of an image taken by a mobile device through the extraction of 3D information from a reference image. The user location is estimated from an image of the environment that the smartphone acquires and uses to query a database on a server, where images with 3D information are stored. The database images are synthetic RGB-D images extracted from an accurate 3D model of the environment. The 3D model could come from a light detection and ranging (LiDAR) acquisition, a 3D CityGML model, a structure from motion reconstruction, a time of flight (ToF) camera, or other devices or techniques. This approach can be a component of a hybrid navigation solution with inertial measurement unit (IMU) data [16,17,18].

The project is still in progress and has so far seen the validation of the first results obtained at two test sites: the Bangbae metro station in Seoul and the ETRI research building in Daejeon. The basis of the IRBL procedure is the match between each smartphone image acquired in real time and a corresponding synthetically generated 3D image extracted from a database (DB), all implemented in an automated procedure. The next sections describe the entire workflow, analyse the developed algorithms, and report the activities carried out at the test sites together with their validation.

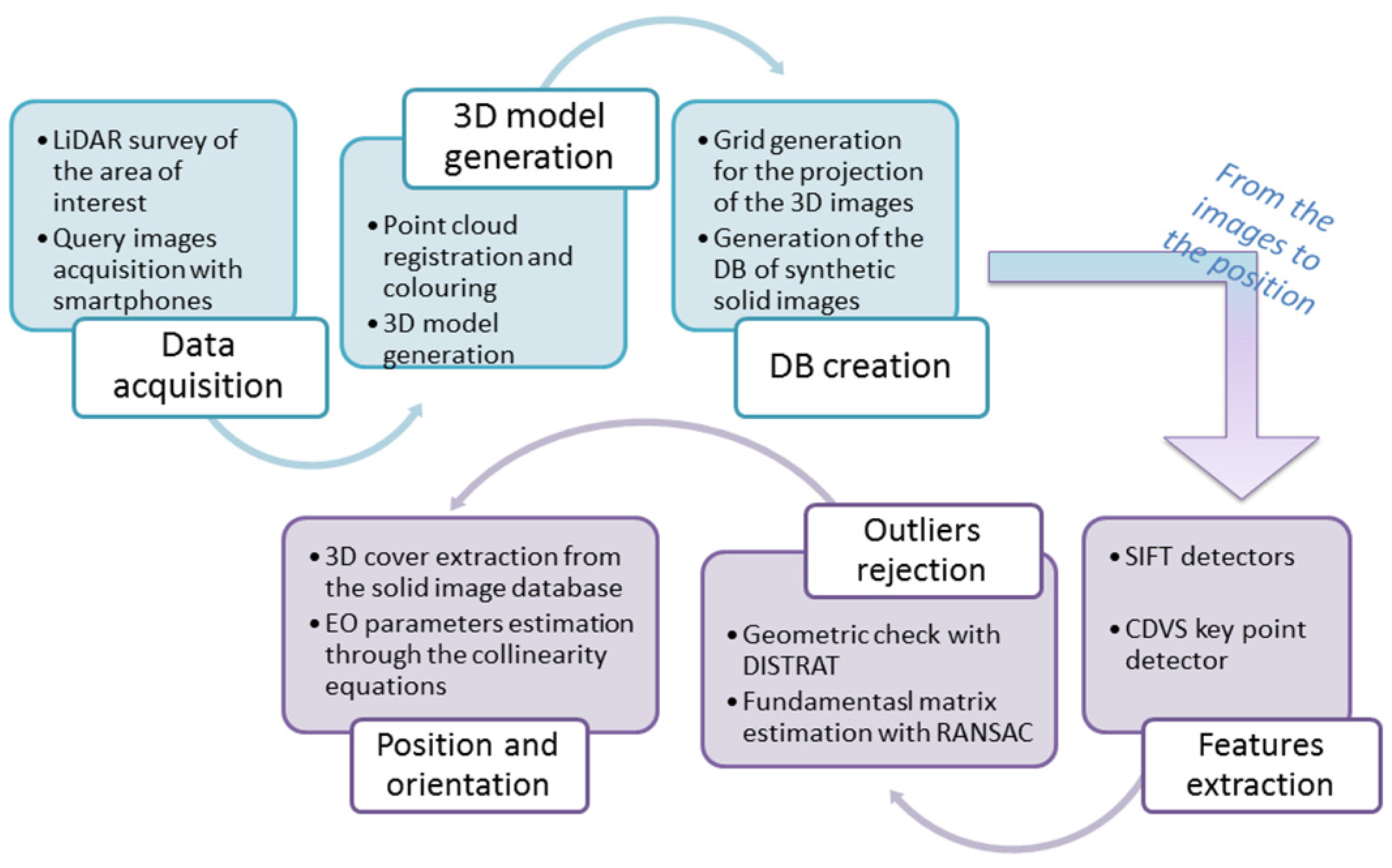

Figure 1 describes the procedure as a sequence of steps: the 3D data acquisition with a LiDAR instrument, the 3D model generation, the creation of the RGB-D image database, the image retrieval with the MPEG-7 Compact Descriptor for Visual Search (CDVS), and the application of the IRBL algorithm for positioning. The next sections focus on data acquisition and the generation of the RGB-D image DB, which are fundamental to the correct application of the procedure.

2. State of the Art

As stated above, among the smartphone on-board sensors, only the camera was analysed at this stage of the research. A literature review on optical systems for indoor positioning was published by Mautz and Tilch in 2011 [19]. All camera-based positioning systems deal with the definition of position and rotations in a 3D world when the primary observation is a 2D position on a camera sensor. Depth information can be obtained from the motion of the camera or measured directly with additional sensors, such as a laser scanner. In the first approach, the scale of the system cannot be determined and requires a separate solution, because the transformation from the image space into the object space requires distance information. If a stereo camera system with a known baseline is used, the scale can be determined from the stereoscopic images. Alternatively, distances can be measured with additional sensors, such as a laser scanner or range imaging cameras.
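The role of the known baseline can be illustrated with the standard rectified-stereo relation Z = f·B/d. The following is only a minimal sketch: the function name and the numeric values are hypothetical and are not taken from the project described here.

```python
def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d.

    focal_px     focal length expressed in pixels
    baseline_m   distance between the two camera centres, in metres
    disparity_px horizontal shift of the point between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical values, chosen only for illustration.
depth_m = stereo_depth(focal_px=1500.0, baseline_m=0.12, disparity_px=36.0)
print(f"depth: {depth_m:.2f} m")
```

For a fixed disparity, the recovered depth is proportional to the baseline, which is why an unknown baseline (as with a single moving camera) leaves the scale of the reconstruction undetermined.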

There are many previous research studies on indoor image-based localisation that pursue different goals and use different methods and technologies, depending on the field of interest of the research groups. The robotics community has focused on visual odometry approaches [20] and simultaneous localisation and mapping (SLAM) [21,22], while other groups related to geomatics and graphics are investigating semantic features [23] or structure from motion.

Some interesting work exploits computer vision algorithms, in particular neural networks and transfer learning, for visual indoor positioning and classification [3]. Some use RGB-D images to perform object recognition [24]. Other researchers use omnidirectional cameras to generate an image map database to query [25]. Interesting research on the use of a smartphone as a navigation device can be found in [26,27,28,29].

The main objective of the project related to this paper is to investigate and develop a low-cost indoor positioning solution that can define the camera position and orientation with high accuracy through a database of high-resolution synthetic images generated from a very accurate 3D model. An example can be found in the research by Liang et al. [10], where image-based localisation was performed using a backpack that acquired frame and depth information at the same time to generate a database of reference images. That work differs from our research in the lower resolution of the images and the methodology of the database creation.

It is evident that these methods need a priori information. Nowadays, however, with the spread of new survey instruments and techniques such as photogrammetry, LiDAR, and mobile mapping systems, 3D structure information of large environments can be acquired rapidly. As a positive spillover, the resulting accurate 3D model remains available for further updating and can be used for collateral tasks.

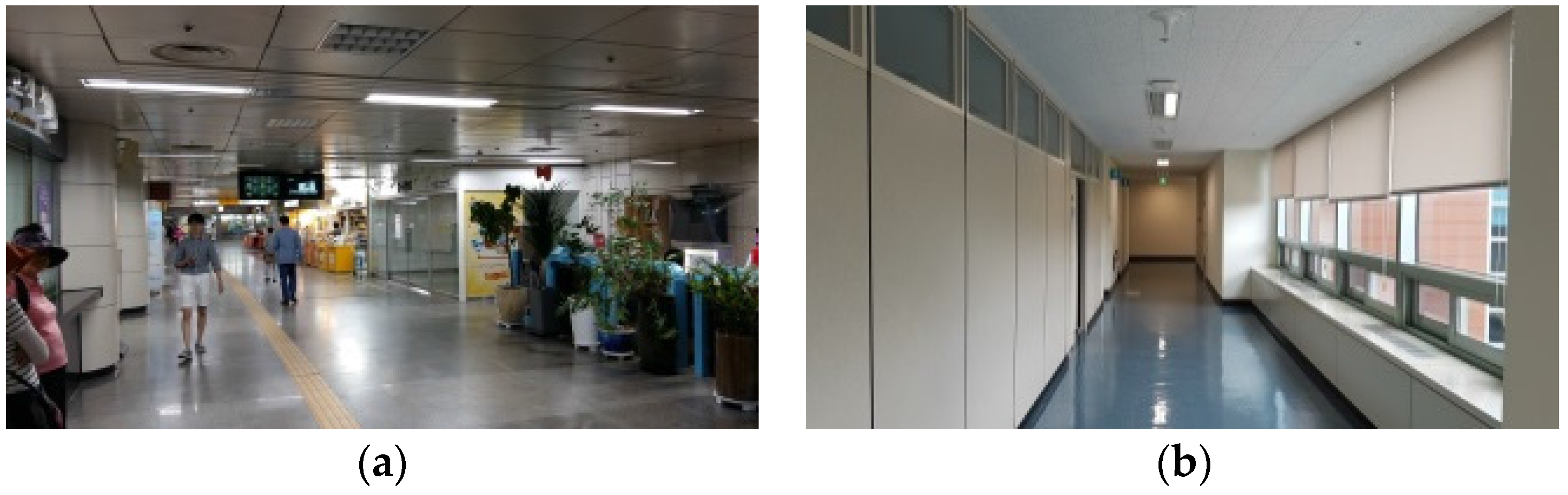

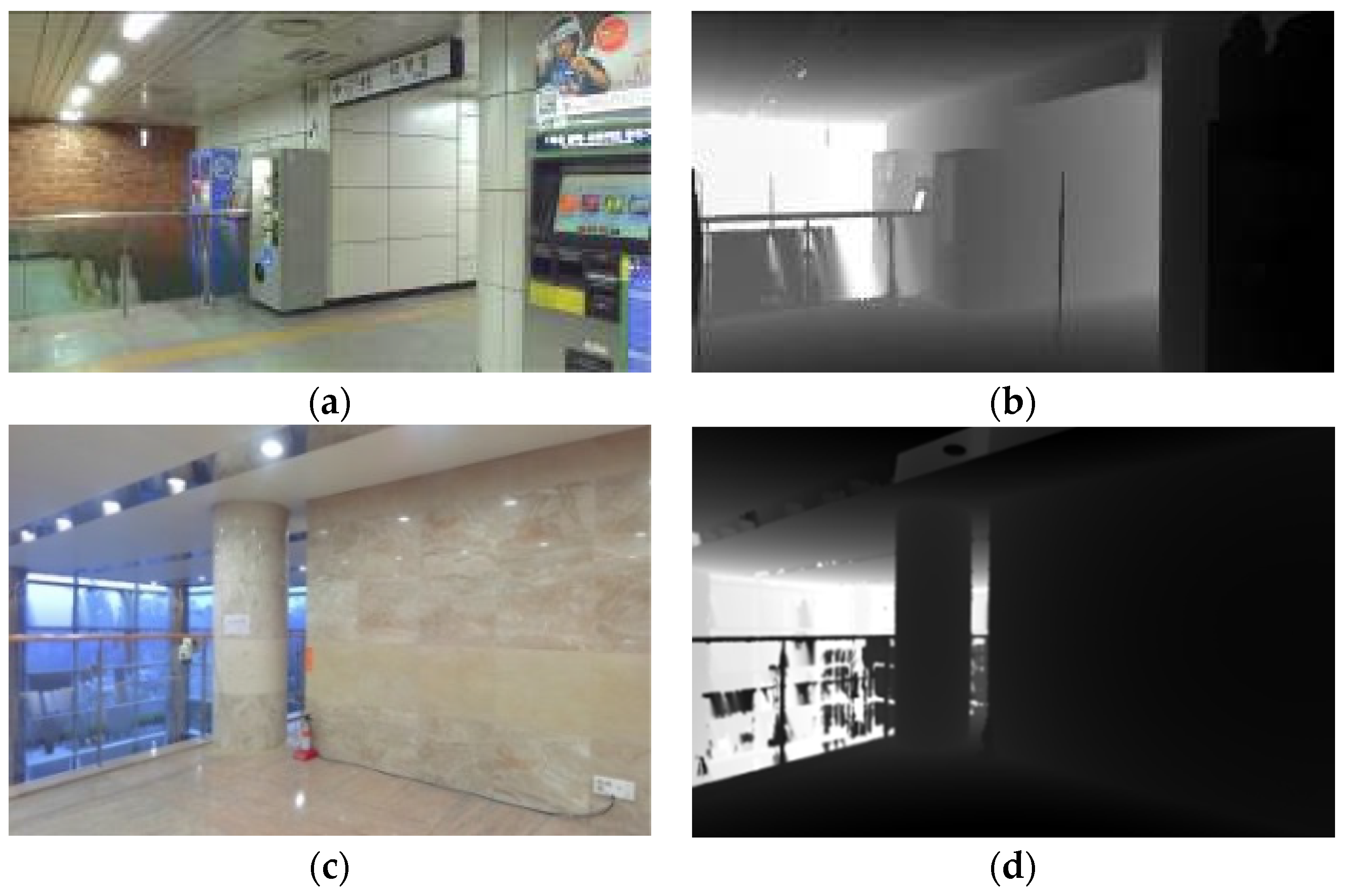

4. Data Acquisition and Processing for Image Database Construction

The research project between the Politecnico di Torino and the Electronics and Telecommunications Research Institute validates the proposed procedure on two test sites, chosen to provide two different indoor scenarios with specific issues. The first environment, the Bangbae metro station in Seoul (Republic of Korea), is an important public infrastructure, where an LBS can best express its usefulness. It presents various indoor spaces with different furniture but also a very repetitive railway floor. It is also very crowded, which is an important issue for an IRBL system. The second test site is the research department of the ETRI building in Daejeon (Republic of Korea) where, because of its function (research offices), the internal areas are repetitive. Each floor has the same aisle with the same colour and the same furniture. The two scenarios were chosen to evaluate the indoor localisation procedure both in noisy areas (crowded with people) and in similar areas where, at first sight, it is difficult to find differences between the floors (Figure 6).

From the operative point of view, the first step of the work was a complete survey of the two test areas using a traditional LiDAR instrument and procedure [45]. To guarantee continuity of the data in all the environments, several images for a typical photogrammetric approach based on structure from motion (SfM) algorithms were also acquired, with the idea of combining the data in case of loss of information [46]. As the LiDAR acquisition proved sufficient to represent the two environments entirely, the photogrammetric processing was not used for the generation of the RGB-D database.

Another aspect that needs to be underlined is that the survey of the ETRI building was not geo-referenced using a topographic network. This does not degrade the indoor positioning procedure, which in this case provides the position of the camera relative to the surrounding environment.

For the metric survey of Bangbae metro station, a general topographic network of the area and its surroundings was first realised to define a common reference coordinate system. In this case, a mixed GNSS and total station (TS) survey strategy was employed. The network was realised on the three main levels of the subway station. The GNSS measurements were naturally acquired in outdoor conditions; the two vertices were then connected to Levels −1 and −2 with traditional TS measurements, as shown in Figure 7a,b. For the GNSS survey, a Geomax Zenith 35 receiver was employed, and for the TS network, a Leica TS06 was used.

In post-processing, the network was adjusted with Leica Geo-office and Microsurvey Starnet software, using the GNSS permanent station of Suwon (a station of the International GNSS Service network) as the reference point. Given the accuracy achieved on each vertex (better than 1 cm), the next step was the survey of the markers positioned in the station area. This operation was performed with the TS using traditional side-shot measurements. The markers, in this case black and white checkerboards, are commonly used for the registration of scans and for geo-referencing the final model (Figure 7c).

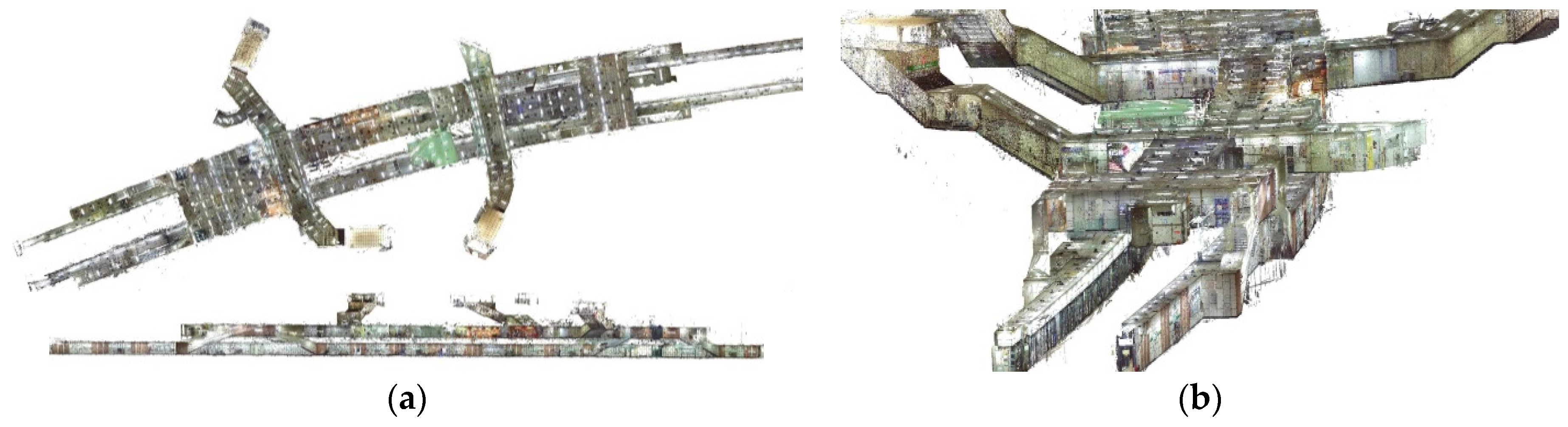

Finally, for the LiDAR acquisitions, two Faro Focus3D X130 scanners were employed. The instrument is a phase-shift laser scanner that acquires 3D point clouds with an accuracy of ±2 mm over a range of 0.30–130 m. Thanks to the built-in digital camera, images of the scanned area can be acquired together with the point cloud. In the test field, the acquisition was performed with a resolution of 1/5 (a point every 9 mm at 10 m) and a quality of 4× (points measured four times). For the complete LiDAR survey of the Bangbae subway station, 114 scans were acquired (55 at Level −1 and 59 at Level −2). With these scanner settings, each scan contains approximately 26 million points, so about three billion points were measured in total. The LiDAR data were processed according to the traditional approach [47] using Scene software by Faro, which includes the following main steps: point cloud colouring, scan registration, and scan geo-referencing. Using the markers, it is possible to evaluate the accuracy of the geo-referencing from the residuals on the measured points. The mean RMS on the measured markers (85 were employed) was 1.56 cm. Figure 8 shows three views of the complete point cloud (114 merged point clouds).

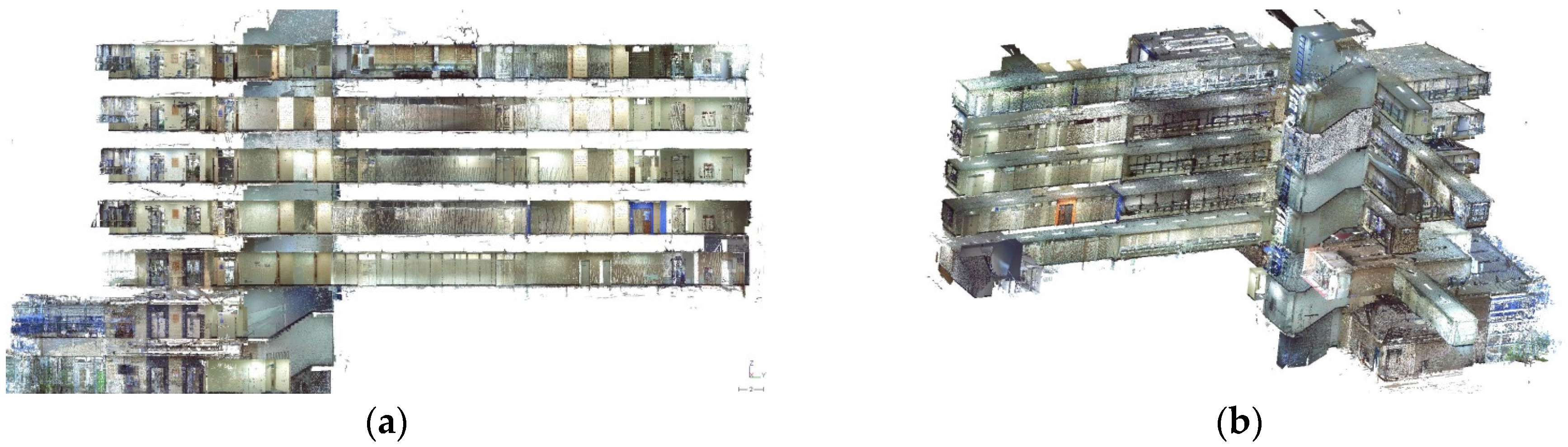

The ETRI building was only surveyed by the LiDAR in a local reference system. All the acquisitions were realised without the usually required topographic network and without the markers for the registration of the clouds. As a consequence, the final point cloud is not located in a known cartographic reference system.

As for the Bangbae station, the LiDAR acquisitions were performed using the aforementioned Faro Focus3D X130, in this case at a somewhat higher resolution of 1/4 (a point every 5 mm at 10 m) with the same quality (4×) as the Bangbae settings. The complete building (seven floors) was scanned with 111 scans which, with these scanner settings, each contain approximately 40 million points. Approximately 4.5 billion points were measured in total.
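The point totals quoted for the two surveys follow directly from the number of scans and the approximate points per scan. As a quick arithmetic check (the dictionary layout and function name are illustrative only):

```python
# Scan counts and approximate points per scan as reported for the two test sites.
surveys = {
    "Bangbae": {"scans": 114, "points_per_scan": 26e6},
    "ETRI": {"scans": 111, "points_per_scan": 40e6},
}


def total_points(scans: int, points_per_scan: float) -> float:
    """Approximate number of measured points for a survey."""
    return scans * points_per_scan


for site, survey in surveys.items():
    billions = total_points(**survey) / 1e9
    print(f"{site}: about {billions:.2f} billion points")
```

The products (about 2.96 and 4.44 billion) agree with the rounded figures of three and 4.5 billion points given in the text.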

In the case of the ETRI building, the data were processed using Scene software by Faro, but the scan registration was realised using the cloud-to-cloud approach [48]. This approach, based on the well-known iterative closest point (ICP) algorithms [49,50,51], has been implemented since Version 5.5 of the Scene software and now works very well in the Scene LiDAR data processing pipeline. With this approach, it is first important to define an initial arrangement of the scan positions. Starting from this initial arrangement, the algorithm refines the position of adjacent scans using the shape of the clouds. In terms of accuracy, only the discrepancy between adjacent clouds can be assessed in this case; for the ETRI building, it was under 1 cm for all the registered scans. As reported above, with the cloud-to-cloud approach, geo-referencing was not possible since no ground control points (GCPs) were measured in the area. All the point clouds were referenced to a local system originating at an arbitrary position of the first scan acquired in the building. In Figure 9, two views of the complete point cloud are shown.

The final step for both the buildings was the generation of the .xyz file. This ASCII file contains the X, Y, and Z coordinates of each point and the R, G, and B values extracted from the LiDAR internal camera. This file was used for the generation of the RGB-D images.
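A file of this kind can be read with a few lines of code. The sketch below assumes one point per line with six whitespace-separated fields in the order X Y Z R G B; the exact layout exported by Scene may differ (separators, header lines, extra columns), and the names `ColouredPoint` and `read_xyz` are hypothetical.

```python
from typing import Iterable, Iterator, NamedTuple


class ColouredPoint(NamedTuple):
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int


def read_xyz(lines: Iterable[str]) -> Iterator[ColouredPoint]:
    """Yield one coloured point per well-formed 'X Y Z R G B' line."""
    for line in lines:
        fields = line.split()
        if len(fields) != 6:
            continue  # skip blank or malformed lines (assumed behaviour)
        x, y, z = (float(value) for value in fields[:3])
        r, g, b = (int(value) for value in fields[3:])
        yield ColouredPoint(x, y, z, r, g, b)


# Two made-up points standing in for the contents of a real .xyz file.
sample = ["1.250 -3.400 0.875 120 118 97", "", "2.000 0.500 1.100 200 190 180"]
points = list(read_xyz(sample))
print(len(points), points[0])
```

Because `read_xyz` is a generator, it can also be fed an open file handle and consumed point by point, which matters for clouds of several billion points that do not fit in memory.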

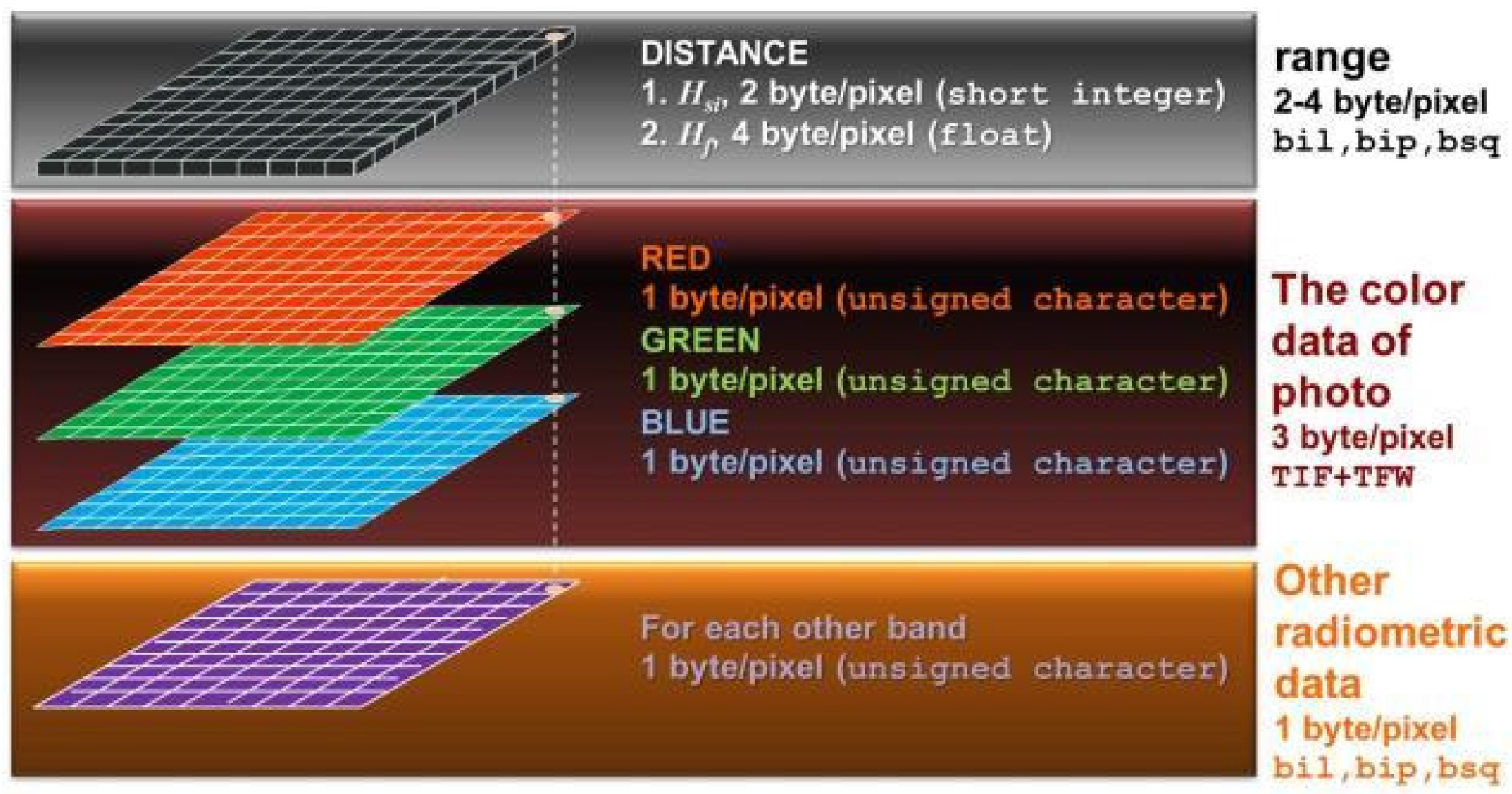

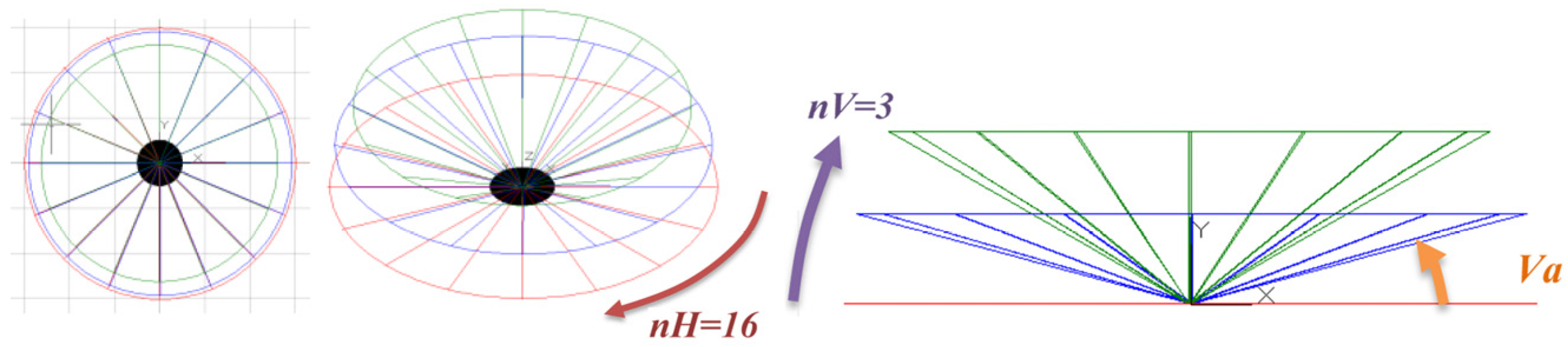

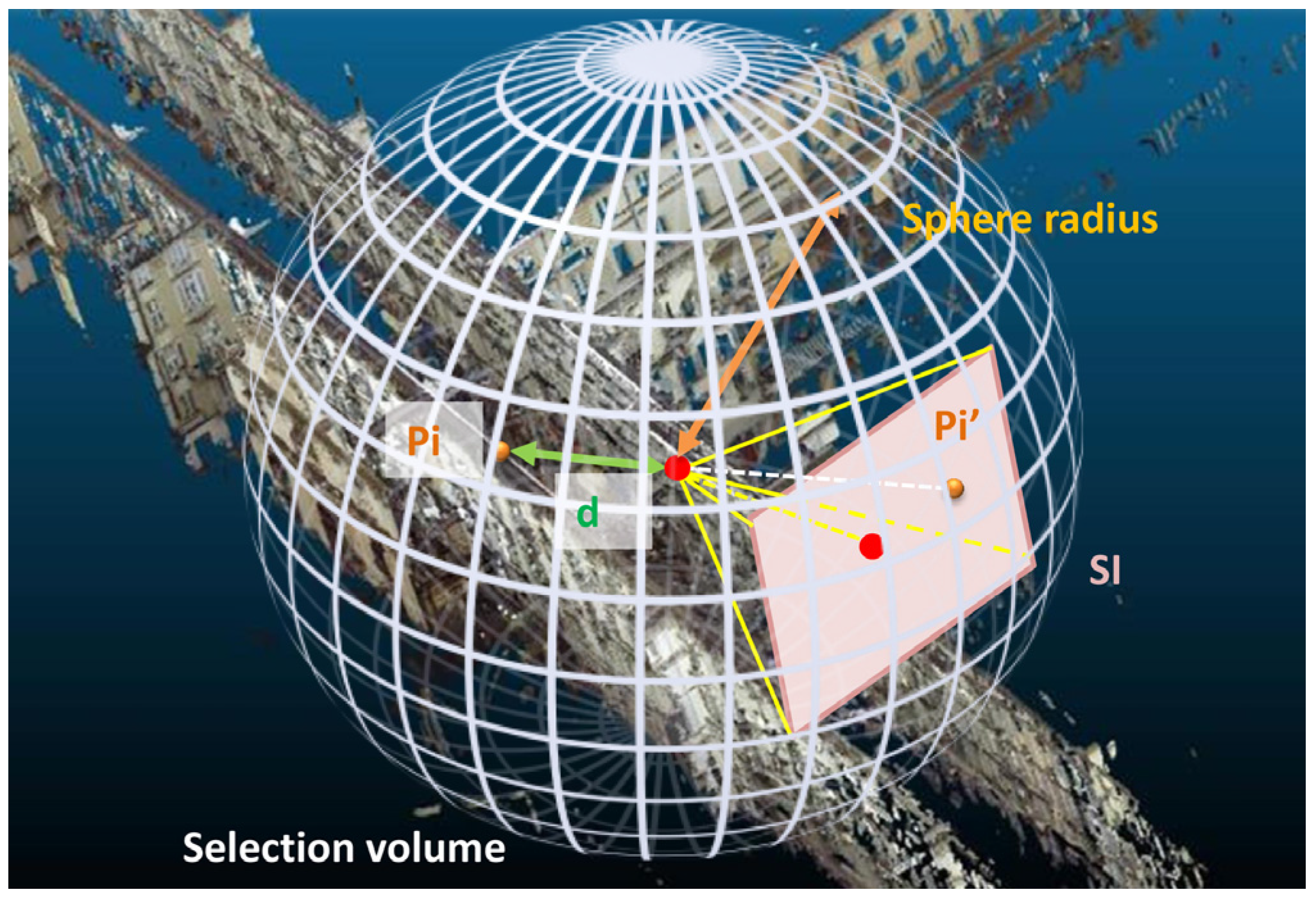

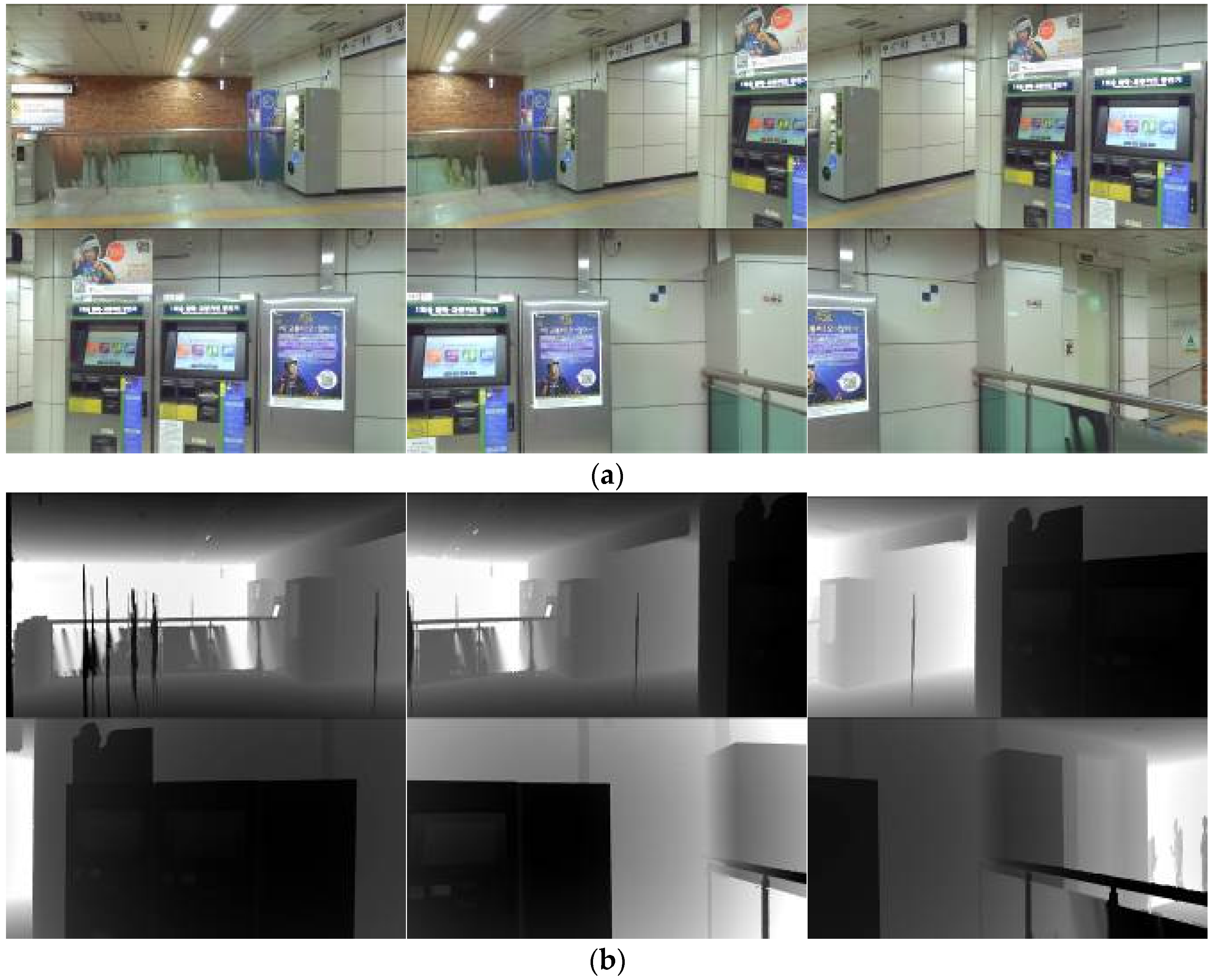

The synthetic RGB-D images can be generated automatically from the LiDAR point cloud by means of the ScanToRGBDImage software tool (developed by the Geomatics research group of the Politecnico di Torino in Intel Visual Fortran). The ScanToRGBDImage software generates a set of “synthetic” .JPG images with corresponding range images (Figure 10). For each scan position, 96 images were generated: 32 horizontal directions for each of three inclinations of 0°, 10°, and 20° with respect to the horizontal plane, with 2500 × 1600 pixels, a 3 μm pixel size, and a focal length of 4.667 mm. For the Bangbae DB, almost 9700 RGB-D images were produced in about 36 h of batch processing on a desktop computer (i7 5600 U 2.66 GHz 32 GB RAM), while for the ETRI building, 10,700 images were produced in about 40 h on a computer with the same characteristics.
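The geometry of the synthetic views can be sketched from the parameters given above. This is not the ScanToRGBDImage implementation: the even azimuth spacing (360°/32 = 11.25°) is an assumption, since the text states only the number of directions, and the function names are hypothetical. The field of view follows from the pinhole relation FOV = 2·atan(sensor extent / 2c).

```python
import math

IMAGE_WIDTH_PX, IMAGE_HEIGHT_PX = 2500, 1600
PIXEL_SIZE_MM = 0.003      # 3 micrometres
FOCAL_LENGTH_MM = 4.667


def view_directions(n_azimuths=32, inclinations_deg=(0.0, 10.0, 20.0)):
    """(azimuth, inclination) pairs in degrees; even azimuth spacing is assumed."""
    step = 360.0 / n_azimuths
    return [(i * step, inclination)
            for inclination in inclinations_deg
            for i in range(n_azimuths)]


def field_of_view_deg(n_pixels, pixel_size_mm=PIXEL_SIZE_MM,
                      focal_mm=FOCAL_LENGTH_MM):
    """Full field of view of a pinhole camera along one image axis."""
    half_extent_mm = n_pixels * pixel_size_mm / 2.0
    return math.degrees(2.0 * math.atan(half_extent_mm / focal_mm))


views = view_directions()
print(len(views), "views per scan position")
print(f"horizontal FOV: {field_of_view_deg(IMAGE_WIDTH_PX):.1f} deg")
print(f"vertical FOV:   {field_of_view_deg(IMAGE_HEIGHT_PX):.1f} deg")
```

Under these assumptions the horizontal field of view is several times wider than the azimuth step, so neighbouring synthetic images overlap heavily.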

5. Smartphone Image Acquisition for Retrieval Procedure and Definition of Ground Truth

On site, to evaluate the retrieval procedure, several pictures of the test areas were taken with commercial mobile devices: a Samsung Galaxy A5, a Galaxy S5, and a Galaxy S7 Edge were used to compare different sensors.

The devices used for the acquisitions are smartphones with an integrated non-metric camera, which requires calibration through analytical procedures to define the characteristics of the optical-digital system and to evaluate the distortion parameters and other errors. The calibration allows the evaluation of the effects of the radial and tangential distortion of the sensors, which are involved in the definition of the camera internal orientation using the collinearity equations. However, as an approximation, it is possible to consider only the effects of the radial distortion, expressed in this case by two parameters, K1 and K2.
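A two-parameter radial model can be sketched as follows. The code assumes the common polynomial form, in which coordinates relative to the principal point are scaled by 1 + K1·r² + K2·r⁴; sign and unit conventions vary between software packages, and the coefficient values below are invented for illustration rather than taken from the calibration results.

```python
def apply_radial_distortion(x, y, k1, k2):
    """Distort ideal coordinates (x, y), measured from the principal point."""
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def remove_radial_distortion(xd, yd, k1, k2, iterations=20):
    """Invert the model by fixed-point iteration (adequate for small distortion)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y


# Hypothetical coefficients and normalised image coordinates.
K1, K2 = -0.1, 0.01
xd, yd = apply_radial_distortion(0.3, 0.2, K1, K2)
x, y = remove_radial_distortion(xd, yd, K1, K2)
print(f"distorted: ({xd:.6f}, {yd:.6f})  recovered: ({x:.6f}, {y:.6f})")
```

The model has no closed-form inverse, which is why the correction is computed iteratively here.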

Knowing the object coordinates of some points acquired by the camera, it is possible to obtain the unknown parameters by solving the bundle adjustment. The unknowns are the six external orientation parameters of each image and the five parameters of the camera (ξ0, η0, c, K1, and K2). The calibration is usually performed on a purpose-made calibration grid, whose point coordinates are known with high precision. This procedure is known as the self-calibration of the camera sensor.

To include the calibration process in the IRBL procedure, the “Camera Calibrator” tool of MATLAB was tested. This tool can estimate intrinsic, extrinsic, and lens distortion parameters to remove the distortion effects and to reconstruct the 3D scene. The application requires a specific checkerboard pattern that must not be square (Figure 11). The images of the pattern must be acquired with a fixed zoom and focus. The calibration requires at least three images, but 10–20 images from different distances and orientations are suggested to obtain the best results. The tool’s data browser displays the images with the detected points. Because the checkerboard pattern is not square, a reference system can also be defined using the different numbers of squares in the two directions. The calibration algorithm assumes a pinhole camera model and, after processing, the application displays the results and the accuracies of the process.

In this work, the self-calibration was performed on the three smartphones used in the IRBL procedure, and the results are shown in Table 1.

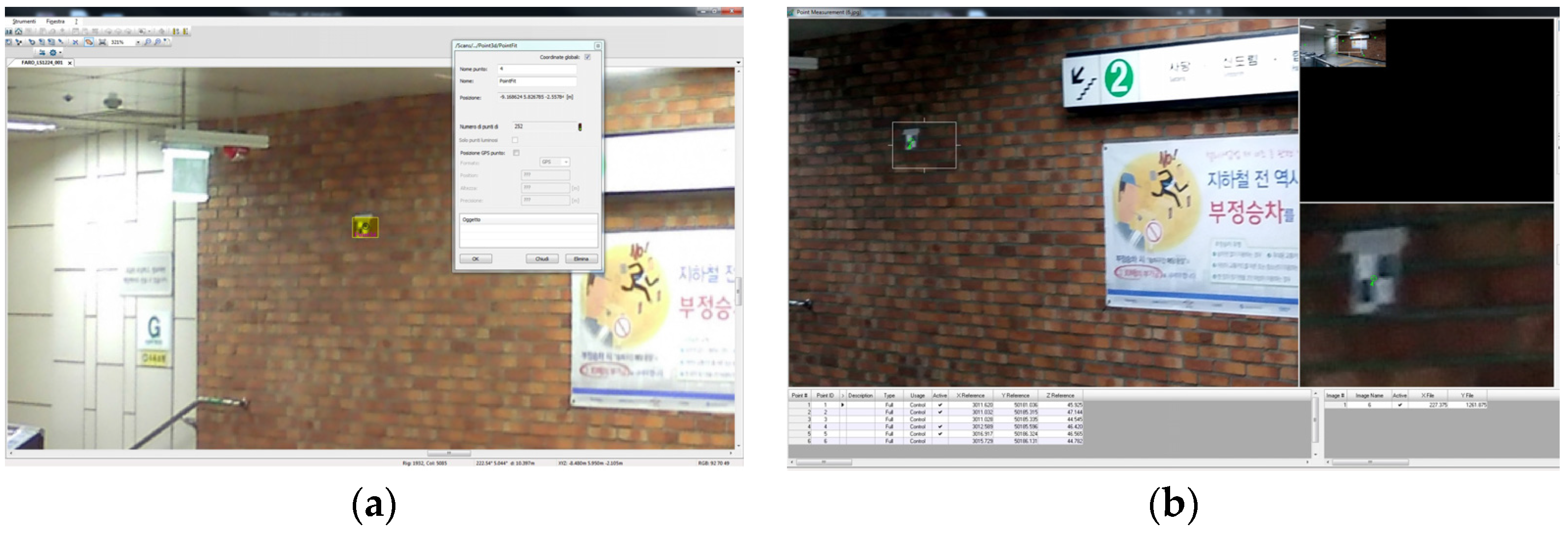

After the internal calibration, a photogrammetric process was employed to define the position and attitude of the acquired smartphone images, which were then used as “ground truth”. In the case of single-shot acquisition, it is possible to perform a single-image adjustment (or pyramid vertex), which allows us to evaluate the coordinates of the acquisition point (X0, Y0, and Z0) as well as the attitude (ω, φ, and κ). For this task, at least six collinearity equations must be written; since each GCP contributes two equations, three plano-altimetric GCPs are required. The coordinates of the GCPs were extracted directly from the previous LiDAR point clouds using Scene. First, a visible point was selected on the smartphone image. Afterwards, the same point was measured on the point cloud, and its coordinates were extracted. These coordinates were used as GCPs in the employed photogrammetric software (Figure 12). In the present research, Erdas Imagine by Hexagon Geospatial was employed for the process. To have accurate control of the results, at least six points were used as GCPs. The final precision for all the analysed images was around 5 cm for the position and around 10 mgon for the angular values. Twenty query images were used for the check at Bangbae station (10 images for each floor) and 10 images were used for the ETRI building.
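The collinearity equations that underlie the single-image adjustment can be sketched as a forward projection. The code below is an illustration, not the procedure implemented in the photogrammetric software: it assumes the rotation R = Rx(ω)·Ry(φ)·Rz(κ), which is one common photogrammetric convention among several, and all function names and numeric values are hypothetical.

```python
import math


def gon_to_rad(angle_gon: float) -> float:
    """Convert gons (400 gon = full circle) to radians."""
    return angle_gon * math.pi / 200.0


def matmul3(a, b):
    """Product of two 3 x 3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_matrix(omega, phi, kappa):
    """R = Rx(omega) * Ry(phi) * Rz(kappa), angles in radians (assumed convention)."""
    co, so = math.cos(omega), math.sin(omega)
    cp, sp = math.cos(phi), math.sin(phi)
    ck, sk = math.cos(kappa), math.sin(kappa)
    rx = [[1, 0, 0], [0, co, -so], [0, so, co]]
    ry = [[cp, 0, sp], [0, 1, 0], [-sp, 0, cp]]
    rz = [[ck, -sk, 0], [sk, ck, 0], [0, 0, 1]]
    return matmul3(matmul3(rx, ry), rz)


def collinearity(point, centre, angles, c, xi0=0.0, eta0=0.0):
    """Image coordinates (same unit as c) of an object point."""
    r = rotation_matrix(*angles)
    d = [p - q for p, q in zip(point, centre)]
    # Object-space vector expressed in the camera frame (R transposed times d).
    u, v, w = (sum(r[i][j] * d[i] for i in range(3)) for j in range(3))
    return xi0 - c * u / w, eta0 - c * v / w


def redundancy(n_gcps: int, n_unknowns: int = 6) -> int:
    """Each GCP gives two equations; six exterior orientation unknowns."""
    return 2 * n_gcps - n_unknowns


# Made-up geometry: camera 10 m above a point, rotated by 100 gon about its axis.
xi, eta = collinearity(point=(1.0, 2.0, 0.0), centre=(0.0, 0.0, 10.0),
                       angles=(0.0, 0.0, gon_to_rad(100.0)), c=4.667)
print(f"xi = {xi:.4f} mm, eta = {eta:.4f} mm")
print("redundancy with 3 GCPs:", redundancy(3), "and with 6 GCPs:", redundancy(6))
```

With three GCPs the system is exactly determined, so there is no check on the solution; using six, as in the text, leaves six redundant equations from which residuals and precision can be estimated.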

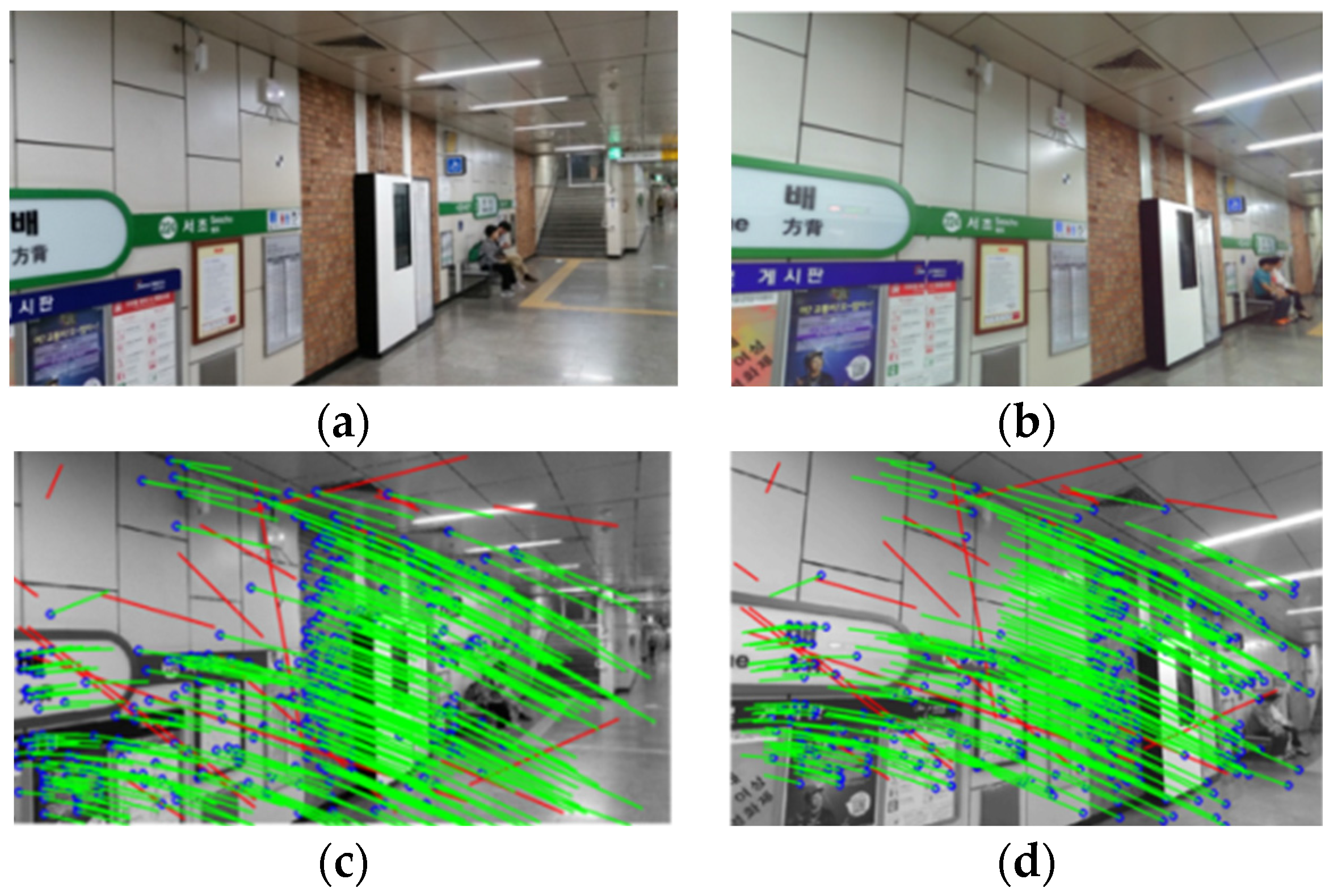

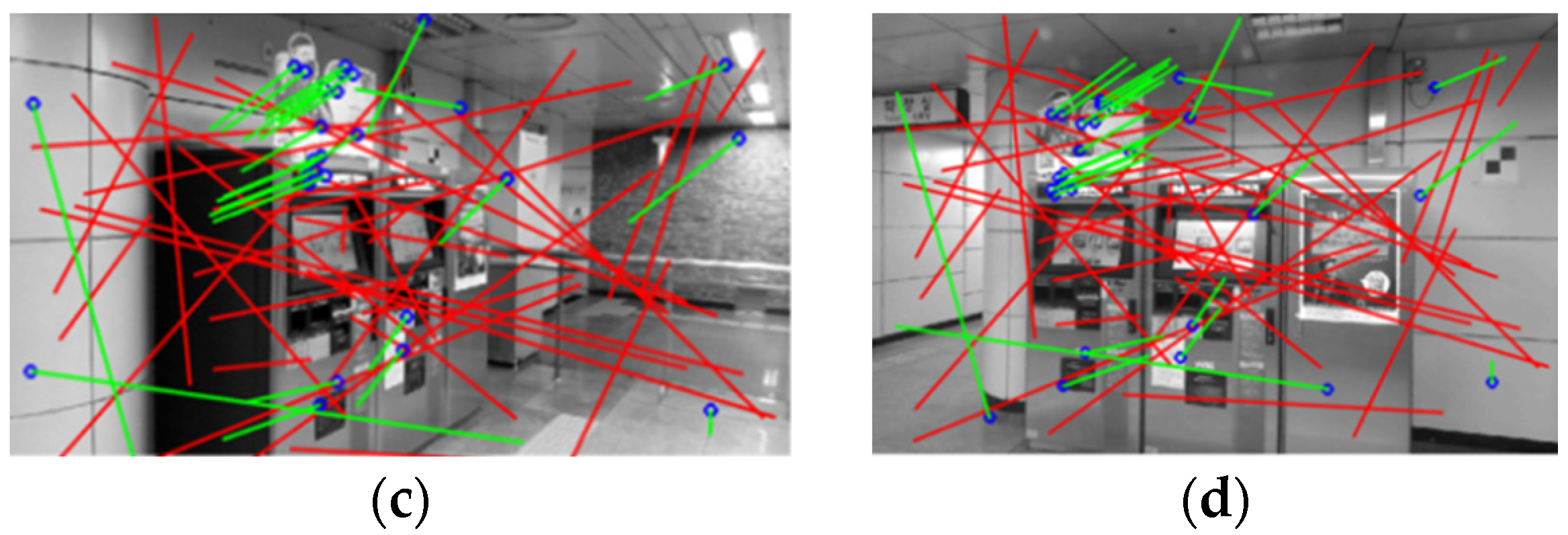

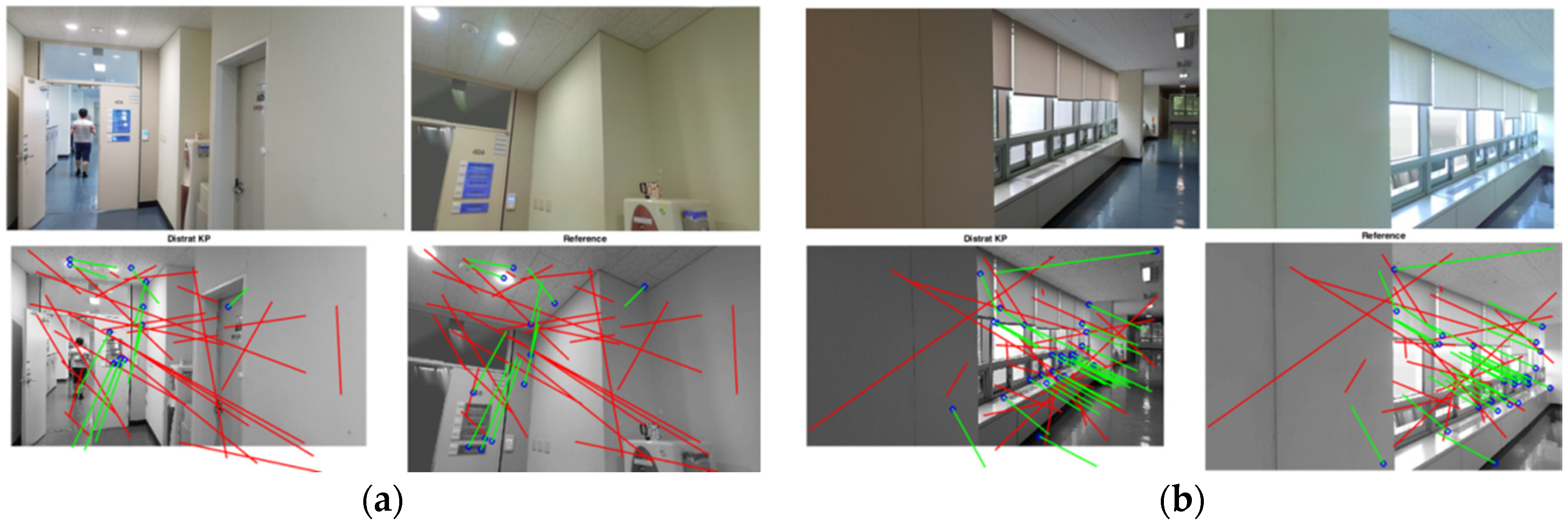

As stated in Section 3.2, the visual search technology allows us to retrieve the best reference images from the RGB-D image database and rank them with a priority score. This procedure was applied to the selected query images for both test sites, and the results of the extraction are shown in Table 2 for the Bangbae metro station and in Table 3 for the ETRI building. These tables report the scores of the first-ranked image selected by the CDVS server, which is the best of the three candidates proposed by CDVS. As shown in Table 2, the score is always greater than 3, indicating quite good solutions. In most cases, the score is greater than 5, indicating a good solution. The time for the query retrieval process is estimated at about three seconds. At the second test site, 10 check images were acquired with the Samsung S7 smartphone. The scores of the reference images extracted using CDVS are greater than 3, indicating quite good solutions, with the exception of Image No. 2 (score = 2.54), which was ignored since the resulting IRBL solution was incorrect.
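The way the scores are interpreted can be summarised in a short sketch. The thresholds of 3 and 5 come from the text; treating every score of 3 or less as rejected is an assumption generalised from the single discarded image, and the candidate list, image names, and function names are hypothetical (they do not reflect the CDVS server interface).

```python
def classify_score(score: float) -> str:
    """Label a CDVS retrieval score using the thresholds quoted in the text."""
    if score > 5:
        return "good"
    if score > 3:
        return "quite good"
    return "rejected"  # assumed handling of low scores


def best_candidate(candidates):
    """Pick the highest-scoring (image_id, score) pair among the candidates."""
    return max(candidates, key=lambda candidate: candidate[1])


# Three made-up candidates for one query image.
candidates = [("ref_0412.jpg", 3.2), ("ref_0413.jpg", 5.6), ("ref_0519.jpg", 4.1)]
image_id, score = best_candidate(candidates)
print(image_id, score, classify_score(score))
print("score 2.54:", classify_score(2.54))
```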

7. Conclusions and Future Works

The procedure reported in this article has been well tested and demonstrates that the first part of the proposed workflow can be performed successfully for the area that needs to be surveyed. The timeframe depends on the acquisition and processing of the LiDAR data. New approaches, such as Kinect, SLAM instruments, ToF cameras, or photogrammetric SfM techniques, are under study to speed up the survey operations. The developed software is now stable and works efficiently with large datasets as well. A new version in C++ is under development to reduce the computation time for the generation of the RGB-D images. The resolution of the generated images depends on the LiDAR model and especially on the on-board camera used to acquire the RGB information after the scans. Compared to other 3D acquisition devices, this is the best solution. The developed CDVS procedure is efficient and delivers excellent results very quickly during the retrieval process.

The approach of the IRBL procedure is new. The most important improvement is the use of the DISTRAT algorithm combined with RANSAC, which speeds up the process 100 times compared to the use of RANSAC alone. The use of a more controlled photogrammetric approach allows us to evaluate the real accuracy of the positioning, as seen in the reported results. According to the accuracy evaluated in the previous sections, this approach can obtain correct indoor positioning from smartphone images with sub-metre accuracy for the position and a few gons for the attitude. The IRBL can obtain a correct solution in complex conditions (noise due to people, narrow corridors, artificial light, and other environmental problems).

Since the IRBL procedure is still under testing, the available application has only been developed in MATLAB and needs to be reimplemented in other programming languages to obtain a product with higher performance in terms of usability and speed. The research is still in progress. First, an integration of the survey operations that connects photogrammetric data and LiDAR data is under evaluation. Moreover, a Kinect, ToF cameras, and SLAM instruments are good options for future work. Some first results using the Kinect are promising, and a more accurate analysis of the results is under way. Furthermore, the next step of the joint project (Politecnico and ETRI) will be the realisation of a server using the CDVS technology to allow the retrieval to be executed over the web.

Finally, the IRBL algorithm will be improved with the development of an application programming interface (API) that will allow the information needed for indoor positioning to be extracted and the results to be delivered directly to the smartphone.