Hybrid 3D Rendering of Large Map Data for Crisis Management

Abstract

1. Introduction

2. Background

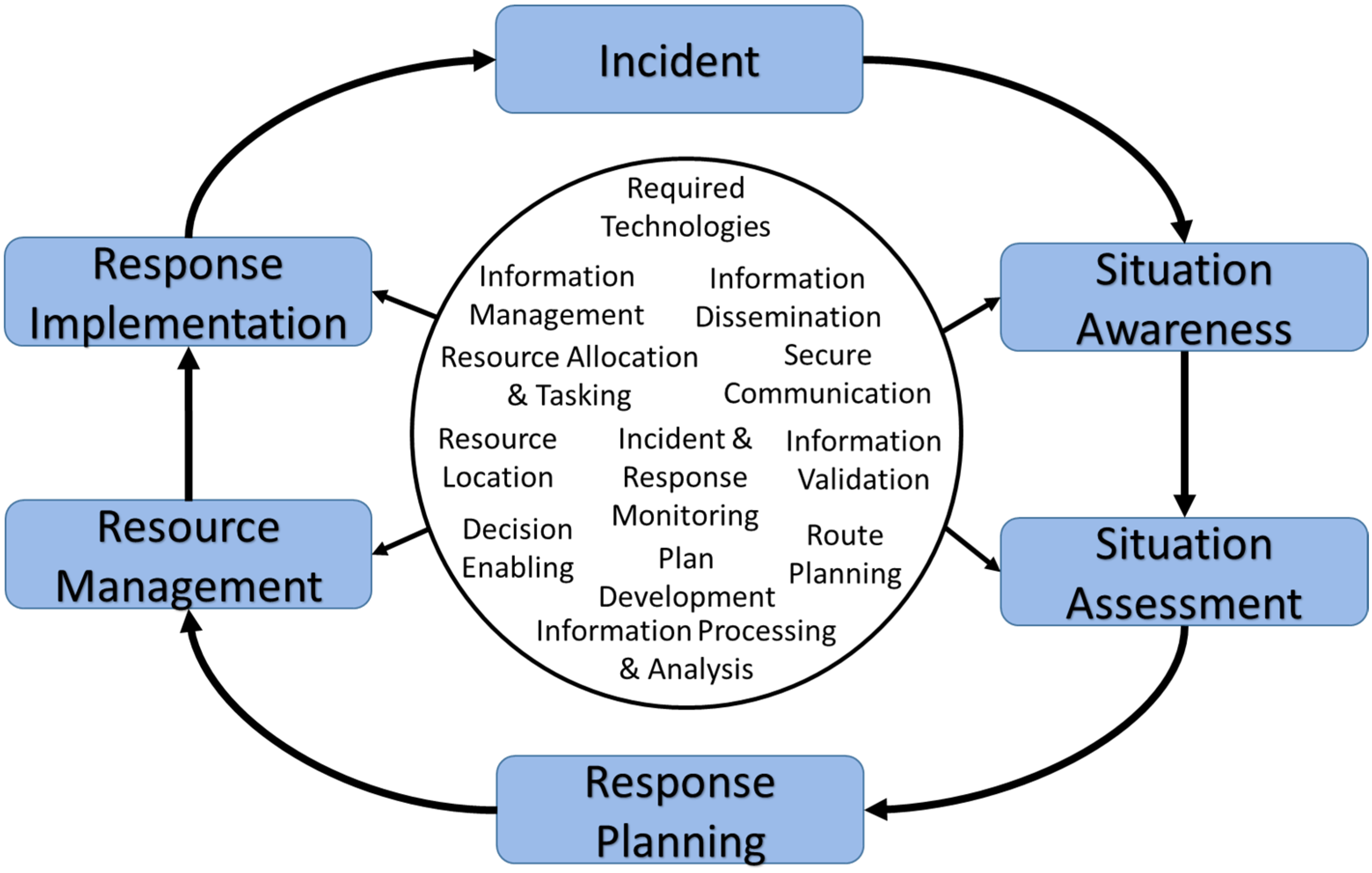

2.1. Crisis Management

2.2. Related Work

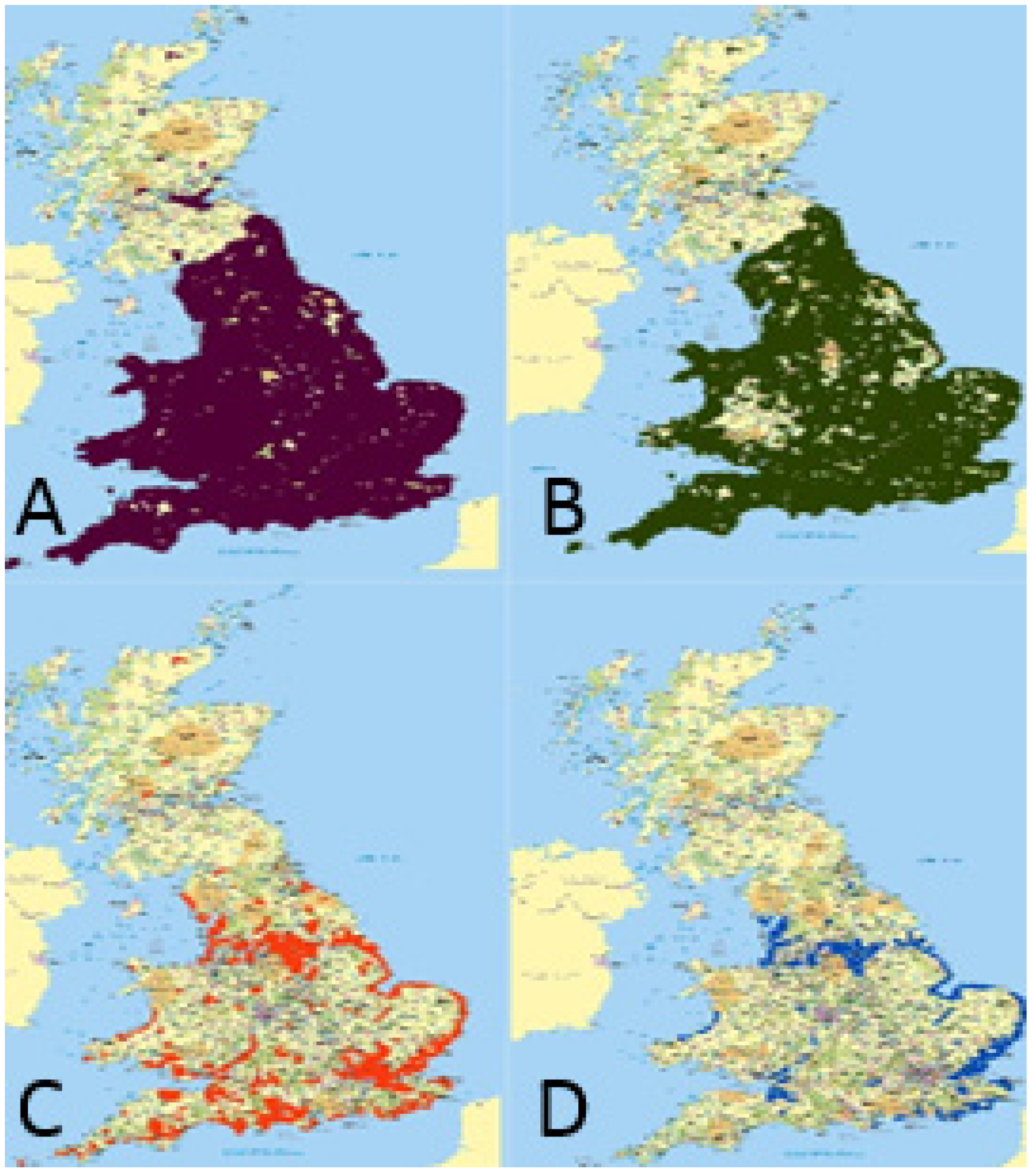

2.3. Digital Map Open Data

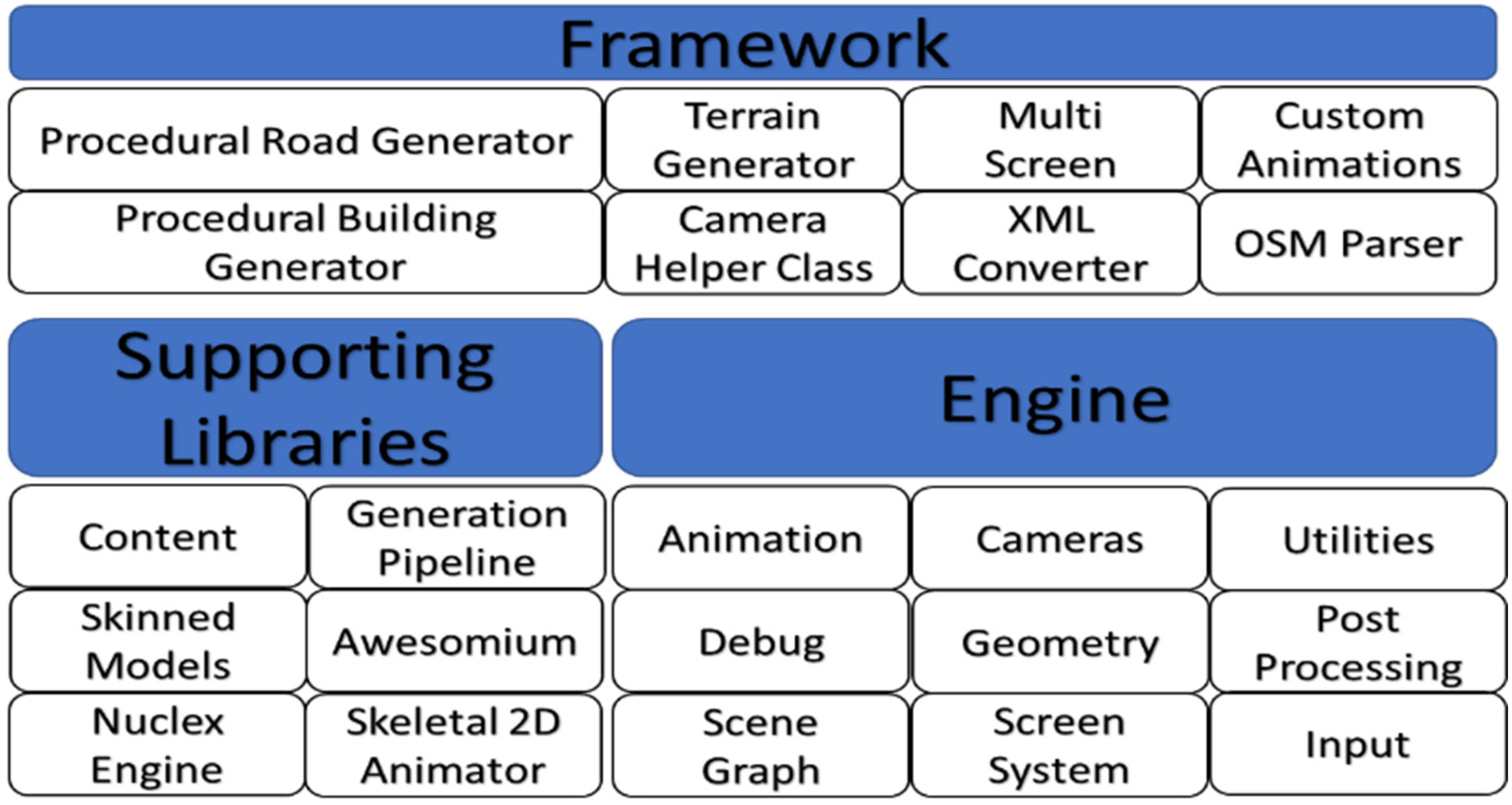

3. Visualisation Framework

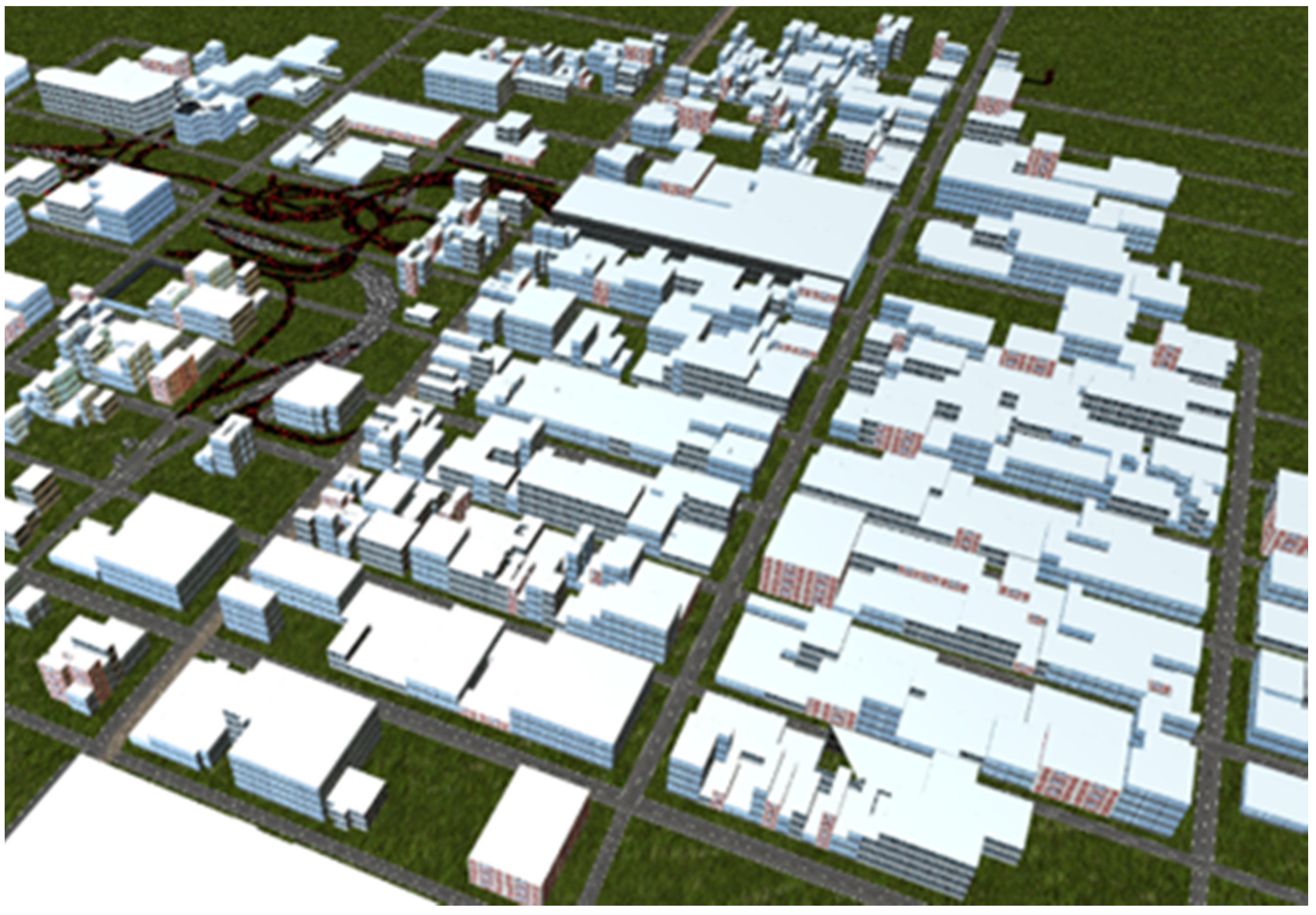

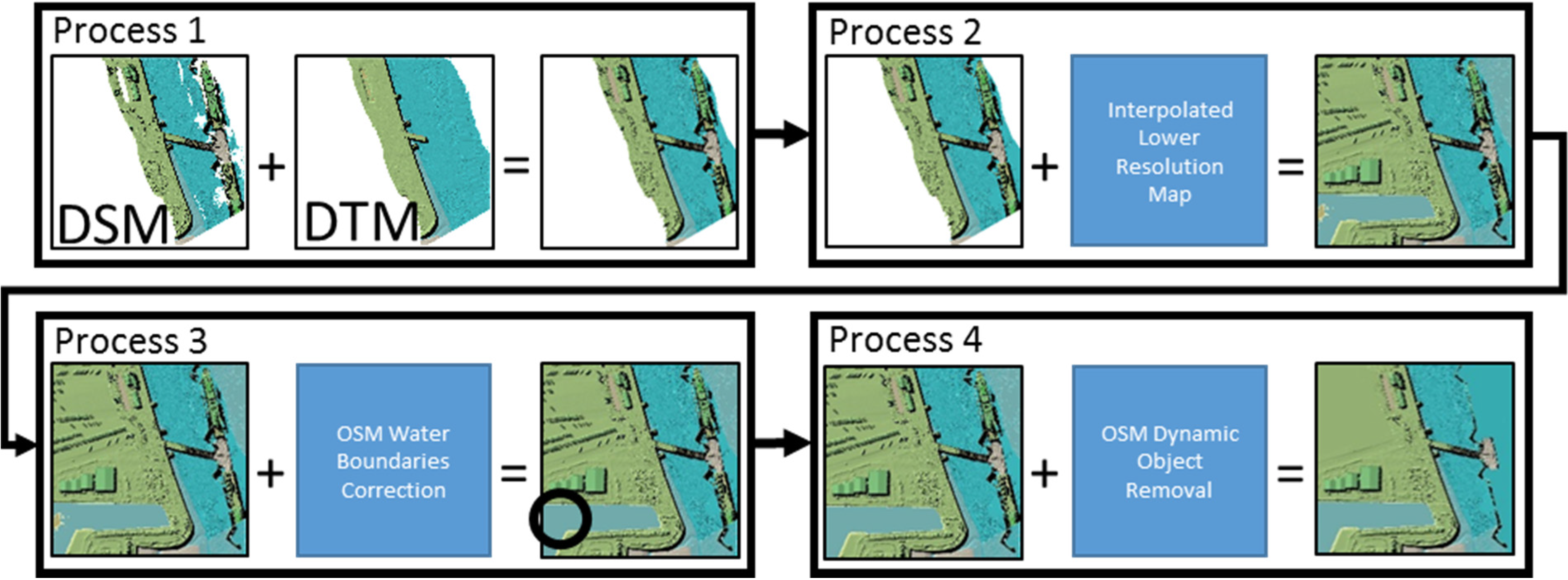

3.1. Map Data Generator

| LiDAR Map (cell size) | Area | Points Contained |
|---|---|---|
| 2 m | 1 km² | 500² = 250,000 |
| 1 m | 1 km² | 1000² = 1,000,000 |
| 0.5 m | 500 m × 500 m | 1000² = 1,000,000 |
| 0.25 m | 500 m × 500 m | 2000² = 4,000,000 |
| Proposed 0.25 m | 1 km² | 4000² = 16,000,000 |
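The point counts in the table above follow from dividing the tile edge length by the cell size and squaring the result. A minimal sketch of that arithmetic, assuming square tiles and reading the smaller tiles as 500 m on a side (the function names are hypothetical and not taken from the paper):

```python
def points_per_side(side_m: float, cell_m: float) -> int:
    """Number of LiDAR samples along one edge of a square tile."""
    return round(side_m / cell_m)


def points_in_tile(side_m: float, cell_m: float) -> int:
    """Total samples in a square tile: (edge length / cell size) squared."""
    n = points_per_side(side_m, cell_m)
    return n * n


# (edge length in metres, cell size in metres), one entry per table row.
# The 500 m edge is an assumption inferred from the listed point counts.
TILES = [(1000, 2.0), (1000, 1.0), (500, 0.5), (500, 0.25), (1000, 0.25)]

if __name__ == "__main__":
    for side_m, cell_m in TILES:
        n = points_per_side(side_m, cell_m)
        print(f"{cell_m} m cells, {side_m} m edge: {n}^2 = {points_in_tile(side_m, cell_m):,}")
```

Under these assumptions the proposed 0.25 m map over 1 km² holds four times as many points as a 0.25 m tile with a 500 m edge, which is the jump from 4,000,000 to 16,000,000 in the table.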

| Process | Time |
|---|---|
| Serialisation of OSM map file | 5977 milliseconds |
| Converting node longitude and latitude to X, Y, Z position | 3 minutes, 38 seconds, 287 milliseconds |
| Extracting and generating 3D building meshes | 1 minute, 48 seconds, 228 milliseconds |

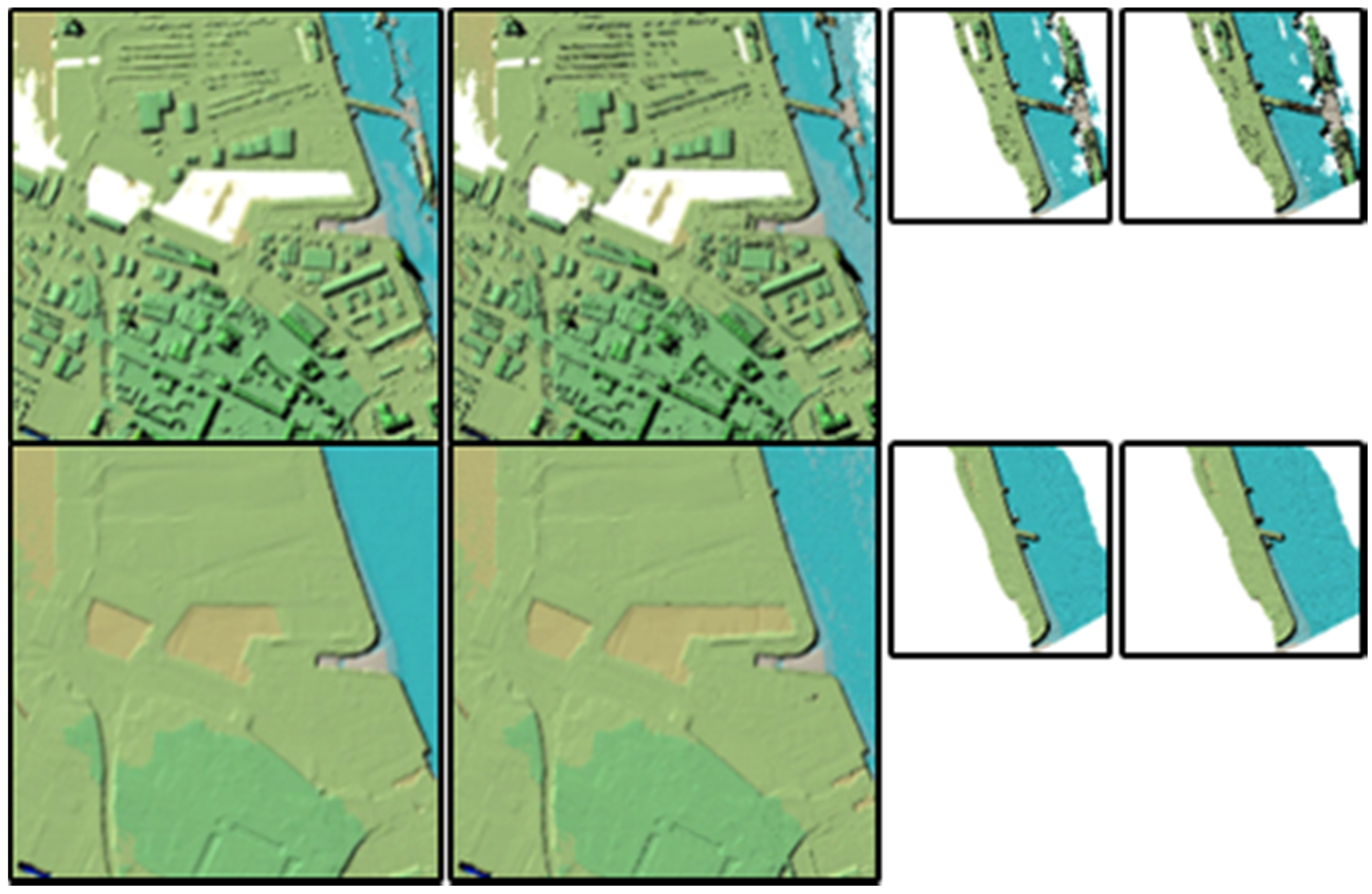

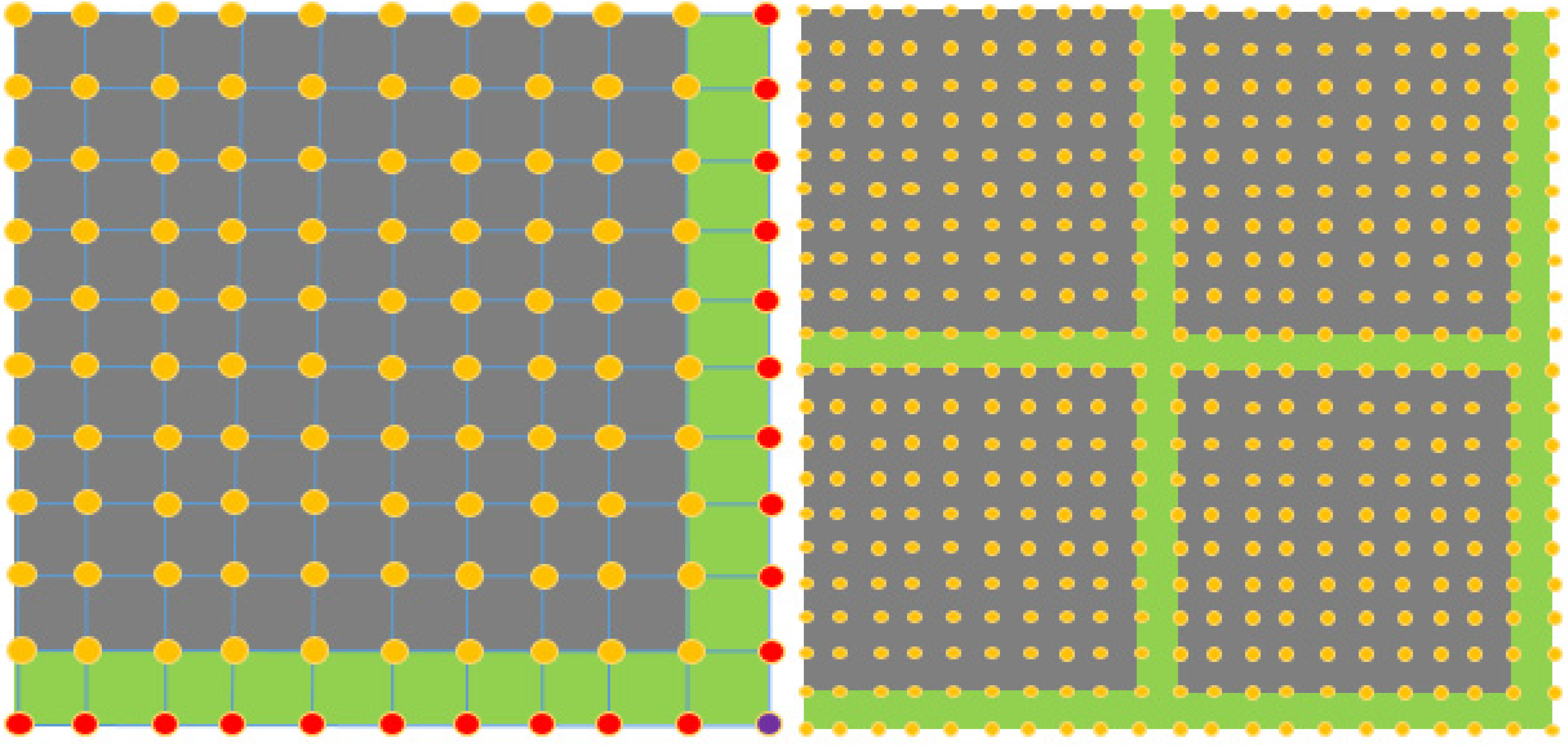

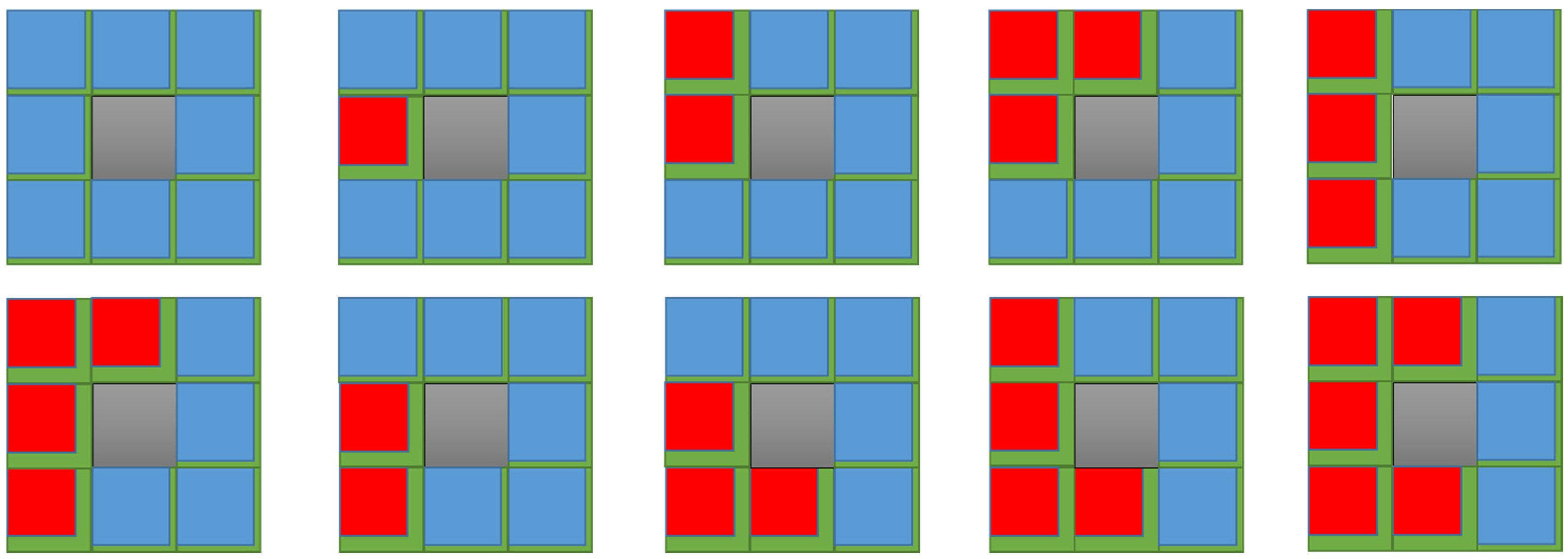

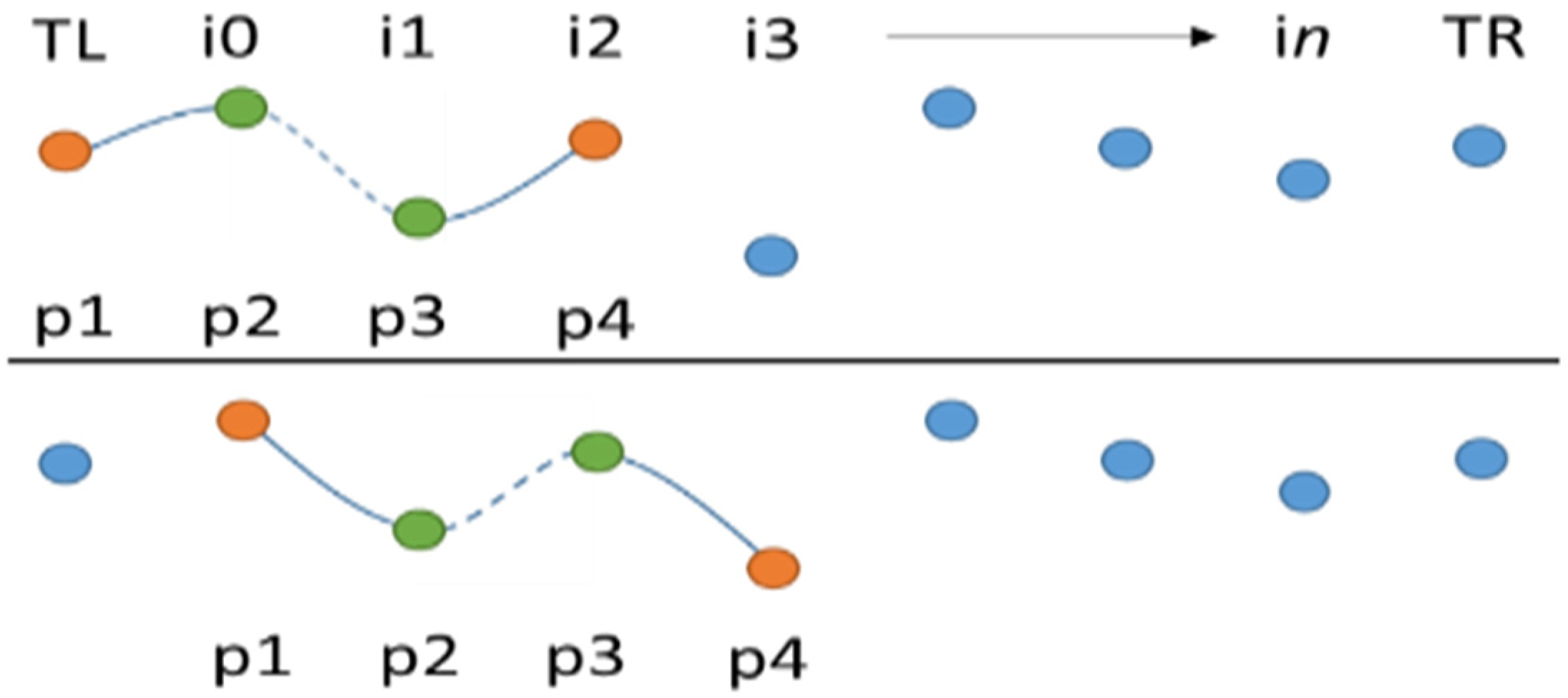

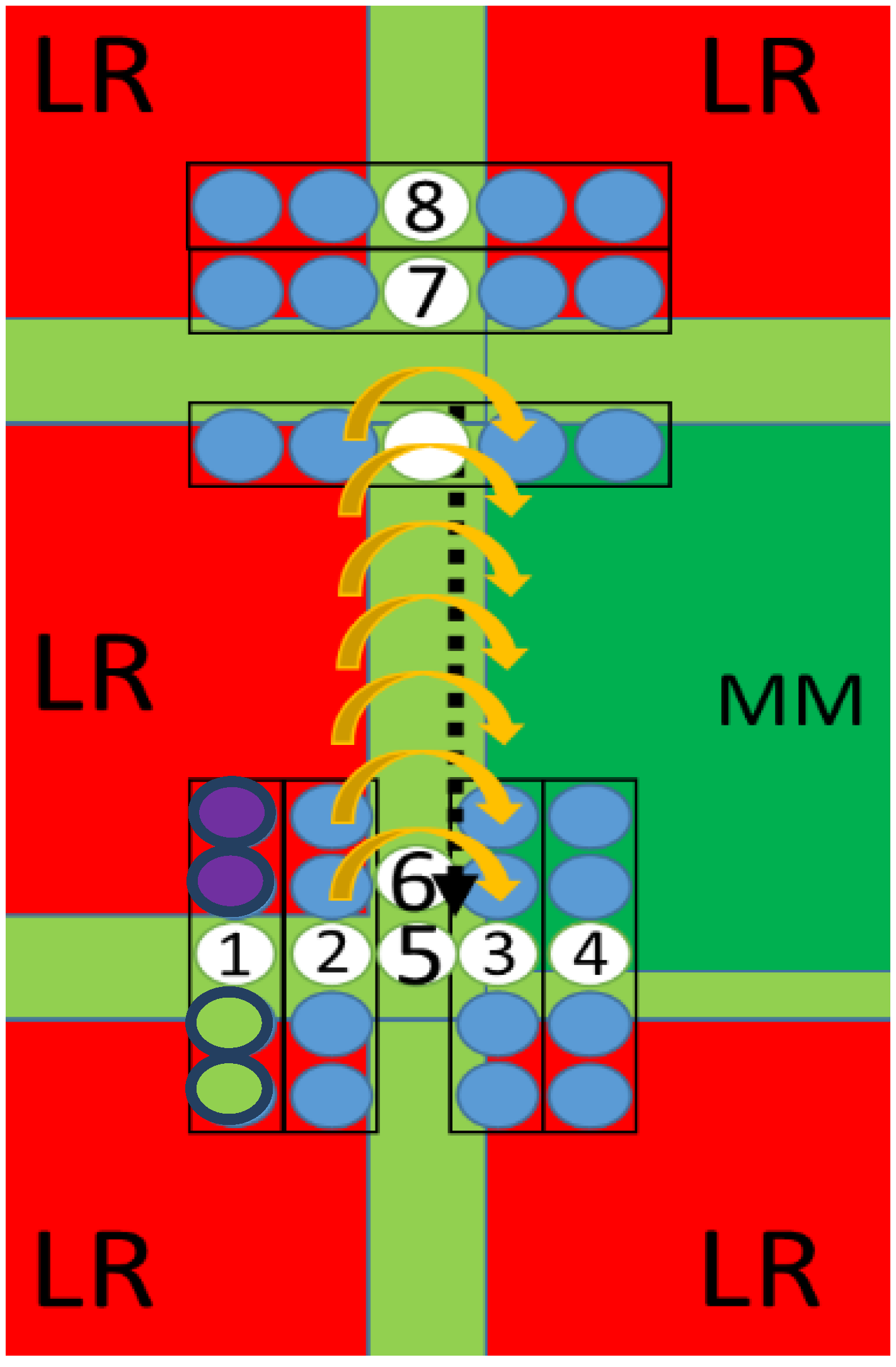

3.2. High Resolution Map Generation

| Maps with Missing Neighbours to the: | Count |
|---|---|
| Left | 1300 |
| Right | 1300 |
| Top | 1100 |
| Bottom | 1100 |
| Top-left only | 5 |
| Top-right only | 4 |
| Bottom-left only | 2 |
| Bottom-right only | 3 |
| Top-left, left, bottom-left, bottom, bottom-right | 3 |
| Top-right, right, bottom-right, bottom, bottom-left | 4 |
| Bottom-left, left, top-left, top, top-right | 6 |
| Bottom-right, right, top-right, top, top-left | 5 |
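The categories in the table above can be reproduced by checking each tile's eight surrounding grid positions against the set of tiles that exist. The following is a sketch only: it assumes tiles are addressed by integer `(column, row)` indices with rows increasing towards the top, and the names `NEIGHBOUR_OFFSETS` and `missing_neighbours` are hypothetical rather than taken from the paper.

```python
# Offsets (d_col, d_row) for the eight neighbours of a tile.
# Assumption: row index increases towards the top of the map.
NEIGHBOUR_OFFSETS = {
    "top-left": (-1, 1),
    "top": (0, 1),
    "top-right": (1, 1),
    "left": (-1, 0),
    "right": (1, 0),
    "bottom-left": (-1, -1),
    "bottom": (0, -1),
    "bottom-right": (1, -1),
}


def missing_neighbours(tile, available):
    """Return the names of the neighbours of `tile` that are absent from `available`."""
    col, row = tile
    return {
        name
        for name, (d_col, d_row) in NEIGHBOUR_OFFSETS.items()
        if (col + d_col, row + d_row) not in available
    }


if __name__ == "__main__":
    # A complete 3 x 3 block of tiles, used purely for illustration.
    grid = {(c, r) for c in range(3) for r in range(3)}
    print(sorted(missing_neighbours((0, 0), grid)))  # tile in the bottom-left corner
    print(sorted(missing_neighbours((1, 1), grid)))  # fully surrounded tile
```

In this illustration the tile in the bottom-left corner of the block lacks its top-left, left, bottom-left, bottom, and bottom-right neighbours, which corresponds to one of the five-neighbour rows in the table.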

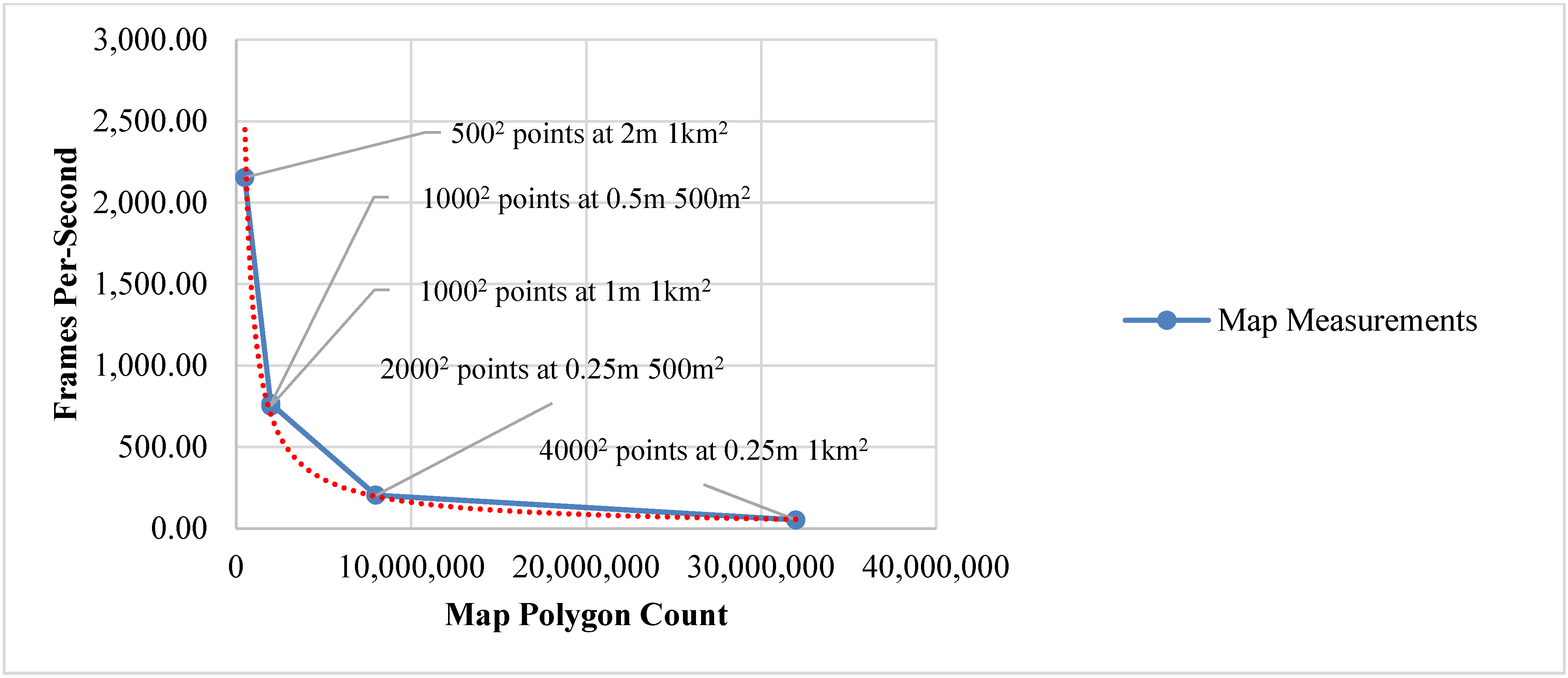

4. Evaluation

| Map Dimensions (points, cell size, area) | Index Count | Vertex Count | Polygon Count | FPS | Draw (ms) | GPU (ms) | Vertex Buffer Count |
|---|---|---|---|---|---|---|---|
| 500² points at 2 m, 1 km² | 1,494,006 | 250,000 | 498,002 | 2,153.98 | 0.08 | 0.33 | 1 |
| 1000² points at 1 m, 1 km² | 5,988,006 | 1,000,000 | 1,996,002 | 751.15 | 0.18 | 0.24 | 1 |
| 1000² points at 0.5 m, 500 m × 500 m | 5,988,006 | 1,000,000 | 1,996,002 | 764.99 | 0.08 | 1.76 | 1 |
| 2000² points at 0.25 m, 500 m × 500 m | 23,976,006 | 4,000,000 | 7,992,002 | 203.73 | 0.17 | 4.56 | 1 |
| 4000² points at 0.25 m, 1 km² | 95,952,006 | 16,000,000 | 31,984,002 | 51.66 | 0.23 | 20.38 | 1 |
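The index, vertex, and polygon counts in the table above are consistent with a regular n × n height grid drawn as an indexed triangle list: n² vertices, two triangles per grid cell, and three indices per triangle. This is an inference from the numbers rather than a statement of the authors' implementation, and the function name below is hypothetical.

```python
def grid_mesh_counts(n: int) -> dict:
    """Counts for an n x n vertex grid drawn as an indexed triangle list."""
    cells = (n - 1) ** 2          # quads between neighbouring vertices
    return {
        "vertices": n * n,
        "polygons": 2 * cells,    # two triangles per quad
        "indices": 6 * cells,     # three indices per triangle
    }


if __name__ == "__main__":
    for n in (500, 1000, 2000, 4000):
        counts = grid_mesh_counts(n)
        print(
            f"{n}^2 grid: {counts['indices']:,} indices, "
            f"{counts['vertices']:,} vertices, {counts['polygons']:,} polygons"
        )
```

For example, n = 500 gives 499² = 249,001 cells, hence 498,002 polygons and 1,494,006 indices, matching the first row.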

| Map Dimensions (buffers × points, cell size, area) | Index Count | Vertex Count | Polygon Count | FPS | Draw (ms) | GPU (ms) | Vertex Buffer Count |
|---|---|---|---|---|---|---|---|
| 1 × 2000² points at 0.25 m, 500 m × 500 m | 23,976,006 | 4,000,000 | 7,992,002 | 203.73 | 0.17 | 4.56 | 1 |
| 2 × 2000² points at 0.25 m, 500 m × 500 m | 47,952,012 | 8,000,000 | 15,984,004 | 103.41 | 0.18 | 9.52 | 2 |
| 4 × 2000² points at 0.25 m, 500 m × 500 m | 95,904,024 | 16,000,000 | 31,968,008 | 52.04 | 0.19 | 18.76 | 4 |
| 8 × 2000² points at 0.25 m, 500 m × 500 m | 191,808,048 | 32,000,000 | 63,936,016 | 11.75 | 0.26 | 84.64 | 8 |
| 1 × 4000² points at 0.25 m, 1 km² | 95,952,006 | 16,000,000 | 31,984,002 | 51.66 | 0.17 | 18.51 | 1 |
| 2 × 4000² points at 0.25 m, 1 km² | 191,904,012 | 32,000,000 | 63,968,004 | 12.81 | 0.26 | 71.92 | 2 |
| 4 × 4000² points at 0.25 m, 1 km² | 383,808,024 | 64,000,000 | 127,936,008 | 4.68 | 0.27 | 211.99 | 4 |
| 8 × 4000² points at 0.25 m, 1 km² | 767,616,048 | 128,000,000 | 255,872,016 | X | XX | X | 8 |
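The multi-buffer rows above scale the single-tile counts linearly with the number of vertex buffers, and the reported FPS can be converted to a per-frame time for comparison with the GPU column. A minimal sketch, assuming each buffer holds one n × n grid drawn as an indexed triangle list (an inference from the table; the function names are hypothetical):

```python
def total_counts(n: int, buffers: int) -> dict:
    """Totals for `buffers` vertex buffers, each holding an n x n grid mesh."""
    cells = (n - 1) ** 2
    return {
        "vertices": buffers * n * n,
        "polygons": buffers * 2 * cells,
        "indices": buffers * 6 * cells,
    }


def frame_time_ms(fps: float) -> float:
    """Average time per frame in milliseconds for a given frame rate."""
    return 1000.0 / fps


if __name__ == "__main__":
    print(total_counts(2000, 8))
    print(total_counts(4000, 8))
    # FPS values copied from the table; compare with the GPU (ms) column.
    for fps in (203.73, 103.41, 52.04, 11.75):
        print(f"{fps} FPS is about {frame_time_ms(fps):.2f} ms per frame")
```

Converting 11.75 FPS gives roughly 85 ms per frame, close to the 84.64 ms GPU time reported for eight 2000² buffers, which suggests those runs are limited by GPU time rather than draw-call time.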

5. Conclusion

6. Future Work

Acknowledgments

Author Contributions

Conflicts of Interest


© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).


Tully, D.; Rhalibi, A.E.; Carter, C.; Sudirman, S. Hybrid 3D Rendering of Large Map Data for Crisis Management. ISPRS Int. J. Geo-Inf. 2015, 4, 1033-1054. https://doi.org/10.3390/ijgi4031033
