Cognitive Themes Emerging from Air Photo Interpretation Texts Published to 1960

Abstract

1. Introduction

1.1. Expertise

1.2. Content Analysis

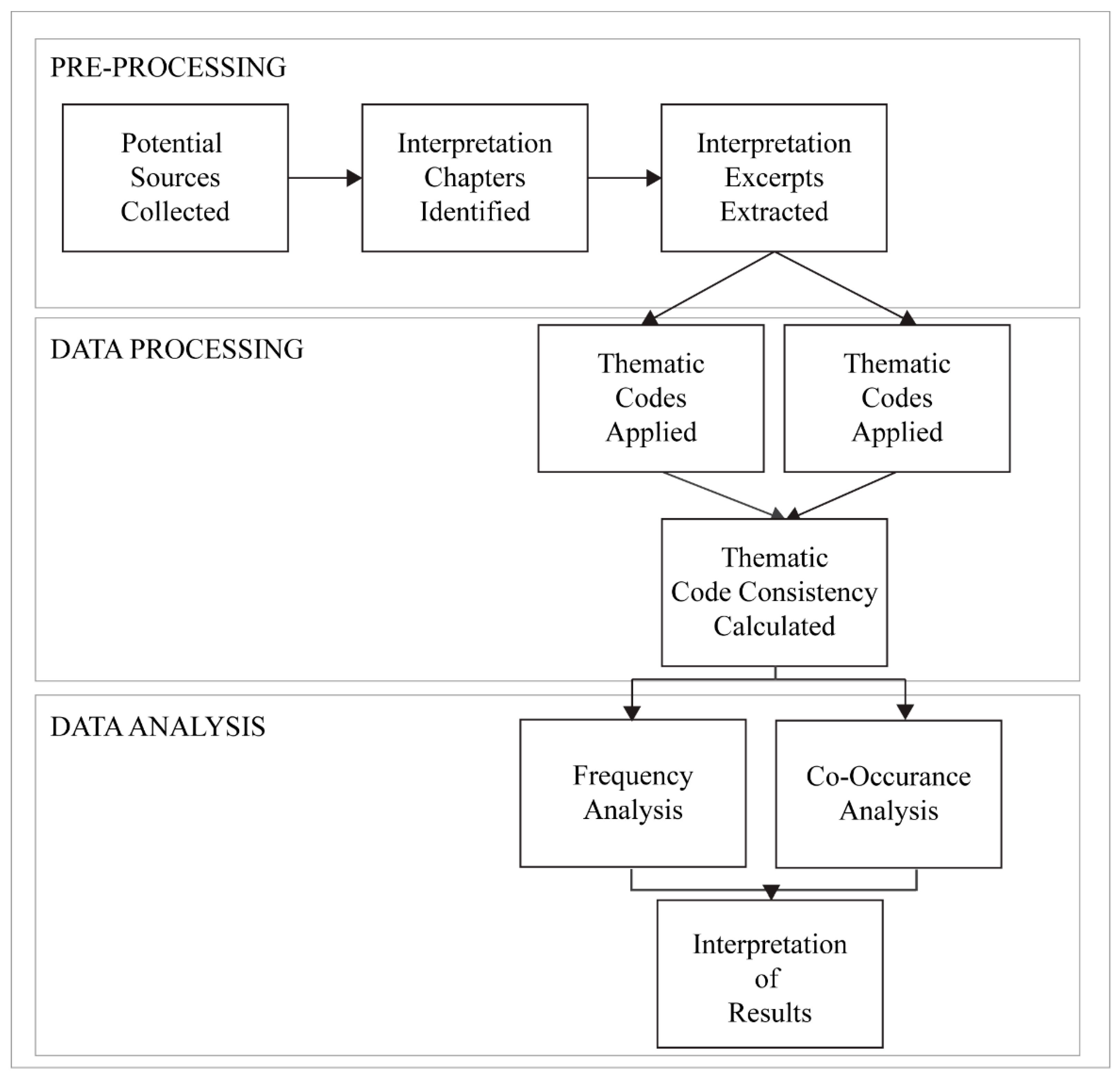

2. Methods

2.1. Code Structure

2.1.1. Interpretation Elements

| Code | Definition |
|---|---|
| Association | Association is the relationship between objects that helps confirm their presence. |
| Color | Color is a property of an object determined by the wavelength of the light that it reflects. |
| Height | The height of a feature is the vertical distance from an object's topmost point to the ground. |
| Pattern | Pattern is the repetition of a feature characteristic, dependent upon the scale and resolution of the image. |
| Resolution | Resolution is the ability to resolve features on the landscape. Today it is typically discussed as pixel size, but it also covers boundary contrast, distance, and edge gradients. |
| Shadow | A shadow is an absence of light caused by an object blocking it. |
| Shape | The shape of a feature is a combination of the geometric properties of an object. |
| Site | The location-specific features in an image that provide information unique to the place. |
| Size | The size of a feature here is its two-dimensional length or width. Size is kept separate from height because the two are frequently discussed separately in the texts. |
| Texture | Texture is the appearance of smoothness or roughness caused by variation in the tonal values of an image. |
| Tone | The grayscale value, which depends upon the reflection of light from the surface of a feature. |

2.1.2. Knowledge

| Code | Definition |
|---|---|
| Procedural Knowledge | The knowledge of how to perform interpretation, including knowledge of both the tools and the process of analysis. |
| Conceptual Knowledge | The knowledge of facts and concepts used in the interpretation process, especially knowledge from a particular scientific domain. |
| Experiential Knowledge | Knowledge gained through experience in photo interpretation or in field-based data collection. |

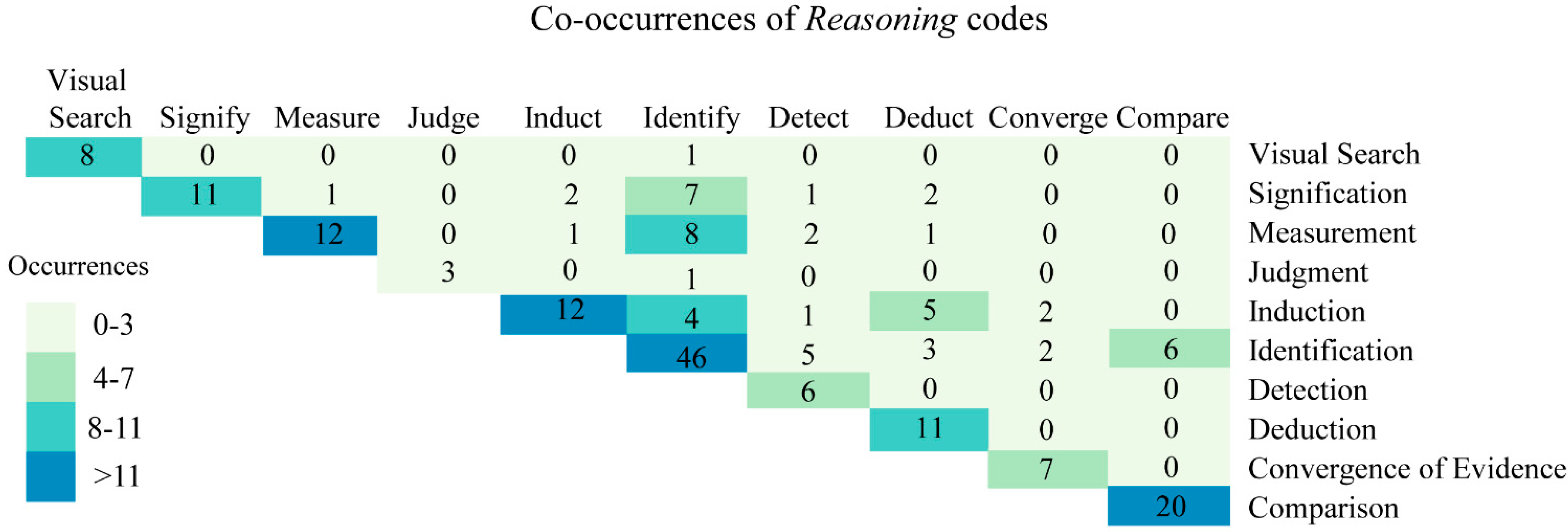

2.1.3. Reasoning Skills

2.2. Coding Process

| Subcategory | Code | Definition |
|---|---|---|
| Logical Reasoning | Induction | Evidence is used to support a probable conclusion. |
| | Deduction | A necessarily true conclusion is reached based on determination of a set of verifiable truths. |
| | Convergent Evidence | A probable conclusion is reached upon the convergence of results from inductions from multiple sources of information. |
| Interpretation Tasks | Search | The process of visually scanning an image. |
| | Detection | The process of noticing an image feature. |
| | Identification | The process of recognizing an image feature. |
| | Comparison | The process of comparing two sources of information (image features, multiple images, or other types). |
| | Judgment | The process of determining a characteristic of an image feature. |
| | Measurement | The process of measuring the relative size of an image feature. |
| | Signification | The process of judging the importance or utility of an image feature to solving an analytical problem. |
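The three code tables can be read as one small hierarchy: category, optional subcategory, code. The sketch below is one hedged way to hold that hierarchy in Python so a code name can be resolved to its category. The variable and function names (`CODE_INDEX`, `build_index`) are illustrative assumptions, not part of the study's tooling, and the grouping of reasoning codes under their subcategories follows the merged cells of the table as read here.

```python
from typing import Dict, List, Optional, Tuple

# Code names copied from the tables; structure and names are illustrative only.
INTERPRETATION_ELEMENTS: List[str] = [
    "Association", "Color", "Height", "Pattern", "Resolution", "Shadow",
    "Shape", "Site", "Size", "Texture", "Tone",
]
KNOWLEDGE: List[str] = [
    "Procedural Knowledge", "Conceptual Knowledge", "Experiential Knowledge",
]
REASONING_SKILLS: Dict[str, List[str]] = {
    "Logical Reasoning": ["Induction", "Deduction", "Convergent Evidence"],
    "Interpretation Tasks": [
        "Search", "Detection", "Identification", "Comparison",
        "Judgment", "Measurement", "Signification",
    ],
}


def build_index() -> Dict[str, Tuple[str, Optional[str]]]:
    """Map each code name to (category, subcategory or None)."""
    index: Dict[str, Tuple[str, Optional[str]]] = {}
    for name in INTERPRETATION_ELEMENTS:
        index[name] = ("Interpretation Elements", None)
    for name in KNOWLEDGE:
        index[name] = ("Knowledge", None)
    for subcategory, names in REASONING_SKILLS.items():
        for name in names:
            index[name] = ("Reasoning Skills", subcategory)
    return index


CODE_INDEX = build_index()
print(CODE_INDEX["Deduction"])
```

A flat index like this keeps lookups simple when excerpts are tagged with code names only; the nested form is kept alongside it because only the Reasoning Skills category has subcategories.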

2.3. Analysis

3. Results

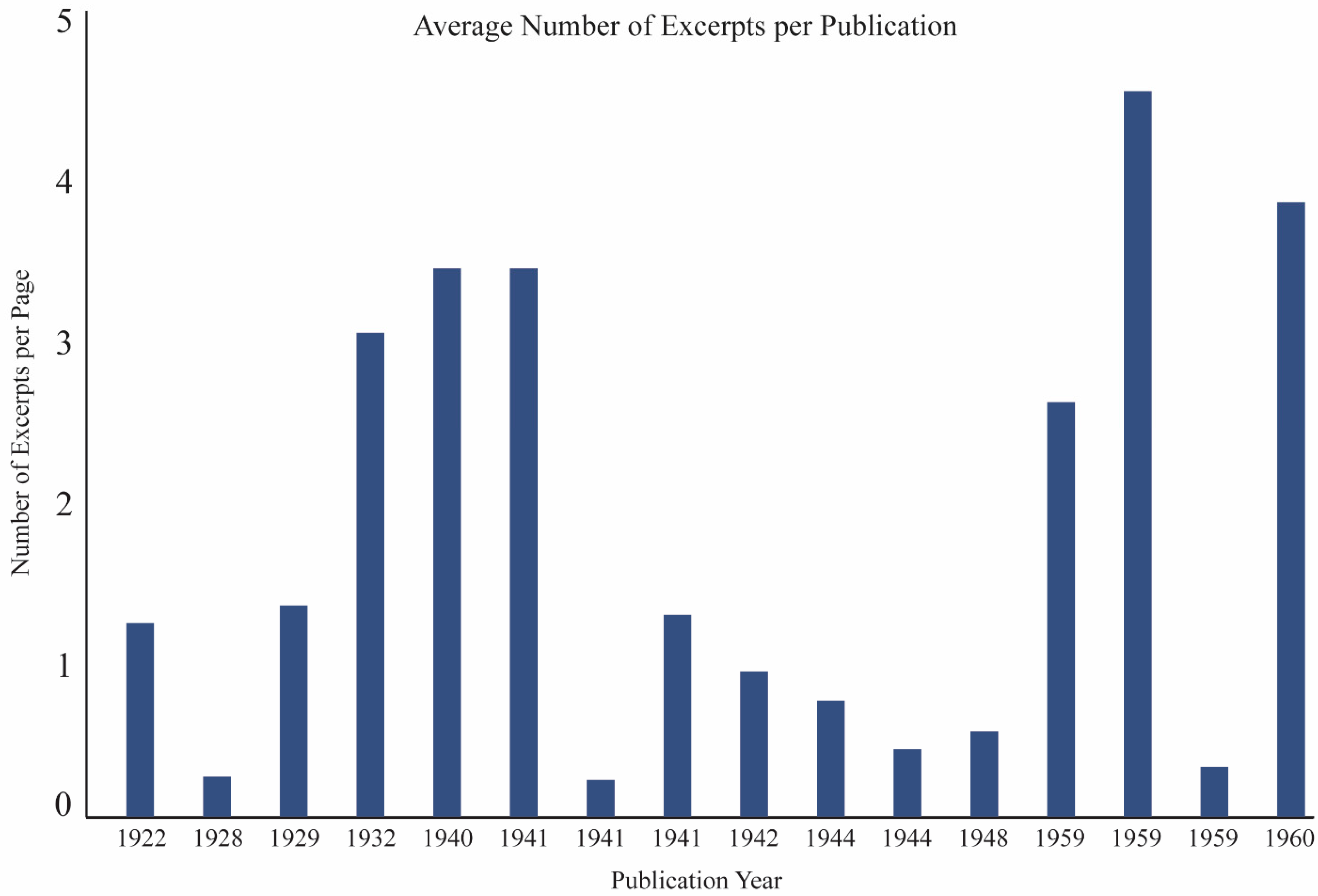

3.1. Texts

| Publication Date | Author | Book Title | Section Number |
|---|---|---|---|
| 1944 | Abrams | Essentials of Aerial Surveying and Photo Interpretation * | 9 |
| 1941 | Bagley | Aerophotography and Aerosurveying * | 6 |
| 1932 | Department of the Interior | Topical Bulletin No. 62: The Use of Aerial Photographs for Mapping * | 6 |
| 1942 | Eardley | Aerial Photographs: Their Use and Interpretation * | 4 |
| 1940 | Hart | Air Photography Applied to Surveying | 2 |
| 1941 | Heavey | Map and Aerial Photo Reading Simplified | 8 |
| 1922 | Lee | The Face of the Earth as Seen from the Air | 1 |
| 1959 | Lueder | Aerial Photographic Interpretation: Principles and Applications | 1 |
| 1944 | Lobeck and Tellington | Military Maps and Air Photographs * | 6 |
| 1959 | Ray | Aerial Photographs in Geologic Interpretation and Mapping | 1 |
| 1929 | Royal War Office | Manual of Map Reading, Photo Reading, and Field Sketching ** | 12 |
| 1959 | Schwidefsky and Fosberry | An Outline of Photogrammetry # | 5 |
| 1948 | Spurr | Aerial Photographs in Forestry * | 3 |
| 1941 | U.S. War Department | Field Manual 21–25: Elementary Map and Aerial Photograph Reading | 8 |
| 1928 | Winchester | Aerial Photography | 18 |
| 1960 | Rabben | Manual of Photographic Interpretation | 3 |

3.2. Coding Reliability

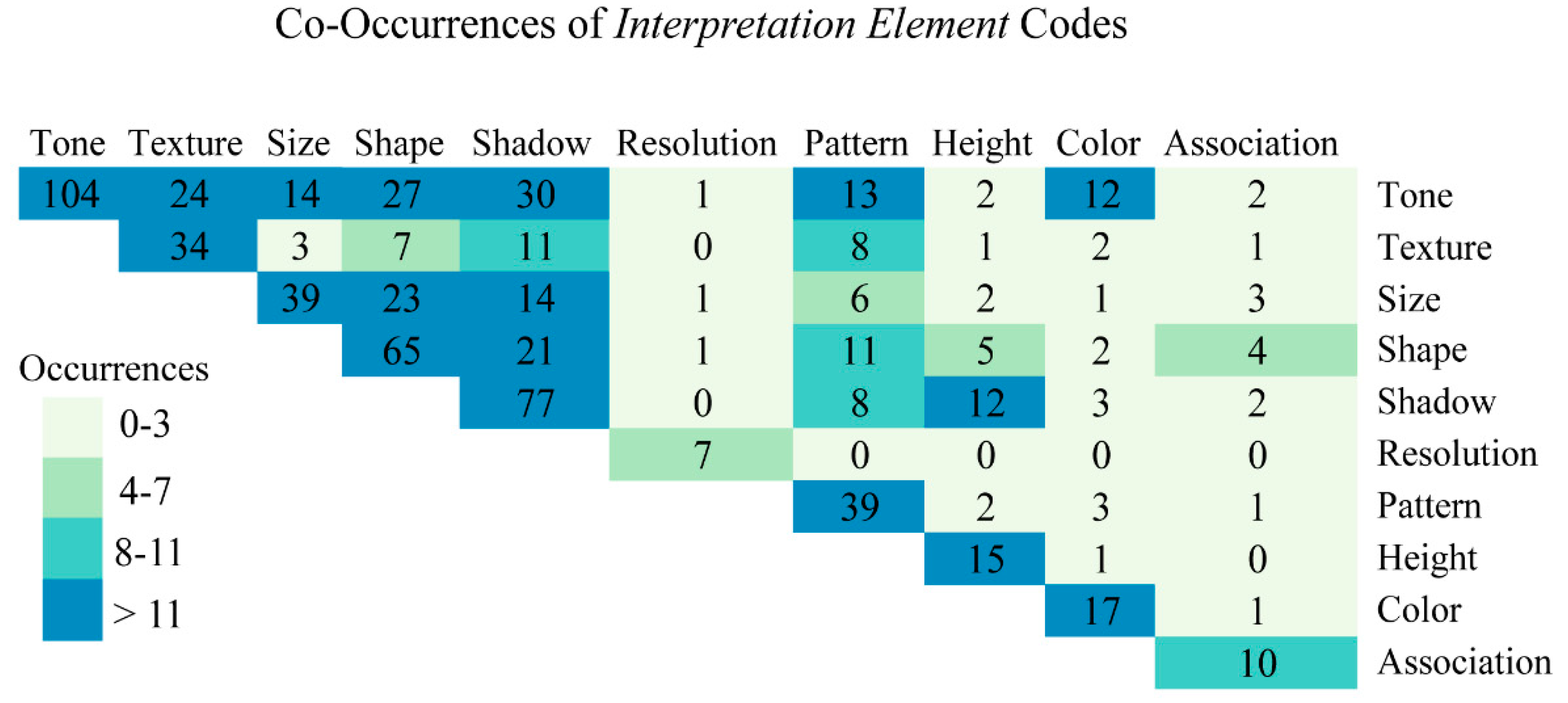

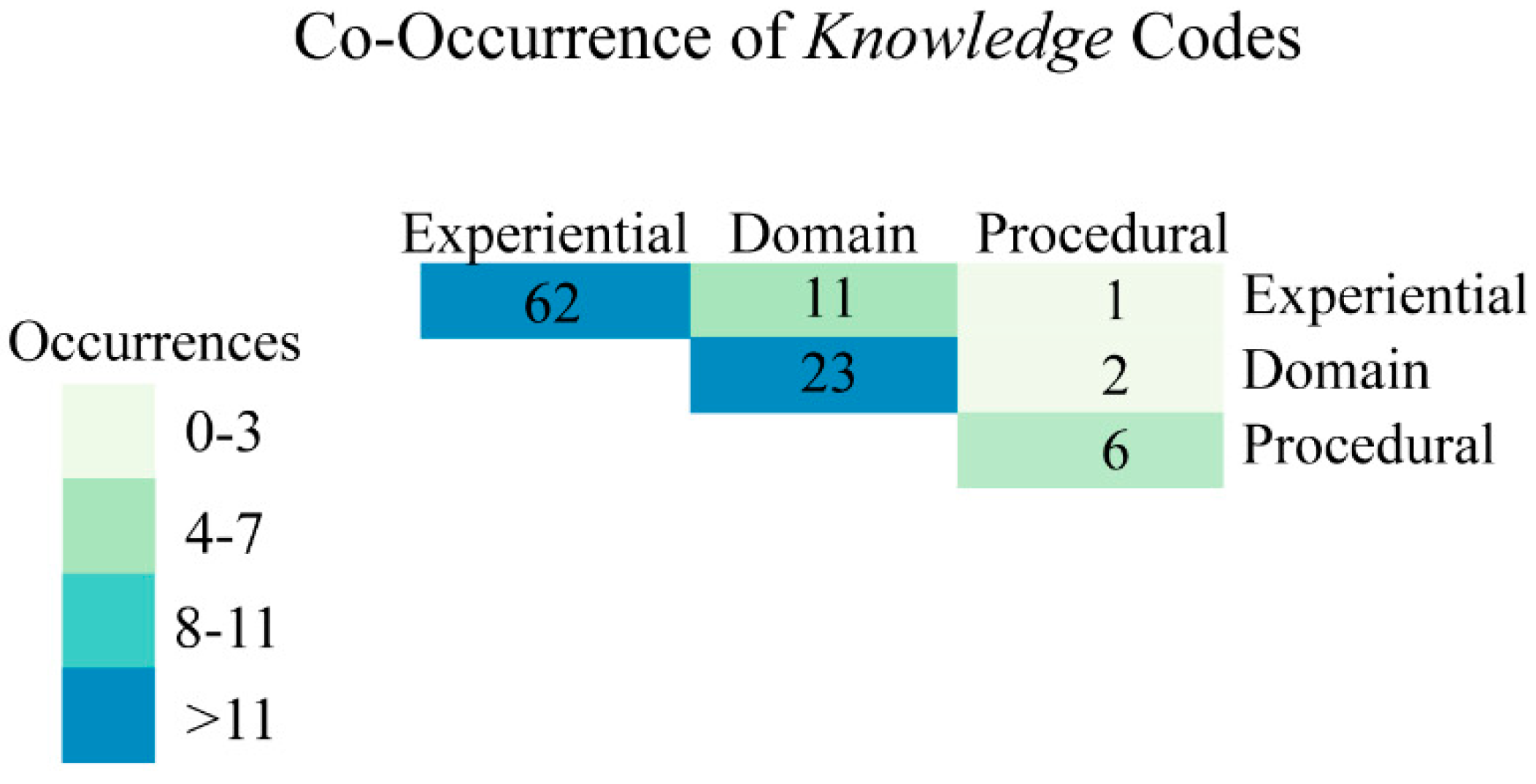

3.3. Code Analysis

| Category | Text Occurrences | Excerpt Occurrences | Dominant Code |
|---|---|---|---|
| Interpretation Elements | 15 texts | 407 excerpts | Tone (n = 104) |
| Reasoning Skills | 13 texts | 136 excerpts | Identification (n = 46) |
| Knowledge | 14 texts | 91 excerpts | Experience (n = 48) |
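A summary of this shape (texts per category, excerpts per category, most frequent code) can be derived from a flat list of coded excerpts. The following is a minimal sketch under stated assumptions: each excerpt carries exactly one code, the excerpt list is invented toy data rather than the study's data, and the names `summarize`, `CATEGORY_OF`, and `toy_excerpts` are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Hypothetical lookup from code name to category (subset, for illustration).
CATEGORY_OF: Dict[str, str] = {
    "Tone": "Interpretation Elements",
    "Shadow": "Interpretation Elements",
    "Identification": "Reasoning Skills",
    "Experiential Knowledge": "Knowledge",
}

# Invented toy data: (text identifier, code). Not taken from the study.
toy_excerpts: List[Tuple[str, str]] = [
    ("text_A", "Tone"), ("text_A", "Tone"), ("text_A", "Shadow"),
    ("text_B", "Tone"), ("text_B", "Identification"),
    ("text_C", "Identification"), ("text_C", "Experiential Knowledge"),
]


def summarize(excerpts: List[Tuple[str, str]],
              category_of: Dict[str, str]) -> Dict[str, dict]:
    """Per category: number of distinct texts, number of excerpts, top code."""
    texts = defaultdict(set)        # category -> set of text identifiers
    counts = defaultdict(Counter)   # category -> Counter of code names
    for text_id, code in excerpts:
        category = category_of[code]
        texts[category].add(text_id)
        counts[category][code] += 1
    summary = {}
    for category, counter in counts.items():
        dominant_code, n = counter.most_common(1)[0]
        summary[category] = {
            "texts": len(texts[category]),
            "excerpts": sum(counter.values()),
            "dominant": (dominant_code, n),
        }
    return summary


summary = summarize(toy_excerpts, CATEGORY_OF)
for category, row in summary.items():
    print(category, row)
```

If excerpts can carry several codes at once, the excerpt count per category would need to count distinct excerpts rather than code assignments; the sketch does not handle that case.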

4. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).


Bianchetti, R.A.; MacEachren, A.M. Cognitive Themes Emerging from Air Photo Interpretation Texts Published to 1960. ISPRS Int. J. Geo-Inf. 2015, 4, 551-571. https://doi.org/10.3390/ijgi4020551
