A Spatially Intelligent Public Participation System for the Environmental Impact Assessment Process

Abstract

1. Introduction

2. Study Area Selection

2.1. California Energy Problem and Study Site Selection

2.2. Existing Website Problems

3. Conceptual Framework Design

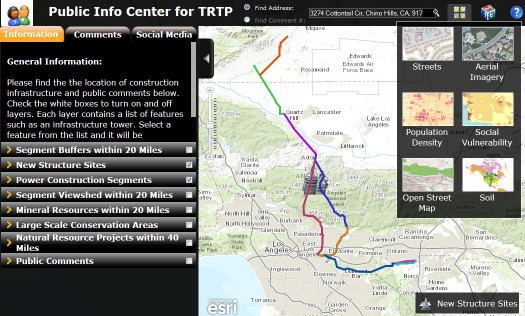

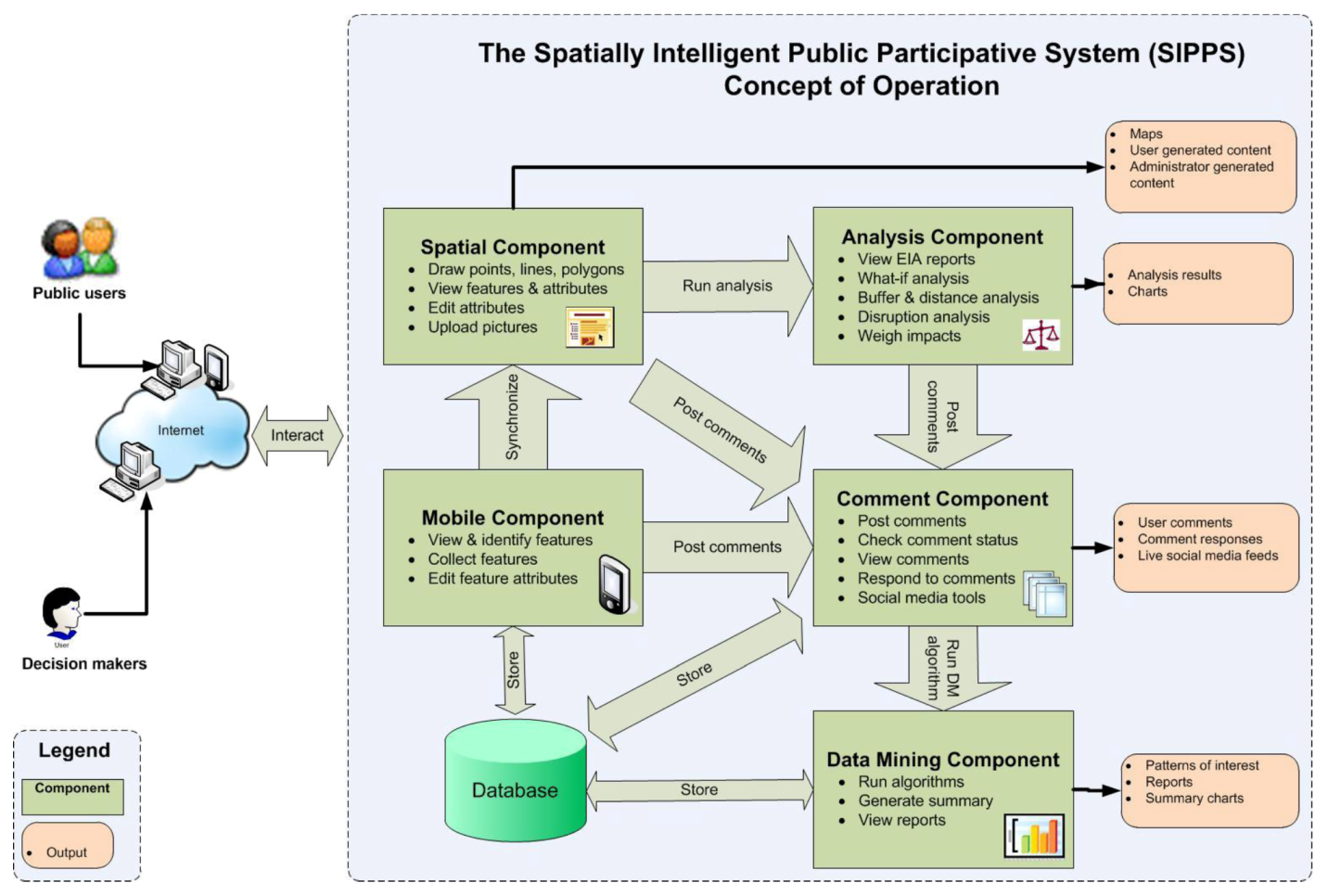

- The spatial component visualizes the EIA data on a map viewer to assist scoping and planning and distributes the EIA geospatial information through a graphical user interface (GUI) to improve public outreach. This component will allow a user to dynamically navigate maps, view and toggle environmental information layers related to an EIA project, locate geographic objects (e.g., a proposed power station) on the map and review object attributes (e.g., the height of a power tower) in a pop-up window. One of the most critical activities in the EIA scoping phase is to identify potential alternatives [34]. Adequate consideration of various alternatives can mitigate possible bias, improve process transparency, increase stakeholders’ confidence and contribute to a balanced decision-making process. With the help of the spatial component, stakeholders will be able to assist in the identification of potential impacts using feature editing tools and maps from the area (e.g., a public user may use the tool to draw a new polyline in a different place on the map to indicate another possible electric power transmission segment that would meet the same purpose and need for the TRTP project).
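The paper does not say how an alternative segment drawn with the feature editing tools is stored or exchanged. As a minimal sketch, assuming the map client sends edits as GeoJSON (RFC 7946, which orders positions as longitude, latitude), a user-drawn polyline could be wrapped as a `LineString` feature and annotated with its great-circle length. The function `proposed_segment`, its property names (`kind`, `author`, `note`, `length_m`) and the demo vertices are hypothetical illustrations, not part of the prototype.

```python
import json
import math

EARTH_RADIUS_M = 6_371_008.8  # mean Earth radius in metres


def haversine_m(lon1: float, lat1: float, lon2: float, lat2: float) -> float:
    """Great-circle distance in metres between two (lon, lat) positions."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # Clamp guards against a value fractionally above 1 from rounding error.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


def proposed_segment(coords, author: str, note: str) -> dict:
    """Wrap a user-drawn polyline as a GeoJSON Feature (hypothetical property schema).

    `coords` is a sequence of (lon, lat) pairs, the axis order GeoJSON requires.
    """
    if len(coords) < 2:
        raise ValueError("a LineString needs at least two positions")
    # Sum the great-circle length of each consecutive vertex pair.
    length_m = sum(haversine_m(*p, *q) for p, q in zip(coords, coords[1:]))
    return {
        "type": "Feature",
        "geometry": {"type": "LineString", "coordinates": [list(c) for c in coords]},
        "properties": {
            "kind": "proposed_alternative",  # hypothetical tag for user-proposed routes
            "author": author,
            "note": note,
            "length_m": round(length_m, 1),
        },
    }


if __name__ == "__main__":
    feature = proposed_segment(
        [(-118.20, 34.70), (-118.10, 34.65), (-118.00, 34.60)],  # illustrative vertices only
        author="public-user-42",
        note="Alternative route farther from homes",
    )
    print(json.dumps(feature, indent=2))
```

Keeping the proposal as a standard GeoJSON feature would let the same object be rendered on the map viewer, stored alongside comments and compared against the official route.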

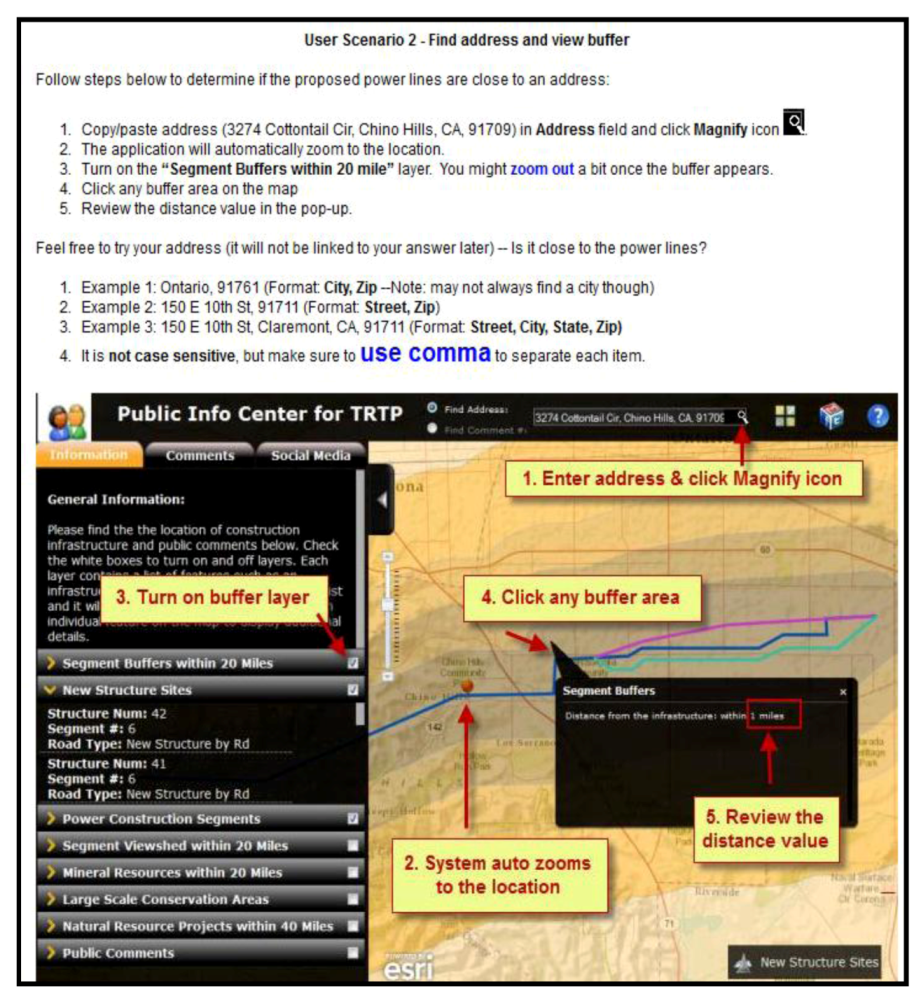

- The analysis component supports planning and reveals insights that may not otherwise be obvious, increasing environmental awareness. It will enable target users to perform scenario and what-if analyses, weigh environmental factors for different project alternatives and outcomes, and consider how a location they care about is spatially related to, and potentially affected by, proposed EIA activities such as the construction of power transmission lines.

- The comment component provides a platform for comment collection, review and management. This component will allow a public user to review comments posted by other citizens, interact with and rate those comments, and post new comments tied to specific geographic locations on the map. Comments are no longer limited to text: user-generated content such as PDF files, pictures, audio files or videos can be uploaded and associated with comments on the map. The component will also help decision makers categorize comments, update comment status (e.g., submitted, reviewed or responded), respond to comments and review the spatial and temporal relationships among comments to obtain a holistic view. In addition, social media tools (e.g., YouTube, Flickr and Twitter) are included in the comment component to facilitate information sharing. With the growing influence of VGI, information from virtual communities and social media networks is becoming an important part of local intelligence and contributes to the aggregation of web maps and user-generated content. This part of the component will retrieve live feeds containing keywords about the selected EIA project from YouTube, Flickr and Twitter and display them dynamically, with their spatial footprints, on the map viewer. In this way, users can easily discover project events and activities through social media posts.

- The data mining component will use data mining algorithms such as clustering analysis to support comment analysis. By reviewing the results and graphs generated through data mining, decision makers will gain a better understanding of the micro-culture and composition of the local community, classify diverse interests and concerns, and identify potential patterns and underlying issues that may not be easily seen in plain text. In this way, the component can help enhance fairness and trust.

- The mobile component will engage the public through another channel. With the mobile component, public users will be able to view the proposed EIA activities on a mobile platform, perform basic map navigation, identify geographic objects and their attributes, review and post comments, and attach user-generated content, such as a photo taken with their phone's camera, to their comments.
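The analysis component lets users check how a location they care about relates spatially to a proposed transmission line. The paper does not describe how this is computed; a minimal sketch, assuming coordinates are already projected into a metric coordinate system (for example a UTM zone), is a point-to-polyline distance compared against a buffer radius. The function names, the demo route and the 500 m radius are hypothetical; a production GIS would more likely call its own buffer or proximity operation.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in a projected CRS with metre units (assumed)


def point_segment_distance(p: Point, a: Point, b: Point) -> float:
    """Shortest Euclidean distance from point p to segment ab."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:  # degenerate segment: a and b coincide
        return math.hypot(px - ax, py - ay)
    # Project p onto the infinite line through a and b, then clamp to the segment.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def distance_to_line(p: Point, line: Sequence[Point]) -> float:
    """Distance from p to the nearest segment of a polyline (>= 2 vertices)."""
    if len(line) < 2:
        raise ValueError("a polyline needs at least two vertices")
    return min(point_segment_distance(p, a, b) for a, b in zip(line, line[1:]))


def within_buffer(p: Point, line: Sequence[Point], buffer_m: float) -> bool:
    """True if p lies inside a buffer of radius buffer_m around the polyline."""
    return distance_to_line(p, line) <= buffer_m


if __name__ == "__main__":
    route = [(0.0, 0.0), (1000.0, 0.0), (1000.0, 1000.0)]  # hypothetical segment vertices
    home = (1300.0, 500.0)  # hypothetical location chosen by a user
    print(distance_to_line(home, route), within_buffer(home, route, 500.0))
```

Clamping the projection parameter `t` to [0, 1] is what turns the point-to-line formula into a point-to-segment distance, so locations beyond a route's end are measured to its nearest endpoint.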
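The data mining component is described only as using "clustering analysis" on comments, without naming an algorithm or input features. As one possible illustration, assuming comments are clustered by their projected map coordinates, Lloyd's k-means algorithm groups nearby comments so that decision makers can see where concerns concentrate. The function name, the naive first-k initialisation and the demo points are hypothetical; clustering comment text instead would first require turning the text into numeric vectors.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # projected (x, y) location of a comment (assumed)


def kmeans(points: List[Point], k: int, max_iter: int = 100) -> Tuple[List[int], List[Point]]:
    """Lloyd's k-means: returns (cluster label per point, centroid per cluster)."""
    if not 0 < k <= len(points) or max_iter < 1:
        raise ValueError("need 1 <= k <= len(points) and max_iter >= 1")
    # Naive deterministic initialisation: the first k points become centroids.
    centroids: List[Point] = list(points[:k])
    labels: List[int] = []
    for _ in range(max_iter):
        # Assignment step: each comment joins the cluster with the nearest centroid.
        new_labels = [min(range(k), key=lambda j: math.dist(p, centroids[j])) for p in points]
        if new_labels == labels:  # assignments unchanged, so the algorithm has converged
            break
        labels = new_labels
        # Update step: move each centroid to the mean of its member comments.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # an emptied cluster keeps its previous centroid
                centroids[j] = (
                    sum(x for x, _ in members) / len(members),
                    sum(y for _, y in members) / len(members),
                )
    return labels, centroids


if __name__ == "__main__":
    # Hypothetical comment locations forming two neighbourhoods.
    comments = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
    labels, centroids = kmeans(comments, k=2)
    print(labels, centroids)
```

k-means needs the number of clusters in advance; a density-based method such as DBSCAN would be an alternative when the number of comment hot spots is unknown.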

4. Prototype Development

4.1. Data Collection and Processing

4.2. Application Design and Implementation

5. Evaluation and Survey Design

5.1. Evaluation Criteria

5.2. Survey Design and Distribution

6. Results and Analysis

6.1. Results for Demographic Questions

6.2. Results for Scenario Questions

| Scenario Questions | Mean | Std. Deviation |
|---|---|---|
| 10. The power segments information is useful to me in understanding the project. | 2.44 | 0.763 |
| 11. Viewing the buffer information helps me to understand the project. | 2.75 | 0.722 |
| 12. Viewing the viewshed information helps me to understand the project. | 2.73 | 0.697 |
| 13. The layers information helps me to understand the project and its potential impact. | 2.88 | 0.680 |
| 14. Viewing the Social Vulnerability Index Map helps me in understanding the potential impact of the project. | 3.00 | 0.690 |
| 15. Reviewing my neighbors’ comments improves my understanding about the project. | 2.99 | 0.645 |
| 16. Knowing that I can review my neighbor’s comments makes me more willing to participate in the commenting process. | 3.07 | 0.632 |
| 17. Knowing that I can share my comments with the public will promote my participation in the project. | 2.96 | 0.715 |
| 18. Compared to the old system, this application helps me interact with other public citizens. | 2.89 | 0.598 |
| 19. Compared to the old system, I am more willing to mention or share this application with my family and friends. | 2.86 | 0.620 |
| 20. Sharing comments will improve the transparency of the project EIA process. | 3.19 | 0.607 |
| 22. I am more willing to submit my comments using this application than using emails. | 2.81 | 0.699 |
| 23. I am more willing to submit my comments using this application than attending physical meetings. | 3.28 | 0.750 |
| 24. I feel more flexible in expressing my ideas on the proposed project using this application than using emails. | 2.84 | 0.769 |
| 25. I will save more time in the EIA participation process using the application than using email. | 2.93 | 0.784 |
| 26. The information retrieved from the social media network improves my understanding about the project. | 2.78 | 0.713 |
| 27. Including the social media tools in the application makes me more willing to participate in the project. | 2.78 | 0.822 |
| 28. Compared to an FTP site, I am more able to access the TRTP information with this website. | 3.00 | 0.673 |
| 29. I think a website like this will be effective in promoting environmental awareness. | 3.07 | 0.651 |

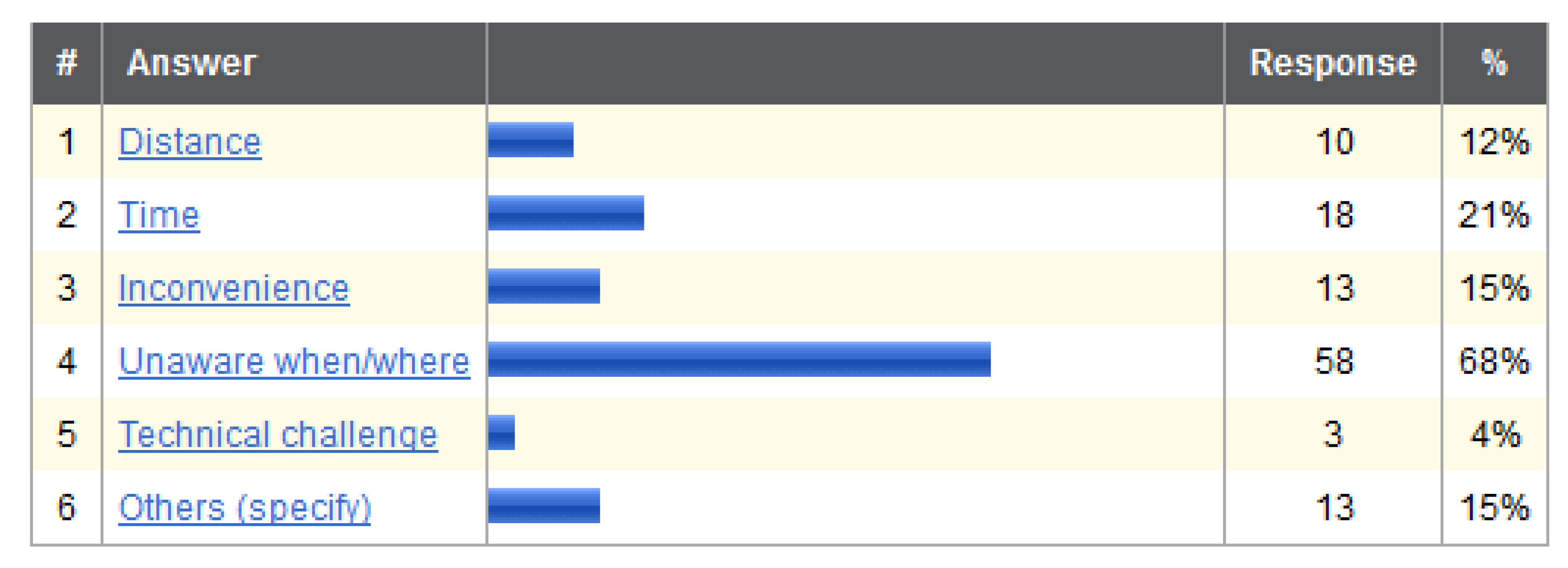

- Questions 11, 12 and 28 are all positively correlated with Question 25. Questions 11 and 12 ask whether providing buffer and viewshed information helps public users improve their understanding of the EIA project. Question 28 compares information accessibility between this prototype website and the old TRTP project site, and Question 25 asks whether using the new system saves a citizen more time than email when participating in the EIA process. The interpretation is that respondents who valued the better information accessibility and presentation of a web-based application also tended to expect it to save them more time than email in their EIA participation. As previously shown in Figure 4, 68% of the respondents did not know where and when an EIA took place, and 21% selected time as the primary reason for not participating in EIAs. This suggests that traditional participative methods have failed to deliver satisfactory information accessibility and time efficiency to public users. With the old TRTP system, it was time-consuming for public users to search lengthy EIA documents for the specific information they were looking for; with email, they had to describe their particular situations in abstract text. By contrast, with the new system, participants can review digital maps to get a quick snapshot of the topics or locations that concern them most, and then submit comments by clicking the relevant location on the map and filling out an electronic comment form. Again, the correlation suggests that the prototype system has improved information accessibility and time efficiency and, thus, the effectiveness of public participation.

- Questions 12 and 26 are positively correlated with Question 28. Questions 12 and 26 concern providing viewshed information and social media feeds to public users, and Question 28 compares the information accessibility of the new system with that of the original project website. As previously mentioned in Section 6.1, 80% of the participants reported daily use of social media tools. These tools can be a major source in users' daily information intake, and reading social media may even become a habit. The literature also suggests that many public service programs and environmental agencies currently face budget cuts and personnel reductions, which have limited their public outreach [26]. A more active virtual community enabled by VGI and social media tools can ease the burden on public outreach and benefit data collection and information dissemination. Since the original TRTP EIA project site provides no viewshed information or social media feeds, including this information in the new system fits participants' daily information consumption patterns and, thus, improves information accessibility by providing instantaneous data access in the EIA process.

- Question 14 is positively correlated with Question 27 at the p < 0.01 level and with all of the other scenario questions at the p < 0.05 level. Question 14 asks whether viewing the Social Vulnerability Index Map helps participants understand the potential impact of the project. Social vulnerability refers to sensitivity to hazard exposure due to characteristics such as age, low income, disability or home value [40]. The index helps identify areas, at the county or state level, where disasters or hazards could have a relatively greater socioeconomic impact. The significant correlations between Question 14 and all other scenario questions may indicate that respondents' perception of the risks and hazards surrounding them is tied to concern about the EIA project's socioeconomic impacts. Therefore, providing the public with this information may help address this concern and motivate people to participate in EIA projects.

- Questions 15–19 and 26 all relate to sharing and interaction (reviewing neighbors' comments, posting new comments, reaching out to family and friends, or using social media tools), and they are also positively correlated with one another. This indicates that giving the public the ability to review and share information interactively can be an effective way to improve public participation.

- Questions 23 and 24 are positively correlated with Question 27. Questions 23 and 24 compare comment submission and flexibility of expression in the new system with traditional participative methods (physical meetings and email, respectively), and Question 27 asks whether including social media tools in the application increases users' willingness to participate in the project. The interpretation is that users who agreed that social media tools increased their willingness to participate were more likely to agree that the new system was better than email or physical meetings for participating in EIA. This again suggests that including social media tools may strengthen user preference for the prototype over traditional participative methods and, thus, boost public participation in the EIA process.
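The correlations above are reported with significance levels, but this section does not state which coefficient was computed. Spearman's rank correlation is a common choice for ordinal Likert responses, so the sketch below, offered as an assumption rather than the authors' procedure, computes Spearman's rho (Pearson's r applied to tie-averaged ranks) in plain Python; Pearson's r is included because rho is built on it. It does not compute p-values; for the p < 0.05 and p < 0.01 thresholds a library routine such as `scipy.stats.spearmanr` or `scipy.stats.pearsonr` would be the practical choice. The demo responses are hypothetical, not survey data.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson's r computed on tie-averaged ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of equal length >= 2")
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    # Hypothetical Likert responses (not survey data) to two questions.
    q25 = [3, 4, 2, 3, 4, 1, 3, 2, 4, 3]
    q28 = [3, 4, 2, 2, 4, 2, 3, 3, 4, 3]
    print(f"Spearman rho = {spearman(q25, q28):.3f}")
    print(f"Pearson r    = {pearson(q25, q28):.3f}")
```

Averaging the ranks of tied values matters here because a Likert scale has only a few distinct levels, so most responses are ties.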

6.3. Results for SUS Questions

| Question | Strongly Disagree | Disagree | Neutral | Agree | Strongly Agree | Total Responses | Mean |
|---|---|---|---|---|---|---|---|
| 31. I think that I would like to use this system frequently. | 11 | 19 | 33 | 20 | 2 | 85 | 2.80 |
| 33. I thought the system was easy to use. | 6 | 13 | 27 | 34 | 5 | 85 | 3.22 |
| 35. I found the various functions in this system were well integrated. | 2 | 6 | 26 | 47 | 4 | 85 | 3.53 |
| 37. I would imagine that most people would learn to use this system very quickly. | 8 | 27 | 19 | 29 | 2 | 85 | 2.88 |
| 39. I felt very confident using the system. | 6 | 21 | 29 | 24 | 5 | 85 | 3.01 |
| 32. I found the system unnecessarily complex. | 9 | 20 | 26 | 25 | 5 | 85 | 2.96 |
| 34. I think that I would need the support of a technical person to be able to use this system. | 10 | 33 | 20 | 19 | 3 | 85 | 2.67 |
| 36. I thought there was too much inconsistency in this system. | 7 | 41 | 26 | 8 | 3 | 85 | 2.52 |
| 38. I found the system very cumbersome to use. | 8 | 29 | 27 | 17 | 4 | 85 | 2.76 |
| 40. I needed to learn a lot of things before I could get going with this system. | 7 | 26 | 18 | 25 | 9 | 85 | 3.04 |
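Questions 31–40 follow the ten standard SUS items in order, so the odd-numbered questions are the positively worded items and the even-numbered ones the negatively worded items. The sketch below recomputes each item mean from the response counts in the table (coding Strongly Disagree = 1 through Strongly Agree = 5) and applies Brooke's scoring rule: positive items contribute (response − 1), negative items (5 − response), and the sum is multiplied by 2.5. Because the rule is linear, scoring the item means gives the mean per-respondent SUS score only under the assumption that the same 85 respondents answered all ten items; this is a derived check on the table, not a figure reported in this section.

```python
# Response counts from the SUS table, ordered
# [Strongly Disagree, Disagree, Neutral, Agree, Strongly Agree] -> coded 1..5.
SUS_COUNTS = {
    31: [11, 19, 33, 20, 2],
    32: [9, 20, 26, 25, 5],
    33: [6, 13, 27, 34, 5],
    34: [10, 33, 20, 19, 3],
    35: [2, 6, 26, 47, 4],
    36: [7, 41, 26, 8, 3],
    37: [8, 27, 19, 29, 2],
    38: [8, 29, 27, 17, 4],
    39: [6, 21, 29, 24, 5],
    40: [7, 26, 18, 25, 9],
}

# Questions 31-40 map to SUS items 1-10; odd items are positively worded.
POSITIVE_ITEMS = {31, 33, 35, 37, 39}


def item_mean(counts):
    """Mean response on the 1-5 scale for one item's response counts."""
    return sum(score * n for score, n in enumerate(counts, start=1)) / sum(counts)


def mean_sus_score(counts_by_item):
    """Mean SUS score (0-100) obtained by applying Brooke's rule to item means.

    Equals the mean of per-respondent scores only if every respondent
    answered all ten items (assumption).
    """
    if len(counts_by_item) != 10:
        raise ValueError("SUS has exactly ten items")
    total = 0.0
    for question, counts in counts_by_item.items():
        m = item_mean(counts)
        total += (m - 1) if question in POSITIVE_ITEMS else (5 - m)
    return total * 2.5


if __name__ == "__main__":
    for q in sorted(SUS_COUNTS):
        print(q, round(item_mean(SUS_COUNTS[q]), 2))
    print("mean SUS:", round(mean_sus_score(SUS_COUNTS), 1))
```

Under that assumption, the counts in the table work out to a mean SUS score of about 53.7 on the 0–100 scale, and the recomputed item means match the Mean column.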

6.4. Optional Questions Results

6.5. Reliability Analysis

7. Limitations and Remarks

8. Contributions and Conclusions

Acknowledgments

References

- Del Furia, L.; Wallace-Jones, J. The effectiveness of provisions and quality of practices concerning public participation in EIA in Italy. Environ. Impact Asses. 2000, 20, 457–479. [Google Scholar]

- Qin, X.; Tao, C. Analysis of Public Construction and the Use of Environmental Information. In Proceedings of International Conference on Mechanic Automation and Control Engineering (MACE), Wuhan, China, 26–28 June 2010; pp. 1948–1951.

- Lowndes, V.; Pratchett, L.; Stoker, G. Trends in public participation: Part 1—Local government perspectives. Public Admin. 2001, 79, 205–222. [Google Scholar] [CrossRef]

- Gonzalez, A.; Gilmer, A.; Foley, R.; Sweeney, J.; Fry, J. Technology-aided participative methods in environmental assessment: An international perspective. Comput. Environ. Urban 2008, 32, 303–316. [Google Scholar] [CrossRef]

- Harris, T.; Weiner, D. GIS and Society: The Social Implications of How People, Space, and Environment are Represented in GIS. Available online: www.ncgia.ucsb.edu/Publications/Tech_Reports/96/96-7.PDF (accessed on 13 January 2013).

- Sieber, R. Public participation geographic information systems: A literature review and framework. Ann. Assoc. Am. Geogr. 2006, 96, 491–507. [Google Scholar] [CrossRef]

- Al-Kodmany, K. GIS and the Artist: Shaping the Image of a Neighbourhood in Participatory Environmental Design. In Community Participation and Geographic Information Systems, 1st ed.; Craig, W.J., Harris, T.M., Weiner, D., Eds.; Taylor and Francis: New York, NY, USA, 2002; pp. 320–329. [Google Scholar]

- Bojórquez-Tapia, L.; Díaz-Mondragón, S.; Ezcurra, E. GIS-based approach for participatory decisionmaking and land suitability assessment. Int. J. Geogr. Inf. Sci. 2001, 15, 129–151. [Google Scholar] [CrossRef]

- Kingston, R.; Carver, S.; Evans, A.; Turton, I. Web-based public participation Geographical Information Systems: An aid to local environmental decision-making. Comput. Environ. Urban 2000, 24, 109–125. [Google Scholar] [CrossRef]

- Haklay, M.E. Public access to environmental information: Past, present and future. Comput. Environ. Urban 2003, 27, 163–180. [Google Scholar]

- Stefanidis, A.; Crooks, A.; Radzikowski, J. Harvesting ambient geospatial information from social media feeds. Environ. Manage. 2012, 49, 816–832. [Google Scholar]

- Yap, L.F.; Bessho, M.; Koshizuka, N.; Sakamura, K. User-Generated Content for Location-Based Services: A Review. In Virtual Communities, Social Networks and Collaboration; Lazakidou, A.A., Ed.; Springer Science: New York, NY, USA, 2012; Volume 15, p. 163. [Google Scholar]

- Howe, J. The Rise of Crowdsourcing. Available online: http://www.wired.com/wired/archive/14.06/crowds.html (accessed on 13 January 2013).

- Brabham, D.C. Crowdsourcing as a model for problem solving: An introduction and cases. Int. J. Res. New Media Technol. 2008, 14, 75–90. [Google Scholar]

- Committee on New Research Directions for the National Geospatial-Intelligence Agency; Mapping Science Committee; Board on Earth Sciences and Resources; Division on Earth and Life Studies. Workshop on New Research Directions for the National Geospatial-Intelligence Agency, 1st ed.; The National Academies Press: Washington, DC, USA, 2010; pp. 2–3. Available online: http://www.nap.edu/catalog.php?record_id=12964 (accessed on 13 January 2013).

- Goodchild, M.F. NeoGeography and the nature of geographic expertise. J. Locat. Based Serv. 2009, 3, 82–96. [Google Scholar] [CrossRef]

- Núñez-Redó, M.; Díaz, L.; Gil, J.; González, D.; Huerta, J. Discovery and Integration of Web 2.0 Content into Geospatial Information Infrastructures: A Use Case in Wild Fire Monitoring. In Proceedings of the IFIP WG 8.4/8.9 International Cross Domain Conference on Availability, Reliability, and Security for Business, Enterprise and Health Information Systems, Vienna, Austria, 22–26 August 2011; Tjoa, A.M., Quirchmayr, G., You, I., Xu, L., Eds.; Springer-Verlag: Vienna, Austria, 2011; pp. 50–68. [Google Scholar]

- Goodchild, M.F. Citizens as voluntary sensors: Spatial data infrastructure in the world of Web 2.0. Int. J. Spat. Data Infrastruct. Res. 2007, 2, 24–32. [Google Scholar]

- Tulloch, D.L. Many Many Maps: Empowerment and Online Participatory Mapping. Available online: http://firstmonday.org/htbin/cgiwrap/bin/ojs/index.php/fm/article/view/1620/1535 (accessed on 13 January 2013).

- Turner, A.J. Introduction to Neogeography; O’Reilly Media: Sebastopol, CA, USA, 2006; pp. 2–3. [Google Scholar]

- Flanagin, A.J.; Metzger, M.J. The credibility of volunteered geographic information. GeoJournal 2008, 72, 137–148. [Google Scholar] [CrossRef]

- Belimpasakis, P.; Saaranen, A. Sharing with people: A system for user-centric content sharing. Multimed. Syst. 2010, 16, 399–421. [Google Scholar]

- Díaz, L.; Granell, C.; Gould, M.; Huerta, J. Managing user-generated information in geospatial cyber infrastructures. Future Gener. Comput. Syst. 2011, 27, 304–314. [Google Scholar] [CrossRef]

- Creighton, J. The Public Participation Handbook: Making Better Decisions through Citizen Involvement, 1st ed.; Jossey-Bass: San Francisco, CA, USA, 2005; p. 244. [Google Scholar]

- Ho, S.; Rajabifard, A. Learning from the Crowd: The Role of Volunteered Geographic Information in Realising a Spatially Enabled Society. In Proceedings of GSDI 12 World Conference—Realising Spatially Enabled Societies, Singapore, 19–22 October 2010.

- Werts, J.D.; Mikhailova, E.A.; Post, C.J.; Sharp, J.L. An integrated WebGIS framework for volunteered geographic information and social media in soil and water conservation. Environ. Manage. 2012, 49, 816–832. [Google Scholar] [CrossRef]

- Danielsen, F.; Burgess, N.D.; Jensen, P.M.; Pirhofer-Walzl, K. Environmental monitoring: The scale and speed of implementation varies according to the degree of people's involvement. J. Appl. Ecol. 2010, 47, 1166–1168. [Google Scholar] [CrossRef]

- Seeger, C.J. The role of facilitated volunteered geographic information in the landscape planning and site design process. GeoJournal 2008, 72, 199–213. [Google Scholar] [CrossRef]

- Kelly, M.; Tuxen, K.; Kearns, F. Geospatial informatics for management of a new forest disease: Sudden oak death. Photogramm. Eng. Remote Sensing 2004, 70, 1001–1004. [Google Scholar]

- Eick, C.; Vaezian, B.; Jiang, D.; Wang, J. Discovery of Interesting Regions in Spatial Datasets Using Supervised Clustering. In Proceedings of the 10th European Conference on Principles and Practice of Knowledge Discovery in Databases, Berlin, Germany, 18–22 September 2006; pp. 127–138.

- Rzhetsky, A.; Seringhaus, M.; Gerstein, M.B. Getting started in text mining: Part two. PLoS Comput. Biol. 2009, 5. [Google Scholar] [CrossRef]

- Maue, P.; Schade, S. Data integration in the geospatial semantic web. J. Cases Inf. Technol. 2009, 11, 100–122. [Google Scholar] [CrossRef]

- Gao, L.; Chang, E.; Han, S. Powerful tool to expand business intelligence: Text mining. World Acad. Sci. Eng. Technol. 2005, 8, 110–115. [Google Scholar]

- Glasson, J.; Therivel, R.; Chadwick, A. Introduction to Environmental Impact Assessment, 3rd ed.; Routledge: New York, NY, USA, 2005; p. 127. [Google Scholar]

- Bishop, P.; Davis, G. Mapping public participation in policy choices. Aust. J. Publ. Admin. 2006, 61, 14–29. [Google Scholar]

- Pring, G.; Noe, S.Y. The Emerging International Law of Public Participation Affecting Global Mining, Energy and Resource Development. In Human Rights in Natural Resource Development: Public Participation in the Sustainable Development of Mining and Energy Resources, 1st ed.; Zillman, D.M., Lucas, A., Pring, G., Eds.; Oxford University Press: New York, NY, USA, 2002; pp. 11–76. [Google Scholar]

- De Steiguer, J.E. Evaluating the Effectiveness of Multi-Criteria Decision Models for Improving Public Participation. Accession No. 0194153. Available online: http://www.reeis.usda.gov/web/crisprojectpages/194153.html (accessed on 29 July 2012).

- Pischke, F.; Cashmore, M. Decision-oriented environmental assessment: An empirical study of its theory and methods. Environ. Impact Asses. 2006, 26, 643–662. [Google Scholar] [CrossRef]

- Bangor, A.; Kortum, P.T.; Miller, J.A. An empirical evaluation of the System Usability Scale (SUS). Int. J. Human-Comput. Interact. 2008, 24, 574–594. [Google Scholar] [CrossRef]

- Cutter, S.L.; Mitchell, J.T.; Scott, M.S. Revealing the vulnerability of people and places: A case study of Georgetown County, South Carolina. Ann. Assoc. Am. Geogr. 2000, 90, 713–737. [Google Scholar] [CrossRef]

- Gouveia, C.; Fonseca, A. New approaches to environmental monitoring: The use of ICT to explore volunteered geographic information. GeoJournal 2008, 72, 185–197. [Google Scholar] [CrossRef]

- Elwood, S. Volunteered geographic information: Key questions, concepts and methods to guide emerging research and practice. GeoJournal 2008, 72, 133–135. [Google Scholar] [CrossRef]

- Schade, S.; Díaz, L.; Ostermann, F.; Spinsanti, L.; Luraschi, G.; Cox, S.; Nuñez, M.; de Longueville, B. Citizen-based sensing of crisis events: Sensor web enablement for volunteered geographic information. Appl. Geomat. 2011, 5, 3–18. [Google Scholar]

- Yanenko, O.; Schlieder, C. Enhancing the Quality of Volunteered Geographic Information: A Constraint-Based Approach. In Bridging the Geographic Information Sciences; Gensel, J., Josselin, D., Vandenbroucke, D., Eds.; Springer-Verlag: Berlin/Heidelberg, Germany, 2012; pp. 429–446. [Google Scholar]

- Bishr, M.; Mantelas, L. A trust and reputation model for filtering and classifying knowledge about urban growth. GeoJournal 2008, 72, 229–237. [Google Scholar] [CrossRef]

- Mummidi, L.; Krumm, J. Discovering points of interest from users’ map annotations. GeoJournal 2008, 72, 215–227. [Google Scholar] [CrossRef]

- Lee, V. Volunteer Monitoring: A Brief History. Available online: http://water.epa.gov/type/rsl/monitoring/upload/2004_10_13_monitoring_volunteer_newsletter_volmon06no1.pdf (accessed on 2 April 2013).

- De Longueville, B.; Annoni, A.; Schade, S.; Ostländer, N.; Whitmore, C. Digital Earth’s nervous system for crisis events: Real-time sensor web enablement of Volunteered Geographic Information. Int. J. Digital Earth 2010, 3, 242–259. [Google Scholar]

- Girres, J.-F.; Touya, G. Quality assessment of the French OpenStreetMap dataset. Trans. GIS 2010, 14, 435–459. [Google Scholar] [CrossRef]

- Connors, J.P.; Lei, S.; Kelly, M. Citizen science in the age of Neogeography: Utilizing volunteered geographic information for environmental monitoring. Ann. Assoc. Am. Geogr. 2012, 102, 1267–1289. [Google Scholar] [CrossRef]

- O’Faircheallaigh, C. Public participation and environmental impact assessment: Purpose, implications, and lessons for public policy making. Environ. Impact Asses. 2010, 30, 19–27. [Google Scholar] [CrossRef]

- Oden, M.D.; Lentz, R.G. Digital divide or digital opportunity in the Mississippi delta region of the US. Telecommun. Policy 2001, 25, 291–313. [Google Scholar] [CrossRef]

© 2013 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).


Lei, L.; Hilton, B. A Spatially Intelligent Public Participation System for the Environmental Impact Assessment Process. ISPRS Int. J. Geo-Inf. 2013, 2, 480-506. https://doi.org/10.3390/ijgi2020480
