Enhancing Electric Vehicle Charging Infrastructure Planning with Pre-Trained Language Models and Spatial Analysis: Insights from Beijing User Reviews

Abstract

1. Introduction

2. Data Collection and Processing

2.1. Data Acquisition

2.2. Data Statistics and Pre-Processing

2.3. Data Annotation Strategy

2.3.1. Sentiment Labeling

2.3.2. Topic Labeling

2.4. Descriptive Analysis of Data

3. Methods

3.1. General Framework

3.2. Model Architecture

| Algorithm 1: Multi-task PLM framework |
|---|
| 1: Initialize model parameters (PLM encoder and classification head) |
| 2: Initialize AdamW optimizer with learning rate and weight decay |
| 3: for each epoch do: |
| 4: for each training batch do: |
| 5: Obtain contextualized hidden states from the PLM encoder |
| 6: if [CLS] pooling is used then: |
| 7: Aggregate representation using the [CLS] token |
| 8: else if attention pooling is used then: |
| 9: Compute attention scores |
| 10: Compute attention weights |
| 11: Create context vector |
| end if |
| 12: Pass pooled vector through the final linear layer to get logits |
| 13: if the task is single-label then: |
| 14: Apply softmax to get probabilities |
| 15: Compute Cross-Entropy Loss |
| 16: else if the task is multi-label then: |
| 17: Compute Binary Cross-Entropy with Logits Loss |
| 18: end if |
| 19: Perform backpropagation to compute gradients |
| 20: Update model parameters using the optimizer |
| 21: end for |
| 22: end for |
| 23: return the trained model parameters |
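
The pooling and loss-selection steps of Algorithm 1 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the attention score is assumed to be a dot product with a learned vector `v`, position 0 is assumed to hold the [CLS] token, and all function names are hypothetical. Backpropagation and the AdamW update (steps 19 and 20) are left to a deep learning framework and are not shown.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cls_pool(hidden):
    """Step 7: use the first token vector (assumed to be [CLS])."""
    return hidden[0]


def attention_pool(hidden, v):
    """Steps 9-11 with an ASSUMED scoring function: score_t = v . h_t."""
    scores = [sum(h_i * v_i for h_i, v_i in zip(h, v)) for h in hidden]
    weights = softmax(scores)
    dim = len(hidden[0])
    # Context vector: attention-weighted sum of the token vectors.
    return [sum(w * h[d] for w, h in zip(weights, hidden)) for d in range(dim)]


def linear(x, W, b):
    """Step 12: final linear layer producing one logit per class or label."""
    return [sum(w_i * x_i for w_i, x_i in zip(row, x)) + b_k
            for row, b_k in zip(W, b)]


def cross_entropy(logits, target):
    """Steps 14-15: softmax followed by negative log-likelihood."""
    return -math.log(softmax(logits)[target])


def bce_with_logits(logits, targets):
    """Step 17: mean binary cross-entropy computed directly from logits."""
    losses = [max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))
              for z, y in zip(logits, targets)]
    return sum(losses) / len(losses)


def forward_loss(hidden, W, b, target, task, pooling="cls", v=None):
    """Steps 5-17 for one example; `task` is 'single_label' or 'multi_label'."""
    pooled = cls_pool(hidden) if pooling == "cls" else attention_pool(hidden, v)
    logits = linear(pooled, W, b)
    if task == "single_label":
        return cross_entropy(logits, target)
    return bce_with_logits(logits, target)
```

With all-zero weights the losses reduce to their chance-level values (log of the number of classes for cross-entropy, log 2 for binary cross-entropy), which gives a quick sanity check of the sketch.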

3.3. Experimental Setup

3.3.1. Evaluation Indicators

3.3.2. Hyperparameterization

4. Results and Discussion

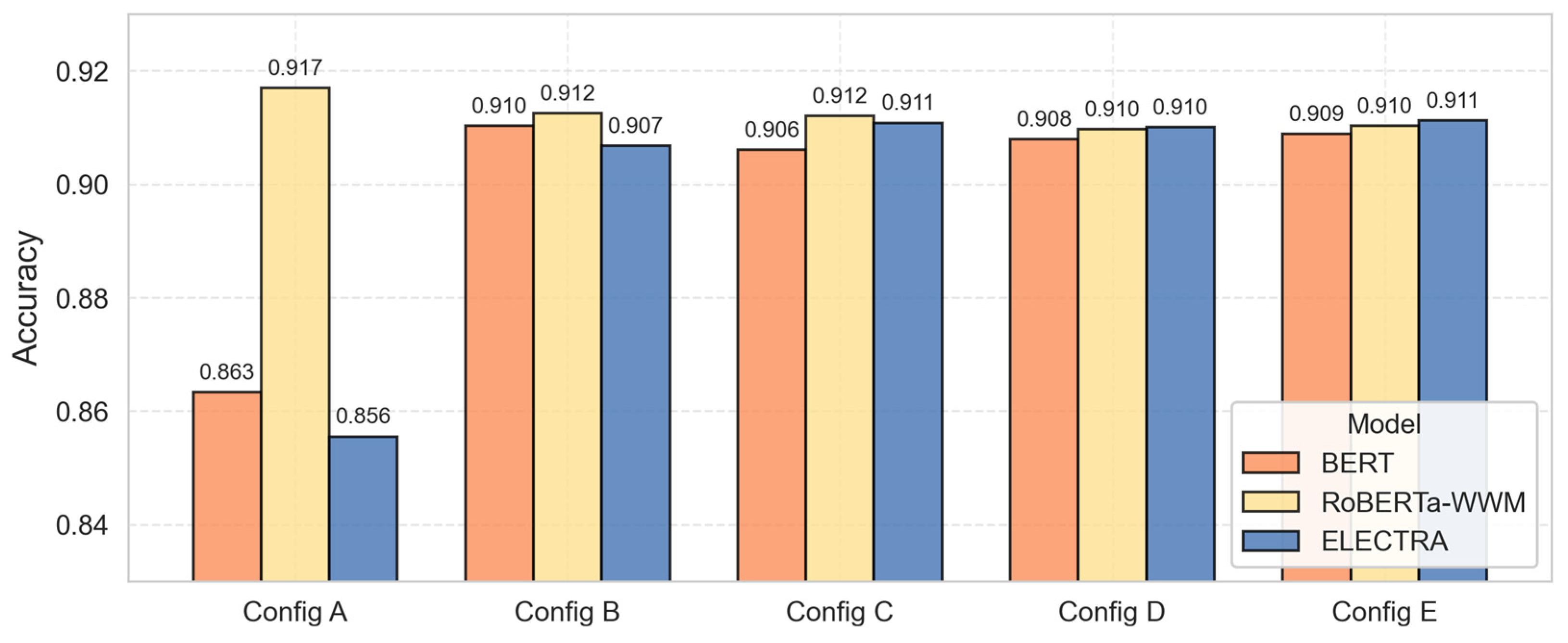

4.1. Overall Model Performance Comparison

4.2. Sentiment Analysis Results

4.2.1. Model Performance on Sentiment Recognition

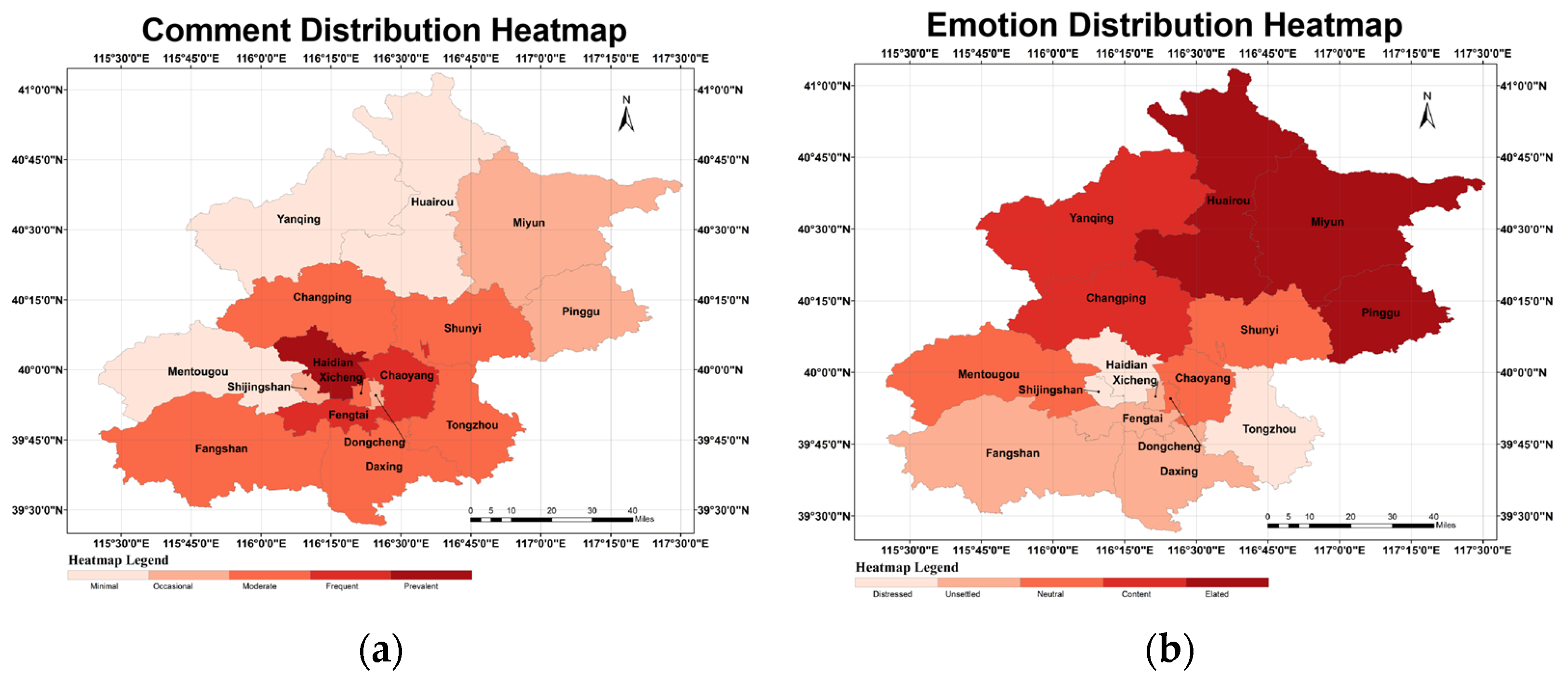

4.2.2. Spatial Distribution of Sentiment Patterns

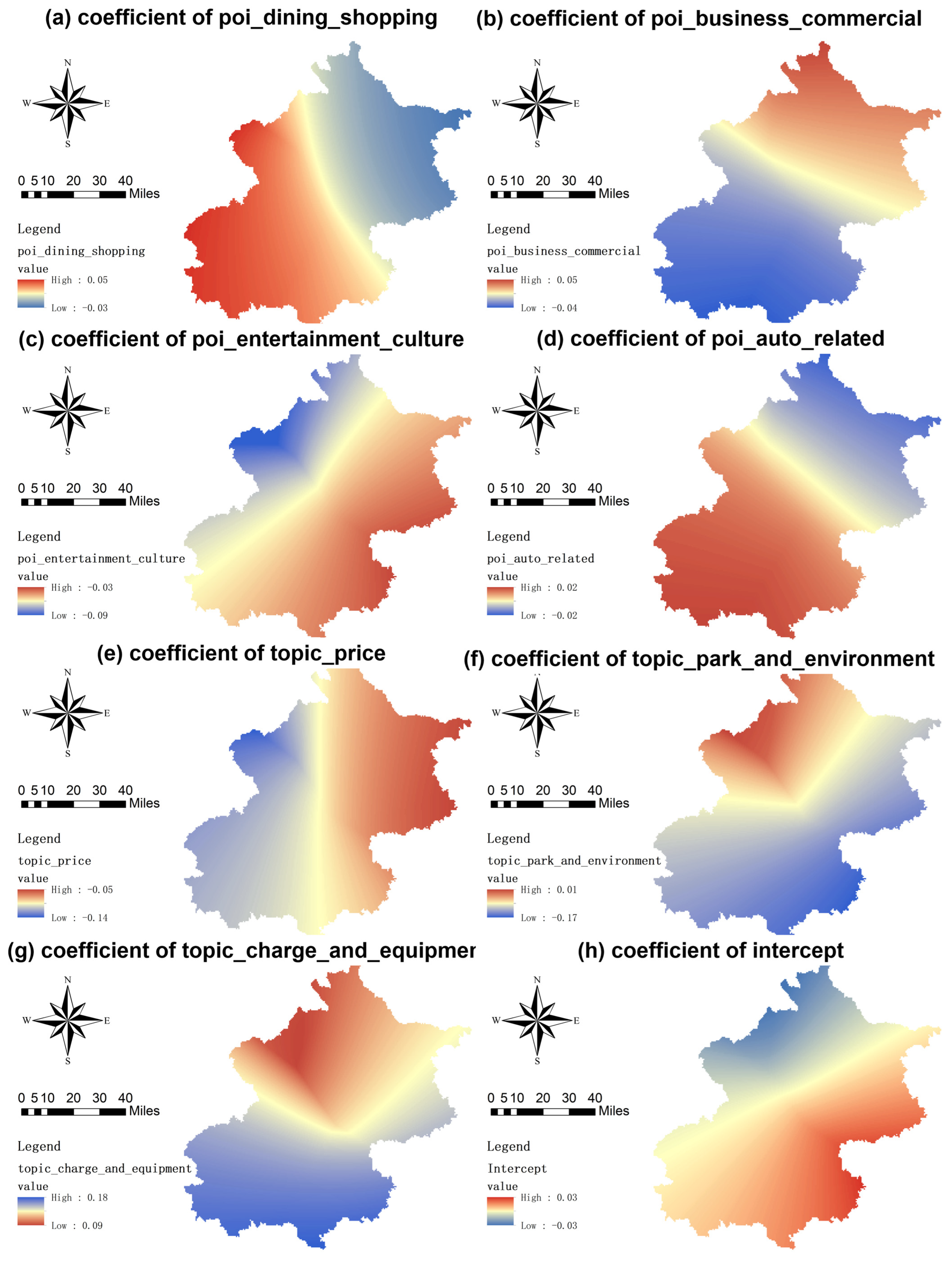

4.2.3. Geographically Weighted Regression Analysis

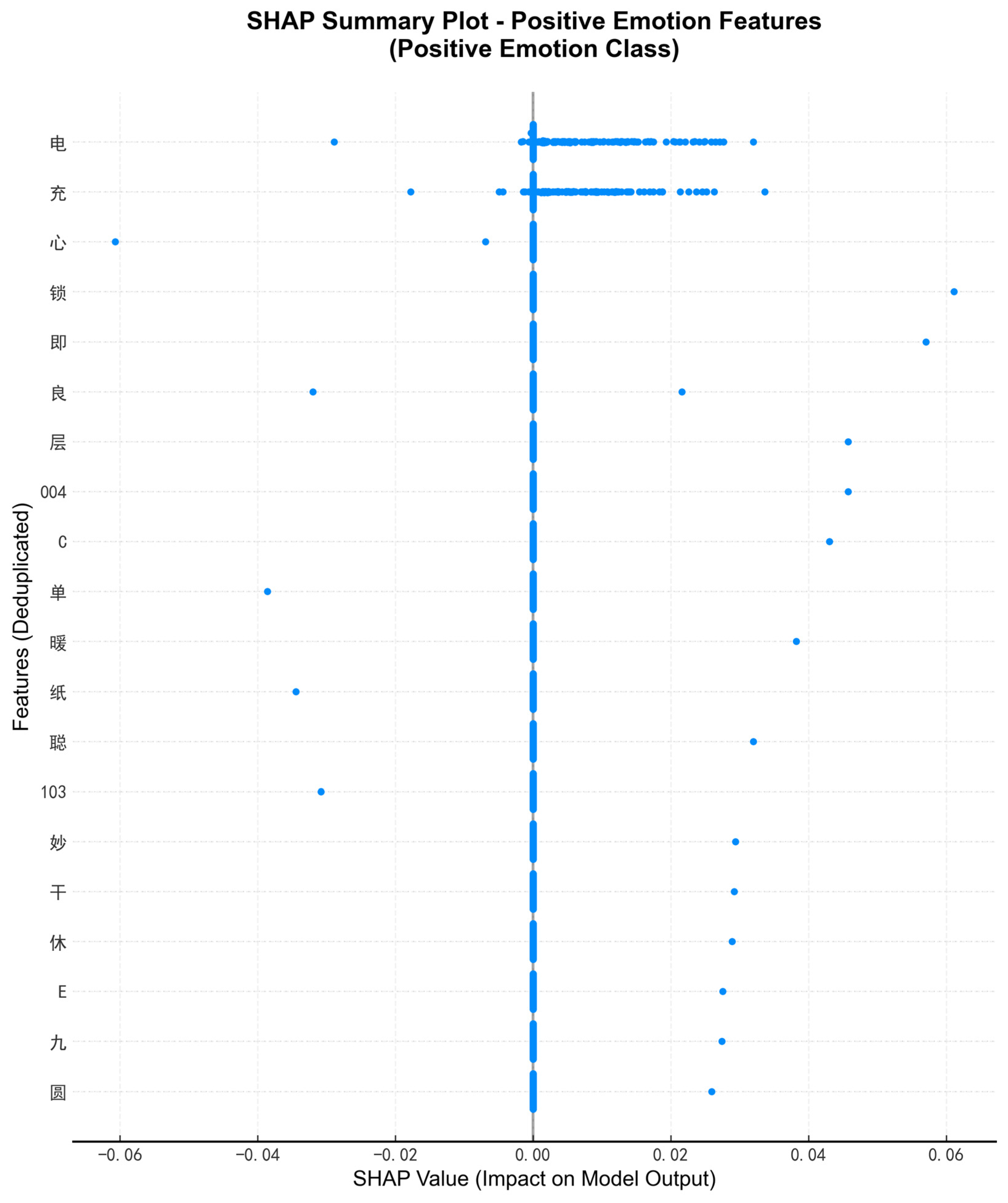

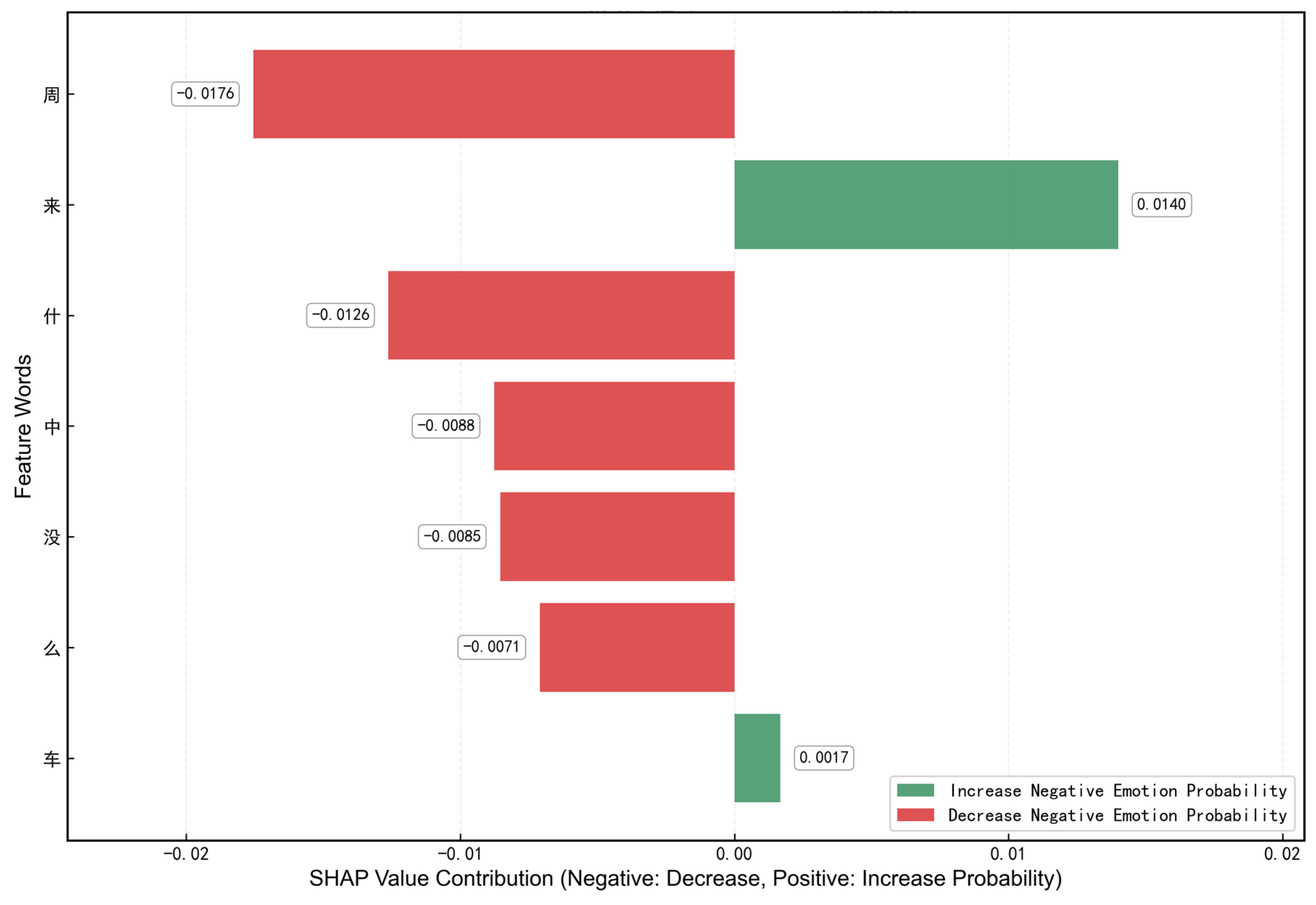

4.3. Model Interpretability Analysis Using SHAP

4.4. Topic Analysis Results

4.4.1. Fine-Grained vs. Coarse-Grained Topic Recognition Performance

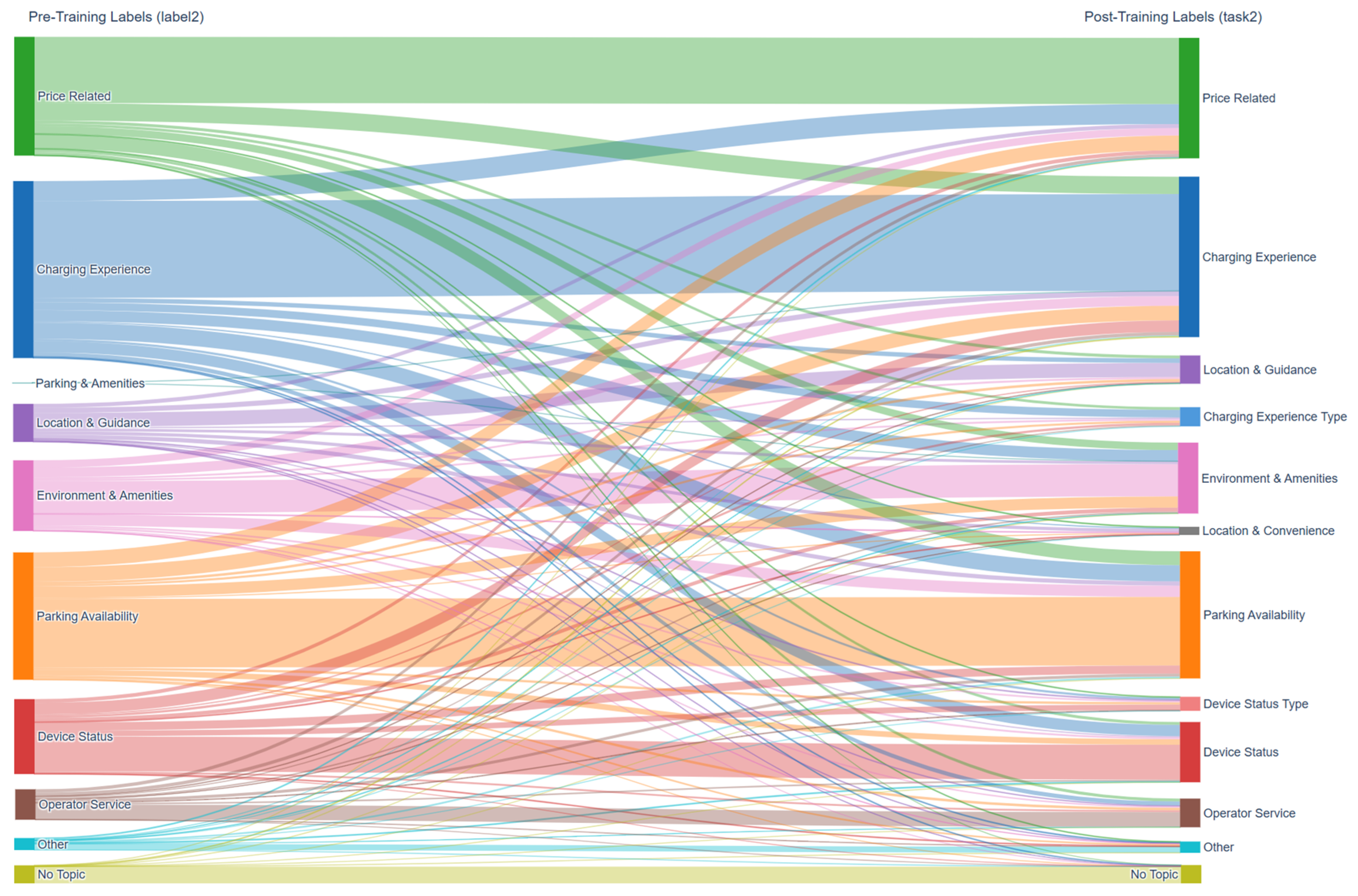

4.4.2. Model-Driven Label Taxonomy Normalization

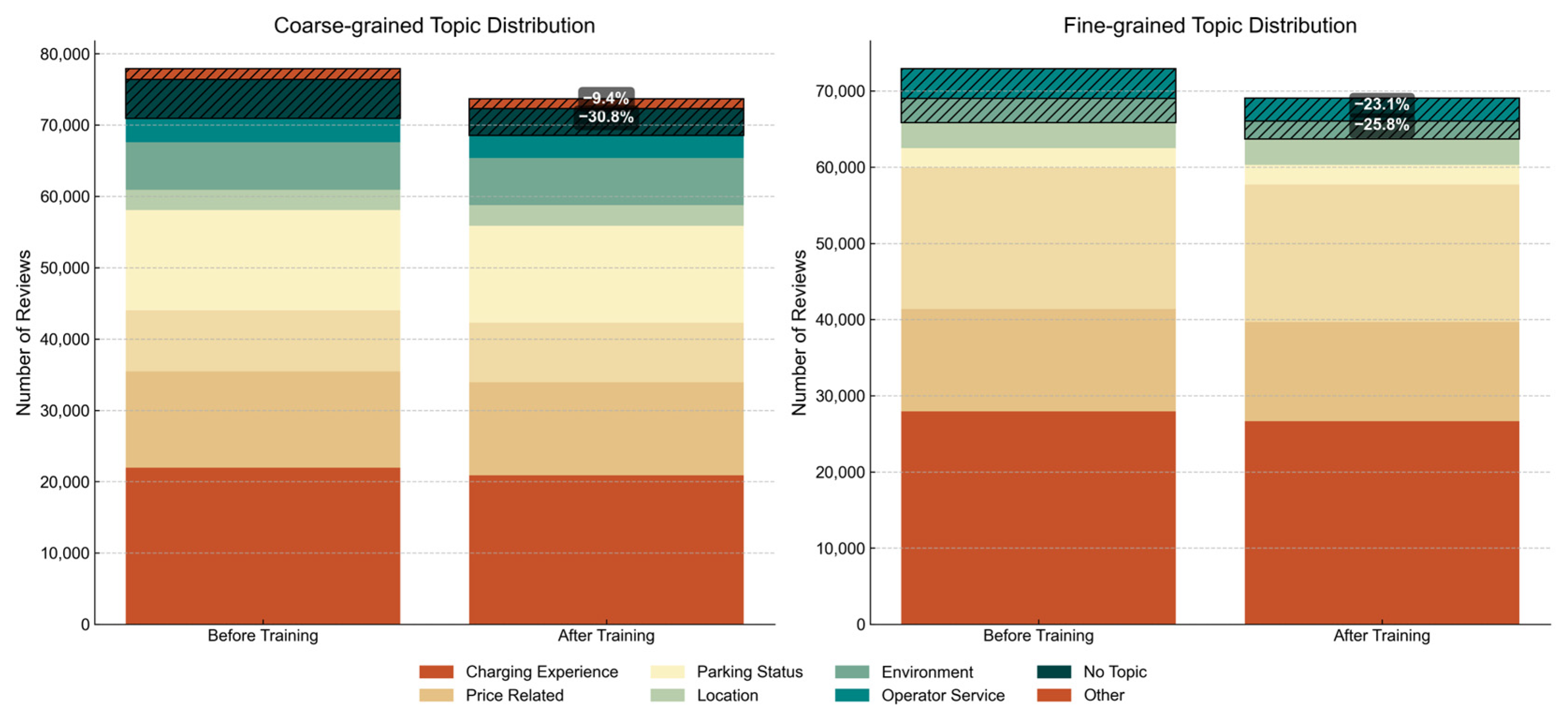

4.4.3. Topic Denoising and Information Enhancement

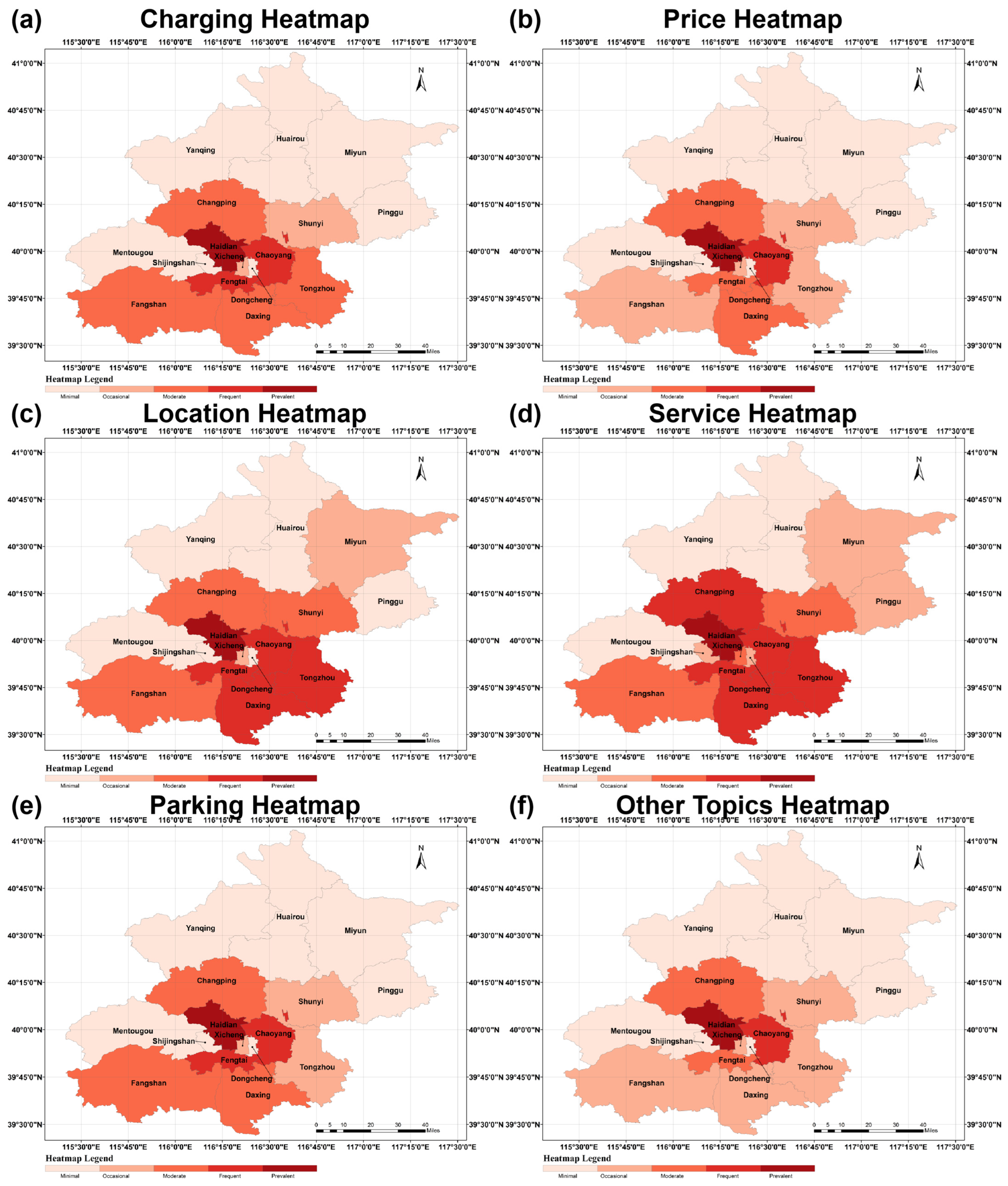

4.5. Spatial Analysis and Application Insights

4.5.1. Spatial Clustering of Charging Station Topics

4.5.2. Regional Anomalies and Their Implications

4.5.3. Correlation Between Topics and Spatial Features

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Sentiment Label | Basis of Judgment | Typical Example | Typical Example (English) |
|---|---|---|---|
| Emotionally Positive | Comments that express positive emotions, such as satisfaction and appreciation. | 很赞,最近一年多都在用 | Great, I’ve been using it for over a year now. |
| Emotionally Negative | Comments that express dissatisfaction, complaints, and other negative emotions. | 一个半小时都充不满,太慢了浪费时间 | Takes an hour and a half and still can’t fully charge, way too slow and a waste of time. |
| Emotionally Neutral | Comments that contain no explicit emotional tendency, or that are objective statements. | 应该是新开的站吧,桩子挺多,地方不是太大,错车要注意安全 | Must be a newly opened station: lots of chargers, but the space isn’t very big, so be careful when passing other cars. |
| Invalid Feedback | Comments whose content is not related to the charging service or that do not contain substantive information. | 1,234,567,890 | 1,234,567,890 |

| Level 1 Topic | Secondary Topic | Key Content Points and Examples | Typical Example | Typical Example (English) |
|---|---|---|---|---|
| Charging experience | Smooth charging | Fast charging, easy operation, and smooth process. | 充电真心慢 一个小时充了百分之35 | Charging is really slow, only 35% in an hour. |
| | Charging problems | Slow charging, interruption or charging gun disconnecting, charging failure, unstable power, and vehicle-model incompatibility. | | |
| Condition of equipment | Good equipment | Adequate equipment, easy to use, neat appearance, clear screen. Example: “Good facilities”. | 第一个老卡不上,换了一个,对女生可能还是费劲点 | The first one kept getting stuck, switched to another, still a bit tough for girls. |
| | Equipment issues | The equipment is damaged, in short supply, outdated, defective in design, or inconvenient to use. | | |
| Location and convenience | Good location | Charging stations are conveniently located and easy to find, navigation is accurate, and parking spaces are close to the charging stations. | 很好找,每层停车场入口就能看见。 | Easy to find, visible from the entrance on every parking level. |
| | Location issues | Charging stations are hard to find, navigation is incorrect, and entrances are challenging to locate. | | |
| | Clear guidance | Station directions are clear, app navigation is accurate, and faulty charging piles are marked. | | |
| | Lack of guidance | Confusing station directions, incorrect app navigation, and unmarked faulty charging piles. | | |
| Price-related | Reasonable price | Affordable, cost-effective, and discounted. | 停车费太贵了12一小时了 | Parking fee is too expensive, 12 per hour. |
| | Price issues | Charging, service, and parking fees are expensive, opaque, or inaccurately calculated. | | |
| Parking spaces | Good parking | Plenty of parking, free parking, and well-managed dedicated spaces. | 油车占位现象严重,应加强管理 | Gas cars occupying spots is a serious issue, management should improve. |
| | Parking problems | Parking spaces are tight; you have to wait in line; gasoline cars occupy charging spaces; parking fees are high; cars remain parked after charging is complete. | | |
| Environment and supporting facilities | Favorable environment | The environment is clean, brightly lit, sheltered, and well-appointed. Example: “There are restrooms”. | 环境不错不错不错不错不错 | The environment is nice nice nice nice nice. |
| | Environmental issues | The environment is dirty, dimly lit, and lacks supporting facilities, with a poor Internet signal. | | |
| Operator services | Good service | Customer service is responsive, the staff is committed, the app is stable, and discounts are genuine. | 总得来说满意 余额目前没发现怎能退回 | Overall satisfied, haven’t figured out how to refund the balance yet. |
| | Service issues | Slow customer service response, employee negligence, a hard-to-use app, and payment, billing, balance, and membership issues. | | |
| Other | Other issues | Specific information of value to other users that is not included in the above categorization. | 我真的不想评论的 烦人 | I really didn’t want to comment, so annoying. |
| Untitled | Untitled | No clear topic | 不错不错不错不错不错 | Nice. Nice. Nice. Nice. Nice. |

| Secondary Topic | Scope of Topics Covered at the First Level | Typical Example | Typical Example (English) |
|---|---|---|---|
| Charging experience and equipment | Charging experience + condition of equipment | 有一把枪用不了了,管理员赶紧修修吧 | There’s a charging gun that’s not working, please fix it quickly, administrator. |
| Location and guidance | Location and guidance | 商场环境好车位充足,交通便利 | The mall has a great environment with plenty of parking spaces and convenient transportation. |
| Price-related | Price-related | 太贵了 比国家电网的贵太多了 | It’s too expensive, much pricier than State Grid. |
| Parking and supporting environment | Parking spaces + environment and supporting facilities | 全是油车占位 | All the spots are taken by gas cars. |
| Operator service-related | Operator services | 把红包用了,还有几块钱就得了,可能不来了。 | I used up the coupon; once the last few yuan are spent that’s it, and I might not come again. |
| Other | Other | 这个月就可以做出一些改变 | This month, we can make some changes. |
| Untitled | Untitled | 好满意 | So satisfied. |
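
The merging of first-level topics into the coarser categories listed in the table can be expressed as a simple lookup. The sketch below is illustrative only: the snake_case identifiers are hypothetical shorthand for the topic names in the two tables, and the multi-hot encoding is an assumed (though common) way of preparing targets for a multi-label classifier.

```python
# Hypothetical short identifiers for the first-level topics and the merged
# coarse topics; the grouping itself follows the table above.
LEVEL1_TO_COARSE = {
    "charging_experience": "charging_and_equipment",
    "equipment_condition": "charging_and_equipment",
    "location_convenience": "location_and_guidance",
    "price": "price",
    "parking": "parking_and_environment",
    "environment_facilities": "parking_and_environment",
    "operator_service": "operator_service",
    "other": "other",
    "untitled": "untitled",
}

# Coarse labels in the order in which the table lists them.
COARSE_LABELS = [
    "charging_and_equipment",
    "location_and_guidance",
    "price",
    "parking_and_environment",
    "operator_service",
    "other",
    "untitled",
]


def to_coarse(level1_labels):
    """Map a set of first-level topic labels to the set of coarse labels."""
    return {LEVEL1_TO_COARSE[label] for label in level1_labels}


def multi_hot(labels, vocabulary):
    """Encode a label set as a 0/1 vector over a fixed label vocabulary."""
    return [1 if name in labels else 0 for name in vocabulary]


# Example: a review tagged with both charging and equipment topics
# collapses to a single coarse label.
review_topics = {"charging_experience", "equipment_condition"}
coarse = to_coarse(review_topics)
target = multi_hot(coarse, COARSE_LABELS)
```

Because several first-level topics share one coarse label, the coarse target vector can have fewer active entries than the fine-grained one for the same review.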

| Task Type | Metric | Formula | Interpretation |
|---|---|---|---|
| Sentiment analysis (single-label) | Accuracy | | Overall classification correctness |
| | Macro-F1 | | Unweighted average F1 across classes |
| | Weighted-F1 | | Support-weighted F1 across classes |
| Topic classification (multi-label) | Micro-F1 | | Global F1 aggregating all labels |
| | Macro-F1 | | Unweighted average F1 across labels |
| | Sample-F1 | | Average F1 per sample instance |
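
The six metrics in the table can be computed from true-positive, false-positive, and false-negative counts using their standard definitions. The following is a minimal sketch with hypothetical function names; it assumes that F1 is taken as 0 when a class, label, or sample has no true positives, false positives, or false negatives, which is a convention rather than something stated in the source.

```python
def f1(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN); defined as 0.0 when the denominator is 0."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def single_label_scores(y_true, y_pred):
    """Accuracy, macro-F1, and support-weighted F1 for single-label data."""
    classes = sorted(set(y_true) | set(y_pred))
    n = len(y_true)
    pairs = list(zip(y_true, y_pred))
    accuracy = sum(t == p for t, p in pairs) / n
    per_class, support = {}, {}
    for c in classes:
        tp = sum(t == c and p == c for t, p in pairs)
        fp = sum(t != c and p == c for t, p in pairs)
        fn = sum(t == c and p != c for t, p in pairs)
        per_class[c] = f1(tp, fp, fn)
        support[c] = sum(t == c for t in y_true)
    macro = sum(per_class.values()) / len(classes)
    weighted = sum(per_class[c] * support[c] for c in classes) / n
    return {"accuracy": accuracy, "macro_f1": macro, "weighted_f1": weighted}


def _counts(true_bits, pred_bits):
    """Return (TP, FP, FN) for two aligned 0/1 sequences."""
    tp = sum(t == 1 and p == 1 for t, p in zip(true_bits, pred_bits))
    fp = sum(t == 0 and p == 1 for t, p in zip(true_bits, pred_bits))
    fn = sum(t == 1 and p == 0 for t, p in zip(true_bits, pred_bits))
    return tp, fp, fn


def multi_label_scores(Y_true, Y_pred):
    """Micro-, macro-, and sample-F1 for 0/1 matrices (rows are samples)."""
    n_labels = len(Y_true[0])
    label_counts = [
        _counts([row[j] for row in Y_true], [row[j] for row in Y_pred])
        for j in range(n_labels)
    ]
    # Micro: pool the counts over all labels before computing F1.
    micro = f1(*[sum(c[k] for c in label_counts) for k in range(3)])
    # Macro: compute F1 per label, then average without weighting.
    macro = sum(f1(*c) for c in label_counts) / n_labels
    # Sample: compute F1 per row (sample), then average over samples.
    sample = sum(f1(*_counts(t, p)) for t, p in zip(Y_true, Y_pred)) / len(Y_true)
    return {"micro_f1": micro, "macro_f1": macro, "sample_f1": sample}
```

Micro-F1 is dominated by frequent labels because counts are pooled, whereas macro-F1 gives rare labels equal weight, so reporting both exposes performance on infrequent topics.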

| Hyperparameter | Config A | Config B | Config C | Config D | Config E |
|---|---|---|---|---|---|
| Max sequence length | 128 | 128 | 256 | 128 | 128 |
| Batch size | 64 | 128 | 32 | 64 | 64 |
| Learning rate | 2 × 10⁻⁵ | 1 × 10⁻⁵ | 2 × 10⁻⁵ | 2 × 10⁻⁵ | 2 × 10⁻⁵ |
| Epochs | 20 | 20 | 20 | 20 | 30 |
| Dropout rate | 0.1 | 0.1 | 0.2 | 0.1 | 0.1 |
| Loss weights (S, CT, FT *) | [1.0, 1.0, 1.0] | [1.0, 1.0, 1.0] | [1.0, 1.0, 1.0] | [1.5, 1.0, 1.0] | [1.0, 1.0, 1.0] |
| Patience | 3 | 3 | 3 | 3 | 5 |
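
The five configurations can be captured as a small data structure, together with one plausible reading of the loss weights and the patience setting. This sketch rests on several assumptions that the table does not confirm: that S, CT, and FT denote the sentiment, coarse-grained topic, and fine-grained topic tasks; that the total loss is a weighted sum of the per-task losses; and that patience counts consecutive epochs without improvement in a validation score before training stops. All class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    max_seq_len: int
    batch_size: int
    learning_rate: float
    epochs: int
    dropout: float
    loss_weights: tuple  # (S, CT, FT), assumed task order
    patience: int


# Values transcribed from the hyperparameter table.
CONFIGS = {
    "A": TrainConfig(128, 64, 2e-5, 20, 0.1, (1.0, 1.0, 1.0), 3),
    "B": TrainConfig(128, 128, 1e-5, 20, 0.1, (1.0, 1.0, 1.0), 3),
    "C": TrainConfig(256, 32, 2e-5, 20, 0.2, (1.0, 1.0, 1.0), 3),
    "D": TrainConfig(128, 64, 2e-5, 20, 0.1, (1.5, 1.0, 1.0), 3),
    "E": TrainConfig(128, 64, 2e-5, 30, 0.1, (1.0, 1.0, 1.0), 5),
}


def total_loss(task_losses, weights):
    """ASSUMED combination rule: weighted sum of the per-task losses."""
    return sum(w * loss for w, loss in zip(weights, task_losses))


class EarlyStopper:
    """Stop after `patience` consecutive epochs without a new best score."""

    def __init__(self, patience):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def should_stop(self, score):
        if score > self.best:
            self.best = score
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Example: Config D up-weights the first task by a factor of 1.5.
cfg = CONFIGS["D"]
combined = total_loss((0.5, 0.2, 0.3), cfg.loss_weights)
```

Under this reading, Config D differs from Config A only in emphasizing the first task, and Config E only in allowing longer training with a more tolerant stopping rule.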


© 2025 by the authors. Published by MDPI on behalf of the International Society for Photogrammetry and Remote Sensing. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Hou, Y.; Wang, P.; Yao, Z.; Zheng, X.; Chen, Z. Enhancing Electric Vehicle Charging Infrastructure Planning with Pre-Trained Language Models and Spatial Analysis: Insights from Beijing User Reviews. ISPRS Int. J. Geo-Inf. 2025, 14, 325. https://doi.org/10.3390/ijgi14090325
