A Novel Multirobot System for Plant Phenotyping †

Abstract

1. Introduction

2. Materials and Methods

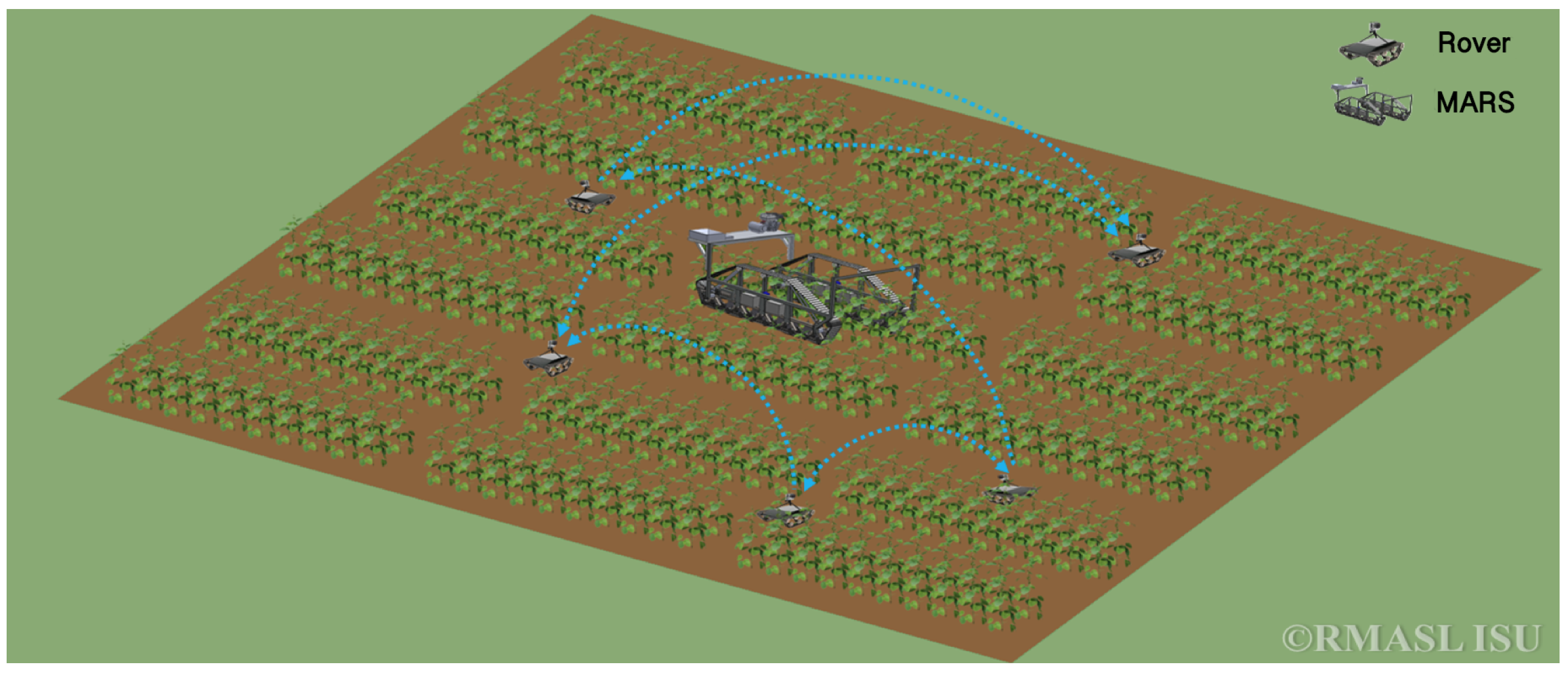

- Smaller lightweight robots equipped with low-resolution sensors that acquire frequent measurements and infer changes that take place at small time scales. We refer to them as rovers.

- A mobile platform carrying high-resolution sensors for accurate plant disease detection and analysis of the spread of disease. The platform can be autonomous or guided by a human. For example, in [14], we present the construction of a Modular Autonomous Rover for Sensing (MARS) that uses the information provided by the rovers to capture hyperspectral images of plants.

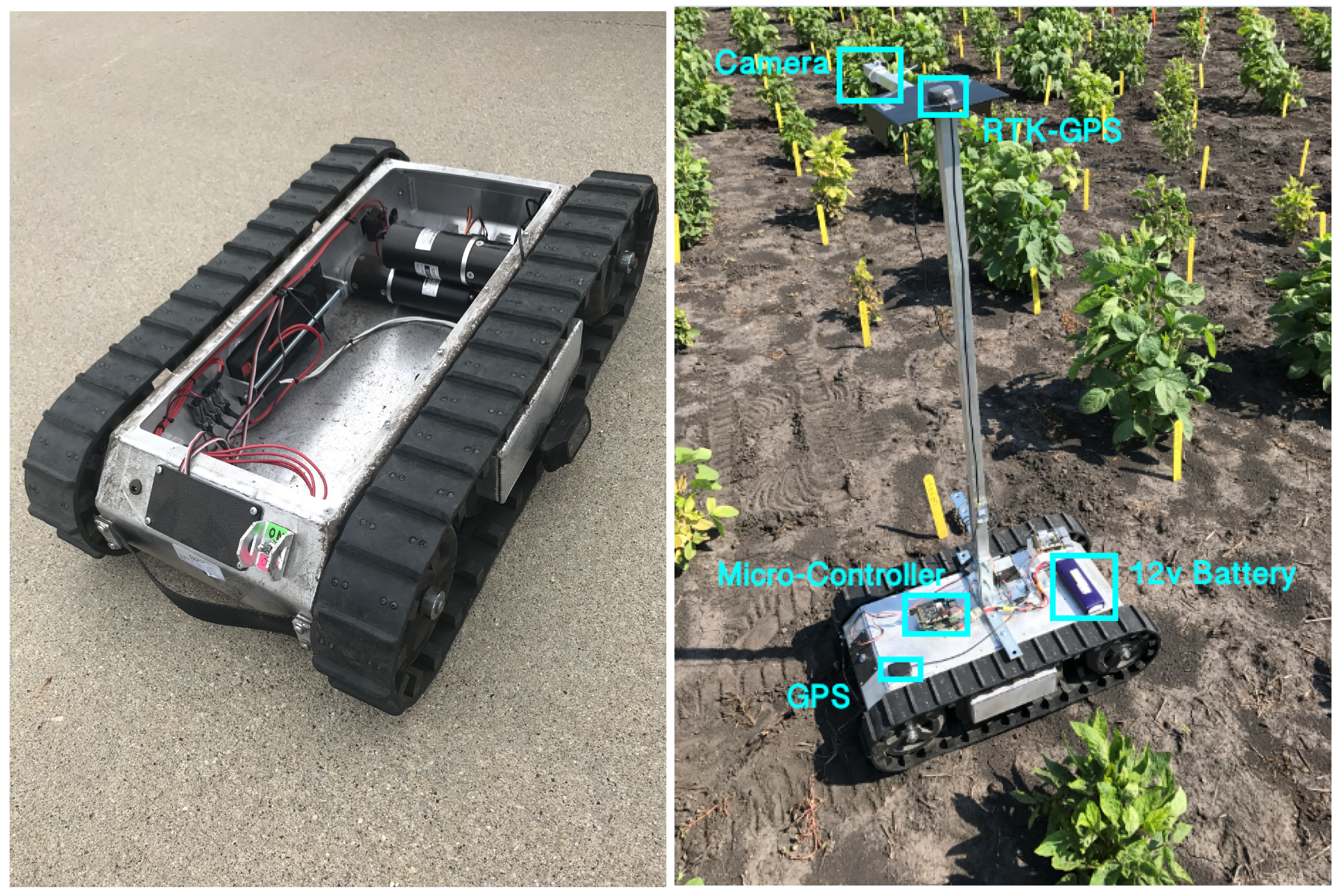

2.1. Robot Configurations

System Requirements for Rover

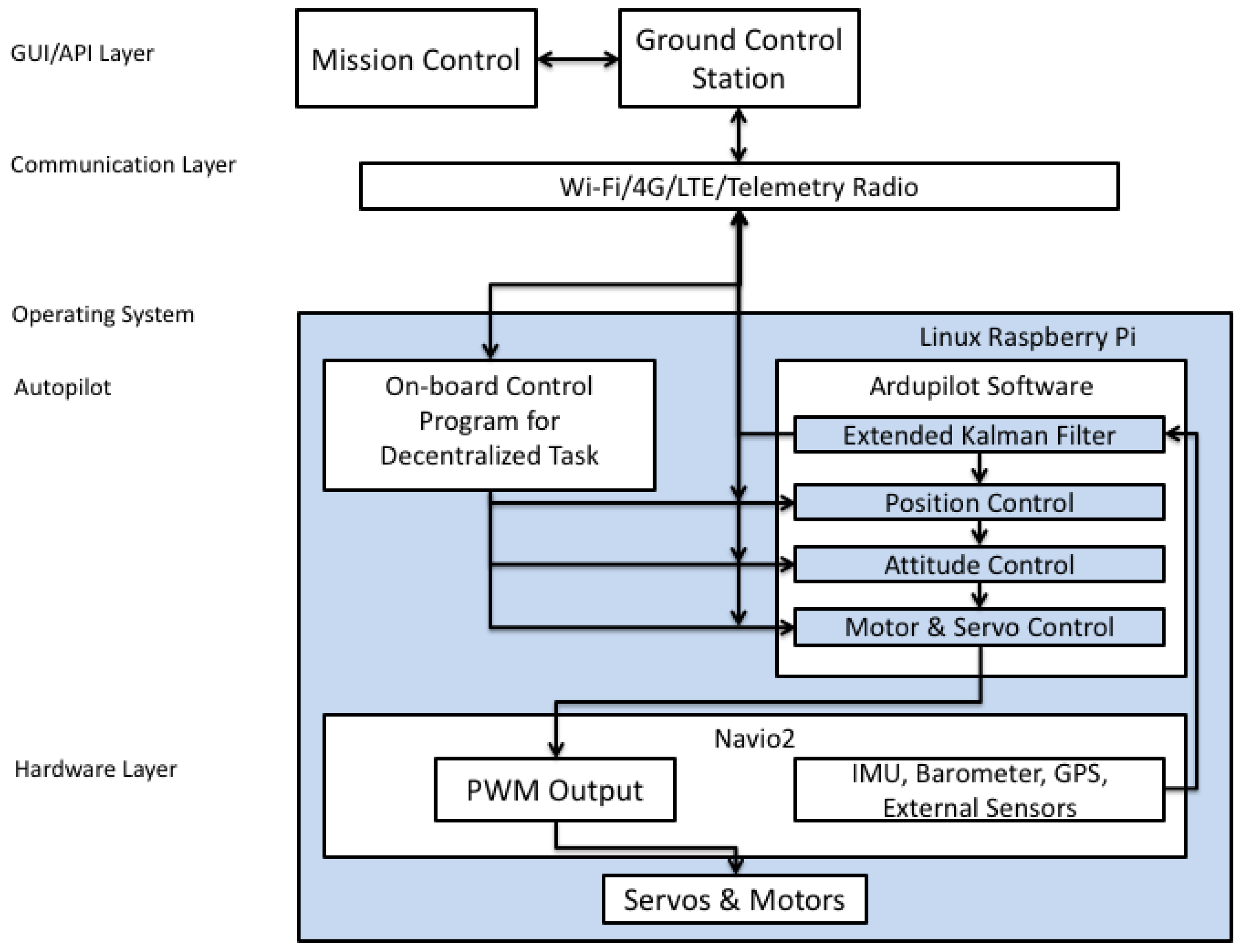

2.2. System Architecture

2.3. Micro-Controller and Sensors

2.4. Middleware and Architecture

2.5. Current Application

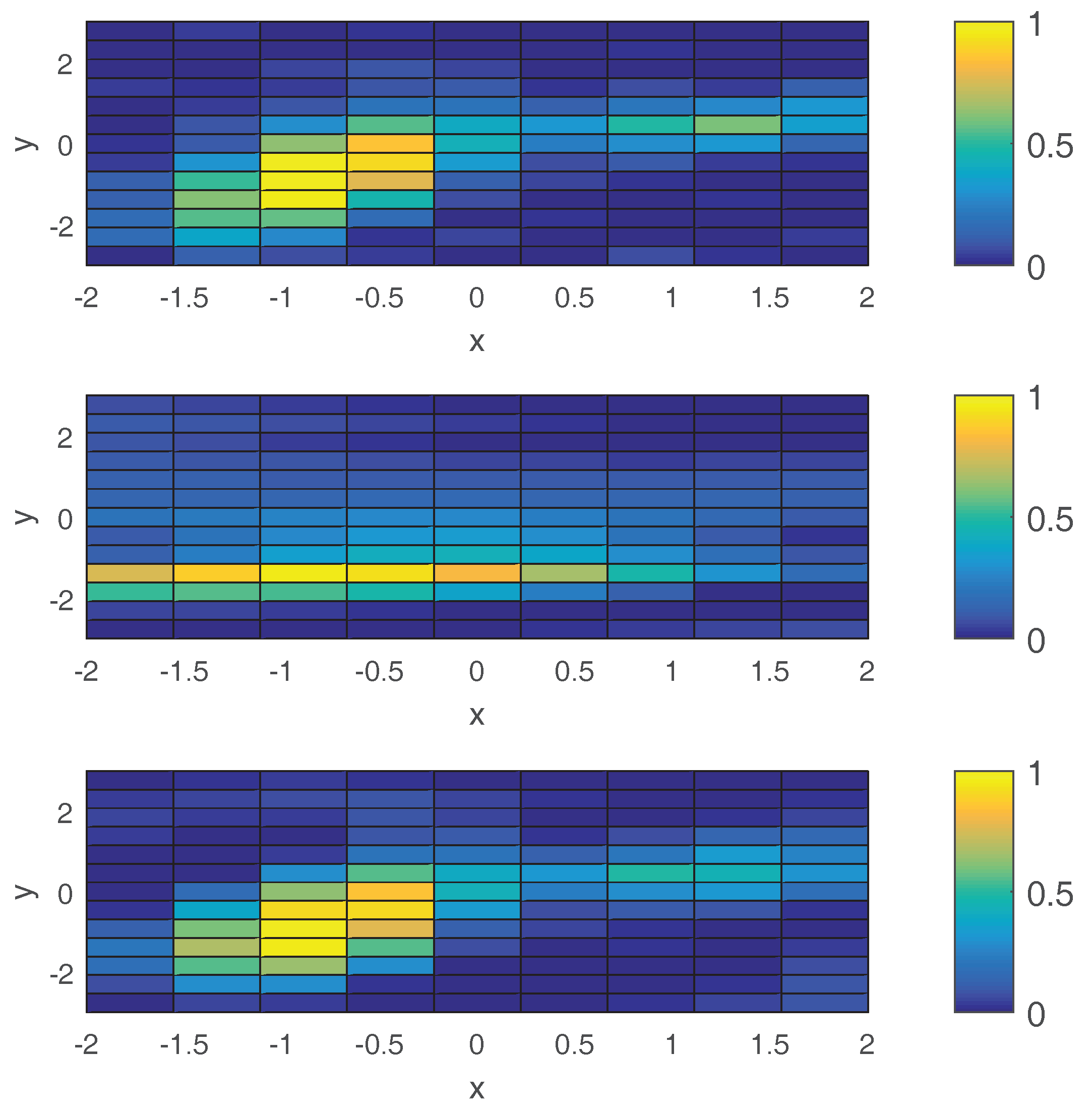

2.5.1. Gaussian Process

- be training input;

- be test input;

- be function values corresponding to test input;

- be training output;
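The quantities listed above (training input, test input, function values at the test input, and training output) are the usual ingredients of Gaussian process regression. The following is a minimal sketch of the standard posterior computation, not the implementation used on the rovers. The names `X`, `y`, `X_star`, the squared-exponential kernel, and the parameters `length_scale`, `signal_var`, and `noise_var` are all assumptions introduced for illustration; inputs are taken to be scalars to keep the example short.

```python
import math

def rbf_kernel(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential covariance between two scalar inputs (assumed kernel)."""
    return signal_var * math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def cholesky(A):
    """Lower-triangular L with L L^T = A, for symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def solve_lower(L, b):
    """Solve L z = b by forward substitution."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z

def solve_upper_from_lower(L, z):
    """Solve L^T x = z by back substitution."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(L[k][i] * x[k] for k in range(i + 1, n))
        x[i] = (z[i] - s) / L[i][i]
    return x

def gp_posterior(X, y, X_star, noise_var=1e-6, **kernel_args):
    """Posterior mean and variance of the function value at each test input.

    X: training inputs, y: training outputs, X_star: test inputs
    (hypothetical names; all scalar-valued in this sketch).
    """
    # Covariance of the training inputs plus observation noise on the diagonal.
    K = [[rbf_kernel(a, b, **kernel_args) + (noise_var if i == j else 0.0)
          for j, b in enumerate(X)] for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper_from_lower(L, solve_lower(L, y))  # (K + noise I)^{-1} y
    means, variances = [], []
    for xs in X_star:
        k_star = [rbf_kernel(xs, a, **kernel_args) for a in X]
        v = solve_lower(L, k_star)
        means.append(sum(ks * al for ks, al in zip(k_star, alpha)))
        variances.append(rbf_kernel(xs, xs, **kernel_args) - sum(vi * vi for vi in v))
    return means, variances
```

With a small noise term, the posterior mean at a training input stays close to the observed output and the variance there is close to zero, while far from all training inputs the prediction falls back to the prior (zero mean, variance `signal_var`).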

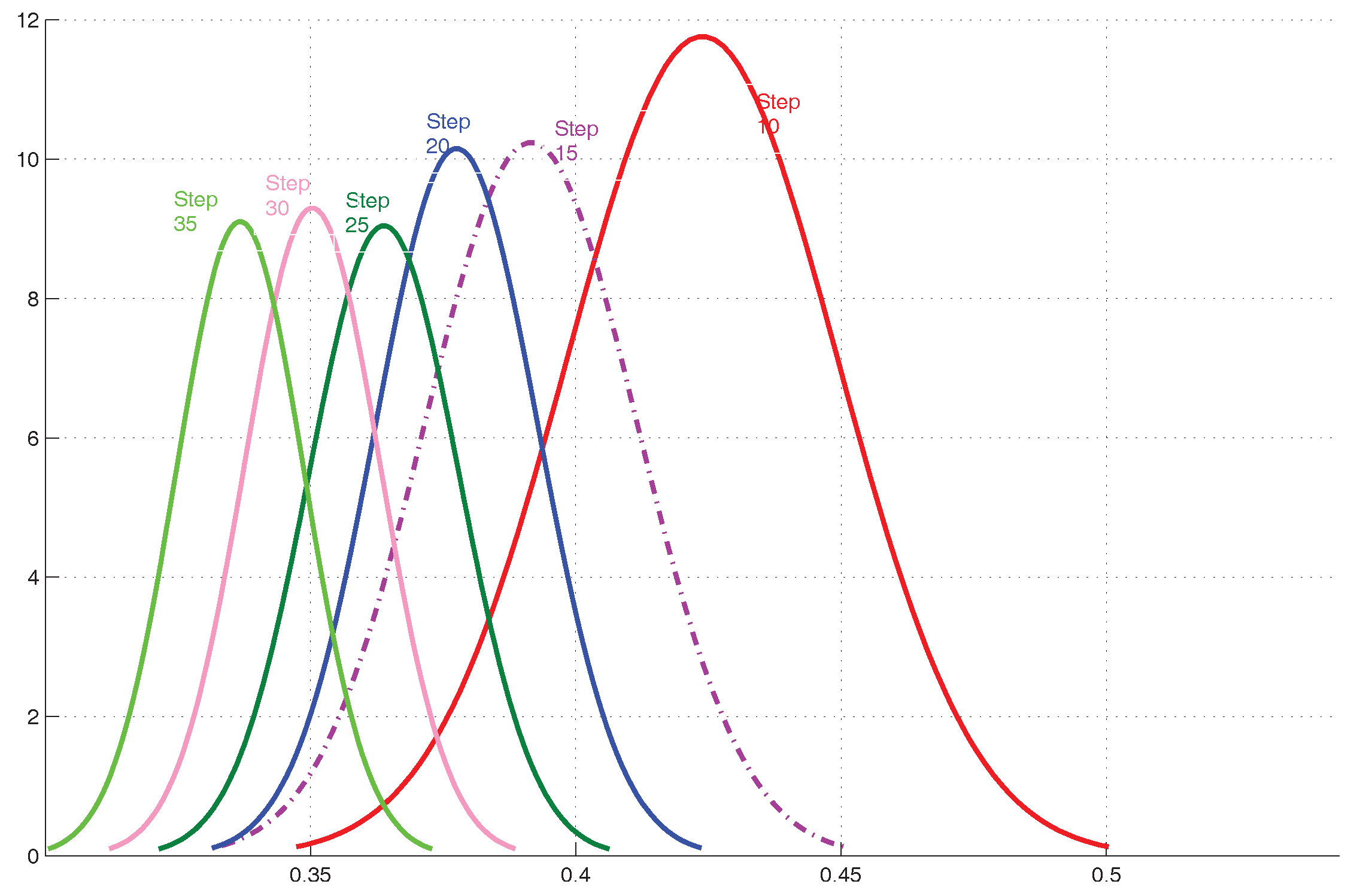

2.5.2. Estimation of Hyperparameters

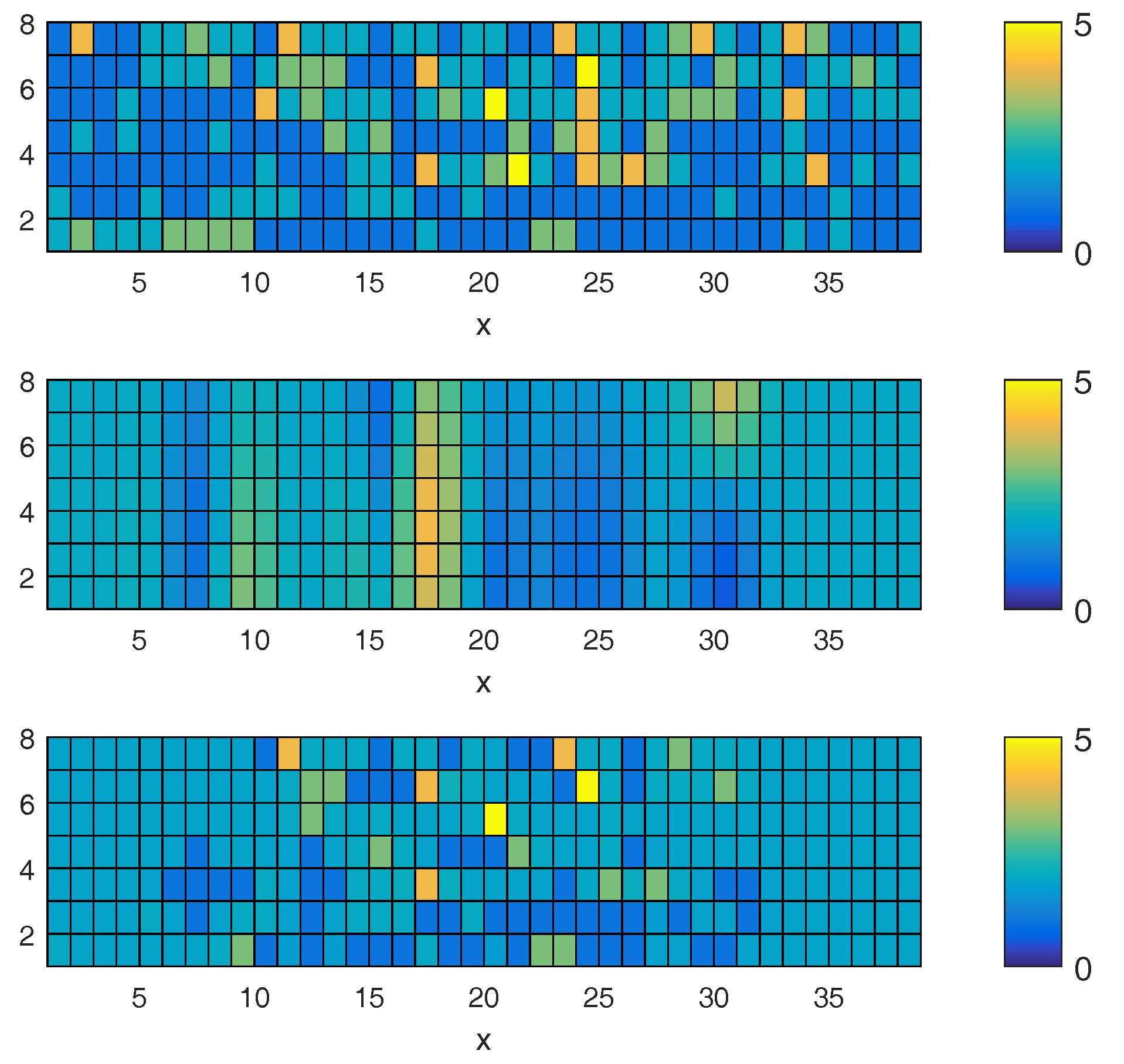

2.5.3. Informative Motions

| Algorithm 1 Informative Motions and Nonparametric Learning |

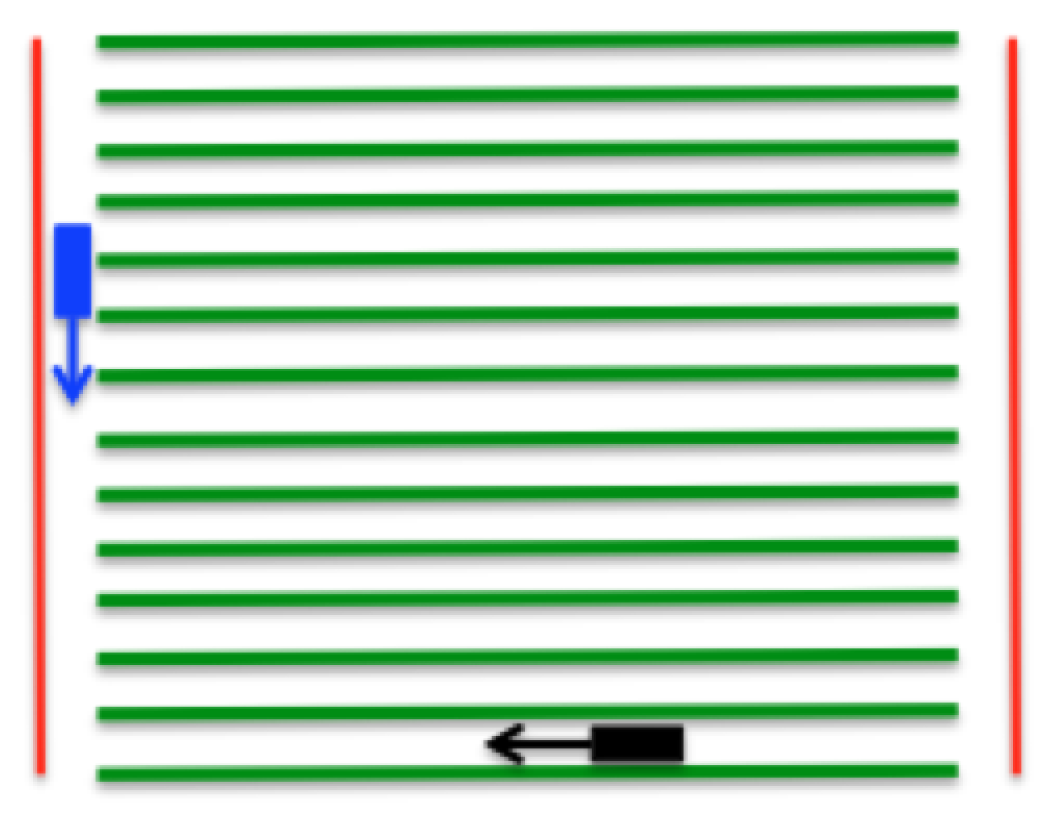

2.5.4. Path Planning

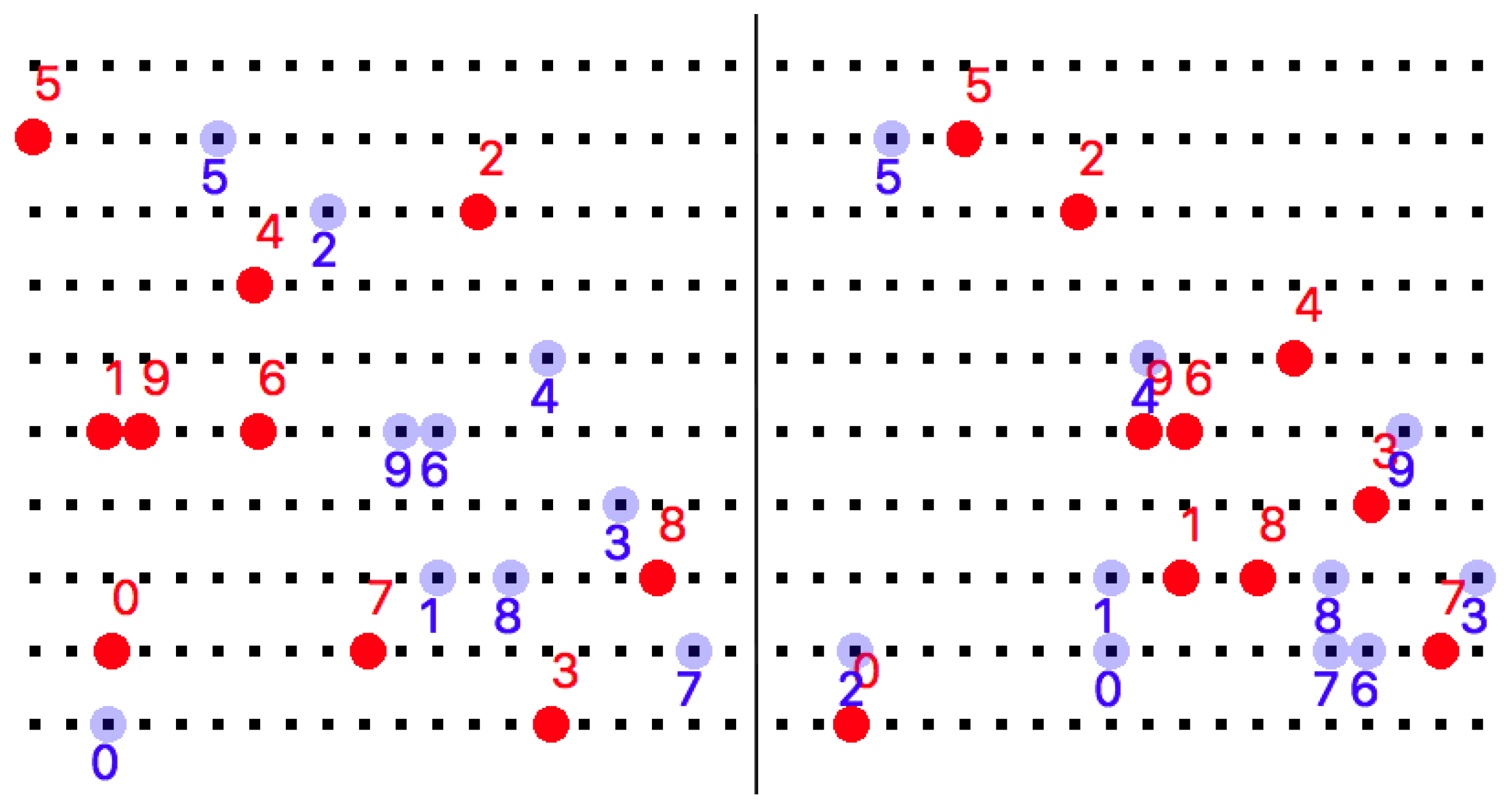

2.5.5. Robot–Target Assignment

2.5.6. Tasks Scheduling

- If $m > n$ (more robots in the row than goal points), then there is a unique reassignment that allows $m - n$ robots to leave the row, and the remaining $n$ robots to reach their goals without any collision.

- If $m < n$ (fewer robots in the row than goal points), then there is a unique reassignment that allows $n - m$ robots to enter the row, and the remaining $m$ robots in the row to reach $m$ of the goal points without any collision.

- Let us consider a labeling of robots from left to right with the first robot on the left numbered as 1. Goals are labeled from left to right in a similar manner. Goal is assigned to robot , is assigned to , is assigned to , and so on, until is assigned to . The leftover robots can leave the row without any collision with other robots. This reassignment does not change the sum of the path lengths. Between any two robots being reassigned, the original travel distance for robot and are and . The distance between and is d. After reassignment, the traveling distance for is , and the traveling distance of is . The total traveling distance is still . Since the total traveling distance remains unchanged, the total time required to complete the task will not increase either.

- Consider the same labeling as in the previous case. Goal is assigned to robot , is assigned to , is assigned to , and so on, until is assigned to . The leftover goals can be handled by robots entering from the left path without collision. We sort these robots in increasing order of travel distance to this row. Then, we assign the leftover goals from right to left to the sorted robots. As before, this reassignment does not change the sum of path lengths and does not increase the total time required to complete the task.
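The reassignment argument above can be illustrated with a small sketch for the robots that stay in the row. It assumes that positions along a row reduce to one coordinate and that the number of robots equals the number of goals; the function names and the example positions are hypothetical. Pairing the i-th robot from the left with the i-th goal from the left removes the need for robots to pass each other.

```python
def order_preserving_reassignment(robots, goals):
    """Pair the i-th robot from the left with the i-th goal from the left.

    robots, goals: equal-length lists of 1-D positions along the row (assumed model).
    Returns (robot_index, goal_index) pairs that index into the input lists.
    """
    if len(robots) != len(goals):
        raise ValueError("this sketch expects as many robots as goals")
    robot_order = sorted(range(len(robots)), key=lambda i: robots[i])
    goal_order = sorted(range(len(goals)), key=lambda j: goals[j])
    return list(zip(robot_order, goal_order))

def total_distance(robots, goals, pairs):
    """Sum of straight-line travel distances along the row for an assignment."""
    return sum(abs(robots[i] - goals[j]) for i, j in pairs)

# Two robots whose original goals force them to cross paths.
robots = [0.0, 1.0]
goals = [5.0, 3.0]
original = [(0, 0), (1, 1)]  # robot 0 -> 5.0 and robot 1 -> 3.0: robot 0 must pass robot 1
reassigned = order_preserving_reassignment(robots, goals)

print(total_distance(robots, goals, original))    # 7.0
print(total_distance(robots, goals, reassigned))  # 7.0
```

In this example the two robots swap goals and the summed travel distance stays at 7.0, which matches the claim that the reassignment leaves the total path length unchanged.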

2.5.7. Time Complexity

| Algorithm 2 Compute cost matrix given robots and goal points position |

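| Input: two matrices holding the robot and goal point location sets |
| Output: cost matrix |
| function CostMatrix() |
| for each robot location do |
| for each goal point location do |
| compute the cost-matrix entry for this robot and goal point |
| end for |
| end for |
| return the cost matrix |
| end function |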
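A minimal Python sketch of a cost-matrix computation with the same nested-loop shape as Algorithm 2 is given below. The cost function is an assumption: straight-line (Euclidean) distance between planar positions is used as a stand-in, since the cost expression is not recoverable here, and the function and variable names are hypothetical.

```python
import math

def cost_matrix(robots, goals):
    """Return C where C[i][j] is the cost for robot i to reach goal j.

    robots, goals: lists of (x, y) positions.
    Euclidean distance is an assumed stand-in for the actual cost.
    """
    C = []
    for rx, ry in robots:            # one row per robot
        row = []
        for gx, gy in goals:         # one column per goal point
            row.append(math.hypot(rx - gx, ry - gy))
        C.append(row)
    return C

# Two robots and two goals placed at swapped positions.
C = cost_matrix([(0.0, 0.0), (3.0, 4.0)], [(3.0, 4.0), (0.0, 0.0)])
print(C)  # [[5.0, 0.0], [0.0, 5.0]]
```

For m robots and n goal points the two loops evaluate the cost m × n times, so building the matrix takes time proportional to mn when each cost evaluation takes constant time.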

3. Results

3.1. Experimental Setup

3.2. Simulation

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Xavier, A.; Hall, B.; Hearst, A.A.; Cherkauer, K.A.; Rainey, K.M. Genetic Architecture of Phenomic-Enabled Canopy Coverage in Glycine max. Genetics 2017, 206, 1081–1089.

- Naik, H.S.; Zhang, J.; Lofquist, A.; Assefa, T.; Sarkar, S.; Ackerman, D.; Singh, A.; Singh, A.K.; Ganapathysubramanian, B. A real-time phenotyping framework using machine learning for plant stress severity rating in soybean. Plant Methods 2017, 13, 23.

- Bai, G.; Ge, Y.; Hussain, W.; Baenziger, P.S.; Graef, G. A multi-sensor system for high throughput field phenotyping in soybean and wheat breeding. Comput. Electron. Agric. 2016, 128, 181–192.

- Shi, Y.; Thomasson, J.A.; Murray, S.C.; Pugh, N.A.; Rooney, W.L.; Shafian, S.; Rajan, N.; Rouze, G.; Morgan, C.L.; Neely, H.L.; et al. Unmanned aerial vehicles for high-throughput phenotyping and agronomic research. PLoS ONE 2016, 11, e0159781.

- Tattaris, M.; Reynolds, M.P.; Chapman, S.C. A Direct Comparison of Remote Sensing Approaches for High-Throughput Phenotyping in Plant Breeding. Front. Plant Sci. 2016, 7, 1131.

- Ruckelshausen, A.; Biber, P.; Dorna, M.; Gremmes, H.; Klose, R.; Linz, A.; Rahe, F.; Resch, R.; Thiel, M.; Trautz, D.; et al. BoniRob: an autonomous field robot platform for individual plant phenotyping. In Precision Agriculture ’09; Wageningen Academic Publishers: Wageningen, The Netherlands, 2009.

- Gonzalez-de Santos, P.; Ribeiro, A.; Fernandez-Quintanilla, C.; Lopez-Granados, F.; Brandstoetter, M.; Tomic, S.; Pedrazzi, S.; Peruzzi, A.; Pajares, G.; Kaplanis, G.; et al. Fleets of robots for environmentally-safe pest control in agriculture. Precis. Agric. 2017, 18, 574–614.

- Bawden, O.; Ball, D.; Kulk, J.; Perez, T.; Russell, R. A lightweight, modular robotic vehicle for the sustainable intensification of agriculture. In Proceedings of the Australian Conference on Robotics and Automation (ACRA 2014), Melbourne, Australia, 2–4 December 2014.

- Grimstad, L.; From, P.J. The Thorvald II agricultural robotic system. Robotics 2017, 6, 24.

- Fernandez, M.G.S.; Bao, Y.; Tang, L.; Schnable, P.S. A high-throughput, field-based phenotyping technology for tall biomass crops. Plant Phys. 2017.

- Arbo, M.H.; Utstumo, T.; Brekke, E.F.; Gravdahl, J.T. Unscented Multi-Point Smoother for Fusion of Delayed Displacement Measurements: Application to Agricultural Robots. Model. Identif. Control 2017, 38, 1–9.

- Batey, T. Soil compaction and soil management—A review. Soil Use Manag. 2009, 25, 335–345.

- Nawaz, M.F.; Bourrie, G.; Trolard, F. Soil compaction impact and modelling. A review. Agron. Sustain. Dev. 2013, 33, 291–309.

- Saha, H.; Gao, T.; Emadi, H.; Jiang, Z.; Singh, A.; Ganapathysubramanian, B.; Sarkar, S.; Singh, A.; Bhattacharya, S. Autonomous Mobile Sensing Platform for Spatio-Temporal Plant Phenotyping. In Proceedings of the ASME 2017 Dynamic Systems and Control Conference, Tysons, VA, USA, 11–13 October 2017; p. V002T21A005.

- Pi, R. Raspberry Pi Model B, 2015. Available online: http://www.meccanismocomplesso.org (accessed on 30 July 2018).

- Ferdoush, S.; Li, X. Wireless sensor network system design using Raspberry Pi and Arduino for environmental monitoring applications. Procedia Comput. Sci. 2014, 34, 103–110.

- Williams, C.K.; Rasmussen, C.E. Gaussian Processes for Machine Learning; MIT Press: Cambridge, MA, USA, 2006.

- Rasmussen, C.E. Gaussian processes for machine learning. J. Mach. Learn. Res. 2010, 11, 3011–3015.

- Ma, K.C.; Liu, L.; Sukhatme, G.S. Informative planning and online learning with sparse gaussian processes. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 4292–4298.

- Kuhn, H.W. The Hungarian method for the assignment problem. Nav. Res. Logist. Quart. 1955, 2, 83–97.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Gao, T.; Emadi, H.; Saha, H.; Zhang, J.; Lofquist, A.; Singh, A.; Ganapathysubramanian, B.; Sarkar, S.; Singh, A.K.; Bhattacharya, S. A Novel Multirobot System for Plant Phenotyping. Robotics 2018, 7, 61. https://doi.org/10.3390/robotics7040061