1. Introduction

One person dies every 37 s in the United States from cardiovascular disease [1]. Around 74% of all deaths in the United States occur as a result of 10 causes. Heart disease (23.5%) is the leading cause of death for both men and women. This is the case in the U.S. and worldwide [1,2].

Over the past few decades, the market for digital health applications has seen a huge increase in demand. The development of the Internet of Things (IoT) and data analysis provided the necessary elements to implement a sensor network for health purposes. ECG monitoring is one of the most important health care monitoring systems. Modern alarm systems can record and analyze heart health 24 h a day, 7 days a week, and quickly report unusual health conditions to a remote health center, which can potentially save lives [3]. Since the devices recording ECG signals allow the collection of large amounts of data, researchers are working on the development of more effective algorithms based on machine learning methods, which enable automatic diagnosis of the patient’s heart condition. In recent years, there has been a trend of dynamic development of methods using convolutional neural networks and deep learning. This trend applies to many areas of life and the economy [4,5,6,7,8]. It is not surprising then that it has also become an indicator of technological progress in medicine.

1.1. Related Work in Machine Learning

Models based on supervised learning often take the form of artificial neural networks (ANN) [9]. An example is an algorithm for identifying patients with atrial fibrillation during sinus rhythm. The researchers conducted a retrospective analysis of outcome prediction with the use of a convolutional neural network (CNN) designed in the Keras Framework with a TensorFlow (Google, Mountain View, CA, USA) backend and Python [10]. Another example of the use of CNN in the diagnosis of heart disease is research conducted by Nahian Ibn Hasan and Arnab Bhattacharjee [11], who implemented a hybrid approach with the use of Empirical Mode Decomposition and CNN to classify ECG signals. Tahsin M. Rahman et al. [12] used Support Vector Machine (SVM) in the early detection of kidney disease using ECG signals. Min-Gu Kim and Sung Bum Pan [13] implemented a one-dimensional (1-D) ensemble CNN for real-time ECG recognition. Peng Zhao et al. [14] compared CNN with several baseline classification schemes, including SVM, KNN (K-Nearest Neighbor), LR (Logistic Regression), RF (Random Forest), DT (Decision Tree), and GBDT (Gradient Boosting Decision Tree). Wei Zhao et al. [15] developed a deep neural network to classify the heartbeat on the ECG signals following the guideline of the ANSI/AAMI EC57. Iraia Isasi et al. [16] proposed a robust machine learning architecture for a reliable ECG rhythm analysis during cardiopulmonary resuscitation. The following methods were tested: ANN, SVM, KLR (Kernel Logistic Regression), and BDT (Boosting of Decision Trees). A similar comparison was made by Janghel and Pandey [17]. Sara S. Abdeldayem and Thirimachos Bourlai [18] have developed a very interesting concept for analyzing ECG-based signals using spectral correlation and 2-D CNN.

Other examples of the use of 2-D CNN to analyze ECG signals are the studies of Yıldırım et al. [19] and Elif Izci et al. [20]. They showed that 2-D CNN, which is an image-based ECG signal classification structure, achieves better performance than 1-D CNN. Indra Hermawan et al. [21] proposed a method for ECG signal quality assessment (SQA) using a temporal feature and a heuristic rule. Mihaela Porumb et al. [22] proposed a CNN + RNN system for detecting hypoglycemic events based on ECG. Y.T. Sheen [23] showed that the wavelet-based demodulating function can be successfully used in the 3D spectral analysis of vibration signals. A. Diker et al. [24] achieved 95% accuracy in ECG signal classification thanks to the Wavelet Kernel Extreme Learning Machine algorithm.

H. M. Lynn et al. [25] proposed a deep Recurrent Neural Network (RNN) based on a bidirectional Gated Recurrent Unit (BGRU) for human identification from ECG-based biometrics. This is a classification task that aims to identify a subject from given sequential time-series data. The models were evaluated with two publicly available datasets: ECG-ID Database (ECGID) and MIT-BIH Arrhythmia Database (MITDB). Thanks to the presented concept, 98.6% accuracy was achieved. M. Salem et al. [26] used the long-short-term memory (LSTM) network with feature extraction using spectrograms to classify ECG signals. An accuracy of 97.3% was obtained.

Very interesting large-scale research has recently been published by H. Zhu et al. in The Lancet Digital Health journal [27]. A CNN model was trained to diagnose 20 ECG arrhythmias, including all of the common rhythm or conduction abnormality types. The performance of the model was compared with 53 physicians working in cardiology departments across a wide range of experience levels from 0 to more than 12 years. It turned out that the CNN model exceeded the performance of physicians clinically qualified in ECG interpretation.

Table 1 shows a comparison of selected ECG signal classification methods.

1.2. Related Work in Reference to LSTM

The long-short-term memory (LSTM) networks are specifically designed to find patterns in time [28], such as those in ECG signals, to generate improved performance. ECG signals are typical sequences. Therefore, the ability to remember characteristic fragments of time series is crucial during the learning process. Having both long-term and short-term memory was the main reason that the LSTM network was selected as the basic structure of the predictive system in this study. Jan Werth et al. [29] compared the performance of ECG signal classification in automatic sleep state classification in preterm infants. They tested two types of neural networks: LSTM and gated recurrent unit (GRU). Ö. Yildirim [30] implemented feature extraction based on the Daubechies dB6 wavelet family. Signals decomposed into sub-bands by wavelet transform were transformed into an appropriate form for the LSTM inputs. Thanks to the wavelet sequences layer, LSTM network accuracy reached 99.39%. The basic difference between Yildirim’s research and ours is that the features we extracted (Instantaneous Frequency and Spectral Entropy) are spectral in nature, whereas Ö. Yildirim converted the ECG signal into a wavelet. Another difference refers to the structure of the LSTM network. Ö. Yildirim tested two types of structures. The first LSTM network had two unidirectional LSTM layers and two dense (fully connected) layers. The second network consisted of two bidirectional LSTM layers and two dense (fully connected) layers. In both networks, the input was a single signal, which was converted to a wavelet. In our research, we used a simpler network structure consisting of a single bidirectional LSTM layer and one fully connected layer. However, a single ECG signal has been converted into a double signal consisting of the instantaneous frequency and the spectral entropy.

Y.S. Jeong et al. [36] developed an algorithm for the real-time prediction of blood pressure between the induction of anesthesia and the start of surgery. This is a regression problem. Blood pressure is predicted three minutes in advance. The authors used RNN to capture arbitrary features from sequential vital signs and made predictions based on the features. Aside from the fact that the above study dealt with a subject slightly different from the ECG, the major difference from the method presented in this paper is that blood pressure prediction is a regression problem, whereas ECG categorization is a classification problem. In the neural network with an RNN architecture, a 27-element vector of raw data was used, while, in the presented model, an LSTM with a single input signal was used, which then, as a result of feature extraction, is converted into two extracted features: Instantaneous Frequency and Spectral Entropy.

Corneliu T. C. Arsene, R. Hankins, and H. Yin [37] applied a CNN regression model and LSTM network capable of rejecting very high levels of noise in the ECG signals. This is a situation that has not been addressed before.

Ramya et al. [38] used QRS complex detection and feature extraction for envisioning ventricular arrhythmia from ECG. Yu-Jhen Chen et al. [31] proposed to use an architecture combining CNN and LSTM to develop a classification of cardiac arrhythmias. Ahmed Mostayed et al. [39] proposed a Bi-directional LSTM classifier to detect pathologies in 12-lead ECG signals. S. Saadatnejad, M. Oveisi, and M. Hashemi [40] designed the LSTM-Based ECG classification algorithm for continuous monitoring on personal wearable devices. Junli Gao et al. [41] introduced an LSTM network with focal loss (FL) to detect arrhythmia on an imbalanced ECG dataset. They improved the training effect by inhibiting the impact of a large number of easy normal ECG beat data on model training. Cardiac arrhythmia detection from single-lead ECG was the subject of research conducted by Dhwaj Verma and Sonali Agarwal [42]. They used a hybrid system consisting of 1-D convolution and LSTM assisted by oversampling.

Yen-Chun Chang et al. [43] implemented LSTM for atrial fibrillation detection by exploiting the spectral and temporal characteristics of ECG signals with 98.3% accuracy. Yuen, Dong, and Lu [44] developed a novel CNN-LSTM structure for detecting QRS complexes in noisy ECG signals. The last two examples of research concern binary classification.

M. Zihlmann et al. [32] indicated that aggregation of features across time using the convolutional and LSTM network is more effective than averaging in ECG classification. To preprocess the data, they computed the one-sided spectrogram of the time-domain input ECG signal and applied a logarithmic transform. An average accuracy of 82.3% was achieved.

1.3. Objective and Contribution

The overall goal of the research was to develop a more effective method of ECG signal classification. It was proven that the spectral extraction of features using logarithmic transform and the use of the LSTM neural network gives good results [32]. In the presented model, a single raw ECG signal was transformed into two signals of spectrograms, generated by various time transformations, which increased the effectiveness of prediction.

The main contributions of this article are as follows:

Development of an effective LSTM model for classifying six categories of ECG signals, using two time-frequency (TF) moments extracted from the spectrograms: instantaneous frequency (IF) and spectral entropy (SE).

Comparison of the effectiveness of LSTM network training in the variant with a single raw ECG signal at the input and in the variant with two input features (IF and SE).

With regard to signal noise reduction, noise is stationary while the raw signal is cyclo-stationary [18]. Because of this, features can be extracted from spectral images of raw ECG signals, which makes it possible to skip the noise reduction stage.

2. Materials and Methods

2.1. Problem Description

In ECG signal classification, the problem is the uncertainty of the prediction. The above problem is important especially because it concerns human health and life. Hence, there is a need to develop classification methods that reduce the level of prediction uncertainty to an absolute minimum.

The next part of the article presents two models of LSTM networks, whose task was to classify six types of heart dysfunction based on ECG signals. The first model uses LSTM with a single input (raw ECG signal). The second model uses LSTM with two features, extracted from the raw ECG signal using Fourier transform and spectrograms.

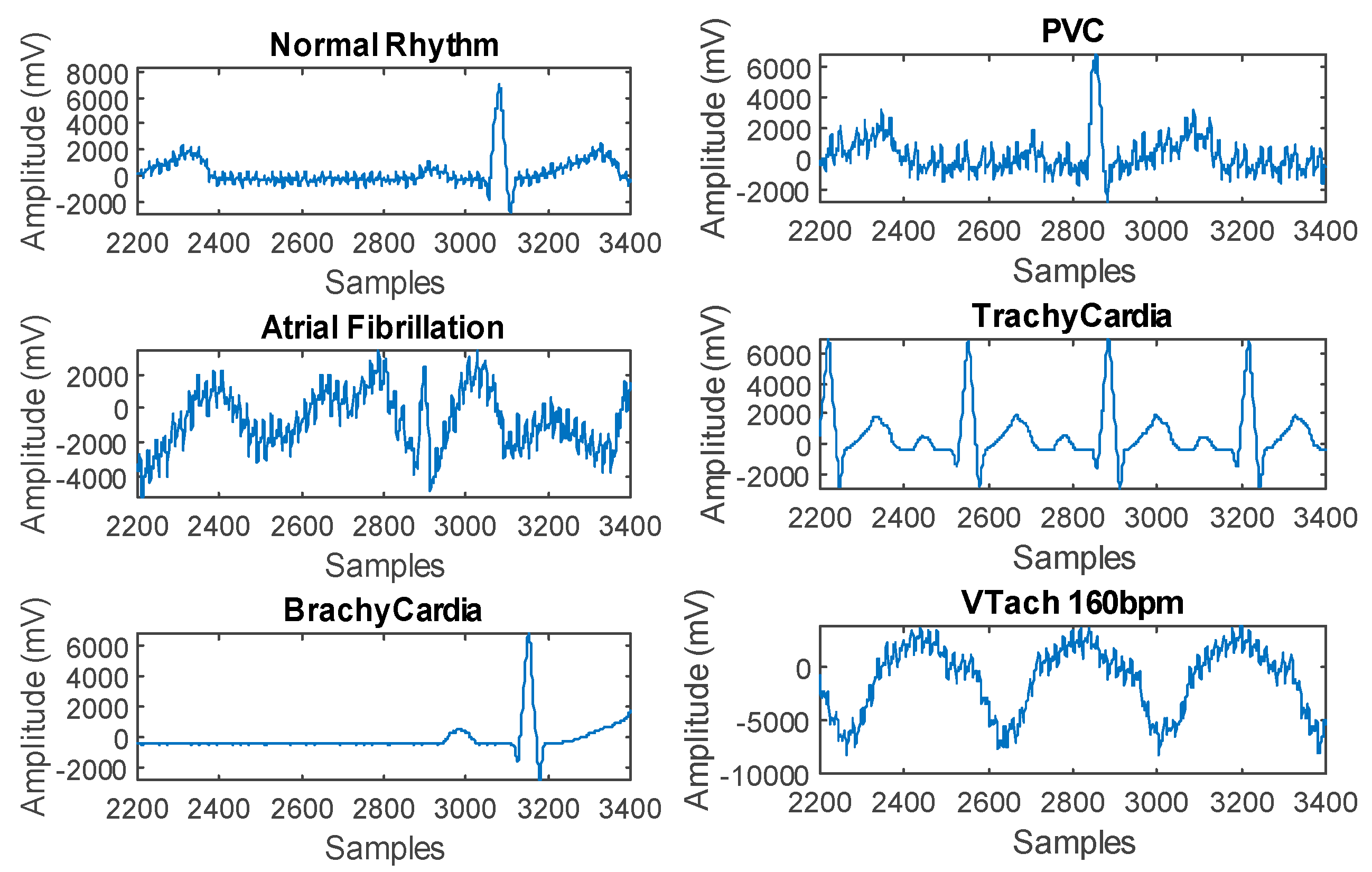

The following classes can be distinguished in the ECG data sets used: normal ECG signal at 60 bpm (N), atrial fibrillation (A), bradycardia at 30 bpm, tachycardia at 180 bpm, premature ventricular contraction (PVC), and ventricular tachycardia. The waveforms of individual ECG partial signals are shown in Figure 1.

2.2. Dataset Acquisition

The data used to train the LSTM network were generated using the FLUKE “ProSim 4 Vital Sign and ECG Simulator”. The dataset comprised 3121 signals. Each signal included 5 s and 5000 measurements (5 kHz). Table 2 presents the numbers of signals belonging to individual classes. Initially, the largest number of signals (1140) referred to Normal ECG, while only 60 measurement signals were assigned to the Bradycardia category.

Taking into account the number of signals belonging to particular categories of ECG dysfunction, the division of data into the training and test sets was established in the proportions 95/5. Thus, the division of the most numerous signal category, which is Normal ECG, into the training and testing set was 1080/60 (1080 + 60 = 1140). Since 36.5% of the signals are normal, a classifier would learn that it can achieve high accuracy by simply classifying all signals as normal. To avoid this error, the dysfunctional data was extended by duplicating the dysfunctional signals in the data set. As a result, the same number of normal and dysfunctional signals was obtained. This type of duplication, commonly known as oversampling, is one form of data augmentation used in deep learning.

As part of oversampling, the training signals of all other categories were multiplied to 1080. The signals for the testing set were duplicated similarly by obtaining 60 signals for each ECG category.
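The duplication step described above can be illustrated with a minimal sketch. It assumes that each class is simply repeated cyclically until it reaches the target count; the function name `oversample_by_duplication` and the toy data are hypothetical and do not come from the original experiments.

```python
from collections import Counter
from itertools import cycle, islice


def oversample_by_duplication(signals, labels, target_per_class):
    """Repeat the signals of each class cyclically until every class has
    exactly target_per_class items (larger classes are truncated)."""
    by_class = {}
    for signal, label in zip(signals, labels):
        by_class.setdefault(label, []).append(signal)

    out_signals, out_labels = [], []
    for label, items in by_class.items():
        # cycle() repeats the class members in order; islice() stops at the target.
        repeated = list(islice(cycle(items), target_per_class))
        out_signals.extend(repeated)
        out_labels.extend([label] * target_per_class)
    return out_signals, out_labels


# Toy data: four "normal" signals and one "bradycardia" signal (placeholders).
signals = ["n1", "n2", "n3", "n4", "b1"]
labels = ["N", "N", "N", "N", "B"]
balanced_signals, balanced_labels = oversample_by_duplication(signals, labels, 4)
print(Counter(balanced_labels))
```

With the target set to 1080 for the training set and 60 for the testing set, the same routine would reproduce the class balance described in the text, under the assumption that plain repetition was used.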

2.3. LSTM Architecture

In the presented research, a deep LSTM network was used to classify ECG signals. An important feature that distinguishes LSTM from other methods is the ability to learn long-term relationships between time steps in time series or given sequences.

Figure 2 presents a diagram of the LSTM network structure. The state of the LSTM layer consists of two parts: the hidden state (also known as the output state) and the cell state. The output of the LSTM layer for a given time step t is contained in the hidden state at t [45].

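The cell state contains information learned from previous time steps. At each time step, the LSTM layer adds information to or removes information from the cell state. These updates are controlled by gates: the input gate (i) controls the level of cell state update, the forget gate (f) controls the level of cell state reset, the cell candidate (g) adds information to the cell state, and the output gate (o) controls the level of cell state added to the hidden state. The input weights W, the recurrent weights R, and the biases b are concatenations of the per-gate components, as described by Formula (1).

$$W = \begin{bmatrix} W_i \\ W_f \\ W_g \\ W_o \end{bmatrix}, \quad R = \begin{bmatrix} R_i \\ R_f \\ R_g \\ R_o \end{bmatrix}, \quad b = \begin{bmatrix} b_i \\ b_f \\ b_g \\ b_o \end{bmatrix} \tag{1}$$

where i, f, o, and g denote the input, forget and output gates, and cell candidate, respectively. The cell state in a given time step t is described by $c_t = f_t \odot c_{t-1} + i_t \odot g_t$, where ⊙ denotes element-wise multiplication of vectors. The hidden state at time step t can be described as $h_t = o_t \odot \sigma_c(c_t)$, where $\sigma_c$ is the state activation function. Equations (2)–(5) describe the components of the LSTM layer in time step t.

$$i_t = \sigma_g(W_i x_t + R_i h_{t-1} + b_i) \tag{2}$$

$$f_t = \sigma_g(W_f x_t + R_f h_{t-1} + b_f) \tag{3}$$

$$g_t = \sigma_c(W_g x_t + R_g h_{t-1} + b_g) \tag{4}$$

$$o_t = \sigma_g(W_o x_t + R_o h_{t-1} + b_o) \tag{5}$$

In the above formulas, $\sigma_g$ denotes the gate activation function. In the LSTM network layers, the sigmoid activation function was used. It can be expressed by $\sigma(x) = (1 + e^{-x})^{-1}$.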
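The gate updates described in this section can be sketched in a few lines of NumPy. This is an illustrative sketch only: it assumes the standard LSTM formulation with sigmoid gates and a tanh state activation, the stacking order (input, forget, cell candidate, output) is an assumption, and the toy sizes and random weights are hypothetical.

```python
import numpy as np


def sigmoid(x):
    # Gate activation: sigma(x) = 1 / (1 + exp(-x))
    return 1.0 / (1.0 + np.exp(-x))


def lstm_step(x_t, h_prev, c_prev, W, R, b):
    """One LSTM time step.

    W: (4H, D) input weights, R: (4H, H) recurrent weights, b: (4H,) biases,
    stacked in the assumed order input, forget, cell candidate, output.
    """
    H = h_prev.shape[0]
    z = W @ x_t + R @ h_prev + b
    i = sigmoid(z[0:H])          # input gate
    f = sigmoid(z[H:2 * H])      # forget gate
    g = np.tanh(z[2 * H:3 * H])  # cell candidate (state activation tanh)
    o = sigmoid(z[3 * H:4 * H])  # output gate
    c_t = f * c_prev + i * g     # element-wise cell state update
    h_t = o * np.tanh(c_t)       # hidden state, i.e. the layer output at step t
    return h_t, c_t


rng = np.random.default_rng(0)
D, H = 1, 4  # toy sizes; the network in the text uses 200 hidden units
W = rng.normal(size=(4 * H, D))
R = rng.normal(size=(4 * H, H))
b = np.zeros(4 * H)
h, c = np.zeros(H), np.zeros(H)
for x in [0.1, -0.3, 0.7]:  # a three-sample toy sequence
    h, c = lstm_step(np.array([x]), h, c, W, R, b)
print(h.shape, c.shape)
```

A bidirectional layer would run one such recurrence forward and a second one backward over the sequence and concatenate the two hidden states at each time step.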

2.4. Classification of Raw ECG Signal

The first variant tested was an LSTM network with five layers. In the case of neural networks, there are no strict rules for selecting network parameters (e.g., number of layers, number of neurons in layers, transfer functions) for specific types of problems. Accordingly, the network parameters are selected experimentally. This was also the case in this scenario.

Table 3 specifies the optimal parameters of the neural network for classification of a raw ECG signal, containing one double layer LSTM with 200 hidden neurons. The conducted experiments showed that a smaller number of hidden neurons causes a deterioration of network quality while increasing the number of neurons and adding subsequent layers extends the learning process without causing an increase in the quality of the LSTM network.

The first layer in the LSTM model is the sequence input layer, which feeds sequential data into the network. The next is the bidirectional LSTM (BiLSTM) layer. The bidirectional LSTM layer learns long-term relationships between time steps of the signal or sequence data in both directions (forward and backward). These relationships are important when the network needs to learn from the full time series at each time step. The third layer is a fully connected layer. This layer multiplies the numerical input values by the weight matrix and adds a vector of biases.

In deep networks, one or more fully connected layers are introduced after convolution and downsampling layers. If the input to a fully connected layer is a sequence, as in the case of LSTM, then the fully connected layer acts independently on each time step. If the output of the layer placed before the fully connected layer is an array $A_1$ of the size X by Y by Z, then the fully connected layer output is an array $A_2$ of the size X’ (output size) by Y by Z. At time step t, the corresponding entry of $A_2$ is $W X_t + b$, where $X_t$ is time step t of $A_1$ and $b$ is the bias. In these studies, the Glorot initializer was used to initialize the weights of this layer [46]. The penultimate layer is the softmax. This type of layer is typical for deep classification neural networks. The softmax layer is always preceded by a fully connected layer. The formula

$$y_r(x) = \frac{\exp(a_r(x))}{\sum_{j=1}^{k} \exp(a_j(x))}$$

shows the softmax activation function, where $0 \le y_r \le 1$ and $\sum_{j=1}^{k} y_j = 1$.
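As a hedged illustration of the last two computational layers, the sketch below applies a fully connected layer independently at each time step and then a softmax over the six classes. The input width of 400 assumes that the bidirectional layer concatenates two 200-unit hidden states; the random values are placeholders, not trained weights.

```python
import numpy as np


def fully_connected(X, W, b):
    """Apply W @ x_t + b independently at every time step.

    X: (input_size, T), W: (output_size, input_size), b: (output_size,).
    """
    return W @ X + b[:, None]


def softmax(a):
    """Column-wise softmax; subtracting the max keeps exp() numerically stable."""
    e = np.exp(a - a.max(axis=0, keepdims=True))
    return e / e.sum(axis=0, keepdims=True)


rng = np.random.default_rng(1)
X = rng.normal(size=(400, 3))         # assumed BiLSTM output: 2 x 200 units, 3 time steps
W = rng.normal(size=(6, 400)) * 0.05  # six ECG classes
b = np.zeros(6)
probs = softmax(fully_connected(X, W, b))
print(probs.shape)  # (6, 3)
```

Each column of `probs` is non-negative and sums to one, which is what allows the outputs to be read as class probabilities.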

The last layer computes the cross entropy loss for classification problems with mutually exclusive classes. To ensure training sets of a sufficiently large number, aggregation of measurement data with similar characteristics was made. The adaptive moment estimation (ADAM) algorithm [47] was used to train the LSTM network. The BiLSTM layer used tanh as the state activation function and sigmoid as the gate activation function. Training used a mini-batch size of 150, an initial learning rate of 0.01, a sequence length of 1000, and a gradient threshold of 1. The above parameters were determined experimentally. Variants of the model containing a dropout layer were tested with a dropout probability ranging from 0.1 to 0.5. The tests showed that, in the case studied, adding dropout layers did not increase the generalization ability of the network. For this reason, we decided not to use this type of layer in the presented predictive model.

2.5. Spectral Feature Extraction

The second variant of the tested LSTM network was a model fed with two spectral inputs (TF moments): instantaneous frequency (IF) and spectral entropy (SE). IF and SE values were calculated as a result of feature extraction. Extracting features from the data can help improve the training and testing accuracies of the classifier. To select the features to extract, an approach was used that calculates time-frequency images, such as spectrograms, and uses them to train the LSTM.

CNN is best suited for classifying multidimensional images. Since LSTM was used in this case, it is important to translate this approach so that it applies to one-dimensional signals. TF moments extract information from spectral images. Any moment can be used as a one-dimensional feature suitable for entering data into LSTM.

Figure 3 shows six classes of spectrograms corresponding to each type of ECG signal.

The first chosen TF moment was the instantaneous frequency (IF) of a nonstationary signal. IF is a time-varying parameter that relates to the average of the frequencies present in the signal. The spectrogram power spectrum $P(t,f)$ of the input is computed and used as a time-frequency distribution. The instantaneous frequency is then estimated using Equation (6).

$$f_{inst}(t) = \frac{\int_0^\infty f \, P(t,f) \, df}{\int_0^\infty P(t,f) \, df} \tag{6}$$

where: $P(t,f)$ contains the power spectrum estimate of each channel of an input signal, t is the sample times vector, and f denotes the spectrum frequencies [48].

To compute the instantaneous spectral entropy given a time-frequency power spectrogram $S(t,f)$, the probability distribution at time $t$ is given by Equation (7).

$$P(t,m) = \frac{S(t,m)}{\sum_f S(t,f)} \tag{7}$$

The second extracted TF moment was spectral entropy (SE). SE measures the spectral power distribution of the ECG signal. The SE index is derived from the concept of Shannon entropy, or information entropy, known from information theory. To calculate SE, the normalized power distribution of the signal in the frequency domain is treated as a probability distribution, and its Shannon entropy is calculated [49]. This property was used to extract features from the raw ECG signal. The equations for SE arise from the formulas for the power spectrum and the signal probability distribution $P(m)$. For a signal $x(n)$, the power spectrum is $S(m) = |X(m)|^2$, where $X(m)$ is the discrete Fourier transform of $x(n)$, and $P(m)$ follows as shown in Equation (8).

$$P(m) = \frac{S(m)}{\sum_i S(i)} \tag{8}$$

The spectral entropy H can be described with Formula (9), where N is the number of frequency points.

$$H = -\sum_{m=1}^{N} P(m) \log_2 P(m) \tag{9}$$

To compute the instantaneous spectral entropy for a time-frequency power spectrogram $S(t,f)$, the probability distribution at time $t$ is shown below.

$$P(t,m) = \frac{S(t,m)}{\sum_f S(t,f)} \tag{10}$$

Lastly, SE at time $t$ can be expressed by Formula (11).

$$H(t) = -\sum_{m=1}^{N} P(t,m) \log_2 P(t,m) \tag{11}$$

The time-dependent frequency of the signals was estimated as the first moment of the power spectrograms. The spectrograms were computed using short-time Fourier transforms over time windows. We applied 129 time windows in this case. The time values correspond to the centers of the time windows.
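The two time-frequency moments can be sketched as follows. This is a minimal NumPy illustration, not the toolbox routine used in the study: the window length, hop size, Hann window, the scaling of the entropy to [0, 1], and the synthetic 50 Hz test tone are all assumptions made for the example.

```python
import numpy as np


def power_spectrogram(x, fs, win_len=256, hop=128):
    """Hann-windowed STFT power spectrogram.

    Returns (freqs, times, P) with P of shape (n_freqs, n_windows);
    times are the centers of the windows.
    """
    window = np.hanning(win_len)
    starts = np.arange(0, len(x) - win_len + 1, hop)
    frames = np.stack([x[s:s + win_len] * window for s in starts], axis=1)
    P = np.abs(np.fft.rfft(frames, axis=0)) ** 2
    freqs = np.fft.rfftfreq(win_len, d=1.0 / fs)
    times = (starts + win_len / 2) / fs
    return freqs, times, P


def instantaneous_frequency(freqs, P):
    """First spectral moment per window: sum(f * P) / sum(P)."""
    return (freqs[:, None] * P).sum(axis=0) / P.sum(axis=0)


def spectral_entropy(P):
    """Shannon entropy of the normalized spectrum per window, scaled to [0, 1]."""
    prob = P / P.sum(axis=0, keepdims=True)
    safe = np.where(prob > 0, prob, 1.0)  # log2(1) = 0, so empty bins add nothing
    H = -(prob * np.log2(safe)).sum(axis=0)
    return H / np.log2(P.shape[0])


fs = 1000.0                       # assumed sampling rate for the toy signal
t = np.arange(0, 5.0, 1.0 / fs)
x = np.sin(2 * np.pi * 50.0 * t)  # synthetic 50 Hz tone, not ECG data
freqs, times, P = power_spectrogram(x, fs)
inst_freq = instantaneous_frequency(freqs, P)
spec_ent = spectral_entropy(P)
features = np.vstack([inst_freq, spec_ent])  # two-row sequence for the LSTM input
print(features.shape)
```

For a pure tone the estimated instantaneous frequency stays close to the tone frequency and the entropy is low, because the power is concentrated in a few bins; a noisier, flatter spectrum would push the entropy toward one.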

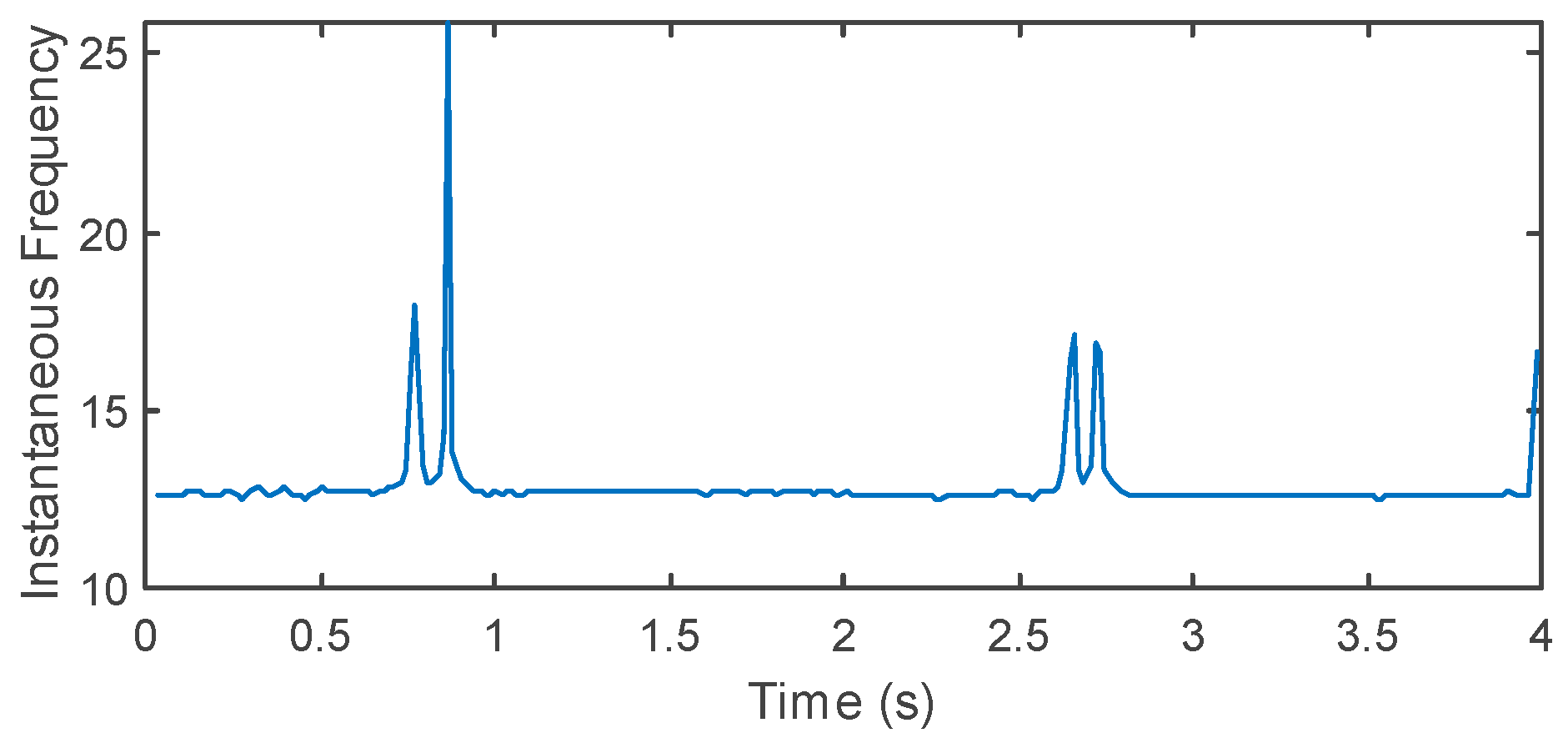

Figure 4 shows the instantaneous frequency (IF) visualization for each type of ECG signal. The second estimated time-frequency moment of the power spectrogram was spectral entropy (SE). SE measures how flat (or spiky) the signal spectrum is. Function (11) estimates SE based on a power spectrogram. As for IF estimation, 129 time windows were used to create the spectrogram. The time outputs of the function correspond to the center of the time windows.

Figure 5 shows SE visualization for each type of ECG signal. IF and SE have mean values that differ by almost one order of magnitude. There is some risk that the mean instantaneous frequency is too high. In such a case, the data will not allow effective training of the LSTM.

When a network needs to fit data with a large value range and high mean, such an input can slow down the training process and reduce network convergence. The training set mean and standard deviation were used to standardize the training and testing sets. Standardization, or z-scoring, is a popular way to improve network performance during training. After standardization, both features are on a comparable scale. The conducted experiments showed that data standardization increased the accuracy level of the neural network trained in a specific time period by about 30%.
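The standardization step can be sketched as below, assuming that one mean and one standard deviation per feature are computed over all training time steps and then reused for the testing set. The helper names and the synthetic feature values are hypothetical.

```python
import numpy as np


def fit_standardizer(train_sequences):
    """Per-feature mean and standard deviation over all training time steps.

    Each sequence has shape (n_features, n_steps), e.g. rows = [IF, SE].
    """
    stacked = np.hstack(train_sequences)
    mu = stacked.mean(axis=1, keepdims=True)
    sigma = stacked.std(axis=1, keepdims=True)
    return mu, sigma


def standardize(sequences, mu, sigma):
    # The same training-set statistics are applied to every set.
    return [(seq - mu) / sigma for seq in sequences]


rng = np.random.default_rng(2)
# Synthetic stand-ins: feature 0 has a large mean, feature 1 a small one.
train = [np.vstack([rng.normal(50, 5, 30), rng.normal(0.6, 0.1, 30)]) for _ in range(8)]
test = [np.vstack([rng.normal(50, 5, 30), rng.normal(0.6, 0.1, 30)]) for _ in range(2)]
mu, sigma = fit_standardizer(train)
train_z = standardize(train, mu, sigma)
test_z = standardize(test, mu, sigma)
print(np.hstack(train_z).mean(axis=1))
```

Reusing the training statistics for the testing set avoids leaking information from the test data into the preprocessing.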

The LSTM network after extraction of the two features has five layers, which is the same as in the previous variant.

Table 4 presents the activation numbers and learnable parameters for the layers of LSTM with improved inputs.
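As a rough cross-check of how the number of learnable parameters depends on the input size, the sketch below counts them for a bidirectional LSTM layer followed by a fully connected layer. It assumes the standard layout (four gates per direction, so stacked matrices with 8 × hidden units rows) and 200 hidden units in both variants; the counts are derived from that layout and are not read from Table 3 or Table 4.

```python
def bilstm_param_count(input_size, hidden_units):
    """Learnable parameters of a bidirectional LSTM layer.

    Each direction has 4 gates, so the stacked matrices have 8 * hidden_units rows.
    """
    rows = 8 * hidden_units
    input_weights = rows * input_size
    recurrent_weights = rows * hidden_units
    biases = rows
    return input_weights + recurrent_weights + biases


def fully_connected_param_count(input_size, output_size):
    # One weight per input-output pair plus one bias per output.
    return output_size * input_size + output_size


HIDDEN = 200  # stated for the raw-signal variant; assumed unchanged for the two-feature variant
CLASSES = 6

for name, n_inputs in [("raw ECG", 1), ("IF + SE", 2)]:
    lstm = bilstm_param_count(n_inputs, HIDDEN)
    fc = fully_connected_param_count(2 * HIDDEN, CLASSES)
    print(name, lstm, fc)
```

Under these assumptions, moving from one input to two adds only 1600 input weights, so the two variants are almost identical in size and differ mainly in what they are fed.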

3. Results

Below are the results of training and testing two variants of the LSTM network. The first variant used a single input, which was the ECG time series. In the second variant, the single input was replaced by a double input consisting of two spectral features, IF and SE, extracted from the raw ECG signal with the Fourier transform. The division of the entire dataset into training and testing sets is presented in Table 2.

3.1. LSTM with a Singular Raw ECG Signal Input

Before starting the training process, classifier options were specified. The number of epochs was set to 10 to allow the network to make 10 passes through the training data. The mini-batch size was set to 150, so the LSTM network considered 150 training signals simultaneously. The initial learning rate was set to 0.01. This value was intended to accelerate the training process. To keep the device from running out of memory by processing too much data at the same time, the signal was divided into smaller parts. The maximum sequence length was set to 1000. To stabilize the training process by preventing the gradient from becoming too large, the gradient threshold was set to 1. An adaptive moment estimation solver (ADAM) was used. ADAM works better with recurrent neural networks (RNN), which include LSTM, than stochastic gradient descent with momentum (SGDM). The metrics for the LSTM model quality were cross-entropy and accuracy. Accuracy is the percentage of correctly classified observations among all cases (12).

$$ACC = \frac{N_c}{N} \cdot 100\% \tag{12}$$

where $N_c$ denotes the number of correctly classified observations and $N$ denotes the total number of observations [50]. Cross entropy loss between network predictions and target values is defined as Equation (13).

$$loss = -\frac{1}{N} \sum_{i=1}^{N} \sum_{j=1}^{M} T_{ij} \ln Y_{ij} \tag{13}$$

where $N$ represents the number of observations, $M$ represents the number of responses, $T_{ij}$ represents patterns (target values), and $Y_{ij}$ represents network outputs.
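The two quality metrics can be illustrated with a small, self-contained sketch. It assumes one-hot targets and the natural logarithm in the cross-entropy; the class labels and probabilities are made-up toy values.

```python
import math


def accuracy(predicted, actual):
    """Percentage of observations whose predicted class equals the true class."""
    correct = sum(p == a for p, a in zip(predicted, actual))
    return 100.0 * correct / len(actual)


def cross_entropy(targets, outputs):
    """Mean cross-entropy over N observations.

    targets: one-hot rows; outputs: rows of class probabilities.
    """
    n = len(targets)
    total = 0.0
    for t_row, y_row in zip(targets, outputs):
        total += sum(t * math.log(y) for t, y in zip(t_row, y_row) if t > 0)
    return -total / n


actual = ["N", "PVC", "N", "A"]
predicted = ["N", "PVC", "A", "A"]
print(accuracy(predicted, actual))  # 75.0

targets = [[1, 0], [0, 1]]
outputs = [[0.9, 0.1], [0.2, 0.8]]
print(cross_entropy(targets, outputs))
```

Accuracy only counts hits and misses, whereas the cross-entropy also penalizes correct predictions that were made with low confidence, which is why both are tracked during training.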

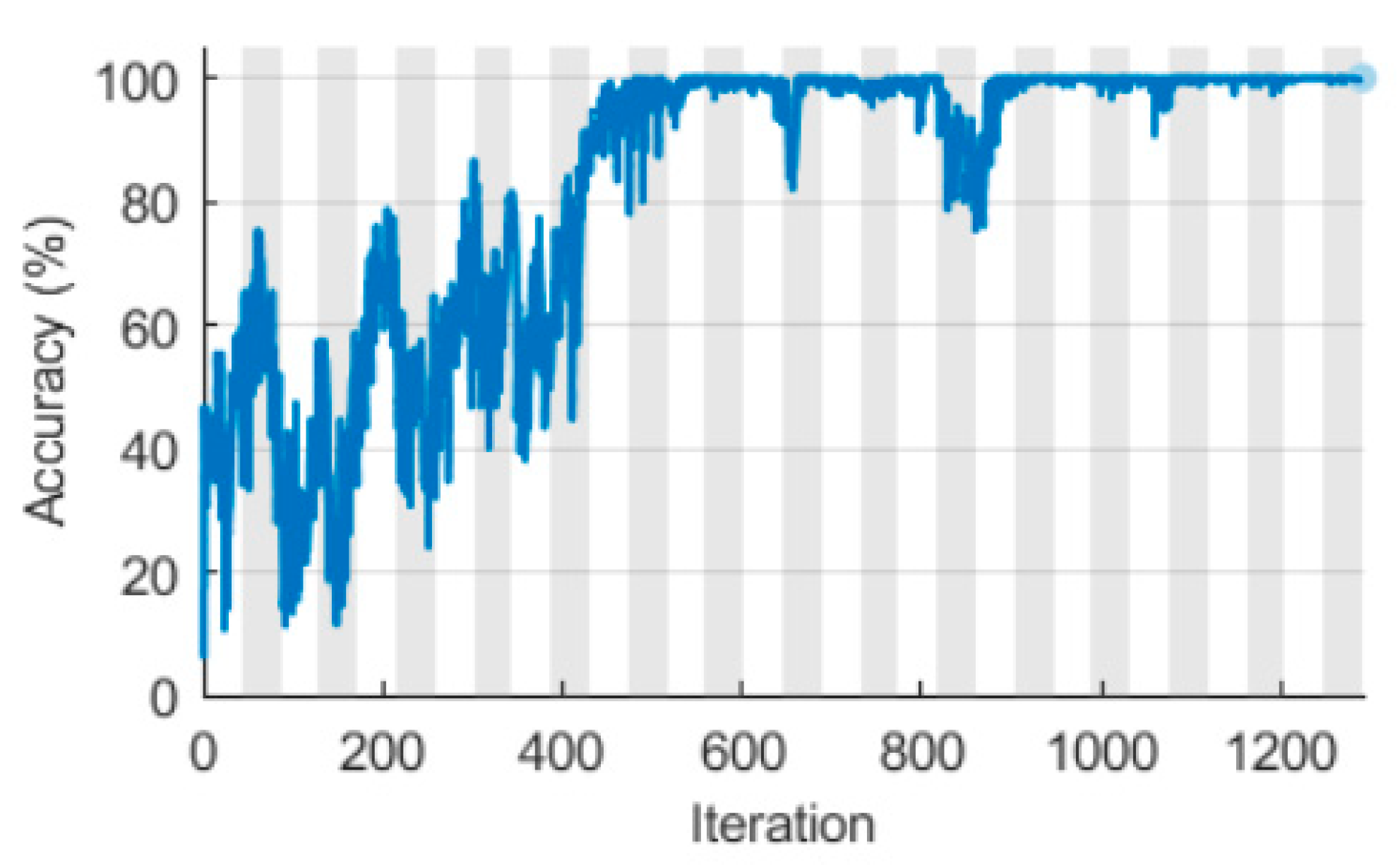

Figure 6 shows the training progress for the LSTM network with a raw input.

The training-progress plot shows the training accuracy, that is, the classification accuracy of each mini-batch. For ideal training progress, this value increases to 100%. At the end of the training process, the classifier’s training accuracy oscillates between 50% and 70%. Training took about 9 min. The computation was conducted on a PC with the following configuration: Intel® Core™ i5-8400 CPU 2.80 GHz, 16 GB RAM, GPU NVIDIA GeForce RTX 2070. The GPU was utilized.

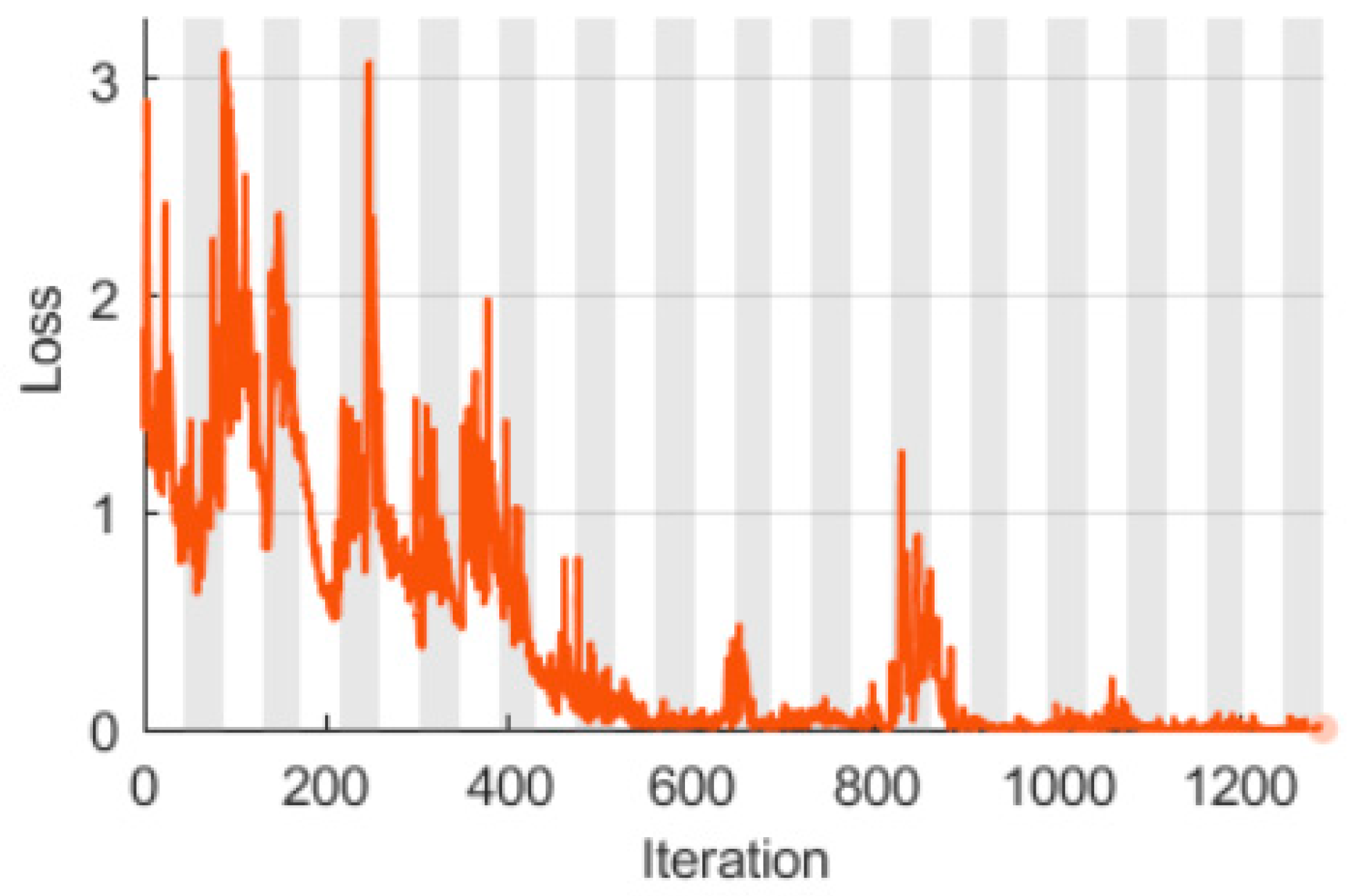

Figure 7 shows the LSTM learning process with a single raw ECG signal in the context of the Loss indicator. The graph displays the training loss, which is the cross-entropy loss for each mini-batch. When training goes perfectly, the loss should decrease to zero. The shape of this plot confirms all the information that results from Figure 6.

It can be seen that the two training plots (Figure 6 and Figure 7) do not converge. They oscillate between extreme values without any upward or downward trend. This oscillation means that the training accuracy does not improve and the training loss does not decrease. This happens almost from the beginning of the training progress. The plot stabilized after an initial improvement in training accuracy.

Changing training options did not help the network achieve convergence. Decreasing the mini-batch size and reducing the initial learn rate resulted in longer training time, but did not help the LSTM learn better.

Figure 8 shows the confusion matrix for the training set of the LSTM with a raw ECG signal. PVC (Accuracy = 9.8%) and VTach 160 bpm (Accuracy = 3.3%) were the worst classified cardiac dysfunctions.

Figure 9 illustrates the confusion matrix for the testing set of the LSTM with a raw ECG signal.

Similar to the training set, PVC (Accuracy = 6.7%) and VTach 160 bpm (Accuracy = 1.7%) were the worst classified cardiac dysfunctions. The Bradycardia classification reached 100% accuracy. For a single raw ECG signal, the mean LSTM accuracy for the training set was 71.6%. The same metric for the testing set was 70.8%. Such low accuracy values do not indicate sufficient LSTM quality.

3.2. LSTM with Double Spectral Input Features

Figure 10 shows the training accuracy for the LSTM network with the double spectral input. This plot is analogous to Figure 7. It is clear that the shape of the plot is different than in the case of Figure 6. Accuracy reaches the maximum value relatively quickly, and the fluctuations are low in amplitude. Some anomalies can be observed around the 650th and 860th iteration, but they are sporadic and do not cause the learning process to be unstable. The training time of the network, in this case, was about 1.5 min.

Figure 11 shows the LSTM learning process with the double spectral input in the context of the Loss indicator. The shape of this plot fully confirms the conclusions resulting from the analysis of Figure 6.

Figure 12 presents the confusion matrix for the training set of the LSTM with a double spectral ECG signal. Compared to Figure 8, the confusion matrix indicates decisive progress in the quality of LSTM network training. In principle, Figure 12 confirms what is already apparent from Figure 10 and Figure 11, i.e., high-quality classification. It can be seen that, apart from the Normal ECG class, all other heart dysfunctions are classified correctly.

Figure 13 (see below) supports the conclusions drawn from previous observations. This time, all six classes achieved 100% prediction accuracy.

In the case of a double, spectrally extracted ECG signal, the mean LSTM accuracy for the training set was 99.98%. The same metric for the testing set was 100%. Clearly, spectral extraction of features from the raw ECG signal has contributed to improving the quality of the classification of heart dysfunction.

3.3. Model Validation in Real Conditions

For the final verification of the generalization capacity of the newly developed LSTM network, additional validation was carried out using real data. In our laboratory, a prototype of a special vest has been developed, which makes it possible to perform tomographic measurements and reconstructions, as well as ECG measurements [51,52].

Figure 14 shows the arrangement of electrodes seen from the inside of the vest.

The vest is equipped with a set of 102 specially designed textile electrodes. Each of the electrodes consists of three layers. The center of the electrode contains a laser cut sponge. The construction diagram of a single electrode is shown in Figure 15.

A conductive material is wrapped around the sponge. The electrically conductive material comes with a metal pin used in the textile industry that allows the electrode to be connected. This layer forms the upper part of the electrode. The back of the electrode is covered with silicone, which has a dual function: it binds all parts of the electrode together and provides flexibility. Each electrode is connected with a shielded cable with a diameter of Ø 1 mm, in which the shield is connected to the ground of the measuring device.

The arrangement of electrodes was developed in such a way as to enable measurement and reconstruction of 3D tomographic images with such techniques as Body Surface Potential Mapping (BSPM), Electrical Impedance Tomography (EIT) [53], and Electrical Capacitance Tomography (ECT) [6,54]. The electrode system also makes it possible to perform a full 12-channel ECG.

In order to verify the trained LSTM network, real ECG data was collected from two volunteers. Measurements were made using the electronic vest prototype. Volunteer No. 1 was diagnosed with PVC. Volunteer No. 2 was healthy.

Figure 16 shows the ECG signal for volunteer No. 1 (PVC).

Figure 17 shows the ECG spectrogram of volunteer No. 1.

Figure 18 shows the extracted spectral entropy (SE) feature for the ECG signal of volunteer No. 1.

Figure 19 shows the extracted instantaneous frequency (IF) feature for the ECG signal of volunteer No. 1. Comparing the raw ECG signal (Figure 16) with the SE and IF signals (Figure 18 and Figure 19), it can be seen that large changes are enhanced in the transformed signals, while the small changes of the raw signal are smoothed. Feature extraction reduced noise associated with measurements made by the vest electrode system and highlighted signal changes key to classification.

Table 5 presents the LSTM network prediction results for both volunteers. In addition to the final results, which clearly indicate that the ECG signal of volunteer No. 1 was classified as PVC and the signal of volunteer No. 2 was categorized as normal ECG, Table 5 also shows the percentage probabilities for individual categories of ECG signals.

Experiments on real data have shown that, for both volunteer No. 1 (PVC) and volunteer No. 2 (Normal ECG), the LSTM network classifies correctly and reliably. In both examined cases, the probabilities of indications are greater than 99%.

4. Discussion

All the experiments presented in this article were conducted in Matlab using the Deep Learning Toolbox and the Signal Processing Toolbox. For ECG signal classification, researchers usually use Python with the Keras deep learning library and TensorFlow, a comprehensive open-source machine learning platform. Python is well suited to software development and deployment, but Matlab has many features that give it an advantage in the research phase. Matlab provides flexible, two-way integration with Python and many other programming languages, so different teams can work together and use Matlab algorithms in production software and information systems. Furthermore, Matlab makes it easy to implement and test algorithms, develop computational code, debug quickly, draw on a large library of built-in algorithms, and build applications with graphical user interfaces.

The literature on machine learning methods for ECG signal classification reveals some limitations, which concern the direct processing of raw ECG signals. The vast majority of classification systems of this type do not achieve prediction accuracy exceeding 90%. ECG signal preprocessing based on denoising or feature extraction significantly improves classification results.

A clear trend can be observed toward automating the monitoring and diagnosis of patients’ diseases. Smart wearables are one way to realize this aim [44]. Separating the decision-making center from the doctor requires a responsible approach. The use of machine learning techniques aims to minimize wrong decisions in health care. There is plenty of evidence that machine learning is a practical and effective prediction and classification tool that finds application in many areas of life and the economy [6,55,56,57].

Automatic recognition of heart dysfunctions still requires many improvements. Commercially deployed solutions are very cautious. For example, Apple offers a smartwatch that diagnoses atrial fibrillation based on a single-channel ECG signal, but its functionality is still very limited.

For the automation of monitoring and diagnosis of health problems to reach a pragmatic level, two conditions should be met. The first condition is the ability to recognize many types of heart dysfunction instead of only the binary (sick or healthy) classification. The second condition is very high classification accuracy. In principle, accuracy should be 100% because the classifier is to replace a doctor. Until the algorithms provide such high-quality classification, in-depth research into a solution to this problem will be necessary.

5. Conclusions

The research presented in this article confirmed that extracting features from the raw ECG signal is an effective way to improve the quality of LSTM-based classification. In particular, a single signal can be converted into spectrogram images using a Fourier transform. In the present case, two time-frequency (TF) moments extracted from the spectrograms, instantaneous frequency (IF) and spectral entropy (SE), were used.

For a single raw ECG signal, the mean LSTM accuracy for the testing set was 70.8%. The same metric for the LSTM with the two spectral features as input was 100%. This result was obtained by classifying six types of cardiac dysfunctions. In a similar work, Chang et al. [43] developed a binary LSTM classifier whose task was to detect atrial fibrillation only. The accuracy obtained was 98% for the training set and 85% for the testing set.

Spectral feature extraction reduces the number of variables in the training set, so the network learns faster. The learning accuracy increases because the input contains more relevant information and less noise. In effect, the Fourier transformation denoises the raw ECG signal.

The number of ECG signals for Bradycardia was the smallest of all six classes, at only 60. Therefore, the test sets for all classes also contained 60 signals. As seen in Table 2, every training set contains 1080 signals, which means significant oversampling for Bradycardia.
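Balancing of this kind is commonly done by random duplication of minority-class signals. The sketch below shows one such scheme, with the assumption that it is applied to the training pool only, after the test signals have been set aside, so that duplicates cannot leak into the test set. The helper `oversample_to` and the pool size of 240 are hypothetical. Only the target of 1080 comes from the text above, and the duplication scheme is not necessarily the one the authors used.

```python
import numpy as np


def oversample_to(signals, target, seed=0):
    """Randomly duplicate items until the list holds `target` entries.

    Keeps every original item once, then appends randomly chosen copies.
    Returns the list unchanged if it already has at least `target` items.
    """
    signals = list(signals)
    if not signals:
        raise ValueError("cannot oversample an empty class")
    if len(signals) >= target:
        return signals
    rng = np.random.default_rng(seed)
    # Draw indices with replacement for the missing entries.
    extra = rng.integers(0, len(signals), size=target - len(signals))
    return signals + [signals[i] for i in extra]


# Hypothetical minority-class training pool (placeholder identifiers).
minority_pool = [f"signal_{i}" for i in range(240)]
balanced = oversample_to(minority_pool, target=1080)
```

Because the added entries are exact copies, oversampling equalizes the class counts without adding new information, which is why a class with few original recordings can still cause visible fluctuations during training.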

The small number of Bradycardia cases may be the reason for the two larger fluctuations in the second variant of the LSTM (Figure 10 and Figure 11). This does not undermine the results of the research or the high quality of the LSTM network. On the contrary, with more data the plot would be expected to smooth out completely.

LSTM works more effectively than CNN due to the transformation of the ECG signal into spectral features. CNNs have been designed to classify images, but these networks have no memory and are, therefore, unsuitable for forecasting time series or signals. After a single input signal is transformed into two spectral signals, the LSTM combines both properties: signal memory and high performance in image recognition. This makes the quality of the LSTM network trained in this way extremely high.

The presented method has the following advantages over other known algorithms for the classification of ECG signals:

Very high effectiveness: 100% accuracy was obtained on the testing set.

Classification into up to six categories of signals (five diseases and one for a healthy heart).

Combination of the advantages of convolutional neural networks for image classification with those of recurrent networks, which have built-in memory mechanisms.

Relatively low complexity: a single BiLSTM layer with 200 hidden units was sufficient.

Increase in the number of input signals from one raw ECG signal to two spectral inputs (TF moments): instantaneous frequency (IF) and spectral entropy (SE).

Converting the raw ECG signal to two TF moments reduced the amount of data needed to train the neural network. Thanks to this, the network learns not only more effectively but also several times faster.
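To put the model size in perspective, the following sketch counts the trainable parameters of a single BiLSTM layer with 200 hidden units fed by two input features. It assumes the common LSTM parameterization with four gates per direction and one bias vector per gate. Some frameworks store two bias vectors per gate, which increases the count. The fully connected output layer mapping to six classes is also an assumption, since the full layer stack is not listed here.

```python
def bilstm_param_count(input_size, hidden_units):
    """Trainable parameters of one bidirectional LSTM layer.

    Each direction has 4 gates, and each gate has input weights,
    recurrent weights and one bias vector (assumed convention).
    """
    per_gate = hidden_units * (input_size + hidden_units) + hidden_units
    per_direction = 4 * per_gate
    return 2 * per_direction


def dense_param_count(in_features, out_features):
    """Weights plus biases of a fully connected layer."""
    return in_features * out_features + out_features


# Two inputs (IF and SE) and 200 hidden units, as stated in the list above.
bilstm_params = bilstm_param_count(input_size=2, hidden_units=200)

# Assumed output layer: 2 * 200 BiLSTM outputs mapped to six classes.
head_params = dense_param_count(in_features=2 * 200, out_features=6)

total_params = bilstm_params + head_params
```

Under these assumptions the BiLSTM layer has 324,800 parameters and the assumed output layer adds 2,406, for a total of roughly 0.33 million.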

The presented solutions also have some limitations. It is uncertain how a trained LSTM network would behave if data from other ECG devices were used at the input. In order to reduce the uncertainty of results, more measurement data from different devices, carried out in different conditions on various groups of patients, should be collected.

Future research will focus on combining ECG signal classification algorithms with intelligent clothing. A specially designed sensor system located in the vest will provide ECG data, and the LSTM algorithm will monitor the patient’s condition on an ongoing basis. This concept requires solving a number of problems related to both hardware and software, as well as obtaining a noise-free ECG signal. Future research will aim to develop a fully functional wearable textronic device for ECG monitoring.