Perceptual Metric Guided Deep Attention Network for Single Image Super-Resolution

Abstract

1. Introduction

2. Related Work

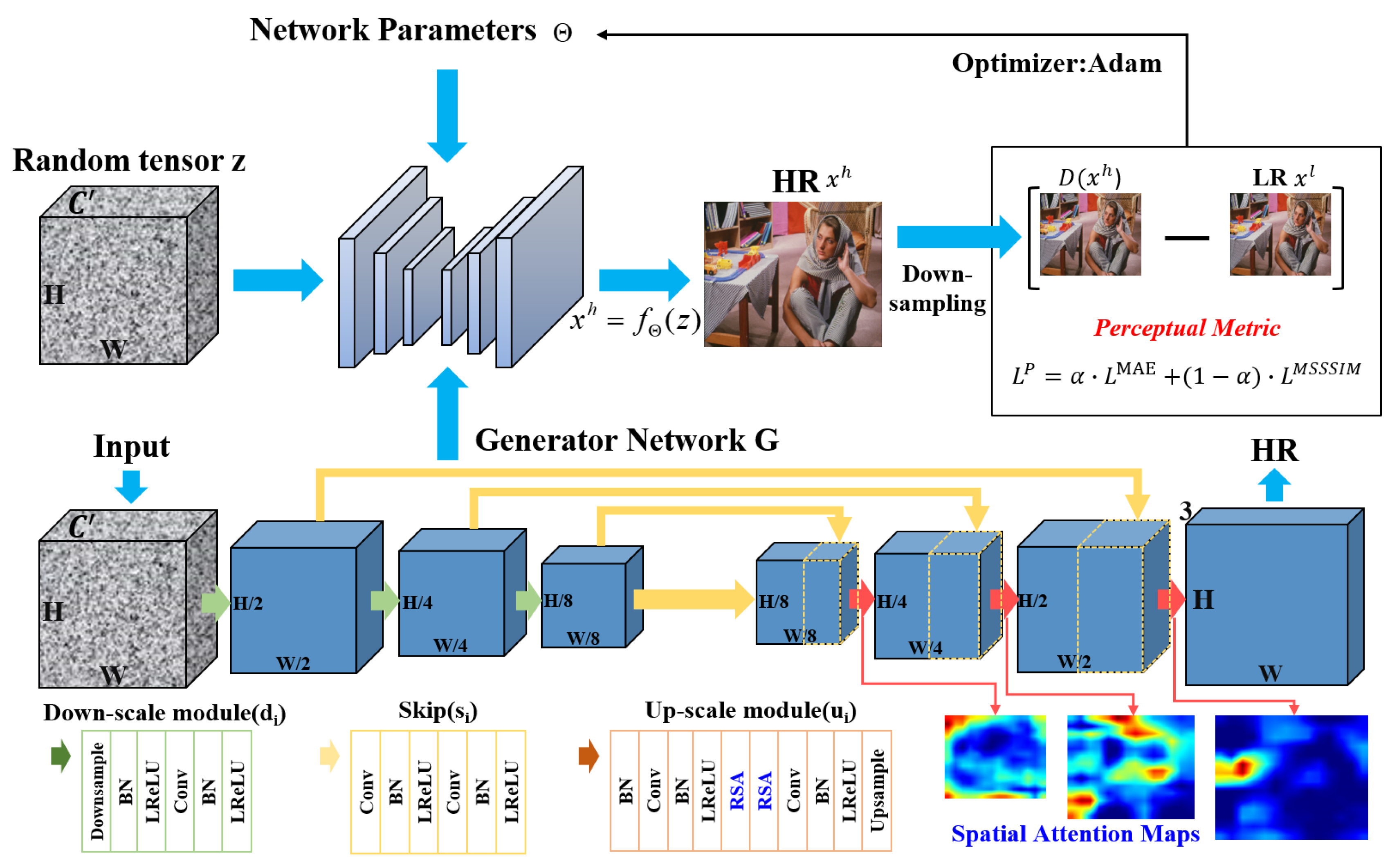

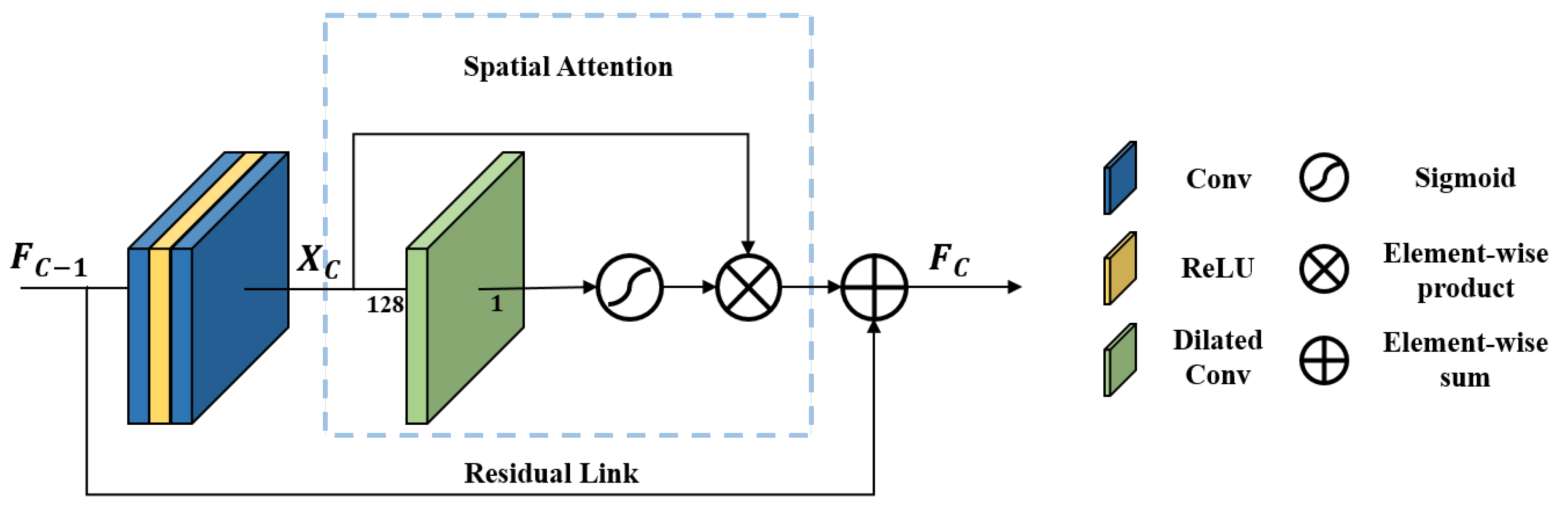

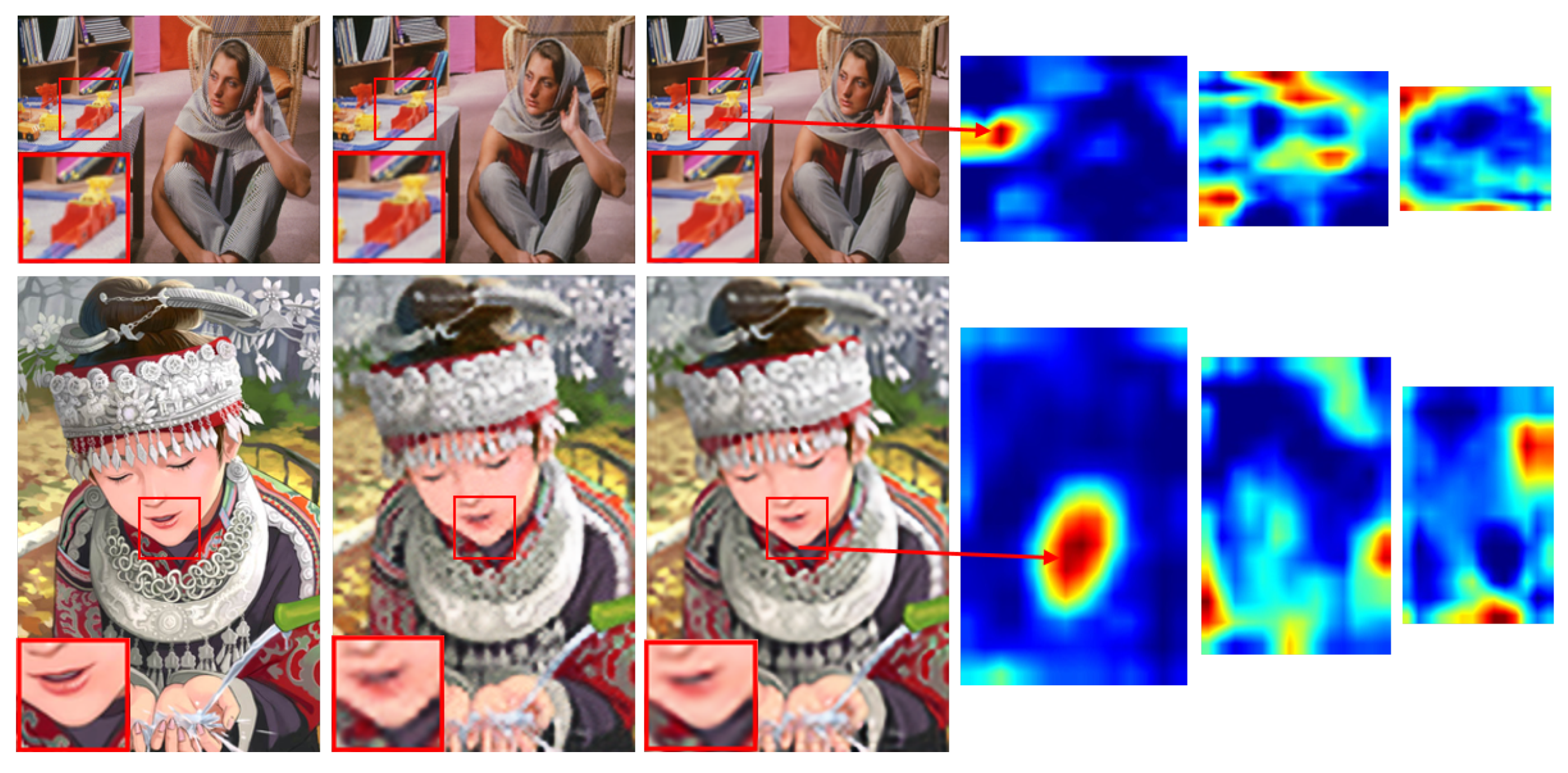

3. Perceptual Metric Guided Deep Attention Network

3.1. Network Architecture

3.2. Loss Function

4. Experimental Results and Analysis

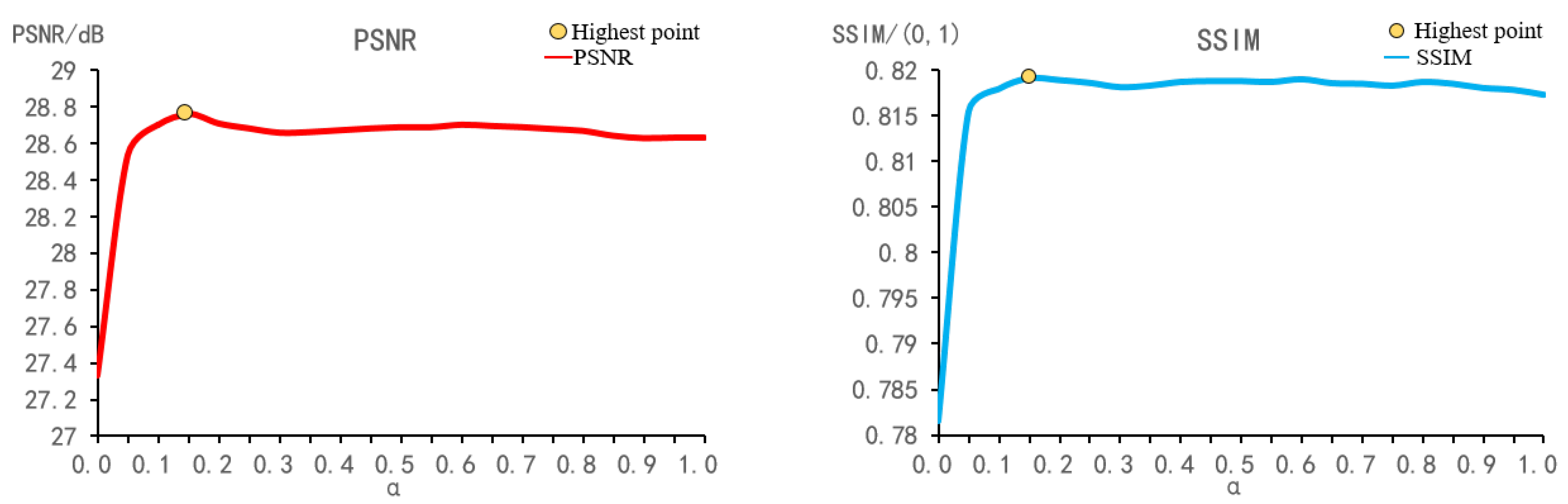

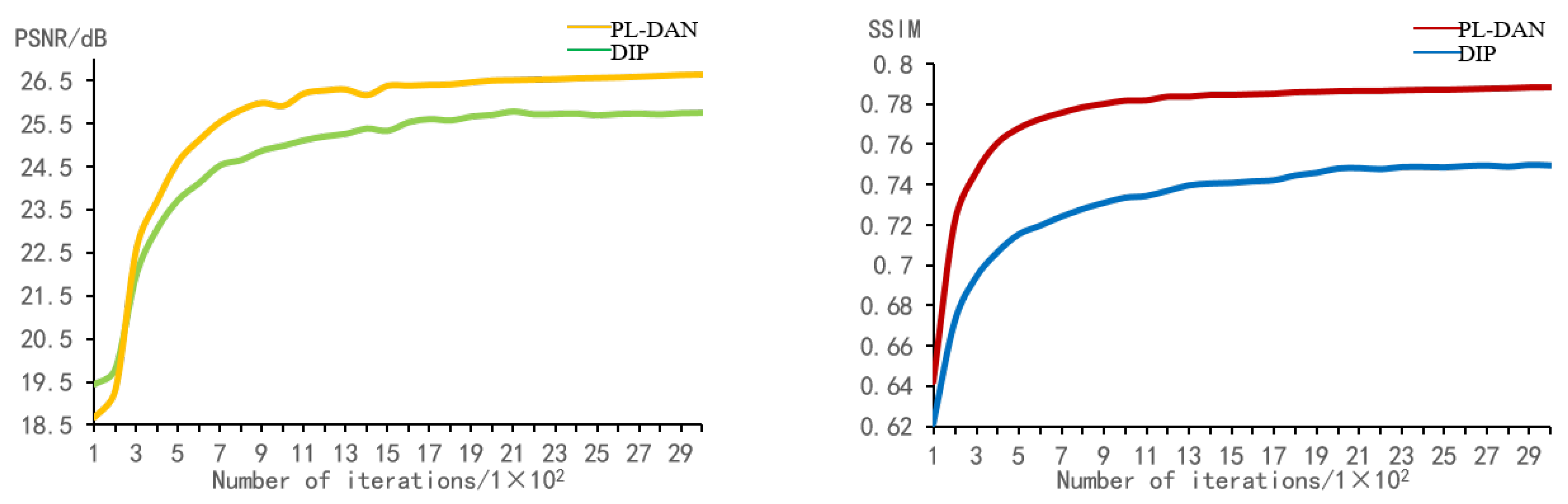

4.1. Parameters Analysis

4.2. Ablation Studies

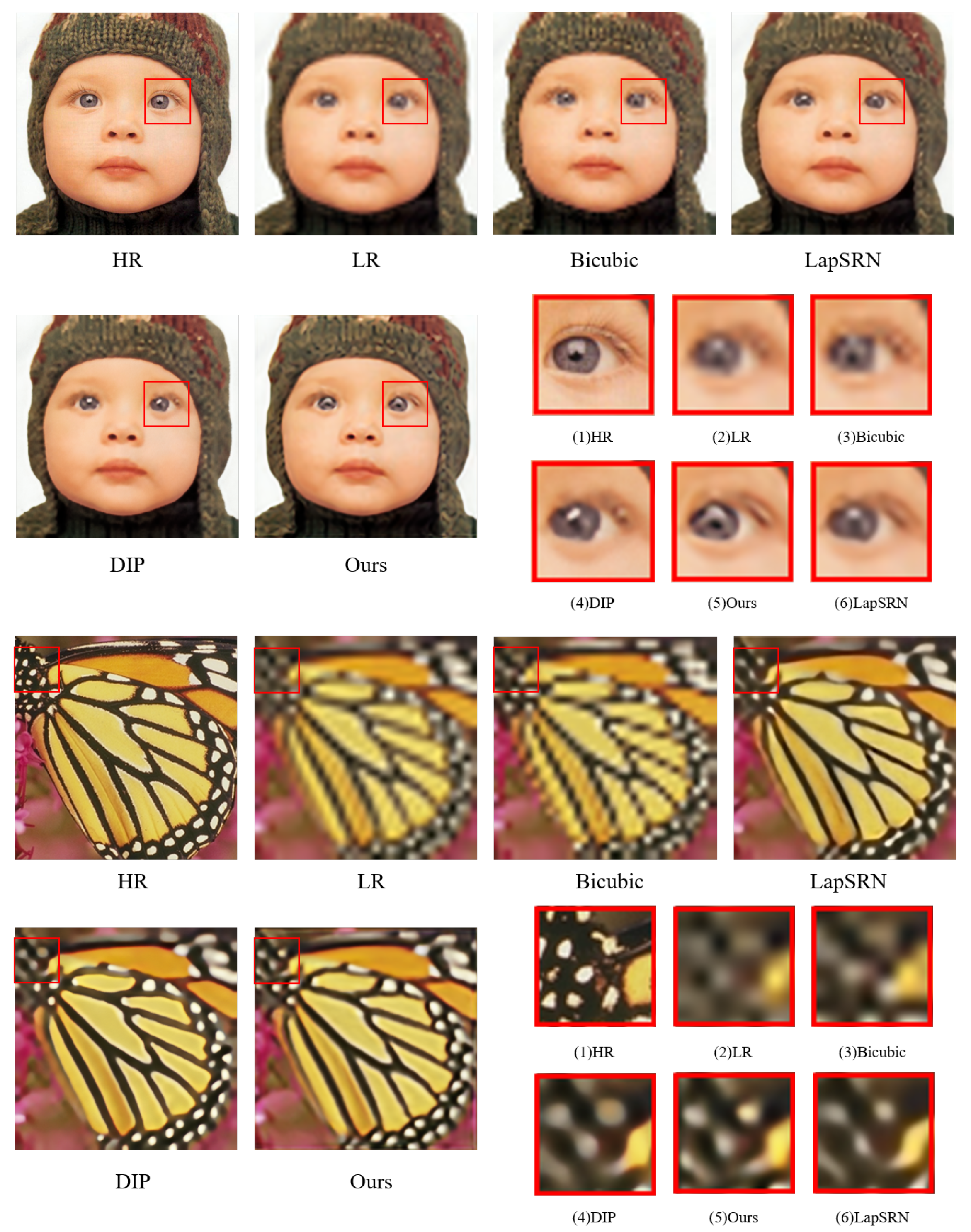

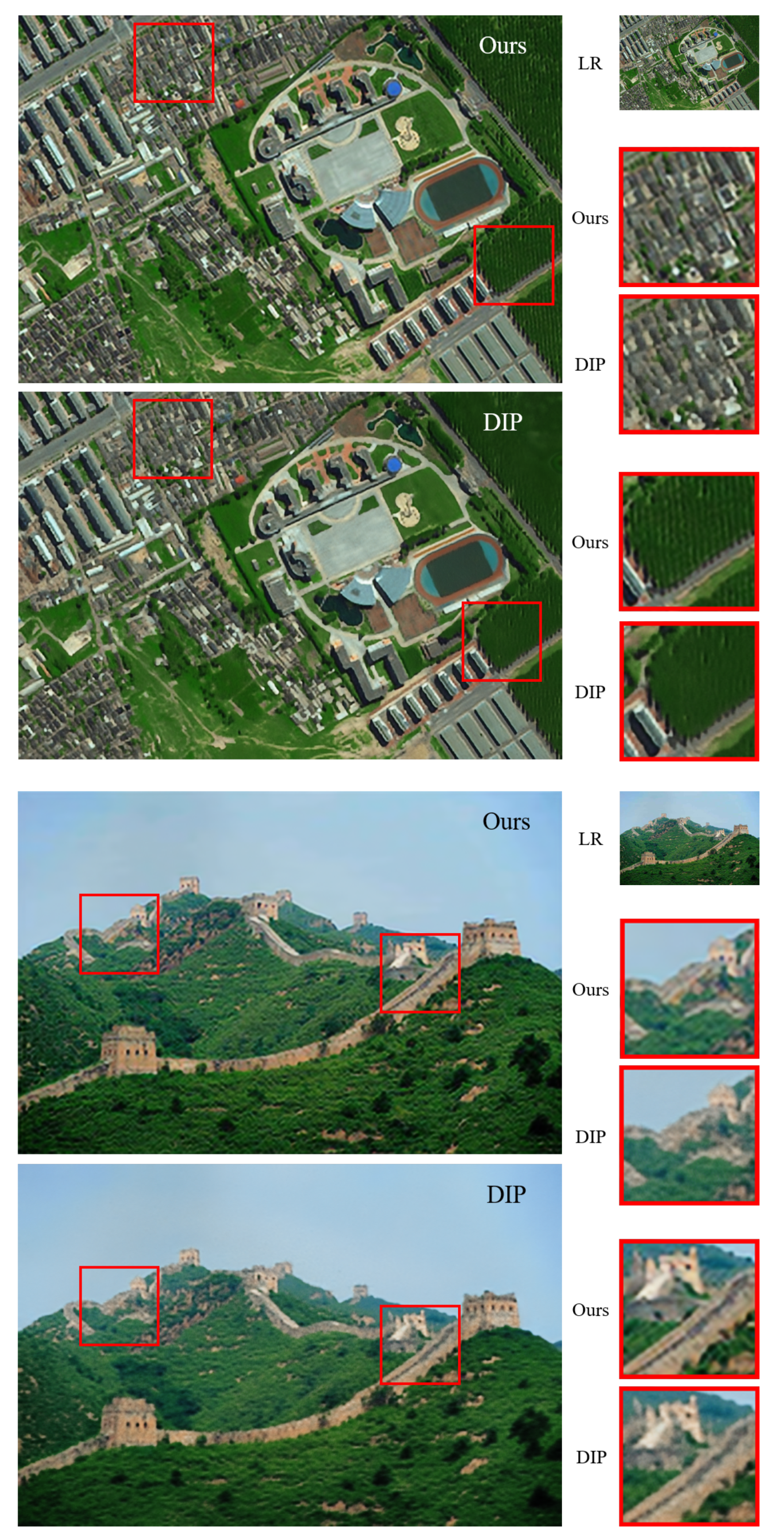

4.3. Performance Comparison

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Block | Layer | Name | Parameters (Kernel-InChannel-OutChannel-Padding-Stride) |
|---|---|---|---|
| Input | In-1 | Conv | 3-64-128-1-1 |
| Down-scale module | Down-1 | Conv | 3-128-128-1-2 |
| | Down-2 | Conv | 3-128-128-1-1 |
| Skip module | Skip-1 | Conv | 3-128-64-1-1 |
| | Skip-2 | Conv | 1-64-4-1-1 |
| Up-scale module | Up-1 | Conv | 3-132-128-1-1 |
| | RSA | Conv | 3-128-128-1-1 |
| | | Conv | 3-128-128-1-1 |
| | | DilatedConv | 3-128-1-3-1 (dilation = 3) |
| | Up-2 | Conv | 1-128-128-0-1 |
| Output | Out-1 | Conv | 1-128-3-0-1 |
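
The parameter tuples in the table above determine each layer's output resolution and weight count. The following is a minimal sketch, not the authors' code, that applies the standard convolution output-size formula to a few rows. The 64-pixel input size and the presence of a bias term are assumptions made for illustration; the helper names `conv_out_size` and `conv_param_count` are hypothetical.

```python
def conv_out_size(size, kernel, padding, stride, dilation=1):
    """Output length along one spatial axis of a 2-D convolution."""
    # A dilated kernel covers dilation * (kernel - 1) + 1 input pixels.
    effective_kernel = dilation * (kernel - 1) + 1
    return (size + 2 * padding - effective_kernel) // stride + 1


def conv_param_count(kernel, in_ch, out_ch, bias=True):
    """Number of learnable weights; the bias term is an assumption."""
    return kernel * kernel * in_ch * out_ch + (out_ch if bias else 0)


# (kernel, in_ch, out_ch, padding, stride, dilation) copied from the table.
LAYERS = {
    "Down-1": (3, 128, 128, 1, 2, 1),
    "Down-2": (3, 128, 128, 1, 1, 1),
    "RSA DilatedConv": (3, 128, 1, 3, 1, 3),
    "Out-1": (1, 128, 3, 0, 1, 1),
}


def summarize(size=64):
    """Map each layer name to (output size, parameter count)."""
    rows = {}
    for name, (k, cin, cout, p, s, d) in LAYERS.items():
        rows[name] = (conv_out_size(size, k, p, s, d),
                      conv_param_count(k, cin, cout))
    return rows


if __name__ == "__main__":
    for name, (out, params) in summarize().items():
        print(f"{name}: output {out}x{out}, {params} parameters")
```

Under these assumptions only Down-1 (stride 2) halves the resolution, while the dilated convolution with padding 3 and dilation 3 preserves it. The 132 input channels of Up-1 are consistent with concatenating a 128-channel feature map with the 4-channel Skip-2 output, although the table itself does not state this.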

| Image | DIP | PM-DAN w/o RSA | PM-DAN w/o PL | PM-DAN |
|---|---|---|---|---|
| | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM |
| Baboon | 22.29/0.5195 | 22.59/0.5323 | 22.62/0.5419 | 22.68/0.5481 |
| Barbara | 25.53/0.7286 | 25.56/0.7310 | 25.60/0.7358 | 25.77/0.7472 |
| Bridge | 23.09/0.5861 | 23.45/0.5701 | 23.41/0.5617 | 23.68/0.5914 |
| Coastguard | 25.81/0.6490 | 26.00/0.6169 | 25.89/0.6351 | 26.05/0.6415 |
| Comic | 22.18/0.6889 | 22.43/0.6866 | 22.49/0.7016 | 22.58/0.7075 |
| Face | 31.02/0.7507 | 31.97/0.7927 | 32.01/0.7944 | 32.11/0.8014 |
| Flowers | 26.14/0.7617 | 26.63/0.7839 | 26.65/0.7880 | 26.93/0.7998 |
| Foreman | 31.66/0.8845 | 31.96/0.9010 | 31.84/0.8970 | 32.49/0.9082 |
| Lenna | 30.83/0.8367 | 31.15/0.8487 | 31.27/0.8498 | 31.36/0.8556 |
| Man | 26.09/0.7079 | 26.49/0.7280 | 26.57/0.7405 | 26.75/0.7507 |
| Monarch | 29.98/0.9083 | 29.77/0.9093 | 30.02/0.9159 | 30.39/0.9236 |
| Pepper | 32.08/0.8524 | 32.23/0.8599 | 32.45/0.8646 | 32.77/0.8708 |
| Ppt3 | 24.38/0.8815 | 24.31/0.8832 | 24.74/0.8906 | 25.10/0.9050 |
| Zebra | 25.71/0.7477 | 26.02/0.7777 | 26.21/0.7791 | 26.53/0.7871 |
| AVG. | 26.91/0.7503 | 27.18/0.7588 | 27.27/0.7640 | 27.51/0.7742 |
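
Each cell above pairs a PSNR value (in dB) with an SSIM value. As a reminder of what the first number measures, here is a minimal sketch of the standard PSNR definition in plain Python. It is not the authors' evaluation script: the peak value of 255, the absence of border cropping, and the choice of colour channel are assumptions, and the function names are hypothetical.

```python
import math


def mean_squared_error(reference, estimate):
    """MSE between two equally sized images given as nested lists."""
    ref = [v for row in reference for v in row]
    est = [v for row in estimate for v in row]
    if len(ref) != len(est):
        raise ValueError("images must have the same number of pixels")
    return sum((r - e) ** 2 for r, e in zip(ref, est)) / len(ref)


def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE)."""
    err = mean_squared_error(reference, estimate)
    if err == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / err)


if __name__ == "__main__":
    ground_truth = [[10, 20], [30, 40]]
    reconstructed = [[11, 21], [31, 41]]  # every pixel off by one
    print(f"{psnr(ground_truth, reconstructed):.2f} dB")
```

Because PSNR is logarithmic in the mean squared error, a gain of 1 dB corresponds to roughly a 21% reduction in MSE.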

| Set5 ×4 | Bicubic(NT) | DIP(NT) | PM-DAN(NT) | SRCNN(T) | LapSRN(T) |
|---|---|---|---|---|---|
| | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM |
| Baby | 31.78/0.8365 | 31.49/0.8589 | 32.65/0.8881 | 33.13/0.8835 | 33.55/0.9044 |
| Bird | 30.20/0.8496 | 31.80/0.9052 | 32.83/0.9265 | 32.52/0.9095 | 33.76/0.9063 |
| Butterfly | 22.13/0.7542 | 26.23/0.8805 | 26.32/0.8811 | 25.44/0.8503 | 27.28/0.8883 |
| Head | 31.34/0.7820 | 31.04/0.7609 | 31.97/0.7962 | 32.45/0.7817 | 32.62/0.8101 |
| Woman | 26.75/0.8299 | 28.93/0.8788 | 29.47/0.9021 | 28.88/0.8542 | 30.72/0.9159 |
| AVG. | 28.44/0.8104 | 29.89/0.8568 | 30.65/0.8788 | 30.48/0.8558 | 31.59/0.8850 |
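
The AVG. row can be cross-checked against the per-image entries. The sketch below recomputes it for two columns of the Set5 ×4 table, with the values copied from the rows above. It assumes the average is a plain arithmetic mean over the five images; the names `SET5_X4` and `column_average` are hypothetical. Since the per-image entries are already rounded, a recomputed average may differ from the published one by about 0.01 in some columns.

```python
from statistics import mean

# (PSNR in dB, SSIM) per image, in table order: Baby, Bird, Butterfly, Head, Woman.
SET5_X4 = {
    "Bicubic": [(31.78, 0.8365), (30.20, 0.8496), (22.13, 0.7542),
                (31.34, 0.7820), (26.75, 0.8299)],
    "PM-DAN": [(32.65, 0.8881), (32.83, 0.9265), (26.32, 0.8811),
               (31.97, 0.7962), (29.47, 0.9021)],
}


def column_average(entries):
    """Arithmetic mean of PSNR and SSIM, rounded to the table's precision."""
    avg_psnr = round(mean(p for p, _ in entries), 2)
    avg_ssim = round(mean(s for _, s in entries), 4)
    return avg_psnr, avg_ssim


if __name__ == "__main__":
    for method, entries in SET5_X4.items():
        avg_psnr, avg_ssim = column_average(entries)
        print(f"{method}: {avg_psnr:.2f}/{avg_ssim:.4f}")
```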

| Set5 ×8 | Bicubic(NT) | DIP(NT) | PM-DAN(NT) | LapSRN(T) |
|---|---|---|---|---|
| | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM |
| Baby | 27.28/0.7166 | 28.28/0.7548 | 28.84/0.7645 | 28.88/0.7701 |
| Bird | 25.28/0.7015 | 27.09/0.7628 | 26.92/0.7580 | 27.10/0.7615 |
| Butterfly | 17.74/0.5661 | 20.02/0.6705 | 20.60/0.6811 | 19.97/0.6789 |
| Head | 28.82/0.6016 | 29.55/0.6879 | 29.52/0.6941 | 29.76/0.7103 |
| Woman | 22.74/0.7043 | 24.50/0.7555 | 24.77/0.7635 | 24.79/0.7692 |
| AVG. | 24.37/0.6580 | 25.88/0.7263 | 26.13/0.7322 | 26.10/0.7380 |

| Set14 ×4 | Bicubic(NT) | DIP(NT) | PM-DAN(NT) | SRCNN(T) | LapSRN(T) |
|---|---|---|---|---|---|
| | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM |
| Baboon | 22.44/0.4712 | 22.29/0.5195 | 22.68/0.5481 | 22.72/0.5015 | 22.83/0.5372 |
| Barbara | 25.15/0.6793 | 25.53/0.7286 | 25.77/0.7472 | 25.75/0.7322 | 25.69/0.7454 |
| Bridge | 22.96/0.5328 | 23.09/0.5861 | 23.68/0.5914 | 23.75/0.5955 | 23.74/0.6203 |
| Coastguard | 25.53/0.5353 | 25.81/0.6490 | 26.05/0.6415 | 26.03/0.5610 | 26.21/0.6016 |
| Comic | 21.59/0.5650 | 22.18/0.6889 | 22.58/0.7075 | 22.69/0.6701 | 22.90/0.7067 |
| Face | 31.34/0.7440 | 31.02/0.7507 | 32.11/0.8014 | 32.37/0.7796 | 32.62/0.7996 |
| Flowers | 25.33/0.7126 | 26.14/0.7617 | 26.93/0.7998 | 27.13/0.7821 | 27.54/0.7925 |
| Foreman | 29.45/0.8654 | 31.66/0.8845 | 32.49/0.9085 | 32.11/0.8991 | 33.59/0.9219 |
| Lenna | 29.84/0.8139 | 30.83/0.8367 | 31.36/0.8556 | 31.40/0.8453 | 31.98/0.8543 |
| Man | 25.70/0.6677 | 26.09/0.7079 | 26.75/0.7507 | 26.88/0.7303 | 27.27/0.7624 |
| Monarch | 27.45/0.8923 | 29.98/0.9083 | 30.39/0.9236 | 30.21/0.9193 | 31.62/0.9230 |
| Pepper | 30.63/0.8427 | 32.08/0.8524 | 32.77/0.8708 | 32.97/0.8673 | 33.88/0.8551 |
| Ppt3 | 21.78/0.8353 | 24.38/0.8815 | 25.10/0.9045 | 24.79/0.8964 | 25.36/0.9119 |
| Zebra | 24.01/0.6799 | 25.71/0.7477 | 26.53/0.7871 | 26.08/0.7488 | 26.98/0.7758 |
| AVG. | 25.92/0.7027 | 26.91/0.7503 | 27.51/0.7742 | 27.49/0.7520 | 27.97/0.7720 |

| Set14 ×8 | Bicubic(NT) | DIP(NT) | PM-DAN(NT) | LapSRN(T) |
|---|---|---|---|---|
| | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM | PSNR/SSIM |
| Baboon | 21.28/0.3292 | 21.37/0.3688 | 21.46/0.3694 | 21.51/0.3744 |
| Barbara | 23.44/0.5649 | 23.90/0.6153 | 24.04/0.6168 | 24.21/0.6231 |
| Bridge | 21.54/0.3614 | 21.58/0.3970 | 22.13/0.4001 | 22.11/0.4097 |
| Coastguard | 23.65/0.4028 | 24.17/0.4236 | 24.32/0.4300 | 24.10/0.4303 |
| Comic | 19.25/0.3848 | 19.79/0.4498 | 20.04/0.4531 | 20.06/0.4579 |
| Face | 28.79/0.6589 | 29.48/0.6915 | 29.58/0.6945 | 29.85/0.7092 |
| Flowers | 22.06/0.5539 | 22.93/0.5953 | 22.93/0.5960 | 23.31/0.5941 |
| Foreman | 25.37/0.7587 | 27.01/0.8223 | 28.16/0.8224 | 28.13/0.8217 |
| Lenna | 26.27/0.7053 | 27.72/0.7553 | 28.00/0.7572 | 28.22/0.7637 |
| Man | 23.06/0.5247 | 23.92/0.5639 | 23.88/0.5724 | 24.20/0.5789 |
| Monarch | 23.18/0.7753 | 24.02/0.8085 | 24.98/0.8093 | 24.97/0.8147 |
| Pepper | 26.55/0.7406 | 28.63/0.7975 | 29.01/0.7980 | 29.22/0.8058 |
| Ppt3 | 18.62/0.7062 | 20.09/0.7606 | 20.52/0.7671 | 20.13/0.7717 |
| Zebra | 19.59/0.4572 | 20.25/0.5086 | 21.05/0.5241 | 20.28/0.5253 |
| AVG. | 23.04/0.5660 | 23.91/0.6112 | 24.27/0.6150 | 24.31/0.6200 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sun, Y.; Shi, Y.; Yang, Y.; Zhou, W. Perceptual Metric Guided Deep Attention Network for Single Image Super-Resolution. Electronics 2020, 9, 1145. https://doi.org/10.3390/electronics9071145
