Evaluation of Wearable Electronics for Epilepsy: A Systematic Review

Abstract

1. Introduction

- Tonic seizures (TS) associated with contractions of the muscles;

- Clonic seizures (CS) associated with repeated contractions and relaxation of muscles;

- Tonic-clonic seizures (TCS) associated with stiffening followed by shaking;

- Myoclonic seizures (MS) associated with twitching regions of muscles;

- Atonic seizures associated with loss of muscle strength;

- Absence seizures associated with individuals appearing detached or inattentive.

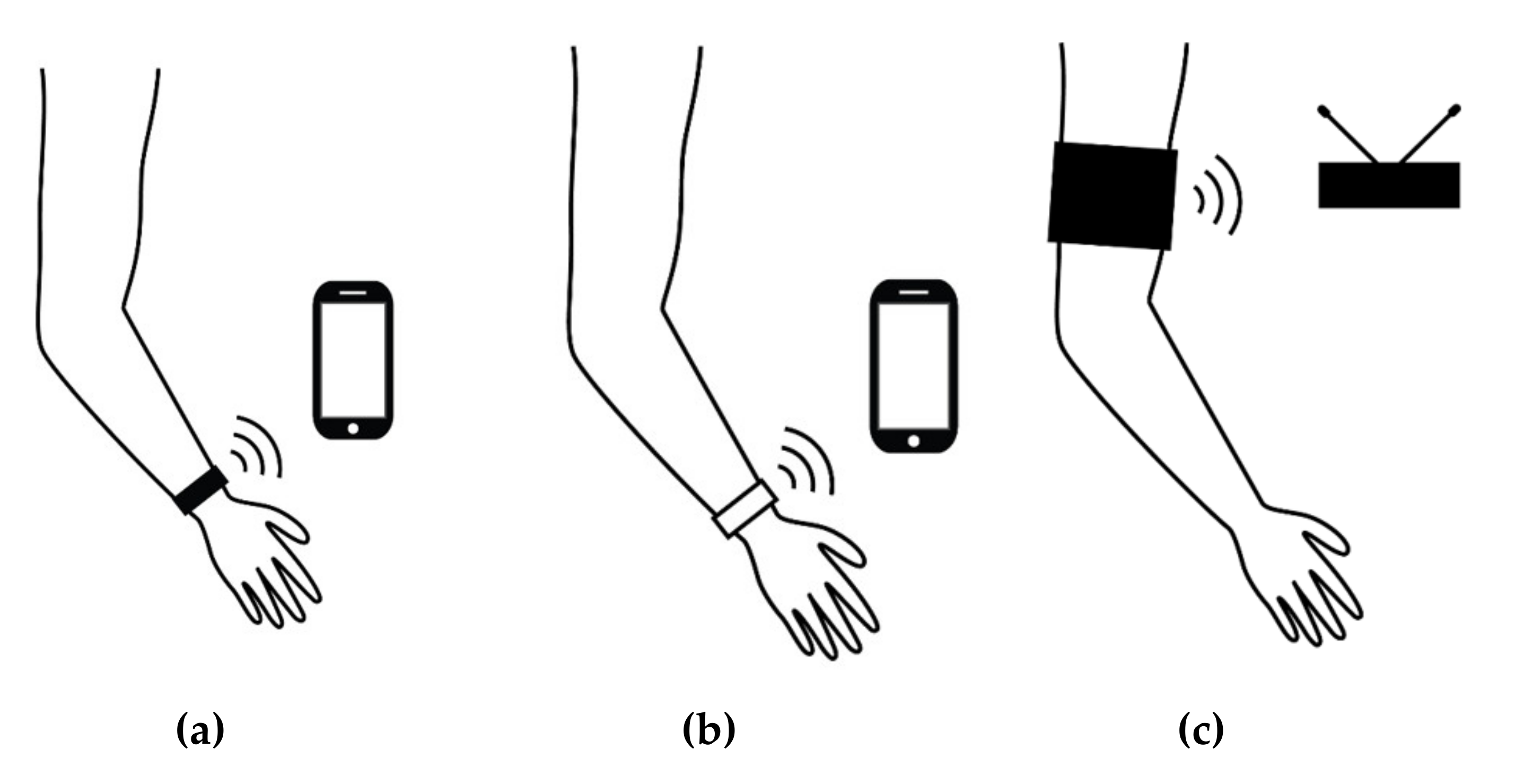

1.1. Wearable Electronics for Epilepsy Seizure Detection

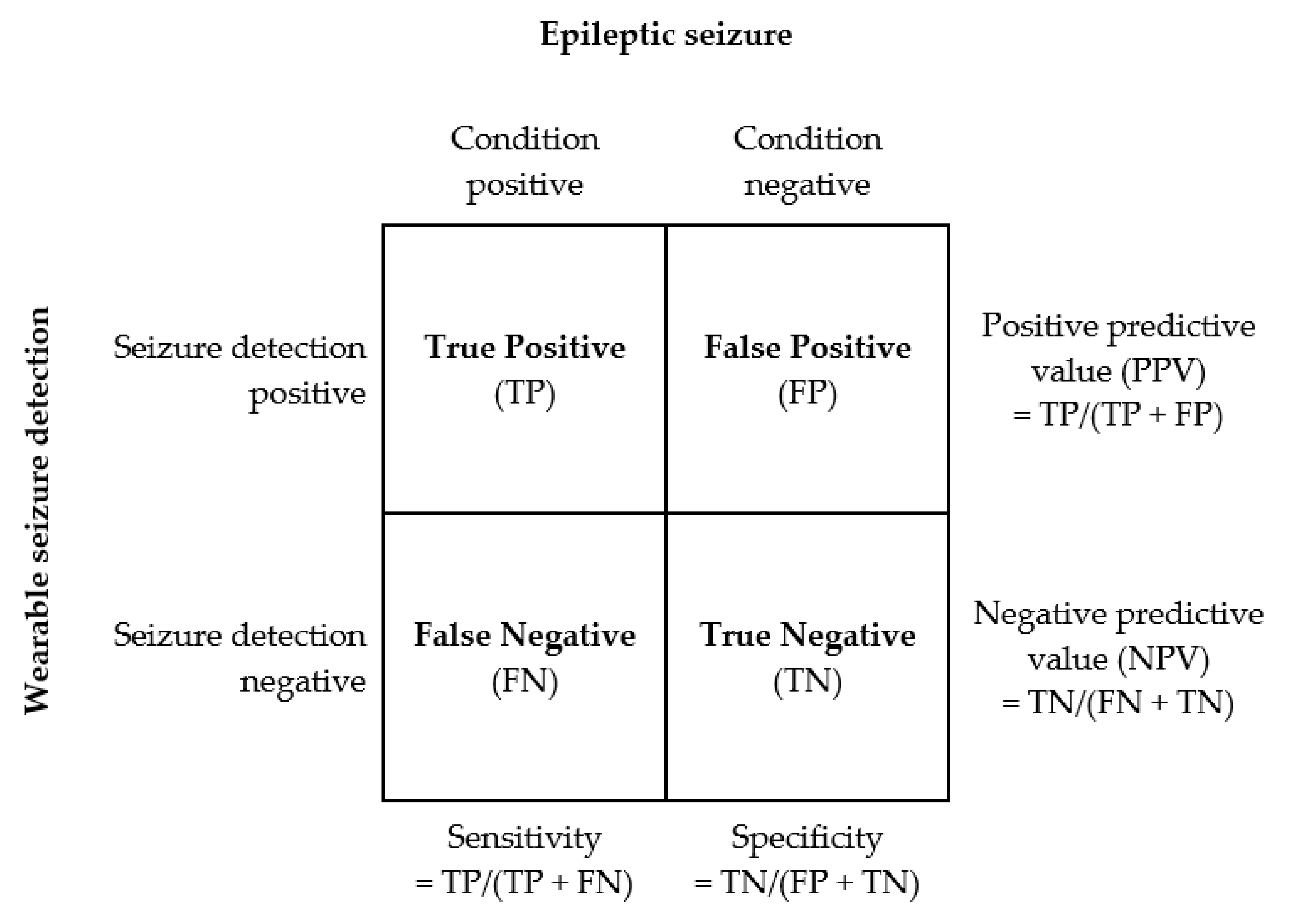

Detection Performance

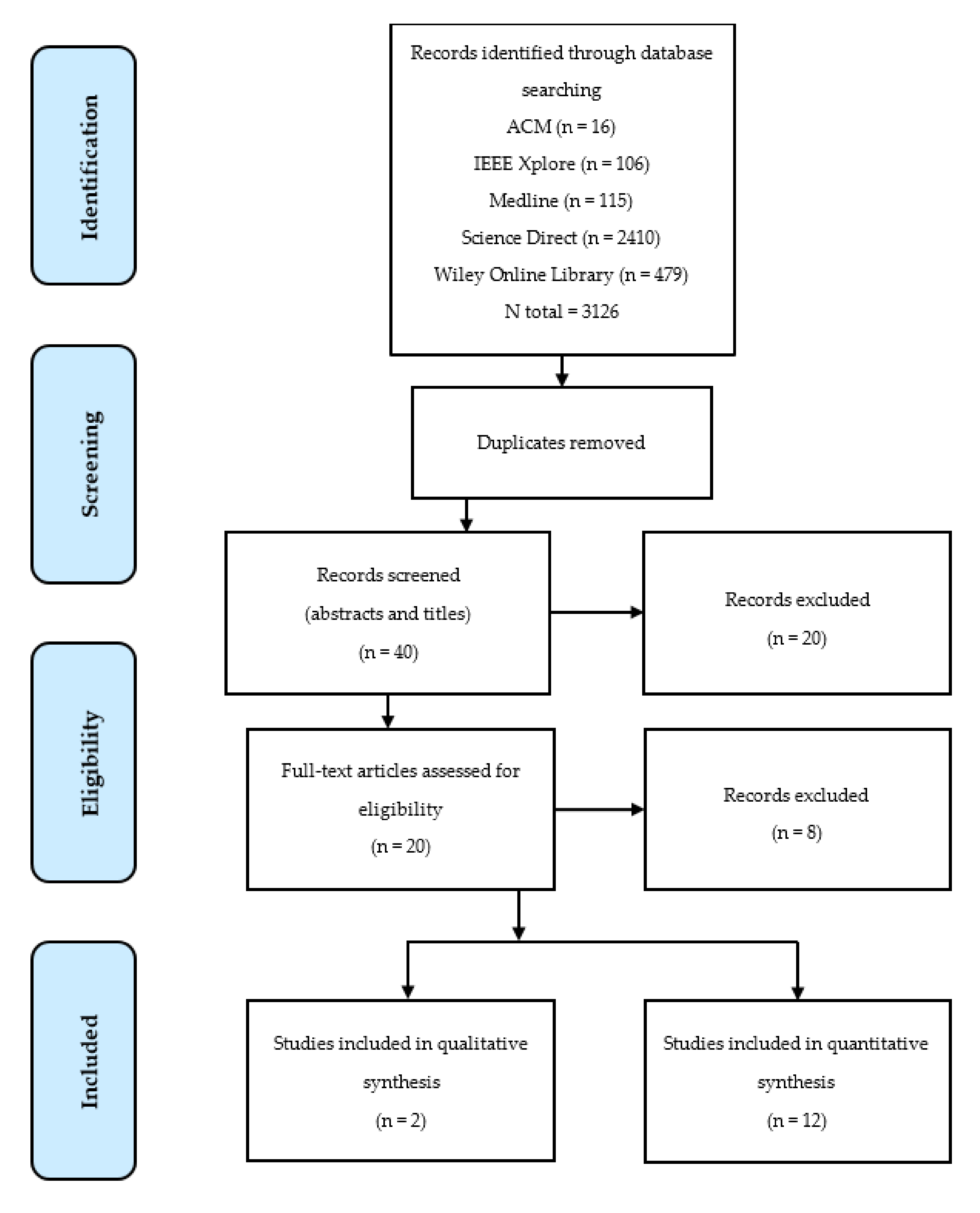
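The performance measures used throughout this review (sensitivity, specificity, PPV and false alarm rate) all follow from event-level counts of true and false detections. As a minimal sketch, assuming hypothetical counts and a hypothetical 30-day monitoring period (none of these numbers come from the reviewed studies, and the `Counts` class and function names are illustrative only), the measures could be computed as follows:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """Detection counts; field names follow the Abbreviations list."""
    tp: int  # true positives: seizures that raised an alarm
    fp: int  # false positives: alarms with no seizure
    tn: int  # true negatives: non-seizure epochs with no alarm
    fn: int  # false negatives: seizures that were missed


def sensitivity(c: Counts) -> float:
    """Fraction of seizures that were detected: TP / (TP + FN)."""
    return c.tp / (c.tp + c.fn)


def specificity(c: Counts) -> float:
    """Fraction of non-seizure epochs correctly left silent: TN / (TN + FP)."""
    return c.tn / (c.tn + c.fp)


def ppv(c: Counts) -> float:
    """Fraction of alarms that were real seizures: TP / (TP + FP)."""
    return c.tp / (c.tp + c.fp)


def false_alarm_rate(c: Counts, monitored_days: float) -> float:
    """False alarms per monitored day."""
    return c.fp / monitored_days


# Hypothetical example values, chosen only to illustrate the formulas.
example = Counts(tp=9, fp=3, tn=87, fn=1)
print(f"sensitivity = {sensitivity(example):.3f}")
print(f"specificity = {specificity(example):.3f}")
print(f"PPV         = {ppv(example):.3f}")
print(f"FAR         = {false_alarm_rate(example, monitored_days=30):.3f} per day")
```

Specificity needs a count of true negatives, which presupposes that the recording is divided into epochs. This may be why many of the reviewed studies report a false alarm rate per unit of time instead.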

2. Method

2.1. Search Strategy

2.2. Eligibility Criteria and Selection

- Primary studies in peer-reviewed literature;

- Studies where the main theme is consumer wearable electronics for epilepsy seizure detection;

- Studies reporting quantitative and/or qualitative assessment data.

3. Results

3.1. Quantitative Studies

3.1.1. Clinical Setting

3.1.2. Free-Living Environment

3.1.3. Data Failures—Missing and Unusable Data

3.2. Qualitative Studies

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| BTCS | Bilateral tonic-clonic seizures |
| CPS | Complex partial seizures |
| CS | Clonic seizures |
| ECG | Electrocardiogram |
| EDA | Electrodermal activity |
| EMG | Electromyography |
| FN | False negative |
| FNV/R | False negative value/rate |
| FP | False positive |
| FPV/R | False positive value/rate |
| FS | Focal seizures |
| FTC | Focal tonic-clonic |
| GTC | Generalized tonic-clonic |
| HRV | Heart rate variability |
| kNN | k-nearest neighbour |
| MS | Myoclonic seizures |
| MTS | Myoclonic-tonic seizures |
| NB | Naïve Bayes classifier |
| NPV/R | Negative predictive value/rate |
| PMS | Predominantly motor seizures |
| PNMS | Predominantly non-motor seizures |
| PPG | Photoplethysmography |
| PPV/R | Positive predictive value/rate |
| PRV | Pulse rate variability |
| PS | Partial onset seizures |
| RF | Random forest |
| SUDEP | Sudden unexpected death in epilepsy |
| SVM | Support vector machine |
| TCS | Tonic-clonic seizures |
| TLS | Temporal lobe seizures |
| TN | True negative |
| TP | True positive |
| TPV/R | True positive value/rate |
| TS | Tonic seizures |
| vEEG | Video electroencephalogram |

References

1. WHO. Epilepsy: A Public Health Imperative; World Health Organization: Geneva, Switzerland, 2019.
2. Sheng, J.; Liu, S.; Qin, H.; Li, B.; Zhang, X. Drug-resistant epilepsy and surgery. Curr. Neuropharmacol. 2018, 16, 17–28.
3. Van de Vel, A.; Verhaert, K.; Ceulemans, B. Critical evaluation of four different seizure detection systems tested on one patient with focal and generalized tonic and clonic seizures. Epilepsy Behav. 2014, 37, 91–94.
4. Van Andel, J.; Thijs, R.; de Weerd, A.; Arends, J.; Leijten, F. Non-EEG based ambulatory seizure detection designed for home use: What is available and how will it influence epilepsy care? Epilepsy Behav. 2016, 57, 82–89.
5. Wannamaker, B.B. Autonomic Nervous System and Epilepsy. Epilepsia 1985, 26, S31–S39.
6. Baumgartner, C.; Lurger, S.; Leutmezer, F. Autonomic symptoms during epileptic seizures. Epileptic Disord. 2001, 3, 103–116.
7. Bruno, E.; Simblett, S.; Lang, A.; Biondi, A.; Odoi, C.; Schulze-Bonhage, A.; Wykes, T.; Richardson, M. Wearable technology in epilepsy: The views of patients, caregivers, and healthcare professionals. Epilepsy Behav. 2018, 85, 141–149.
8. Meritam, P.; Ryvlin, P.; Beniczky, S. User-based evaluation of applicability and usability of a wearable accelerometer device for detecting bilateral tonic-clonic seizures: A field study. Epilepsia 2018, 59, 48–52.
9. Fisher, R.S.; Blum, D.E.; DiVentura, B.; Vannest, J.; Hixson, J.D.; Moss, R.; Herman, S.T.; Fureman, B.E.; French, J.A. Seizure diaries for clinical research and practice: Limitations and future prospects. Epilepsy Behav. 2012, 24, 304–310.
10. Bidwell, J.; Khuwatsamrit, T.; Askew, B.; Ehrenberg, J.; Helmers, S. Seizure reporting technologies for epilepsy treatment: A review of clinical information needs and supporting technologies. Seizure 2015, 32, 109–117.
11. Casson, A.J.; Yates, D.C.; Smith, S.J.; Duncan, J.S.; Rodriguez-Villegas, E. Wearable electroencephalography. IEEE Eng. Med. Biol. Mag. 2010, 29, 44–56.
12. Mukhopadhyay, S.C. Wearable sensors for human activity monitoring: A review. IEEE Sens. J. 2014, 15, 1321–1330.
13. Collins, T.; Pires, I.; Oniani, S.; Woolley, S. How Reliable is Your Wearable Heart Rate Monitor? The Conversation, Health+ Medicine. Available online: https://theconversation.com/how-reliable-is-your-wearable-heart-rate-monitor-98095 (accessed on 7 June 2020).
14. Xu, K.; Lu, Y.; Takei, K. Multifunctional Skin-Inspired Flexible Sensor Systems for Wearable Electronics. Adv. Mater. Technol. 2019, 4, 1800628.
15. Oniani, S.; Woolley, S.I.; Pires, I.M.; Garcia, N.M.; Collins, T.; Ledger, S.; Pandyan, A. Reliability assessment of new and updated consumer-grade activity and heart rate monitors. In Proceedings of the IARIA Conference on Sensor Device Technologies and Applications SENSORDEVICES, Venice, Italy, 16–20 September 2018.
16. Kamišalić, A.; Fister, I.; Turkanović, M.; Karakatič, S. Sensors and functionalities of non-invasive wrist-wearable devices: A review. Sensors 2018, 18, 1714.
17. Tamura, T.; Maeda, Y.; Sekine, M.; Yoshida, M. Wearable photoplethysmographic sensors: Past and present. Electronics 2014, 3, 282–302.
18. Sarcevic, P.; Kincses, Z.; Pletl, S. Online human movement classification using wrist-worn wireless sensors. J. Ambient Intell. Humaniz. Comput. 2019, 10, 89–106.
19. Sharma, N.; Gedeon, T. Objective measures, sensors and computational techniques for stress recognition and classification: A survey. Comput. Methods Programs Biomed. 2012, 108, 1287–1301.
20. Johansson, D.; Malmgren, K.; Alt Murphy, M. Wearable sensors for clinical applications in epilepsy, Parkinson’s disease, and stroke: A mixed-methods systematic review. J. Neurol. 2018, 265, 1740–1752.
21. Empatica Inc. Boston, USA and Empatica Srl, Milano, Italy (E3, E4 and Embrace). Available online: https://www.empatica.com (accessed on 12 May 2020).
22. Danish Care Technology (Epi-Care Free), Sorø, Denmark, UK. Available online: https://danishcare.co.uk/epicare-free/ (accessed on 12 May 2020).
23. LivAssured, B.V. (NightWatch), Leiden, The Netherlands. Available online: https://www.nightwatch.nl/ (accessed on 12 May 2020).
24. Smart Monitor (SmartWatch Inspyre), San Jose, USA. Available online: https://smart-monitor.com/about-smartwatch-inspyre-by-smart-monitor/ (accessed on 12 May 2020).
25. Collins, T.; Woolley, S.I.; Oniani, S.; Pires, I.M.; Garcia, N.M.; Ledger, S.J.; Pandyan, A. Version Reporting and Assessment Approaches for New and Updated Activity and Heart Rate Monitors. Sensors 2019, 19, 1705.
26. Kitchenham, B.A.; Dyba, T.; Jorgensen, M. Evidence-based software engineering. In Proceedings of the 26th International Conference on Software Engineering, Edinburgh, UK, 28 May 2004.
27. Kitchenham, B.A.; Budgen, D.; Brereton, P. Evidence-based Software Engineering and Systematic Reviews; Taylor and Francis Group: Boca Raton, FL, USA, 2015.
28. Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 2009, 6, e1000097.
29. Dybå, T.; Dingsøyr, T. Empirical studies of agile software development: A systematic review. Inf. Softw. Technol. 2008, 50, 833–859.
30. Kramer, U.; Kipervasser, S.; Shlitner, A.; Kuzniecky, R. A Novel Portable Seizure Detection Alarm System: Preliminary Results. J. Clin. Neurophysiol. 2011, 28, 36–38.
31. Ozanne, A.; Johansson, D.; Hällgren Graneheim, U.; Malmgren, K.; Bergquist, F.; Alt Murphy, M. Wearables in epilepsy and Parkinson’s disease-A focus group study. Acta Neurol. Scand. 2017, 137, 188–194.
32. Heldberg, B.; Kautz, T.; Leutheuser, H.; Hopfengartner, R.; Kasper, B.; Eskofier, B. Using wearable sensors for semiology-independent seizure detection: Towards ambulatory monitoring of epilepsy. In Proceedings of the 2015 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Milan, Italy, 25–29 August 2015; pp. 5593–5596.
33. Al-Bakri, A.; Villamar, M.; Haddix, C.; Bensalem-Owen, M.; Sunderam, S. Noninvasive seizure prediction using autonomic measurements in patients with refractory epilepsy. In Proceedings of the 2018 40th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Honolulu, HI, USA, 18–21 July 2018; pp. 2422–2425.
34. Vandecasteele, K.; De Cooman, T.; Gu, Y.; Cleeren, E.; Claes, K.; Paesschen, W.; Huffel, S.; Hunyadi, B. Automated Epileptic Seizure Detection Based on Wearable ECG and PPG in a Hospital Environment. Sensors 2017, 17, 2338.
35. Regalia, G.; Onorati, F.; Lai, M.; Caborni, C.; Picard, R. Multimodal wrist-worn devices for seizure detection and advancing research: Focus on the Empatica wristbands. Epilepsy Res. 2019, 153, 79–82.
36. Lockman, J.; Fisher, R.; Olson, D. Detection of seizure-like movements using a wrist accelerometer. Epilepsy Behav. 2011, 20, 638–641.
37. Patterson, A.; Mudigoudar, B.; Fulton, S.; McGregor, A.; Poppel, K.; Wheless, M.; Brooks, L.; Wheless, J. SmartWatch by SmartMonitor: Assessment of Seizure Detection Efficacy for Various Seizure Types in Children, a Large Prospective Single-Center Study. Pediatric Neurol. 2015, 53, 309–311.
38. Velez, M.; Fisher, R.; Bartlett, V.; Le, S. Tracking generalized tonic-clonic seizures with a wrist accelerometer linked to an online database. Seizure 2016, 39, 13–18.
39. Beniczky, S.; Polster, T.; Kjaer, T.; Hjalgrim, H. Detection of generalized tonic-clonic seizures by a wireless wrist accelerometer: A prospective, multicenter study. Epilepsia 2013, 54, 58–61.
40. Onorati, F.; Regalia, G.; Caborni, C.; Migliorini, M.; Bender, D.; Poh, M.; Frazier, C.; Kovitch Thropp, E.; Mynatt, E.; Bidwell, J.; et al. Multicenter clinical assessment of improved wearable multimodal convulsive seizure detectors. Epilepsia 2017, 58, 1870–1879.
41. Arends, J.; Thijs, R.; Gutter, T.; Ungureanu, C.; Cluitmans, P.; Van Dijk, J.; van Andel, J.; Tan, F.; de Weerd, A.; Vledder, B.; et al. Multimodal nocturnal seizure detection in a residential care setting. J. Neurol. 2018, 91, 2010–2019.
42. Jory, C.; Shankar, R.; Coker, D.; McLean, B.; Hanna, J.; Newman, C. Safe and sound? A systematic literature review of seizure detection methods for personal use. Seizure 2016, 36, 4–15.
43. OCEBM Levels of Evidence Working Group. The Oxford Levels of Evidence 2. Oxford Centre for Evidence-Based Medicine. Available online: https://www.cebm.net/index.aspx?o=5653 (accessed on 7 June 2020).
44. Woolley, S.; Collins, T.; Mitchell, J.; Fredericks, D. Investigation of wearable health tracker version updates. BMJ Health Care Inform. 2019, 26, e100083.
45. Bent, B.; Goldstein, B.A.; Kibbe, W.A.; Dunn, J.P. Investigating sources of inaccuracy in wearable optical heart rate sensors. NPJ Digit. Med. 2020, 3, 1–9.

| Device | Sensors | Manufacturer/Supplier | Software/Applications | Hardware/Platform |
|---|---|---|---|---|
| Embrace/Embrace 2, E4 | Accelerometer, PPG, temperature, EDA, gyroscope (Embrace 2) | Empatica Inc./Srl (Boston, USA/Milan, Italy) | Alert App, Mate App | Apple/Android smartphone |
| Epi-Care free | Accelerometer, gyroscope | Danish Care Technology ApS (Sorø, Denmark) | Epi-Care App | Apple/Android smartphone |
| NightWatch | Accelerometer, PPG | LivAssured B.V. (Leiden, The Netherlands) | NightWatch online portal | Dedicated base station |
| Smart Monitor (SmartWatch/Inspyre App) | Accelerometer, PPG | Smart Monitor (San Jose, USA) | Smart Monitor App, web portal | Apple/Android smartphone and compatible Samsung and Apple Watches |

| No. Studies = 12 | | |
|---|---|---|
| No. Quantitative = 12 | | No. Qualitative = 2 |
| Clinical setting = 8 | Free-living = 4 | - |
| No. participants/patients = 341 | No. participants/patients = 169 | No. participants/patients = 104 |
| TOTAL = 510 | | TOTAL = 104 |

Clinical Settings

| Study | Device | No. Participants | No. Seizures Detected | Duration |
|---|---|---|---|---|
| Heldberg et al., 2015 [32] | E3 | 8 | 55 | 23 days |
| Al-Bakri et al., 2018 [33] | E4 | 3 | unspecified | 4–5 days (1 h intervals) |
| Vandecasteele et al., 2017 [34] | E4 | 11 | 47 | 29 days |
| Regalia et al., 2019 [35] | Embrace and E4 | 135 | 40 | unspecified |
| Lockman et al., 2011 [36] | SmartWatch | 40 | 7 | 487 days |
| Patterson et al., 2015 [37] | SmartWatch | 41 | 30 | unspecified |
| Velez et al., 2016 [38] | SmartWatch | 30 | 12 | 1–9 days |
| Beniczky et al., 2013 [39] | Epi-Care free | 73 | 35 | 17–171 h |
| - | - | TOTAL = 341 | TOTAL = 226 | - |

| Study/No. Participants | Device | Seizure Type | Sensitivity | Specificity | False Alarm Rate (FAR) | PPV/R | Detection Latency |
|---|---|---|---|---|---|---|---|
| Heldberg et al., 2015 [32], 8 participants | E3 | PNMS, PMS | 89.1% (kNN); 87.3% (RF) | 93.1% (kNN); 95.2% (RF) | - | - | - |
| Al-Bakri et al., 2018 [33], 3 participants | E4 | - | 84% (NB, preictal sleep); 78% (NB, preictal wake) | 79% (NB, preictal sleep); 80% (NB, preictal wake) | - | - | - |
| Vandecasteele et al., 2017 [34], 11 participants | E4 (PPG) | TLS, CPS | 32% (SVM) | - | 1.80 per hour | 1.43% | - |
| Regalia et al., 2019 [35], 135 participants | E4 and Embrace | GTC | 100% | - | 0.42 per day | - | - |
| Lockman et al., 2011 [36], 40 participants | SmartWatch | TCS | 87.5% | - | - | - | - |
| Patterson et al., 2015 [37], 41 participants | SmartWatch | TS, GTC, MS, MTS, PS | 16% | - | - | - | - |
| Velez et al., 2016 [38], 30 participants | SmartWatch | TCS | 92.3% | - | - | - | - |
| Beniczky et al., 2013 [39], 73 participants | Epi-Care free | TCS | 90% | - | 0.2 per day | - | 55 s |
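The false alarm rates in the clinical-setting results are reported in different time units (per hour in [34], per day in [35] and [39]), which makes them hard to compare at a glance. The sketch below rescales them to a common per-day basis. It assumes continuous 24 h wear and a constant alarm rate, which the studies do not necessarily state, so the converted figure is an illustrative extrapolation and not a value reported in the reviewed papers. The function and variable names are hypothetical.

```python
HOURS_PER_DAY = 24


def per_hour_to_per_day(rate_per_hour: float) -> float:
    """Scale an hourly rate to a daily one (assumes constant rate, 24 h wear)."""
    return rate_per_hour * HOURS_PER_DAY


# FAR values as reported in the clinical-setting table: (rate, time unit).
reported_far = {
    "Vandecasteele et al., 2017 [34]": (1.80, "hour"),
    "Regalia et al., 2019 [35]": (0.42, "day"),
    "Beniczky et al., 2013 [39]": (0.2, "day"),
}

# Convert only the hourly entries; daily entries are kept as reported.
far_per_day = {
    study: per_hour_to_per_day(rate) if unit == "hour" else rate
    for study, (rate, unit) in reported_far.items()
}

for study, rate in far_per_day.items():
    print(f"{study}: {rate:.2f} false alarms per day")
```

Under these assumptions, the PPG-only detector in [34] would raise roughly two orders of magnitude more false alarms per day than the accelerometer-based devices, which is consistent with its low reported PPV.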

Free-Living Settings

| Study | Device | No. Participants | No. Seizures Detected | Duration |
|---|---|---|---|---|
| Onorati et al., 2017 [40] | E3 and E4 | 69 | 32 | 247 days |
| Van de Vel et al., 2014 [3] | Epi-Care free | 1 | 9 | 19 nights |
| Meritam et al., 2018 [8] | Epi-Care free | 71 | - | 15 months median (24 days to 6 years) |
| Arends et al., 2018 [41] | NightWatch | 28 | 809 | 1826 nights |
| - | - | TOTAL = 169 | TOTAL = 850 | - |
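The column totals in the clinical and free-living study tables can be re-derived from the individual rows. The following sketch does this as a simple consistency check. The per-study figures are copied from the two tables; studies with an unspecified or missing seizure count are entered as `None` and skipped when summing. The dictionary layout and the `column_totals` helper are illustrative choices.

```python
from typing import Dict, Optional, Tuple

# (participants, seizures detected); None marks an unreported seizure count.
Study = Tuple[int, Optional[int]]

CLINICAL: Dict[str, Study] = {
    "Heldberg 2015 [32]": (8, 55),
    "Al-Bakri 2018 [33]": (3, None),
    "Vandecasteele 2017 [34]": (11, 47),
    "Regalia 2019 [35]": (135, 40),
    "Lockman 2011 [36]": (40, 7),
    "Patterson 2015 [37]": (41, 30),
    "Velez 2016 [38]": (30, 12),
    "Beniczky 2013 [39]": (73, 35),
}

FREE_LIVING: Dict[str, Study] = {
    "Onorati 2017 [40]": (69, 32),
    "Van de Vel 2014 [3]": (1, 9),
    "Meritam 2018 [8]": (71, None),
    "Arends 2018 [41]": (28, 809),
}


def column_totals(studies: Dict[str, Study]) -> Tuple[int, int]:
    """Sum participants and reported seizure counts across studies."""
    participants = sum(n for n, _ in studies.values())
    seizures = sum(s for _, s in studies.values() if s is not None)
    return participants, seizures


clinical_total = column_totals(CLINICAL)
free_living_total = column_totals(FREE_LIVING)
print("clinical (participants, seizures):", clinical_total)
print("free-living (participants, seizures):", free_living_total)
print("all quantitative participants:", clinical_total[0] + free_living_total[0])
```

The sums agree with the totals stated in the tables (341 and 169 participants, 226 and 850 seizures), and the two participant totals add up to the 510 quantitative participants given in the study summary.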

| Study/No. Participants | Device | Seizure Type | Sensitivity | Specificity | False Alarm Rate (FAR) | PPV/R | Detection Latency |
|---|---|---|---|---|---|---|---|
| Onorati et al., 2017 [40], 69 participants | E3 and E4 | BTCS, FTC | 83.64% (Classifier I); 92.73% (Classifier II); 94.55% (Classifier III) | - | 0.29 per day (Classifier I); 0.21 per day (Classifier II); 0.20 per day (Classifier III) | - | 31.2 s (Classifier I); 29.3 s (Classifier II); 29.3 s (Classifier III) |
| Van de Vel et al., 2014 [3], 1 participant | Epi-Care free | TS, CS, TCS | 41% | - | 0.05 per night | - | - |
| Meritam et al., 2018 [8], 71 participants | Epi-Care free | BTCS | 90% (median) | - | 0.1 per day (median) | - | - |
| Arends et al., 2018 [41], 28 participants | NightWatch | MS, TC, TCS, Hyperkinetic | 86% (median) | - | 0.25 per night (median) | 49% (median) | - |

| Study | Device | No. Participants | Data Failures | Reasons |
|---|---|---|---|---|
| Vandecasteele et al., 2017 [34] | E4 | 11 | PPG motion artefacts | Motion artefacts: “PPG signal was drastically affected … 55% of the seizures could not be detected because of motion artefacts … no reliable HR could be extracted” |
| Velez et al., 2016 [38] | SmartWatch | 30 | 3 occasions | 2× wireless communication failures and 1× device not worn during seizure |
| Beniczky et al., 2013 [39] | Epi-Care free | 73 | “15 times” | “Device deficiencies” (including 2× “technical error”, 11× “battery failure”) |

Stakeholder Views and Observations

| Study/No. Participants | Benefits | Barriers/Concerns |
|---|---|---|
| Arends et al., 2018 [41], 33 qualitative carer respondents | | |
| Meritam et al., 2018 [8], 71 qualitative patient respondents | | |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Rukasha, T.; Woolley, S.I.; Kyriacou, T.; Collins, T. Evaluation of Wearable Electronics for Epilepsy: A Systematic Review. Electronics 2020, 9, 968. https://doi.org/10.3390/electronics9060968
