A Dynamically Reconfigurable BbNN Architecture for Scalable Neuroevolution in Hardware

Abstract

1. Introduction

- A scalable BbNN hardware architecture with reduced usage of DSPs and BRAMs. The proposed architecture supports feedback loops, includes a novel synchronization mechanism and a simplified implementation of the activation function.

- A novel approach for the network adaptation that exploits the advanced dynamic and partial reconfiguration features offered by the IMPRESS tool to obtain dynamic scalability and an efficient parameter and topology configuration during evolution.

- The integration of the proposed architecture, implemented on an SoC FPGA, with the OpenAI Gym toolkit, forming a hardware-in-the-loop simulation platform. This platform shows the applicability of the proposed neuroevolvable hardware architecture as a reinforcement learning solution for control problems.

2. Block-Based Neural Networks

2.1. Basic Principles

2.2. Related Works

3. Existing Approaches to Scalability

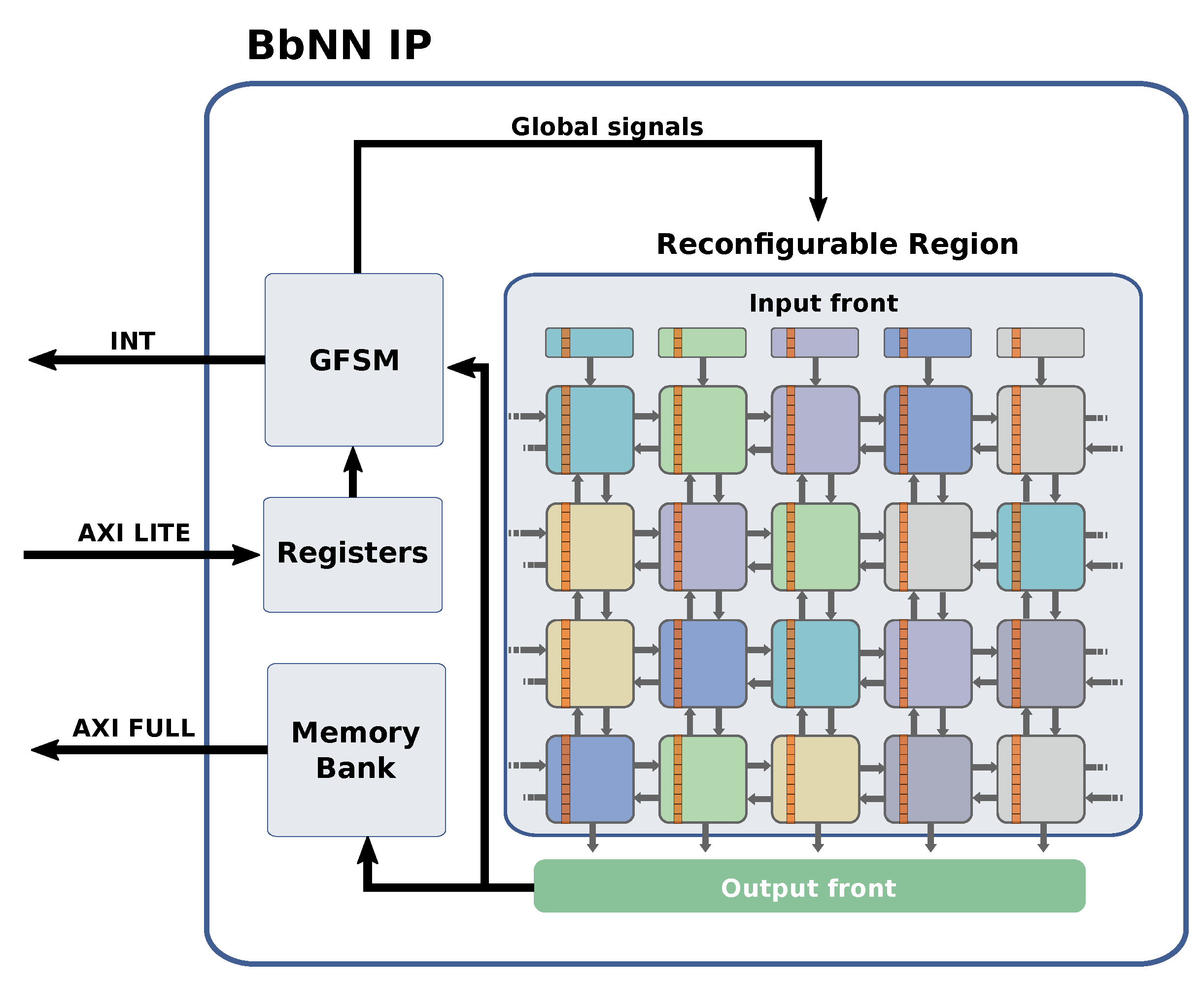

4. Proposed BbNN Architecture

- g: is the activation function of the neuron.

- x: is the input of the neuron.

- w: is the connection weight between the input and the output.

- b: is the bias applied to the output.

- y: is the output of the neuron.
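The terms listed above can be combined in a short sketch. It assumes the usual weighted-sum neuron model (the activation function applied to the sum of weighted inputs plus the bias) and uses a plain logistic sigmoid in place of the hardware approximation discussed in Section 4.1.3. The names `sigmoid` and `neuron_output` are illustrative and not taken from the paper.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic sigmoid, standing in for the activation function g (assumption)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias, g=sigmoid):
    """Return g(sum(w * x) + b) for one neuron output."""
    if len(inputs) != len(weights):
        raise ValueError("one weight is needed per input")
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return g(weighted_sum + bias)

# 2.0 * 0.5 + 1.0 * (-1.0) + 0.0 = 0.0, and the sigmoid of 0.0 is 0.5
y = neuron_output(inputs=[0.5, -1.0], weights=[2.0, 1.0], bias=0.0)
print(y)
```

The activation function is passed as a parameter so that a fixed-point or piecewise approximation could be swapped in without touching the weighted sum.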

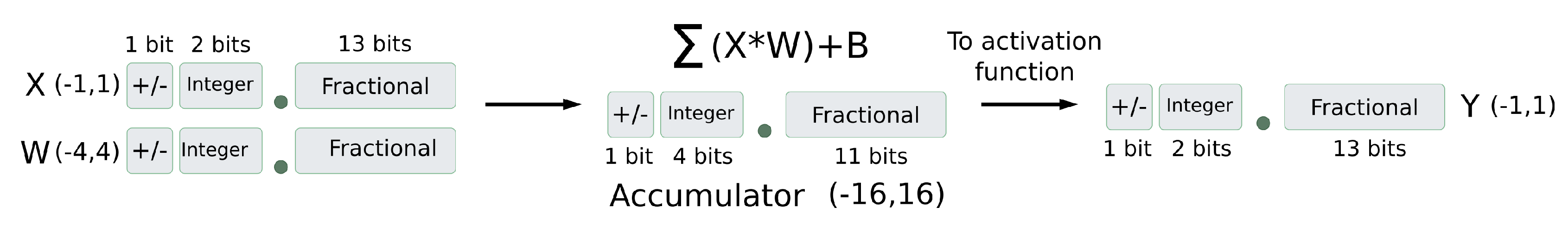

4.1. Numerical Optimizations

4.1.1. Numerical Range for Inputs and Parameters

4.1.2. Fixed-Point Representation Scheme

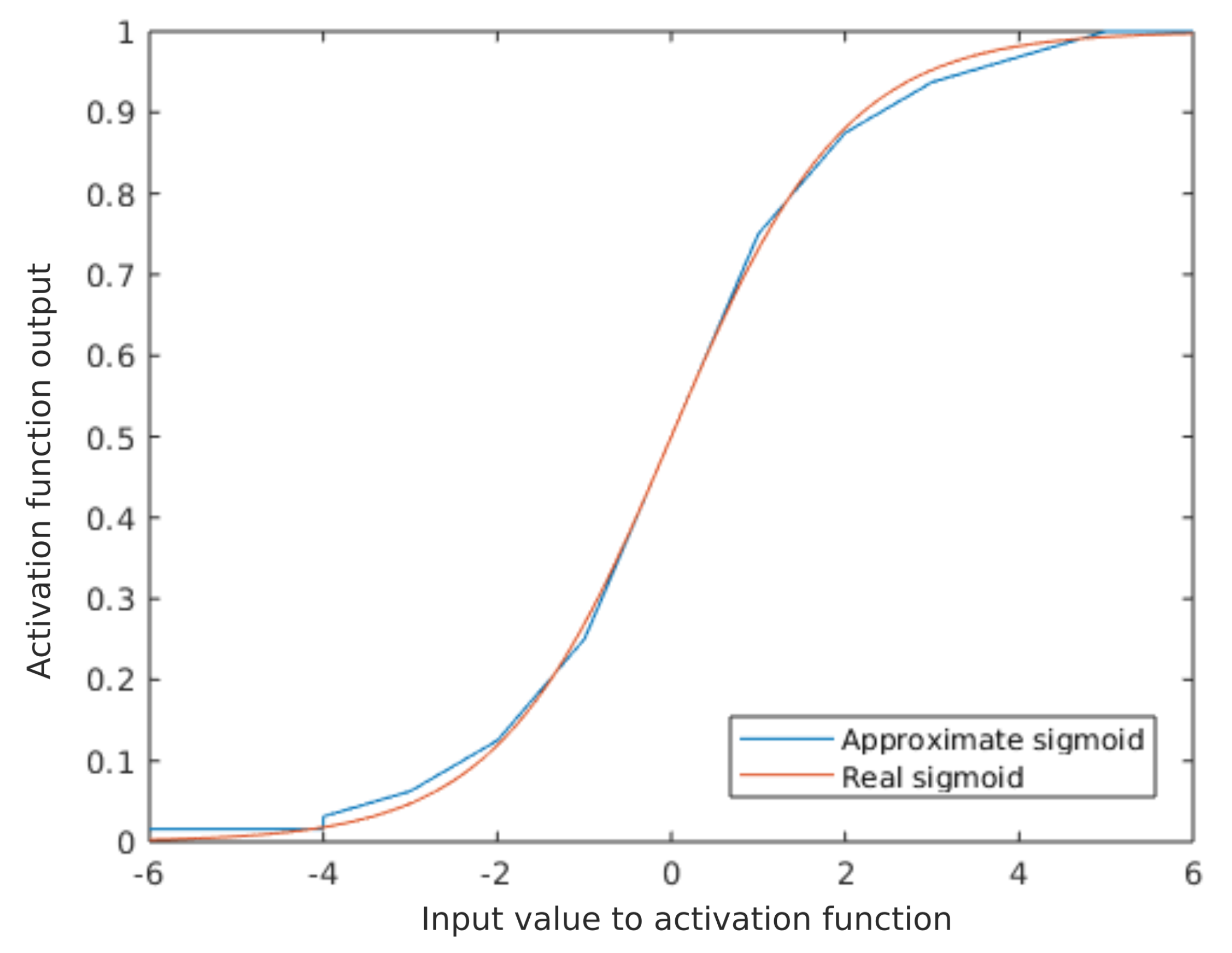

4.1.3. Approximation of the Activation Function

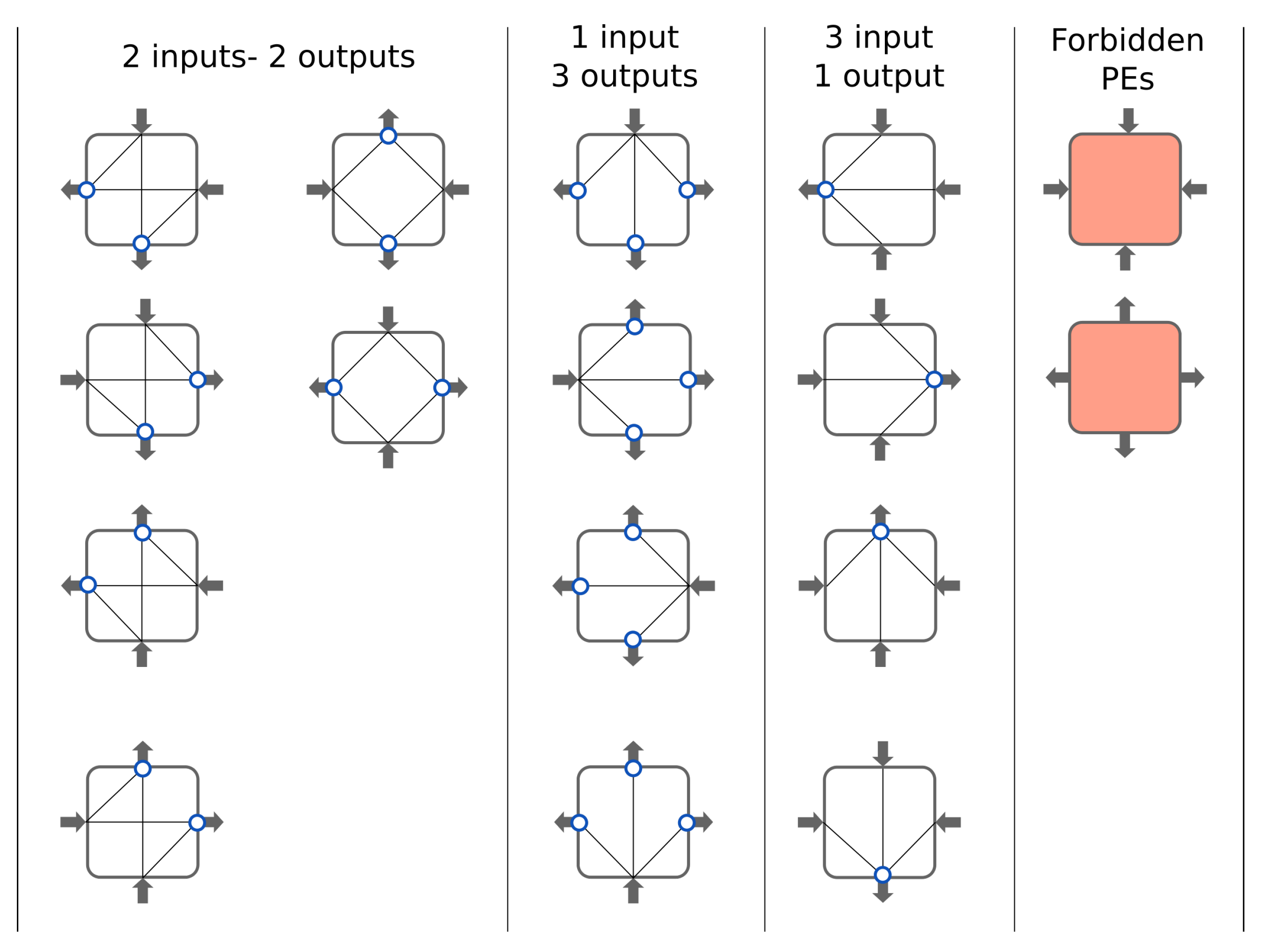

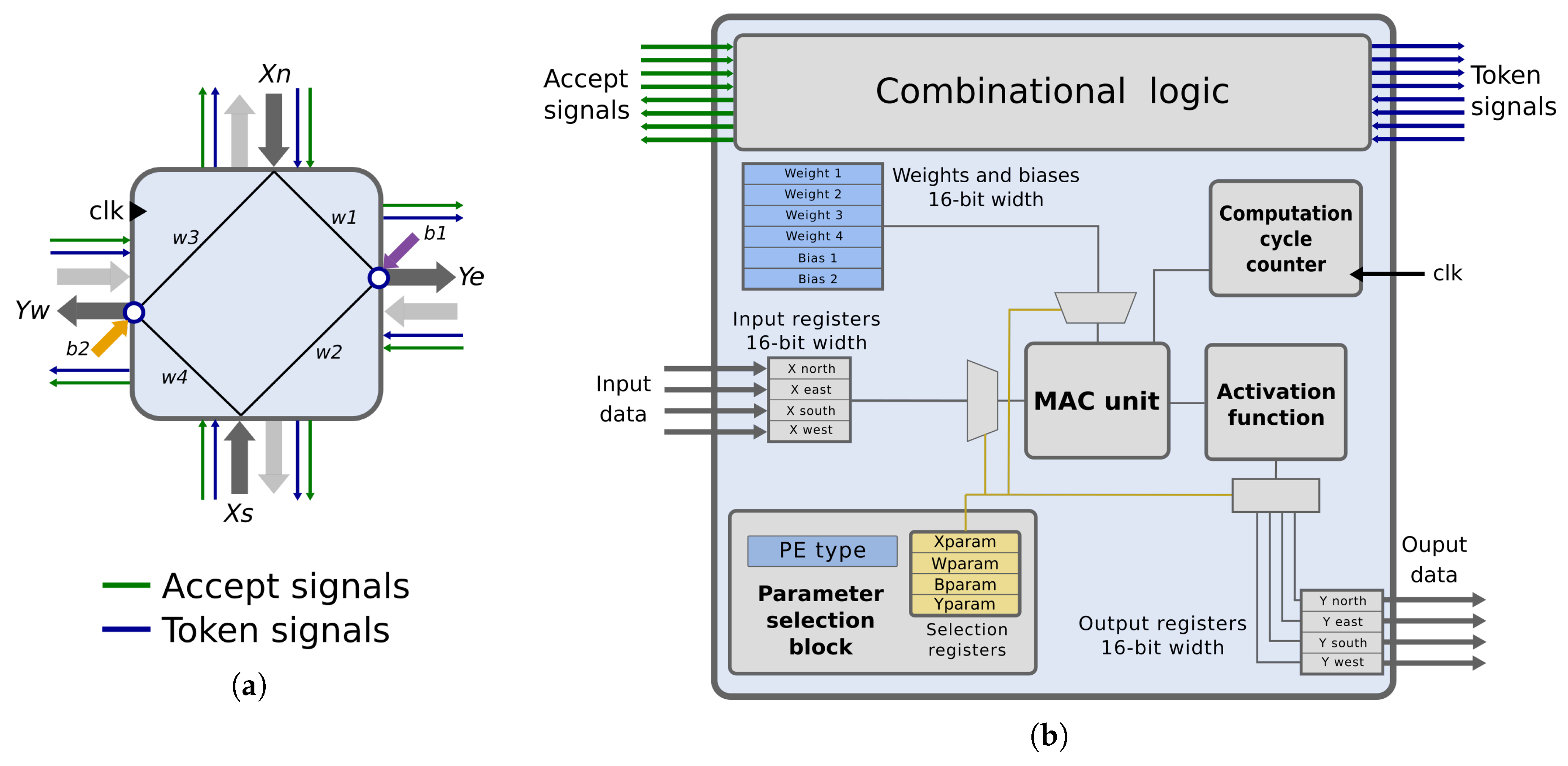

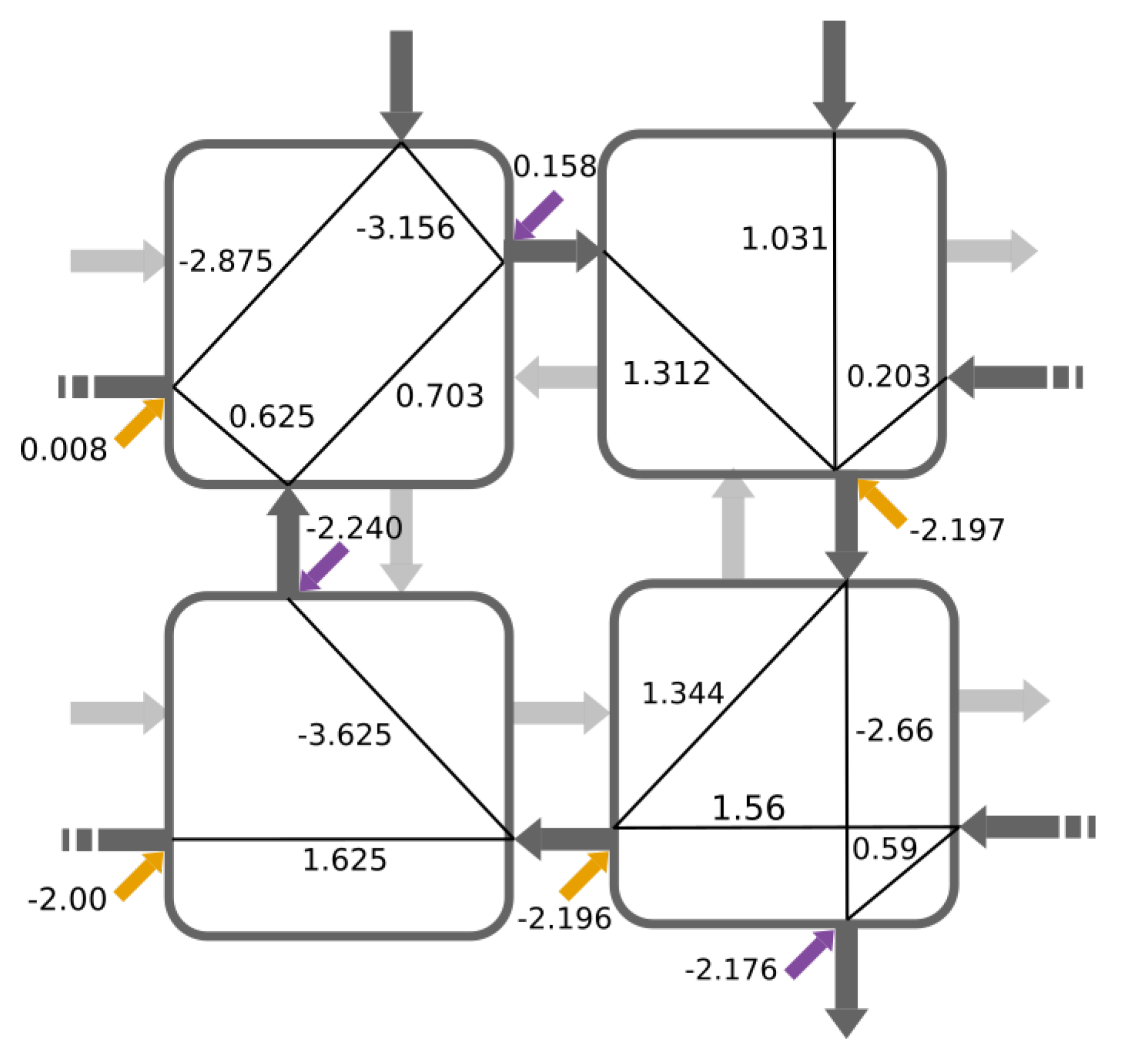

4.2. Proposed Processing Element Architecture

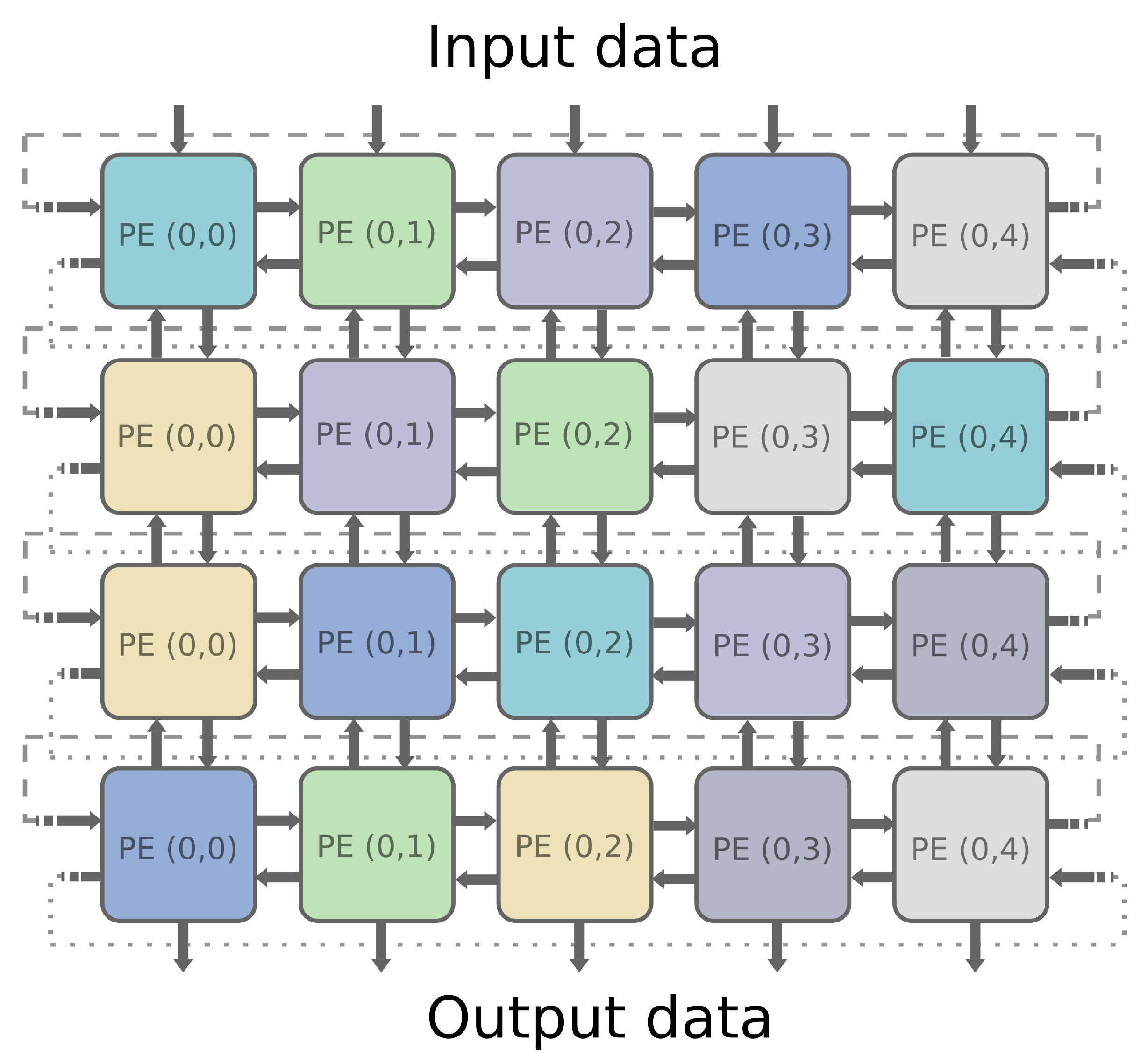

- Input data: one input signal per PE side (four in total).

- Output data: one output signal per PE side (four in total).

- Token signals: one token signal per input/output port. They are part of the synchronization mechanism. They indicate that intermediate results are ready to be consumed. They are set to one by the producer PE and set to zero by the consumer PE.

- Accept signals: one accept signal per input/output port. They are also part of the token-based synchronization scheme and prevent unconsumed data from being overwritten. An accept signal is set to zero while the consumer has not yet consumed its inputs or while it is triggered, and it is set to one when the link holds no data and the consumer PE is idle.
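The token/accept handshake described in the two items above can be modeled in software as follows. This is a behavioral sketch only, not the RTL of the architecture: the class `Link` and its methods are hypothetical names, and clocking is abstracted away.

```python
class Link:
    """One directed connection between a producer PE and a consumer PE."""

    def __init__(self):
        self.data = None
        self.token = 0              # 1: an intermediate result is ready
        self.consumer_busy = False  # True while the consumer is triggered

    @property
    def accept(self) -> int:
        # Accept is 1 only when the link holds no data and the consumer is idle.
        return int(self.token == 0 and not self.consumer_busy)

    def produce(self, value) -> bool:
        """Producer side: write only if accept is 1, then set the token."""
        if not self.accept:
            return False            # writing now would overwrite unconsumed data
        self.data = value
        self.token = 1
        return True

    def consume(self):
        """Consumer side: take the data, clear the token, start computing."""
        if self.token == 0:
            return None
        value, self.data = self.data, None
        self.token = 0
        self.consumer_busy = True
        return value

    def finish(self) -> None:
        """Consumer has completed its computation cycle and is idle again."""
        self.consumer_busy = False


link = Link()
print(link.produce(3))   # accepted: the link is empty and the consumer idle
print(link.produce(4))   # rejected: the first value has not been consumed
print(link.consume())    # the consumer takes the value and clears the token
print(link.produce(5))   # rejected: the consumer is still computing
link.finish()
print(link.produce(5))   # accepted again
```

Deriving `accept` from the token and the consumer state, rather than storing it, keeps the two signals from drifting out of sync in this model.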

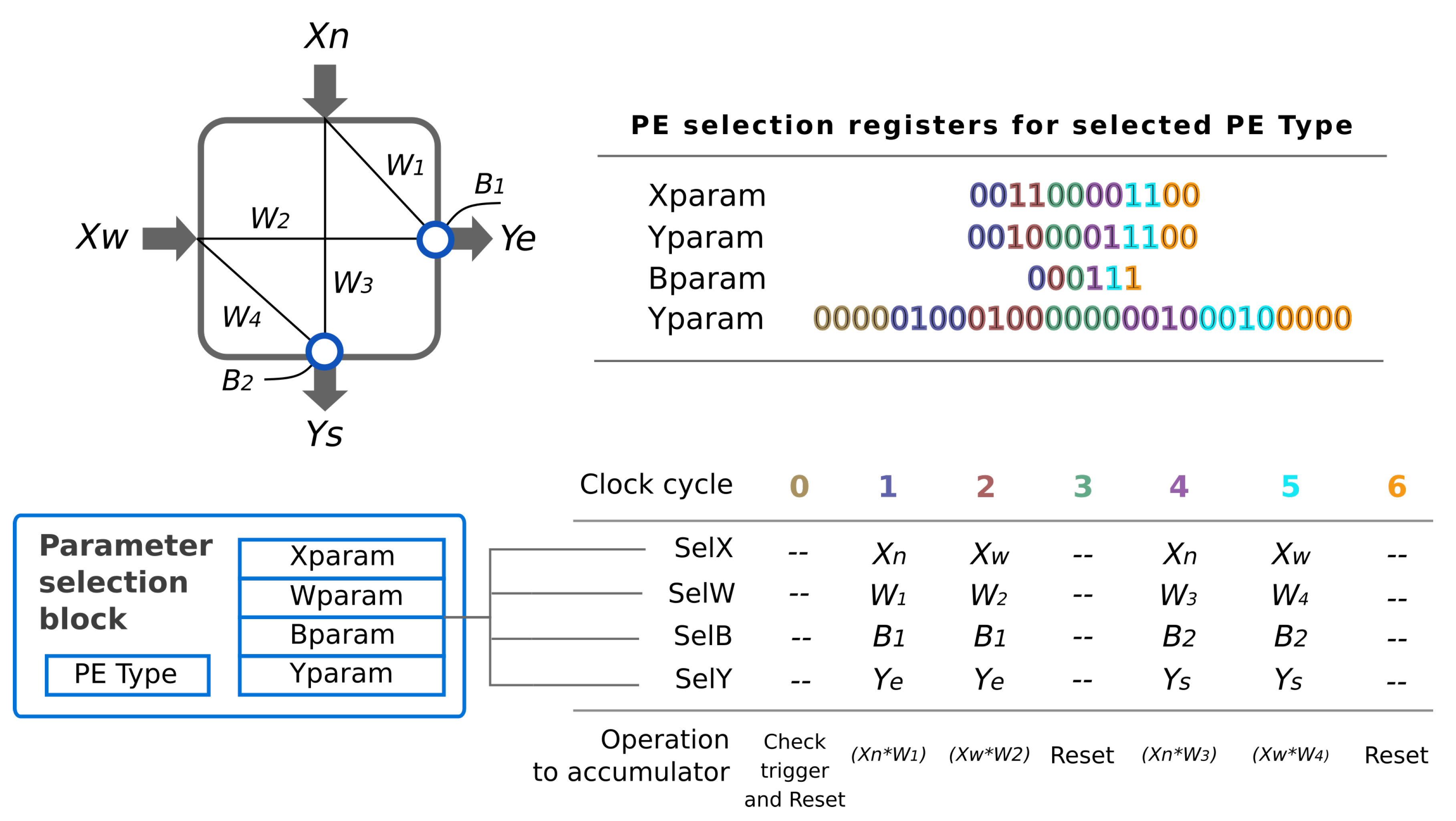

- Parameter selection block: it generates the signals that select the proper operands at the right clock cycles, depending on the values stored in the parameter selection registers (Xparam, Wparam, Bparam and Yparam). These values are determined by the PE type.

- MAC Unit: it performs all the calculations to generate the weighted sum during the computation cycle.

- Computation cycle counter: this counter controls the computation cycle stage.

- Activation function: this block computes the approximation of the sigmoid function described in the previous section.

- Synchronization logic: this logic checks the values of the token signals to trigger the computation cycle. Once the operations have been executed, it generates the tokens for the output ports. This logic also manages the accept signals.
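How these blocks might cooperate during one computation cycle is sketched below. The step format (`"mac"`, `"out"`, `"reset"`) is a hypothetical stand-in for the operand selections produced by the parameter selection block; the actual encoding and cycle ordering of the hardware are not reproduced here, and a plain logistic sigmoid replaces the approximated activation function.

```python
import math

def sigmoid(z):
    """Logistic sigmoid, standing in for the approximated activation block."""
    return 1.0 / (1.0 + math.exp(-z))

def run_computation_cycle(x, w, b, schedule, g=sigmoid):
    """Execute one computation cycle of a simplified PE model.

    x: values on the four input sides; w: weight registers; b: bias registers.
    schedule: hypothetical list of steps, one per clock cycle, playing the
    role of the parameter selection block.
    """
    y = [None] * 4   # one output register per PE side
    acc = 0.0        # accumulator of the MAC unit
    for step in schedule:            # iterating plays the cycle counter
        op = step[0]
        if op == "mac":              # acc += x[sel_x] * w[sel_w]
            _, sel_x, sel_w = step
            acc += x[sel_x] * w[sel_w]
        elif op == "out":            # y[sel_y] = g(acc + b[sel_b])
            _, sel_b, sel_y = step
            y[sel_y] = g(acc + b[sel_b])
        elif op == "reset":          # clear the accumulator for the next output
            acc = 0.0
        else:
            raise ValueError(f"unknown step {op!r}")
    return y

# Two inputs feeding one output: y[2] = g(x[0]*w[0] + x[1]*w[1] + b[0])
schedule = [("reset",), ("mac", 0, 0), ("mac", 1, 1), ("out", 0, 2)]
outputs = run_computation_cycle(
    x=[1.0, 1.0, 0.0, 0.0], w=[0.5, -0.5, 0.0, 0.0], b=[0.0, 0.0],
    schedule=schedule,
)
print(outputs[2])  # the weighted sum is 0.0, so the sigmoid returns 0.5
```

Sharing a single accumulator across all outputs mirrors the idea of one MAC unit per PE, at the cost of computing the outputs sequentially.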

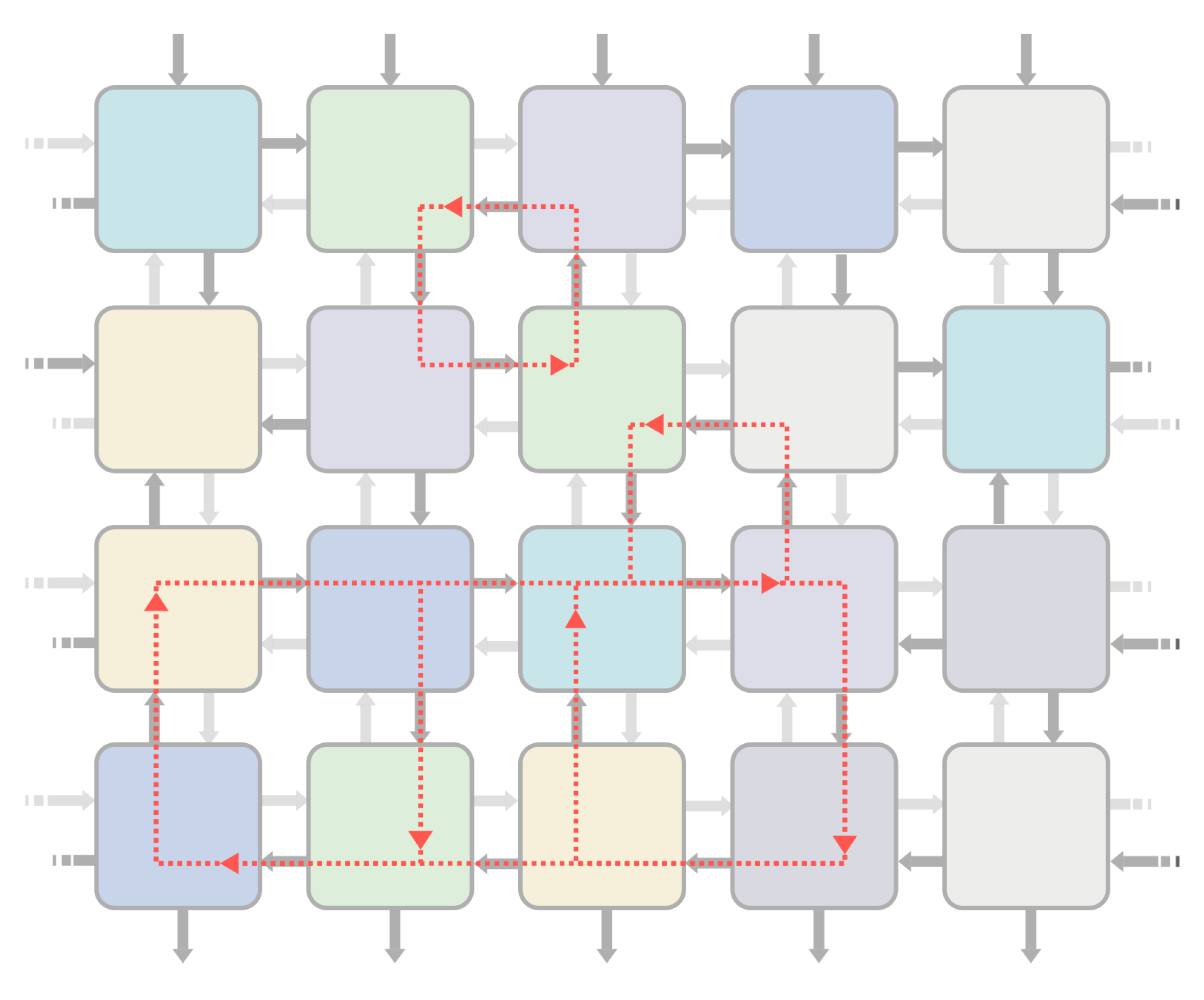

4.3. From the Basic PE to the Block-Based Neural Network IP

4.4. Management of Latency and Datapath Imbalance

5. Proposed Evolutionary Algorithm

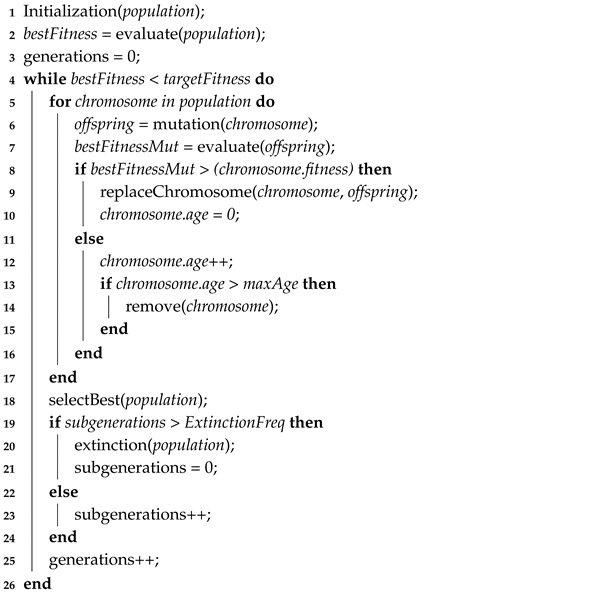

Algorithm 1: Evolutionary algorithm

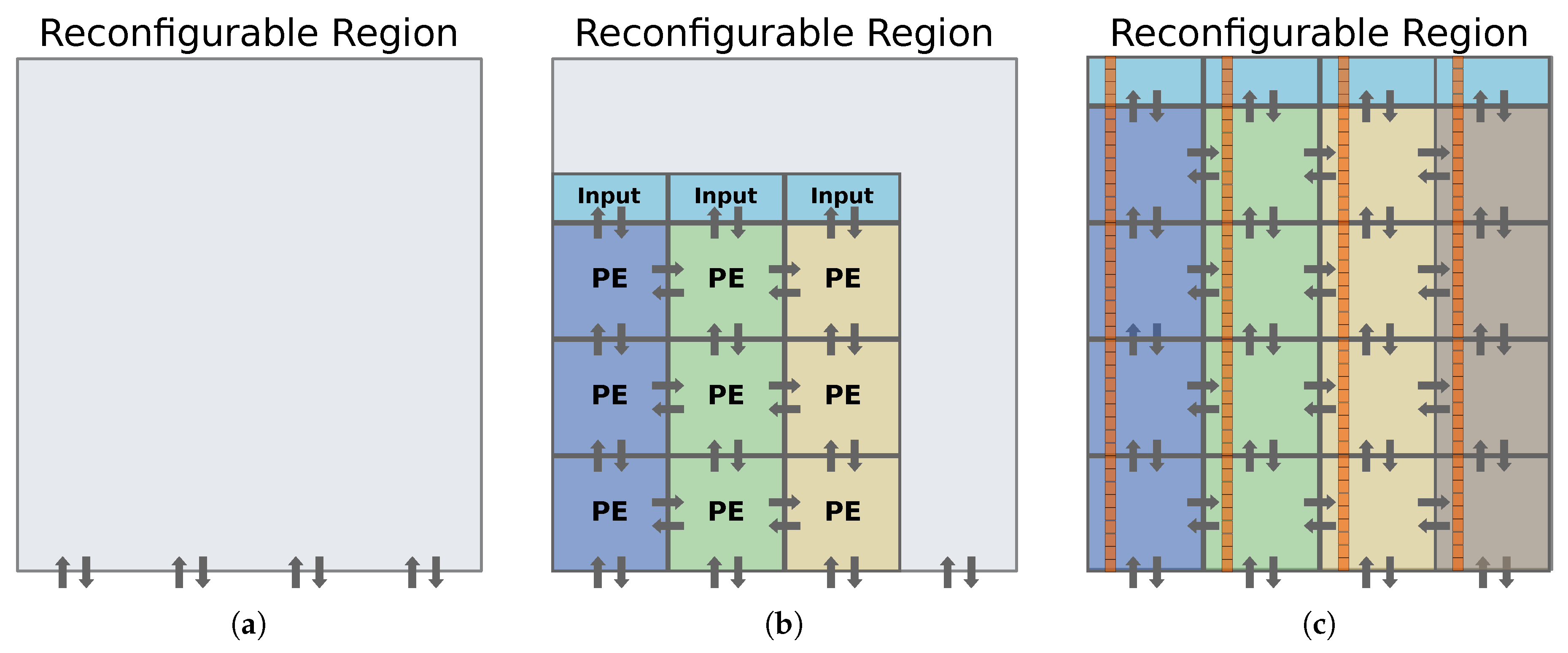

6. A New Approach to Build a Scalable BbNN

7. Results

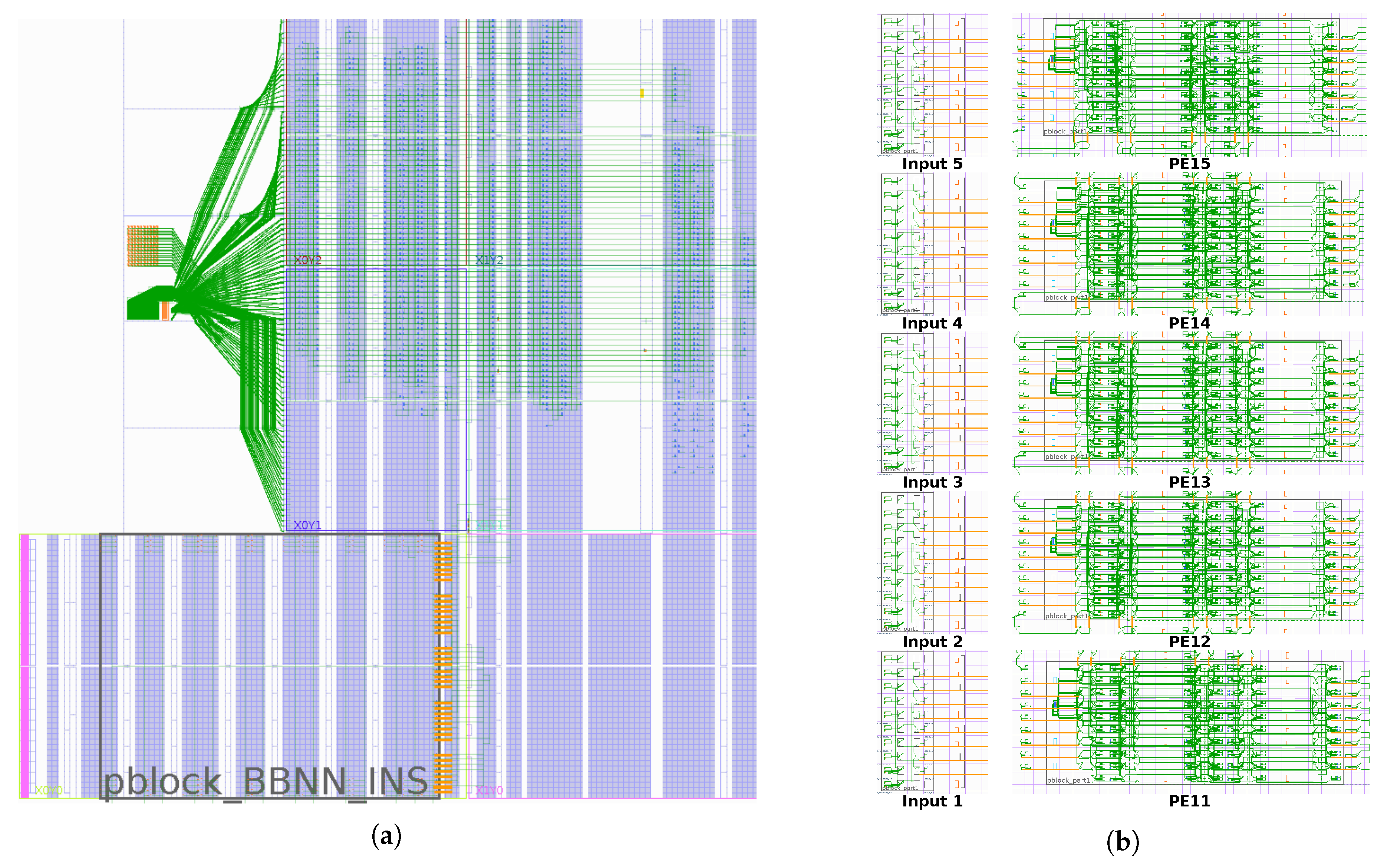

7.1. Logic Resource Utilization and Reconfiguration Times

7.2. Case Studies

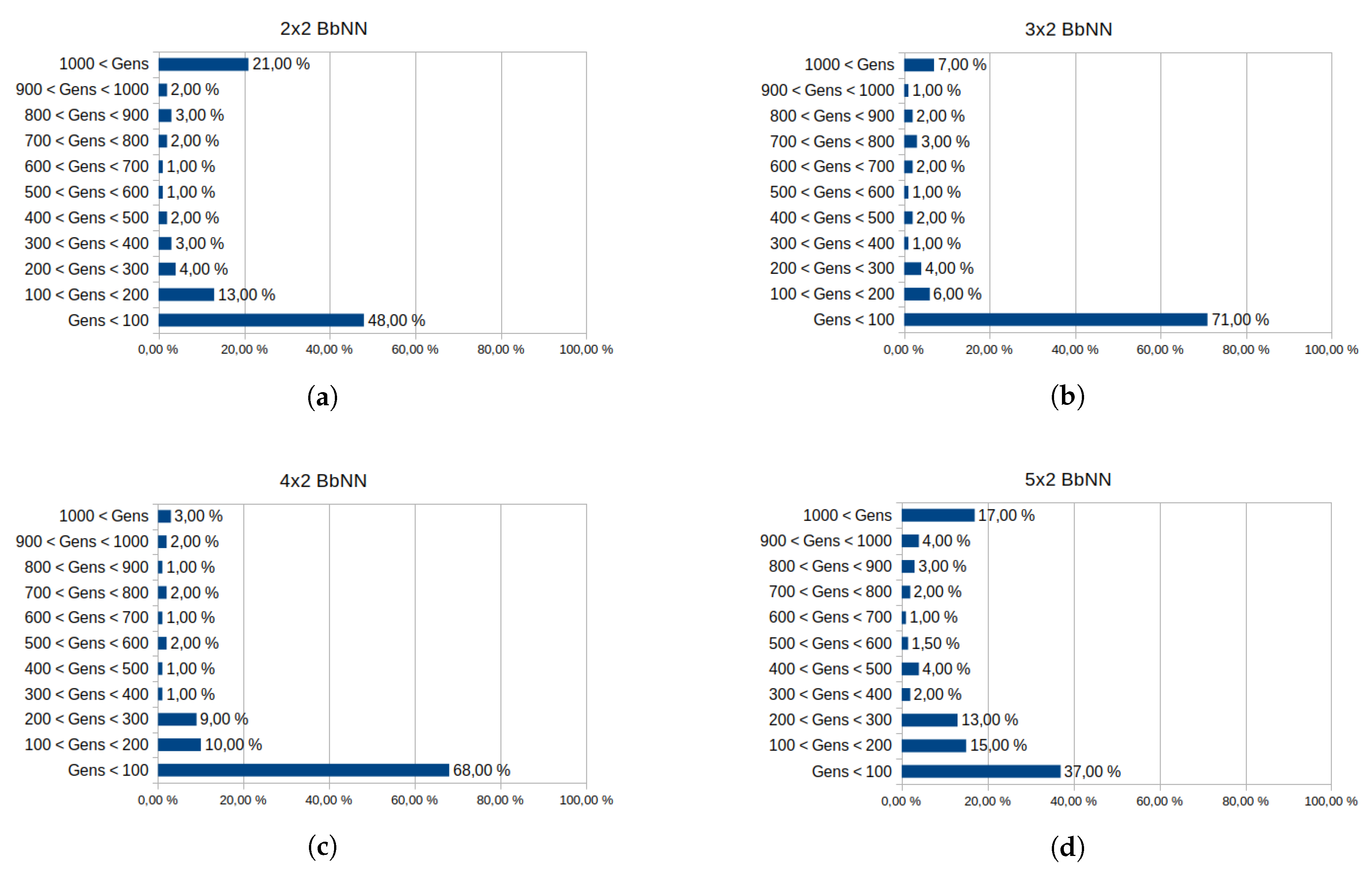

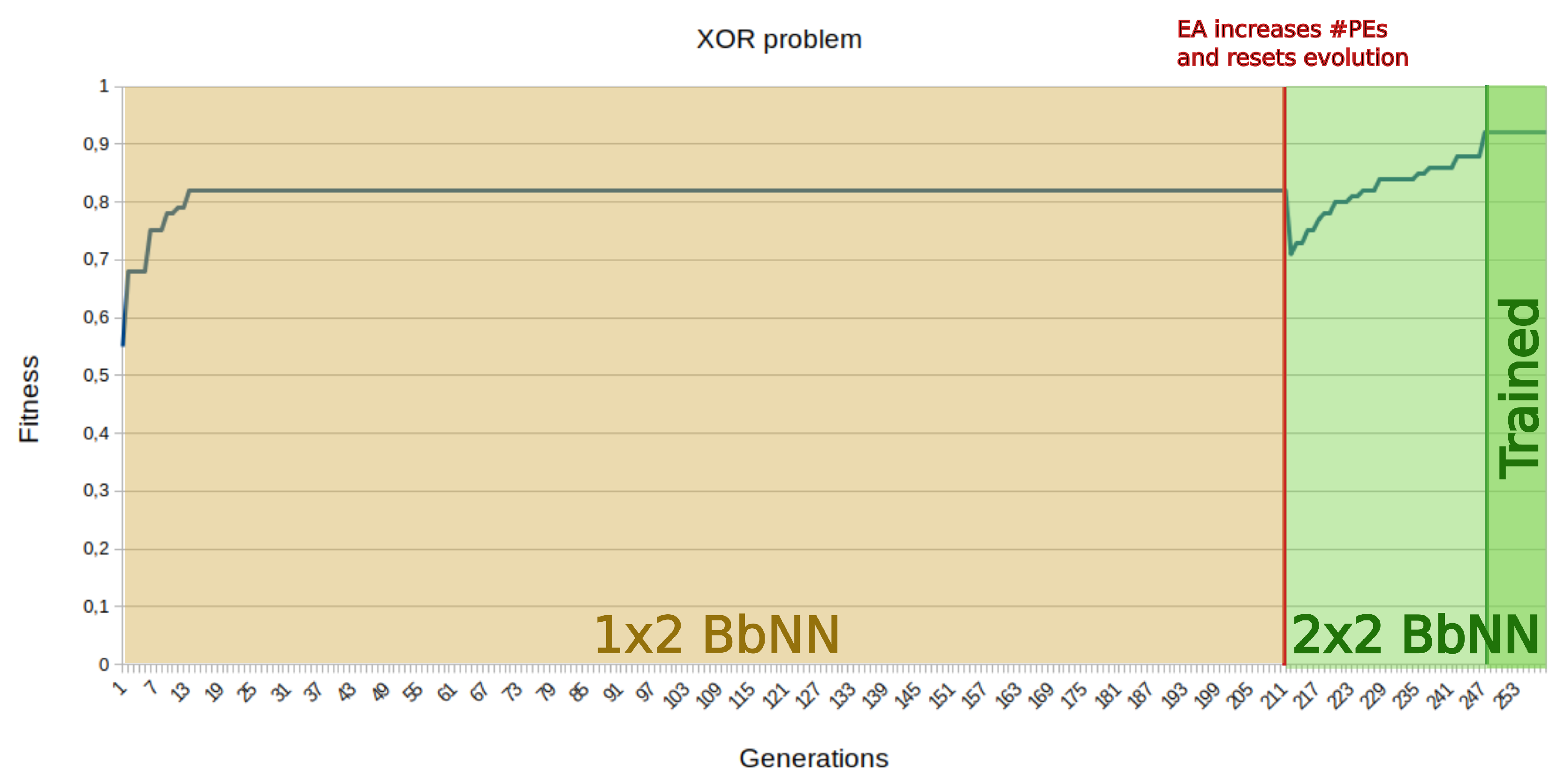

7.2.1. Classification Domain: The XOR Problem

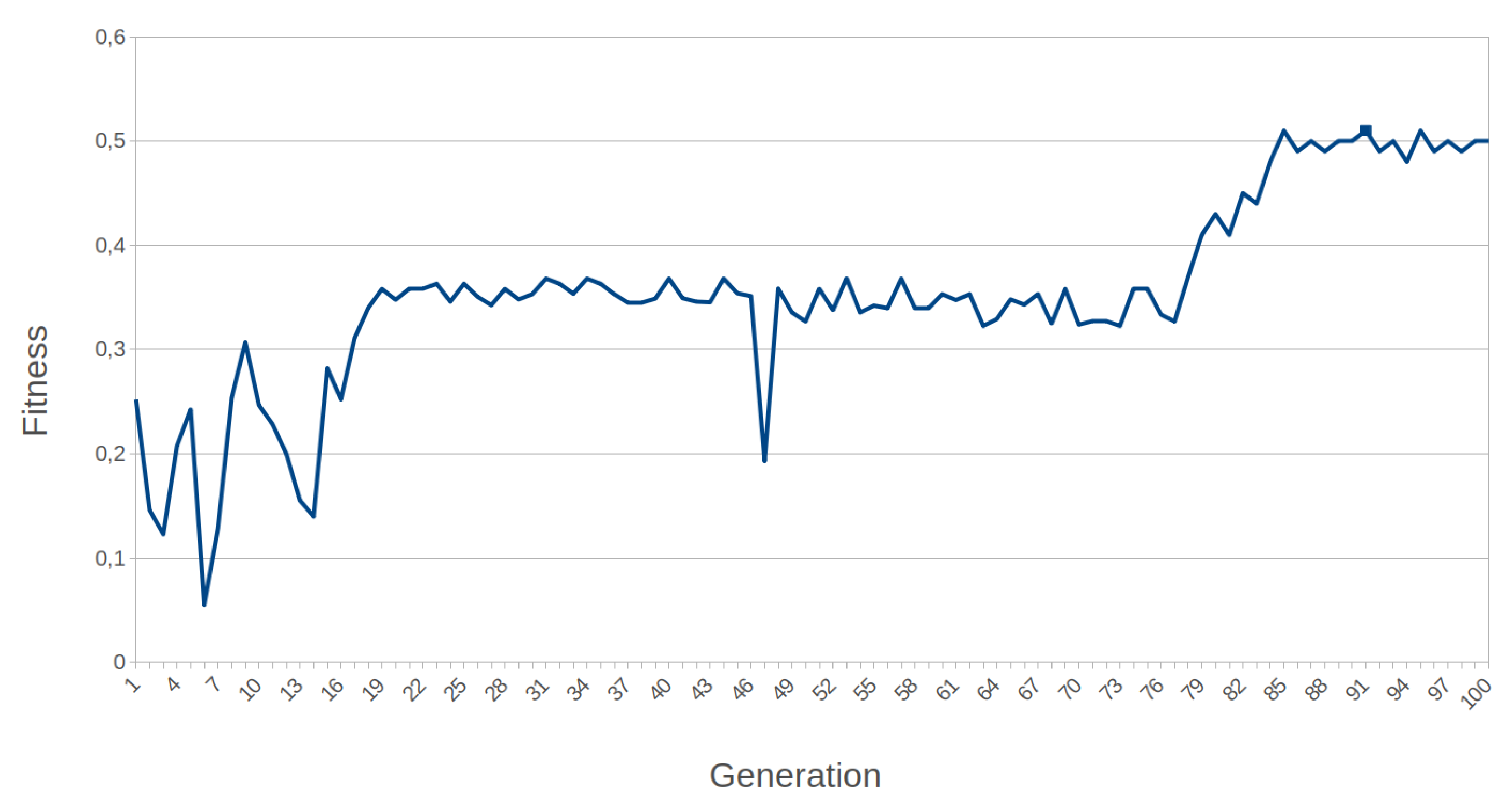

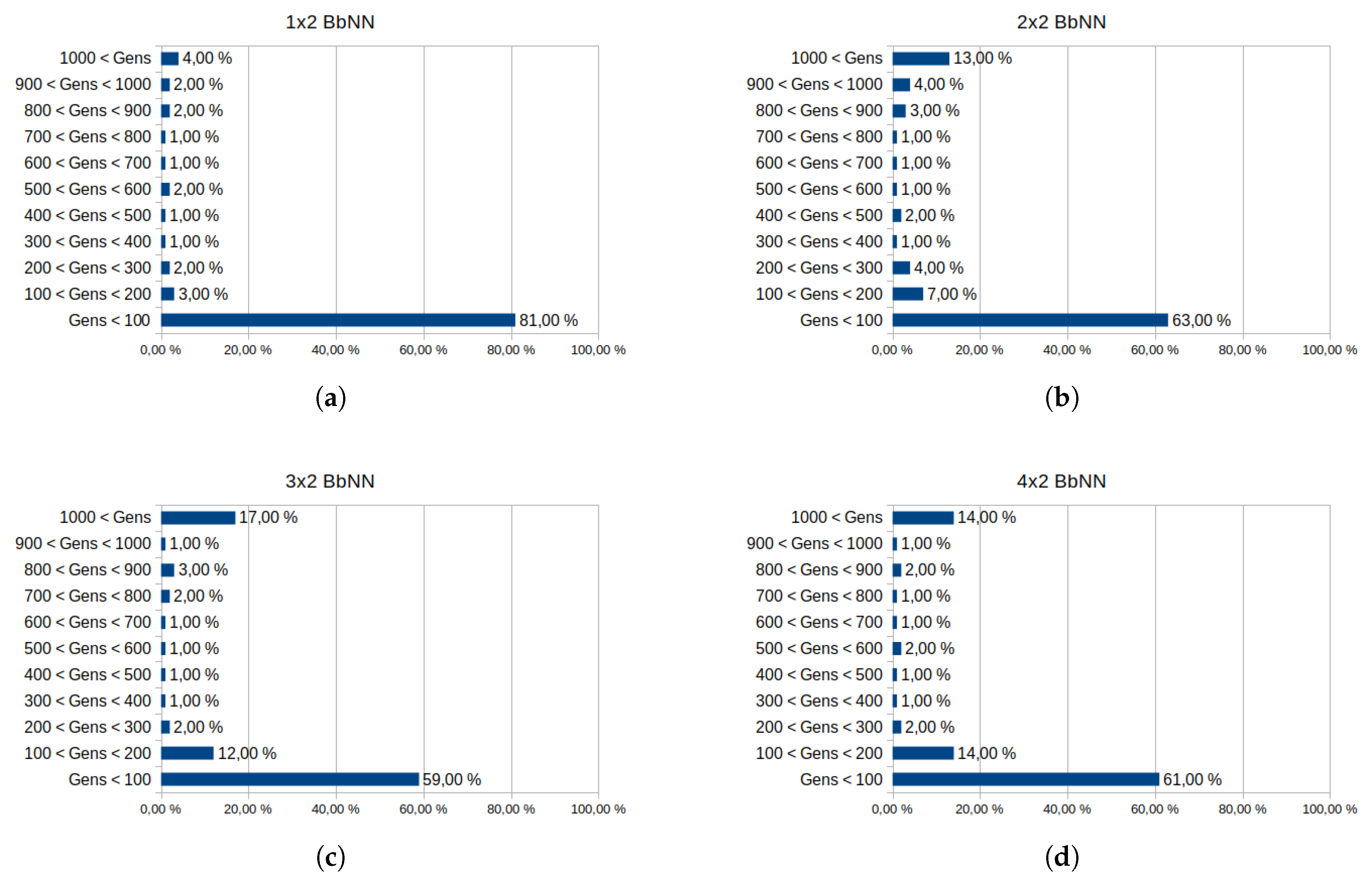

7.2.2. Control Domain: Mountain Car

- Fitness score in the range (−0.12, 0): the steps component in the fitness function is equal to zero since the circuit performs 200 control actions without any success. The car is far from the goal position at the end of the episode.

- Fitness score in the range (0, 0.05): the steps component is also zero because all control actions were consumed, but the circuit can drive the car up close to the desired position.

- Fitness score over 0.05: circuits scored in this range can drive the car to the goal position in fewer than 200 steps. The fewer control actions needed, the higher the score. We consider reaching the top of the hill within 150 steps to be good behavior. This corresponds to a fitness score over 0.4, which is set as the threshold to consider the problem solved.
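The three score ranges and the 0.4 threshold can be captured in a small helper. This sketch only maps a score to the behavior described above; it does not reproduce the fitness function itself. The function name and the handling of the exact boundary values (0, 0.05, and 0.4) are assumptions.

```python
def classify_mountain_car_fitness(score: float) -> str:
    """Map a Mountain Car fitness score to the behavior it indicates."""
    if score > 0.4:
        return "solved: goal reached within roughly 150 steps"
    if score > 0.05:
        return "goal reached in fewer than 200 steps"
    if score > 0.0:
        return "all 200 actions consumed, car ends near the goal"
    return "all 200 actions consumed, car ends far from the goal"

for s in (-0.1, 0.03, 0.2, 0.45):
    print(s, "->", classify_mountain_car_fitness(s))
```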

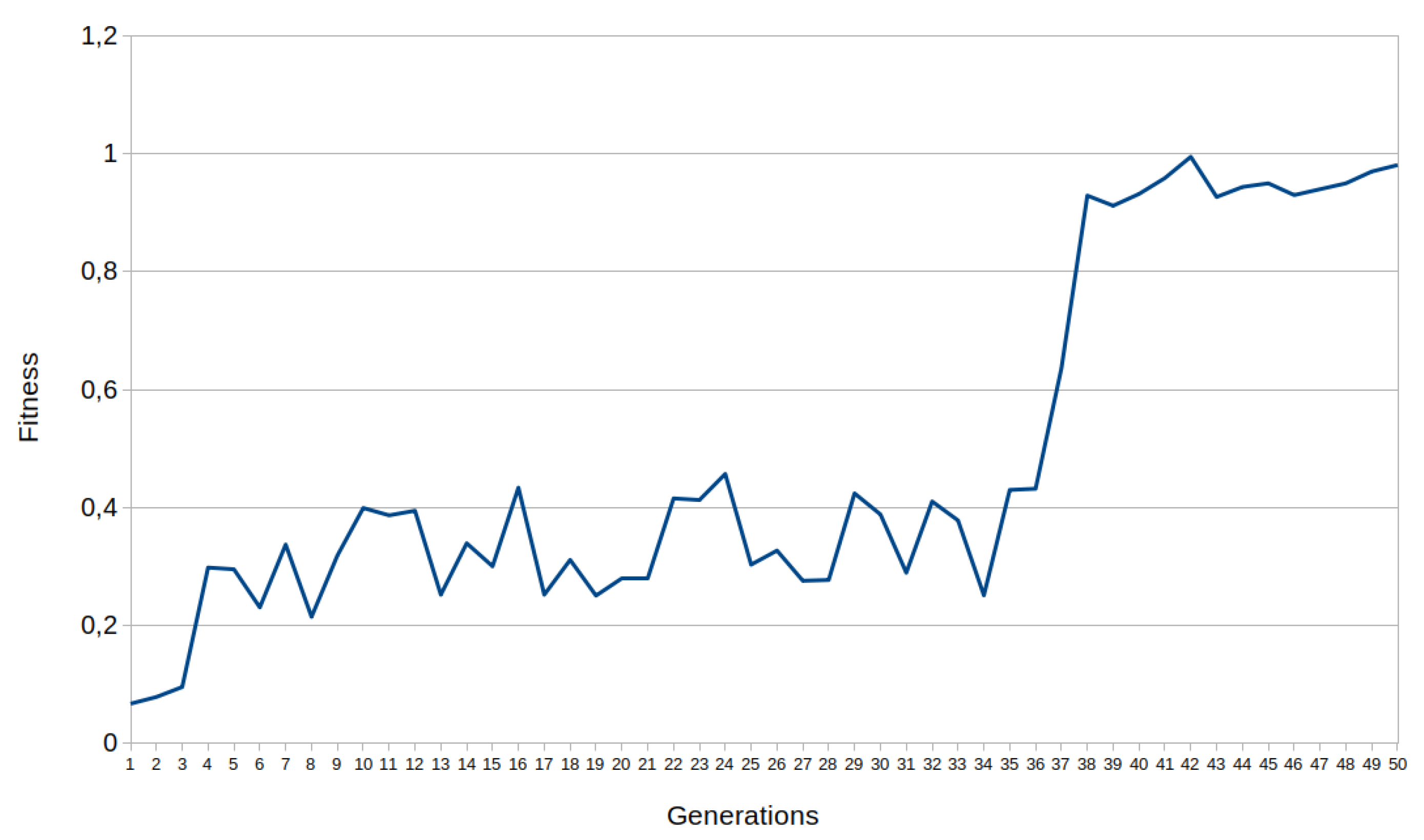

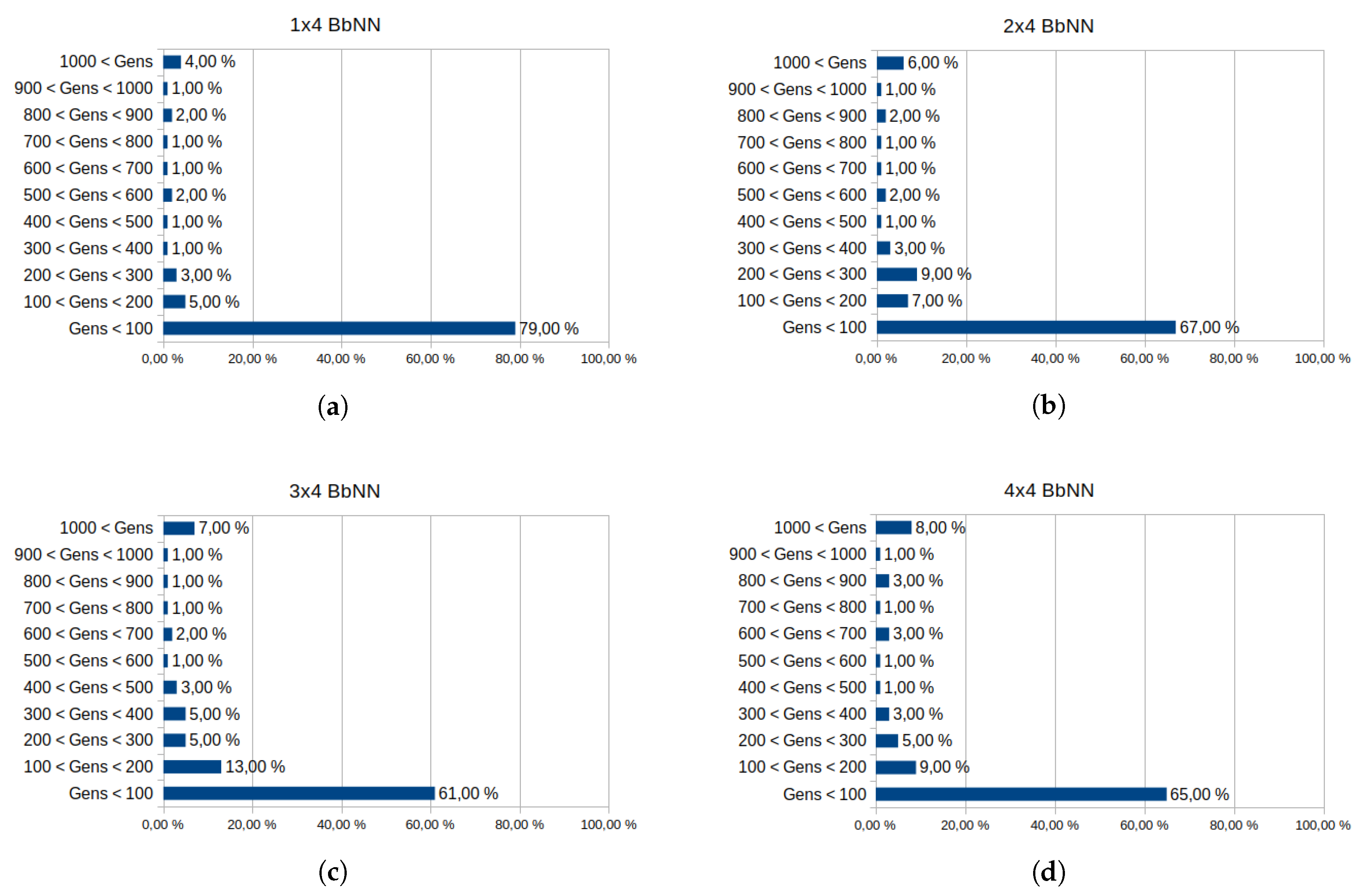

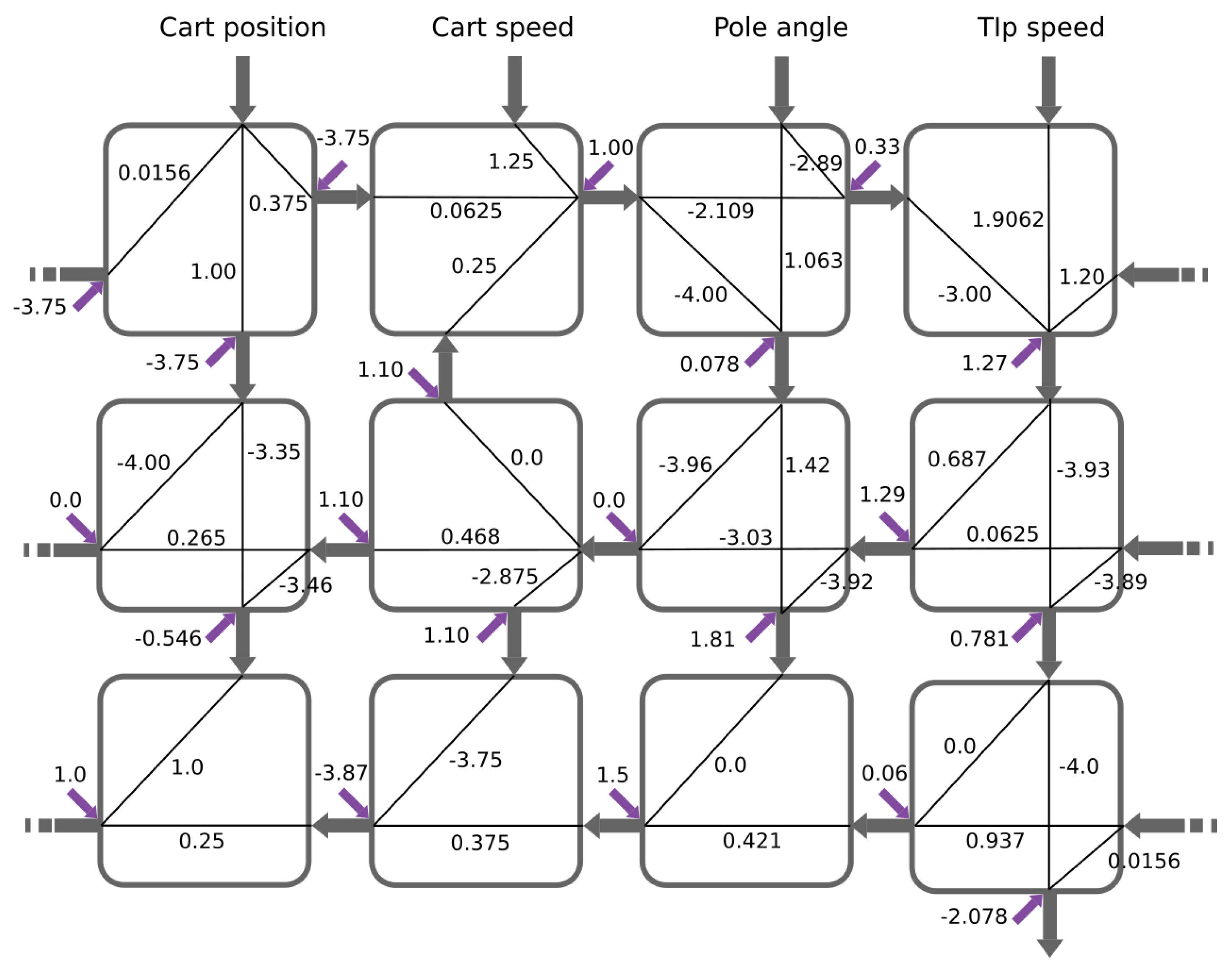

7.2.3. Control Domain: Cart Pole

- : is the parameter in the observation space.

- : is the maximum value in the range of parameter in the observation space.

- : represents the instability of the pole after the control action.

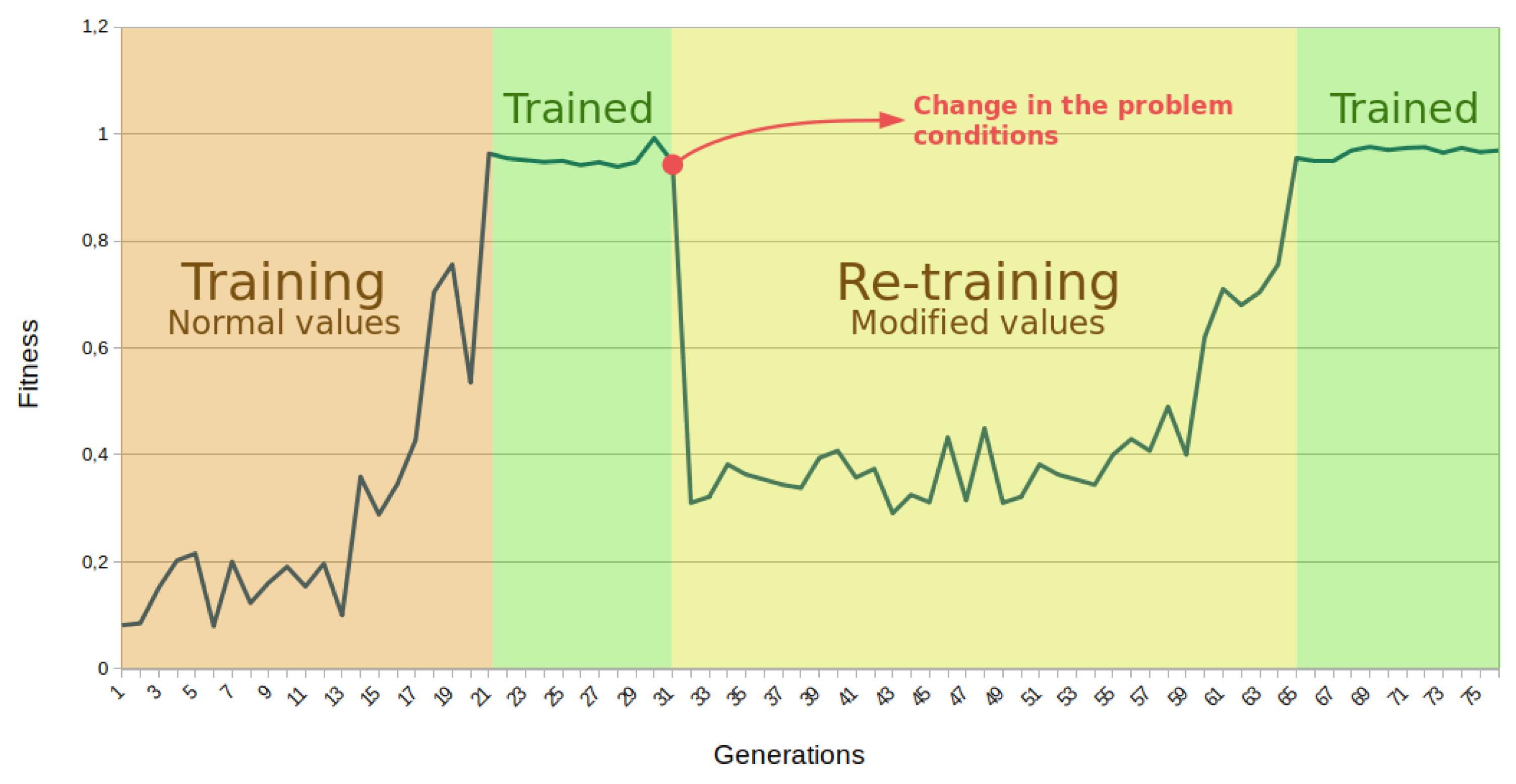

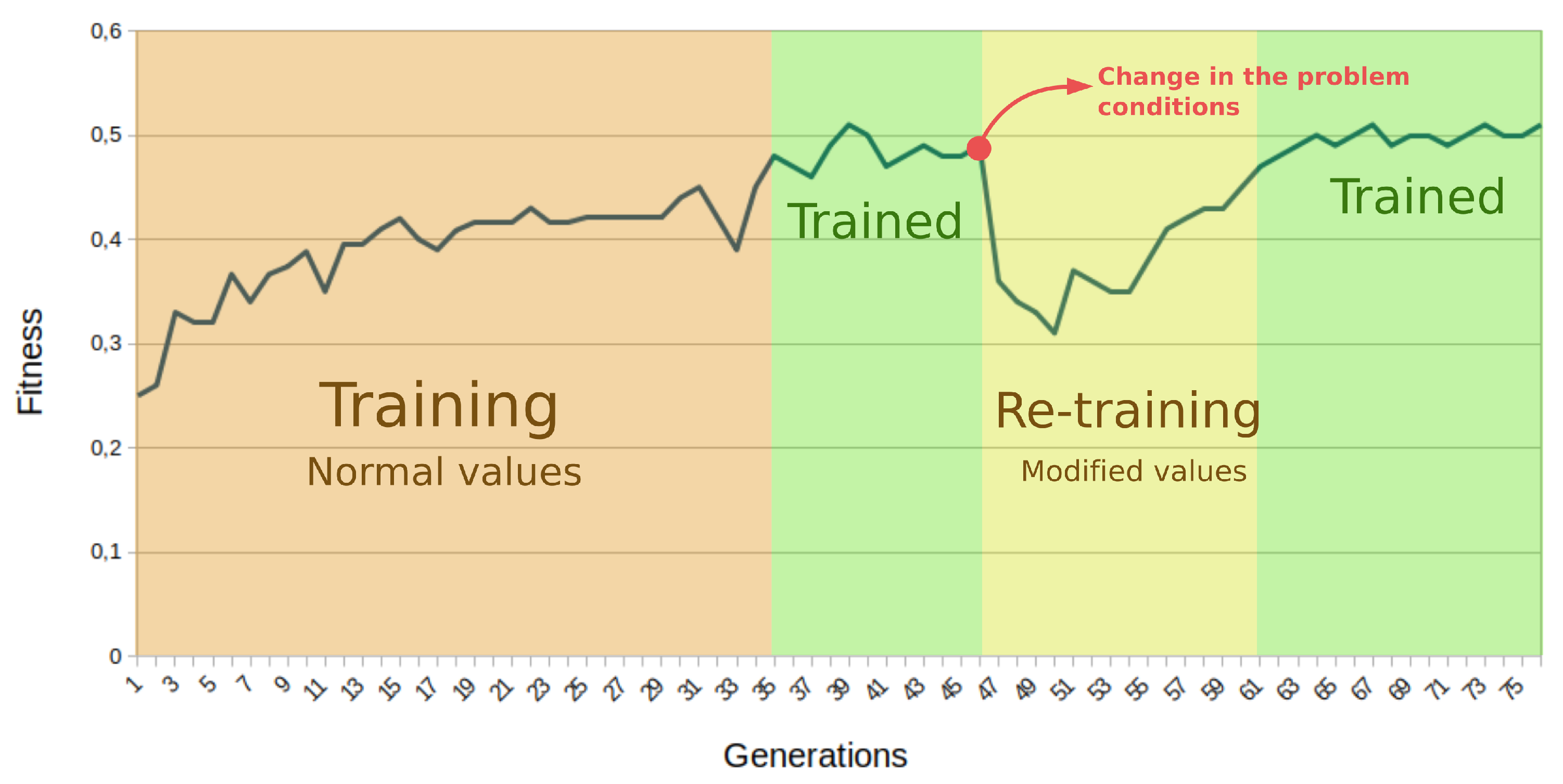

7.3. Online Adaptation for Control in Dynamic Environments

8. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

1. Goldberg, D.E. Genetic Algorithms in Search, Optimization and Machine Learning, 1st ed.; Addison-Wesley Longman Publishing Co., Inc.: Boston, MA, USA, 1989.

2. Alattas, R.J.; Patel, S.; Sobh, T.M. Evolutionary modular robotics: Survey and analysis. J. Intell. Robot. Syst. 2019, 95, 815–828.

3. Kriegman, S.; Blackiston, D.; Levin, M.; Bongard, J. A scalable pipeline for designing reconfigurable organisms. Proc. Natl. Acad. Sci. USA 2020, 117, 1853–1859.

4. Zebulum, R.S.; Pacheco, M.A.; Vellasco, M. Comparison of different evolutionary methodologies applied to electronic filter design. In Proceedings of the 1998 IEEE International Conference on Evolutionary Computation Proceedings, IEEE World Congress on Computational Intelligence (Cat. No. 98TH8360), Anchorage, AK, USA, 4–9 May 1998; pp. 434–439.

5. Hornby, G.; Globus, A.; Linden, D.; Lohn, J. Automated antenna design with evolutionary algorithms. In Space 2006; American Institute of Aeronautics and Astronautics: Reston, VA, USA, 2006; p. 7242.

6. Sekanina, L. Virtual Reconfigurable Circuits for Real-World Applications of Evolvable Hardware. In Proceedings of the 5th International Conference on Evolvable Systems: From Biology to Hardware, Wuhan, China, 21–23 September 2007; pp. 186–197.

7. Stanley, K.O.; Clune, J.; Lehman, J.; Miikkulainen, R. Designing neural networks through neuroevolution. Nat. Mach. Intell. 2019, 1, 24–35.

8. Stanley, K.O.; Miikkulainen, R. Evolving Neural Networks through Augmenting Topologies. Evol. Comput. 2002, 10, 99–127.

9. Stanley, K.O.; D’Ambrosio, D.B.; Gauci, J. A Hypercube-Based Encoding for Evolving Large-Scale Neural Networks. Artif. Life 2009, 15, 185–212.

10. Such, F.P.; Madhavan, V.; Conti, E.; Lehman, J.; Stanley, K.O.; Clune, J. Deep neuroevolution: Genetic algorithms are a competitive alternative for training deep neural networks for reinforcement learning. arXiv 2017, arXiv:1712.06567.

11. Moon, S.W.; Kong, S.G. Block-based neural networks. IEEE Trans. Neural Netw. 2001, 12, 307–317.

12. Zamacola, R.; Martínez, A.G.; Mora, J.; Otero, A.; de La Torre, E. IMPRESS: Automated Tool for the Implementation of Highly Flexible Partial Reconfigurable Systems with Xilinx Vivado. In Proceedings of the 2018 International Conference on ReConFigurable Computing and FPGAs (ReConFig), Cancun, Mexico, 3–5 December 2018; pp. 1–8.

13. Zamacola, R.; García Martínez, A.; Mora, J.; Otero, A.; de la Torre, E. Automated Tool and Runtime Support for Fine-Grain Reconfiguration in Highly Flexible Reconfigurable Systems. In Proceedings of the 2019 IEEE 27th Annual International Symposium on Field-Programmable Custom Computing Machines (FCCM), San Diego, CA, USA, 28 April–1 May 2019; p. 307.

14. Brockman, G.; Cheung, V.; Pettersson, L.; Schneider, J.; Schulman, J.; Tang, J.; Zaremba, W. OpenAI Gym. arXiv 2016, arXiv:1606.01540.

15. Yanling, Z.; Bimin, D.; Zhanrong, W. Analysis and study of perceptron to solve XOR problem. In Proceedings of the 2nd International Workshop on Autonomous Decentralized System, Beijing, China, 7 November 2002; pp. 168–173.

16. Merchant, S.; Peterson, G.D.; Park, S.K.; Kong, S.G. FPGA Implementation of Evolvable Block-based Neural Networks. In Proceedings of the 2006 IEEE International Conference on Evolutionary Computation, Vancouver, BC, Canada, 16–21 July 2006; pp. 3129–3136.

17. Merchant, S.; Peterson, G.; Kong, S. Intrinsic Embedded Hardware Evolution of Block-based Neural Networks. In Proceedings of the 2006 International Conference on Engineering of Reconfigurable Systems & Algorithms, ERSA 2006, Las Vegas, NV, USA, 26–29 June 2006; pp. 211–214.

18. Lee, K.; Hamagami, T. Performance Oriented Block-Based Neural Network Model by Parallelized Neighbor’s Communication. In Proceedings of the 2018 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Miyazaki, Japan, 7–10 October 2018; pp. 1623–1628.

19. Merchant, S.; Peterson, G. Evolvable Block-Based Neural Network Design for Applications in Dynamic Environments. VLSI Design 2010, 2010, 251210.

20. Jewajinda, Y.; Chongstitvatana, P. FPGA-based online-learning using parallel genetic algorithm and neural network for ECG signal classification. In Proceedings of the ECTI-CON2010: The 2010 ECTI International Confernce on Electrical Engineering/Electronics, Computer, Telecommunications and Information Technology, Chiang Mai, Thailand, 19–21 May 2010; pp. 1050–1054.

21. Nambiar, V.P.; Khalil-Hani, M.; Sahnoun, R.; Marsono, M. Hardware implementation of evolvable block-based neural networks utilizing a cost efficient sigmoid-like activation function. Neurocomputing 2014, 140, 228–241.

22. Jiang, W.; Kong, S. A Least-Squares Learning for Block-based Neural Networks. Adv. Neural Netw. A Suppl. (DCDIS) 2007, 14, 242–247.

23. Nambiar, V.P.; Khalil-Hani, M.; Marsono, M.; Sia, C. Optimization of structure and system latency in evolvable block-based neural networks using genetic algorithm. Neurocomputing 2014, 145, 285–302.

24. Kong, S. Time series prediction with evolvable block-based neural networks. In Proceedings of the 2004 IEEE International Joint Conference on Neural Networks, Budapest, Hungary, 25–29 July 2004; Volume 2, pp. 1579–1583.

25. Jiang, W.; Kong, S.G.; Peterson, G.D. ECG signal classification using block-based neural networks. In Proceedings of the 2005 IEEE International Joint Conference on Neural Networks, Montreal, QC, Canada, 31 July–4 August 2005; Volume 1, pp. 326–331.

26. Nambiar, V.P.; Khalil-Hani, M.; Marsono, M.N. Evolvable Block-based Neural Networks for real-time classification of heart arrhythmia From ECG signals. In Proceedings of the 2012 IEEE-EMBS Conference on Biomedical Engineering and Sciences, Langkawi, Malaysia, 17–19 December 2012; pp. 866–871.

27. Nambiar, V.P.; Khalil-Hani, M.; Sia, C.W.; Marsono, M.N. Evolvable Block-based Neural Networks for classification of driver drowsiness based on heart rate variability. In Proceedings of the 2012 IEEE International Conference on Circuits and Systems (ICCAS), Kuala Lumpur, Malaysia, 3–4 October 2012; pp. 156–161.

28. San, P.; Ling, S.H.; Nguyen, H. Block based neural network for hypoglycemia detection. In Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Boston, MA, USA, 30 August–3 September 2011; pp. 5666–5669.

29. Karaköse, M.; Akin, E. Dynamical Fuzzy Control with Block Based Neural Network; Technical Report; Department of Computer Engineering, Fırat University: Elazıǧ, Turkey, 2006.

30. Tran, Q.A.; Jiang, F.; Ha, Q.M. Evolving Block-Based Neural Network and Field Programmable Gate Arrays for Host-Based Intrusion Detection System. In Proceedings of the 2012 Fourth International Conference on Knowledge and Systems Engineering, Danang, Vietnam, 17–19 August 2012; pp. 86–92.

31. Samajdar, A.; Mannan, P.; Garg, K.; Krishna, T. GeneSys: Enabling Continuous Learning through Neural Network Evolution in Hardware. In Proceedings of the 2018 51st Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Fukuoka, Japan, 20–24 October 2018.

32. Upegui, A.; Pena-Reyes, C.A.; Sanchez, E. An FPGA platform for on-line topology exploration of spiking neural networks. Microprocess. Microsyst. 2005, 29, 211–223.

33. Miller, J.F. Cartesian Genetic Programming. Natural Computing Series; Springer: Berlin/Heidelberg, Germany, 2011.

34. Gallego, A.; Mora, J.; Otero, A.; de la Torre, E.; Riesgo, T. A scalable evolvable hardware processing array. In Proceedings of the 2013 International Conference on Reconfigurable Computing and FPGAs (ReConFig), Cancun, Mexico, 9–11 December 2013; pp. 1–7.

35. Miller, J.; Job, D.; Vassilev, V. Principles in the Evolutionary Design of Digital Circuits—Part I. Genet. Program. Evolvable Mach. 2000, 1, 7–35.

36. Torroja, Y.; Riesgo, T.; de la Torre, E.; Uceda, J. Design for reusability: Generic and configurable designs. In Proceedings of System Modeling and Code Reusability; Springer: Boston, MA, USA, 1997; pp. 11–21.

37. Jiang, Y.; Pattichis, M.S. A dynamically reconfigurable architecture system for time-varying image constraints (DRASTIC) for motion JPEG. J. Real-Time Image Process. 2014, 14, 395–411.

38. Jiang, Y.; Pattichis, M. A dynamically reconfigurable deblocking filter for H.264/AVC codec. In Proceedings of the 2012 Conference Record of the Forty Sixth Asilomar Conference on Signals, Systems and Computers (ASILOMAR), Pacific Grove, CA, USA, 4–7 November 2012; pp. 2036–2040.

39. Jacoby, A.; Llamocca, D. Dynamic Dual Fixed-Point CORDIC Implementation. In Proceedings of the 2017 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), Orlando, FL, USA, 29 May–2 June 2017; pp. 235–240.

40. Khraisha, R.; Lee, J. A Bit-Rate Aware Scalable H.264/AVC Deblocking Filter Using Dynamic Partial Reconfiguration. Signal Process. Syst. 2012, 66, 225–234.

41. Koch, D.; Torresen, J.; Beckhoff, C.; Ziener, D.; Dennl, C.; Breuer, V.; Teich, J.; Feilen, M.; Stechele, W. Partial reconfiguration on FPGAs in practice—Tools and applications. In Proceedings of the Architecture of Computing Systems (ARCS 2012), Munich, Germany, 28 February–2 March 2012; pp. 1–12.

42. Hannachi, M.; Rabah, H.; Ben Abdelali, A.; Mtibaa, A. Dynamic reconfigurable architecture for adaptive DCT implementation. In Proceedings of the 2016 2nd International Conference on Advanced Technologies for Signal and Image Processing (ATSIP), Monastir, Tunisia, 21–23 March 2016; pp. 189–193.

43. Huang, J.; Lee, J. A Self-Reconfigurable Platform for Scalable DCT Computation Using Compressed Partial Bitstreams and BlockRAM Prefetching. In Proceedings of the 2009 IEEE Computer Society Annual Symposium on VLSI, Tampa, FL, USA, 13–15 May 2009; pp. 67–72.

44. Sudarsanam, A.; Barnes, R.; Carver, J.; Kallam, R.; Dasu, A. Dynamically reconfigurable systolic array accelerators: A case study with extended Kalman filter and discrete wavelet transform algorithms. IET Comput. Digit. Tech. 2010, 4, 126–142.

45. Cervero, T.; Otero, A.; Lopez, S.; de la Torre, E.; Marrero Callico, G.; Riesgo, T.; Sarmiento, R. A scalable H.264/AVC deblocking filter architecture. J. Real-Time Image Process. 2016, 12, 81–105.

46. Otero, A.; de la Torre, E.; Riesgo, T. Dreams: A tool for the design of dynamically reconfigurable embedded and modular systems. In Proceedings of the 2012 International Conference on Reconfigurable Computing and FPGAs, Cancun, Mexico, 5–7 December 2012; pp. 1–8.

47. Kulkarni, A.; Stroobandt, D. How to efficiently reconfigure tunable lookup tables for dynamic circuit specialization. Int. J. Reconfig. Comput. 2016, 2016, 5340318.

48. Mora, J.; de la Torre, E. Accelerating the evolution of a systolic array-based evolvable hardware system. Microprocess. Microsyst. 2018, 56, 144–156.

49. Jewajinda, Y. An Adaptive Hardware Classifier in FPGA based-on a Cellular Compact Genetic Algorithm and Block-based Neural Network. In Proceedings of the 2008 International Symposium on Communications and Information Technologies, Lao, China, 21–23 October 2008; pp. 658–663.

50. Hansen, S.G.; Koch, D.; Torresen, J. High Speed Partial Run-Time Reconfiguration Using Enhanced ICAP Hard Macro. In Proceedings of the 2011 IEEE International Symposium on Parallel and Distributed Processing Workshops and Phd Forum, Shanghai, China, 16–20 May 2011; pp. 174–180.

| SelX | Input | SelW | Weight | SelB | Bias | SelY | Output |
|---|---|---|---|---|---|---|---|
| 00 | | 00 | | 0 | | 0001 | |
| 01 | | 01 | | 1 | | 0010 | |
| 10 | | 10 | | - | - | 0100 | |
| 11 | | 11 | | - | - | 1000 | |
| - | - | - | - | - | - | 0000 | Reset acc. |

| Parameter | Type | Value | Functionality |
|---|---|---|---|
| TargetFitness | Float (0, 1.0) | Application dependent | Desired fitness |
| Pop-size | Int | 15 | Number of chromosomes in the population |
| N-offspring | Int | 10 | Number of mutated copies from one chromosome |
| MaxAge | Int | 7 | Maximum number of stalled generations |
| ExtinctionFreq | Int | 5 | Generations between extinctions |
| MutationRate | Float (0, 1.0) | 0.3 | Percentage of data altered in the mutation |
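To make the roles of these parameters concrete, the following is a generic sketch of a mutation-only evolutionary loop that uses the values from the table. It is not Algorithm 1 of the paper: the chromosome encoding (a list of bytes), the replacement policy, the extinction rule (keep the best individual and re-randomize the rest), and the toy fitness function are all assumptions made for illustration.

```python
import random

POP_SIZE = 15          # Pop-size
N_OFFSPRING = 10       # N-offspring
MAX_AGE = 7            # MaxAge
EXTINCTION_FREQ = 5    # ExtinctionFreq
MUTATION_RATE = 0.3    # MutationRate

def random_chromosome(rng, length):
    return [rng.randrange(256) for _ in range(length)]

def mutate(rng, chromosome, rate=MUTATION_RATE):
    """Return a copy with a fraction `rate` of its genes replaced."""
    child = list(chromosome)
    n_altered = max(1, round(rate * len(child)))
    for i in rng.sample(range(len(child)), n_altered):
        child[i] = rng.randrange(256)
    return child

def evolve(fitness, length, target_fitness, max_generations=200, seed=0):
    rng = random.Random(seed)
    pop = []                                  # individuals: [chromosome, fitness, age]
    for _ in range(POP_SIZE):
        c = random_chromosome(rng, length)
        pop.append([c, fitness(c), 0])
    top = max(pop, key=lambda ind: ind[1])
    best = [list(top[0]), top[1]]
    for gen in range(1, max_generations + 1):
        for ind in pop:
            offspring = [mutate(rng, ind[0]) for _ in range(N_OFFSPRING)]
            scored = [(fitness(c), c) for c in offspring]
            child_fit, child = max(scored, key=lambda fc: fc[0])
            if child_fit > ind[1]:
                ind[:] = [child, child_fit, 0]   # improvement: reset the age
            else:
                ind[2] += 1                      # one more stalled generation
                if ind[2] > MAX_AGE:             # stalled too long: restart it
                    c = random_chromosome(rng, length)
                    ind[:] = [c, fitness(c), 0]
            if ind[1] > best[1]:
                best = [list(ind[0]), ind[1]]
        if best[1] >= target_fitness:
            break
        if gen % EXTINCTION_FREQ == 0:           # extinction: keep only the best
            pop = [[list(best[0]), best[1], 0]]
            while len(pop) < POP_SIZE:
                c = random_chromosome(rng, length)
                pop.append([c, fitness(c), 0])
    return best[0], best[1]

def toy_fitness(chromosome):
    """Toy objective in [0, 1]: highest when every gene equals 128."""
    return 1.0 - sum(abs(g - 128) for g in chromosome) / (128 * len(chromosome))

chromosome, score = evolve(toy_fitness, length=8, target_fitness=0.9,
                           max_generations=50, seed=1)
print(score)
```

In a hardware-in-the-loop setting, `fitness` would be the step that configures the BbNN with the candidate chromosome and evaluates it on the target problem.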

| Resource Type | Static System | Individual PE |
|---|---|---|
| LUTs | 7966 | 473 (95.84%) * |
| FFs | 7939 | 163 (16.98%) * |
| DSPs | 0 | 1 (25%) * |
| BRAMs | 2 | 0 |

| Work | Platform | Logic Elements * | Memory Elements | DSP Elements | Activation Function |
|---|---|---|---|---|---|
| Proposed architecture | Zynq XC7Z020 | 473 | 163 FFs | 1 | Sigmoid-no DSP |
| Nambiar [21] | Stratix III | 231 | 276 FFs | 2 | Tanh-piecewise |
| Jewajinda [49] | Virtex V | 263 | 341 FFs | 1 | Sigmoid-LUT based |
| Merchant [19] | Virtex-II Pro | 338 | 4 BRAMs | 1 | Sigmoid-LUT based |
| Lee and Hamagami [18] ** | Stratix IV | 186 | 40 FFs | 8 | Linear |

| Task | Inference | Training |
|---|---|---|
| BbNN computation | <0.63 µs | <0.63 µs |
| Input data transfer | 6.1 µs | 6.1 µs |
| Output data transfer | 4.3 µs | 4.3 µs |
| BbNN configuration | - | 41.7 µs |
| Fitness computation | - | Application dependent |
| Throughput | 90.66 KOPS | Application dependent |
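The inference throughput in the table can be cross-checked from the three per-inference stage times. The short calculation below assumes that the stages run back to back and that the times are in microseconds; under that reading, the sum reproduces the stated 90.66 KOPS. The constant names are illustrative.

```python
# Per-inference stage times from the table, read as microseconds (assumption).
COMPUTATION_US = 0.63        # upper bound, given as "<0.63"
INPUT_TRANSFER_US = 6.1
OUTPUT_TRANSFER_US = 4.3

total_us = COMPUTATION_US + INPUT_TRANSFER_US + OUTPUT_TRANSFER_US
throughput_kops = 1.0 / (total_us * 1e-6) / 1e3

print(f"{total_us:.2f} us per inference -> {throughput_kops:.2f} KOPS")
# prints: 11.03 us per inference -> 90.66 KOPS
```

The data transfers account for most of the 11.03 µs, so the BbNN computation itself is not the limiting stage in this configuration.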

| BbNN Size | BbNN Computation (µs) | Input Data, Fine-Grain (µs) | Input Data, AXI (µs) | Output Data, Fine-Grain (µs) | Output Data, AXI (µs) | BbNN Configuration, Fine-Grain (µs) | BbNN Configuration, AXI (µs) |
|---|---|---|---|---|---|---|---|
| 1 × 3 BbNN | <0.21 | 6.1 | 4.5 | - | 4.3 | 15.8 | 19.7 |
| 3 × 3 BbNN | <0.63 | 6.1 | 4.5 | - | 4.3 | 41.7 | 58.9 |
| 3 × 5 BbNN | <1.05 | 6.6 | 7.7 | - | 7 | 56 | 94.1 |

| Performance Indicator | 2 × 2 BbNN | 3 × 2 BbNN | 4 × 2 BbNN | 5 × 2 BbNN |
|---|---|---|---|---|
| Best fitness | 0.95 | 0.97 | 0.98 | 0.95 |
| Average tested configurations | 19,954 | 13,434 | 13,036 | 20,090 |
| Average generations | 133 | 91 | 87 | 140 |

| Performance Indicator | 1 × 2 BbNN | 2 × 2 BbNN | 3 × 2 BbNN | 4 × 2 BbNN |
|---|---|---|---|---|
| Best fitness | 0.41 | 0.46 | 0.45 | 0.43 |
| Average tested configurations | 323 | 1156 | 3899 | 984 |
| Average generations | 3 | 8 | 26 | 7 |

| Performance Indicator | 1 × 4 BbNN | 2 × 4 BbNN | 3 × 4 BbNN | 4 × 4 BbNN |
|---|---|---|---|---|
| Best fitness | 0.977 | 0.973 | 0.970 | 0.978 |
| Average tested configurations | 518 | 2101 | 8651 | 2051 |
| Average generations | 4 | 14 | 58 | 14 |

| Problem | Parameter | Normal Value | Modified Value |
|---|---|---|---|
| Cart Pole | Gravity | 9.8 m/s² | 20.0 m/s² |
| Cart Pole | Pole length | 0.5 m | 0.1 m |
| Cart Pole | Cart mass | 1.0 kg | 1.5 kg |
| Mountain Car | Engine power | 0.001 | 0.0008 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

García, A.; Zamacola, R.; Otero, A.; de la Torre, E. A Dynamically Reconfigurable BbNN Architecture for Scalable Neuroevolution in Hardware. Electronics 2020, 9, 803. https://doi.org/10.3390/electronics9050803
