1. Introduction

Future artificial intelligence (AI) assistants must be more than question-and-answer machines. Computers need to express and recognize affect and emotions because affective computers can help reduce user frustration during interactions and enable smooth communication between users and computers [1]. Computers with unnatural expressions and reactions could hinder human–computer interaction (HCI) and frustrate users. For instance, users who expect a conversational agent to deliver factual information calmly may become frustrated if the agent instead speaks with intense emotion. Therefore, feedback that matches users’ perceptions of natural human–computer interaction needs to be designed.

Most studies in the field of affective computing have applied emotion to convey an agent’s internal states naturally. However, this research applied the concept of personality to implement more natural feedback for a conversational agent for the following reasons: (1) The concept of personality can allow individualized and complex features to be conveyed simultaneously; (2) it is possible to predict users’ perceptions by giving a personality to conversational agents; and (3) with personality, agents can implement consistent patterns of reactions rather than simple and immediate reactions.

Personalities are not innate to computer systems or interfaces; thus, humans must program them. In addition, personality cannot be defined in a single sentence; it is a combination of abilities, beliefs, preferences, dispositions, behaviors, and temperamental features with diverse behavioral and emotional attributes [2,3]. Therefore, the study poses the following general research question:

RQ. How can conversational agents effectively express personalities?

Personalities can be expressed through two factors: (1) behavioral features (gestures, movements) and (2) verbal traits (voice, speech style). People with different personalities demonstrate different amplitudes and speeds of gestures and movements. Extroverts demonstrate faster and broader movements than introverts [4]. In addition, extroverts tend to show more reactive movements and body gestures than introverts [5]. Personality and verbal traits are also closely interrelated. Extroverts tend to express more emotionality with positive emotions and use fewer formal expressions with agreement and gratitude [6]. Extroverts also leave shorter silences and use more positive words and informal expressions than introverts, whereas introverts use more abstract and formal words than extroverts [2,7].

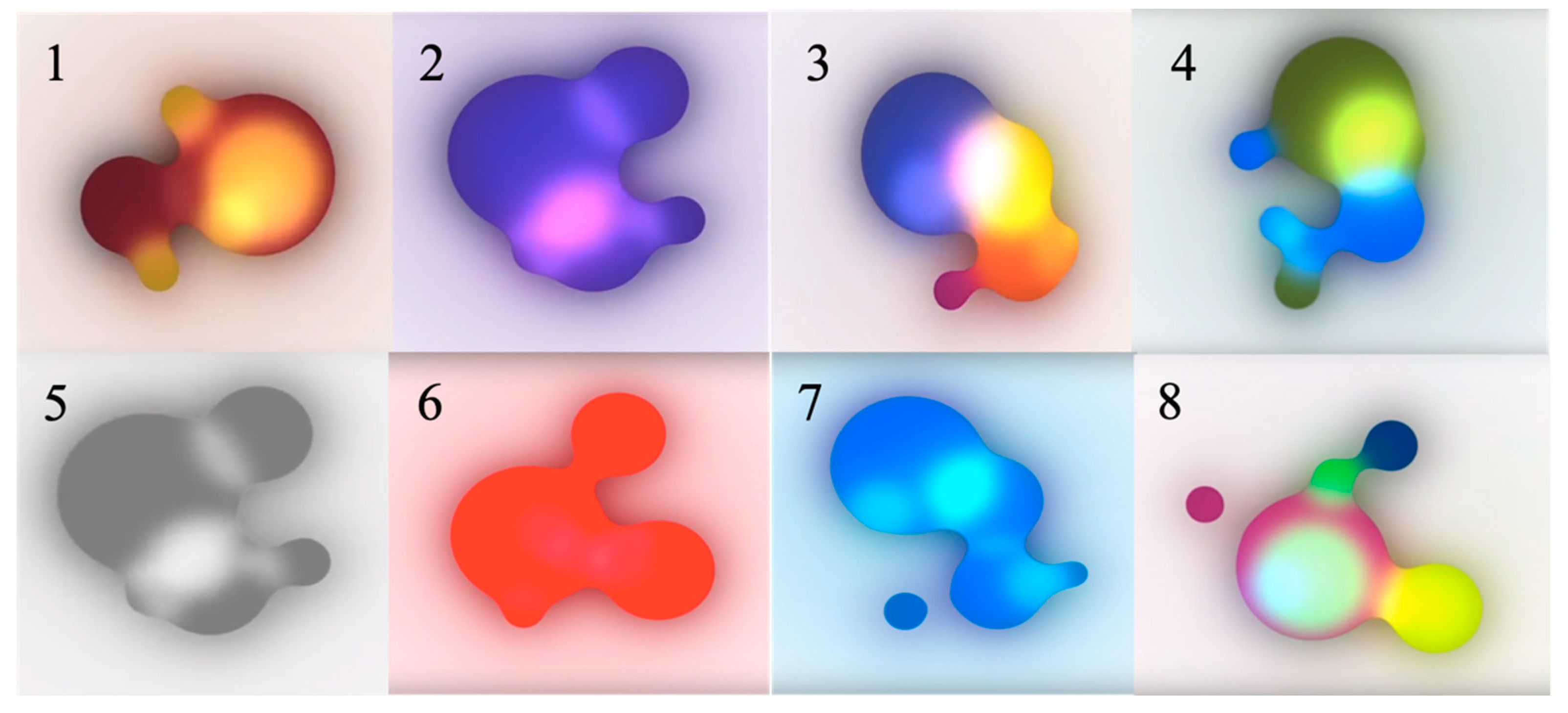

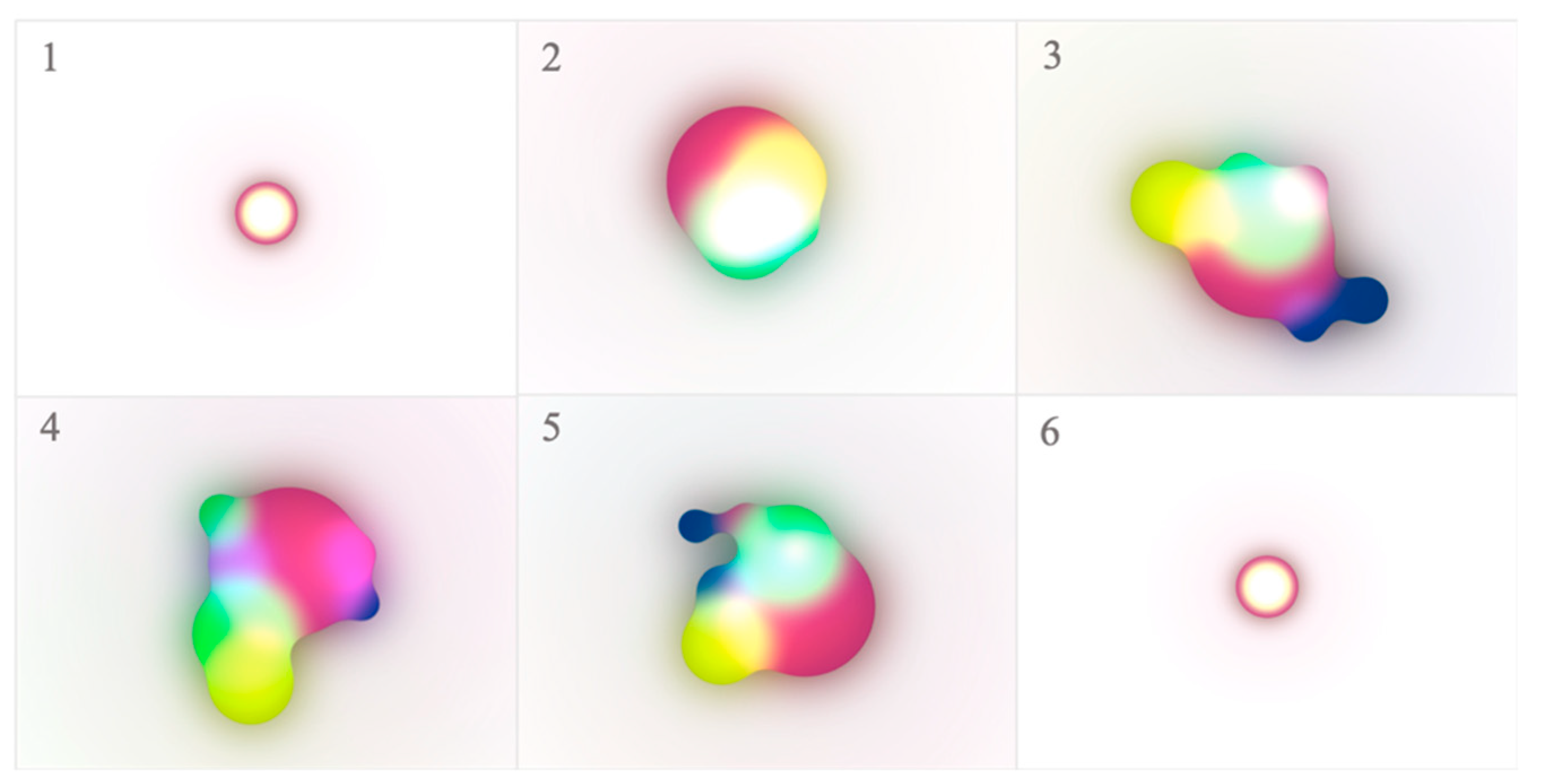

Gestures are difficult to implement with current conversational agents because most of them are designed as AI speakers. Existing conversational agents on the market, including Amazon Alexa and Google Home, cannot perform gestures. Instead, AI speakers deliver simple visual feedback through a smart display in response to voice commands. Given this form factor, visual feedback was chosen as the personality expression element. Because people with different personalities gesture at different speeds, different lighting speeds could likewise be perceived as expressing different personalities; for example, quick lighting may be perceived as more active than slow lighting. Therefore, the study poses the following research question:

RQ 1. Can different visual feedback be perceived as different personalities?
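As a minimal sketch of how lighting speed might be parameterized on a device without a body, the Python snippet below models a light pulse whose period sets how "quick" the feedback appears. The period values, the raised-cosine waveform, and the names `PULSE_PERIOD_S`, `pulse_brightness`, and `frames` are hypothetical assumptions for illustration; the study does not specify how its motions were timed or rendered.

```python
import math

# Hypothetical pulse periods in seconds (assumption): the study does not
# report the exact timings of its fast, moderate, and slow motions.
PULSE_PERIOD_S = {"fast": 0.5, "moderate": 1.5, "slow": 3.0}


def pulse_brightness(t: float, speed: str) -> float:
    """Brightness in [0, 1] at time t (seconds) for a repeating light pulse.

    A shorter period makes the light brighten and dim more often,
    which corresponds to the "quick lighting" condition in the text.
    """
    period = PULSE_PERIOD_S[speed]
    # Raised cosine: 0 at the start of each cycle, 1 at mid-cycle.
    return 0.5 * (1.0 - math.cos(2.0 * math.pi * t / period))


def frames(speed: str, duration_s: float, fps: int = 30) -> list[float]:
    """Sample the pulse at a fixed frame rate, e.g. to drive LED intensity."""
    n = int(duration_s * fps)
    return [pulse_brightness(i / fps, speed) for i in range(n)]
```

For example, over three seconds `frames("fast", 3.0)` covers six brightness cycles while `frames("slow", 3.0)` covers one; varying only the period keeps color independent of motion, which mirrors how the study treated the two as separate design factors.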

Most current AI speakers use a single voice with a consistent speech style. Because extroverts and introverts demonstrate different verbal traits, different verbal traits could likewise be perceived as different personalities. Therefore, the study poses the second research question:

RQ 2. Can different verbal cues be perceived as different personalities?

Unlike previous studies, the current study applied a wider range of personalities rather than focusing on expressing only two contrasting personalities, such as extroversion and introversion. In addition, personality was expressed with diverse factors, including visual feedback with different colors and motions and five verbal cues, rather than focusing on a single element.

5. Discussion

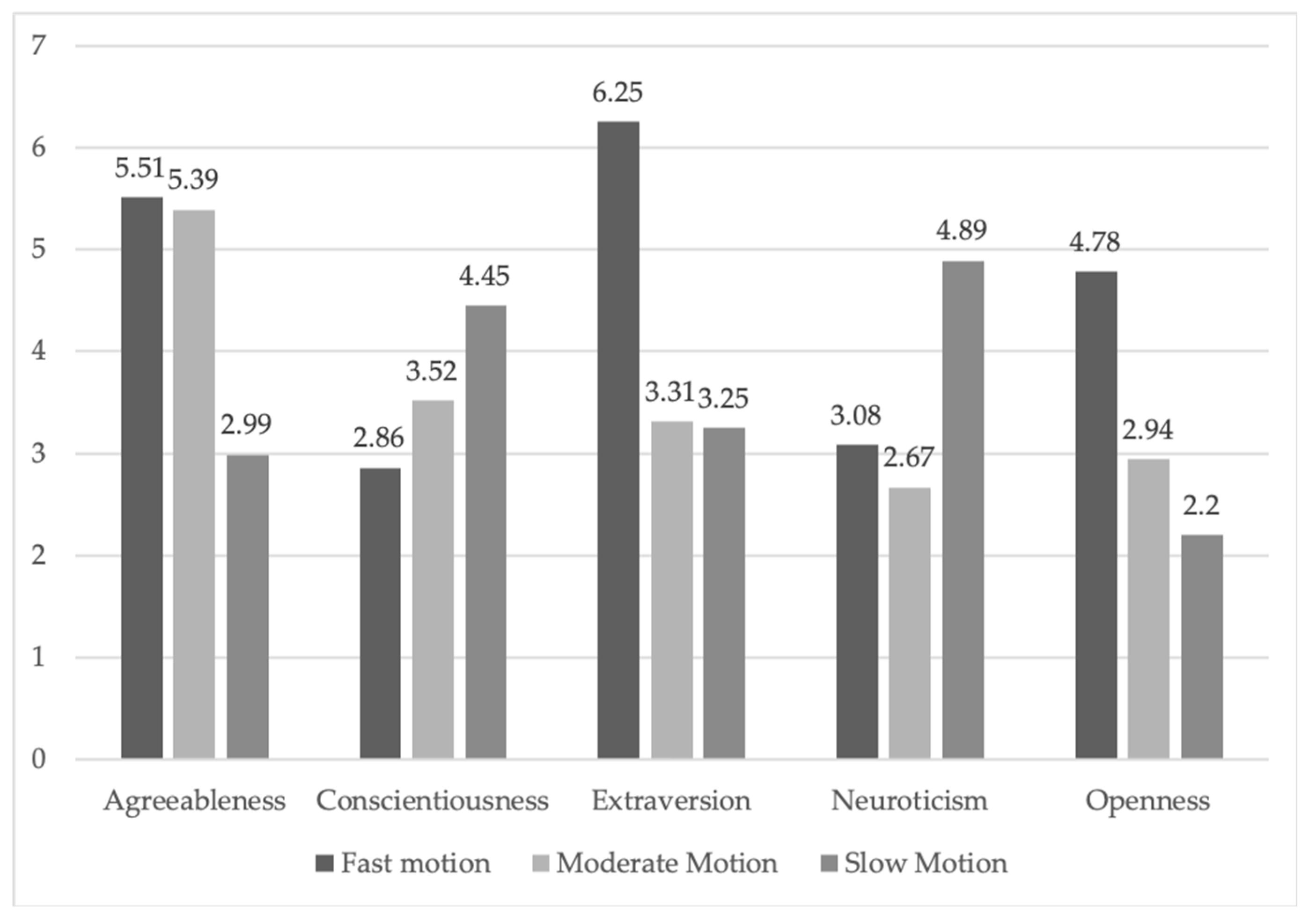

The visual feedback results show that the motion of the visual feedback strongly influenced perceived personality. Fast, moderate, and slow motions expressed different personalities, which answers the first research question of whether visual feedback with different motions could express different personalities. Regardless of color, visual feedback with fast and moderate motions was perceived as agreeable and kind (agreeableness) and as creative and imaginative (openness). Visual feedback with fast motions was also perceived as active and sociable (extraversion). Visual feedback with slow motions was perceived as deliberate and careful (conscientiousness) and as depressed and anxious (neuroticism).
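One way to carry these findings into a design workflow is to encode them as a lookup table that can be queried by target trait. The sketch below is a hypothetical encoding: the names `MOTION_TRAITS` and `motions_for` are illustrative, color is omitted because it showed no consistent effect, and the table records only which speeds were associated with each trait, not the strength of those associations.

```python
# Perceived Big Five traits per motion speed, as reported in the discussion
# (color omitted because it showed no consistent effect on perception).
MOTION_TRAITS: dict[str, frozenset[str]] = {
    "fast": frozenset({"agreeableness", "openness", "extraversion"}),
    "moderate": frozenset({"agreeableness", "openness"}),
    "slow": frozenset({"conscientiousness", "neuroticism"}),
}


def motions_for(trait: str) -> list[str]:
    """Return the motion speeds whose visual feedback was perceived as `trait`."""
    return [speed for speed, traits in MOTION_TRAITS.items() if trait in traits]
```

For instance, `motions_for("agreeableness")` returns `["fast", "moderate"]`, while `motions_for("neuroticism")` returns `["slow"]`.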

The results suggest that fast motions are appropriate for expressing positive personalities such as agreeableness and extraversion. Extraversion is associated with ambition and sociability, and agreeableness can be interpreted as likability, cooperation, social conformity, and love [32]; these traits are more closely related to positive personalities than the other Big Five traits. The results support the previous finding that users’ perceptions of extraversion increased as the motion level increased [45]. Because previous studies of motion were conducted with the behavioral movements of virtual characters, this study provides design implications for agents with more constrained appearances. Based on the personality perceptions associated with slow motions, the study argues that slow motions are suitable for expressing personalities that are usually perceived as negative: slow motions were perceived as neurotic and conscientious. According to the Big Five personality trait study [43], neuroticism is closely related to emotional instability, anxiety, and insecurity, while conscientiousness is related to thoroughness and planning, which could be perceived negatively in relationships. Slow motions of visual feedback are therefore suitable for designing conversational agents with personalities perceived as negative, such as neuroticism and conscientiousness. This parallels human–human conversation, in which actively reactive conversational partners are perceived as having more active and positive personalities than passive and sullen partners [5].

The study demonstrated that color was not a valid factor for expressing different personality traits. Although the experimental materials were designed on the premise that colors carry different traits, colors did not show any consistent or significant pattern across personality traits. Even though the two studies used diverse colors, such as red, purple, green, and green with blue, consistent patterns of personality perception appeared only for motion speed, regardless of color.

The visual feedback results highlight the question of whether color is subjective or objective, which remains controversial among color scientists. Based on the argument that color perception can be organized into objective standards and systems, the relationship between colors and personalities has been widely studied, particularly in psychology. For instance, personality tests such as the Color Pyramid Test [46] evaluate personality based on colors. In addition, according to the theory of color [39], red, yellow, and orange are related to exciting and enlivening features, blue and purple are related to anxious and yearning features, yellow is related to anger, and black is related to depression. Bold colors are more suitable for expressing dominant personalities than submissive ones [20].

The current study argues that colors are not suitable for expressing diverse personalities because personal preference is decisive in the perception of color. This finding is consistent with diverse color perception studies. Color eliminativism, the view that physical objects are not colored at all and that colors are perceived psychologically rather than objectively, supports the current study’s results [47]. In addition, the color researcher Jean-Philippe Lenclos’s study of geographical color demonstrated that colors could be explained and defined based on geographical and cultural conditions [48], which is closely related to the ecological perspective on color [40]. Correlations between specific colors and psychological factors are neither objective nor simple [49]; therefore, future studies should develop more sophisticated color schemes that reflect other design elements.

The results also suggest that the number of colors, rather than their hue, could influence personality perceptions. In particular, visual feedback instances 3 and 8 had significantly higher mean extraversion scores than the other instances. Because only these two instances used three colors, while the others used one or two, using several colors rather than a single color could be suitable for expressing extraversion. However, because the effects of hue could not be ruled out in this design, the results do not fully support this argument.

Perceptions of the conversational agent’s personality differed according to vocal gender. Fast speech was perceived differently depending on the voice: the fast male voice was perceived as emotionally unstable and nervous, whereas the fast female voice was perceived as sociable and outgoing. Sociable and outgoing personalities are considered more positive than unstable and nervous ones [50]. Thus, even with the same verbal cue, personality perception depended on vocal gender, and people tended to perceive the female voice more positively than the male voice. This result is consistent with previous findings that people prefer extraverted female voices for assistive social robots for older adults [43,51].

One limitation of this study is that the criteria for choosing the colors of the visual feedback were based on previous studies, which could be subjective. Color perception can be influenced by personal preferences and cultural backgrounds, so the findings may be restricted to subjective and personal perceptions. Future studies could add participants’ characteristics, such as personality and gender, as variables. Another limitation is that personality perceptions were not measured separately for motion and color, because each visual feedback instance combined these two elements. Future studies with more detailed conditions could measure the effects of motion separately.

6. Conclusions

This study explored how a conversational agent’s personality can be expressed. It provides a novel approach to implementing natural feedback for conversational agents using the concept of personality rather than emotion. In addition, rather than focusing on two contrasting personalities, it applied wider personality categories and more diverse personality expression cues to implement more natural feedback.

Visual feedback was used as the experimental material to answer the first research question: "Can different visual feedback be perceived as different personalities?" The results demonstrated that the motion of visual feedback strongly influenced personality perception regardless of color, so visual feedback with different motions could express different personalities. Fast motion was perceived as active and sociable, and moderate motion was perceived as having a creative, agreeable, and imaginative personality. Slow motion was perceived as conscientious and as neurotic (depressed and anxious).

Verbal cues were used as experimental materials to answer the second research question: "Can different verbal cues be perceived as different personalities?" The results demonstrated that different verbal cues were perceived as different personalities. For conscientiousness, the male voice with low emotionality and the female voice with slow and moderate speed showed statistically significant effects. A voice with high emotionality, the female voice with fast speech, and the male voice asking many questions were perceived as extraverted. The female voice with high emotionality was likely to be perceived as having an open personality. The male voice speaking fast with low wordiness was perceived as neurotic.
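These verbal-cue findings could be recorded as simple rules for a voice-design tool. The sketch below is a hypothetical encoding: the class and function names, the `"cue=value"` string notation, and the subset-matching rule are assumptions for illustration. Subset matching treats a finding as applying whenever its cues are present, regardless of other cue settings, which the study did not test; the rules also carry no effect sizes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VerbalCueFinding:
    gender: Optional[str]  # "male", "female", or None if not specified
    cues: frozenset[str]   # cue settings in a hypothetical "cue=value" form
    trait: str             # Big Five trait the configuration was linked to


# Associations as summarized in the conclusions (no effect sizes encoded).
FINDINGS = [
    VerbalCueFinding("male", frozenset({"emotionality=low"}), "conscientiousness"),
    VerbalCueFinding("female", frozenset({"speed=slow"}), "conscientiousness"),
    VerbalCueFinding("female", frozenset({"speed=moderate"}), "conscientiousness"),
    VerbalCueFinding(None, frozenset({"emotionality=high"}), "extraversion"),
    VerbalCueFinding("female", frozenset({"speed=fast"}), "extraversion"),
    VerbalCueFinding("male", frozenset({"questions=many"}), "extraversion"),
    VerbalCueFinding("female", frozenset({"emotionality=high"}), "openness"),
    VerbalCueFinding("male", frozenset({"speed=fast", "wordiness=low"}), "neuroticism"),
]


def traits_for(gender: str, cues: set[str]) -> set[str]:
    """Traits whose reported cue configuration is contained in `cues`."""
    return {
        f.trait
        for f in FINDINGS
        if (f.gender is None or f.gender == gender) and f.cues <= cues
    }
```

For example, `traits_for("female", {"emotionality=high"})` yields both extraversion and openness, reflecting that a high-emotionality voice was linked to extraversion in general and to openness when the voice was female.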

The overall results could be applied to conversational agents in diverse interfaces designed with smart displays, such as AI speakers, social robots, cars, and Internet of Things environments. In addition, this study applied a wider range of personalities than two contrasting ones, and it used more diverse personality expression elements rather than a single factor.