1. Introduction

Multiple-antenna techniques are well known to provide spatial diversity and multiplexing gains [1, 2, 3]. Over the last few decades, the benefits of multiple-antenna communications have been verified in both theory and practice. Meanwhile, tensor-based signaling approaches that exploit several signal dimensions, such as time, space, and code, are regarded as promising techniques for improving the information transmission rate and enhancing communication reliability [4, 5, 6]. Against this background, tensor-based signaling has been used to address the problem of joint symbol and channel estimation, and a number of semi-blind or blind receivers have been proposed for multiple-input multiple-output (MIMO) systems.

A parallel factor (PARAFAC) [7] based receiver is proposed in [8] using the Khatri–Rao space-time (KRST) coding scheme, which achieves a flexible tradeoff between error performance and transmission efficiency. In [9], the authors extend the KRST coding scheme with linear constellation precoding and develop several semi-blind receivers. These semi-blind receivers allow joint symbol and channel estimation without requiring pilot sequences for instantaneous channel state information (CSI) acquisition. In [10], the authors develop a new tensor-based receiver for channel estimation in MIMO relay systems using PARAFAC analysis. A low-complexity PARAFAC-based channel estimation scheme for non-regenerative MIMO relay systems is developed in [11]. In [12], a novel semi-blind receiver is derived using a multiple KRST coding scheme for joint symbol and channel estimation. More recently, a nested PARAFAC-based receiver for cooperative MIMO communications is proposed in [13], where three-step and double two-step alternating least squares (ALS) algorithms are used to fit the nested PARAFAC model and estimate the system parameters. For millimeter wave (mmWave) massive MIMO systems, a PARAFAC decomposition-based algorithm is developed in [14] to jointly estimate the channel parameters of multiple users. In [15], the algorithm in [14] is extended to mmWave MIMO orthogonal frequency division multiplexing (MIMO-OFDM) systems for channel estimation, and Cramér–Rao bound (CRB) results for the channel parameters are also derived. To address channel estimation in the presence of pilot contamination in multi-cell massive MIMO systems, a new PARAFAC-based approach is proposed in [16] to jointly estimate directions of arrival, fading coefficients, and delays. Although these works [8, 9, 10, 11, 12, 13, 14, 15, 16] consider different design approaches, they share the PARAFAC model, which requires knowledge of the first column or row of one loading matrix to eliminate the scaling ambiguity. Furthermore, the ALS algorithm used in these receivers exhibits convergence problems when the factor matrices are ill-conditioned [17].

In contrast to the ALS algorithm, the Levenberg–Marquardt (LM) algorithm updates all the parameters to be estimated at the same time. The LM algorithm has been successfully used to fit several tensor models, copes with collinearity problems, and provides quadratic convergence [18, 19, 20]. An LM algorithm was first proposed for fitting the PARAFAC model in [18]. In [19], the authors apply an LM algorithm to the decomposition of the Block Component Model (BCM) in the uplink of a wideband direct-sequence code-division multiple access (DS-CDMA) system. Recently, an LM algorithm was developed in [20] to jointly estimate the information symbol and channel matrices for a generalized PARATUCK2 model. As an iterative algorithm, the LM algorithm is also sensitive to initialization. Thus, optimizing the initial value is important for improving the performance of the LM algorithm.

In [21], a tensor-based space-coding scheme using the PARATUCK2 model is developed. For the PARATUCK2 model, the number of channel uses can differ from one transmitted data stream to another. In [22], a generalized PARATUCK2 model is proposed by exploiting tensor space-time (TST) coding. Recently, a Kronecker product least squares (KPLS) receiver was proposed in [23] to estimate the symbol and channel matrices. More recently, it was shown in [24] that the KPLS receiver can be extended to all tensor-based systems. Although the KPLS receiver is a non-iterative and low-complexity solution, it requires the related core tensor unfolding to be right-invertible, which is a relatively restrictive condition in signal design.

Inspired by [21] and [22], we consider a simple tensor space-time coding scheme for multiple-antenna systems, along with an efficient receiver. The allocation factor and the space-time code factor in the TST coding scheme in [22] are independent, while the allocation factor in our coding scheme is also a three-dimensional space-time code factor. Thanks to the special structure of the proposed coding scheme, the received signal can be constructed as a Tucker-2 model [25, 26], which is unique under suitable conditions. Then, a robust semi-blind receiver based on an optimized LM algorithm is presented for joint channel and symbol estimation. Uniqueness and identifiability issues for the constructed Tucker-2 model are also discussed in this paper. In the simulations of Section 6, the proposed receiver achieves a lower bit error rate than the PARAFAC-based and training-based receivers it is compared with. Moreover, the proposed semi-blind receiver can be extended to the multi-user massive MIMO system. For the low-rank channel, the proposed receiver still performs well for joint symbol and channel estimation even with shorter codes and information symbol sequences and a larger number of data streams.

The organization of this paper is as follows. Section 2 presents a brief overview of the Tucker model. In Section 3, the system model is presented and the associated tensor signal model is formulated. Section 4 briefly reviews the receiver with the ALS algorithm and describes the proposed semi-blind receiver based on the optimized LM algorithm. Section 5 extends the proposed semi-blind receiver to multi-user massive MIMO systems for joint symbol and channel estimation. In Section 6, some simulation results are shown to demonstrate the performance of our semi-blind receiver. Conclusions are drawn in Section 7.

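Notation: Scalars, vectors, matrices, and tensors are denoted by lower-case letters $(a, b, \ldots)$, boldface lower-case letters $(\mathbf{a}, \mathbf{b}, \ldots)$, boldface capitals $(\mathbf{A}, \mathbf{B}, \ldots)$, and underlined boldface capitals $(\underline{\mathbf{A}}, \underline{\mathbf{B}}, \ldots)$, respectively. $\mathbf{A}^{T}$, $\mathbf{A}^{H}$, $\mathbf{A}^{-1}$, and $\mathbf{A}^{\dagger}$ represent the transpose, conjugate transpose, inverse, and Moore–Penrose pseudo-inverse of the matrix $\mathbf{A}$, respectively. $\|\mathbf{A}\|_{F}$ denotes the Frobenius norm of $\mathbf{A}$. $\mathbf{I}_{N}$ denotes the $N \times N$ identity matrix. The operator $\mathrm{vec}(\cdot)$ stacks the columns of its matrix argument into a vector, while $\mathrm{unvec}(\cdot)$ represents the inverse vectorization operation. The Kronecker matrix product is denoted by ⊗. The term $D_{i}(\mathbf{A})$ corresponds to the diagonal matrix formed from the i-th row of $\mathbf{A}$.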

3. System Model

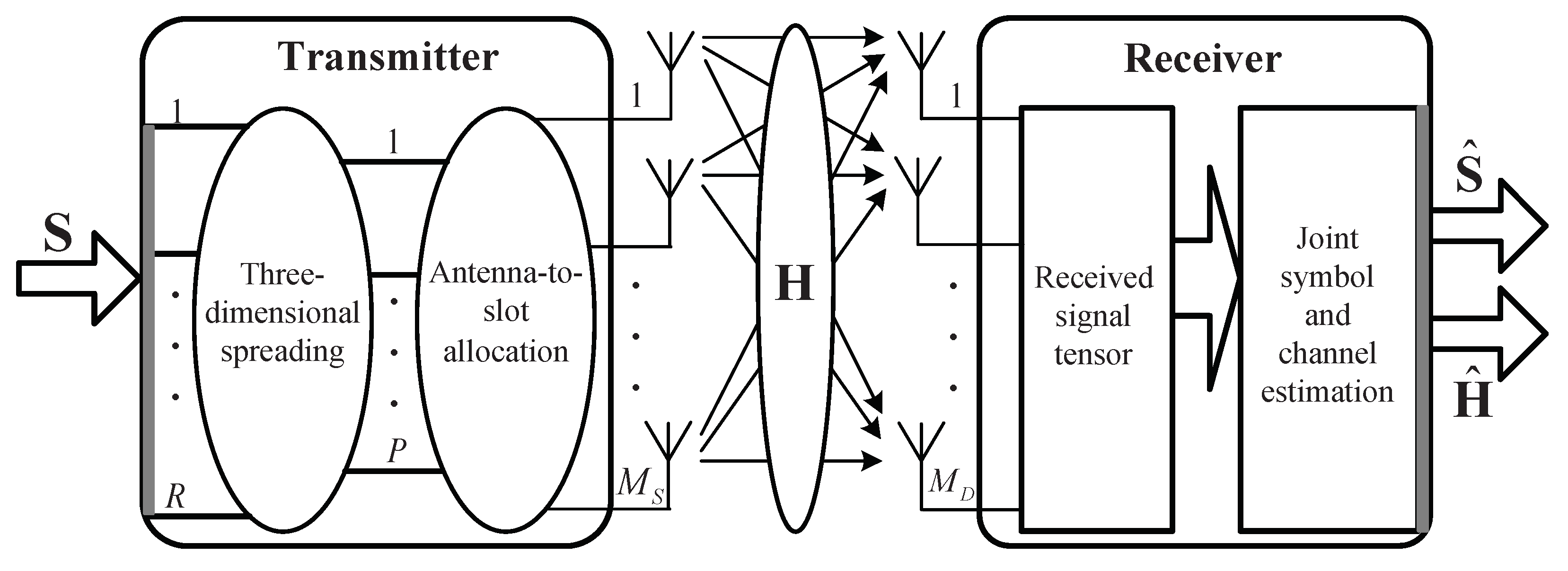

Consider a multiple-antenna system with

transmit antennas and

receive antennas as shown in

Figure 1.

represents the channel coefficient between the

-th transmit antenna and the

-th receive antenna (

,

).

represents the

n-th symbol of the

r-th data stream (

,

), with each data stream being formed of

N information symbols. Each symbol

is coded by a three-dimensional space-time code

(

), whose dimensions are the numbers of transmit antennas, data streams, and chips, respectively. We then define the antenna-to-slot allocation factor

, which is 0 or 1. Both the transmitter and the receiver know these factors

and

.

The signal transmitted from

-th transmit antenna, during the

n-th symbol period of the

p-th chip, is given by:

where

and

are

-th and

-th elements of signal matrix

and the antenna-to-slot allocation matrix

, respectively.

and

are typical elements of the transmitted signal tensor

and the coding tensor

, respectively. The elements in

are chosen as

, where

is taken from uniformly distributed pseudorandom numbers. In our tensor coding scheme, the number of transmitted data streams is not restricted to be equal to the number of transmit antennas, and the data streams can be allocated to an arbitrary set of transmit antennas. Without considering the stream-to-slot allocation, the coding scheme in [21] can be regarded as a special case of our tensor coding scheme with a fixed two-dimensional space-time code.

Assuming Rayleigh flat-fading channels, the discrete-time baseband signal at the

-th receive antenna can be written as:

where

is the

-th element of channel matrix

,

and

are typical elements of the received signal tensor

and the noise tensor

, respectively.

3.1. Constructed Tucker-2 Model

Let us define

, where

is the typical element of the compound tensor

. Thus, Equation (7) can be written as:

By comparing Equation (4) with Equation (8), the received signal tensor

of noiseless signals satisfies a Tucker-2 model, with the following correspondences:

Using the mode-n product representation, the model (8) can be written as:

where

and

represent the two loading matrices, and

is the core tensor.
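As an illustration of the Tucker-2 structure described above, the following NumPy sketch builds a noiseless received tensor from a channel matrix, a symbol matrix, and a core tensor. The dimension names (`K`, `M`, `N`, `R`, `P`), the matrix shapes, the elementwise form of the compound tensor (coding tensor entry times allocation factor), and the unit-modulus random-phase code are assumptions made for this example only; it is a sketch, not the authors' implementation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: K receive antennas, M transmit antennas,
# N symbols per stream, R data streams, P chips.
K, M, N, R, P = 4, 3, 6, 2, 5


def crandn(*shape):
    """Unit-variance circularly symmetric complex Gaussian samples."""
    return (rng.standard_normal(shape) + 1j * rng.standard_normal(shape)) / np.sqrt(2)


H = crandn(K, M)                                 # channel matrix (assumed K x M)
S = crandn(N, R)                                 # symbol matrix (assumed N x R)
W = np.exp(2j * np.pi * rng.random((M, R, P)))   # unit-modulus coding tensor (assumed form)
Phi = np.ones((M, P))                            # 0/1 allocation matrix (assumed M x P)

# Compound core tensor (assumed): g[m, r, p] = w[m, r, p] * phi[m, p]
G = W * Phi[:, None, :]

# Tucker-2 model, elementwise:
# x[k, n, p] = sum over m and r of h[k, m] * s[n, r] * g[m, r, p]
X = np.einsum("km,nr,mrp->knp", H, S, G)

# The same model written slice by slice: X[:, :, p] = H @ G[:, :, p] @ S.T
X_slices = np.stack([H @ G[:, :, p] @ S.T for p in range(P)], axis=2)
```

Both constructions give the same tensor, which is why the model can be handled either through mode-n products or through its matrix slices.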

Let us define

,

,

and

as the

p-th matrix slice of

,

,

, and

, respectively. We have

. By defining

,

,

, and

, we can obtain four compact forms of the Tucker-2 model (11):

with,

and,

In this paper, the following two assumptions are made.

(a) The antenna-to-slot allocation matrix does not have an all-zero column. This means that at least one transmit antenna is used during each time slot;

(b) Both the transmitter and receiver know the allocation matrix and the coding tensor.

3.2. Uniqueness Issue

Because the loading matrices are unique only up to nonsingular matrices, the generalized Tucker-2 model is not essentially unique. This can be verified by using the following property of the mode-n product:

where the noise tensor

has been omitted for convenience of notation,

and

are nonsingular matrices.
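The ambiguity can be checked numerically. The sketch below, under assumed shapes and with hypothetical names (`tucker2`, `T1`, `T2`), multiplies the two loading matrices by nonsingular matrices, absorbs the inverses into the core tensor, and confirms that the resulting tensor is unchanged. Real-valued data is used for brevity.

```python
import numpy as np

rng = np.random.default_rng(1)
K, M, N, R, P = 4, 3, 6, 2, 5   # hypothetical dimensions

H = rng.standard_normal((K, M))
S = rng.standard_normal((N, R))
G = rng.standard_normal((M, R, P))


def tucker2(A, B, core):
    """x[k, n, p] = sum over m and r of A[k, m] * B[n, r] * core[m, r, p]."""
    return np.einsum("km,nr,mrp->knp", A, B, core)


# Well-conditioned nonsingular transformations (small perturbations of identity).
T1 = np.eye(M) + 0.1 * rng.standard_normal((M, M))
T2 = np.eye(R) + 0.1 * rng.standard_normal((R, R))

# Alternative factors: transformed loadings and a compensating core tensor.
H_alt = H @ T1
S_alt = S @ T2
G_alt = tucker2(np.linalg.inv(T1), np.linalg.inv(T2), G)

X = tucker2(H, S, G)
X_alt = tucker2(H_alt, S_alt, G_alt)   # same tensor from different factors
```

Without knowledge of the core tensor, the receiver therefore cannot distinguish the two factor sets from the received tensor alone.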

By applying the uniqueness theorem of the Tucker model in [25], it can be shown that if the core tensor

is known, then

and

are unique to a scaling ambiguity, i.e.,

where

and

are alternative solutions for

and

, respectively,

and

. Consequently, a priori knowledge of only one symbol is enough to resolve the scaling ambiguity factor

. Compared to the PARAFAC model used in existing receivers, the constructed Tucker-2 model only needs a priori knowledge of one symbol to eliminate the scaling ambiguity. Therefore, our scheme has higher spectral efficiency.
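A minimal sketch of how a single known symbol removes the scalar ambiguity is given below. It assumes that the estimates differ from the true matrices by one complex scalar and that the receiver knows the symbol in position (0, 0); the variable names and the choice of that position are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
K, M, N, R = 4, 3, 6, 2   # hypothetical dimensions

H = rng.standard_normal((K, M)) + 1j * rng.standard_normal((K, M))
qpsk = np.array([1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j]) / np.sqrt(2)
S = rng.choice(qpsk, size=(N, R))

# Estimates that differ from the truth by an unknown complex scalar alpha.
alpha = 0.7 * np.exp(1j * 1.1)
S_hat = S * alpha
H_hat = H / alpha

# The receiver knows one symbol, here S[0, 0] (assumed), and uses it
# to estimate the scalar and undo it on both matrices.
alpha_est = S_hat[0, 0] / S[0, 0]
S_fixed = S_hat / alpha_est
H_fixed = H_hat * alpha_est
```

Only one entry of the symbol matrix is spent on resolving the ambiguity, in contrast to a full known row or column.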

3.3. Identifiability Conditions

Identifiability of the constructed Tucker-2 model determines whether the parameters to be estimated can be recovered. In this paper, it is directly linked to the estimation of the signal matrix and channel matrix from the received signal tensor. Conditions for parameter identifiability are given in the following theorem.

Theorem 1. (Sufficient Conditions): Assuming that has independent and identically distributed (i.i.d.) entries, and has a full column rank. denotes the number of nonzero elements in . Then sufficient conditions for identifiability of signal matrix and channel matrix are:

Proof of Theorem 1. From Equations (12) and (13), necessary and sufficient conditions for identifiability of

and

requires that

and

have a full column rank, i.e.,

Under the assumptions in Theorem 1 that

has i.i.d. entries,

can ensure

has a full column rank. Since

and

have a full column-rank, then

has a full column rank, i.e.,

. Therefore, Equation (21) is satisfied if

has a full column rank. We rewrite

from Equation (15) as:

where

. Due to

being a specially constructed matrix with different generators, any two rows (or two columns) of

are linearly independent. If the number of non-zero rows of

is greater than or equal to the number of columns of

(i.e.,

), then

is full column rank. Thus,

and

can ensure that condition (21) is satisfied.

Since

has a full column rank, we can deduce that

. Thus, condition (22) is satisfied if

has a full column rank. We rewrite

from Equation (16) as:

where

and

. Recall that

does not have an all-zero column, which means that

has a full column rank. We have that

is full column rank if

is full row rank. Since

has the block diagonal structure and

has different generators,

ensures that

has full row rank. Therefore,

can ensure that condition (22) is satisfied. This ends the proof. ☐

Remark 1. The conditions in Theorem 1 are sufficient but not necessary for parameter identifiability. Sufficient condition (21) and condition (22) also concern the ALS algorithm. In fact, identifiability of signal and channel parameters is possible in our simulation results when . Necessary conditions for parameter identifiability are based on the dimensions of and . If the channel matrix does not have a full column or row rank, i.e., , where L is the rank of the channel matrix , the identifiability conditions of Theorem 1 are no longer applicable because of the low-rank property of . However, we can also deduce identifiability conditions based on Equations (21) and (22), i.e., necessary and sufficient conditions for identifiability of and require that and have full column rank. This case is analyzed further in Section 5.

4. Semi-Blind Receiver

The ALS algorithm is a classical solution for fitting tensor models. However, it is well known that the ALS algorithm exhibits convergence problems when collinearity is present in one or more modes [27, 28]. The LM algorithm has been successfully used to fit the PARAFAC and PARATUCK2 models, copes with collinearity problems, and provides quadratic convergence [19, 20]. As an iterative algorithm, the LM algorithm is also sensitive to initialization. Thus, optimizing the initial value is important for improving the performance of the LM algorithm.

In this section, a novel semi-blind receiver based on the optimized LM algorithm is developed for joint symbol and channel estimation. The basic principle of the optimized LM algorithm is to first resort to an LSK approximation problem [29, 30], based on the singular value decomposition (SVD) of a rank-one matrix, to initialize the symbol and channel matrices, and then to update these two matrices at the same time in each iteration. Finally, the modified singular value projection (SVP) based algorithm [31, 32] is used to further improve the performance of channel estimation.

The proposed optimal initialization method is based on the Kronecker least squares algorithm, which exploits SVD-based rank-one approximations to get an initial estimation of and from their Kronecker matrix product.

By post-multiplying Equation (14) with

, we get

, where

and

are initial estimates of

and

. According to Theorem 2.1 in [29], we have:

where

is a rank-one matrix, and

is, given that:

In this case, the Kronecker product matrix

has been rearranged into a rank-one matrix

. Applying SVD to the rank-one matrix

, the vectors

and

can be estimated by using a rank-one approximation method, i.e., by computing its largest singular value and the corresponding left and right singular vectors.

and

are determined up to a scaling factor, which can be removed by setting

as in [27, 30]. The detailed process is shown below.

By applying SVD to the rank-one matrix

, we have:

where

is a diagonal matrix containing singular values of

,

and

are unitary matrices. Using the rank-one approximation of

, we have:

where

is the largest singular value, and

and

are the corresponding left and right singular vectors. Thus, vectors

and

can be estimated as:

where

is the scalar factor.

and

are determined up to this scalar factor. In practical communication systems, this scalar factor

can be removed by setting

. Thus, the value

in this paper is equal to

when

. Note that we can also choose Equation (14) to implement the above optimal initialization procedure.
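The rearrangement and rank-one approximation steps described above can be sketched in NumPy as follows. The function name `nearest_kronecker_factors`, the ordering of the Kronecker product (symbol matrix first, channel matrix second), and the use of the symbol in position (0, 0) to fix the scale are assumptions for illustration; the example is noiseless so that the factors are recovered exactly.

```python
import numpy as np


def nearest_kronecker_factors(Z, shape_a, shape_b):
    """Factor Z ~ kron(A, B) via a rank-one SVD of the rearranged matrix."""
    m1, n1 = shape_a
    m2, n2 = shape_b
    # Rearrange Z so that kron(A, B) maps to outer(A.ravel(), B.ravel()).
    rearranged = (
        Z.reshape(m1, m2, n1, n2).transpose(0, 2, 1, 3).reshape(m1 * n1, m2 * n2)
    )
    U, s, Vh = np.linalg.svd(rearranged, full_matrices=False)
    # Dominant singular triplet gives the two factors up to a common scalar.
    a = np.sqrt(s[0]) * U[:, 0]
    b = np.sqrt(s[0]) * Vh[0, :]
    return a.reshape(m1, n1), b.reshape(m2, n2)


rng = np.random.default_rng(6)
N, R, K, M = 4, 2, 3, 2   # hypothetical dimensions
qpsk = np.array([1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j]) / np.sqrt(2)
S = rng.choice(qpsk, size=(N, R))
H = rng.standard_normal((K, M)) + 1j * rng.standard_normal((K, M))

Z = np.kron(S, H)   # stands in for the LS estimate of the Kronecker product
S_init, H_init = nearest_kronecker_factors(Z, S.shape, H.shape)

# Remove the scalar ambiguity using one known symbol (assumed: S[0, 0]).
scale = S_init[0, 0] / S[0, 0]
S_fixed = S_init / scale
H_fixed = H_init * scale
```

With noise, the rearranged matrix is no longer exactly rank one, and the dominant singular triplet gives the least squares Kronecker factors that serve as the starting point for the iterations.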

Define a parameter vector stacking all the unknowns as:

where

,

, and

. The cost function to be minimized is given by:

where

is the typical element of the tensor

, which denotes the output tensor in absence of noise.

denotes the vector of residuals and

.

Let the

be the Jacobian matrix of

with respect to

, and

be the gradient of

with respect to

.

and

are respectively defined by:

The optimized LM algorithm consists in optimizing

, and estimating

at the

-th iteration from

at the

i-th iteration via

. The step

is updated by solving the following modified normal equations:

where

is the damping parameter to ensure that

is a descent direction. The whole procedure of the optimized LM algorithm used in our semi-blind receiver is listed in Algorithm 1.
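To make the damped update concrete, the sketch below runs an LM loop on a toy real-valued rank-one fitting problem, where both factors are updated simultaneously in each iteration. The function name `lm_rank_one_fit`, the toy problem, the initial damping value, and the gain-ratio based damping update (Nielsen's rule) are assumptions chosen for illustration and are not taken from Algorithm 1.

```python
import numpy as np


def lm_rank_one_fit(X, h0, s0, max_iter=200, tol=1e-12):
    """Fit X ~ outer(h, s) by Levenberg-Marquardt, updating h and s jointly."""
    m, n = X.shape
    h, s = h0.astype(float).copy(), s0.astype(float).copy()
    lam, nu = 1e-2, 2.0   # damping parameter and its growth factor (assumed)

    def residual(h, s):
        return (np.outer(h, s) - X).ravel()

    r = residual(h, s)
    cost = 0.5 * r @ r
    for _ in range(max_iter):
        # Jacobian of the residual with respect to [h; s].
        J = np.hstack([np.kron(np.eye(m), s[:, None]),
                       np.kron(h[:, None], np.eye(n))])
        g = J.T @ r                                   # gradient of the cost
        # Modified normal equations: (J^T J + lam * I) step = -g
        step = np.linalg.solve(J.T @ J + lam * np.eye(m + n), -g)
        h_new, s_new = h + step[:m], s + step[m:]
        r_new = residual(h_new, s_new)
        cost_new = 0.5 * r_new @ r_new
        # Gain ratio: actual cost reduction over the reduction predicted
        # by the local linear model.
        predicted = 0.5 * step @ (lam * step - g)
        rho = (cost - cost_new) / predicted if predicted > 0 else -1.0
        if rho > 0:      # step accepted: keep it and relax the damping
            h, s, r, cost = h_new, s_new, r_new, cost_new
            lam *= max(1.0 / 3.0, 1.0 - (2.0 * rho - 1.0) ** 3)
            nu = 2.0
        else:            # step rejected: increase the damping
            lam *= nu
            nu *= 2.0
        if cost < tol:
            break
    return h, s, cost


rng = np.random.default_rng(3)
h_true, s_true = rng.standard_normal(4), rng.standard_normal(5)
X = np.outer(h_true, s_true)
h_fit, s_fit, final_cost = lm_rank_one_fit(
    X,
    h_true + 0.3 * rng.standard_normal(4),
    s_true + 0.3 * rng.standard_normal(5),
)
```

The bilinear problem has a scaling ambiguity, so the undamped normal matrix is singular; the damping term keeps the linear system solvable, which is one reason a damped method suits this kind of model.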

Due to the partitioned structure of

, the Jacobian matrix

can be written as

, where

and

are respectively given by:

The permutation matrix

is given by:

where

and

are the

n-th and

-th column vectors of the identity matrices

and

, respectively.

Algorithm 1 The optimized LM algorithm

First stage:
• Compute the LS estimate of : ;
• Rearrange to a rank-one matrix ;
• Apply the SVD on : ;
• Calculate initialization matrices and : .

Second stage:
Initialization: Initialize , and ; set and ;
while do
Step 1. Compute and respectively;
Step 2. Compute : ;
Step 3. Update : ;
Step 4. Calculate the gain rate : , where ;
Step 5. Update : If , is true, and set and . Otherwise, is invalid, and set and ;
Step 6. ;
end
Acquire and : , .
Compute : If , . Otherwise, .
Remove the scaling ambiguity: , .

We can then build the blocks of

as follows:

The terms

,

and

can be respectively written as:

Similarly, the partitioned structure of

allows us to write

as the concatenation of the following two gradients:

where

and

are respectively given by:

In Algorithm 1, the estimated matrix

is projected onto a low rank estimated matrix

by the SVP based algorithm when

. Here

is calculated as

, where

denotes the

l-th largest singular value of

,

and

are the corresponding left and right singular vectors. The overall complexity of the optimized LM algorithm mainly depends on the per-iteration complexity and the number of iterations. The per-iteration complexity of this algorithm can be estimated as

. Since the antenna-to-slot allocation matrix and the coding tensor are fixed and known at the receiver, the convergence of the optimized LM algorithm is usually achieved in only a few iterations. The average number of iterations for the optimized LM algorithm will be further analyzed in

Section 6.
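The low-rank projection step amounts to a truncated SVD of the channel estimate. A minimal sketch is shown below; the function name `project_to_rank`, the dimensions, and the synthetic noisy estimate are hypothetical and serve only to illustrate the operation.

```python
import numpy as np


def project_to_rank(H_hat, L):
    """Keep the L largest singular triplets of H_hat (truncated SVD)."""
    U, s, Vh = np.linalg.svd(H_hat, full_matrices=False)
    # Sum over l of s[l] * u_l * v_l^H for the L largest singular values.
    return (U[:, :L] * s[:L]) @ Vh[:L, :]


rng = np.random.default_rng(5)
K, M, L = 8, 16, 3   # hypothetical dimensions and channel rank

# Synthetic rank-L matrix standing in for a low-rank channel,
# plus a small perturbation standing in for estimation error.
H_low = rng.standard_normal((K, L)) @ rng.standard_normal((L, M))
H_noisy = H_low + 0.05 * rng.standard_normal((K, M))

H_proj = project_to_rank(H_noisy, L)
```

The projection discards the components of the estimate outside the dominant L-dimensional subspaces, which is where the error of a low-rank channel estimate is not supported by the channel itself.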

5. Extension to Multi-User Massive MIMO Systems

In this section, we show that the developed algorithm can be applied to multi-user massive MIMO systems with a hybrid precoding architecture for joint symbol and channel estimation. We consider a fully-connected hybrid precoding architecture, which is a typical model for massive MIMO systems. The base station communicates with

M users simultaneously, and each mobile station is equipped with

antennas. The base station is equipped with

antennas and

independent radio frequency chains to transmit

R streams for

receive antennas in each mobile station. In the considered downlink system, each symbol

is coded by a three-dimensional baseband code

followed by a radio frequency code

in the base station. At the

m-th (

) mobile station, the discrete-time baseband signal at the

-th receive antenna is written as:

where

and

are

-th and

-th elements of the radio frequency precoder matrix

and the massive MIMO channel matrix

, respectively.

is the typical element of the received signal tensor

. Then Equation (45) can be rewritten as:

where,

Following [33, 34], we also adopt a geometric channel model with

scatterers between the base station and the

m-th mobile station,

. Under this model, the channel matrix

is expressed as:

where

denotes the complex gain of

l-th path,

, and

are the l-th path's azimuth angles of arrival and departure (AoAs/AoDs) of the mobile station and base station, respectively.

and

are the receive and transmit antenna array gains at a specific AoA and AoD, respectively. Finally,

and

are the steering vectors at the base station and mobile station, respectively. If uniform linear arrays are considered, the steering vectors

and

are respectively given by:

where

denotes the signal wavelength, and

d is the distance between two neighboring antenna elements.
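A geometric channel of this kind can be sketched as a sum of rank-one terms built from array steering vectors. The code below is an illustration under several assumptions: the standard uniform linear array steering vector with half-wavelength spacing, unit antenna array gains, complex Gaussian path gains, angles drawn uniformly from [0, 2π), and the common normalization by the number of paths. The function names are hypothetical.

```python
import numpy as np


def ula_steering(n_ant, angle, d_over_lambda=0.5):
    """Unit-norm steering vector of a uniform linear array (assumed form)."""
    k = np.arange(n_ant)
    phase = 2 * np.pi * d_over_lambda * k * np.sin(angle)
    return np.exp(1j * phase) / np.sqrt(n_ant)


def geometric_channel(n_rx, n_tx, n_paths, rng):
    """Sum of n_paths rank-one terms: gain * a_rx(AoA) * a_tx(AoD)^H."""
    gains = (rng.standard_normal(n_paths)
             + 1j * rng.standard_normal(n_paths)) / np.sqrt(2)
    aoa = rng.uniform(0, 2 * np.pi, n_paths)   # angles of arrival (assumed range)
    aod = rng.uniform(0, 2 * np.pi, n_paths)   # angles of departure (assumed range)
    H = np.zeros((n_rx, n_tx), dtype=complex)
    for g, th_r, th_t in zip(gains, aoa, aod):
        H += g * np.outer(ula_steering(n_rx, th_r),
                          ula_steering(n_tx, th_t).conj())
    # Common normalization for this model (assumed).
    return np.sqrt(n_rx * n_tx / n_paths) * H


rng = np.random.default_rng(4)
n_rx, n_tx, n_paths = 8, 32, 3   # hypothetical sizes
H = geometric_channel(n_rx, n_tx, n_paths, rng)
channel_rank = np.linalg.matrix_rank(H, tol=1e-8)
```

Because the channel is a sum of one rank-one term per path, its rank cannot exceed the number of paths, however many antennas are used.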

Similar to the analysis of Section 3.1, the received signal tensor of noiseless signals also satisfies the Tucker-2 model, and the proposed algorithm in Section 4 remains suitable for joint symbol and channel estimation at each mobile station. However, two points are important to note here. First, the identifiability conditions of Theorem 1 are no longer applicable because of the low-rank property of

. However, we can deduce new identifiability conditions based on Equations (21) and (22), i.e., necessary and sufficient conditions for identifiability of

and

require that

and

have full column rank. For convenience of analysis, we assume that the antenna-to-slot allocation matrix is an all-ones matrix. Then, we have the following theorem.

Theorem 2. Assuming that the path gains of the low-rank channel are Rayleigh distributed, and N and R are large enough. Then sufficient conditions for identifiability of and are:

Proof of Theorem 2. The channel model

is expressed as Equation (48). The rank of

is

, and the path gains of the

are Rayleigh distributed.

is a full rank matrix, which contains different generators. Consequently,

ensures that

have full column rank. Since

is a low-rank, i.e.,

,

can ensure

has the full column rank. Since

N and

R are large enough, and

has the random nature, the rank of

is equal to

N or

R. Moreover,

is also a full rank matrix because of its special structure. We deduce that

is full column rank if

, i.e.,

. Therefore, condition (51) can ensure identifiability of

and

. This ends the proof of Theorem 2. ☐

Second, the low-rank property of the mmWave massive MIMO channel should be exploited. Due to very limited scattering of the mmWave channel and larger quantities of transmitting and receiving antennas, is usually less than and . Different from the conventional MIMO channel matrix that usually has full column or row rank, the rank of the mmWave massive MIMO channel matrix is much smaller than its dimension. This is called ‘low-rank property’ of the mmWave massive MIMO channel matrix. Therefore, the final part of the proposed Algorithm 1 takes advantage of this low-rank constraint to further improve the estimation accuracy of the channel.

6. Simulation Results and Discussion

We studied the performance of the proposed semi-blind receiver through numerical simulations. The channel matrix

has independent and identically distributed (i.i.d.) complex Gaussian entries with zero-mean and unit variance. The default values of the system parameters are set to

, and the antenna-to-slot allocation matrix is an all-ones matrix. Throughout the simulation, the coding tensor

is known at the receiver. Quadrature phase-shift keying (QPSK) constellations are used to modulate the transmitted symbols. All results are averaged over 10,000 independent Monte Carlo simulations. As in [8, 9], the signal-to-noise ratio (SNR) at the receiver is defined as:

where

denotes the noise-free signal tensor (the tensor-of-interest) containing both symbol and channel parameters. For each channel realization, the normalized mean square error (NMSE) for different receivers is computed as

, where

is the estimation of

at convergence.
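The two metrics can be sketched in NumPy as follows. The sketch assumes the usual forms, namely an SNR in dB defined as the ratio of the squared Frobenius norms of the noise-free tensor and the noise tensor, and an NMSE defined as the squared Frobenius norm of the estimation error divided by that of the true channel matrix. The function names and dimensions are hypothetical.

```python
import numpy as np


def add_noise_at_snr(X, snr_db, rng):
    """Return (noisy tensor, noise tensor) with the requested SNR in dB."""
    V = rng.standard_normal(X.shape) + 1j * rng.standard_normal(X.shape)
    # Scale the noise so that 20*log10(||X||_F / ||V||_F) equals snr_db.
    scale = np.linalg.norm(X) / (np.linalg.norm(V) * 10 ** (snr_db / 20))
    V = scale * V
    return X + V, V


def nmse(H_true, H_est):
    """Normalized mean square error: ||H - H_est||_F^2 / ||H||_F^2."""
    return np.linalg.norm(H_true - H_est) ** 2 / np.linalg.norm(H_true) ** 2


rng = np.random.default_rng(7)
K, N, P, M = 4, 6, 5, 3   # hypothetical dimensions

X = rng.standard_normal((K, N, P)) + 1j * rng.standard_normal((K, N, P))
H = rng.standard_normal((K, M)) + 1j * rng.standard_normal((K, M))

Y, V = add_noise_at_snr(X, 20.0, rng)
measured_snr_db = 20 * np.log10(np.linalg.norm(X) / np.linalg.norm(V))
```

In a Monte Carlo loop, `nmse` would be evaluated once per channel realization and the results averaged over all runs.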

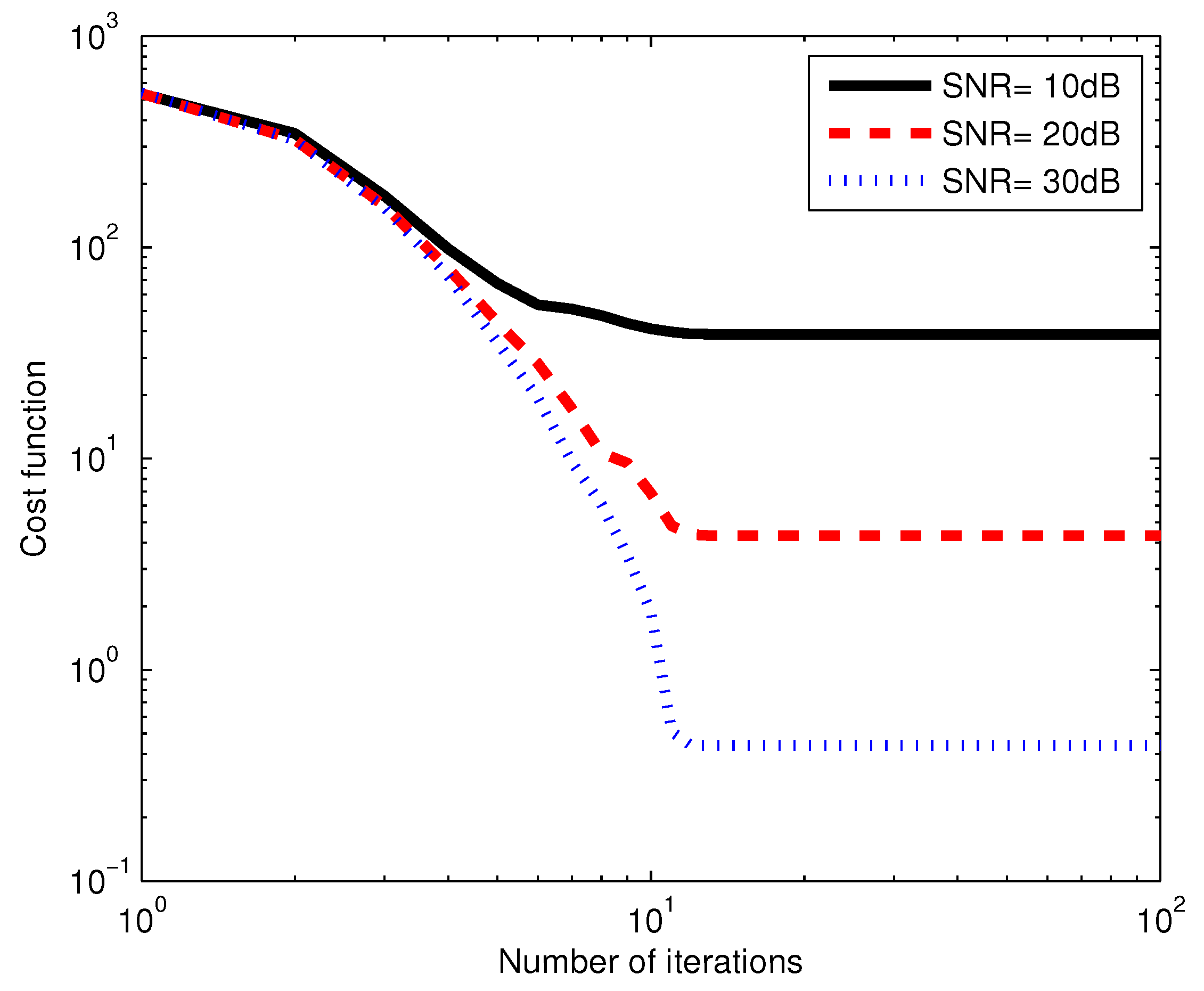

In the first example, we evaluate the convergence performance of the optimized LM algorithm, which is used in our semi-blind receiver. We assume the system design parameters

and

. In Figure 2, the average value of the cost function is plotted versus the number of iterations for three SNR values. We observe from Figure 2 that, for each SNR value, the cost function decreases as the number of iterations increases until the algorithm converges. We can also see that, for the same number of iterations, the cost function decreases as the SNR increases. The proposed algorithm needs few iterations to converge. For instance, the optimized LM algorithm converges in about 10 iterations at an SNR of 20 dB.

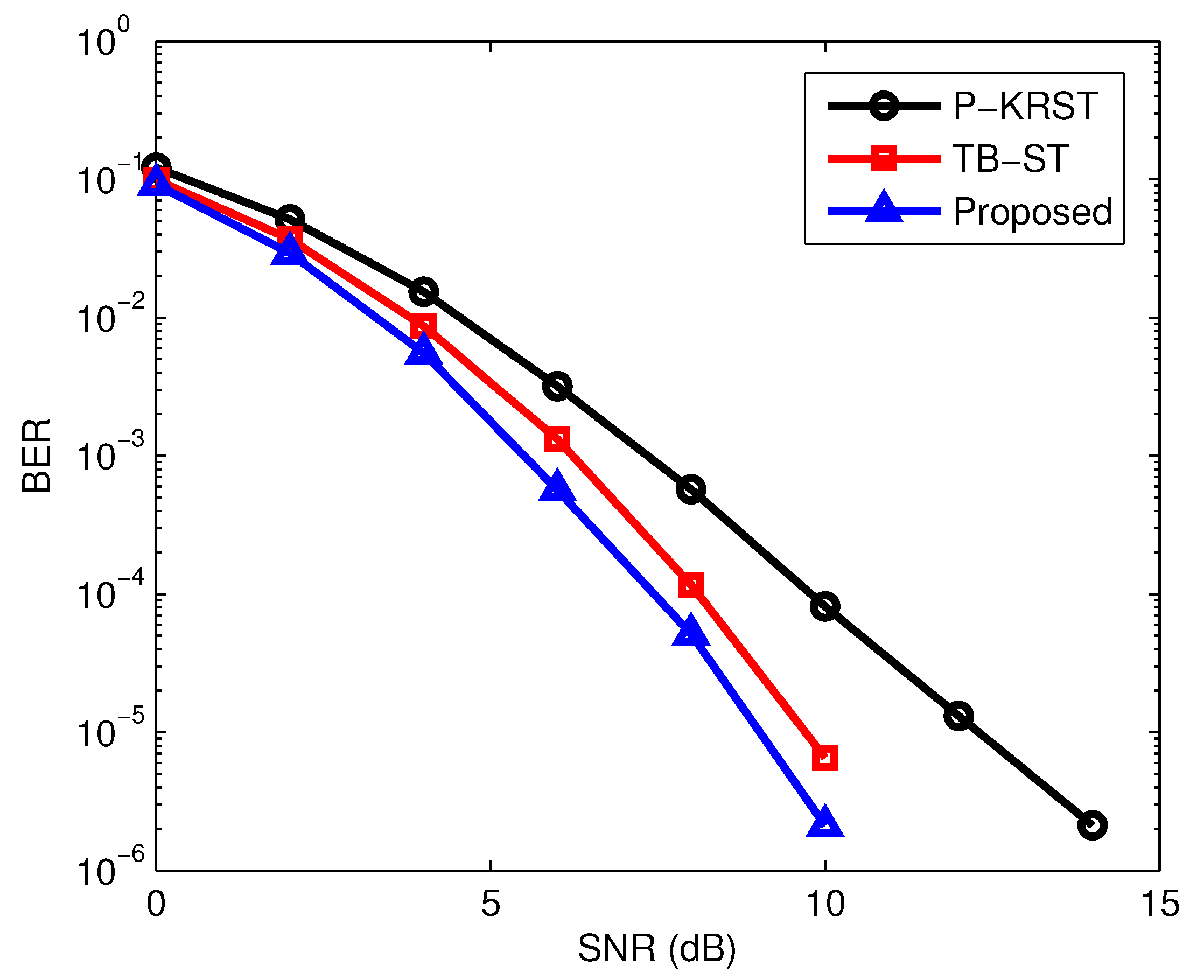

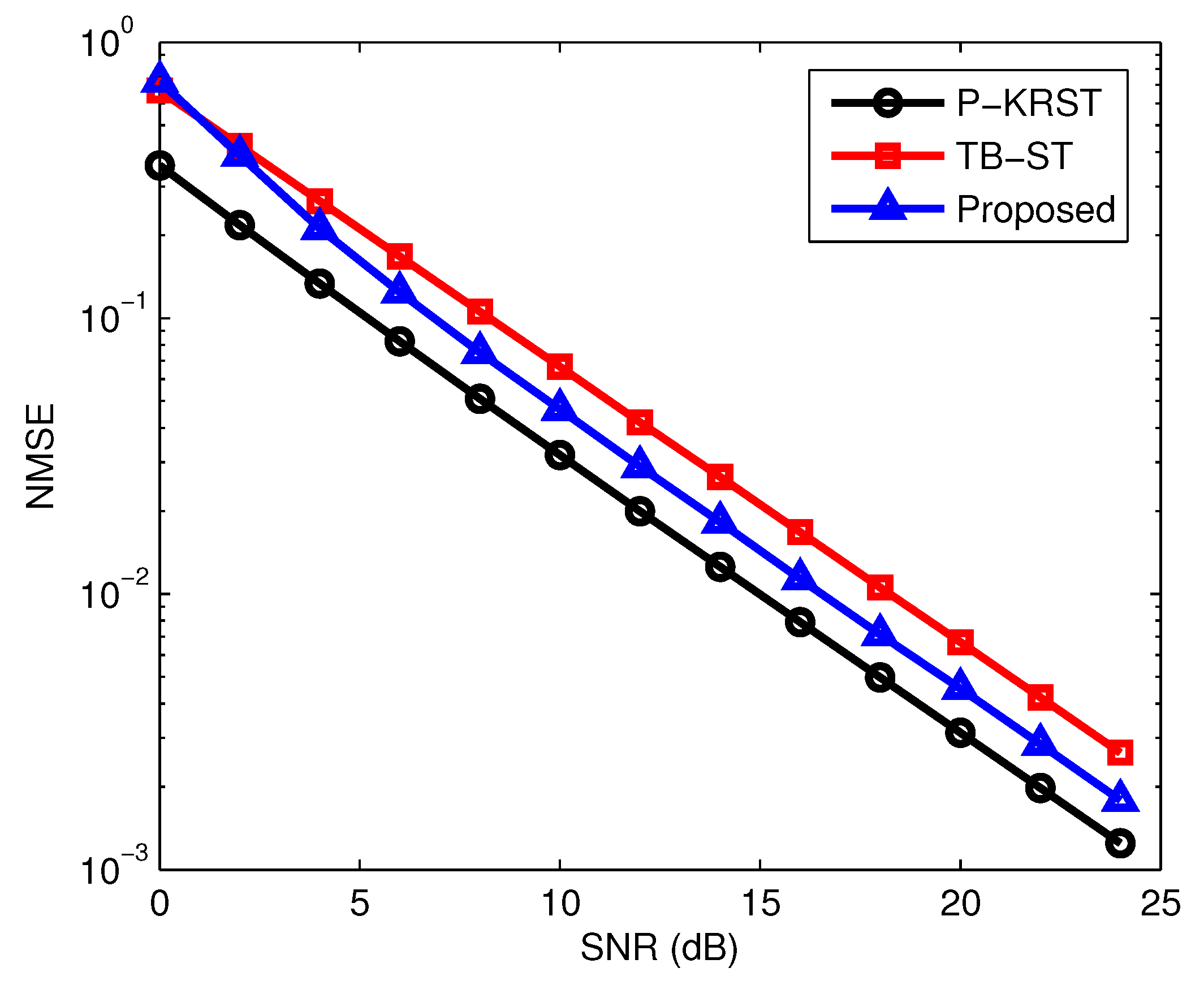

In the second example, we evaluate the estimation performance of the proposed semi-blind receiver in terms of the bit error rate (BER) and the NMSE of channel estimation. In particular, we compare it with the PARAFAC-based receiver with the KRST (P-KRST) coding scheme in [8] and the training-based receiver with the space-time (TB-ST) coding scheme. For the TB-ST scheme, the symbol matrix is composed of two parts as in [9], i.e., the training symbol matrix and the unknown data symbol matrix.

denotes the length of the channel training sequence in the TB-ST receiver.

The transmission rates for the proposed coding scheme and the KRST coding scheme are and (data symbols per symbol period), respectively. However, the KRST coding scheme needs to know the first column of signal matrix to eliminate the scaling ambiguity, while the proposed coding scheme only needs to know to eliminate the scaling ambiguity. Thus, the efficient transmission rates for the proposed coding scheme and the KRST coding scheme are and , respectively. To ensure a fair comparison, the proposed coding scheme and the KRST coding scheme should keep the same efficient transmission rate, i.e., . Thus, the system design parameters in this example are set equal to , , and . For TB-ST coding scheme, we divide , where blocks and are used for channel training and data transmitting. Therefore, the length of the channel training sequence in the TB-ST receiver is .

The BER performance of the different receivers versus SNR is shown in Figure 3. It can be seen that the proposed semi-blind receiver outperforms the P-KRST and TB-ST receivers. The NMSE performance of the different receivers is shown in Figure 4. It can be seen from Figure 4 that the P-KRST receiver has the best channel estimation performance, and that the proposed semi-blind receiver yields a smaller NMSE than the TB-ST receiver. From [8], the per-iteration complexity of the PARAFAC-based receiver is

. The complexity of the TB-ST scheme can be estimated as

. The per-iteration complexity of the proposed O-LM algorithm is given at the end of Section 4. The TB-ST scheme has the lowest computational complexity due to the use of the channel training sequence. Due to the adoption of the simple KRST coding scheme, the PARAFAC-based receiver has a lower complexity than the proposed receiver. However, the TB-ST receiver requires a long channel training sequence and the PARAFAC-based receiver needs to know the first column or row of the signal matrix to eliminate the scaling ambiguity, whereas the proposed receiver only needs to know one symbol of the signal matrix.

In the third example, we evaluated and compared the performance of the traditional ALS (T-ALS) and optimized LM (O-LM) algorithms. We assume the system design parameters

and

. A correlated MIMO channel is considered in this example, and the channel matrix

is modeled as in [35], where

denotes the normalized correlation coefficient with magnitude

. We consider

(non-correlation) and

(strong correlation), respectively. For each Monte Carlo run, the T-ALS algorithm is initialized with ten different random matrices as in [20, 36]. The estimation performance is evaluated after selecting the best initialization, which is the one that results in the minimum value of

.

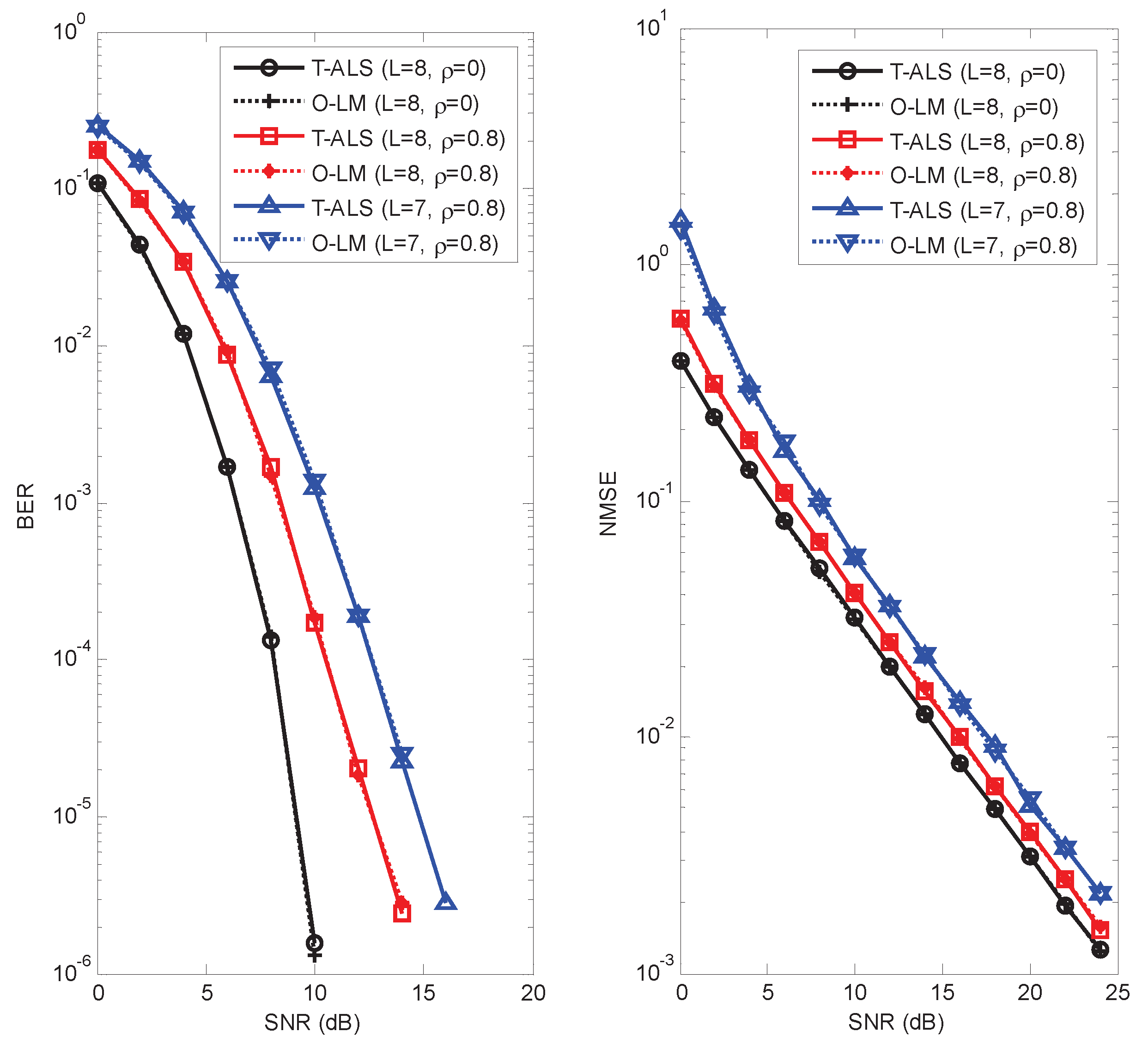

We observe from

Figure 5 that the T-ALS and O-LM algorithms give a similar BER and NMSE performance, which means that these two algorithms converge to the same point. For the right subfigure of

Figure 5, the NMSE of the T-ALS and O-LM algorithms is also shown in

Table 1 for the sake of comparison. We can also observe from

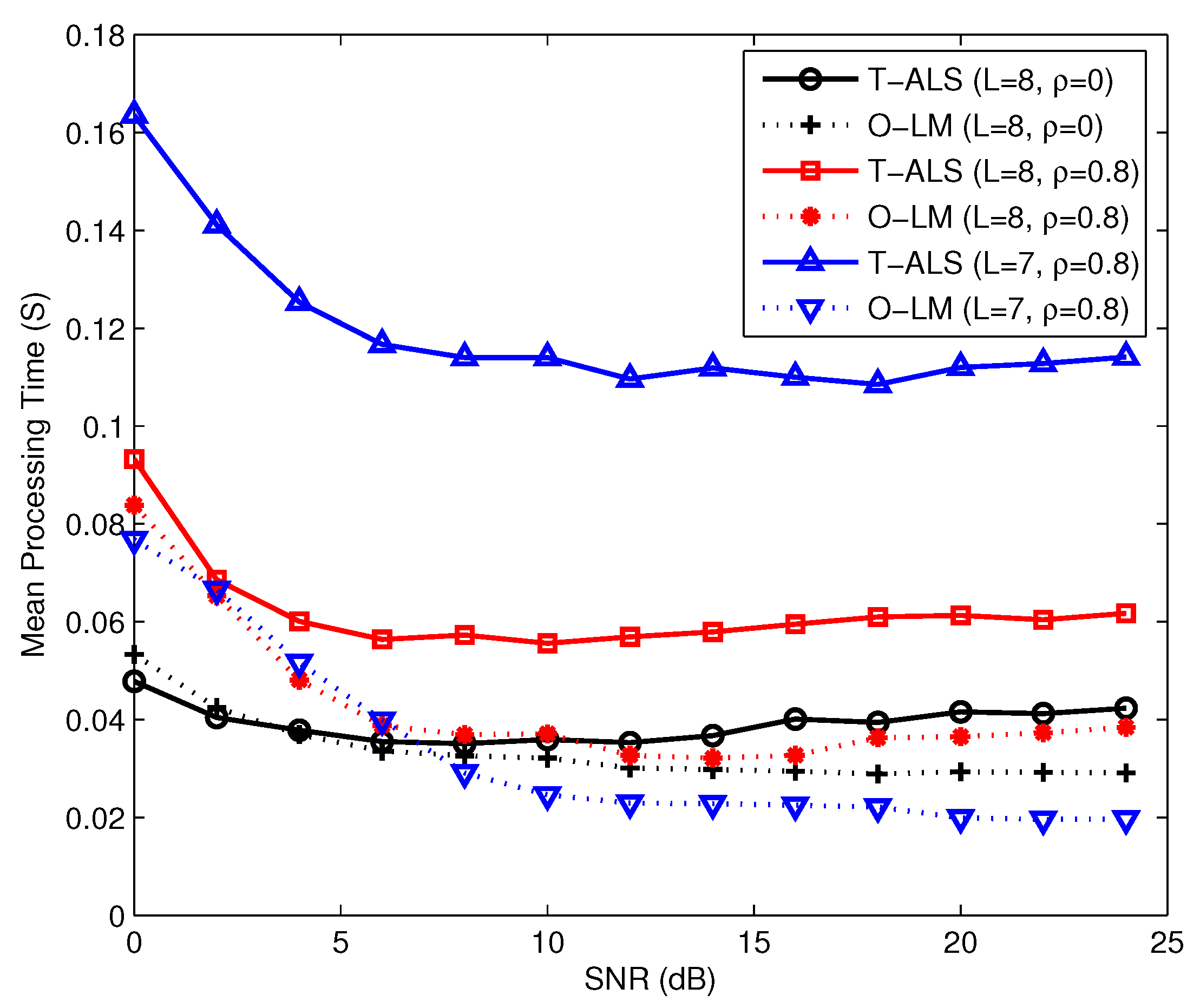

Figure 5 that for these two algorithms, BER and NMSE performance degrade when the channel becomes strongly correlated. The overall complexities of the O-LM algorithm and the ALS algorithm depend on the per-iteration complexity and the number of iterations. The per-iteration complexity of the O-LM algorithm is higher than that of the T-ALS algorithm. However, because of the robustness of the O-LM algorithm, the O-LM algorithm needs fewer iterations compared with the T-ALS algorithm. Therefore, the proposed algorithm has lower complexity compared with the existing T-ALS algorithm. The mean processing times required in the T-ALS and O-LM algorithms are shown in

Figure 6. We observe that the mean processing time required in the O-LM algorithm is shorter than that of the T-ALS algorithm, especially when the channel becomes strongly correlated. From

Figure 6 we can also observe that the advantage of the O-LM algorithm is obvious as

L decreases from 8 to 7 compared with the T-ALS algorithm.

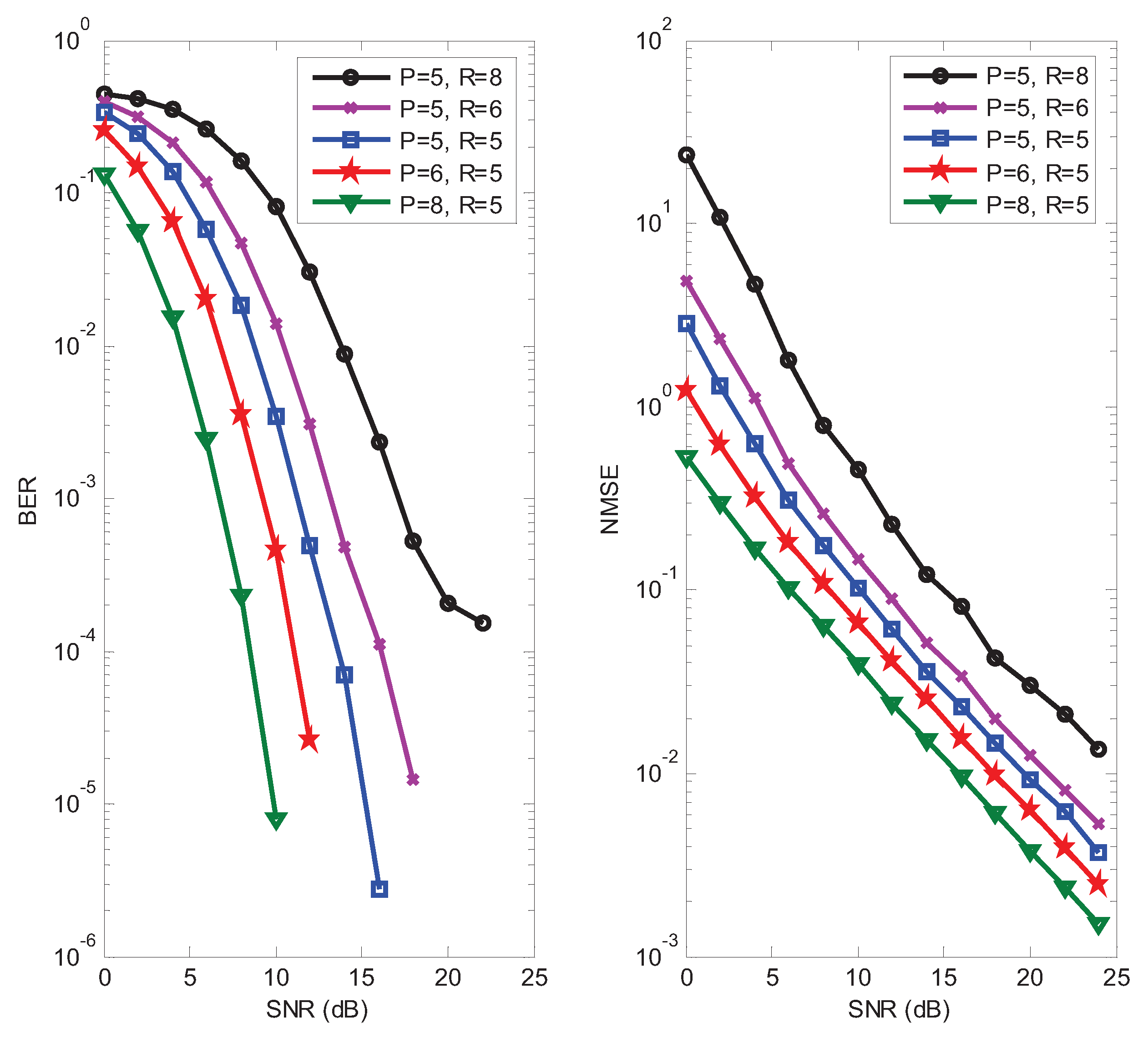

In the fourth example, the influence of design parameters

for the proposed receiver is studied. In the left subfigure of Figure 7, it can be seen that the BER decreases when P increases, which reflects the performance gain brought by time diversity. It can also be seen from this subfigure that the BER increases as the number of data streams R increases. The impact of the design parameters on the NMSE performance is shown in the right subfigure of Figure 7. As expected, we can observe that the NMSE decreases linearly as a function of P, and increases as R increases. Hence, appropriate values for the design parameters P and R can be selected according to the requirements on system performance and transmission rate.

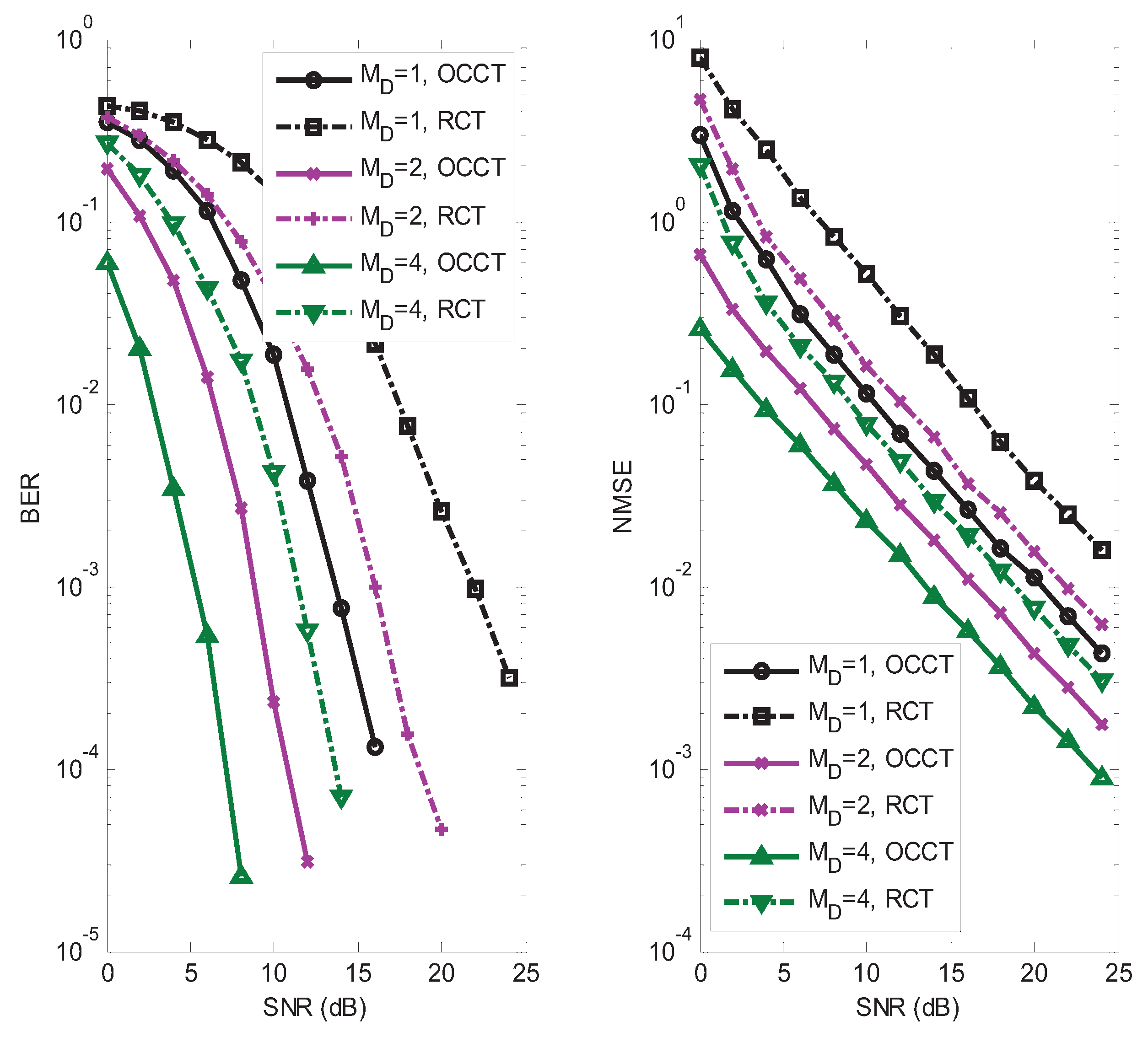

In the fifth example, we assume

,

, and

for our semi-blind receiver. The influence of the number of receive antennas is analyzed. We also compare the performance of our chosen coding tensor (OCCT) with that of the random coding tensor (RCT), whose entries are circularly-symmetric Gaussian random variables. In Figure 8, it can be seen that both the BER and NMSE decrease when the number of receive antennas increases, which reflects the performance gain brought by receive diversity. We also observe from Figure 8 that OCCT performs better than RCT. Although OCCT is suboptimal, this choice has good symbol and channel identifiability properties, which is advantageous from a receiver design viewpoint.

In the sixth example, we studied the estimation performance of two different transmission schemes for our semi-blind receiver. The default values of the system parameters were set to

and

. In scheme 1, we assume

,

, and

. Three different antenna-to-slot allocation matrices are given as follows:

In scheme 2, we assume

,

, and

. Three different antenna-to-slot allocation matrices are given as follows:

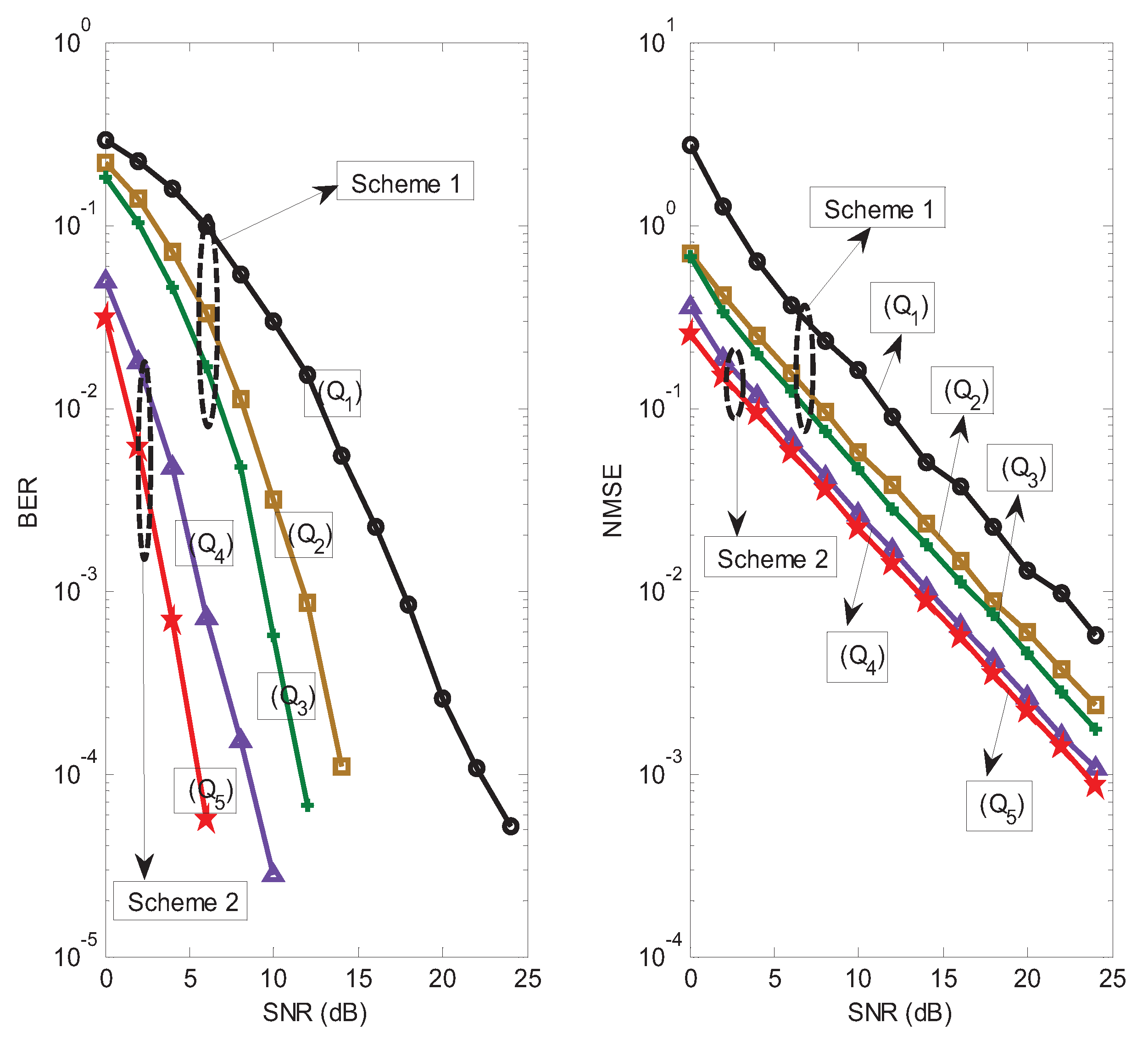

The BER and NMSE performance of the proposed receiver for different schemes is shown in Figure 9. For scheme 1, the proposed receiver with

has a better BER and NMSE performance than that of the proposed receiver with

. The reason is that the allocation matrix

provides a higher transmit spatial diversity gain than the allocation matrix

. For the same reason, the allocation matrix

outperforms

, and the allocation matrix

outperforms

. We also observe in Figure 9 that scheme 2 has better BER and NMSE performance than scheme 1. The reason is that scheme 2 provides a higher coding diversity than scheme 1. It is worth noting that scheme 1 has a higher spectral efficiency than scheme 2. The transmission rates for scheme 1 and scheme 2 are about 5/6 and 3/7 (data symbols per symbol period), respectively. In summary, a desired tradeoff between estimation performance and transmission rate can be obtained by designing a suitable scheme.

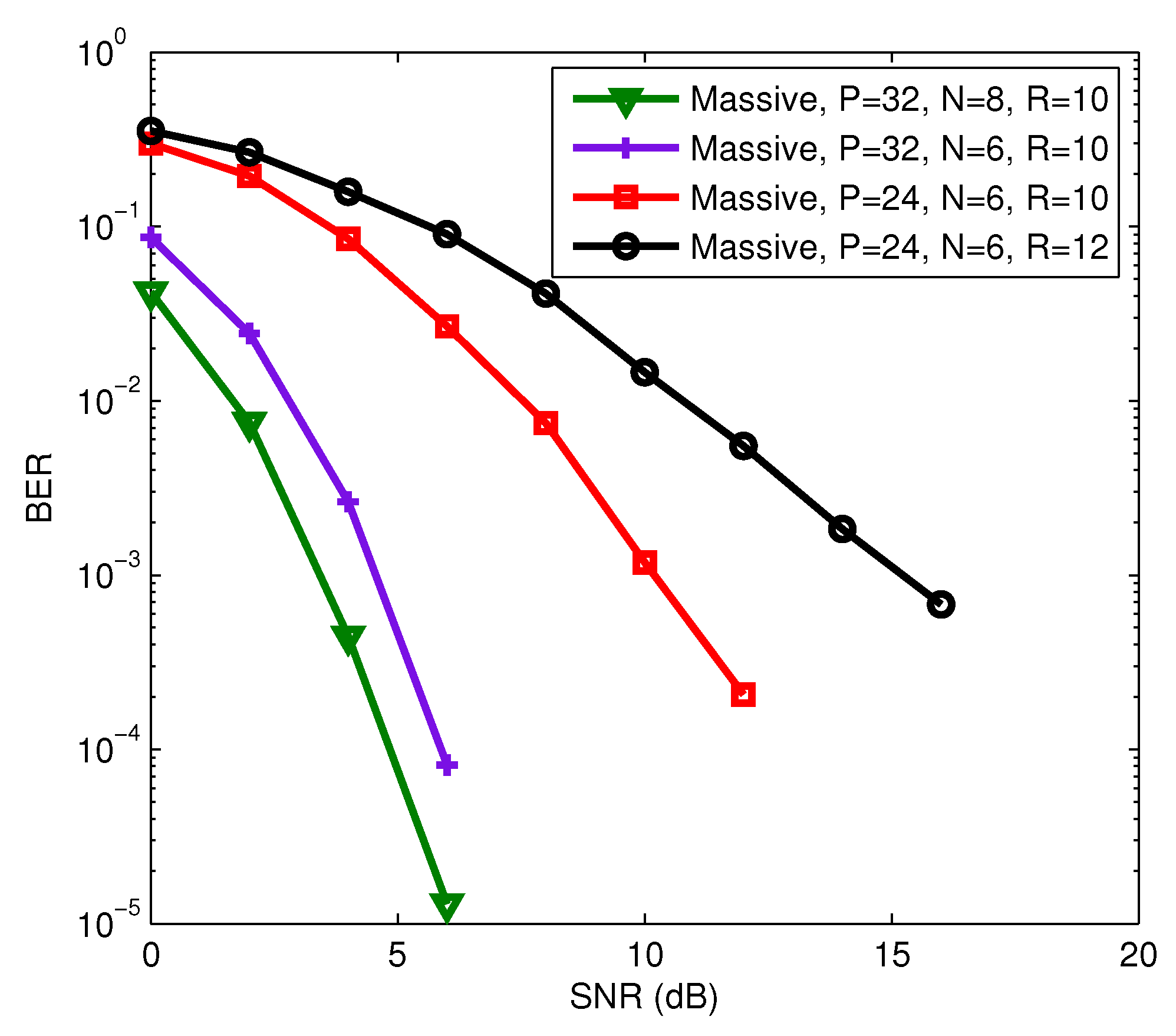

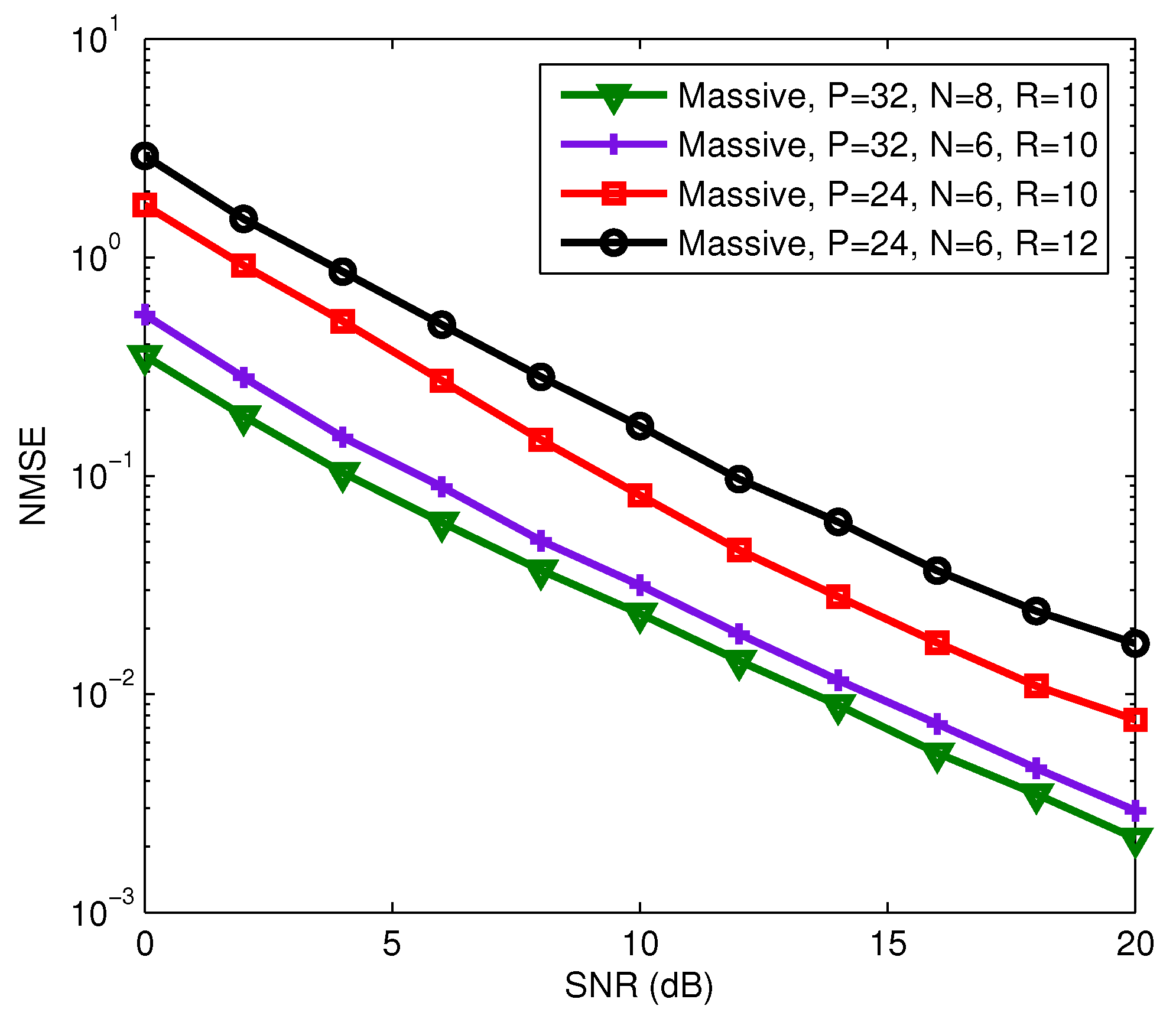

In the final example, a multi-user massive MIMO system with a fully-connected hybrid precoding architecture is considered, where

,

, and

for all

. The carrier frequency of this system is set to 28 GHz [37], and

. We assume that AoAs/AoDs are uniformly distributed in

. For the considered multi-user massive MIMO system, we also evaluate the estimation performance of the proposed receiver in terms of the BER and the NMSE of channel estimation. It can be seen from Figure 10 and Figure 11 that the BER and NMSE of the proposed semi-blind receiver decrease as P and N increase, and increase as R increases. Increasing P reduces the transmission rate, whereas changing N has no effect on the transmission rate. This means that we can improve the estimation performance of the proposed semi-blind receiver by increasing N if the channel remains constant over a long time interval before changing to another realization. We also observe from Figure 10 and Figure 11 that the proposed semi-blind receiver still performs well for joint symbol and channel estimation even with shorter codes and information symbol sequences and a larger number of data streams, i.e.,

,

, and

.