WiFreeze: Multiresolution Scalograms for Freezing of Gait Detection in Parkinson’s Leveraging 5G Spectrum with Deep Learning

Abstract

1. Introduction

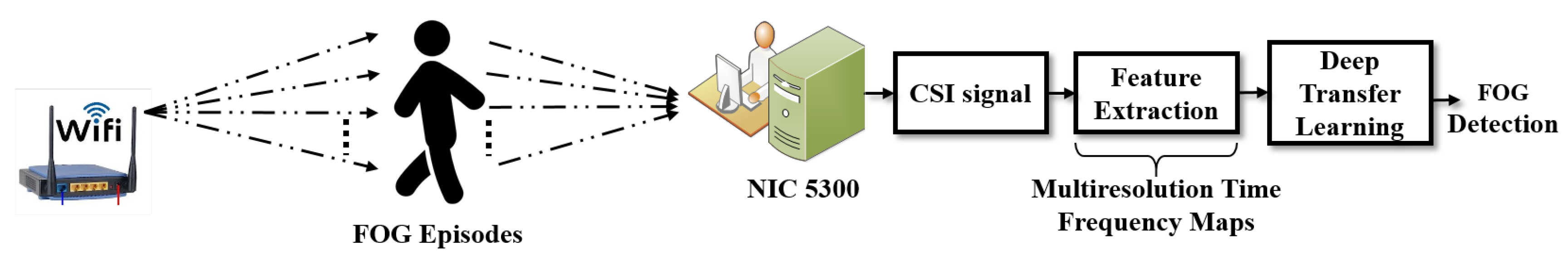

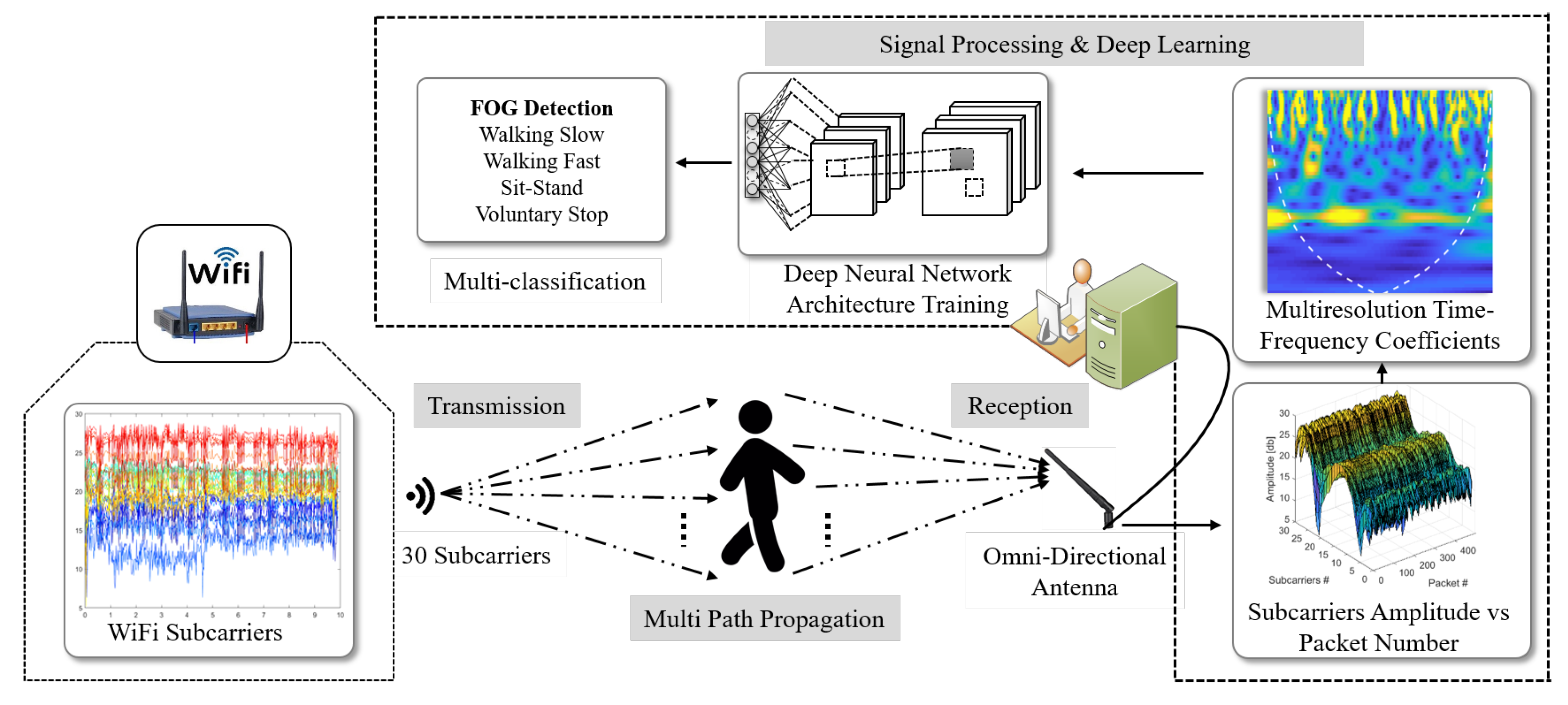

- Proposed a WiFi sensing system that uses deep learning to classify FOG against other activities, including “voluntary stop”, with high multi-class accuracy.

- Used multiresolution scalograms of subcarrier CSI amplitudes as discriminating features for FOG detection, because they capture transients in CSI signals at different resolutions.

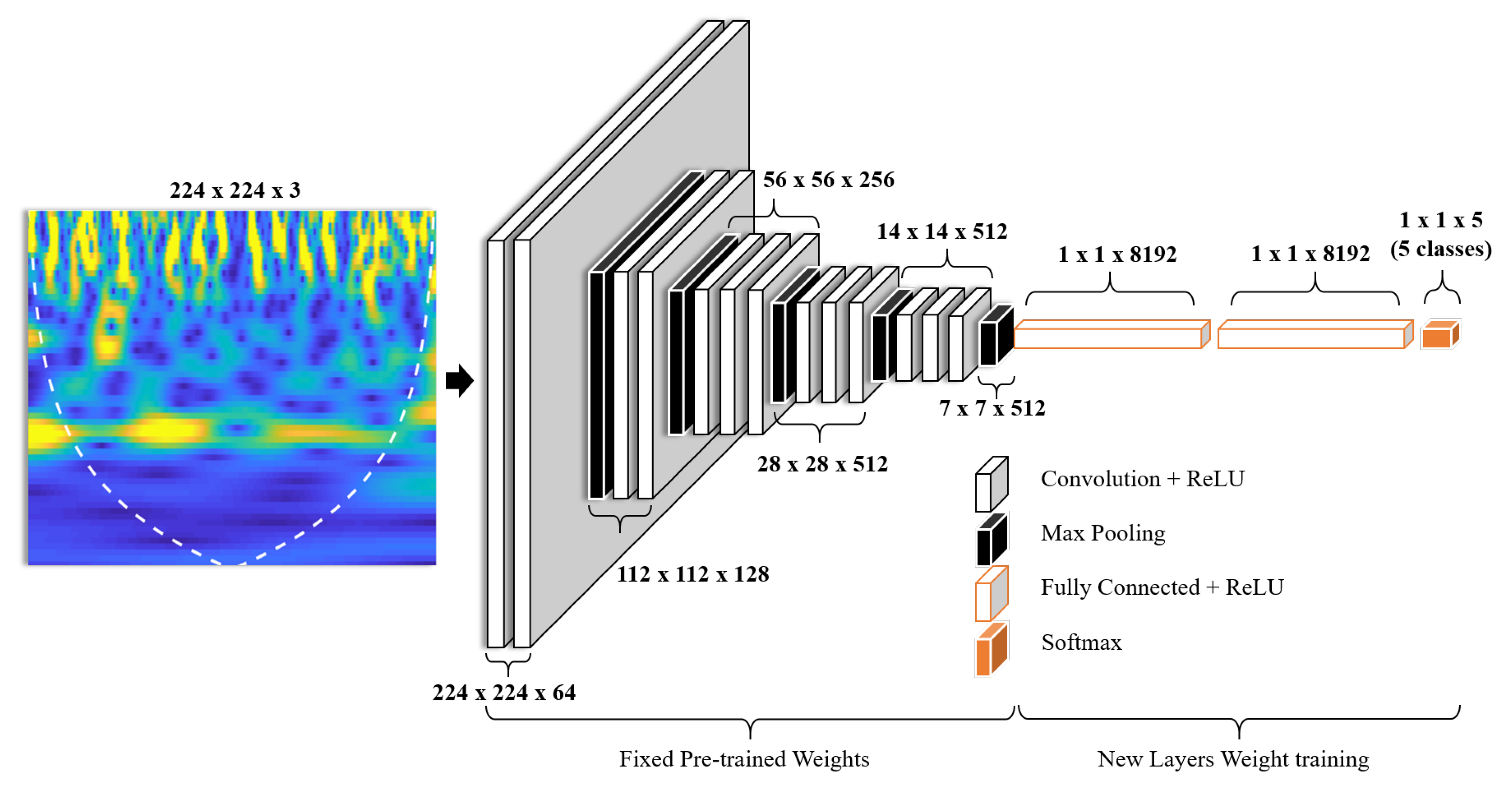

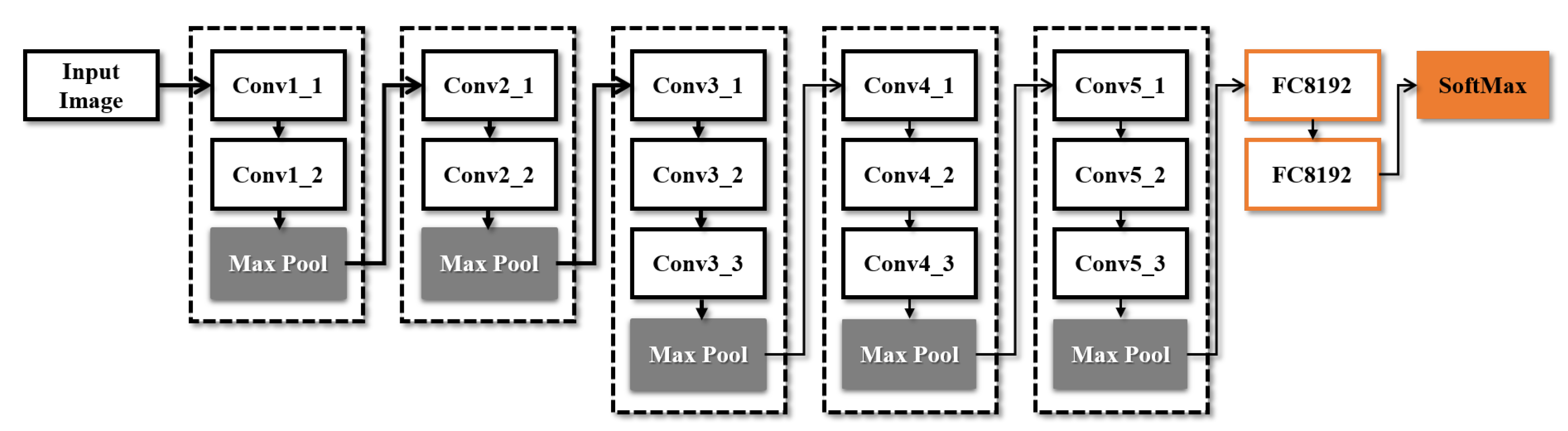

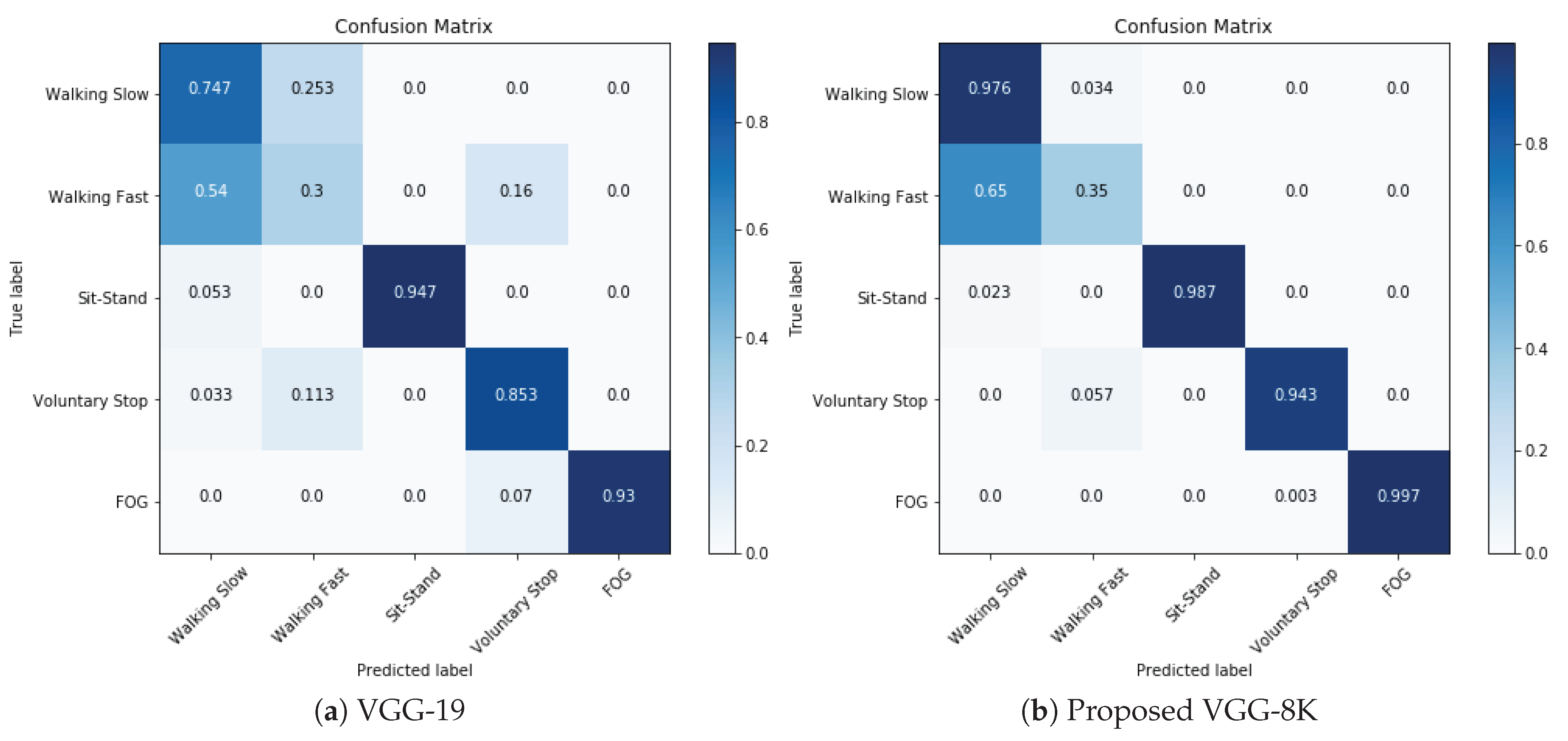

- Designed a very deep CNN architecture, VGG-8K, with 8192 neurons in each of the fully connected layers and trained with transfer learning, to achieve the highest FOG classification accuracy among the state-of-the-art deep CNN models compared.

2. Channel State Information Preliminaries

3. Methodology

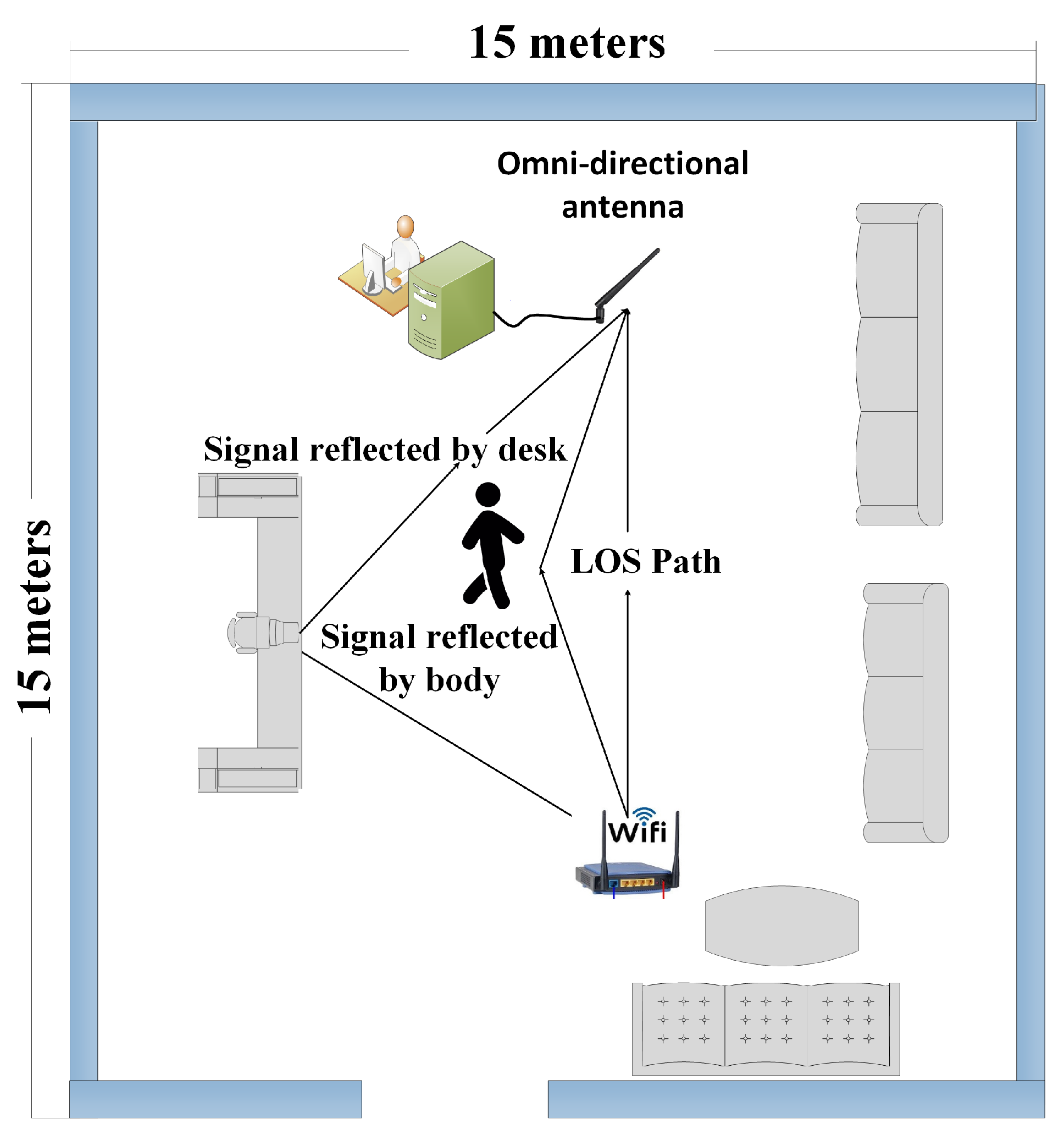

3.1. FOG Detection Set-Up

3.2. Experimental Procedure

4. The Proposed WiFreeze System

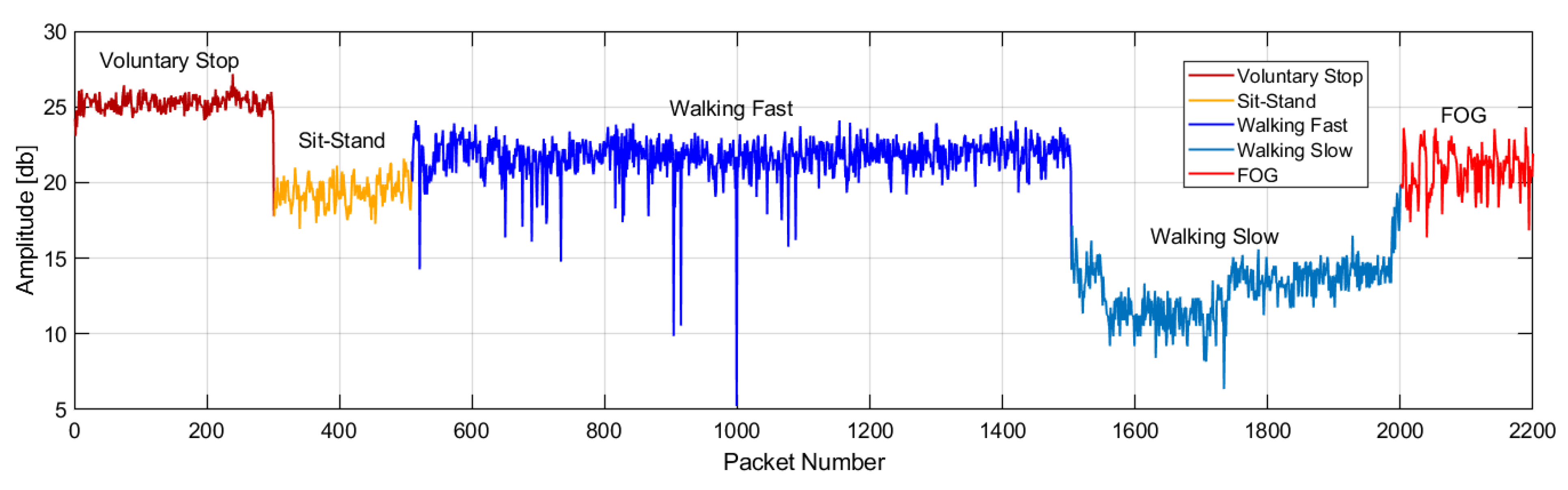

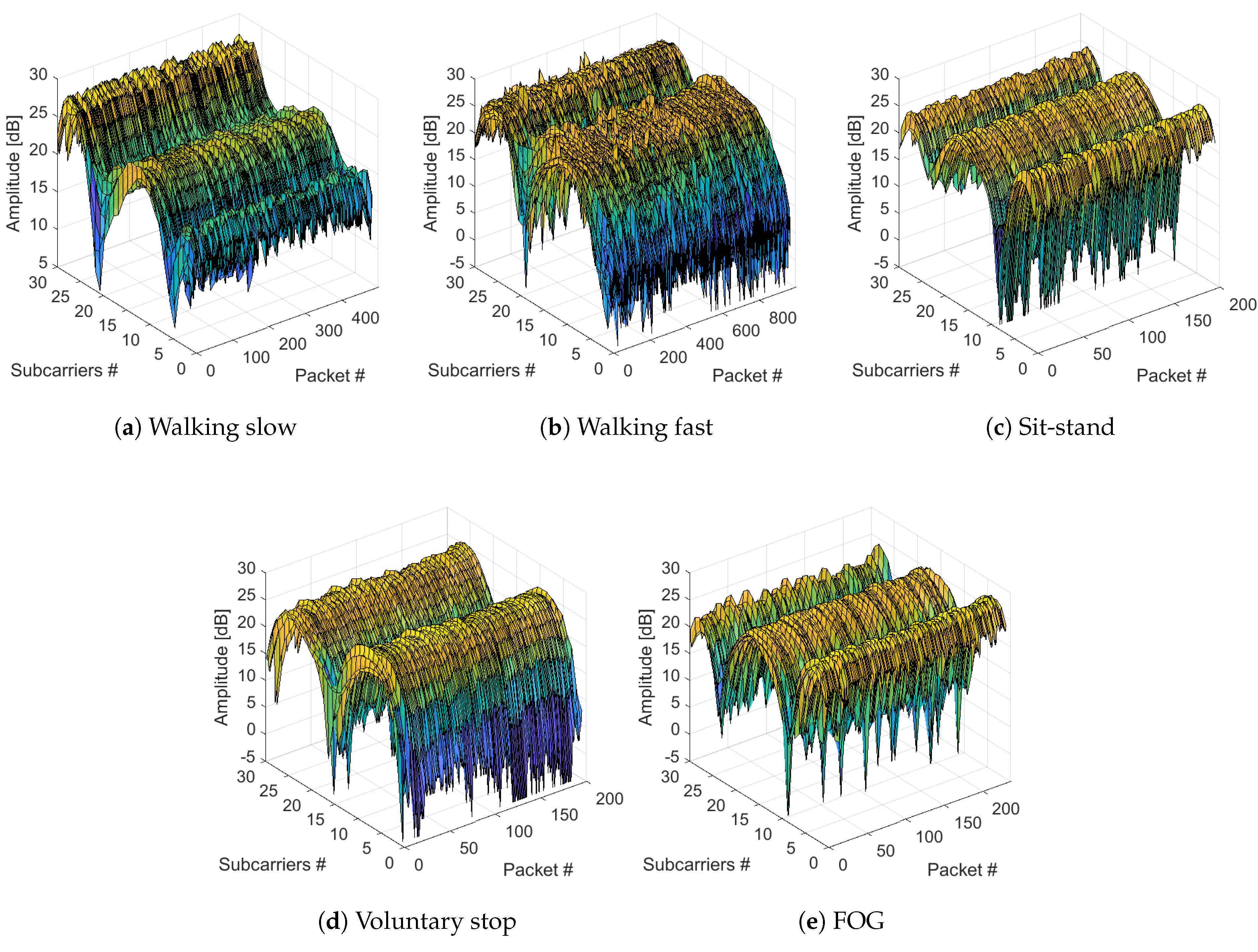

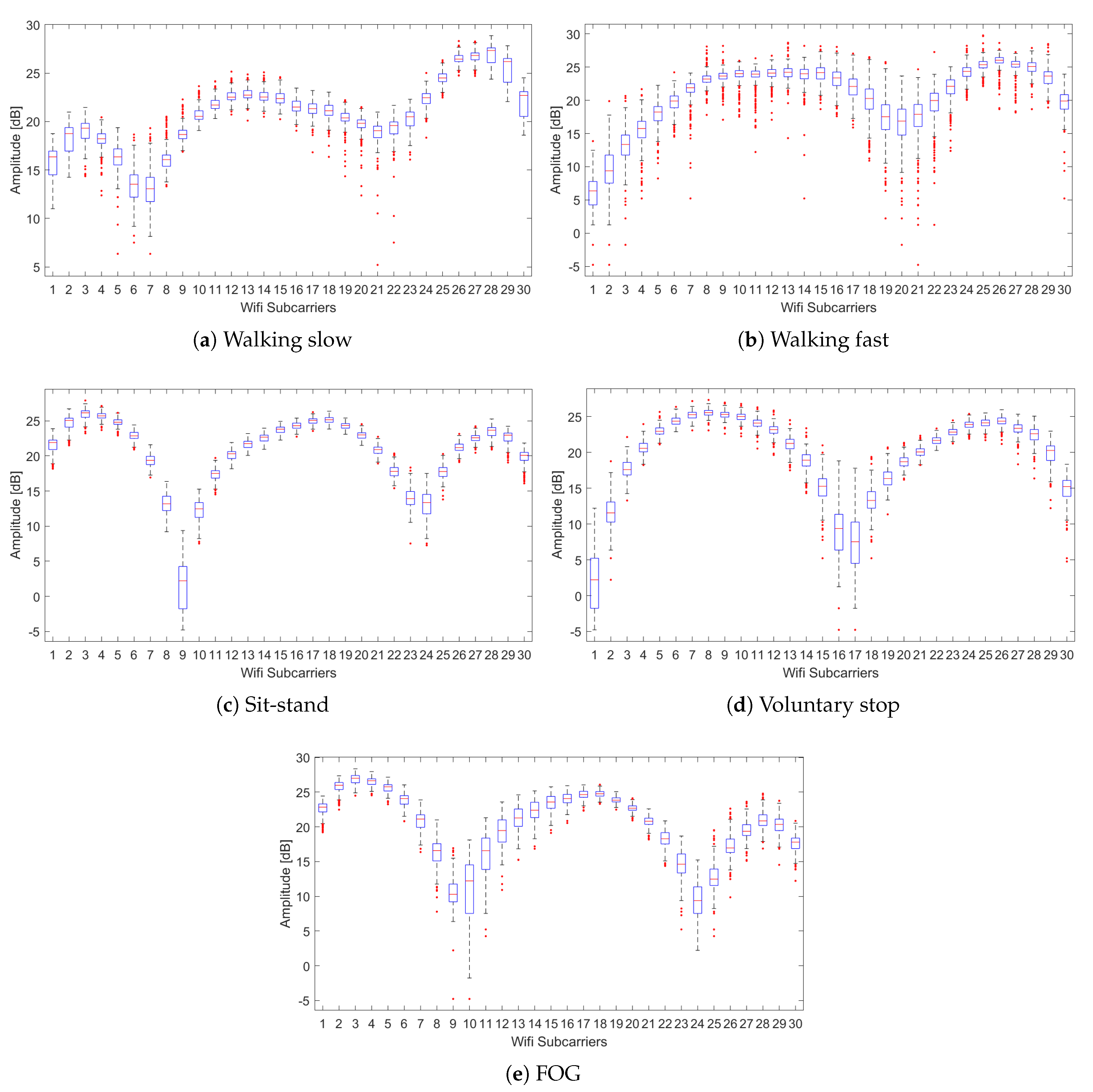

4.1. CSI for FOG and Activities

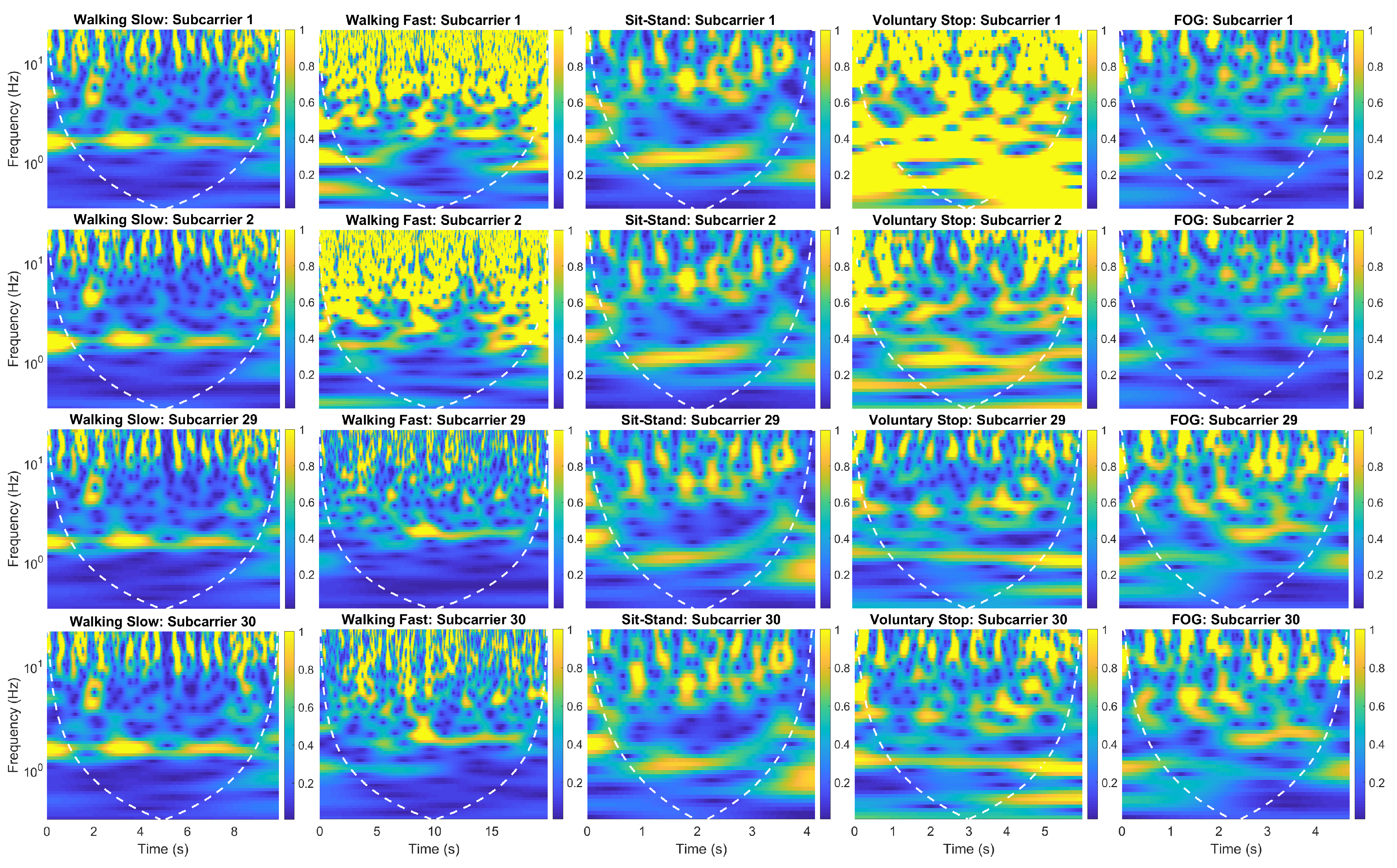

4.2. Multiresolution Time-Frequency Scalograms
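A scalogram is the squared magnitude of a continuous wavelet transform, so each row shows how much energy the signal carries around one frequency over time. The sketch below is a minimal illustration of that idea, not the WiFreeze pipeline: it swaps in an analytic Morlet wavelet (the references point to generalized Morse wavelets), and the function name `morlet_scalogram`, the 100 Hz sampling rate, and the synthetic two-tone signal standing in for a subcarrier CSI amplitude are all assumptions made for the example.

```python
import numpy as np


def morlet_scalogram(x, fs, freqs, w0=6.0):
    """Squared magnitude of a Morlet CWT of x at the given centre frequencies (Hz).

    Illustrative helper (hypothetical name); computed with the FFT, so the
    signal is treated as periodic and edges are affected by wrap-around.
    """
    x = np.asarray(x, dtype=float)
    x = x - x.mean()                      # remove the DC offset
    n = x.size
    spectrum = np.fft.fft(x)
    omega = 2.0 * np.pi * np.fft.fftfreq(n, d=1.0 / fs)  # angular frequency, rad/s
    power = np.empty((len(freqs), n))
    for i, f in enumerate(freqs):
        scale = w0 / (2.0 * np.pi * f)    # scale whose peak response sits at f
        # Analytic Morlet wavelet in the frequency domain (positive frequencies only).
        psi_hat = np.where(omega > 0, np.exp(-0.5 * (scale * omega - w0) ** 2), 0.0)
        coeffs = np.fft.ifft(spectrum * psi_hat)
        power[i] = np.abs(coeffs) ** 2
    return power


# Synthetic stand-in for one subcarrier amplitude series (not real CSI data):
# a 2 Hz oscillation that switches abruptly to 8 Hz halfway through.
fs = 100.0
t = np.arange(1000) / fs
x = np.where(t < 5.0, np.sin(2 * np.pi * 2 * t), np.sin(2 * np.pi * 8 * t))

# Log-spaced frequencies give finer frequency resolution at the low end and
# finer time resolution at the high end.
freqs = np.geomspace(1.0, 16.0, 33)
power = morlet_scalogram(x, fs, freqs)

print(freqs[power[:, 250].argmax()], freqs[power[:, 750].argmax()])
```

In this toy signal the dominant row moves from about 2 Hz before the switch to about 8 Hz after it, which is the kind of transient a scalogram image makes visible to a CNN.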

4.3. The Proposed Deep Network for WiFreeze System
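The proposed VGG-8K uses 8192 neurons in each fully connected layer. To give a feel for what that width costs, the following back-of-the-envelope sketch counts the trainable parameters of a classifier head at 4096 and 8192 neurons. It assumes a VGG16-style convolutional base with a 7 × 7 × 512 output for 224 × 224 inputs, two hidden fully connected layers, and a five-class output matching the five activities in the results table; none of these layer details are confirmed here, and the helper names are hypothetical.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def classifier_head_params(flat_features, fc_width, n_classes):
    """Parameters of a head: flat -> fc_width -> fc_width -> n_classes."""
    sizes = [flat_features, fc_width, fc_width, n_classes]
    return sum(dense_params(a, b) for a, b in zip(sizes, sizes[1:]))


FLAT = 7 * 7 * 512   # assumed VGG16-style feature map, flattened
N_CLASSES = 5        # walking slow, walking fast, sit-stand, voluntary stop, FOG

head_4k = classifier_head_params(FLAT, 4096, N_CLASSES)
head_8k = classifier_head_params(FLAT, 8192, N_CLASSES)

print(f"4096-wide head: {head_4k:,} parameters")
print(f"8192-wide head: {head_8k:,} parameters")
```

Under these assumptions the wider head has more than twice as many parameters, because the second hidden layer grows quadratically with the width while the first grows linearly.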

5. Results and Discussion

6. Conclusions

Author Contributions

Conflicts of Interest

References

- Parkinson’s UK. The Incidence and Prevalence of Parkinson’s in the UK: Results from the Clinical Practice Research Datalink Summary Report. Available online: https://www.parkinsons.org.uk/sites/default/files/2018-01/CS2960%20Incidence%20and%20prevalence%20report%20branding%20summary%20report.pdf (accessed on 5 August 2019).

- Prateek, G.V.; Skog, I.; McNeely, M.E.; Duncan, R.P.; Earhart, G.M.; Nehorai, A. Modeling, Detecting, and Tracking Freezing of Gait in Parkinson Disease Using Inertial Sensors. IEEE Trans. Biomed. Eng. 2018, 65, 2152–2161.

- Li, G.; Ma, J.; Cui, S.; He, Y.; Xiao, Q.; Liu, J.; Chen, S. Parkinson’s disease in China: A forty-year growing track of bedside work. Transl. Neurodegener. 2019, 8, 22.

- Braak, H.; Ghebremedhin, E.; Rüb, U.; Bratzke, H.; Del Tredici, K. Stages in the development of Parkinson’s disease-related pathology. Cell Tissue Res. 2004, 318, 121–134.

- Shulman, L.M.; Gruber-Baldini, A.L.; Anderson, K.E.; Vaughan, C.G.; Reich, S.G.; Fishman, P.S.; Weiner, W.J. The evolution of disability in Parkinson disease. Mov. Disord. 2008, 23, 790–796.

- Giladi, N.; Nieuwboer, A. Understanding and treating freezing of gait in parkinsonism, proposed working definition, and setting the stage. Mov. Disord. Off. J. Mov. Disord. Soc. 2008, 23, S423–S425.

- Bloem, B.R.; Hausdorff, J.M.; Visser, J.E.; Giladi, N. Falls and freezing of gait in Parkinson’s disease: A review of two interconnected, episodic phenomena. Mov. Disord. Off. J. Mov. Disord. Soc. 2004, 19, 871–884.

- Nieuwboer, A.; Rochester, L.; Herman, T.; Vandenberghe, W.; Emil, G.E.; Thomaes, T.; Giladi, N. Reliability of the new freezing of gait questionnaire: Agreement between patients with Parkinson’s disease and their carers. Gait Posture 2009, 30, 459–463.

- Camps, J.; Samà, A.; Martín, M.; Rodríguez-Martín, D.; Pérez-López, C.; Moreno Arostegui, J.M.; Cabestany, J.; Català, A.; Alcaine, S.; Mestre, B.; et al. Deep learning for freezing of gait detection in Parkinson’s disease patients in their homes using a waist-worn inertial measurement unit. Knowl.-Based Syst. 2018, 139, 119–131.

- Samà, A.; Rodríguez-Martín, D.; Pérez-López, C.; Català, A.; Alcaine, S.; Mestre, B.; Prats, A.; Crespo, M.C.; Bayés, À. Determining the optimal features in freezing of gait detection through a single waist accelerometer in home environments. Pattern Recognit. Lett. 2018, 105, 135–143.

- Kim, H.B.; Lee, H.J.; Lee, W.W.; Kim, S.K.; Jeon, H.S.; Park, H.Y.; Shin, C.W.; Yi, W.J.; Jeon, B.; Park, K.S. Validation of Freezing-of-Gait Monitoring Using Smartphone. Telemed. e-Health 2018, 24, 899–907.

- Capecci, M.; Pepa, L.; Verdini, F.; Ceravolo, M.G. A smartphone-based architecture to detect and quantify freezing of gait in Parkinson’s disease. Gait Posture 2016, 50, 28–33.

- Pepa, L.; Ciabattoni, L.; Verdini, F.; Capecci, M.; Ceravolo, M.G. Smartphone based Fuzzy Logic freezing of gait detection in Parkinson’s Disease. In Proceedings of the MESA 2014—10th IEEE/ASME International Conference on Mechatronic and Embedded Systems and Applications, Senigallia, Italy, 10–12 September 2014.

- Mitoma, H.; Hayashi, R.; Yanagisawa, N.; Tsukagoshi, H. Characteristics of parkinsonian and ataxic gaits: A study using surface electromyograms, angular displacements and floor reaction forces. J. Neurol. Sci. 2000, 174, 22–39.

- Nieuwboer, A.; Dom, R.; De Weerdt, W.; Desloovere, K.; Janssens, L.; Stijn, V. Electromyographic profiles of gait prior to onset of freezing episodes in patients with Parkinson’s disease. Brain 2004, 127, 1650–1660.

- Plotnik, M.; Giladi, N.; Balash, Y.; Peretz, C.; Hausdorff, J.M. Is freezing of gait in Parkinson’s disease related to asymmetric motor function? Ann. Neurol. Off. J. Am. Neurol. Assoc. Child Neurol. Soc. 2005, 57, 656–663.

- Hausdorff, J.; Schaafsma, J.; Balash, Y.; Bartels, A.; Gurevich, T.; Giladi, N. Impaired regulation of stride variability in Parkinson’s disease subjects with freezing of gait. Exp. Brain Res. 2003, 149, 187–194.

- Amini, A.; Banitsas, K.; Hosseinzadeh, S. A new technique for foot-off and foot contact detection in a gait cycle based on the knee joint angle using microsoft kinect v2. In Proceedings of the 2017 IEEE EMBS International Conference on Biomedical & Health Informatics (BHI), Orlando, FL, USA, 16–19 February 2017; pp. 153–156.

- Bigy, A.A.M.; Banitsas, K.; Badii, A.; Cosmas, J. Recognition of postures and Freezing of Gait in Parkinson’s disease patients using Microsoft Kinect sensor. In Proceedings of the 2015 7th International IEEE/EMBS Conference on Neural Engineering (NER), Montpellier, France, 22–24 April 2015; pp. 731–734.

- Qian, K.; Wu, C.; Yang, Z.; Liu, Y.; He, F.; Xing, T. Enabling contactless detection of moving humans with dynamic speeds using CSI. ACM Trans. Embed. Comput. Syst. (TECS) 2018, 17, 52.

- Soltanaghaei, E.; Kalyanaraman, A.; Whitehouse, K. Peripheral wifi vision: Exploiting multipath reflections for more sensitive human sensing. In Proceedings of the 4th International on Workshop on Physical Analytics, Niagara Falls, NY, USA, 19 June 2017; pp. 13–18.

- Zhou, R.; Lu, X.; Zhao, P.; Chen, J. Device-free presence detection and localization with SVM and CSI fingerprinting. IEEE Sens. J. 2017, 17, 7990–7999.

- Zhang, F.; Niu, K.; Xiong, J.; Jin, B.; Gu, T.; Jiang, Y.; Zhang, D. Towards a Diffraction-based Sensing Approach on Human Activity Recognition. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2019, 3, 33.

- Wang, W.; Liu, A.X.; Shahzad, M.; Ling, K.; Lu, S. Understanding and modeling of wifi signal based human activity recognition. In Proceedings of the 21st Annual International Conference on Mobile Computing and Networking, Paris, France, 7–11 September 2015; pp. 65–76.

- Wang, Y.; Liu, J.; Chen, Y.; Gruteser, M.; Yang, J.; Liu, H. E-eyes: Device-free location-oriented activity identification using fine-grained wifi signatures. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking, Maui, HI, USA, 7–11 September 2014; pp. 617–628.

- Gu, Y.; Zhang, Y.; Huang, M.; Ren, F. Your WiFi Knows You Fall: A Channel Data-driven Device-free Fall Sensing System. In Proceedings of the 2018 5th IEEE International Conference on Cloud Computing and Intelligence Systems (CCIS), Nanjing, China, 23–25 November 2018; pp. 943–947.

- Tian, Y.; Lee, G.H.; He, H.; Hsu, C.Y.; Katabi, D. RF-based fall monitoring using convolutional neural networks. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2018, 2, 137.

- Wang, Y.; Wu, K.; Ni, L.M. Wifall: Device-free fall detection by wireless networks. IEEE Trans. Mob. Comput. 2016, 16, 581–594.

- Wang, H.; Zhang, D.; Wang, Y.; Ma, J.; Wang, Y.; Li, S. RT-Fall: A real-time and contactless fall detection system with commodity WiFi devices. IEEE Trans. Mob. Comput. 2016, 16, 511–526.

- Venkatnarayan, R.H.; Page, G.; Shahzad, M. Multi-user gesture recognition using WiFi. In Proceedings of the 16th Annual International Conference on Mobile Systems, Applications, and Services, Munich, Germany, 10–15 June 2018; pp. 401–413.

- Zheng, Y.; Zhang, Y.; Qian, K.; Zhang, G.; Liu, Y.; Wu, C.; Yang, Z. Zero-Effort Cross-Domain Gesture Recognition with Wi-Fi. In Proceedings of the 17th Annual International Conference on Mobile Systems, Applications, and Services, Seoul, Korea, 17–21 June 2019; pp. 313–325.

- Islam, M.T.; Nirjon, S. Wi-Fringe: Leveraging Text Semantics in WiFi CSI-Based Device-Free Named Gesture Recognition. arXiv 2019, arXiv:1908.06803.

- Nkabiti, K.P.; Chen, Y.; Sultan, K.; Armand, B. A Deep Bidirectional LSTM Recurrent Neural Networks for Identifying Humans Indoors Using Channel State Information. In Proceedings of the 2019 28th Wireless and Optical Communications Conference (WOCC), Beijing, China, 9–10 May 2019; pp. 1–5.

- Chen, Y.; Dong, W.; Gao, Y.; Liu, X.; Gu, T. Rapid: A multimodal and device-free approach using noise estimation for robust person identification. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2017, 1, 41.

- Cheng, L.; Wang, J. How can I guard my AP?: Non-intrusive user identification for mobile devices using WiFi signals. In Proceedings of the 17th ACM International Symposium on Mobile Ad Hoc Networking and Computing, Paderborn, Germany, 5–8 July 2016; pp. 91–100.

- Ministry of Industry and Information Technology of the People’s Republic of China. Ministry of Industry and Information Technology on the Fifth Generation International Mobile Telecommunications System (IMT-2020) Using 3300–3600 MHz and 4800–5000 MHz Band Related Matters Notice; Ministry of Industry and Information Technology of the People’s Republic of China: Beijing, China, 6 June 2017.

- Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M.; et al. Tensorflow: A system for large-scale machine learning. In Proceedings of the 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), Savannah, GA, USA, 2–4 November 2016; pp. 265–283.

- Chollet, F.; et al. Keras: The python deep learning library. Astrophysics Source Code Library. 2018. Available online: https://ascl.net/1806.022 (accessed on 5 August 2019).

- Carneiro, T.; Da Nóbrega, R.V.M.; Nepomuceno, T.; Bian, G.B.; De Albuquerque, V.H.C.; Reboucas Filho, P.P. Performance Analysis of Google Colaboratory as a Tool for Accelerating Deep Learning Applications. IEEE Access 2018, 6, 61677–61685.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 770–778.

- Rao, R. Wavelet transforms. In Encyclopedia of Imaging Science and Technology; John Wiley & Sons: Hoboken, NJ, USA, 2002.

- Lilly, J.M.; Olhede, S.C. Generalized Morse wavelets as a superfamily of analytic wavelets. IEEE Trans. Signal Process. 2012, 60, 6036–6041.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. Imagenet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 248–255.

- Zhang, W.; Du, T.; Wang, J. Deep learning over multi-field categorical data. In European Conference on Information Retrieval; Springer: Cham, Switzerland, 2016; pp. 45–57.

- Camps, J.; Samà, A.; Martín, M.; Rodríguez-Martín, D.; Pérez-López, C.; Alcaine, S.; Mestre, B.; Prats, A.; Crespo, M.C.; Cabestany, J.; et al. Deep learning for detecting freezing of gait episodes in Parkinson’s disease based on accelerometers. In International Work-Conference on Artificial Neural Networks; Springer: Cham, Switzerland, 2017; pp. 344–355.

- Rodríguez-Martín, D.; Samà, A.; Pérez-López, C.; Català, A.; Moreno Arostegui, J.M.; Cabestany, J.; Bayés, À.; Alcaine, S.; Mestre, B.; Prats, A.; et al. Home detection of freezing of gait using support vector machines through a single waist-worn triaxial accelerometer. PLoS ONE 2017, 12, e0171764.

- Masiala, S.; Huijbers, W.; Atzmueller, M. Feature-Set-Engineering for Detecting Freezing of Gait in Parkinson’s Disease using Deep Recurrent Neural Networks. arXiv 2019, arXiv:1909.03428.

- Amini, A.; Banitsas, K.; Young, W.R. Kinect4FOG: Monitoring and improving mobility in people with Parkinson’s using a novel system incorporating the Microsoft Kinect v2. Disabil. Rehabil. Assist. Technol. 2019, 14, 566–573.

- Sun, R.; Wang, Z.; Martens, K.E.; Lewis, S. Convolutional 3D Attention Network for Video Based Freezing of Gait Recognition. In Proceedings of the 2018 Digital Image Computing: Techniques and Applications (DICTA), Canberra, Australia, 10–13 December 2018; pp. 1–7.

- Hu, K.; Wang, Z.; Mei, S.; Ehgoetz, K.; Yao, T.; Lewis, S.; Feng, D. Vision-based freezing of gait detection with anatomic directed graph representation. IEEE J. Biomed. Health Inform. 2019.

- Hu, K.; Wang, Z.; Wang, W.; Martens, K.A.E.; Wang, L.; Tan, T.; Lewis, S.J.; Feng, D.D. Graph Sequence Recurrent Neural Network for Vision-based Freezing of Gait Detection. IEEE Trans. Image Process. 2019.

- Kim, H.; Lee, H.J.; Lee, W.; Kwon, S.; Kim, S.K.; Jeon, H.S.; Park, H.; Shin, C.W.; Yi, W.J.; Jeon, B.S.; et al. Unconstrained detection of freezing of Gait in Parkinson’s disease patients using smartphone. In Proceedings of the 2015 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Milan, Italy, 25–29 August 2015; pp. 3751–3754.

| Deep Neural Network | Walking Slow Accuracy [CI] (%) | Walking Fast Accuracy [CI] (%) | Sit-Stand Accuracy [CI] (%) | Voluntary Stop Accuracy [CI] (%) | FOG Accuracy [CI] (%) | Multi-Class Accuracy [CI] (%) |
|---|---|---|---|---|---|---|
| ResNet50 | 45.00 [42.35–47.64] | 25.00 [22.62–27.31] | 15.00 [13.00–16.90] | 45.00 [42.33–47.65] | 67.00 [64.54–69.51] | 39.40 [36.77–42.00] |
| ResNet101 | 60.00 [57.42–62.61] | 27.00 [24.57–29.37] | 30.00 [27.50–32.44] | 27.00 [24.57–29.37] | 54.00 [51.36–56.66] | 39.60 [36.97–42.21] |
| VGG16 | 60.00 [57.42–62.61] | 35.00 [32.42–37.54] | 81.00 [79.00–83.09] | 87.00 [85.31–88.79] | 91.00 [89.60–92.53] | 70.80 [68.43–73.23] |
| VGG19 | 74.67 [72.42–76.99] | 30.00 [27.50–32.44] | 94.67 [93.60–95.87] | 85.33 [83.55–87.22] | 93.00 [91.77–94.36] | 75.53 [73.31–77.82] |
| Proposed VGG-8K | 97.60 [96.69–98.42] | 35.00 [32.42–37.54] | 98.70 [98.26–99.30] | 94.30 [93.20–95.54] | 99.70 [99.65–99.99] | 85.06 [83.26–86.96] |
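The multi-class column is numerically consistent with the unweighted mean of the five per-class accuracies in each row. The short check below reproduces the reported values from the table; that the authors computed the column this way is an inference from the numbers, not something the table states, and the variable names are illustrative.

```python
# Per-class accuracies (%) copied from the table, in column order:
# walking slow, walking fast, sit-stand, voluntary stop, FOG.
per_class = {
    "ResNet50": [45.00, 25.00, 15.00, 45.00, 67.00],
    "ResNet101": [60.00, 27.00, 30.00, 27.00, 54.00],
    "VGG16": [60.00, 35.00, 81.00, 87.00, 91.00],
    "VGG19": [74.67, 30.00, 94.67, 85.33, 93.00],
    "Proposed VGG-8K": [97.60, 35.00, 98.70, 94.30, 99.70],
}

# Multi-class accuracies (%) as reported in the last column.
reported = {
    "ResNet50": 39.40,
    "ResNet101": 39.60,
    "VGG16": 70.80,
    "VGG19": 75.53,
    "Proposed VGG-8K": 85.06,
}


def mean_accuracy(values):
    """Unweighted (macro) average of per-class accuracies."""
    return sum(values) / len(values)


for name, values in per_class.items():
    print(f"{name}: mean {mean_accuracy(values):.2f}, reported {reported[name]:.2f}")
```

Read this way, the 85.06% multi-class figure for VGG-8K is pulled down mainly by the 35.00% accuracy on the walking-fast class.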

| Authors | Detection System | Sensors | Patients | Algorithm | Sensitivity | Specificity | Accuracy |
|---|---|---|---|---|---|---|---|
| Prateek et al. [2] | Wearable devices | IMU | 16 | Likelihood ratio | 86.00% | - | 81.03% |
| Camps et al. [46] | Wearable devices | Accelerometer | 15 | CNN | 88.60% | 78.00% | 83.33% |
| Samà et al. [10] | Wearable devices | Accelerometer | 15 | Support vector machine | 91.81% | 87.45% | 89.60% |
| Rodríguez-Martín et al. [47] | Wearable devices | Accelerometer | 21 | Support vector machine | 88.10% | 80.10% | 84.00% |
| Camps et al. [9] | Wearable devices | IMU | 21 | CNN | 92.60% | 88.70% | 89.00% |
| Masiala et al. [48] | Wearable devices | Accelerometer | 10 | Deep RNN | 85.00% | 89.00% | - |
| Amini et al. [49] | Vision based | Camera, depth | 15 | Position offset tracking | - | - | 88.60% |
| Bigy et al. [19] | Vision based | Camera, depth | 7 | Body joint positions | - | - | 92.00% |
| Sun et al. [50] | Vision based | Camera | 45 | 3D CNN | 68.20% | 80.80% | 79.30% |
| Hu et al. [51] | Vision based | Camera | 45 | Graph CNN | 81.90% | 82.10% | 82.10% |
| Hu et al. [52] | Vision based | Camera | 45 | Deep RNN | 83.80% | 82.30% | 82.50% |
| Kim et al. [53] | Smartphone | Accelerometer, gyro | 15 | AdaBoost.M1 | 87.00% | 89.20% | 86.00% |
| Kim et al. [11] | Smartphone | Accelerometer, gyro | 32 | CNN | 93.80% | 90.10% | 92.00% |
| Capecci et al. [12] | Smartphone | Accelerometer | 20 | Spectrum, cadence | 87.57% | 94.97% | 92.86% |
| Pepa et al. [13] | Smartphone | Accelerometer | 18 | Fuzzy inference system | 89.00% | 97.00% | 93.00% |
| Proposed WiFreeze | WiFi 5G Spect. | Wireless sensing | 15 | Multi-Class Deep CNN | 97.08% | 100.00% | 99.70% |
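For readers comparing the three metric columns, the sketch below shows the standard definitions of sensitivity, specificity, and accuracy for a binary FOG versus non-FOG decision. The function name and the confusion-matrix counts are hypothetical values chosen for illustration; they are not taken from any study in the table.

```python
def binary_metrics(tp, fn, tn, fp):
    """Return (sensitivity, specificity, accuracy) from confusion-matrix counts.

    tp: FOG windows detected as FOG      fn: FOG windows missed
    tn: non-FOG windows left unflagged   fp: non-FOG windows flagged as FOG
    """
    sensitivity = tp / (tp + fn)            # share of true FOG that is detected
    specificity = tn / (tn + fp)            # share of non-FOG correctly rejected
    accuracy = (tp + tn) / (tp + fn + tn + fp)
    return sensitivity, specificity, accuracy


# Hypothetical counts, for illustration only.
sens, spec, acc = binary_metrics(tp=90, fn=10, tn=380, fp=20)
print(f"sensitivity={sens:.2%} specificity={spec:.2%} accuracy={acc:.2%}")
```

Because accuracy mixes both classes, it depends on how many FOG and non-FOG samples a study contains, which is one reason the three columns should be read together when comparing systems evaluated on different datasets.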

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tahir, A.; Ahmad, J.; Shah, S.A.; Morison, G.; Skelton, D.A.; Larijani, H.; Abbasi, Q.H.; Imran, M.A.; Gibson, R.M. WiFreeze: Multiresolution Scalograms for Freezing of Gait Detection in Parkinson’s Leveraging 5G Spectrum with Deep Learning. Electronics 2019, 8, 1433. https://doi.org/10.3390/electronics8121433