Fast and Accurate Memory Simulation by Integrating DRAMSim2 into McSimA+

Abstract

1. Introduction and Motivation

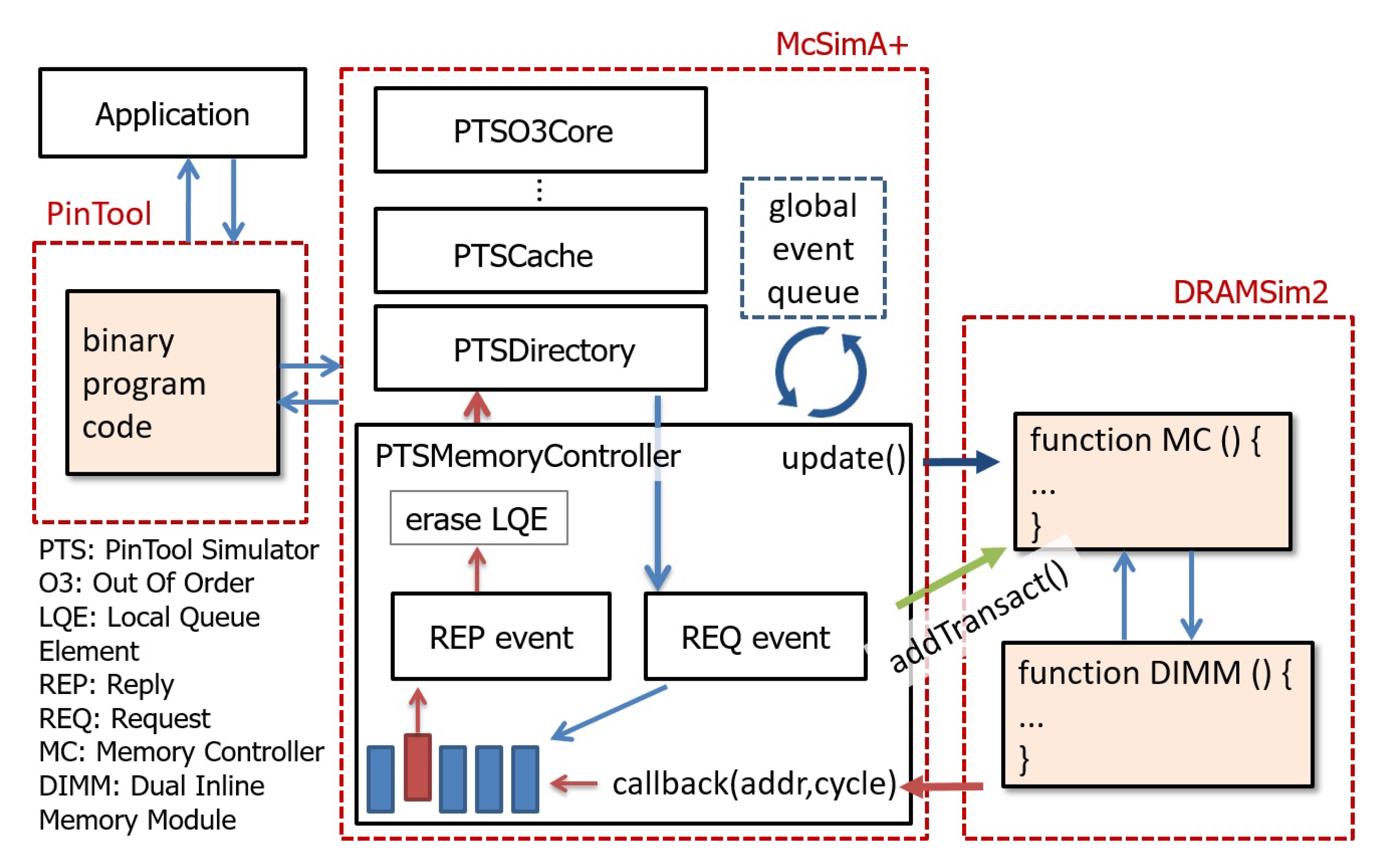

2. Simulators

3. Integration

4. Result Comparison and Verification

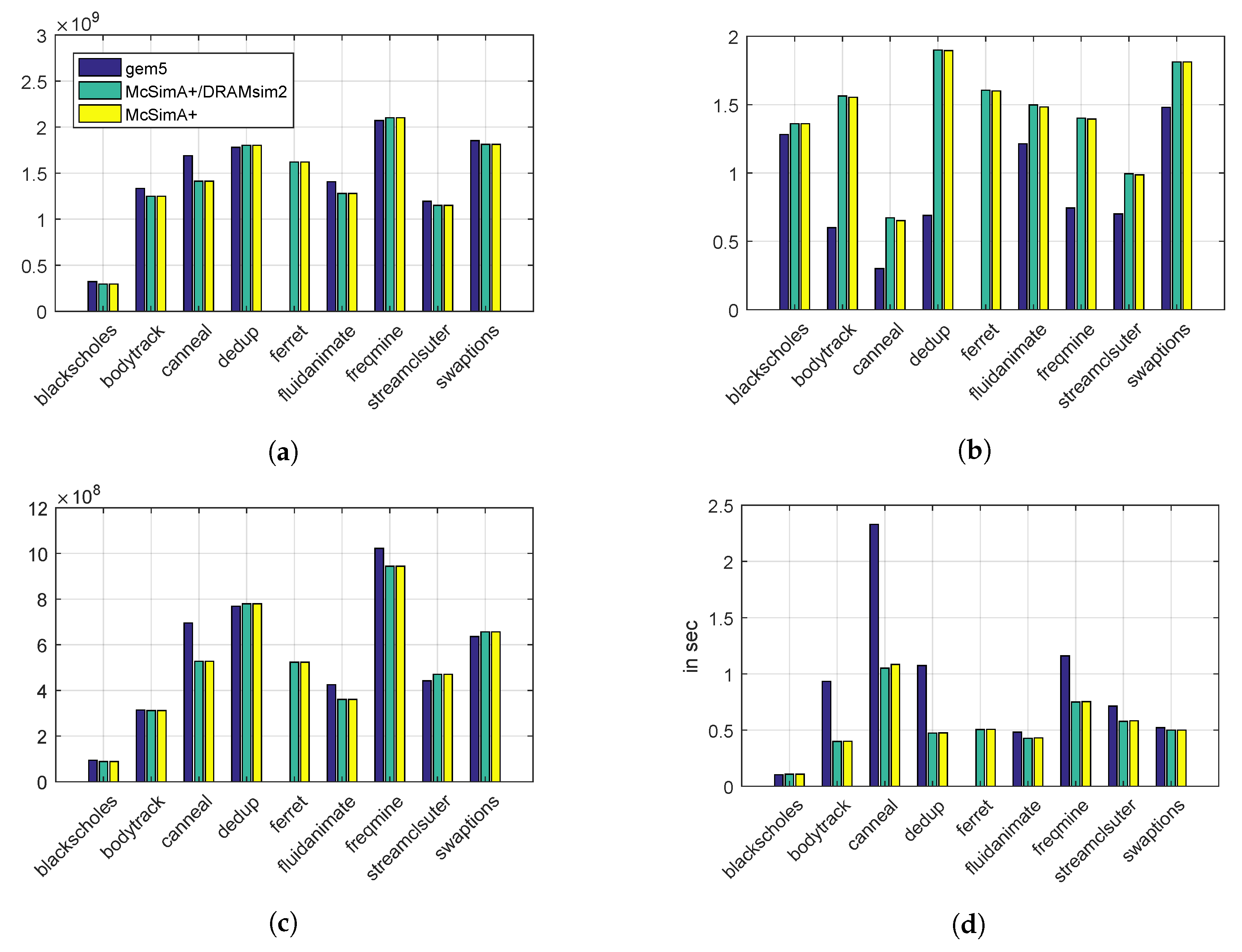

4.1. Simulated System Performance

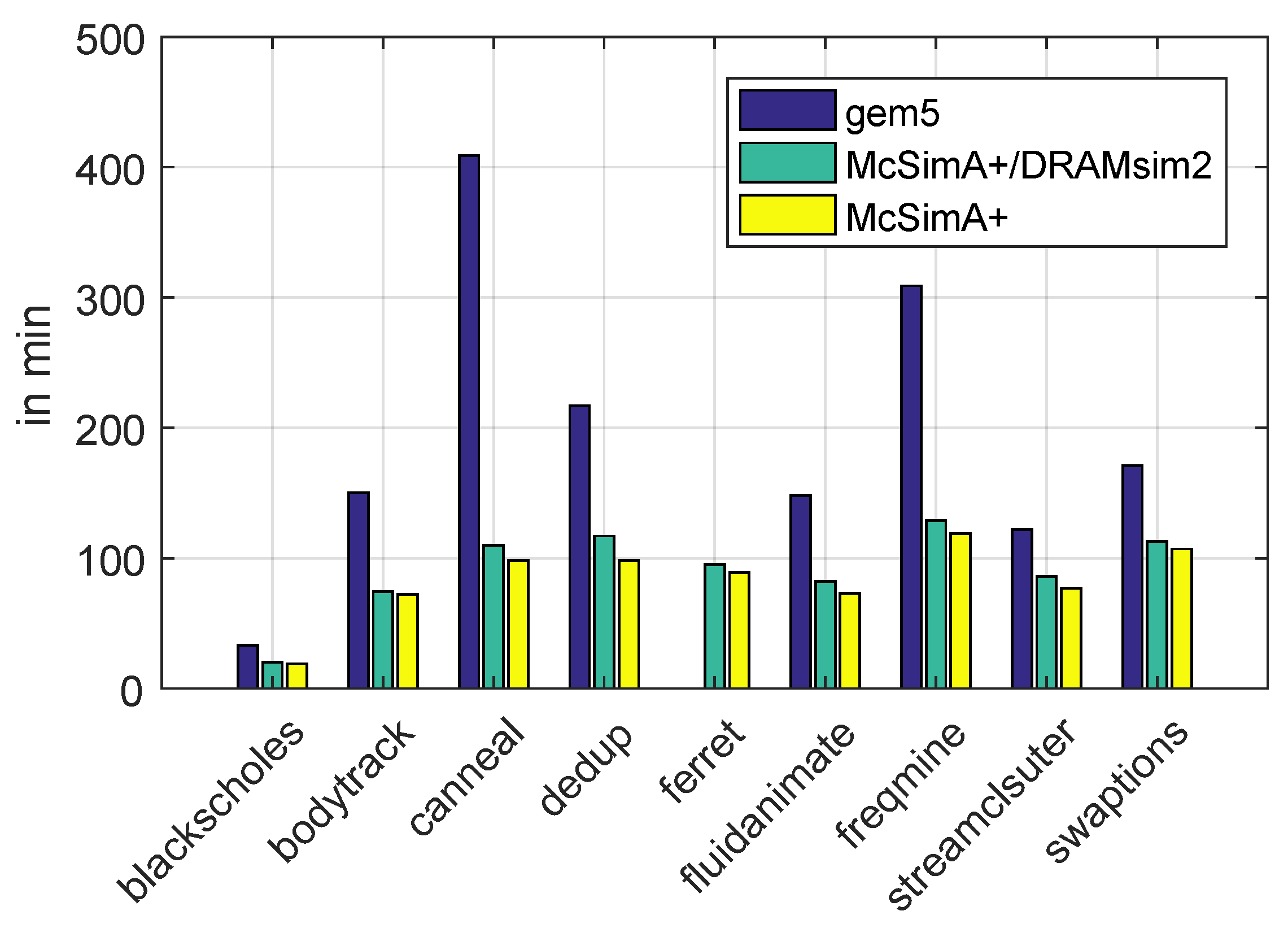

4.2. Simulation Performance

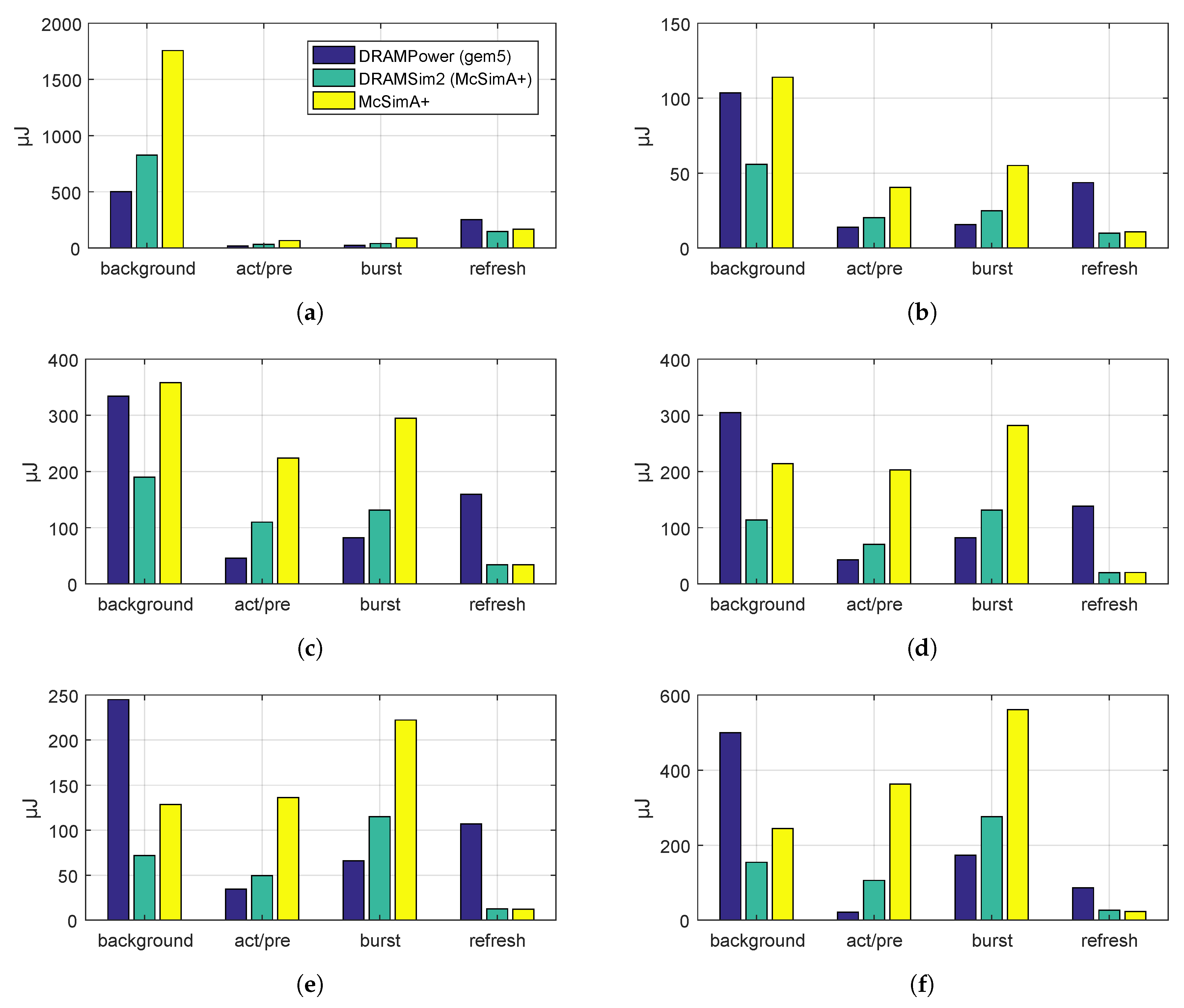

4.3. Memory Power Model

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Akram, A.; Sawalha, L. A Comparison of x86 Computer Architecture Simulators; Technical Report TR-CASRL-1-2016; Western Michigan University: Kalamazoo, MI, USA, 2016.

- Calculating Memory System Power for DDR. 2001. Available online: https://www.micron.com/~/media/documents/products/technical-note/dram/tn4603.pdf (accessed on 15 March 2018).

- Rosenfeld, P.; Cooper-Balis, E.; Jacob, B. DRAMSim2: A Cycle Accurate Memory System Simulator. IEEE Comput. Archit. Lett. 2011, 10, 16–19.

- Ahn, J.H.; Li, S.O.; Jouppi, N.P. McSimA+: A manycore simulator with application-level+ simulation and detailed microarchitecture modeling. In Proceedings of the 2013 IEEE International Symposium on Performance Analysis of Systems and Software (ISPASS), Austin, TX, USA, 21–23 April 2013; pp. 74–85.

- Binkert, N.; Beckmann, B.; Black, G.; Reinhardt, S.K.; Saidi, A.; Basu, A.; Hestness, J.; Hower, D.R.; Krishna, T.; Sardashti, S.; et al. The gem5 simulator. SIGARCH Comput. Archit. News 2011, 39, 1–7.

- Chandrasekar, K.; Akesson, B.; Goossens, K. Improved Power Modeling of DDR SDRAMs. In Proceedings of the 2011 14th Euromicro Conference on Digital System Design, Oulu, Finland, 31 August–2 September 2011; pp. 99–108.

- Luk, C.-K.; Cohn, R.; Muth, R.; Patil, H.; Klauser, A.; Lowney, G.; Wallace, S.; Reddi, V.J.; Hazelwood, K. Pin: Building Customized Program Analysis Tools with Dynamic Instrumentation. In Proceedings of the 2005 ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI), Chicago, IL, USA, 12–15 June 2005.

- Pagemap, from the Userspace Perspective. Available online: https://www.kernel.org/doc/Documentation/vm/pagemap.txt (accessed on 5 March 2018).

- Bienia, C.; Kumar, S.; Li, K. PARSEC vs. SPLASH-2: A Quantitative Comparison of Two Multithreaded Benchmark Suites on Chip-Multiprocessors. In Proceedings of the IEEE International Symposium on Workload Characterization, Seattle, WA, USA, 14–16 September 2008; pp. 47–56.

- DDR3 SDRAM Features. 2009. Available online: https://micron.com/~/media/documents/products/data-sheet/dram/ddr3/4gb_ddr3_sdram.pdf (accessed on 5 March 2018).

| Parameter | Simulated System |
|---|---|
| CPU model | Out-of-order (OOO) |
| CPU frequency | |
| Software threads | 1 |
| Memory controller | 1 |
| Memory modules (DIMM) | 1 |
| Main memory frequency | 800 |
| Main memory size | 2 |
| L1 data cache size | 32 kB |
| L2 cache size | 256 kB |

| | RADIX | ARRAY | SWAP-2 | SWAP-5 | SWAP-10 | STREAM |
|---|---|---|---|---|---|---|
| total instructions | 28 m | 3.4 m | 5.6 m | 2.3 m | 1.2 m | 1.25 m |
| memory reads | 52 k | 34 k | 163 k | 163 k | 131 k | 395 k |
| memory writes | 23 k | 13 k | 92 k | 92 k | 73 k | 125 k |
| mem transact./instr. | 0.026% | 1.4% | 4.5% | 11.3% | 17.3% | 42% |
| instr./sec (McSimA+) | 210 k | 173 k | 188 k | 140 k | 117 k | 104 k |
| instr./sec (combined) | 207 k | 161 k | 161 k | 118 k | 90 k | 66 k |
| instr./sec (gem5) | 178 k | 139 k | 115 k | 72 k | 53 k | 36 k |
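
The simulation-speed rows above can be compared directly. The following is a minimal sketch, not taken from the article, that derives two ratios from the printed (rounded) instr./sec values: the slowdown of the combined simulator relative to McSimA+ alone, and its speedup over gem5. The function and variable names are illustrative assumptions.

```python
# Simulation speed in thousands of instructions per second,
# copied from the rounded values in the table above.
BENCHMARKS = ["RADIX", "ARRAY", "SWAP-2", "SWAP-5", "SWAP-10", "STREAM"]
MCSIMA_KIPS = [210, 173, 188, 140, 117, 104]
COMBINED_KIPS = [207, 161, 161, 118, 90, 66]
GEM5_KIPS = [178, 139, 115, 72, 53, 36]


def speed_ratios():
    """Return per-benchmark ratios derived from the table rows.

    slowdown_vs_mcsima: fraction of speed lost by the combined simulator
                        compared with McSimA+ alone.
    speedup_vs_gem5:    combined speed divided by gem5 speed.
    """
    ratios = {}
    for name, mcsima, combined, gem5 in zip(
        BENCHMARKS, MCSIMA_KIPS, COMBINED_KIPS, GEM5_KIPS
    ):
        ratios[name] = {
            "slowdown_vs_mcsima": 1 - combined / mcsima,
            "speedup_vs_gem5": combined / gem5,
        }
    return ratios


if __name__ == "__main__":
    for name, r in speed_ratios().items():
        print(
            f"{name:8s} slowdown vs McSimA+: {r['slowdown_vs_mcsima']:.1%}  "
            f"speedup vs gem5: {r['speedup_vs_gem5']:.2f}x"
        )
```

Because the inputs are rounded table entries, the ratios are approximate. For STREAM, the most memory-intensive benchmark listed, they come out to roughly a 37% slowdown against McSimA+ alone and about a 1.8x speedup over gem5.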

| DRAMSim2 | DRAMPower | McSimA+ |
|---|---|---|
| background | ACT bg + PRE bg + ACT pdn + PRE pdn | standby |
| ACT/PRE | ACT + PRE | dynamic |
| burst | write + read | i/o |
| refresh | refresh + self-refresh | refresh |
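
Reading the table above as a correspondence between power categories, a component breakdown in DRAMPower terms can be folded into the four DRAMSim2 categories by summing the listed components. The sketch below illustrates that grouping only; the dictionary keys mirror the table labels, while the function name and the sample numbers are hypothetical and not taken from any of the simulators.

```python
# DRAMSim2 category -> DRAMPower components that are summed, per the table above.
DRAMSIM2_FROM_DRAMPOWER = {
    "background": ["ACT bg", "PRE bg", "ACT pdn", "PRE pdn"],
    "ACT/PRE": ["ACT", "PRE"],
    "burst": ["write", "read"],
    "refresh": ["refresh", "self-refresh"],
}

# DRAMSim2 category -> corresponding McSimA+ category, per the table above.
DRAMSIM2_TO_MCSIMA = {
    "background": "standby",
    "ACT/PRE": "dynamic",
    "burst": "i/o",
    "refresh": "refresh",
}


def group_drampower(components):
    """Sum DRAMPower-style components into DRAMSim2 categories.

    `components` maps a DRAMPower label to a number (any consistent unit).
    Labels missing from the input count as zero.
    """
    return {
        category: sum(components.get(label, 0.0) for label in labels)
        for category, labels in DRAMSIM2_FROM_DRAMPOWER.items()
    }


if __name__ == "__main__":
    # Hypothetical values, chosen only to show the grouping.
    sample = {"ACT bg": 1.0, "PRE bg": 2.0, "ACT": 4.0, "read": 8.0}
    for category, value in group_drampower(sample).items():
        print(f"{category:10s} ({DRAMSIM2_TO_MCSIMA[category]}): {value}")
```

Keeping the mapping in plain dictionaries makes the correspondence easy to audit against the table, at the cost of silently treating absent components as zero.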

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Bick, K.; Nguyen, D.T.; Lee, H.-J.; Kim, H. Fast and Accurate Memory Simulation by Integrating DRAMSim2 into McSimA+. Electronics 2018, 7, 152. https://doi.org/10.3390/electronics7080152
