The Enhanced Firefly Algorithm Based on Modified Exploitation and Exploration Mechanism

Abstract

1. Introduction

2. Firefly Algorithm

3. The Proposed Adaptive Approach for Firefly Algorithm

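| Algorithm 1: The Proposed Improved Firefly Algorithm |
|---|
| **Input:** cost function $f(\mathbf{x})$; initial population of $n$ fireflies $\mathbf{x}_i$, $i = 1, \dots, n$; maximum number of iterations $\mathit{Itr}_{\max}$; light absorption coefficient $\gamma$; randomness coefficient $\alpha$ |
| Generate the initial random population of fireflies |
| Initialize the light intensity $I_i$ at $\mathbf{x}_i$ by $f(\mathbf{x}_i)$ |
| **while** $t < \mathit{Itr}_{\max}$ **do** |
| &emsp;Determine the values of the adaptive parameters in Equations (3) and (4) |
| &emsp;**for** each firefly $i = 1, \dots, n$ **do** |
| &emsp;&emsp;**for** each firefly $j = 1, \dots, n$ **do** |
| &emsp;&emsp;&emsp;Evaluate the distance $r_{ij}$ between fireflies $i$ and $j$ |
| &emsp;&emsp;&emsp;Evaluate the attractiveness $\beta(r_{ij})$ |
| &emsp;&emsp;&emsp;**if** $I_j > I_i$ **then** move firefly $i$ towards firefly $j$ |
| &emsp;&emsp;&emsp;&emsp;Update the parameter values $(\gamma, \alpha)$ using Equations (3) and (4) |
| &emsp;&emsp;&emsp;&emsp;Evaluate the new solution as in Equation (5) |
| &emsp;&emsp;&emsp;**end if** |
| &emsp;&emsp;**end for** |
| &emsp;**end for** |
| &emsp;Update the light intensity |
| &emsp;Rank the fireflies and find the current global best |
| **end while** |
| **Output:** the best firefly solutions and the elapsed time |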
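The loop structure of Algorithm 1 can be sketched in Python as follows. This is a minimal sketch of the standard firefly update (attractiveness $\beta = \beta_0 e^{-\gamma r_{ij}^2}$ plus a uniform random step scaled by $\alpha$), written for minimisation so that a lower cost means a brighter firefly. The paper's adaptive rules in Equations (3), (4), and (5) are not reproduced here: the geometric decay of $\alpha$ (`alpha_decay`) is a hypothetical stand-in, and the names `firefly_minimize`, `beta0`, `alpha0`, `alpha_decay`, `bounds`, and `seed` are illustrative assumptions.

```python
import math
import random
from typing import Callable, List, Tuple

Vector = List[float]


def firefly_minimize(
    cost: Callable[[Vector], float],
    n: int = 20,
    dim: int = 2,
    itr_max: int = 100,
    bounds: Tuple[float, float] = (-5.0, 5.0),
    beta0: float = 1.0,
    gamma: float = 1.0,
    alpha0: float = 0.5,
    alpha_decay: float = 0.97,
    seed: int = 0,
) -> Tuple[Vector, float]:
    """Standard firefly loop (minimisation). The alpha schedule is a
    hypothetical geometric decay, NOT the paper's Equations (3)-(4)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Generate the initial random population.
    xs = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n)]
    # Light intensity: a lower cost means a brighter firefly.
    costs = [cost(x) for x in xs]
    k = min(range(n), key=costs.__getitem__)
    best_x, best_cost = list(xs[k]), costs[k]
    alpha = alpha0
    for _ in range(itr_max):
        for i in range(n):
            for j in range(n):
                if costs[j] < costs[i]:  # firefly j is brighter than i
                    # Squared Euclidean distance r_ij^2 and attractiveness.
                    r2 = sum((a - b) ** 2 for a, b in zip(xs[i], xs[j]))
                    beta = beta0 * math.exp(-gamma * r2)
                    # Move i towards j plus a random step, clipped to bounds.
                    xs[i] = [
                        min(hi, max(lo, a + beta * (b - a) + alpha * (rng.random() - 0.5)))
                        for a, b in zip(xs[i], xs[j])
                    ]
                    costs[i] = cost(xs[i])
        # Rank the fireflies and keep the global best found so far.
        k = min(range(n), key=costs.__getitem__)
        if costs[k] < best_cost:
            best_x, best_cost = list(xs[k]), costs[k]
        alpha *= alpha_decay  # hypothetical adaptive step-size schedule
    return best_x, best_cost
```

For example, `firefly_minimize(lambda x: sum(v * v for v in x), itr_max=200, gamma=0.1)` minimises the two-dimensional sphere function. Plugging in the paper's adaptive rules would mean replacing the `alpha *= alpha_decay` line and the position update with Equations (3) to (5).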

4. Accuracy and Convergence of the Presented Algorithm

4.1. Unimodal Function

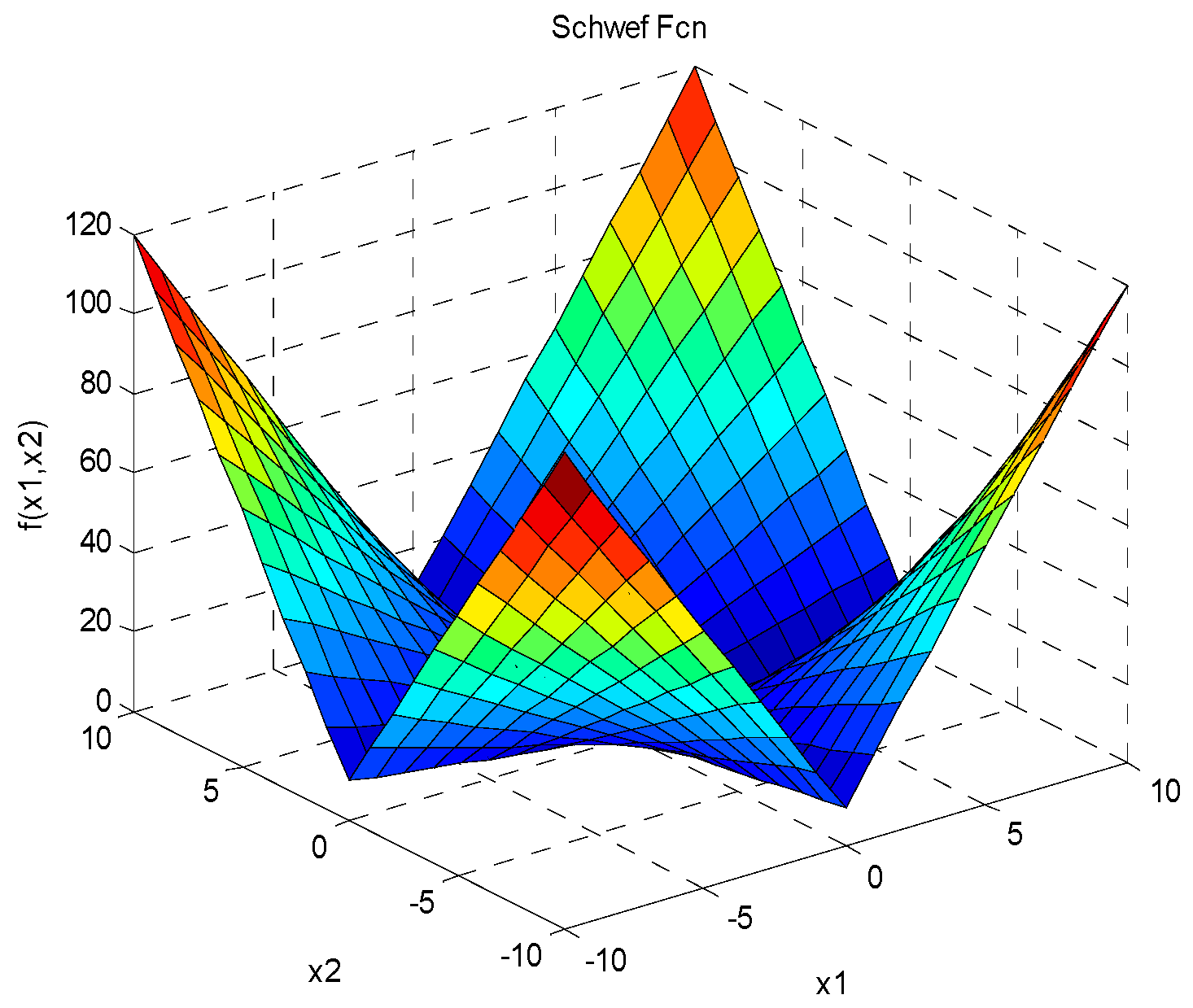

4.2. Multimodal Function

4.3. Other Optimization Methods

4.4. Computational Complexity

4.5. Statistical Test

5. Discussion

6. Conclusions

Author Contributions

Conflicts of Interest

| Population (n) | Dimension (d) | Itrmax | Original FA: cost function f(x) | Original FA: time (s) | Proposed FA (c = 5): cost function f(x) | Proposed FA (c = 5): time (s) |
|---|---|---|---|---|---|---|
| 6 | 2 | 50 | 6.58824 × 10⁻⁴ ± 2.03 × 10⁻³ | 0.019 | 4.22032 × 10⁻¹¹ ± 3.11 × 10⁻⁸ | 0.017649662 |
| 6 | 2 | 500 | 6.13740 × 10⁻⁵ ± 1.23 × 10⁻⁴ | 0.068 | 1.88611 × 10⁻⁸⁸ ± 5.23 × 10⁻⁵³ | 0.146790572 |
| 6 | 30 | 50 | 8.11338 × 10¹ ± 9.23 | 0.017 | 2.21701 × 10⁻⁸ ± 4.01 × 10⁻⁵ | 0.017149309 |
| 6 | 30 | 500 | 2.10145 × 10¹ ± 1.03 × 10¹ | 0.076 | 3.77617 × 10⁻⁸⁸ ± 6.11 × 10⁻⁵¹ | 0.150035174 |
| 30 | 2 | 50 | 3.93047 × 10⁻⁴ ± 5.01 × 10⁻³ | 0.097 | 3.05356 × 10⁻¹⁷ ± 4.03 × 10⁻¹¹ | 0.109078429 |
| 30 | 2 | 500 | 7.42205 × 10⁻⁵ ± 6.17 × 10⁻⁴ | 0.859 | 1.5336 × 10⁻⁹⁸ ± 5.12 × 10⁻⁷⁵ | 1.049852353 |
| 30 | 30 | 50 | 1.79201 × 10¹ ± 7.73 | 0.106 | 3.02683 × 10⁻⁹ ± 1.22 × 10⁻⁵ | 0.119447301 |
| 30 | 30 | 500 | 5.70892 × 10⁻¹ ± 9.13 × 10⁻¹ | 0.967 | 5.9831 × 10⁻⁹³ ± 5.31 × 10⁻⁷³ | 1.255127879 |
| 100 | 2 | 50 | 8.19849 × 10⁻⁴ ± 9.52 × 10⁻⁵ | 0.871 | 2.66275 × 10⁻²⁴ ± 3.31 × 10⁻¹⁶ | 0.926686182 |
| 100 | 2 | 500 | 7.36599 × 10⁻⁵ ± 1.33 × 10⁻⁴ | 8.388 | 2.6357 × 10⁻¹¹⁹ ± 1.11 × 10⁻⁸⁵ | 9.85979571 |
| 100 | 30 | 50 | 5.47774 ± 8.33 | 0.997 | 3.1563 × 10⁻¹⁰ ± 5.01 × 10⁻⁶ | 1.096175286 |
| 100 | 30 | 500 | 4.62718 × 10⁻¹ ± 8.35 × 10⁻² | 9.302 | 5.0678 × 10⁻¹⁰⁰ ± 1.73 × 10⁻⁸³ | 10.67208132 |

| Population (n) | Dimension (d) | Itrmax | Original FA: cost function f(x) | Original FA: time (s) | Proposed FA (c = 5): cost function f(x) | Proposed FA (c = 5): time (s) |
|---|---|---|---|---|---|---|
| 6 | 2 | 50 | 2.456 × 10⁻¹ ± 9.21 × 10⁻¹ | 0.022 | 8.70213 × 10⁻⁴ ± 5.63 × 10⁻² | 0.017334055 |
| 6 | 2 | 500 | 2.76 × 10⁻² ± 7.03 × 10⁻² | 0.082 | 2.360659 × 10⁻³ ± 3.11 × 10⁻¹ | 0.166245785 |
| 6 | 30 | 50 | 2.32 × 10² ± 9.13 × 10¹ | 0.022 | 3.25949207 ± 8.75 × 10¹ | 0.023739867 |
| 6 | 30 | 500 | 2.15 × 10² ± 5.21 × 10¹ | 0.118 | 2.586502461 ± 7.05 × 10¹ | 0.195115349 |
| 30 | 2 | 50 | 3.64 × 10⁻² ± 7.03 × 10⁻² | 0.128 | 1.053531 × 10⁻³ ± 3.51 × 10⁻² | 0.112729302 |
| 30 | 2 | 500 | 7.68 × 10⁻⁴ ± 5.19 × 10⁻⁴ | 1.184 | 1.22458 × 10⁻⁴ ± 1.33 × 10⁻³ | 1.038401091 |
| 30 | 30 | 50 | 2.06 × 10² ± 9.25 × 10¹ | 0.133 | 1.989531603 ± 8.23 | 0.135518497 |
| 30 | 30 | 500 | 1.56 × 10² ± 9.72 × 10¹ | 1.163 | 1.815948116 ± 7.33 | 1.421920632 |
| 100 | 2 | 50 | 4.73 × 10⁻³ ± 8.78 × 10⁻³ | 0.903 | 1.24 × 10⁻⁴ ± 7.12 × 10⁻³ | 0.958555 |
| 100 | 2 | 500 | 8.84 × 10⁻⁴ ± 6.15 × 10⁻⁴ | 8.501 | 4.12474 × 10⁻⁵ ± 2.33 × 10⁻⁵ | 9.402039477 |
| 100 | 30 | 50 | 2.22 × 10² ± 9.25 × 10² | 1.356 | 1.94852802 ± 7.77 | 1.120368893 |
| 100 | 30 | 500 | 1.33 × 10² ± 7.88 × 10² | 9.971 | 1.797004014 ± 5.53 | 11.20427867 |

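| Function | Name | Expression |
|---|---|---|
| F1 | Ackley Function | $-20\exp\left(-0.2\sqrt{\tfrac{1}{d}\sum_{i=1}^{d} x_i^2}\right) - \exp\left(\tfrac{1}{d}\sum_{i=1}^{d}\cos(2\pi x_i)\right) + 20 + e$ |
| F2 | Sphere Function | $\sum_{i=1}^{d} x_i^2$ |
| F3 | Rosenbrock Function | $\sum_{i=1}^{d-1}\left[100\left(x_{i+1} - x_i^2\right)^2 + \left(x_i - 1\right)^2\right]$ |
| F4 | Rastrigin Function | $10d + \sum_{i=1}^{d}\left[x_i^2 - 10\cos(2\pi x_i)\right]$ |
| F5 | Schwefel Problem 2.22 | $\sum_{i=1}^{d} \lvert x_i \rvert + \prod_{i=1}^{d} \lvert x_i \rvert$ |
| F6 | Griewank Function | $\frac{1}{4000}\sum_{i=1}^{d} x_i^2 - \prod_{i=1}^{d}\cos\left(\frac{x_i}{\sqrt{i}}\right) + 1$ |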
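The six benchmarks can be written as plain Python functions, which is convenient when feeding them to an optimizer. This is a minimal sketch assuming the standard textbook definitions of the functions named in the table (dimension $d$ taken from the input length, indices starting at 1 for Griewank); search ranges are not specified here and are left to the caller.

```python
import math
from typing import Sequence


def ackley(x: Sequence[float]) -> float:
    """F1: global minimum 0 at x = 0."""
    d = len(x)
    mean_sq = sum(v * v for v in x) / d
    mean_cos = sum(math.cos(2.0 * math.pi * v) for v in x) / d
    return -20.0 * math.exp(-0.2 * math.sqrt(mean_sq)) - math.exp(mean_cos) + 20.0 + math.e


def sphere(x: Sequence[float]) -> float:
    """F2: global minimum 0 at x = 0."""
    return sum(v * v for v in x)


def rosenbrock(x: Sequence[float]) -> float:
    """F3: global minimum 0 at x = (1, ..., 1)."""
    return sum(
        100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (x[i] - 1.0) ** 2
        for i in range(len(x) - 1)
    )


def rastrigin(x: Sequence[float]) -> float:
    """F4: global minimum 0 at x = 0."""
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)


def schwefel_2_22(x: Sequence[float]) -> float:
    """F5: global minimum 0 at x = 0."""
    return sum(abs(v) for v in x) + math.prod(abs(v) for v in x)


def griewank(x: Sequence[float]) -> float:
    """F6: global minimum 0 at x = 0 (the index i starts at 1)."""
    s = sum(v * v for v in x) / 4000.0
    p = math.prod(math.cos(v / math.sqrt(i)) for i, v in enumerate(x, start=1))
    return s - p + 1.0
```

Each function returns its known global minimum of 0 at the optimum, which gives a quick sanity check before running a comparison.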

| Function | Standard FA (mean) | VSSFA (mean) | MFA (mean) | SPSO (mean) | DE (mean) | Proposed FA with c = 5 (mean) |
|---|---|---|---|---|---|---|
| F1 | 3.21 × 10⁻² ± 1.09 × 10⁻² | 2.31 × 10¹ ± 4.5 × 10¹ | 7.31 × 10⁻⁴ ± 8.55 × 10⁻³ | 8.75 ± 9.77 | 1.03 × 10¹ ± 4.1 × 10¹ | 0 ± 0 |
| F2 | 7.87 × 10¹ ± 5.3 × 10¹ | 6.83 × 10¹ ± 9.21 × 10¹ | 2.71 × 10⁻⁴ ± 3.34 × 10⁻⁴ | 4.31 × 10⁻⁴ ± 5.33 × 10⁻⁴ | 5.11 × 10¹ ± 4.1 × 10¹ | 1.12 × 10⁻¹⁸⁵ ± 7.03 × 10⁻¹²³ |
| F3 | 5.71 × 10⁻² ± 9.55 × 10⁻² | 4.75 × 10³ ± 7.1 × 10³ | 3.95 × 10¹ ± 8.3 × 10¹ | 5.31 ± 5.2 | 7.71 × 10¹ ± 8.5 × 10¹ | 3.30 × 10⁻¹⁸⁷ ± 3.65 × 10⁻¹⁵³ |
| F4 | 5.12 × 10² ± 8.7 × 10¹ | 4.23 × 10² ± 7.1 × 10² | 3.53 × 10¹ ± 2.3 × 10¹ | 1.92 × 10³ ± 2.7 × 10³ | 2.13 × 10⁴ ± 2.2 × 10⁴ | 28.88 ± 2.01 × 10⁻² |
| F5 | 4.87 × 10⁻² ± 7.23 × 10⁻² | 9.28 × 10¹ ± 9.3 × 10¹ | 8.01 × 10⁻⁴ ± 7.33 × 10⁻⁴ | 1.95 ± 8.7 | 7.27 × 10¹ ± 1.3 × 10¹ | 5.98 × 10⁻⁹³ ± 3.25 × 10⁻⁷⁸ |
| F6 | 1.41 × 10⁻³ ± 9.88 × 10⁻³ | 8.75 × 10¹ ± 9.2 × 10¹ | 5.33 × 10⁻³ ± 7.35 × 10⁻³ | 7.72 × 10⁻³ ± 8.75 × 10⁻³ | 8.52 ± 9.6 | 1.48 × 10⁻⁵ ± 8.22 × 10⁻⁴ |

| Function | Standard FA (mean) | Proposed FA, c = 1 (mean) | Proposed FA, c = 2 (mean) | Proposed FA, c = 3 (mean) | Proposed FA, c = 5 (mean) |
|---|---|---|---|---|---|
| F1 | 3.21 × 10⁻² ± 1.09 × 10⁻² | 0 ± 0 | 0 ± 0 | 0 ± 0 | 0 ± 0 |
| F2 | 7.87 × 10¹ ± 5.3 × 10¹ | 2.72 × 10⁻⁴⁸ ± 4.11 × 10⁻²³ | 1.93 × 10⁻¹¹² ± 3.33 × 10⁻⁸³ | 2.85 × 10⁻¹⁵⁵ ± 1.71 × 10⁻⁹⁵ | 1.12 × 10⁻¹⁸⁵ ± 7.03 × 10⁻¹²³ |
| F3 | 5.71 × 10⁻² ± 9.55 × 10⁻² | 1.51 × 10⁻⁴⁸ ± 2.57 × 10⁻²⁵ | 2.87 × 10⁻¹¹³ ± 7.23 × 10⁻⁹² | 7.25 × 10⁻¹⁵¹ ± 2.22 × 10⁻¹⁰⁵ | 3.30 × 10⁻¹⁸⁷ ± 3.65 × 10⁻¹⁵³ |
| F4 | 5.12 × 10² ± 8.7 × 10¹ | 28.86 ± 2.00 × 10⁻² | 28.86 ± 2.00 × 10⁻² | 28.87 ± 2.01 × 10⁻² | 28.88 ± 2.01 × 10⁻² |
| F5 | 4.87 × 10⁻² ± 7.23 × 10⁻² | 2.21 × 10⁻²⁴ ± 8.11 × 10⁻¹⁵ | 1.66 × 10⁻⁵⁸ ± 5.88 × 10⁻³⁷ | 2.52 × 10⁻⁷⁸ ± 6.21 × 10⁻⁵² | 5.98 × 10⁻⁹³ ± 3.25 × 10⁻⁷⁸ |
| F6 | 1.41 × 10⁻³ ± 9.88 × 10⁻³ | 1.26 × 10⁻⁴ ± 3.83 × 10⁻³ | 2.48 × 10⁻⁴ ± 8.57 × 10⁻³ | 1.47 × 10⁻⁴ ± 4.51 × 10⁻⁴ | 1.48 × 10⁻⁵ ± 8.22 × 10⁻⁴ |

| Functions | Standard FA | VSSFA | MFA | SPSO | DE | The Proposed FA with c = 5 |
|---|---|---|---|---|---|---|
| F1 | 10% | 25% | 11% | 22% | 9% | 23% |
| F2 | 11% | 26% | 12% | 20% | 10% | 21% |
| F3 | 10% | 23% | 12% | 20% | 11% | 24% |
| F4 | 10% | 25% | 11% | 22% | 9% | 23% |
| F5 | 9% | 26% | 11% | 20% | 10% | 24% |
| F6 | 11% | 24% | 12% | 20% | 10% | 23% |

| Rank | Quade: method | Quade: average score | Friedman: method | Friedman: average score | Aligned Friedman: method | Aligned Friedman: average score |
|---|---|---|---|---|---|---|
| 1 | VSSFA | 7.6235 | VSSFA | 7.5026 | VSSFA | 82.5832 |
| 2 | SPSO | 6.8235 | SPSO | 6.8125 | SPSO | 75.1472 |
| 3 | DE | 6.5121 | DE | 6.5011 | DE | 72.1322 |
| 4 | FA | 6.4157 | FA | 6.4113 | FA | 68.3582 |
| 5 | MFA | 5.5201 | MFA | 5.1090 | MFA | 52.4235 |
| 6 | Proposed FA | 3.1052 | Proposed FA | 3.3075 | Proposed FA | 38.8885 |
| Statistic | | 4.011302 | | 35.2354 | | 12.75773 |
| p-value | | 0.0056273 | | 0.000271 | | 0.367715 |
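As a minimal illustration of the rank-based procedure behind the Friedman column, the following Python sketch computes each method's average rank and the Friedman chi-square statistic from a matrix of per-problem results. It assumes that a lower cost is better and that tied results share the average of their ranks; it does not implement the Quade or Aligned Friedman variants and is not claimed to reproduce the scores in the table. The names `rank_row` and `friedman` are hypothetical.

```python
from typing import List, Sequence, Tuple


def rank_row(values: Sequence[float]) -> List[float]:
    """Rank one problem's results (lower cost = rank 1); ties share the average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the group while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg = (pos + end) / 2.0 + 1.0  # 1-based average rank of the tie group
        for t in range(pos, end + 1):
            ranks[order[t]] = avg
        pos = end + 1
    return ranks


def friedman(results: Sequence[Sequence[float]]) -> Tuple[List[float], float]:
    """results[p][a] = cost of algorithm a on problem p.

    Returns the average rank of each algorithm and the Friedman statistic
    chi2 = 12N / (k(k+1)) * (sum_j R_j^2 - k(k+1)^2 / 4), which is compared
    against a chi-square distribution with k - 1 degrees of freedom.
    """
    n_problems = len(results)
    k = len(results[0])
    rank_rows = [rank_row(row) for row in results]
    avg = [sum(r[a] for r in rank_rows) / n_problems for a in range(k)]
    chi2 = 12.0 * n_problems / (k * (k + 1)) * (
        sum(r * r for r in avg) - k * (k + 1) ** 2 / 4.0
    )
    return avg, chi2
```

For instance, the mean costs on F1 to F6 for the six methods would form a 6 × 6 `results` matrix. A p-value can then be obtained from the chi-square survival function, for example `scipy.stats.chi2.sf(chi2, k - 1)` if SciPy is available.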

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sababha, M.; Zohdy, M.; Kafafy, M. The Enhanced Firefly Algorithm Based on Modified Exploitation and Exploration Mechanism. Electronics 2018, 7, 132. https://doi.org/10.3390/electronics7080132
