1. Introduction

Interactive art involves its spectators in more than just a viewing capacity. This interactivity can range from spectators perceiving that they are interacting with a passive art piece to pieces where input from the spectator influences the artwork [1]. Over the years, interactive art has evolved from simple mechanical contraptions [2] to installations involving some form of computer processing [3,4] or that are completely virtual in their output [5,6].

Since its introduction, the Raspberry Pi Single Board Computer (SBC) has provided an all-in-one platform that allows artists to carry out processing and hardware interaction on a single low-cost piece of hardware. This has led to its use in many interactive art installations, and the Raspberry Pi Foundation has dedicated a section of its website [7] to documenting artistic works that incorporate Raspberry Pi SBCs.

The Go! Rhinos campaign was a mass public art event run by Marwell Wildlife in Southampton, UK for 10 weeks during the summer of 2013 [8]. The event involved 36 businesses and 58 schools placing decorated fibreglass rhinos along an ‘art trail’ in Southampton city centre, with the aim of raising awareness of the conservation threat faced by wild rhinos and showcasing local creativity and artistic talent.

The event provided an opportunity to promote Electronics and Computer Science at the University of Southampton and to act as a platform for electronics and computing outreach activities. A team of electronic engineers, computer scientists, marketing specialists and artists from within the University was brought together to design and develop a unique interactive cyber-rhino called Erica, shown in Figure 1. Erica was designed to be a Dynamic-Interactive (varying) [9] art piece whose behaviour is determined not only by her environment but also by her physical interactions with viewers, very much like a cyber-physical toy or Tamagotchi [10]. Internally, Erica is powered by a network of five Raspberry Pi SBCs connected to a series of capacitive touch sensors, cameras, servos, stepper motors, speakers, independently addressable LEDs and Liquid Crystal Displays (LCDs). These devices were carefully chosen to implement the desired features.

This article discusses in depth the impact and considerations of installing a piece of interactive art using Raspberry Pi SBCs in a public setting, as well as the implementation methods. The paper is organised as follows. Section 2 discusses the features of Erica that brought her to life. Section 3 describes the initial implementation of Erica and the lessons learned, while Section 4 goes on to discuss the deployment of Erica into the wild. Section 5 describes the upgrades and maintenance after Erica’s time with the general public. Section 6 demonstrates the impact of Erica with regard to public engagement and outreach, while Section 7 provides a concluding statement.

2. Features

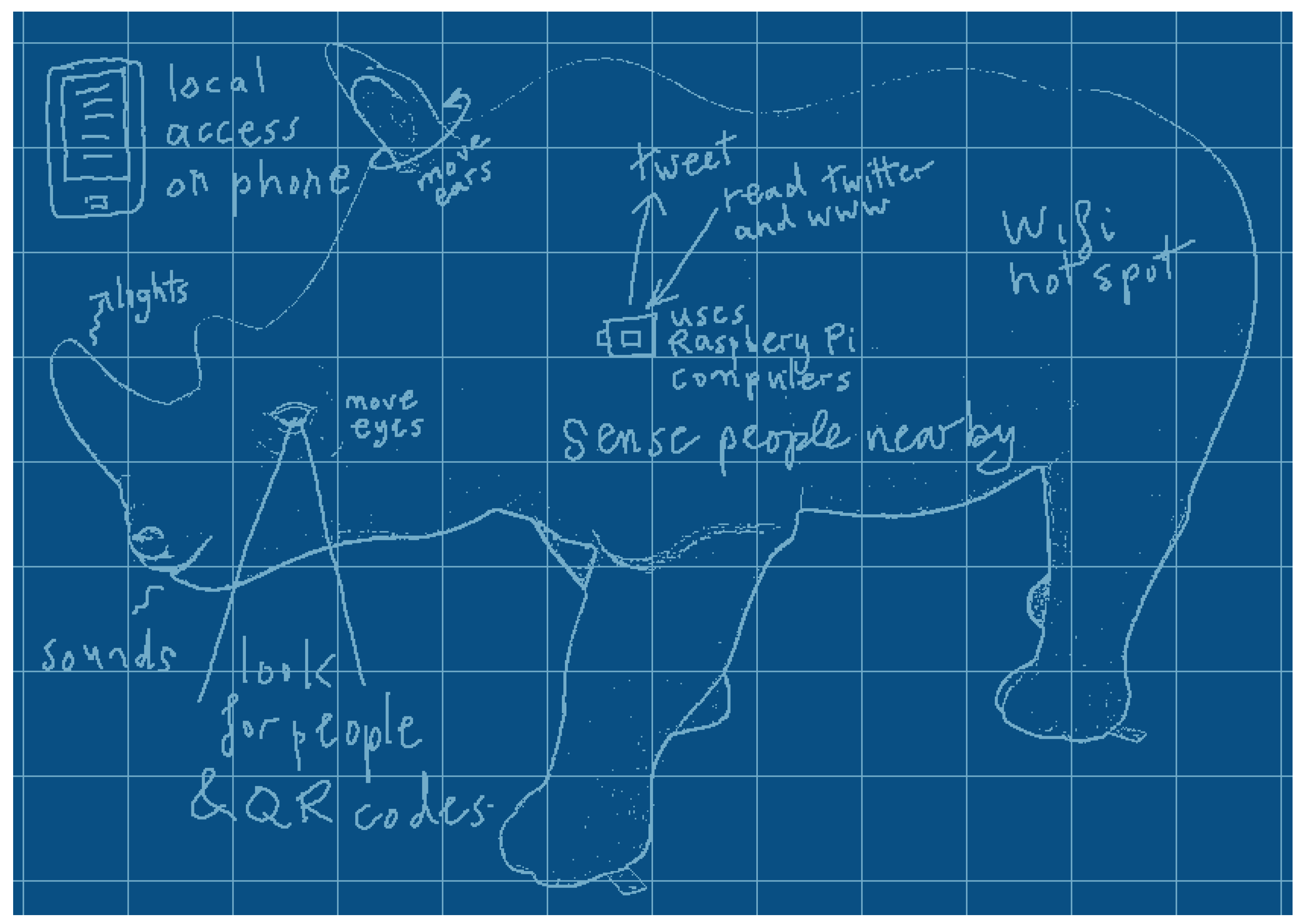

The initial concept of Erica was as a cyber-physical entity that merged actions inspired by natural behaviours with a showcase of the different facets of electronics and computer science in an interactive way. The Raspberry Pi was the platform of choice for its novelty, popularity with hobbyists and schools and its wide availability. The media awareness of the Raspberry Pi also helped to promote Erica. Additionally, the availability of the Raspberry Pi and open-source nature of Erica’s design would permit interested people to inexpensively implement aspects of her at home. After several brainstorming sessions, an extensive list of desirable features that could be implemented was compiled. Each of these features was classified as either an input (a ‘sense’), an output (a ‘behaviour’) or both as shown in Table 1. A broad range of features was selected to cover different areas of electronics and computer science, ranging from sensors and actuators to image processing and open data analytics, leading to an initial design drawing as shown in Figure 2.

When an input occurs, it is processed and an appropriate response is generated. These responses can be broken down into two categories: ‘reactive’ and ‘emotive’. Reactive behaviours occur almost immediately and are a direct response to an interaction, such as a grunt being generated when a touch sensor is touched. This immediate feedback provides a strong link between the interaction and the response, which is beneficial when demonstrating how the sensors and actuators are connected. Erica’s reactive behaviours can be thought of as being similar to reflexes in humans; however, they cover a broader range of interactions.

Rather than each interaction having a static response, it was decided that Erica should also have several emotive responses. This was achieved by implementing four emotive states, each with seven distinct levels, that triggered additional output events and influenced the outcome of future interactions. Emotive responses are based on a cumulative time-decaying set of ‘emotions’ as shown in Table 2 alongside the input sensors that contribute to their level and output events. When Erica is left alone for an extended period of time, she goes to sleep and recovers energy, but her interest, fullness and mood decay.

The ‘emotion’ that turned out to be a favourite with adults and children alike is fullness. Fullness automatically decreases over time and is incremented every time she is fed (by touching the chin sensor), accompanied by a grunt noise. If Erica is fed too many times in quick succession, a more juvenile sound is also played.
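The cumulative, time-decaying behaviour described above can be illustrated with a minimal Python sketch. The class name, the normalised 0 to 1 value, the linear decay and the rates used here are all assumptions made for illustration; only the seven discrete levels and the feed-then-decay behaviour of fullness come from the text.

```python
LEVELS = 7  # the text states seven distinct levels per emotive state


class Emotion:
    """A cumulative, time-decaying value quantised into discrete levels."""

    def __init__(self, name, value=0.5, decay_per_minute=0.01):
        self.name = name
        self.value = value                        # assumed normalised range 0.0..1.0
        self.decay_per_minute = decay_per_minute  # assumed rate, not from the paper

    def decay(self, minutes):
        """Apply linear decay for the elapsed time, clamped at zero."""
        self.value = max(0.0, self.value - self.decay_per_minute * minutes)

    def bump(self, amount):
        """Accumulate the effect of an interaction, clamped at one."""
        self.value = min(1.0, self.value + amount)

    @property
    def level(self):
        """Map the continuous value onto levels 0..6."""
        return min(LEVELS - 1, int(self.value * LEVELS))


fullness = Emotion("fullness")
fullness.bump(0.2)           # a chin press "feeds" Erica
fed_level = fullness.level
fullness.decay(30)           # half an hour without interaction
print(fed_level, fullness.level)
```

Keeping a continuous value internally and quantising only when a level is read is one way to let many small interactions accumulate before a visible change of state occurs.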

2.1. Visual System

It was desired that Erica should be able to see like a real rhino, so a visual system consisting of two cameras (one for each eye) was conceived. At the time of development, the Pi Camera [11] was not available, so two USB webcams were chosen. Even if the Pi Camera had been available, it would have been less suitable than the webcams due to mounting and cable length/flexibility issues. Initially, it was planned that the eyes would have two-axis pan-tilt; however, this proved impractical in the limited space available within the head. As such, a single servo was used to enable left-right panning about the vertical axis.

Software was built using the OpenIMAJ libraries [12] developed at Southampton. The use of cross-platform Java code and the inbuilt native libraries for video capture, combined with commodity webcams, made the software portable. This portability ensured that it was possible to test the software on various platforms without the need for recompilation or code changes, which substantially helped with rapid prototyping of features. It also made it easier for the public to experiment with image processing using Erica’s open source examples.

The original idea for the visual system was that it would perform real-time face tracking and orientate the cameras such that the dominant detected face in each image would be in the centre of the captured frame. The restriction to panning on a single axis and performance limitations of the Raspberry Pi meant that the tracking was not as smooth and apparent as desired. Therefore, it was decided that the visual system should be used for interactions that did not require immediate feedback to the user. In particular, the software for the eyes was set up to process each frame and perform both face detection (using the standard Haar-cascade approach [13] implemented in OpenIMAJ) and QR-code detection (using OpenIMAJ with the ZXing “Zebra Crossing” library [14]). This achieved recognition at a rate of a few frames per second: around five frames per second on the Raspberry Pi model B and around ten frames per second on the Raspberry Pi 2.

2.2. Open Data

Open Data, specifically Linked Open Data [15], is a subject in which the University of Southampton has a rich research history. Linked Open Data is, in summary, information made available in a computer-readable form with a license that allows re-use. It was decided that Erica should both consume and publish Linked Open Data. Erica periodically checks a number of online data sources in order to get an idea of her environment. The most novel use for this is a function for checking the current weather conditions and reacting accordingly. Erica will get cold if the temperature drops, and will sneeze if the pollen count is too high.

Every hour, a script runs that takes a copy of Erica’s current emotive state and converts it into an open format known as RDF (Resource Description Framework). This is then published to Erica’s website and can be queried by any programmers who wish to interact with Erica. If an internet connection is not available, the script silently exits and tries again the next hour. The data in its RDF form is held on the website [16] rather than on Erica herself, so that it is always available even when Erica has no internet connection.
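As an illustration of the conversion step, the following Python sketch serialises a snapshot of emotive levels as Turtle, one of the standard RDF syntaxes. The namespace, subject URI and property names are hypothetical and are not Erica’s published vocabulary; the upload to the website and the connectivity check are deliberately left out.

```python
from datetime import datetime, timezone

# Hypothetical namespace and subject URI, chosen for illustration only.
VOCAB = "http://example.org/erica/vocab#"
SUBJECT = "http://example.org/erica/state"


def state_to_turtle(state, when):
    """Serialise a dict of emotive levels as a small Turtle (RDF) document."""
    if not state:
        raise ValueError("state must contain at least one emotion")
    lines = [
        f"@prefix erica: <{VOCAB}> .",
        "@prefix xsd: <http://www.w3.org/2001/XMLSchema#> .",
        "",
        f"<{SUBJECT}>",
        f'    erica:recordedAt "{when.isoformat()}"^^xsd:dateTime ;',
    ]
    items = sorted(state.items())
    for index, (name, level) in enumerate(items):
        # The last predicate-object pair closes the statement with a full stop.
        terminator = " ." if index == len(items) - 1 else " ;"
        lines.append(f"    erica:{name} {int(level)}{terminator}")
    return "\n".join(lines) + "\n"


snapshot = {"energy": 5, "fullness": 2, "interest": 3, "mood": 4}
doc = state_to_turtle(snapshot, datetime(2015, 6, 1, 12, 0, tzinfo=timezone.utc))
print(doc)
```

In a real deployment an RDF library would normally be used instead of string formatting; plain strings are used here only to keep the sketch free of dependencies.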

2.3. Features Summary

Having worked out a list of features to be included in Erica, how they were implemented needed to be carefully considered. The design choice of using Raspberry Pi SBCs in preference to a small form factor PC caused some additional challenges that would not otherwise have been faced.

3. Initial Implementation

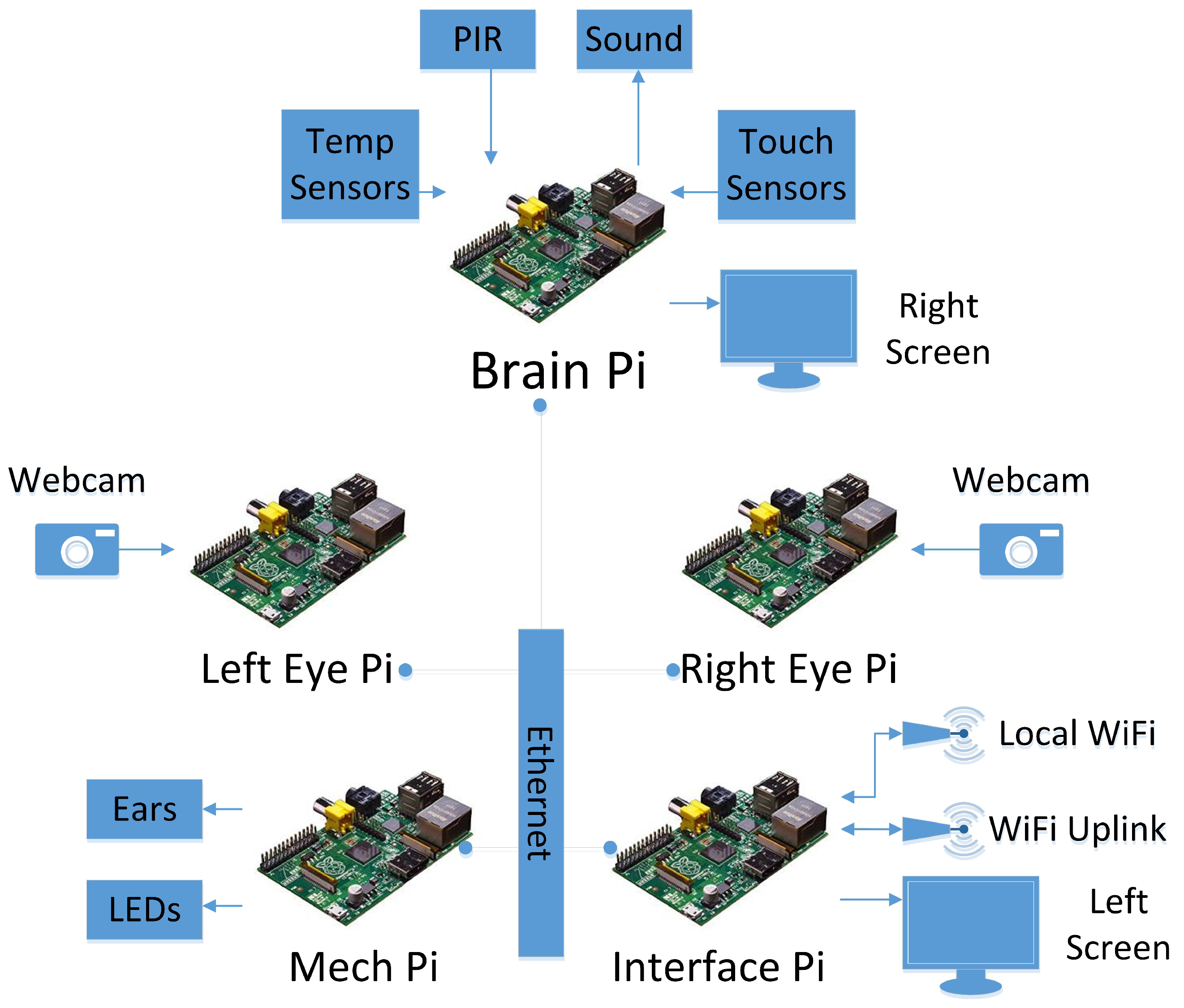

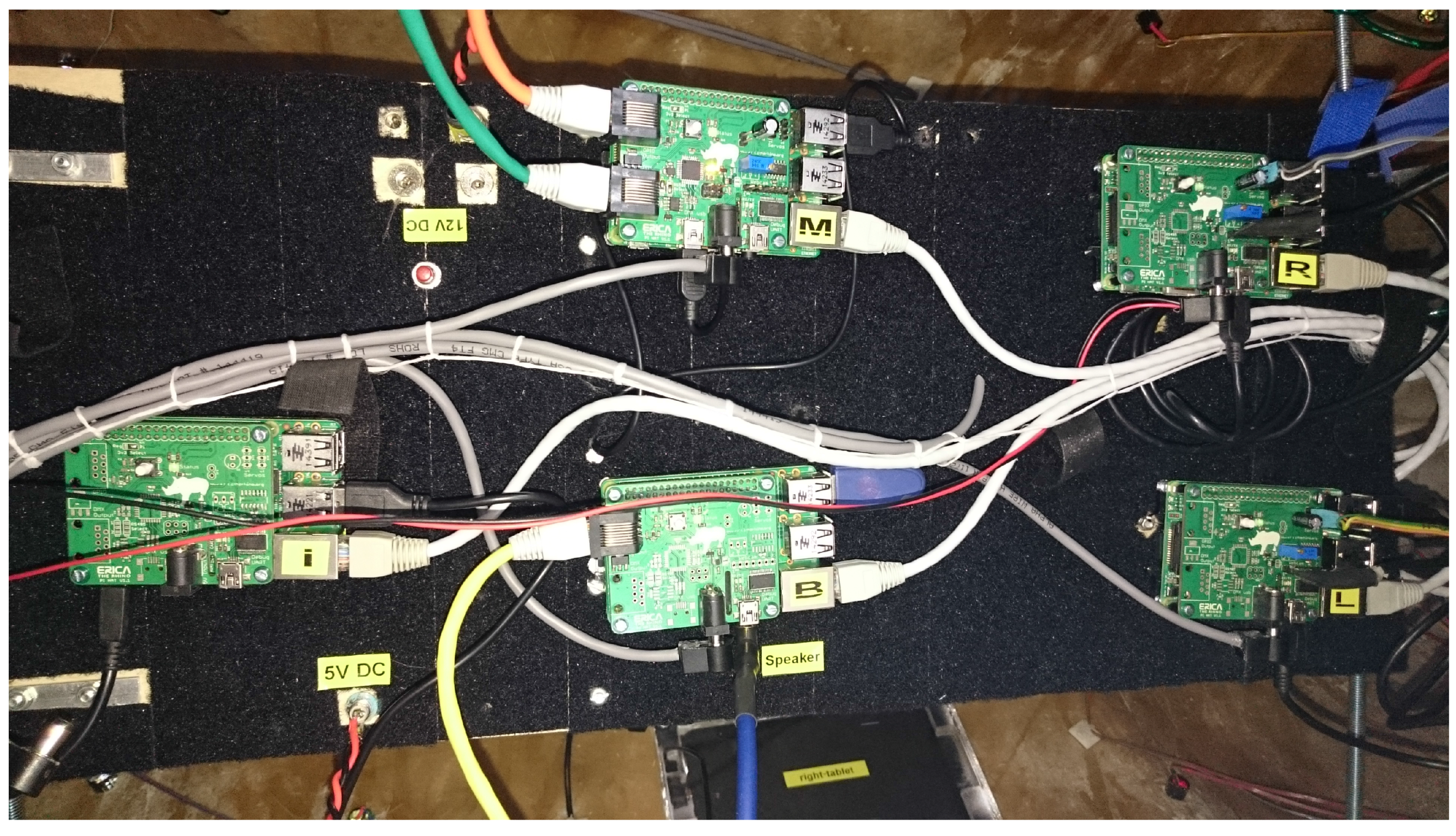

During initial development, it was quickly found that a single Raspberry Pi was not sufficient to handle all of the processing required for the desired features. As such, a distributed system of five Raspberry Pi SBCs was conceived with each one being responsible for a different aspect of Erica’s operation.

Figure 3 depicts a block diagram of the initial implementation and shows how all of the inputs and outputs are connected to the SBCs.

The overhead of the visual system required one Raspberry Pi per eye to give acceptable performance. LED and servo control required a number of I/O pins so one Raspberry Pi was dedicated to this task. To co-ordinate the actions of these Pi SBCs into a coherent entity, another Pi, the Brain Pi, was dedicated to controlling the whole system and was responsible for the PIR sensor, touch sensors, temperature sensor and sound output. Details of the operation of the Brain Pi are discussed further in Section 3.3. Finally, a fifth Raspberry Pi was used to provide network connectivity to the outside world.

Erica included two HDMI-connected 7” displays, one on each side. These were each connected to a separate Raspberry Pi and used to display information about Erica’s mood, the Go! Rhinos campaign and rhino conservation. These displays were deliberately positioned at different heights to allow easy viewing for both adults and children.

3.1. Physical Design

Erica was delivered as a sealed white fibreglass shell with no access to the interior. The artistic design of Erica was outside the areas of expertise of the authors, so talent was sought elsewhere in the University. A design competition was run at the Winchester School of Art where undergraduate students submitted potential designs. The winning artist was invited to paint Erica in their design, which was then displayed in the Southampton city centre for 10 weeks.

Rather than hiding the electronics inside Erica, it was felt that being able to see what was driving her would add to her appeal and general intrigue, so it was decided to make them a visible feature. This was achieved by making the access hatch that was cut in Erica’s belly out of clear perspex (formed to the same shape as the fibreglass that was cut out) and placing mirror tiles on the plinth beneath to allow viewers to see inside easily. The Raspberry Pi SBCs were mounted upside down on a board suspended above the perspex window and illuminated by two LED strip lights.

The webcams chosen to act as Erica’s eyes had a ring of LEDs around the lens, designed to provide front-light for the webcam image. A digitally controllable variable resistor allowed software brightness control, so the LEDs could be used to simulate blinking. The webcams were then inserted inside a plastic hemisphere that was painted to resemble an eye with an iris. For installation, the eyes of the fibreglass moulding were carefully cut out so that the webcams would be in an anatomically correct position. Once mounted, it was noted that the eye mechanism was vulnerable to physical damage, especially as the eyes were at a child-friendly height, so colourless, domed perspex protective lenses were formed to fit within the eye socket and sealed to prevent external interference, as can be seen in Figure 4a.

Erica’s moving ears were implemented by cutting off the fibreglass ears and remounting them to stepper motors so that they could be rotated freely. As the only external moving components, specific care was needed to prevent injury to people and to ensure that the mechanism could withstand being investigated by curious bystanders. This was achieved by mounting the ears magnetically to the stepper motor shafts, limiting the available torque. This, however, made it relatively easy to remove the ears so they were tethered to prevent them from being dropped and to discourage theft, as shown in Figure 4b.

Two distinct groups of LEDs were also inserted into her shell: RGB LEDs on her horn and mono-colour LEDs of differing colours on her body. The horn LEDs were installed in differing patterns on her short and long horns. The body LEDs were incorporated into her artistic design, being placed at the ends of her painted wires.

3.2. Networking and Monitoring

Because multiple SBCs were used to provide the compute power needed to run Erica, a means to interconnect them was essential. As Erica would need to be moved between locations, it was decided to run an internal network to provide this connectivity, which could then connect out to the Internet at a single point. Two options were considered for this: an off-the-shelf router or a Pi. The USB ports available on a Pi gave the flexibility required to add both additional wired Ethernet and wireless interfaces. This arrangement would give more flexibility in configuring these interfaces (for DNS, DHCP, NAT, routing, firewalling, etc.), whereas an off-the-shelf router with its generic firmware might not have been sufficiently configurable.

The initial design of the network ended up with the Interface Pi having three separate interfaces, which was facilitated by connecting a powered hub to one of its USB ports to provide the required capacity both of ports as well as power. These three interfaces consisted of:

A wired internal interface to connect to the other four SBCs over an internal network.

A WiFi uplink interface to connect to the Internet provided by a USB wireless dongle and high-gain antenna.

A WiFi access point interface to allow those in the vicinity to interact with Erica using smartphones, provided by a USB wireless dongle with a standard antenna.

It was decided that no internet access would be available on the WiFi access point, as this would be a publicly available unprotected network and therefore any Internet access was liable to be abused. It was recognised early in the development process that remote access to monitor if various electronically controlled aspects of Erica were behaving as expected was essential. This also allowed certain features to be fixed when they were not working. This needed to be achieved in a way that was independent of the parent network providing the uplink to the Internet.

This remote access was facilitated by two separate means, both of which had previously been investigated in earlier sensor network deployments [17]. The first of these techniques was to create an SSH tunnel out from the Interface Pi through the parent network to a device on the Internet that could accept SSH connections. This tunnel would allow SSH connections from this device directly onto the Interface Pi without needing to know either the current (private) IP address of its uplink or the IP address of the parent network’s gateway.
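One common way to obtain this behaviour is OpenSSH remote port forwarding, where the Interface Pi dials out and the relay host listens on a port that leads back to the Pi’s own SSH server. The paper does not give the exact command used, so the following Python sketch only assembles a plausible invocation; the user, host and port values are placeholders.

```python
import shlex


def reverse_tunnel_command(relay_user, relay_host, relay_port, local_ssh_port=22):
    """Build an OpenSSH command that opens a reverse tunnel to a relay host."""
    return [
        "ssh",
        "-N",                                # forwarding only, no remote command
        "-o", "ServerAliveInterval=60",      # keepalives so a dead link is noticed
        "-o", "ExitOnForwardFailure=yes",    # fail fast if the relay port is taken
        "-R", f"{relay_port}:localhost:{local_ssh_port}",
        f"{relay_user}@{relay_host}",
    ]


# Placeholder values; nothing is executed here, the command is only printed.
cmd = reverse_tunnel_command("erica", "relay.example.org", 2222)
print(shlex.join(cmd))
```

With such a tunnel in place, an engineer logged in to the relay host could reach the Pi with `ssh -p 2222 localhost`, regardless of the address the parent network assigned to the uplink.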

The second technique was to register for an IPv6 [18] tunnel with a tunnel broker. SixXS [19] provides a variety of IPv6 tunnel options, of which AICCU (Automatic IPv6 Connectivity Client Utility) met the key requirement for Erica: to provide, as simply as possible, globally routable IPv6 addresses for each Pi, allowing them to be connected to directly (rather than via a proxy on the device that maintained the IPv4 SSH tunnel previously described). These IPv6 addresses could then be assigned hostnames using DNS AAAA records for the ericatherhino.org domain, significantly simplifying the task of accessing and monitoring the Pi SBCs remotely. However, for security reasons this access was carefully firewalled, and SSH was only allowed using public key authentication.

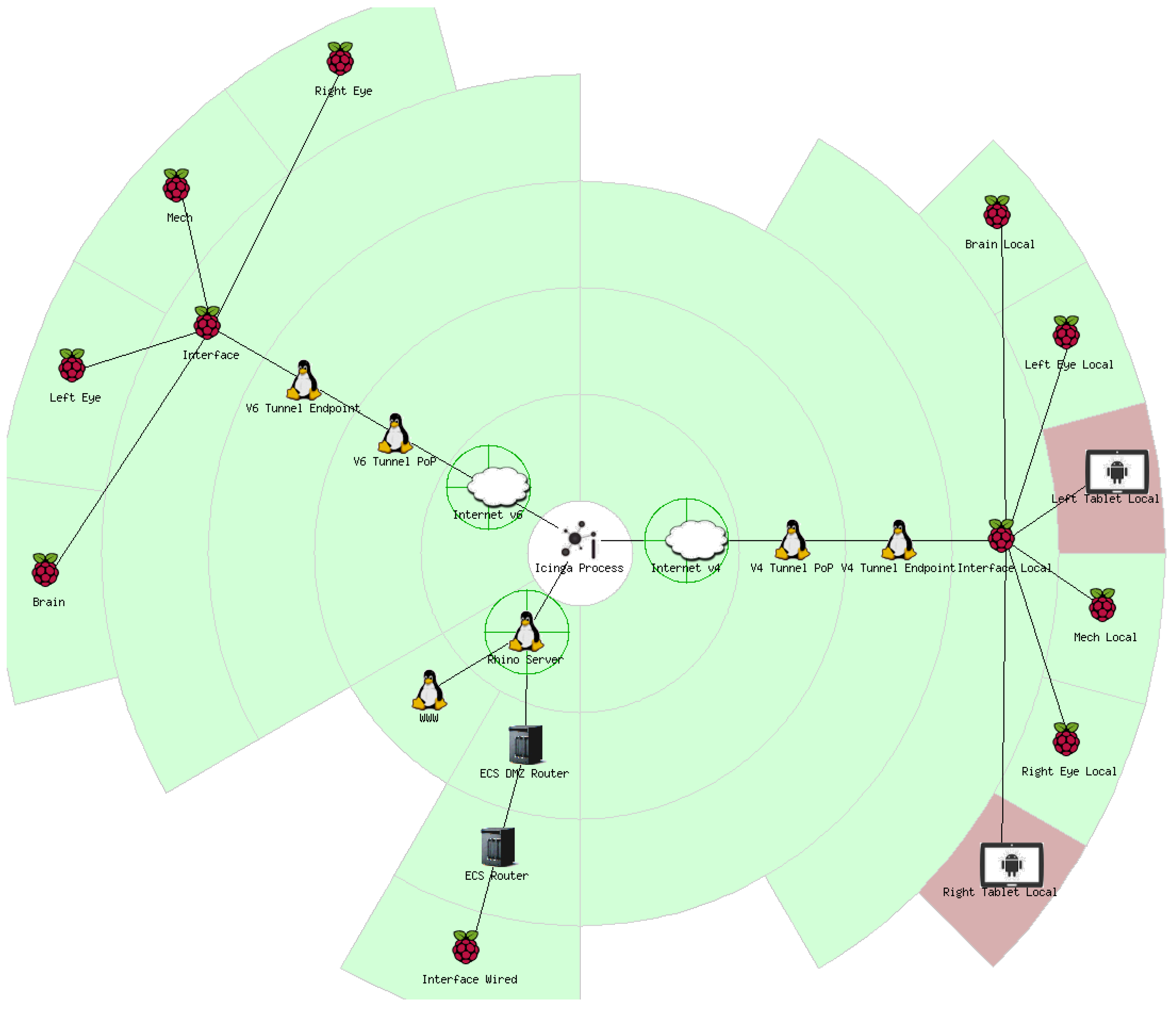

Monitoring of Erica was implemented using Icinga [20], a scalable open source monitoring system. At its most basic level, Icinga allows monitoring of hosts and common services (e.g., Ping, HTTP, SSH, DNS, etc.). It also allows dependencies between hosts and services to be defined, so some hosts or services are only monitored when other hosts or services are available. Through this means, Erica’s infrastructure could be represented in the status map shown in Figure 5.

One particular feature of Icinga is that an accompanying application can be run on monitored hosts to allow scripts to be run to test bespoke features, when prompted by Icinga. This was made use of to ensure that particular applications were running on specific Raspberry Pi SBCs, prompting ‘rhino engineers’ to restart the required programs when necessary. It also made it possible to observe when particular interactions were not occurring in near real-time. This provided the ability to remotely determine if a feature was broken in a timely manner, allowing remedial action to be taken. It also provided information on simple trends that helped resolve regularly occurring faults or to evaluate the popularity of different interactions.
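A bespoke check of this kind is typically a small script that prints one status line and exits with the conventional plugin codes (0 for OK, 1 for WARNING, 2 for CRITICAL, 3 for UNKNOWN). The sketch below shows the shape of a check that verifies a named program is running; the process name `rhino-brain` is hypothetical, and the actual checks used on Erica are not described in the text.

```python
import subprocess

OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3  # conventional plugin exit codes


def list_process_names():
    """Return the command names of running processes (uses the system ps)."""
    result = subprocess.run(
        ["ps", "-eo", "comm="], capture_output=True, text=True, check=True
    )
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


def check_process(expected, running):
    """Return (exit_code, status_line) for a simple 'is it running' check."""
    if expected in running:
        return OK, f"OK - {expected} is running"
    return CRITICAL, f"CRITICAL - {expected} is not running"


# Example with a fixed process list; a real plugin would pass
# list_process_names() and finish with sys.exit(status).
status, message = check_process("rhino-brain", ["sshd", "rhino-brain"])
print(status, message)
```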

3.3. Brain Development

Due to the distributed nature of Erica’s hardware, it was important that there should be a middleware capable of both receiving events from the various sensors and triggering commands to cause a reaction. This was deployed on the Brain Pi, with the sensors that require fast responses (touch & presence) also connected to it.

The software itself was implemented using the Django [21] web framework, and provided a RESTful API (REpresentational State Transfer Application Programming Interface) to the other Pi SBCs. Each Pi that wants to send events runs a RhinoComponent web service, using the lightweight CherryPy [22] for simplicity. This registers with the Brain Pi on boot, indicating its name (e.g., ‘left-eye’) and a URL that is able to receive commands.

When a sensor is triggered, the Pi responsible for that sensor sends an event to the brain. This has the structure of ‘source.component.action’, e.g., ‘interaction.chin.press’. The source indicates what caused the event (e.g., a sensor interaction, twitter, environment, or the brain itself); the component informs the brain as to the originating component (e.g., the chin, or the left eye); and the action gives the interaction that was actually performed (e.g., press, scan, detect). A dictionary of key/value pairs can also be sent, giving extra information (e.g., which side of the chin was pressed).
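The three-part event name lends itself to a very small parser. The following Python sketch splits a name such as ‘interaction.chin.press’ into its source, component and action, and carries the optional dictionary alongside; the `Event` type, the function name and the `side` key are illustrative and not taken from Erica’s code base.

```python
from collections import namedtuple

Event = namedtuple("Event", ["source", "component", "action", "data"])


def parse_event(name, data=None):
    """Split 'source.component.action' into its parts and attach extra data."""
    parts = name.split(".")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected 'source.component.action', got {name!r}")
    source, component, action = parts
    return Event(source, component, action, dict(data or {}))


event = parse_event("interaction.chin.press", {"side": "left"})
print(event.source, event.component, event.action, event.data)
```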

As soon as an event arrives, a collection of scripts, known as ‘behaviour scripts’, is executed. These are intentionally simple and small, giving the entire team the ability to add new behaviours without having to modify the underlying server code. They can read and modify Erica’s emotional states, described earlier, and trigger commands. A short-term memory (capped at 100 items) and a long-term memory (holding counts of events) are also available. For example, if a face is detected by one of the eyes, Erica’s mood and interest are increased, the appropriate eye is told to blink, a sound is played, and the website is updated. As a side-effect, the short-term memory will include the face detection event, and the long-term memory will show that one more face detection event has been handled.
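The face detection example can be sketched as follows. This is a simplified stand-in for the Django middleware, not its actual code: the `Brain` class, the decorator-based registration, the event name `interaction.lefteye.detect` and the sound command are assumptions, while the memory cap of 100 items and the per-event counts follow the description above. The website update is omitted.

```python
from collections import Counter, deque

MAX_LEVEL = 6  # seven levels, assumed to be numbered 0..6


class Brain:
    """Toy event dispatcher with the two memories described in the text."""

    def __init__(self):
        self.emotions = {"mood": 3, "interest": 3}
        self.short_term = deque(maxlen=100)  # oldest events are discarded
        self.long_term = Counter()           # event name -> times handled
        self.behaviours = []
        self.commands = []                   # triggered commands, recorded only

    def behaviour(self, func):
        """Decorator that registers a behaviour script."""
        self.behaviours.append(func)
        return func

    def raise_emotion(self, name, amount=1):
        self.emotions[name] = min(MAX_LEVEL, self.emotions[name] + amount)

    def handle(self, event, data=None):
        data = data or {}
        for script in self.behaviours:
            script(self, event, data)
        self.short_term.append((event, data))
        self.long_term[event] += 1


brain = Brain()


@brain.behaviour
def greet_face(state, event, data):
    """Behaviour script: react when the left eye reports a face."""
    if event != "interaction.lefteye.detect":    # hypothetical event name
        return
    state.raise_emotion("mood")
    state.raise_emotion("interest")
    state.commands.append("lefteye.lights.blink")
    state.commands.append("sound.play.greeting")  # hypothetical command name


for _ in range(150):
    brain.handle("interaction.lefteye.detect")

print(len(brain.short_term), brain.long_term["interaction.lefteye.detect"])
```

Because each behaviour script is a plain function that receives the shared state, new behaviours can be added without touching the dispatcher, which mirrors the motivation given above.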

The blink and sound playback actions in this example are performed by triggering commands. When the behaviour script triggers ‘lefteye.lights.blink’, the command is sent to the Left-eye Pi via the URL that it registered earlier. The component on the Pi can then affect the webcam’s LED.
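Routing a command back to the right Pi amounts to a lookup from the first part of the command name to the URL recorded at registration. The sketch below shows that lookup only and sends nothing over the network; the registry class, the address and the assumption that the component registers under the same name used in commands (‘lefteye’) are illustrative.

```python
class ComponentRegistry:
    """Maps component names to the URLs they registered on boot."""

    def __init__(self):
        self._urls = {}

    def register(self, name, url):
        self._urls[name] = url.rstrip("/")

    def route(self, command):
        """Return (component_url, remainder) for 'component.rest.of.command'."""
        component, _, remainder = command.partition(".")
        if not remainder:
            raise ValueError(f"command {command!r} has no action part")
        if component not in self._urls:
            raise KeyError(f"no component registered as {component!r}")
        return self._urls[component], remainder


registry = ComponentRegistry()
registry.register("lefteye", "http://10.0.0.12:8080/")  # placeholder address
target = registry.route("lefteye.lights.blink")
print(target)
```

How the remainder (‘lights.blink’) is encoded in the HTTP request is not specified in the text, so it is left as a plain string here.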

There are also some events that are not caused by external stimuli: an idle event is triggered at set intervals, so Erica’s hunger and tiredness can be updated; and an event is triggered every hour, allowing Erica to send messages at appropriate times.

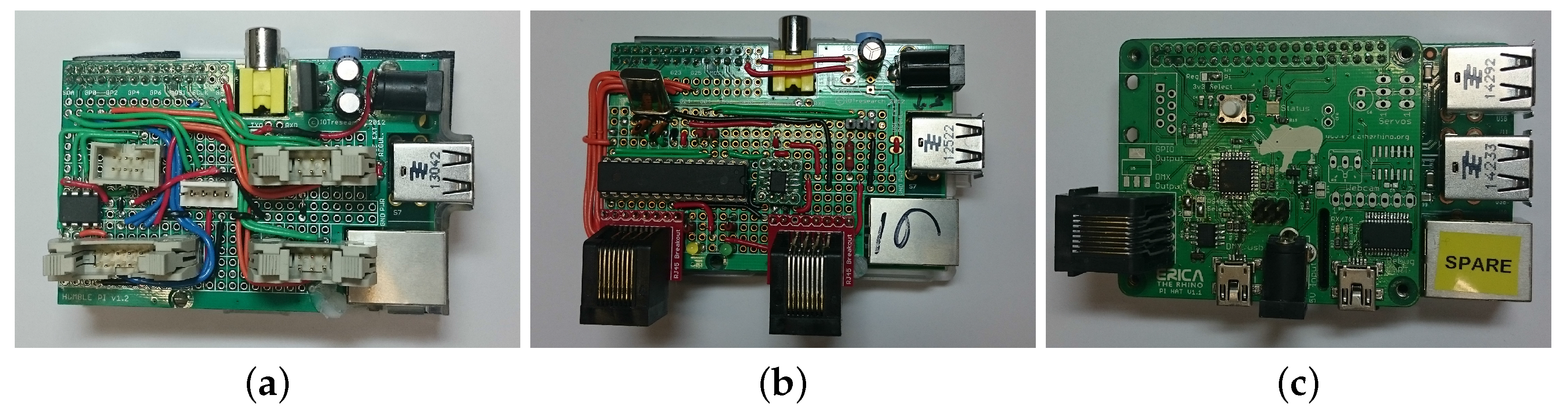

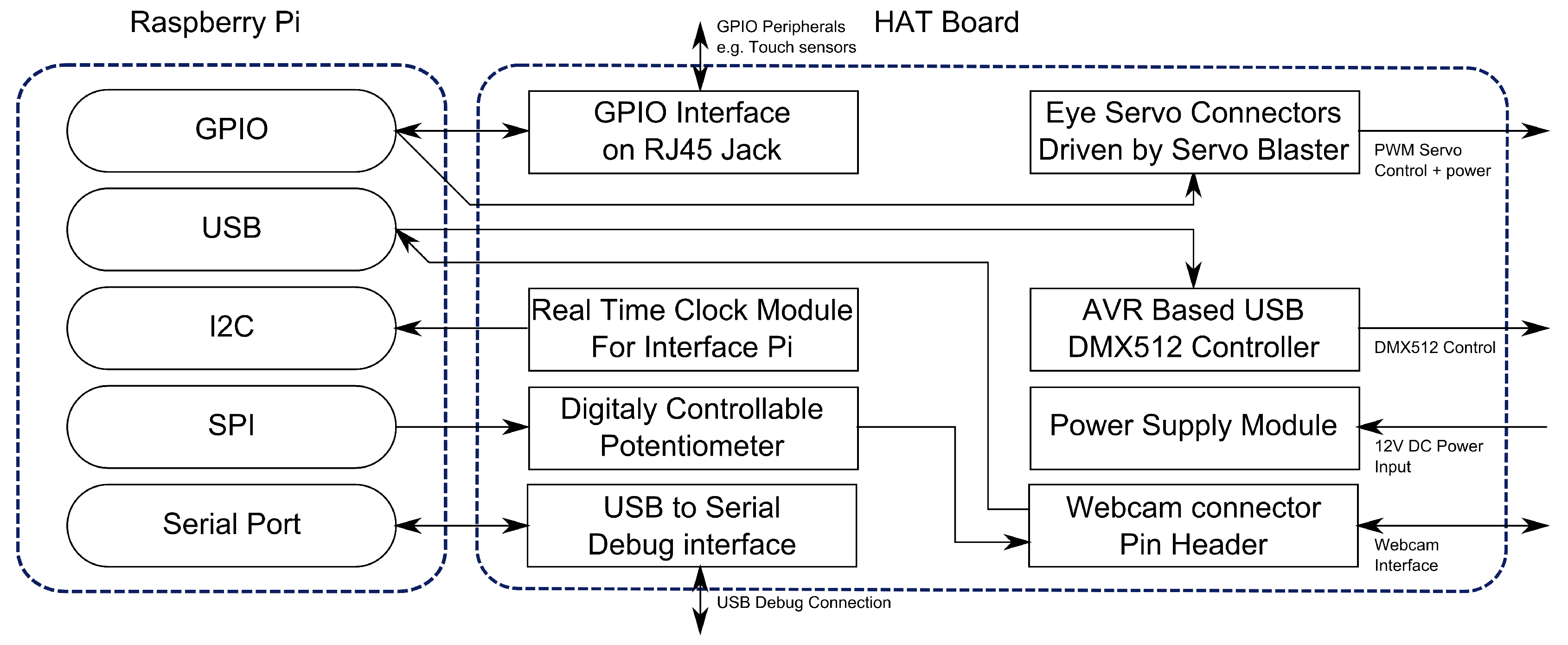

3.4. Electronic Interface Hardware

Each of the Pi SBCs in Erica has an interface board mounted to it. The interface boards were made using the Humble Pi prototyping boards, which are designed to fit on top of the Pi. Each of the Pi SBCs had a different interface board providing the necessary electronics. The Interface Pi board is the simplest, requiring only a real-time clock (RTC) to allow Erica to maintain accurate time when no internet connection is available. The Pi SBCs responsible for eye control were provided with the hardware required to drive servos and simulate blinking as discussed in Section 3.1. The Brain Pi has a digital temperature sensor (TMP102) along with connections for the touch and PIR sensors. The Mech Pi interface contained the connectors to link to the ear control board drivers and the master control hardware for the LED subsystem. An example of an interface board from this generation of hardware is shown in Figure 6a.

Erica’s other circuit boards were initially made using strip board to allow for fast iterative development of the electronics required. Whilst this enabled Erica to be made quickly, the process of making spares was extremely time consuming, leading to only a couple of spares of the most common parts being made. This led to a change in approach after the initial deployment, as at least six of each of the main boards were required.

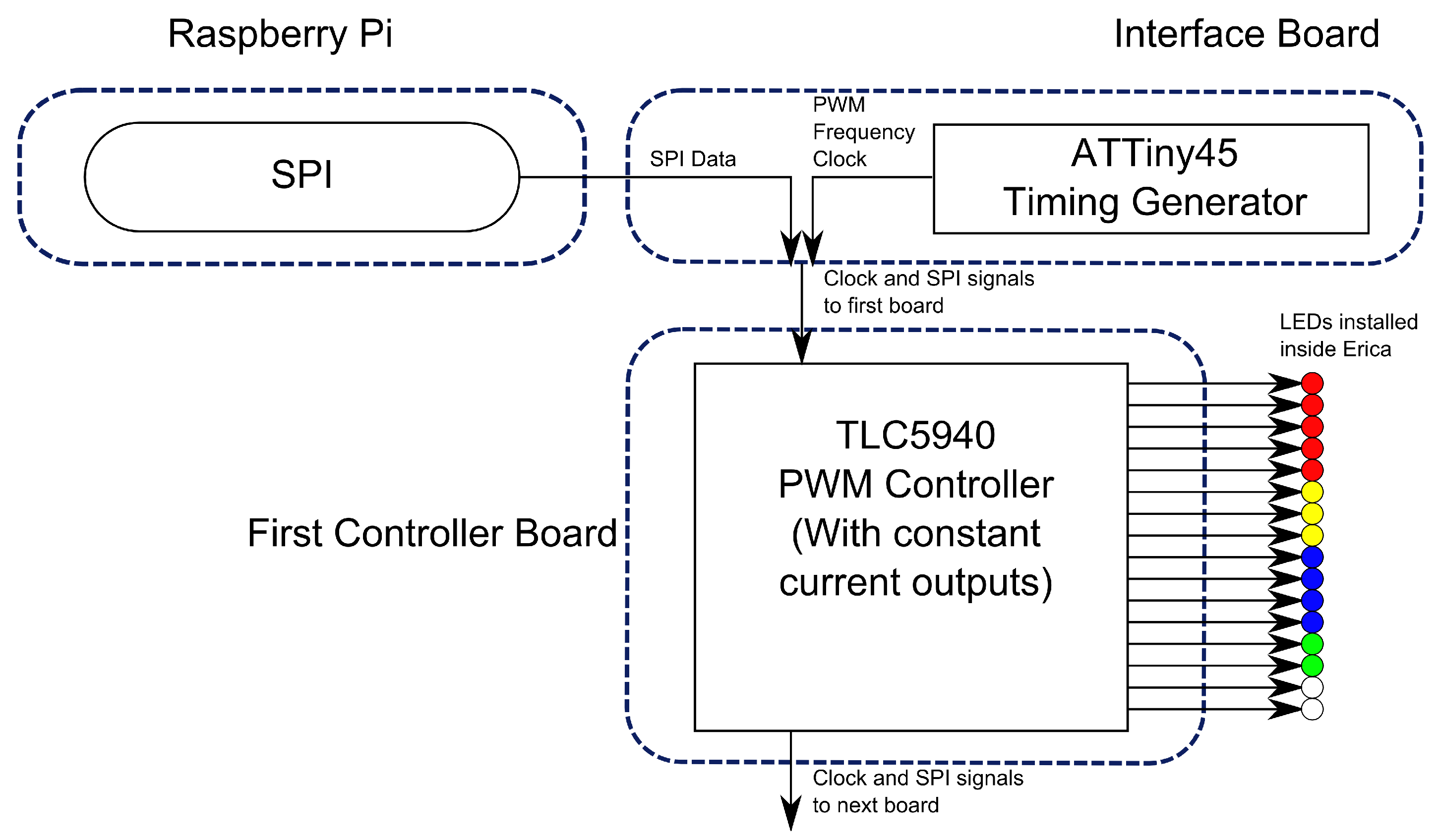

An example of a circuit board that was used widely in Erica’s construction was the LED controller. Erica has 32 single colour and 15 RGB LEDs distributed around her body. Rather than have all these LEDs connected to a single controller board, a distributed control system was used. This simplified the cabling required inside Erica. Each LED (or colour, if RGB) had a separate PWM control channel. The distributed dimmers were connected together using a shared SPI bus which originates from the Mech Pi. The structure of the lighting control can be seen in Figure 7.
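With one PWM channel per single colour LED and three per RGB LED, the figures above imply 32 + 15 × 3 = 77 channels in total. The following Python sketch builds a flat channel map on that basis; the channel names and ordering are assumptions, and the way channels were divided between the individual dimmer boards is not stated in the text.

```python
SINGLE_COLOUR_LEDS = 32  # body LEDs, one PWM channel each
RGB_LEDS = 15            # horn LEDs, three PWM channels each


def build_channel_map():
    """Assign a flat PWM channel index to every LED (or colour, if RGB)."""
    names = [f"body{i}" for i in range(SINGLE_COLOUR_LEDS)]
    for i in range(RGB_LEDS):
        for colour in ("r", "g", "b"):
            names.append(f"horn{i}.{colour}")
    return {name: index for index, name in enumerate(names)}


channel_map = build_channel_map()
print(len(channel_map), channel_map["horn0.r"], channel_map["horn14.b"])
```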

4. Go! Rhinos Deployment

In summer 2013, Marwell Wildlife organised an ‘art trail’ around the city of Southampton, UK. The 36 life size rhinos and 62 smaller rhinos were on display for 10 weeks and were enjoyed by approximately 250,000 residents and visitors [8]. At the conclusion of the art trail, the life size rhinos were sold by auction, raising a total of £124,700 for three charitable causes.

Unlike the other life-size rhinos on the art trail, Erica was located inside a local shopping centre. This location was chosen because power and network connectivity were available, and because it was realised that making Erica both rain proof and resilient to vandalism would not be feasible. There was one particular unforeseen problem. The location had a large skylight which acted like a greenhouse and allowed direct sunlight to illuminate Erica’s mostly black paintwork, resulting in her internal temperature exceeding 45 °C on several occasions. While the Raspberry Pi SBCs handled this without issue, the glue holding circuit boards and cables inside Erica was not able to cope, turning a series of neat cable looms and mounted hardware into a mess and leading to hardware failures.

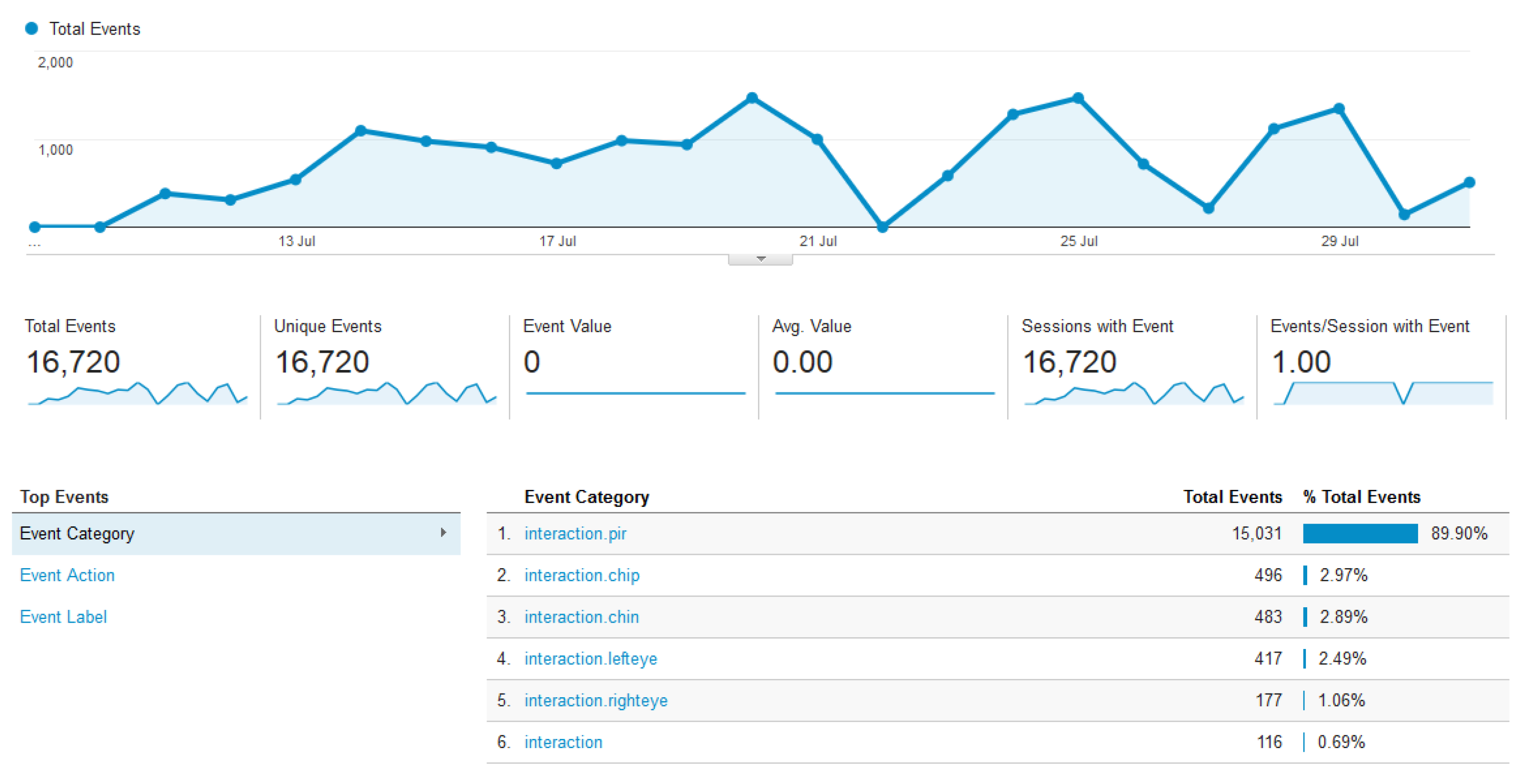

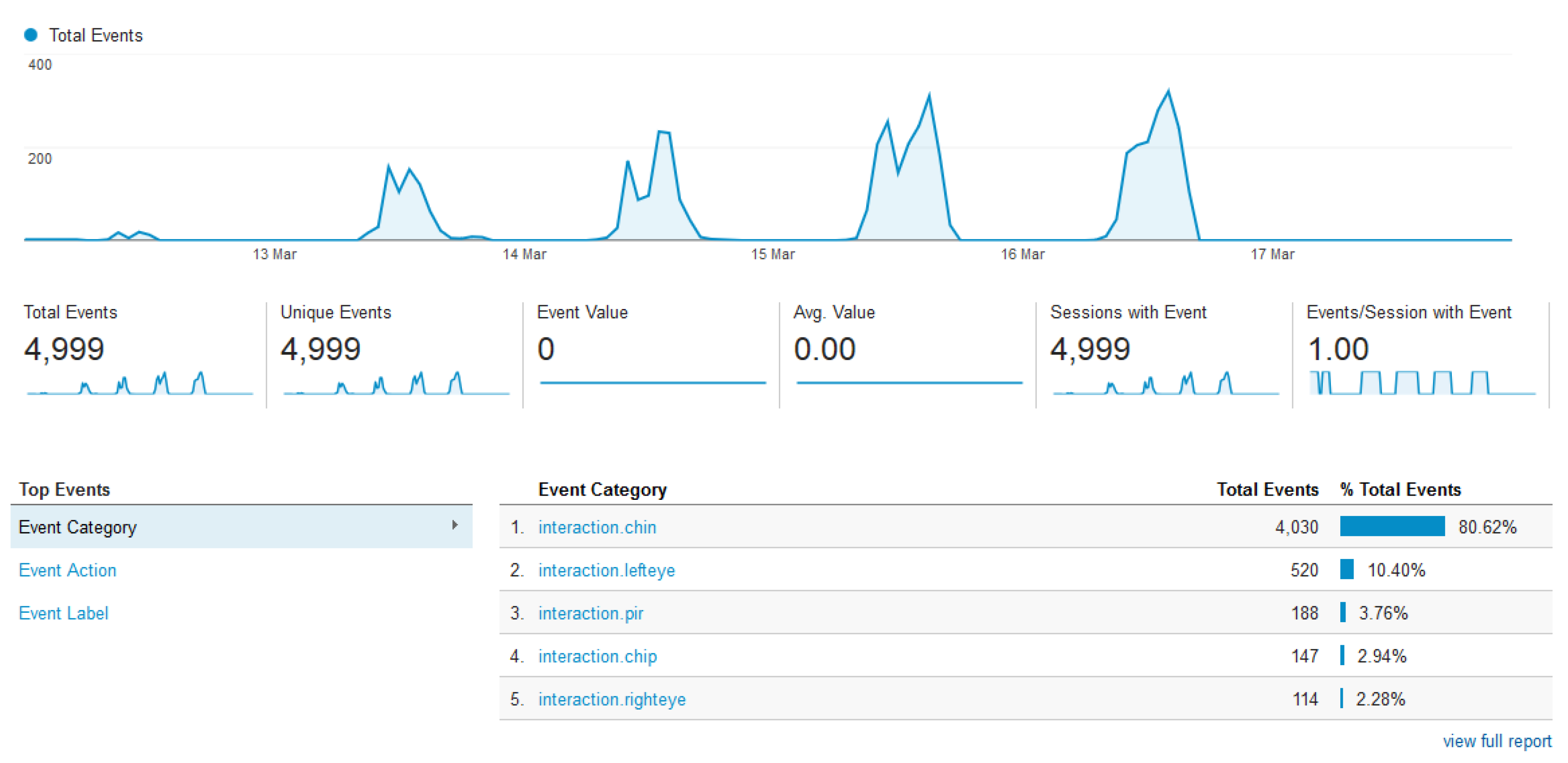

Despite the thermally induced hardware failures, Erica’s deployment was a success: her analytics, shown in Figure 8, indicate that a significant number of people interacted with her. The majority of the interactions observed were from the PIR sensor. This has been attributed to the fact that this sensor did not require visitors to actively engage with Erica, meaning a substantial proportion of the count could be people passively observing her or just walking past. It was also observed that the other interactions available were not immediately obvious. Whilst there was signage describing the different ways that Erica could be interacted with, this was not presented in a child accessible way. Children would approach Erica and start randomly touching and stroking her, until their carers explained the interaction functionality available, having read the signage provided.

One area where public expectations differed significantly from the design was the screens embedded in Erica’s sides. These displays cycled automatically through a set content sequence including details of the other rhinos on the art trail, Erica’s mood, and details of conservation efforts in the wild. The public expectation, however, was for these to be touch screens that would provide additional methods of interacting with Erica.

One of the features built into Erica that is immediately accessible is the window in her belly to view the electronics. Whilst adults tended to use the mirrors to save having to bend down, children were much more likely to crawl around underneath Erica herself in order to get the best view possible. The team of “Rhino Engineers” responsible for maintaining Erica were all issued with bright red branded t-shirts. Whilst wearing these t-shirts, team members were approached by members of the public who wanted to know more or to provide feedback, including bug reports. This feedback, combined with team members’ own observations, was used to steer decisions behind the upgrades detailed in Section 5.

5. Upgrades and Maintenance

After the Go! Rhinos deployment, Erica returned to the University and the opportunity was taken to carry out general maintenance, perform upgrades based on feedback received and repair damage sustained during the deployment. The majority of the upgrades, with the exception of upgrading to Raspberry Pi 2s, were done in order that Erica could be taken to the 2014 Big Bang fair [23] as part of the University exhibit.

5.1. Physical Changes

Whilst performing maintenance on Erica during her time on the art trail, it was found that removing the main board was a time consuming task, due to the numerous connections to the body electronics. To address this, the electronics were redesigned to use a limited number of category 5 network cables for all signals connected to the Raspberry Pi SBCs. The new design of cabling infrastructure is shown in Figure 9, with changes to the electronics discussed in Section 5.3.

The cabling redesign was also extended into the plinth on which Erica is mounted. During the Go! Rhinos deployment, Erica’s only physical external connection was the main power. This was ideal when Erica was left unattended in a shopping centre but was limiting for exhibition use and debugging. To improve usability, the plinth was fitted with PowerCon input and output power connections, network connections for both internal and external network, audio outputs (for when her internal speaker is not loud enough) and HDMI & USB connections to the interface Pi for debugging. All these connections were carried up from the plinth through Erica’s legs, but can be unplugged to enable the plinth to be removed for transport.

5.2. Processing Upgrades

The performance offered by the original Raspberry Pi model B proved to be a significant limitation and affected all stages of the project, influencing architecture decisions and limiting responsiveness to interactions. When the Raspberry Pi 2 [24] was announced in 2015, it was an obvious decision to upgrade all of Erica’s Raspberry Pi SBCs to this new model to increase performance. Erica’s overall responsiveness improved, allowing for more complex interactions, but the biggest difference observed was in the performance of her eyes. Face detection now happens significantly faster and it was possible to implement the ‘QR Cubes’ and ‘See what I see’ features, as discussed in Section 5.4.

During the deployment, issues with SD card reliability were encountered. These issues have been explained by the fact that, in all deployment scenarios, the power has occasionally been cut off without performing a graceful shutdown first. This has been a recurring problem through Erica’s multi-year lifetime. In order to simplify the process of recreating SD cards when needed and keeping the systems up to date, Puppet [25] scripts were created allowing the images to be rebuilt on replacement cards.

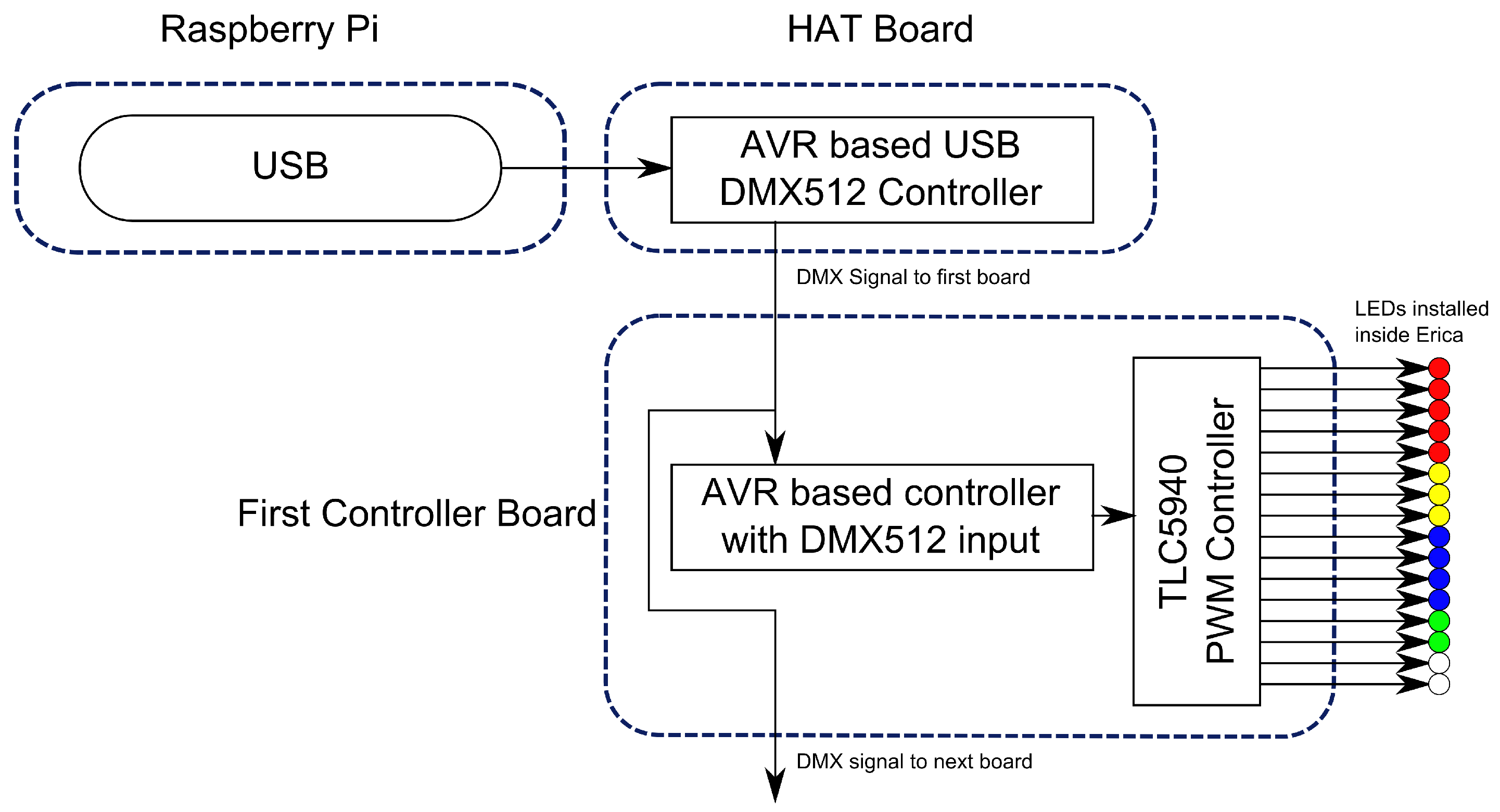

The LED subsystem had proven to be unreliable and susceptible to RF interference during the Go! Rhinos deployment. This was primarily due to the use of 3.3-volt SPI signals over excessively long cables. The replacement communication protocol chosen was DMX512 [26] as this is designed to cope with cable lengths significantly greater than needed. Given this change, a new design of hardware was needed, as shown in Figure 10. The hardware required for the main control interface is shown in Figure 6b. Having learnt from the scalability issues of using stripboard and having more development time, a PCB was created and the interface on the Pi was replaced with an open source DMX controller.
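In DMX512, each packet carries a start code (zero for ordinary dimmer data) followed by up to 512 eight-bit channel slots. The Python sketch below assembles only that data portion from a list of channel levels; the break and mark-after-break signalling that precede each packet on the wire are assumed to be handled by the DMX controller hardware, and the function name is illustrative.

```python
DMX_START_CODE = 0x00  # null start code used for standard dimmer data
DMX_MAX_SLOTS = 512    # maximum number of channel slots in one packet


def build_dmx_frame(levels):
    """Return the start code followed by one byte per channel level."""
    if len(levels) > DMX_MAX_SLOTS:
        raise ValueError("DMX512 carries at most 512 channel slots")
    for level in levels:
        if not 0 <= level <= 255:
            raise ValueError(f"channel level {level} is outside 0..255")
    return bytes([DMX_START_CODE]) + bytes(levels)


frame = build_dmx_frame([0, 128, 255])  # three channels: off, half, full
print(frame.hex())
```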

5.3. LED Hardware Upgrades

The form factor change of the Pi 2 when compared to the model B Pi required a redesign of the hardware interface boards. This new generation of boards was designed to be HAT-compliant (Hardware Attached on Top) [27]. Rather than create a separate HAT for each function, it was decided that a single modular HAT design (as shown diagrammatically in Figure 11 and built in Figure 6c) would simplify deployment and maintenance. These HATs contain an RTC, eye control hardware, a DMX controller and GPIO (General Purpose Input/Output) breakout. The designs for these HATs and all the associated software are open source and are available from the Erica GitHub [28].

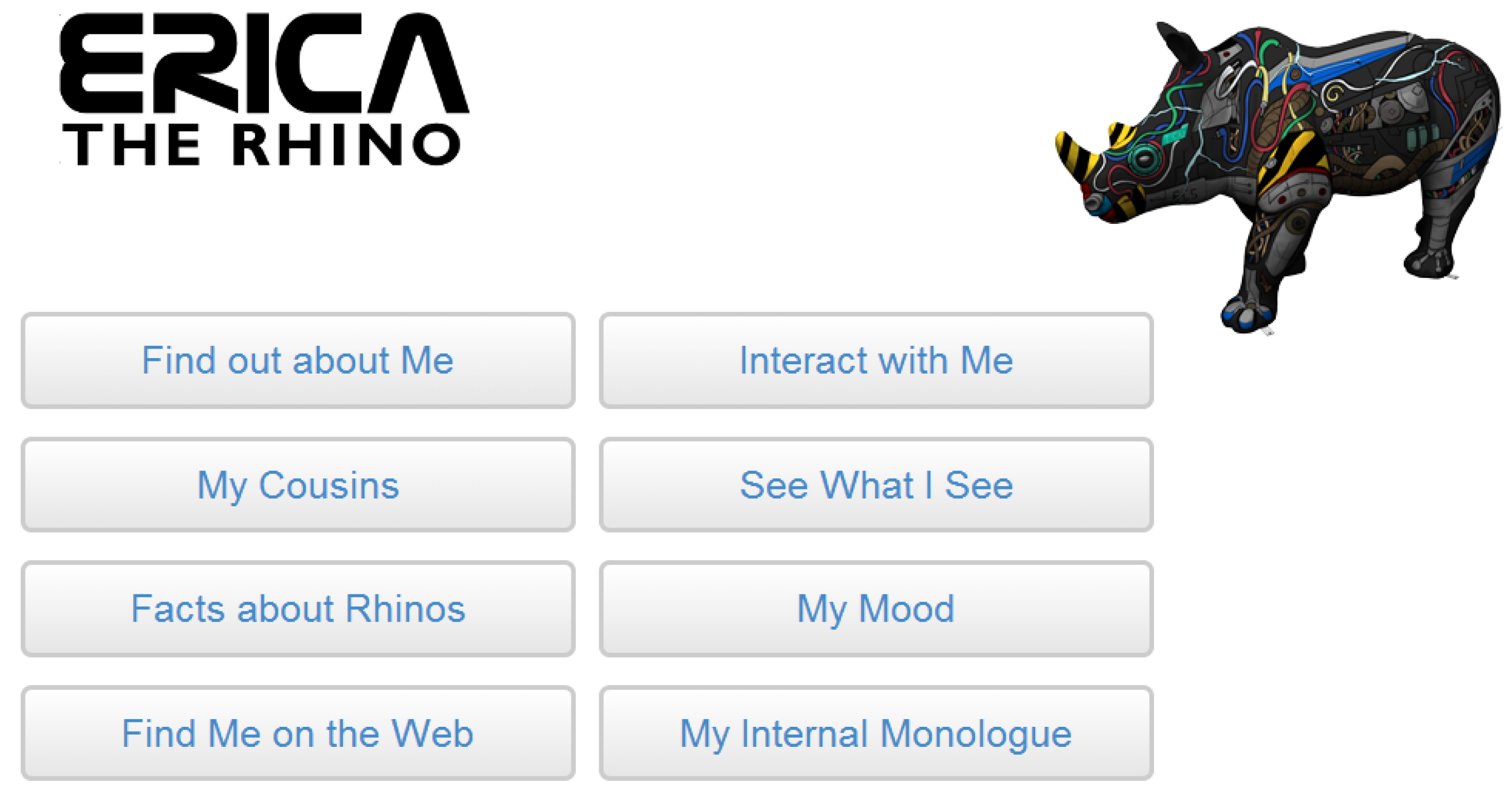

5.4. Screens & Interaction

Initially, the 7” screens mounted in Erica’s sides were HDMI monitors attached to the Brain and Interface Pi SBCs. These were intended to display a loop of static pages for visitors to consume. However, shortly after deploying them onto the art trail, several passers-by commented that they were expecting them to be touch screens with interactive content. This reaction continued throughout the deployment. Therefore, it was decided to make an architectural change and replace these screens with Android-based tablet devices connected to Erica’s local wireless network to provide touch interaction with dynamic content. This was done before the Pi touch [29] displays were available, and if this were to be done now, these displays would be the more obvious choice.

The tablets display a web-based menu system in a kiosk-mode browser that allows visitors to interactively view information about Erica, her mood, rhino conservation and the other Rhinos from the Go! Rhinos campaign. They also allow visitors to trigger Erica’s eye movement, ear movement, horn LED colour change and body LED animation. The decision was also taken to allow people to see what Erica could see as it was a requested feature. A screen shot of the web interface is shown in Figure 12.

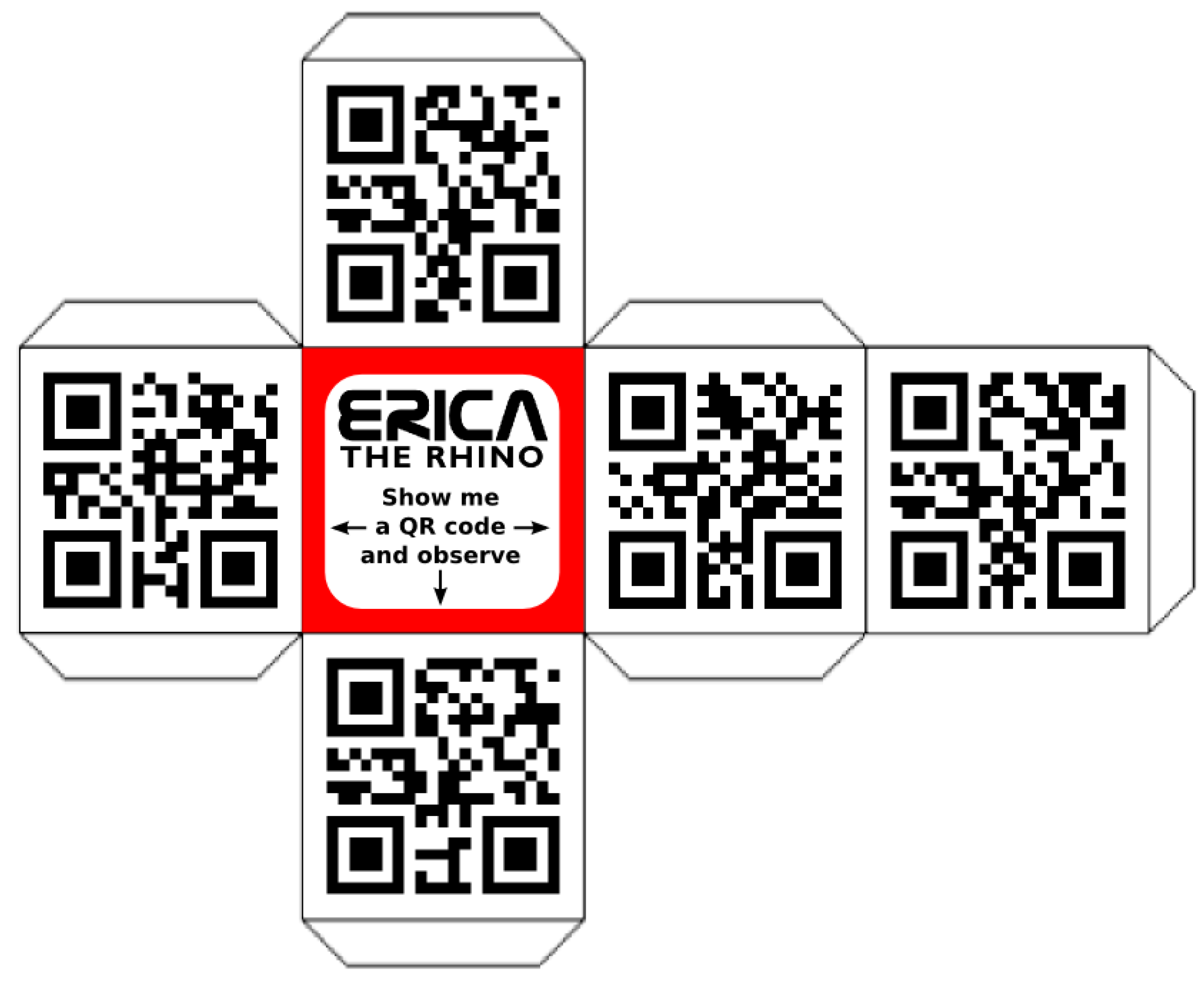

Even after introducing interactive touch screens, it was still felt that the range and ease of interactions with Erica were lacking. The ability to identify QR codes using Erica’s two webcam eyes had never been fully exploited in a way that was simple and intuitive to an average visitor. Therefore, in June 2015, prior to the University of Southampton’s open days, a number of cardboard cubes were constructed, as illustrated in Figure 13.

Each of the five QR codes on each cube represents a word within a theme and leads to some reaction from Erica. One set of codes plays samples of music across a particular theme. Another set allows all of the body LEDs of one colour to be switched on or off, depending on which eye the QR code is presented to. The final set expresses one of five different emotions, each involving at least two separate outputs.
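As a rough illustration of how the three sets of cube codes could be dispatched, the following Python sketch maps a decoded QR payload and the eye that saw it to a list of output actions. The `theme:word` payload format, the word lists, the action strings and the choice of the left eye for "on" and the right eye for "off" are all assumptions made for this example; they are not taken from Erica’s software.

```python
from typing import Dict, List, Set

# Hypothetical word lists: one word per QR code, five codes per cube.
MUSIC_WORDS = {"jazz", "rock", "classical", "pop", "folk"}
COLOUR_WORDS = {"red", "green", "blue", "white", "yellow"}

# Each emotion drives at least two separate outputs, as described above.
EMOTION_OUTPUTS: Dict[str, List[str]] = {
    "happy": ["sound:happy", "ears:wiggle"],
    "sad": ["sound:sad", "eyes:look_down"],
    "angry": ["sound:snort", "horn:red"],
    "sleepy": ["sound:yawn", "eyes:close"],
    "excited": ["sound:cheer", "body_leds:rainbow"],
}


def handle_scan(payload: str, eye: str, lit_colours: Set[str]) -> List[str]:
    """Translate one QR scan into the list of actions to perform."""
    theme, _, word = payload.partition(":")
    if theme == "music" and word in MUSIC_WORDS:
        return [f"play_music:{word}"]
    if theme == "colour" and word in COLOUR_WORDS:
        # Assumption: left eye switches a colour on, right eye switches it off.
        if eye == "left":
            lit_colours.add(word)
            return [f"leds_on:{word}"]
        lit_colours.discard(word)
        return [f"leds_off:{word}"]
    if theme == "emotion" and word in EMOTION_OUTPUTS:
        return list(EMOTION_OUTPUTS[word])
    # Unknown codes are ignored rather than raising an error.
    return []
```

For instance, under these assumptions, presenting a `colour:red` code to the left eye yields `["leds_on:red"]`, and presenting the same code to the right eye switches the red LEDs off again.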

These cubes have been particularly useful in increasing the number of day-to-day interactions with Erica in her permanent home at the University of Southampton. The amount of time a right-eye QR code scan has spent in Erica’s short-term memory has increased twenty-fold and, for the left eye, over one-hundred-fold.

Whilst these improvements were being developed and deployed, Erica was touring the country and receiving visitors at her permanent home in Southampton.

6. Public Engagement and Impact

In terms of public engagement, there were three key outcomes from developing Erica.

While Erica was being initially developed, nine classes (approximately 230 pupils) were invited to see Erica at the University and discuss the sorts of interactions that they could imagine having with her. The pupils then learned about the basics of programming and how the hardware and software inside Erica worked. This was evaluated using questionnaires given to all students, which showed that the classes enjoyed understanding the potential of technology. All of these classes have since returned to the university for follow-up computing workshops.

Feedback from the general public showed that the mirror tiles placed underneath Erica, which allowed people to easily see the technology inside, and the visits from the ‘rhino engineers’ when things needed fixing were both received positively. In particular, they helped make the public aware that this was a research project rather than a commercial one and gave people the opportunity to find out how Erica functioned.

While Erica was on display during the Go! Rhinos campaign, a pop-up classroom was run that taught programming to almost 1200 young people from the local community. They were told about Raspberry Pi computers and how they could use software to build their own rhino components. Several participants made return visits to this workshop, and parents were impressed at how much their children had learnt and carried on learning at home. These sessions were run in addition to the outreach sessions organised by Marwell Wildlife as part of the wider Go! Rhinos campaign. This campaign proved so successful that Marwell Wildlife are organising a follow-on event this year focusing on zebras [30].

As a result of the project, the authors have been approached by the organisers of science public engagement events to take Erica on tour. Erica was on display at the Big Bang Fair in March 2014, where there were approximately 5000 interactions over the four-day event. Approximately 4000 of these interactions were people “feeding” Erica by touching her chin sensor, as shown in Figure 14. Erica was also at the 2015 Cheltenham Science Festival. In addition to external visits, she has been part of the internal university science days for the last three years, which see approximately 4000 to 5000 people through the door each year.

No matter where Erica has been displayed, she has received interest from parents and children alike, with conversations ranging from electronics and programming to rhino conservation via her artwork. She was a finalist in the UK Public Engagement Awards, obtained a University of Southampton Vice-Chancellor’s award, appeared in international media [31] and has been used as an example of Pi outreach by companies such as RS [32] and Rapid Electronics [33].

Erica is now on permanent display in the foyer of the Mountbatten building at the University of Southampton where she has regular interactions with staff and students of the University along with members of the local community. It is safe to say that Erica is now a local celebrity!

7. Conclusions

Erica the Rhino was created as a piece of interactive artwork to promote Electronics and Computer Science at the University of Southampton. In order to achieve this, an inter-disciplinary team was brought together from across the University. A feature set was decided upon and implemented, along with an artistic design for Erica’s exterior. Raspberry Pi SBCs were chosen to implement these features so that anyone interested in the technologies in use could acquire the same hardware cheaply and experiment themselves. Furthermore, as all the software and custom hardware created for this project is Open Source, other parties could develop their own art pieces using the same foundations.

The choice of Raspberry Pi SBCs to provide the compute power inside Erica has influenced her entire design, both in terms of the features available and how they are implemented. The same features could have been implemented using a less complicated architecture by combining a few Arduinos [34] with a small form factor PC. However, the outreach and engagement benefits of using the Pi have vastly outweighed the additional complication that it brought. In terms of outreach, Erica has been seen by several thousand young people, some of whom have been inspired to continue learning at home, and has prompted conversations on a wide variety of topics. Overall, the entire project has been very successful, surpassing any expectations that the team had when the project was started.