Dunhuang Mural Style Transfer Using Vision Mamba: In-Context Prompting and Physically Motivated HSV Modulation

Abstract

1. Introduction

- Core Framework: We propose Dh-Mamba, a novel hierarchical visual Mamba framework tailored for high-fidelity cultural heritage style transfer. By replacing quadratic-cost attention with linear-time in-context prompting (CrossMamba), it achieves globally consistent style propagation while remaining computationally efficient.

- Key Mechanisms: Within this framework, we design a style-aware dynamic modulation strategy. By dynamically regulating the memory horizon of the state-space model based on target texture complexity, the network achieves adaptive control over the granularity and continuity of the generated strokes.

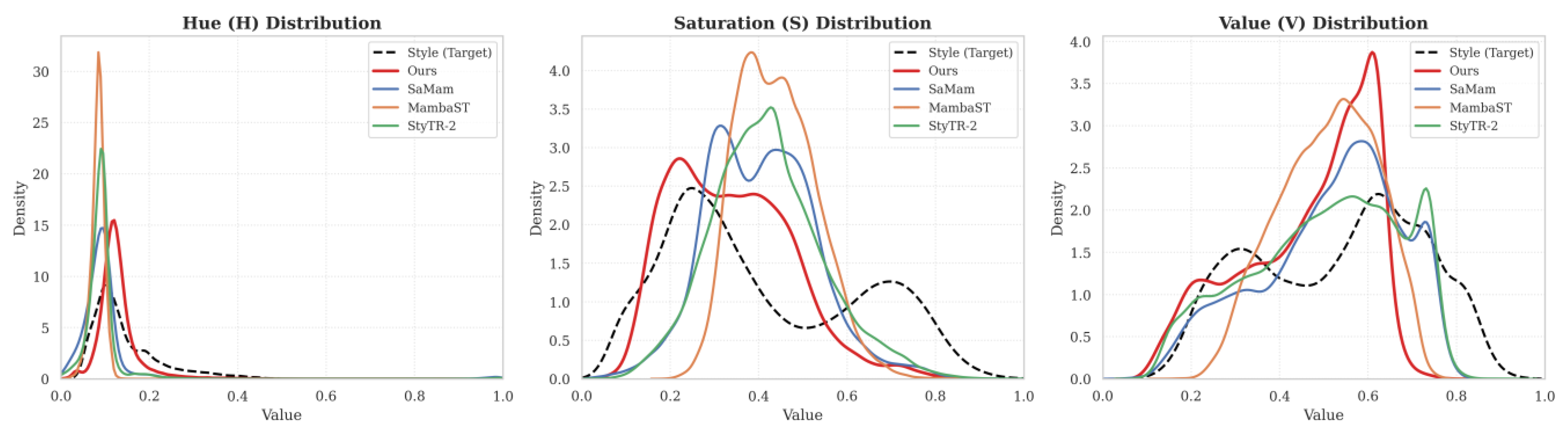

- Physically Motivated Design: Motivated by the multidimensional physical degradation of Dunhuang murals (e.g., pigment oxidation), we introduce an Orthogonal Decoupled HSV Modulator. By enforcing orthogonal regularization in the latent space, it facilitates the disentanglement of color and structure attributes, mitigating oxidation-related artifacts and accurately reproducing authentic mineral pigment palettes without structural contamination.
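Two of the mechanisms listed above can be illustrated with a minimal sketch. It assumes a scalar state-space model with zero-order-hold discretization, and it uses a squared cross-Gram penalty as a stand-in for the orthogonal regularizer. The function names (`decay_factor`, `memory_horizon`, `scan`, `orthogonality_penalty`), the value `a = -1`, and the 1% threshold are hypothetical choices made for illustration. How Dh-Mamba maps texture complexity to the step size Δ, and the exact form of its orthogonal loss, are not reproduced here.

```python
import math


def decay_factor(delta: float, a: float = -1.0) -> float:
    """Zero-order-hold discretization of a scalar SSM: A_bar = exp(delta * a)."""
    return math.exp(delta * a)


def memory_horizon(delta: float, a: float = -1.0, threshold: float = 0.01) -> int:
    """Steps until a past input's weight A_bar**k drops below `threshold`.

    Assumes a < 0 and delta > 0, so that A_bar lies in (0, 1).
    """
    # A_bar**k < threshold  <=>  k > ln(threshold) / (delta * a)
    return math.ceil(math.log(threshold) / (delta * a))


def scan(inputs, delta: float, a: float = -1.0, b: float = 1.0):
    """Recurrence h_t = A_bar * h_{t-1} + B_bar * x_t, one pass over the sequence."""
    a_bar = decay_factor(delta, a)
    b_bar = (a_bar - 1.0) / a * b  # zero-order-hold input coefficient for scalar a != 0
    h, outputs = 0.0, []
    for x in inputs:
        h = a_bar * h + b_bar * x
        outputs.append(h)
    return outputs


def orthogonality_penalty(color_feats, struct_feats) -> float:
    """Sum of squared dot products between every color/structure vector pair.

    The value is zero exactly when the two sets of vectors are mutually orthogonal.
    """
    return sum(
        sum(c * s for c, s in zip(color_vec, struct_vec)) ** 2
        for color_vec in color_feats
        for struct_vec in struct_feats
    )
```

Under these assumptions, `memory_horizon(0.1)` is 47 steps and `memory_horizon(1.0)` is 5 steps: a smaller Δ keeps earlier tokens influential for longer, while a larger Δ makes the state forget quickly. The cost of `scan` grows linearly with sequence length because each token is visited once.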

2. Related Work

2.1. CNN-Based and GAN-Based Style Transfer

2.2. Transformer-Based Style Transfer

2.3. State Space Models for Style Transfer

3. Methodology

3.1. Prerequisite Knowledge: Discretized State-Space Model

3.2. Overview

3.3. CrossMamba: In-Context Style Injection

3.4. Style-Aware Dynamics Modulation

3.5. Orthogonal HSV Modulator

3.6. Loss Functions

- Perceptual Content Loss: the Euclidean distance between the feature maps of the generated image and the content image at the relu4_1 layer of the VGG-19 network.

- Style Loss: matches the Gram matrix statistics of multi-layer VGG features.

- Wavelet Texture Loss: To suppress checkerboard artifacts and enhance high-frequency details, we apply a Haar discrete wavelet transform (DWT). The image is decomposed into the low-frequency sub-band LL and the high-frequency sub-bands {LH, HL, HH}, and the constraint is applied only to the high-frequency components.
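The three loss terms above can be sketched in plain Python on small nested lists. This is an illustrative sketch under stated assumptions, not the training code: feature maps are given as lists of flattened channel vectors (VGG-19 extraction is omitted), the Gram matrix is normalized by the number of spatial positions, the high-frequency term uses a mean absolute difference, and the image used as `target` for the wavelet term is left to the caller. All function names are hypothetical.

```python
import math


def content_loss(gen_feats, content_feats) -> float:
    """Euclidean distance between two feature maps (lists of channel vectors)."""
    return math.sqrt(sum(
        (x - y) ** 2
        for g_ch, c_ch in zip(gen_feats, content_feats)
        for x, y in zip(g_ch, c_ch)
    ))


def gram(feats):
    """C x C Gram matrix of C channel vectors, normalized by their length N."""
    n = len(feats[0])
    return [[sum(x * y for x, y in zip(fi, fj)) / n for fj in feats] for fi in feats]


def style_loss(gen_feats, style_feats) -> float:
    """Mean squared difference between the Gram matrices of one layer."""
    g, s = gram(gen_feats), gram(style_feats)
    c = len(g)
    return sum((g[i][j] - s[i][j]) ** 2 for i in range(c) for j in range(c)) / (c * c)


def haar_dwt2(img):
    """Single-level 2D Haar transform of an even-sized grayscale image.

    Returns (LL, LH, HL, HH); the LH/HL naming convention varies between libraries.
    """
    ll, lh, hl, hh = [], [], [], []
    for r in range(0, len(img), 2):
        rows = ([], [], [], [])
        for c in range(0, len(img[0]), 2):
            a, b = img[r][c], img[r][c + 1]
            d, e = img[r + 1][c], img[r + 1][c + 1]
            rows[0].append((a + b + d + e) / 2)  # low-frequency average
            rows[1].append((a + b - d - e) / 2)  # difference between rows
            rows[2].append((a - b + d - e) / 2)  # difference between columns
            rows[3].append((a - b - d + e) / 2)  # diagonal difference
        for band, row in zip((ll, lh, hl, hh), rows):
            band.append(row)
    return ll, lh, hl, hh


def wavelet_loss(gen, target) -> float:
    """Mean absolute difference over the three high-frequency sub-bands only."""
    gen_hf, tgt_hf = haar_dwt2(gen)[1:], haar_dwt2(target)[1:]
    diffs = [
        abs(x - y)
        for g_band, t_band in zip(gen_hf, tgt_hf)
        for g_row, t_row in zip(g_band, t_band)
        for x, y in zip(g_row, t_row)
    ]
    return sum(diffs) / len(diffs)
```

Because the LL sub-band is excluded, two images that differ only by a constant brightness offset give a wavelet loss of zero; the term reacts only to differences in edges and fine texture.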

4. Experiments

4.1. Implementation Details

4.2. Evaluation Details

4.3. Quantitative Results

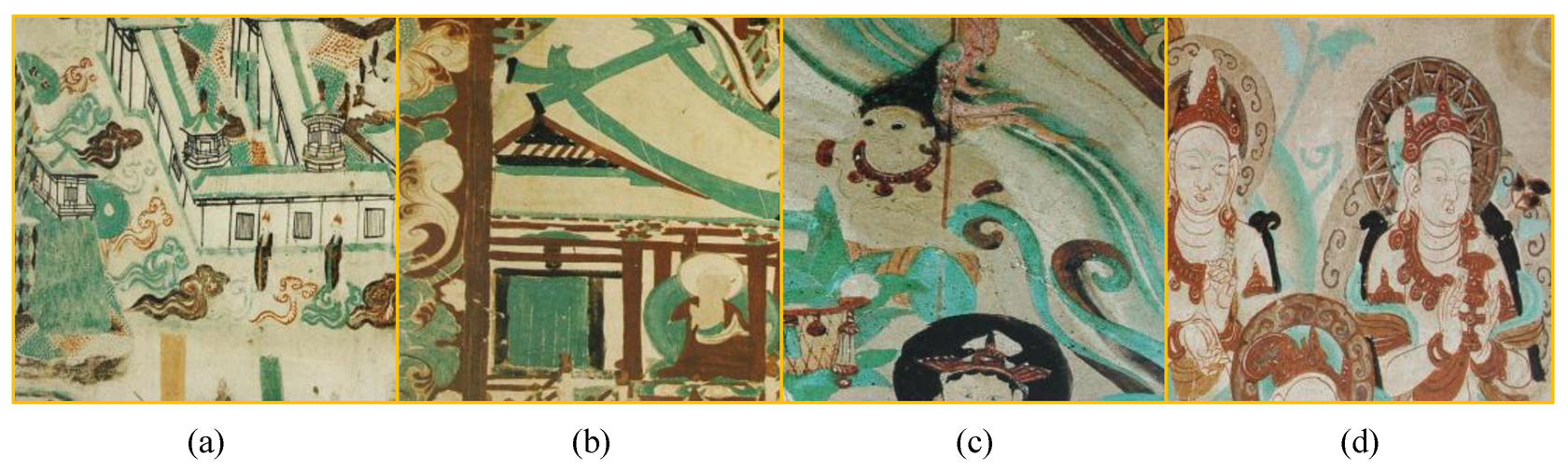

4.4. Qualitative Analysis

4.5. Ablation Study

4.5.1. Additive Ablation on Core Modules

4.5.2. Fine-Grained Ablation on CrossMamba and Key Sub-Designs

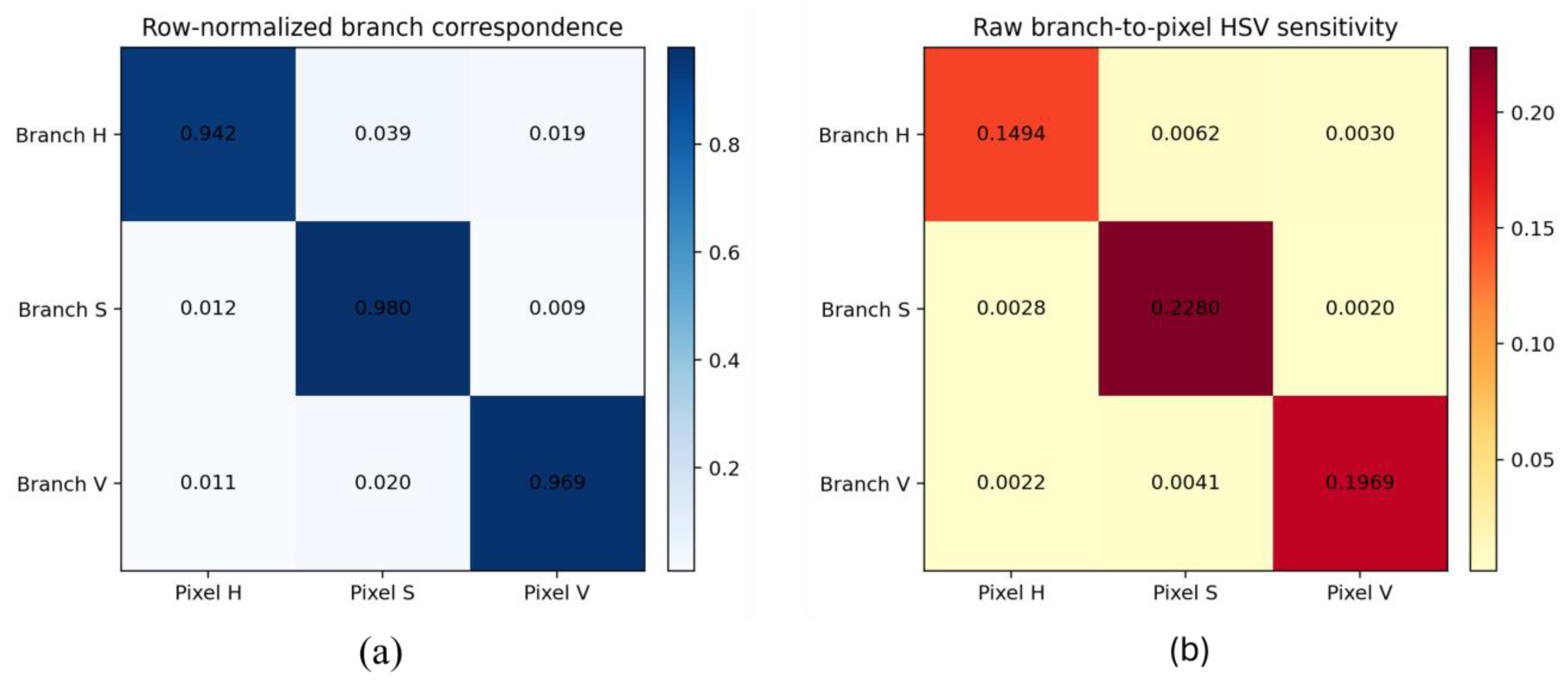

4.5.3. Validation of Branch-to-Pixel HSV Correspondence

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IST | Image Style Transfer |
| CNN | Convolutional Neural Network |
| GAN | Generative Adversarial Network |
| SSM | Structured State Space Model |
| HSV | Hue–Saturation–Value |
| DWT | Discrete Wavelet Transform |
| FID | Fréchet Inception Distance |
| ArtFID | Art Fréchet Inception Distance |
| LPIPS | Learned Perceptual Image Patch Similarity |
| VGG | Visual Geometry Group network |
| LLM | Large Language Model |
| U-Net | U-shaped Network |
| GPU | Graphics Processing Unit |

References

- Gatys, L.A.; Ecker, A.S.; Bethge, M. Image style transfer using convolutional neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 2414–2423.

- Jing, Y.; Yang, Y.; Feng, Z.; Ye, J.; Yu, Y.; Song, M. Neural style transfer: A review. IEEE Trans. Vis. Comput. Graph. 2020, 26, 3365–3385.

- Chen, D.; Yuan, L.; Liao, J.; Yu, N.; Hua, G. Stereoscopic neural style transfer. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 6654–6663.

- Cao, Y.; Zhang, Y.; Lin, Y.; Wu, K. Dunhuang art style transfer via hierarchical vision transformer and color consistency constraints. IEEE Trans. Consum. Electron. 2025, 71, 3240–3251.

- Ding, Y.; Wang, H.; Liu, N.; Li, T. TCP-RBA: Semi-supervised learning for traditional Chinese painting classification with random brushwork augment. J. Intell. Fuzzy Syst. 2024, 46, 10653–10663.

- Liu, N.; Wang, H.; Lu, L.; Ding, Y.; Tian, M. Research on the Influence of Multi-Scene Feature Classification on Ink and Wash Style Transfer Effect of ChipGAN. IEEE Access 2024, 12, 129733–129752.

- Chen, C.; Wang, H. Traditional cloud pattern classification algorithm based on semi-supervision with random line augment. Sci. Rep. 2025, 15, 29225.

- Huang, X.; Belongie, S. Arbitrary style transfer in real-time with adaptive instance normalization. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 1501–1510.

- Park, D.Y.; Lee, K.H. Arbitrary style transfer with style-attentional networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 5880–5888.

- Zhang, Y.; Li, M.; Li, R.; Jia, K.; Zhang, L. Exact feature distribution matching for arbitrary style transfer and domain generalization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 8035–8045.

- Risser, E.; Wilmot, P.; Barnes, C. Stable and controllable neural texture synthesis and style transfer using histogram losses. arXiv 2017, arXiv:1701.08893.

- Zhang, H.; Dana, K. Multi-style generative network for real-time transfer. In Proceedings of the European Conference on Computer Vision (ECCV) Workshops, Munich, Germany, 8–14 September 2018.

- Deng, Y.; Tang, F.; Dong, W.; Ma, C.; Pan, X.; Wang, L.; Xu, C. StyTr2: Image style transfer with transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11326–11336.

- Wu, X.; Hu, Z.; Sheng, L.; Xu, D. StyleFormer: Real-time arbitrary style transfer via parametric style composition. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 14618–14627.

- Huang, S.; An, J.; Wei, D.; Luo, J.; Pfister, H. QuantArt: Quantizing image style transfer towards high visual fidelity. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 5947–5956.

- Dosovitskiy, A. An image is worth 16 × 16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.

- Yoo, J.; Uh, Y.; Chun, S.; Kang, B.; Ha, J.W. Photorealistic style transfer via wavelet transforms. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 9036–9045.

- Chen, Z.; Wang, W.; Xie, E.; Lu, T.; Luo, P. Towards ultra-resolution neural style transfer via thumbnail instance normalization. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 22 February–1 March 2022; Volume 36, pp. 393–401.

- Gu, A.; Dao, T. Mamba: Linear-Time Sequence Modeling with Selective State Spaces. arXiv 2023, arXiv:2312.00752.

- Liu, H.; Wang, L.; Zhang, Y.; Yu, Z.; Guo, Y. SaMam: Style-aware state space model for arbitrary image style transfer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 11–15 June 2025; pp. 28468–28478.

- Botti, F.; Ergasti, A.; Rossi, L.; Fontanini, T.; Ferrari, C.; Bertozzi, M.; Prati, A. Mamba-ST: State space model for efficient style transfer. In Proceedings of the 2025 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Tucson, AZ, USA, 28 February–4 March 2025; pp. 7797–7806.

- Zhu, L.; Liao, B.; Zhang, Q.; Wang, X.; Liu, W.; Wang, X. Vision Mamba: Efficient visual representation learning with bidirectional state space model. arXiv 2024, arXiv:2401.09417.

- Liu, Y.; Tian, Y.; Zhao, Y.; Yu, H.; Xie, L.; Wang, Y.; Sun, J.; Liu, Y. VMamba: Visual state space model. Adv. Neural Inf. Process. Syst. 2024, 37, 103031–103063.

- Jeon, M. Adaptive normalization mamba with multi scale trend decomposition and patch moe encoding. arXiv 2025, arXiv:2512.06929.

- Chung, J.; Hyun, S.; Heo, J.P. Style injection in diffusion: A training-free approach for adapting large-scale diffusion models for style transfer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 8795–8805.

- Zhang, Y.; Huang, N.; Tang, F.; Huang, H.; Ma, C.; Dong, W.; Xu, C. Inversion-based style transfer with diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 10146–10156.

- Li, X.; Cao, H.; Zhang, Z.; Hu, J.; Jin, Y.; Zhao, Z. Artistic neural style transfer algorithms with activation smoothing. In Proceedings of the 2025 2nd International Conference on Informatics Education and Computer Technology Applications (IECCT), Kuala Lumpur, Malaysia, 17–19 January 2025; pp. 1–6.

- Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired image-to-image translation using cycle-consistent adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2223–2232.

- Zheng, C.; Cham, T.J.; Cai, J. The spatially-correlative loss for various image translation tasks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 16407–16417.

- Liu, S.; Lin, T.; He, D.; Li, F.; Wang, M.; Li, X.; Sun, Z.; Li, Q.; Ding, E. AdaAttN: Revisit attention mechanism in arbitrary neural style transfer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 6649–6658.

- Hong, K.; Jean, S.; Lee, J.; Ahn, N.; Kim, K.; Lee, P.; Kim, D.; Uh, Y.; Byun, H. AesPA-Net: Aesthetic Pattern-Aware Style Transfer Networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 1–6 October 2023; pp. 22758–22767.

- Kwon, G.; Ye, J.C. ClipStyler: Image style transfer with a single text condition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 18062–18071.

- Wang, Z.; Liu, Z.-S. StyleMamba: State Space Model for Efficient Text-driven Image Style Transfer. arXiv 2024, arXiv:2405.05027.

- Hong, Z.; Dong, N.; Di, Y.; Xu, X.; Hu, R.; Shao, Y.; Ling, R.; Wang, Y.; Wang, J.; Zhang, Z.; et al. StyMam: A Mamba-Based Generator for Artistic Style Transfer. arXiv 2026, arXiv:2601.12954.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 2020, 33, 1877–1901.

| Model | Type | ArtFID ↓ | FID ↓ | LPIPS ↓ | CFSD ↓ | Memory Usage (MiB) ↓ |
|---|---|---|---|---|---|---|
| F-LSeSim | GAN | 209.01 | 117.83 | 0.76 | 0.57 | 10.52 |
| CycleGAN | GAN | 285.56 | 172.01 | 0.66 | 0.50 | 11.43 |
| AdaAttN | Transformer | 234.12 | 144.52 | 0.62 | 0.53 | 26.57 |
| AesPA-Net | Transformer | 295.74 | 186.32 | 0.59 | 0.52 | 24.20 |
| StyTr2 | Transformer | 245.44 | 155.98 | 0.58 | 0.49 | 35.39 |
| SaMam | Mamba | 242.68 | 156.21 | 0.56 | 0.48 | 18.50 |
| Mamba-ST | Mamba | 214.06 | 142.11 | 0.51 | 0.55 | 14.14 |
| Ours | Mamba | 163.15 | 106.05 | 0.53 | 0.51 | 20.14 |

| Model | 256 × 256 (ms) | 512 × 512 (ms) | 1024 × 1024 (ms) | 2048 × 2048 (ms) |
|---|---|---|---|---|
| AdaAttN | 3193.03 | 3676.55 | 4083.25 | 5360.98 |
| AesPA-Net | 3168.75 | 3576.83 | 4083.25 | 5360.98 |
| StyTr2 | 3243.95 | 3495.89 | 5716.82 | — |
| SaMam | 42.50 | 155.15 | 668.10 | 3126.91 |
| Mamba-ST | 39.74 | 166.45 | 659.73 | 3029.42 |
| Ours | 37.21 | 144.89 | 599.23 | 3042.88 |

| Model | Style-Aware Δ | HSV Modulator | CrossMamba | ArtFID ↓ | FID ↓ | LPIPS ↓ | CFSD ↓ | MiB ↓ |
|---|---|---|---|---|---|---|---|---|
| Baseline | False | False | False | 195.38 | 125.01 | 0.57 | 0.52 | 18.17 |
| Model A (dt) | True | False | False | 191.07 | 123.68 | 0.54 | 0.52 | 18.47 |
| Model B (HSV) | False | True | False | 204.10 | 131.66 | 0.55 | 0.51 | 18.37 |
| Model (dt + HSV) | True | True | False | 186.15 | 119.89 | 0.53 | 0.51 | 19.57 |
| Full Model | True | True | True | 163.15 | 106.05 | 0.52 | 0.50 | 20.14 |

| ID | Configuration | ArtFID ↓ | FID ↓ | LPIPS ↓ | CFSD ↓ |
|---|---|---|---|---|---|
| A | Bi-CrossMamba | 163.15 | 106.05 | 0.5269 | 0.5089 |
| B | No-CrossMamba | 172.76 | 111.42 | 0.5493 | 0.5154 |
| C | Uni-CrossMamba | 167.96 | 108.75 | 0.5437 | 0.5127 |
| D | w/o Style Prefix in Backward Pass | 171.61 | 112.59 | 0.5357 | 0.5166 |
| E | w/o Dynamic Δt | 164.89 | 107.97 | 0.5411 | 0.5135 |
| F | w/o Orthogonal Loss | 184.29 | 118.42 | 0.5545 | 0.5318 |
| G | w/o Modal Embedding | 184.78 | 121.02 | 0.5283 | 0.5236 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Qin, P.; Liu, L.; Wang, H.; Ma, S.; Chen, C.; Han, Z.; Cheng, M. Dunhuang Mural Style Transfer Using Vision Mamba: In-Context Prompting and Physically Motivated HSV Modulation. Electronics 2026, 15, 1578. https://doi.org/10.3390/electronics15081578